Submitted:

13 January 2025

Posted:

13 January 2025


Abstract

Keywords:

1. Introduction

1.1. Overview of Paper

1.2. AI – The New Normal or Déjà Vu?

1.3. The Current AI Landscape

“…the broad suite of technologies that can match or surpass human capabilities, particularly those involving cognition.”

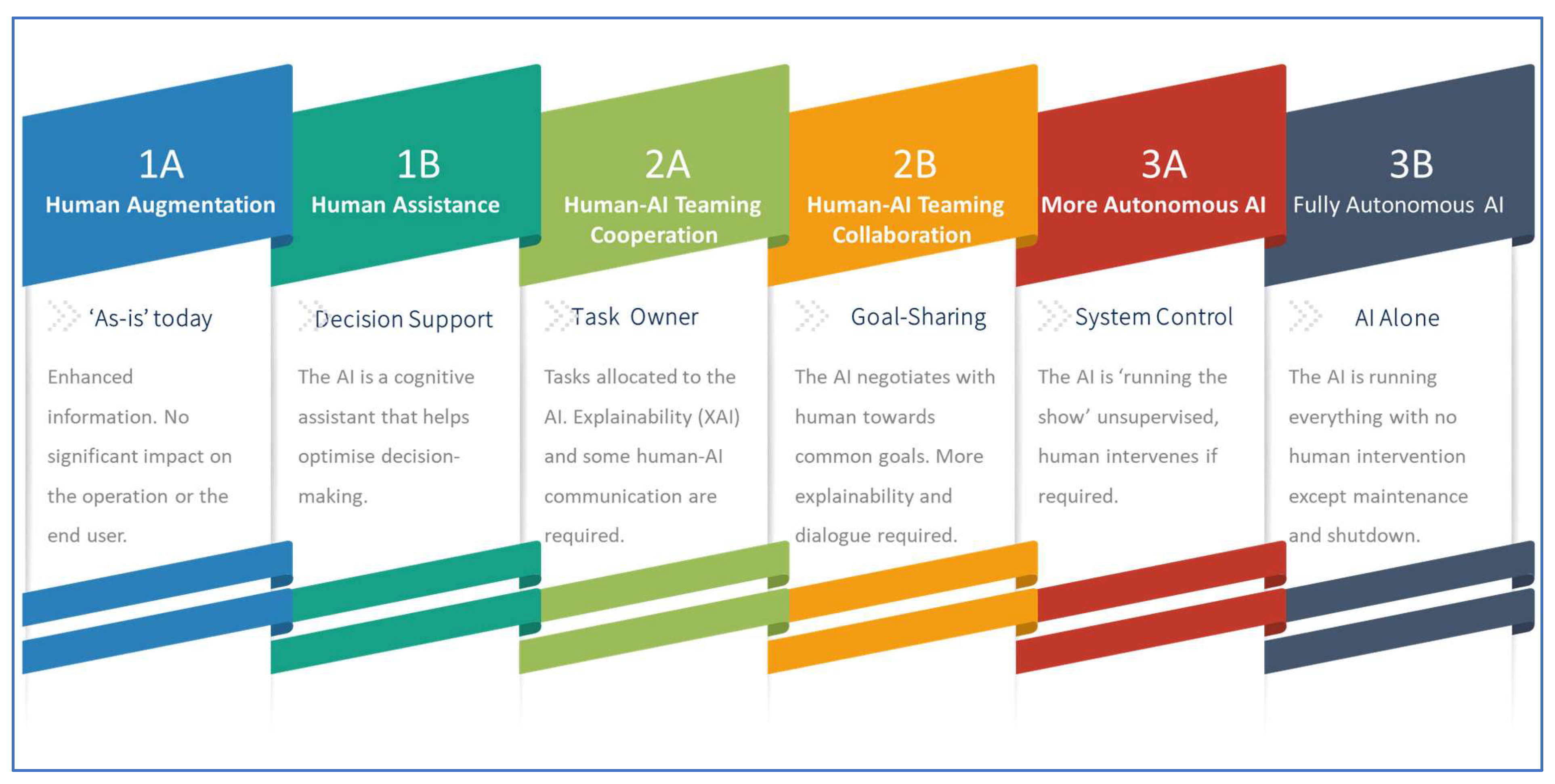

1.4. AI Levels of Autonomy – The European Regulatory Perspective

- Cooperation Level 2A: cooperation is a process in which the AI-based system works to help the end user accomplish his or her own goal. The AI-based system works according to a predefined task allocation pattern with informative feedback to the end user on the decisions and/or actions implementation. The cooperation process follows a directive approach. Cooperation does not imply a shared situation awareness (see footnote 1) between the end user and the AI-based system. Communication is not a paramount capability for cooperation.

- Collaboration Level 2B: collaboration is a process in which the end user and the AI-based system work together and jointly to achieve a predefined shared goal and solve a problem through a co-constructive approach. Collaboration implies the capability to share situation awareness and to readjust strategies and task allocation in real time. Communication is paramount to share valuable information needed to achieve the goal.

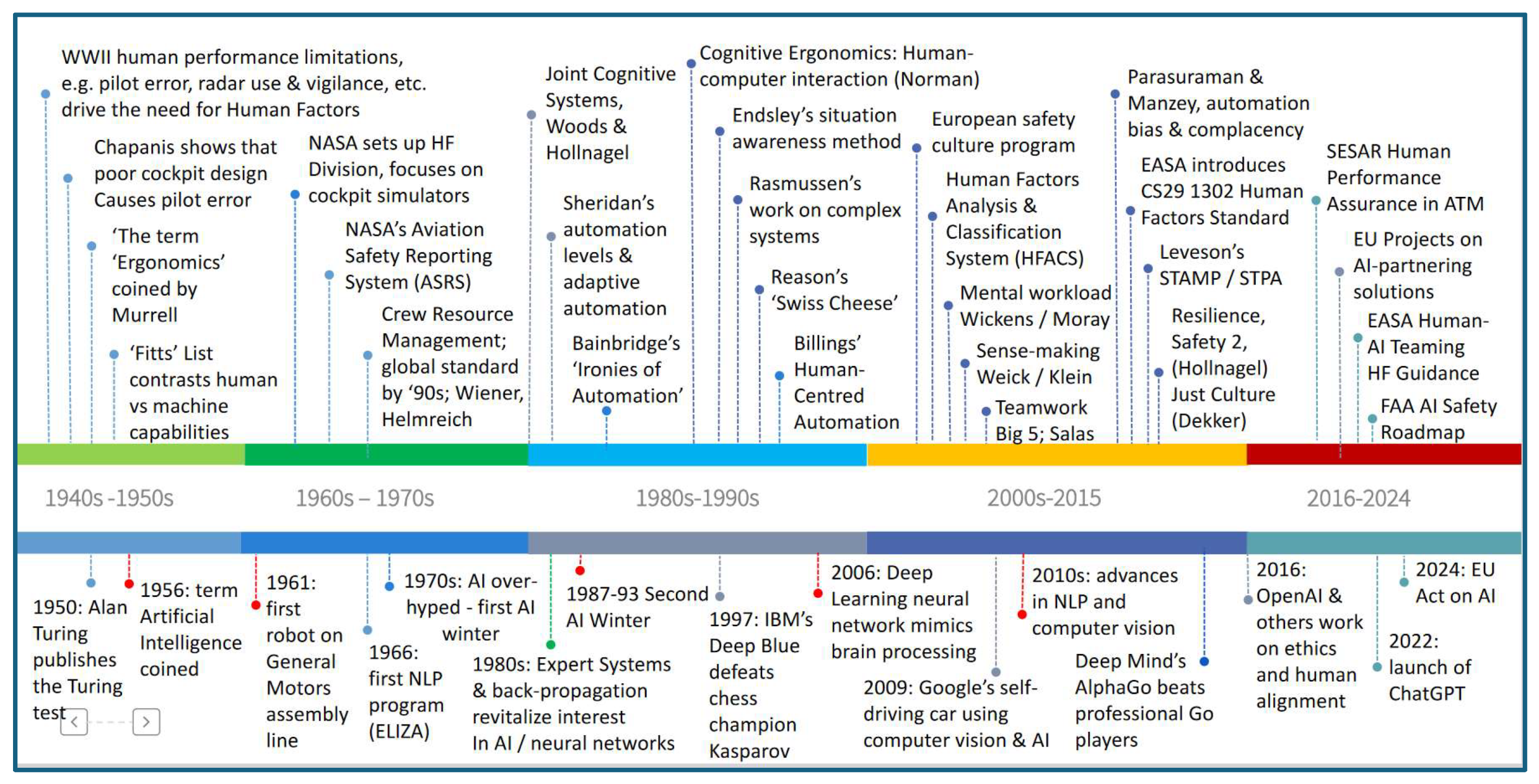

2. The Human Factors Landscape

2.1. The Origins of Human Factors

2.2. Key Waypoints in Human Factors

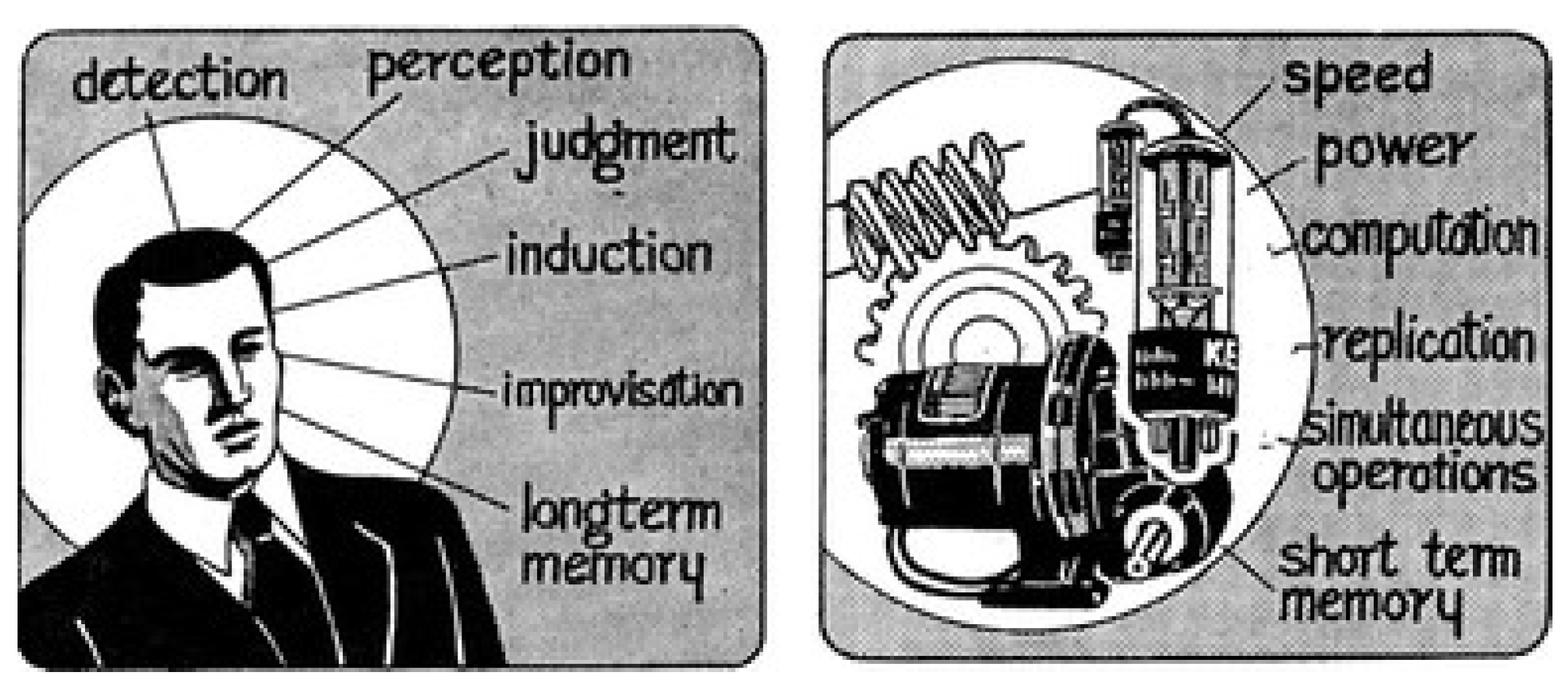

2.2.1. Fitts’ List

2.2.2. Aviation Safety Reporting System

2.2.3. Crew Resource Management

2.2.4. Human-Centred Design & Human Computer Interaction

2.2.5. Joint Cognitive Systems

2.2.5. Ironies of Automation

2.2.6. Levels of Automation & Adaptive Automation

- The computer offers no assistance, human must take all decisions and actions

- The computer offers a complete set of decision/action alternatives, or

- Narrows the selection down to a few, or

- Suggests one alternative, and

- Executes that suggestion if the human approves, or

- Allows the human a restricted veto time before automatic execution

- Executes automatically, then necessarily informs the human, and

- Informs the human only if asked, or

- Informs the human only if it, the computer, decides to

- The computer decides everything, acts autonomously, ignores the human
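The ten levels listed above form an ordered scale, so they can be sketched as an enumeration. The block below is a minimal illustration only: the numbering from 1 to 10 follows the order of the list, and the member names and the two helper functions (`human_approval_required`, `human_always_informed`) are hypothetical labels introduced here, not terms from the cited sources.

```python
from enum import IntEnum


class AutomationLevel(IntEnum):
    """Ten-level scale, ordered as in the list above.

    Member names are illustrative labels (an assumption), not source terms.
    """
    NO_ASSISTANCE = 1            # human takes all decisions and actions
    OFFERS_ALL_ALTERNATIVES = 2  # complete set of alternatives offered
    NARROWS_ALTERNATIVES = 3     # selection narrowed to a few
    SUGGESTS_ONE = 4             # one alternative suggested
    EXECUTES_IF_APPROVED = 5     # executes only with human approval
    VETO_WINDOW = 6              # restricted veto time before execution
    EXECUTES_THEN_INFORMS = 7    # executes, then necessarily informs
    INFORMS_IF_ASKED = 8         # informs only if asked
    INFORMS_IF_IT_DECIDES = 9    # informs only if the computer decides to
    FULLY_AUTONOMOUS = 10        # decides everything, ignores the human


def human_approval_required(level):
    """True when the computer executes nothing without explicit human approval."""
    return level <= AutomationLevel.EXECUTES_IF_APPROVED


def human_always_informed(level):
    """True when the human is necessarily told what the computer has done."""
    return level <= AutomationLevel.EXECUTES_THEN_INFORMS


for level in AutomationLevel:
    print(level.value, level.name,
          human_approval_required(level), human_always_informed(level))
```

Using an ordered type makes the two thresholds explicit: explicit approval ends after level 5, and guaranteed feedback to the human ends after level 7.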

2.2.7. Situation Awareness, Mental Workload & Sense-Making

2.2.8. Rasmussen & Reason – Complex Systems, Swiss Cheese, and Accident Aetiology

2.2.9. Human Centred Automation

- The human must be in command

- To command effectively, the human must be involved

- To be involved, the human must be informed

- The human must be able to monitor the automated system

- Automated systems must be predictable

- Automated systems must be able to monitor the human

- Each element of the system must have knowledge of the others’ intent

- Functions should be automated only if there is good reason to do so

- Automation should be designed to be simple to train, learn, and operate

2.2.10. HFACS (& NASA-HFACS and SHIELD)

2.2.11. Safety Culture

2.2.12. Teamwork and the Big Five

2.2.13. Bias and Complacency

2.2.14. SHELL, STAMP/STPA, HAZOP and FRAM

2.2.15. Just Culture and AI

2.2.16. HF Requirements Systems – CS25/1302, SESAR HP, SAFEMODE, EASA & FAA

2.3. Contemporary Human Factors & AI Perspectives

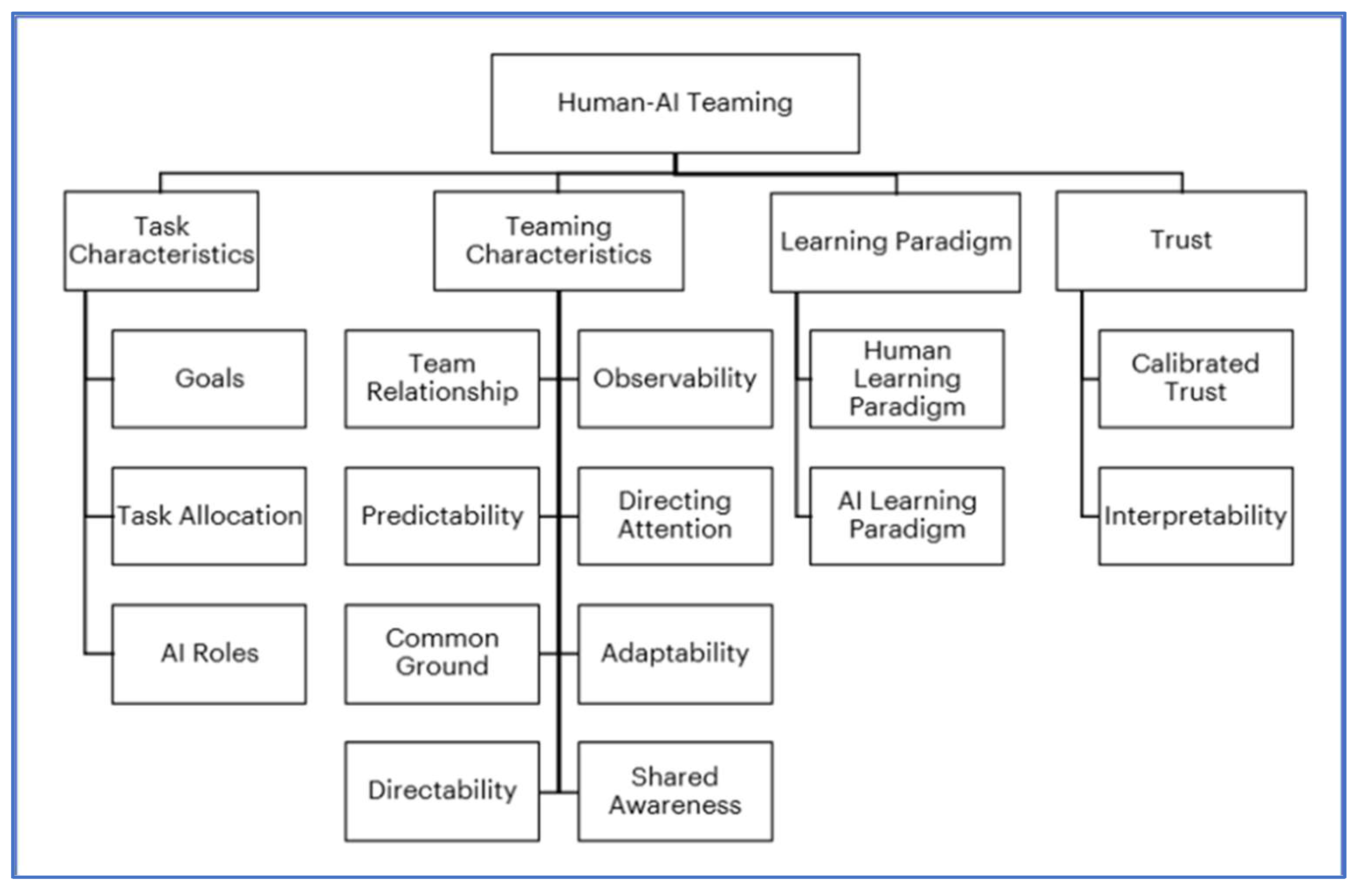

2.3.1. HACO – a Human-AI Teaming Taxonomy

- Directing attention to critical features, suggestions and warnings during an emergency or complex work situation. This could be of particular benefit in flight upset conditions in aircraft suffering major disturbances.

- Calibrated Trust wherein the humans learn when to trust and when to ignore the suggestions or override the decisions of the AI.

- Adaptability to the tempo and state of the team functioning.

2.3.2. AI Anthropomorphism and Emotional AI

3. Human Factors Requirements for Human-AI Systems

- It must capture the key Human Factors areas of concern with Human-AI systems.

- It must specify these requirements in ways that are answerable and justifiable via evidence.

- It must accommodate the various forms of AI that humans may need to interact with in safety critical systems (note – this currently excludes LLMs) both now and in the medium future, including ML and more advanced Intelligent Assistants (EASA’s Categories 1A through to 3A).

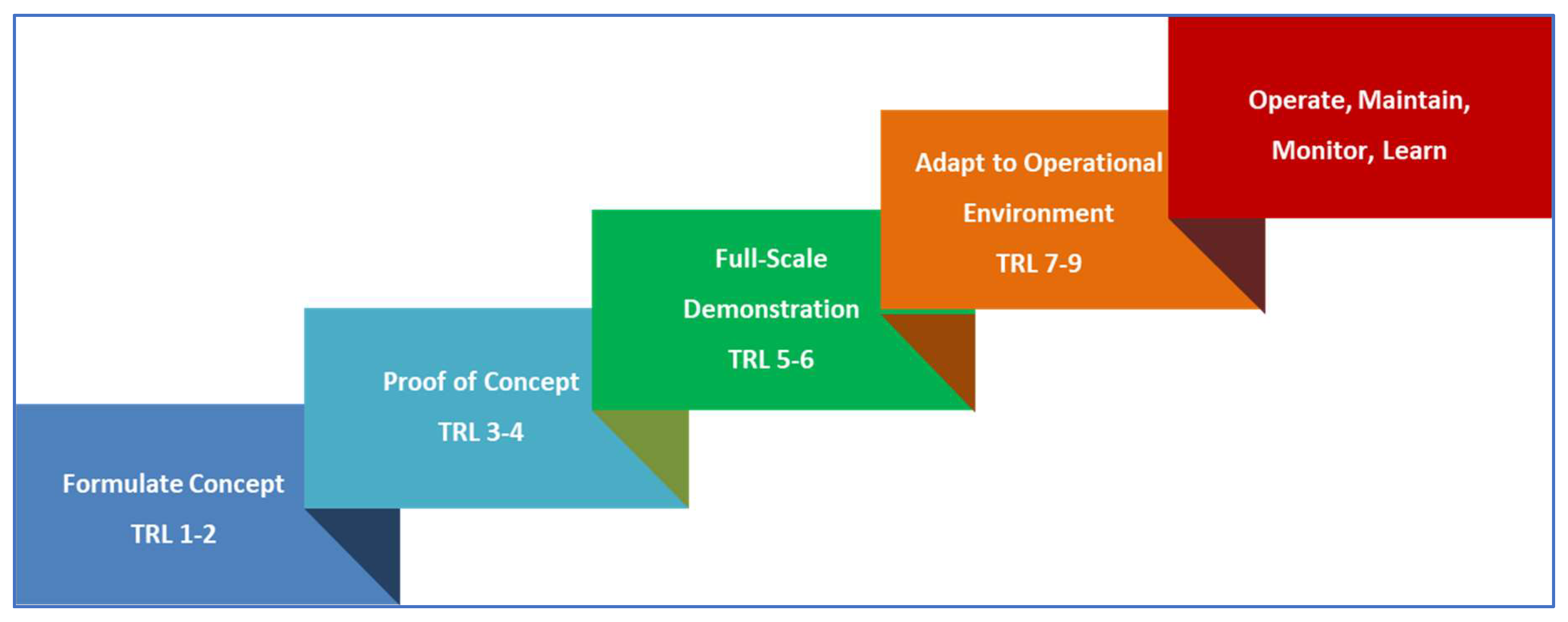

- It must be capable of working at different stages of design maturity of the Human-AI system, from early concept through to deployment into operations.

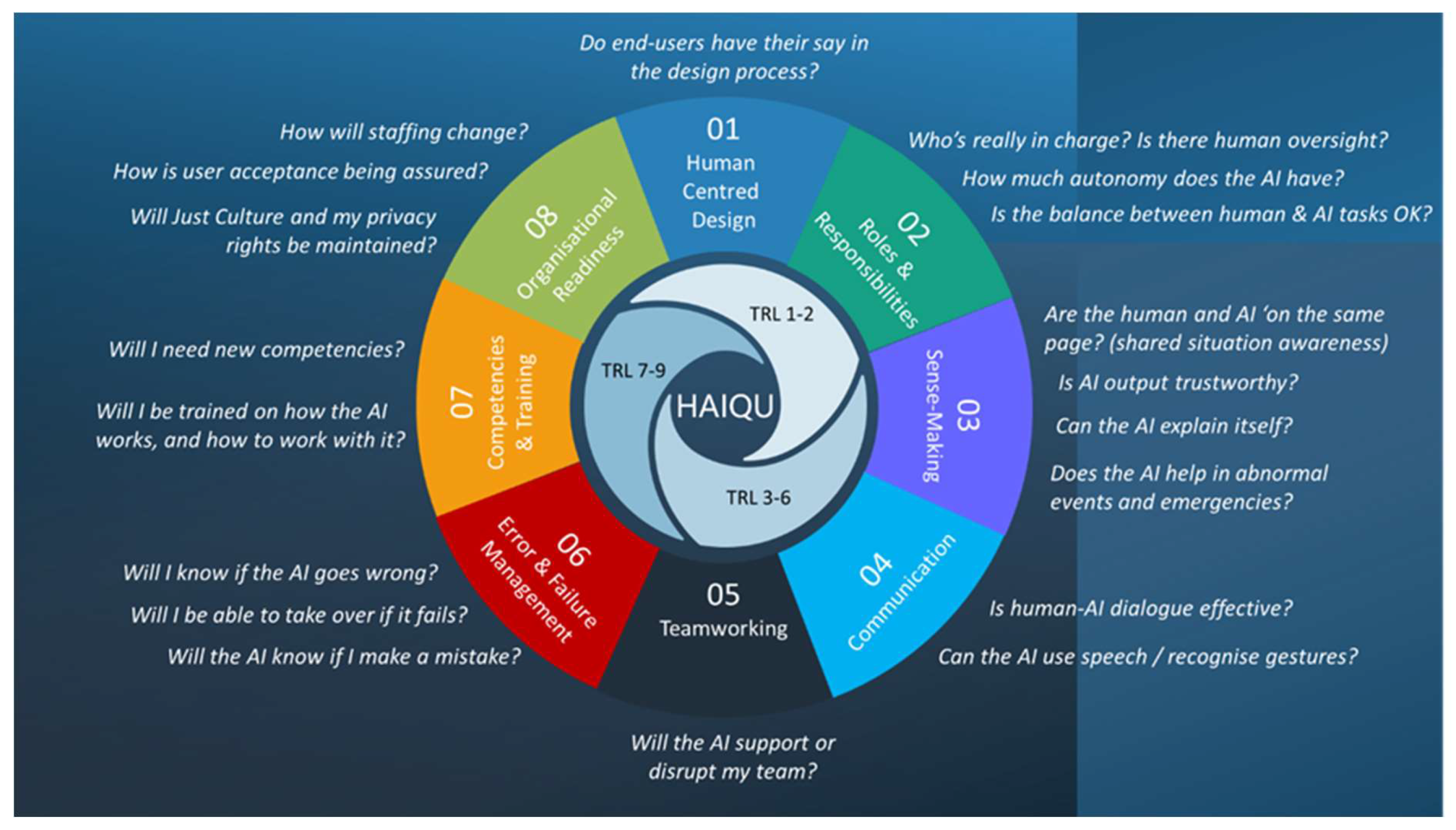

3.1. The Human Factors Requirements Set Architecture

- Human Centred Design – this is an over-arching Human Factors area, aimed at ensuring the HAT is developed with the human end-user in mind, seeking their involvement in every design stage.

- Roles and responsibilities – this area is crucial if the intent is to have a powerful and productive human-AI partnership, and helps ensure the human retains both agency and ‘final authority’ of the HAT system’s output. It is also a reminder that only humans can have responsibility – an AI, no matter how sophisticated, is computer code. It also aims to ensure the end user still has a viable and rewarding role.

- Sense-Making – this is where shared situation awareness, operational explainability, and human-AI interaction sit, and as such has the largest number of requirements. Arguably this area could be entitled (shared) situation awareness, but sense-making includes not only what is happening and going to happen, but why it is happening, and why the AI makes certain assessments and predictions.

- Communication – this area will no doubt evolve as HATs incorporate natural language processing (NLP), whether using pilot/ATCO ‘procedural’ phraseology or true natural language.

- Teamworking – this is possibly the area in most urgent need of research for HAT, in terms of how such teamworking should function in the future. For now, the requirements are largely based on existing knowledge and practices.

- Errors and Failure Management – the requirements here focus on identification of AI ‘aberrant behaviour’ and the subsequent ability to detect, correct, or ‘step in’ to recover the system safely.

- Competencies and Training – these requirements are typically applied once the design is fully formalised, tested and stable (TRL 7 onwards). Preparing end users to work with and manage AI-based systems will not be ‘business as usual’, and new training approaches and practices will almost certainly be required.

- Organisational Readiness – the final phase of integration into an operational system is critical if the system is to be accepted and used by its intended user population. In design integration, it is easy to fall at this last fence. Impacts on staffing levels (including levels of pay), concerns of staff and unions, as well as ethical and wellbeing issues are key considerations at this stage to ensure a smooth HAT-system integration.

4. Application of Human Factors Requirements to a HAT Prototype

5. Conclusions

Funding

Acknowledgments

Conflicts of Interest

| 1 | Shared situation awareness involves the ability of both humans and AI systems to gather, process, exchange and interpret information relevant to a particular context or environment, leading to a shared comprehension of the situation at hand [35] |

| 2 | REGULATION (EU) No 376/2014 OF THE EUROPEAN PARLIAMENT AND OF THE COUNCIL of 3 April 2014. |


References

- Turing, A.M. (1950) Computing machinery and intelligence. Mind, 59, 433-460. [CrossRef]

- Turing, A.M. and Copeland, B.J. (2004) The Essential Turing: Seminal Writings in Computing, Logic, Philosophy, Artificial Intelligence, and Artificial Life plus The Secrets of Enigma. Oxford University Press: Oxford.

- Dasgupta, Subrata (2014). It Began with Babbage: The Genesis of Computer Science. Oxford University Press. p. 22. ISBN 978-0-19-930943-6.

- Burkov, A. (2019) The Hundred Page Machine Learning Book. ISBN: 199957950X.

- Mueller, J.P., Massaron, L. and Diamond, S. (2024) Artificial Intelligence for Dummies (3rd Edition). Hoboken New Jersey: John Wiley & Sons.

- Lighthill, J. (1973) Artificial Intelligence: a General Survey. Artificial Intelligence – a paper symposium. UK Science Research Council. http://www.chilton-computing.org.uk/inf/literature/reports/lighthill_report/p001.htm.

- https://www.perplexity.ai/page/a-historical-overview-of-ai-wi-A8daV1D9Qr2STQ6tgLEOtg.

- OpenAI. 2023. GPT-4 Technical Report. ArXiv preprint abs/2303.08774 (2023). [CrossRef]

- Huang, L. Yu, W., Ma, W., Zhong, W., Feng, Z., Wang, H., Chen, Q., Peng, W., Feng, J., Qin, B., and Liu, T. (2024) A Survey on Hallucination in Large Language Models: Principles, Taxonomy, Challenges, and Open Question. ACM Transactions on Information Systems. [CrossRef]

- Weinberg, J., Goldhardt, J., Patterson, S. and Kepros, J. (2024) Assessment of accuracy of an early artificial intelligence large language model at summarizing medical literature: ChatGPT 3.5 vs. ChatGPT 4.0. Journal of Medical Artificial Intelligence, 7, 33. [CrossRef]

- Grabb, D. (2023) The impact of prompt engineering in large language model performance: a psychiatric example. Journal of Medical Artificial Intelligence, Vol 6, paper 20. [CrossRef]

- Hable, I., Sujan, M. and Lawton, T. (2024) Moving beyond the AI sales pitch – Empowering clinicians to ask the right questions about clinical AI. Future Healthcare Journal, 11, 3. [CrossRef]

- DeCanio, S. (2016) Robots and Humans – complements or substitutes? Journal of Macroeconomics, 49, 280-291.

- Dafoe, A. (2017) AI Governance: A Research Agenda. Future of Humanity Institute. https://www.fhi.ox.ac.uk/ai-governance/#1511260561363-c0e7ee5f-a482.

- Dalmau-Codina, R. and Gawinowski, G. (2023) Learning With Confidence the Likelihood of Flight Diversion Due to Adverse Weather at Destination. IEEE Transactions on Intelligent Transportation. [CrossRef]

- Dubey, P., Dubey, P., and Hitesh, G. (2025) Enhancing sentiment analysis through deep layer integration with long short-term memory networks. Int. J of Electrical and Computer Engineering, 15, 1, 949-957. [CrossRef]

- Luan, H., Yang, K., Hu, T., Hu, J., Liu, S., Li, R., He, J., Yan, R., Guo, X., Qian, X. and Niu, B. (2025) Review of deep learning-based pathological image classification: From task-specific models to foundation models. Future Generation Computer Systems, Vol 164. [CrossRef]

- Elazab, A., Wang, C., Abdelaziz, M., Zhang, J., Gu, J., Gorriz, J.M., Zhang, Y. and Chang, C. (2024) Alzheimer’s disease diagnosis from single and multimodal data using machine and deep learning models: Achievements and future directions. Expert Systems with Applications, Vol 255 Part C. [CrossRef]

- Lamb, H., Levy, J. and Quigley, C. (2023) Simply Artificial Intelligence. DK Simply Books, Penguin: London.

- Macey-Dare, R. (2023) How Soon is Now? Predicting the Expected Arrival Date of AGI- Artificial General Intelligence. 1204. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4496418.

- Hawking, S. (2018) Brief answers to the big questions. Hachette: London, pp.181-196.

- HAIKU EU Project (2023) https://haikuproject.eu/ see also https://cordis.europa.eu/project/id/101075332.

- SAFETEAM EU Project (2023) https://safeteamproject.eu/1186.

- Eurocontrol (2023) Technical interchange meeting (TIM) on Human-Systems Integration. Eurocontrol Innovation Hub, Bretigny. https://www.eurocontrol.int/event/technical-interchange-meeting-tim-human-systems-integration.

- European Parliament (2023) EU AI Act: first regulation on artificial intelligence. https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence.

- Duchevet, A., Dong-Bach V., Peyruqueou, V., De-La-Hogue, T., Garcia, J., Causse, M. and Imbert, J-P. (2024) FOCUS: An Intelligent Startle Management Assistant for Maximizing Pilot Resilience. ICCAS 2024, Toulouse, France.

- Bjurling, O., Müller, H., Burgén, J., Bouvet, C.J. and Berberian, B. (2024) Enabling Human-Autonomy Teaming in Aviation: A Framework to Address Human Factors in Digital Assistants Design. J. Phys.: Conf. Ser. 2716 012076. [CrossRef]

- Duchevet, A., Imbert, J-P., De La Hogue, T., Ferreira, A., Moens, L., Colomer, A., Cantero, J., Bejarano, C. and Rodríguez Vázquez, A.L. (2024) HARVIS: a digital assistant based on cognitive computing for non-stabilized approaches in Single Pilot Operations. In 34th Conference of the European Association for Aviation Psychology, Athens, Transportation Research Procedia 66 (2022) 253–261. [CrossRef]

- Minaskan, N., Alban-Dormoy, C., Pagani, A., Andre, J-M. and Stricker, D. (2022) Human Intelligent Machine Teaming in Single Pilot Operation: A Case Study. In Augmented Cognition, Vol. 13310, Cham: Springer International Publishing, pp. 348–360. [CrossRef]

- Kirwan, B., Venditti, R., Giampaolo, N. and Villegas Sanchez, M. (2024) A Human Centric Design Approach for Future Human-AI Teams in Aviation. In Ahram, T., Casarotto, L. and Costa, P. (Eds) Human Interactions and Emerging Technologies IHIET 2024, Venice, August 26-28. [CrossRef]

- European Commission (2022) CORDIS Results Pack on AI in air traffic management: A thematic collection of innovative EU-funded research results. October 2022. https://www.sesarju.eu/node/4254.

- EASA (2023) EASA Concept Paper: first usable guidance for level 1 & 2 machine learning applications. February. https://www.easa.europa.eu/en/newsroom-and-events/news/easa-artificial-intelligence-roadmap-20-published.

- Kaliardos, W. (2023) Enough Fluff: Returning to Meaningful Perspectives on Automation. FAA, US Department of Transportation, Washington DC. https://rosap.ntl.bts.gov/view/dot/64829.

- Kirwan, B. (2024) 2B or not 2B? The AI Challenge to Civil Aviation Human Factors. In Contemporary Ergonomics and Human Factors 2024, Golightly, D., Balfe, N. and Charles, R. (Eds.). Chartered Institute of Ergonomics & Human Factors. ISBN: 978-1-9996527-6-0. Pages 36-44.

- Kilner, A., Pelchen-Medwed, R., Soudain, G., Labatut, M. and Denis, C. (2024) Exploring Cooperation and Collaboration in Human AI Teaming (HAT) – EASA AI Concept paper V2.0. In 14th European Association for Aviation Psychology Conference EAAP 35, 8-11 October, Thessaloniki, Greece.

- https://en.wikipedia.org/wiki/Ergonomics.

- Nick Joyce. (2013). "Alphonse Chapanis: Pioneer in the Application of Psychology to Engineering Design". Association for Psychological Science. https://www.psychologicalscience.org/observer/alphonse-chapanis-pioneer-in-the-application-of-psychology-to-engineering-design.

- Meister, D. (1999) The History of Human Factors and Ergonomics. CRC Press Imprint: Abingdon, UK.

- Fitts P M (1951) Human engineering for an effective air-navigation and traffic-control system. Columbus, OH: Ohio State University. https://apps.dtic.mil/sti/citations/tr/ADB815893.

- Chapanis, A. (1965). On the allocation of functions between men and machines. Occupational Psychology, 39(1), 1–11.

- De Winter, J.C.F. and Hancock, P. A. (2015) Reflections on the 1951 Fitts list: Do humans believe now that machines surpass them? Paper presented at 6th International Conference on Applied Human Factors and Ergonomics (AHFE 2015) and the Affiliated Conferences, AHFE 2015. Procedia Manufacturing 3 (2015) 5334 – 5341. [CrossRef]

- Helmreich, R.L., Merritt, A.C. and Wilhelm, J.A. (1999) The Evolution of Crew Resource Management Training in Commercial Aviation. International Journal of Aviation Psychology, 9 (1), 19-32. [CrossRef]

- https://en.wikipedia.org/wiki/Crew_resource_management.

- https://skybrary.aero/articles/team-resource-management-trm.

- https://en.wikipedia.org/wiki/Maritime_resource_management.

- Norman, D.A. (2013). The design of everyday things (Revised and expanded editions ed.). Cambridge, MA London: The MIT Press. ISBN 978-0-262-52567-1.

- Norman, D.A. (1986). User Centered System Design: New Perspectives on Human-computer Interaction. CRC. ISBN 978-0-89859-872-8. [CrossRef]

- Shneiderman, B. and Plaisant, C. (2010) Designing the User Interface: Strategies for Effective Human-Computer Interaction. Addison-Wesley. ISBN: 9780321537355.

- Shneiderman, B. (2022) Human-Centred AI. Oxford University Press: Oxford.

- Gunning, D. and Aha, D. (2019). DARPA’s Explainable Artificial Intelligence (XAI) Program. AI Magazine, [online] 40(2), pp.44–58. [CrossRef]

- Hollnagel, E. and Woods, D.D. (1983). "Cognitive Systems Engineering: New wine in new bottles". International Journal of Man-Machine Studies. 18 (6): 583–600. [CrossRef]

- Hollnagel, E. & Woods, D.D. (2005). Joint Cognitive Systems: Foundations of Cognitive Systems Engineering. CRC Press.

- Hutchins, Edwin (July 1995). "How a Cockpit Remembers Its Speeds". Cognitive Science. 19 (3): 265–288.

- Bainbridge, L. (1983). Ironies of automation. Automatica, 19(6), 775-779. [CrossRef]

- Endsley, M.R. (2023) Ironies of artificial intelligence. Ergonomics, 66, 11. [CrossRef]

- Sheridan T B and Verplank W L (1978) Human and computer control of undersea teleoperators. Department of Mechanical Engineering, MIT, Cambridge, MA, USA.

- Sheridan, T.B. (1991) Automation, authority and angst – revisited. In: Human Factors Society, eds. Proceedings of the Human Factors Society 35th Annual Meeting, Sep 2-6, San Francisco, California. Human Factors & Ergonomics Society Press. 1991, 18–26. [CrossRef]

- Parasuraman, R., Sheridan, T.B., & Wickens, C.D. (2000). A Model for Types and Levels of Human Interaction with Automation. IEEE Transactions on Systems, Man, and Cybernetics - Part A: Systems and Humans, 30(3), 286-297. [CrossRef]

- Wickens, C., Hollands, J.G., Banbury, S. and Parasuraman, R. (2012) Engineering Psychology and Human Performance. London: Taylor and Francis.

- Endsley, M.R. (1995). Toward a Theory of Situation Awareness in Dynamic Systems. Human Factors, 37(1), 32-64. [CrossRef]

- Moray, N. (Ed.) (2013) Mental workload – its theory and measurement. NATO Conference Series III on Human Factors, Greece, 1979. Springer, US. ISBN: 9781475708851.

- Klein, G., Moon, B., and Hoffman, R. (2006) Making Sense of Sensemaking 1: Alternative Perspectives. IEEE Intelligent Systems, 21, 4, 70-73. [CrossRef]

- Rasmussen, J. (1983) Skills, rules, knowledge; signals, signs, and symbols; and other distinctions in human performance models. IEEE Transactions on Systems, Man, and Cybernetics, vol. SMC-13, no. 3. [CrossRef]

- Vicente, K.J. and Rasmussen, J. (1992), Ecological interface design: theoretical foundations. IEEE Transactions on Systems, Man, and Cybernetics, 22, 589-606. [CrossRef]

- Reason J.T. (1990), Human Error. Cambridge: Cambridge University Press.

- Billings, C.E. (1996). Human-centred aviation automation: principles and guidelines (Report No. NASA-TM-110381). National Aeronautics and Space Administration. https://ntrs.nasa.gov/citations/19960016374.

- Shappell, S.A. and Wiegmann, D.A. (2000) The Human Factors Analysis and Classification System—HFACS; DOT/FAA/AM-00/7; U.S. Department of Transportation: Washington, DC, USA, 2000.

- Dillinger, T.; Kiriokos, N. NASA Office of Safety and Mission Assurance Human Factors Handbook: Procedural Guidance and Tools; NASA/SP-2019-220204; National Aeronautics and Space Administration (NASA): Washington, DC, USA, 2019.

- Stroeve, S., Kirwan, B. Turan, O., Kurt, R.E., van Doorn, B., Save, L., Jonk, P., Navas de Maya, B., Kilner, A., Verhoeven, R., Farag, Y.B.A., Demiral, A., Bettignies-Thiebaux, B., de Wolff, L., de Vries, V., Ahn, S., and Pozzi, S. (2023) SHIELD Human Factors Taxonomy and Database for Learning from Aviation and Maritime Safety Occurrences. Safety 2023, 9, 14. [CrossRef]

- IAEA. Safety Culture; Safety Series No. 75-INSAG-4; International Atomic Energy Agency: Vienna, Austria, 1991.

- Kirwan, B. (2024) The Impact of Artificial Intelligence on Future Aviation Safety Culture. Future Transportation, 4, 349-379. [CrossRef]

- Salas, E., Sims, D.E., & Burke, C.S. (2005). Is There a “Big Five” in Teamwork? Small Group Research, 36(5), 555-599. [CrossRef]

- Parasuraman, R. (1997) Humans and Automation: Use, Misuse, Disuse, and Abuse. Human Factors, 39, 2, 230-253. [CrossRef]

- Parasuraman, R. and Manzey, D.H. (2012) Complacency and bias in human use of automation: an attentional integration. Human Factors, 52, 3. [CrossRef]

- Tversky, A. and Kahneman, D. (1974) Judgment under Uncertainty: Heuristics and Biases. Science, Vol. 185, No. 4157, Sep. 27, pp. 1124-1131.

- Klayman, J. (1995) Varieties of Confirmation Bias. Psychology of Learning and Motivation. 32, 385-418. [CrossRef]

- Hawkins, F. H. (1993). Human Factors in Flight (H. W. Orlady, Ed.) (2nd ed.). Routledge. [CrossRef]

- Leveson, N.G. (2018) Safety Analysis in Early Concept Development and Requirements Generation. Paper presented at the 28th annual INCOSE international symposium. Wiley. [CrossRef]

- Kletz, T. (1999) HAZOP and HAZAN: Identifying and Assessing Process Industry Hazards (4th edition). Taylor and Francis, Oxfordshire, UK.

- Kirwan, B., Venditti, R., Giampaolo, N. and Villegas Sanchez, M. (2024) A Human Centric Design Approach for Future Human-AI Teams in Aviation. In Ahram, T., Casarotto, L. and Costa, P. (Eds) Human Interactions and Emerging Technologies. IHIET 2024, Venice, August 26-28. [CrossRef]

- Hollnagel, E. (2012) FRAM: The Functional Resonance Analysis Method. Modelling Complex Socio-technical Systems. CRC Press, Boca Raton, Florida. [CrossRef]

- Hollnagel, E. (2013). A Tale of Two Safeties. International Journal of Nuclear Safety and Simulation, 4(1), 1-9. Retrieved from https://www.erikhollnagel.com/A_tale_of_two_safeties.pdf.

- Kumar, R.S.S, Snover, J, O’Brien, D., Albert, K. and Viljoen, S. (2019) Failure modes in machine learning. Microsoft Corporation & Berkman Klein Center for Internet and Society at Harvard University. November.

- https://skybrary.aero/articles/just-culture.

- Franchina, F. (2023) Artificial Intelligence and the Just Culture Principle. Hindsight 35, November, pp.39-42. EUROCONTROL, rue de la Fusée 96, B-1130, Brussels. https://skybrary.aero/articles/hindsight-35.

- Baumgartner, M. and Malakis, S. (2023) Just Culture and Artificial Intelligence: do we need to expand the Just Culture playbook? Hindsight 35, November, pp. 43-45. EUROCONTROL, Rue de la Fusée 96, B-1130 Brussels. https://skybrary.aero/articles/hindsight-35.

- Dubey, A., Kumar, A., Jain, S., Arora, V., & Puttaveerana, A. (2020). HACO: A Framework for Developing Human-AI Teaming. In Proceedings of the 13th Innovations in Software Engineering Conference on Formerly Known as India Software Engineering Conference (pp. 1-9). Association for Computing Machinery. [CrossRef]

- Rochlin, G.I. (1996). "Reliable Organizations: Present Research and Future Directions". Journal of Contingencies and Crisis Management. 4 (2): 55–59. ISSN 1468-5973. [CrossRef]

- Schecter, A., Hohenstein, J., Larson, L., Harris, A., Hou, T., Lee, W., Lauharatanahirun, N., DeChurch, L, Contractor, N. and Jung, M. (2023) Vero: an accessible method for studying human-AI teamwork.

- Zhang, G., Chong, L., Kotovsky, K. and Cagan, J. (2023) Trust in an AI versus a Human teammate: the effects of teammate identity and performance on Human-AI cooperation. Computers in Human Behaviour, 139, 107536. [CrossRef]

- Ho, Manh-Tung, Mantello, P. and Ho, Manh-Toan (2023) An analytical framework for studying attitude towards emotional AI: The three-pronged approach. In MethodsX, 10, 102149. [CrossRef]

- Lees, M.J. and Johnstone, M.C. (2021) Implementing safety features of Industry 4.0 without compromising safety culture. International Federation of Automation Control (IFAC) Papers Online, 54-13, 680-685. [CrossRef]

- Koch, M. (1999). The neurobiology of startle. Progress in Neurobiology, 59(2), 107-128. [CrossRef]

- Thackray, R. I., Touchstone, R. M., & Civil Aeromedical Institute. (1983). Rate of initial recovery and subsequent radar monitoring performance following a simulated emergency involving startle. (FAA-AM-83-13). https://rosap.ntl.bts.gov/view/dot/21242.

- BEA. (2012). Rapport final, accident survenu le 1er Juin 2009, vol AF447 Rio de Janeiro—Paris. https://bea.aero/docspa/2009/f-cp090601/pdf/f-cp090601.pdf.

| HUMAN-CENTRED DESIGN |

| Are licensed end-users participating in design exercises such as focus groups, scenario-based testing, prototyping and simulation (e.g. ranging from desk-top simulation to full scope simulation)? |

| Are end-user opinions helping to inform and validate the design concept, as part of an integrated project team including product owner, data scientists, safety, security, Human Factors and operational expertise? |

| Are end-users involved in any hazard identification exercises (e.g. HAZOP, STPA, FRAM etc.)? |

| Do licensed end-users participate in validation and testing activities? |

| ROLES & RESPONSIBILITIES |

| Have any of the various human roles / responsibilities changed? |

| Are there any new roles, or suppressed roles? |

| What is the overall level of autonomy – is the human still in charge? |

| Has the basis for task allocation been articulated, and does it follow Human Factors guidance? |

| Are there criteria for task-switching between human and AI? |

| Is task-switching controlled by the human, by the AI, or a mixture of both? |

| In the case of AI control, can the human reject a task? |

| Can the AI detect poor decision-making by the user and offer alternatives? |

| Can the human monitor and adjust the AI’s goal formulation? |

| If human-AI negotiation is possible, who makes the final decision? |

| SENSE-MAKING |

| Is all the required information presented to the user in an uncluttered way? |

| Is the interaction medium appropriate for the task, e.g. keyboard, touchscreen, voice, and even gesture recognition? |

| Do visual/oral/auditory displays and controls follow Human Factors guidance (e.g. colour coding, luminance, auditory range etc.)? |

| Does the location of a new AI interface with the operational workplace support rather than hinder critical operations? |

| Does the AI-human interface reinforce the end-user’s situation awareness? |

| In cases of moderate (or higher) uncertainty, are alternative suggestions/explanations offered? |

| Can the human query the information/decision via an explainability function? |

| Can the AI provide alternatives, along with likelihood estimates? |

| Is the end-user aware of the key parameters the AI is optimising? Can the end-user alter them? |

| Can the human view both data that were used and data that were ignored by the AI, e.g. anomalies or outliers? |

| COMMUNICATION |

| Is human-AI communication via a proceduralised phraseology (e.g. as pilots and controllers use)? |

| If natural language is used, how is context-sensitivity ensured, so that misunderstandings are avoided? |

| Are humans made aware of what the AI may be monitoring, e.g. speech aspects (to detect stress or fatigue), gestures, etc.? |

| Is the human-AI communication load acceptable to the human? |

| If spoken natural language is used, can the AI process end-user requests, responses and reactions, and acknowledge the user's intentions? |

| In case of confirmed misunderstanding by the end user, can the IA resolve the issue? |

| TEAMWORK |

| Has it been determined which roles will interact with the AI? |

| How will the impact of suppressed or new roles (if any) on teamwork be managed? |

| Is the AI role, its task allocation and authority level made clear to each team member? |

| Is AI-derived situation awareness communicated to the entire team to ensure coherent team situation awareness? |

| Does the human rather than the AI control or oversee task allocation and the overall workflow? |

| Are there sufficient skilled human crew to operate or recover the system in case of AI failure or erroneous behaviour? |

| Can the AI detect a breakdown of team functioning and signal this to the team or compensate accordingly? |

| ERROR & FAILURE MANAGEMENT |

| Is the human trained on AI error modes and how to verify AI results? |

| Are users trained to recognise and take corrective action on strange or erroneous AI outputs? |

| Has the human seen examples of AI incorrect information / advice in simulation training? |

| Does the AI inform the end-user of a detected / predicted shift from normal to emergency or degraded mode? |

| Are there sufficient independent (non-AI) displays of critical functions to allow the human to verify that context is changing? |

| Are end users trained to recognise unsafe AI operating conditions and to take corrective action? |

| Is it clear that the AI won’t interfere with existing alerts and warning systems (e.g. TCAS, STCA etc.)? |

| COMPETENCIES & TRAINING |

| Will working with the AI technology be a competency requirement? |

| Will there be new competency requirements to maintain the AI? |

| Is personnel licensing affected in any way? Are there any new licensing requirements? |

| Will the AI’s interactions / monitoring of the team inform competency assessment, and will end-users be aware? |

| If the AI is a learning system, how will human competencies be updated? |

| Have the risks of skills loss been identified and mitigated where required (e.g. with fallback training)? |

| Do existing training systems need to change (computer-based training, classroom training, simulator training, on-the-job training)? |

| Will humans help train the AI (supervised human-in-the-loop training)? |

| Will simulator exercises show what AI failure / misbehaviour looks like? |

| ORGANISATIONAL READINESS |

| Will the overall number of staff increase, decrease or remain the same (Management, Operational, Engineering, Support)? |

| Will new or existing staff be paid lower wages? |

| Do end-users think implementing the AI is a good idea? |

| Do the human team members feel the AI output exceeds that of a human, and improves overall task/system performance? |

| Are end-users comfortable with any legal liability implications of using the AI in safety-critical contexts? |

| Will existing protections afforded by Just Culture in the organisation be affected by the implementation of the AI? |

| Does the system comply with national and EU data protection provisions (e.g., GDPR)? |

| Are users always aware whenever they are interacting with the AI? |

| Could the system have any negative effects on the physical or mental wellbeing of the end user, including moral integrity? |
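Because each requirement is phrased as a question to be answered and justified with evidence, the set lends itself to a simple checklist structure. The sketch below is one possible representation and not the authors' tooling: the class and function names (`Requirement`, `Answer`, `summarise`, `open_items`) and the answer codes Y, N, N/A and TBD as modelled here are assumptions made for illustration. The three questions are taken from the list above, but the answers and the justification text are placeholders, not results.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Answer(Enum):
    YES = "Y"
    NO = "N"
    NOT_APPLICABLE = "N/A"
    TO_BE_DETERMINED = "TBD"


@dataclass
class Requirement:
    area: str                                  # e.g. "Teamwork"
    question: str
    answer: Answer = Answer.TO_BE_DETERMINED   # unanswered by default
    justification: str = ""                    # evidence supporting the answer


def summarise(requirements):
    """Count answers per Human Factors area."""
    summary = {}
    for req in requirements:
        summary.setdefault(req.area, Counter())[req.answer.value] += 1
    return summary


def open_items(requirements):
    """Requirements still TBD, or answered Y/N with no justification recorded."""
    return [
        req for req in requirements
        if req.answer is Answer.TO_BE_DETERMINED
        or (req.answer in (Answer.YES, Answer.NO)
            and not req.justification.strip())
    ]


# Questions come from the requirement set; answers are placeholders only.
checklist = [
    Requirement("Human-Centred Design",
                "Do licensed end-users participate in validation and testing activities?",
                Answer.YES, "placeholder: cite the supporting evidence here"),
    Requirement("Teamwork",
                "Has it been determined which roles will interact with the AI?"),
    Requirement("Organisational Readiness",
                "Are users always aware whenever they are interacting with the AI?",
                Answer.NO),
]

for area, counts in summarise(checklist).items():
    print(area, dict(counts))
for req in open_items(checklist):
    print("OPEN:", req.question)
```

Treating an unjustified Y or N as still open reflects the stated aim that answers be justifiable via evidence; a project could relax or tighten that rule as its design maturity changes.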

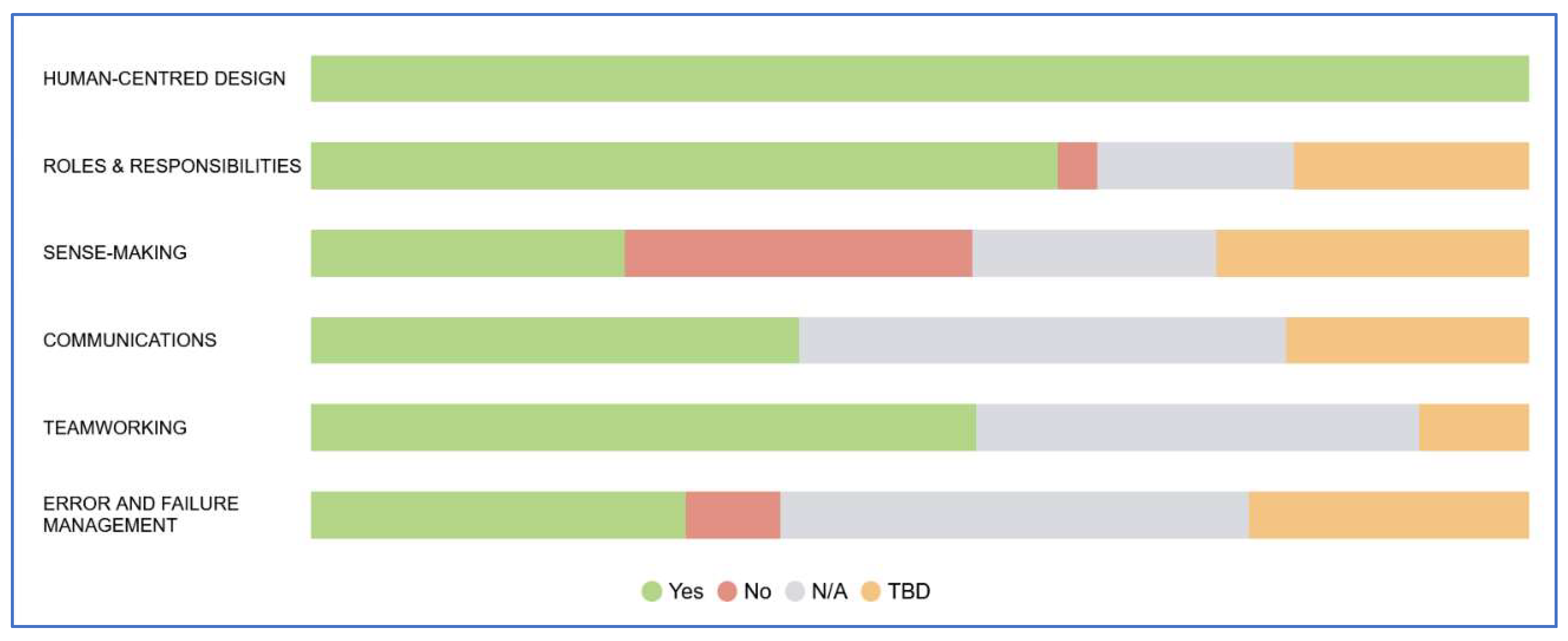

| Human Factors Requirement Question | Y/N, N/A, TBD | Justification |
|---|---|---|

| Human Centred Design | ||

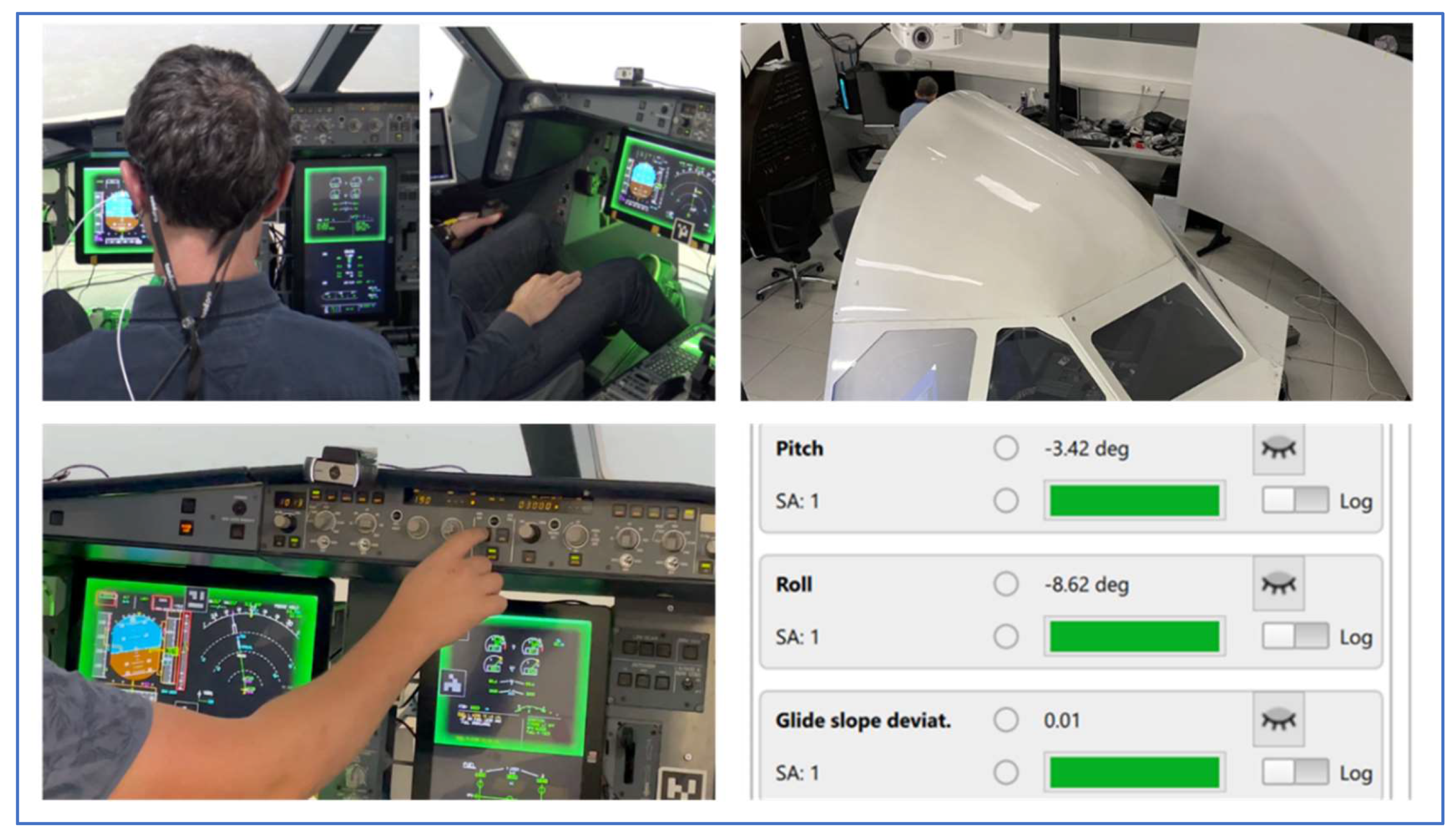

| Are licensed end-users participating in design exercises such as focus groups, scenario-based testing, prototyping and simulation (e.g. ranging from desk-top simulation to full scope simulation)? | Y | ENAC pilots and commercial airline pilots are involved in Val1 and Val2 simulation exercises. ENAC pilots are involved in the design process, and the product owner is also a pilot. |

| Are end-user opinions helping to inform and validate the design concept, as part of an integrated project team including product owner, data scientists, safety, security, Human Factors and operational expertise? | Y | ENAC pilots are involved, and the product owner is a pilot. Additionally there are data science and Human Factors experts in the design team. Security is outside the scope of the study as it is TRL4-5. |

| Are end-users involved in any hazard identification exercises (e.g. HAZOP, STPA, FRAM etc.)? | Y | An ENAC pilot was involved in the first HAZOP. Additionally, points raised by pilots during Val1 simulation were fed into the first HAZOP. |

| Roles & Responsibilities | ||

| Are there any new roles, or suppressed roles? | Y | This is a Single Pilot Operation (SPO) concept study, so one flight crew member is no longer there. |

| What is the level of autonomy – is the human still in charge? | Y | The pilot remains in charge |

| Does this level of autonomy change dynamically? Who/what determines when it changes? | TBD | This aspect is being developed in the Concept of Operations (CONOPS) |

| Are the new / residual human roles consistent, and seen as meaningful by the intended users? | Y | Yes, for pilots it is like a clever flight director or attention director, but the pilot is still in control. |

| Sense-Making | ||

| Is the interaction medium appropriate for the task, e.g. keyboard, touchscreen, voice, and even gesture recognition? | Y / TBD | Startle and SA support (colour, etc.) was appreciated in Val 1; the Electronic Flight Bag (EFB) was not used due to the emergency nature of the event. Red was seen as too strong. Voice was suggested to back up the visual direction of SA (this has since been implemented). Changes are being tested in the Val2 experiment. |

| Does the AI build its own situation representation? | Y | Yes, coming from the aircraft data-bus, and from the pilot's attentional behaviour (eye-tracking). Context is also from the SOPs (Standard Operating Procedures) for the events. |

| Is the AI's situation representation made accessible to the end user, via visualisation and/or dialogue? | Y | It is explained to pilots how the AI works and how it uses eye tracking, the parameters it uses and how it has been informed by pilots for different flight phases (supervised training). The EFB summarises the AI's situation assessment. |

| Does the AI-human interface reinforce the end-user’s situation awareness, so that human and AI can remain 'on the same page'? | Y | Pilots feel it helped their SA, and speed of gaining a situational picture. |

| Can the human modify the AI’s parameters to explore alternative courses of action? | N / TBD | The pilot can follow an alternative course of action, though there is no 'interaction' on this with the AI. The acceptability of the directed attention will be discussed with pilots in Val2, along with whether they would want the ability to alter parameters during an event. |

| Is at least some operational explainability possible, rather than the AI being a ‘black box’? | Y | Explainability is via the EFB. However, due to the very short response times in a loss of control in flight scenario, pilots had little time for explainability. |

| Can the humans still detect and correct their own errors, or those of their colleagues? | Y | SPO (Single Pilot Operations) concept, so only one crew member. AI is there to guide the pilot out of startle response / temporary loss of situation awareness, and to prevent loss of control in flight. |

| Does the AI possess the ability to detect human errors or misjudgement and notify them or directly correct them? | Y | The AI is intended to detect temporary performance decrement due to startle, and to guide the pilot, but does not go as far as correcting his/her action. |

| Does the level of human workload enable the human to remain proactive rather than reactive, except for short periods? | Y | For these types of sudden scenarios the pilot is in reactive mode. The answer 'yes' is given as it is a short period, and the aim is to reduce cognitive stress, giving them more 'headspace' to deal with the event. |

| Can the human detect errors by the AI and intercede accordingly? | Y | Pilots in the first set of simulations detected when the AI should have deactivated but did not (they could deactivate it manually). This will be improved for the second round of simulations. |

| Does the human trust the AI, but not over-trust it? Is the human taught how to recognise AI malfunction or bad judgement? | Y | Pilots detected some smart agent anomalies during the experiment so did not over-trust it. Can be further explored in Val 2, e.g. using a trust scale. |

| Can the human suggest changes to the parameters the AI is using, to explore alternative courses of action? | N | The pilot can follow an alternative course of action, though there is no 'interaction' on this with the AI. |

| Communications | ||

| Is human-AI communication via a proceduralised phraseology (e.g. as pilots and controllers use)? | Y | Aural alerts for Val2 are using standard (procedural) phraseology (e.g. “Vertical Speed”) |

| Are humans made aware of what human performance aspects the AI may be monitoring or recording, e.g. speech (to detect stress or fatigue), gestures, psychophysiological parameters (EEG, skin conductance, eye movements, etc.)? | Y | Eye tracking and other measures (ECG, Galvanic Skin Response, etc.) are employed for Val 1 & 2 as inputs to detect startle. Pilots are fully briefed on all measures used in the simulations and sign a consent form. Data are de-identified from pilot details. |

| Does the AI communication mode, whether oral or audio-visual, avoid the use of emotional mimicry (i.e. mimicking human emotions)? | Y | There is no emotional mimicry employed in the FOCUS AI CONOPS |

| Is the AI clear and concise in times of high stress (e.g. emergencies)? | Y | Yes. No clarity issues identified in Val 1. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).