Submitted: 08 January 2025

Posted: 10 January 2025

Abstract

Keywords:

1. Introduction

2. Materials and Methods

- Software Development Engineer in Test (A4Q-SDET): This exam consists of 40 multiple-choice questions, each with exactly one correct answer and worth one point. The exam evaluates the technical and theoretical competencies of software development engineers in test, emphasizing the integration of effective test strategies and techniques into the software development lifecycle.

- ISTQB® Certified Tester Foundation Level (CTFL): This exam includes 40 multiple-choice questions spanning cognitive levels K1 through K3. The questions evaluate foundational knowledge of software testing principles, processes, and tools, emphasizing understanding core concepts and applying them in basic scenarios.

- ISTQB® Certified Tester Advanced Level Test Manager (CTAL-TM): This exam contains 50 multiple-choice questions, each with one or two correct answers and weighted between one and three points. The exam assesses advanced knowledge of test management, including the formulation of test strategies, leadership of testing teams, and the coordination of test-related communication with stakeholders.

- ISTQB® Certified Tester Expert Level Test Manager (CTEL-TM): This exam has two components: 20 multiple-choice questions addressing cognitive levels K2 through K4, which assess advanced analytical and managerial skills, and an essay component (K5 through K6) designed to evaluate the ability to analyze complex scenarios and create sophisticated solutions. The essay component was excluded from this study.
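The weighted, multi-answer format described for CTAL-TM can be illustrated with a minimal scoring sketch. The `Question` class, the `score_exam` function, and the sample data are hypothetical, and the all-or-nothing rule (points are awarded only when the selected options match the answer key exactly) is an assumption rather than a scoring rule stated in this study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    correct: frozenset  # option labels that form the answer key
    points: int = 1     # weight of the question


def score_exam(questions, responses):
    """Return (earned, possible) points under all-or-nothing scoring."""
    earned = 0
    possible = 0
    for question, response in zip(questions, responses):
        possible += question.points
        # Assumption: a response earns points only if it matches the key exactly.
        if frozenset(response) == question.correct:
            earned += question.points
    return earned, possible


# Hypothetical three-question exam, not taken from any real sample paper.
questions = [
    Question(frozenset({"a"}), points=1),
    Question(frozenset({"b", "d"}), points=3),
    Question(frozenset({"c"}), points=2),
]
responses = [{"a"}, {"b"}, {"c"}]

earned, possible = score_exam(questions, responses)
print(f"{earned} / {possible}")
```

In this sketch the second response selects only one of the two keyed options and therefore earns none of its three points.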

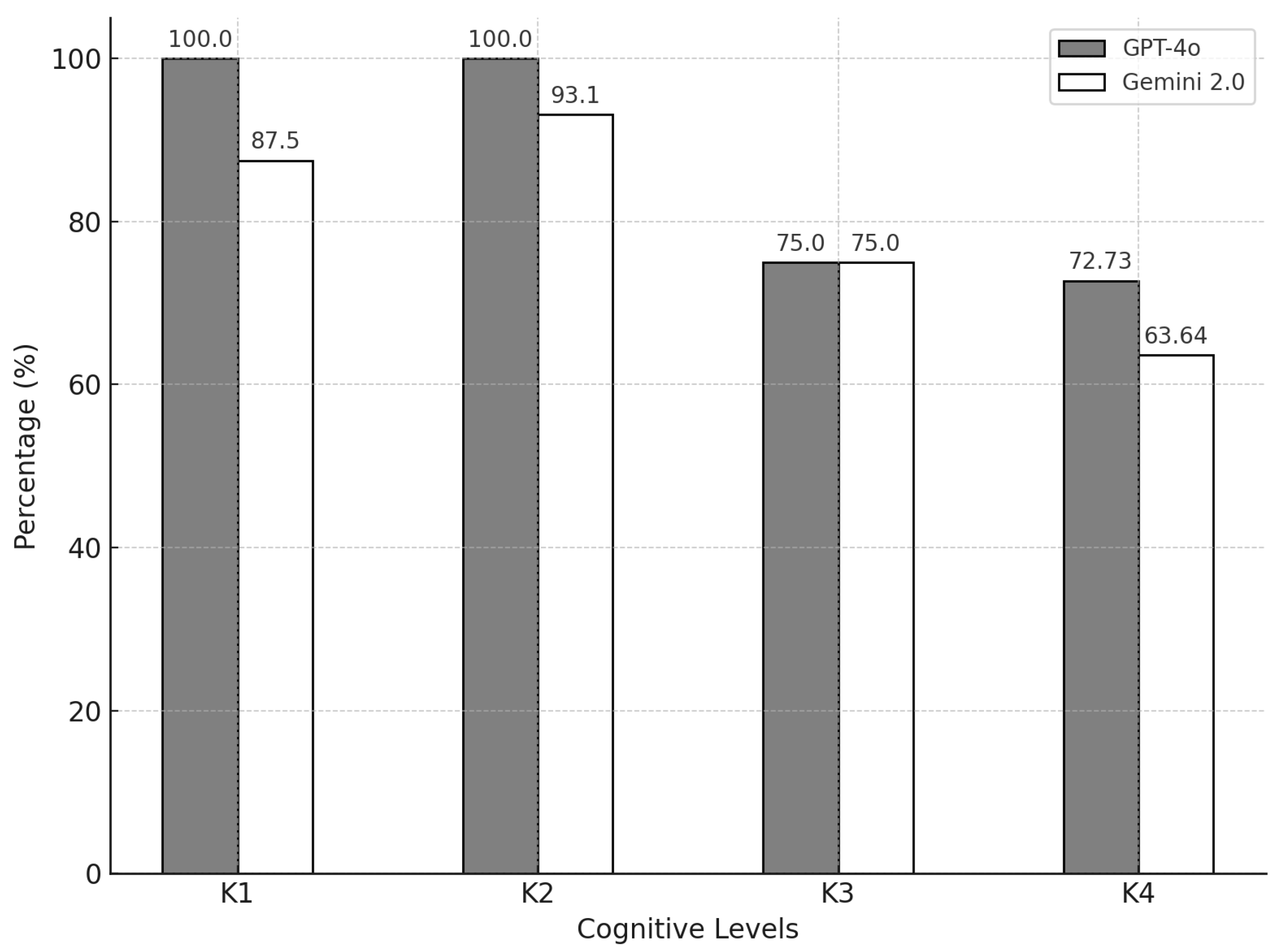

- K1 – Remember: Recognizing or recalling a term or concept.

- K2 – Understand: Explaining or interpreting a statement related to the question topic.

- K3 – Apply: Applying a concept or technique in a given context.

- K4 – Analyze: Breaking down information related to a procedure or technique and distinguishing between facts and inferences.

- K5 – Evaluate: Making judgments based on criteria, detecting inconsistencies or fallacies, and assessing the effectiveness of a process or product.

- K6 – Create: Combining elements to form a coherent or functional whole, inventing a product, or devising a procedure.
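One way these levels could be used in an evaluation is to break accuracy down by K-level. The sketch below assumes each answered question is recorded as a (K-level, correct) pair; the function name `accuracy_by_level` and the records are hypothetical and do not reproduce data from this study.

```python
from collections import defaultdict


def accuracy_by_level(records):
    """Map each K-level to the share of correctly answered questions."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for level, is_correct in records:
        totals[level] += 1
        hits[level] += int(is_correct)
    return {level: hits[level] / totals[level] for level in sorted(totals)}


# Hypothetical (K-level, answered correctly) pairs, for illustration only.
records = [("K1", True), ("K2", True), ("K2", False), ("K3", True), ("K4", False)]
print(accuracy_by_level(records))
```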

- 40 questions from A4Q-SDET,

- 40 questions from CTFL,

- 50 questions from CTAL-TM, and

- 20 questions from CTEL-TM (excluding essay questions).
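The size of the combined question set follows directly from the counts listed above. The short sketch below only restates that arithmetic; the name `QUESTION_COUNTS` is illustrative.

```python
# Multiple-choice question counts per exam, as listed above.
QUESTION_COUNTS = {
    "A4Q-SDET": 40,
    "CTFL": 40,
    "CTAL-TM": 50,
    "CTEL-TM": 20,  # essay questions excluded
}

total_questions = sum(QUESTION_COUNTS.values())
print(total_questions)
```

These are question counts, not points: because CTAL-TM questions are weighted, the results table reports that exam out of 88 points rather than 50.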

3. Results

4. Discussion

- Dynamic Real-World Testing: Evaluating model performance in live environments, including GUI testing, defect prediction, and agile workflows.

- Open-Ended Responses: Assessing the ability of LLMs to tackle essay-style questions and generate comprehensive test strategies.

- Multiple Responses: Investigating the impact of generating multiple answers per question on accuracy and consistency.

- Data and Framework Updates: Studying the effect of regularly updating LLM training data to reflect new syllabi, frameworks, and tools.

- Longitudinal Integration: Analyzing the long-term impact of LLMs on productivity and quality in software testing teams.
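The multiple-responses direction could, for example, be explored by sampling several answers per question and taking a majority vote. The following is a minimal sketch under that assumption, not a procedure used in this study; `majority_answer` and the sample answers are hypothetical.

```python
from collections import Counter


def majority_answer(samples):
    """Return the most frequent answer and the share of samples that agree with it."""
    counts = Counter(samples)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(samples)


# Hypothetical answers from five repeated runs on one question.
samples = ["b", "b", "c", "b", "a"]
answer, agreement = majority_answer(samples)
print(answer, agreement)
```

The agreement share gives a simple per-question consistency measure that can be reported alongside accuracy.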

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Exam | Model | Correct / Total | Percentage | Outcome |
|---|---|---|---|---|
| A4Q-SDET | GPT-4o | 33 / 40 | 82.50% | Passed |
| A4Q-SDET | Gemini 2.0 Flash Experimental | 37 / 40 | 92.50% | Passed |
| CTFL | GPT-4o | 38 / 40 | 95.00% | Passed |
| CTFL | Gemini 2.0 Flash Experimental | 34 / 40 | 85.00% | Passed |
| CTAL-TM | GPT-4o | 76 / 88 | 86.36% | Passed |
| CTAL-TM | Gemini 2.0 Flash Experimental | 70 / 88 | 79.55% | Passed |
| CTEL-TM | GPT-4o | 16 / 20 | 80.00% | Passed |
| CTEL-TM | Gemini 2.0 Flash Experimental | 16 / 20 | 80.00% | Passed |
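The percentage column can be recomputed from the score and total columns. The sketch below copies the scores from the table and rounds to two decimals; the names `RESULTS` and `percentage` are illustrative.

```python
# (exam, model) -> (score, total), copied from the table above.
RESULTS = {
    ("A4Q-SDET", "GPT-4o"): (33, 40),
    ("A4Q-SDET", "Gemini 2.0 Flash Experimental"): (37, 40),
    ("CTFL", "GPT-4o"): (38, 40),
    ("CTFL", "Gemini 2.0 Flash Experimental"): (34, 40),
    ("CTAL-TM", "GPT-4o"): (76, 88),
    ("CTAL-TM", "Gemini 2.0 Flash Experimental"): (70, 88),
    ("CTEL-TM", "GPT-4o"): (16, 20),
    ("CTEL-TM", "Gemini 2.0 Flash Experimental"): (16, 20),
}


def percentage(score, total):
    """Score as a percentage of the total, rounded to two decimals."""
    return round(100 * score / total, 2)


for (exam, model), (score, total) in RESULTS.items():
    print(f"{exam:9} {model:30} {percentage(score, total):.2f}%")
```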

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).