4. System Architecture and Design

This section describes the overall structure of the system used to model and assess financial risk with a denoising diffusion probabilistic model (DDPM). The system comprises several key components, from data ingestion through model training and scenario generation to risk evaluation.

4.1. Workflow Overview

The system has a linear pipeline architecture:

Data Acquisition: Historical price data for AAPL, GOOG, and AMZN is retrieved from a structured CSV file.

Preprocessing: Prices are transformed into daily returns and normalized using standard scaling.

Training: A denoising diffusion model based on GRU layers is trained to learn how to denoise return sequences.

Sampling: Once trained, the model is used to generate new return paths by reversing the noising process.

Risk Analysis: The generated paths are used to estimate the Conditional Value-at-Risk (CVaR), and the estimates are compared with those of classical methods.

This arrangement enables the generation of realistic data-based scenarios, which can be utilized for enhanced risk assessment.

4.2. Data Analysis

The data were taken from a dataset of S&P 500 asset prices published on the open platform Kaggle. Three companies were chosen whose returns are highly correlated with one another and which faced similar problems in crisis periods such as COVID-19.

4.3. Return Calculation and Data Cleaning

Asset returns were calculated using this formula:

$$r_t = \frac{P_t - P_{t-1}}{P_{t-1}}$$

where $r_t$ is the return at time $t$ and $P_t$ is the adjusted closing price at time $t$.

The data were cleaned using the dropna function, which removes all empty or NaN values.
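The return calculation and cleaning step can be sketched as follows. This is a minimal NumPy illustration, not the study's code: it assumes simple (percentage-change) returns, drops rows after the returns are computed, and uses made-up prices. The name `daily_returns` is hypothetical.

```python
import numpy as np

def daily_returns(prices):
    """Simple returns r_t = (P_t - P_{t-1}) / P_{t-1}, one column per asset."""
    prices = np.asarray(prices, dtype=float)
    returns = prices[1:] / prices[:-1] - 1.0
    # Drop every row that contains a NaN, mirroring a row-wise dropna.
    return returns[~np.isnan(returns).any(axis=1)]

# Hypothetical adjusted closing prices (rows = days, columns = assets).
prices = np.array([
    [100.0, 50.0],
    [110.0, 55.0],
    [np.nan, 66.0],   # a missing price affects the two returns that use it
    [121.0, 66.0],
])

returns = daily_returns(prices)
print(returns)
```

Note that a single missing price removes two return rows here, because both the return into and the return out of the missing day are undefined.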

4.4. Analysing Dataset

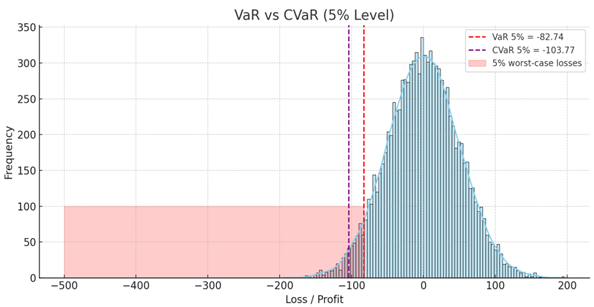

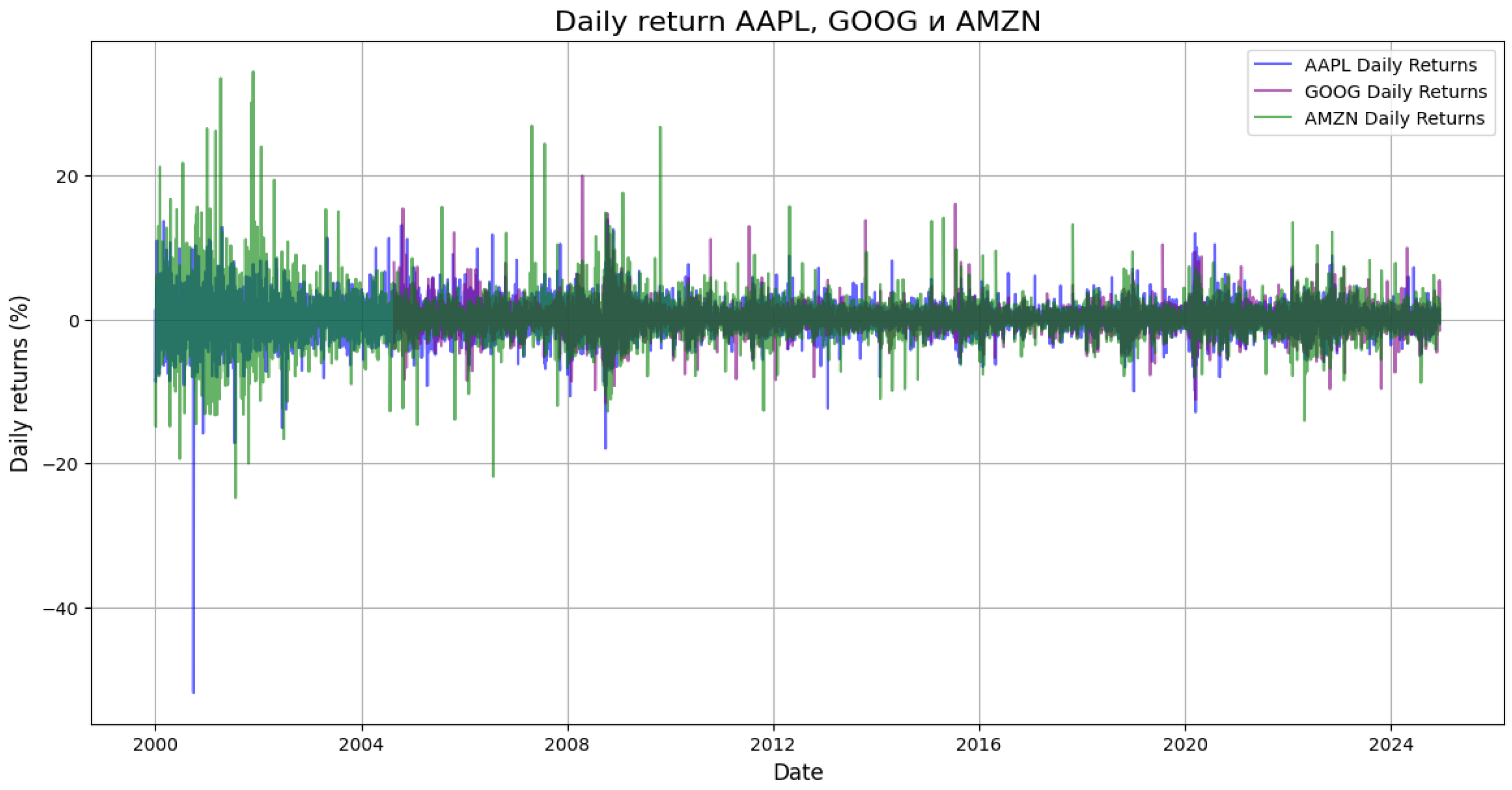

Before developing the models, the dataset was examined closely to identify overall trends in the selected stocks: AAPL, GOOG, and AMZN. As an initial step, I calculated daily returns from the adjusted closing prices. This transforms raw price data into percentage changes over time, which are better suited to model development and risk assessment.

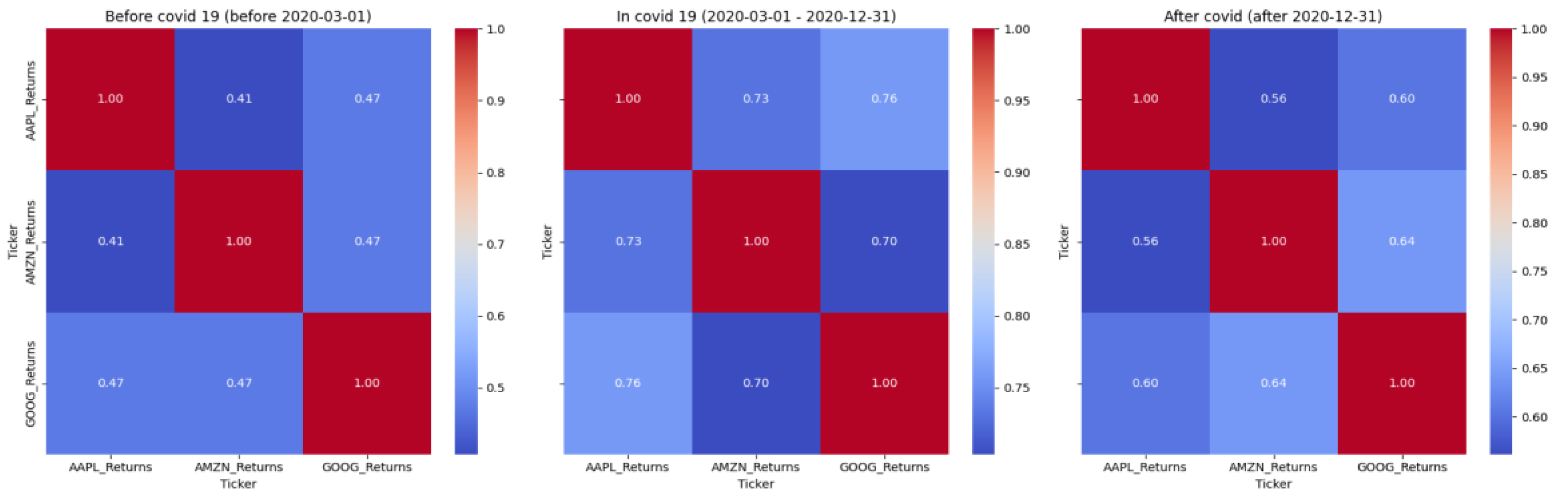

To investigate the interdependencies between the assets, I estimated the correlation matrix of the daily returns. This made it possible to analyze the extent to which the stocks move together under calm market conditions and in times of market volatility. The findings indicated that, although the assets are generally correlated, the strength of the correlation varies across periods, most notably in the period before the COVID-19 market crash.
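A period-by-period correlation comparison of this kind might look like the sketch below. The data are synthetic, and the regime boundaries in `periods` are hypothetical row indices rather than the dates used in the study; the middle block is deliberately made more market-driven to mimic a crisis regime.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for daily returns of three assets (not the study's data).
n_days = 600
common = rng.normal(0.0, 0.01, size=(n_days, 1))   # shared market factor
idio = rng.normal(0.0, 0.01, size=(n_days, 3))     # asset-specific noise
returns = common + idio
# Strengthen the shared factor in the middle block to mimic a crisis.
returns[200:400] = 3.0 * common[200:400] + idio[200:400]

# Hypothetical regime boundaries, expressed as row slices.
periods = {
    "pre": slice(0, 200),
    "crisis": slice(200, 400),
    "post": slice(400, 600),
}

# One 3x3 correlation matrix per period (columns are treated as variables).
corr_by_period = {
    name: np.corrcoef(returns[rows], rowvar=False)
    for name, rows in periods.items()
}

for name, corr in corr_by_period.items():
    print(name)
    print(np.round(corr, 2))
```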

I also compared the return distributions of the three stocks. Histograms and KDE plots such as the ones shown above indicated that the returns are not normally distributed and are fat-tailed, a common property of financial returns that justifies the use of generative models such as diffusion-based methods.

This initial analysis provided the foundation for the modeling stage and informed the architectural choices made during the model's design.

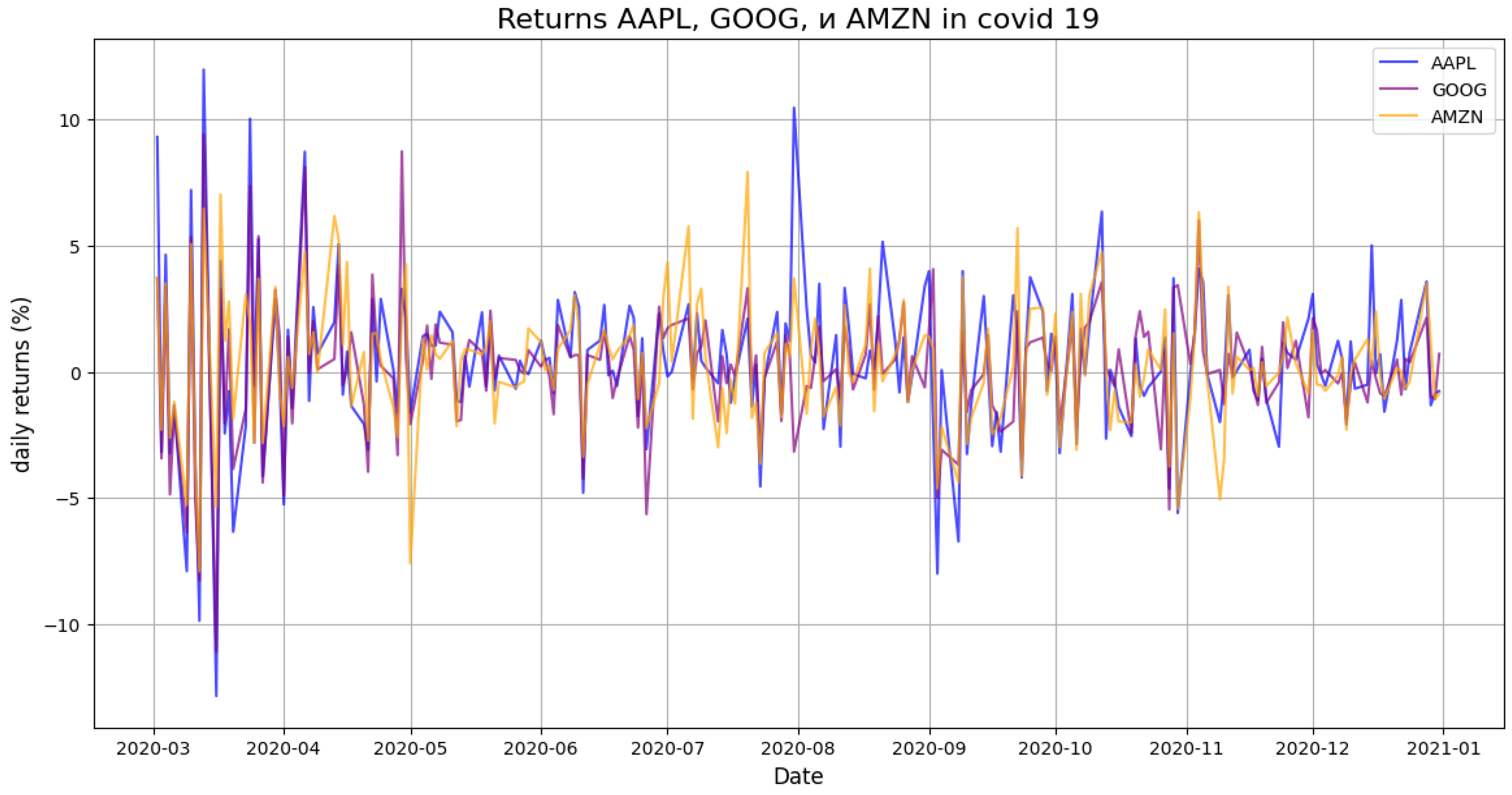

Figure 1.

Daily returns of Apple, Google and Amazon.

Figure 2.

Correlation Matrix of Daily Returns.

In order to better understand the data structure and identify the characteristics of asset behavior in different periods, I conducted an analysis of daily returns and correlations between AAPL, GOOG and AMZN stocks. The division into three key stages — before the pandemic, during COVID-19, and after — made it possible to track how the relationship between assets changed in the face of market instability.

Plots of returns and correlations helped to visualize:

periods of high volatility (for example, in 2020),

a dramatic increase in synchronicity between assets during the crisis,

a return to more fragmented dynamics after it.

This analysis confirmed that the market does not behave the same way over time and that the statistical properties of assets depend on the regime. It was therefore important to use a model that could account for instability, nonlinear dependencies, and the fat tails of the return distribution. The diffusion-based generative approach, on which the main model in this study is based, meets precisely these requirements.

Figure 3.

Returns of Apple, Google and Amazon during the COVID-19 period.

Figure 4.

Correlation matrix during the COVID-19 period.

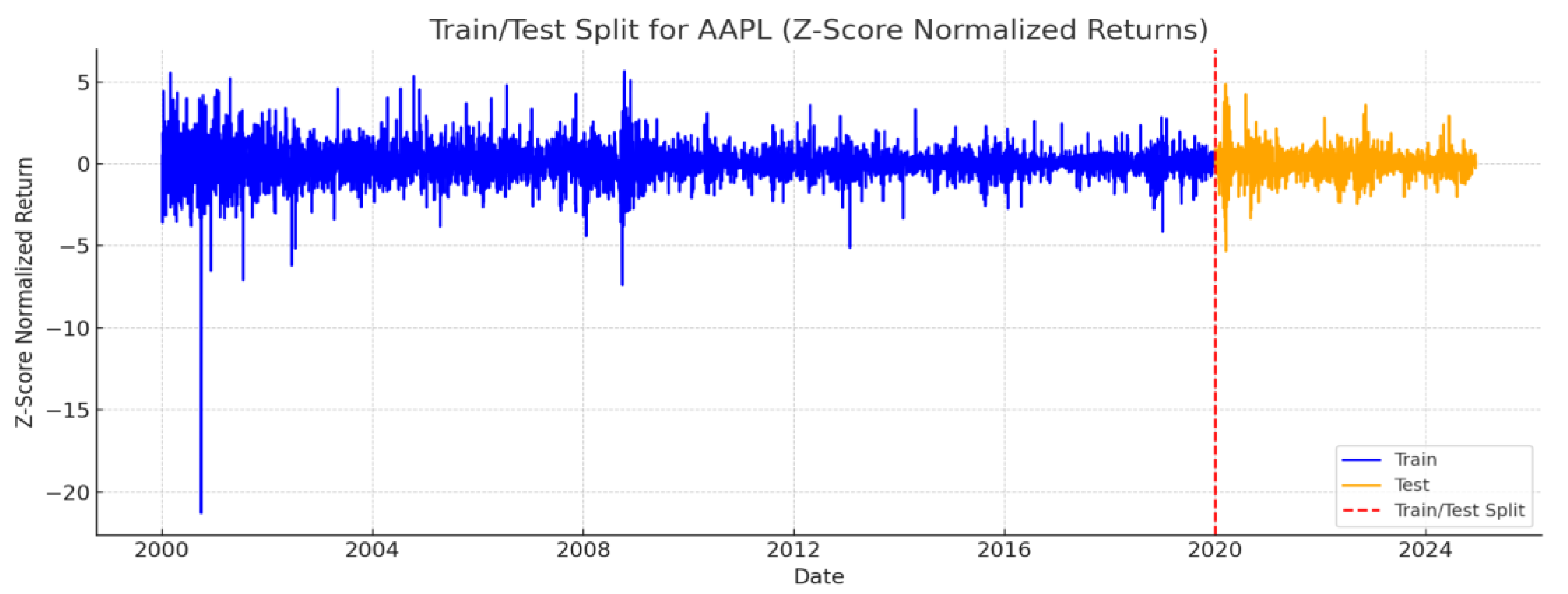

Figure 5.

Split into training and test sets.

4.5. Data Normalization

Returns of different companies behave differently: some are more volatile, while others change smoothly. To avoid skewing the model towards "noisy" assets during training, I brought the data to a single scale. Three normalization methods were used for this:

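Z-score: $z = \dfrac{x - \mu}{\sigma}$;

Min-Max scaling: $x' = \dfrac{x - x_{\min}}{x_{\max} - x_{\min}}$;

Logarithmic transformation: $x' = \ln(1 + x)$;

where $x$ is a daily return, and $\mu$, $\sigma$, $x_{\min}$ and $x_{\max}$ are the mean, standard deviation, minimum and maximum of that asset's returns.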
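The three normalization methods can be sketched in NumPy as below. The function names and sample values are illustrative, and the logarithmic transformation is assumed to be $\ln(1 + x)$, which turns a simple return into a log return; the source does not state the exact form used.

```python
import numpy as np

def z_score(x):
    """Standardize each column to zero mean and unit standard deviation."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean(axis=0)) / x.std(axis=0)

def min_max(x):
    """Rescale each column linearly to the interval [0, 1]."""
    x = np.asarray(x, dtype=float)
    lo, hi = x.min(axis=0), x.max(axis=0)
    return (x - lo) / (hi - lo)

def log_transform(x):
    """Assumed form ln(1 + x); only defined for returns greater than -1."""
    return np.log1p(np.asarray(x, dtype=float))

# Hypothetical daily returns (rows = days, columns = assets).
returns = np.array([
    [0.01, -0.02],
    [0.03, 0.00],
    [-0.02, 0.04],
    [0.00, 0.01],
])

z = z_score(returns)
mm = min_max(returns)
lg = log_transform(returns)
```

In practice the statistics (mean, standard deviation, minimum, maximum) would normally be computed on the training set only and then reused for the test set, so that no test information leaks into training.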

4.6. Model Training

To test how well our diffusion-based model works, we trained it on daily returns that had been standardized beforehand. Training ran for 300 epochs (full passes through the data) with the Adam optimizer, which adjusts the weights step by step based on the prediction error. The objective was to minimize the squared error between the noise added to the data and the noise predicted by the model.

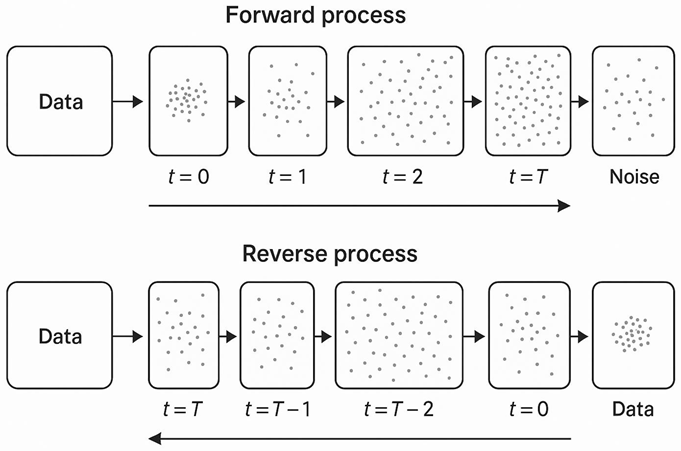

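Forward process (adding noise):

$$q(x_t \mid x_{t-1}) = \mathcal{N}\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t I\right)$$

Reverse process (generation):

$$p_\theta(x_{t-1} \mid x_t) = \mathcal{N}\left(x_{t-1};\ \mu_\theta(x_t, t),\ \Sigma_\theta(x_t, t)\right)$$

where $\beta_t$ is the noise level at step $t$ given by the noise schedule and $\theta$ denotes the model parameters.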

During the training, we used a specific schedule to control the amount of noise added at each step. When it was time to generate new samples, the model reversed this noise process step by step, creating data that should look similar to real returns. We also applied constraints to keep the generated values from going into extreme ranges.
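The forward noising, the noise-prediction loss, and one reverse step can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the study's implementation: the number of steps `T`, the linear schedule for `betas`, the reverse-step variance, and the clipping bound `clip` are all hypothetical, and the GRU-based network is replaced by an `eps_pred` argument that a trained model would supply.

```python
import numpy as np

rng = np.random.default_rng(0)

T = 100                                  # number of diffusion steps (assumed)
betas = np.linspace(1e-4, 0.02, T)       # linear noise schedule (assumed)
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)          # cumulative products of alphas

def q_sample(x0, t, eps):
    """Forward process in closed form: noise x0 directly to step t."""
    return np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps

def noise_mse(eps, eps_pred):
    """Training objective: squared error between true and predicted noise."""
    return float(np.mean((eps - eps_pred) ** 2))

def p_sample_step(x_t, t, eps_pred, clip=5.0):
    """One reverse step from t to t-1, given the predicted noise.

    Uses variance beta_t (one common choice) and clips the result to
    [-clip, clip] to keep generated values out of extreme ranges.
    """
    coef = betas[t] / np.sqrt(1.0 - alpha_bars[t])
    mean = (x_t - coef * eps_pred) / np.sqrt(alphas[t])
    # No fresh noise is added on the final step (t == 0).
    noise = rng.standard_normal(x_t.shape) if t > 0 else 0.0
    return np.clip(mean + np.sqrt(betas[t]) * noise, -clip, clip)

# Hypothetical standardized return window: 30 days, 3 assets.
x0 = rng.standard_normal((30, 3))
eps = rng.standard_normal(x0.shape)

x_T = q_sample(x0, T - 1, eps)           # heavily noised sample
x_1 = q_sample(x0, 0, eps)               # lightly noised sample
x0_rec = p_sample_step(x_1, 0, eps)      # exact noise recovers x0
```

With the exact noise supplied, the final reverse step returns the original sample, which is a quick sanity check on the schedule algebra. During generation the loop would start from pure Gaussian noise and call the reverse step for `t = T-1, ..., 0` with the network's noise prediction.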

To see how our model compares, we built and trained three other models:

A simple multilayer perceptron (MLP) that tries to learn the data directly,

A variational autoencoder (VAE) that learns a compressed version of the data and tries to reconstruct it,

A generative adversarial network (GAN) that trains two models to compete — one generates data, and the other tries to detect if it’s real or not.

In addition to distributional metrics, we also evaluated how close the predicted values were to the real ones using traditional regression scores. Specifically, we used mean squared error (MSE) to measure the average prediction error, and R² to check how much of the variability in the target values the model was able to explain. These metrics are especially useful when comparing direct predictors such as MLP or VAE to our DDPM, even though the latter is not trained as a point estimator.

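Mean Squared Error (MSE):

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2$$

R-Squared ($R^2$):

$$R^2 = 1 - \frac{\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}{\sum_{i=1}^{n}\left(y_i - \bar{y}\right)^2}$$

where $y_i$ are the actual values, $\hat{y}_i$ the predicted values, $\bar{y}$ the mean of the actual values, and $n$ the number of observations.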
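As a small worked example of the two regression scores, the sketch below uses their standard definitions on made-up values; the function names are illustrative.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean of the squared differences between actual and predicted values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

def r_squared(y_true, y_pred):
    """1 minus the ratio of residual to total sum of squares."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

# Toy values: three exact predictions and one that is off by 1.
y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred = np.array([1.0, 2.0, 3.0, 5.0])

print(mse(y_true, y_pred))        # 0.25
print(r_squared(y_true, y_pred))  # 0.8
```

Here the single error of 1 gives a residual sum of squares of 1, so the MSE is 1/4, and with a total sum of squares of 5 the R² is 1 - 1/5.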

Our diffusion model showed stronger performance than these baselines. It generated samples that were statistically closer to the real data, and its estimate of tail risk was more accurate than those of the other methods.

4.7. Results

In this part of the research, we present the results of the CVaR calculation using a Denoising Diffusion Probabilistic Model (DDPM). The model output was compared with two conventional methods: Historical Simulation and Monte Carlo Simulation. Because processing the full S&P 500 dataset exceeds the capacity of ordinary computing hardware, the study was limited to a representative sample of three of the index's most dominant stocks: Apple (AAPL), Amazon (AMZN), and Google (GOOGL). These firms were selected for their high liquidity and their significance to the index, which offers a sufficient basis for assessing model performance without reducing the generalizability of the overall results.

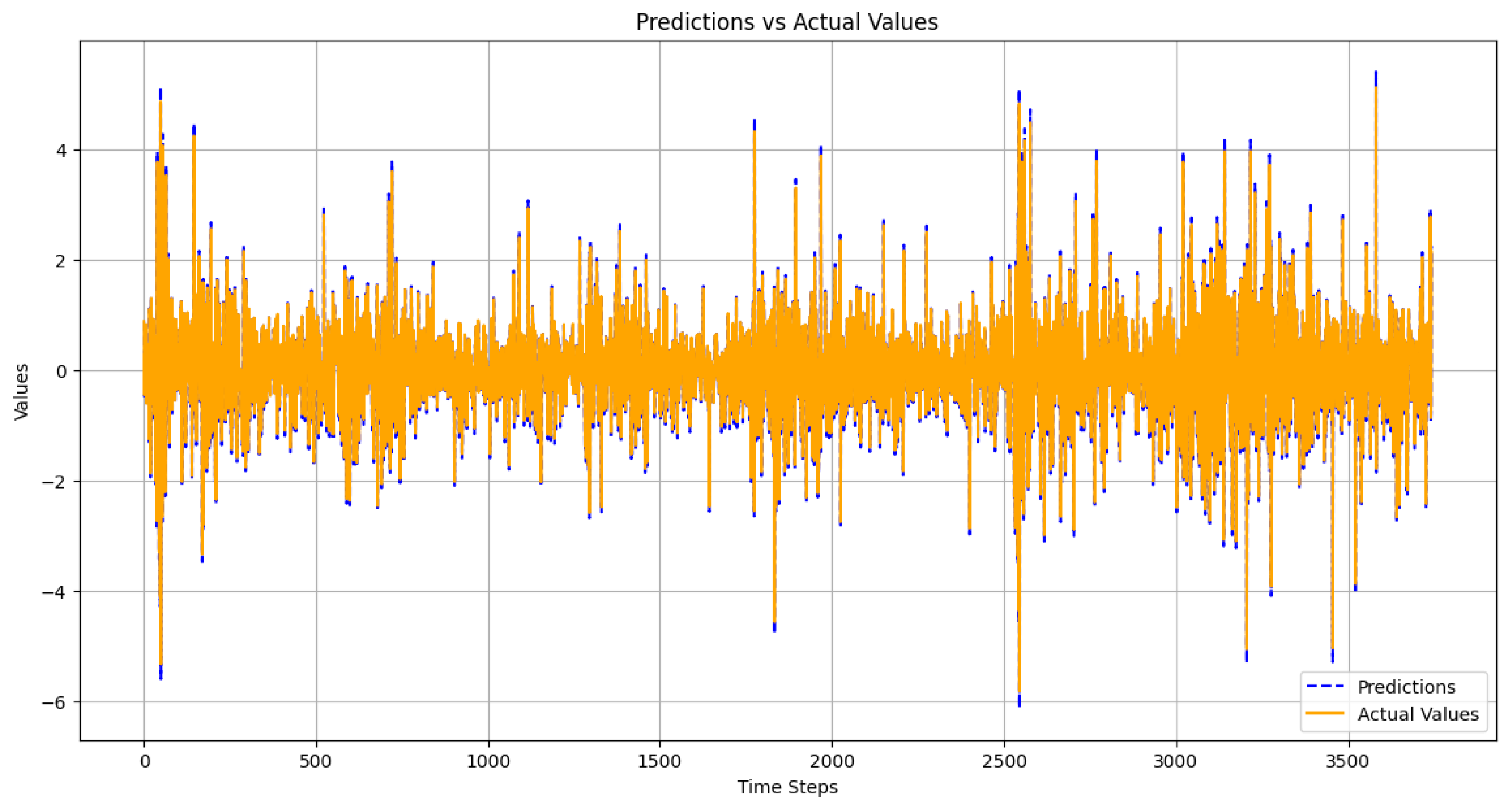

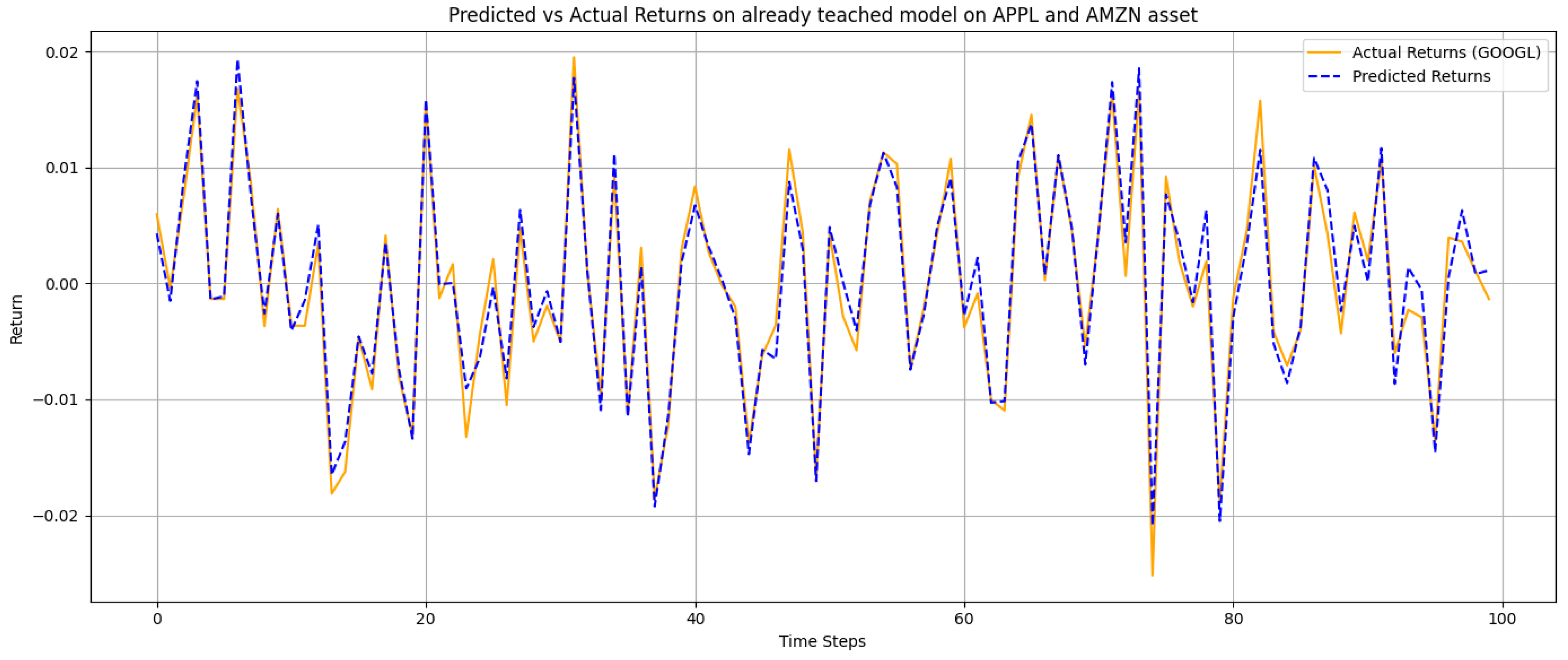

Figure 6.

Predicted vs actual returns. The model closely follows real data and reflects major fluctuations, which supports its use for risk analysis.

This plot compares the values forecast by the diffusion model with the actual return history over time. The forecasts track the actual movements closely for most of the series. The model captures both the overall trend and short-run fluctuations, although it occasionally misses some of the more extreme spikes. Overall, this alignment suggests that the model learns the underlying data structure and replicates it well, which is important for assessing risk in volatile conditions.

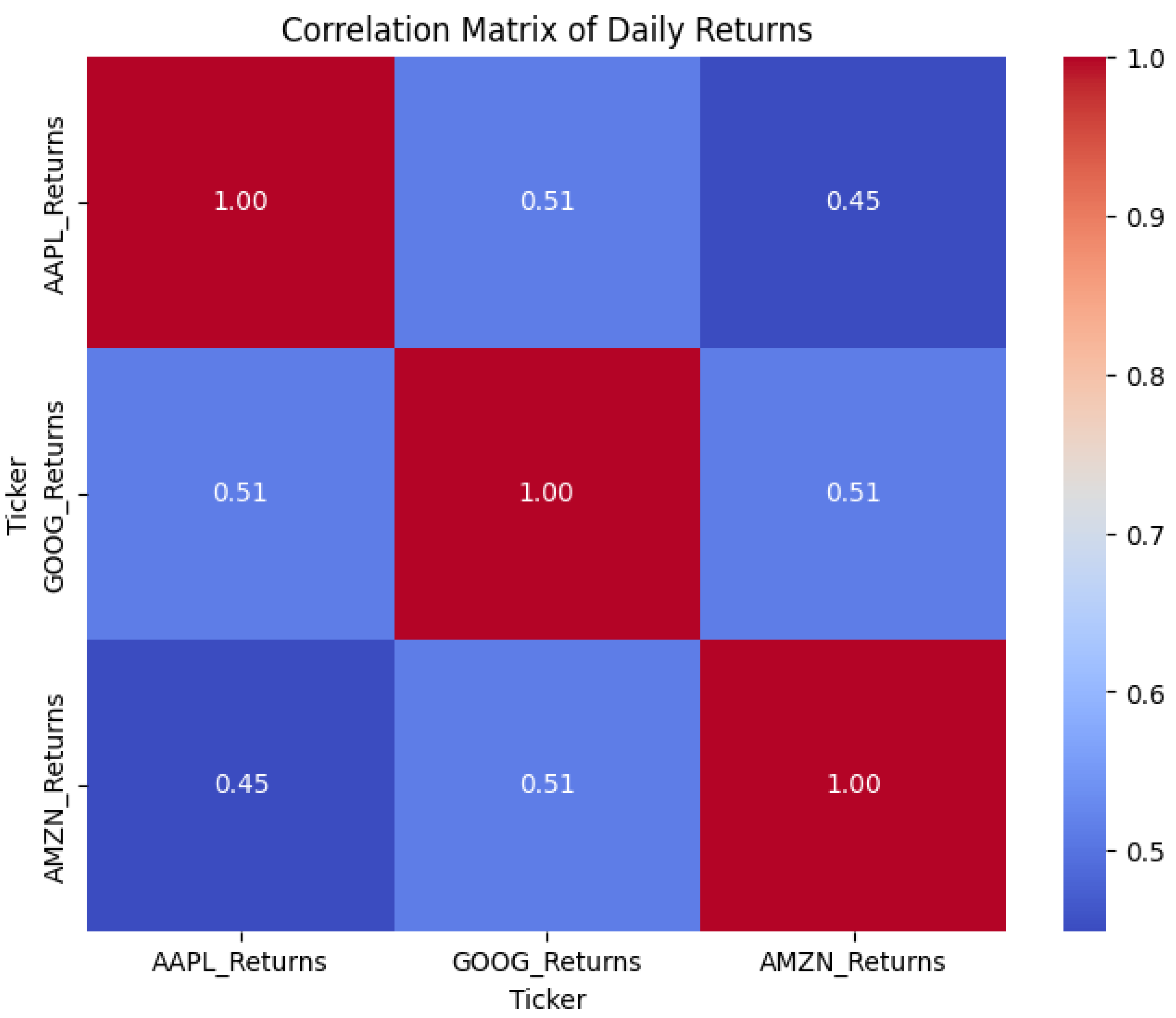

The estimation was conducted on daily return data, with a focus on the 5% tail of the distribution. Table 1 shows that the CVaR estimated by the DDPM model differs from the true test CVaR by only -0.20, while the conventional methods had larger positive deviations of between 0.27 and 0.29. This indicates that the generative method provides a more precise and realistic estimate of tail risk.
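For reference, a 5% VaR and CVaR can be computed from any sample of returns as in the sketch below. The data are synthetic, the sign convention (VaR and CVaR reported as negative returns) is an assumption, and the Monte Carlo variant shown, which samples from a normal distribution fitted to the history, is only one common choice and may differ from the one used in this study.

```python
import numpy as np

def var_cvar(returns, alpha=0.05):
    """VaR is the alpha-quantile of returns; CVaR is the mean return at or below it."""
    returns = np.asarray(returns, dtype=float)
    var = np.quantile(returns, alpha)
    cvar = returns[returns <= var].mean()
    return float(var), float(cvar)

rng = np.random.default_rng(0)

# Synthetic fat-tailed "historical" returns (Student-t), not the study's data.
hist = 0.01 * rng.standard_t(df=3, size=5000)

# Historical Simulation: use the empirical sample directly.
hist_var, hist_cvar = var_cvar(hist)

# Monte Carlo: simulate from a normal distribution fitted to the sample.
mc = rng.normal(hist.mean(), hist.std(), size=100_000)
mc_var, mc_cvar = var_cvar(mc)

print(f"Historical  VaR={hist_var:.4f}  CVaR={hist_cvar:.4f}")
print(f"Monte Carlo VaR={mc_var:.4f}  CVaR={mc_cvar:.4f}")
```

A DDPM-based estimate would apply the same `var_cvar` function to the returns generated by the trained model instead of to `hist` or `mc`.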

Besides the quantitative comparison of CVaR values, I also examined the shape of the return distributions produced by each approach. The aim was to see how each approach handles large negative returns, which directly affect the accuracy of CVaR estimation. Figure 7 illustrates how closely the distribution of the DDPM model aligns with the test data, particularly in the lower tail, where the largest losses occur. By contrast, both the Historical Simulation and Monte Carlo approaches appear to dampen the tail, which can lead to underestimation of losses. This visual discrepancy is consistent with the results above and illustrates the value of incorporating generative models in risk measurement.

Figure 7.

Comparison of return distributions and risk thresholds (VaR and CVaR at 5%) across three methods: Historical Simulation, Monte Carlo, and the proposed DDPM-based model. The DDPM distribution demonstrates closer alignment with the real test data in the lower tail region, indicating more accurate modeling of extreme losses.

To assess the predictive performance of the Denoising Diffusion Probabilistic Model (DDPM), 5-fold cross-validation was performed on the training data. The model showed low error and high explanatory power in every fold: the Mean Squared Error (MSE) ranged from 0.0009 to 0.0027, and the coefficient of determination (R²) exceeded 0.997 in all folds. Averaged across folds, the MSE was 0.0016 and R² was 0.9984, indicating a close fit between observed and predicted values.
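The mechanics of a 5-fold evaluation can be sketched as below. This is an illustration only: the folds are contiguous blocks (the source does not say how the folds were formed), the data are synthetic, and an ordinary least-squares fit (`linear_fit_predict`, a hypothetical stand-in) takes the place of the DDPM.

```python
import numpy as np

def k_fold_indices(n_samples, k=5):
    """Yield (train_idx, test_idx) pairs using k contiguous folds."""
    folds = np.array_split(np.arange(n_samples), k)
    for i in range(k):
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train_idx, folds[i]

def cross_validate(x, y, fit_predict, k=5):
    """Return a list of (MSE, R^2) pairs, one per held-out fold."""
    scores = []
    for train_idx, test_idx in k_fold_indices(len(x), k):
        y_pred = fit_predict(x[train_idx], y[train_idx], x[test_idx])
        resid = y[test_idx] - y_pred
        ss_res = np.sum(resid ** 2)
        ss_tot = np.sum((y[test_idx] - y[test_idx].mean()) ** 2)
        scores.append((float(np.mean(resid ** 2)), float(1.0 - ss_res / ss_tot)))
    return scores

def linear_fit_predict(x_train, y_train, x_test):
    """Stand-in model: ordinary least squares without an intercept."""
    coef, *_ = np.linalg.lstsq(x_train, y_train, rcond=None)
    return x_test @ coef

# Synthetic regression data (not the study's data).
rng = np.random.default_rng(1)
x = rng.standard_normal((200, 3))
y = x @ np.array([0.5, -0.2, 0.1]) + 0.01 * rng.standard_normal(200)

scores = cross_validate(x, y, linear_fit_predict)
mean_mse = float(np.mean([s[0] for s in scores]))
mean_r2 = float(np.mean([s[1] for s in scores]))
```

For time-ordered return data, contiguous or forward-chaining folds are usually preferable to shuffled ones, since shuffling lets the model see observations from after the test period.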

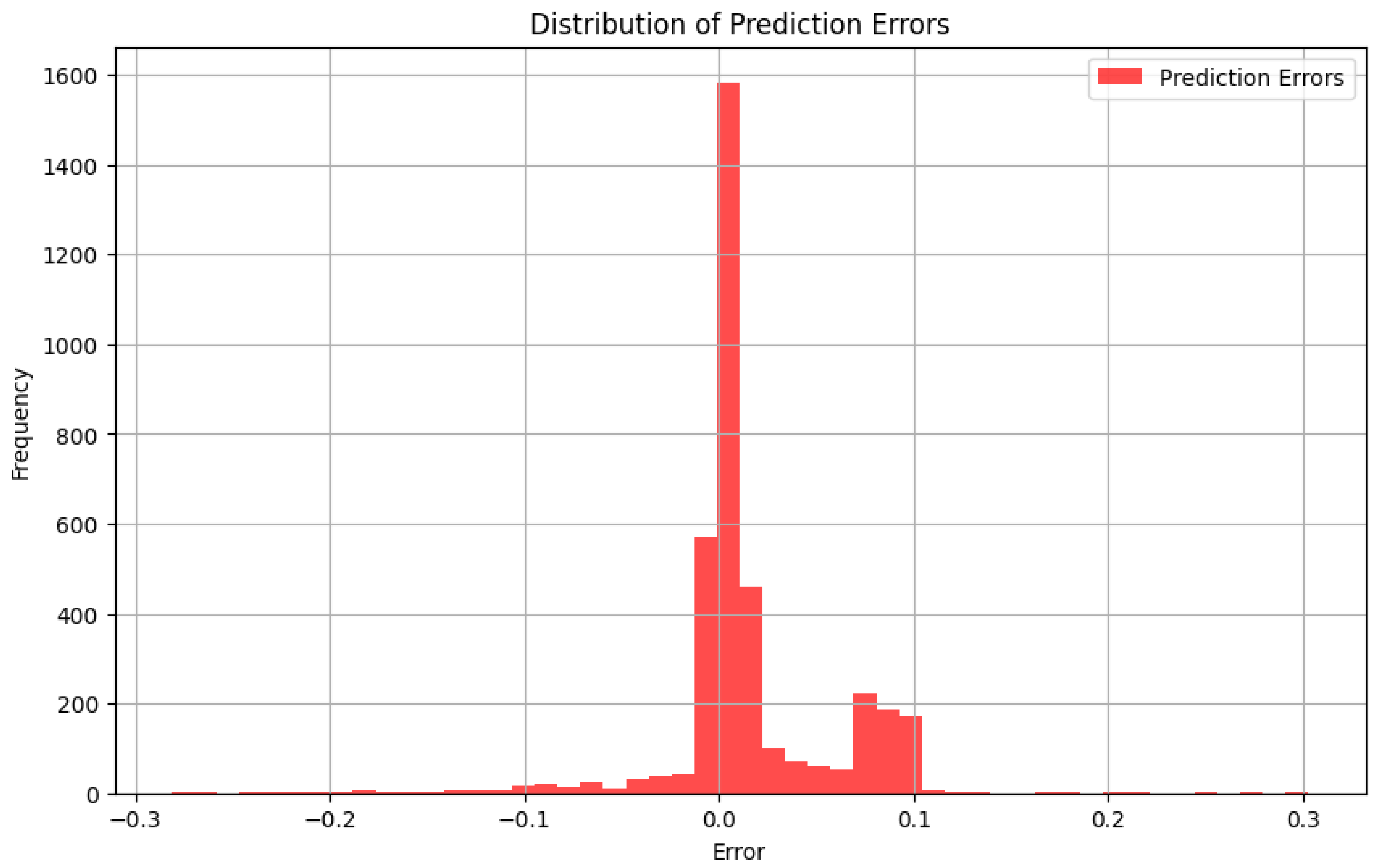

To better assess the quality of the DDPM model's predictions, we analyzed the distribution of prediction errors, defined as the difference between the predicted and actual return values. A well-performing model should have errors concentrated around zero with low dispersion. The histogram below shows the distribution of prediction errors over the whole test set.

Figure 8.

Error histogram of predictions. The majority of the errors lie near zero, indicating that the model makes unbiased predictions with reasonably low variance.

To check the model's ability to generalize, I trained it on return data for Apple (AAPL) and Amazon (AMZN) and tested it on an unseen stock, Google (GOOGL). The aim was to check whether the model could handle a new asset to which it had not been exposed during training. The predicted returns for GOOGL were compared with the actual returns over the same period. Although the asset was not in the training set, the predictions followed the actual data closely, with a very low mean squared error and a very high R² score. This indicates that the model can generalize patterns learned from AAPL and AMZN to another asset with similar market behavior.

Figure 9.

Predicted vs actual returns for GOOGL. The model was trained only on AAPL and AMZN. Despite having no prior exposure to GOOGL, the predictions track the actual values closely, demonstrating the model's ability to generalize across similar financial assets.

Figure 10.

Illustration of the DDPM training pipeline. The original image is gradually corrupted with noise through multiple stages, producing an intermediate noisy sample $z_t$. A U-Net model is then trained to reverse this process by predicting the clean signal or the noise component, enabling recovery of the original image through denoising.
