1. Introduction

Some diseases are closely linked to the types of activities patients engage in when specific symptoms appear. For example, patients with chronic obstructive pulmonary disease (COPD) may experience breathlessness during daily activities, such as brushing teeth, eating, or ascending or descending stairs [1]. COPD symptoms reduce patients' physical activity levels and can negatively affect sleep quality [2]. The severity of COPD is assessed using various methods, including spirometry [3], the COPD assessment test (CAT) [4], and functional tests such as the 6-min walk test (6 MWT). The 6 MWT plays a crucial role in evaluating functional exercise capacity: patients walk back and forth as quickly and as far as possible over a 30-meter straight path for six minutes. However, this test can be challenging for patients with COPD who have reduced respiratory capacity [5]. Furthermore, verbal prompts from healthcare professionals may influence the results, raising concerns about objectivity [6].

Similarly, patients with arrhythmia may experience symptoms while performing certain movements. To diagnose arrhythmia, patients may undergo Holter electrocardiogram (ECG) monitoring. During this test, patients record their activities on a log sheet whenever they experience symptoms such as chest pain, palpitations, or shortness of breath [7] (p. 7). Patients must continuously document their activities while wearing the Holter ECG for 24 to 48 hours, which can be burdensome. Additionally, because the recordings are made by the patients themselves, the results are subjective. Furthermore, as doctors compare the ECG with the log sheet for diagnosis, any omission in the patient's recordings could reduce diagnostic accuracy.

In recent years, studies have increasingly focused on automatically recognizing individual activities using accelerometers [8,9,10,11,12]. By utilizing these findings, the relationship between symptom onset and activity could be better understood, potentially addressing the aforementioned issues and enabling more straightforward and objective assessments of severity and diagnoses. One study recognized various activities, such as standing, sitting, lying, and walking, by using a dual-axis accelerometer attached to the patient’s thigh, combined with a position sensor located at the waist, to monitor activity during ambulatory ECG monitoring [13].

In research settings, more comprehensive and high-resolution data are utilized to recognize activities with greater precision [14,15]. For example, modern 9-axis inertial measurement units (IMUs) combine accelerometers, gyroscopes, and magnetometers to acquire acceleration, angular velocity, and geomagnetic data at high sampling rates. Acceleration refers to the rate of change in an object’s velocity per unit time, reflecting its tilt, vibration, and movement. Angular velocity represents the rate of rotation of an object, indicating its rotational motion. Geomagnetism, referring to Earth's magnetic field, provides information on the direction and position of an object. While some high-precision IMUs are larger than typical wearable devices, they offer the advantage of simultaneously acquiring data from multiple sensors at higher sampling rates. These devices are commercially available and widely used in research settings [16].

However, in clinical settings, reducing data volume is preferred for several reasons:

Faster processing and efficient transmission: Reduced data can be transmitted and received more quickly, even at the same communication speed. This allows more information to be exchanged within the same bandwidth, alleviating network congestion, and improving overall communication performance.

Lower power consumption: Reducing data volume minimizes power requirements for data acquisition and processing. This can simplify cooling systems and enable the use of smaller batteries while maintaining the same operating times.

Extended data acquisition periods: Portable accelerometers have limited data storage capacity. Reducing the data volume allows for longer continuous measurement, enabling extended patient monitoring on a single charge.

Device miniaturization: A smaller data volume reduces the need for large storage capacity, allowing for smaller batteries and potential device miniaturization, which is crucial for patient comfort during long-term use.

Cost-effectiveness: Reducing data volume decreases the required storage capacity and processing power, potentially lowering device costs.

These benefits make data reduction strategies particularly valuable in clinical applications requiring long-term continuous monitoring.
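To give a sense of scale for these benefits, the following rough sketch estimates raw data volume for different numbers of axes. It assumes a 100 Hz sampling rate (the rate used later in this study), a 24-hour recording (the shorter Holter duration mentioned above), and 2 bytes per sample per axis. The 2-byte sample size and the function name `raw_bytes` are illustrative assumptions, not values reported for any particular device.

```python
def raw_bytes(n_axes, hz=100, hours=24, bytes_per_sample=2):
    """Raw storage for n_axes channels.

    bytes_per_sample=2 is an assumed value for illustration only;
    real devices may use a different sample size or compression.
    """
    seconds = hours * 3600
    return n_axes * hz * seconds * bytes_per_sample


for n_axes in (9, 6, 3):
    megabytes = raw_bytes(n_axes) / 1e6
    print(f"{n_axes} axes: {megabytes:.1f} MB per 24 h")
```

Under these assumptions, raw volume scales linearly with the number of axes, so a 3-axis recording needs one third of the storage and transmission capacity of a 9-axis recording.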

Recent studies have investigated the number and combination of axes used in accelerometers and their accuracy in activity recognition [17,18]. However, to the best of our knowledge, there has been limited research in clinical contexts, particularly regarding activities related to breathlessness in patients with COPD or those recorded on Holter ECG log sheets. This gap presents an opportunity for further investigation into activity recognition optimization in specific clinical settings.

By acquiring and processing the minimum necessary data using wearable devices, we aimed to achieve a simple and objective severity assessment of COPD and the diagnosis of arrhythmia via Holter ECG. Our previous research [19] demonstrated that data from 9-axis IMUs worn on either the non-dominant wrist or chest can recognize activities with high accuracy using a single attachment position. Therefore, in this study, we aimed to determine how many axes could be reduced from the 9-axis data while maintaining high accuracy in recognizing activities that cause breathlessness in patients with COPD or activities listed in Holter ECG log sheets using accelerometer data from either the non-dominant wrist or chest. Additionally, we aimed to clarify the relationship between acceleration, angular velocity, and geomagnetism, and the types of activities recognized.

2. Materials and Methods

2.1. Participants

A total of 30 healthy individuals (17 females, 13 males) were recruited for this study. All participants provided written informed consent prior to their involvement. The cohort had a mean age of 21.0 years (SD = 0.87, range: 19–23 years). Hand dominance distribution showed 29 right-handed individuals and one left-handed individual. This research was conducted in accordance with the Declaration of Helsinki and received approval from the Ethics Committee of Okayama University (approval number: R2203-001, approved on April 14, 2022).

2.2. Device

The ActiGraph GT9X Link (ActiGraph LLC, Pensacola, FL, USA) is an activity-monitoring device equipped with a primary 3-axis accelerometer and an inertial measurement unit (IMU). The IMU includes a secondary tri-axis accelerometer, tri-axis gyroscope, and tri-axis magnetometer, measuring acceleration, rotational velocity, and magnetic flux, respectively. This IMU functionality enables the device to provide data on rotation and position for advanced applications.

2.3. Procedure

Five ActiGraph GT9X Link devices were attached to each participant as follows:

(i) One on the dominant wrist using a link wristband.

(ii) One on the non-dominant wrist using a link wristband.

(iii) One on the chest using a pouch and belt.

(iv) One on the hip contralateral to the dominant wrist using a link belt clip.

(v) One on the thigh contralateral to the dominant wrist using a pouch and belt.

The IMU recordings were performed at a sampling frequency of 100 Hz for all positions. The idle sleep mode was disabled. Participants completed nine activities, each lasting 2 minutes at a self-selected pace, in the following order: lying in the supine position, standing, sitting, taking a meal, brushing teeth, using the restroom, walking, ascending or descending stairs, and running. Activities between taking a meal and brushing teeth were classified as "other movements."

2.4. Activity Types

This study focused on nine activities from two distinct categories. The first category comprised fundamental activities (lying in the supine position, standing, sitting, walking, ascending or descending stairs, and running) previously identified using a tri-axis accelerometer in prior studies [20, 21]. The second category encompassed activities of daily living, specifically those during which patients with COPD reported experiencing dyspnea in a 24-item questionnaire [22] or as listed in Holter ECG recording forms (taking a meal, brushing teeth, and using the restroom). Detailed descriptions of each activity are available in our previous study [19].

2.5. Data Processing and Feature Extraction

Data were processed using ActiGraph ActiLife software (version 6.13.4) as previously described [19]. Extracted data were segmented into 10-second non-overlapping windows, from which time-domain and frequency-domain features were computed [23]. These features included mean, standard deviation, variance, maximum, minimum, root mean square, signal magnitude area, correlation between axes, entropy, energy, kurtosis, skewness, median, interquartile range, and autoregressive coefficients. A total of 156 features were utilized per window during model training and testing.
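The windowing step described above can be sketched as follows. This is a minimal illustration on a synthetic single-axis signal, not the authors' pipeline: the function names are hypothetical, only a handful of the listed time-domain features are computed, and the use of the population standard deviation is an assumption because the source does not specify the estimator.

```python
import math
import statistics

FS_HZ = 100    # sampling frequency reported in Section 2.3
WINDOW_S = 10  # window length reported in Section 2.5


def split_windows(samples, fs_hz=FS_HZ, window_s=WINDOW_S):
    """Split a sequence into non-overlapping windows, dropping any partial tail."""
    size = fs_hz * window_s
    n_full = len(samples) // size
    return [samples[i * size:(i + 1) * size] for i in range(n_full)]


def basic_features(window):
    """A few time-domain features for one axis of one window."""
    return {
        "mean": statistics.fmean(window),
        "std": statistics.pstdev(window),  # population SD (assumed choice)
        "min": min(window),
        "max": max(window),
        "rms": math.sqrt(sum(v * v for v in window) / len(window)),
        "median": statistics.median(window),
    }


# Synthetic 25 s signal (a 1 Hz sine wave), for illustration only.
signal = [math.sin(2 * math.pi * t / FS_HZ) for t in range(25 * FS_HZ)]
windows = split_windows(signal)
features = [basic_features(w) for w in windows]
print(len(windows), len(windows[0]), round(features[0]["rms"], 3))
```

Each window here holds 1000 samples (10 s at 100 Hz), and the trailing 5 s of the synthetic signal are discarded because they do not fill a complete window.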

2.6. Axes

The following combinations of sensor data were evaluated:

(i) Nine axes (Acc_Gyr_Mag): Accelerometer data (x-, y-, and z-axes), gyroscope data (roll, pitch, and yaw), and magnetometer data (x-, y-, and z-axes).

(ii) Six axes (Acc_Gyr): Accelerometer data (x-, y-, and z-axes) and gyroscope data (roll, pitch, and yaw).

(iii) Six axes (Acc_Mag): Accelerometer data (x-, y-, and z-axes) and magnetometer data (x-, y-, and z-axes).

(iv) Six axes (Gyr_Mag): Gyroscope data (roll, pitch, and yaw) and magnetometer data (x-, y-, and z-axes).

(v) Three axes (Acc): Accelerometer data (x-, y-, and z-axes).

(vi) Three axes (Gyr): Gyroscope data (roll, pitch, and yaw).

(vii) Three axes (Mag): Magnetometer data (x-, y-, and z-axes).
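The seven combinations listed above can be expressed as a simple channel-selection table. The sketch below is illustrative only; the channel names (such as `acc_x` or `gyr_roll`) and helper functions are hypothetical labels, not identifiers from the ActiGraph software.

```python
# Hypothetical channel labels for the nine IMU channels.
SENSOR_CHANNELS = {
    "Acc": ["acc_x", "acc_y", "acc_z"],
    "Gyr": ["gyr_roll", "gyr_pitch", "gyr_yaw"],
    "Mag": ["mag_x", "mag_y", "mag_z"],
}

# Combination names as used in Section 2.6.
COMBINATIONS = [
    "Acc_Gyr_Mag", "Acc_Gyr", "Acc_Mag", "Gyr_Mag", "Acc", "Gyr", "Mag",
]


def channels_for(combination):
    """Expand a name such as 'Acc_Mag' into its list of channel names."""
    channels = []
    for sensor in combination.split("_"):
        channels.extend(SENSOR_CHANNELS[sensor])
    return channels


def select_channels(sample, combination):
    """Keep only the channels of one combination from a dict-like sample."""
    return {name: sample[name] for name in channels_for(combination)}


for combo in COMBINATIONS:
    print(combo, len(channels_for(combo)))
```

Keeping the combinations in one table makes it straightforward to train and evaluate one classifier per combination with otherwise identical settings.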

2.7. Model Training and Testing

Model training and testing were performed as previously described [19]. A leave-one-subject-out (LOSO) cross-validation was performed following the approach outlined in a previous study [24]. The dataset consisting of 30 participants was divided into two exclusive subsets: a training subset containing data from 29 participants and a test subset comprising data from a single participant. The training data were used to train the random forest (RF) classifier model [25]. After training, the performance of the model was evaluated using the test data. This process was iterated 30 times by varying the combinations of training and test subsets.
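The LOSO splitting scheme described above can be sketched in plain Python. This is a minimal illustration, not the authors' code: the function name `loso_splits` is hypothetical, and the toy data assume four windows per participant, a number chosen only to keep the example small.

```python
def loso_splits(subject_ids):
    """Yield (held_out, train_idx, test_idx) once per unique subject.

    subject_ids[i] is the participant who produced window i.
    """
    for held_out in sorted(set(subject_ids)):
        train_idx = [i for i, s in enumerate(subject_ids) if s != held_out]
        test_idx = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train_idx, test_idx


# Toy data: 30 participants with 4 windows each (window count is made up).
subject_ids = [s for s in range(1, 31) for _ in range(4)]

folds = list(loso_splits(subject_ids))
print(len(folds))  # one fold per participant

held_out, train_idx, test_idx = folds[0]
print(held_out, len(train_idx), len(test_idx))
```

In each fold, a classifier would be fitted on the windows indexed by `train_idx` and scored on those indexed by `test_idx`, so that no participant contributes to both sets. The source does not state which software was used for the RF classifier; in scikit-learn, for example, `LeaveOneGroupOut` provides equivalent splits and `RandomForestClassifier` is one available RF implementation.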

2.8. Model Evaluation

The performance of the classifier was assessed using the following indices, where TP, FP, and FN denote the numbers of true positives, false positives, and false negatives for a given activity:

- Precision: Precision is defined as the proportion of predicted positive cases that are actually positive. Precision was determined using the following equation:

$$\text{Precision} = \frac{TP}{TP + FP}$$

- Recall: Recall is defined as the proportion of actual positive cases correctly identified. Recall was determined using the following equation:

$$\text{Recall} = \frac{TP}{TP + FN}$$

- F-value: The F-value is defined as the harmonic mean of precision and recall. The F-value was determined using the following equation:

$$\text{F-value} = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$

The F-value ranges from 0 to 1 [26]. An activity was considered recognizable if its F-value was equal to or greater than 0.7, as an area under the curve (AUC) of 0.7 or higher was deemed highly or moderately accurate in previous studies [27, 28]. Confusion matrices were generated to concisely present the prediction results of the activity classification.
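The three indices and the 0.7 threshold can be computed per activity from true-positive, false-positive, and false-negative counts. The sketch below uses the standard definitions; the function name and the example counts are hypothetical and do not come from the study's results.

```python
def precision_recall_f(tp, fp, fn):
    """Per-class precision, recall, and F-value from raw counts.

    Returns 0.0 for any index whose denominator would be zero.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f_value = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f_value


RECOGNIZABLE_THRESHOLD = 0.7  # threshold stated in Section 2.8

# Hypothetical counts for one activity, for illustration only.
p, r, f = precision_recall_f(tp=80, fp=20, fn=30)
print(round(p, 3), round(r, 3), round(f, 3), f >= RECOGNIZABLE_THRESHOLD)
```

Because the F-value is a harmonic mean, it is pulled toward the lower of precision and recall, so an activity passes the threshold only when both indices are reasonably high.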

2.9. Attachment Positions

Participants wore the GT9X Link devices on their dominant wrist, non-dominant wrist, chest, hip, and thigh. As accelerometer data from devices worn on the non-dominant wrist or chest demonstrated high accuracy in activity recognition [19], two approaches were employed to evaluate activity classification effectiveness. Non-dominant wrist classifier: using non-dominant wrist sensor data to predict activities. Chest classifier: using chest sensor data to predict activities. These methods assessed the system's ability to recognize activities based on data from these body locations.

3. Results

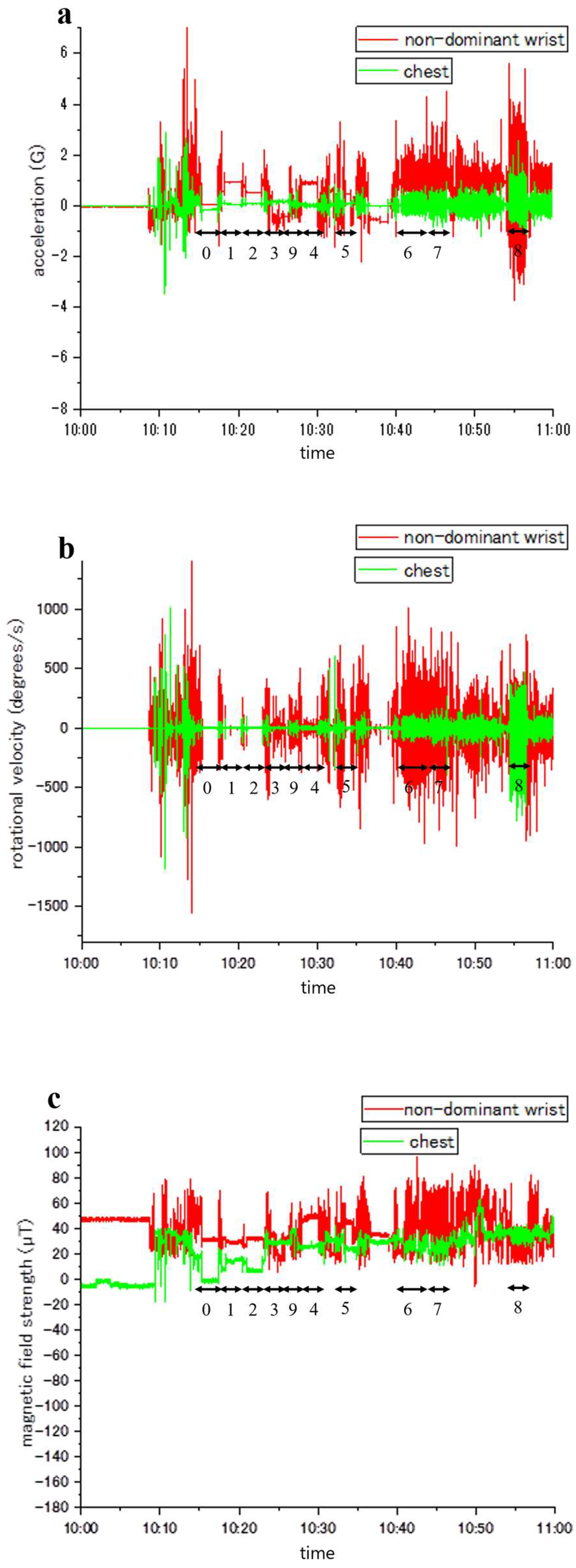

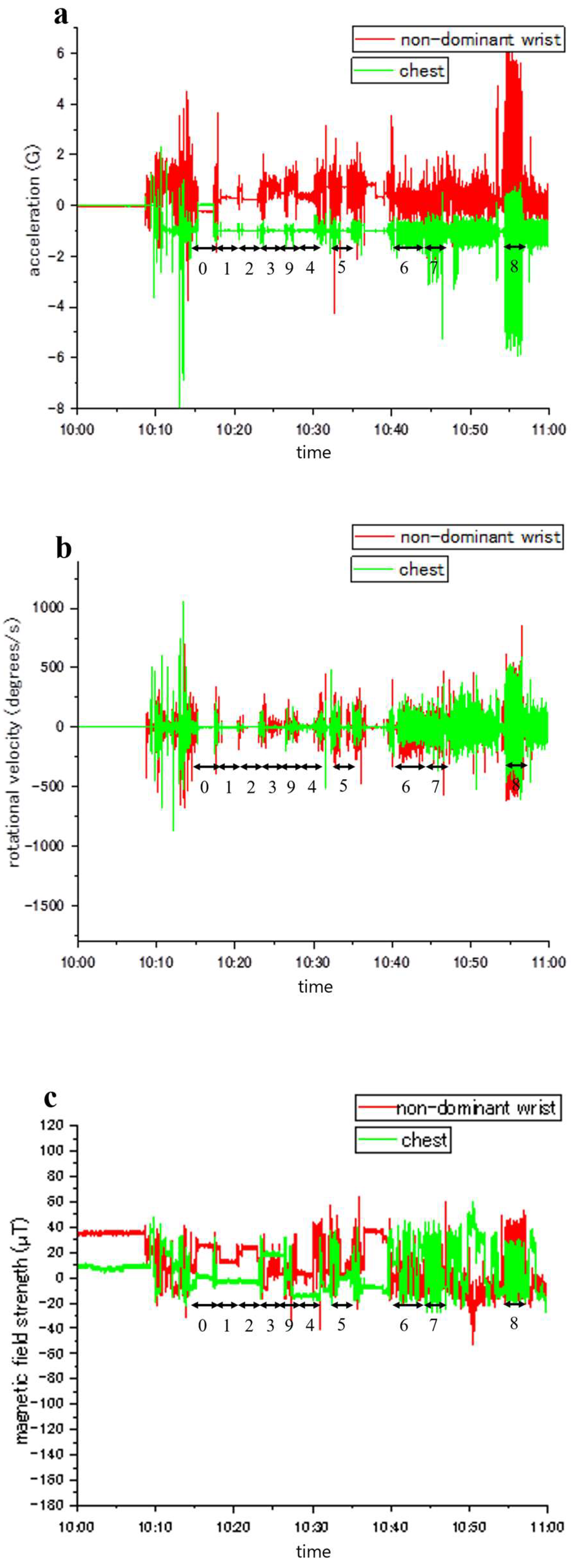

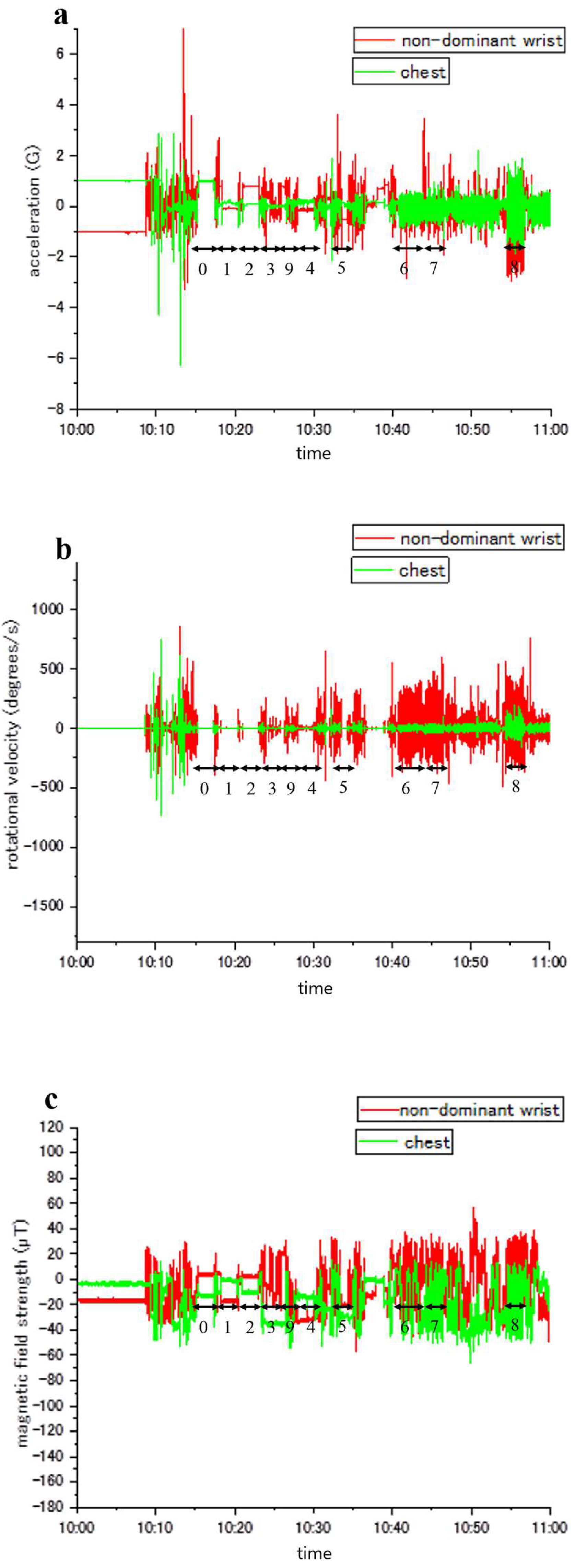

Figures 1, 2, and 3 illustrate representative samples of unprocessed acceleration, angular velocity, and magnetic field strength, respectively. Throughout the experiments, the activities of the participants were documented and aligned with accelerometer readings (denoted by numbers 0–9). Variations in activity types and sensor placements led to distinct patterns of fluctuation in acceleration, angular velocity, and magnetic field strength.

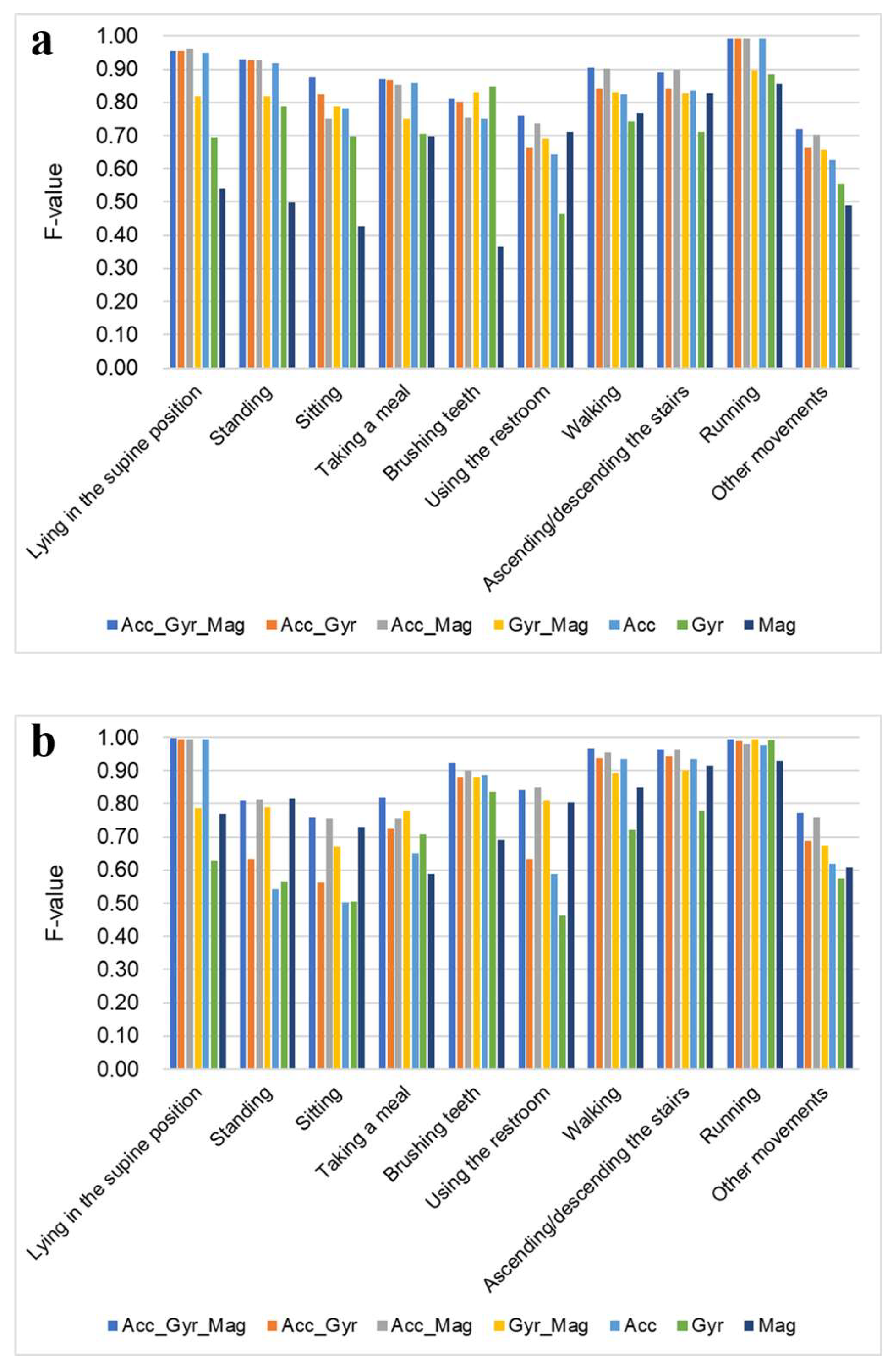

Recognition accuracy for each activity was evaluated using acceleration data from a 9-axis accelerometer. Activities with F-values of 0.7 or higher were considered recognizable.

Figure 4a and Table 1a present the activity recognition results for different axis combinations when the accelerometer was attached to the non-dominant wrist. Generally, Acc_Gyr_Mag achieved the highest F-values for each activity, except for brushing teeth. When using six axes, Acc_Gyr and Acc_Mag achieved the highest F-values, except for brushing teeth, where Gyr_Mag performed better. When using three axes, Acc achieved the highest F-values for most activities, except for brushing teeth and using the restroom, which were most accurately recognized by Gyr and Mag, respectively. For lying in the supine position, sitting, taking a meal, and running, Acc showed F-values equivalent to those of the 9-axis configuration.

Figure 4b and Table 1b present the activity recognition results for different axis combinations when the accelerometer was attached to the chest. Overall, Acc_Gyr_Mag achieved the highest F-values for each activity. When using six axes, Acc_Mag achieved the highest F-values, except for taking a meal and running, where Gyr_Mag performed better. When using three axes, the axis with the highest F-value depended on the type of activity recognized. Acc had the highest recognition accuracy for lying in the supine position, brushing teeth, walking, and ascending or descending stairs. In particular, for lying in the supine position and walking, the recognition accuracy of Acc was considerably higher than that of the other two axes. Gyr exhibited the highest recognition accuracy for taking a meal and running. In particular, for taking a meal, the recognition accuracy of Gyr was considerably higher than that of the other two axes. Mag achieved the highest recognition accuracy for standing, sitting, and using the restroom, with accuracy considerably higher than that of the other two axes. For lying in the supine position, sitting, standing, using the restroom, ascending or descending stairs, and running, six or three axes achieved F-values comparable to nine axes.

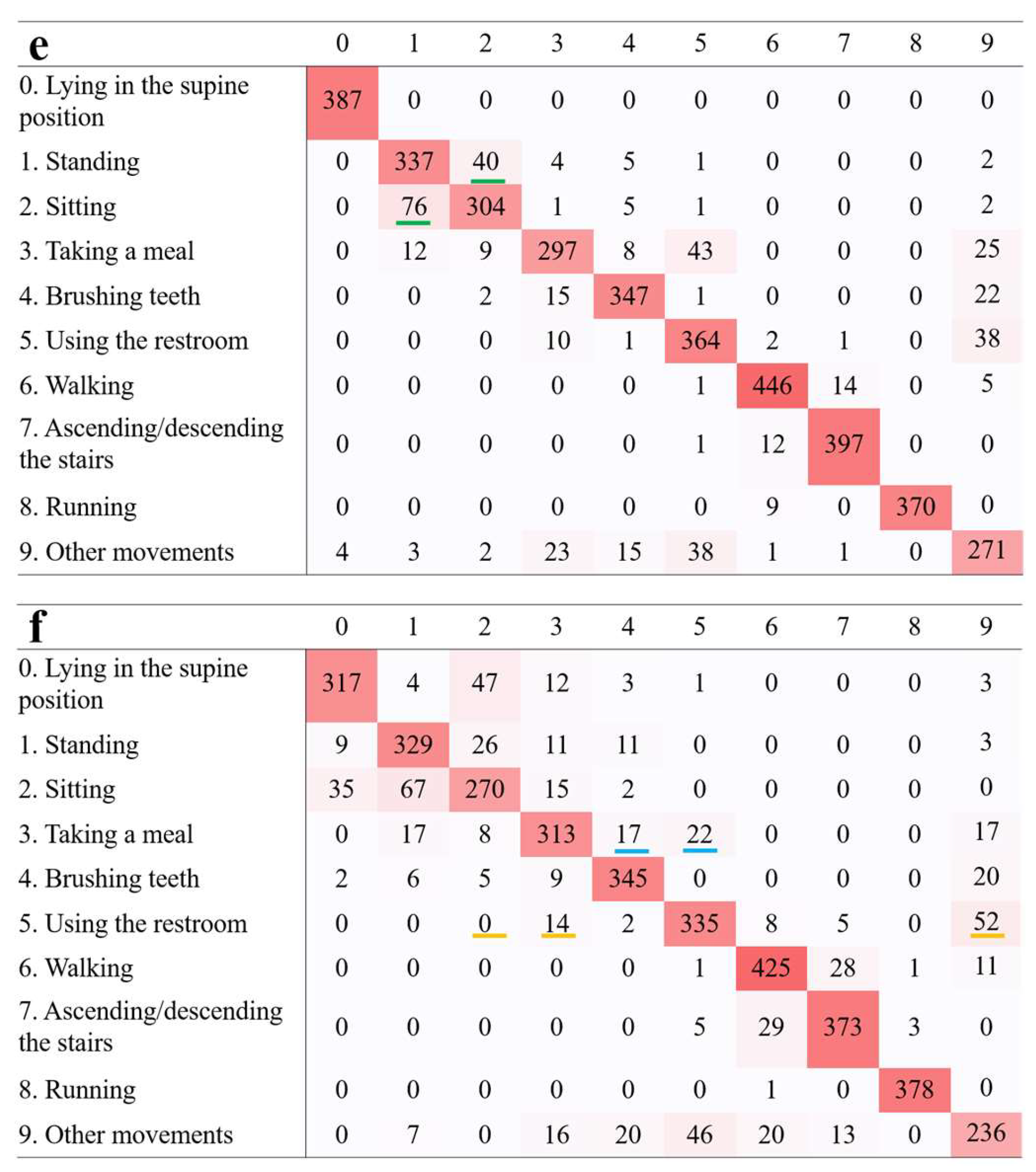

Figure 5 and Figure 6 present excerpts from the confusion matrices for activity recognition using the non-dominant wrist and chest classifiers. Most activities were correctly classified along the diagonal, though some misclassifications occurred. These patterns varied based on the attachment position and combination of axes. Confusion matrices of all combinations of axes are available in Figures S1 and S2.
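For readers unfamiliar with how such matrices are read, the following sketch builds a small confusion matrix from true and predicted labels and lists the off-diagonal (misclassified) cells. The labels are toy data, and the function names are hypothetical; nothing here reproduces the study's results.

```python
from collections import Counter


def confusion_matrix(true_labels, predicted_labels, classes):
    """Rows are true classes, columns are predicted classes."""
    counts = Counter(zip(true_labels, predicted_labels))
    return [[counts[(t, p)] for p in classes] for t in classes]


def misclassifications(matrix, classes):
    """List (true, predicted, count) for every non-zero off-diagonal cell."""
    return [
        (classes[i], classes[j], n)
        for i, row in enumerate(matrix)
        for j, n in enumerate(row)
        if i != j and n > 0
    ]


# Toy labels for three activities, for illustration only.
classes = ["standing", "sitting", "walking"]
true_labels = ["standing", "standing", "sitting", "sitting", "walking", "walking"]
pred_labels = ["standing", "sitting", "sitting", "sitting", "walking", "walking"]

matrix = confusion_matrix(true_labels, pred_labels, classes)
for name, row in zip(classes, matrix):
    print(f"{name:>9}", row)
print(misclassifications(matrix, classes))
```

Diagonal cells count windows assigned to the correct activity, while each off-diagonal cell identifies which pair of activities was confused and in which direction.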

4. Discussion

When the device was worn on the non-dominant wrist, the 9-axis sensor recognized all activities, except brushing teeth, with high accuracy. This high accuracy was likely due to the comprehensive information provided by all nine axes. Previous studies demonstrated that using data from multiple sensors can improve activity recognition accuracy [18, 29]. Among the 6-axis combinations, those including acceleration data were more accurate. Similarly, in the 3-axis combinations, acceleration data often yielded more accurate results than other axes. Therefore, acceleration data play a crucial role in activity recognition when the device is worn on the non-dominant wrist. Using only 3-axis acceleration data, some activities, such as lying in the supine position, sitting, taking a meal, and running, were recognized with accuracy comparable to that of the 9-axis sensor. This finding suggests that 3-axis acceleration data may suffice for accurately recognizing certain activities.

For brushing teeth, the triaxial angular velocity provided more accurate results than the 9-axis sensor. Among the 6-axis combinations, the one excluding angular velocity (Acc_Mag) exhibited the lowest accuracy, indicating that angular velocity plays a crucial role in classifying the activity of brushing teeth when the device is worn on the non-dominant wrist. Comparing the Mag and Gyr_Mag confusion matrices, incorporating angular velocity reduced misclassification of brushing teeth as stationary movements (such as lying in the supine position, standing, or sitting) or as taking a meal (Figure 5b,c). During the activity of brushing teeth, the wrist of the non-brushing hand remained suspended. Including angular velocity data likely aided in recognizing this state. In particular, angular velocity helped differentiate between brushing teeth and standing: in both, the wrist hangs freely, but there is typically less movement during standing, so the two states could be separated based on the degree of movement.

For using the restroom, the triaxial magnetometer demonstrated greater accuracy than the other 3-axis options. In 6-axis comparisons, the combination excluding the magnetometer (Acc_Gyr) exhibited the lowest accuracy, indicating that the magnetometer is critical for classifying the activity of using the restroom when the device is worn on the non-dominant wrist. Comparing the Gyr and Gyr_Mag confusion matrices, incorporating a magnetometer reduced misclassification of using the restroom as taking a meal, sitting, or other movements (Figure 5b,c). During the act of using the restroom, considerable changes in both the direction and position of the wrist likely facilitated improved recognition.

When worn on the chest, the 9-axis sensor recognized all activities with high accuracy, likely due to the comprehensive information from all nine axes. A previous study showed that activity classification using acceleration and ECG data from a patch-type sensor achieved higher accuracy than activity classification using acceleration data alone [30]. Among 6-axis combinations, acceleration and magnetometer data recognized most activities with high accuracy, except taking a meal and running. Activities such as lying in the supine position, standing, sitting, using the restroom, and ascending or descending stairs were classified with accuracy comparable to that of the 9-axis sensor.

Accuracy varied substantially across activities when using three axes. Acceleration was particularly effective for recognizing lying in the supine position and walking, whereas angular velocity was most effective for taking a meal. The magnetometer showed high accuracy for standing, sitting, and using the restroom. Some activities such as lying in the supine position, standing, and running were recognized with accuracy comparable to the 9-axis configuration. When utilizing three axes, it is important to adjust the types of axes used based on the specific activity being recognized. However, overall accuracy is lower with three axes, making six or nine axes preferable unless specific activities are targeted.

Even with six axes, combinations including acceleration (Acc_Gyr and Acc_Mag) were more accurate than those without acceleration (Gyr_Mag) for recognizing lying in the supine position and walking, indicating that acceleration data are particularly effective for recognizing these activities when the device is attached to the chest. Adding acceleration data eliminated the confusion between lying in the supine position and standing or sitting, as seen in the Gyr and Acc_Gyr confusion matrices (Figure 6b, d). This improvement likely reflects acceleration's ability to capture the tilt of the body, which differs by approximately 90° across these postures. Similarly, comparing the confusion matrices of Gyr and Acc_Gyr, adding acceleration data reduced confusion between walking and ascending or descending stairs (Figure 6b, d). This enhancement may be due to its capacity to capture the body's movement. While walking involves minimal movement in the vertical direction (z-axis), ascending or descending stairs involves considerable vertical movement.
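The tilt argument above can be illustrated with a small calculation. When a sensor is static, its accelerometer reading is dominated by gravity, so the angle between the gravity vector and the device axis aligned with the trunk separates upright from supine postures. The sketch below is an illustration under stated assumptions, not the study's method: readings are in units of g, the trunk is assumed to lie along the device's y-axis, the sign convention is chosen so that an upright posture reads +1 g on that axis, and the function name is hypothetical.

```python
import math


def tilt_from_vertical_deg(ax, ay, az, trunk_axis=(0.0, 1.0, 0.0)):
    """Angle in degrees between the assumed trunk axis and measured gravity.

    trunk_axis must be a unit vector; (0, 1, 0) is an assumed mounting.
    """
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    if norm == 0:
        raise ValueError("zero acceleration vector")
    tx, ty, tz = trunk_axis
    cos_angle = (ax * tx + ay * ty + az * tz) / norm
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding
    return math.degrees(math.acos(cos_angle))


upright = tilt_from_vertical_deg(0.0, 1.0, 0.0)  # gravity along trunk axis
supine = tilt_from_vertical_deg(0.0, 0.0, 1.0)   # gravity perpendicular to trunk
print(round(upright, 1), round(supine, 1))
```

A gyroscope, by contrast, reports only rotation rate and reads close to zero in any static posture, which is consistent with the confusion between lying, standing, and sitting seen without acceleration data.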

In the case of 6-axis configurations, the Gyr_Mag combination demonstrated higher accuracy for recognizing taking a meal than the other 6-axis combinations. This result, combined with angular velocity’s high accuracy among 3-axis options, suggests that angular velocity is effective for recognizing taking a meal when the device is attached to the chest. Comparing the confusion matrices of Mag and Gyr_Mag, adding angular velocity data reduced misclassification of taking a meal as either brushing teeth or using the restroom (Figure 6c, f). This improvement may be attributed to the angular velocity's ability to capture the body's rotation associated with eating.

Even with 6-axis configurations, combinations including a magnetometer (Acc_Mag, Gyr_Mag) demonstrated considerably higher accuracy for recognizing standing, sitting, and using the restroom than those without a magnetometer (Acc_Gyr), indicating that the magnetometer is particularly effective in recognizing these activities when the device is attached to the chest. Comparing the confusion matrices of Acc and Acc_Mag, adding a magnetometer to acceleration data reduced the confusion between standing and sitting (Figure 6a, e) by capturing differences in chest height. Adding a magnetometer considerably reduced misclassification of using the restroom as taking a meal, sitting, or other movements, as seen in the Gyr and Gyr_Mag confusion matrices (Figure 6b, f). For the act of using the restroom, frequent directional and positional changes of the chest may have made the magnetometer particularly helpful in recognizing the activity.

When attached to the non-dominant wrist, the 9-axis configuration provides optimal performance. However, 6- or even 3-axis configurations can recognize specific activities with comparable accuracy. For 3- and 6-axis configurations, including acceleration data is recommended. When attached to the chest, the 9-axis configuration provides optimal performance. Similar to the wrist attachment, 6-axis or 3-axis configurations can recognize specific activities with comparable accuracy. However, because the 3-axis configuration can only recognize specific activities with high accuracy, the use of a 6-axis setup is recommended unless there is a specific need to recognize only particular activities. The combination of an accelerometer and magnetometer is recommended for a 6-axis configuration on the chest.

This study has several limitations. First, the participants were young, healthy individuals. Activity patterns in older adults and individuals with health conditions may differ. Second, activities were performed in controlled environments, which may not reflect real-world scenarios. Data collected in free-living conditions could yield different results. Future research should address these limitations to enhance the generalizability of our findings.

5. Conclusions

This study is the first to examine how many axes can be removed from a 9-axis configuration while maintaining high accuracy in recognizing activities that cause breathlessness in patients with COPD, as well as activities recorded on Holter ECG log sheets. The highest accuracy was achieved with nine axes for both the non-dominant wrist and chest. For the non-dominant wrist, even when reduced to three axes, acceleration alone recognized specific activities with high accuracy. Some activities, such as lying in the supine position, sitting, taking a meal, and running, could be recognized with accuracy comparable to nine axes. Furthermore, when using six axes, including acceleration is essential. For the chest, when reducing the number of axes, a reduction to six axes, combining acceleration and magnetometer data, is recommended. This combination can achieve accuracy comparable to nine axes for specific activities, such as lying in the supine position, standing, sitting, using the restroom, and ascending or descending stairs.

Acceleration effectively detects tilt, vibration, and movement of objects, making it suitable for recognizing various activities. Angular velocity detects rotational motion of objects, aiding in recognizing brushing teeth when worn on the non-dominant wrist, and taking a meal when worn on the chest. This is because these activities involve rotational movements. Magnetometer data detect the direction and position of objects and are effective in recognizing activities, such as using the restroom, where the body's direction and position vary markedly. By reducing axes according to the device's attachment position and the activities to be recognized, patient activities can be monitored with several advantages:

1. Faster and more efficient data transmission

2. Lower power consumption

3. Longer operational duration

4. Smaller device size

5. Reduced costs

These improvements could enable simple and objective severity assessments and diagnoses. Miniaturized devices can reduce the burden of wearing them, potentially leading to fewer patients removing the devices prematurely owing to discomfort. Additionally, reduced device costs could increase adoption of these monitoring devices in clinical settings.

Supplementary Materials

The following supporting information can be downloaded at the website of this paper posted on Preprints.org. Figures S1 and S2: Supplementary materials.

Author Contributions

Conceptualization, M. M.; methodology, M. K. and M. M.; software, T. Y.; formal analysis, T. Y.; investigation, M. K.; resources, M. K. and M. M.; data curation, T. Y. and M. K.; writing—original draft preparation, T. Y.; writing—review and editing, M. M.; visualization, T. Y.; supervision, M. M.; project administration, M. M.; funding acquisition, M. M. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by JSPS KAKENHI under Grant JP21K12787.

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Ethics Committee of Okayama University (protocol code: R2203-001; date of approval: April 14, 2022).

Informed Consent Statement

Informed consent was obtained from all participants involved in the study.

Data Availability Statement

The data presented in this study are available upon request from the corresponding author. The data are not publicly available due to confidentiality issues.

Acknowledgments

We thank all participants for their participation in our study.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Satoh, H.; Iwashima, A.; Endo, Y.; Nakayama, H.; Hasegawa, T.; Suzuki, E. Effect of Proactive Use of Inhaled Procaterol on Dyspnea in Daily Activities and Quality of Life in Patients with Chronic Obstructive Pulmonary Disease. Nihon Kokyuki Gakkai Zasshi 2009, 47, 772–780. (in Japanese).

2. Miravitlles, M.; Ribera, A. Understanding the Impact of Symptoms on the Burden of COPD. Respir. Res. 2017, 18, 67.

3. Hankinson, J.L.; Odencrantz, J.R.; Fedan, K.B. Spirometric Reference Values from a Sample of the General U.S. Population. Am. J. Respir. Crit. Care Med. 1999, 159, 179–187.

4. Jones, P.W.; Harding, G.; Berry, P.; Wiklund, I.; Chen, W.H.; Kline Leidy, N. Development and First Validation of the COPD Assessment Test. Eur. Respir. J. 2009, 34, 648–654.

5. Holland, A.E.; Spruit, M.A.; Troosters, T.; Puhan, M.A.; Pepin, V.; Saey, D.; McCormack, M.C.; Carlin, B.W.; Sciurba, F.C.; Pitta, F.; Wanger, J.; MacIntyre, N.; Kaminsky, D.A.; Culver, B.H.; Revill, S.M.; Hernandes, N.A.; Andrianopoulos, V.; Camillo, C.A.; Mitchell, K.E.; Lee, A.L.; Hill, C.J.; Singh, S.J. An Official European Respiratory Society/American Thoracic Society Technical Standard: Field Walking Tests in Chronic Respiratory Disease. Eur. Respir. J. 2014, 44, 1428–1446.

6. Weir, N.A.; Brown, A.W.; Shlobin, O.A.; Smith, M.A.; Reffett, T.; Battle, E.; Ahmad, S.; Nathan, S.D. The Influence of Alternative Instruction on 6-Min Walk Test Distance. Chest 2013, 144, 1900–1905.

7. Chung, E.K. Ambulatory Electrocardiography: Holter Monitor Electrocardiography; Springer Science+Business Media, 2013.

8. Ankita, S.; Rani, S.; Babbar, H.; Coleman, S.; Singh, A.; Aljahdali, H.M. An Efficient and Lightweight Deep Learning Model for Human Activity Recognition Using Smartphones. Sensors (Basel) 2021, 21, 1–17.

9. Ueda, K.; Tamai, M.; Yasumoto, K. A Method for Recognizing Living Activities in Homes Using Positioning Sensor and Power Meters. IEEE International Conference on Pervasive Computing and Communication Workshops, PerCom Workshops 2015, 2015; Volume 2015; pp. 354–359.

10. Bozkurt, F. A Comparative Study on Classifying Human Activities Using Classical Machine and Deep Learning Methods. Arab. J. Sci. Eng. 2022, 47, 1507–1521.

11. Muhammad Masum, A.K.; Jannat, S.; Bahadur, E.H.; Golam Rabiul Alam, M.; Khan, S.I.; Robiul Alam, M. Human Activity Recognition Using Smartphone Sensors: A Dense Neural Network Approach. ICASERT. 1st International Conference on Advances in Science, Engineering and Robotics Technology, 2019.

12. Golestani, N.; Moghaddam, M. Human Activity Recognition Using Magnetic Induction-Based Motion Signals and Deep Recurrent Neural Networks. Nat. Commun. 2020, 11, 1551.

13. Wetzler, M.L.; Borderies, J.R.; Bigaignon, O.; Guillo, P.; Gosse, P. Validation of a Two-Axis Accelerometer for Monitoring Patient Activity During Blood Pressure or ECG Holter Monitoring. Blood Press. Monit. 2003, 8, 229–235.

14. Hendry, D.; Chai, K.; Campbell, A.; Hopper, L.; O’Sullivan, P.; Straker, L. Development of a Human Activity Recognition System for Ballet Tasks. Sports Med. Open 2020, 6, 10.

15. Dang, X.; Li, W.; Zou, J.; Cong, B.; Guan, Y. Assessing the Impact of Body Location on the Accuracy of Detecting Daily Activities with Accelerometer Data. iScience 2024, 27, 108626.

16. IMS-SD: Data Logger Type Inertial Sensor (IMU: Inertial Measurement Unit). Tec Gihan Co., Ltd. Available online: https://www.tecgihan.co.jp/products/ims-sd-imu (accessed on 21 Nov 2024).

17. Wieland, F.; Nigg, C. A Trainable Open-Source Machine Learning Accelerometer Activity Recognition Toolbox: Deep Learning Approach. JMIR AI 2023, 2, e42337.

18. Huang, E.J.; Yan, K.; Onnela, J.P. Smartphone-Based Activity Recognition Using Multistream Movelets Combining Accelerometer and Gyroscope Data. Sensors (Basel) 2022, 22, 1–20.

19. Yamane, T.; Kimura, M.; Morita, M. Application of Nine-Axis Accelerometer-Based Recognition of Daily Activities in Clinical Examination. Phys. Act. Health 2024, 8, 29–46.

20. Sumikawa, A.; Terui, Y.; Sugano, A.; Matsui, Y.; Uemura, S.; Satake, M.; Shioya, T. Validity of the Evaluation of Posture and Movement by a New Tri-axial Accelerometer: Judgement Criteria, Sensitivity and Specificity. Rigakuryoho Kagaku 2018, 33, 561–567.

21. Trost, S.G.; Zheng, Y.; Wong, W.K. Machine Learning for Activity Recognition: Hip Versus Wrist Data. Physiol. Meas. 2014, 35, 2183–2189.

22. Eakin, E.G.; Resnikoff, P.M.; Prewitt, L.M.; Ries, A.L.; Kaplan, R.M. Validation of a New Dyspnea Measure: The UCSD Shortness of Breath Questionnaire. Chest 1998, 113, 619–624.

23. Liu, S.; Gao, R.X.; Freedson, P.S. Computational Methods for Estimating Energy Expenditure in Human Physical Activities. Med. Sci. Sports Exerc. 2012, 44, 2138–2146.

24. Esterman, M.; Tamber-Rosenau, B.J.; Chiu, Y.C.; Yantis, S. Avoiding Non-independence in fMRI Data Analysis: Leave One Subject Out. Neuroimage 2010, 50, 572–576.

25. Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32.

26. Bull, K.; He, Y.H.; Jejjala, V.; Mishra, C. Machine Learning CICY Threefolds. Phys. Lett. B 2018, 785, 65–72.

27. Fischer, J.E.; Bachmann, L.M.; Jaeschke, R. A Readers’ Guide to the Interpretation of Diagnostic Test Properties: Clinical Example of Sepsis. Intensive Care Med. 2003, 29, 1043–1051.

28. Swets, J.A. Measuring the Accuracy of Diagnostic Systems. Science 1988, 240, 1285–1293.

29. Kavuncuoğlu, E.; Özdemir, A.T.; Uzunhisarcıklı, E. Investigating the Impact of Sensor Axis Combinations on Activity Recognition and Fall Detection: An Empirical Study. Multimed. Tools Appl. 2024.

30. Ren, Y.; Liu, M.; Yang, Y.; Mao, L.; Chen, K. Clinical Human Activity Recognition Based on a Wearable Patch of Combined Tri-axial ACC and ECG Sensors. Digit. Health 2024, 10, 20552076231223804.

Disclaimer/Publisher’s Note: The statements, opinions, and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

Figure 1. Waveforms of the x-axis sensor signals recorded at two body locations. Data from the non-dominant wrist are shown as a red line and data from the chest as a green line. Numerical labels (0–9) correspond to specific physical activities as follows: 0, lying in a supine position; 1, standing; 2, sitting; 3, taking a meal; 4, brushing teeth; 5, using the restroom; 6, walking; 7, ascending/descending the stairs; 8, running; and 9, other movements. (a) X-axis acceleration. (b) X-axis angular velocity. (c) X-axis magnetic field intensity.

Figure 2. Waveforms of the y-axis sensor signals recorded at two body locations. Data from the non-dominant wrist are shown as a red line and data from the chest as a green line. Numerical labels (0–9) correspond to specific physical activities as follows: 0, lying in a supine position; 1, standing; 2, sitting; 3, taking a meal; 4, brushing teeth; 5, using the restroom; 6, walking; 7, ascending/descending the stairs; 8, running; and 9, other movements. (a) Y-axis acceleration. (b) Y-axis angular velocity. (c) Y-axis magnetic field intensity.

Figure 3. Waveforms of the z-axis sensor signals recorded at two body locations. Data from the non-dominant wrist are shown as a red line and data from the chest as a green line. Numerical labels (0–9) correspond to specific physical activities as follows: 0, lying in a supine position; 1, standing; 2, sitting; 3, taking a meal; 4, brushing teeth; 5, using the restroom; 6, walking; 7, ascending/descending the stairs; 8, running; and 9, other movements. (a) Z-axis acceleration. (b) Z-axis angular velocity. (c) Z-axis magnetic field intensity.

Figure 4. Comparison of F-values across combinations of accelerometer axes for each activity. (a) Accelerometer attached to the non-dominant wrist. (b) Accelerometer attached to the chest.

Figure 5. Confusion matrices comparing predicted and actual activities, based on data from a sensor mounted on the non-dominant wrist. The horizontal entries represent the actual activities performed, while the vertical entries represent the activities inferred by the activity recognition classifier. (a) Gyroscope. The orange highlight denotes errors in distinguishing between using the restroom, sitting, taking a meal, and other movements. (b) Magnetometer. The purple highlight denotes errors in distinguishing between brushing teeth, lying in the supine position, standing, sitting, and taking a meal. (c) Gyroscope and magnetometer. The orange and purple highlights indicate improved recognition compared with (a) and (b), respectively.

Figure 6. Confusion matrices comparing predicted and actual activities, based on data from a chest-mounted sensor. (a) Accelerometer. The green highlight denotes errors in distinguishing between standing and sitting. (b) Gyroscope. The blue highlight denotes errors in distinguishing between lying in the supine position, standing, and sitting. The orange highlight denotes errors in distinguishing between using the restroom, sitting, taking a meal, and other movements. The red highlight denotes errors in distinguishing between walking and ascending/descending the stairs. (c) Magnetometer. The light blue highlight denotes errors in distinguishing between taking a meal, brushing teeth, and using the restroom. (d) Accelerometer and gyroscope. The blue and red highlights indicate improved recognition compared with (b). (e) Accelerometer and magnetometer. The green highlight indicates improved recognition compared with (a). (f) Gyroscope and magnetometer. The orange and light blue highlights indicate improved recognition compared with (b) and (c), respectively.

Table 1a. Evaluation results of the non-dominant wrist classifier.

| Activity | Sensors | Precision | Recall | F-value |
|---|---|---|---|---|
| Lying in the supine position | Acc | 0.9462 | 0.9635 | 0.9496 |
| | Gyr | 0.7696 | 0.6889 | 0.6947 |
| | Mag | 0.5148 | 0.5942 | 0.5402 |
| | Acc_Gyr | 0.9510 | 0.9686 | 0.9556 |
| | Acc_Mag | 0.9511 | 0.9791 | 0.9614 |
| | Gyr_Mag | 0.8287 | 0.8250 | 0.8183 |
| | Acc_Gyr_Mag | 0.9446 | 0.9737 | 0.9552 |
| Standing | Acc | 0.9176 | 0.9353 | 0.9186 |
| | Gyr | 0.7698 | 0.8410 | 0.7890 |
| | Mag | 0.5038 | 0.5641 | 0.4975 |
| | Acc_Gyr | 0.9298 | 0.9485 | 0.9272 |
| | Acc_Mag | 0.9316 | 0.9404 | 0.9261 |
| | Gyr_Mag | 0.8843 | 0.8331 | 0.8192 |
| | Acc_Gyr_Mag | 0.9350 | 0.9459 | 0.9306 |
| Sitting | Acc | 0.8087 | 0.8026 | 0.7812 |
| | Gyr | 0.6978 | 0.7425 | 0.6984 |
| | Mag | 0.4160 | 0.4949 | 0.4269 |
| | Acc_Gyr | 0.8578 | 0.8282 | 0.8258 |
| | Acc_Mag | 0.7625 | 0.7618 | 0.7509 |
| | Gyr_Mag | 0.8150 | 0.8115 | 0.7894 |
| | Acc_Gyr_Mag | 0.9171 | 0.8690 | 0.8769 |
| Taking a meal | Acc | 0.8594 | 0.8829 | 0.8576 |
| | Gyr | 0.6457 | 0.7930 | 0.7053 |
| | Mag | 0.7353 | 0.7226 | 0.6982 |
| | Acc_Gyr | 0.8571 | 0.9093 | 0.8684 |
| | Acc_Mag | 0.8506 | 0.8904 | 0.8543 |
| | Gyr_Mag | 0.7169 | 0.8225 | 0.7525 |
| | Acc_Gyr_Mag | 0.8650 | 0.9068 | 0.8712 |
| Brushing teeth | Acc | 0.7800 | 0.7442 | 0.7524 |
| | Gyr | 0.8805 | 0.8515 | 0.8486 |
| | Mag | 0.4199 | 0.3921 | 0.3640 |
| | Acc_Gyr | 0.8439 | 0.7876 | 0.8013 |
| | Acc_Mag | 0.7945 | 0.7393 | 0.7545 |
| | Gyr_Mag | 0.8287 | 0.8410 | 0.8316 |
| | Acc_Gyr_Mag | 0.8433 | 0.7979 | 0.8104 |
| Using the restroom | Acc | 0.6773 | 0.6484 | 0.6444 |
| | Gyr | 0.5862 | 0.4075 | 0.4646 |
| | Mag | 0.7384 | 0.7173 | 0.7127 |
| | Acc_Gyr | 0.7043 | 0.6544 | 0.6640 |
| | Acc_Mag | 0.7441 | 0.7782 | 0.7376 |
| | Gyr_Mag | 0.7764 | 0.6681 | 0.6921 |
| | Acc_Gyr_Mag | 0.7904 | 0.7634 | 0.7588 |
| Walking | Acc | 0.8781 | 0.8310 | 0.8241 |
| | Gyr | 0.7993 | 0.7620 | 0.7421 |
| | Mag | 0.7427 | 0.8097 | 0.7675 |
| | Acc_Gyr | 0.8761 | 0.8632 | 0.8413 |
| | Acc_Mag | 0.9170 | 0.9033 | 0.9017 |
| | Gyr_Mag | 0.8436 | 0.8465 | 0.8314 |
| | Acc_Gyr_Mag | 0.9295 | 0.9010 | 0.9039 |
| Ascending/descending the stairs | Acc | 0.8634 | 0.8418 | 0.8374 |
| | Gyr | 0.7314 | 0.7237 | 0.7114 |
| | Mag | 0.8118 | 0.8527 | 0.8287 |
| | Acc_Gyr | 0.8721 | 0.8333 | 0.8413 |
| | Acc_Mag | 0.9105 | 0.8947 | 0.8976 |
| | Gyr_Mag | 0.8326 | 0.8324 | 0.8269 |
| | Acc_Gyr_Mag | 0.8979 | 0.8920 | 0.8890 |
| Running | Acc | 0.9974 | 0.9897 | 0.9926 |
| | Gyr | 0.9005 | 0.9032 | 0.8835 |
| | Mag | 0.8461 | 0.8729 | 0.8549 |
| | Acc_Gyr | 0.9974 | 0.9897 | 0.9926 |
| | Acc_Mag | 0.9974 | 0.9897 | 0.9926 |
| | Gyr_Mag | 0.9247 | 0.9034 | 0.8968 |
| | Acc_Gyr_Mag | 0.9974 | 0.9897 | 0.9926 |
| Other movements | Acc | 0.6256 | 0.6574 | 0.6256 |
| | Gyr | 0.5614 | 0.5713 | 0.5547 |
| | Mag | 0.5704 | 0.4783 | 0.4903 |
| | Acc_Gyr | 0.6604 | 0.6944 | 0.6624 |
| | Acc_Mag | 0.7210 | 0.7322 | 0.7038 |
| | Gyr_Mag | 0.6962 | 0.6539 | 0.6586 |
| | Acc_Gyr_Mag | 0.7316 | 0.7423 | 0.7192 |

Table 1b. Evaluation results of the chest classifier.

| Activity | Sensors | Precision | Recall | F-value |
|---|---|---|---|---|
| Lying in the supine position | Acc | 0.9922 | 1.0000 | 0.9956 |
| | Gyr | 0.6341 | 0.6380 | 0.6282 |
| | Mag | 0.8043 | 0.7923 | 0.7700 |
| | Acc_Gyr | 0.9922 | 1.0000 | 0.9956 |
| | Acc_Mag | 0.9922 | 1.0000 | 0.9956 |
| | Gyr_Mag | 0.7997 | 0.8154 | 0.7855 |
| | Acc_Gyr_Mag | 0.9956 | 1.0000 | 0.9976 |
| Standing | Acc | 0.5640 | 0.5951 | 0.5434 |
| | Gyr | 0.5938 | 0.6212 | 0.5647 |
| | Mag | 0.8219 | 0.8756 | 0.8139 |
| | Acc_Gyr | 0.6455 | 0.6874 | 0.6333 |
| | Acc_Mag | 0.8345 | 0.8641 | 0.8118 |
| | Gyr_Mag | 0.7981 | 0.8459 | 0.7905 |
| | Acc_Gyr_Mag | 0.8528 | 0.8675 | 0.8094 |
| Sitting | Acc | 0.5480 | 0.5410 | 0.5032 |
| | Gyr | 0.5538 | 0.5660 | 0.5070 |
| | Mag | 0.7834 | 0.7846 | 0.7295 |
| | Acc_Gyr | 0.6019 | 0.6103 | 0.5624 |
| | Acc_Mag | 0.7496 | 0.7821 | 0.7555 |
| | Gyr_Mag | 0.7120 | 0.6949 | 0.6691 |
| | Acc_Gyr_Mag | 0.8119 | 0.7667 | 0.7591 |
| Taking a meal | Acc | 0.6444 | 0.6884 | 0.6513 |
| | Gyr | 0.6970 | 0.7562 | 0.7069 |
| | Mag | 0.6323 | 0.5881 | 0.5875 |
| | Acc_Gyr | 0.7315 | 0.7408 | 0.7230 |
| | Acc_Mag | 0.8037 | 0.7516 | 0.7558 |
| | Gyr_Mag | 0.8095 | 0.7933 | 0.7774 |
| | Acc_Gyr_Mag | 0.8442 | 0.8161 | 0.8187 |
| Brushing teeth | Acc | 0.9271 | 0.8731 | 0.8861 |
| | Gyr | 0.8518 | 0.8466 | 0.8353 |
| | Mag | 0.7378 | 0.7342 | 0.6917 |
| | Acc_Gyr | 0.9112 | 0.8825 | 0.8820 |
| | Acc_Mag | 0.9282 | 0.8968 | 0.8998 |
| | Gyr_Mag | 0.8897 | 0.8904 | 0.8801 |
| | Acc_Gyr_Mag | 0.9386 | 0.9171 | 0.9224 |
| Using the restroom | Acc | 0.6606 | 0.5841 | 0.5876 |
| | Gyr | 0.5777 | 0.4117 | 0.4627 |
| | Mag | 0.8377 | 0.8074 | 0.8028 |
| | Acc_Gyr | 0.7006 | 0.6178 | 0.6337 |
| | Acc_Mag | 0.8543 | 0.8768 | 0.8491 |
| | Gyr_Mag | 0.8405 | 0.8068 | 0.8096 |
| | Acc_Gyr_Mag | 0.8567 | 0.8569 | 0.8420 |
| Walking | Acc | 0.9371 | 0.9454 | 0.9358 |
| | Gyr | 0.8527 | 0.7020 | 0.7219 |
| | Mag | 0.8053 | 0.9082 | 0.8486 |
| | Acc_Gyr | 0.9711 | 0.9354 | 0.9362 |
| | Acc_Mag | 0.9606 | 0.9547 | 0.9541 |
| | Gyr_Mag | 0.8876 | 0.9099 | 0.8912 |
| | Acc_Gyr_Mag | 0.9761 | 0.9593 | 0.9661 |
| Ascending/descending the stairs | Acc | 0.9518 | 0.9331 | 0.9360 |
| | Gyr | 0.7427 | 0.8711 | 0.7785 |
| | Mag | 0.9302 | 0.9078 | 0.9144 |
| | Acc_Gyr | 0.9383 | 0.9601 | 0.9422 |
| | Acc_Mag | 0.9642 | 0.9679 | 0.9643 |
| | Gyr_Mag | 0.8965 | 0.9117 | 0.8993 |
| | Acc_Gyr_Mag | 0.9532 | 0.9785 | 0.9641 |
| Running | Acc | 0.9956 | 0.9750 | 0.9776 |
| | Gyr | 0.9906 | 0.9947 | 0.9923 |
| | Mag | 0.9263 | 0.9374 | 0.9283 |
| | Acc_Gyr | 0.9952 | 0.9861 | 0.9888 |
| | Acc_Mag | 1.0000 | 0.9750 | 0.9800 |
| | Gyr_Mag | 0.9906 | 0.9974 | 0.9937 |
| | Acc_Gyr_Mag | 1.0000 | 0.9889 | 0.9933 |
| Other movements | Acc | 0.6476 | 0.6389 | 0.6186 |
| | Gyr | 0.6179 | 0.5684 | 0.5741 |
| | Mag | 0.6795 | 0.5762 | 0.6081 |
| | Acc_Gyr | 0.7025 | 0.6980 | 0.6886 |
| | Acc_Mag | 0.7815 | 0.7577 | 0.7581 |
| | Gyr_Mag | 0.7213 | 0.6548 | 0.6725 |
| | Acc_Gyr_Mag | 0.7917 | 0.7777 | 0.7739 |
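
For readers less familiar with the metrics reported in Tables 1a and 1b, the following minimal sketch shows how per-activity precision, recall, and F-value can be derived from a confusion matrix such as those in Figures 5 and 6. It is an illustration only and not the authors' evaluation code: the function name `per_class_metrics`, the row/column convention (rows are actual activities, columns are predicted activities), and the three-class counts are all assumptions made for this example.

```python
def per_class_metrics(confusion):
    """Return (precision, recall, F-value) for each class.

    Assumed convention: confusion[i][j] is the number of samples whose
    actual class is i and whose predicted class is j.
    """
    n = len(confusion)
    metrics = []
    for k in range(n):
        true_positive = confusion[k][k]
        actual_total = sum(confusion[k])                        # row sum
        predicted_total = sum(confusion[i][k] for i in range(n))  # column sum
        precision = true_positive / predicted_total if predicted_total else 0.0
        recall = true_positive / actual_total if actual_total else 0.0
        denom = precision + recall
        # F-value as the harmonic mean of precision and recall
        f_value = 2 * precision * recall / denom if denom else 0.0
        metrics.append((precision, recall, f_value))
    return metrics


# Hypothetical counts for three activities (not taken from the paper)
toy = [
    [8, 2, 0],  # actual: standing
    [1, 7, 2],  # actual: sitting
    [0, 1, 9],  # actual: walking
]

for label, (p, r, f) in zip(["standing", "sitting", "walking"],
                            per_class_metrics(toy)):
    print(f"{label}: precision={p:.4f} recall={r:.4f} F={f:.4f}")
```

Note that the F-values in the tables do not always equal the harmonic mean of the tabulated precision and recall. For example, the wrist Acc row for lying in the supine position gives a harmonic mean of about 0.955, whereas the table reports 0.9496. One possible explanation, stated here as an assumption, is that each metric was averaged separately over cross-validation folds.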


© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).