Submitted: 03 December 2024

Posted: 03 December 2024

Abstract

Keywords:

1. Introduction

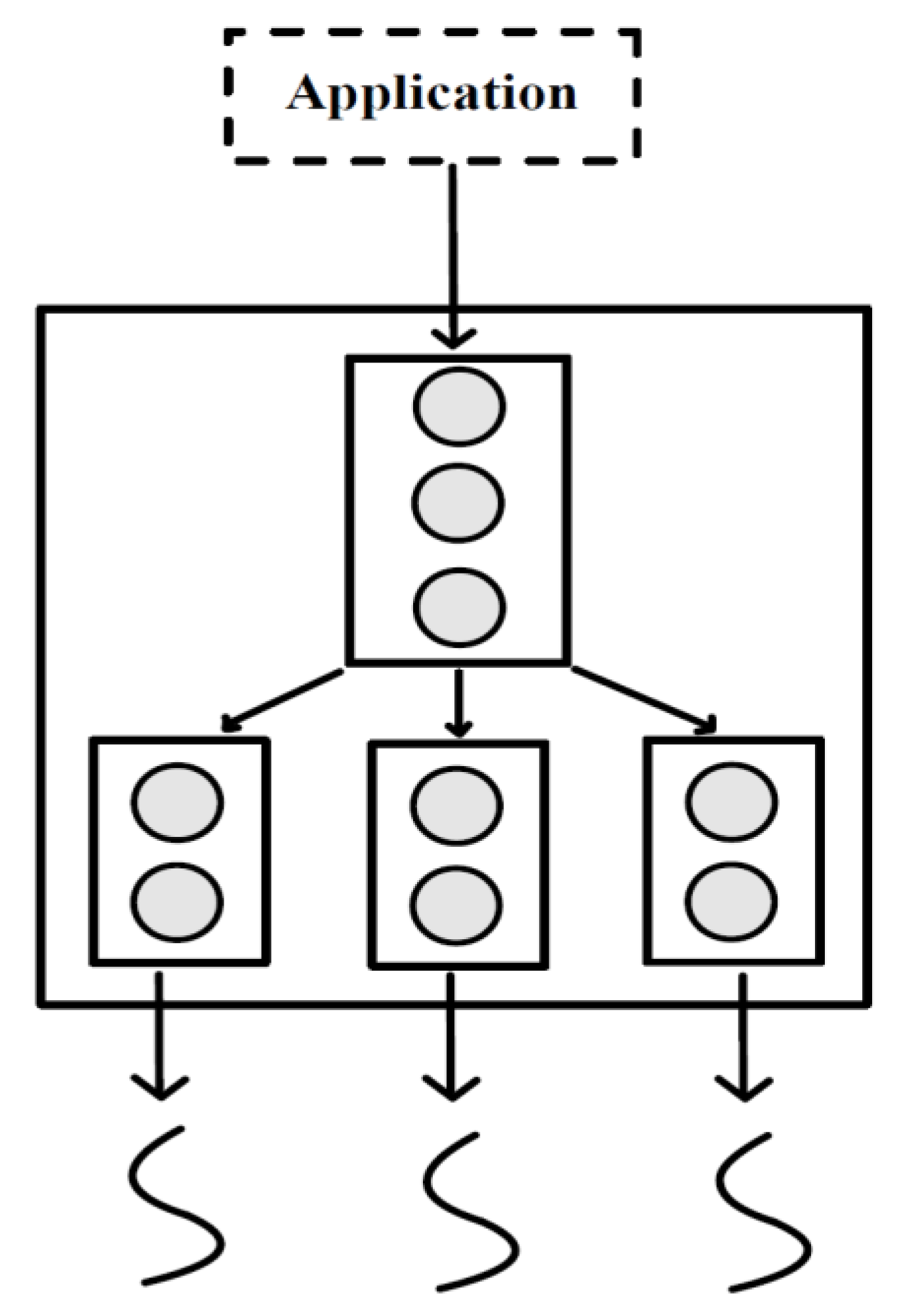

2. Heterogeneous Computing

2.1. Promises and Challenges

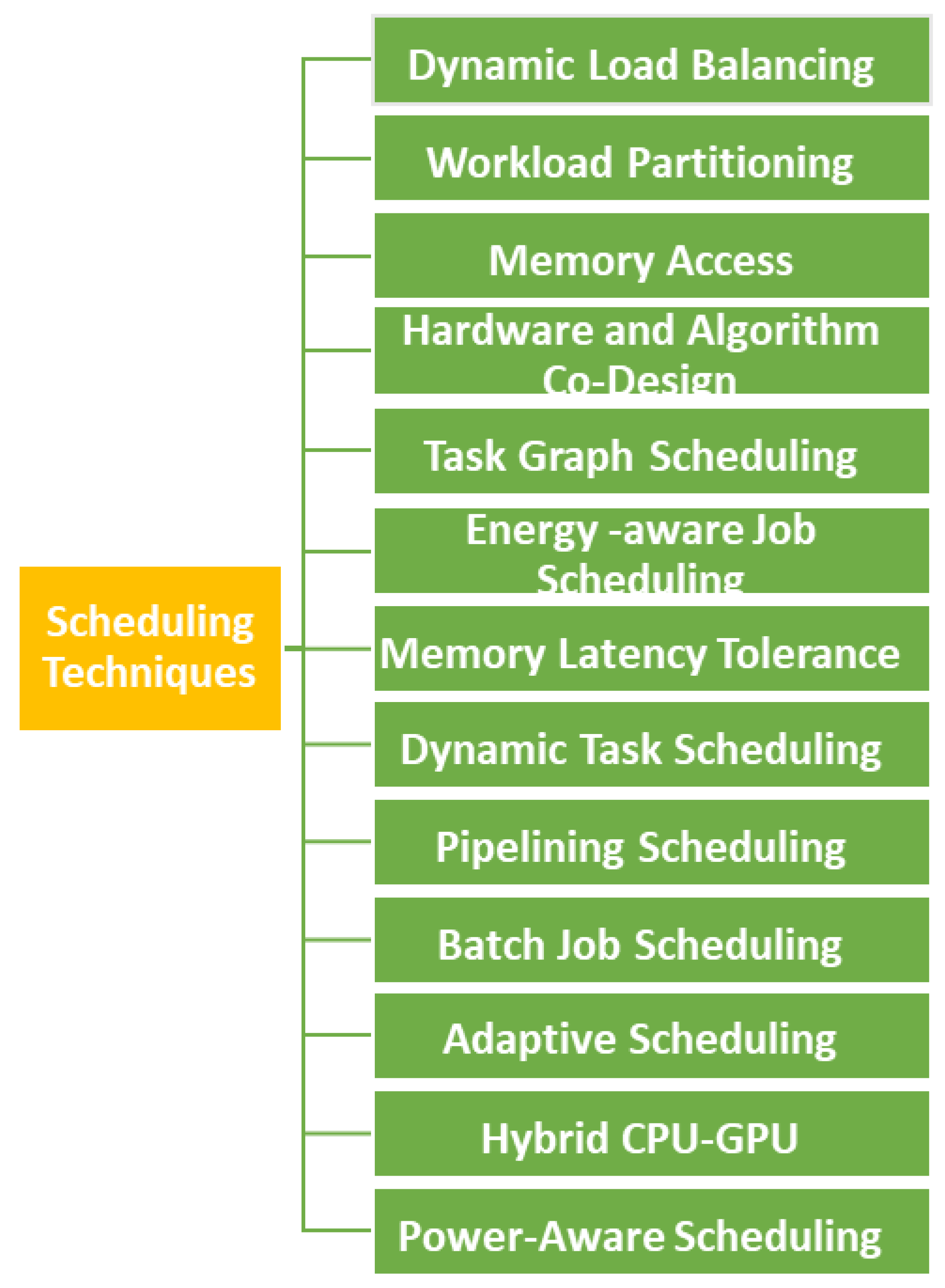

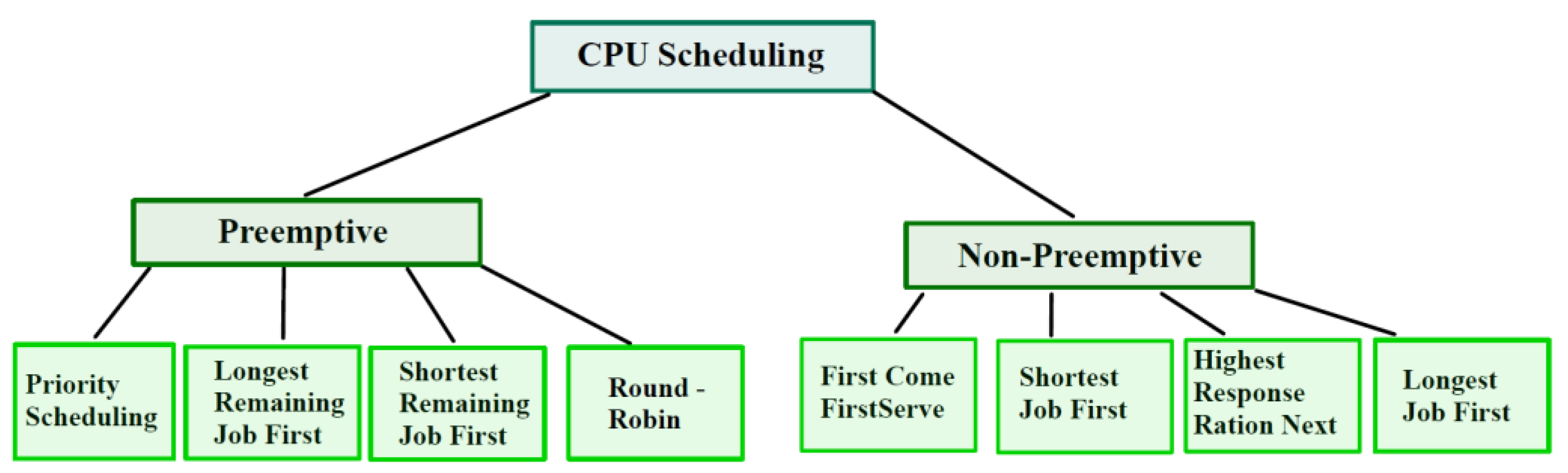

3. Scheduling Strategies

3.1. Dynamic Load Balancing

- Lower Bound Analysis: The authors prove that the competitive ratio (the ratio of makespan achieved by the online algorithm to the optimal makespan) of any online algorithm is lower-bounded by a certain value, which depends on the number of CPUs (m) and GPUs (k). They investigate the influence of task graph knowledge and task flexibility on the lower bound.

- Competitive Algorithm: The authors propose a $(2\sqrt{m/k}+1)$-competitive algorithm, called QA, which improves upon a previous algorithm. They refine both the algorithm and its analysis to obtain this tighter guarantee.

- Generalization to Multiple Processor Types: The authors extend their results to the case of platforms with multiple types of processors, adapting the lower bounds and the online algorithm accordingly.

- Heuristic Approach: The authors propose a simple heuristic based on the QA algorithm and the system-oriented heuristic EFT. This heuristic is both competitive and performs well in practice.
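The allocate-then-schedule idea behind such online algorithms can be illustrated with a minimal Python sketch. It is not the QA algorithm as published: the threshold rule (send a task to a GPU when its CPU-to-GPU speedup is at least $\sqrt{m/k}$), the `Task` fields, and the function names are assumptions made for illustration. Tasks are revealed one at a time with a known ready time, each is placed irrevocably on the earliest-free processor of the chosen type, and the resulting makespan is the numerator of the competitive ratio described above.

```python
import math
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    cpu_time: float      # processing time on one CPU
    gpu_time: float      # processing time on one GPU
    ready: float = 0.0   # time at which all predecessors have finished


def threshold_allocate(task, m, k):
    """Pick a processor type (assumed rule, not taken from the source):
    use a GPU when the speedup cpu_time / gpu_time is at least sqrt(m / k)."""
    return "gpu" if task.cpu_time / task.gpu_time >= math.sqrt(m / k) else "cpu"


def schedule_online(tasks, m, k):
    """Place tasks in arrival order on m CPUs and k GPUs; decisions are never revised."""
    free_at = {"cpu": [0.0] * m, "gpu": [0.0] * k}
    placement, makespan = [], 0.0
    for task in tasks:
        kind = threshold_allocate(task, m, k)
        pool = free_at[kind]
        # Earliest-available processor of the chosen type.
        idx = min(range(len(pool)), key=pool.__getitem__)
        start = max(pool[idx], task.ready)
        duration = task.cpu_time if kind == "cpu" else task.gpu_time
        pool[idx] = start + duration
        placement.append((task.name, kind, idx, start, pool[idx]))
        makespan = max(makespan, pool[idx])
    return placement, makespan


if __name__ == "__main__":
    demo = [Task("A", 8, 2), Task("B", 3, 2), Task("C", 10, 5)]
    plan, span = schedule_online(demo, m=4, k=1)
    for row in plan:
        print(row)
    print("makespan:", span)
```

With four CPUs and one GPU the assumed threshold is 2, so tasks A and C go to the GPU and B to a CPU. A heuristic of the EFT kind would instead compare finish times on every processor; the fixed threshold is what makes a worst-case bound possible in this style of analysis.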

3.2. Workload Partitioning

3.3. Memory Access Scheduling

- Request Source Isolation: To prevent interference between CPU and GPU memory requests, a new memory request queue is created based on the request source. This isolation ensures that GPU requests do not interfere with CPU requests and vice versa, enhancing overall memory access performance.

- Dynamic Bank Partitioning: For the CPU request queue, a dynamic bank partitioning strategy is implemented. It dynamically maps the CPU requests to different bank sets based on the memory characteristics of the applications. By considering the access behavior and characteristics of multiple parallel applications, this strategy eliminates memory request interference among CPU applications without affecting bank-level parallelism.

- Criticality-Aware Scheduling: The GPU request queue incorporates the concept of criticality to measure the difference in memory access latency among GPU cores. A criticality-aware memory scheduling approach is implemented, prioritizing requests based on their criticality level. This strategy balances the locality and criticality of application access, effectively reducing memory access latency differences among GPU cores.
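The three mechanisms above can be sketched in a few lines of Python. This is an illustrative model under stated assumptions, not the scheduler from the cited work: requests are plain dictionaries, `intensity` stands in for whatever memory characteristics drive the bank mapping, `criticality` is a per-core score supplied by the caller, and `locality_bonus` is a hypothetical weight for row-buffer hits.

```python
from collections import deque


def isolate_by_source(requests):
    """Request source isolation: one queue per source, so the CPU and GPU
    streams are arbitrated separately."""
    queues = {"cpu": deque(), "gpu": deque()}
    for req in requests:
        queues[req["source"]].append(req)
    return queues


def partition_banks(intensity, num_banks):
    """Dynamic bank partitioning (sketch): give every CPU application a
    disjoint bank set, with more banks for more memory-intensive applications.
    Assumes num_banks >= number of applications."""
    counts = {app: 1 for app in intensity}
    for _ in range(num_banks - len(intensity)):
        # Hand the next bank to the application with the highest load per bank.
        neediest = max(counts, key=lambda a: intensity[a] / counts[a])
        counts[neediest] += 1
    banks, next_bank = {}, 0
    for app, n in counts.items():
        banks[app] = list(range(next_bank, next_bank + n))
        next_bank += n
    return banks


def pick_gpu_request(gpu_queue, criticality, open_row, locality_bonus=1.0):
    """Criticality-aware selection (sketch): serve the request whose core is
    most critical, with a bonus when it hits the currently open row."""
    def score(req):
        hit = req["row"] == open_row.get(req["bank"])
        return criticality[req["core"]] + (locality_bonus if hit else 0.0)

    best = max(gpu_queue, key=score)
    gpu_queue.remove(best)
    return best
```

Re-running `partition_banks` whenever the measured intensities change is what would make the mapping dynamic. Raising `locality_bonus` shifts the GPU policy toward row-buffer locality, while lowering it favors the lagging cores.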

3.4. Hardware and Algorithm Co-Design

3.5. Prefetching Mechanisms

3.6. Fine-Grained Warp Scheduling

3.7. Context Switching Strategies

3.8. Energy-Aware Job Scheduling Techniques

3.9. Memory Latency Tolerance

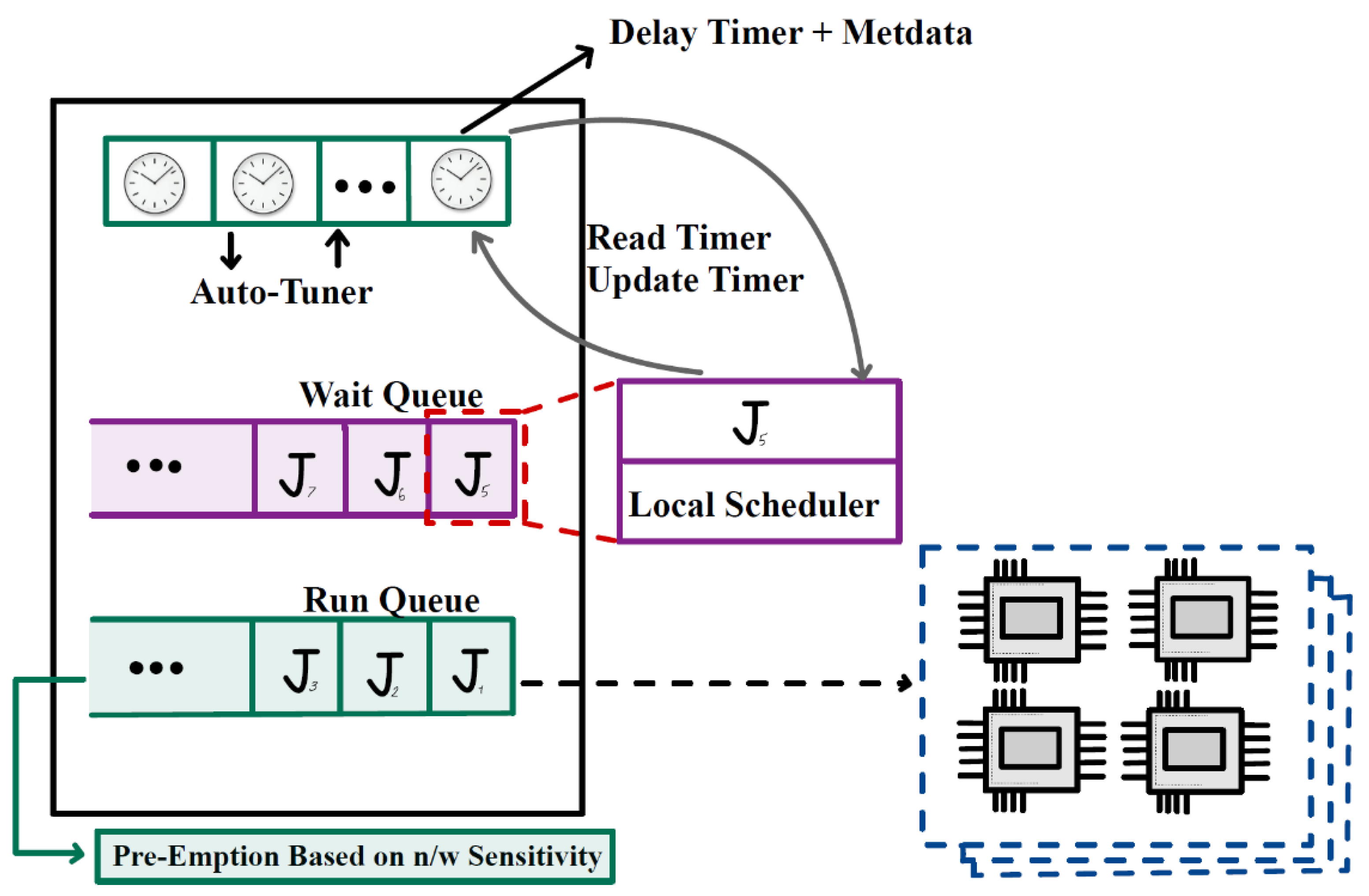

3.10. Dynamic Task Scheduling

- Load-Aware Scheduling Strategy:

- Genetic Algorithm-Based Scheduling Strategy:

3.11. Adaptive Scheduling

- Off-line-Tuned Static and Dynamic Scheduling Strategies:

- Adaptive Heterogeneous Scheduling Strategies:

- Increasing Abstraction Level of the Programming Model:

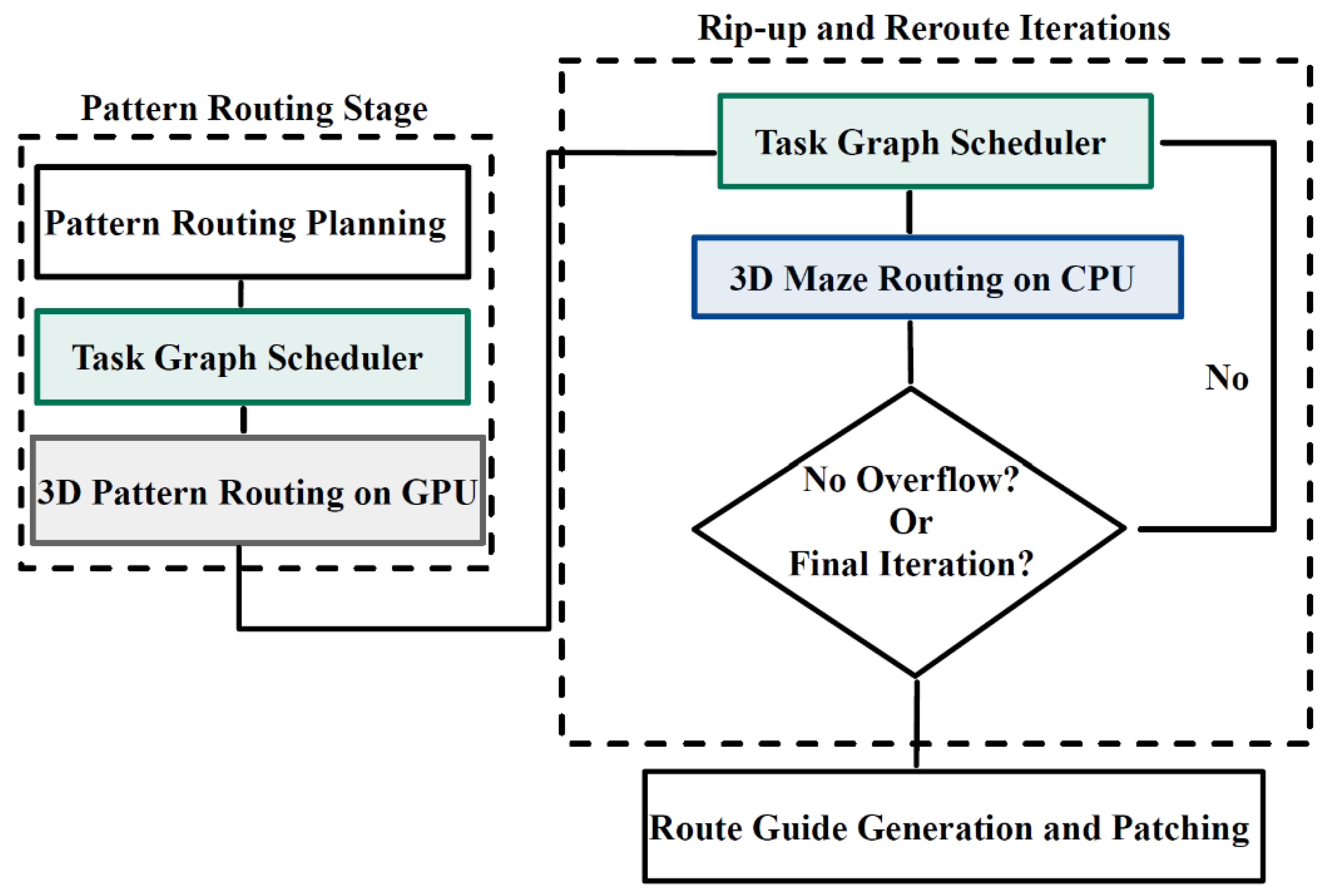

3.12. Task Graph Scheduling

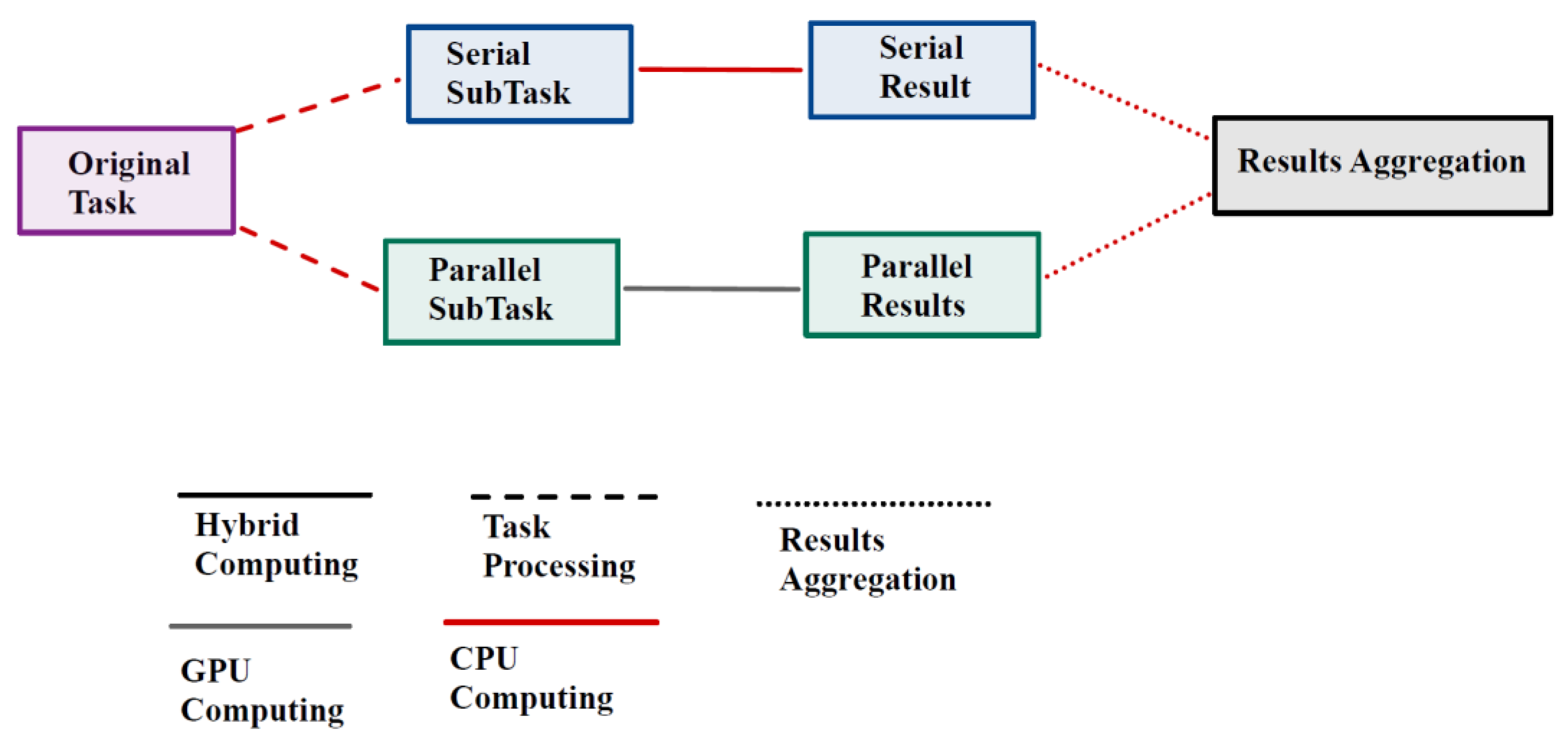

3.13. Hybrid Scheduling

3.14. Pipelined Scheduling

3.15. Power Aware/Deadline Aware Scheduling

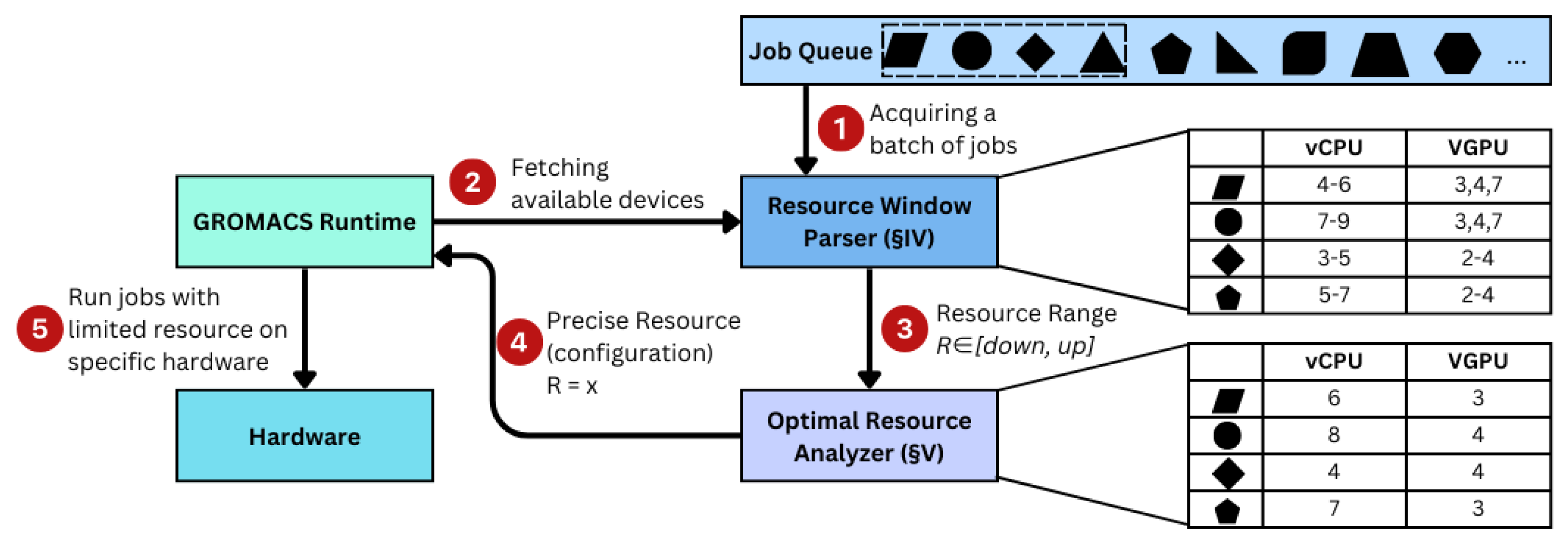

3.16. Batch Job Scheduling

4. Summary of the Review

5. Conclusions and Future Scope

References

- Mittal, S.; Vetter, J.S. A Survey of CPU-GPU Heterogeneous Computing Techniques. ACM Comput. Surv. 2015, 47, 1–35.

- Fang, J.; Wang, M.; Wei, Z. A Memory Scheduling Strategy for Eliminating Memory Access Interference in Heterogeneous System. J. Supercomput. 2020, 76, 3129–3154.

- Tang, X.; Fu, Z. CPU-GPU Utilization Aware Energy-Efficient Scheduling Algorithm on Heterogeneous Computing Systems. IEEE Access 2020, 8, 58948–58958.

- Wang, Z.; et al. Enabling Latency-Aware Data Initialization for Integrated CPU/GPU Heterogeneous Platform. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2020, 39, 3433–3444.

- Roeder, J.; Rouxel, B.; Altmeyer, S.; Grelck, C. Energy-aware Scheduling of Multi-version Tasks on Heterogeneous Real-time Systems. In Proceedings of the 36th Annual ACM Symposium on Applied Computing, March 2021; pp. 501–510.

- Fang, J.; Zhang, J.; Lu, S.; Zhao, H. Exploration on task scheduling strategy for CPU-GPU heterogeneous computing system. In Proceedings of the 2020 IEEE Computer Society Annual Symposium on VLSI (ISVLSI); IEEE, 2020; pp. 306–311.

- Canon, L.-C.; Marchal, L.; Simon, B.; Vivien, F. Online scheduling of task graphs on heterogeneous platforms. IEEE Trans. Parallel Distrib. Syst. 2019, 31, 721–732.

- Constantinescu, D.-A.; Navarro, A.; Corbera, F.; Fernández-Madrigal, J.-A.; Asenjo, R. Efficiency and Productivity for Decision Making on Low-Power Heterogeneous CPU+GPU SoCs. J. Supercomput. 2021, 77, 44–65.

- Li, Z.; et al. Efficient Algorithms for Task Mapping on Heterogeneous CPU/GPU Platforms for Fast Completion Time. J. Syst. Archit. 2021, 114, 101936.

- Grinsztajn, N.; et al. Readys: A Reinforcement Learning Based Strategy for Heterogeneous Dynamic Scheduling. In Proceedings of the 2021 IEEE International Conference on Cluster Computing (CLUSTER); IEEE, 2021.

- Seo, W.; et al. SLO-aware Inference Scheduler for Heterogeneous Processors in Edge Platforms. ACM Trans. Archit. Code Optim. 2021, 18, 1–26.

- Harichane, I.; Makhlouf, S.A.; Belalem, G. KubeSC-RTP: Smart Scheduler for Kubernetes Platform on CPU-GPU Heterogeneous Systems. Concurrency Comput. Pract. Exp. 2022, 34, e7108.

- Asad, A.; Kaur, R.; Mohammadi, F. A Survey on Memory Subsystems for Deep Neural Network Accelerators. Future Internet 2022, 14, 146.

- Fang, J.; et al. Resource Scheduling Strategy for Performance Optimization Based on Heterogeneous CPU-GPU Platform. 2022.

- Kaur, R.; Mohammadi, F. Power Estimation and Comparison of Heterogeneous CPU-GPU Processors. In Proceedings of the 2023 IEEE 25th Electronics Packaging Technology Conference (EPTC), 5 December 2023; IEEE; pp. 948–951.

- Albahar, H.; et al. SchedTune: A Heterogeneity-Aware GPU Scheduler for Deep Learning. In Proceedings of the 2022 22nd IEEE International Symposium on Cluster, Cloud and Internet Computing (CCGrid); IEEE, 2022.

- He, Z.; Sun, Y.; Wang, B.; Li, S.; Zhang, B. CPU-GPU Heterogeneous Computation Offloading and Resource Allocation Scheme for Industrial Internet of Things. IEEE Internet of Things J. 2023.

- Hu, B.; Yang, X.; Zhao, M. Energy-Minimized Scheduling of Intermittent Real-Time Tasks in a CPU-GPU Cloud Computing Platform. IEEE Trans. Parallel Distrib. Syst. 2023, 34, 2391–2402.

- Garcia-Hernandez, J.J.; Morales-Sandoval, M.; Elizondo-Rodríguez, E. A Flexible and General-Purpose Platform for Heterogeneous Computing. Computation 2023, 11, 97.

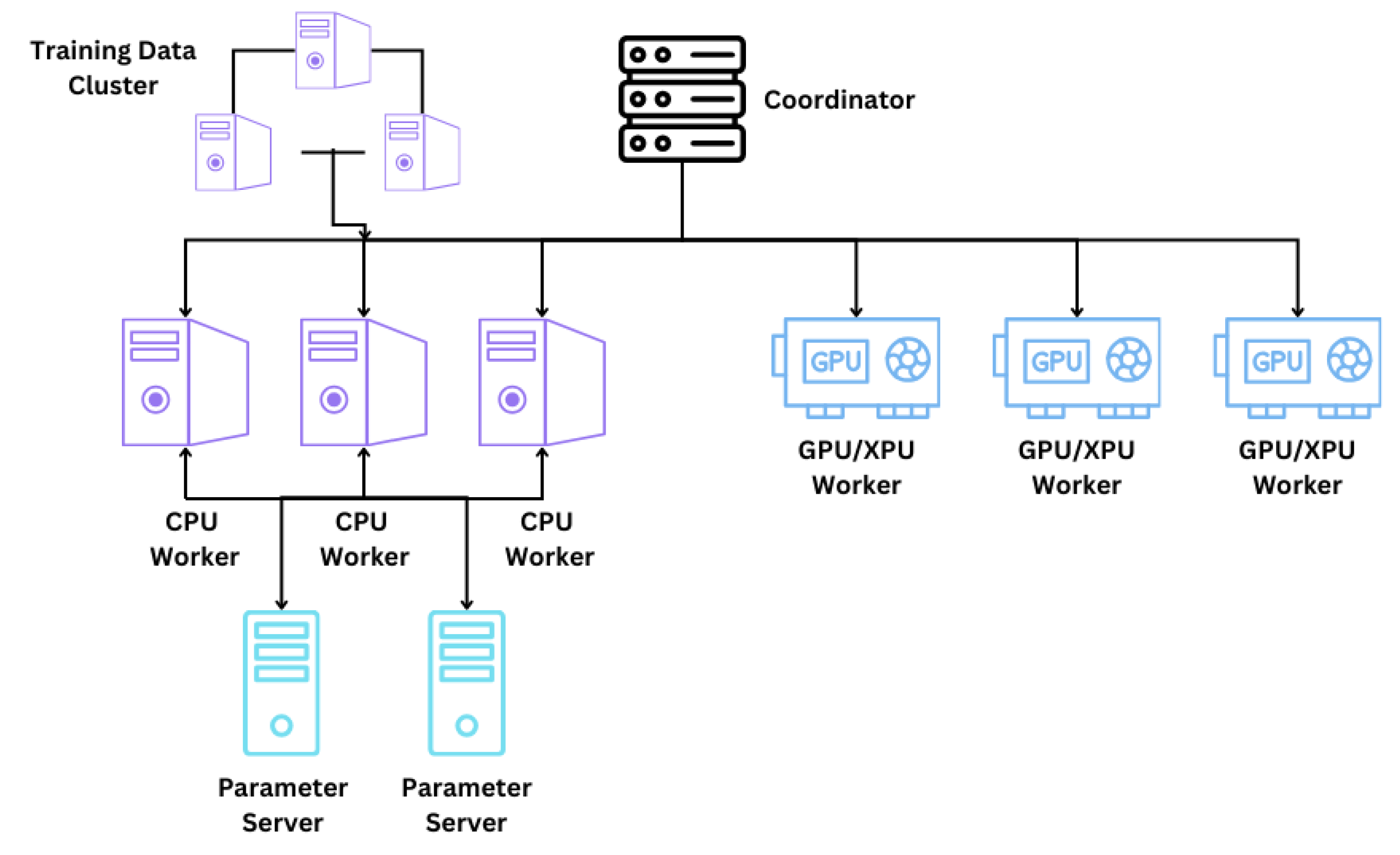

- Liu, J.; Wu, Z.; Feng, D.; Zhang, M.; Wu, X.; Yao, X.; Yu, D.; Ma, Y.; Zhao, F.; Dou, D. Heterps: Distributed deep learning with reinforcement learning based scheduling in heterogeneous environments. Future Gener. Comput. Syst. 2023, 148, 106–117.

- Kaur, R.; Mohammadi, F. Comparative Analysis of Power Efficiency in Heterogeneous CPU-GPU Processors. In Proceedings of the 2023 Congress in Computer Science, Computer Engineering, & Applied Computing (CSCE), 24 July 2023; IEEE; pp. 756–758.

- Kaur, R.; Saluja, N. Comparative Analysis of 1-bit Memory Cell in CMOS and QCA Technology. In Proceedings of the 2018 International Flexible Electronics Technology Conference (IFETC), Ottawa, ON, Canada; 2018; pp. 1–3.

- Yang, Z.; Wu, H.; Xu, Y.; Wu, Y.; Zhong, H.; Zhang, W. Hydra: Deadline-aware and efficiency-oriented scheduling for deep learning jobs on heterogeneous GPUs. IEEE Trans. Comput. 2023, 72, 2224–2236.

- Zhang, X. Mixtran: An efficient and fair scheduler for mixed deep learning workloads in heterogeneous GPU environments. Cluster Comput. 2024, 27, 2775–2784.

- Zou, A.; Li, J.; Gill, C.D.; Zhang, X. RTGPU: Real-time GPU scheduling of hard deadline parallel tasks with fine-grain utilization. IEEE Trans. Parallel Distrib. Syst. 2023, 34, 1450–1465.

- Hsu, K.-C.; Tseng, H.-W. Simultaneous and Heterogeneous Multithreading. In Proceedings of the 56th Annual IEEE/ACM International Symposium on Microarchitecture, 2023.

- Gao, X. TAS: A Temperature-Aware Scheduling for Heterogeneous Computing. IEEE Access 2023, 11, 54773–54781.

- Chun, C.-K.; Lai, K.-C. A Load Balance Scheduling Approach for Generative AI on Cloud-Native Environments with Heterogeneous Resources. In Proceedings of the 2024 10th International Conference on Applied System Innovation (ICASI); IEEE, 2024.

- Chen, H.; Zhang, N.; Xiang, S.; Zeng, Z.; Dai, M.; Zhang, Z. Allo: A Programming Model for Composable Accelerator Design. Proc. ACM Program. Lang. 2024, 8, 593–620.

- Ahmad, W.; Gautam, G.; Alam, B.; Bhati, B.S. An Analytical Review and Performance Measures of State-of-Art Scheduling Algorithms in Heterogeneous Computing Environment. Arch. Comput. Methods Eng. 2024, 1–23.

- Belkhiri, A.; Dagenais, M. Analyzing GPU Performance in Virtualized Environments: A Case Study. Future Internet 2024, 16, 72.

- Xue, W.; Wang, H.; Roy, C.J. CPU-GPU heterogeneous code acceleration of a finite volume Computational Fluid Dynamics solver. Future Gener. Comput. Syst. 2024, 158, 367–377.

- Ye, Z.; Gao, W.; Hu, Q.; Sun, P.; Wang, X.; Luo, Y.; Zhang, T.; Wen, Y. Deep Learning Workload Scheduling in GPU Datacenters: A Survey. ACM Comput. Surv. 2024, 56, 1–38.

- Tayeb, H.; Bramas, B.; Faverge, M.; Guermouche, A. Dynamic Tasks Scheduling with Multiple Priorities on Heterogeneous Computing Systems. 2024.

- Tariq, U.U.; Ali, H.; Nadeem, M.S.; Jan, S.R.; Sabrina, F.; Grandhi, S.; Wang, Z.; Liu, L. Energy-aware Successor Tree Consistent EDF Scheduling for PCTGs on MPSoCs. IEEE Access 2024.

- Kwon, S.; Bahn, H. Enhanced Scheduling of AI Applications in Multi-Tenant Cloud Using Genetic Optimizations. Appl. Sci. 2024, 14, 4697.

- Zhou, Y.; Ren, Z.; Shao, E.; Ma, L.; Hu, Q.; Wang, L.; Tan, G. FILL: A heterogeneous resource scheduling system addressing the low throughput problem in GROMACS. CCF Trans. High Perform. Comput. 2024, 6, 17–31.

- Wang, Y.; Liu, C.; Wong, D.; Kim, H. GCAPS: GPU Context-Aware Preemptive Priority-based Scheduling for Real-Time Tasks. arXiv preprint arXiv:2406.05221, 2024.

- Wang, S.; Chen, S.; Shi, Y. GPARS: Graph predictive algorithm for efficient resource scheduling in heterogeneous GPU clusters. Future Gener. Comput. Syst. 2024, 152, 127–137.

- Sharma, A.; Bhasi, V.M.; Singh, S.; Kesidis, G.; Kandemir, M.T.; Das, C.R. GPU Cluster Scheduling for Network-Sensitive Deep Learning. arXiv preprint arXiv:2401.16492, 2024.

- Lannurien, V.; Slimani, C.; d'Orazio, L.; Barais, O.; Paquelet, S.; Boukhobza, J. HeROcache: Storage-Aware Scheduling in Heterogeneous Serverless Edge-The Case of IDS. In Proceedings of the 24th IEEE/ACM International Symposium on Cluster, Cloud and Internet Computing, 2024.

- Yan, K.; Song, Y.; Liu, T.; Tan, J.; Wei, X.; Fu, X. HSAS: Efficient task scheduling for large scale heterogeneous systolic array accelerator cluster. Future Gener. Comput. Syst. 2024, 154, 440–450.

- Shobaki, G.; Muyan-Özçelik, P.; Hutton, J.; Linck, B.; Malyshenko, V.; Kerbow, A.; Ramirez-Ortega, R.; Gordon, V.S. Instruction Scheduling for the GPU on the GPU. In Proceedings of the 2024 IEEE/ACM International Symposium on Code Generation and Optimization (CGO); IEEE, 2024; pp. 435–447.

- Mohammadjafari, A.; Khajouie, P. Optimizing Task Scheduling in Heterogeneous Computing Environments: A Comparative Analysis of CPU, GPU, and ASIC Platforms Using E2C Simulator. arXiv preprint arXiv:2405.08187, 2024.

- Ndamlabin, E.; Bramas, B. RSCHED: An effective heterogeneous resources management for simultaneous execution of task-based applications. 2024.

- McGowen, J.; Dagli, I.; Dantam, N.T.; Belviranli, M.E. Scheduling for Cyber-Physical Systems with Heterogeneous Processing Units under Real-World Constraints. In Proceedings of the 38th ACM International Conference on Supercomputing, 2024; pp. 298–311.

- Verma, P.; Maurya, A.K.; Yadav, R.S. A survey on energy-efficient workflow scheduling algorithms in cloud computing. Softw. Pract. Exp. 2024, 54, 637–682.

- Fang, J.; Wei, Z.; Liu, Y.; Hou, Y. TB-TBP: A task-based adaptive routing algorithm for network-on-chip in heterogeneous CPU-GPU architectures. J. Supercomput. 2024, 80, 6311–6335.

- Al Abdul Wahid, S.; Asad, A.; Mohammadi, F. A Survey on Neuromorphic Architectures for Running Artificial Intelligence Algorithms. Electronics 2024, 13, 2963.

- Asad, A.; Kaur, R.; Mohammadi, F. Noise Suppression Using Gated Recurrent Units and Nearest Neighbor Filtering. In Proceedings of the 2022 International Conference on Computational Science and Computational Intelligence (CSCI), Las Vegas, NV, USA, 2022; pp. 368–372.

- Kaur, R.; Asad, A.; Mohammadi, F. A Comprehensive Review of Processing-in-Memory Architectures for Deep Neural Networks. Computers 2024, 13, 174.

| Paper | Scheduling Technique | Description | Focus | Flexibility | Advantages | Challenges |
|---|---|---|---|---|---|---|
| [2] | Fine-grained warp scheduling | Prioritizes individual threads within a warp to enhance parallelism. | Thread execution optimization | High | Enhanced parallelism, reduced resource contention | Increased complexity, potential overhead |
| [4] | Memory latency tolerance | Overlaps memory access with computation to minimize performance impact. | Memory access optimization | Medium | Reduced memory latency impact | Complexity in implementation |
| [2,24] | Memory bank parallelism | Exploits GPU memory access capabilities to increase throughput. | Memory throughput optimization | Medium | Improved memory access patterns | Potential conflicts in memory banks |
| [2] | Context switching | Efficiently switches between warps to optimize GPU resource usage. | Resource utilization optimization | High | Reduced idle times, better utilization | Overhead and potential synchronization issues |
| [2] | Prefetching mechanisms | Anticipates and fetches data in advance to reduce memory access latency. | Data access optimization | Low | Reduced latency, improved data availability | Prediction overhead, redundancy risk |
| [7,10] | Dynamic load balancing | Redistributes workload in real time based on system conditions. | Workload distribution | High | Improved responsiveness, balanced resources | Potential runtime overhead |
| [6,39] | Dynamic task scheduling | Real-time adaptation of task execution priorities to maximize system efficiency. | Task execution optimization | High | Adaptability to workload changes | Higher runtime complexity |
| [16,17] | Adaptive scheduling | Adjusts policies dynamically based on workload and system state. | Workload adaptation | High | Better resource utilization | May incur frequent adjustments |
| [14,42] | Hybrid scheduling | Combines static and dynamic approaches for optimized task execution. | Mixed scheduling strategies | Medium | Flexibility, efficiency in diverse workloads | Requires careful design |
| [23] | Power-aware scheduling | Manages energy consumption while maintaining performance. | Energy efficiency | Medium | Reduced power usage | May compromise speed |
| [25,38] | Real-time GPU scheduling | Focuses on guaranteeing deadlines for GPU-based tasks. | Timing-critical applications | Medium | Ensures predictable performance | Limited to real-time systems |
| [33,36] | Batch job scheduling | Processes multiple tasks in batches to optimize resource usage. | Resource optimization | Medium | High throughput | May cause latency for smaller tasks |
| [20] | Reinforcement learning-based scheduling | Utilizes RL to dynamically allocate tasks across heterogeneous resources. | Intelligent task allocation | High | Optimized scheduling in uncertain environments | Training overhead, scalability |
| [29] | Composable schedules | Incrementally builds custom schedules for modular hardware designs. | Hardware optimization | High | Flexibility in hardware design | Limited generalizability |
| [31,37] | Resource partitioning | Divides resources between tasks to improve throughput and reduce conflicts. | Resource allocation | Medium | Improved resource utilization | May require significant tuning |
| [41] | Storage-aware scheduling | Optimizes storage placement and access to reduce overhead in serverless edge computing. | Storage and task placement | Medium | Better QoS and reduced cold starts | Relies on caching performance |
| [8,27] | Temperature-aware scheduling | Adapts task schedules to maintain system performance under temperature constraints. | Thermal optimization | Medium | Prolonged hardware lifespan, performance stability | Overhead of temperature monitoring |
| [40] | Network-sensitive scheduling | Minimizes communication delays for distributed deep learning workloads by consolidating jobs. | Communication overhead optimization | Medium | Reduced communication delays, faster completion | Complex job placement algorithms |
| [43] | Register-pressure-aware scheduling | Balances schedule length and register usage to optimize GPU task execution. | Instruction scheduling | Medium | Improved GPU instruction performance | Algorithm complexity |
| [18,44] | Task graph scheduling | Optimizes execution of task dependencies across heterogeneous platforms. | Dependency management | Medium | Reduced completion times | High complexity for large workloads |
| [19,32] | Pipelined task scheduling | Overlaps task execution stages to improve efficiency and system throughput. | Staged execution optimization | High | Faster task completion | Increased task management complexity |
| [35] | Energy-efficient scheduling | Uses successor tree consistency and DVS to minimize power consumption in heterogeneous systems. | Power optimization | Medium | Reduced energy usage | May impact real-time performance |
| [45,48] | Task-based routing | Plans routes and allocates resources dynamically in heterogeneous NoC architectures. | Network-on-Chip scheduling | Medium | Optimized resource allocation, reduced latency | High computational requirements |

| Ref No. | Scheduling Technique | Focus Area | Strategy Type | Key Features |
|---|---|---|---|---|
| [2] | Warp Scheduling | Thread assignment to warps | Dynamic | Efficient resource allocation and thread management |
| [2] | Memory Latency Tolerance | Minimizing memory latency | Dynamic | Overlapping memory access and computation |
| [2,24] | Memory Bank Parallelism | Enhancing memory throughput | Dynamic | Exploiting parallel memory access capabilities |
| [2] | Context Switching | Optimizing resource usage | Dynamic | Efficient warp switching for resource optimization |
| [2] | Prefetching Mechanisms | Data access optimization | Dynamic | Anticipating and fetching data in advance |
| [2] | Fine-grained Scheduling | Individual thread prioritization | Dynamic | Enhancing parallelism and reducing contention |
| [46] | Workload Partitioning | CPU-GPU workload division | Static/Dynamic | Effective distribution based on workload and system conditions |
| [2,24] | Memory Access Scheduling | Memory latency optimization | Dynamic | Effective scheduling to reduce memory latency |
| [9,12,18,27,38,47,48] | Task Graph Scheduling | Task graph optimization | Dynamic | Dynamic redistribution based on task graph characteristics |
| [7,10,28] | Dynamic Load Balancing | Workload redistribution | Dynamic | Adapting workload distribution in real-time |
| [2] | Hardware-Algorithm Co-design | Optimization for specific algorithms | Dynamic | Co-design approaches for algorithm and hardware optimization |
| [6,18,26,37,39,45] | Dynamic Task Scheduling | Real-time task optimization | Dynamic | Adapting task priorities based on system load |
| [8,16,17,40] | Adaptive Scheduling Policies | Dynamic scheduling adjustments | Dynamic | Real-time policy changes based on workload and system state |
| [19,20,25,32] | Pipelined Task Scheduling | Task execution pipelining | Dynamic | Overlapping task execution stages for improved efficiency |
| [14,42,44] | Hybrid Scheduling Strategies | Combined scheduling approaches | Mixed | Utilizing a mix of static and dynamic scheduling techniques |
| [29,30,31,33,35,36] | Batch Job Scheduling | Job processing optimization | Static/Dynamic | Scheduling multiple jobs for optimized resource usage |
| [23,41,43] | Power-aware Scheduling | Energy-efficient task management | Dynamic | Adjusting scheduling based on power consumption considerations |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).