Submitted: 04 November 2024

Posted: 05 November 2024


Abstract

Keywords:

1. Introduction

- Traditional image-processing methods achieved accuracies in the range of 70% to 85%;

- Machine-learning-based methods reported accuracies ranging from 80% to 90%;

- Deep-learning approaches demonstrated higher accuracy, often exceeding 90%.

2. Theoretical Background

2.1. Literature Review

2.2. YOLO Model

3. Methodology

3.1. Environmental Set-Up

3.2. Data Collection and Model Training

3.3. Metrics for Evaluation

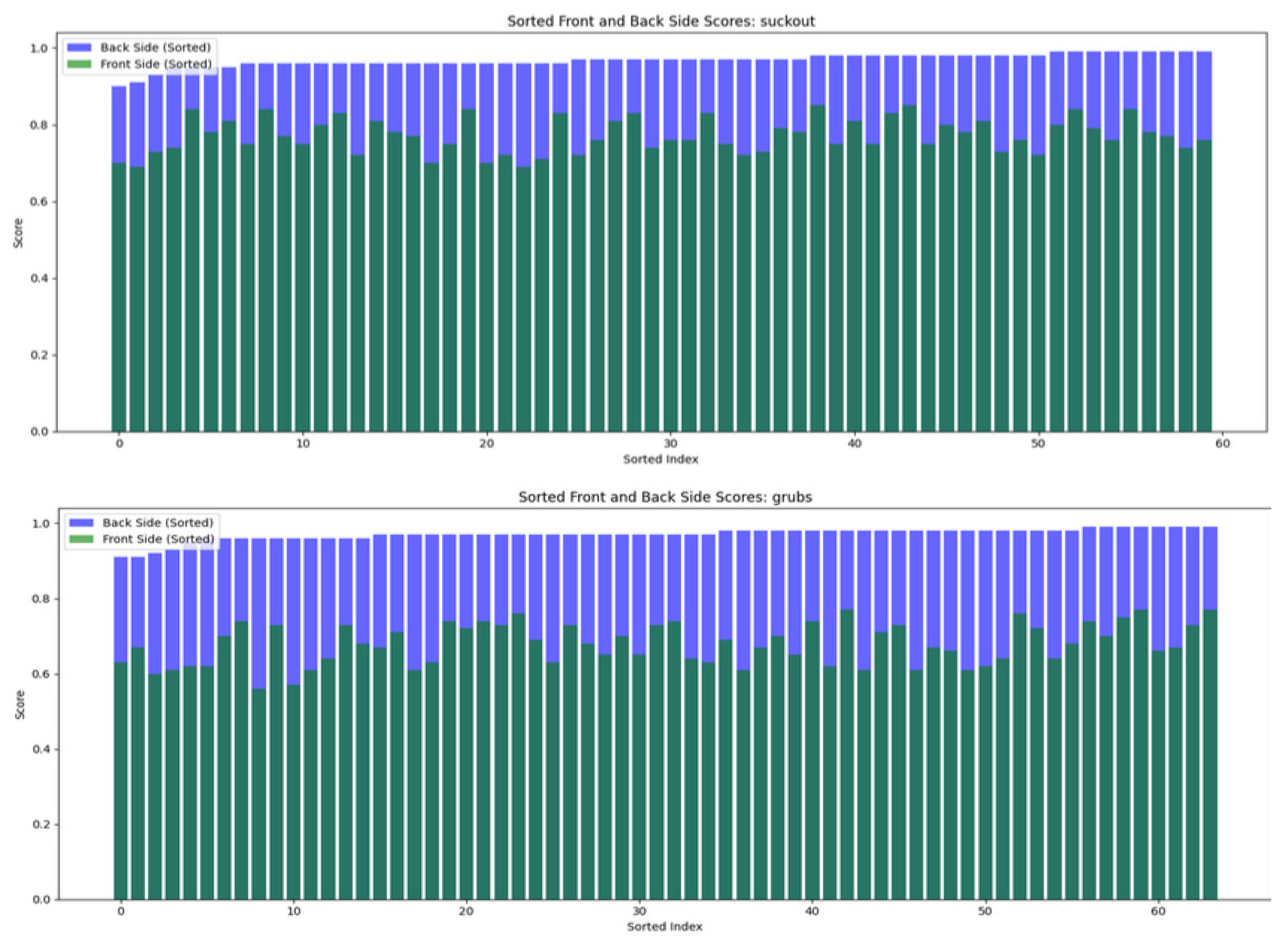

- Front-Only Classification: Based solely on the front side.

- Dual-Side Combined Classification: Combines results from both front and back sides.
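The excerpt does not state how the front and back results are merged in the dual-side mode. The sketch below contrasts the two modes under one assumed fusion rule: detections from both sides are pooled and the most confident label above a threshold wins. The `Detection` structure, the function names, and the 0.5 threshold are hypothetical illustrations, not the authors' implementation.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Detection:
    """One predicted defect on one side of a hide (hypothetical structure)."""
    label: str         # e.g. "grubs" or "suckout"
    confidence: float  # model score in [0, 1]


def best_label(detections: Iterable[Detection], threshold: float = 0.5) -> Optional[str]:
    """Label of the most confident detection at or above `threshold`, else None."""
    kept = [d for d in detections if d.confidence >= threshold]
    best = max(kept, key=lambda d: d.confidence, default=None)
    return best.label if best is not None else None


def front_only(front: list[Detection], threshold: float = 0.5) -> Optional[str]:
    """Front-only classification: the back side is ignored entirely."""
    return best_label(front, threshold)


def dual_side_combined(front: list[Detection], back: list[Detection],
                       threshold: float = 0.5) -> Optional[str]:
    """Dual-side classification: pool both sides (assumed max-confidence fusion)."""
    return best_label([*front, *back], threshold)
```

For example, with a weak grubs detection (0.42) on the front and a confident suckout detection (0.81) on the back, `front_only` returns `None` while `dual_side_combined` returns `"suckout"`. Other fusion rules (e.g. averaging scores per class) are equally plausible.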

4. Results

5. Discussion

6. Conclusions

- We used a controlled, fully enclosed environment with identical digitization conditions for each defect, which is a significant step toward a technical solution for automatic inspection of leather defects in an industrial setting.

- We applied computer vision models to detect, classify, and segment defects on the flesh side of industrial leather.

- We investigated two industrial defect types, known internally as grubs (larval damage) and suckout (cut damage), which, to the best of our knowledge, have not been studied before.

Acknowledgements

| No. | Note |
|---|---|
| 1 | |
| 2 | Available online: https://www.rmaelectronics.com/computar-v1226-mpz/ |
| 3 | Available online: https://www.cvat.ai/ |

References

1. Grand View Research. Available online: https://www.grandviewresearch.com/industry-analysis/leather-goods-market (accessed on 25 October 2024).

2. Cognitive Market Research. Available online: https://www.cognitivemarketresearch.com/genuine-leather-market-report (accessed on 25 October 2024).

3. Mascianà, P. World Statistical Compendium for Raw Hides and Skins, Leather and Leather Footwear. Intergovernmental Group on Meat and Dairy Products, Sub-Group on Hides and Skins. Food and Agriculture Organization of the United Nations, Rome, 2015.

4. Food and Agriculture Organization of the United Nations. FAOSTAT Database. Available online: https://www.fao.org/faostat/en/#data/QCL (accessed on 26 October 2024).

5. Chen, Z.; Jiehang, D.; Zhu, Q.; Wang, H.; Chen, Y. A systematic review of machine-vision-based leather surface defect inspection. Electronics 2022, 11, 2383.

6. Chen, Z.; Zhu, Q.; Zhou, X.; Deng, J.; Song, W. Experimental Study on YOLO-Based Leather Surface Defect Detection. IEEE Access 2024, 12, 32830–32848.

7. Wang, M.; Xie, X.; Qiu, H.; Li, J. GEI-YOLOv9-based leather defect detection algorithm research. Fourth International Conference on Image Processing and Intelligent Control 2024, 13250, 571–576.

8. Tabernik, D.; Šela, S.; Skvarč, J.; Skočaj, D. Segmentation-based deep-learning approach for surface-defect detection. Journal of Intelligent Manufacturing 2020, 31, 759–776.

9. Wróbel, A.; Szymczyk, P. An Application of a Material Defect Detection System Using Artificial Intelligence. International Journal of Modern Manufacturing Technologies 2023, 15, 221–228.

10. Thangakumar, J. Revolutionizing leather quality assurance through deep learning powered precision in defect detection and segmentation by a comparative analysis of Mask RCNN and YOLO v8. In 2024 International Conference on Advances in Data Engineering and Intelligent Computing Systems (ADICS), Chennai, India, April 2024.

11. Silva, V.; Pinho, R.d.; Allahdad, M.K.; Silva, J.; Ferreira, M.J.; Magalhães, L. A Robust Real-time Leather Defect Segmentation Using YOLO. In 2023 18th Iberian Conference on Information Systems and Technologies (CISTI), Aveiro, Portugal, June 2023.

12. Wang, C.Y.; Yeh, I.H.; Liao, H.Y.M. YOLOv9: Learning what you want to learn using programmable gradient information. 2024.

13. Yang, S.; Cao, Z.; Liu, N.; Sun, Y.; Wang, Z. Maritime electro-optical image object matching based on improved YOLOv9. Electronics 2024, 13, 2774.

14. Huang, X.; Liang, C.; Li, X.; Kang, F. An Underwater Crack Detection System Combining New Underwater Image-Processing Technology and an Improved YOLOv9 Network. Sensors 2024, 24, 5981.

15. Rizzieri, N.; Dall’Asta, L.; Ozoliņš, M. Diabetic Retinopathy Features Segmentation without Coding Experience with Computer Vision Models YOLOv8 and YOLOv9. Vision 2024, 8, 48.

16. Wang, X.; Zhang, C.; Qiang, Z.; Liu, C.; Wei, X.; Cheng, F. A Coffee Plant Counting Method Based on Dual-Channel NMS and YOLOv9 Leveraging UAV Multispectral Imaging. Remote Sensing 2024, 16, 3810.

17. Bustamante, A.; Belmonte, L.M.; Morales, R.; Pereira, A.; Fernández-Caballero, A. Bridging the Appearance Domain Gap in Elderly Posture Recognition with YOLOv9. Applied Sciences 2024, 14, 9695.

18. Mi, Z.; Yan, W.Q. Strawberry Ripeness Detection Using Deep Learning Models. Big Data and Cognitive Computing 2024, 8, 92.

19. Li, J.; Feng, Y.; Shao, Y.; Liu, F. IDP-YOLOV9: Improvement of Object Detection Model in Severe Weather Scenarios from Drone Perspective. Applied Sciences 2024, 14, 5277.

20. Wan, L.; Li, Z.; Zhang, C.; Chen, G.; Zhao, P.; Wu, K. Algorithm Improvement for Mobile Event Detection with Intelligent Tunnel Robots. Big Data and Cognitive Computing 2024, 8, 147.

21. Xu, W.; Zhu, D.; Deng, R.; Yung, K.; Ip, A.W. Violence-YOLO: Enhanced GELAN Algorithm for Violence Detection. Applied Sciences 2024, 14, 6712.

22. Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. YOLOv10: Real-time end-to-end object detection. 2024.

23. Hussain, M.; Khanam, R. In-Depth Review of YOLOv1 to YOLOv10 Variants for Enhanced Photovoltaic Defect Detection. Solar 2024, 4, 351–386.

24. Tan, L.; Liu, S.; Gao, J.; Liu, X.; Chu, L.; Jiang, H. Enhanced self-checkout system for retail based on improved YOLOv10. Journal of Imaging 2024, 10, 248.

25. Qiu, X.; Chen, Y.; Cai, W.; Niu, M.; Li, J. LD-YOLOv10: A Lightweight Target Detection Algorithm for Drone Scenarios Based on YOLOv10. Electronics 2024, 13, 3269.

26. Liu, W.; Wang, S.; Gao, X.; Yang, H. A Tomato Recognition and Rapid Sorting System Based on Improved YOLOv10. Machines 2024, 12, 689.

27. Zhang, C.; Peng, N.; Yan, J.; Wang, L.; Chen, Y.; Zhou, Z.; Zhu, Y. A Novel YOLOv10-DECA Model for Real-Time Detection of Concrete Cracks. Buildings 2024, 14, 3230.

28. Ali, M.L.; Zhang, Z. The YOLO Framework: A Comprehensive Review of Evolution, Applications, and Benchmarks in Object Detection. Preprints 2024, accepted.

| Model | Backbone | Head | Label Allocation |
|---|---|---|---|
| YOLOv9 | CSPDarkNet53 | Conv, anchor boxes, multi-label classification, Auto Learning Bounding Box | Programmable Gradient Information (PGI) |
| YOLOv10 | CSPDarkNet53 or custom | Conv, decoupled detection head, anchor-free, multi-label classification | Consistent dual assignments for NMS-free training |
| YOLOv11 | Ultralytics backbone | Multi-task support for detection, segmentation, classification, pose estimation, OBB | Optimized Task-Aligned Assigner |
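The table notes that YOLOv10 uses consistent dual assignments for NMS-free training; detectors without such a design typically apply non-maximum suppression (NMS) to their raw predictions. For readers unfamiliar with the step being removed, the following is a minimal, class-agnostic sketch of greedy NMS on boxes in `(x1, y1, x2, y2)` format. The function names and the 0.5 IoU threshold are illustrative assumptions; this is not code from any YOLO release, and practical pipelines often run NMS per class.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Return indices of the boxes kept by greedy non-maximum suppression."""
    # Visit candidates from the highest to the lowest score.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Discard remaining boxes that overlap the kept box too strongly.
        order = [i for i in order if iou(boxes[best], boxes[i]) < iou_threshold]
    return keep
```

With boxes `(0, 0, 10, 10)`, `(1, 1, 10, 10)`, and `(20, 20, 30, 30)` scored 0.9, 0.8, and 0.7, the first two overlap with IoU 0.81, so `greedy_nms` keeps indices `[0, 2]`.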

| Model | Confidence Error Loss | Box Regression Loss | Classification Loss |
|---|---|---|---|
| YOLOv9 | BCE | PGIoU (Programmable Gradient IoU) | BCE |
| YOLOv10 | BCE | DIoU (Dual IoU) | BCE |
| YOLOv11 | BCE | CIoU + DFL | BCE |
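The table lists CIoU + DFL as the box-regression loss for YOLOv11. As a hedged illustration of the CIoU part only, the sketch below computes the loss 1 - CIoU for a single predicted/target box pair using the standard definition: IoU, minus the squared centre distance ρ² normalized by the squared diagonal c² of the smallest enclosing box, minus an aspect-ratio penalty αv. The DFL (Distribution Focal Loss) term is omitted, and this is not the Ultralytics implementation, which operates on batched tensors.

```python
import math


def ciou_loss(pred, target, eps=1e-7):
    """Return 1 - CIoU for two boxes in (x1, y1, x2, y2) format."""
    px1, py1, px2, py2 = pred
    tx1, ty1, tx2, ty2 = target
    pw, ph = px2 - px1, py2 - py1
    tw, th = tx2 - tx1, ty2 - ty1

    # Plain IoU of the two boxes.
    iw = max(0.0, min(px2, tx2) - max(px1, tx1))
    ih = max(0.0, min(py2, ty2) - max(py1, ty1))
    inter = iw * ih
    iou = inter / (pw * ph + tw * th - inter + eps)

    # rho^2: squared distance between the box centres.
    rho2 = ((px1 + px2 - tx1 - tx2) ** 2 + (py1 + py2 - ty1 - ty2) ** 2) / 4
    # c^2: squared diagonal of the smallest box enclosing both boxes.
    cw = max(px2, tx2) - min(px1, tx1)
    ch = max(py2, ty2) - min(py1, ty1)
    c2 = cw ** 2 + ch ** 2 + eps

    # v measures aspect-ratio mismatch; alpha weights it by how good the IoU already is.
    v = (4 / math.pi ** 2) * (math.atan(tw / (th + eps)) - math.atan(pw / (ph + eps))) ** 2
    alpha = v / (1 - iou + v + eps)

    return 1 - (iou - rho2 / c2 - alpha * v)
```

Identical boxes give a loss of essentially 0, while non-overlapping boxes give a loss above 1 because the centre-distance penalty still provides a gradient when IoU is 0.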

| Leather side | Sample size, grubs | Sample size, suckout | Average detection accuracy, grubs (%) | Average detection accuracy, suckout (%) | Average classification accuracy, grubs (%) | Average classification accuracy, suckout (%) |
|---|---|---|---|---|---|---|
| Grain side | 300 | 300 | 85.8 | 87.1 | 66.8 | 78.2 |
| Flesh side | 300 | 300 | 93.5 | 91.8 | 98.2 | 97.6 |

| Metric | Grain side (%) | Flesh side (%) |
|---|---|---|
| Accuracy | 85 | 93 |
| F1-score | 86 | 93 |
| Precision | 87 | 92 |
| Recall | 84 | 94 |
| Suckout accuracy | 87 | 92 |
| Grubs accuracy | 85 | 94 |
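The table reports accuracy, precision, recall, and F1-score for each leather side, but the excerpt does not give the formulas or say how per-class values are averaged. The sketch below assumes the standard binary definitions computed from confusion-matrix counts for a single defect class; the function name and the example counts are hypothetical.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Accuracy, precision, recall and F1 from binary confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}
```

For hypothetical counts TP = 90, FP = 10, FN = 5, TN = 95, this gives accuracy 0.925, precision 0.90, recall ≈ 0.947, and F1 ≈ 0.923. Because F1 is a harmonic mean, recomputing it from already-rounded precision and recall values, or averaging it over classes, can shift the last digit.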

| Leather side | Grubs: mean accuracy (%) | Grubs: STD (%) | Suckout: mean accuracy (%) | Suckout: STD (%) |
|---|---|---|---|---|
| Grain side | 68.44 | 5.83 | 78.28 | 5.1 |
| Flesh side | 97.19 | 1.58 | 96.5 | 1.44 |
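The table reports a mean accuracy and a standard deviation (STD) per leather side and defect type, but the excerpt does not say what these statistics are taken over (for example, repeated training runs or cross-validation folds) or whether the sample or population STD was used. The sketch below assumes a list of per-run accuracies in percent and exposes both STD variants; the function name and the example values are hypothetical.

```python
import statistics


def summarize_runs(accuracies, population=False):
    """Return (mean, std) of per-run accuracies in percent.

    population=False uses the sample standard deviation (n - 1 denominator);
    population=True uses the population form (n denominator). Which form the
    reported STD uses is not stated, so this choice is an assumption.
    """
    mean = statistics.mean(accuracies)
    std = statistics.pstdev(accuracies) if population else statistics.stdev(accuracies)
    return mean, std
```

For example, `summarize_runs([90.0, 92.0, 94.0])` gives a mean of 92.0 and a sample STD of 2.0; the population STD of the same values is about 1.63.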

| Author | Sample size | Defects | Leather side | YOLO model | Best accuracy (%) |
|---|---|---|---|---|---|
| Wang, M. et al. [7] | 6288 | Bubble, dent, broken glue | Grain | YOLOv9 | 94.7 |
| Wróbel, A. and Szymczyk, P. [9] | 400 | General defects (not categorized) | Grain | YOLOv5 | 95 |
| Chen, Z. et al. [6] | 2855 | Cavity, pinhole, scratch, rotten surface, growth line, healing wound, crease, bacterial wound | Grain | YOLOv5-v8 | 85.1 |
| Thangakumar, J. et al. [10] | Not specified | Various leather defects | Not specified | YOLOv8 | 92 |
| Proposed model (this paper) | 1200 | Grubs (larval damage) and suckout (cut damage) | Both grain and flesh | YOLOv11 | 97.6 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).