Submitted: 18 October 2024

Posted: 18 October 2024


Abstract

Keywords:

1. Introduction

2. Objectives

- Compare the performance of traditional models and transformer models on the email classification task with phishing and non-phishing labels, evaluating their efficacy with quantitative metrics such as precision, recall, F1-score, and accuracy.

- Explore the improvements that transformer models bring to text classification by analyzing their classification accuracy and their ability to process complex and diverse content.

- Conduct a thorough analysis of the records of failed classifications produced by both traditional and transformer models. By identifying recurring patterns and root causes of errors, this objective aims to propose actionable improvements and refinements for future phishing detection methodologies, enhancing their effectiveness and reliability.

3. Related Work

3.1. Analysis of Phishing Websites

3.2. Analysis of Phishing URLs

3.3. Analysis of the Content of Phishing Emails

3.4. Deep Learning for Phishing Detection

3.5. Transformer Models for Phishing Detection

3.6. Datasets Used in Previous Investigations

| Year | Dataset Name | Linear Regression | Sequential | Decision Trees | Random Forest | Naïve Bayes | CNN | RoBERTa |
|---|---|---|---|---|---|---|---|---|
| 2020 | Email Classification [29] | 94.08 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2023 | Email Spam Classification [30] | 0 | 86.2 | 0 | 0 | 79.87 | 0 | 78.57 |
| 2001 | Enron Spam Data (No Code) [31] | 0 | 0 | 0 | 0 | 95 | 0 | 0 |
| 2018 | Fraud Email Dataset [32] | 92 | 0 | 0 | 0 | 97 | 0 | 0 |
| 2023 | Phishing Email Detection [33] | 0 | 0 | 93.1 | 0 | 0 | 97 | 99.36 |
| 2023 | Phishing-Mail [34] | 0 | 0 | 92.82 | 0 | 0 | 99.03 | 96.81 |
| 2023 | Pishing Email Detection [35] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2018 | Pishing-2018 Monkey [36] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2019 | Pishing-2019 Monkey [37] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2020 | Pishing-2020 Monkey [38] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2021 | Pishing-2021 Monkey [39] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2022 | Pishing-2022 Monkey [40] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2018 | Private-pishing4mbox [41] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2023 | Spam (or) Ham [42] | 0 | 99.72 | 0 | 0 | 96.9 | 0 | 0 |
| 2020 | Spam Classification for Basic NLP [43] | 0 | 81 | 96.77 | 0 | 98.49 | 0 | 98.33 |
| 2021 | Spam Email [44] | 0 | 96.67 | 97.21 | 0 | 99.13 | 0 | 0 |
| 2021 | Spam_assasin [45] | 0 | 0 | 98.6 | 98.87 | 0 | 0 | 0 |
| 2024 | Phishing Validation Emails Dataset [46] | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

4. Data and Methods

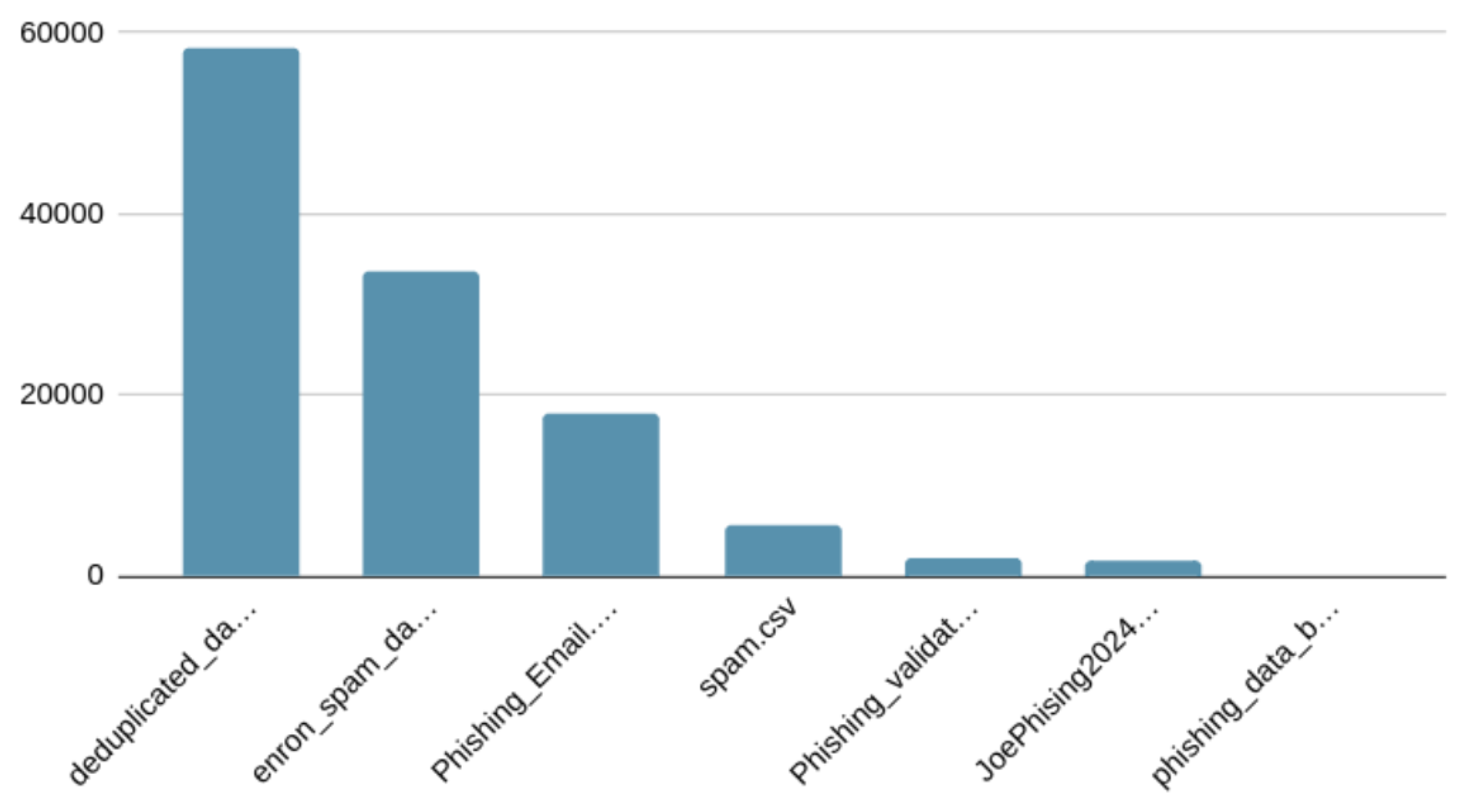

4.1. Data Collection

4.2. Applied Models

- Logistic Regression: A linear model for binary classification. It estimates the probability that a sample belongs to a given class and assigns the label by thresholding that probability.

- Random Forest: An ensemble model that trains many decision trees on random subsets of the data and features, then aggregates their predictions (by majority vote for classification) to improve accuracy and reduce overfitting.

- Support Vector Machine (SVM): A model that classifies data by finding the hyperplane that separates the classes in the feature space with the largest margin.

- Naive Bayes: A probabilistic model that applies Bayes’ theorem under the assumption that features are conditionally independent given the class. It scales well to large datasets.

- BERT (bert-base-uncased): BERT (Bidirectional Encoder Representations from Transformers) is a pretrained transformer encoder that conditions on both the left and right context of each token, allowing it to analyze text in both directions.

- DistilBERT (distilbert-base-uncased): A distilled, more compact version of BERT that is reported to retain about 97% of BERT’s language-understanding performance while consuming fewer resources.

- XLNet (xlnet-base-cased): An autoregressive model that generalizes BERT’s bidirectional context through permutation-based language modeling, capturing dependencies among predicted tokens without the independence assumption that BERT’s masking imposes.

- RoBERTa (roberta-base): RoBERTa (Robustly optimized BERT approach) is a variant of BERT that improves the pretraining procedure, using more data and more training steps to enhance the robustness and precision of the model.

- ALBERT (albert-base-v2): ALBERT (A Lite BERT) is a lightweight version of BERT that reduces the model size through cross-layer parameter sharing and embedding matrix factorization, maintaining high performance with fewer parameters.

4.3. Evaluation Metrics

- Precision: Precision is the proportion of true positives among all the positive predictions. It measures the accuracy of the positive predictions made by the model.

- Recall: Recall is the proportion of true positives among all the actual positive data. It measures the model’s ability to capture all the positive samples.

- F1-Score: The F1-Score is the harmonic mean of precision and recall. It balances both values in a single metric, which makes it more informative than accuracy alone when the classes are imbalanced.

- Accuracy: Accuracy is the proportion of correct predictions among all the predictions made by the model.

- True Positive Rate (TPR): TPR is another term for recall. It represents the proportion of actual positives correctly identified by the model.
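The definitions above can be sketched in a few lines of Python. This is a minimal illustration, not the evaluation code used in the study: the function names and the confusion-matrix counts in the usage example are hypothetical. The final check only uses the Logistic Regression precision and recall for the phishing class reported in Section 5.2.1.

```python
def precision(tp: int, fp: int) -> float:
    """Share of positive predictions that are truly positive."""
    return tp / (tp + fp)


def recall(tp: int, fn: int) -> float:
    """Share of actual positives that were found (also the TPR)."""
    return tp / (tp + fn)


def f1_from_pr(p: float, r: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r)


def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Share of all predictions that are correct."""
    return (tp + tn) / (tp + fp + fn + tn)


# Hypothetical confusion-matrix counts, for illustration only.
tp, fp, fn, tn = 90, 10, 5, 95
p = precision(tp, fp)
r = recall(tp, fn)
print(f"precision={p:.4f} recall={r:.4f} "
      f"f1={f1_from_pr(p, r):.4f} accuracy={accuracy(tp, fp, fn, tn):.4f}")

# Consistency check against reported values: precision 0.9863 and
# recall 0.9801 give an F1-score that rounds to the reported 0.9832.
print(round(f1_from_pr(0.9863, 0.9801), 4))
```

Because the F1-score is fully determined by precision and recall, the same check can be applied to any row of the results tables to catch transcription errors.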

5. Evaluation Experiments

5.1. Experiment Setup

5.1.1. Traditional Machine Learning Model Parameters

- Logistic Regression
  - Maximum Iterations (max_iter): 1000
  - Random State: 42

- Random Forest
  - Number of Estimators (n_estimators): 100
  - Random State: 42

- Support Vector Machine (SVM)
  - Kernel: Linear (kernel='linear')
  - Regularization Parameter (C): 1
  - Random State: 42

- Naive Bayes
  - Alpha (Smoothing Parameter): 1.0
  - Random State: 42
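As a rough sketch, the parameters listed above could map onto scikit-learn estimators as follows. Several points here are assumptions rather than details confirmed by the text: that scikit-learn is the library used, that the Naive Bayes variant is `MultinomialNB`, and the names `TRADITIONAL_MODEL_PARAMS` and `build_traditional_models`. The text feature extraction step (for example TF-IDF) is not described in this section and is left out.

```python
# Hyperparameters as listed in Section 5.1.1.
TRADITIONAL_MODEL_PARAMS = {
    "Logistic Regression": {"max_iter": 1000, "random_state": 42},
    "Random Forest": {"n_estimators": 100, "random_state": 42},
    "Support Vector Machine": {"kernel": "linear", "C": 1, "random_state": 42},
    # Assumption: MultinomialNB is the Naive Bayes variant. It is
    # deterministic and takes no random_state argument, so only the
    # smoothing parameter is passed here.
    "Naive Bayes": {"alpha": 1.0},
}


def build_traditional_models(params=TRADITIONAL_MODEL_PARAMS):
    """Instantiate the four classifiers from the parameter table.

    scikit-learn is imported lazily so the parameter table can be
    inspected without the library installed.
    """
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.linear_model import LogisticRegression
    from sklearn.naive_bayes import MultinomialNB
    from sklearn.svm import SVC

    constructors = {
        "Logistic Regression": LogisticRegression,
        "Random Forest": RandomForestClassifier,
        "Support Vector Machine": SVC,
        "Naive Bayes": MultinomialNB,
    }
    return {name: constructors[name](**kwargs) for name, kwargs in params.items()}
```

Calling `build_traditional_models()` returns a dictionary of unfitted estimators keyed by model name, each of which can then be fitted on vectorized email text with `fit(X_train, y_train)`.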

5.1.2. Transformer Model Parameters

- Model Names:
  - distilbert-base-uncased
  - bert-base-uncased
  - xlnet-base-cased
  - roberta-base
  - albert-base-v2

- Training Settings:
  - Tokenizer: AutoTokenizer from Hugging Face’s transformers library.
  - Dataset: EmailDataset class defined with:
    - Texts and labels from the dataset.
    - Tokenizer for encoding texts with special tokens, padding, and truncation.
  - Optimizer: AdamW optimizer with a learning rate of 2e-5.
  - Device: Utilizes CUDA if available, otherwise CPU.
  - Epochs: 3

- Model Evaluation:
  - Batch Size: 16 for both training and testing DataLoader.
  - Loss Function: Cross-entropy loss.
  - Metrics: Classification report with precision, recall, F1-score, and support.
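The settings above could be wired together roughly as shown below. This is a sketch under stated assumptions, not the authors' training script: `TrainConfig`, `fine_tune`, and the `max_length` of 512 are illustrative choices that do not appear in the setup, and the body of `EmailDataset` is a guess based only on its description. PyTorch and `transformers` are imported inside `fine_tune` so the configuration and dataset class can be read without them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainConfig:
    learning_rate: float = 2e-5  # AdamW learning rate from the setup
    epochs: int = 3
    batch_size: int = 16
    max_length: int = 512        # assumption: not stated in the setup


class EmailDataset:
    """Map-style dataset; DataLoader only needs __len__ and __getitem__."""

    def __init__(self, texts, labels, tokenizer, max_length):
        self.texts = texts
        self.labels = labels
        self.tokenizer = tokenizer
        self.max_length = max_length

    def __len__(self):
        return len(self.texts)

    def __getitem__(self, idx):
        # Encode with special tokens, padding, and truncation.
        enc = self.tokenizer(
            self.texts[idx],
            add_special_tokens=True,
            padding="max_length",
            truncation=True,
            max_length=self.max_length,
            return_tensors="pt",
        )
        return {
            "input_ids": enc["input_ids"].squeeze(0),
            "attention_mask": enc["attention_mask"].squeeze(0),
            "labels": int(self.labels[idx]),
        }


def fine_tune(model_name, texts, labels, cfg=TrainConfig()):
    """Fine-tune one checkpoint (e.g. "roberta-base") for binary classification."""
    import torch
    from torch.utils.data import DataLoader
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForSequenceClassification.from_pretrained(
        model_name, num_labels=2
    ).to(device)

    dataset = EmailDataset(texts, labels, tokenizer, cfg.max_length)
    loader = DataLoader(dataset, batch_size=cfg.batch_size, shuffle=True)
    optimizer = torch.optim.AdamW(model.parameters(), lr=cfg.learning_rate)

    model.train()
    for _ in range(cfg.epochs):
        for batch in loader:
            optimizer.zero_grad()
            # Passing integer labels makes the model return cross-entropy loss.
            out = model(
                input_ids=batch["input_ids"].to(device),
                attention_mask=batch["attention_mask"].to(device),
                labels=batch["labels"].to(device),
            )
            out.loss.backward()
            optimizer.step()
    return model, tokenizer
```

Using the `Auto*` classes keeps the loop identical across the five checkpoints, so only `model_name` changes between runs.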

5.2. Results and Discussion

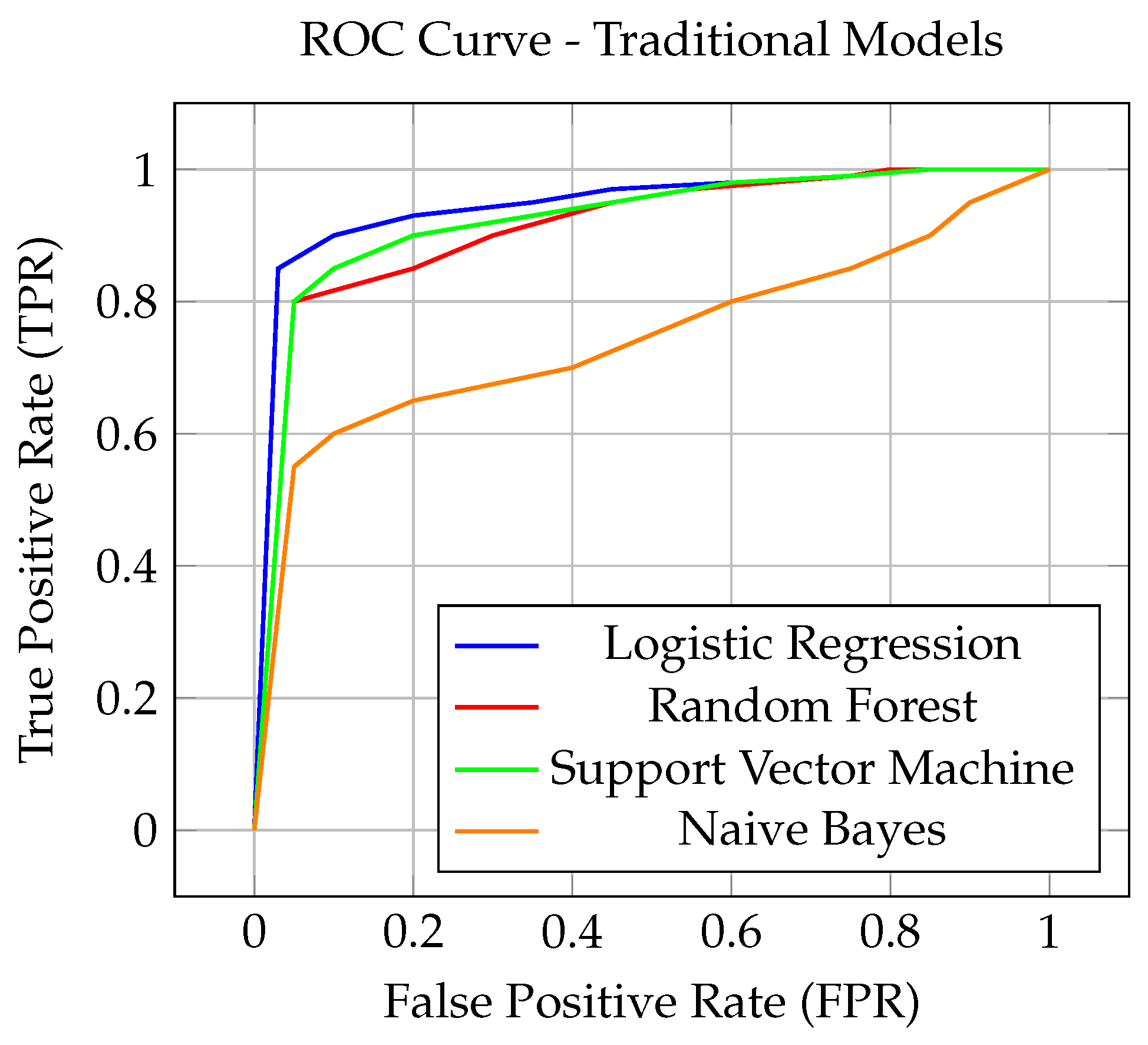

5.2.1. Traditional Machine Learning for Phishing Email Detection

| Model | Class | Precision | Recall | F1-Score | Accuracy |
|---|---|---|---|---|---|
| Logistic Regression | Phishing | 0.9863 | 0.9801 | 0.9832 | 0.9808 |
|  | Not Phishing | 0.9736 | 0.9818 | 0.9777 |  |
| Random Forest | Phishing | 0.9843 | 0.9804 | 0.9824 | 0.9799 |
|  | Not Phishing | 0.9740 | 0.9791 | 0.9766 |  |
| Support Vector Machine | Phishing | 0.9898 | 0.9846 | 0.9872 | 0.9854 |
|  | Not Phishing | 0.9795 | 0.9864 | 0.9830 |  |
| Naive Bayes | Phishing | 0.9502 | 0.9877 | 0.9686 | 0.9633 |
|  | Not Phishing | 0.9826 | 0.9308 | 0.9560 |  |

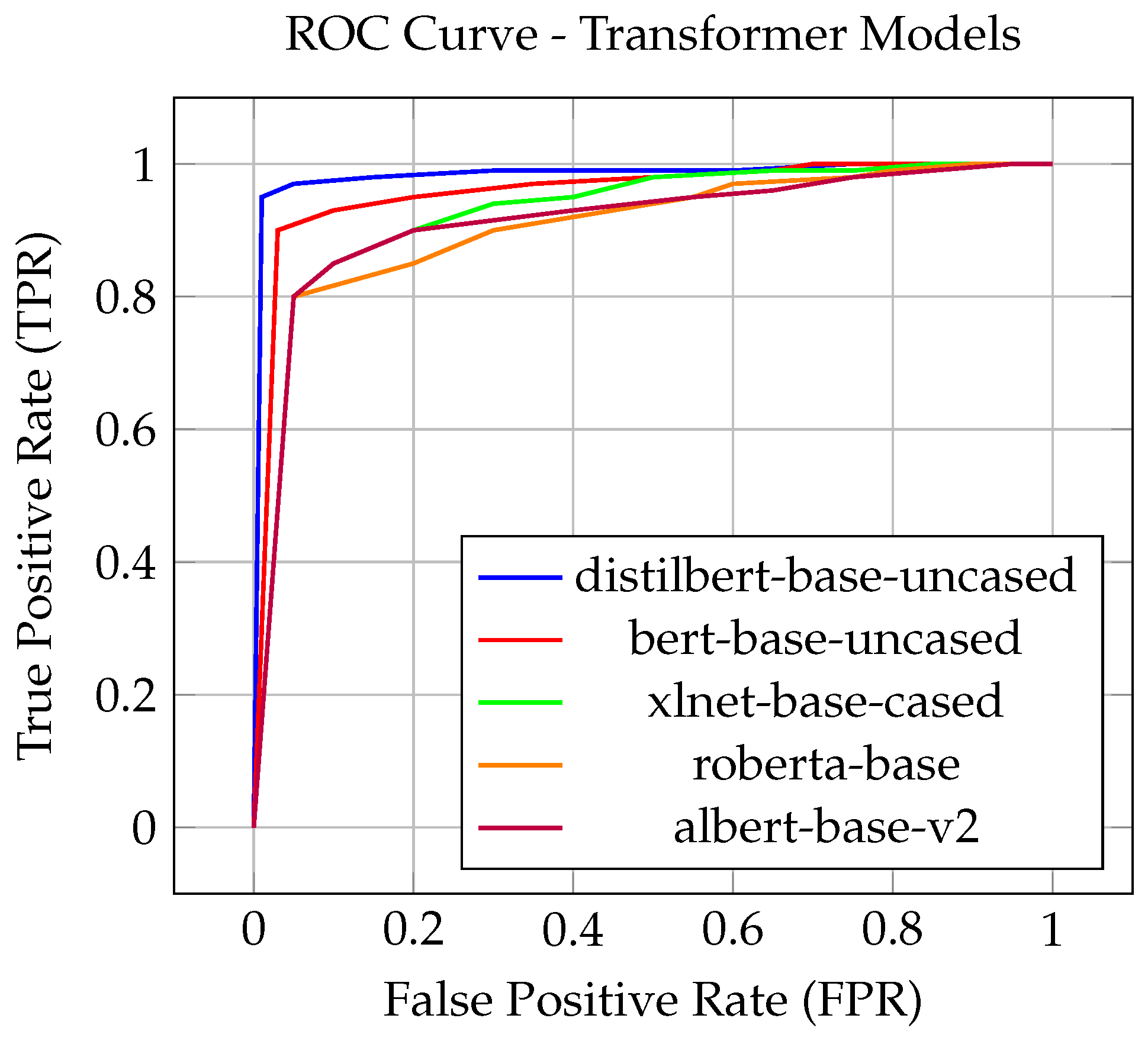

5.2.2. Transformer-Based Machine Learning for Phishing Email Detection

| Model | Class | Precision | Recall | F1-Score | Accuracy |
|---|---|---|---|---|---|
| distilbert-base-uncased | Phishing | 0.9933 | 0.9890 | 0.9911 | 0.9899 |
|  | Not Phishing | 0.9853 | 0.9911 | 0.9882 |  |
| bert-base-uncased | Phishing | 0.9947 | 0.9897 | 0.9922 | 0.9911 |
|  | Not Phishing | 0.9863 | 0.9929 | 0.9896 |  |
| xlnet-base-cased | Phishing | 0.9828 | 0.9971 | 0.9899 | 0.9884 |
|  | Not Phishing | 0.9961 | 0.9768 | 0.9863 |  |
| roberta-base | Phishing | 0.9928 | 0.9974 | 0.9951 | 0.9943 |
|  | Not Phishing | 0.9964 | 0.9903 | 0.9934 |  |
| albert-base-v2 | Phishing | 0.9939 | 0.9853 | 0.9896 | 0.9881 |
|  | Not Phishing | 0.9806 | 0.9919 | 0.9862 |  |
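One way to read the two results tables side by side is to compare error rates rather than accuracies, since all models score above 96%. The sketch below uses only the accuracy values reported in Sections 5.2.1 and 5.2.2. The helper names are illustrative, and the comparison assumes both model families were evaluated on the same test split, which this section does not state explicitly.

```python
# Accuracy values copied from the results tables in Sections 5.2.1 and 5.2.2.
TRADITIONAL_ACCURACY = {
    "Logistic Regression": 0.9808,
    "Random Forest": 0.9799,
    "Support Vector Machine": 0.9854,
    "Naive Bayes": 0.9633,
}
TRANSFORMER_ACCURACY = {
    "distilbert-base-uncased": 0.9899,
    "bert-base-uncased": 0.9911,
    "xlnet-base-cased": 0.9884,
    "roberta-base": 0.9943,
    "albert-base-v2": 0.9881,
}


def best_model(scores):
    """Return the (name, accuracy) pair with the highest accuracy."""
    return max(scores.items(), key=lambda item: item[1])


def relative_error_reduction(baseline_acc, new_acc):
    """Fraction of the baseline's errors removed by the new model."""
    baseline_err = 1.0 - baseline_acc
    new_err = 1.0 - new_acc
    return (baseline_err - new_err) / baseline_err


trad_name, trad_acc = best_model(TRADITIONAL_ACCURACY)
tf_name, tf_acc = best_model(TRANSFORMER_ACCURACY)
reduction = relative_error_reduction(trad_acc, tf_acc)
print(f"{tf_name} vs {trad_name}: {reduction:.1%} fewer errors")
```

With the reported numbers, the best transformer (roberta-base) makes roughly 61% fewer errors than the best traditional model (Support Vector Machine), even though the accuracy gap is under one percentage point.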

5.3. Analysis of Failed Predictions

5.3.1. Error Analysis for Traditional Model

5.3.2. Error Analysis for Transformer Models

6. Conclusions and Future Work

6.1. Conclusions

6.2. Future Work

References

- Laudon, K.; Traver, C. E-commerce 2023: Business, Technology, Society; Pearson, 2023.

- Cellucci, N.; Moore, T.; Salaky, K. How Much Does Internet Cost Per Month? 2024. Accessed: 2024-09-15.

- Kirchner, A.S.; Schilling, J. Email communication trends in the digital age. Journal of Internet Services 2022, 10, 123–130.

- Blanchard, D.G. Cybersecurity challenges in the evolving landscape of online communication. Cybersecurity Today 2021, 14, 45–55.

- Federal Bureau of Investigation. 2022 Internet Crime Report. FBI Annual Reports 2022, 12, 5–10.

- Smith, M. Identity theft and its economic impacts: A review. Journal of Cybersecurity 2021, 15, 18–25.

- Verizon. 2023 Data Breach Investigations Report. Verizon DBIR Reports 2023, 16, 10–20.

- Johnson, R. The role of email in modern application security. Journal of Information Security 2021, 11, 150–160.

- Williams, P.; Hamilton, D. Email as a critical step in online authentication systems. Cybersecurity Journal 2022, 18, 22–30.

- Anti-Phishing Working Group. 2022 Phishing Activity Trends Report. APWG Annual Reports 2022, 7, 2–15.

- Anderson, D. The rise of phishing attacks in the digital era. Cybersecurity Insights 2022, 19, 30–40.

- Liu, C. Phishing in the modern world: Beyond emails. Journal of Digital Forensics 2023, 20, 5–15.

- Patel, N. The increasing effectiveness of phishing in social engineering attacks. Information Security Review 2023, 24, 50–60.

- Thompson, B. Social engineering in phishing: A review of strategies. Journal of Information Warfare 2021, 17, 35–45.

- Patel, A.J. Text analysis as a defense against phishing emails. Journal of Cybersecurity Technology 2022, 9, 65–75.

- Zhang, M.; Brown, D. CNN-based phishing email detection. Journal of Machine Learning Security 2021, 12, 100–110.

- Wilson, J. Reducing phishing attacks through machine learning techniques. Cybersecurity Advances 2023, 8, 25–35.

- Gonzalez, L. Malware and spyware in phishing attacks: A threat to personal data. Journal of Information Security 2022, 21, 12–20.

- Yamamoto, S. Enhancing online security by mitigating phishing threats. Cybersecurity Innovations 2022, 6, 15–25.

- Tang, L.; Mahmoud, Q.H. A survey of machine learning-based solutions for phishing website detection. Machine Learning and Knowledge Extraction 2021, 3, 672–694.

- Samad, A.S.; Balasubaramanian, S.; Al-Kaabi, A.S.; Sharma, B.; Chowdhury, S.; Mehbodniya, A.; Webber, J.L.; Bostani, A. Analysis of the performance impact of fine-tuned machine learning model for phishing URL detection. Electronics 2023, 12.

- Agrawal, G.; Kaur, A.; Myneni, S. A review of generative models in generating synthetic attack data for cybersecurity. Electronics 2024, 13.

- Roumeliotis, K.I.; Tselikas, N.D.; Nasiopoulos, D.K. Next-generation spam filtering: Comparative fine-tuning of LLMs, NLPs, and CNN models for email spam classification. Electronics 2024, 13.

- Salloum, S.; Gaber, T.; Vadera, S.; Shaalan, K. A systematic literature review on phishing email detection using natural language processing techniques. IEEE Access 2022, 10, 65703–65727.

- Altwaijry, N.; Al-Turaiki, I.; Alotaibi, A. Deep learning for phishing email detection. Journal of Information Security and Applications 2020, 53.

- Newaz, I.; Jamal, M.K.; Hasan Juhas, F.; Patwary, M.J.A. A Hybrid Classification Technique using Belief Rule Based Semi-Supervised Learning. 2022 25th International Conference on Computer and Information Technology (ICCIT), 2022, pp. 466–471.

- Jamal, K.; Hossain, M.A.; Mamun, N.A. Improving Phishing and Spam Detection with DistilBERT and RoBERTa. arXiv preprint 2023, arXiv:2311.04913.

- Lee, Y.; Saxe, J.; Harang, R.E. CATBERT: Context-Aware Tiny BERT for Detecting Social Engineering Emails. arXiv preprint 2020, arXiv:2010.03484.

- Awe, T. Email Classification. https://www.kaggle.com/datasets/taiwoawe/email-classification, 2020. Accessed: 2024-02-01.

- Tapakah68. Email Spam Classification. https://www.kaggle.com/datasets/tapakah68/email-spam-classification, 2023. Accessed: 2024-02-01.

- Wiechmann, M. Enron Spam Data (No Code). https://www.kaggle.com/datasets/marcelwiechmann/enron-spam-data, 2001. Accessed: 2024-02-01.

- LL, L. Fraud Email Dataset (has more than 4 codes for Naïve Bayes). https://www.kaggle.com/datasets/llabhishekll/fraud-email-dataset, 2018. Accessed: 2024-02-01.

- Journal, S. Phishing Email Detection. https://www.kaggle.com/datasets/subhajournal/phishingemails/data, 2023. Accessed: 2024-02-01.

- Mourya, S. Phishing-Mail. https://www.kaggle.com/datasets/somumourya/fishing-mail, 2023. Accessed: 2024-02-01.

- Journal, S. Pishing Email Detection. https://www.kaggle.com/datasets/subhajournal/phishingemails, 2023. Accessed: 2024-02-01.

- Jose. Pishing-2018 Monkey. https://monkey.org/~jose/phishing/, 2018. Accessed: 2024-02-01.

- Jose. Pishing-2019 Monkey. https://monkey.org/~jose/phishing/, 2019. Accessed: 2024-02-01.

- Jose. Pishing-2020 Monkey. https://monkey.org/~jose/phishing/, 2020. Accessed: 2024-02-01.

- Jose. Pishing-2021 Monkey. https://monkey.org/~jose/phishing/, 2021. Accessed: 2024-02-01.

- Jose. Pishing-2022 Monkey. https://monkey.org/~jose/phishing/, 2022. Accessed: 2024-02-01.

- Jose. Private-pishing4mbox. https://monkey.org/~jose/phishing/, 2018. Accessed: 2024-02-01.

- Sivapragasam, A. Spam (or) Ham (Has a lot of cases for Naïve and Decision trees). https://www.kaggle.com/datasets/arunasivapragasam/spam-or-ham?select=spam+%28or%29+ham.csv, 2023. Accessed: 2024-02-01.

- Naidu, C. Spam Classification for Basic NLP. https://www.kaggle.com/datasets/chandramoulinaidu/spam-classification-for-basic-nlp, 2020. Accessed: 2024-02-01.

- Rhitazajana. Spam Email. https://www.kaggle.com/datasets/rhitazajana/spam-email, 2021. Accessed: 2024-09-15.

- Olalekan, G. Spam_assasin. https://www.kaggle.com/datasets/ganiyuolalekan/spam-assassin-email-classification-dataset, 2021. Accessed: 2024-02-01.

- Miltchev, R.; Rangelov, D.; Evgeni, G. Phishing validation emails dataset, 2024.

- Bishop, C.M. Pattern Recognition and Machine Learning; Springer: New York, 2006.

- Hastie, T.; Tibshirani, R.; Friedman, J. The Elements of Statistical Learning: Data Mining, Inference, and Prediction, 2 ed.; Springer: New York, 2009.

- Batutin, A. Choose Your AI Weapon: Deep Learning or Traditional Machine Learning? https://shelf.io/blog/choose-your-ai-weapon-deep-learning-or-traditional-machine-learning, 2023. Accessed: 2024-09-19.

- Amatriain, X. Transformer models: an introduction and catalog. ResearchGate 2023.

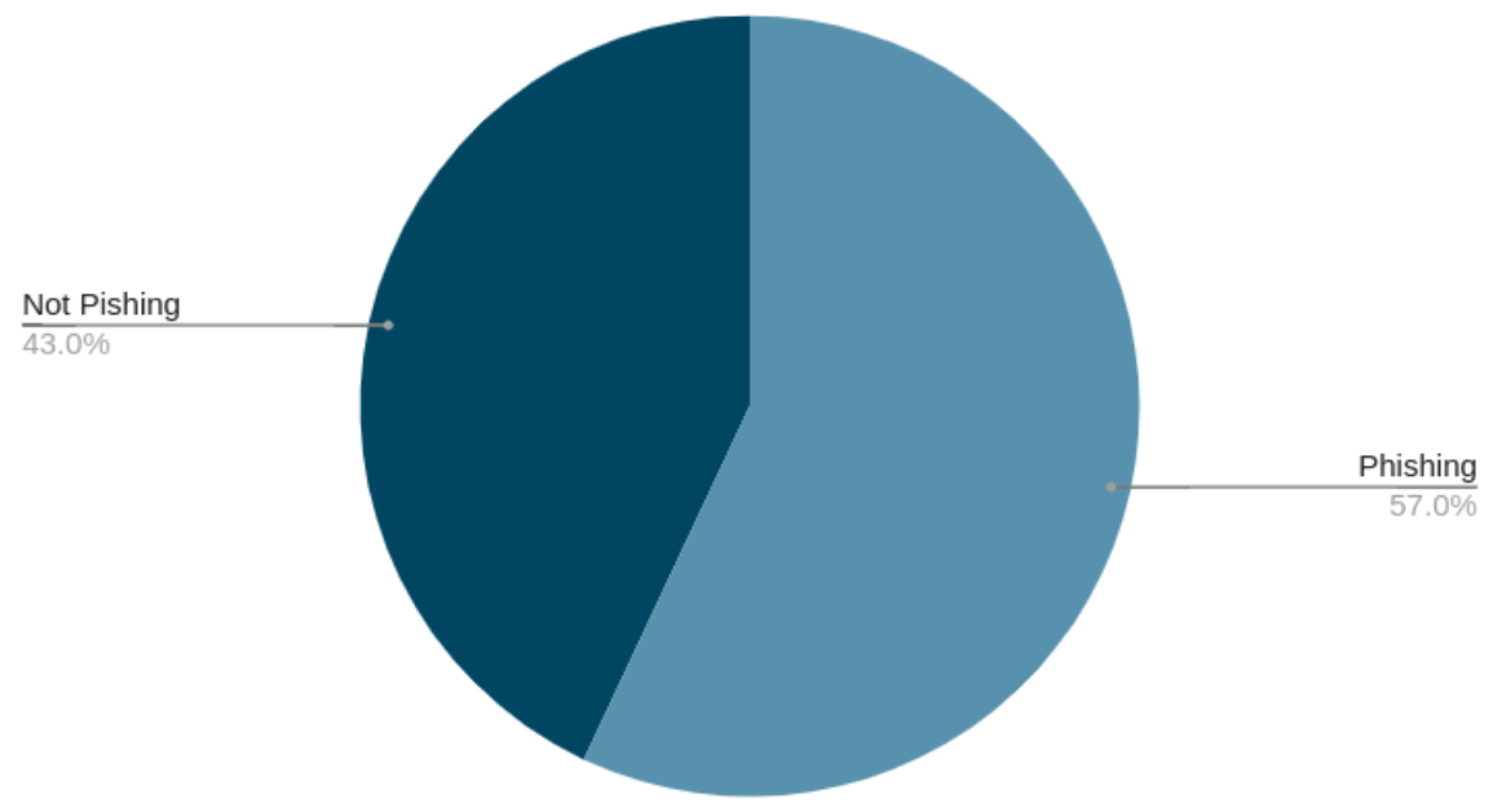

| Specifications | Statistics |
|---|---|
| Number of Emails | 119,148 |
| Number of Words | 30,478,934 |
| Average Words per Email | 255.81 |
| Average Words per Sentence | 15.53 |
| Highest Word Count in an Email | 15,828 |
| Lowest Word Count in an Email | 10 |
| Number of Phishing Emails | 68,912 |
| Number of Non-Phishing Emails | 50,236 |
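The dataset statistics above are internally consistent, which a few lines of arithmetic can confirm. The sketch below also derives the class balance and the accuracy of a trivial classifier that always predicts the majority class. That baseline is not reported in the source and is added here only as a reference point. The variable names are illustrative.

```python
# Counts copied from the dataset statistics table.
NUM_EMAILS = 119_148
NUM_WORDS = 30_478_934
NUM_PHISHING = 68_912
NUM_NON_PHISHING = 50_236

# The two class counts should add up to the total number of emails.
assert NUM_PHISHING + NUM_NON_PHISHING == NUM_EMAILS

avg_words_per_email = NUM_WORDS / NUM_EMAILS
phishing_share = NUM_PHISHING / NUM_EMAILS

# Accuracy of always predicting the majority class (here: phishing).
majority_baseline = max(NUM_PHISHING, NUM_NON_PHISHING) / NUM_EMAILS

print(f"average words per email: {avg_words_per_email:.2f}")
print(f"phishing share:          {phishing_share:.2%}")
print(f"majority-class baseline: {majority_baseline:.2%}")
```

The average of about 255.81 words per email matches the table, and the phishing share of roughly 57.8% shows a moderate class imbalance, so any accuracy figure should be read against a baseline near 58% rather than 50%.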

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).