Submitted: 01 October 2024

Posted: 02 October 2024

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Robotic Grasping

2.2. Lightweight Neural Network

2.3. CNN-Transformer Hybrid Network

3. Methods

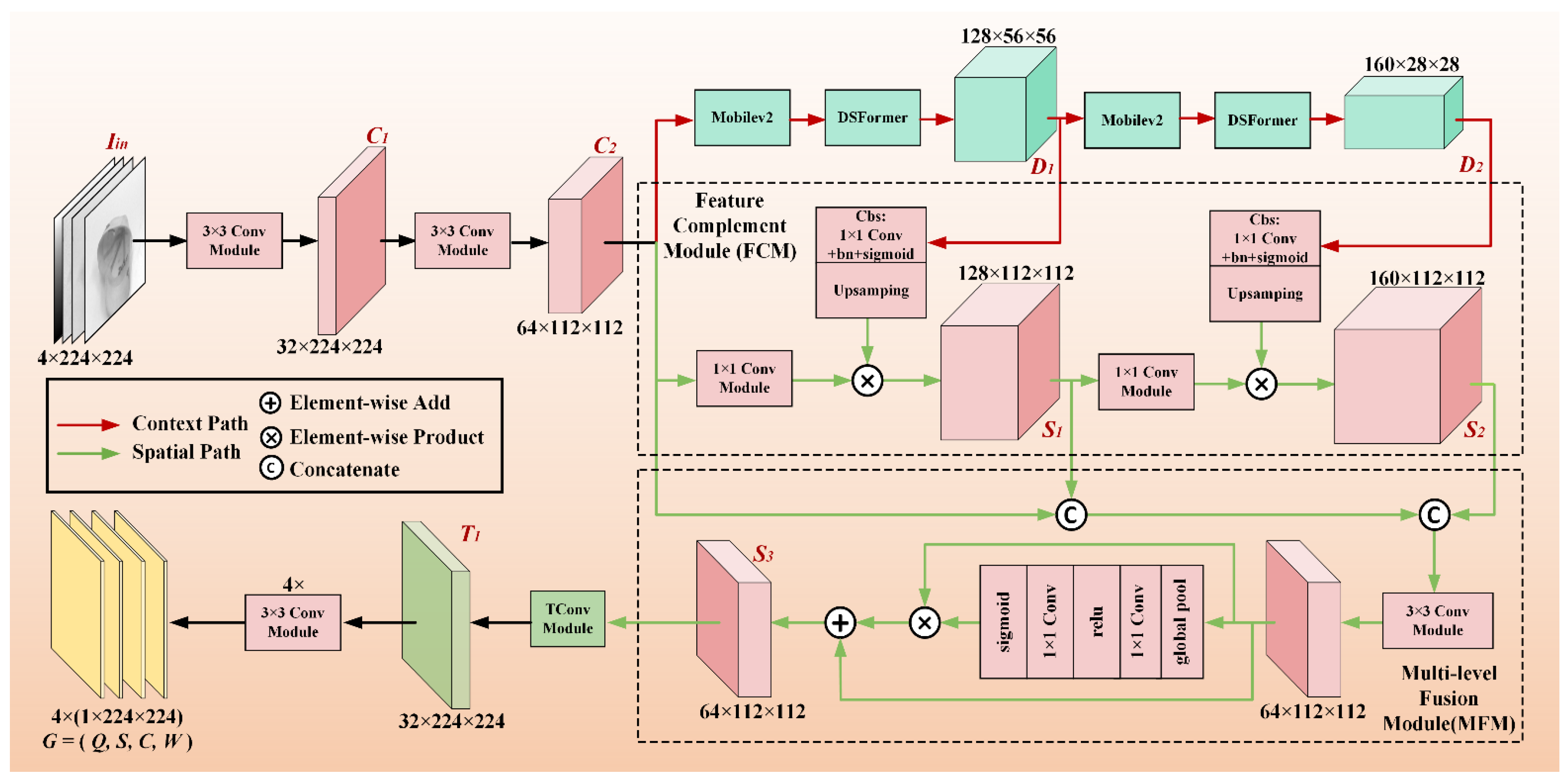

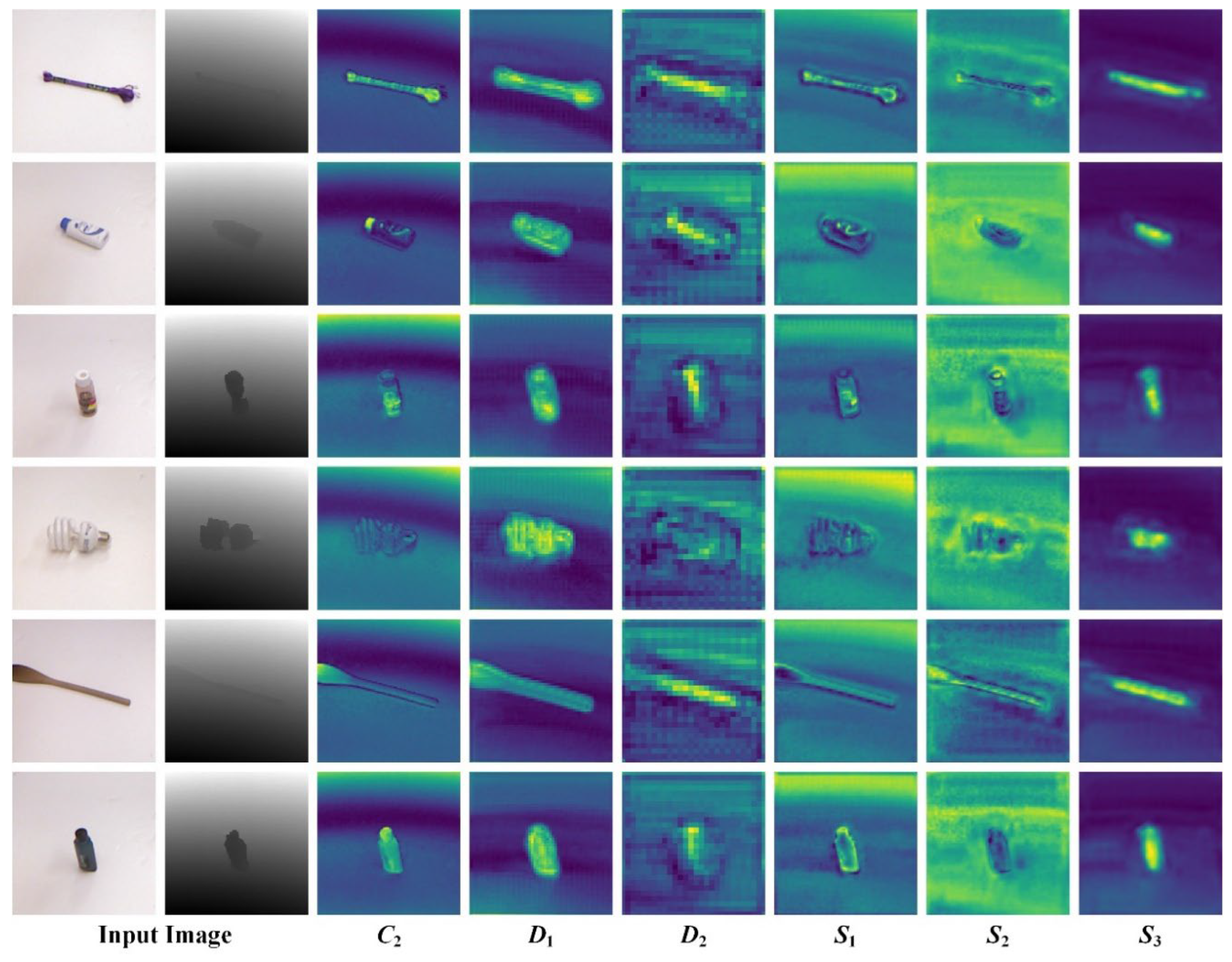

3.1. Network Structure

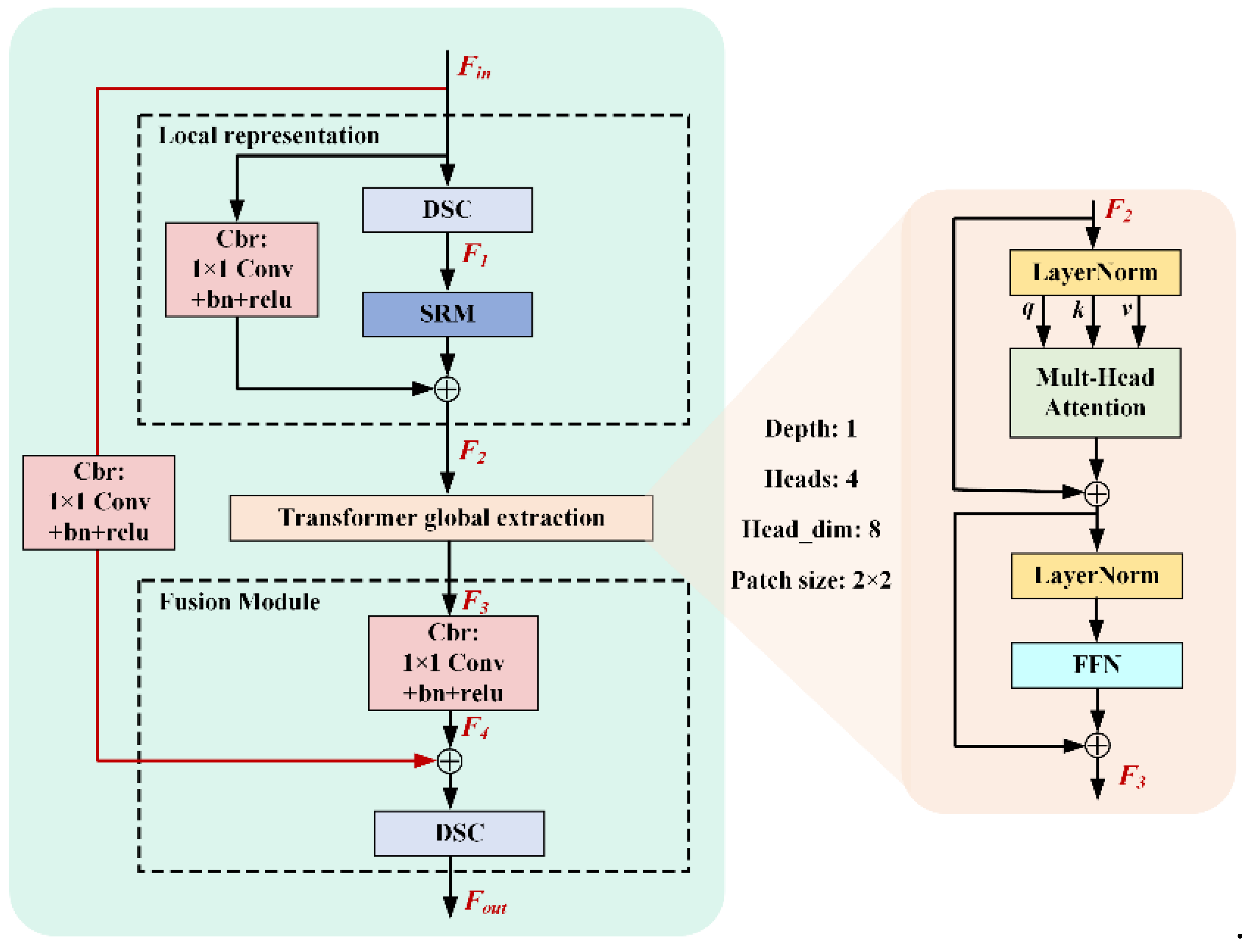

3.2. DSFormer

3.3. The Context and Spatial Paths

3.4. Loss Function

4. Experiments

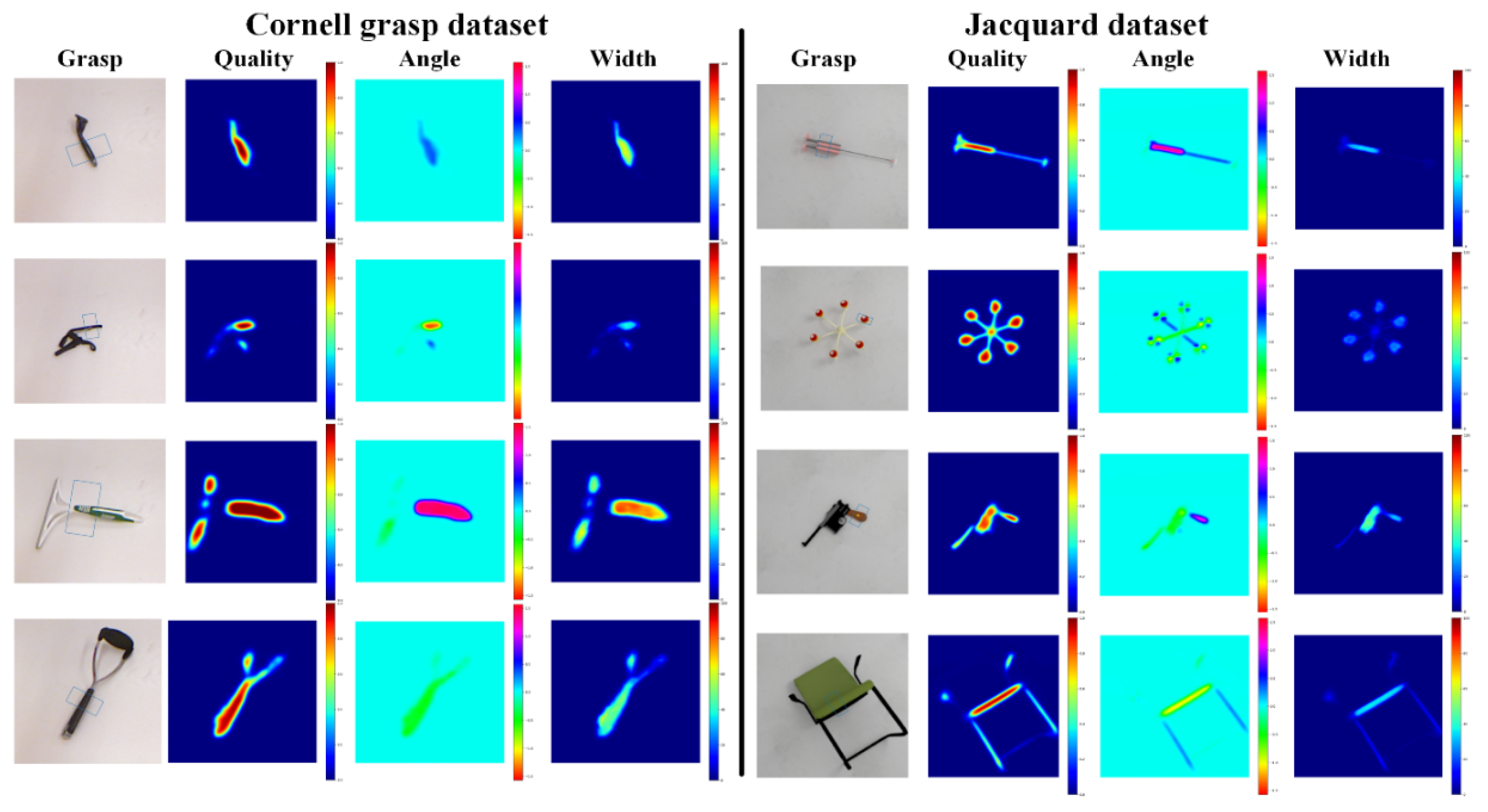

4.1. Grasp Detection Metric

4.2. Implementation Details

4.3. Comparisons with the Existing Methods

4.4. Ablation Studies

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Lenz I, Lee H and Saxena A. Deep learning for detecting robotic grasps. The International Journal of Robotics Research 2015, 34, 705–724.

- Zhou X, Lan X, Zhang H, et al. Fully convolutional grasp detection network with oriented anchor box. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2018, pp.7223-7230. IEEE.

- Zhang H, Lan X, Bai S, et al. A multi-task convolutional neural network for autonomous robotic grasping in object stacking scenes. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2019, pp.6435-6442. IEEE.

- Vaswani A, Shazeer N, Parmar N, et al. Attention is all you need. Advances in Neural Information Processing Systems 2017, 30.

- Lin X, Sun S, Huang W, et al. EAPT: efficient attention pyramid transformer for image processing. IEEE Transactions on Multimedia 2021, 25, 50–61.

- Dosovitskiy A, Beyer L, Kolesnikov A, et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint 2020, arXiv:2010.11929.

- Liu Z, Lin Y, Cao Y, et al. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF international conference on computer vision 2021, pp.10012-10022.

- Liu Y, Sangineto E, Bi W, et al. Efficient training of visual transformers with small datasets. Advances in Neural Information Processing Systems 2021, 34, 23818–23830.

- Bicchi A and Kumar V. Robotic grasping and contact: A review. In Proceedings of the 2000 ICRA Millennium Conference, IEEE International Conference on Robotics and Automation, Symposia Proceedings (Cat No 00CH37065) 2000, pp.348-353.

- Maitin-Shepard J, Cusumano-Towner M, Lei J, et al. Cloth grasp point detection based on multiple-view geometric cues with application to robotic towel folding. In Proceedings of the 2010 IEEE International Conference on Robotics and Automation 2010, pp.2308-2315. IEEE.

- León B, Ulbrich S, Diankov R, et al. Opengrasp: a toolkit for robot grasping simulation. In Proceedings of the Simulation, Modeling, and Programming for Autonomous Robots: Second International Conference, SIMPAR 2010, Darmstadt, Germany, November 15-18, 2010, pp.109-120. Springer.

- Krizhevsky A, Sutskever I and Hinton GE. Imagenet classification with deep convolutional neural networks. Advances in Neural Information Processing Systems 2012, 25.

- Li Y, Liu J and Wang L. Lightweight network research based on deep learning: a review. In Proceedings of the 2018 37th Chinese control conference (CCC) 2018, pp.9021-9026. IEEE.

- Luo J-H, Wu J and Lin W. Thinet: A filter level pruning method for deep neural network compression. In Proceedings of the IEEE international conference on computer vision 2017, pp.5058-5066.

- You S, Xu C, Xu C, et al. Learning from multiple teacher networks. In Proceedings of the 23rd ACM SIGKDD international conference on knowledge discovery and data mining 2017, pp.1285-1294.

- Li Y, Chen Y, Dai X, et al. Micronet: Improving image recognition with extremely low flops. In Proceedings of the IEEE/CVF International conference on computer vision 2021, pp.468-477.

- Touvron H, Cord M, Sablayrolles A, et al. Going deeper with image transformers. In Proceedings of the IEEE/CVF international conference on computer vision 2021, pp.32-42.

- Mehta S and Rastegari M. Mobilevit: light-weight, general-purpose, and mobile-friendly vision transformer. arXiv preprint 2021, arXiv:2110.02178.

- Lou M, Zhou H-Y, Yang S, et al. TransXNet: Learning both global and local dynamics with a dual dynamic token mixer for visual recognition. arXiv preprint 2023, arXiv:2310.19380.

- Hu J, Shen L and Sun G. Squeeze-and-excitation networks. In Proceedings of the IEEE conference on computer vision and pattern recognition 2018, pp.7132-7141.

- Chollet F. Xception: Deep learning with depthwise separable convolutions. In Proceedings of the IEEE conference on computer vision and pattern recognition 2017, pp.1251-1258.

- Lee H, Kim H-E and Nam H. Srm: A style-based recalibration module for convolutional neural networks. In Proceedings of the IEEE/CVF International conference on computer vision 2019, pp.1854-1862.

- Sandler M, Howard A, Zhu M, et al. Mobilenetv2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE conference on computer vision and pattern recognition 2018, pp.4510-4520.

- Girshick R. Fast r-cnn. In Proceedings of the IEEE international conference on computer vision 2015, pp.1440-1448.

- Depierre A, Dellandréa E and Chen L. Jacquard: A large scale dataset for robotic grasp detection. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2018, pp.3511-3516. IEEE.

- Asif U, Tang J and Harrer S. GraspNet: An Efficient Convolutional Neural Network for Real-time Grasp Detection for Low-powered Devices. In: IJCAI 2018, pp.4875-4882.

- Chu F-J, Xu R and Vela PA. Real-world multiobject, multigrasp detection. IEEE Robotics and Automation Letters 2018, 3, 3355–3362.

- Kumra S, Joshi S and Sahin F. Antipodal Robotic Grasping using Generative Residual Convolutional Neural Network. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2020.

- Liu D, Tao X, Yuan L, et al. Robotic objects detection and grasping in clutter based on cascaded deep convolutional neural network. IEEE Transactions on Instrumentation and Measurement 2021, 71, 1–10.

- Ouyang W, Huang W and Min H. Robot grasp with multi-object detection based on RGB-D image. In Proceedings of the 2021 China Automation Congress (CAC) 2021, pp.6543-6548. IEEE.

- Tian H, Song K, Li S, et al. Lightweight pixel-wise generative robot grasping detection based on RGB-D dense fusion. IEEE Transactions on Instrumentation and Measurement 2022, 71, 1–12.

- Wang S, Jiang X, Zhao J, et al. Efficient fully convolution neural network for generating pixel wise robotic grasps with high resolution images. In Proceedings of the 2019 IEEE international conference on robotics and biomimetics (ROBIO) 2019, pp.474-480. IEEE.

- Wang S, Zhou Z and Kan Z. When transformer meets robotic grasping: Exploits context for efficient grasp detection. IEEE Robotics and Automation Letters 2022, 7, 8170–8177.

- Zhang H, Lan X, Bai S, et al. Roi-based robotic grasp detection for object overlapping scenes. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2019, pp.4768-4775. IEEE.

- Morrison D, Corke P and Leitner J. Learning robust, real-time, reactive robotic grasping. The International Journal of Robotics Research 2020, 39, 183–201.

- Le M-T and Lien J-JJ. Lightweight Robotic Grasping Model Based on Template Matching and Depth Image. IEEE Embedded Systems Letters 2022, 14, 199–202.

| Methods | Input size | Input mode | Accuracy on Cornell (%) | Accuracy on Jacquard (%) | Inference time in ms (hardware) |
| --- | --- | --- | --- | --- | --- |
| GraspNet[26] | 244×244 | RGB-D | 90.6 | - | 24 (NVIDIA Jetson TX1 GPU) |
| GN[27] | 227×227 | RGB-D | 96.1 | - | 120 (NVIDIA GeForce GTX Titan X GPU) |
| EFCNet[32] | 400×400 | RGB-D | 91.0 | - | 8 (NVIDIA GeForce GTX 1060 GPU) |
| ROI-GD[34] | 800×600 | RGB/RG-D | 93.5 | 93.6 | 40 (NVIDIA GeForce GTX 1080 Ti GPU) |
| GR-ConvNet[28] | 224×224 | RGB-D/RGB-D | 96.6 | 94.6 | 20 (NVIDIA GeForce GTX 1080 Ti GPU) |
| GPN-GD[30] | 227×227 | RGB-D | 97.2 | - | 81 (NVIDIA GeForce RTX 2080 Ti GPU) |
| Q-YNet[29] | 112×112 | RGB-D/RGB-D | 95.2 | 92.1 | 48 (NVIDIA GeForce RTX 2080 Ti GPU) |
| TF-Grasp[33] | 224×224 | RGB-D/RGB-D | 98.0 | 94.6 | 41.6 (NVIDIA GeForce RTX 3090 GPU) |
| DFusion[31] | 224×224 | RGB-D/RGB-D | 98.9 | 94.0 | 15 (NVIDIA GeForce RTX 3080 GPU) |
| SGNet (ours) | 224×224 | RGB-D/RGB-D | 99.4 | 93.4 | 12.5 (NVIDIA GeForce RTX 3070 GPU) |

| Methods | Input mode | Parameters | Accuracy on Cornell (%) | Accuracy on Jacquard (%) |
| --- | --- | --- | --- | --- |
| GG-CNN2[35] | D | 66K | 64.0 | 84.0 |
| GraspNet[26] | RGB-D | 3.8M | 90.2 | - |
| TM-DNet[36] | RGB-D | 1.5M | 92.5 | - |
| DFusion[31] | RGB-D | 7.2M | 98.9 | 94.0 |
| SGNet (ours) | RGB-D | 1M | 99.4 | 93.4 |

| Method | MVIT | DSF | BP | Parameters | Accuracy (%) |
| --- | --- | --- | --- | --- | --- |
| SGNet-Ⅰ | √ | × | × | 2,037,504 | 97.2 |
| SGNet-Ⅱ | × | √ | × | 1,047,220 | 97.7 |
| SGNet-Ⅲ | √ | × | √ | 2,062,464 | 98.3 |
| SGNet (ours) | × | √ | √ | 1,072,180 | 99.4 |
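
The ablation rows can be compared pairwise to separate the effect of each component. The following is a minimal Python sketch of that arithmetic, using only the parameter counts and accuracies listed in the table; the dictionary layout, the ASCII row keys (`SGNet-I`, `SGNet-II`, `SGNet-III`), and the helper names `param_diff` and `acc_gain` are illustrative assumptions, not part of the authors' code.

```python
# Values copied from the ablation table; keys use ASCII "I/II/III" in place of
# the Roman-numeral glyphs. The structure itself is a hypothetical convenience.
ABLATION = {
    "SGNet-I": {"params": 2_037_504, "acc": 97.2},    # MVIT, no BP
    "SGNet-II": {"params": 1_047_220, "acc": 97.7},   # DSF, no BP
    "SGNet-III": {"params": 2_062_464, "acc": 98.3},  # MVIT + BP
    "SGNet": {"params": 1_072_180, "acc": 99.4},      # DSF + BP
}


def param_diff(a: str, b: str) -> int:
    """Parameter count of row a minus parameter count of row b."""
    return ABLATION[a]["params"] - ABLATION[b]["params"]


def acc_gain(a: str, b: str) -> float:
    """Accuracy of row a minus accuracy of row b, in percentage points."""
    return round(ABLATION[a]["acc"] - ABLATION[b]["acc"], 1)


# Extra parameters when BP is enabled, for each of the two other settings.
bp_cost_mvit = param_diff("SGNet-III", "SGNet-I")
bp_cost_dsf = param_diff("SGNet", "SGNet-II")

# Parameters saved by the DSF rows relative to the MVIT rows.
dsf_saving = param_diff("SGNet-I", "SGNet-II")
dsf_saving_with_bp = param_diff("SGNet-III", "SGNet")
dsf_saving_pct = round(100 * dsf_saving / ABLATION["SGNet-I"]["params"], 1)

print("BP cost (MVIT rows):", bp_cost_mvit)
print("BP cost (DSF rows):", bp_cost_dsf)
print("DSF saving without BP:", dsf_saving, f"({dsf_saving_pct}%)")
print("DSF saving with BP:", dsf_saving_with_bp)
print("Accuracy gain from BP (DSF rows):", acc_gain("SGNet", "SGNet-II"))
print("Accuracy gain from DSF (BP rows):", acc_gain("SGNet", "SGNet-III"))
```

Read this way, the table is internally consistent: enabling BP adds the same 24,960 parameters in both pairings, and the DSF rows are 990,284 parameters smaller than their MVIT counterparts (about 48.6% of the SGNet-Ⅰ count).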

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).