4. Problem Detailing

Probability Theory and Statistics: Probabilistic methods are central to machine learning and AI, in both supervised and unsupervised learning. Bayesian methods, probabilistic graphical models, and maximum likelihood estimation are the main tools.

Problems: The primary mathematical challenges are uncertainty estimation and building probabilistic models that remain reliable when data is incomplete or heavily noisy. Probabilistic models must be flexible enough to account for all these factors.
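To make uncertainty estimation concrete, here is a minimal sketch of Bayesian updating for a success probability estimated from binary observations. The uniform Beta(1, 1) prior, the function name `beta_posterior`, and the toy counts are assumptions chosen for illustration, not part of the original text.

```python
import math

def beta_posterior(successes, failures, prior_a=1.0, prior_b=1.0):
    """Return (mean, std) of the Beta posterior over a success probability.

    Assumes a Beta(prior_a, prior_b) prior and independent binary observations.
    """
    a = prior_a + successes
    b = prior_b + failures
    mean = a / (a + b)
    variance = (a * b) / ((a + b) ** 2 * (a + b + 1))
    return mean, math.sqrt(variance)

# Same observed success rate (70%), very different amounts of data.
small_mean, small_std = beta_posterior(successes=7, failures=3)
large_mean, large_std = beta_posterior(successes=700, failures=300)
print(f"10 observations:   mean={small_mean:.3f}, std={small_std:.3f}")
print(f"1000 observations: mean={large_mean:.3f}, std={large_std:.3f}")
```

The posterior standard deviation shrinks as data accumulates, which is one simple way to quantify how much an estimate from a small or incomplete sample can be trusted.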

Linear Algebra: Used for data analysis and working with high-dimensional spaces, which is critical for neural networks and dimensionality reduction methods like PCA.

- Data analysis and operations in high-dimensional spaces are vital in machine learning and AI tasks, especially for neural networks. High data dimensionality increases computational and modeling complexity, making efficient methods for processing such data essential.
- Methods like PCA (Principal Component Analysis) reduce the number of features (variables) while preserving the most significant information. PCA identifies the main directions of data variation, enabling:
  - Reduced computational cost;
  - Decreased likelihood of overfitting;
  - Improved data visualization in 2D and 3D;
  - Faster model training.
- PCA extracts key components, which is particularly important in tasks with thousands of features, such as image processing, text analysis, or genetic data, where the original data is high-dimensional.
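The idea that PCA finds the main direction of data variation can be sketched with power iteration on the covariance matrix. This is a simplified illustration using only the standard library. The function name `first_principal_component`, the fixed iteration count, and the toy 2-D data are assumptions, and a production implementation would normally rely on an SVD routine from a numerical library.

```python
import math

def first_principal_component(rows, iterations=200):
    """Estimate the leading principal direction of `rows` by power iteration.

    rows: list of equal-length feature lists.
    Returns (unit direction, variance along it, total variance).
    """
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix (d x d).
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    v = [1.0] * d  # arbitrary non-zero starting vector (an assumption)
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    cov_v = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
    variance = sum(v[i] * cov_v[i] for i in range(d))
    total_variance = sum(cov[i][i] for i in range(d))
    return v, variance, total_variance

# Hypothetical 2-D data lying close to the line y = 2x.
data = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 7.8], [5.0, 10.1]]
direction, variance, total_variance = first_principal_component(data)
print("direction:", [round(x, 3) for x in direction])
print("share of variance kept:", round(variance / total_variance, 4))
```

Projecting each centered sample onto `direction` would replace two features with one while keeping almost all of the variance in this toy dataset.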

Problems: The curse of dimensionality: as the number of dimensions grows, the data becomes sparse relative to the volume of the space, and both computation and modeling become increasingly expensive.

Optimization Theory: Forms the foundation of most learning algorithms. Gradient descent and its modifications are applied to minimize loss functions.

Problems: Local minima and slow convergence in multidimensional spaces remain challenges.
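The slow-convergence issue can be illustrated with plain gradient descent on a poorly conditioned quadratic. This is a minimal sketch: the objective f(x, y) = 0.5 * (x² + 100y²), the learning rate 0.018, and the helper names are assumptions picked to make the effect visible.

```python
def gradient_descent(grad, start, learning_rate, steps):
    """Run fixed-step gradient descent and return the final point."""
    point = list(start)
    for _ in range(steps):
        g = grad(point)
        point = [p - learning_rate * gi for p, gi in zip(point, g)]
    return point

def grad_quadratic(p):
    # Gradient of f(x, y) = 0.5 * (x**2 + 100 * y**2)
    x, y = p
    return [x, 100.0 * y]

# The step size is limited by the steep y-direction, so progress along
# the shallow x-direction is slow.
after_100 = gradient_descent(grad_quadratic, [1.0, 1.0], 0.018, 100)
after_1000 = gradient_descent(grad_quadratic, [1.0, 1.0], 0.018, 1000)
print("after 100 steps: ", after_100)
print("after 1000 steps:", after_1000)
```

After 100 steps the steep coordinate is essentially zero while the shallow one is still far from the minimum, which is the kind of behaviour that modifications of gradient descent (momentum, adaptive step sizes) are meant to mitigate.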

Differential Equations: Used to model dynamic systems, such as recurrent neural networks and LSTMs.

Problems: Solving nonlinear differential equations in real time poses a significant mathematical difficulty.
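One way to see the link between differential equations and recurrent networks is to discretize a continuous-time recurrent model with the Euler method. The sketch below assumes a scalar hidden state governed by dh/dt = -h + tanh(w·h + u·x). This specific equation, the parameter values, and the function names are illustrative assumptions, not a model taken from the text.

```python
import math

def ctrnn_derivative(h, x, w, u):
    """Right-hand side of dh/dt = -h + tanh(w*h + u*x)."""
    return -h + math.tanh(w * h + u * x)

def euler_step(h, x, w, u, dt):
    """Advance the hidden state by one explicit Euler step of size dt."""
    return h + dt * ctrnn_derivative(h, x, w, u)

def simulate(inputs, w=0.5, u=1.0, dt=0.1, h0=0.0):
    """Integrate the hidden state over a sequence of inputs."""
    h = h0
    trajectory = [h]
    for x in inputs:
        h = euler_step(h, x, w, u, dt)
        trajectory.append(h)
    return trajectory

trajectory = simulate([1.0] * 50)
print("final hidden state:", round(trajectory[-1], 4))
```

With `dt = 1.0` the Euler step reduces to `h = tanh(w*h + u*x)`, the familiar discrete recurrent update. Smaller steps track the continuous dynamics more closely but cost more computation per unit of simulated time, which is part of why real-time solution is difficult.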

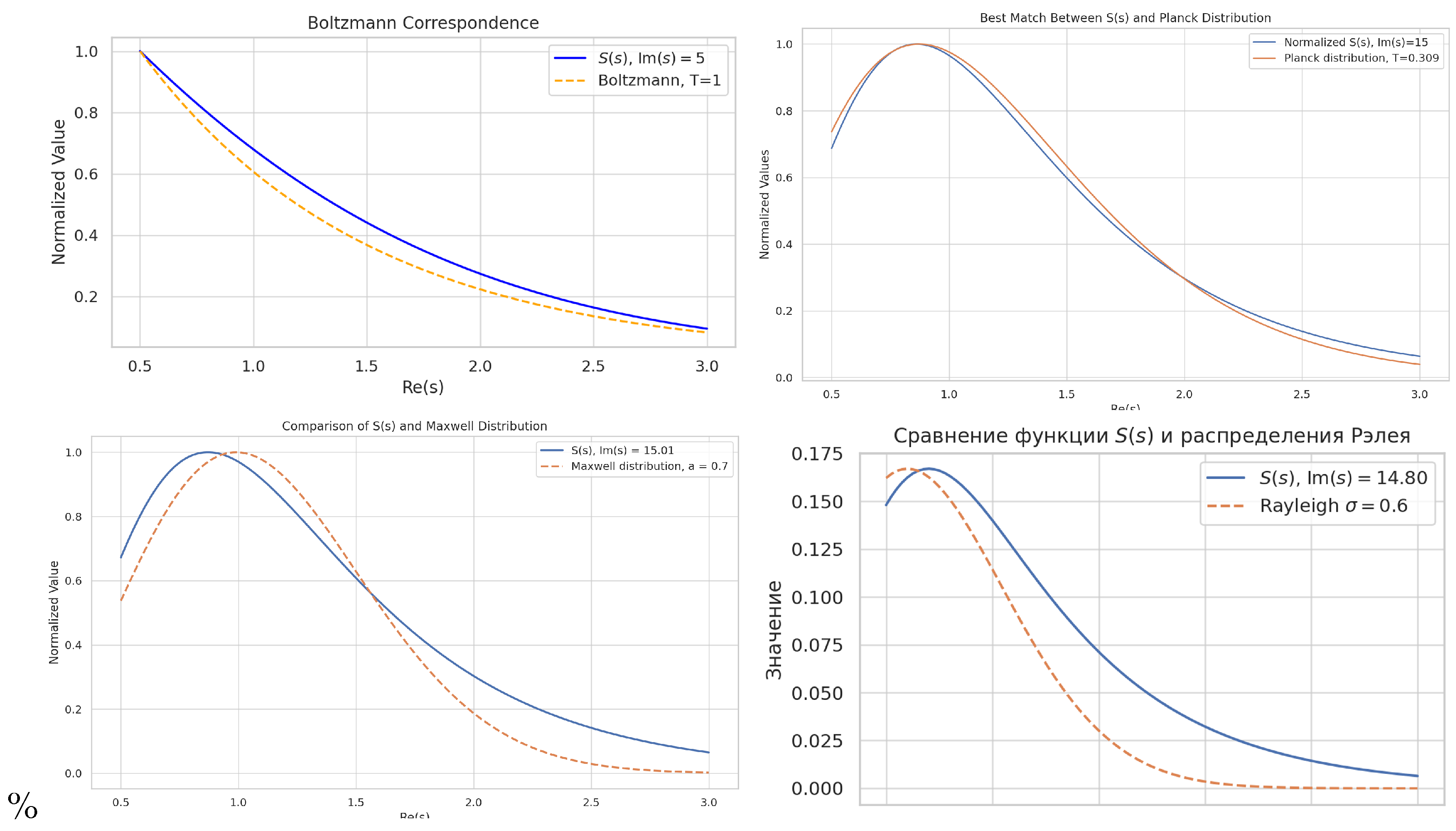

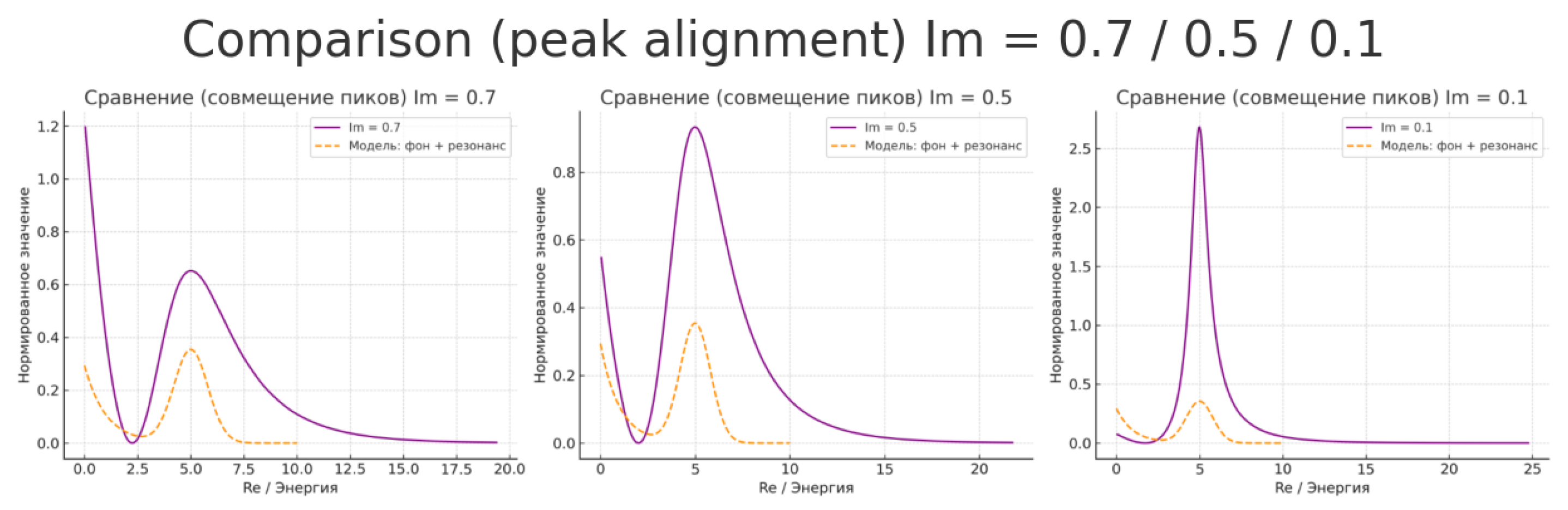

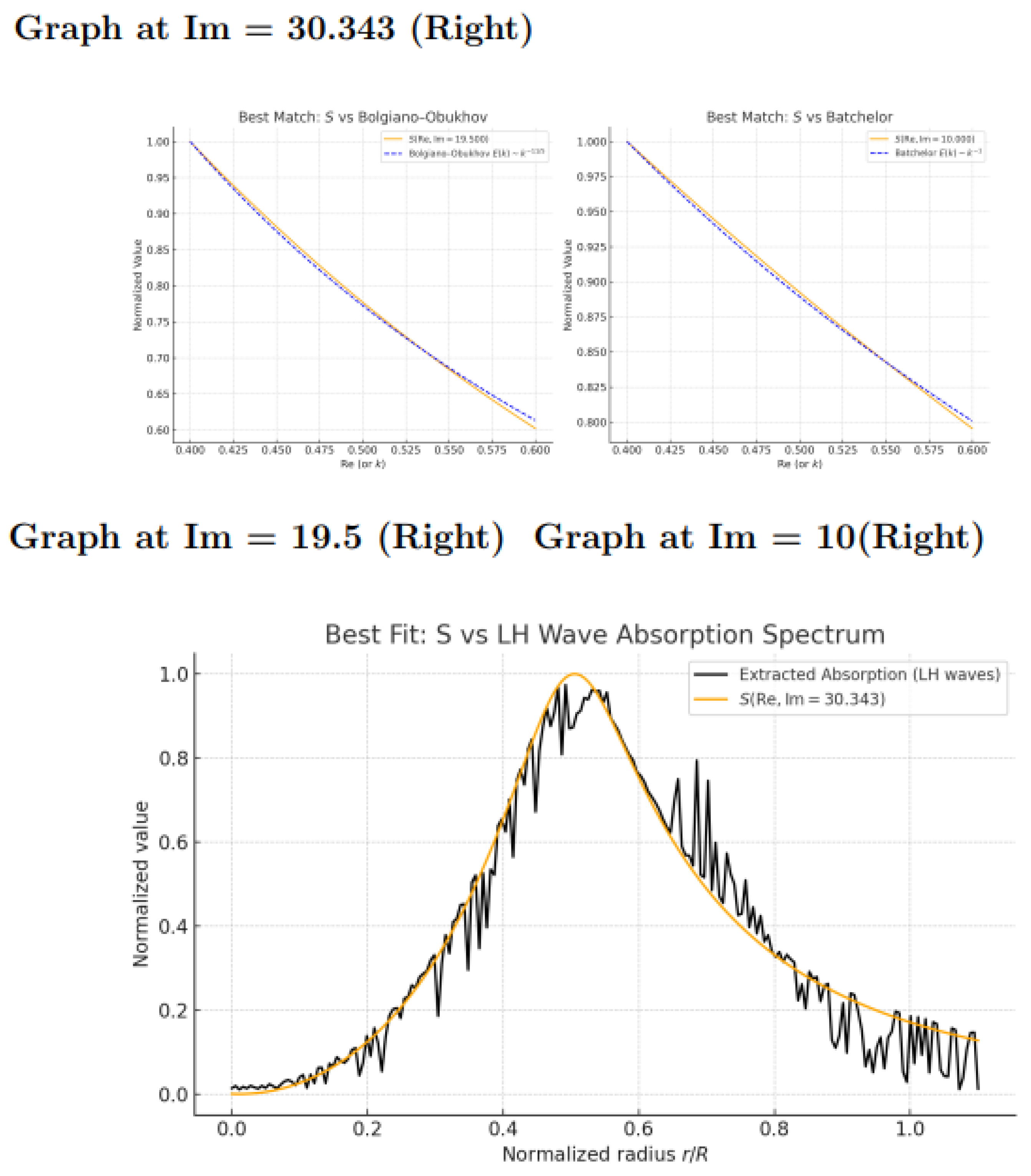

Information Theory: Studies how to encode and transmit information with minimal loss. In model training, this relates to reducing entropy-based measures and minimizing losses such as cross-entropy.

Problems: Balancing entropy and data volume when training models on noisy data.
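As a small illustration of how entropy connects to training losses, the sketch below computes Shannon entropy and cross-entropy in bits for discrete distributions. The example distributions and function names are assumptions. The code also assumes the predicted probability is non-zero wherever the true probability is non-zero.

```python
import math

def entropy(p):
    """Shannon entropy of a discrete distribution, in bits."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)

def cross_entropy(p, q):
    """Cross-entropy H(p, q) in bits; q[i] must be > 0 wherever p[i] > 0."""
    return -sum(pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)

true_label = [1.0, 0.0, 0.0]          # one-hot target
confident = [0.9, 0.05, 0.05]         # hypothetical model prediction
unsure = [0.4, 0.3, 0.3]              # hypothetical model prediction

print("entropy of a fair coin:", entropy([0.5, 0.5]))
print("loss, confident prediction:", round(cross_entropy(true_label, confident), 3))
print("loss, unsure prediction:   ", round(cross_entropy(true_label, unsure), 3))
```

Cross-entropy is never smaller than the entropy of the true distribution, so minimizing it pushes the predicted distribution toward the true one.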

Computability Theory: Determines which problems can be solved algorithmically and studies the boundaries of computation. It plays a key role in understanding which tasks are computable and which are not, which is crucial for exploring AI’s limits and for developing efficient AI algorithms.

Problems: Some tasks are fundamentally unsolvable by algorithmic means. In the context of strong AI, the major issue is undecidable problems, such as the halting problem, for which no algorithm can give a correct answer on every input. This imposes fundamental limits on creating a strong AI capable of solving any task. Other problems, like computing all consequences of complex systems or predicting chaotic dynamics, may also be incomputable or practically infeasible within existing algorithmic frameworks.
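The argument behind the halting problem can be sketched in code: given any claimed halting decider, one can build a "contrarian" program that does the opposite of what the decider predicts about it. In this illustration an exception stands in for an infinite loop so that the sketch itself terminates. The names `make_contrarian`, `decider_is_refuted`, and `WouldLoopForever` are hypothetical, and the sketch assumes the decider is deterministic.

```python
class WouldLoopForever(Exception):
    """Stand-in for an infinite loop, so the sketch itself terminates."""

def make_contrarian(claimed_halts):
    """Build a program that contradicts the decider's verdict about itself."""
    def contrarian():
        if claimed_halts(contrarian):
            raise WouldLoopForever  # stands for: while True: pass
        return "halted"
    return contrarian

def decider_is_refuted(claimed_halts):
    """Return True if the decider mispredicts its own contrarian program."""
    contrarian = make_contrarian(claimed_halts)
    predicted_halts = claimed_halts(contrarian)
    try:
        contrarian()
        actually_halts = True
    except WouldLoopForever:
        actually_halts = False
    return predicted_halts != actually_halts

# Two trivial candidate deciders (hypothetical).
optimist = lambda program: True    # claims every program halts
pessimist = lambda program: False  # claims every program loops

print(decider_is_refuted(optimist), decider_is_refuted(pessimist))
```

Whatever answer the decider gives about the contrarian program, the program behaves the other way, so no decider can be right on every input.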

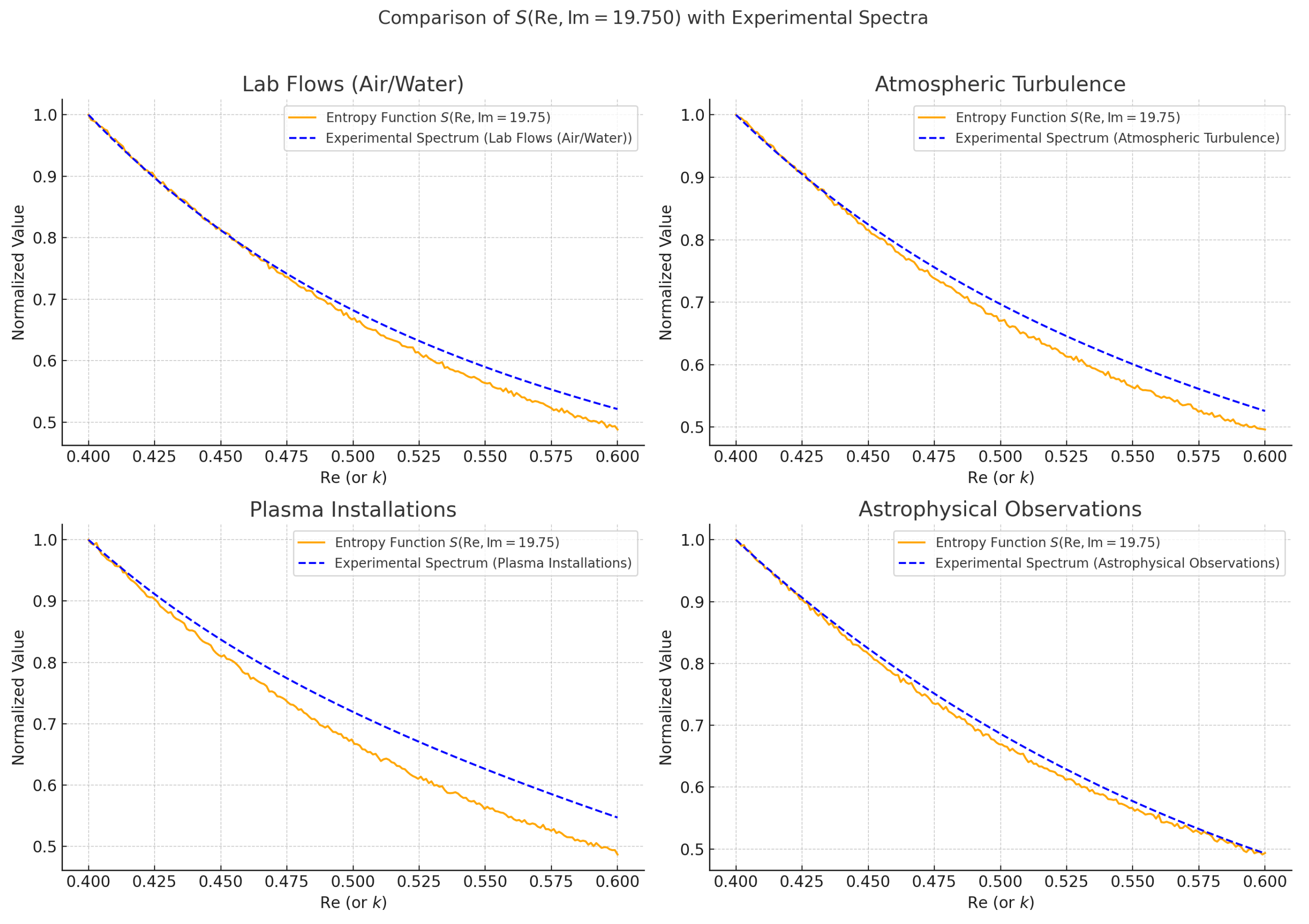

Stochastic Methods and Random Process Theory: Stochastic methods are widely used in AI to address uncertainty, noise, and probabilistic models. Examples include the Monte Carlo method and stochastic gradient descent (SGD).

- Monte Carlo Method: Numerically simulates complex systems by generating many random samples. It is particularly useful when analytical solutions are impossible or overly complex. In AI, Monte Carlo is used in planning tasks, such as Monte Carlo Tree Search (MCTS) in games and strategic modeling.
- Stochastic Gradient Descent: Optimizes model parameters on large datasets. Unlike classical gradient descent, which updates parameters based on the entire dataset, SGD updates them using random subsets of the data. This speeds up training, especially in deep neural networks, and the added noise can help escape local minima.

Problems: Efficiently handling uncertainty and optimizing random processes under limited resources.

- Efficient Uncertainty Handling: Stochastic methods often struggle to model complex systems accurately and rely on large data volumes or numerous samples, which can slow training and optimization. Uncertainty in data distribution and structure can lead to significant prediction deviations and complicate training.
- Optimization with Limited Resources: Real-world AI systems often face computational and memory constraints. Monte Carlo methods demand substantial computational power for accurate results, especially in high-parameter or complex dynamic tasks. Meanwhile, SGD updates can be highly variable, sometimes necessitating multiple runs with different initial conditions.
- Accuracy vs. Speed Tradeoff: Stochastic methods must balance prediction accuracy against computation speed. For instance, increasing the Monte Carlo sample size improves accuracy (the error typically shrinks only in proportion to 1/√N for N samples) but extends computation time, which is critical in real-time applications with limited resources and a need for rapid decisions.
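The difference between full-batch gradient descent and SGD can be sketched by fitting a single slope parameter with mini-batch updates. The synthetic data (y ≈ 3x plus Gaussian noise), the batch size, the learning rate, and the function name `sgd_fit_slope` are all assumptions made for this illustration.

```python
import random

def sgd_fit_slope(xs, ys, learning_rate=0.05, batch_size=4, epochs=50, seed=0):
    """Fit y ~ w * x by mini-batch SGD on the mean squared error."""
    rng = random.Random(seed)
    w = 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the data in a new random order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Gradient of the squared error, averaged over the mini-batch only.
            grad = sum(2 * (w * xs[i] - ys[i]) * xs[i] for i in batch) / len(batch)
            w -= learning_rate * grad
    return w

# Synthetic noisy data with true slope 3.0 (hypothetical).
data_rng = random.Random(42)
xs = [data_rng.uniform(-1, 1) for _ in range(200)]
ys = [3.0 * x + data_rng.gauss(0, 0.1) for x in xs]

w_hat = sgd_fit_slope(xs, ys)
print("estimated slope:", round(w_hat, 3))
```

Each update touches only `batch_size` samples, so its cost does not grow with the dataset. The price is a noisy gradient, which is why the estimate fluctuates slightly around the least-squares solution.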

Thus, optimizing stochastic processes under resource constraints remains a key challenge in applying these methods to AI, especially in systems requiring large data processing or real-time operation.
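The accuracy versus speed tradeoff for Monte Carlo methods can be seen in a minimal sketch that estimates π by random sampling in the unit square. The sample sizes, the fixed seed, and the function name `estimate_pi` are assumptions for illustration.

```python
import math
import random

def estimate_pi(n_samples, seed=0):
    """Estimate pi from the fraction of random points inside a quarter circle."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(n_samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            inside += 1
    return 4.0 * inside / n_samples

# More samples cost proportionally more time but reduce the typical error.
for n in (100, 10_000, 100_000):
    estimate = estimate_pi(n)
    print(f"n={n:>7}: estimate={estimate:.4f}, abs error={abs(estimate - math.pi):.4f}")
```

Because the expected error falls roughly as 1/√N, gaining one more decimal digit of accuracy requires about 100 times as many samples, which is what makes the tradeoff costly under real-time constraints.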

Neural Networks and Deep Learning: Deep neural networks (DNNs) consist of multiple layers, each applying a linear transformation to its input followed by a nonlinear activation function. Stacking these layers enables the detection of complex, multidimensional relationships in data, making neural networks highly effective for tasks like image recognition, speech processing, and text analysis. The primary issues are the explainability (interpretability) of network decisions, overfitting, and generalization.
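The layer structure described above, a linear transformation followed by a nonlinear activation, can be sketched as a forward pass through a tiny two-layer network. The weights, biases, and the choice of ReLU are arbitrary assumptions for illustration, and no training is performed.

```python
def relu(values):
    """Element-wise ReLU activation."""
    return [max(0.0, v) for v in values]

def identity(values):
    """No-op activation, used here for the output layer."""
    return list(values)

def dense(inputs, weights, biases):
    """Linear transformation: one weight row and one bias per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def forward(x, layers):
    """Apply each (weights, biases, activation) layer in turn."""
    out = x
    for weights, biases, activation in layers:
        out = activation(dense(out, weights, biases))
    return out

# Hypothetical parameters: 2 inputs -> 2 hidden units (ReLU) -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0], relu),
    ([[1.0, 2.0]], [0.1], identity),
]
output = forward([2.0, 1.0], layers)
print("network output:", output)
```

Without the nonlinear activation between them, the two linear layers would collapse into a single linear map, which is why the activation is essential for modeling complex relationships.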

Problems:

1. **Explainability (Interpretability)**:
   - A major challenge in deep learning is the lack of transparency in model operation, rendering them "black boxes." With thousands or millions of parameters, interpreting how deep neural networks arrive at specific decisions is difficult. This raises trust issues in AI systems, especially in critical applications like medicine or finance, where understanding the decision-making process is vital.
   - Methods to address this include:
     - **LIME** (Local Interpretable Model-agnostic Explanations): Provides local interpretations for individual predictions.
     - **SHAP** (SHapley Additive exPlanations): A game-theory-based algorithm evaluating each feature’s contribution to a prediction.
     - **Neuron Activation Analysis**: Visualizing how specific layers and neurons respond to input data to better understand what the network learns at each stage.

2. **Overfitting**:
   - Overfitting occurs when a model learns the training data too well, memorizing its specifics rather than general patterns. This degrades performance on new, unseen data, impairing generalization.
   - Strategies to combat overfitting:
     - **Regularization**: Techniques like L2 regularization (weight decay) penalize overly large weights, limiting model complexity.
     - **Dropout**: Randomly dropping neurons during training to prevent excessive adaptation to training data.
     - **Early Stopping**: Halting training when performance peaks on validation data, before overfitting occurs.

3. **Generalization**:
   - Generalization is a model’s ability to perform well on new, unseen data, directly tied to the overfitting problem. The goal is to develop models that capture core data patterns rather than noise or random correlations.
   - Ways to improve generalization:
     - **Data Augmentation**: Generating additional training data through random modifications (e.g., image rotation, noise addition) to help models learn broader features.
     - **Simpler Models**: Models with fewer parameters often generalize better than overly complex ones.
     - **Cross-Validation**: Techniques like k-fold cross-validation provide insight into a model’s generalization across different data subsets.
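The k-fold cross-validation idea mentioned in the last item can be sketched as follows. The splitter, the one-parameter slope model, and the noise-free toy data are assumptions for illustration. The sketch also splits the data in order, whereas in practice samples are usually shuffled first.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices); fold sizes differ by at most one."""
    indices = list(range(n_samples))
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, validation
        start += size

def cross_val_mse(xs, ys, k, fit, predict):
    """Average validation mean squared error over k folds."""
    scores = []
    for train, validation in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors = [(predict(model, xs[i]) - ys[i]) ** 2 for i in validation]
        scores.append(sum(errors) / len(errors))
    return sum(scores) / len(scores)

def fit_slope(xs, ys):
    """Least-squares slope for a line through the origin (toy model)."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

def predict_slope(slope, x):
    return slope * x

xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [2.0 * x for x in xs]  # noise-free hypothetical data
score = cross_val_mse(xs, ys, 3, fit_slope, predict_slope)
print("mean validation MSE:", score)
```

Every sample is used for validation exactly once and never by the model that was trained on it, so the averaged score reflects performance on unseen data rather than memorization.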