Submitted: 12 August 2024

Posted: 14 August 2024


Abstract

Keywords:

1. Introduction

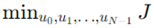

1.1. Sensors:

- Camera: Captures visual information from the vehicle's surroundings, aiding in object detection and recognition.

- Radar: Uses radio waves to detect the distance, speed, and movement of objects, providing crucial data for obstacle avoidance.

- Lidar: Employs laser beams to create high-resolution 3D maps of the environment, essential for accurate distance measurement and object detection.

- Ultrasonic: Utilized for short-range detection, often in parking scenarios, to identify nearby obstacles.

- GPS: Provides global positioning data, enabling the vehicle to determine its global position and navigate routes.

- IMU (Inertial Measurement Unit): Measures the vehicle's linear acceleration and rotational (angular) rates, which helps estimate the vehicle's motion and orientation.

- The data from these sensors feed into the Perception module.

1.2. Perception:

1.3. Planning:

1.4. Control:

- Brake: Controls the braking system to slow down or stop the vehicle as needed.

- Engine: Manages the power output of the engine to control the vehicle's speed and acceleration.

- Speed: Adjusts the speed of the vehicle according to the planned trajectory and current road conditions.

- Steering Wheel: Adjusts the direction of the vehicle based on the planned path.

- The control module executes the plan created by the planning module, translating the high-level decisions into specific commands for the vehicle’s mechanical systems.

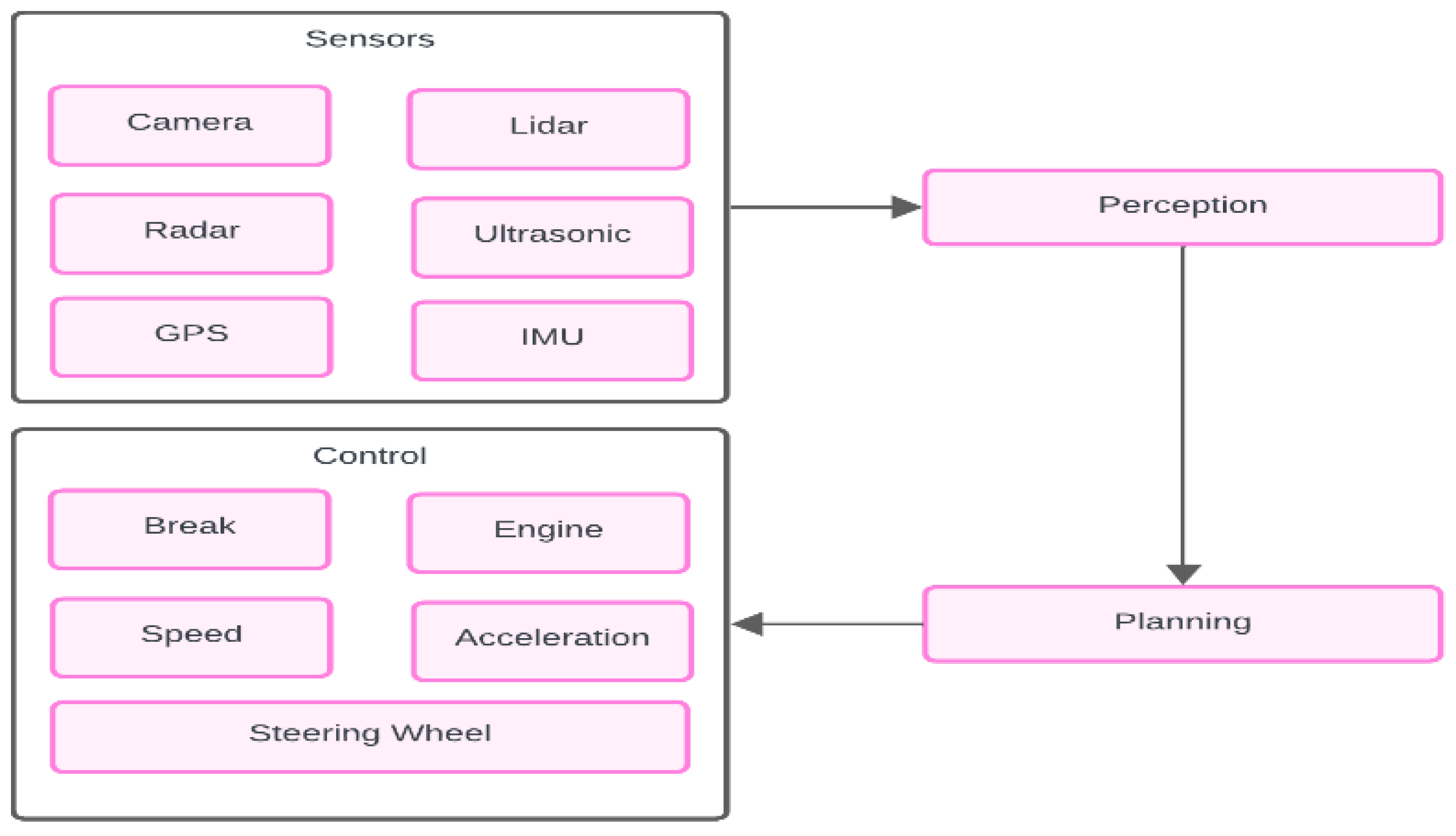

2. AI in Autonomous Vehicles

2.1. Perception Systems

2.1.1. Sensor Types

2.1.2. Object Detection and Classification:

2.2. Machine Learning Algorithms:

2.2.1. OpenCV and Python in AI Automotive:

- OpenCV is a powerful open-source computer vision library designed for real-time image processing and computer vision tasks.

- It provides a wide range of functions and algorithms for tasks such as image/video capture, image manipulation, object detection and tracking, feature extraction, and more.

- OpenCV is written in C++, but it has Python bindings, making it accessible and widely used in Python-based projects.

- Python serves as a versatile programming language for developing AI algorithms, including those used in autonomous vehicles.

- Its ease of use, extensive libraries, and readability make it a preferred choice for prototyping, testing, and implementing algorithms.

- Through these bindings (the `cv2` module), developers can call OpenCV's functionality directly from Python scripts for automotive AI tasks.

- Autonomous vehicles heavily rely on image processing and computer vision for environment perception.

- OpenCV, combined with Python, enables developers to perform a range of tasks critical for autonomous driving:

- Object Detection: Detecting and recognizing objects such as vehicles, pedestrians, cyclists, and obstacles in real-time video streams using techniques like Haar cascades or deep learning-based models (e.g., YOLO, SSD).

- Lane Detection: Identifying lane markings and boundaries to facilitate lane-keeping and autonomous navigation.

- Traffic Sign Recognition: Recognizing and interpreting traffic signs and signals for compliance and decision-making.

- Pedestrian Detection: Detecting and tracking pedestrians to ensure safe interactions in urban environments.

- Feature Extraction: Extracting relevant features from images or video frames for scene analysis and understanding.

- Developers can implement algorithms using OpenCV's functions and methods within Python scripts.

- For example, object detection can be achieved with the pre-trained Haar cascade classifiers distributed with OpenCV, or with pre-trained or custom-trained deep learning models loaded through OpenCV's deep learning (dnn) module.

- Lane detection algorithms can utilize techniques like edge detection (e.g., Canny edge detector) and Hough transforms for line detection, which are readily available in OpenCV's library.

- Python's flexibility allows for algorithmic customization, parameter tuning, and integration with other AI frameworks or modules.

- The results obtained from OpenCV and Python algorithms are integrated into the broader autonomous vehicle system.

- These algorithms contribute to the perception module of autonomous systems, providing crucial inputs for decision-making and control.

- For instance, object detection outputs inform collision avoidance strategies, lane detection results guide autonomous steering, and traffic sign recognition influences navigation decisions.

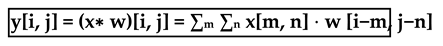

2.2.2. Convolutional Neural Networks (CNNs):

- Positioning the Kernel:

- Element-wise Multiplication:

- Summation:

- Output Assignment:
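The four steps listed above (positioning the kernel, element-wise multiplication, summation, and output assignment) can be sketched in NumPy as a single-channel "valid" 2D convolution. As in most CNN libraries, the kernel is not flipped, so strictly speaking this is cross-correlation; padding, multiple channels, and bias terms are left out, and the function name is illustrative.

```python
import numpy as np


def conv2d_valid(image: np.ndarray, kernel: np.ndarray, stride: int = 1) -> np.ndarray:
    """Single-channel 2D convolution without padding, as used in CNN layers."""
    ih, iw = image.shape
    kh, kw = kernel.shape
    oh = (ih - kh) // stride + 1  # output height
    ow = (iw - kw) // stride + 1  # output width
    out = np.zeros((oh, ow), dtype=float)
    for i in range(oh):
        for j in range(ow):
            # 1. Position the kernel: top-left corner at (i * stride, j * stride)
            r, c = i * stride, j * stride
            patch = image[r:r + kh, c:c + kw]
            # 2. Element-wise multiplication of the patch and the kernel
            products = patch * kernel
            # 3. Summation of all products
            total = products.sum()
            # 4. Output assignment to the corresponding feature-map cell
            out[i, j] = total
    return out
```

For example, applying the kernel `[[1, 0], [0, -1]]` to `np.arange(16).reshape(4, 4)` yields a 3 × 3 feature map in which every entry is -5, because each output is a pixel minus its lower-right diagonal neighbour.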

2.2.3. Recurrent Neural Networks (RNNs):
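The reference list associates Equation [2] with the RNN hidden-state update. A common (Elman) form is $h_t = \tanh(W_{xh} x_t + W_{hh} h_{t-1} + b_h)$, in which the hidden state carries information from earlier inputs forward in time. The NumPy sketch below assumes this tanh form; the weight names `W_xh`, `W_hh`, and `b_h` are illustrative rather than taken from the paper.

```python
import numpy as np


def rnn_forward(xs, W_xh, W_hh, b_h, h0=None):
    """Run a vanilla RNN over a sequence xs of shape (T, input_dim).

    Returns the hidden states of shape (T, hidden_dim).
    """
    hidden_dim = W_hh.shape[0]
    h = np.zeros(hidden_dim) if h0 is None else np.asarray(h0, dtype=float)
    states = []
    for x_t in xs:
        # New state mixes the current input with the previous hidden state
        h = np.tanh(W_xh @ x_t + W_hh @ h + b_h)
        states.append(h)
    return np.stack(states)
```

Because the same weights are reused at every time step, the network can process sequences of any length, which is what makes RNNs suitable for time-series sensor data.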

2.2.4. Decision Trees and Random Forests:

2.2.5. Deep Reinforcement Learning:

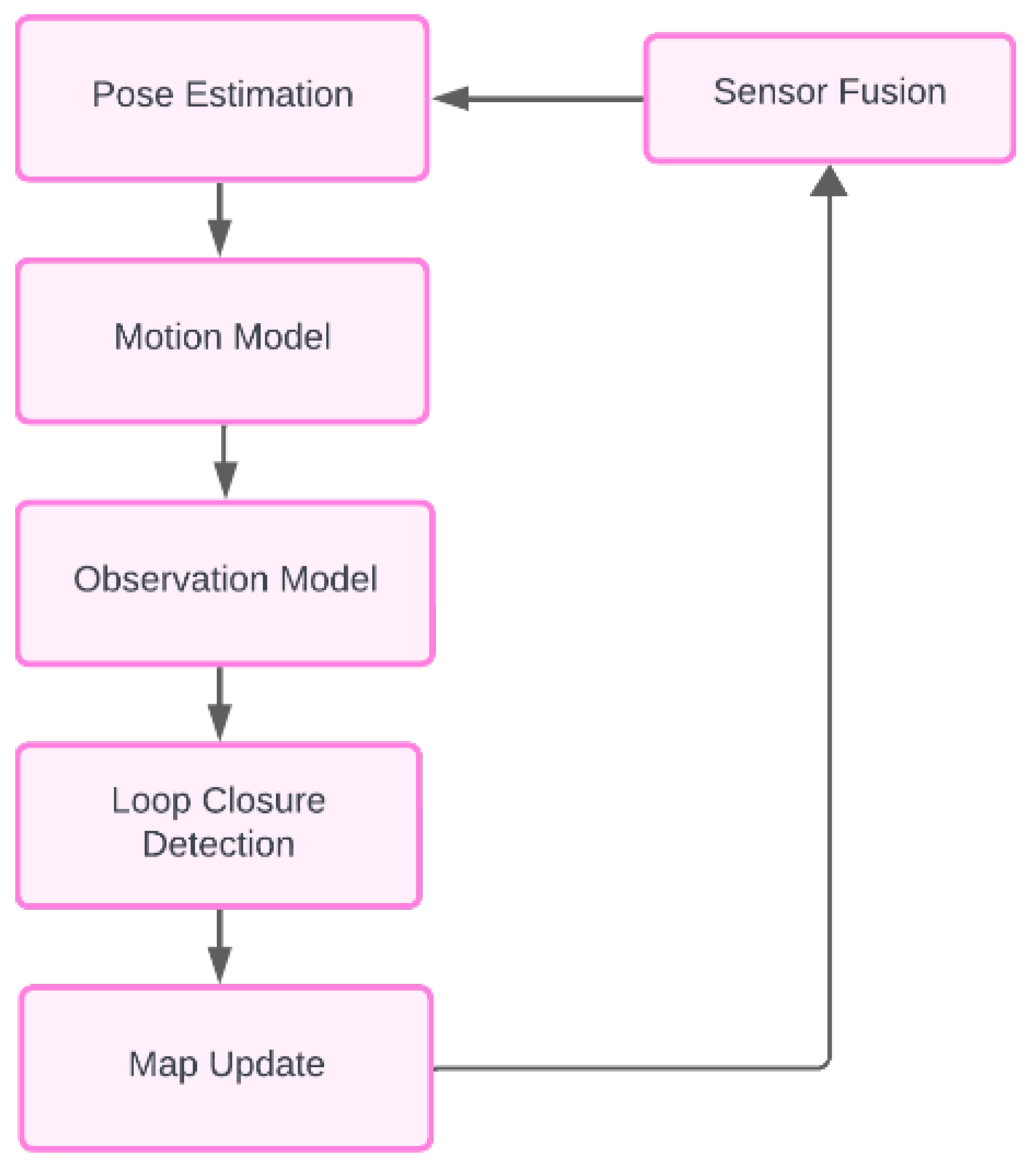

2.3. Localization and Mapping:

2.3.1. SLAM Algorithm Overview:

2.3.2. Equations and Formulations:

2.4. Decision-Making Algorithms:

2.4.1. Behavior Prediction

2.4.2. Decision-Making Algorithms

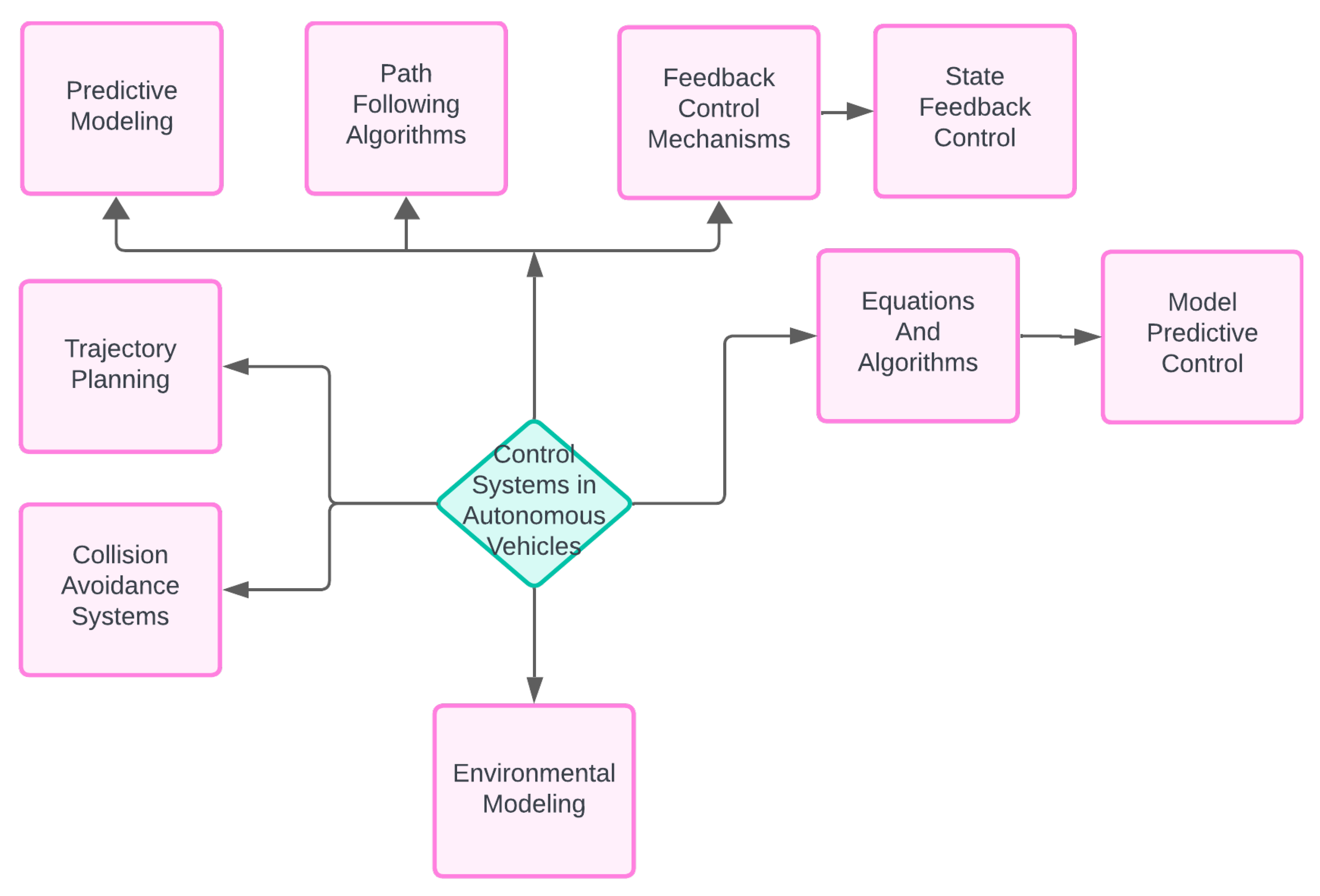

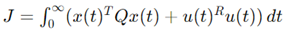

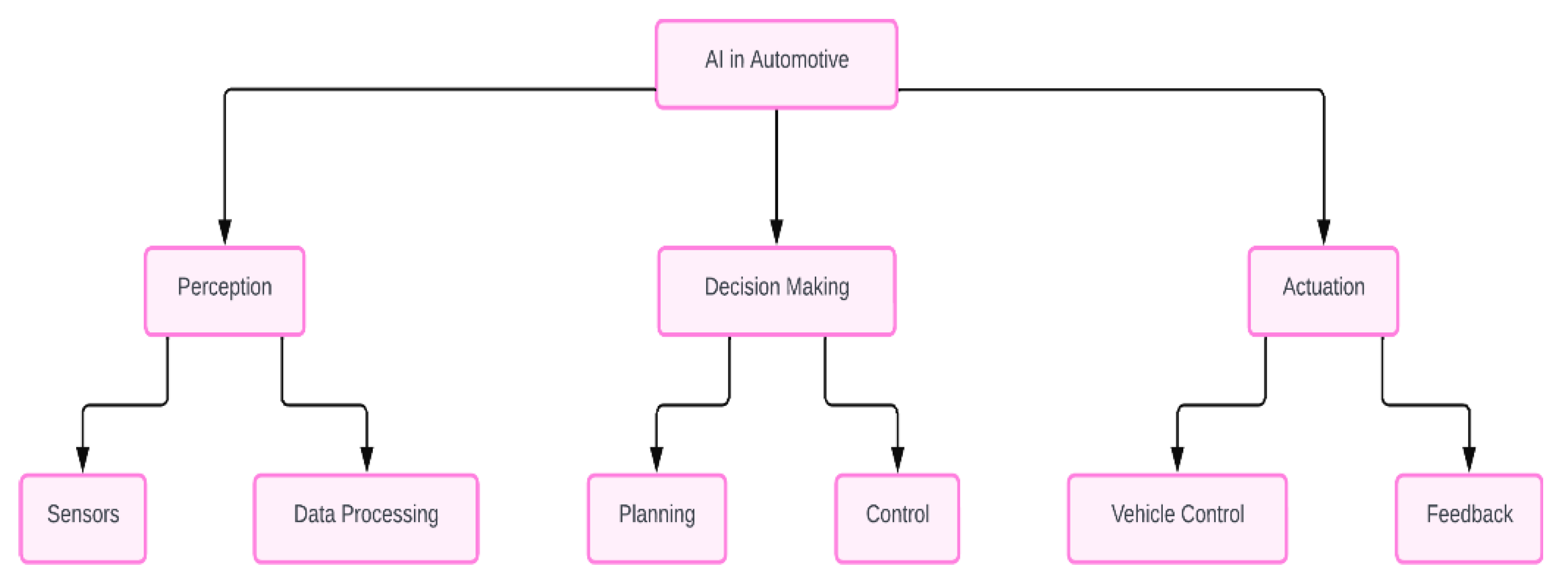

2.5. Control Systems:

2.5.1. Safety Considerations

2.5.2. Equations and Algorithms
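For reference, the symbols listed below match the standard textbook forms of the two controllers cited for this section in the reference list: the linear quadratic regulator (Anderson and Moore) and model predictive control (Qin and Badgwell; Mayne et al.). The block assumes the common infinite-horizon, continuous-time form of LQR, and it introduces the prediction horizon $N$, which the list does not define.

```latex
% Continuous-time LQR (infinite horizon)
\dot{x}(t) = A\,x(t) + B\,u(t), \qquad
J = \int_{0}^{\infty} \left( x(t)^{\top} Q\, x(t) + u(t)^{\top} R\, u(t) \right) dt

% Optimal state feedback from the algebraic Riccati equation
u(t) = -K\,x(t), \qquad K = R^{-1} B^{\top} P, \qquad
A^{\top} P + P A - P B R^{-1} B^{\top} P + Q = 0

% Discrete-time MPC over a horizon of N steps
\min_{u_0,\dots,u_{N-1}} \; x_N^{\top} Q_f\, x_N
  + \sum_{k=0}^{N-1} \left( x_k^{\top} Q\, x_k + u_k^{\top} R\, u_k \right)
\quad \text{s.t.} \quad
x_{k+1} = A x_k + B u_k, \;\;
u_{\min} \le u_k \le u_{\max}, \;\;
x_{\min} \le x_k \le x_{\max}
```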

Symbols in the Linear Quadratic Regulator (LQR) formulation:

- $x(t)$ is the state vector.

- $u(t)$ is the control input.

- $A$ is the state matrix.

- $B$ is the input matrix.

- $Q$ is a positive semi-definite state weighting matrix.

- $R$ is a positive definite control weighting matrix.

Symbols and constraints in the Model Predictive Control (MPC) formulation:

- $Q$ is a positive semi-definite state weighting matrix.

- $R$ is a positive definite control weighting matrix.

- $Q_f$ is the terminal state weighting matrix.

- $x_k$ is the state vector at time step $k$.

- $u_k$ is the control input at time step $k$.

- System dynamics: $x_{k+1} = A x_k + B u_k$

- Control input constraints: $u_{\min} \le u_k \le u_{\max}$

- State constraints: $x_{\min} \le x_k \le x_{\max}$
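Without the inequality constraints, the discrete-time problem defined by $A$, $B$, $Q$, $R$, and $Q_f$ above is a finite-horizon LQR problem, which a backward Riccati recursion solves exactly. The NumPy sketch below shows that recursion. The horizon `N`, the helper names, and the double-integrator example are illustrative assumptions. Input limits are applied afterwards by simple clipping, which is a heuristic only: a true constrained MPC controller solves a quadratic program over a receding horizon at every step, and that is not shown here.

```python
import numpy as np


def finite_horizon_lqr(A, B, Q, R, Qf, N):
    """Backward Riccati recursion for x_{k+1} = A x_k + B u_k.

    Returns gains [K_0, ..., K_{N-1}] so that u_k = -K_k x_k minimizes
    x_N^T Qf x_N + sum_k (x_k^T Q x_k + u_k^T R u_k) (no constraints).
    """
    P = Qf  # cost-to-go at the final step
    gains = []
    for _ in range(N):
        # K_k = (R + B^T P B)^{-1} B^T P A
        K = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
        # P_k = Q + A^T P (A - B K_k)
        P = Q + A.T @ P @ (A - B @ K)
        gains.append(K)
    gains.reverse()  # gains were computed from k = N-1 down to k = 0
    return gains


def rollout(A, B, gains, x0, u_min=None, u_max=None):
    """Simulate the closed loop; optional clipping is a heuristic, not true MPC."""
    x = np.asarray(x0, dtype=float)
    xs, us = [x], []
    for K in gains:
        u = -K @ x
        if u_min is not None or u_max is not None:
            u = np.clip(u, u_min, u_max)  # saturate the actuator command
        x = A @ x + B @ u
        xs.append(x)
        us.append(u)
    return np.array(xs), np.array(us)
```

For example, for a double integrator (position and velocity, time step 0.1 s) with `A = [[1, 0.1], [0, 1]]` and `B = [[0.005], [0.1]]`, computing 50 gains with `Q = I`, `R = [[0.1]]`, `Qf = 10 I` and rolling out from `[1.0, 0.0]` drives the state close to the origin.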

2.6. Sensor Fusion:

2.6.1. Sensor Types and Characteristics

2.6.2. Sensor Fusion Techniques
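A widely used fusion technique is the Kalman filter (see Welch and Bishop, and Thrun et al., in the reference list). As a minimal sketch, assuming a one-dimensional constant-position model and independent Gaussian noise, the scalar filter below fuses a stream of range readings that may come from different sensors (for example, alternating radar and lidar measurements, each with its own variance). The function and parameter names are hypothetical.

```python
def kalman_1d(z_seq, r_seq, x0, p0, q):
    """Scalar Kalman filter with a constant-position model.

    z_seq: measurements; r_seq: their noise variances (one per measurement);
    x0, p0: initial estimate and its variance; q: process-noise variance.
    Returns a list of (estimate, variance) after each measurement.
    """
    x, p = float(x0), float(p0)
    estimates = []
    for z, r in zip(z_seq, r_seq):
        # Predict: the state is assumed constant, but uncertainty grows by q
        p = p + q
        # Update: the gain weighs prediction vs. measurement by their variances
        k = p / (p + r)
        x = x + k * (z - x)
        p = (1.0 - k) * p
        estimates.append((x, p))
    return estimates
```

With a very uncertain prior and `q = 0`, fusing a reading of 10 m (variance 1) with one of 20 m (variance 4) gives an estimate close to the inverse-variance-weighted mean of 12 m, with variance close to 0.8: the less noisy sensor dominates, and the fused estimate is more certain than either reading alone.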

2.7. Behavior Prediction:

Conclusion

Conflicts of Interest

References

- Equation [1]: Deep Learning Specialization by Andrew Ng (Coursera), Course 4: Convolutional Neural Networks. This course provides a thorough understanding of CNNs, including the convolution operation, pooling, and different CNN architectures. https://www.coursera.org/specializations/deep-learning.

- CS231n: Convolutional Neural Networks for Visual Recognition (Stanford University). This course covers CNNs extensively, including lectures and assignments that provide hands-on experience. http://cs231n.stanford.edu/.

- "Deep Learning" by Ian Goodfellow, Yoshua Bengio, and Aaron Courville, Chapter 9 focuses on convolutional networks, providing detailed explanations and mathematical foundations. https://www.deeplearningbook.org/.

- "A Beginner's Guide to Convolutional Neural Networks (CNNs)" by Adit Deshpande. This article explains the basics of CNNs, including the convolution operation, with visual aids and code snippets.

- "ImageNet Classification with Deep Convolutional Neural Networks" by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, Advances in Neural Information Processing Systems 25 (NIPS 2012). This seminal paper introduced the AlexNet architecture and demonstrated the power of CNNs in image classification tasks.

- "Going Deeper with Convolutions" by Christian Szegedy et al. This paper introduces the Inception architecture, a variant of CNNs that achieved state-of-the-art results on the ImageNet dataset. arXiv:1409.4842.

- Equation [2]: "Deep Learning" by Ian Goodfellow, Yoshua Bengio, and Aaron Courville. This textbook is a comprehensive resource on deep learning and covers RNNs extensively; the relevant discussion is in Chapter 10, which deals with sequence modeling and RNNs. https://www.deeplearningbook.org/.

- "Neural Networks and Deep Learning: A Textbook" by Charu C. Aggarwal. This book provides a thorough introduction to neural networks and deep learning, including detailed sections on RNNs. The equation for the hidden state in RNNs is discussed in Chapter 8. https://www.springer.com/gp/book/9783319944623.

- "Sequence Modeling: Algorithms and Applications" by Jakub M. Tomczak, Tobias Gaunt, and Oliver Dürr. This book covers various aspects of sequence modeling, including RNNs, and provides detailed mathematical formulations. https://link.springer.com/book/10.1007/978-3-030-46661-0.

- Online Courses and Resources: Many online courses on platforms like Coursera, edX, and Udacity offer deep learning courses that include modules on RNNs. For instance, the "Deep Learning Specialization" by Andrew Ng on Coursera covers RNNs in one of its courses. https://www.coursera.org/specializations/deep-learning.

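- Academic Papers and Tutorials: The paper "Long Short-Term Memory" by Sepp Hochreiter and Jürgen Schmidhuber (Neural Computation, 1997) introduces the Long Short-Term Memory (LSTM) architecture, an extension of RNNs, and provides foundational knowledge on RNNs. The follow-up paper "Learning to forget: Continual prediction with LSTM" by Felix A. Gers, Jürgen Schmidhuber, and Fred Cummins (2000) extends the LSTM with the forget gate.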

- Equation [3]: Thrun, S., Burgard, W., & Fox, D. (2005). Probabilistic Robotics. MIT Press.

- Welch, G., & Bishop, G. (1995). An Introduction to the Kalman Filter. University of North Carolina at Chapel Hill.

- Equation [4], Equation [5]: Wikipedia, "Extended Kalman filter". This page offers a comprehensive overview of the EKF, detailing the continuous-time and discrete-time models, the initialization process, and the prediction-update steps. It explains the mathematical formulation of the EKF and its application in navigation systems and GPS.

- Equation [6], Equation [7]: Introduction to Robotics and Perception. This source explains the relationship between a robot's linear and angular velocities, including how these velocities translate into motion in a differential-drive robot. The model considers both linear and angular components to describe the motion accurately (RoboticsBook).

- Probabilistic Models for Robot Motion. This reference provides a comprehensive overview of motion models for mobile robots, including kinematic models that account for linear and angular velocities. It discusses the effects of noise and uncertainty in real-world applications (Wolfram Demonstrations Project).

- Equation [8]: Lowry, S., Sünderhauf, N., Newman, P., Leonard, J.J., Cox, D., Corke, P., & Milford, M.J. (2015). Visual place recognition: A survey. IEEE Transactions on Robotics, 32(1), 1-19. DOI: 10.1109/TRO.2015.2496823.

- Mur-Artal, R., & Tardós, J.D. (2017). ORB-SLAM2: An open-source SLAM system for monocular, stereo, and RGB-D cameras. IEEE Transactions on Robotics, 33(5), 1255-1262. DOI: 10.1109/TRO.2017.2705103.

- Equation [9]: Simultaneous Localization and Mapping (SLAM) using RTAB-Map. This paper presents a graph-based SLAM approach using RTAB-Map (Real-Time Appearance-Based Mapping), which focuses on large-scale and long-term SLAM applications. It uses vision sensors to localize the robot and map the environment. The algorithm includes a robust map update mechanism to integrate new sensor data effectively, ensuring that the occupancy grid map remains accurate over time (fjp.github.io) (ar5iv).

- SLAM using Grid-based FastSLAM. This study explores the FastSLAM algorithm, which combines particle filters with occupancy grid mapping. Each particle in the filter maintains its own map, and the map update step incorporates sensor measurements to update the occupancy probabilities of grid cells. This approach helps manage the uncertainties associated with sensor data and ensures that the map is continuously refined as the robot navigates through the environment (fjp.github.io) (ar5iv).

- Equation [10], Equation [11], Equation [12]: Optimal Control: Linear Quadratic Methods. Anderson and Moore's book provides a thorough exploration of LQR theory and applications. It covers the mathematical foundations and derivations of the LQR problem, including the solution of the algebraic Riccati equation and the design of optimal control laws. This book is a comprehensive resource for understanding the theoretical aspects of LQR and its implementation in various systems (Anderson & Moore, 2007).

- Time-varying Linear Quadratic Regulator. The lecture notes from Stanford University detail the extension of LQR to time-varying systems, offering insights into dynamic programming solutions and the use of LQR for trajectory optimization. These notes illustrate the practical application of LQR in stabilizing nonlinear systems by linearizing around nominal trajectories, demonstrating its versatility and robustness in control design (Stanford University, 2020).

- Anderson, B. D., & Moore, J. B. (2007). Optimal Control: Linear Quadratic Methods.

- Ata, B., & Gencal, M. C. Comparison of Optimization Approaches on Linear Quadratic Regulator Design for Trajectory Tracking of a Quadrotor (PDF, researchgate.net).

- Equation [13]: Qin, S. J., & Badgwell, T. A. (2003). "A survey of industrial model predictive control technology." Control Engineering Practice, 11(7), 733-764.

- Mayne, D. Q., Rawlings, J. B., Rao, C. V., & Scokaert, P. O. M. (2000). "Constrained model predictive control: Stability and optimality." Automatica, 36(6), 789-814.

- Chen, J., Hu, X., Peng, J., Tang, Y., & Wu, Y. (2020). Autonomous vehicles in intelligent transportation systems: A review. IEEE Transactions on Intelligent Transportation Systems, 21(11), 4746-4765. This paper provides a comprehensive review of autonomous vehicles, including AI techniques used for perception, decision-making, and control.

- Badue, C., Guidolini, R., Carneiro, R. V., Azevedo, P., Cardoso, V., Forechi, A., Jesus, L., Berriel, R., Paixão, T. M., Mutz, F., Veronese, L., Oliveira-Santos, T., & Silva, A. (2021). Self-driving cars: A survey. Expert Systems with Applications, 165, 113816. A detailed survey on self-driving cars, covering various AI algorithms used in the field.

- Grigorescu, S., Trasnea, B., Cocias, T., & Macesanu, G. (2020). A survey of deep learning techniques for autonomous driving. Journal of Field Robotics, 37(3), 362-386. This survey focuses on deep learning techniques for autonomous driving, highlighting the latest advancements and applications in the industry.

- Schwarting, W., Alonso-Mora, J., & Rus, D. (2018). Planning and decision-making for autonomous vehicles. Annual Review of Control, Robotics, and Autonomous Systems, 1, 187-210. This paper reviews planning and decision-making strategies for autonomous vehicles, including AI-based approaches.

- Thrun, S. (2010). Toward robotic cars. Communications of the ACM, 53(4), 99-106. An early but influential paper on the development of robotic cars and the role of AI in making them a reality.

- Liu, Y., Sun, L., Cheng, Y., & Li, H. (2019). Artificial intelligence for autonomous driving: A survey. IEEE Transactions on Intelligent Transportation Systems, 21(11), 4679-4710. A comprehensive survey on the application of AI in autonomous driving, discussing various AI methods and their impact.

- González, D., Pérez, J., Milanés, V., & Nashashibi, F. (2016). A review of motion planning techniques for automated vehicles. IEEE Transactions on Intelligent Transportation Systems, 17(4), 1135-1145. This review covers different motion planning techniques for automated vehicles, including AI-driven approaches.

- Sun, Z., Bebis, G., & Miller, R. (2006). On-road vehicle detection: A review. IEEE Transactions on Pattern Analysis and Machine Intelligence, 28(5), 694-711. A review focusing on the AI techniques used for on-road vehicle detection, a crucial aspect of autonomous driving.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).