3. Beam Learning with MARL

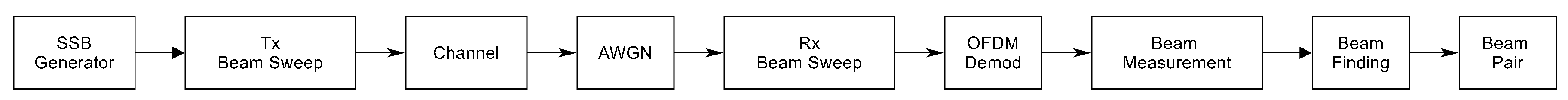

The codebook learning process begins by obtaining a fixed conventional codebook through the initial access and beam management procedures outlined in the 5G New Radio (5G NR) technical report. The steps of this fixed codebook learning process are summarized as follows:

Procedure 1: When a connection is established between a transmitter and a receiver, an initial beam alignment is required. This involves finding the transmit-receive beam pair that maximizes the signal strength between the devices. Signals such as synchronization signal blocks (SSBs) and reference signals are used to aid this process, as shown in Figure 4.

Procedure 2: Refining the transmit-end beam via non-zero-power CSI-RS (NZP-CSI-RS) and SRS. After initial beam acquisition, beam management refines the beams to further improve the communication link. In this step, reference signals are sent in different directions using finer beams within the initial angular range. The UE or BS assesses these beams with a fixed receive beam and selects the best transmit beam.

The proposed system initially employs a standard beamforming procedure and gradually transitions to a more efficient MARL-based system over time. This method essentially replaces standard codebook beams with learned beams on a one-to-one basis. The angular spacing between adjacent beams is determined by the number of beams, which equals the number of agents in the MARL framework. This approach simplifies implementation because it requires no alterations to the existing infrastructure. Once the codebook is learned, it can be used until the link's performance deteriorates because of significant changes at the deployment site.

It is important to note that a learned multibeam codebook differs from simply parallelizing multiple single beams. Unlike fixed codebook beams, learned beams are not matched filters to the antenna array response and can adopt any arbitrary shape suitable for the scattering environment of deployment. Consequently, this may result in beam overlap, leading to inter-user interference, if cooperation between the beams for multiple simultaneous users is not established. The proposed MARL-based approach addresses this issue by cooperatively learning the beamforming vector for each beam per user group.

In this research, the procedures for initial beam acquisition and subsequent beam learning are divided into the following major steps:

a. SSB beam sweeping.

b. Beam measurement and determination at the UE.

c. Beam reporting to the BS by the UE.

d. The UE sends SRS to the BS for uplink transmit-end beam refinement and also for MARL-based downlink transmit-end beam refinement. This procedure differs from the 5G NR method, in which the standard uses NZP-CSI-RS for downlink transmit-end beam refinement. That approach requires CSI feedback from the UE and works only with traditional matched-filter-based codebooks, because the full channel-estimate feedback from the UE that non-codebook-based beamforming requires is unavailable, or impractical and resource intensive to obtain.

e. The BS sends NZP-CSI-RS to the UE only to obtain RSRP feedback (RSRP reporting consumes very few resources).

f. The BS decodes the received SRS and estimates the uplink channel.

g. The BS sends the RSRP measurement of the SRS to the UE for beam refinement at the UE.

h. At the BS, the RSRP and channel estimate acquired in steps e and f are used to learn the downlink transmit-end beam codebook through the proposed MARL algorithm.

To make RL, or in this case MARL, applicable, the environment must be modeled as a Markov process. In [

9], this is achieved by incorporating the current beamforming vector as a function of the previous beamforming vector. A similar approach as in [

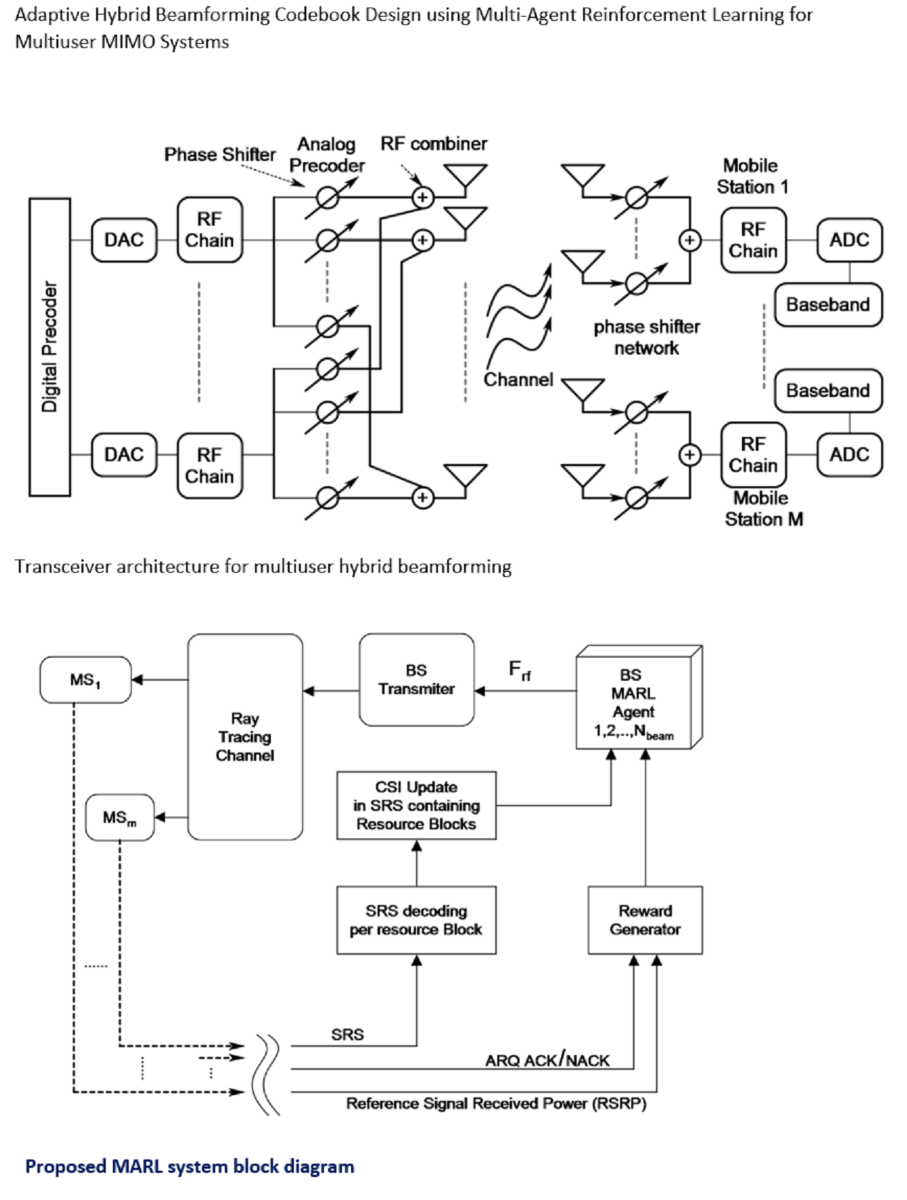

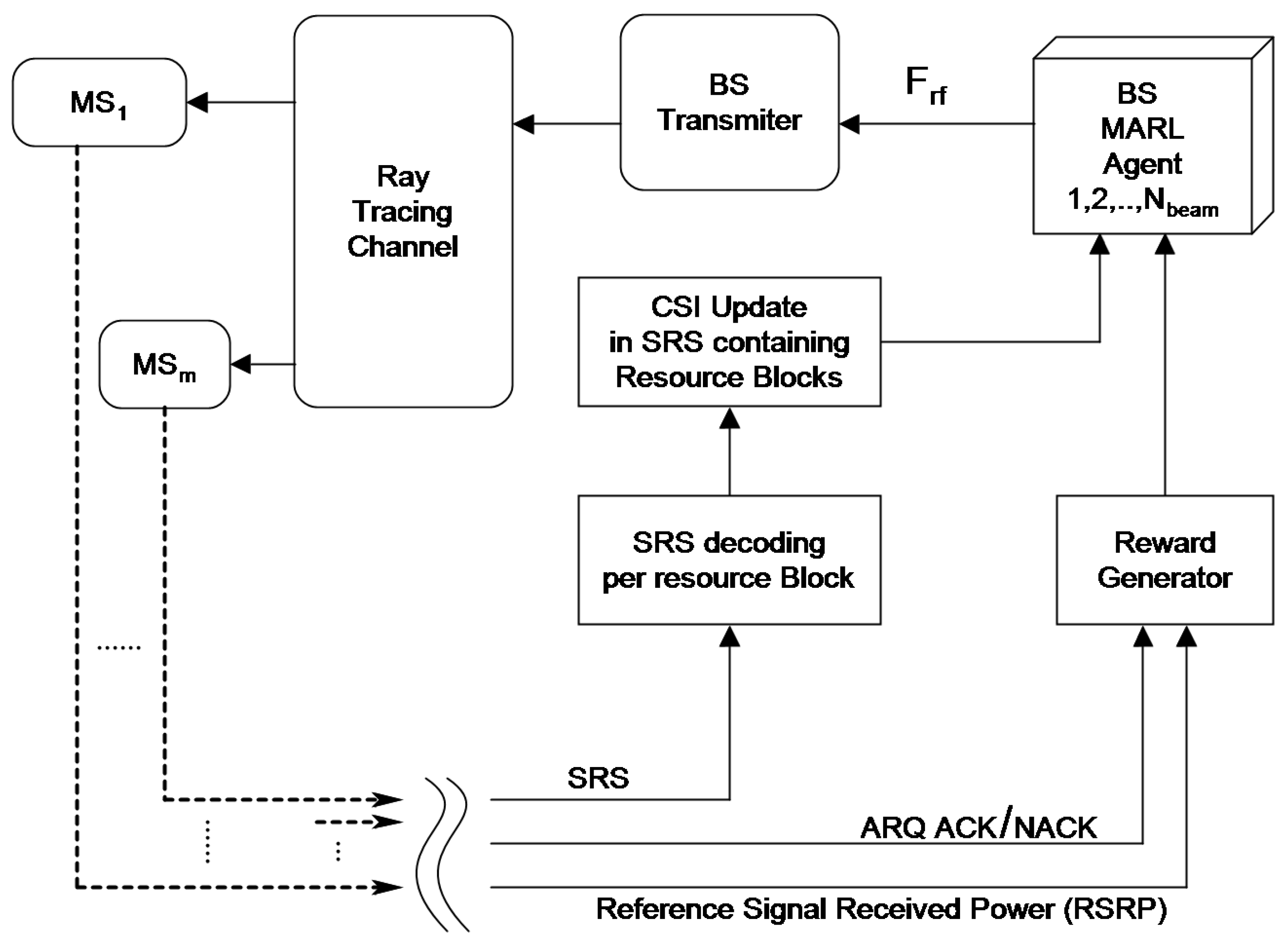

9] is followed, extending this method by also considering the partial and imperfect CSI acquired by the BS during uplink SRS transmission by the UE. The operation of the entire system is illustrated in

Figure 5, with each processing block and signal flow explained subsequently.

3.1. Details of MARL

The proposed MARL algorithm builds upon the Wolpertinger Architecture [13], following a similar approach to that described in [9]. The Wolpertinger Architecture adapts DDPG (deep deterministic policy gradient), originally designed for continuous action spaces, to discrete action spaces by means of a K-nearest neighbor (KNN) classifier. In a multi-agent RL system with continuous action spaces, each agent faces a non-stationary environment because the other agents' policies change during training; MADDPG (multi-agent DDPG) addresses this through centralized training and decentralized execution. To accommodate a discrete action space in MARL, MADDPG is extended here by implementing each agent with the Wolpertinger Architecture. Thus, the proposed MARL is essentially MADDPG in which each agent follows the Wolpertinger Architecture.

The proposed beam learning problem is challenging because of its very large action space. For instance, for a base station with 32 antennas, 3-bit phase shifters, and 4 RF channels, each agent must choose among a combinatorially large number of phase configurations. The action space grows further with more antennas and higher-resolution phase shifters, which makes conventional deep Q-network frameworks, which need one output per discrete action, impractical.
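To make the scale concrete, the size of one agent's action set can be computed directly. This is a minimal sketch assuming, as in Section 3.2, that each agent controls the 32 phase shifters of one RF chain and that every shifter independently takes one of 2^3 = 8 quantized phases; the helper name `num_actions` is hypothetical.

```python
def num_actions(num_phase_shifters: int, bits: int) -> int:
    """Size of one agent's discrete action set.

    Assumes each phase shifter independently takes one of 2**bits levels,
    so the action set is the Cartesian product of the per-shifter levels.
    """
    levels_per_shifter = 2 ** bits
    return levels_per_shifter ** num_phase_shifters


if __name__ == "__main__":
    n = num_actions(num_phase_shifters=32, bits=3)
    print(n)           # 79228162514264337593543950336, i.e. 8**32 = 2**96
    print(f"{n:.2e}")  # 7.92e+28
```

A deep Q-network would need one output neuron per entry of this set, which is why the text rules it out.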

Additionally, multi-agent deep Q-networks suffer from instability, since the concurrently learning agents render the environment non-stationary. To overcome these limitations, the Wolpertinger architecture is introduced, which is designed for spaces with very large sets of discrete actions [13]. This architecture, rooted in the actor-critic framework, is trained using the DDPG algorithm [14]. Notably, the Wolpertinger architecture incorporates a KNN classifier, enabling DDPG to handle tasks with discrete, finite, yet exceptionally high-dimensional action spaces. A concise overview of the key components of the Wolpertinger architecture is provided below.

Actor Networks: The actor maps states from the observation space to actions, serving as a function approximator for this mapping process. Since the actions obtained from the actor fall into a continuous action space, the predicted action may not align perfectly with the action space of the problem. Therefore, this prediction is referred to as a proto action and is quantized by a KNN classifier to obtain an action available in the discrete action space.

KNN search: KNN search is employed to determine the nearest neighbors of the proto action within the discrete action space, using the squared Euclidean (L2) distance as the metric to identify the vectors closest to the proto action. In essence, the KNN algorithm measures how close the proto action lies to each available discrete action, thereby quantizing the predicted action to options that actually exist in the discrete action space.
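When the discrete action set is a grid of per-element phase levels, the 1-nearest-neighbor search (k = 1, as used in Algorithm 2) does not require enumerating all actions: squared Euclidean distance is a sum of per-element terms, so snapping each element to its own nearest level yields the joint nearest neighbor. The sketch below assumes uniform b-bit phase levels in [-π, π) and, like the text, plain Euclidean distance on phase values (no 2π wrap-around); the function names are hypothetical. It checks the element-wise result against an exhaustive search on a tiny array.

```python
import itertools

import numpy as np


def phase_grid(bits: int) -> np.ndarray:
    """Uniform phase levels of a `bits`-bit phase shifter in [-pi, pi)."""
    levels = 2 ** bits
    return -np.pi + 2 * np.pi * np.arange(levels) / levels


def nearest_action(proto: np.ndarray, bits: int) -> np.ndarray:
    """1-NN of a proto action under squared Euclidean distance.

    The action set is the Cartesian product of per-element levels, so the
    joint nearest neighbour is obtained by snapping each element separately.
    """
    grid = phase_grid(bits)
    d2 = (proto[:, None] - grid[None, :]) ** 2  # per-element squared distances
    return grid[np.argmin(d2, axis=1)]


def brute_force_nearest(proto: np.ndarray, bits: int) -> np.ndarray:
    """Exhaustive 1-NN over every action; only feasible for tiny arrays."""
    grid = phase_grid(bits)
    candidates = np.array(list(itertools.product(grid, repeat=len(proto))))
    d2 = np.sum((candidates - proto) ** 2, axis=1)
    return candidates[np.argmin(d2)]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    proto = rng.uniform(-np.pi, np.pi, size=4)  # 4 shifters -> 8**4 actions
    fast = nearest_action(proto, bits=3)
    slow = brute_force_nearest(proto, bits=3)
    print(np.allclose(fast, slow))  # True
```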

Exploration noise process: Noise helps agents explore the environment more effectively by injecting randomness into their actions. Exploration is essential in reinforcement learning to discover new states and actions that can lead to better policies; without it, agents might get stuck in suboptimal policies. The noise added to actions is often generated from a stochastic process, such as a Gaussian distribution, an Ornstein-Uhlenbeck process, or other noise sources. In this work, an Ornstein-Uhlenbeck process is used to generate the noise added to an agent's actions. This noise is temporally correlated, meaning that it tends to stay close to its current value over short time intervals, mimicking the behavior of real-world systems. The peak noise magnitude must be chosen such that, after element-wise addition to the action, the resulting values are large enough to cover the full range of the phase-shifter array.
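A minimal Ornstein-Uhlenbeck noise generator, using the Euler-Maruyama discretization of dx = θ(μ − x)dt + σ dW, is sketched below. The values θ = 0.15, σ = 0.2, dt = 1 and the scaling by π in the usage example are illustrative assumptions, not the paper's hyperparameters (those are in Table 2).

```python
import numpy as np


class OUNoise:
    """Ornstein-Uhlenbeck process for temporally correlated exploration noise.

    Default theta, sigma and dt are illustrative assumptions.
    """

    def __init__(self, size, mu=0.0, theta=0.15, sigma=0.2, dt=1.0, seed=None):
        self.mu = mu * np.ones(size)
        self.theta, self.sigma, self.dt = theta, sigma, dt
        self.rng = np.random.default_rng(seed)
        self.reset()

    def reset(self):
        """Restart the process at its mean (e.g. at the start of an episode)."""
        self.x = self.mu.copy()

    def sample(self):
        """Advance one step; successive samples are correlated via the drift term."""
        drift = self.theta * (self.mu - self.x) * self.dt
        diffusion = self.sigma * np.sqrt(self.dt) * self.rng.standard_normal(self.mu.shape)
        self.x = self.x + drift + diffusion
        return self.x


if __name__ == "__main__":
    noise = OUNoise(size=32, seed=0)
    proto = np.zeros(32)                          # stand-in for an actor output
    noisy_proto = proto + np.pi * noise.sample()  # scale factor pi is an assumption
    print(noisy_proto.shape)                      # (32,)
```

With dt = 1, the process is an AR(1) sequence with coefficient 1 − θ, so consecutive samples are strongly correlated, unlike independent Gaussian noise.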

Critic Networks: The critic network functions as a Q function, accepting both the state and action inputs and generating the anticipated Q value for the specific state-action combination. Given that the KNN function yields k potential actions, the critic network evaluates k distinct state-action pairs (with a shared state), ultimately pinpointing the action that attains the highest Q value among them.
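The actor-KNN-critic selection can be sketched on a small, explicitly enumerated action set: take the k actions closest to the proto action, score each with the critic under the shared state, and return the best. The toy critic, the four-action set, and the function name `wolpertinger_select` are hypothetical and serve only to show the mechanism; with k = 1 (as in Algorithm 2) the critic step reduces to returning the nearest neighbor.

```python
import numpy as np


def wolpertinger_select(state, proto, actions, critic, k):
    """Wolpertinger-style action selection over an explicit discrete set.

    1. Find the k actions closest to the proto action (squared Euclidean).
    2. Score each (state, candidate) pair with the critic.
    3. Return the candidate with the highest Q value.
    """
    d2 = np.sum((actions - proto) ** 2, axis=1)
    k = min(k, len(actions))
    candidates = actions[np.argsort(d2)[:k]]
    q_values = np.array([critic(state, a) for a in candidates])
    return candidates[np.argmax(q_values)]


def toy_critic(state, action):
    """Hypothetical critic that prefers actions near (1, 1); ignores state."""
    return -float(np.sum((action - np.array([1.0, 1.0])) ** 2))


if __name__ == "__main__":
    actions = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
    proto = np.array([0.1, 0.2])
    print(wolpertinger_select(None, proto, actions, toy_critic, k=1))  # [0. 0.]
    print(wolpertinger_select(None, proto, actions, toy_critic, k=4))  # [1. 1.]
```

Larger k lets the critic correct a poor proto action at the cost of k critic evaluations per step.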

Target Networks: A target network is a separate copy of the actor or critic network whose parameters are updated less frequently, providing a stable target for training. Updating the target networks' parameters only periodically improves the stability and convergence of learning, leading to more efficient training and more accurate action-value estimates.
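DDPG [14] typically updates its target networks by Polyak ("soft") averaging, θ' ← τθ + (1 − τ)θ', with a small τ; copying the online weights every C steps is the special case τ = 1 applied periodically. The sketch below assumes parameters stored in a dictionary of NumPy arrays; the name `soft_update` and the dictionary layout are hypothetical.

```python
import numpy as np


def soft_update(target_params, online_params, tau):
    """Polyak averaging: theta' <- tau * theta + (1 - tau) * theta'.

    tau = 1 reproduces a hard copy of the online weights.
    Updates `target_params` in place.
    """
    for name, w in online_params.items():
        target_params[name] = tau * w + (1.0 - tau) * target_params[name]


if __name__ == "__main__":
    online = {"w": np.ones(3)}
    target = {"w": np.zeros(3)}
    for _ in range(100):
        soft_update(target, online, tau=0.05)
    print(target["w"])  # slowly approaches the online weights
```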

The state (input), action (output), and reward process of the MARL algorithm are defined as follows.

State: The state is the concatenation of the phases of all phase shifters at time t and the average normalized envelope of the channel estimate obtained through steps a to h of the major steps listed at the beginning of Section 3.

Action: Action comprises element-wise changes of all the phases in the state vector at time t.
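Because the action is an element-wise phase change, the next phase vector depends only on the current phases and the chosen action, which is what makes the phase part of the state Markov. The sketch below assumes phases are kept in [-π, π) by wrap-around and that the analog beamformer has unit-modulus entries normalized to unit norm; these conventions and the helper names are assumptions.

```python
import numpy as np


def wrap_phase(phi):
    """Map phases to the interval [-pi, pi)."""
    return (phi + np.pi) % (2 * np.pi) - np.pi


def step_phases(phases, delta):
    """Phase state transition: current phases plus element-wise action, wrapped."""
    return wrap_phase(phases + delta)


def beamforming_vector(phases):
    """Unit-modulus analog beamformer, normalised to unit norm (assumption)."""
    return np.exp(1j * phases) / np.sqrt(len(phases))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    phases = rng.uniform(-np.pi, np.pi, 32)
    action = rng.uniform(-np.pi / 4, np.pi / 4, 32)
    nxt = step_phases(phases, action)
    print(np.linalg.norm(beamforming_vector(nxt)))  # unit norm
```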

Reward: Proper reward design is pivotal for shaping effective RL policies, achieving goals efficiently, and avoiding unintended behaviors. In this work, the reward function is designed to satisfy two goals: maximizing the average beamforming gain, which in turn maximizes the sum rate of the system, and reducing inter-beam interference. The end-to-end system is implemented so that the UE sends an ARQ signal (ACK/NACK) to the BS depending on whether a frame was received correctly, and the received ARQ is also used as an input for reward modeling. This addresses the concern that RSRP alone may give a misleading indication of beamforming gain maximization in a multi-beam system with interference, since a high RSRP can coincide with strong interference from other beams and hence failed decoding. The proposed reward processing is detailed in Algorithm 1.

Algorithm 1: Reward Function

- 1: Initialize the dynamic threshold for RSRP.
- 2: Observe RSRP feedback from UE.
- 3: Observe ACK/NACK (True/False) from UE.
- 4: if and then
- 5: ;
- 6: ;
- 7: else if and and then
- 8: ;
- 9: else if and and then
- 10: ;
- 11: else if and then
- 12: ;
- 13: else
- 14: ;
- 15: end if

The steps of MARL learning for N agents are given as pseudo-code in Algorithm 2.

Algorithm 2: MARL-based beam learning

- 1: Initialize actor networks and critic networks with random weights.
- 2: Initialize target actor and critic networks with the weights of the actor and critic networks.
- 3: Initialize the replay memory D, minibatch size B, and discount factor γ.
- 4: Initialize the reward processing algorithm.
- 5: Initialize N beams with the beam-steering codebook of Section 3 for N clusters and N agents.
- 6: Initialize a random process for action exploration.
- 7: For each agent, initialize a random beamforming vector as the state x.
- 8: for t = 1 to max-episode-length do
- 9: For each agent i, select a proto action w.r.t. the current policy and exploration noise.
- 10: For each agent i, quantize the proto action to a valid beamforming vector with KNN for k = 1.
- 11: Execute the actions and observe reward r (with Algorithm 1) and new state x′.
- 12: Store (x, a, r, x′) in replay buffer D.
- 13: x ← x′
- 14: for agent i = 1 to N do
- 15: Sample a random minibatch of B samples from D.
- 16: Set the target value y.
- 17: Update the critic by minimizing the loss.
- 18: Update the actor using the sampled policy gradient.
- 19: end for
- 20: Update the target network parameters for each agent i.
- 21: end for
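For reference, the critic-target, critic-loss, actor-gradient, and target-update steps of Algorithm 2 follow the standard MADDPG updates, reproduced below in the usual MADDPG notation, which is assumed here and may differ from the paper's own symbols: $\mathbf{x} = (s_1, \ldots, s_N)$ is the joint state, $s_i$ and $a_i$ are agent $i$'s state and action, $\mu_i$ (parameters $\theta_i$) and $Q_i$ (parameters $\phi_i$) are its actor and centralized critic, primes denote target networks, $B$ is the minibatch size, and $\tau$ is the soft-update rate. In a Wolpertinger variant, the target actions may additionally pass through the KNN quantization step.

$$
y^j = r_i^j + \gamma\, Q_i'\!\left(\mathbf{x}'^j, a_1', \ldots, a_N'\right)\Big|_{a_k' = \mu_k'(s_k'^{\,j})}
$$

$$
\mathcal{L}(\phi_i) = \frac{1}{B}\sum_{j=1}^{B}\left(y^j - Q_i\!\left(\mathbf{x}^j, a_1^j, \ldots, a_N^j\right)\right)^2
$$

$$
\nabla_{\theta_i} J \approx \frac{1}{B}\sum_{j=1}^{B} \nabla_{\theta_i}\mu_i(s_i^j)\, \nabla_{a_i} Q_i\!\left(\mathbf{x}^j, a_1^j, \ldots, a_i, \ldots, a_N^j\right)\Big|_{a_i = \mu_i(s_i^j)}
$$

$$
\theta_i' \leftarrow \tau\theta_i + (1-\tau)\theta_i', \qquad \phi_i' \leftarrow \tau\phi_i + (1-\tau)\phi_i'
$$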

3.2. Data Preprocessing

The SRS provides the BS with comprehensive channel information across the entire bandwidth. Utilizing this information, the BS optimizes resource allocation, giving preference to areas with superior channel quality over other bandwidth segments. In this proposed work, emphasis was placed on a central cluster consisting of four resource blocks (RBs), each encompassing a bandwidth of 180 kHz. Within each RB, 12 subcarriers are positioned at 15 kHz intervals, resulting in a combined bandwidth of 720 kHz. A frequency-domain vector comprising 48 complex numbers is derived through channel estimation across this contiguous frequency range. Given that only a narrow band of the entire spectrum is required for the proposed algorithm, achieving high SNR for SRS transmission is feasible.

For further analysis, this complex vector is transformed into its magnitude and then downscaled by a factor of 2, resulting in a real-valued vector comprising 24 elements. To ensure consistency, in the subsequent preprocessing stage, this 24-element vector is normalized by dividing it by its maximum value. This procedure is iterated for each of the four simultaneous users, producing four channel vectors.
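The preprocessing chain (48 complex SRS channel estimates, magnitude, downscaling by 2, max-normalization) can be sketched as follows. The text does not specify how the factor-2 downscaling is done; this sketch assumes averaging of adjacent subcarrier magnitudes, consistent with the "average normalized envelope" in the state definition. The constant and function names are hypothetical.

```python
import numpy as np

NUM_RB = 4          # resource blocks in the central cluster
SC_PER_RB = 12      # subcarriers per RB, 15 kHz spacing
SC_SPACING_KHZ = 15
DOWNSAMPLE = 2      # downscaling factor stated in the text


def preprocess_channel(h: np.ndarray, factor: int = DOWNSAMPLE) -> np.ndarray:
    """48 complex channel estimates -> 24 real values with peak 1.

    Assumption: downscaling = mean of each pair of adjacent magnitudes.
    """
    mag = np.abs(h)                                # channel envelope |h_k|
    pooled = mag.reshape(-1, factor).mean(axis=1)  # 48 -> 24
    return pooled / pooled.max()                   # normalise by the maximum


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_sc = NUM_RB * SC_PER_RB                      # 48 subcarriers
    # One channel vector per simultaneous user (four users).
    users = [rng.standard_normal(n_sc) + 1j * rng.standard_normal(n_sc) for _ in range(4)]
    channel_vectors = np.vstack([preprocess_channel(h) for h in users])
    print(channel_vectors.shape)                   # (4, 24)
    print(n_sc * SC_SPACING_KHZ, "kHz")            # 720 kHz
```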

The other part of the state vector consists of the phases of the phase-shifter network for a particular RF chain, a vector of length 32 in this case. This vector is also normalized by its maximum absolute value. Four such phase-shifter vectors are obtained, one for each of the four RF chains.

The input to each actor network is the corresponding agent's state: the concatenation of the 24-element channel vector and the 32-element phase vector, so each state vector has length 24 + 32 = 56. The output of each actor network is the predicted phase-update vector of length 32. The actor network has two hidden layers of 560 neurons each, followed by Rectified Linear Unit (ReLU) activations. The output layer is passed through hyperbolic tangent (tanh) activations scaled by a constant factor, and the result is the predicted (proto) action.
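The state construction and actor shapes described above can be sketched as a NumPy forward pass (56 → 560 → 560 → 32). The tanh output scale of π, the Glorot-uniform initialization, and all function names are assumptions made for illustration; training is not shown.

```python
import numpy as np

CHANNEL_LEN = 24                      # preprocessed channel vector
PHASE_LEN = 32                        # phase shifters per RF chain
STATE_LEN = CHANNEL_LEN + PHASE_LEN   # 56
ACTION_LEN = PHASE_LEN                # one phase update per shifter
HIDDEN = 560                          # neurons per hidden layer (from the text)


def build_state(channel_vec, phases):
    """Concatenate the channel vector with the max-abs-normalised phases."""
    peak = np.max(np.abs(phases))
    phase_norm = phases / peak if peak > 0 else phases
    return np.concatenate([channel_vec, phase_norm])


def init_actor(seed=0):
    """Random weights for 56 -> 560 -> 560 -> 32 (Glorot-uniform, an assumption)."""
    rng = np.random.default_rng(seed)
    dims = [STATE_LEN, HIDDEN, HIDDEN, ACTION_LEN]
    params = []
    for n_in, n_out in zip(dims[:-1], dims[1:]):
        lim = np.sqrt(6.0 / (n_in + n_out))
        params.append((rng.uniform(-lim, lim, size=(n_in, n_out)), np.zeros(n_out)))
    return params


def actor_forward(params, state, action_scale=np.pi):
    """ReLU hidden layers, then tanh scaled by `action_scale` (assumed pi)."""
    h = state
    for w, b in params[:-1]:
        h = np.maximum(0.0, h @ w + b)
    w, b = params[-1]
    return action_scale * np.tanh(h @ w + b)


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    state = build_state(rng.uniform(0, 1, CHANNEL_LEN), rng.uniform(-np.pi, np.pi, PHASE_LEN))
    proto = actor_forward(init_actor(), state)
    print(state.shape, proto.shape)   # (56,) (32,)
```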

For 4 agents, the input length of each critic network is 336. The output of the critic network is the predicted Q value, a real-valued scalar, so the critic's output dimension is 1. The critic network is composed of two hidden layers of 1680 neurons each, followed by ReLU activations.
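The critic's layer sizes (336 → 1680 → 1680 → 1) can be sketched in the same way. How the 336 inputs are assembled from the agents' states and actions follows the paper's own definition and is not reproduced here; the sketch simply takes a 336-element vector. Initialization and function names are assumptions.

```python
import numpy as np

CRITIC_IN = 336       # input length stated in the text for 4 agents
CRITIC_HIDDEN = 1680  # neurons per hidden layer (from the text)


def init_critic(seed=0):
    """Random weights for 336 -> 1680 -> 1680 -> 1 (Glorot-uniform, an assumption)."""
    rng = np.random.default_rng(seed)
    dims = [CRITIC_IN, CRITIC_HIDDEN, CRITIC_HIDDEN, 1]
    params = []
    for n_in, n_out in zip(dims[:-1], dims[1:]):
        lim = np.sqrt(6.0 / (n_in + n_out))
        params.append((rng.uniform(-lim, lim, size=(n_in, n_out)), np.zeros(n_out)))
    return params


def critic_forward(params, critic_input):
    """Two ReLU hidden layers and a linear scalar output: the Q value."""
    h = critic_input
    for w, b in params[:-1]:
        h = np.maximum(0.0, h @ w + b)
    w, b = params[-1]
    return float((h @ w + b)[0])


if __name__ == "__main__":
    q = critic_forward(init_critic(), np.ones(CRITIC_IN))
    print(q)
```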

The hyperparameters for the MARL are given in Table 2.

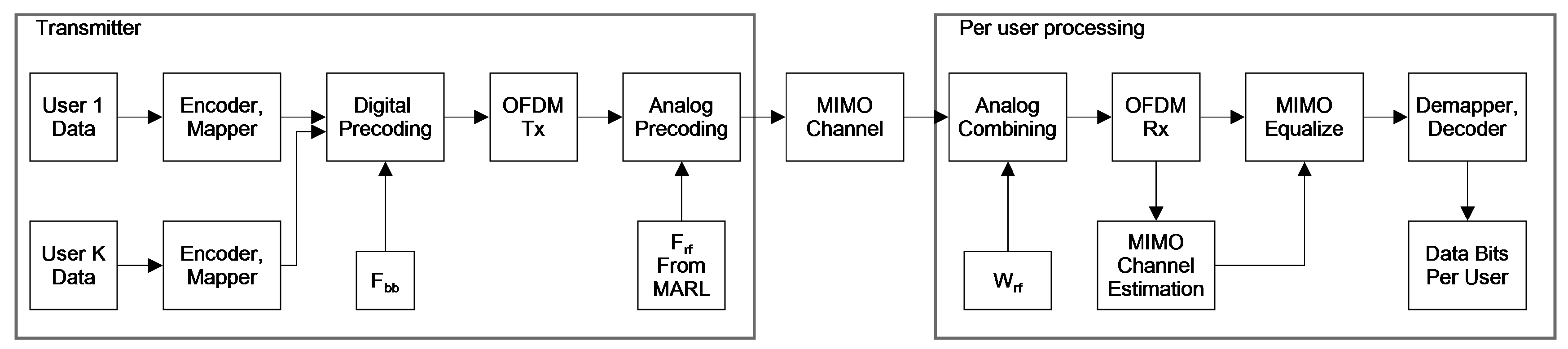

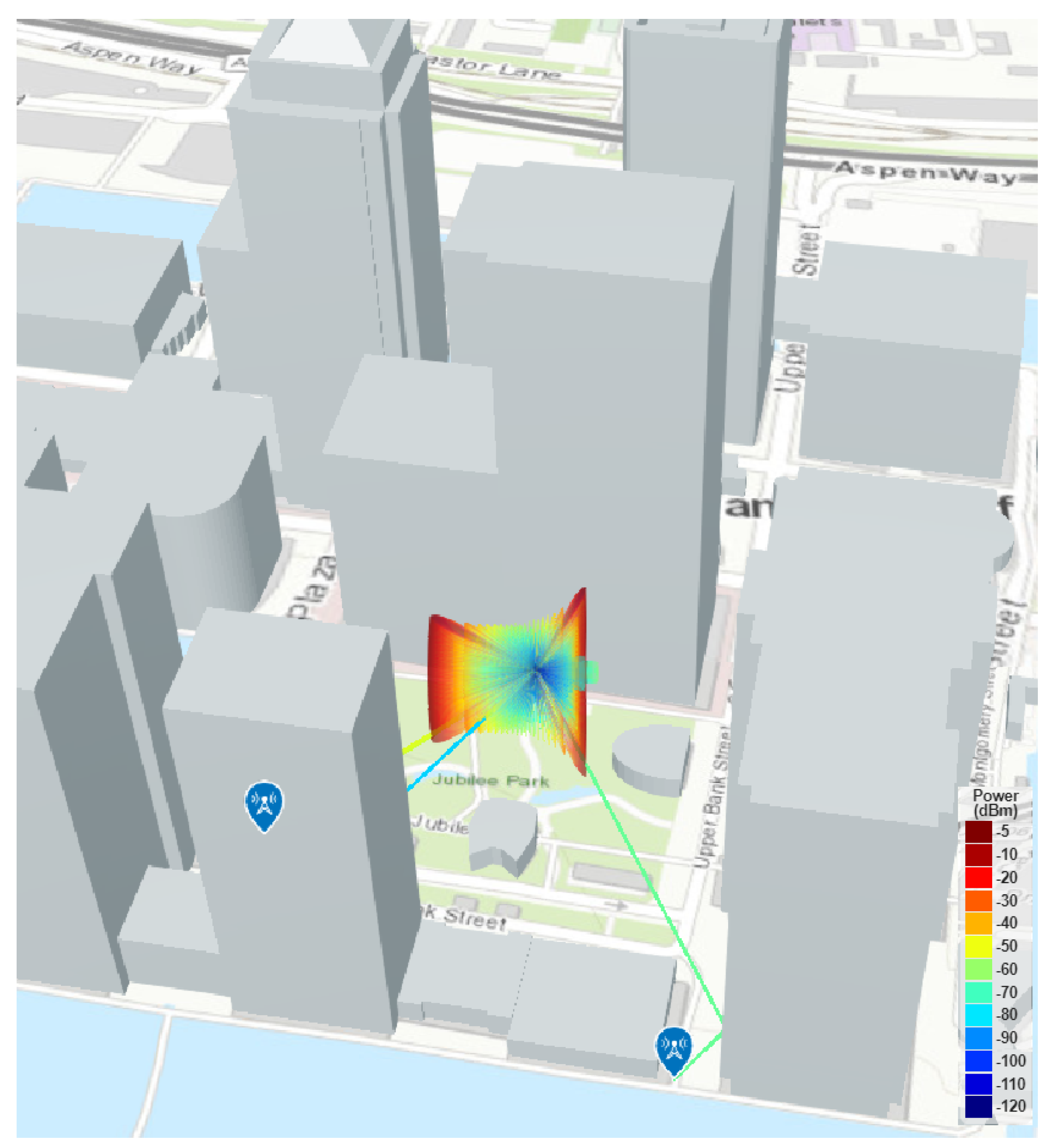

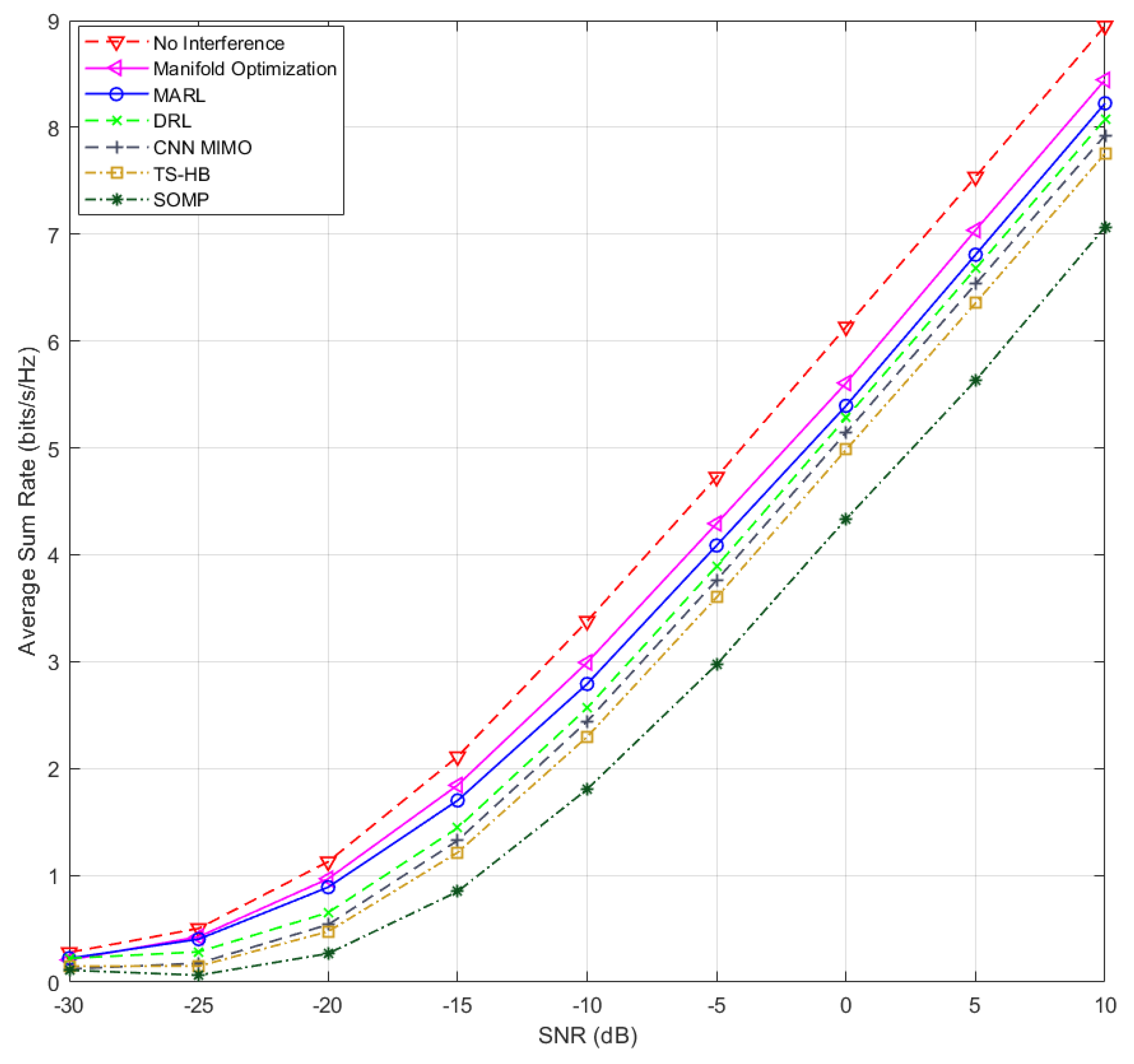

3.3. System Level Simulation with MARL

In this proposed work, a

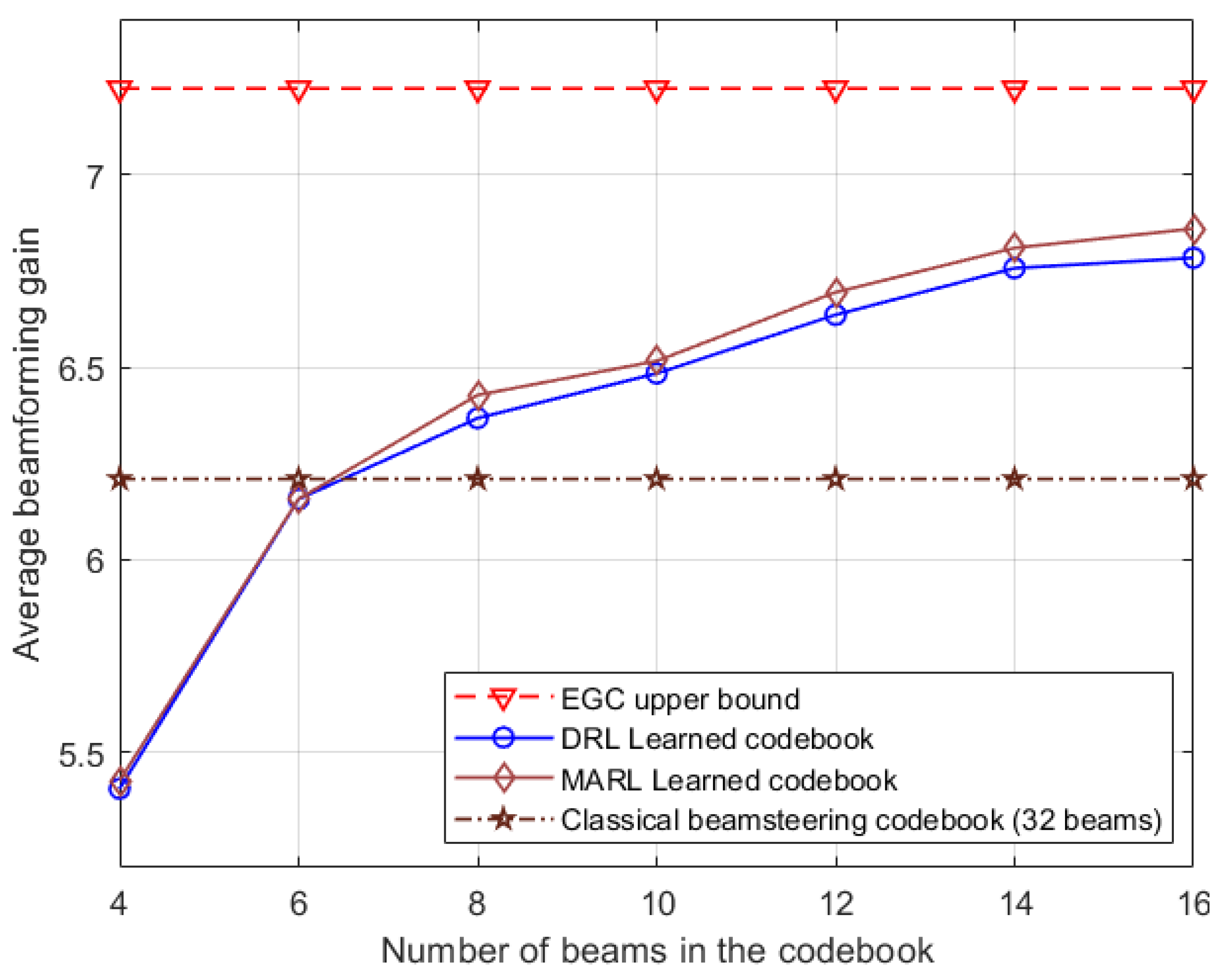

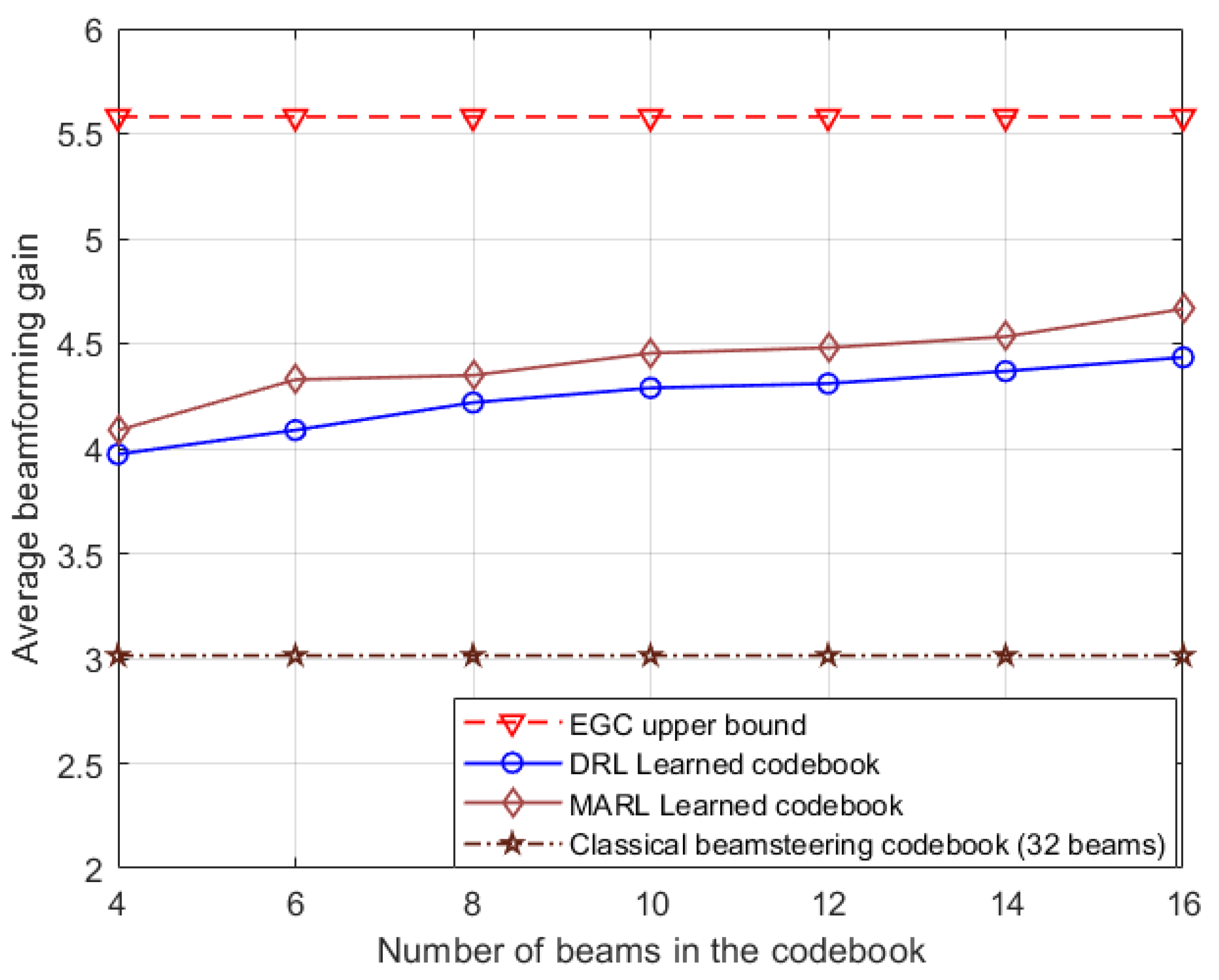

sector of a cell for simulation purposes is modeled, restricting transmissions within this azimuth range. Although the 4 RF channels can concurrently serve 4 users within this angular space, real-world scenarios typically involve more than 4 active users. To address this, users with similar channels are served with a single beam. The assignment of each user to a specific beam, whether before or after the MARL-based codebook learning process, is determined through beam sweeping. Consequently, the number of beams in the learned codebook remains consistent with the initial access codebook, which is adjustable for performance assessment.
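Beam-sweep-based grouping can be sketched as assigning each user to the beam with the highest measured RSRP, so that users with similar channels end up sharing a beam. The RSRP matrix in the example is made-up illustrative data, and the function name is hypothetical.

```python
import numpy as np


def assign_users_to_beams(rsrp_dbm: np.ndarray) -> dict:
    """Group users by their strongest beam from a beam sweep.

    rsrp_dbm[u, b] is the RSRP of user u measured on beam b.
    Returns {beam index: [user indices served by that beam]}.
    """
    best = np.argmax(rsrp_dbm, axis=1)
    groups = {}
    for user, beam in enumerate(best):
        groups.setdefault(int(beam), []).append(user)
    return groups


if __name__ == "__main__":
    # 5 users x 3 beams, illustrative values only
    rsrp = np.array([[-80, -90, -95],
                     [-85, -70, -99],
                     [-82, -88, -91],
                     [-99, -97, -75],
                     [-90, -72, -80]])
    print(assign_users_to_beams(rsrp))  # {0: [0, 2], 1: [1, 4], 2: [3]}
```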

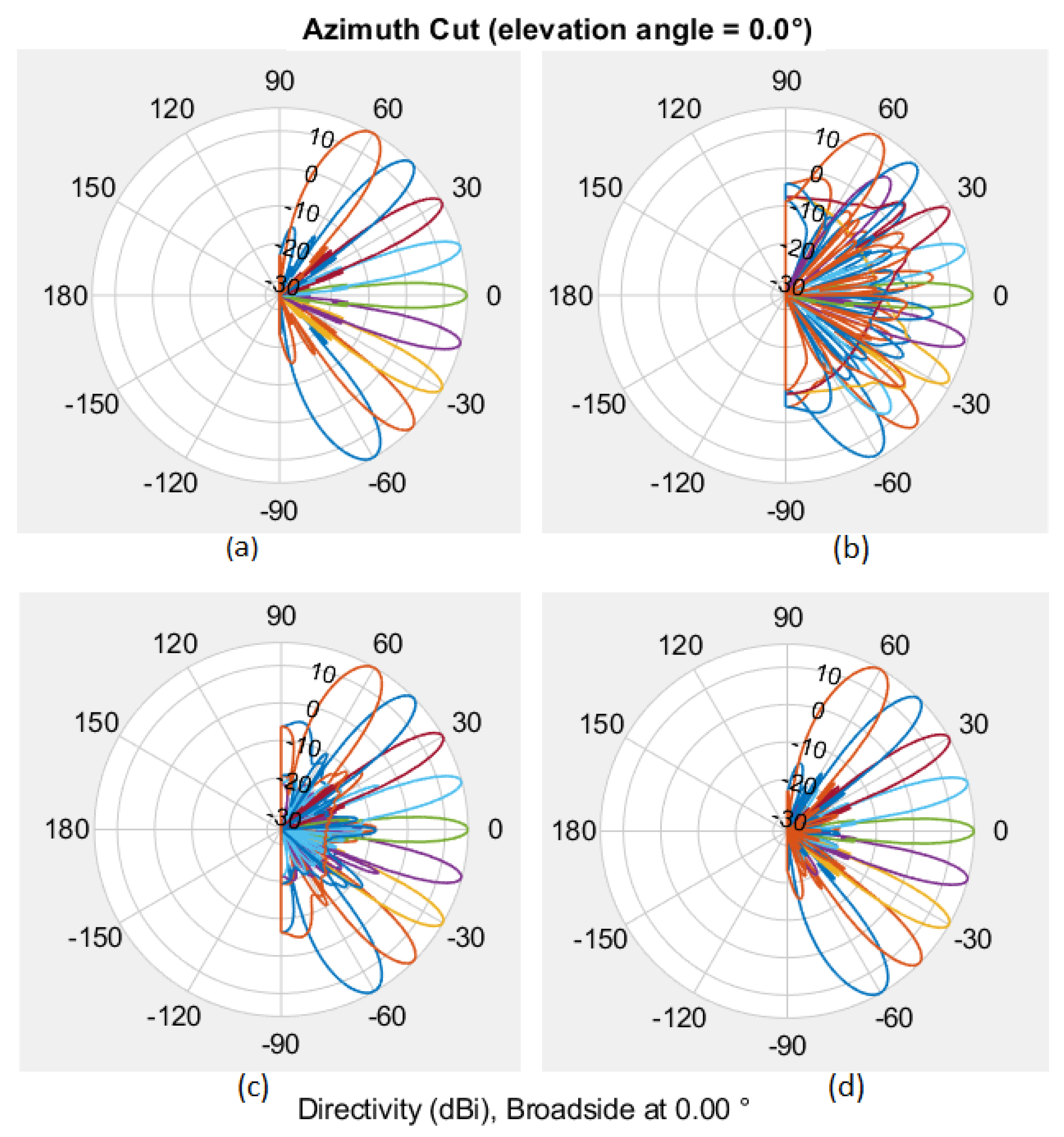

Figure 6 illustrates the radiation pattern for one such codebook with 9 beams, showcasing variations for different quantization bits.
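The effect of phase quantization on a conventional steered beam, of the kind Figure 6 visualizes, can be sketched numerically. Assumptions: a 32-element uniform linear array with half-wavelength spacing, a matched-filter steering beam, and uniform b-bit quantization by rounding; the paper's array geometry and codebook may differ, and the function names are hypothetical.

```python
import numpy as np


def steering(n_ant, theta):
    """ULA response, half-wavelength spacing, theta in radians from broadside."""
    return np.exp(1j * np.pi * np.arange(n_ant) * np.sin(theta))


def quantize(phases, bits):
    """Round phases to the nearest multiple of 2*pi / 2**bits (None = unquantised)."""
    if bits is None:
        return phases
    step = 2 * np.pi / 2 ** bits
    return np.round(phases / step) * step


def beam_gain(n_ant, steer_to, look_at, bits=None):
    """Normalised gain |a(look_at)^H w|^2 / n_ant of a (quantised) steered beam."""
    w = np.exp(1j * quantize(np.angle(steering(n_ant, steer_to)), bits)) / np.sqrt(n_ant)
    return np.abs(np.vdot(steering(n_ant, look_at), w)) ** 2 / n_ant


if __name__ == "__main__":
    steer = np.deg2rad(20)
    angles = np.deg2rad(np.linspace(-60, 60, 241))
    for bits in (None, 1, 2, 3):
        pattern = [beam_gain(32, steer, th, bits) for th in angles]
        print(bits, round(beam_gain(32, steer, steer, bits), 3), round(max(pattern), 3))
```

The normalized gain is at most 1 by the Cauchy-Schwarz inequality; coarser quantization lowers the main-lobe gain and distorts the side lobes.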

In the proposed MARL algorithm, the number of agents equals the number of beams used in the sector. This configuration breaks down the task of selecting a beam from a large set into finding a single beam within a smaller subset, which makes codebook learning more efficient. An additional, significant benefit of employing one agent per beam is the ability to identify optimal non-interfering beams within the sector, even in non-line-of-sight (NLOS) scenarios. Each agent in the MARL algorithm strives to maximize its individual beamforming gain while minimizing interference with other agents, as reflected in the reward processing outlined in Algorithm 1.

Upon completion of the learning phase, the learned codebook can be deployed directly in the initial access procedure. Users are then served with the learned codebook instead of the traditional matched-filter-based beam codebook. Because the learned beams are shaped to the scattering environment of the deployment rather than drawn from predefined beam patterns, the codebook adapts to the specific needs and complexities of the communication environment.

The learned codebook remains valid as long as there is no significant change in the macro structures within the sector. Such changes occur only occasionally, but in the case of large structural changes or relocation of the BS, learning must be initiated again for all beams.

Next, the analog beamforming codebook selection for the UE is carried out. In this work, a conventional beamforming codebook tailored for the UE is employed. The process of selecting beams from the codebook for the UE involves a standard beam search procedure, encompassing steps such as sounding, measurement, and feedback.

In the final step, the baseband beamforming vector at the BS is calculated following the procedure outlined in [3]. In this process, the BS forms its zero-forcing digital precoder based on the quantized channel feedback received from the UE. Because of the RF beamforming and the sparse nature of mmWave channels, the effective MIMO channel is expected to be well conditioned [17,18]. This favorable channel condition enables a simple multi-user digital beamforming strategy such as zero-forcing to achieve near-optimal performance [19]. The algorithm for obtaining the baseband beamforming vector is detailed in the second stage of the procedure presented in [3].
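A zero-forcing precoder for the effective (post-RF-beamforming) channel can be sketched as F = H^H (H H^H)^{-1}, which cancels inter-user interference so that H F is diagonal. This sketch uses a given channel matrix in place of the quantized feedback and normalizes each column to unit norm; that normalization is one common convention, and [3] specifies its own. The function name is hypothetical.

```python
import numpy as np


def zero_forcing_precoder(h_eff: np.ndarray) -> np.ndarray:
    """ZF precoder for an effective K x M channel (K users, M >= K RF chains).

    F = H^H (H H^H)^{-1}; each column is then scaled to unit norm, which
    keeps H @ F diagonal because column scaling cannot create interference.
    """
    f = h_eff.conj().T @ np.linalg.inv(h_eff @ h_eff.conj().T)
    return f / np.linalg.norm(f, axis=0, keepdims=True)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    h = rng.standard_normal((4, 4)) + 1j * rng.standard_normal((4, 4))
    g = h @ zero_forcing_precoder(h)
    print(np.round(np.abs(g), 3))  # off-diagonal entries are ~0
```

A well-conditioned effective channel keeps (H H^H)^{-1} small, so the power penalty of zero-forcing stays modest.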