Formulating Principles of the Physical, Informatic Basis of Intelligence

Taking stock of the advances in understanding the physical basis of intelligence in brains and machines, we can propose three general principles that pave the way for achieving an interface to AI, and therefore a path to lasting subjective intelligence, through a PNE.

Overview of the Principles

The first principle of physical intelligence is that the memory that allows concepts, now in machines as well as brains, is implemented in a distributed representation of connection weights among elementary processing neurons. The connection weights reflect associations, literally the strength of the associations among simple nodes or neurons in a large network architecture. For both brains and artificial intelligence, these networks are typically multi-leveled, so that the information is not simply at one associational level, but is achieved with multiple levels that can organize abstracted meaning at higher levels, thereby achieving abstract concepts. From this principle of distributed representational architecture, it is now clear that human brains and machines operate on similar mechanisms of information processing.
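
As a toy illustration of memory held in connection weights rather than in any single unit, the sketch below stores two patterns in a small Hebbian (Hopfield-style) network and recovers one of them from a corrupted cue. The network size, the patterns, and the function name `recall` are illustrative assumptions; a single-layer network of this kind is far simpler than the multi-leveled architectures described above.

```python
import numpy as np

# Two illustrative (orthogonal) patterns over eight binary units.
patterns = np.array([
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
])

# Hebbian storage: each weight is the summed co-activation of two units,
# so every pattern is spread across the whole weight matrix.
weights = patterns.T @ patterns
np.fill_diagonal(weights, 0)  # no self-connections

def recall(cue, weights, steps=5):
    """Repeatedly update all units from their weighted inputs."""
    state = cue.copy()
    for _ in range(steps):
        state = np.where(weights @ state >= 0, 1, -1)
    return state

# Corrupt one unit of the first pattern, then let the network settle.
cue = patterns[0].copy()
cue[0] = -cue[0]
recovered = recall(cue, weights)
```

Here `recovered` matches the first stored pattern even though the cue was damaged, because the association is carried jointly by many weights.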

Two additional principles are important, one from neuropsychology and one from physics and information theory. For the second principle of physical intelligence, when we study how psychological function emerges from the activity of the human brain, we see that there is no separation of mind from brain. The mind is not just what the brain does; it is the brain (Changeux, 2002). More specifically, the functions of mind emerge literally from the neurodevelopmental process, the growth of the brain over time. This can be described as the principle that cognition is neural development (Tucker & Luu, 2012). The mind, in its neural form, is constantly growing, constantly organizing its internal associations (concepts) in exact identity with the strengthening or weakening of the brain’s connections. Because the process of mind is always developing, the constituent neural connections of mind must also be dynamic, growing, and regulated in that growth. The neurodevelopmental process of mind is the physical substance of our psychological identities, which are also (in exact register) profoundly developmental, changing and growing over time (Tucker & Luu, 2012). Importantly, we argue that this principle of neurodevelopmental identity implies that the mind, and the process of subjective experience, can be reconstructed fully from an adequate neurodevelopmental process description in information terms.

There is an important corollary of the second principle, already well-known in machines and brains: in the dynamic growth of connections, plasticity is achieved at the expense of stability (Grossberg, 1980). The dynamic development of learning in distributed representations operates in the same generic connection/association space, such that new learning invariably challenges the old memory. In the phenomenology of mind, careful reflection may teach us to recognize the old self that becomes at risk with each significant new learning experience (Johnson & Tucker, 2021; Tucker & Johnson, in preparation).
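
The interference between new learning and old memory can be seen in even the smallest shared-weight network. The sketch below is a minimal illustration under assumed toy values: a three-weight linear unit learns one association with the delta rule, then learns a second association whose input overlaps the first. The patterns, learning rate, and function names are hypothetical choices made only for this example.

```python
import numpy as np

def train(w, x, y, lr=0.1, steps=200):
    """Delta-rule learning of a single association x -> y."""
    w = w.copy()
    for _ in range(steps):
        error = y - w @ x      # prediction error for this association
        w += lr * error * x    # adjust the shared weights
    return w

def sq_error(w, x, y):
    """Squared prediction error of weights w on association x -> y."""
    return float((y - w @ x) ** 2)

# Old and new associations share the middle input unit.
x_old, y_old = np.array([1.0, 1.0, 0.0]), 1.0
x_new, y_new = np.array([0.0, 1.0, 1.0]), -1.0

w_after_old = train(np.zeros(3), x_old, y_old)
w_after_new = train(w_after_old, x_new, y_new)

err_old_before = sq_error(w_after_old, x_old, y_old)  # old memory intact
err_old_after = sq_error(w_after_new, x_old, y_old)   # old memory disturbed
err_new_after = sq_error(w_after_new, x_new, y_new)   # new learning succeeded
```

With these values the error on the old association rises from essentially zero to about 0.56 once the new association has been learned, because both are written into the same weights.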

The third principle of physical intelligence is important for understanding the fundamental nature of information, and why the information processing of mind is not restricted to its evolved biological form (brains). This is the principle of the informatic basis of organisms. In physics we observe that the processes of matter, whether physical or chemical, tend toward greater entropy, meaning they tend to lose their complexity and release energy. Life has seemed to defy this fundamental rule of thermodynamics, maintaining its complexity through metabolism of external energy sources, homeostasis, and growth (Schrodinger, 1944).

Recent advances in the physics of information have provided a new perspective on the organization of information, one that defines a living organism as a non-equilibrium steady state (NESS): a self-organizing, growing form of information complexity that manifests, rather than defies, the fundamental physics of entropy in the minimization of free energy (Friston, 2019). The cognitive functioning of this NESS, the self-evidencing that emerges in its operation as a good regulator (modeling its world for better or worse in order to live), can be fully specified in an information description that is not unique to minds, or brains, or even biological things. Rather, the physics of information may offer a functional description of mind with the same terms that apply to physical, entropic systems. The implication is that the neurodevelopmental process of organizing intelligence is not restricted to the particular biological form of evolved brains, but could be extended to a sufficiently complex computer.

Finally, an important practical point is that we can build tools to make tools. We do not need to build the PNE by hand, but instead could rely on a neuroscience-informed AI for the coding of a durable PNE. AI tools are now well-proven and rapidly-improving, such that well-trained LLMs can assist scientists and engineers with digesting and integrating today’s complex literatures. We just need to train them to embody the essential neuromorphic principles, such as are now manifested by the massive neuroscience literature, in order to construct an advanced PNE.

We next attempt to explain these three foundational principles in ways that clarify their interrelations. These principles share the requirement to overcome the ingrained tendencies we have to restrict our thinking to familiar levels of analysis, where the psychological realm is somehow floating above the biological, the biological is somehow alive in a way the physical is not, and the computing of information is an abstraction that parallels but does not quite touch the stuff of these familiar and separate domains. Instead, we will imagine that there is but one phenomenon of intelligence, and we can learn to abandon our familiar categories of experience to describe it, and perhaps achieve it, with a computable information theory of active inference.

The Identity of Mind and Developing Brain Anatomy

The first two principles, of distributed representation and neurodevelopmental identity, are closely related, the first describing a static idealized state of distributed representation and the second describing the dynamic network necessary for a functioning human intelligence. Putting them together, the enabling insight for recognizing the feasibility of a PNE is the identity of the mind with the brain’s dynamic, growing, anatomical architecture.

The initial recognition came from studies of learning in brain networks, which showed that the regulation of connection weights in synaptic strengths during learning followed the same activity-dependent specification as the formation and maintenance of synapses and their neurons in embryological and fetal neural development (von der Malsburg & Singer, 1988). This is never a static implementation; the brain’s functional anatomy is continually developing through embryogenesis, through fetal activity-dependent specification of neural connections, through growth of learning in childhood, and then continuing throughout life (Luu & Tucker, 2023). Once the neurodevelopmental identity of brain and mind is recognized, it becomes clear that a theory of mind must be a theory of neural development. The neurodevelopmental identity principle can be stated in phenomenological terms: cognition is neural development, continued in the present moment (Tucker & Luu, 2012). What we experience in consciousness is the neurodevelopmental process that is constructing our expectation of the near future, which all too quickly, of course, becomes the present moment, to be captured or not in the ongoing consolidation of memory.

The connectional architecture of the human brain is now understood sufficiently that a first approximation can be achieved for any individual’s brain. An important advance was the anatomical characterization of primate cortical anatomy, described as the Structural Model (García-Cabezas, Zikopoulos, & Barbas, 2019). Current anatomically-correct, large-scale neuromorphic emulations are now providing insights for interpreting neurophysiological evidence (Sanda et al., 2021), implying the functional equivalence of brain activity with a reasonably complex neurocomputational emulation.

Furthermore, the nature of the processing unit replicated in each cortical column is being specified by the computational model of the canonical cortical microcolumn (Bastos et al., 2012), allowing the regional anatomical differences in cortical columns (which are now well characterized in humans) to provide predictive information on the processing characteristics of each cortical region, and thus generic human cortical column emulations. Recognizing that neural emulations could achieve reasonable accuracy by simulating cortical columns, rather than multi-billions of individual neurons, simplifies the computational emulation problem significantly (Bennett, 2023), with a computing anatomy that science could soon specify in adequate detail.

With the realization that the mind’s information is represented in the connectional anatomy of the cortex, supported by the vertical integration of the neuraxis, and with the increasing insights into the architecture of these connections that yields cognitive function, we can see that each act of mind is implemented through the developing synaptic differentiation and integration of the brain’s physical, anatomical connectivity. With this realization, major features of human neuropsychology can be read from the brain’s connectional anatomy (Luu & Tucker, 2023; Luu, Tucker, & Friston, 2023; Tucker, 2007; Tucker & Luu, 2012, 2021, 2023).

Of course, the mind is embodied: we experience and think through bodily image schemas (Johnson & Tucker, 2021). Therefore the personal neuromorphic emulation must be a bodily emulation as well, very likely being acceptable only when provided with a functional bodily avatar that feels like us.

At first, the principle of the identity of mind with brain anatomy and function may seem to imply that the mind must die when the brain dies (Ororbia & Friston, 2023). This is the theory of mortal weights (Hinton & Salakhutdinov, 2006). The connectional structure (weights) of our networks will dissolve when we die.

However, because the brain/mind is indeed physical, and therefore fully defined by its informational (entropic) content, the next realization is the possibility of identifying the information architecture of the brain/mind in information theory with enough detail that it becomes computable. Once it does, it will be possible to achieve a durable neuroinformatic replication of your individual self in non-biological form.

The insight is that if the mind is identical with brain anatomy, then a sufficiently accurate computational reconstruction of the developing (non-equilibrium steady state) brain anatomy (preferably with the joint emulation of the essential bodily homeostatic controls and their essential subjectivity) can then manifest a reasonable approximation to the personal mind, the subjective process and experience of self.

Fundamentally, the emerging insight into the neurodevelopmental basis of experience prepares us to cross this next level of analysis, to understand the identity of intelligence not just with the biological basis of mind but with information theory, as described next.

The Informatic Basis of Organisms is Computable

As stated above, a fundamental schism between our understanding of animate (living) and inanimate (dead) matter has been represented by the concept of entropy. The laws of physics, particularly thermodynamics, require that all physical interactions tend toward greater entropy, the loss of complexity of form and the release of free energy. In contrast, life appears to find ways to avoid entropy (Schrodinger, 1944).

This distinction has implied that two different sciences are required for living and non-living things. This implication may be wrong, as shown by more recent interpretations of the physics of self-organizing (non-equilibrium steady state thermodynamic) living systems.

These interpretations have approached the brain’s cognitive function with physical principles of Bayesian mechanics that align closely with the changing thermodynamics of physical systems (Adams, Shipp, & Friston, 2013; Friston, 2010; Friston & Price, 2001; Hobson & Friston, 2012). The implication is that the self-organization of living organisms is not a fundamental violation of entropy, but rather a non-equilibrium steady state of complex systems (biological organisms) in which minimizing free energy allows growth or development of the self-organized form (Friston, 2019). This theoretical advance can be described as the principle of the informatic basis of organisms.

The Bayesian formulations of physical systems are aligned precisely with principles of information theory, consistent with the intimate relation between information and thermodynamics in modern physics (Parrondo, Horowitz, & Sagawa, 2015). The emerging realization is that the physical mechanisms of complex, developing (Bayesian) self-organizing systems like the human brain may have an adequate functional description in information form (Friston, 2019; Friston, Wiese, & Hobson, 2020; Ramstead, Constant, Badcock, & Friston, 2019).
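
For readers who want the quantity behind these statements, the variational free energy used in the active inference literature is commonly written as below. The notation is an assumption introduced here for illustration: o denotes observations, s hidden states of the world, p the organism's generative model, and q its approximate posterior belief.

```latex
F[q] \;=\; \mathbb{E}_{q(s)}\!\left[\ln q(s) - \ln p(o, s)\right]
     \;=\; D_{\mathrm{KL}}\!\left[\,q(s)\,\|\,p(s \mid o)\,\right] \;-\; \ln p(o)
```

Because the Kullback-Leibler divergence is never negative, F is an upper bound on the surprisal, -ln p(o). Reducing F therefore both improves the belief q and raises a lower bound on the evidence for the organism's own model, which is the formal sense of self-evidencing.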

Now, a sufficiently accurate information model should be computable, not restricted to a particular physical form. As the Bayesian mechanics of nonequilibrium steady state systems (brains) are characterized in sufficient detail in the near future, a computational implementation of that detail should naturally emerge.

Of course, there may be physical computers that are mortal, with their inherent knowledge limited to the hardware weights. But in that case the information theoretical description is inadequate, incomplete. If it is restricted to mortal computers (Ororbia & Friston, 2023), the theory of active inference may be computable only through biological form (in people’s all too mortal brains).

However, the advances in understanding the biological brain are being aided considerably by computational simulations, such that we are coming to understand key neurophysiological systems by building computational simulations of them (Marsh et al., 2024; Sanda et al., 2021). These demonstrations imply that an information theory description can be made to be highly neuromorphic for the human brain, implementing the physical principles of neural development that we now know to be identical to the principles of cognitive and emotional development.

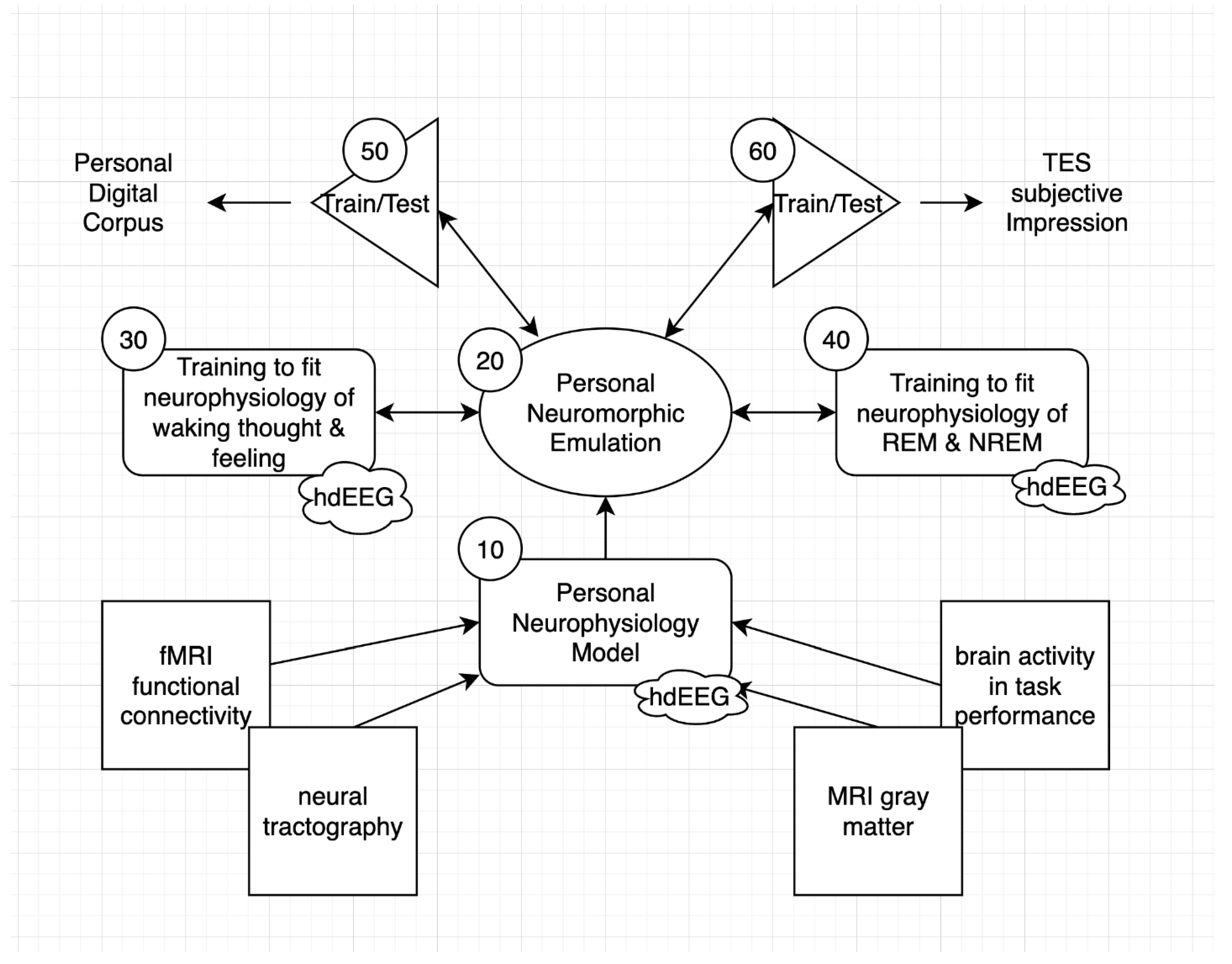

If so, then an emulation of a specific brain should be possible through building a replicant neuroanatomical architecture and training it to achieve the emotional and cognitive functionality of that person, with extensive support through neuromorphic AI. As we will emphasize below, this may be best achieved in the near term by successfully emulating the memory consolidation of the enduring self in the essential neurophysiological exercises of the nightly stages of sleep.

The replicant neuroanatomical architecture with a high degree of precision in emulating the individual’s brain is an essential starting point. We emphasize that the general architecture of the human brain is now understood in ways that can be aligned with current theories of active inference (Tucker & Luu, 2021), and structural and functional neuroimaging provides detail on individual human anatomy and function (Jirsa, Sporns, Breakspear, Deco, & McIntosh, 2010). The detail required for a replicant neuroanatomical architecture will require several orders of magnitude improvement over these generic models. The current neuroscience literature is vast and rapidly growing. We can imagine development of a specialized LLM AI trained on the current literature, and linked to advanced neuronal modeling software/hardware (Khacef et al., 2023), allowing construction of a high precision individual neuromorphic model. The question is how to emulate the weights of the person’s brain with sufficient accuracy to reconstruct the functional self.

Inferring the Weights of a Mortal Computer

In discussing the computational and power requirements for large deep learning models, Hinton (2022) emphasizes that the assumption of conventional computation, that the software is independent from the hardware, may not be necessary for alternative computing methods. These methods could achieve effective machine learning with lower power requirements by modifying hardware weights (rather than the power-hungry memory writes of conventional digital computers). Hinton described such an approach as “mortal” computing, in that the weights, being hardware, cannot outlive the hardware.

Practically, the weights of large conventional deep learning models can be seen to become fixed in a similar sense: although the model as a whole may be copied, the weights are not easily reconstructed without repeating massive, and even difficult to reproduce, training programs. To address this problem, Hinton, Vinyals, and Dean (2015) proposed a distillation approach, in which rigorously emulating a large model’s predictions allows the transfer of that model from a large cumbersome physical form to a more compact, or a more specialist, form.
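
The core of the distillation idea can be sketched in a few lines: the student is scored by how closely its softened output distribution matches the teacher's, without any access to the teacher's weights. This is a minimal sketch, not the published training procedure; the temperature value, the toy logits, and the function names are illustrative assumptions, and the Kullback-Leibler divergence used here differs from the soft-target cross-entropy only by a term that does not depend on the student.

```python
import numpy as np

def softmax(logits, temperature=1.0):
    """Temperature-softened softmax over the last axis."""
    z = np.asarray(logits, dtype=float) / temperature
    z = z - z.max(axis=-1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Mean KL(teacher || student) between softened output distributions."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's predictions
    kl = np.sum(p * (np.log(p) - np.log(q)), axis=-1)
    return float(np.mean(kl))

# Hypothetical logits for two inputs over three classes.
teacher = np.array([[4.0, 1.0, 0.5], [0.2, 3.0, 0.1]])
matched_student = teacher.copy()           # reproduces the teacher exactly
untrained_student = np.zeros_like(teacher) # uniform predictions

loss_matched = distillation_loss(matched_student, teacher)
loss_untrained = distillation_loss(untrained_student, teacher)
```

The loss is zero when the student reproduces the teacher's predictions and positive otherwise, so minimizing it transfers the teacher's input-output behavior rather than its weights.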

Ororbia and Friston have recently explored the implications of mortal computing within the framework of active inference (Ororbia & Friston, 2023). In their analysis, the assumption is still that a mortal computer, like a person, is restricted to the lifespan of its hardware. Under the first principle of physical intelligence, that connections are the basis of intelligence similarly in ANNs and minds, replicating the connection weights from a person’s brain faces a problem similar to that of replicating complex models, as discussed by Hinton et al. (2015): the synaptic weights are not easily exported from the brain with our currently conceivable technology.

We propose that a possible solution is suggested by the realization that the connection weights of the human brain are not static, but are constantly developing, consolidating memories through the ongoing exchange of information between limbic and neocortical networks (Buzsaki, 1996). The synaptic weights are of course important, but the effective information in the consolidation of experience is not the static weights, but their flux in the non-equilibrium steady state that is continually revised, continually developing, during waking through the process of active inference and during sleep through the consolidation of active inference.

The Bayesian mechanics of memory consolidation (reflecting the reciprocal dynamics of active inference: generative expectancy and error-correction) are thus complexly interwoven in the cognition of waking consciousness. However, they may be separated and laid bare, revealed in their primordial forms, in the sequential neurophysiological mechanisms for consolidating experience in the stages of sleep. Generative expectancy, and the implicit self-evidencing of the core historical self, is maintained and reorganized each night by REM sleep. Error-correction, and the learning of new states of the world, is consolidated in Non-REM (NREM) sleep. A sufficiently accurate modeling of this active process of daily and nightly self-organization (Luu & Tucker, 2023) provides the basis for inferring the information process of a PNE.
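
The reciprocal roles of expectancy and error-correction can be illustrated with the simplest possible case: a single Gaussian belief that is pulled toward a prior expectation and toward new evidence in proportion to their precisions. This is a sketch under assumed toy values, not a model of sleep or of any neural circuit; the function name, precisions, and learning rate are hypothetical.

```python
def update_belief(prior_mean, prior_precision, observation, obs_precision,
                  lr=0.05, steps=500):
    """Gradient descent on a Gaussian free energy for one belief.

    F(mu) = 0.5 * obs_precision * (observation - mu) ** 2
          + 0.5 * prior_precision * (mu - prior_mean) ** 2
    """
    mu = prior_mean
    for _ in range(steps):
        sensory_error = observation - mu  # evidence not yet explained
        prior_error = prior_mean - mu     # departure from expectancy
        mu += lr * (obs_precision * sensory_error
                    + prior_precision * prior_error)
    return mu

posterior = update_belief(prior_mean=0.0, prior_precision=1.0,
                          observation=2.0, obs_precision=3.0)

# Closed-form precision-weighted average, for comparison.
exact = (1.0 * 0.0 + 3.0 * 2.0) / (1.0 + 3.0)
```

The iterated error-correction settles on the precision-weighted compromise between expectation and evidence (1.5 for these values), the same answer given by the closed-form Bayesian update.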

Thus the information theory of active inference might be developed to characterize an individual’s brain sufficiently that it would be computable in general (immortal) form. The challenge may be to recognize that the human active inference of experience is developmental, dynamic, not with static weights but with neurophysiological processes of ongoing consolidation that represent information dynamically, in the continuing flux of synaptic transmission rather than any static synaptic strength. Importantly, as we will see, whereas the synaptic weights of the human brain are not readily measurable, the flux of synaptic transmission in the consolidation in sleep may be.

Consolidating Active Inference in Sleep and Dreams

To understand such neural dynamics, a key realization is that the process of sleep negotiates the stability-plasticity dilemma of neural development in ways that are complementary to the waking process of active inference. In brief, NREM sleep consolidates new learning (Klinzing, Niethard, & Born, 2019). This is the evidence of the world that corrects the errors of personal predictions, but in a way that stabilizes the new information within the neural architecture at the expense of the old self (existing connections). The unpredicted events of the day become the significant uncertainties that must be integrated in mind through the slow oscillations and spindles of NREM sleep. The unexpected information of the day’s experience engenders anxiety, so that it engages priority in the dynamic consolidation process of ongoing neural development (Tucker & Luu, 2023). Transitioning from rumination to enduring knowledge, the potentially significant information of the day becomes the plasticity, the new learning, of the network consolidation in NREM sleep.

The companion to NREM sleep is REM, the paradoxical sleep in which our brains are active in the vivid, emotional, and bizarre experiences of our dreams. Neurobiologically, REM is the primordial mechanism for consolidating self-organization, establishing the instinctual structure of the genome through actively exercising this structure through the endogenous neurodevelopmental challenges of REM dreams (Luu & Tucker, 2023). The connections/associations of the network in dream experiences are quasi-random, phenomenally bizarre events, but they are experienced by the primordial self, the implicit actor in the dream (you) who must attempt to self-preserve in each highly unexpected, unpredicted, dream scenario. The effect is to exercise the self-preservation affordances of the implicit self, the anonymous narrator who experiences each dream episode.

Examining the emergence of REM in the human fetus, we can infer that the Bayesian expectancy of the core self — the generative process of active inference — is instinctual, initially specified by the ontogenetic instructions of the genome. We begin self-organizing our integral neural architecture of activity-dependent specification through REM dreams fairly early in fetal development, around 25 weeks gestational age, well before we have any postnatal experience to consolidate (Friston et al., 2020; Hobson, 2005; Jouvet, 1994; Luu & Tucker, 2023). All we have at this early fetal stage is the nascent instinctual motives endowed by the genome. With the core (largely subcortical) mechanisms of the primordial self, we are able to exercise and grow these mechanisms during the quasi-random experiences of fetal REM sleep in ways that allow each of us to self-organize an increasingly general organismic self, ready for the first experiences of infancy.

This dominance of REM in self-organization continues in the newborn infant’s first days and weeks of experience, when 50% of the time is spent in REM sleep. Only later in the first year of post-natal life does NREM become sufficiently well-organized to contribute its unique counterpart to neural self-organization, and the consolidation of memory, in sleep (Luu & Tucker, 2023). Just as NREM sleep consolidates recent, explicit, external memory in the mature brain (Diekelmann & Born, 2010; Rasch & Born, 2013), it seems that the maturation of NREM sleep later in the first year of life achieves the increasing differentiation of the Markov blanket separating the individuated self from the social world (Luu & Tucker, 2023).

REM continues the exercise and thus continuing integration of the primordial self in each night’s dreams throughout life, as an ongoing counterpart to the disruption by new learning caused by NREM consolidation of external information. An important paradox is that this REM process, by strengthening the integral stability of the self in the face of (unpredictable dream) plasticity, will provide the basis for generative intelligence through primary process cognition, the implicit manifestation of organismic creativity (Hobson, 2009; Hobson & Friston, 2012). The mechanism for achieving stability of the implicit self — apparently by challenging it with the unpredictable bizarre experiences of dreams — becomes the generator of novel creativity (Tucker & Johnson, in preparation).

Although the theoretical formulation of the Bayesian mechanics of sleep as the complement to the waking elements of active inference is still in development (Tucker, Luu, & Friston, in preparation), the basics of memory consolidation can be summarized in a way that emphasizes the opportunity for accurately modeling a mortal computer. The creation of memory, the ongoing stuff of the self, requires the consolidation of daily experience within the architecture of our existing cerebral networks, the Bayesian priors of the self. We don’t need to extract fixed weights of the mortal computer; we can observe these connections in the complement of active inference each night, in the dynamic neurophysiology of our dreams. As an old self copes with new dream experiences, it manifests the essential Bayesian mechanics of its non-equilibrium steady state, the primordial self.

The pattern of human sleep, with 5 or so NREM-REM cycles each night, is unique even among big primates (Samson & Nunn, 2015), apparently supporting the extended neural plasticity and developmental neoteny of the long human juvenile period (Luu & Tucker, 2023). This pattern varies from extended NREM and brief REM intervals in early cycles to brief NREM and extended REM intervals later in the night’s sleep, apparently reflecting a shift from consolidating recent memories in NREM (favoring plasticity) toward more general integration of the enduring self in REM (favoring stability) (Tucker, Luu, & Friston, in preparation). As a result, the consolidation dynamics most important to emulate for constructing a full PNE may be those of the late night, reflecting engagement of the foundational weights of the childhood self. To the extent that the NREM integration of external events balances the ongoing REM exercise of the implicit historical self, these alternative modes of connection weight re-organization may reflect the variational Bayesian mechanics of self-evidencing through adaptive experience that could then be manifest in an adequate PNE.