Submitted:

20 June 2024

Posted:

21 June 2024

Abstract

Keywords:

1. Introduction

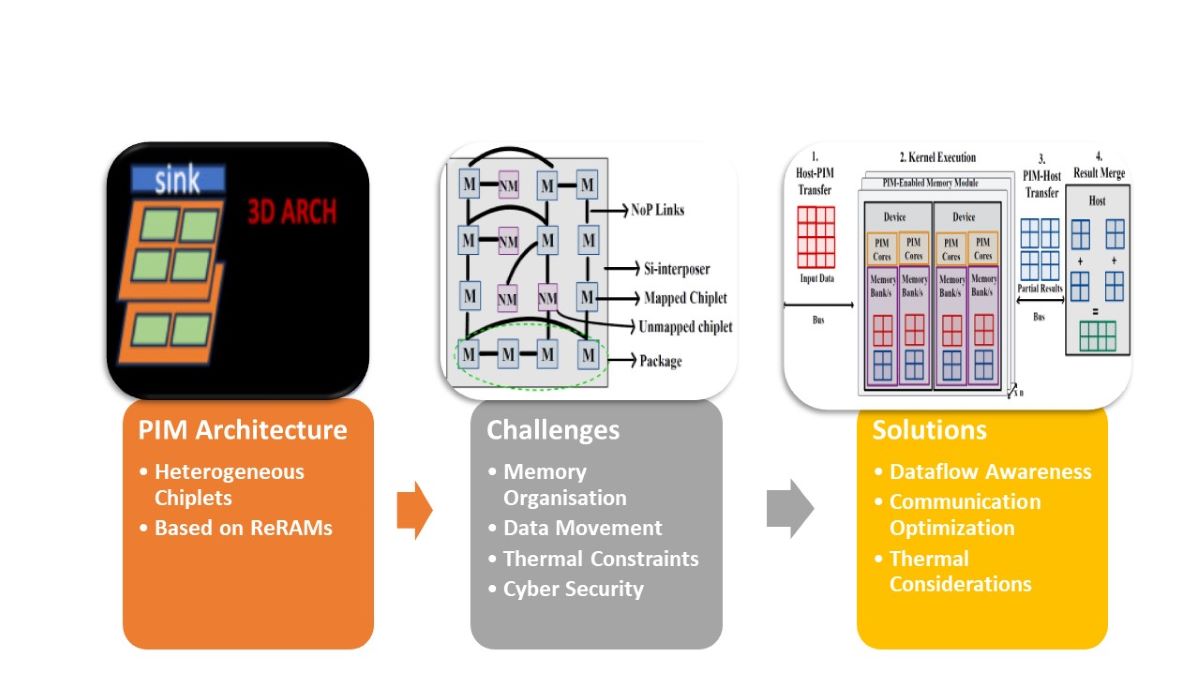

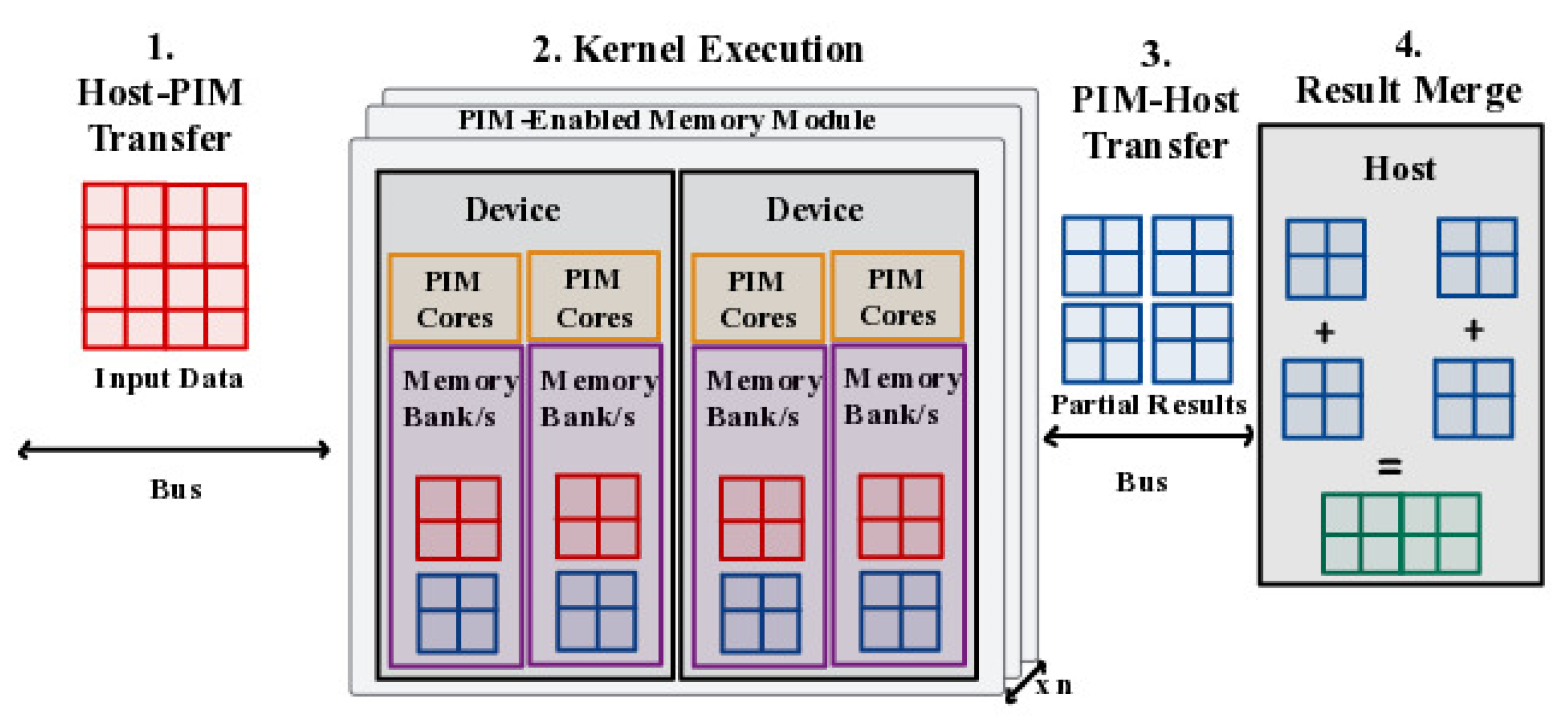

2. Processing in Memory (PIM)

2.1. Introduction

2.2. Challenges

- Memory Organization: PIM requires a rethinking of memory organization to enable processing elements within the memory subsystem. CPUs and GPUs have different memory access patterns and requirements, which need to be accommodated in the design. Efficiently organizing and managing data in a PIM architecture can be complex, especially when dealing with heterogeneous processing units.

- Programming Model: PIM architectures require a programming model that allows developers to express data and task parallelism effectively. Developing software for PIM architectures can be challenging due to the need for explicit data placement and synchronization between the CPU and GPU components. The programming models need to be designed to fully exploit the potential parallelism offered by PIM while maintaining ease of use.

- Data Movement: Efficient data movement is crucial for PIM architectures. Moving data between the CPU and GPU components can incur significant overhead due to the communication between different memory spaces. Minimizing data movement and optimizing data transfer mechanisms become essential for achieving high performance in heterogeneous CPU-GPU architectures.

- Power and Thermal Constraints: PIM architectures can potentially consume significant power due to the increased integration of processing elements within the memory subsystem. Managing power and thermal constraints in heterogeneous CPU-GPU architectures is critical to prevent overheating and ensure reliable operation. Designing efficient power management techniques that balance performance and energy consumption is a significant challenge.

- Memory Consistency and Coherence: Maintaining memory consistency and coherence in PIM architectures is complex, particularly in heterogeneous CPU-GPU systems. CPUs and GPUs often have their own caches and memory hierarchies, which need to be synchronized to ensure data integrity and correctness. Developing efficient coherence protocols and memory consistency models for heterogeneous PIM architectures is a non-trivial task.

- Hardware Design and Integration: Hardware design challenges arise when integrating processing elements within the memory subsystem. PIM architectures require modifications to the memory controller, cache hierarchy, and interconnects to enable efficient data processing within memory. Co-designing the hardware components and optimizing the integration of processing elements in a heterogeneous CPU-GPU architecture is a significant challenge.
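The data-movement challenge above can be illustrated with a rough, roofline-style estimate. The sketch below is not taken from any of the reviewed papers: the `Platform` fields, the `HOST` and `PIM` parameter values, and the vector-add workload are all hypothetical, chosen only to show how a kernel with low arithmetic intensity becomes limited by memory traffic rather than by compute.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    """Hypothetical platform parameters (illustrative values, not measurements)."""
    name: str
    peak_flops: float       # peak compute throughput, FLOP/s
    mem_bandwidth: float    # bandwidth between the data and the compute units, bytes/s
    energy_per_byte: float  # energy to move one byte to the compute units, joules


def kernel_time(platform: Platform, flops: float, bytes_moved: float) -> float:
    """Lower bound on runtime: the slower of computing and moving the data."""
    compute_time = flops / platform.peak_flops
    transfer_time = bytes_moved / platform.mem_bandwidth
    return max(compute_time, transfer_time)


def movement_energy(platform: Platform, bytes_moved: float) -> float:
    """Energy spent purely on moving data, ignoring compute energy."""
    return bytes_moved * platform.energy_per_byte


def is_memory_bound(platform: Platform, flops: float, bytes_moved: float) -> bool:
    """True when data transfer takes longer than the arithmetic itself."""
    return bytes_moved / platform.mem_bandwidth > flops / platform.peak_flops


# Assumed numbers: a host with high compute but an off-chip memory bus, and a
# PIM device with weaker compute but high internal bandwidth and cheaper accesses.
HOST = Platform("host", peak_flops=1e12, mem_bandwidth=50e9, energy_per_byte=100e-12)
PIM = Platform("pim", peak_flops=1e11, mem_bandwidth=1e12, energy_per_byte=10e-12)

# Workload: element-wise addition of two float32 vectors (one add per element,
# two 4-byte reads and one 4-byte write per element).
N = 1 << 20
VECTOR_ADD_FLOPS = float(N)
VECTOR_ADD_BYTES = float(3 * 4 * N)

if __name__ == "__main__":
    for p in (HOST, PIM):
        t = kernel_time(p, VECTOR_ADD_FLOPS, VECTOR_ADD_BYTES)
        e = movement_energy(p, VECTOR_ADD_BYTES)
        bound = "memory" if is_memory_bound(p, VECTOR_ADD_FLOPS, VECTOR_ADD_BYTES) else "compute"
        print(f"{p.name}: time >= {t:.3e} s, movement energy = {e:.3e} J, {bound}-bound")
```

Under these assumed parameters the host is memory-bound on the vector add, so raising its peak compute would not help; only reducing or shortening data movement does. A real evaluation would replace the constants with measured bandwidth, throughput, and energy figures for the target hardware.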

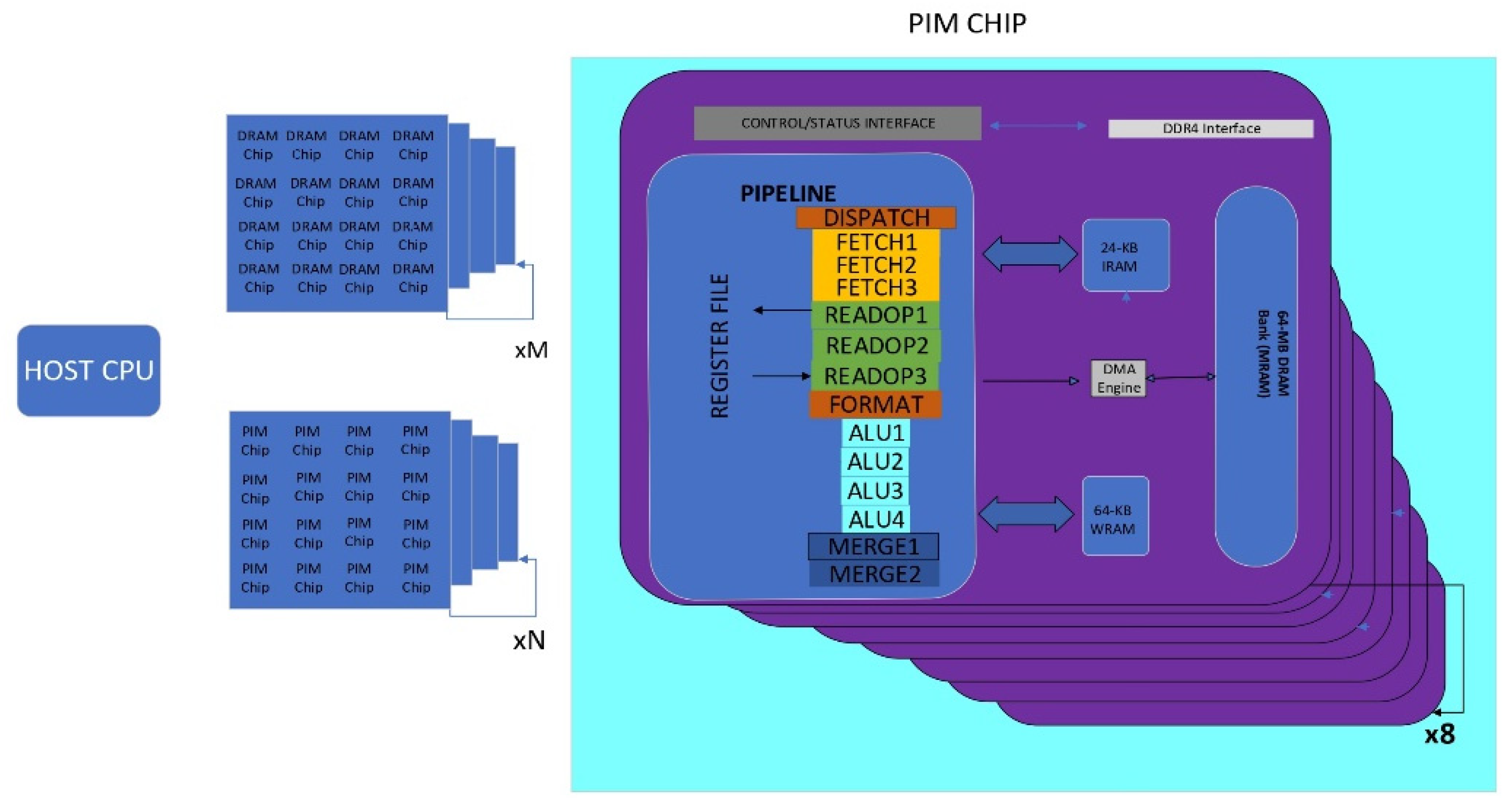

3. PIM Based Systems

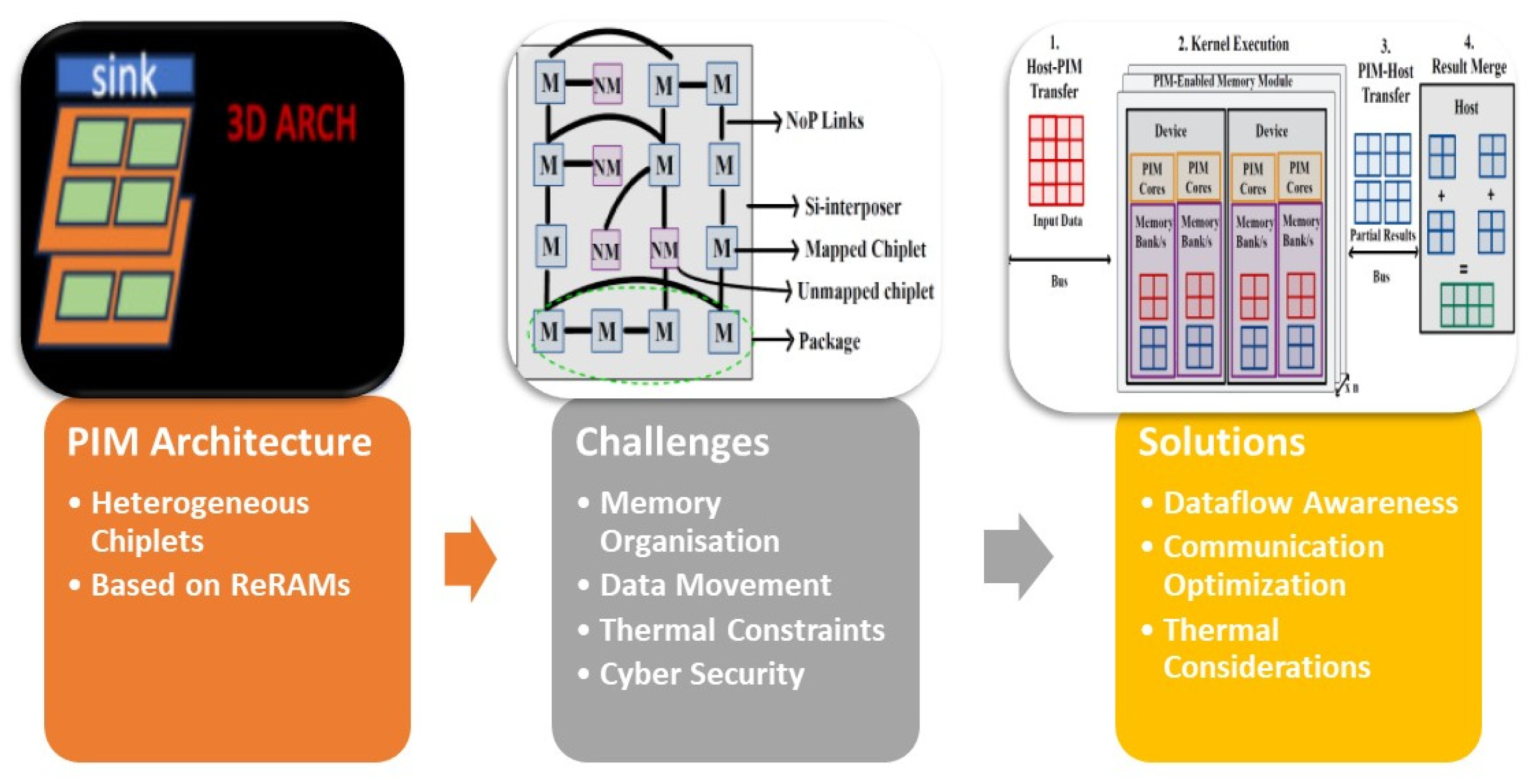

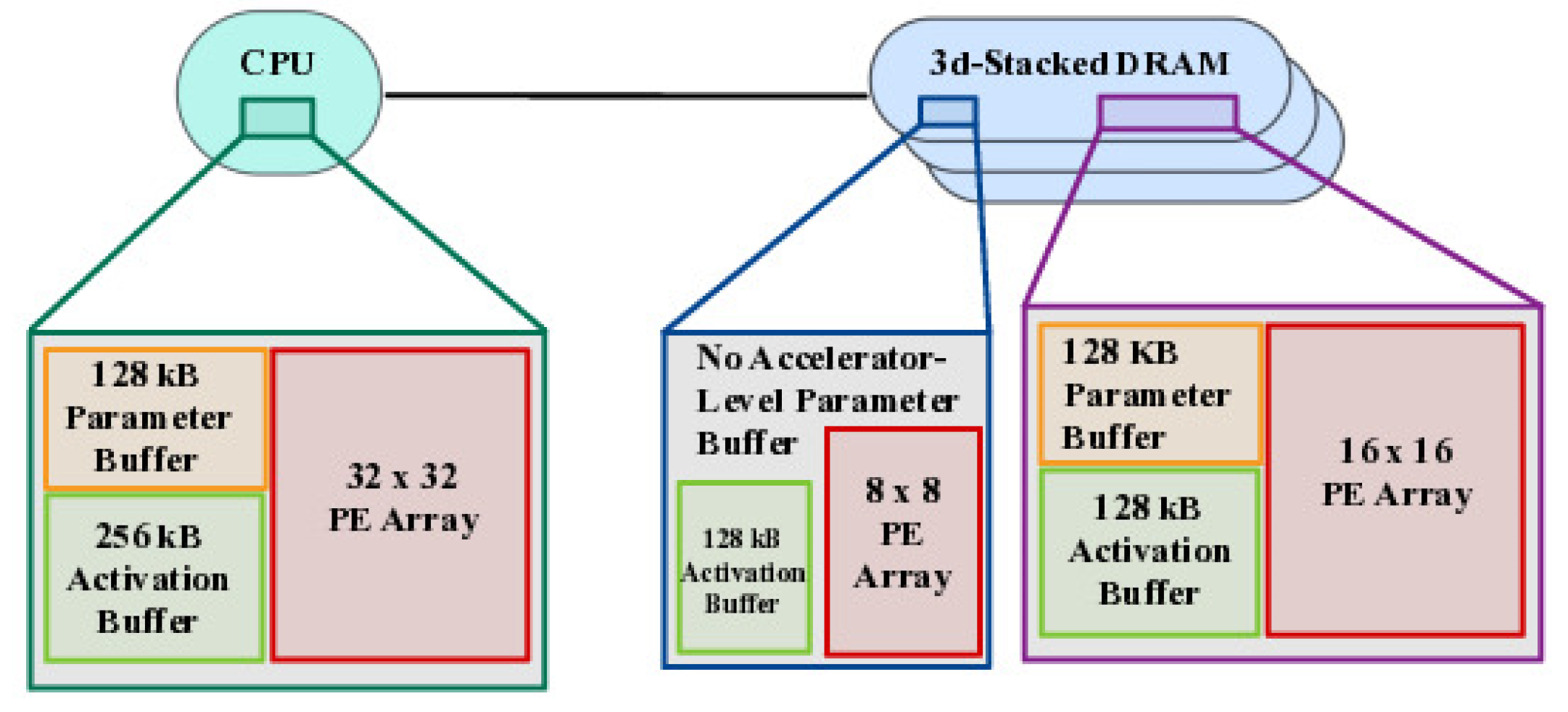

3.1. Heterogeneous PIM Architecture

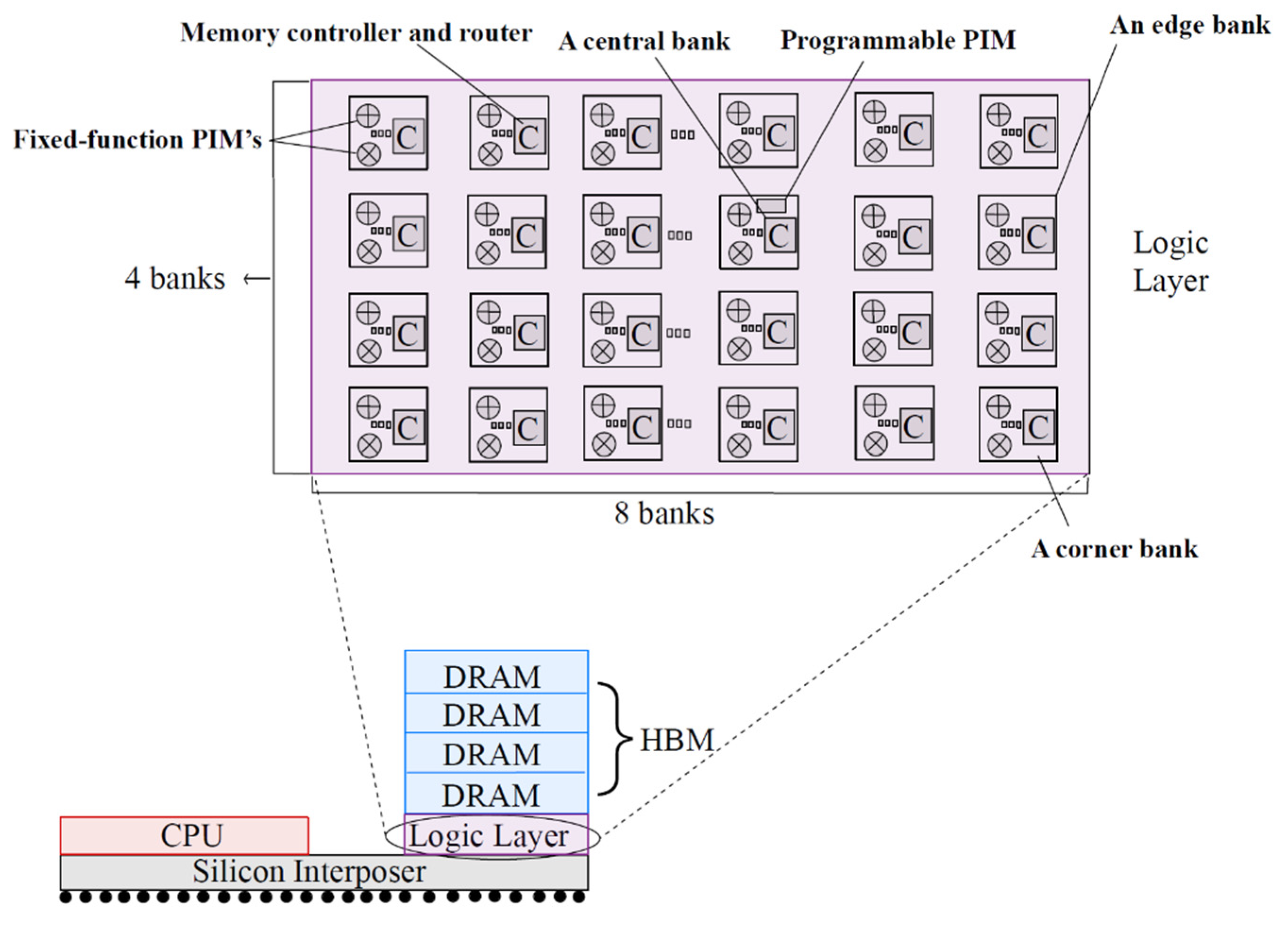

3.2. Data Flow Aware Architecture

3.3. Thermally Aware Architecture

3.4. Processing-in-Memory Systems Applications

3.4.1. Graph Neural Networks

3.4.2. NN Inference

4. Necessity of Cyber Security in PIM

5. Summary of the Review

- Exploring advanced memory technologies: Further investigation into emerging memory technologies, such as memristors or spintronics, can offer new opportunities for enhancing the performance and energy efficiency of PIM architectures.

- Optimizing communication and interconnectivity: Continued research on efficient on-chip interconnection networks and communication protocols can further reduce data movement and latency in PIM architectures.

- Integration with emerging technologies: Exploring the integration of PIM architectures with other emerging technologies, such as neuromorphic computing or quantum computing, can lead to novel and more efficient computing systems.

- Security and privacy considerations: Addressing the cybersecurity challenges associated with deep neural networks and PIM architectures, including adversarial attacks, model stealing attacks, and privacy concerns, is crucial for the widespread adoption of these technologies.

- Hardware-software co-design: Further exploration of hardware-software co-design approaches can enable better optimization and utilization of PIM architectures, considering the unique characteristics of deep learning workloads.

- Real-world application deployment: Conducting practical experiments and case studies to evaluate the performance, energy efficiency, and scalability of PIM architectures in real-world deep learning applications can provide valuable insights for their adoption.
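Hardware-software co-design and real-world evaluation usually involve comparing candidate configurations on more than one metric at once. As a minimal sketch of that idea, the code below filters a set of design points down to its Pareto front over latency and energy. The `DesignPoint` type, the candidate names, and all numeric values are hypothetical and do not come from any of the reviewed works.

```python
from typing import List, NamedTuple


class DesignPoint(NamedTuple):
    """One hypothetical hardware/mapping configuration and its estimated costs."""
    name: str
    latency_ms: float
    energy_mj: float


def dominates(a: DesignPoint, b: DesignPoint) -> bool:
    """a dominates b if it is no worse on both objectives and strictly better on one."""
    no_worse = a.latency_ms <= b.latency_ms and a.energy_mj <= b.energy_mj
    strictly_better = a.latency_ms < b.latency_ms or a.energy_mj < b.energy_mj
    return no_worse and strictly_better


def pareto_front(points: List[DesignPoint]) -> List[DesignPoint]:
    """Keep only the points that no other point dominates (input order preserved)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Illustrative candidates only; the values are made up for the example.
CANDIDATES = [
    DesignPoint("A", latency_ms=10.0, energy_mj=5.0),
    DesignPoint("B", latency_ms=8.0, energy_mj=7.0),
    DesignPoint("C", latency_ms=12.0, energy_mj=6.0),  # dominated by A
    DesignPoint("D", latency_ms=8.0, energy_mj=9.0),   # dominated by B
    DesignPoint("E", latency_ms=15.0, energy_mj=3.0),
]

if __name__ == "__main__":
    for point in pareto_front(CANDIDATES):
        print(point)
```

The quadratic-time filter is adequate for small candidate sets; larger design spaces would typically need a search heuristic to generate candidates and further objectives such as cost or peak temperature.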

6. Conclusion

References

- Liu, J.; Zhao, H.; Ogleari, M.A.; Li, D.; Zhao, J. Processing-in-Memory for Energy-Efficient Neural Network Training: A Heterogeneous Approach. In Proceedings of the 51st Annual IEEE/ACM International Symposium on Microarchitecture (MICRO-51); IEEE Press, 2018; pp. 655–668.

- Sharma, H.; Narang, G.; Doppa, J.R.; Ogras, U.; Pande, P.P. Dataflow-Aware PIM-Enabled Manycore Architecture for Deep Learning Workloads. arXiv preprint 2024. arXiv:2403.19073.

- Narang, G.; Ogbogu, C.; Doppa, J.; Pande, P. TEFLON: Thermally Efficient Dataflow-Aware 3D NoC for Accelerating CNN Inferencing on Manycore PIM Architectures. ACM Trans. Embed. Comput. Syst. Just Accepted. 20 May.

- Joardar, B.K.; Choi, W.; Kim, R.G.; Doppa, J.R.; Pande, P.P.; Marculescu, D.; Marculescu, R. 3D NoC-Enabled Heterogeneous Manycore Architectures for Accelerating CNN Training: Performance and Thermal Trade-Offs. In Proceedings of the Eleventh IEEE/ACM International Symposium on Networks-on-Chip, 19 October 2017; pp. 1–8.

- Giannoula, C.; Yang, P.; Vega, I.F.; Yang, J.; Li, Y.X.; Luna, J.G.; Sadrosadati, M.; Mutlu, O.; Pekhimenko, G. Accelerating Graph Neural Networks on Real Processing-In-Memory Systems. arXiv preprint 26 February 2024. arXiv:2402.16731.

- Oliveira, G.F.; Gómez-Luna, J.; Ghose, S.; Boroumand, A.; Mutlu, O. Accelerating Neural Network Inference with Processing-in-DRAM: From the Edge to the Cloud. IEEE Micro 2022, 42, 25–38.

- Gómez-Luna, J.; El Hajj, I.; Fernandez, I.; Giannoula, C.; Oliveira, G.F.; Mutlu, O. Benchmarking Memory-Centric Computing Systems: Analysis of Real Processing-in-Memory Hardware. In Proceedings of the 2021 12th International Green and Sustainable Computing Conference (IGSC), 18 October 2021; pp. 1–7.

- Ogbogu, C.; Joardar, B.K.; Chakrabarty, K.; Doppa, J.; Pande, P.P. Data Pruning-enabled High Performance and Reliable Graph Neural Network Training on ReRAM-based Processing-in-Memory Accelerators. ACM Transactions on Design Automation of Electronic Systems 2024.

- Dhingra, P.; Ogbogu, C.; Joardar, B.K.; Doppa, J.R.; Kalyanaraman, A.; Pande, P.P. FARe: Fault-Aware GNN Training on Re-RAM-based PIM Accelerators. arXiv preprint 19 January 2024. arXiv:2401.10522.

- Lee, S.; Kang, S.H.; Lee, J.; Kim, H.; Lee, E.; Seo, S.; Yoon, H.; Lee, S.; Lim, K.; Shin, H.; Kim, J. Hardware Architecture and Software Stack for PIM Based on Commercial DRAM Technology: Industrial Product. In Proceedings of the 2021 ACM/IEEE 48th Annual International Symposium on Computer Architecture (ISCA), 14 June 2021; pp. 43–56.

- Joardar, B.K.; Arka, A.I.; Doppa, J.R.; Pande, P.P.; Li, H.; Chakrabarty, K. Heterogeneous Manycore Architectures Enabled by Processing-in-Memory for Deep Learning: From CNNs to GNNs (ICCAD Special Session Paper). In Proceedings of the 2021 IEEE/ACM International Conference on Computer-Aided Design (ICCAD), 1 November 2021; pp. 1–7.

- Zheng, Q.; Wang, Z.; Feng, Z.; Yan, B.; Cai, Y.; Huang, R.; Chen, Y.; Yang, C.L.; Li, H.H. Lattice: An ADC/DAC-less ReRAM-Based Processing-in-Memory Architecture for Accelerating Deep Convolutional Neural Networks. In Proceedings of the 2020 57th ACM/IEEE Design Automation Conference (DAC), 20 July 2020; pp. 1–6.

- Zhao, X.; Chen, S.; Kang, Y. Load Balanced PIM-Based Graph Processing. ACM Transactions on Design Automation of Electronic Systems 2024.

- Sharma, H.; Mandal, S.K.; Doppa, J.R.; Ogras, U.Y.; Pande, P.P. SWAP: A Server-Scale Communication-Aware Chiplet-Based Manycore PIM Accelerator. IEEE Trans. Comput. Aided Des. Integr. Circuits Syst. 2022, 41, 4145–4156.

- Jiang, H.; Huang, S.; Peng, X.; Yu, S. MINT: Mixed-Precision RRAM-Based In-Memory Training Architecture. In Proceedings of the 2020 IEEE International Symposium on Circuits and Systems (ISCAS), 12 October 2020; pp. 1–5.

- Das, A.; Russo, E.; Palesi, M. Multi-Objective Hardware-Mapping Co-Optimisation for Multi-DNN Workloads on Chiplet-Based Accelerators. IEEE Trans. Comput. 2024, 1–1.

- Hyun, B.; Kim, T.; Lee, D.; Rhu, M. Pathfinding Future PIM Architectures by Demystifying a Commercial PIM Technology. In Proceedings of the 2024 IEEE International Symposium on High-Performance Computer Architecture (HPCA), 2 March 2024; pp. 263–279.

- Lopes, A.; Castro, D.; Romano, P. PIM-STM: Software Transactional Memory for Processing-In-Memory Systems. In Proceedings of the 29th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, 27 April 2024; Volume 2, pp. 897–911.

- Bavikadi, S.; Sutradhar, P.R.; Ganguly, A.; Dinakarrao, S.M.P. Reconfigurable Processing-in-Memory Architecture for Data Intensive Applications. In Proceedings of the 2024 37th International Conference on VLSI Design and 2024 23rd International Conference on Embedded Systems (VLSID), IEEE; 2024; pp. 222–227.

- An, Y.; Tang, Y.; Yi, S.; Peng, L.; Pan, X.; Sun, G.; Luo, Z.; Li, Q.; Zhang, J. StreamPIM: Streaming Matrix Computation in Racetrack Memory. In Proceedings of the 2024 IEEE International Symposium on High-Performance Computer Architecture (HPCA), 2 March 2024; pp. 297–311.

- Gogineni, K.; Dayapule, S.S.; Gómez-Luna, J.; Gogineni, K.; Wei, P.; Lan, T.; Sadrosadati, M.; Mutlu, O.; Venkataramani, G. SwiftRL: Towards Efficient Reinforcement Learning on Real Processing-In-Memory Systems. arXiv preprint 7 May 2024. arXiv:2405.03967.

- Yang, Z.; Ji, S.; Chen, X.; Zhuang, J.; Zhang, W.; Jani, D.; Zhou, P. Challenges and Opportunities to Enable Large-Scale Computing via Heterogeneous Chiplets. In Proceedings of the 2024 29th Asia and South Pacific Design Automation Conference (ASP-DAC), 22 January 2024; pp. 765–770.

- Wang, C. Social Media Platform-Oriented Topic Mining and Information Security Analysis by Big Data and Deep Convolutional Neural Network. Technol. Forecast. Soc. Change 2024, 199, 123070.

- Miranda-García, A.; Rego, A.Z.; Pastor-López, I.; Sanz, B.; Tellaeche, A.; Gaviria, J.; Bringas, P.G. Deep Learning Applications on Cybersecurity: A Practical Approach. Neurocomputing 2024, 563, 126904.

- Çavuşoğlu, Ü.; Akgun, D.; Hizal, S. A Novel Cyber Security Model Using Deep Transfer Learning. Arab. J. Sci. Eng. 2024, 49, 3623–3632.

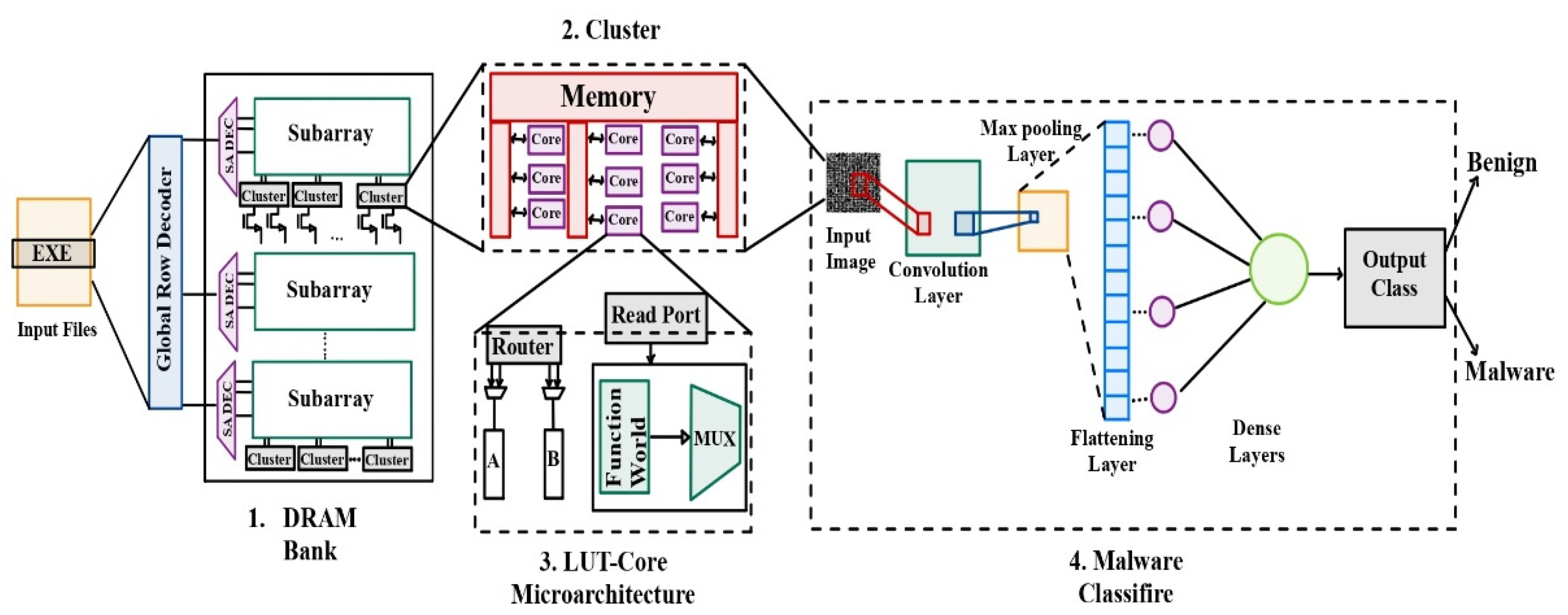

- Kasarapu, S.; Bavikadi, S.; Dinakarrao, S.M. Empowering Malware Detection Efficiency within Processing-in-Memory Architecture. arXiv preprint 12 April 2024. arXiv:2404.08818.

- Kanellopoulos, K.; Bostanci, F.; Olgun, A.; Yaglikci, A.G.; Yuksel, I.E.; Ghiasi, N.M.; Bingol, Z.; Sadrosadati, M.; Mutlu, O. Amplifying Main Memory-Based Timing Covert and Side Channels using Processing-in-Memory Operations. arXiv preprint 17 April 2024. arXiv:2404.11284.

- Asad, A.; Kaur, R.; Mohammadi, F. A Survey on Memory Subsystems for Deep Neural Network Accelerators. Future Internet 2022, 14, 146.

- Kaur, R.; Mohammadi, F. Power Estimation and Comparison of Heterogeneous CPU-GPU Processors. In Proceedings of the 2023 IEEE 25th Electronics Packaging Technology Conference (EPTC), 5 December 2023; pp. 948–951.

- Kaur, R.; Mohammadi, F. Comparative Analysis of Power Efficiency in Heterogeneous CPU-GPU Processors. In Proceedings of the 2023 Congress in Computer Science, Computer Engineering, & Applied Computing (CSCE), 24 July 2023; pp. 756–758.

- Kaur, R.; Saluja, N. Comparative Analysis of 1-bit Memory Cell in CMOS and QCA Technology. In Proceedings of the 2018 International Flexible Electronics Technology Conference (IFETC), Ottawa, ON, Canada; 2018; pp. 1–3.

- Safayenikoo, P.; Asad, A.; Fathy, M.; Mohammadi, F. An Energy Efficient Non-Uniform Last Level Cache Architecture in 3D Chip-Multiprocessors. In Proceedings of the 2017 18th International Symposium on Quality Electronic Design (ISQED), Santa Clara, CA, USA; 2017; pp. 373–378.

- Asad, A.; AL-Obaidy, F.; Mohammadi, F. Efficient Power Consumption using Hybrid Emerging Memory Technology for 3D CMPs. In Proceedings of the 2020 IEEE 11th Latin American Symposium on Circuits & Systems (LASCAS), San Jose, Costa Rica; 2020; pp. 1–4.

- Asad, A.; Kaur, R.; Mohammadi, F. Noise Suppression Using Gated Recurrent Units and Nearest Neighbor Filtering. In Proceedings of the 2022 International Conference on Computational Science and Computational Intelligence (CSCI), Las Vegas, NV, USA; 2022; pp. 368–372.

| Paper | Approach/Architecture | Description | Key Features | Advantages | Challenges |
| --- | --- | --- | --- | --- | --- |
| [1] | Hardware Design with 3D Stacked Memory | Integration of fixed-function arithmetic units and programmable cores on a 3D die-stacked memory | Minimizes data movement, improves system performance, programming model and runtime system for offloading and scheduling | Reduced data movement between processor and memory; improved system performance; enables efficient offloading and scheduling | Complex hardware design and integration; programming model and runtime system development |
| [7] | UPMEM PIM Architecture | DRAM memory arrays combined with in-order cores (DRAM Processing Units, DPUs) on the same chip | Improves performance and energy efficiency in memory-bound workloads, benchmarking against CPU and GPU counterparts | Enhanced performance in memory-bound workloads; improved energy efficiency; direct integration of processing units in memory | Limited scalability for certain workloads; programming and software support for DPUs |
| [10] | Practical PIM Architecture with Commodity DRAM | Exploits bank-level parallelism in commercial DRAM and 2.5D/3D stacking integration technologies | Higher bandwidth, lower energy per bit transfer, no changes to host processors or application code | Increased memory bandwidth; reduced energy consumption per bit transfer; seamless integration with existing systems | Overcoming stacking and integration challenges; ensuring compatibility with diverse memory systems |
| [12] | Lattice Architecture with NVPIM | Utilizes Nonvolatile Processing-In-Memory (NVPIM) based on Resistive Random Access Memory (ReRAM) for accelerating DCNNs | Eliminates analog-digital conversions, reduces data copies/writes, improved energy efficiency and performance | Eliminates costly analog-digital conversions; reduced data copies and writes; improved energy efficiency and performance | Integration and compatibility with existing systems; achieving high-density ReRAM arrays |
| [18] | PIM-STM Library | Library providing various implementations of Transactional Memory (TM) for PIM systems | Efficient TM implementation in PIM devices, evaluation of different design choices and algorithms | Efficient implementation of Transactional Memory (TM) in PIM devices; provides guidelines and alternative design choices for TM in PIM architectures | Ensuring TM consistency and correctness; overhead of TM implementations on PIM systems |
| [19] | Reconfigurable PIM Architecture | PIM architecture integrated within DRAM sub-arrays, leveraging multi-functional look-up-tables | Higher energy efficiency, programmability, and flexibility for CNN and DNN processing | Increased energy efficiency; programmability and flexibility for CNN and DNN processing; utilizes multi-functional look-up-tables for operations | Designing efficient and scalable lookup-table-based architectures; memory access and data dependencies |
| [20] | StreamPIM Architecture | Utilizes racetrack memory (RM) techniques and domain-wall nanowires to address memory wall issue | Improved performance and energy efficiency in large-scale applications, tight coupling of memory core and computation units | Addresses memory wall issue in large-scale applications; improved performance and energy efficiency; tight coupling of memory core and computation units | Overcoming challenges in RM fabrication and integration; ensuring reliable and efficient data movement in RM |

| Paper | Architecture | Challenges | Proposed Solutions |

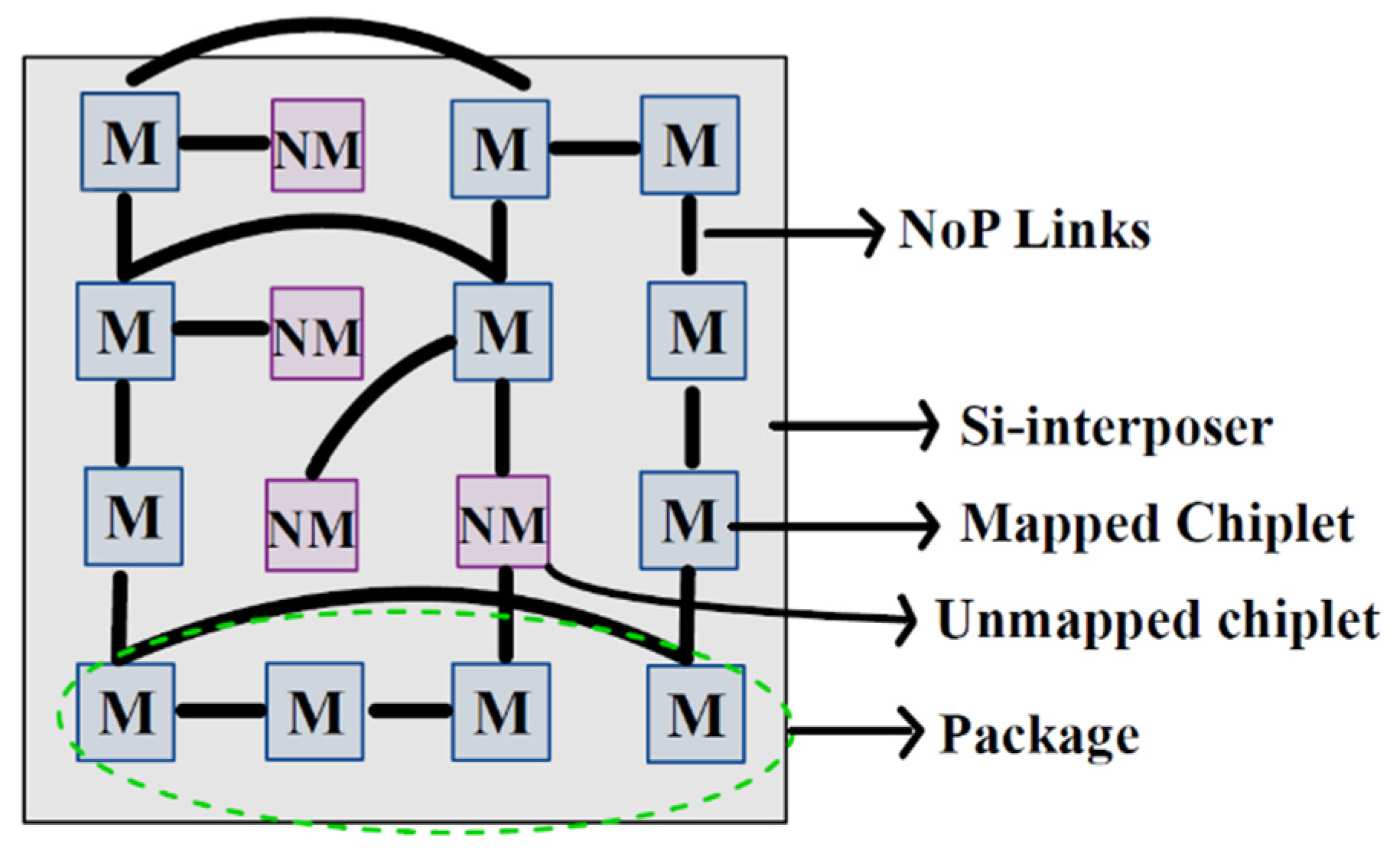

| [2] | Chiplet-based 2.5D architectures | Communication limitations, energy efficiency, cost advantages | Integration of multiple smaller dies through a network-on-interposer (NoI) |

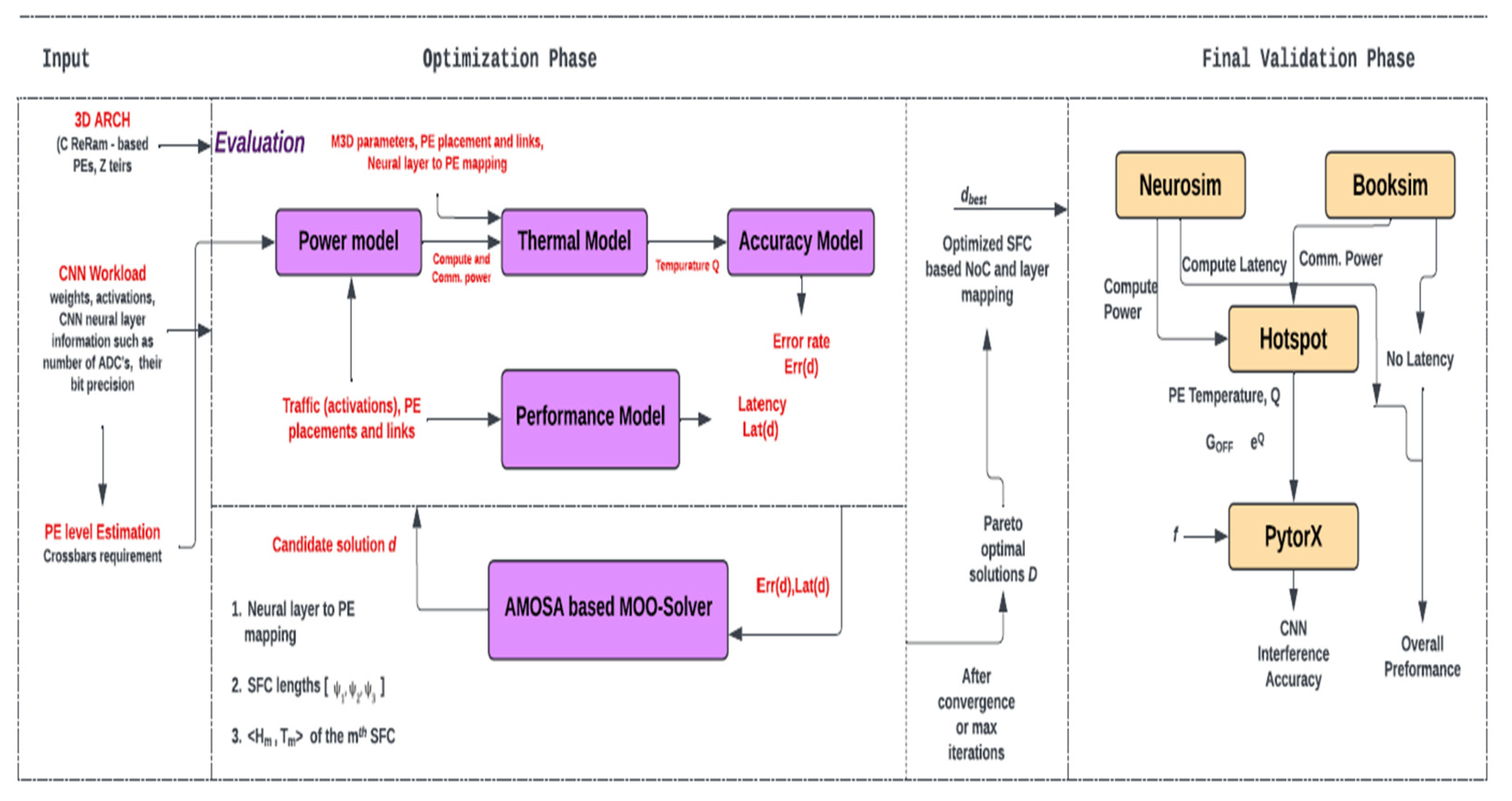

| [3] | Thermally optimized dataflow-aware monolithic 3D (M3D) NoC architecture | Efficient communication, thermal challenges of ReRAMs | Space-filling curves (SFCs) for dataflow-awareness, avoiding thermal hotspots, distributing high-power consuming cores |

| [11] | ReRAM-based processing-in-memory (PIM) architectures | Model accuracy, performance, noise, hard faults, process variations, limited write endurance | ReRAM-based heterogeneous manycore PIM designs |

| [14] | Network-on-package (NoP) architecture for DL workloads | Communication requirements, fabrication costs | SWAP architecture based on DL traffic characteristics |

| [15] | Mixed-precision RRAM-based compute-in-memory (CIM) architecture | Higher weight precision, ADC resolution | MINT architecture with analog computation inside memory array |

| [16] | Multi-accelerator systems, hardware-mapping co-optimization | Latency, energy consumption, cost | MOHaM framework for multi-objective hardware-mapping co-optimization |

| Paper | Methodology | Advantages | Challenges |
| --- | --- | --- | --- |
| [22] | Heterogeneous chiplets | Scaling up and scaling out computing systems; reduced design complexity and costs | Chiplet interface standards; packaging and security issues; software programming |
| [23] | Deep Convolutional Neural Networks (DCNN) | Superior accuracy and performance; timely and effective social network security topic mining and analysis models | -- |
| [24] | Deep Learning Techniques (LSTMs, DNNs, CNNs) combined with Transfer Learning | Effective application in cybersecurity; promising experimental results | Challenges in spam filtering, malware detection, and adult content filtering |
| [25] | Deep Neural Networks and Transfer Learning | High classification accuracies; state-of-the-art techniques in intrusion detection systems using deep learning | -- |
| [26] | Processing-in-Memory (PIM) architecture | Efficient malware detection; higher throughput and improved energy efficiency | |
| [27] | Processing-in-Memory (PiM) architectures and Timing Attacks | -- | Security implications of PiM architectures; high-throughput timing attacks exploiting PiM architectures |

| Paper | Architecture | Challenges | Proposed Solutions | Future Scope |
| --- | --- | --- | --- | --- |
| [2] | Chiplet-based 2.5D architectures | Communication limitations, energy efficiency, cost advantages | Integration of multiple smaller dies through a network-on-interposer (NoI) | Exploring advanced interconnect technologies, optimizing power efficiency further |
| [3] | Thermally optimized dataflow-aware monolithic 3D (M3D) NoC architecture | Efficient communication, thermal challenges of ReRAMs | Space-filling curves (SFCs) for dataflow-awareness, avoiding thermal hotspots, distributing high-power consuming cores | Investigating advanced thermal management techniques, extending to new memory technologies |
| [11] | ReRAM-based processing-in-memory (PIM) architectures | Model accuracy, performance, noise, hard faults, process variations, limited write endurance | ReRAM-based heterogeneous manycore PIM designs | Enhancing error tolerance, exploring novel training algorithms for PIM architectures |
| [14] | Network-on-package (NoP) architecture for DL workloads | Communication requirements, fabrication costs | SWAP architecture based on DL traffic characteristics | Exploring advanced packaging technologies, optimizing for heterogeneous workloads |
| [15] | Mixed-precision RRAM-based compute-in-memory (CIM) architecture | Higher weight precision, ADC resolution | MINT architecture with analog computation inside memory array | Investigating novel analog computing schemes, optimizing for large-scale deployment |
| [16] | Multi-accelerator systems, hardware-mapping co-optimization | Latency, energy consumption, cost | MOHaM framework for multi-objective hardware-mapping co-optimization | Exploring dynamic workload allocation, optimizing for emerging DL algorithms |
| [18] | TEFLON: A Design Space Exploration Framework for Hardware Accelerators | Design space exploration, accelerator architectures | TEFLON framework for exploring accelerator designs with customizable datapath and memory hierarchy | Enhancing design exploration capabilities, incorporating new architectural innovations |
| [21] | Deep Learning Accelerators: A Comprehensive Survey | Deep learning accelerator architectures, performance, energy efficiency | Survey of various deep learning accelerator architectures and their characteristics | Investigating hardware-software co-design, exploring heterogeneous computing platforms |
| [23] | Efficient Processing of Deep Learning Models: A Tutorial and Survey | Deep learning model compression, quantization, hardware-friendly optimization | Tutorial and survey on various techniques for efficient processing of deep learning models | Exploring federated learning approaches, optimizing for edge and IoT devices |
| [27] | Hardware Architectures for Deep Learning: A Survey | Hardware architectures for deep learning, accelerators, memory systems | Comprehensive survey on hardware architectures for deep learning, including accelerators and memory systems | Investigating neuromorphic computing, exploring advanced memory technologies |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).