Submitted: 25 April 2024

Posted: 28 April 2024

Abstract

Keywords:

1. Introduction

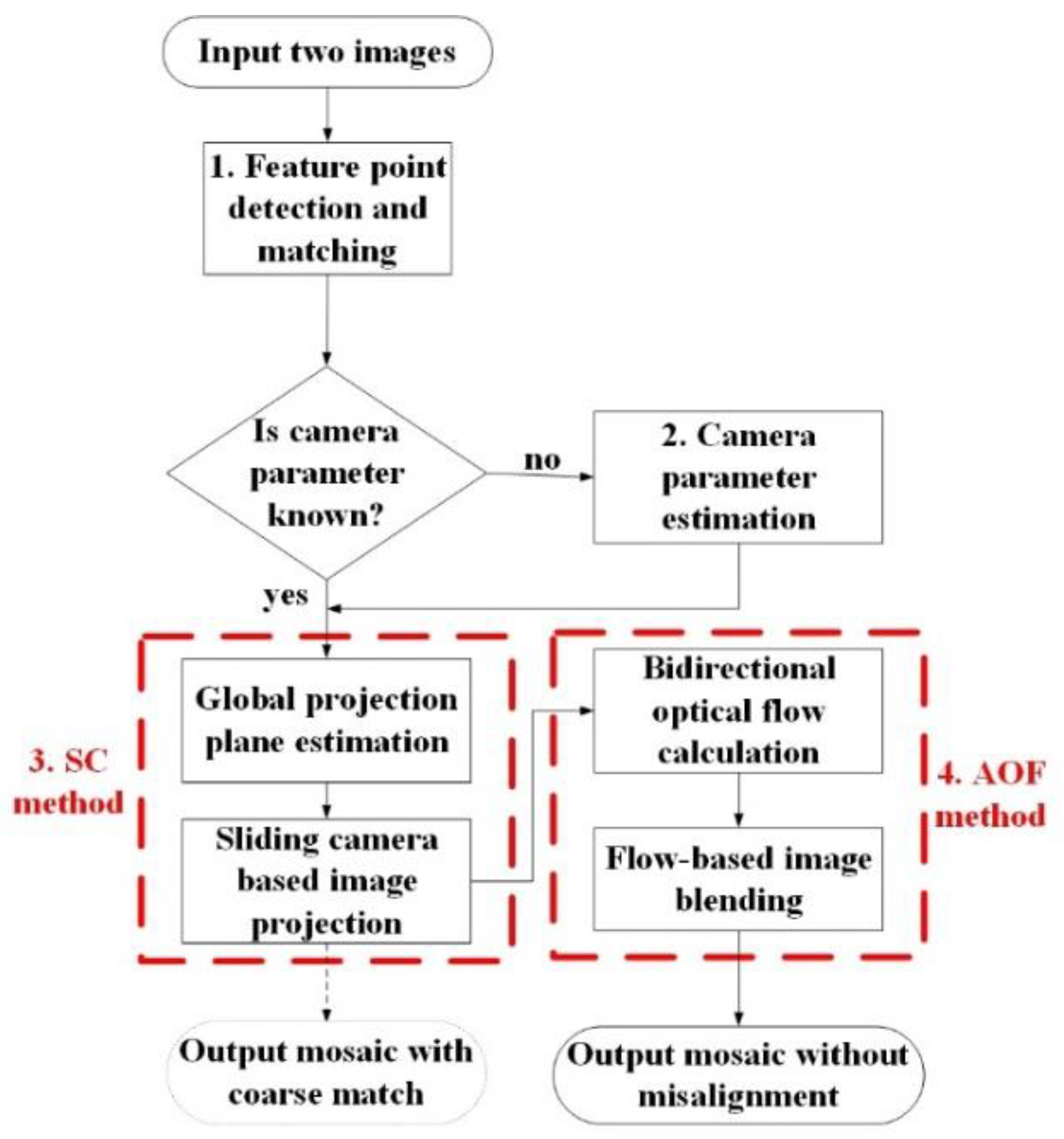

- The SC-AOF method uses a sliding camera-based approach to reduce perspective deformation. Combined with either a global or a local projection model, this approach effectively reduces the perspective deformation.

- An optical flow-based image alignment and blending method is adopted to further mitigate misalignment and improve the stitching quality of mosaics generated by a global projection model.

- Each step of the SC-AOF method can be combined with other methods to improve their stitching quality.

2. Related Works

3. Methodology

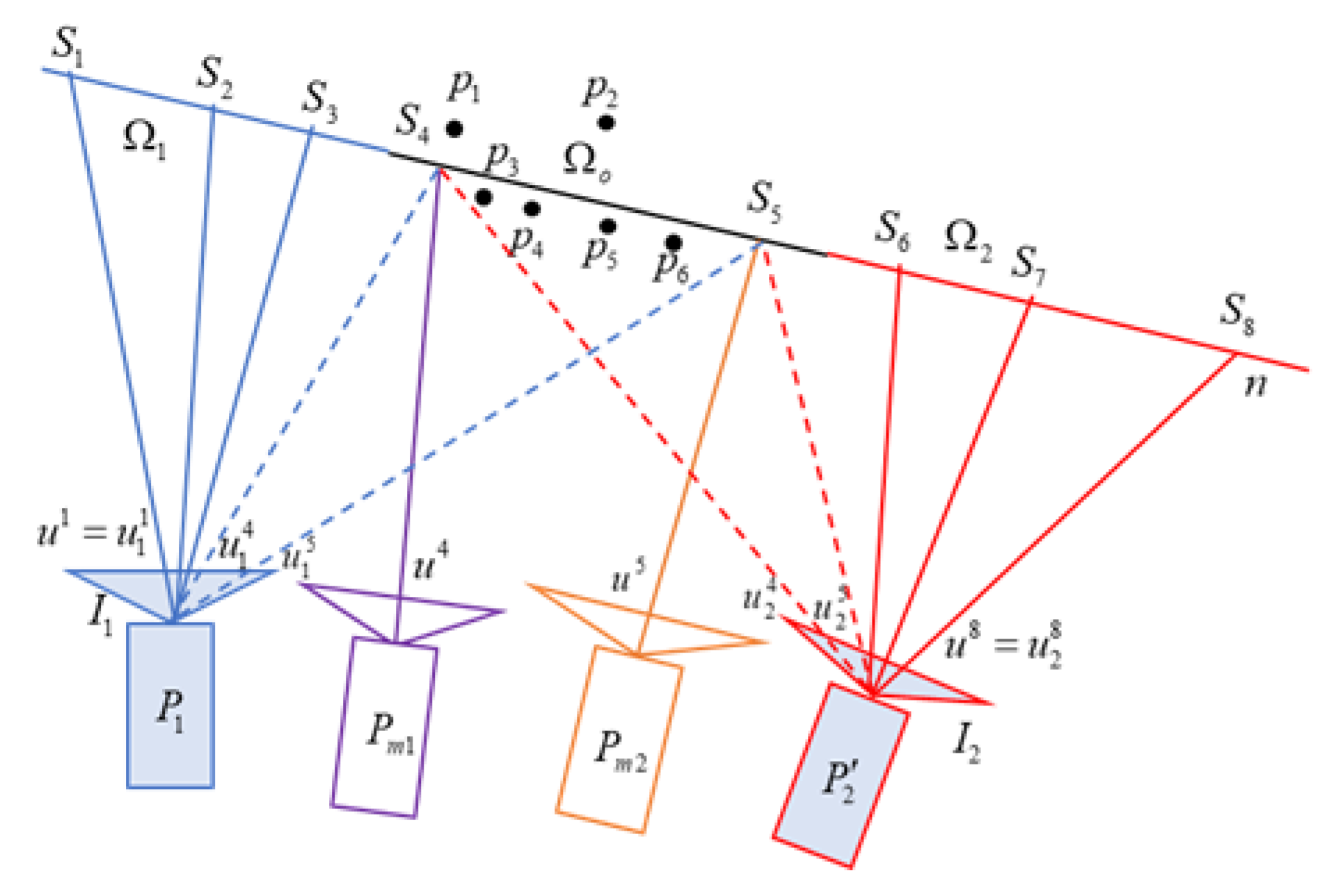

3.1. SC: Viewpoint Preservation Based on Sliding Camera

3.1.1. SC Stitching Process

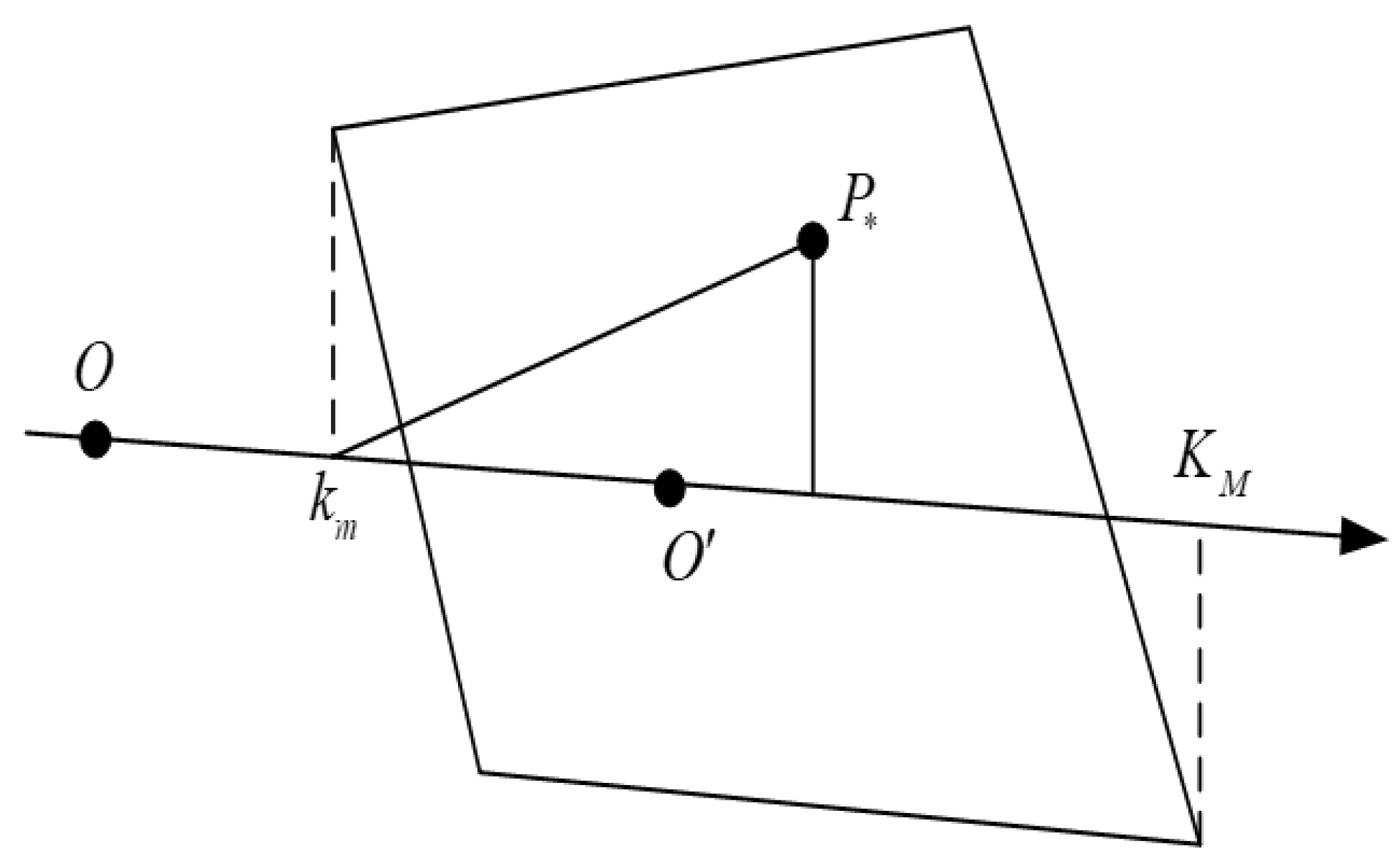

3.1.2. Global Projection Surface Calculation

3.1.3. Projection Matrix Adjustment and Sliding Camera Generation

3.2. AOF: Image Alignment and Blending Based on Asymmetric Optical Flow

3.2.1. Image Fusion Process of AOF

3.2.2. Calculation of Asymmetric Optical Flow

3.3. Estimation of Image Intrinsic and Extrinsic Parameters

4. Experiment

4.1. Effectiveness Analysis of SC-AOF Method

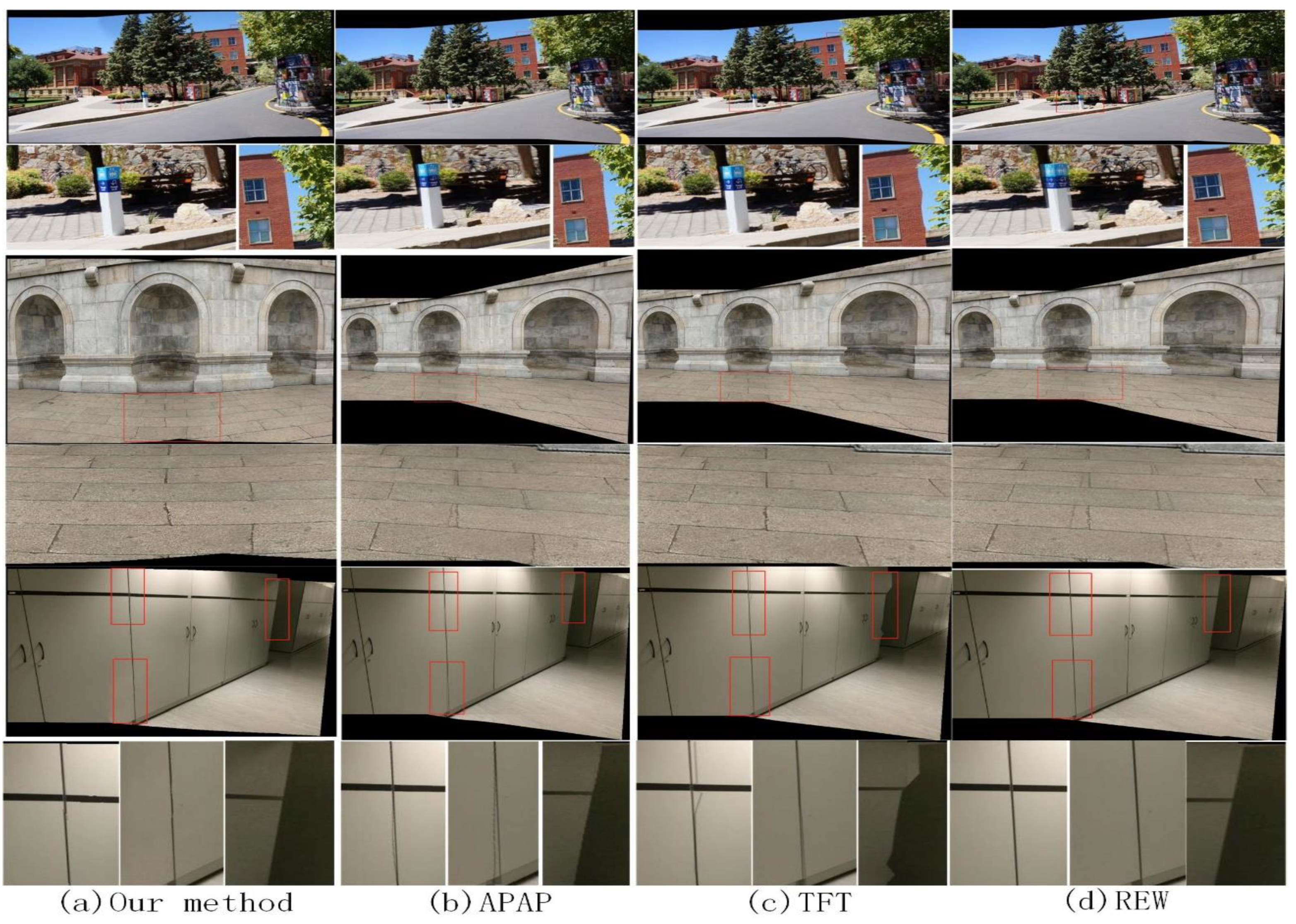

- The first two experiments compare typical methods for reducing perspective deformation and for local alignment, respectively. All methods from the first two experiments are included in the third experiment to show the superiority of the SC-AOF method in all aspects.

- Since averaging generally underperforms linear blending, all compared methods adopt linear blending to achieve their best performance.

- All methods other than ours use the parameters recommended by their authors. Our SC-AOF method uses the following parameter settings in optical flow-based image blending: 10, 100, and 10.
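The difference between averaging and linear blending mentioned above can be illustrated with a minimal sketch. It assumes two already aligned overlap strips of the same size stored as NumPy arrays; the function names `average_blend` and `linear_blend` are hypothetical and are not taken from any of the compared implementations.

```python
import numpy as np

def average_blend(left, right):
    """Blend two aligned overlap strips with equal weights everywhere."""
    return 0.5 * left + 0.5 * right

def linear_blend(left, right):
    """Blend two aligned overlap strips with a weight that ramps linearly
    from the left image (weight 1) to the right image (weight 0)."""
    width = left.shape[1]
    # alpha is 1 at the left edge of the overlap and 0 at the right edge.
    alpha = np.linspace(1.0, 0.0, width).reshape(1, width)
    if left.ndim == 3:
        # Broadcast the same weight over the colour channels.
        alpha = alpha[..., np.newaxis]
    return alpha * left + (1.0 - alpha) * right

# Toy overlap: the left image is uniformly 100, the right uniformly 200.
left = np.full((2, 5), 100.0)
right = np.full((2, 5), 200.0)
avg = average_blend(left, right)
lin = linear_blend(left, right)
print(avg[0])  # constant value across the overlap
print(lin[0])  # ramps from the left value to the right value
```

In this toy case the linearly blended strip matches each source image at the corresponding edge of the overlap, whereas the averaged strip jumps at both edges, which is one reason averaging tends to leave visible seams.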

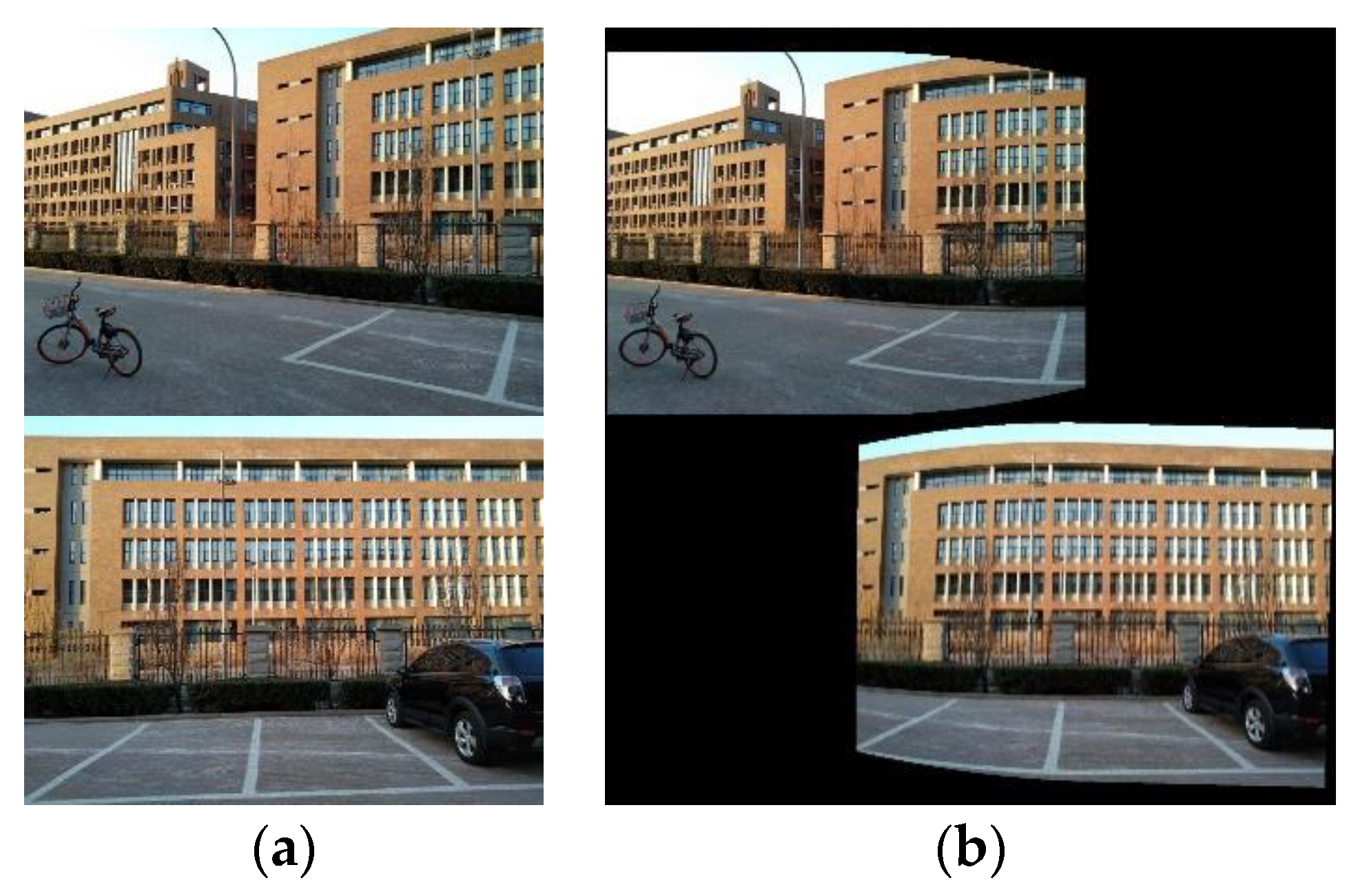

4.1.1. Perspective Deformation Reduction

4.1.2. Local Alignment

- The APAP method performs fairly well on most images, though with some alignment errors. This is because the moving DLT method smooths the mosaics to some extent.

- TFT generates stitched images of excellent quality in planar areas, but serious distortions appear where the scene depth changes abruptly. This is because large errors arise when a plane is computed from the three vertices of a triangle in an area with sudden depth changes.

- The REW method has a large alignment error in the planar area but aligns the images better than APAP and TFT in all other scenes. This is because the few feature points in the planar area may be filtered out as mismatches by the REW method.

- APAP and AANAP have high scores on all image pairs, but their scores are mostly lower than those of our method and REW, suggesting that APAP and AANAP blur the mosaics to some extent.

- When SPHP is not combined with APAP, only the global homography is used to align the images, resulting in lower scores than the other methods.

- TFT has higher scores on all datasets except the building dataset. TFT can improve alignment accuracy but also introduces instability.

- SPW combines quasi-homography and content-preserving warping to align images. The additional constraints reduce alignment accuracy, resulting in lower scores than REW and our method.

- Both REW and our method use a global homography matrix to coarsely align the images and then refine the alignment, REW with a deformation field and our method with optical flow. Therefore, both methods have higher scores and greater robustness than the other methods.

4.1.3. Stitching Speed Comparison

4.2. Compatibility of SC-AOF Method

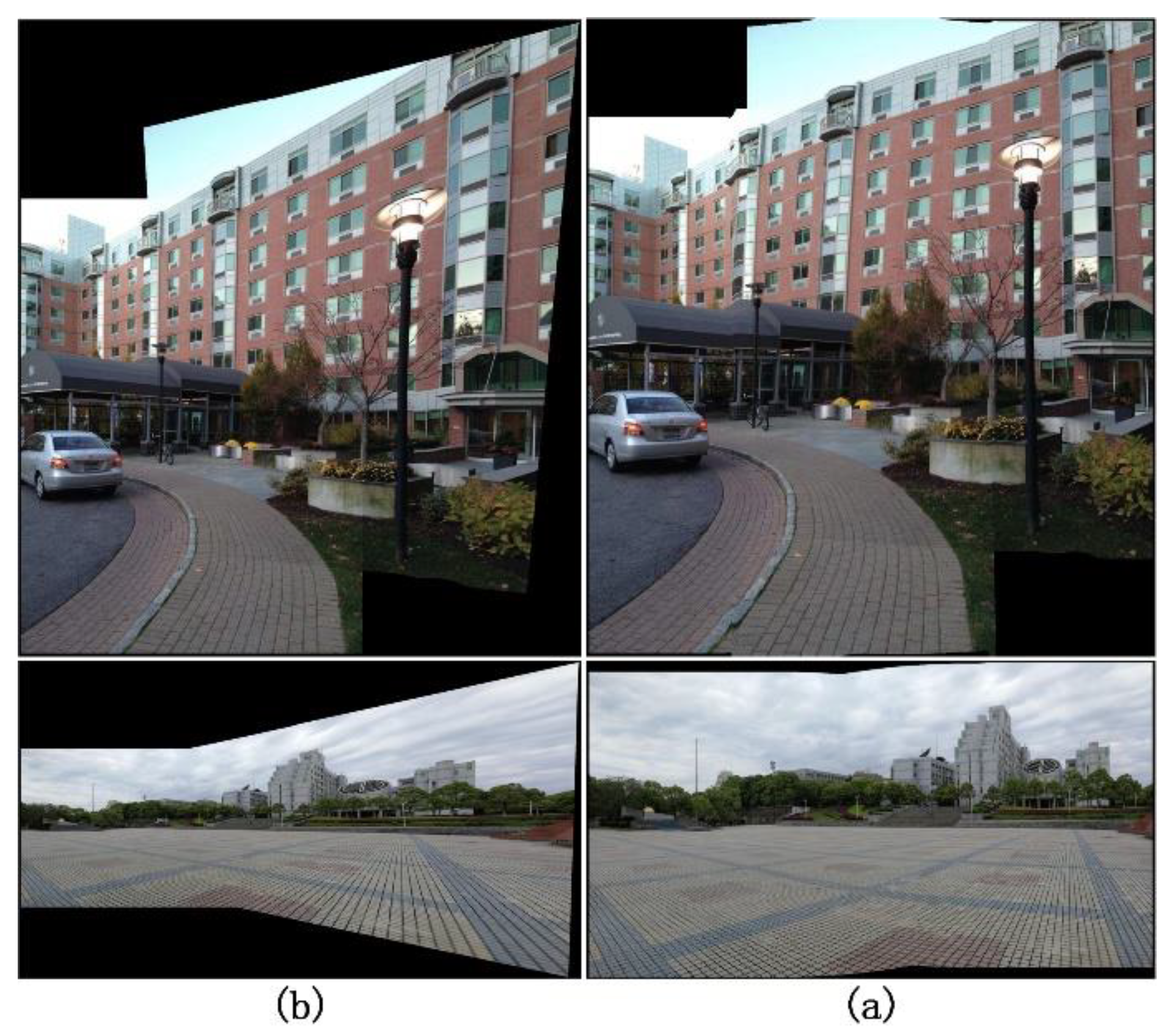

4.2.1. SC Module Compatibility Analysis

- Use the global similarity transformation to project the images onto the mosaic coordinate system and calculate the size and mesh vertices of the mosaic;

- Use (6)-(9) to calculate the weights of the mesh vertices and the projection matrix, replace the homography in (2) with the homography matrix of the local alignment model, and substitute them into (12) to compute the warped images and blend them.

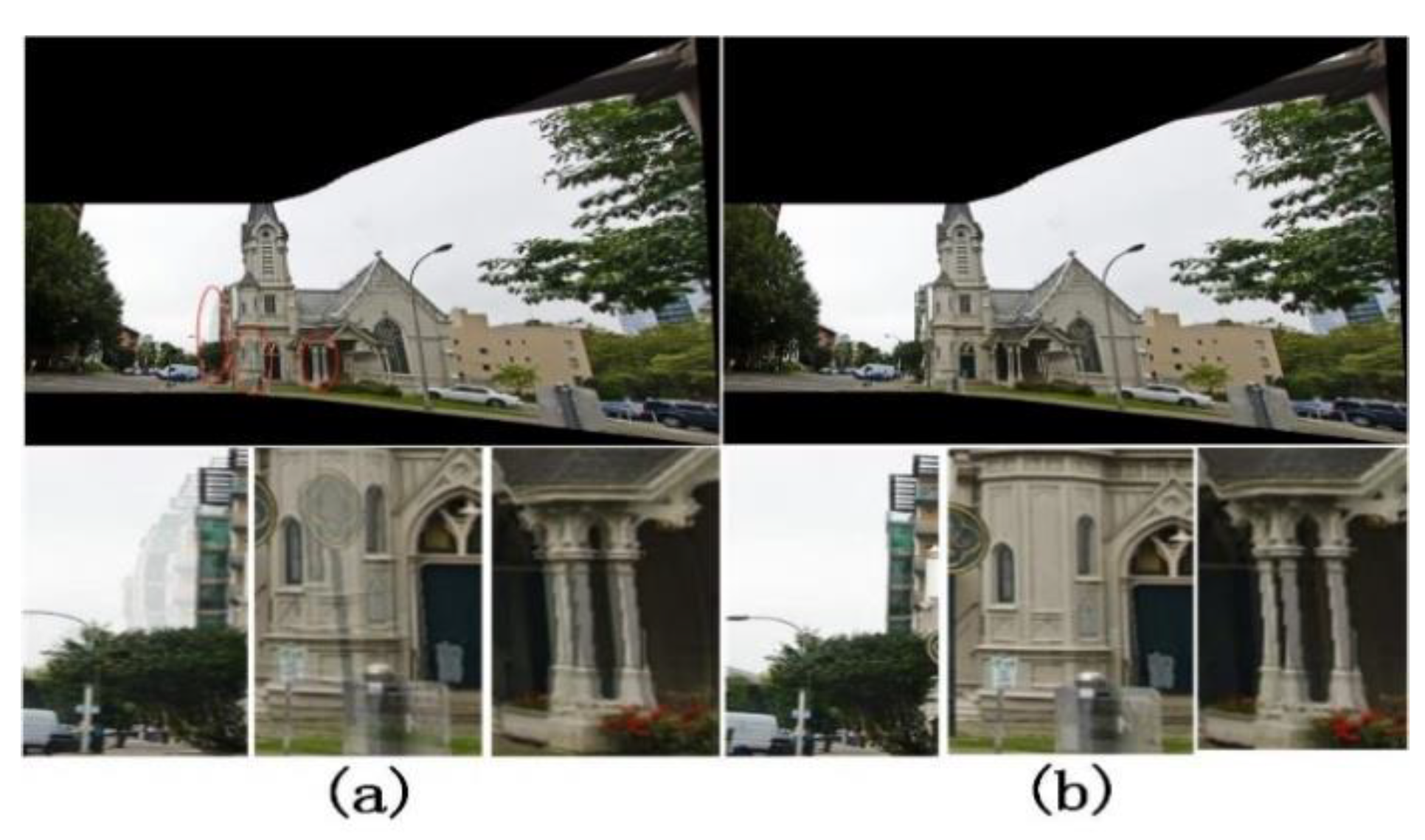

4.2.2. Blending Module Compatibility Analysis

- Generate two projected images using one of the other algorithms and calculate the blending parameters based on the overlapping areas;

- Set the optical flow value to 0, replace linear blending parameter with in (17) to blend the warped images, preserving the blending band width in low-frequency areas and narrowing it in high-frequency areas to get a better stitching result.

Figure 11 shows the stitching result of the APAP algorithm with linear blending versus with our blending method. Blurring and ghosting in the stitched images are effectively mitigated with our blending method, which shows that our blending algorithm blends the aligned images better.
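The idea of keeping a wide blending band in low-frequency areas and narrowing it in high-frequency areas can be sketched as follows. This is only an illustration under stated assumptions and is not the blending parameter of (17): the per-row gradient measure, the `min_frac` parameter, and the function names `adaptive_blend_weights` and `blend` are all hypothetical.

```python
import numpy as np

def adaptive_blend_weights(overlap_gray, min_frac=0.2):
    """Per-pixel weight of the left image over a grayscale overlap region.

    Hypothetical scheme: rows with little detail keep a blending band as
    wide as the overlap; rows with strong horizontal gradients get a band
    shrunk towards min_frac of the overlap width, centred on the overlap.
    """
    h, w = overlap_gray.shape
    if w < 2 or not 0.0 < min_frac <= 1.0:
        raise ValueError("need width >= 2 and 0 < min_frac <= 1")
    # Mean absolute horizontal gradient as a crude high-frequency measure.
    grad = np.abs(np.diff(overlap_gray.astype(float), axis=1)).mean(axis=1)
    peak = grad.max()
    detail = grad / peak if peak > 0 else np.zeros(h)  # values in [0, 1]
    # Band fraction: 1.0 for flat rows, min_frac for the most detailed row.
    frac = 1.0 - (1.0 - min_frac) * detail
    half = 0.5 * frac * (w - 1)  # half band width in pixels, per row
    x = np.arange(w, dtype=float)
    center = 0.5 * (w - 1)
    # Linear ramp inside the band, clipped to 0 or 1 outside it.
    t = (x[None, :] - (center - half[:, None])) / (2.0 * half[:, None])
    return 1.0 - np.clip(t, 0.0, 1.0)

def blend(left, right, weights):
    """Weighted blend of two aligned grayscale overlap regions."""
    return weights * left + (1.0 - weights) * right

# Row 0 is flat (low frequency); row 1 alternates 0/255 (high frequency).
overlap = np.vstack([np.full(9, 50.0), np.tile([0.0, 255.0], 5)[:9]])
weights = adaptive_blend_weights(overlap)
print(weights[0])  # full-width linear ramp
print(weights[1])  # narrow transition around the overlap centre
```

With the flat row the weights reduce to ordinary linear blending, while the detailed row switches between the two images over a much shorter span, which limits the region where misaligned detail from both images is mixed.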

5. Conclusions

Author Contributions

Funding


| Dataset | APAP | AANAP | SPHP | TFT | REW | SPW | Ours |
|---|---|---|---|---|---|---|---|
| temple | 0.90 | 0.91 | 0.73 | 0.95 | 0.94 | 0.85 | 0.96 |
| rail-tracks | 0.87 | 0.90 | 0.62 | 0.92 | 0.92 | 0.87 | 0.93 |
| garden | 0.90 | 0.94 | 0.81 | 0.95 | 0.95 | 0.92 | 0.93 |
| building | 0.93 | 0.94 | 0.89 | 0.74 | 0.96 | 0.90 | 0.96 |
| school | 0.89 | 0.91 | 0.67 | 0.90 | 0.91 | 0.87 | 0.93 |
| wall | 0.83 | 0.91 | 0.68 | 0.90 | 0.93 | 0.81 | 0.92 |
| park-square | 0.95 | 0.96 | 0.80 | 0.97 | 0.97 | 0.95 | 0.97 |
| cabinet | 0.97 | 0.97 | 0.87 | 0.97 | 0.98 | 0.97 | 0.96 |
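As a quick way to read the table above, the following sketch computes the mean score of each method and the number of datasets on which it reaches the top score (ties counted for every tied method). The values are copied from the table; the helper names `mean_scores` and `best_counts` are hypothetical.

```python
# Scores copied from the table above (rows: datasets, columns: methods).
METHODS = ["APAP", "AANAP", "SPHP", "TFT", "REW", "SPW", "Ours"]
SCORES = {
    "temple":      [0.90, 0.91, 0.73, 0.95, 0.94, 0.85, 0.96],
    "rail-tracks": [0.87, 0.90, 0.62, 0.92, 0.92, 0.87, 0.93],
    "garden":      [0.90, 0.94, 0.81, 0.95, 0.95, 0.92, 0.93],
    "building":    [0.93, 0.94, 0.89, 0.74, 0.96, 0.90, 0.96],
    "school":      [0.89, 0.91, 0.67, 0.90, 0.91, 0.87, 0.93],
    "wall":        [0.83, 0.91, 0.68, 0.90, 0.93, 0.81, 0.92],
    "park-square": [0.95, 0.96, 0.80, 0.97, 0.97, 0.95, 0.97],
    "cabinet":     [0.97, 0.97, 0.87, 0.97, 0.98, 0.97, 0.96],
}

def mean_scores(scores):
    """Average score of each method over all datasets."""
    n = len(scores)
    return {m: sum(row[i] for row in scores.values()) / n
            for i, m in enumerate(METHODS)}

def best_counts(scores):
    """Number of datasets on which each method attains the top score."""
    counts = {m: 0 for m in METHODS}
    for row in scores.values():
        top = max(row)
        for m, s in zip(METHODS, row):
            if s == top:
                counts[m] += 1
    return counts

means = mean_scores(SCORES)
wins = best_counts(SCORES)
for m in METHODS:
    print(f"{m:6s} mean={means[m]:.3f} best_on={wins[m]}")
```

On these numbers REW and our method share the highest mean score, and each attains the top score on five of the eight datasets.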

| Dataset | APAP | AANAP | SPHP | TFT | REW | SPW | Ours |
|---|---|---|---|---|---|---|---|
| temple | 8.8 | 27.6 | 20.5 | 2.8 | 1.1 | 4.5 | 3.5 |
| rail-tracks | 50.4 | 161.6 | 91.0 | 36.1 | 35.4 | 260.5 | 29.5 |
| garden | 57.9 | 148.0 | 72.3 | 41.1 | 20.6 | 64.4 | 32.9 |
| building | 14.3 | 47.0 | 19.3 | 53.4 | 2.8 | 10.4 | 6.6 |
| school | 8.6 | 37.6 | 3.5 | 6.8 | 4.4 | 9.8 | 10.8 |
| wall | 16.4 | 81.0 | 12.1 | 37.5 | 13.6 | 15.7 | 37.2 |
| park-square | 51.3 | 194.4 | 91.5 | 53.8 | 13.5 | 149.4 | 30.2 |
| cabinet | 6.3 | 23.8 | 4.2 | 1.6 | 0.8 | 3.8 | 3.7 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).