Submitted:

22 April 2024

Posted:

22 April 2024

Abstract

Keywords:

1. Introduction

Aims and Contribution

2. Background

2.1. Challenges in Detecting AI-Generated Text

2.2. LLM Hallucination Is Not an Insurmountable Problem

2.3. Reevaluating the Critiques of the Reasoning Capabilities of LLMs

2.4. Summary of Literature and Identification of Research Gaps

3. Multimodal LLM Self-Reflective Strategy

1. Initial Response Evaluation: The LLM initially responds to a multimodal question, providing a baseline for its capability in interpreting, analysing and integrating all the provided modalities.

2. Conceptual Self-Reflection: The LLM is then prompted to assess its own understanding of the key concepts within the textual content of the exam question. It is invited to describe and explain in greater detail its knowledge and understanding of a key concept, in isolation from information contained in other modalities.

3. Visual Self-Reflection: The LLM is prompted to focus its perception and understanding on key visual cues and elements within an image and to reflect on this, again in isolation from information contained in other modalities.

4. Synthesis of Self-Reflection: The LLM is then prompted to reevaluate and revise its initial response from the first step in light of its responses arising from the self-reflective prompting.

5. Final Response Generation: The LLM responds with a final answer that uses high-order reasoning to integrate and potentially correct the initial response with a revised answer, aiming for greater accuracy, completeness and depth of understanding.
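The five steps above can be sketched as a short prompting loop. This is a minimal illustration rather than the procedure used in the study: the `ask` callable, its `(prompt, image, history)` signature, the `ReflectionTrace` container and the default synthesis prompt are all hypothetical names introduced here, and withholding the image during the conceptual step is only one possible reading of "in isolation from other modalities".

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical model interface (an assumption, not a real library API):
# takes a prompt, an optional image path and the prior turns, returns reply text.
AskFn = Callable[[str, Optional[str], List[str]], str]


@dataclass
class ReflectionTrace:
    """Responses collected at each stage of the self-reflective strategy."""
    initial: str
    concept_reflection: str
    visual_reflection: str
    final: str


def self_reflective_answer(
    ask: AskFn,
    question: str,
    image: str,
    concept_prompt: str,
    visual_prompt: str,
    synthesis_prompt: str = "Based on your responses above, revise and improve your original answer.",
) -> ReflectionTrace:
    history: List[str] = []

    # Step 1: baseline answer using both the text and the image.
    initial = ask(question, image, history)
    history += [question, initial]

    # Step 2: conceptual self-reflection; the image is withheld here (assumption).
    concept = ask(concept_prompt, None, history)
    history += [concept_prompt, concept]

    # Step 3: visual self-reflection focused on the image.
    visual = ask(visual_prompt, image, history)
    history += [visual_prompt, visual]

    # Steps 4 and 5: the synthesis prompt and the final revised response.
    final = ask(synthesis_prompt, image, history)

    return ReflectionTrace(initial, concept, visual, final)
```

Keeping every intermediate response in the returned trace makes it possible to mark each stage separately, as the case-study tables do with their CORRECT and INCORRECT labels.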

4. Materials and Methods

4.1. Evaluation of the Multimodal Self-Reflection Strategy

4.2. Comprehensive Multimodal Question Assessment

4.3. Proficiency Evaluation Process

5. Results

5.1. Case Study - Finance

5.2. Case Study - Computer Science

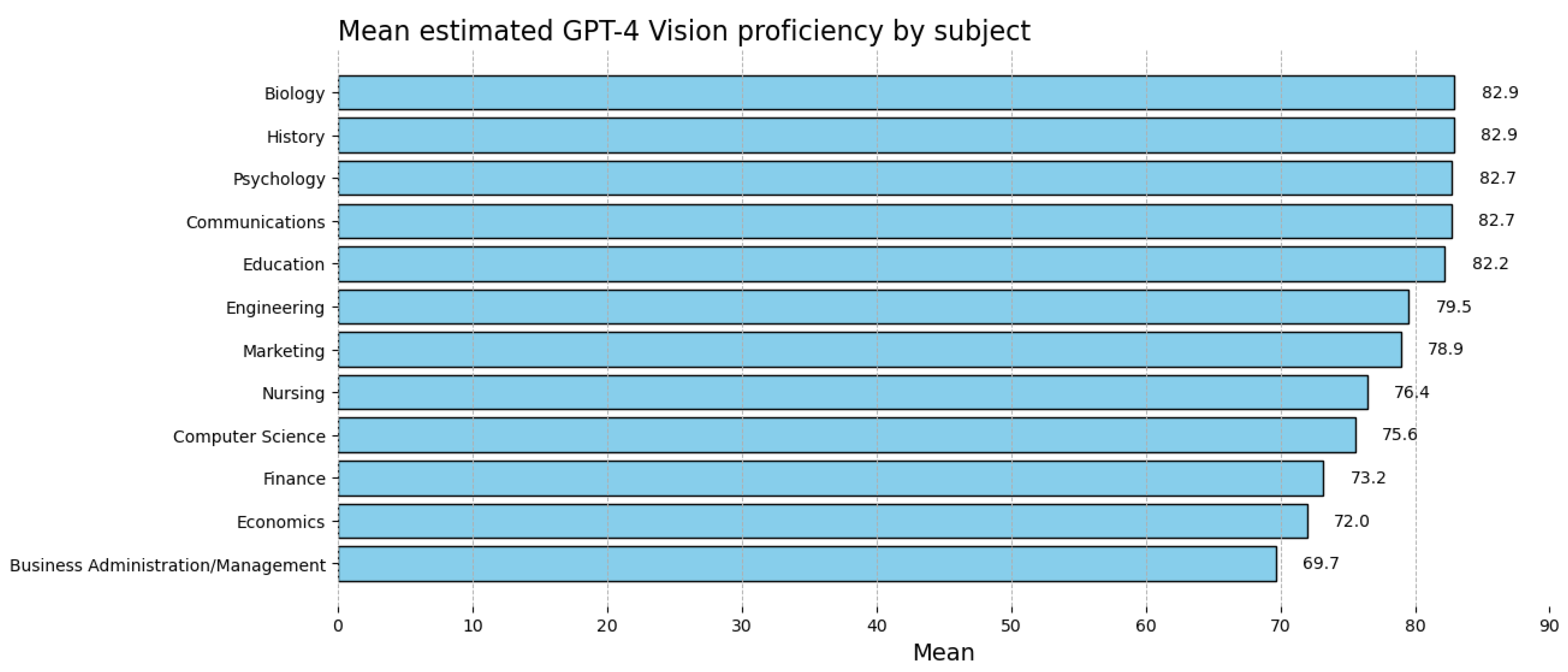

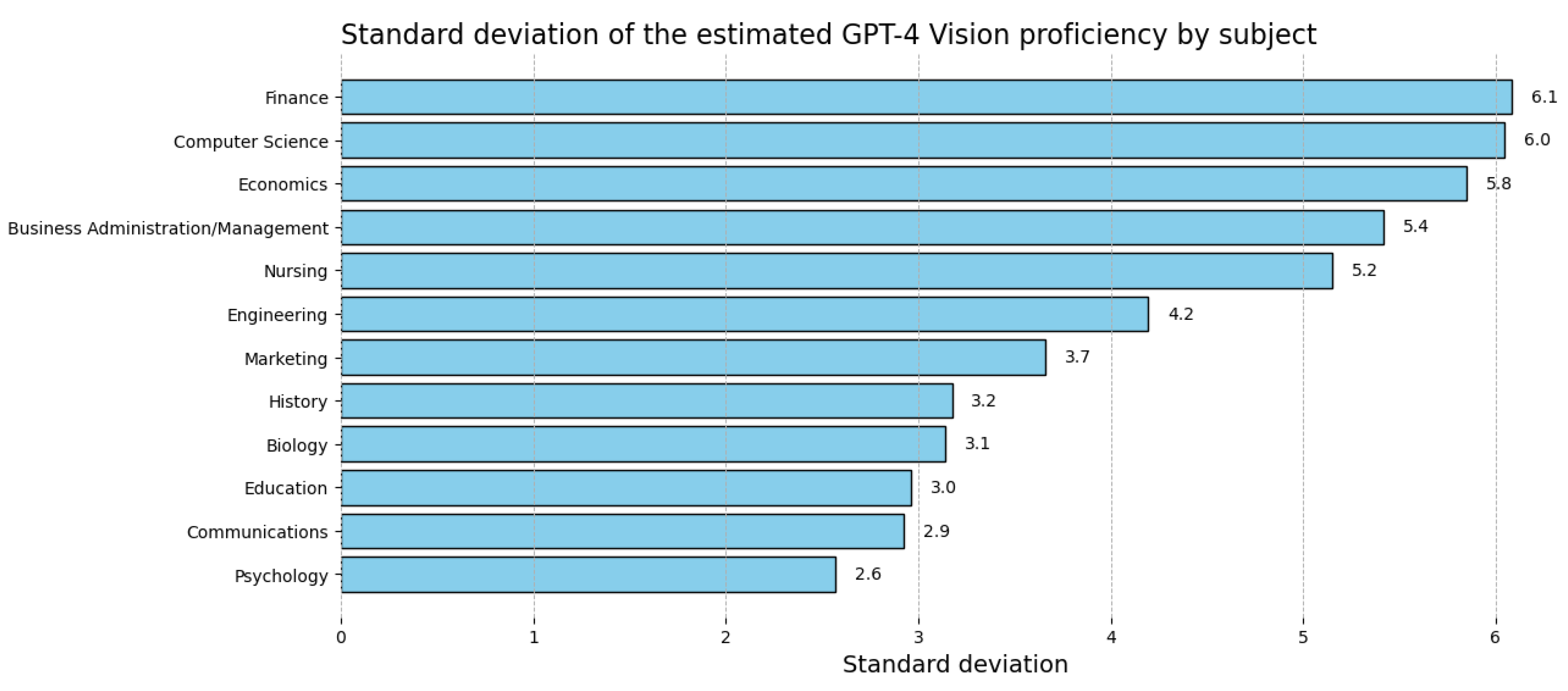

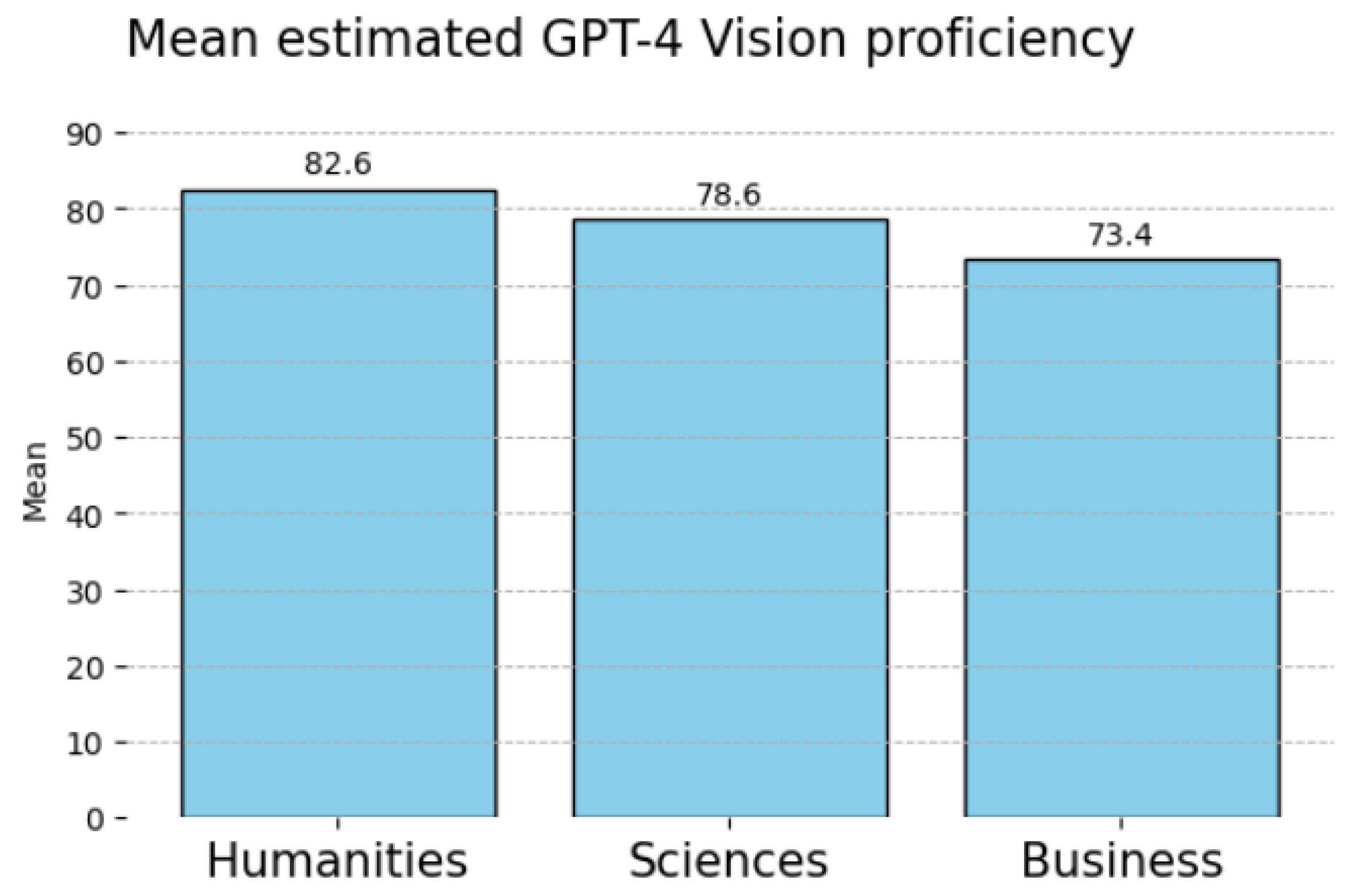

5.3. GPT-4 Multimodal Capability Estimations by Subject Area

6. Discussion

Recommendations

1. Proctored online exams: There is no substitute for effective proctoring, so all online exams should be proctored as extensively as possible. Proctoring technologies that include real-time monitoring have their limitations, but they can deter the misuse of LLMs and other digital aids. Given existing and improving multimodal LLM capabilities, unproctored exams can no longer be regarded as valid.

2. Reinstatement of viva-voce exams: The reintroduction of viva-voce examinations, conducted online, can complement a suite of other assessments. Although viva-voce exams also possess limitations (as do all assessment types), they offer a dynamic, generally reliable, and direct assessment method for measuring student knowledge and reasoning skills. These exams are akin to professional interviews commonly used in industry, thus making them relevant for preparing students for real-world contexts.

3. Enhanced multimodal exam strategies: If proctoring of online exams is not feasible, multimodal exams should be designed to maximise the cognitive load and processing complexity, making it harder for LLMs to provide reliable cheating assistance:

   - Include multiple images alongside text per question to invoke a higher degree of reasoning across the modalities. This approach will increase the complexity of the questions and require a deeper level of reasoning and synthesis, which current LLMs may struggle to manage effectively.

   - Design questions that necessitate the formulation of long-term strategies, forecasts, and projections. These types of questions require not only a higher level of conceptual understanding and a grasp of causal relationships, but also the ability to project future trends and consequences, which this research shows is a challenge to the predictive capabilities of LLMs.

   - Integrate real-world scenarios that are current and relevant. Questions that reflect very recent developments or ongoing complex real-world problems require up-to-date knowledge, making it difficult for LLMs to reason and generate accurate responses based solely on pre-existing data that is limited for certain topics.

   - Consider incorporating additional modalities into the questions, such as video-based and/or audio-based questions alongside images and text, thus fully exploiting the current limitations of LLMs in processing or incorporating them all simultaneously.

   - Explore formulating multimodal questions that require students to annotate the provided figure(s) or draw an additional figure as part of their answer, which would again exploit some of the current LLM limitations.

   - As much as possible, consider ways of linking multimodal exam questions with prior assessments completed by students during a teaching semester, and with other course materials, which would make it harder for an LLM to produce a correct response.

   - Consider incorporating some decoy questions specifically designed to detect LLM assistance. These questions could be subtly designed to prompt LLMs into revealing their non-human reasoning patterns through specific traps that exploit known LLM weaknesses, such as generating responses based on unlikely combinations of concepts or unusual context switches that a human would likely not make. This would not, however, be straightforward to implement, since different multimodal LLMs are likely to respond differently to the decoy questions.

Limitations and Future Work

7. Conclusions

Acknowledgments

| 1 | The author of this paper was one of the evaluators since the Computer Science question was devised by the author and was originally used in an actual exam to evaluate students. |

| 2 |

| Question Type (Subject) | Question and Competence Self-evaluation | Proficiency Score |

|---|---|---|

| (Nursing) Surgical Outcomes Dashboard | Question: Analyze a dashboard displaying surgical outcomes, including success rates, complication rates, and patient satisfaction scores. Discuss how this data informs surgical quality improvement. Self-Analysis: GPT-4 could struggle with evaluating surgical outcomes data, particularly in understanding how success rates, complication rates, and patient satisfaction scores inform surgical quality improvement efforts. | 70 |

| (Education) Educational Technology Tools Comparison Table | Question: Evaluate a table comparing various educational technology tools based on functionality, usability, and cost. Discuss how these tools can be effectively integrated into the classroom. Self-Analysis: While GPT-4 can compare educational technology tools, fully grasping how these tools integrate into classroom settings and enhance learning requires knowledge of pedagogical practices and technology effectiveness in education. | 83 |

| (Engineering) Automotive Aerodynamics Simulation Results | Question: Evaluate the results of computational fluid dynamics simulations for car models. Discuss how aerodynamics influences vehicle design and fuel efficiency. Self-Analysis: GPT-4 can evaluate aerodynamics simulation results but may have difficulty in fully understanding the implications for vehicle design and fuel efficiency, which require integration of fluid dynamics principles and automotive engineering practices. | 80 |

| Step | User Prompt | GPT-4 Vision Response |

|---|---|---|

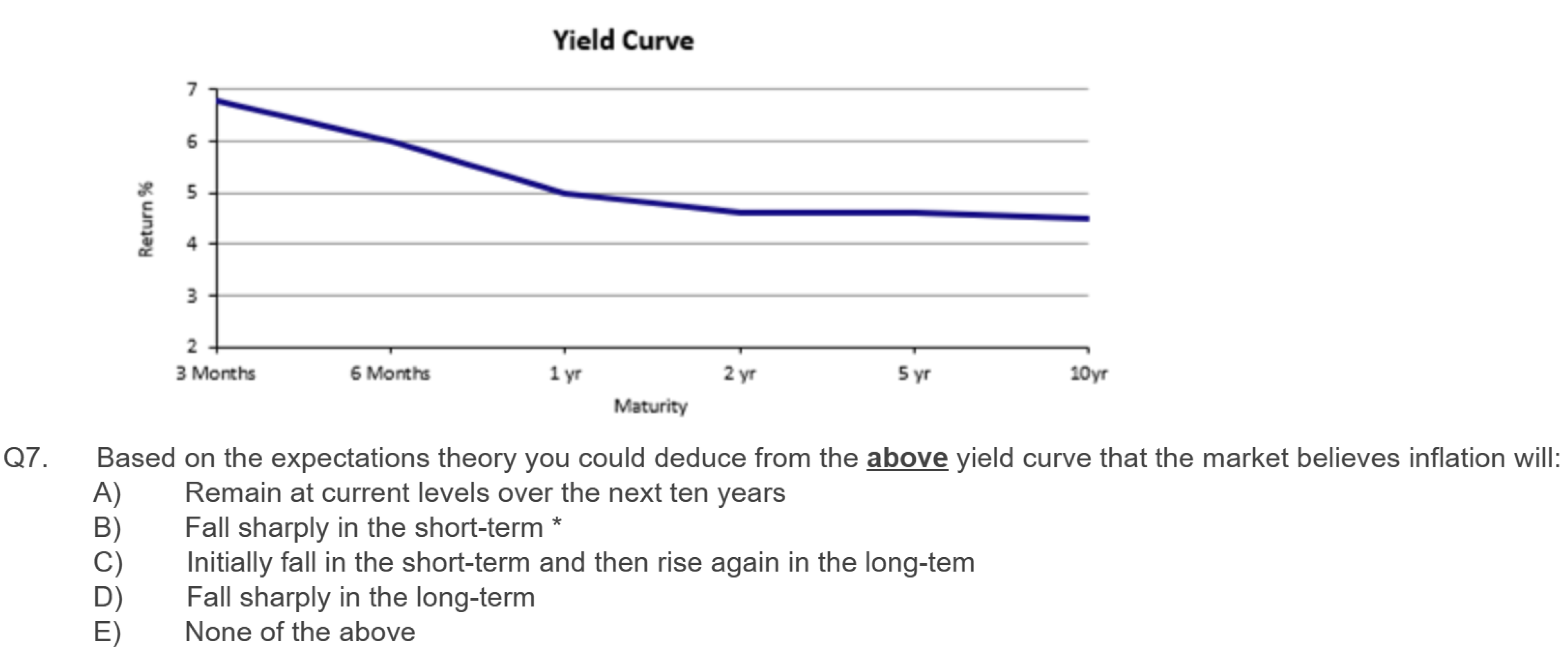

| (1) Initial response requiring concept and image reasoning | “Answer the following Finance exam question based on the supplied image. What trend will the market expect that the inflation will follow?” | “The market expectation inferred from the yield curve in the image is that in the short term, inflation may rise or remain relatively high, prompting central banks to maintain or raise interest rates to control inflationary pressures. Conversely, in the long term, the expectation of lower yields suggests that the market anticipates a reduction in inflation, potentially due to economic slowdown or effective monetary policy measures leading to lower interest rates.” INCORRECT ANSWER |

| (2) Self-reflection focusing on concept reasoning | “Expand and describe the expectations theory in the context of yield curves and how they affect short-term and long-term inflation.” | “The expectations theory holds that the yield curve affects inflation expectations in both the short and long term, although the mechanisms and strengths of these effects may differ. Short-term rates are closely tied to the central bank’s policy rate, which is influenced by the central bank’s inflation target. If the market believes that inflation will rise above the central bank’s target, it expects the central bank to increase the policy rate to combat inflation, thus increasing short-term yields. Consequently, a steep yield curve can signal rising short-term inflation expectations. The long end of the curve reflects the market’s expectations for inflation over a longer horizon. Suppose long-term rates are low relative to short-term rates, creating an inverted curve. In that case, it can indicate that the market expects the central bank to eventually reduce policy rates in response to lower inflation or even deflationary pressures. This scenario would indicate a forecast of stable to declining long-term inflation.” CORRECT |

| (3) Self-reflection focusing on the image reasoning | “Based on the yield curve in the image, at what point in time is the change in yield most acute?” | “The change in yield is most acute at the shorter end of the spectrum, particularly between the 3-month and 2-year maturities. This portion of the curve displays a steeper slope compared to the more gradual slope between the 2-year and 10-year maturities.” CORRECT |

| (4) Revised response post-self-reflection | “Based on your observations in the response and reasoning above, revise and improve your answer to the above question about the effect of yield curves on short-term and long-term inflation.” | “Based on the steepness of the yield curve between the 3-month and 2-year maturities, the market appears to expect a relatively sharp decline in short-term inflation expectations. The leveling off of the yield curve beyond the 2-year mark suggests that the market anticipates more stable, modest changes to long-term inflation expectations.” CORRECT ANSWER |

| Step | User Prompt | GPT-4 Vision Response |

|---|---|---|

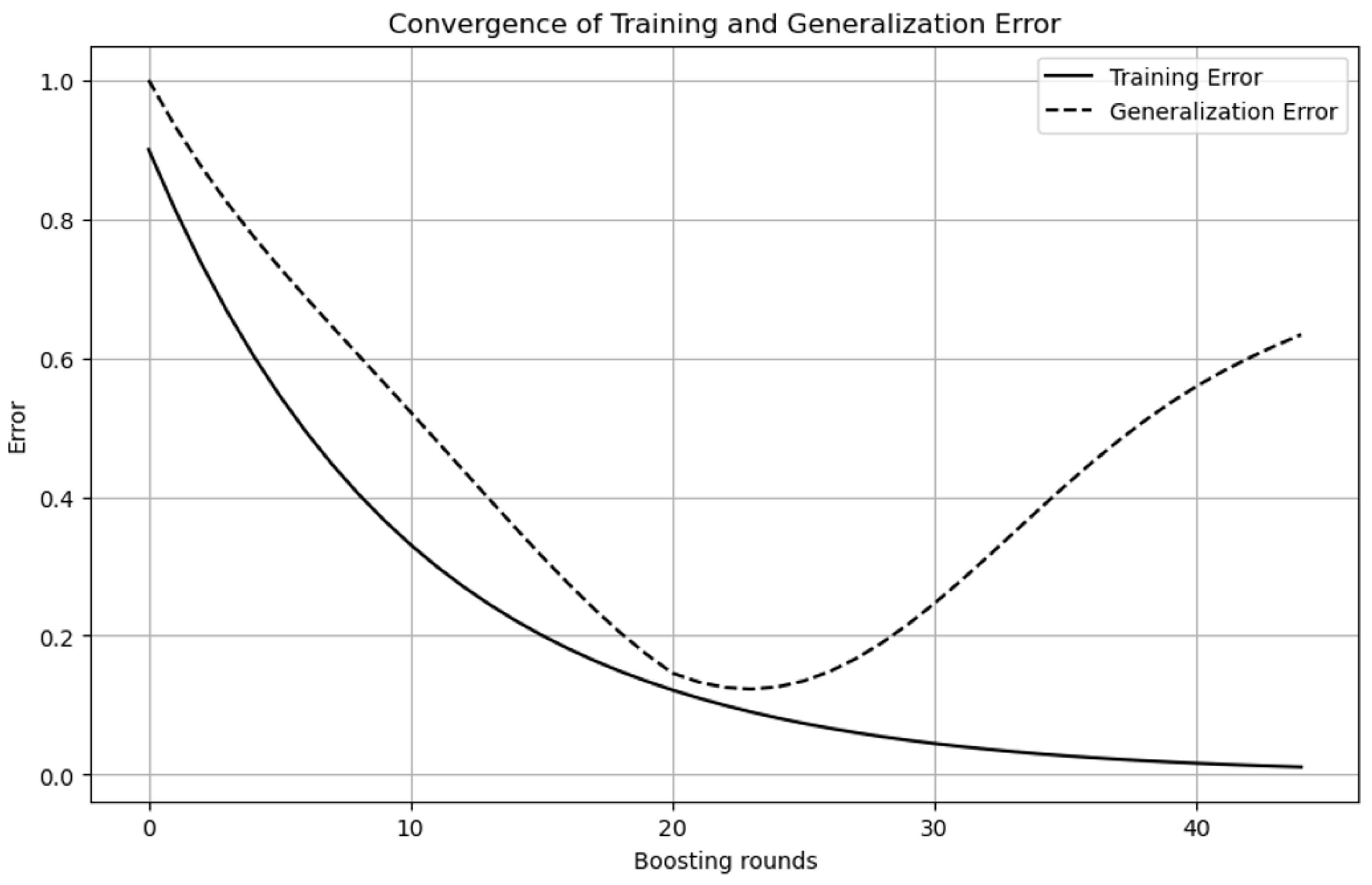

| (1) Initial response requiring concept and image reasoning | “Examine the figure below, which illustrates the convergence behaviour of training and generalization error for a hypothetical machine learning classifier trained by AdaBoost under typical conditions. Discuss the plausibility of observing these kinds of patterns with an algorithm like AdaBoost.” | “The figure presented demonstrates a typical convergence pattern for AdaBoost, a popular ensemble learning algorithm...The convergence behavior depicted in the graph is thus a plausible outcome when using AdaBoost without preventive measures against overfitting.” INCORRECT ANSWER |

| (2) Self-reflection focusing on concept reasoning | “Tell me about the typical effects on generalization by an algorithm like AdaBoost and, under normal circumstances how much it is likely to compromise generalizability by overfitting. ” | “Under standard conditions, the algorithm’s strength lies in its adaptive nature, concentrating on instances that were previously misclassified and thus, when properly tuned, it can be less prone to overfitting compared to non-ensemble methods. However, overfitting with AdaBoost can occur in the presence of noise and outliers in the training data...In a controlled experimental setting, it is often possible to achieve generalization error rates that are competitive with or even superior to other algorithms, provided that AdaBoost’s parameters are optimized through techniques like cross-validation. However, in the presence of highly noisy data or when the assumptions underlying the algorithm are violated, the deterioration in generalization could be considerable. Therefore, while AdaBoost has the potential for robust generalization, it also has a non-negligible risk of compromising generalizability due to overfitting, especially when the model complexity is not adequately controlled.” CORRECT |

| (3) Self-reflection focusing on the image reasoning | “Examine the figure carefully noting the value of the error when the overfitting you mentioned begins to take place and note carefully the point it has reached.” | “The graph indicates that overfitting begins to occur just after 10 boosting rounds, where the generalization error reaches its nadir and starts to increase. At this juncture, the training error continues to decrease, demonstrating the model’s increasing fit to the training data. By the 40th boosting round, the generalization error has increased significantly, surpassing an error rate of 0.4, which is a marked rise from its lowest point. This reflects a substantial degradation in the model’s predictive accuracy on unseen data, signaling a pronounced overfitting as the model is overly optimized for the training dataset.” CORRECT |

| (4) Revised response post-self-reflection | “Now, consider your response above about AdaBoost’s effects on generalisability and combine your response with the detailed observations above regarding when overfitting started and the significant degree to which it increased. Based on these responses, revise your answer to the original exam question about the plausibility of observing these trends with AdaBoost under standard conditions where the data is not corrupted and everything else is typical.” | “Upon integrating the detailed observations with the known effects of AdaBoost on generalizability, it is pertinent to re-evaluate the plausibility of the presented trends under typical, controlled conditions. When the dataset is clean and representative of the underlying distribution, AdaBoost, by incrementally correcting errors of the weak learners, should ideally show a decrease in both training and generalization errors. The convergence pattern would typically demonstrate a plateauing of the generalization error as the number of boosting rounds increases, indicating that the model has learned the general trends in the data without being unduly influenced by noise... Therefore, in a standard scenario with typical data quality and distribution, the observed pattern of the generalization error increasing sharply after a certain point would not be considered typical for AdaBoost...In conclusion, the graph’s indication of rapid overfitting after a relatively low number of boosting rounds is implausible in standard conditions with AdaBoost, where data is clean and well-prepared.” CORRECT ANSWER |
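The image-reflection step in the table above asks where overfitting begins and how far the generalization error has risen since. Given per-round error values, that reading amounts to locating the minimum of the generalization-error curve and measuring the subsequent rise. The sketch below illustrates this; the function names are hypothetical, and the curve is an invented illustration shaped to resemble the description in the table (a minimum near round 10, exceeding 0.4 by round 40), not data read from the figure.

```python
from typing import List, Tuple


def overfitting_onset(gen_error: List[float]) -> Tuple[int, float]:
    """Return (boosting round, error) at the minimum of the generalization error.

    Rounds are 1-indexed, so gen_error[0] is the error after round 1.
    """
    best = min(range(len(gen_error)), key=gen_error.__getitem__)
    return best + 1, gen_error[best]


def rise_after_onset(gen_error: List[float]) -> float:
    """Return how much the error at the final round exceeds its lowest value."""
    _, lowest = overfitting_onset(gen_error)
    return gen_error[-1] - lowest


# Illustrative curve only (an assumption): falls until round 10, then climbs steadily.
illustrative_curve = [0.15 + 0.01 * abs(r - 10) for r in range(1, 41)]

onset_round, onset_error = overfitting_onset(illustrative_curve)
total_rise = rise_after_onset(illustrative_curve)
```

A large rise after an early minimum is the pattern the revised answer judges implausible for AdaBoost on clean data, so a check of this kind could help a marker verify the model's reading of the figure.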

| Subject | GPT-4 Vision’s performance characteristics |

|---|---|

| Business Administration/ Management | Excels in extracting and summarizing business-related information from visual data, recognizing patterns and trends. Exhibits challenges in grasping the full scope of socio-economic contexts and generating deeper strategic insights. |

| Finance | Displays adeptness at quantitative financial analysis and understanding fundamental financial concepts. It encounters challenges with complex financial theories and strategic real-world applications. |

| Marketing | Excels in interpreting marketing data and grasping core concepts, aiding in trend identification. It struggles with deciphering more complex strategic implications and nuances of consumer psychology. |

| Economics | Identifies trends from economic data and understands foundational principles, but faces limitations in deeper theoretical analyses and predictive economic impacts. |

| Computer Science | Interprets technical diagrams and data trends in computer science contexts effectively but struggles with more sophisticated system dynamics and predictive analysis. |

| Nursing | Adeptly interprets data and fundamental nursing concepts but faces challenges in more demanding clinical reasoning and holistic healthcare strategy development. |

| Engineering | Excels in parsing engineering data and explaining technical concepts but encounters difficulties with contextual analyses and predictive evaluations. |

| Biology | Shows proficiency in interpreting biological data and explaining processes but struggles with understanding more complex concepts and performing predictive analysis. |

| Education | Parses educational data and links theories to practice well but struggles with the complexities of educational systems and multidisciplinary integration. |

| Psychology | Effectively interprets psychological data but struggles with more demanding constructs and forward-looking analyses that require a deeper understanding. |

| History | Processes historical data and concepts well but struggles with analyzing more complex relationships and conducting critical evaluations. |

| Communications | Analyzes communication trends and strategies effectively but lacks depth in grasping socio-cultural impacts and strategic ethical considerations. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).