Submitted: 18 April 2024

Posted: 19 April 2024

Abstract

Keywords:

1. Introduction

- Step 1.

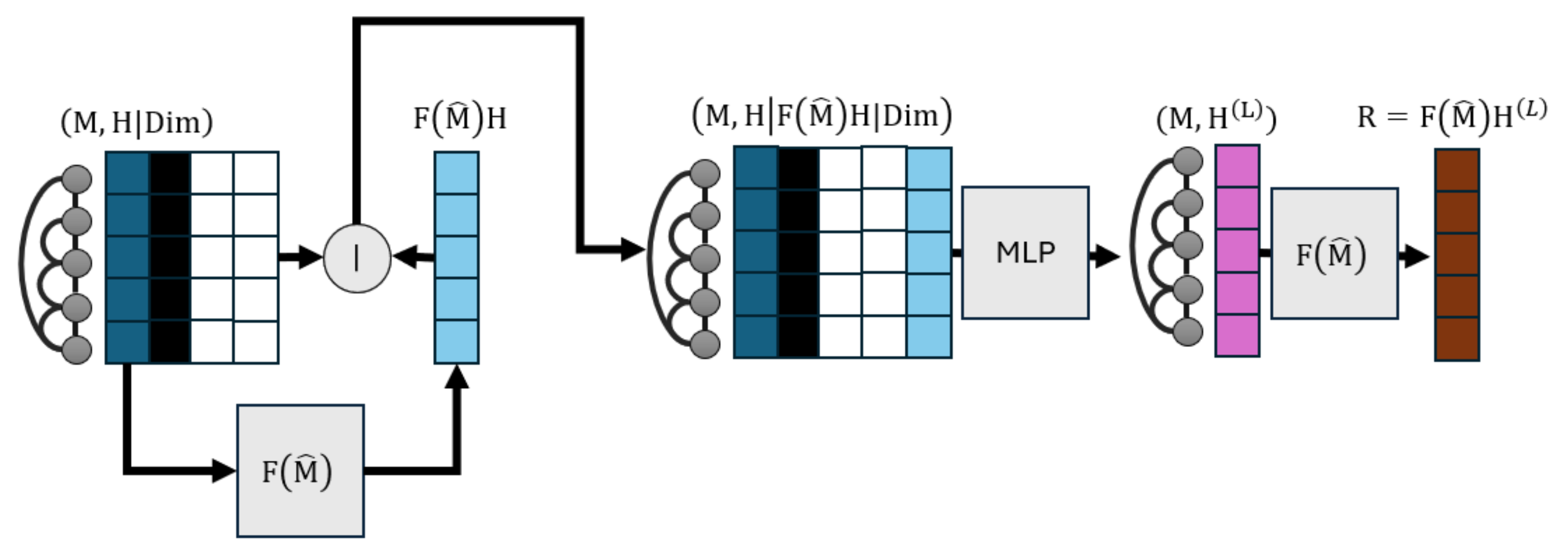

- We analyse positive definite graph filters, which are graph signal processing constructs that diffuse node representations, like embeddings of node features, through links. Graph filters are already responsible for the homophilous propagation of predictions in predict-then-propagate GNNs (Section 2.2) and we examine their ability to track optimization trajectories of their posterior outputs when appropriate modifications are made to inputs. In this case, both inputs and outputs are one-dimensional.

- Step 2.

- By injecting the prior modification process mentioned above in graph filtering pipelines, we end up creating a GNN architecture that can minimally edit input node representations to locally minimize loss functions given some mild conditions.

- Step 3.

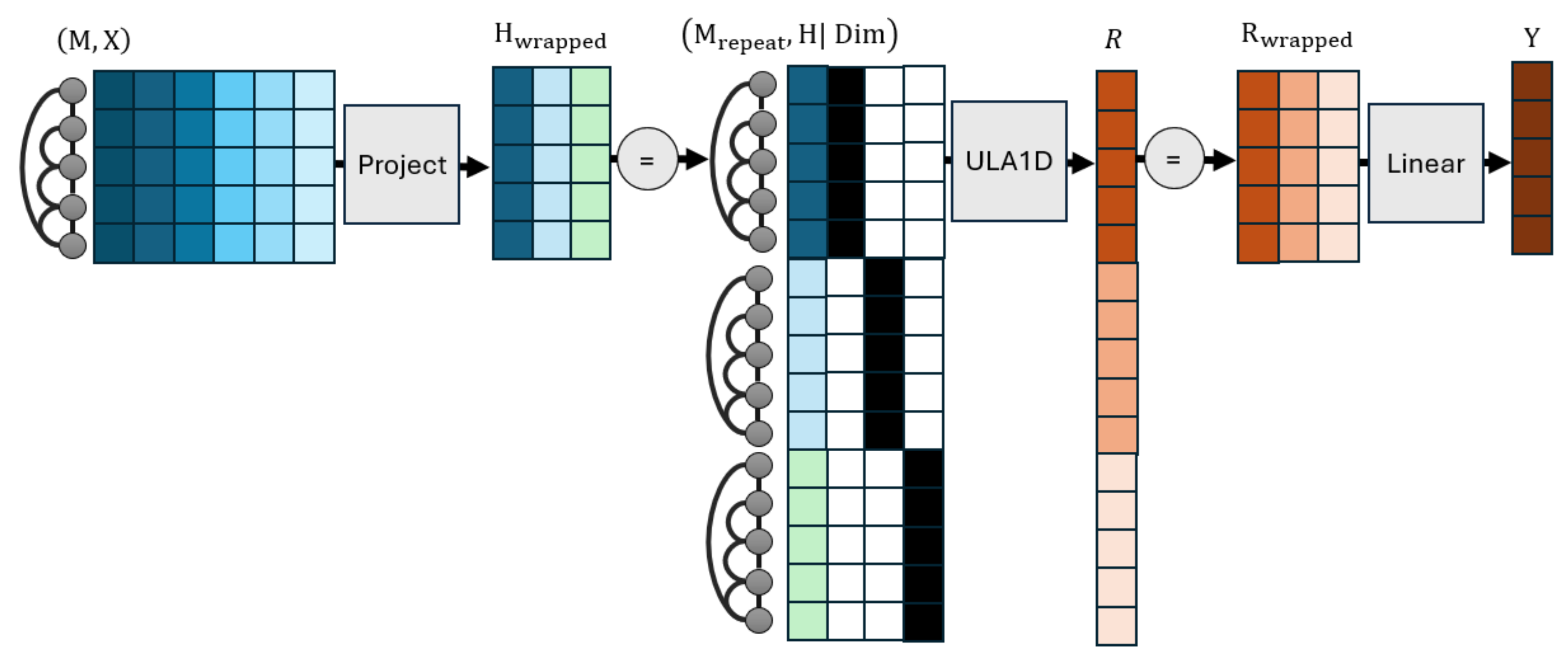

- We generalize our analysis to multidimensional node representations/features by folding the latter to one-dimensional counterparts while keeping track of which elements correspond to which dimension indexes, and transform inputs and outputs through linear relations to account for a different dimensions between them.

- a.

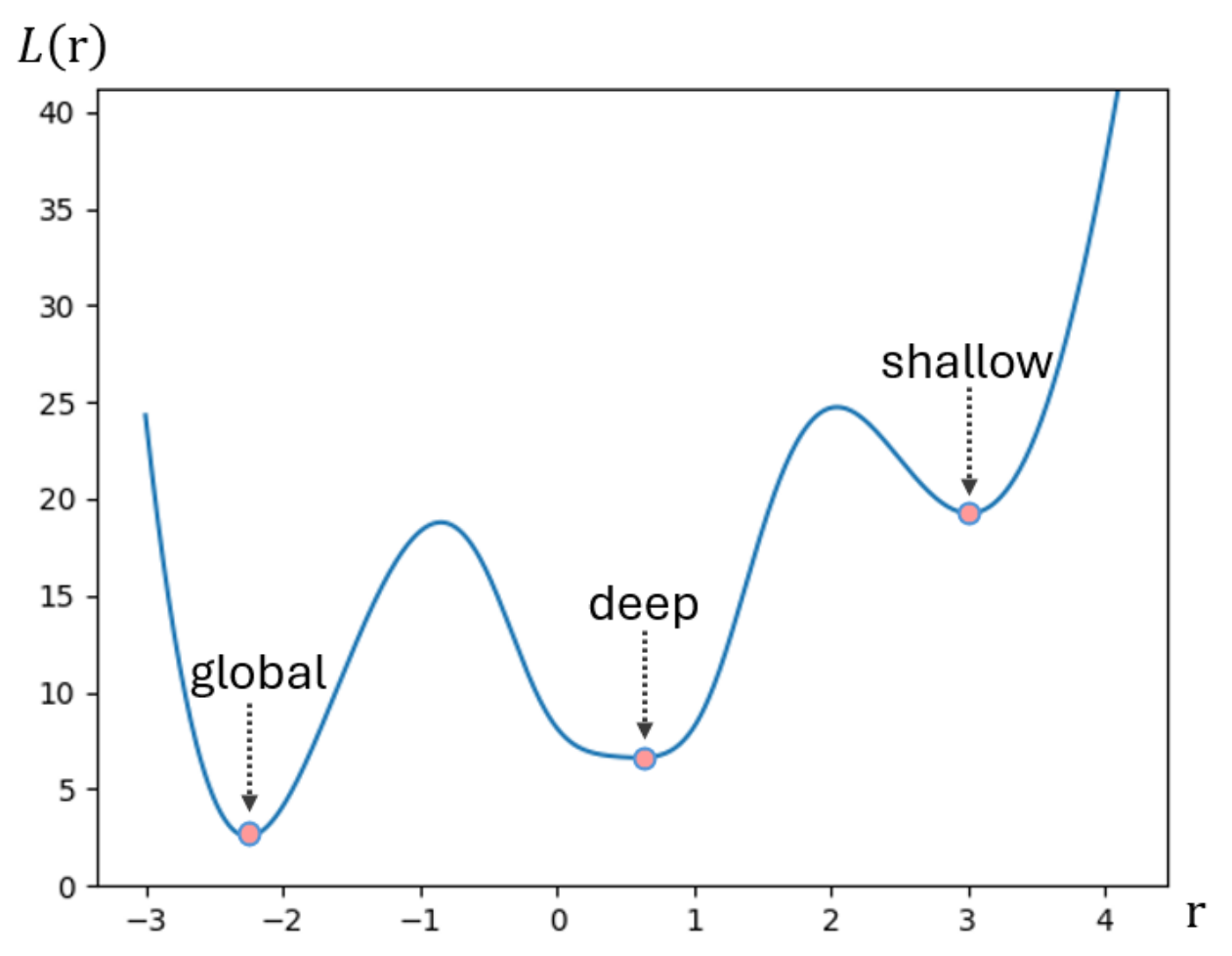

- We introduce the concept of local attraction as a means of producing architectures whose training can reach deep local minima of many loss functions.

- b.

- We develop ULA as a first example of an architecture that satisfies local attraction given the mild conditions summarized in Section 6.3.

- c.

- We experimentally corroborate the ability of ULA to find deep minima on training losses, as indicated by improvements compared to performant GNNs in new tasks.

2. Background

2.1. Graph Filters

2.2. Graph Neural Networks

2.3. Universal Approximation of AGFs

2.4. Symbols

| Symbol | Interpretation |
|---|---|
| | The set of all AGFs. |
| | A GNN architecture function with parameters . |
| | An attributed graph with adjacency M and features . |
| | The node feature matrix predicted by on attributed graph . |
| M | A graph adjacency matrix. |
| | A finite or infinite set of attributed graphs. |
| | Gradients of a loss computed at |
| | A normalized version of a graph adjacency matrix. |
| | A graph filter of a normalized graph adjacency matrix. It is also a matrix. |
| X | A node feature matrix. |
| Y | A node prediction matrix of a GNN. |
| R | A node output matrix of ULA1D. |
| H | A node representation matrix, often procured as a transformation of node features. |
| | A loss function defined over AGFs A. |
| | A loss function defined over attributed graphs for node prediction matrix Y. |
| | Node representation matrix at layer ℓ of some architecture. Nodes are rows. |
| | Learnable dense transformation weights at layer ℓ of a neural architecture. |
| | Learnable biases at layer ℓ of a neural architecture. |
| | Activation function at layer ℓ of a neural architecture. |
| | The number of columns of matrix . |
| ⌈x⌉ | The integer ceiling of real number x. |
| | The number of set elements. |
| | The set of a graph’s nodes. |
| | The set of a graph’s edges. |
| | A domain in which some optimization takes place. |
| | Parameters leading to local optimum. |
| | A connected neighborhood of . |
| | A graph signal prior from which optimization starts. |
| | A locally optimal posterior graph signal of some loss. |
| | The L2 norm. |
| | The maximum element. |
| | Horizontal matrix concatenation. |
| | Vertical matrix concatenation. |
| | Multilayer perceptron with trainable parameters θ. |

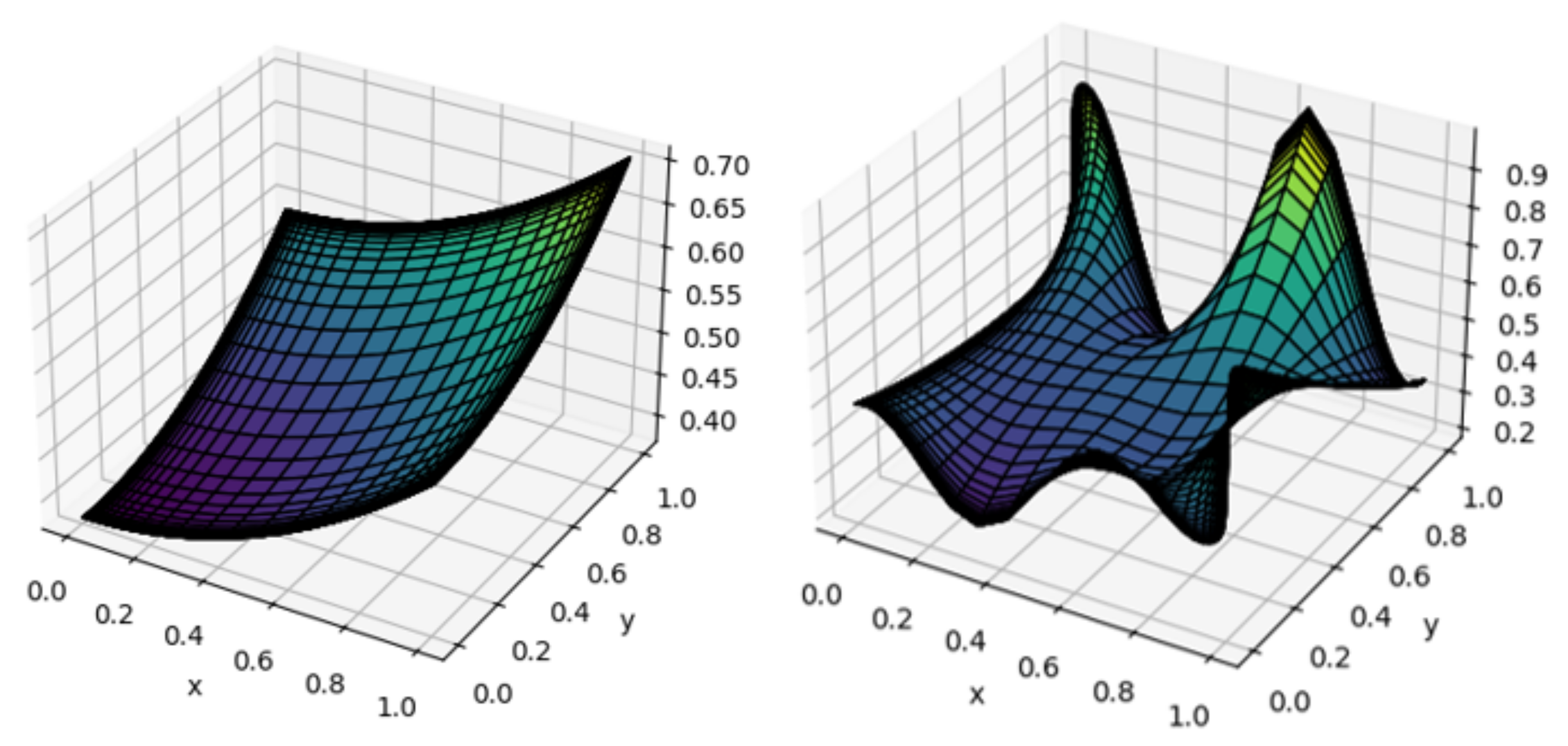

3. One-Dimensional Local Attraction

3.1. Problem Statement

3.2. Local Attractors for One-Dimensional Node Features in One Graph

3.3. Attraction across Multiple Graphs

4. Universal Local Attractor

4.1. Multidimensional Universal Attraction

4.2. Different Node Feature and Prediction Dimensions

4.3. Implementation Details

- a.

- The output of ULA1D should be divided by 2 when the parameter initialization strategy of relu-activated layers is applied everywhere. This is needed to preserve input-output variance, given that there is an additional linear layer on top. This is not a hyperparameter. Contrary to the partial robustness to different variances exhibited by typical neural networks, having different variances between the input and output may mean that the original output starts from “bad” local minima, from which it may even fail to escape. In the implementation we experiment with later, failing to perform this division makes training get stuck near the architecture’s initialization.

- b.

- Dropout can only be applied before the output’s linear transformation. We recognize that dropout is a necessary requirement for architectures to generalize well, but it cannot be applied on input features due to the sparsity of the representation, and it cannot be applied on the transformation due to breaking any (approximate) invertibility that is being learned.

- c.

- Address the issue of dying neurons for ULA1D. Due to the few feature dimensions of this architecture, it is likely for all activations to become zero and make the architecture stop learning from certain samples from the beginning of training with randomly initialized parameters. In this work, we address this issue by employing leaky relu as the activation function in place of . This change does not violate universal approximation results that we enlist. Per Theorem 3, activation functions should remain Lipschitz and have Lipschitz derivatives almost everywhere; both properties are satisfied by .

- d.

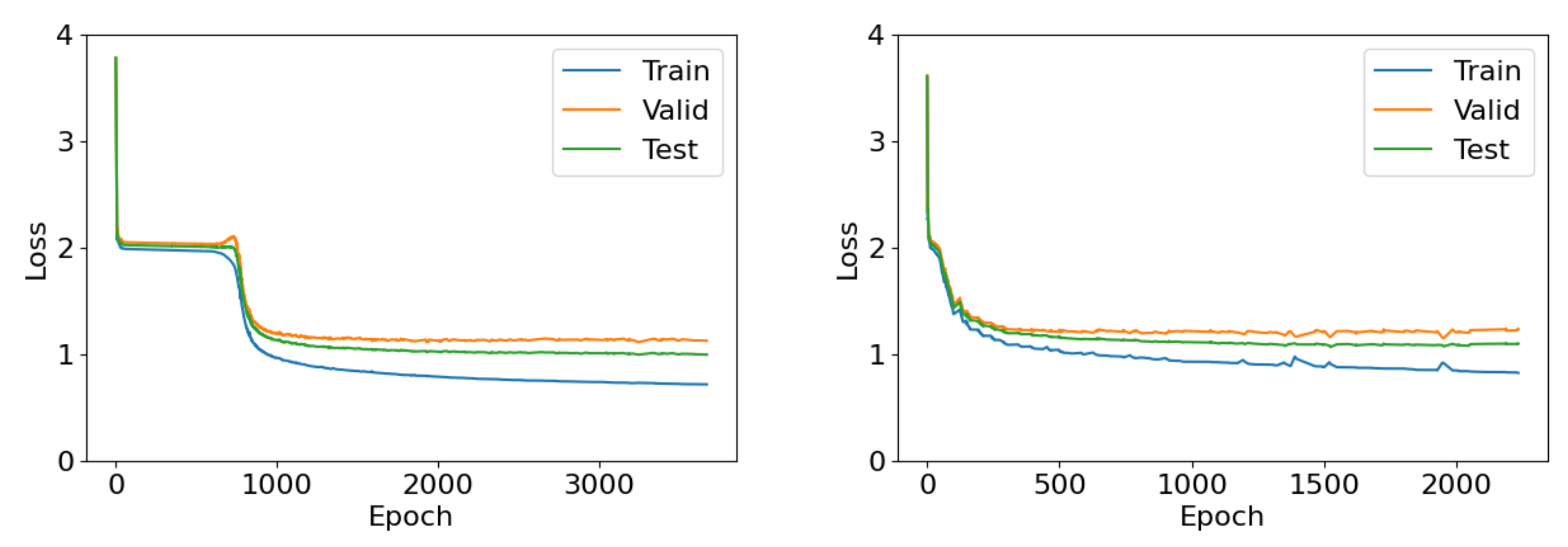

- Adopt a late stopping training strategy that repeats training epochs until both train and validation losses do not decrease for a number of epochs. This strategy lets training overcome saddle points where ULA creates a small derivative. For a more thorough discussion of this phenomenon refer to Section 6.2.

- e.

- Retain linear input and output transformations, and do not apply feature dropout. We previously mentioned the necessity of the first stipulation, but the second is also needed to maintain Lipschitz loss derivatives for the internal ULA1D architecture.
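The late stopping rule of item d can be illustrated with a minimal sketch. It assumes that an improvement in either the training or the validation loss resets the patience counter, so training halts only once neither loss has decreased for `patience` consecutive epochs. The function name, the `patience` parameter, and the precomputed loss lists are hypothetical stand-ins for a real training loop.

```python
def late_stopping_epoch(train_losses, valid_losses, patience):
    """Return the 1-based epoch at which late stopping halts training.

    Hypothetical helper: losses are given as precomputed per-epoch lists
    instead of being produced by a real training loop.
    """
    best_train = float("inf")
    best_valid = float("inf")
    stale = 0  # consecutive epochs in which neither loss improved
    epoch = 0
    for epoch, (train, valid) in enumerate(zip(train_losses, valid_losses), start=1):
        improved = False
        if train < best_train:
            best_train, improved = train, True
        if valid < best_valid:
            best_valid, improved = valid, True
        # Any improvement (train or validation) resets the patience counter.
        stale = 0 if improved else stale + 1
        if stale >= patience:
            break
    return epoch


# Validation loss stops improving after the first epoch, but the training
# loss keeps decreasing for a while, which postpones the stop.
train = [1.0, 0.9, 0.9, 0.8, 0.8, 0.8, 0.8]
valid = [1.0, 1.1, 1.2, 1.3, 1.3, 1.3, 1.3]
stop = late_stopping_epoch(train, valid, patience=2)
```

In this toy run, a criterion that watches only the validation loss would stop within the first few epochs, whereas the rule above keeps training for as long as the training loss still finds lower values.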

4.4. Running Time and Memory

5. Experiments

5.1. Tasks

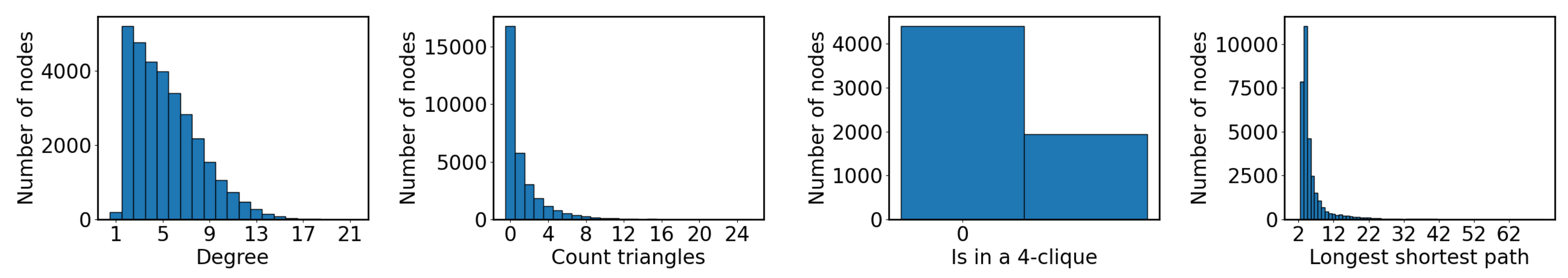

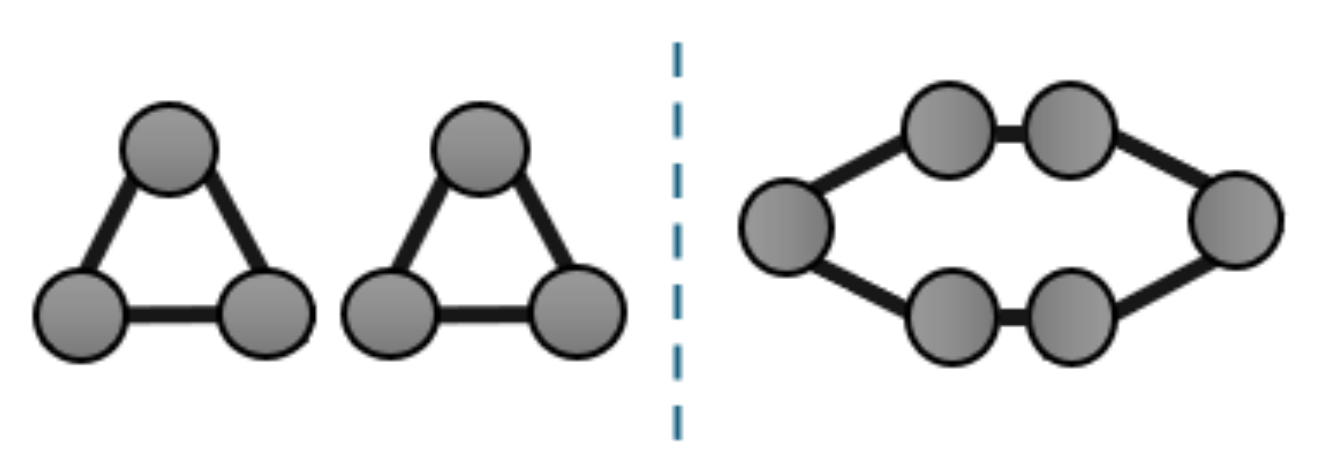

- Degree. Learning to count the number of neighbors of each node. Nodes are provided with a one-hot encoding of their identifiers within the graph, which is a non-equivariant input that architectures need to learn to transfer to equivariant objectives. We do not experiment with variations (e.g., other types of node encodings) that are promising for the improvement of predictive efficacy, as this is a basic go-to strategy and our goal in this work is not to find the best architectures but to assess local attractiveness. To simplify experiment setup, we converted this discretized task into a classification one, where node degrees are the known classes.

- Triangle. Learning to count the number of triangles. Similarly to before, nodes are provided with a one-hot encoding of their identifiers within the graph, and we convert this discretized task into a classification one, where the triangle counts observed in the training set are the known classes.

- 4Clique. Learning to identify whether each node belongs to a clique of at least four members. Similarly to before, nodes are provided with a one-hot encoding of their identifiers within the graph. Detecting instead of counting cliques yields a comparable number of nodes between the positive and negative outcome, which avoids complicating the assessment with the need to account for class imbalances. We further improve class balance with a uniformly random chance of linking nodes. Due to the high computational complexity of creating the training dataset, we restrict this task only to graphs with a uniformly random number of nodes.

- LongShort. Learning the length of the longest among shortest paths from each node to all others. Similarly to before, nodes are provided with a one-hot encoding of their identifiers within the graph, and we convert this discretized task into a classification one, where path lengths in training data are the known classes.

- Propagate. This task aims to relearn the classification of an APPNP architecture with randomly initialized weights and uniformly random (the same for all graphs) that is applied on nodes with 16 feature dimensions, uniformly random features in the range and 4 output classes. This task effectively assesses our ability to reproduce a graph algorithm. It is harder than the node classification settings below in that features are continuous.

- 0.9Propagate. This is the same as the previous task with fixed . Essentially, this is perfectly replicable by APPNP, which means that any failure of the latter to find deep minima for it should be attributed to the need for better optimization strategies.

- Diffuse. This is the same task as above, with the difference that the aim is to directly replicate output scores in the range for each of the 4 output dimensions (these are not soft-maximized).

- 0.9Diffuse. This is the same as the previous task with fixed . Similarly to 0.9Propagate, this task is in theory perfectly replicable by APPNP.

- Cora [31]. A graph that comprises nodes and edges. Its nodes exhibit feature dimensions and are classified into one of 7 classes.

- Citeseer [32]. A graph that comprises nodes and edges. Its nodes exhibit feature dimensions and are classified into one of 6 classes.

- Pubmed [33]. A graph that comprises nodes and edges. Its nodes exhibit 500 feature dimensions and are classified into one of 3 classes.
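To make the synthetic targets concrete, the following sketch computes per-node labels for the Degree, Triangle and LongShort tasks on a small undirected graph. It is an illustration rather than the dataset generation code used in the experiments: the adjacency-dictionary representation and the function names are hypothetical, triangles are assumed to be counted per node, and for LongShort only reachable nodes are considered.

```python
from collections import deque


def degree_labels(adj):
    """Degree task: number of neighbors of each node."""
    return {node: len(neighbors) for node, neighbors in adj.items()}


def triangle_labels(adj):
    """Triangle task (assumed per node): pairs of neighbors that are linked."""
    counts = {}
    for node, neighbors in adj.items():
        ordered = sorted(neighbors)
        counts[node] = sum(
            1
            for i, first in enumerate(ordered)
            for second in ordered[i + 1:]
            if second in adj[first]
        )
    return counts


def longshort_labels(adj):
    """LongShort task: longest among the shortest paths from each node."""
    labels = {}
    for source in adj:
        distance = {source: 0}
        queue = deque([source])
        while queue:  # breadth-first search yields shortest path lengths
            current = queue.popleft()
            for neighbor in adj[current]:
                if neighbor not in distance:
                    distance[neighbor] = distance[current] + 1
                    queue.append(neighbor)
        labels[source] = max(distance.values())
    return labels


# A triangle 0-1-2 with a pendant node 3 attached to node 2.
example = {0: {1, 2}, 1: {0, 2}, 2: {0, 1, 3}, 3: {2}}
degrees = degree_labels(example)
triangles = triangle_labels(example)
eccentricities = longshort_labels(example)
```

Each returned dictionary maps node identifiers to integer targets, which can then be treated as class labels in the way the task descriptions above convert discretized quantities into classification problems.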

5.2. Compared Architectures

- MLP. A two-layer perceptron that does not leverage any graph information. We use this as a baseline to measure the success of graph learning.

- GAT [13]. A graph attention network that learns to construct edge weights based on the representations at each edge’s ends, and uses the weights to aggregate neighbor representations.

- GCNII [34]. An attempt to create a deeper variation of GCN that is explicitly designed to be able to replicate the diffusion processes of graph filters, if needed. We employ the variation with 64 layers.

- APPNP [8]. The predict-then-propagate architecture with a personalized PageRank (ppr) base filter. Switching to different filters lets this approach maintain roughly similar performance, and we opt for this architecture because it is a standard baseline in GNN evaluation methodologies.

- ULA [this work]. Our universal local minimizer presented in this work. Motivated by the success of APPNP on node classification tasks, we also adopt the first 10 iterations of personalized PageRank as the filter of choice, and leave further improvements by tuning the filter to future work.

- GCNNRI. This is the GCN-like MPNN introduced by Abboud et al. [24]; it employs random node representations to admit greater (universal approximation) expressive power and serves as a state-of-the-art baseline. We adopt its publicly available implementation, which first transforms features through two GCN layers of Equation 2 and concatenates them with a feature matrix of equal dimensions sampled anew in each forward pass. Afterwards, GCNNRI applies three more GCN layers and then another three-layered MLP. The original architecture max-pooled node-level predictions before the last MLP to create an invariant output (i.e., one prediction for each graph), but we do not do so in order to accommodate our experiment settings by retaining equivariance, and therefore create a separate prediction for each node. We employ the variation that replaces half of node feature dimensions with random representations.
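The filter shared by the APPNP and ULA entries above, namely the first 10 iterations of personalized PageRank, can be sketched for a one-dimensional graph signal as follows. This is a minimal illustration under stated assumptions: the symmetric normalization, the dense list-of-lists matrices, the function names, and the restart value `alpha=0.1` are choices made for the example and are not taken from the experiments.

```python
import math


def normalize_adjacency(adj_matrix):
    """Symmetric normalization D^{-1/2} A D^{-1/2} (an assumed choice)."""
    degrees = [sum(row) for row in adj_matrix]
    size = len(adj_matrix)
    return [
        [
            adj_matrix[i][j] / math.sqrt(degrees[i] * degrees[j])
            if adj_matrix[i][j]
            else 0.0
            for j in range(size)
        ]
        for i in range(size)
    ]


def ppr_filter(norm_adj, signal, alpha=0.1, iterations=10):
    """Truncated personalized PageRank on a one-dimensional signal.

    Each iteration computes z <- (1 - alpha) * A_norm z + alpha * signal,
    where alpha is a hypothetical restart probability.
    """
    z = list(signal)
    for _ in range(iterations):
        z = [
            (1 - alpha) * sum(weight * value for weight, value in zip(row, z))
            + alpha * prior
            for row, prior in zip(norm_adj, signal)
        ]
    return z


# Path graph 0 - 1 - 2 with all prior mass placed on node 0.
path = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
normalized = normalize_adjacency(path)
posterior = ppr_filter(normalized, [1.0, 0.0, 0.0])
```

Here `posterior` plays the role of the diffused output of the filter, and the prior `[1.0, 0.0, 0.0]` is the input signal that an architecture such as ULA would learn to edit.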

5.3. Evaluation Methodology

5.4. Experiment Results

6. Discussion

6.1. Limitations of Cross-Entropy

6.2. Convergence Speed and Late Stopping

6.3. Requirements to Apply ULA

- a. The first condition is that processed graphs should be unweighted and undirected. Normalizing adjacency matrices is accepted (we do this in experiments; in fact, it is necessary to obtain the spectral graph filters we work with), but structurally isomorphic graphs should obtain the same edge weights after normalization. Otherwise, Theorem 3 cannot hold in its present form. Future theoretical analysis can consider discretizing edge weights to obtain more powerful results.

- b. Furthermore, loss functions and output activations should be twice differentiable, with each of their gradient dimensions being Lipschitz. Our results can be easily extended to differentiable losses with continuous, finite gradients by framing their linearization needed by Theorem 1 as a locally Lipschitz derivative. However, more rigorous analysis is needed to extend results to non-differentiable losses, even to those that are differentiable “almost everywhere”.

- c. Lipschitz continuity of loss and activation derivatives is typically easy to satisfy, as any Lipschitz constant is acceptable. That said, losses with higher constant values will be harder to train, as they will require more fine-grained discretization. Notice that the output activation is considered part of the loss in our analysis, which may simplify matters. Characteristically, passing softmax activation through a categorical cross-entropy loss is known to create gradients equal to the difference between predictions and true labels, which is a 1-Lipschitz function and is therefore compatible with ULA. Importantly, having no activation with mabs or L2 loss is admissible in regression strategies, for example because the L2 loss has a 1-Lipschitz derivative, but using higher powers of score differences or KL-divergence is not acceptable, as their second derivatives are unbounded and thus not Lipschitz.

- d. Finally, ULA admits powerful minimization strategies but requires positional encodings to exhibit stronger expressive power. To this end, we suggest following progress in the respective literature.
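The statement in item c that softmax followed by categorical cross-entropy yields gradients equal to the difference between predictions and true labels can be checked numerically. The sketch below compares a central finite-difference gradient with respect to the logits against the closed form; the function names, the example logits, and the step size `eps` are illustrative assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    shift = max(logits)
    exps = [math.exp(value - shift) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def cross_entropy(logits, onehot):
    """Categorical cross-entropy of softmax(logits) against one-hot labels."""
    probabilities = softmax(logits)
    return -sum(
        label * math.log(probability)
        for label, probability in zip(onehot, probabilities)
    )


def numeric_gradient(logits, onehot, eps=1e-6):
    """Central finite differences of the loss with respect to each logit."""
    gradient = []
    for index in range(len(logits)):
        up = list(logits)
        down = list(logits)
        up[index] += eps
        down[index] -= eps
        difference = cross_entropy(up, onehot) - cross_entropy(down, onehot)
        gradient.append(difference / (2 * eps))
    return gradient


logits = [2.0, -1.0, 0.5]
labels = [0.0, 1.0, 0.0]
# Closed form: predictions minus true labels.
analytic = [p - y for p, y in zip(softmax(logits), labels)]
numeric = numeric_gradient(logits, labels)
max_gap = max(abs(a - n) for a, n in zip(analytic, numeric))
```

The value `max_gap` stays at the level of finite-difference error, which is consistent with the closed-form gradient used in the Lipschitz argument above.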

6.4. Threats to Validity

7. Conclusions and Future Directions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A. Terminology

Appendix B. Theoretical Proofs

Appendix C. Algorithmic Complexity

Appendix D. ULA as an MPNN

References

1. Loukas, A. What graph neural networks cannot learn: Depth vs width. arXiv 2019, arXiv:1907.03199.

2. Nguyen, Q.; Mukkamala, M.C.; Hein, M. On the loss landscape of a class of deep neural networks with no bad local valleys. arXiv 2018, arXiv:1809.10749.

3. Sun, R.Y. Optimization for deep learning: An overview. Journal of the Operations Research Society of China 2020, 8, 249–294.

4. Bae, K.; Ryu, H.; Shin, H. Does Adam optimizer keep close to the optimal point? arXiv 2019, arXiv:1911.00289.

5. Ortega, A.; Frossard, P.; Kovačević, J.; Moura, J.M.; Vandergheynst, P. Graph signal processing: Overview, challenges, and applications. Proceedings of the IEEE 2018, 106, 808–828.

6. Page, L.; Brin, S.; Motwani, R.; Winograd, T.; et al. The pagerank citation ranking: Bringing order to the web. 1999.

7. Chung, F. The heat kernel as the pagerank of a graph. Proceedings of the National Academy of Sciences 2007, 104, 19735–19740.

8. Gasteiger, J.; Bojchevski, A.; Günnemann, S. Predict then propagate: Graph neural networks meet personalized pagerank. arXiv 2018, arXiv:1810.05997.

9. Agarap, A.F. Deep learning using rectified linear units (relu). arXiv 2018, arXiv:1803.08375.

10. Gilmer, J.; Schoenholz, S.S.; Riley, P.F.; Vinyals, O.; Dahl, G.E. Neural message passing for quantum chemistry. In Proceedings of the International conference on machine learning. PMLR; 2017; pp. 1263–1272.

11. Balcilar, M.; Héroux, P.; Gauzere, B.; Vasseur, P.; Adam, S.; Honeine, P. Breaking the limits of message passing graph neural networks. In Proceedings of the International Conference on Machine Learning. PMLR; 2021; pp. 599–608.

12. Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.

13. Velickovic, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; Bengio, Y.; et al. Graph attention networks. arXiv 2017, arXiv:1710.10903.

14. Cai, J.Y.; Fürer, M.; Immerman, N. An optimal lower bound on the number of variables for graph identification. Combinatorica 1992, 12, 389–410.

15. Maron, H.; Ben-Hamu, H.; Serviansky, H.; Lipman, Y. Provably powerful graph networks. Advances in neural information processing systems 2019, 32.

16. Morris, C.; Ritzert, M.; Fey, M.; Hamilton, W.L.; Lenssen, J.E.; Rattan, G.; Grohe, M. Weisfeiler and leman go neural: Higher-order graph neural networks. In Proceedings of the AAAI conference on artificial intelligence, 2019, Vol. 33, pp. 4602–4609.

17. Zaheer, M.; Kottur, S.; Ravanbakhsh, S.; Poczos, B.; Salakhutdinov, R.R.; Smola, A.J. Deep sets. Advances in neural information processing systems 2017, 30.

18. Keriven, N.; Peyré, G. Universal invariant and equivariant graph neural networks. Advances in Neural Information Processing Systems 2019, 32.

19. Maron, H.; Fetaya, E.; Segol, N.; Lipman, Y. On the universality of invariant networks. In Proceedings of the International conference on machine learning. PMLR; 2019; pp. 4363–4371.

20. Xu, K.; Hu, W.; Leskovec, J.; Jegelka, S. How powerful are graph neural networks? arXiv 2018, arXiv:1810.00826.

21. Wang, H.; Yin, H.; Zhang, M.; Li, P. Equivariant and stable positional encoding for more powerful graph neural networks. arXiv 2022, arXiv:2203.00199.

22. Keriven, N.; Vaiter, S. What functions can Graph Neural Networks compute on random graphs? The role of Positional Encoding. Advances in Neural Information Processing Systems 2024, 36.

23. Sato, R.; Yamada, M.; Kashima, H. Random features strengthen graph neural networks. In Proceedings of the 2021 SIAM international conference on data mining (SDM). SIAM, 2021; pp. 333–341.

24. Abboud, R.; Ceylan, I.I.; Grohe, M.; Lukasiewicz, T. The surprising power of graph neural networks with random node initialization. arXiv 2020, arXiv:2010.01179.

25. Krasanakis, E.; Papadopoulos, S.; Kompatsiaris, I. Applying fairness constraints on graph node ranks under personalization bias. In Proceedings of the Complex Networks & Their Applications IX: Volume 2, Proceedings of the Ninth International Conference on Complex Networks and Their Applications COMPLEX NETWORKS 2020. Springer, 2021, pp. 610–622.

26. Kidger, P.; Lyons, T. Universal approximation with deep narrow networks. In Proceedings of the Conference on learning theory. PMLR; 2020; pp. 2306–2327.

27. Hoang, N.; Maehara, T.; Murata, T. Revisiting graph neural networks: Graph filtering perspective. In Proceedings of the 2020 25th International Conference on Pattern Recognition (ICPR). IEEE; 2021; pp. 8376–8383.

28. Huang, Q.; He, H.; Singh, A.; Lim, S.N.; Benson, A.R. Combining Label Propagation and Simple Models Out-performs Graph Neural Networks. arXiv 2020, arXiv:2010.13993.

29. Zhou, D.; Bousquet, O.; Lal, T.; Weston, J.; Schölkopf, B. Learning with local and global consistency. Advances in neural information processing systems 2003, 16.

30. Fey, M.; Lenssen, J.E. Fast graph representation learning with PyTorch Geometric. arXiv 2019, arXiv:1903.02428.

31. Bojchevski, A.; Günnemann, S. Deep gaussian embedding of graphs: Unsupervised inductive learning via ranking. arXiv 2017, arXiv:1707.03815.

32. Sen, P.; Namata, G.; Bilgic, M.; Getoor, L.; Galligher, B.; Eliassi-Rad, T. Collective classification in network data. AI magazine 2008, 29, 93.

33. Namata, G.; London, B.; Getoor, L.; Huang, B.; Edu, U. Query-driven active surveying for collective classification. In Proceedings of the 10th international workshop on mining and learning with graphs; 2012; Volume 8, p. 1.

34. Chen, M.; Wei, Z.; Huang, Z.; Ding, B.; Li, Y. Simple and deep graph convolutional networks. In Proceedings of the International conference on machine learning. PMLR; 2020; pp. 1725–1735.

35. Dunn, O.J. Multiple comparisons among means. Journal of the American statistical association 1961, 56, 52–64.

36. Demšar, J. Statistical comparisons of classifiers over multiple data sets. The Journal of Machine learning research 2006, 7, 1–30.

37. Hollander, M.; Wolfe, D.A.; Chicken, E. Nonparametric statistical methods; John Wiley & Sons, 2013.

38. He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on imagenet classification. In Proceedings of the IEEE international conference on computer vision, 2015; pp. 1026–1034.

39. Nunes, M.; Fraga, P.M.; Pappa, G.L. Fitness landscape analysis of graph neural network architecture search spaces. In Proceedings of the Genetic and Evolutionary Computation Conference, 2021; pp. 876–884.

| 1 | If we replaced GNNs with independent optimization for each node when only one graph is analysed, we would not be able to impose structural properties that let the predictive performance generalize to validation and test data; this is demonstrated by experiment results for the MLP architecture in Section 5. |

| 2 | In Lemma 2, the subscript is the function’s input. |

| 3 | Larger radii can be obtained for near-quadratic objectives, for which is small. In practice, this can be imposed by also adding a large enough L2 regularization, but we leave theoretical study of this option to future work. |

| 4 | |

| 5 | We allow decreases in training losses to reset the stopping patience in order to prevent architectures from getting stuck at shallow minima, especially at the beginning of training. This increases the training time of architectures to requiring up to 6,000 epochs in some settings, but in return tends to find much deeper minima when convergence is slower. A related discussion is presented in Section 6.2. |

| 6 | In Lemma 2, the subscript is the function’s input. |

| Task | Eval. | MLP | GCN [12] | APPNP [8] | GAT [13] | GCNII [34] | GCNNRI [24] | ULA |
|---|---|---|---|---|---|---|---|---|
| Generalization | | | | | | | | |
| Degree | acc ↑ | 0.162 (5.6) | 0.293 (2.8) | 0.160 (6.2) | 0.166 (5.6) | 0.174 (4.6) | 0.473 (2.0) | 0.541 (1.2) |
| Triangle | acc ↑ | 0.522 (5.2) | 0.523 (3.6) | 0.522 (5.2) | 0.522 (5.2) | 0.522 (5.2) | 0.554 (2.4) | 0.568 (1.2) |
| 4Clique | acc ↑ | 0.678 (4.9) | 0.708 (4.1) | 0.677 (6.2) | 0.678 (4.9) | 0.678 (4.9) | 0.846 (2.0) | 0.870 (1.0) |
| LongShort | acc ↑ | 0.347 (5.8) | 0.399 (3.0) | 0.344 (6.4) | 0.350 (4.2) | 0.348 (5.6) | 0.597 (2.0) | 0.653 (1.0) |
| Diffuse | mabs ↓ | 0.035 (4.0) | 0.032 (2.8) | 0.067 (7.0) | 0.035 (5.0) | 0.037 (6.0) | 0.030 (2.2) | 0.012 (1.0) |
| 0.9Diffuse | mabs ↓ | 0.021 (4.6) | 0.015 (3.0) | 0.034 (7.0) | 0.021 (4.4) | 0.021 (6.0) | 0.009 (1.8) | 0.007 (1.2) |
| Propagate | acc ↑ | 0.531 (2.6) | 0.501 (4.0) | 0.510 (3.6) | 0.455 (6.1) | 0.443 (6.9) | 0.503 (3.8) | 0.861 (1.0) |
| 0.9Propagate | acc ↑ | 0.436 (5.3) | 0.503 (3.0) | 0.452 (4.0) | 0.418 (5.8) | 0.401 (6.7) | 0.546 (2.2) | 0.846 (1.0) |
| Cora | acc ↑ | 0.683 (6.6) | 0.855 (4.2) | 0.866 (1.4) | 0.850 (4.1) | 0.863 (2.4) | 0.696 (6.4) | 0.859 (2.9) |
| Citeseer | acc ↑ | 0.594 (6.0) | 0.663 (3.9) | 0.676 (2.3) | 0.661 (4.0) | 0.679 (1.8) | 0.443 (7.0) | 0.669 (3.0) |
| Pubmed | acc ↑ | 0.778 (6.4) | 0.825 (3.7) | 0.835 (2.0) | 0.821 (4.0) | 0.835 (2.1) | 0.747 (6.6) | 0.829 (3.2) |
| Average (CD=1.1) | | (5.2) | (3.5) | (4.7) | (4.8) | (4.7) | (3.5) | (1.6) |
| Deepness (overtraining capability) | | | | | | | | |
| Degree | acc ↑ | 0.172 (5.7) | 0.331 (3.0) | 0.170 (6.6) | 0.175 (5.7) | 0.186 (4.0) | 0.632 (1.2) | 0.571 (1.8) |
| Triangle | acc ↑ | 0.510 (4.7) | 0.510 (4.2) | 0.510 (4.7) | 0.510 (4.7) | 0.510 (4.7) | 0.536 (3.8) | 0.568 (1.2) |
| 4Clique | acc ↑ | 0.665 (5.0) | 0.779 (3.0) | 0.657 (6.4) | 0.665 (5.0) | 0.663 (5.6) | 0.898 (1.4) | 0.894 (1.6) |
| LongShort | acc ↑ | 0.347 (6.0) | 0.391 (3.0) | 0.347 (6.0) | 0.351 (4.0) | 0.347 (6.0) | 0.588 (2.0) | 0.688 (1.0) |
| Diffuse | mabs ↓ | 0.032 (4.4) | 0.028 (3.0) | 0.061 (7.0) | 0.032 (4.6) | 0.033 (6.0) | 0.026 (2.0) | 0.012 (1.0) |
| 0.9Diffuse | mabs ↓ | 0.021 (4.6) | 0.015 (3.0) | 0.034 (7.0) | 0.021 (4.4) | 0.021 (6.0) | 0.009 (1.8) | 0.007 (1.2) |
| Propagate | acc ↑ | 0.477 (4.2) | 0.502 (2.4) | 0.454 (4.4) | 0.461 (4.9) | 0.413 (6.6) | 0.503 (4.5) | 0.844 (1.0) |
| 0.9Propagate | acc ↑ | 0.493 (4.8) | 0.527 (2.8) | 0.534 (3.2) | 0.444 (6.3) | 0.451 (6.5) | 0.534 (3.4) | 0.873 (1.0) |
| Cora | acc ↑ | 0.905 (5.6) | 0.914 (3.2) | 0.905 (5.4) | 0.911 (3.8) | 0.864 (7.0) | 0.930 (2.0) | 0.996 (1.0) |
| Citeseer | acc ↑ | 0.865 (2.2) | 0.818 (3.8) | 0.794 (6.0) | 0.808 (5.0) | 0.767 (7.0) | 0.849 (3.0) | 0.959 (1.0) |
| Pubmed | acc ↑ | 0.868 (3.8) | 0.871 (2.0) | 0.862 (5.2) | 0.855 (6.8) | 0.859 (6.0) | 0.869 (3.2) | 0.956 (1.0) |
| Average (CD=1.1) | | (4.2) | (3.0) | (5.6) | (5.0) | (6.0) | (2.6) | (1.2) |

| Architecture | Train loss ↓ | Valid loss ↓ | Test loss ↓ | Train acc ↑ | Valid acc ↑ | Test acc ↑ |
|---|---|---|---|---|---|---|
| MLP | 0.113 (min 0.103) | 1.061 | 1.059 | 1.000 | 0.657 | 0.659 |
| GCN | 0.198 (min 0.194) | 0.541 | 0.564 | 0.986 | 0.863 | 0.860 |
| APPNP | 0.288 (min 0.284) | 0.544 | 0.563 | 0.971 | 0.869 | 0.860 |
| GAT | 0.261 (min 0.254) | 0.546 | 0.574 | 0.983 | 0.866 | 0.854 |
| GCNII | 0.425 (min 0.423) | 0.656 | 0.668 | 0.951 | 0.866 | 0.849 |
| GCNNRI | 0.262 (min 0.015) | 0.839 | 0.936 | 0.929 | 0.789 | 0.759 |
| ULA | 0.161 (min 0.007) | 0.479 | 0.890 | 0.974 | 0.854 | 0.846 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).