Submitted: 15 March 2024

Posted: 15 March 2024

Abstract

Keywords:

1. Introduction

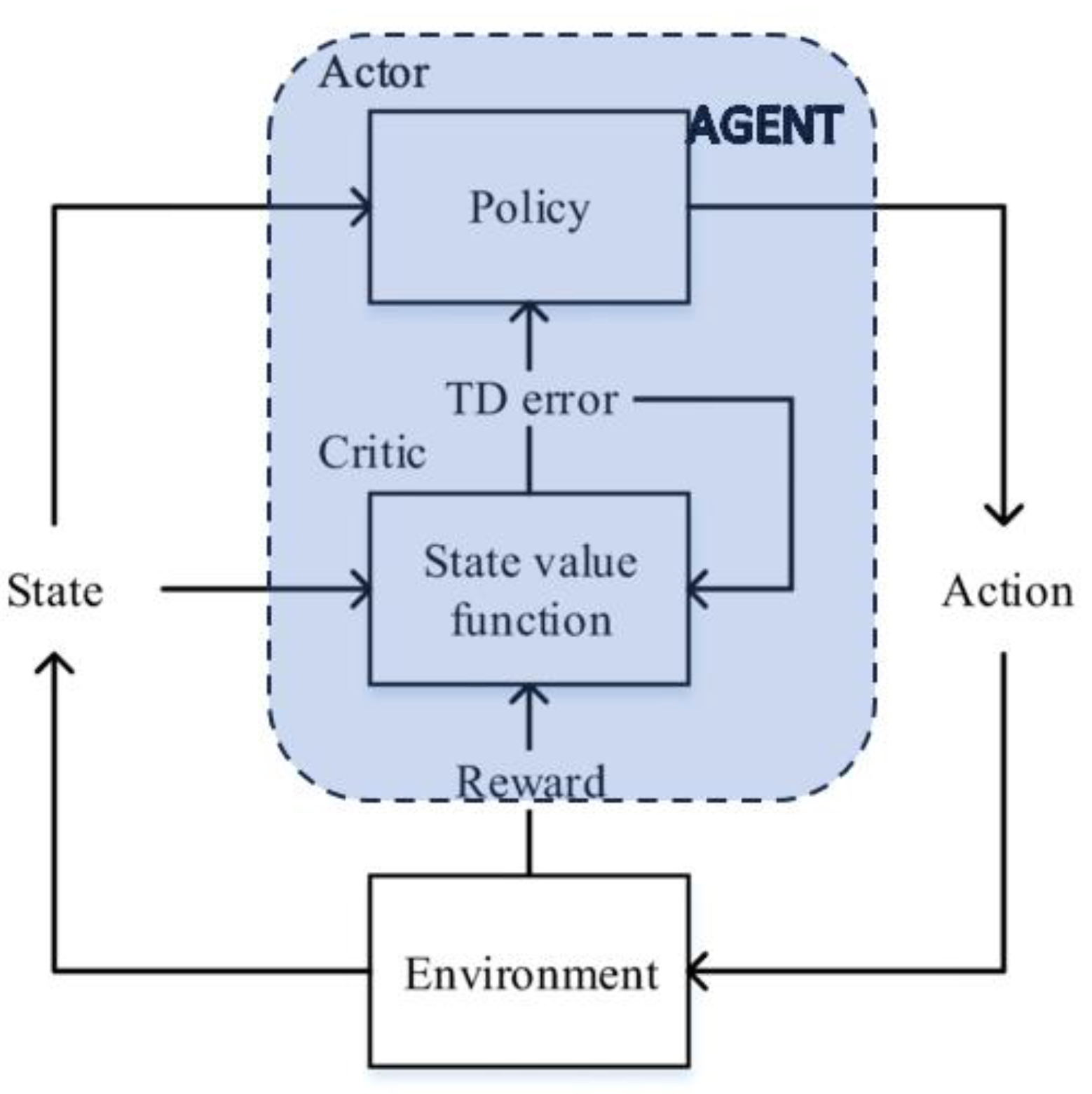

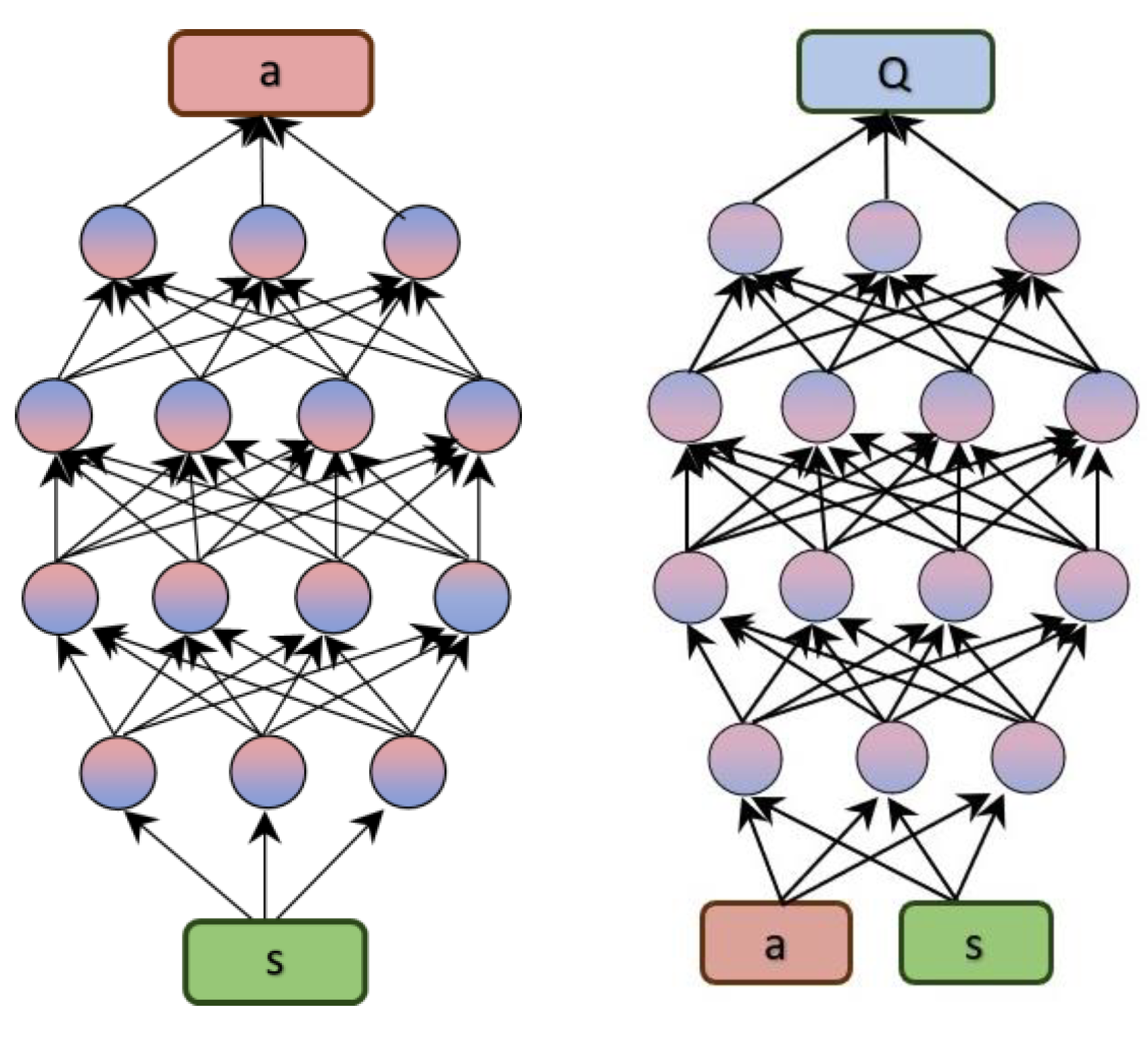

- Agent: The agent is the entity that learns to behave in an environment. A common agent structure has two elements: a critic and an actor. The critic estimates the expected cumulative reward (the value) of being in a given state and following the policy defined by the actor. The actor learns the policy, that is, the mapping from states to actions, and therefore acts as the decision-maker.

- Environment: The environment is the world that the agent interacts with.

- State: The state is the current condition of the environment.

- Action: An action is something that the agent can do in the environment.

- Reward: A reward is a signal that indicates whether an action was good or bad.

- Policy: A policy is a rule that tells the agent what action to take in a given state.

- Value function: A value function measures how good it is to be in a given state, expressed as the expected cumulative reward obtained from that state onward under the current policy.
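
The elements listed above can be tied together in a minimal interaction loop. The sketch below is only illustrative: `ToyEnvironment`, `policy`, and `run_episode` are hypothetical names, the one-dimensional environment is invented for the example, and the discount factor of 0.97 is borrowed from the training parameters reported for the TD3 agent.

```python
class ToyEnvironment:
    """Hypothetical 1-D environment: the state is a number to drive toward zero."""

    def __init__(self, start=5.0):
        self.state = start

    def step(self, action):
        # The action shifts the state; the reward penalizes distance from zero.
        self.state += action
        reward = -abs(self.state)
        return self.state, reward


def policy(state):
    """Policy: maps a state to an action (here a fixed proportional rule)."""
    return -0.5 * state


def run_episode(env, policy, steps=20, discount=0.97):
    """Run one episode; return the discounted return and the final state."""
    state = env.state
    discounted_return = 0.0
    for k in range(steps):
        action = policy(state)             # the agent chooses an action
        state, reward = env.step(action)   # the environment responds
        discounted_return += (discount ** k) * reward
    return discounted_return, state


episode_return, final_state = run_episode(ToyEnvironment(), policy)
print(f"return = {episode_return:.3f}, final state = {final_state:.6f}")
```

The discounted return accumulated here is a single sample of the quantity that a value function estimates in expectation.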

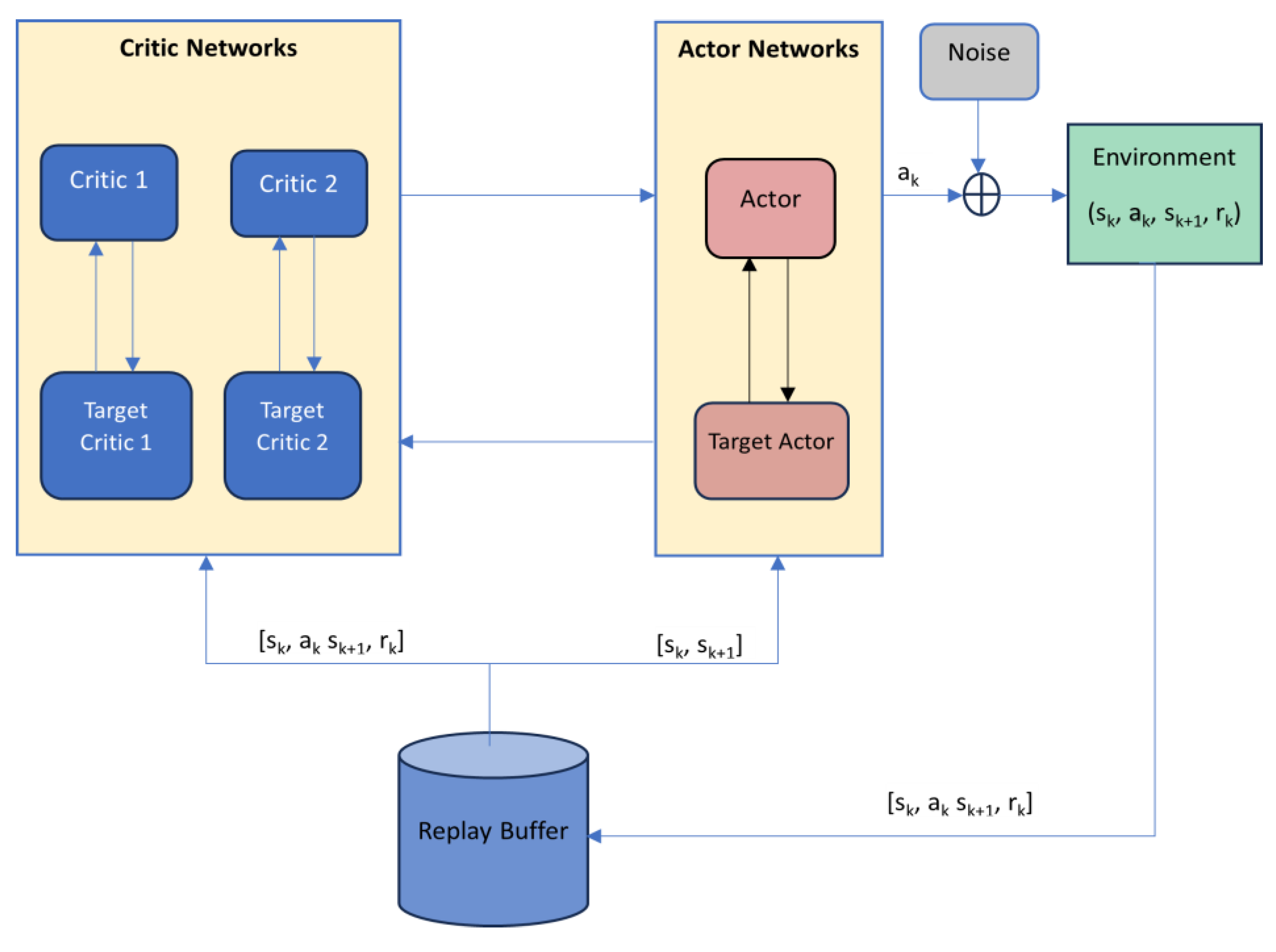

2. Twin Delayed Deep Deterministic Policy Gradient (TD3) Algorithm

2.1. Deep Deterministic Policy Gradient

- – TD-error

- – learning rate
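
As a rough illustration of how a TD-error and a learning rate interact in a critic update, the sketch below uses scalar values in place of neural networks. The function names are hypothetical; in DDPG the `next_q` argument would come from the target critic evaluated at the target actor's action, and the default values (discount 0.97, learning rate 0.01) are taken from the training parameters reported for the agent.

```python
def td_target(reward, next_q, discount=0.97, done=False):
    """One-step bootstrapped target: reward plus discounted next-state value."""
    return reward + (0.0 if done else discount * next_q)


def td_error(q_value, reward, next_q, discount=0.97, done=False):
    """TD-error: gap between the bootstrapped target and the current estimate."""
    return td_target(reward, next_q, discount, done) - q_value


def critic_update(q_value, error, learning_rate=0.01):
    """Move the estimate a small step (set by the learning rate) toward the target."""
    return q_value + learning_rate * error


error = td_error(q_value=1.0, reward=0.5, next_q=2.0)
updated_q = critic_update(1.0, error)
print(error, updated_q)
```

With a neural-network critic the same error is typically squared and minimized by gradient descent rather than applied directly, so this scalar update should be read as an analogy.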

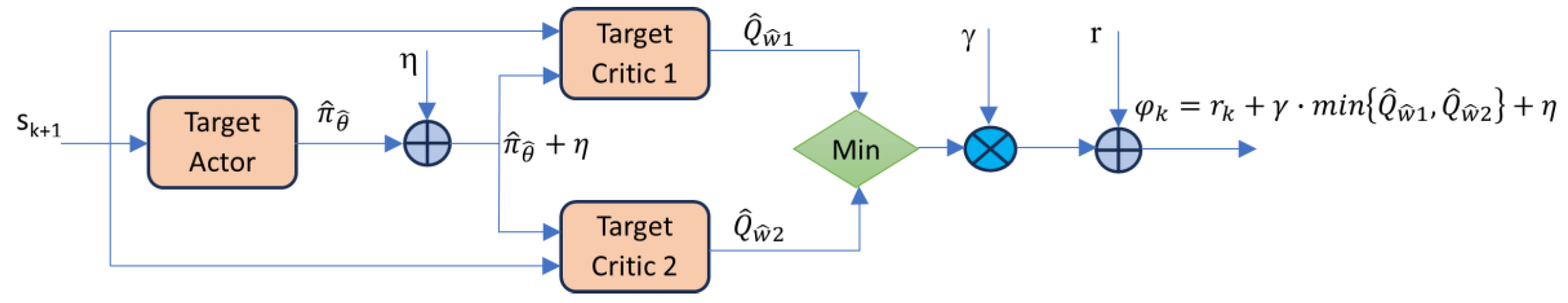

2.2. TD3 – The Main Characteristics

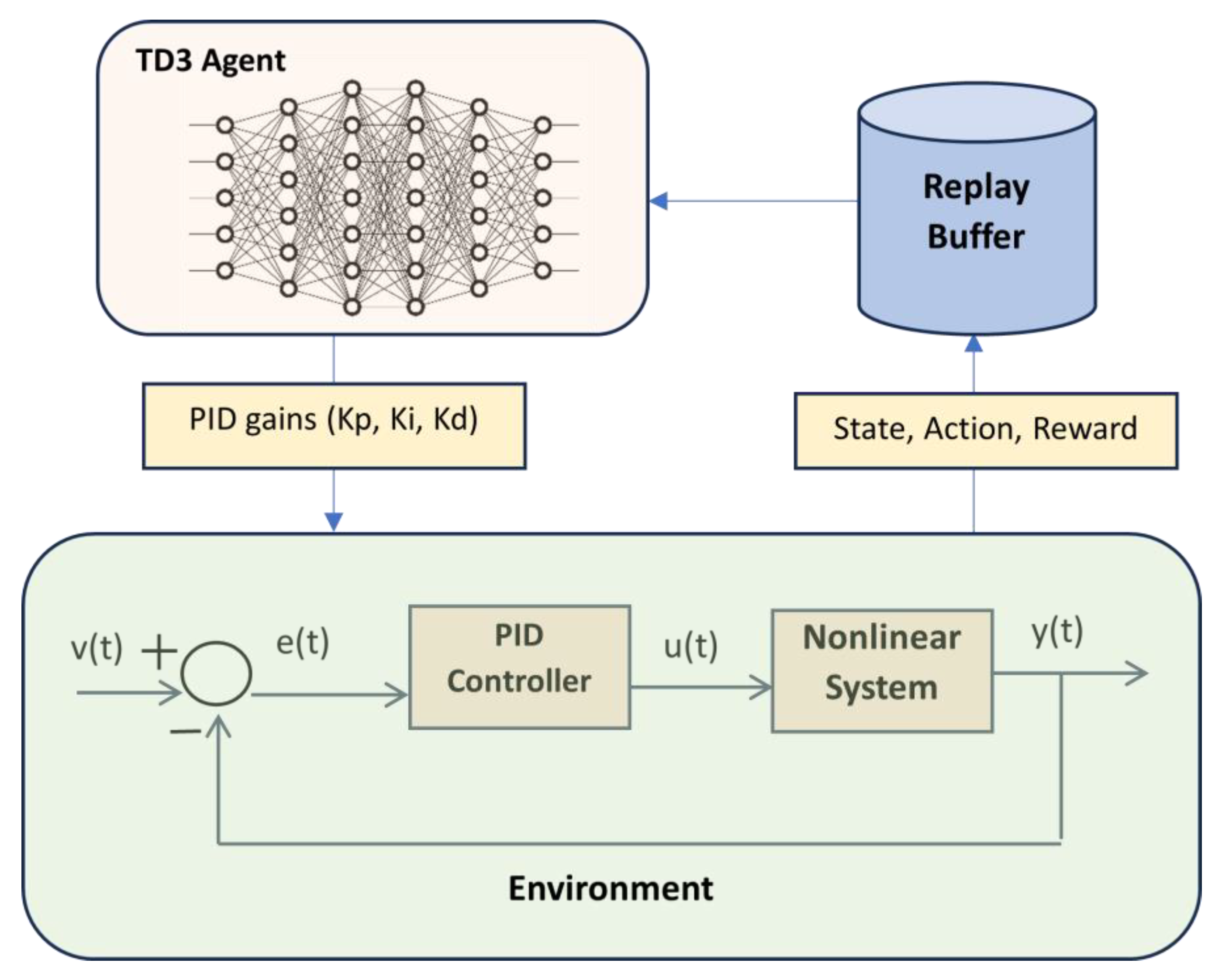

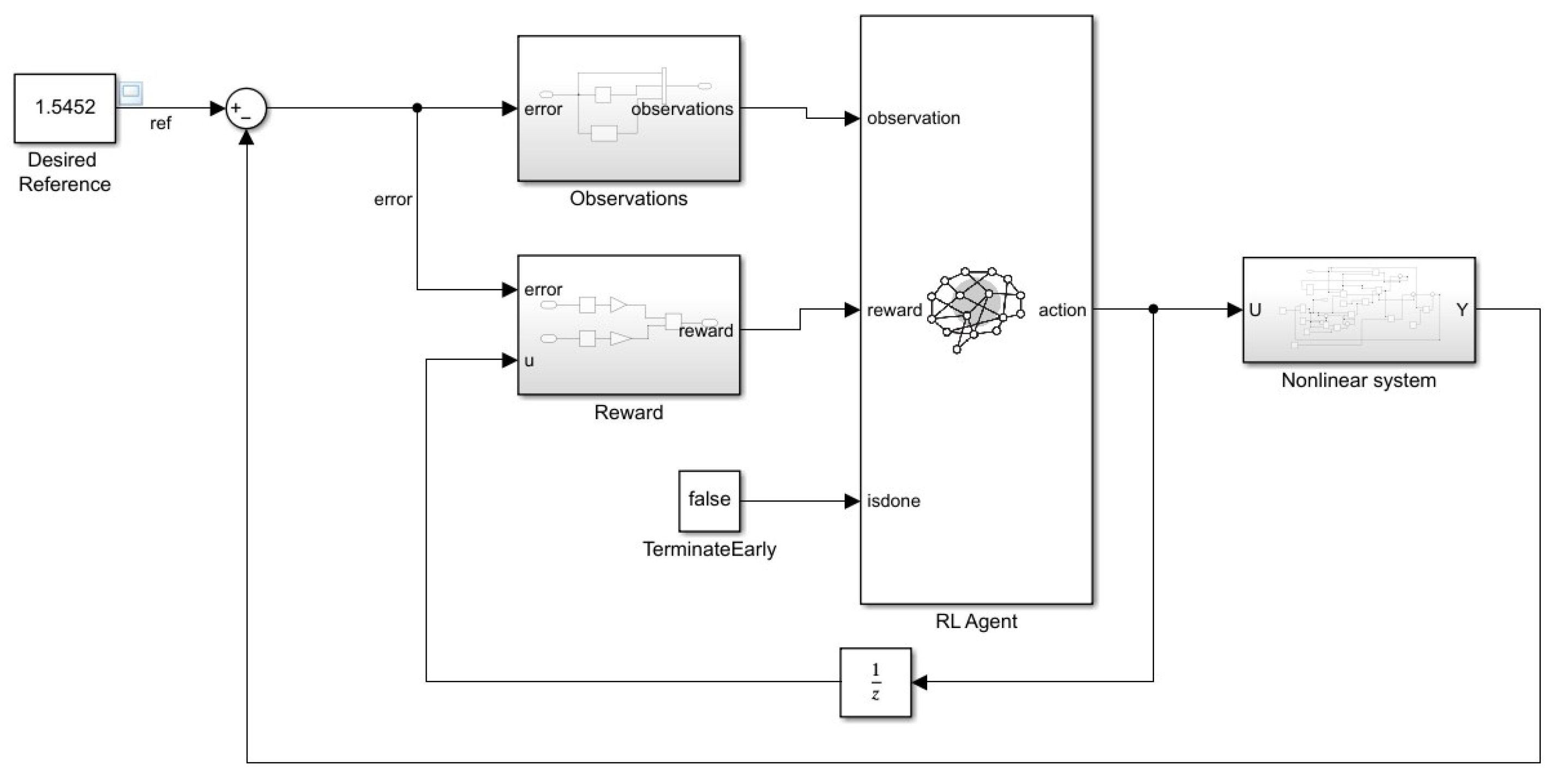

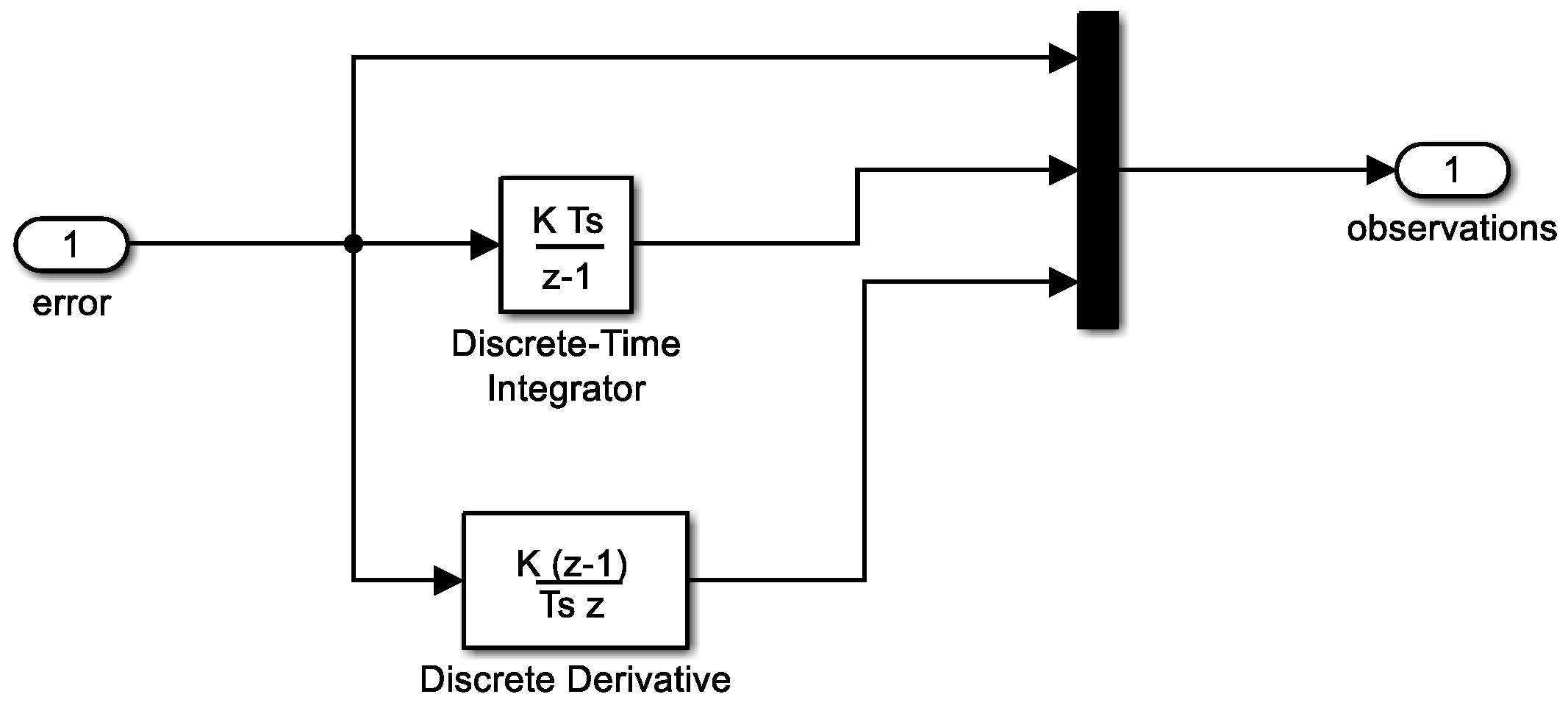

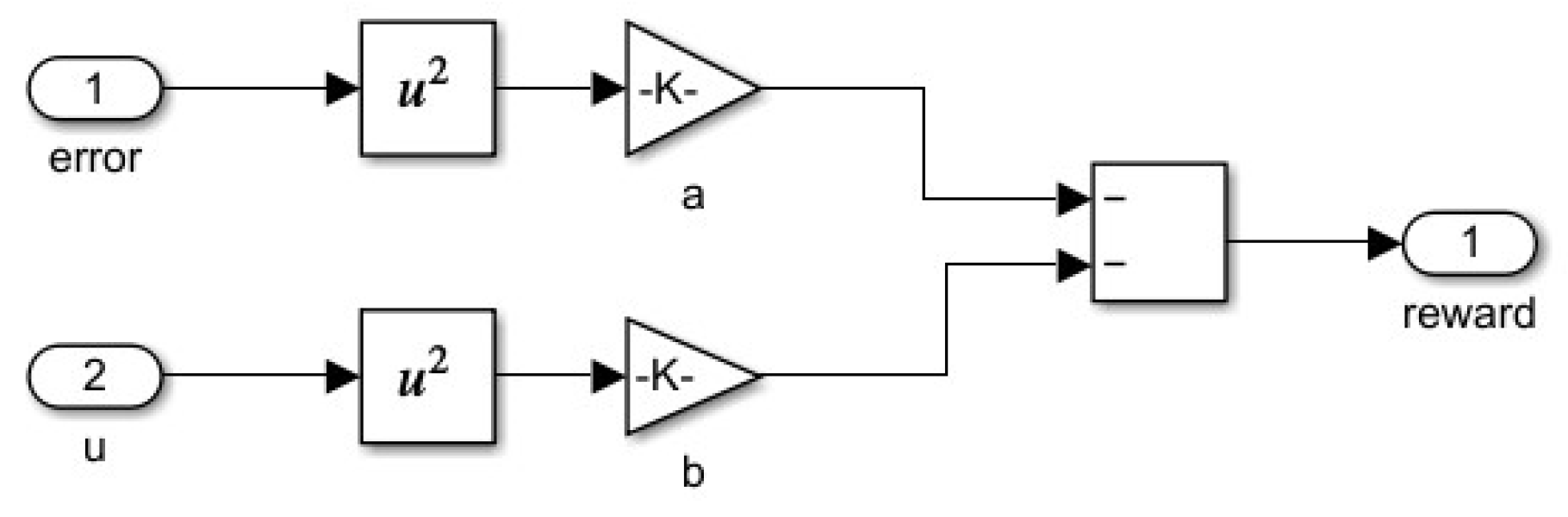
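
A minimal sketch of the TD3 target computation as described by Fujimoto et al., covering clipped double Q-learning, target policy smoothing, and delayed policy updates. It assumes a scalar action and hypothetical callables `target_actor`, `target_critic_1`, and `target_critic_2`; the noise standard deviation, noise clip, action bounds, and policy delay are illustrative defaults, not values taken from this study.

```python
import random


def td3_target(reward, next_state, target_actor, target_critic_1, target_critic_2,
               discount=0.97, noise_std=0.2, noise_clip=0.5,
               action_low=-1.0, action_high=1.0, rng=random):
    """Compute the TD3 learning target for one transition (scalar action)."""
    # Target policy smoothing: perturb the target action with clipped Gaussian noise.
    noise = max(-noise_clip, min(noise_clip, rng.gauss(0.0, noise_std)))
    next_action = target_actor(next_state) + noise
    next_action = max(action_low, min(action_high, next_action))
    # Clipped double Q-learning: take the smaller of the two target critic values
    # to counteract overestimation.
    q_min = min(target_critic_1(next_state, next_action),
                target_critic_2(next_state, next_action))
    return reward + discount * q_min


def should_update_actor(step, policy_delay=2):
    """Delayed policy updates: refresh the actor only every `policy_delay` critic steps."""
    return step % policy_delay == 0
```

Both critics are trained toward the same target, while the actor and the target networks are updated less frequently than the critics.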

3. Tuning of PID Controllers Using TD3 Algorithm

- u is the output of the actor neural network.

- Kp, Ki and Kd are the PID controller parameters.

- e(t) = v(t) − y(t), where e(t) is the system error, y(t) is the system output, and v(t) is the reference signal.
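
One way to read the definitions above is that the actor computes the standard PID law, u = Kp·e + Ki·∫e dt + Kd·de/dt, with the three gains playing the role of trainable weights. The sketch below is a discrete-time approximation under that assumption; the class name `PIDActor`, the sampling period `dt`, and the rectangular integration and backward-difference derivative are choices made for the example only.

```python
class PIDActor:
    """PID law written as a linear map from (e, integral of e, de/dt) to u."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.dt = dt                # sampling period (assumed value)
        self.integral = 0.0         # running approximation of the integral of e
        self.prev_error = None      # previous error, for the derivative term

    def act(self, reference, output):
        error = reference - output                 # e(t) = v(t) - y(t)
        self.integral += error * self.dt           # rectangular integration
        if self.prev_error is None:
            derivative = 0.0                       # no history on the first call
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        # Control signal u: weighted sum of the three error features.
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Gains from the TD3-based tuning row of the results table; dt is hypothetical.
actor = PIDActor(kp=0.62, ki=3.57, kd=0.00023, dt=0.1)
u1 = actor.act(reference=1.0, output=0.0)
u2 = actor.act(reference=1.0, output=0.5)
print(u1, u2)
```

Because u is linear in the three error features, reading the gains back from a trained actor amounts to reading its weights.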

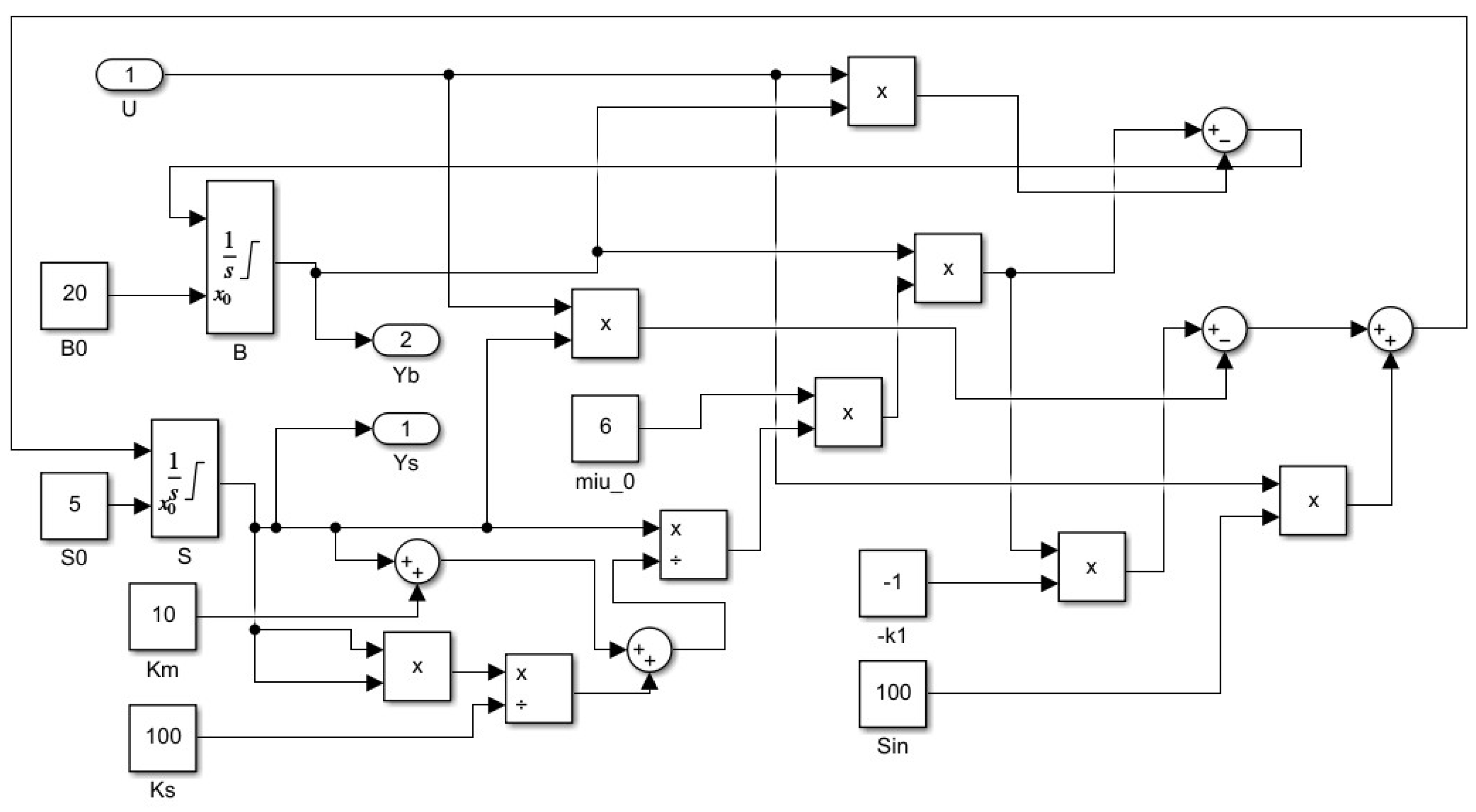

4. Tuning PID Controller for a Biotechnological System – Classical Approach

- represents the state vector (the concentrations of the system variables);

- denotes the vector of reaction kinetics (the rates of the reactions);

- is the matrix of the yield coefficients;

- represents the rate of production;

- is the exchange between the bioreactor and the exterior.

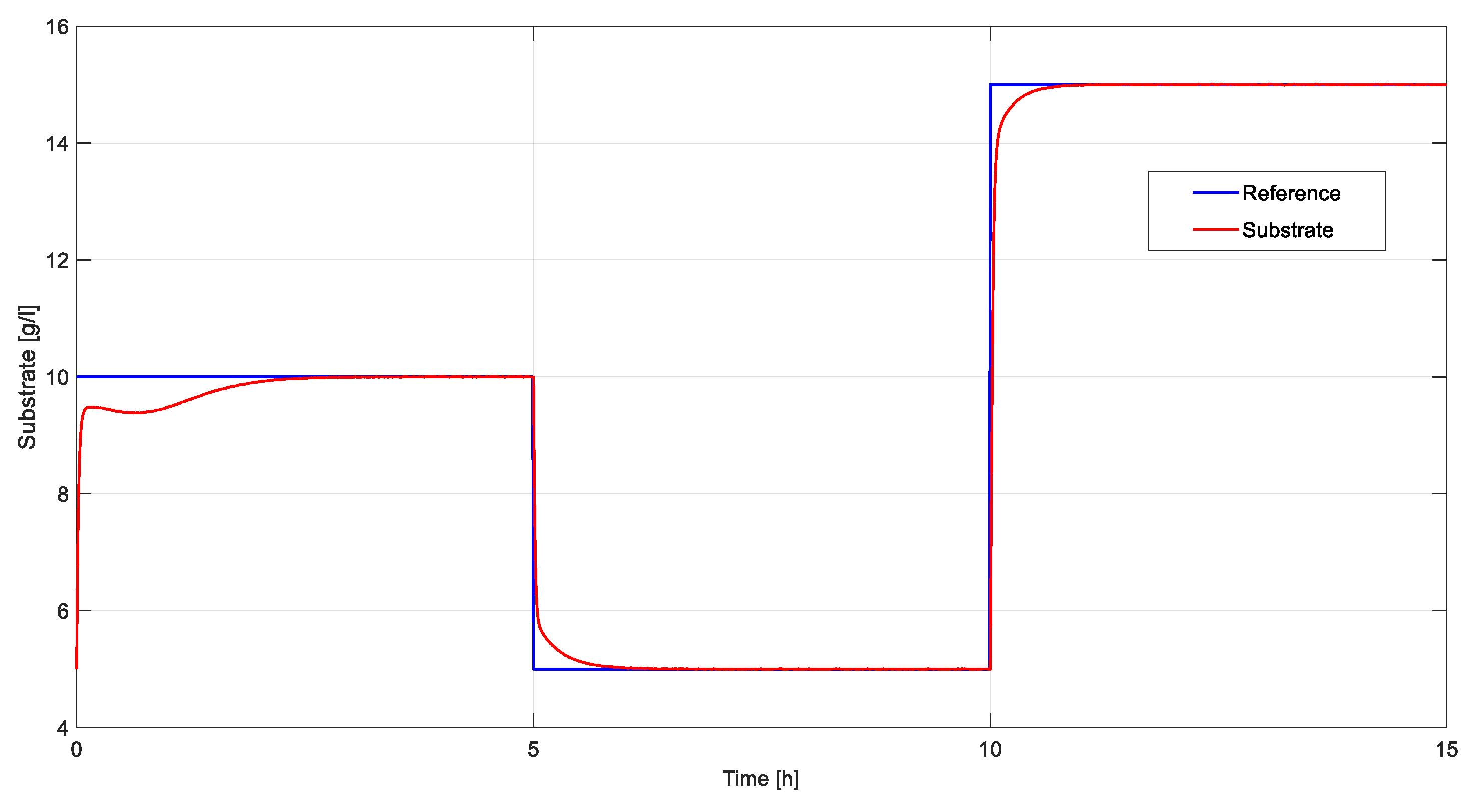

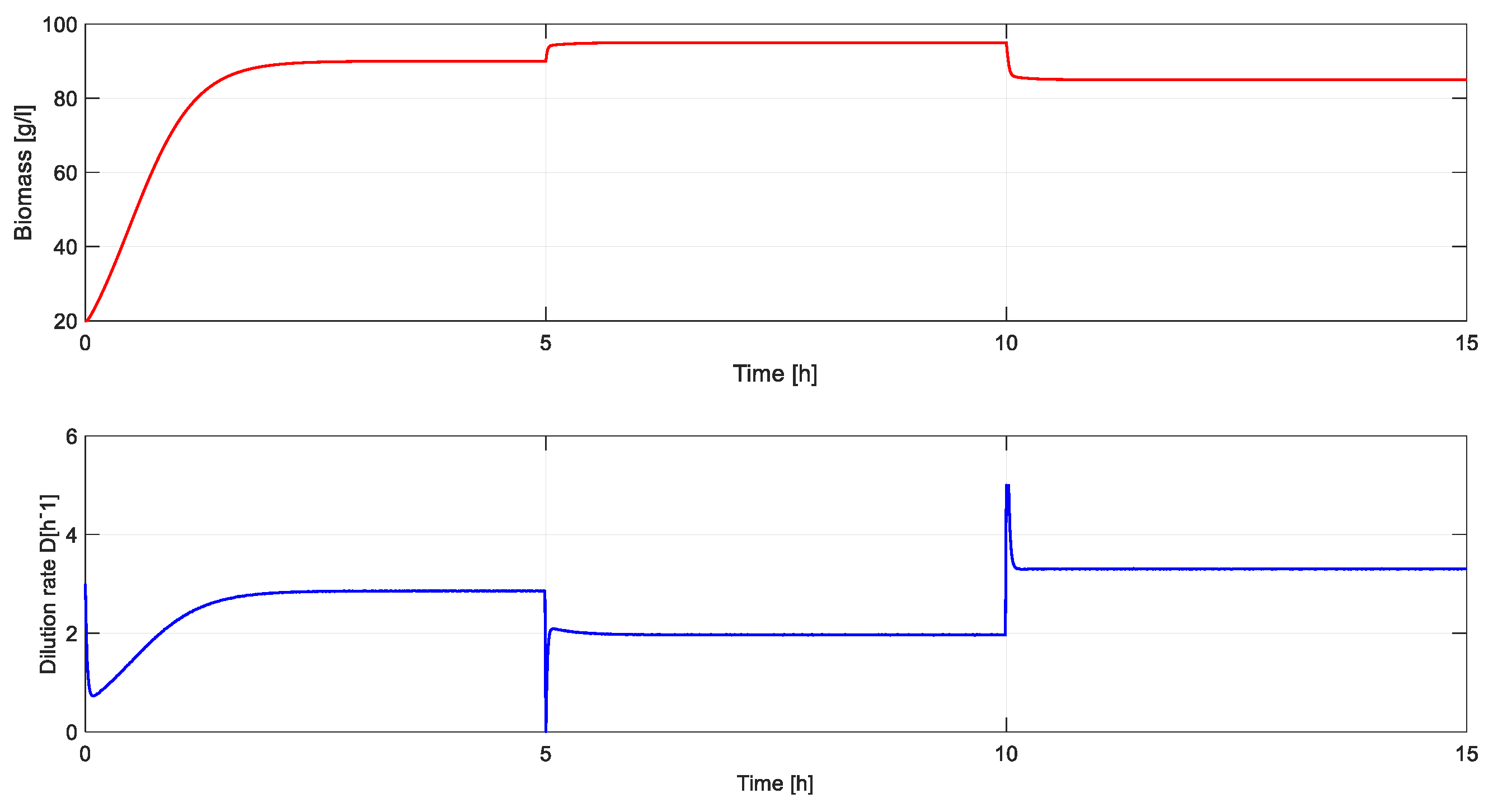

5. Simulation Results

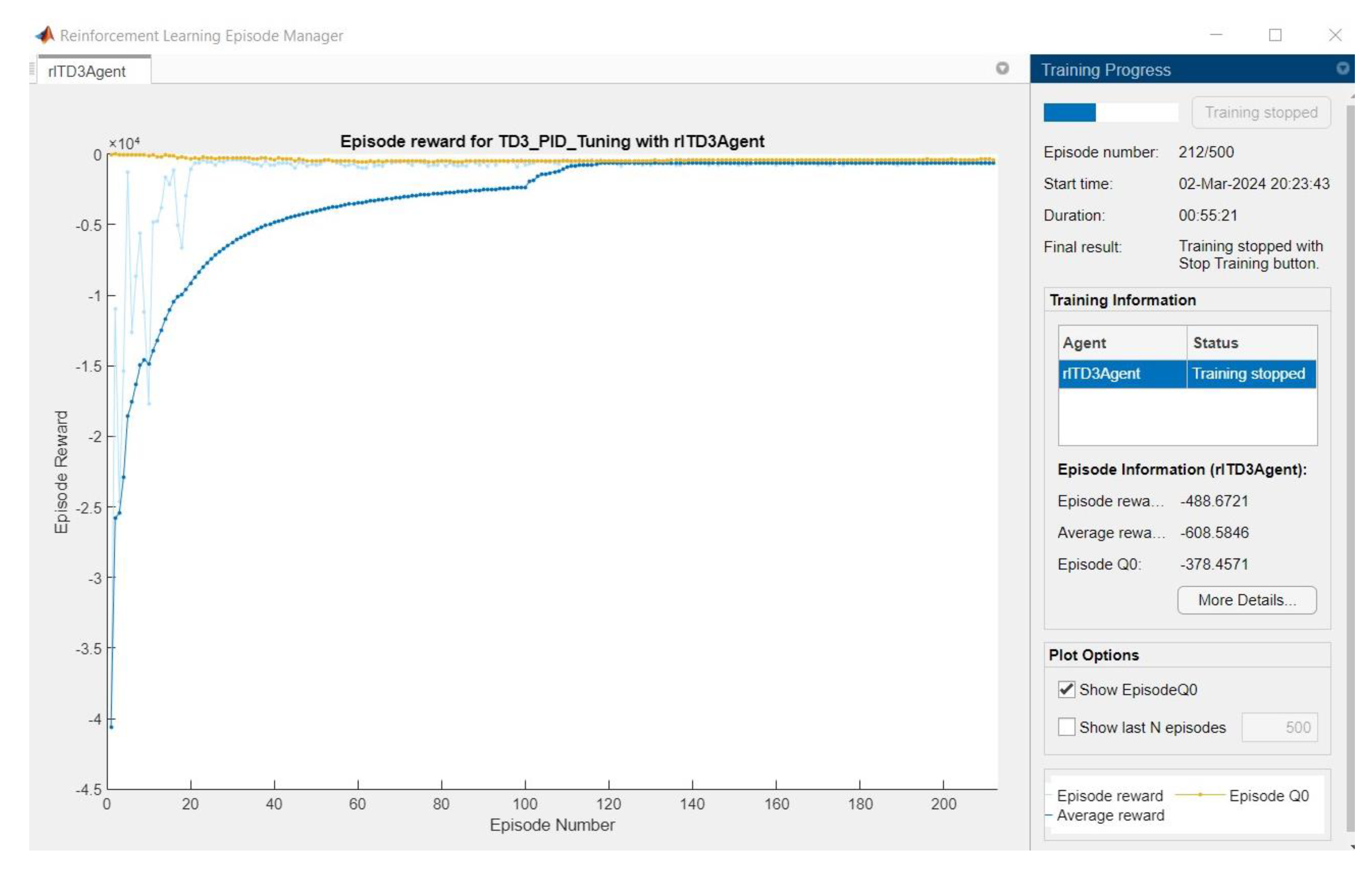

5.1. RL Approach

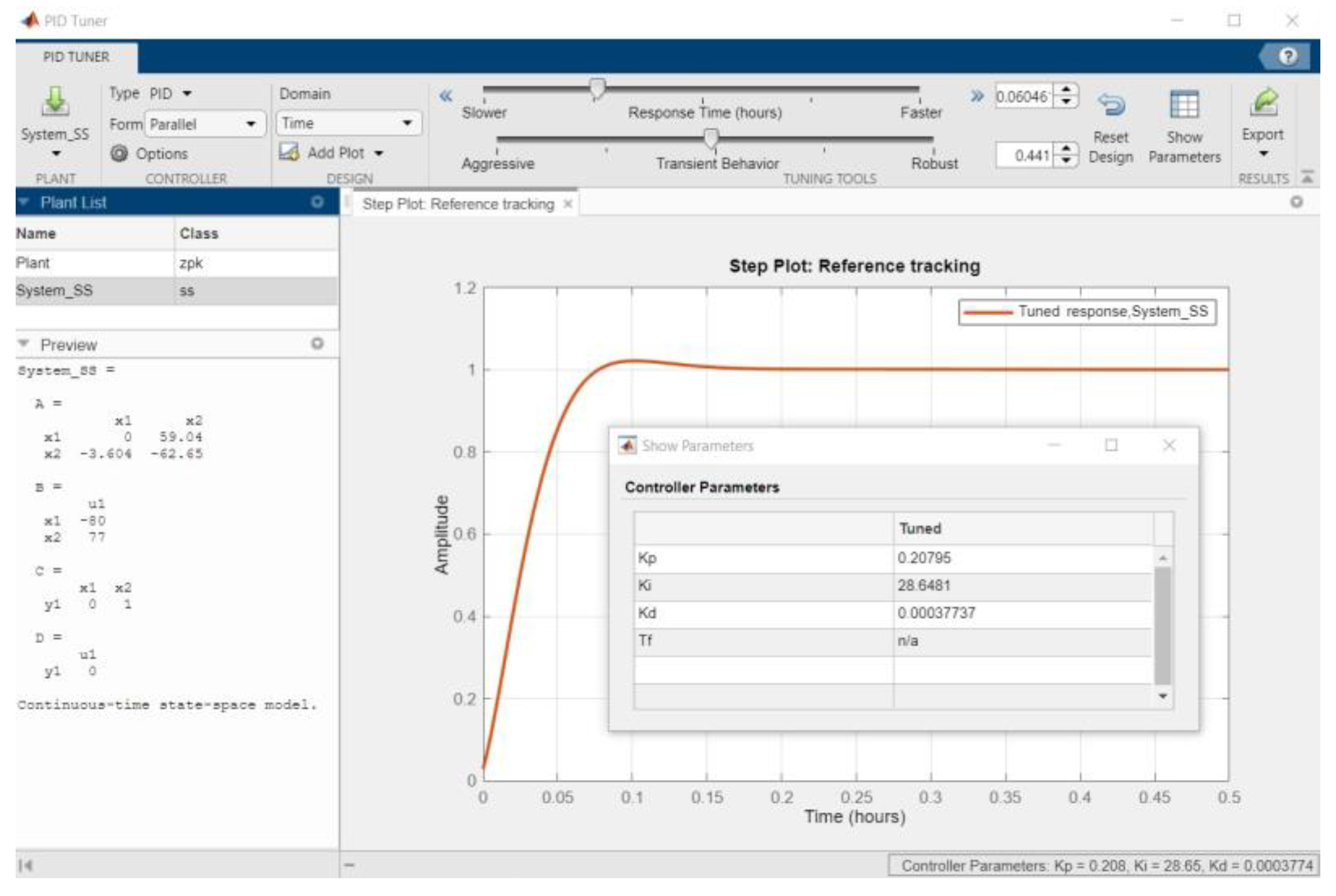

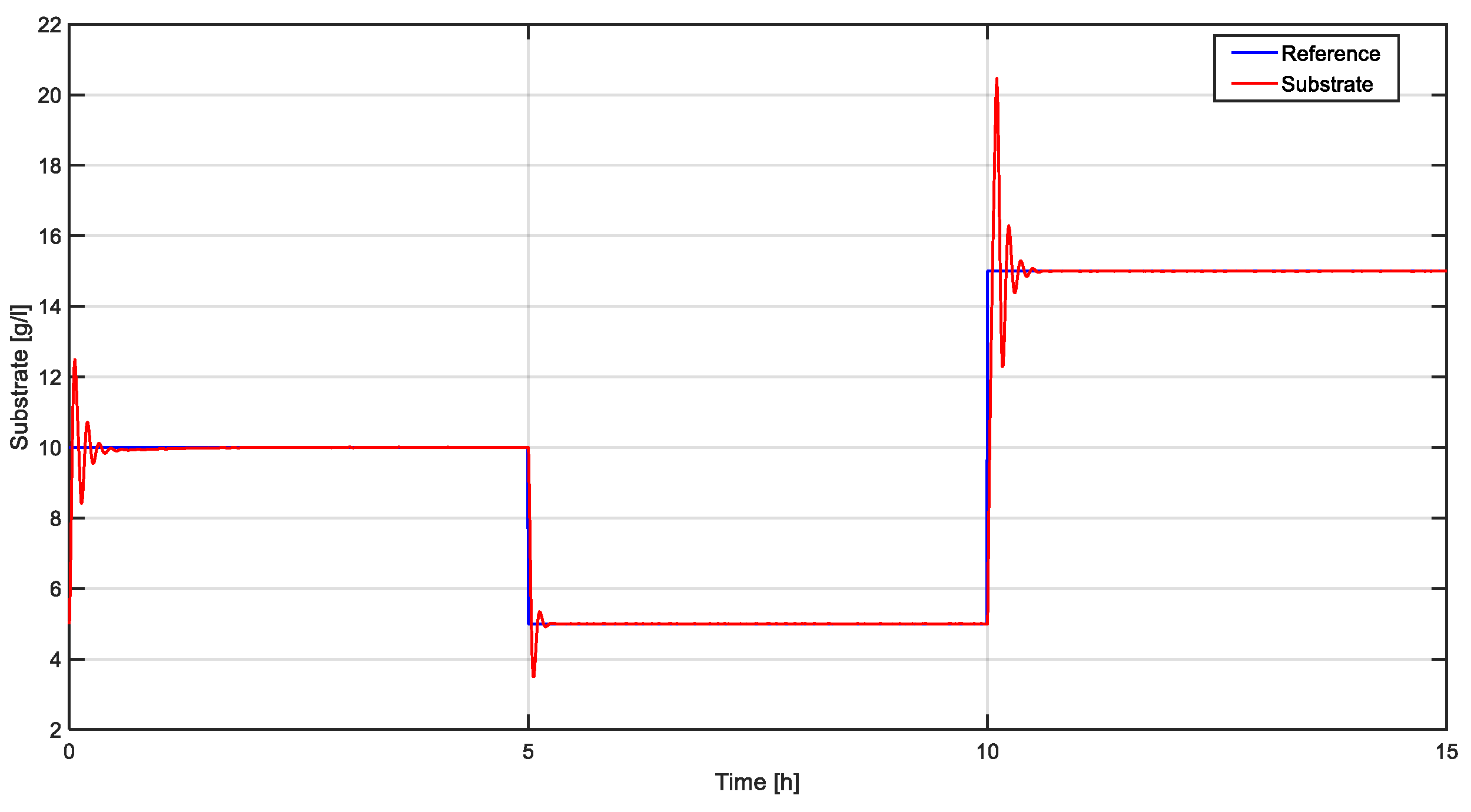

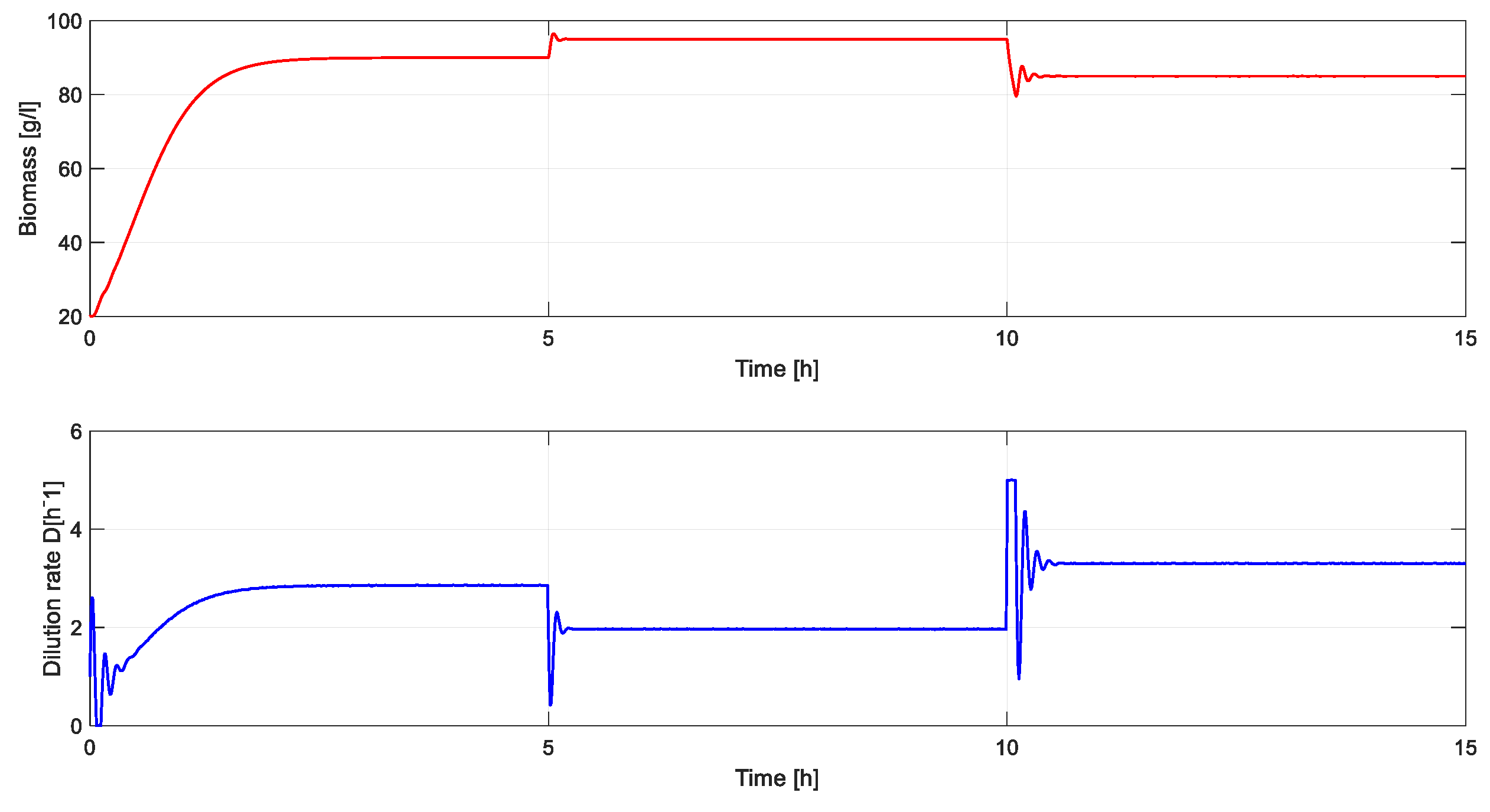

5.2. Classical Approach

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Notation | RL Element |
|---|---|
| $s_k$ | current state |
| $s_{k+1}$ | next state |
| $a_k$ | current action |
| $a_{k+1}$ | next action |
| $r_k$ | reward at state $s_k$ |
| Q | Q-function (critic) |
| π | policy function (actor) |
| δ | TD target |
| | action space |
| | state space |

| Parameter | Value |
|---|---|
| Mini-batch size | 128 |
| Experience buffer length | 500000 |
| Gaussian noise variance | 0.1 |
| Steps in episode | 200 |
| Maximum number of episodes | 1000 |
| Optimizer | Adam |
| Discount factor | 0.97 |
| Fully connected layer size | 32 |
| Critic learning rate | 0.01 |
| Actor learning rate | 0.01 |
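
To make the role of the experience buffer, mini-batch size, and exploration noise concrete, the following is a minimal sketch using only the Python standard library. `ReplayBuffer` and `exploration_noise` are hypothetical names; the defaults mirror the table values, and the conversion from the reported noise variance to a standard deviation is the only step added here.

```python
import math
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity store of transitions; the oldest entries are discarded first."""

    def __init__(self, capacity=500_000, seed=None):
        self.buffer = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def add(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size=128):
        # Draw distinct indices uniformly at random, then gather the transitions.
        indices = self.rng.sample(range(len(self.buffer)), batch_size)
        return [self.buffer[i] for i in indices]

    def __len__(self):
        return len(self.buffer)


def exploration_noise(action, variance=0.1, rng=random):
    """Add zero-mean Gaussian noise; gauss() expects a standard deviation."""
    return action + rng.gauss(0.0, math.sqrt(variance))
```

Training would normally start only once the buffer holds at least one mini-batch of transitions, since `sample` cannot draw more distinct items than are stored.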

| Tuning method | Kp | Ki | Kd | Overshoot [%] | Settling time [h] |
|---|---|---|---|---|---|
| TD3-based tuning | 0.62 | 3.57 | 0.00023 | 0 | 0.8 |
| Linearization-based tuning | 0.207 | 28.64 | 0.00037 | 20–40 | 0.4–0.5 |
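
The two performance indices in the table can be computed from a sampled step response roughly as follows. This is a sketch under stated assumptions: the function names are hypothetical, the reference is assumed positive, and the 2% settling band is a common convention rather than a value stated in the source. The sample response is invented purely to exercise the functions.

```python
def overshoot_percent(response, reference):
    """Peak excursion above the reference, as a percentage of the reference."""
    peak = max(response)
    return max(0.0, (peak - reference) / reference * 100.0)


def settling_time(times, response, reference, band=0.02):
    """First sample time after which the response stays inside the tolerance band."""
    tolerance = band * abs(reference)
    last_outside = -1
    for i, y in enumerate(response):
        if abs(y - reference) > tolerance:
            last_outside = i
    if last_outside == len(response) - 1:
        return None  # the response never settles within the recorded window
    return times[last_outside + 1]


# Invented step response (time in hours) used only as an example.
times = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5]
response = [0.0, 0.8, 1.2, 1.05, 1.01, 1.0]
print(overshoot_percent(response, 1.0), settling_time(times, response, 1.0))
```

A response that never exceeds the reference yields an overshoot of 0, which matches how the TD3-based row is reported.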

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).