Submitted: 28 January 2024

Posted: 29 January 2024


Abstract

Keywords:

1. Introduction

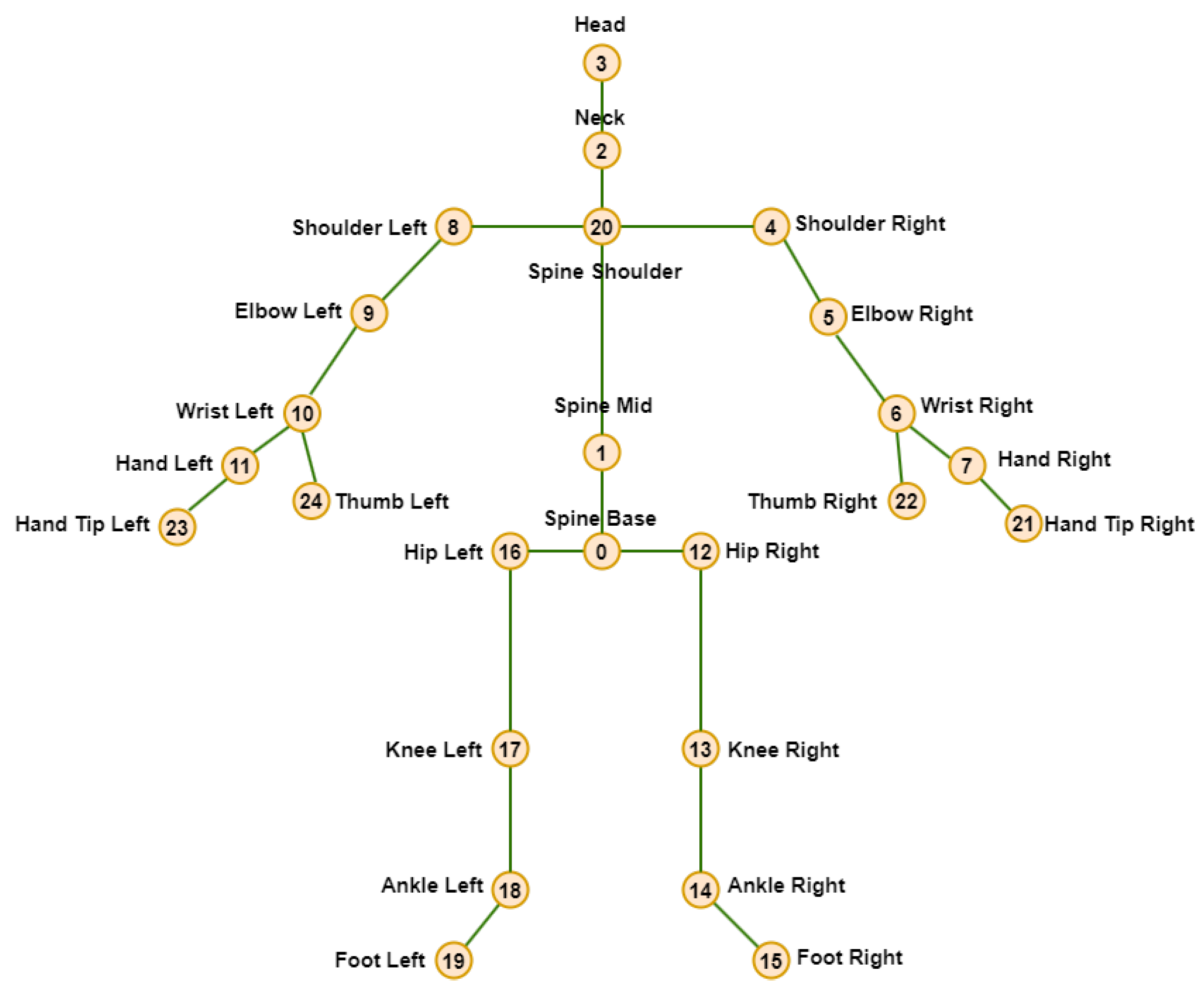

1.1. Introduction to Skeleton-based Human Motion Prediction

1.2. Description of the Work

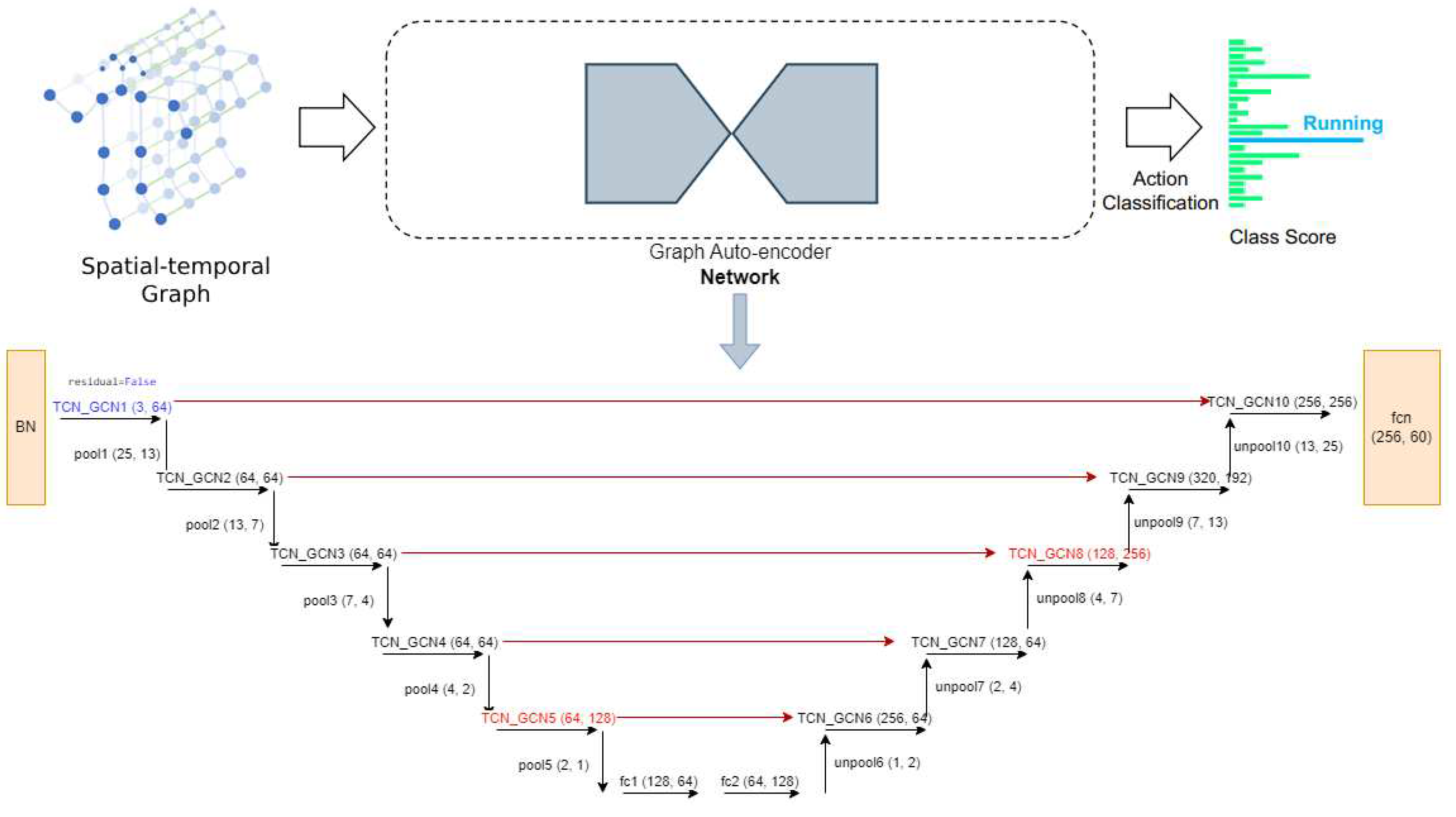

1.3. Graph Autoencoders

1.4. The Basic Description of the Graph Autoencoder Skeleton-based Human Action Recognition Algorithm

- A novel spatiotemporal graph-autoencoder network for skeleton-based human action recognition; our GA-GCN pipeline is illustrated in Figure 2. Additional skip connections were incorporated to improve the learning process by enabling the direct flow of information from earlier layers to later layers.

- Outperforming most existing methods on two common skeleton-based action recognition datasets, NTU RGB+D and NTU RGB+D 120.

- Achieving a notable performance improvement by introducing additional input modalities; see the experimental results in Section 4.
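To make the role of the skip connection in the first contribution concrete, the following is a minimal NumPy sketch of a graph autoencoder whose output is added back to its input. It is an illustration under stated assumptions, not the GA-GCN architecture itself: the single encoder and decoder layers, the symmetric adjacency normalization, the layer widths, and all function names are hypothetical choices made for this example.

```python
import numpy as np

def normalize_adjacency(A):
    """Symmetrically normalize A + I, as in standard GCN layers."""
    A_hat = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def gcn_layer(A_norm, X, W):
    """One graph convolution followed by ReLU: relu(A_norm @ X @ W)."""
    return np.maximum(A_norm @ X @ W, 0.0)

def autoencoder_with_skip(A, X, W_enc, W_dec):
    """Encode to a narrower width, decode back, then add the input (skip)."""
    A_norm = normalize_adjacency(A)
    Z = gcn_layer(A_norm, X, W_enc)      # bottleneck features, shape (V, d)
    X_rec = gcn_layer(A_norm, Z, W_dec)  # back to the input width, shape (V, C)
    return X_rec + X                     # skip connection: input flows through directly

# Toy 3-joint chain 0 - 1 - 2 with 4 features per joint (hypothetical data).
rng = np.random.default_rng(0)
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
X = rng.normal(size=(3, 4))
out = autoencoder_with_skip(A, X, rng.normal(size=(4, 2)), rng.normal(size=(2, 4)))
print(out.shape)
```

If the decoder contributes nothing (for example, all-zero decoder weights), the output equals the input, which is the "direct flow of information" that the skip connection provides.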

2. Related Work

2.1. Graph Convolutional Networks

2.2. GCN-based Skeleton Action Recognition

- Static and dynamic techniques: In static techniques, the topology of the GCN stays fixed during inference, whereas in dynamic techniques the topology is inferred dynamically during inference.

- Topology-shared and topology-non-shared techniques: In topology-shared techniques, a single topology is shared across all channels, whereas topology-non-shared techniques use different topologies for different channels or channel groups.
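The two distinctions above can be sketched in a few lines of NumPy. This is an illustrative sketch only: the function names are hypothetical, and the tanh of pairwise feature differences used for the dynamic case is an assumed refinement chosen for simplicity, not a formula taken from any of the cited methods.

```python
import numpy as np

def aggregate_shared(A, X):
    """Topology-shared: one (V, V) adjacency is applied to every channel.
    X has shape (C, V): C channels, V joints."""
    return X @ A.T

def aggregate_non_shared(A_per_channel, X):
    """Topology-non-shared: channel c uses its own adjacency A_per_channel[c].
    A_per_channel has shape (C, V, V)."""
    return np.einsum("cvu,cu->cv", A_per_channel, X)

def dynamic_topology(A_static, X):
    """Dynamic: add a sample-dependent term to the static adjacency.
    The tanh of pairwise feature differences is an illustrative assumption."""
    mean_feat = X.mean(axis=0)  # (V,) channel-averaged feature per joint
    refinement = np.tanh(mean_feat[:, None] - mean_feat[None, :])
    return A_static + refinement

# Toy example: 3 joints, 2 channels (hypothetical data).
A = np.array([[1.0, 1.0, 0.0], [1.0, 1.0, 1.0], [0.0, 1.0, 1.0]])
X = np.arange(6, dtype=float).reshape(2, 3)
shared_out = aggregate_shared(A, X)
non_shared_out = aggregate_non_shared(np.stack([A, A]), X)
A_dyn = dynamic_topology(A, X)
```

When every channel is given the same adjacency, the non-shared aggregation reduces to the shared one; a static method keeps `A` fixed for every sample, while a dynamic method recomputes something like `A_dyn` per input.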

3. Materials and Methods

3.1. Datasets

- Cross-subject (X-sub), NTU RGB+D: 20 subjects provide the training data, and the remaining 20 provide the testing data.

- Cross-view (X-view), NTU RGB+D: The testing data come from the views of camera 1, while the training data come from the views of cameras 2 and 3.

- Cross-subject (X-sub), NTU RGB+D 120: 53 subjects provide the training data, and the remaining 53 provide the testing data.

- Cross-setup (X-setup), NTU RGB+D 120: The 32 setups are split by ID parity: samples with even-numbered setup IDs provide the training data, and samples with odd-numbered setup IDs provide the testing data.
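The evaluation protocols listed above amount to simple filters over sample metadata. The sketch below assumes each sample is a dictionary with hypothetical `subject`, `camera`, and `setup` fields; the set of training subject IDs is not given in this section, so it is passed in as a parameter rather than hard-coded.

```python
def cross_subject_split(samples, train_subjects):
    """Samples from the given training subjects -> train, all others -> test."""
    train = [s for s in samples if s["subject"] in train_subjects]
    test = [s for s in samples if s["subject"] not in train_subjects]
    return train, test

def cross_view_split(samples):
    """Cameras 2 and 3 -> train, camera 1 -> test."""
    train = [s for s in samples if s["camera"] in (2, 3)]
    test = [s for s in samples if s["camera"] == 1]
    return train, test

def cross_setup_split(samples):
    """Even-numbered setup IDs -> train, odd-numbered setup IDs -> test."""
    train = [s for s in samples if s["setup"] % 2 == 0]
    test = [s for s in samples if s["setup"] % 2 == 1]
    return train, test

# Hypothetical metadata records, for illustration only.
samples = [
    {"subject": 1, "camera": 1, "setup": 1},
    {"subject": 2, "camera": 2, "setup": 2},
    {"subject": 3, "camera": 3, "setup": 4},
]
setup_train, setup_test = cross_setup_split(samples)
view_train, view_test = cross_view_split(samples)
subj_train, subj_test = cross_subject_split(samples, train_subjects={1, 2})
```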

3.2. Preliminaries

3.3. Spatiotemporal Graph Autoencoder Network for Skeleton-based Human Action Recognition Algorithm

3.4. Spatiotemporal Input Representations

3.5. Modalities of GA-GCN

4. Results

4.1. Implementation Details

4.2. Experimental results

5. Discussion

5.1. Comparison of GA-GCN Modalities

5.2. Comparison with the State-of-the-Art

6. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Shahroudy, A.; Liu, J.; Ng, T.T.; Wang, G. NTU RGB+D: A Large Scale Dataset for 3D Human Activity Analysis. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016.

- Liu, J.; Shahroudy, A.; Perez, M.; Wang, G.; Duan, L.Y.; Kot, A.C. NTU RGB+D 120: A Large-Scale Benchmark for 3D Human Activity Understanding. IEEE Transactions on Pattern Analysis and Machine Intelligence 2019.

- Kay, W.; Carreira, J.; Simonyan, K.; Zhang, B.; Hillier, C.; Vijayanarasimhan, S.; Viola, F.; Green, T.; Back, T.; Natsev, P.; et al. The Kinetics human action video dataset. arXiv 2017, arXiv:1705.06950.

- Liu, J.; Shahroudy, A.; Wang, G.; Duan, L.Y.; Kot, A.C. Skeleton-based online action prediction using scale selection network. IEEE Transactions on Pattern Analysis and Machine Intelligence 2019, 42, 1453–1467.

- Johansson, G. Visual perception of biological motion and a model for its analysis. Perception & Psychophysics 1973, 14, 201–211.

- Li, M.; Chen, S.; Chen, X.; Zhang, Y.; Wang, Y.; Tian, Q. Actional-structural graph convolutional networks for skeleton-based action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 3595–3603.

- Liu, J.; Shahroudy, A.; Xu, D.; Kot, A.C.; Wang, G. Skeleton-based action recognition using spatio-temporal LSTM network with trust gates. IEEE Transactions on Pattern Analysis and Machine Intelligence 2017, 40, 3007–3021.

- Liu, J.; Wang, G.; Duan, L.Y.; Abdiyeva, K.; Kot, A.C. Skeleton-based human action recognition with global context-aware attention LSTM networks. IEEE Transactions on Image Processing 2017, 27, 1586–1599.

- Hamilton, W.; Ying, Z.; Leskovec, J. Inductive representation learning on large graphs. Advances in Neural Information Processing Systems 2017, 30.

- Kipf, T.N.; Welling, M. Variational graph auto-encoders. arXiv 2016, arXiv:1611.07308.

- Chen, Y.; Zhang, Z.; Yuan, C.; Li, B.; Deng, Y.; Hu, W. Channel-wise topology refinement graph convolution for skeleton-based action recognition. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 13359–13368.

- Cai, Y.; Ge, L.; Liu, J.; Cai, J.; Cham, T.J.; Yuan, J.; Thalmann, N.M. Exploiting spatial-temporal relationships for 3D pose estimation via graph convolutional networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, South Korea, 27 October–2 November 2019; pp. 2272–2281.

- Malik, J.; Elhayek, A.; Guha, S.; Ahmed, S.; Gillani, A.; Stricker, D. DeepAirSig: End-to-End Deep Learning Based in-Air Signature Verification. IEEE Access 2020, 8, 195832–195843.

- Bruna, J.; Zaremba, W.; Szlam, A.; Lecun, Y. Spectral Networks and Locally Connected Networks on Graphs. In Proceedings of the International Conference on Learning Representations (ICLR), Banff, AB, Canada, 14–16 April 2014.

- Defferrard, M.; Bresson, X.; Vandergheynst, P. Convolutional neural networks on graphs with fast localized spectral filtering. Advances in Neural Information Processing Systems 2016, 29.

- Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. In Proceedings of the International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017.

- Duvenaud, D.K.; Maclaurin, D.; Iparraguirre, J.; Bombarell, R.; Hirzel, T.; Aspuru-Guzik, A.; Adams, R.P. Convolutional networks on graphs for learning molecular fingerprints. Advances in Neural Information Processing Systems 2015, 28.

- Niepert, M.; Ahmed, M.; Kutzkov, K. Learning convolutional neural networks for graphs. In Proceedings of the International Conference on Machine Learning, PMLR, New York, NY, USA, 20–22 June 2016.

- Veličković, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; Bengio, Y. Graph attention networks. In Proceedings of the International Conference on Learning Representations (ICLR), Vancouver, BC, Canada, 30 April–3 May 2018.

- Liu, Z.; Zhang, H.; Chen, Z.; Wang, Z.; Ouyang, W. Disentangling and unifying graph convolutions for skeleton-based action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020.

- Shi, L.; Zhang, Y.; Cheng, J.; Lu, H. Two-stream adaptive graph convolutional networks for skeleton-based action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 12026–12035.

- Tang, Y.; Tian, Y.; Lu, J.; Li, P.; Zhou, J. Deep progressive reinforcement learning for skeleton-based action recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 5323–5332.

- Veeriah, V.; Zhuang, N.; Qi, G.J. Differential recurrent neural networks for action recognition. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 4041–4049.

- Yan, S.; Xiong, Y.; Lin, D. Spatial temporal graph convolutional networks for skeleton-based action recognition. In Proceedings of the Thirty-Second AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018.

- Ye, F.; Pu, S.; Zhong, Q.; Li, C.; Xie, D.; Tang, H. Dynamic GCN: Context-enriched topology learning for skeleton-based action recognition. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020.

- Zhao, R.; Wang, K.; Su, H.; Ji, Q. Bayesian graph convolution LSTM for skeleton based action recognition. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, South Korea, 27 October–2 November 2019; pp. 6882–6892.

- Huang, Z.; Shen, X.; Tian, X.; Li, H.; Huang, J.; Hua, X.S. Spatio-temporal inception graph convolutional networks for skeleton-based action recognition. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 2122–2130.

- Zhang, P.; Lan, C.; Zeng, W.; Xing, J.; Xue, J.; Zheng, N. Semantics-guided neural networks for efficient skeleton-based human action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020.

- Cheng, K.; Zhang, Y.; Cao, C.; Shi, L.; Cheng, J.; Lu, H. Decoupling GCN with DropGraph module for skeleton-based action recognition. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, 23–28 August 2020, Proceedings, Part XXIV; Springer: Berlin, Germany; pp. 536–553.

- Chen, Y.; Dai, X.; Liu, M.; Chen, D.; Yuan, L.; Liu, Z. Dynamic convolution: Attention over convolution kernels. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 11030–11039.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Li, S.; Li, W.; Cook, C.; Zhu, C.; Gao, Y. Independently recurrent neural network (IndRNN): Building a longer and deeper RNN. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 5457–5466.

- Li, C.; Zhong, Q.; Xie, D.; Pu, S. Co-occurrence feature learning from skeleton data for action recognition and detection with hierarchical aggregation. arXiv 2018, arXiv:1804.06055.

- Si, C.; Chen, W.; Wang, W.; Wang, L.; Tan, T. An attention enhanced graph convolutional LSTM network for skeleton-based action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 1227–1236.

- Shi, L.; Zhang, Y.; Cheng, J.; Lu, H. Skeleton-based action recognition with directed graph neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 7912–7921.

- Cheng, K.; Zhang, Y.; He, X.; Chen, W.; Cheng, J.; Lu, H. Skeleton-based action recognition with shift graph convolutional network. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 183–192.

- Song, Y.F.; Zhang, Z.; Shan, C.; Wang, L. Stronger, faster and more explainable: A graph convolutional baseline for skeleton-based action recognition. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 1625–1633.

- Korban, M.; Li, X. DDGCN: A dynamic directed graph convolutional network for action recognition. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, 23–28 August 2020, Proceedings, Part XX; Springer: Berlin, Germany; pp. 761–776.

- Shi, L.; Zhang, Y.; Cheng, J.; Lu, H. Decoupled spatial-temporal attention network for skeleton-based action-gesture recognition. In Proceedings of the Asian Conference on Computer Vision, Kyoto, Japan, 30 November–4 December 2020.

- Plizzari, C.; Cannici, M.; Matteucci, M. Skeleton-based action recognition via spatial and temporal transformer networks. Computer Vision and Image Understanding 2021, 208, 103219.

- Chen, Z.; Li, S.; Yang, B.; Li, Q.; Liu, H. Multi-scale spatial temporal graph convolutional network for skeleton-based action recognition. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 2–9 February 2021; pp. 1113–1122.

- Trivedi, N.; Sarvadevabhatla, R.K. PSUMNet: Unified Modality Part Streams are All You Need for Efficient Pose-based Action Recognition. In Computer Vision–ECCV 2022 Workshops: Proceedings, Part V; Springer: Berlin, Germany, 2023; pp. 211–227.

- Liu, J.; Shahroudy, A.; Xu, D.; Wang, G. Spatio-temporal LSTM with trust gates for 3D human action recognition. In Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, 11–14 October 2016, Proceedings, Part III; Springer: Berlin, Germany; pp. 816–833.

- Ke, Q.; Bennamoun, M.; An, S.; Sohel, F.; Boussaid, F. Learning clip representations for skeleton-based 3D action recognition. IEEE Transactions on Image Processing 2018, 27, 2842–2855.

| Modality | bone | vel | fast-motion |
|---|---|---|---|
| joint | FALSE | FALSE | FALSE |
| joint motion | FALSE | TRUE | FALSE |
| bone | TRUE | FALSE | FALSE |
| bone motion | TRUE | TRUE | FALSE |
| joint fast motion | FALSE | FALSE | TRUE |
| joint motion fast motion | FALSE | TRUE | TRUE |
| bone fast motion | TRUE | FALSE | TRUE |
| bone motion fast motion | TRUE | TRUE | TRUE |
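The flag combinations in the table above can be read as a small configuration scheme. The sketch below shows one way the `bone` and `vel` flags are commonly turned into input features, plus a helper that reproduces the modality names in the table. Defining a bone as a joint minus its parent joint and motion as a frame-to-frame difference are assumptions based on common practice, not definitions taken from this section; the `fast-motion` flag is not defined here, so the sketch only carries it through to the name. All function names and the toy skeleton are hypothetical.

```python
import numpy as np

def to_modality(joints, parents, bone=False, vel=False):
    """joints: (T, V, 3) array of T frames and V joints.
    parents: length-V list giving each joint's parent index (root points to itself)."""
    x = joints
    if bone:
        # Assumed bone definition: vector from the parent joint to the joint.
        x = x - x[:, parents, :]
    if vel:
        # Assumed motion definition: difference between consecutive frames,
        # with the last frame left at zero.
        motion = np.zeros_like(x)
        motion[:-1] = x[1:] - x[:-1]
        x = motion
    return x

def modality_name(bone, vel, fast_motion):
    """Map the three flags to the modality names used in the table."""
    name = "bone" if bone else "joint"
    if vel:
        name += " motion"
    if fast_motion:
        name += " fast motion"
    return name

# Toy skeleton: 2 frames, 3 joints in a chain, x-coordinate = frame + joint index.
parents = [0, 0, 1]
joints = np.zeros((2, 3, 3))
for t in range(2):
    for v in range(3):
        joints[t, v, 0] = t + v
bones = to_modality(joints, parents, bone=True)
motion = to_modality(joints, parents, vel=True)
```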

| Methods | Accuracy (%) |
|---|---|
| GA-GCN joint modality | 95.14 |
| GA-GCN joint motion modality | 93.05 |
| GA-GCN bone modality | 94.77 |
| GA-GCN bone motion modality | 91.99 |
| GA-GCN ensemble of joint, joint motion, bone and bone motion modalities (on our machine) | 96.51 |
| GA-GCN joint fast motion modality | 94.63 |
| GA-GCN joint motion fast motion modality | 92.61 |
| GA-GCN bone fast motion modality | 94.41 |
| GA-GCN bone motion fast motion modality | 91.54 |
| GA-GCN ensemble of joint fast motion, joint motion fast motion, bone fast motion and bone motion fast motion modalities (on our machine) | 96.36 |
| GA-GCN with 8 modalities (joint, joint motion, bone, bone motion, joint fast motion, joint motion fast motion, bone fast motion and bone motion fast motion) | 96.7 |
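The ensemble rows in the table above combine the predictions of several single-modality models. This section does not state how the scores are fused, so the following is only a sketch of one common choice: a weighted sum of per-class scores followed by an argmax. The function name, the equal default weights, and the toy scores are assumptions made for illustration.

```python
import numpy as np

def ensemble_predict(scores_per_modality, weights=None):
    """Fuse per-modality class scores by a weighted sum and pick the top class.
    scores_per_modality: list of M arrays, each of shape (N, K)
    for N samples and K classes."""
    stacked = np.stack(scores_per_modality)         # (M, N, K)
    if weights is None:
        weights = np.ones(len(scores_per_modality))  # equal weights by default
    fused = np.tensordot(np.asarray(weights, dtype=float), stacked, axes=1)  # (N, K)
    return fused.argmax(axis=1)

# Toy scores for 2 samples and 2 classes from two hypothetical modalities.
joint_scores = np.array([[0.6, 0.4], [0.8, 0.2]])
bone_scores = np.array([[0.1, 0.9], [0.6, 0.4]])
equal_pred = ensemble_predict([joint_scores, bone_scores])
weighted_pred = ensemble_predict([joint_scores, bone_scores], weights=[5.0, 1.0])
```

In the toy data, the two modalities disagree on the first sample; with equal weights the more confident bone score decides, while a large weight on the joint stream flips the decision.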

| Methods | NTU RGB+D X-Sub (%) | NTU RGB+D X-View (%) |
|---|---|---|
| Ind-RNN [32] | 81.8 | 88.0 |
| HCN [33] | 86.5 | 91.1 |
| ST-GCN [24] | 81.5 | 88.3 |
| 2s-AGCN [21] | 88.5 | 95.1 |
| SGN [28] | 89.0 | 94.5 |
| AGC-LSTM [34] | 89.2 | 95.0 |
| DGNN [35] | 89.9 | 96.1 |
| Shift-GCN [36] | 90.7 | 96.5 |
| DC-GCN+ADG [29] | 90.8 | 96.6 |
| PA-ResGCN-B19 [37] | 90.9 | 96.0 |
| DDGCN [38] | 91.1 | 97.1 |
| Dynamic GCN [25] | 91.5 | 96.0 |
| MS-G3D [20] | 91.5 | 96.2 |
| CTR-GCN [11] | 92.4 | 96.8 |
| DSTA-Net [39] | 91.5 | 96.4 |
| ST-TR [40] | 89.9 | 96.1 |
| 4s-MST-GCN [41] | 91.5 | 96.6 |
| PSUMNet [42] | 92.9 | 96.7 |
| GA-GCN | 92.3 | 96.7 |

| Methods | NTU RGB+D 120 X-Sub (%) | NTU RGB+D 120 X-Set (%) |
|---|---|---|
| ST-LSTM [43] | 55.7 | 57.9 |
| GCA-LSTM [8] | 61.2 | 63.3 |
| RotClips+MTCNN [44] | 62.2 | 61.8 |
| ST-GCN [24] | 70.7 | 73.2 |
| SGN [28] | 79.2 | 81.5 |
| 2s-AGCN [21] | 82.9 | 84.9 |
| Shift-GCN [36] | 85.9 | 87.6 |
| DC-GCN+ADG [29] | 86.5 | 88.1 |
| MS-G3D [20] | 86.9 | 88.4 |
| PA-ResGCN-B19 [37] | 87.3 | 88.3 |
| Dynamic GCN [25] | 87.3 | 88.6 |
| CTR-GCN [11] | 88.9 | 90.6 |
| DSTA-Net [39] | 86.6 | 89.0 |
| ST-TR [40] | 82.7 | 84.7 |
| 4s-MST-GCN [41] | 87.5 | 88.8 |
| PSUMNet [42] | 89.4 | 90.6 |
| GA-GCN | 88.8 | 90.4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).