Submitted: 23 January 2024

Posted: 24 January 2024


Abstract

Keywords:

1. Introduction

- This study aims to develop a personalized federated learning process to mitigate the impact of data heterogeneity on federated learning.

- 2.

- A each client.

- 3.

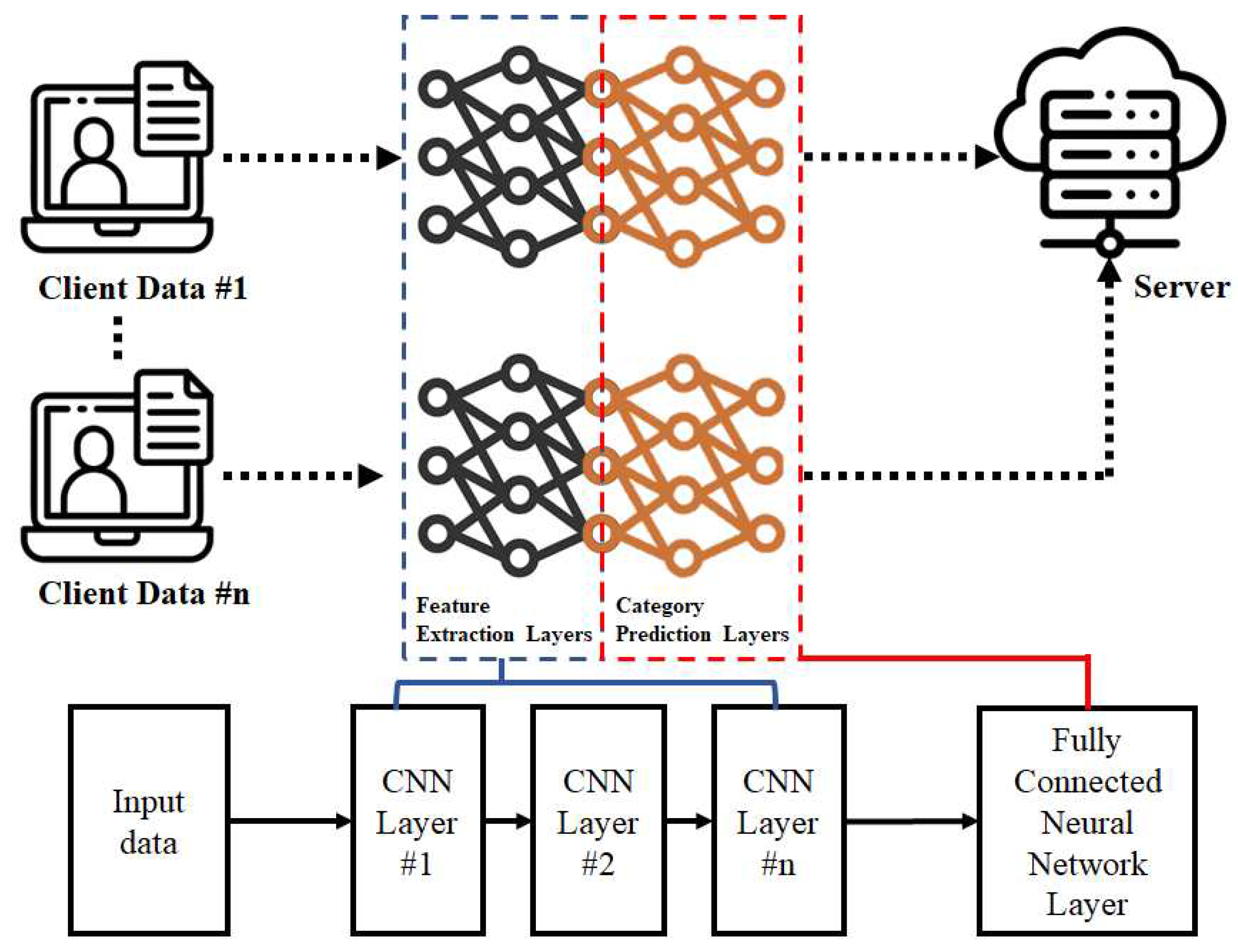

- This method includes personalized feature extraction for client data.

2. Related Work

- 4.

- neural network model is used to establish personalized models for The concept of federated learning was first presented by Google in 2017. This facilitates the training of machine learning models without centralized data. Instead, user data are stored on the client’s side, and all clients participate in the training process. The primary objective of federated learning is to safeguard user privacy and achieve a more generalized model. During training, client-side processing exclusively handles user data, and the model gradient undergoes encryption upon returning to the server to prevent access by other clients. Furthermore, in federated learning, the server-side aggregation algorithm considers all client neural network model parameters to yield a more generalized model. McMahan et al. proposed a Federated Averaging (FedAvg) algorithm to aggregate client model parameters on the server side in federated learning [13]. The FedAvg algorithm computes the average of the client model parameters and employs them as a global model for a specific round.
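The server-side averaging step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the `Params` layout (a dictionary of flattened weight lists) and the function name `fedavg` are assumptions made for the example. Following McMahan et al. [13], each client is weighted by its number of training samples; with equal sample counts this reduces to the plain average.

```python
from typing import Dict, List

# Hypothetical layout: layer name -> flattened list of weights.
Params = Dict[str, List[float]]


def fedavg(client_params: List[Params], client_sizes: List[int]) -> Params:
    """Average client parameters, weighting each client by its sample count."""
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    global_params: Params = {}
    for name in client_params[0]:
        averaged = [0.0] * len(client_params[0][name])
        for params, size in zip(client_params, client_sizes):
            weight = size / total  # share of all training samples
            for k, value in enumerate(params[name]):
                averaged[k] += weight * value
        global_params[name] = averaged
    return global_params
```

The returned dictionary would serve as the global model for the current round and be sent to the clients selected for the next one.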

3. Research Methodology

3.1. Personalized Client Models

3.2. Adaptive Feature Extraction

| Algorithm: Adaptive algorithm in the federated learning model |
|---|
| Let Wf be the parameters of the feature extraction layers.<br>Let Wg be the parameters of the category prediction layer.<br>Let i be the ith global round.<br>Let c be the cth selected client.<br>Let γ be the learning rate.<br>Let β be the mixing ratio, with an initial value of 0.5.<br>for i = 1, 2, ..., N do<br>&emsp;set β to initial value<br>&emsp;if i == N then<br>&emsp;&emsp;all clients do<br>&emsp;&emsp;&emsp;new β = betaupdate(β)<br>&emsp;&emsp;&emsp;client own model ← ()<br>&emsp;&emsp;break<br>&emsp;else<br>&emsp;&emsp;each selected client C ∈ {C1, C2, ..., Cm} in parallel do<br>&emsp;&emsp;&emsp;receive from server<br>&emsp;&emsp;&emsp;new β = betaupdate(β)<br>&emsp;&emsp;&emsp;() ← modelupdate((<br>&emsp;&emsp;&emsp;keep the<br>&emsp;&emsp;&emsp;send back to server<br>finish the federated learning |
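One possible reading of a single client round in the algorithm above is sketched below. Several symbols in the algorithm did not survive, so the sketch rests on explicit assumptions: the client receives the global feature-extraction parameters Wf from the server, blends them with its local copy using β, keeps the category-prediction parameters Wg local, and sends only Wf back. The blending formula, the `Client` class, and the callables `beta_update` and `model_update` (stand-ins for `betaupdate` and `modelupdate`, whose definitions are not given here) are all hypothetical.

```python
from typing import Callable, List, Tuple

Vector = List[float]


def mix_features(local_wf: Vector, global_wf: Vector, beta: float) -> Vector:
    """Blend local and global feature-extraction weights.

    ASSUMPTION: beta weights the local copy and (1 - beta) the global copy;
    the source does not state which side beta applies to.
    """
    if not 0.0 <= beta <= 1.0:
        raise ValueError("beta must lie in [0, 1]")
    if len(local_wf) != len(global_wf):
        raise ValueError("weight vectors must have equal length")
    return [beta * l + (1.0 - beta) * g for l, g in zip(local_wf, global_wf)]


class Client:
    """Hypothetical client holding Wf (shared) and Wg (kept local)."""

    def __init__(self, wf: Vector, wg: Vector, beta: float = 0.5) -> None:
        self.wf = list(wf)
        self.wg = list(wg)
        self.beta = beta  # initial mixing ratio of 0.5, as in the algorithm

    def run_round(
        self,
        global_wf: Vector,
        beta_update: Callable[[float], float],
        model_update: Callable[[Vector, Vector], Tuple[Vector, Vector]],
    ) -> Vector:
        # new beta = betaupdate(beta); the update rule is supplied by the caller
        self.beta = beta_update(self.beta)
        # ASSUMPTION: the received global Wf is mixed into the local Wf
        self.wf = mix_features(self.wf, global_wf, self.beta)
        # local training step standing in for modelupdate
        self.wf, self.wg = model_update(self.wf, self.wg)
        # ASSUMPTION: only Wf is sent back; Wg never leaves the client
        return list(self.wf)
```

With β fixed at 0.5 and an identity training step, a client holding Wf = [1.0, 1.0] that receives [3.0, 5.0] would send back [2.0, 3.0] while its Wg stays unchanged.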

3.3. Adaptive Mixing Ratio - β

4. Experiment Results and Analysis

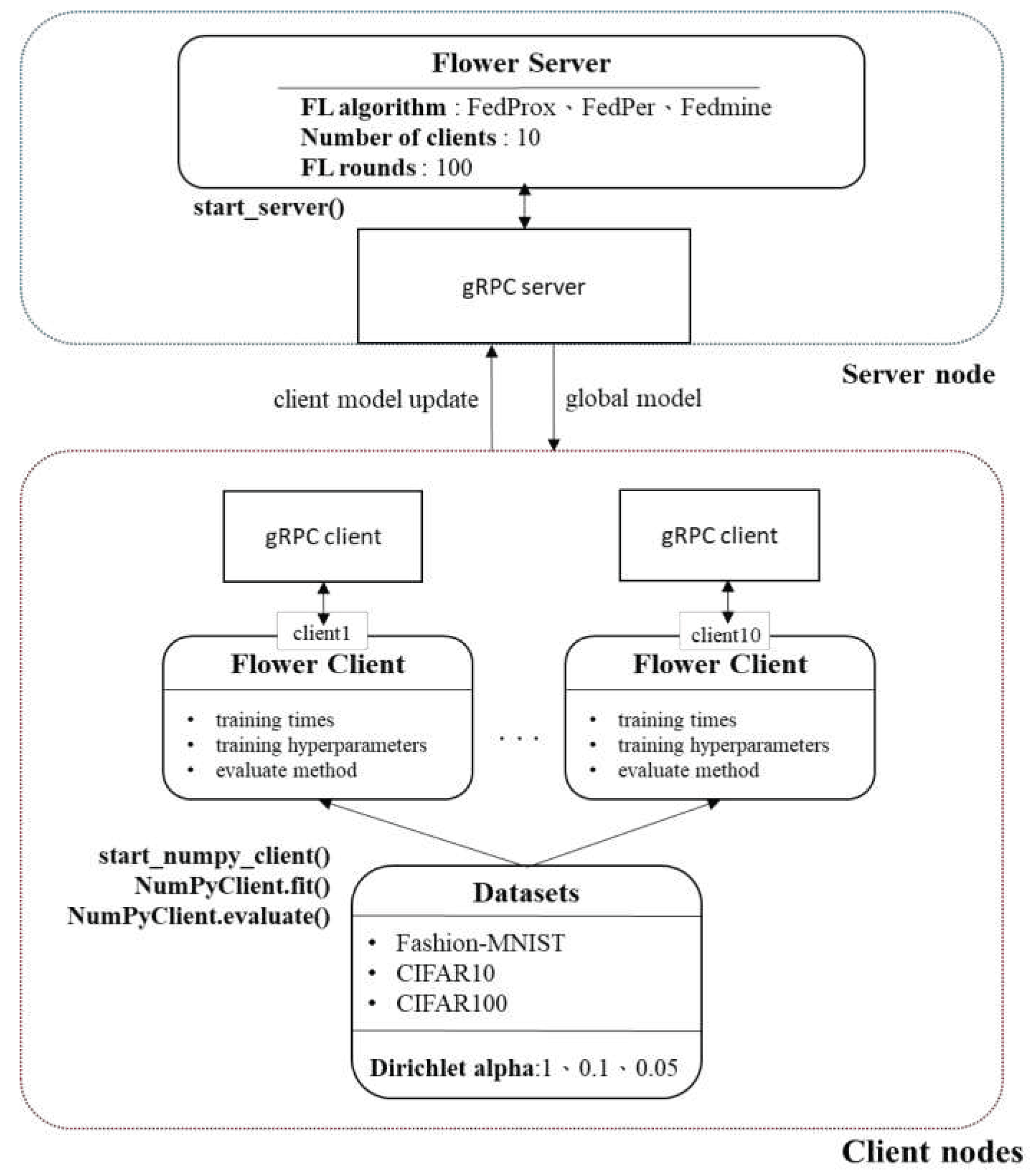

4.1. Experimental Framework and Dataset

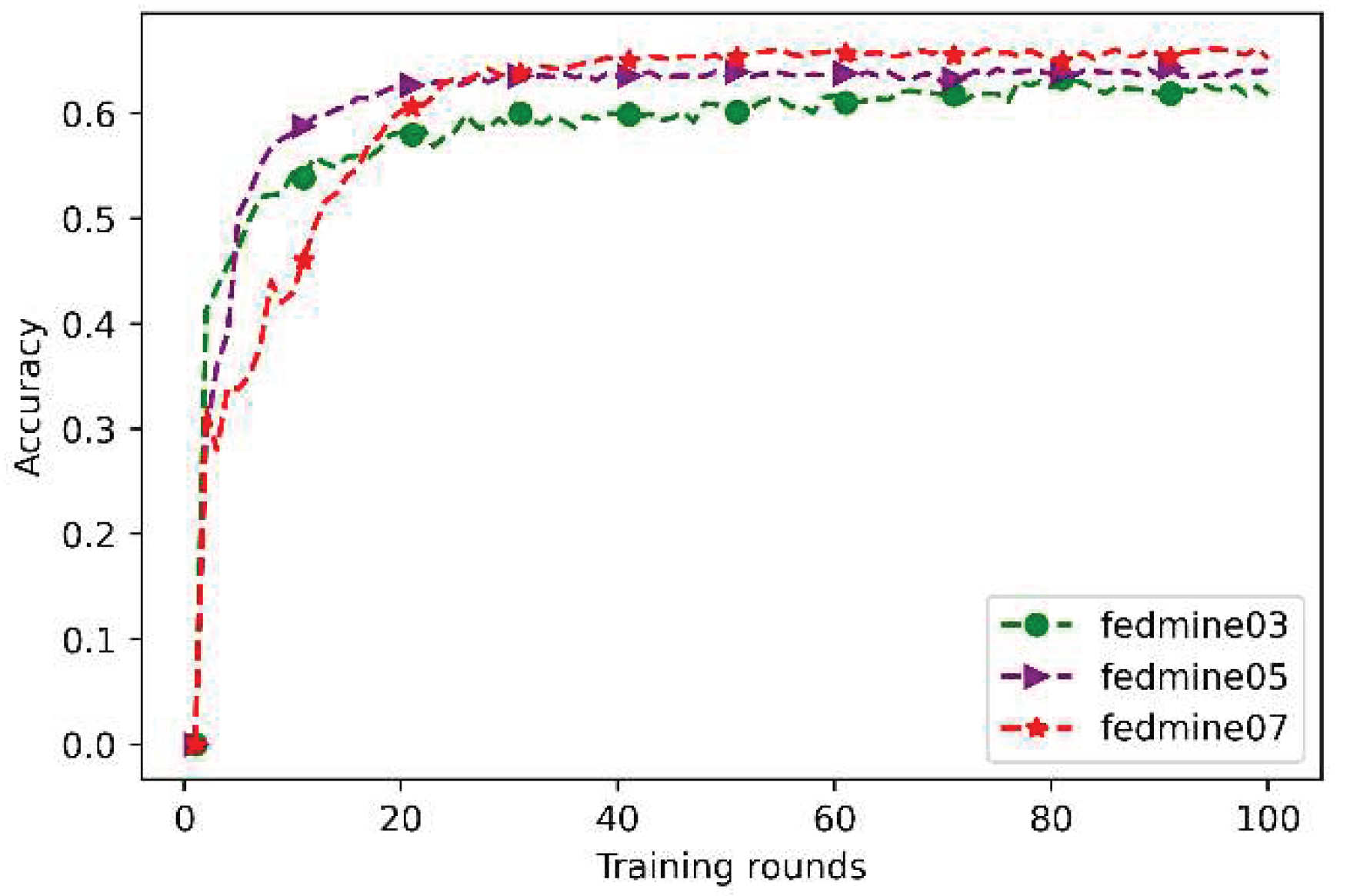

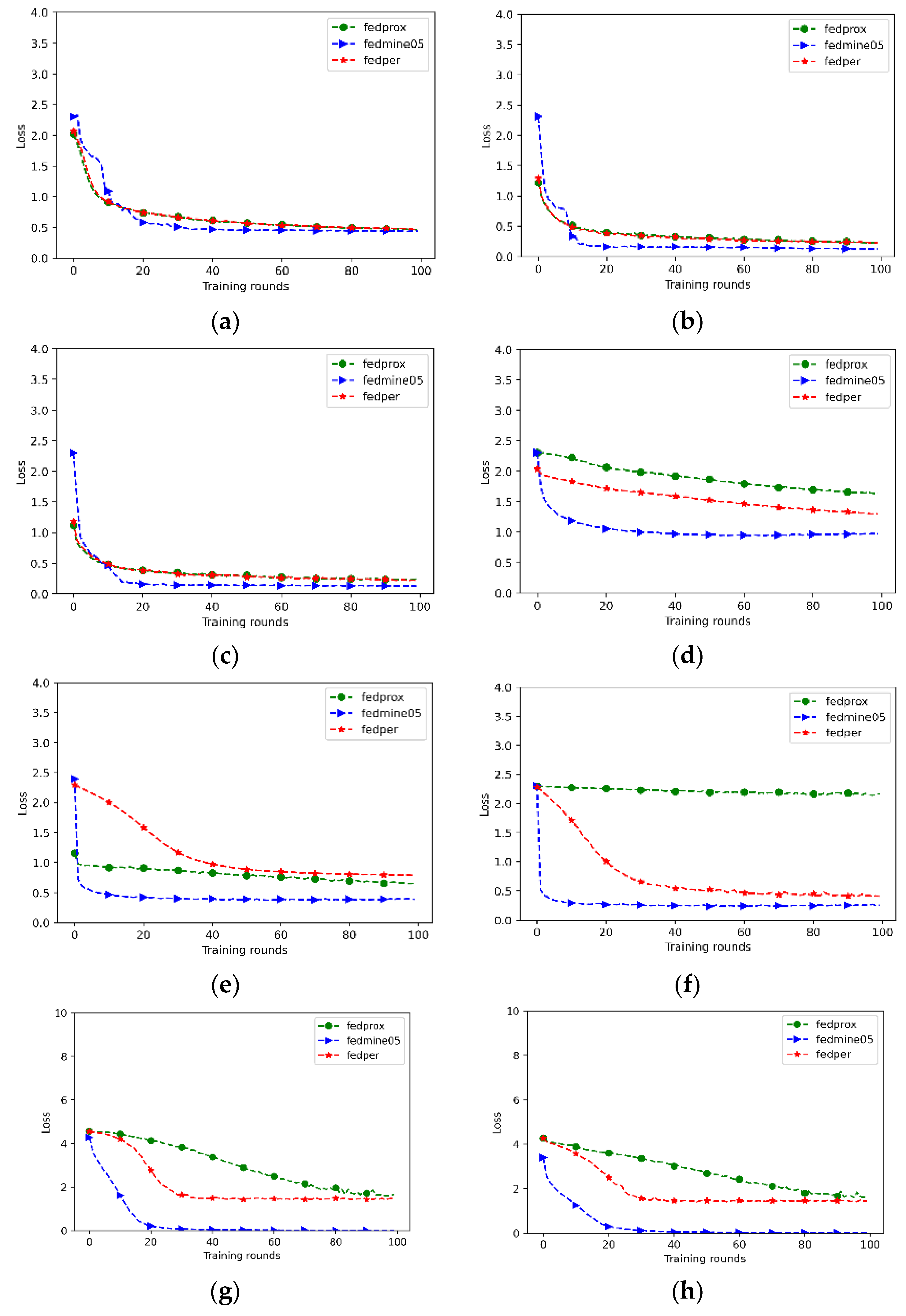

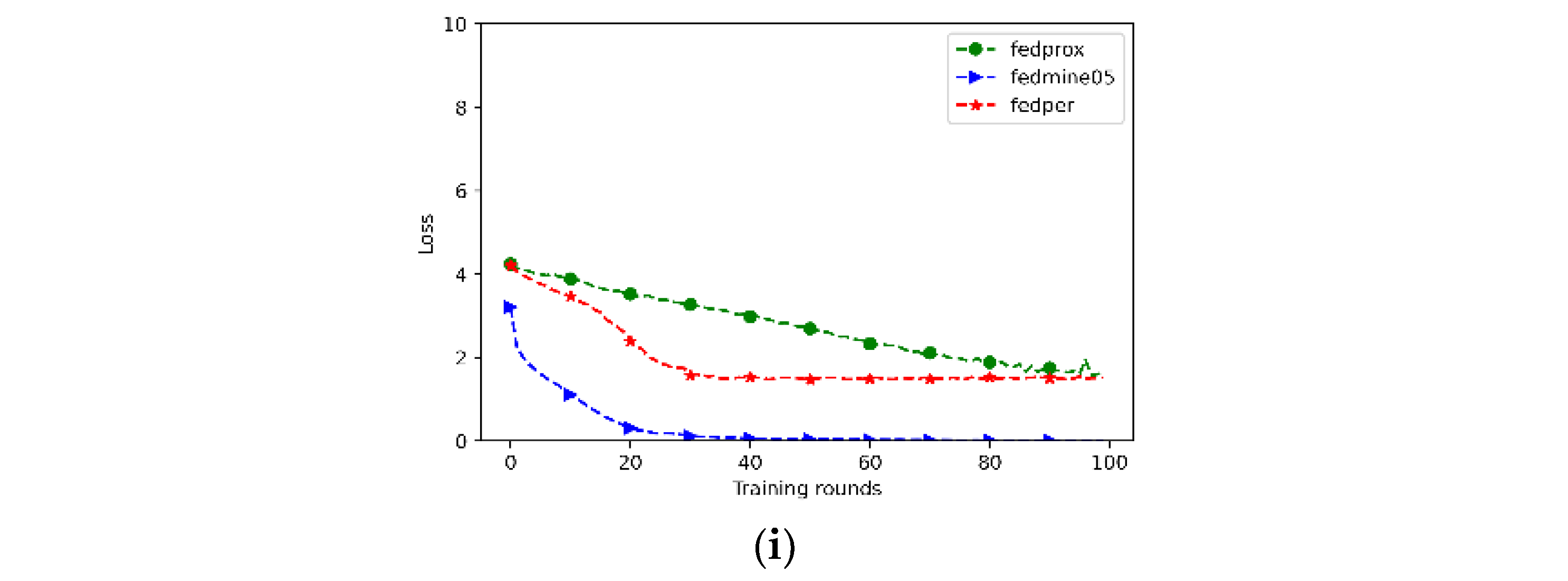

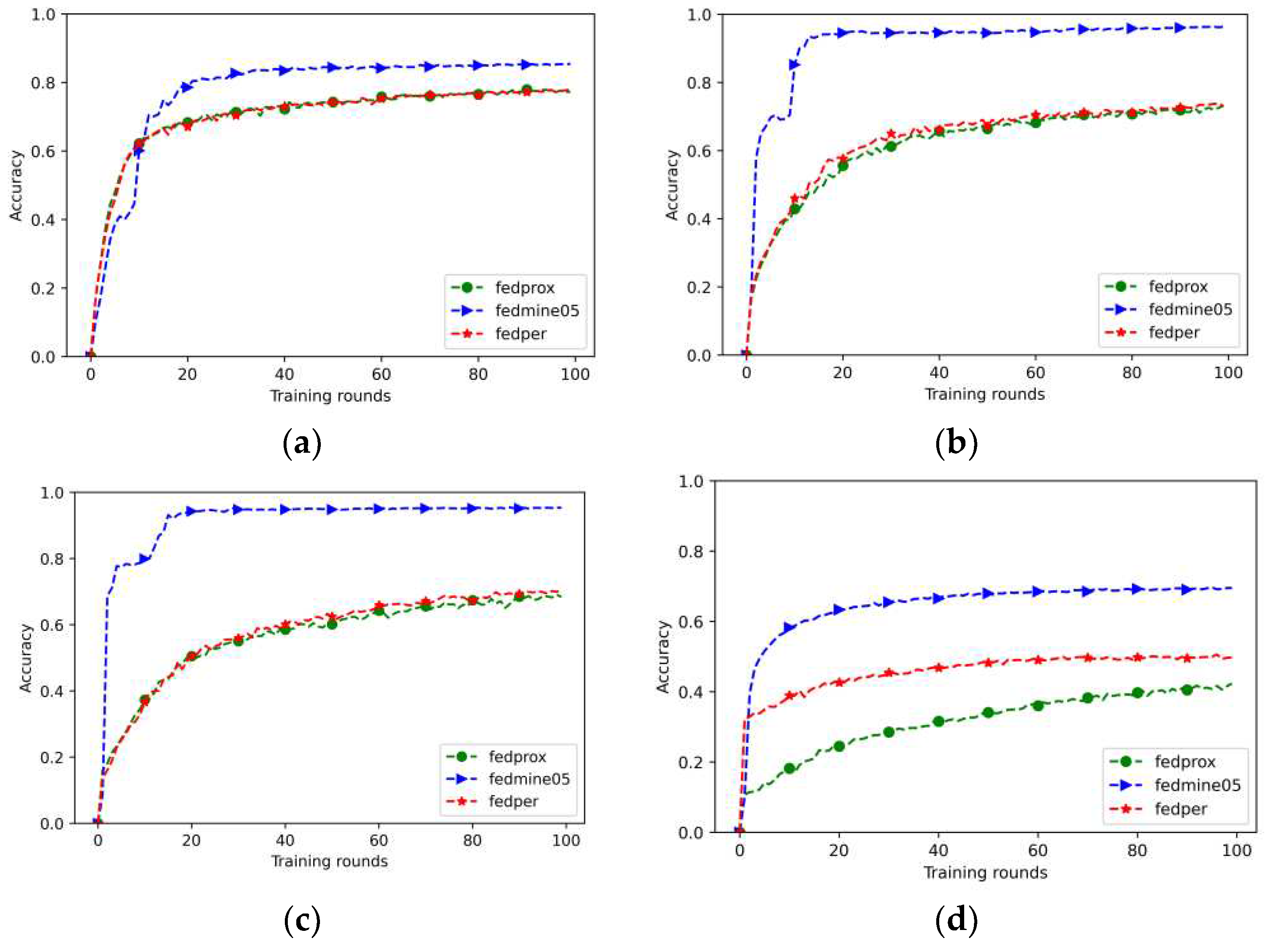

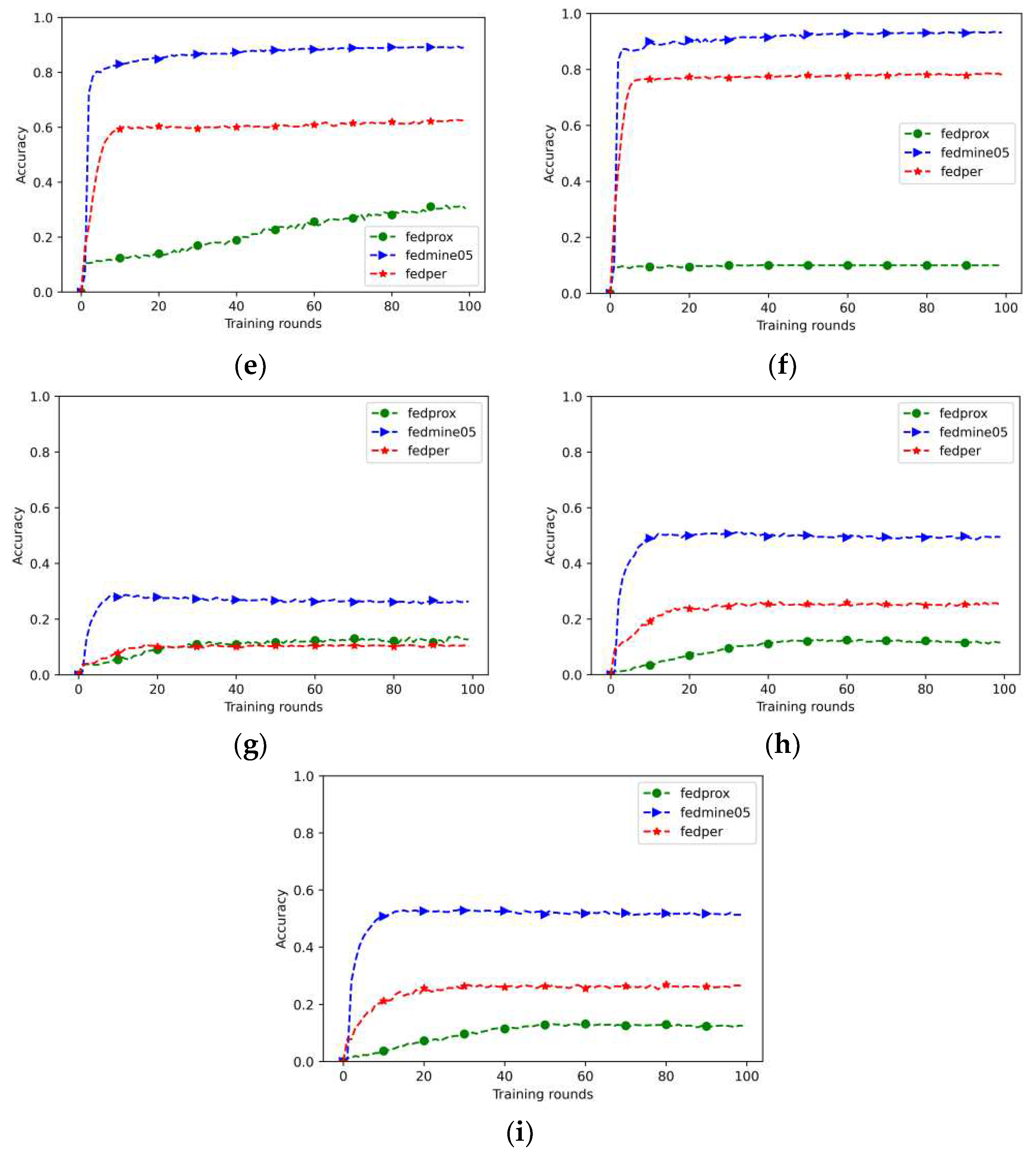

4.2. Experimental Results

5. Conclusions

References

- [1] Chung, Y.-L. Application of an Effective Hierarchical Deep-Learning-Based Object Detection Model Integrated with Image-Processing Techniques for Detecting Speed Limit Signs, Rockfalls, Potholes, and Car Crashes. Future Internet 2023, 15, 322.

- [2] Yen, C.-T.; Chen, G. A Deep Learning-Based Person Search System for Real-World Camera Images. Journal of Internet Technology 2022, 23, 839–851.

- [3] Ma, Y.W.; Chen, J.L.; Chen, Y.J.; Lai, Y.H. Explainable deep learning architecture for early diagnosis of Parkinson’s disease. Soft Computing 2023, 27, 2729–2738.

- [4] Prasetyo, H.; Prayuda AW, H.; Hsia, C.H.; Wisnu, M.A. Integrating Companding and Deep Learning on Bandwidth-Limited Image Transmission. Journal of Internet Technology 2022, 23, 467–473.

- [5] Li, Q.; Wen, Z.; Wu, Z.; Hu, S.; Wang, N.; Li, Y.; Liu, X.; He, B. A Survey on Federated Learning Systems: Vision, Hype and Reality for Data Privacy and Protection. IEEE Transactions on Knowledge and Data Engineering 2023, 35, 3347–3366.

- [6] Lyu, L.; Yu, H.; Zhao, J.; Yang, Q. Threats to Federated Learning. In Federated Learning; Lecture Notes in Computer Science; Yang, Q., Fan, L., Yu, H., Eds.; Springer: Cham, 2020; p. 12500.

- [7] Tan, Y.; Long, G.; Liu, L.; Zhou, T.; Lu, Q.; Jiang, J.; Zhang, C. Fedproto: Federated prototype learning across heterogeneous clients. In Proceedings of the AAAI Conference on Artificial Intelligence 2022, 36, 8432–8440.

- [8] Xu, J.; Glicksberg, B.S.; Su, C.; Walker, P.; Bian, J.; Wang, F. Federated learning for healthcare informatics. Journal of Healthcare Informatics Research 2021, 5, 1–19.

- [9] Zheng, Z.; Zhou, Y.; Sun, Y.; Wang, Z.; Liu, B.; Li, K. Applications of federated learning in smart cities: recent advances, taxonomy, and open challenges. Connection Science 2022, 34, 1–28.

- [10] Nikolaidis, F.; Symeonides, M.; Trihinas, D. Towards Efficient Resource Allocation for Federated Learning in Virtualized Managed Environments. Future Internet 2023, 15, 261.

- [11] Li, T.; Sahu, A.K.; Talwalkar, A.; Smith, V. Federated learning: Challenges, methods, and future directions. IEEE Signal Processing Magazine 2020, 37, 50–60.

- [12] Singh, P.; Singh, M.K.; Singh, R.; Singh, N. Federated learning: Challenges, methods, and future directions. In Federated Learning for IoT Applications; Springer International Publishing: Cham, 2022; pp. 199–214.

- [13] McMahan, B.; Moore, E.; Ramage, D.; Hampson, S.; y Arcas, B.A. Communication-efficient learning of deep networks from decentralized data. Artificial Intelligence and Statistics, 2017; 1273–1282.

- [14] Karimireddy, S.P.; Kale, S.; Mohri, M.; Reddi, S.; Stich, S.; Suresh, A.T. Scaffold: Stochastic controlled averaging for federated learning. International Conference on Machine Learning, 2020; 5132–5143.

- [15] Karimireddy, S.P.; Jaggi, M.; Kale, S.; Mohri, M.; Reddi, S.J.; Stich, S.U.; Suresh, A.T. Mime: Mimicking centralized stochastic algorithms in federated learning. arXiv 2020, arXiv:2008.03606.

- [16] Wang, H.; Kaplan, Z.; Niu, D.; Li, B. Optimizing federated learning on non-iid data with reinforcement learning. IEEE INFOCOM 2020-IEEE Conference on Computer Communications, 2020; 1698–1698.

- [17] Zhao, Y.; Li, M.; Lai, L.; Suda, N.; Civin, D.; Chandra, V. Federated learning with non-iid data. arXiv 2018, arXiv:1806.00582.

- [18] Li, T.; Sahu, A.K.; Zaheer, M.; Sanjabi, M.; Talwalkar, A.; Smith, V. Federated optimization in heterogeneous networks. Proceedings of Machine Learning and Systems 2020, 2, 429–450.

- [19] Wu, Q.; He, K.; Chen, X. Personalized federated learning for intelligent IoT applications: A cloud-edge based framework. IEEE Open Journal of the Computer Society 2020, 1, 35–44.

- [20] Li, T.; Hu, S.; Beirami, A.; Smith, V. Ditto: Fair and robust federated learning through personalization. International Conference on Machine Learning, 2021; 6357–6368.

- [21] Arivazhagan, M.G.; Aggarwal, V.; Singh, A.K.; Choudhary, S. Federated learning with personalization layers. arXiv 2019, arXiv:1912.00818.

- [22] Zhang, Y.; Yang, Q. An overview of multi-task learning. National Science Review 2018, 5, 30–43.

- [23] Duchi, J.; Hazan, E.; Singer, Y. Adaptive subgradient methods for online learning and stochastic optimization. Journal of Machine Learning Research 2011, 12, 7.

- [24] Deng, Y.; Kamani, M.M.; Mahdavi, M. Adaptive personalized federated learning. arXiv 2020, arXiv:2003.13461.

- [25] Beutel, D.J.; Topal, T.; Mathur, A.; Qiu, X.; Fernandez-Marques, J.; Gao, Y.; Sani, L.; Li, K.H.; Parcollet, T.; de Gusmão, P.P.B.; Lane, N.D. Flower: A friendly federated learning research framework. arXiv 2020, arXiv:2007.14390.

- [26] Li, K.H.; de Gusmão, P.P.B.; Beutel, D.J.; Lane, N.D. Secure aggregation for federated learning in flower. In Proceedings of the 2nd ACM International Workshop on Distributed Machine Learning; 2021; pp. 8–14.

- [27] Brum, R.; Drummond, L.; Castro, M.C.; Teodoro, G. Towards optimizing computational costs of federated learning in clouds. 2021 International Symposium on Computer Architecture and High Performance Computing Workshops (SBAC-PADW), 2021; 35–40.

- [28] Xiao, H.; Rasul, K.; Vollgraf, R. Fashion-mnist: a novel image dataset for benchmarking machine learning algorithms. arXiv 2017, arXiv:1708.07747.

- [29] Krizhevsky, A.; Hinton, G. Learning multiple layers of features from tiny images. 2009.

- [30] Li, H.; Cai, Z.; Wang, J.; Tang, J.; Ding, W.; Lin, C.; Shi, Y. FedTP: Federated Learning by Transformer Personalization. IEEE Transactions on Neural Networks and Learning Systems.

- [31] Zhai, R.; Chen, X.; Pei, L.; Ma, Z. A Federated Learning Framework against Data Poisoning Attacks on the Basis of the Genetic Algorithm. Electronics 2023, 12, 560.

| Method | Characteristic | Research Target & Advantages |
|---|---|---|
| FedAvg [13] | The first federated learning algorithm, proposed by Google. | FedAvg trains a neural network collaboratively while preserving data privacy. |
| Mime [15] | It combines control variates with server-level optimizer state. | Mime overcomes natural client heterogeneity and is faster than any centralized method. |
| FAVOR [16] | It proposes a new method based on Q-learning to select a subset of devices. | FAVOR focuses on validation accuracy and penalizes the use of more communication rounds. |
| FedProx [18] | FedProx tolerates inadequately trained local models and adds a proximal term to the clients’ loss function. | FedProx reduces the impact of non-IID data on federated learning and improves accuracy relative to FedAvg. |
| FedPer [21] | It separates the client model into two parts and trains them individually. | FedPer demonstrates the ineffectiveness of FedAvg and its own effectiveness in modeling personalization tasks. |
| FedTP [30] | It learns personalized self-attention for each client while aggregating the other parameters among the clients. | FedTP can be combined with other methods, including FedPer, FedRod, and KNN-Per, to further enhance model performance. It achieves better accuracy and learning performance. |
| Proposed Method | It proposes personalized federated learning with adaptive feature extraction and category prediction. | The study shows faster convergence and lower data loss than the FedProx and FedPer federated learning algorithms on the Fashion-MNIST, CIFAR10, and CIFAR100 datasets. |
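The proximal term mentioned in the FedProx row can be written out as a short sketch. In FedProx [18], each client minimizes its task loss plus (μ/2)·‖w − w_global‖², which penalizes local weights for drifting away from the current global model. The function names and the flattened-list weight layout below are assumptions chosen for illustration, not part of any library API.

```python
from typing import List


def proximal_penalty(w: List[float], w_global: List[float], mu: float) -> float:
    """Return (mu / 2) * ||w - w_global||^2, the FedProx proximal term."""
    if len(w) != len(w_global):
        raise ValueError("weight vectors must have equal length")
    return 0.5 * mu * sum((a - b) ** 2 for a, b in zip(w, w_global))


def fedprox_objective(
    task_loss: float, w: List[float], w_global: List[float], mu: float
) -> float:
    """Client objective: task loss plus the proximal penalty."""
    return task_loss + proximal_penalty(w, w_global, mu)


def proximal_gradient(w: List[float], w_global: List[float], mu: float) -> List[float]:
    """Gradient of the proximal term, to be added to the task-loss gradient."""
    if len(w) != len(w_global):
        raise ValueError("weight vectors must have equal length")
    return [mu * (a - b) for a, b in zip(w, w_global)]
```

Setting μ to 0 removes the penalty and leaves only the client's task loss; larger values keep local models closer to the global one under non-IID data.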

| Parameter \ Dataset | Fashion-MNIST | CIFAR10 | CIFAR100 |
|---|---|---|---|
| Input shape | (28, 28, 1) | (32, 32, 3) | (32, 32, 3) |
| CNN layers | 2 | 2 | 3 |
| FCNN layers | 1 | 1 | 2 |
| Federated rounds | 100 | 100 | 100 |
| Participating clients | 10 | 10 | 10 |
| Participating fraction | 0.8 | 0.8 | 0.8 |
| Client training epochs | 1 | 1 | 3 |
| Data batch size | 32 | 32 | 32 |
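For reproducibility, the settings in the table above could be captured in a small configuration object. The sketch below is illustrative only: the class name, field names, and `CONFIGS` dictionary are hypothetical, and `clients_per_round` assumes that the participating fraction is applied to the number of participating clients to decide how many are sampled in each round.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ExperimentConfig:
    """Per-dataset settings; defaults are the values shared by all datasets."""

    input_shape: Tuple[int, int, int]
    cnn_layers: int
    fcnn_layers: int
    federated_rounds: int = 100
    clients: int = 10
    fraction: float = 0.8
    local_epochs: int = 1
    batch_size: int = 32

    def clients_per_round(self) -> int:
        # ASSUMPTION: fraction * clients gives the clients sampled per round.
        return max(1, round(self.clients * self.fraction))


CONFIGS: Dict[str, ExperimentConfig] = {
    "Fashion-MNIST": ExperimentConfig((28, 28, 1), cnn_layers=2, fcnn_layers=1),
    "CIFAR10": ExperimentConfig((32, 32, 3), cnn_layers=2, fcnn_layers=1),
    "CIFAR100": ExperimentConfig(
        (32, 32, 3), cnn_layers=3, fcnn_layers=2, local_epochs=3
    ),
}
```

Under that assumption, `CONFIGS["CIFAR10"].clients_per_round()` evaluates to 8 clients per round.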

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).