Submitted: 29 January 2024

Posted: 30 January 2024

Abstract

Keywords:

Introduction

Methods

Results

Discussion

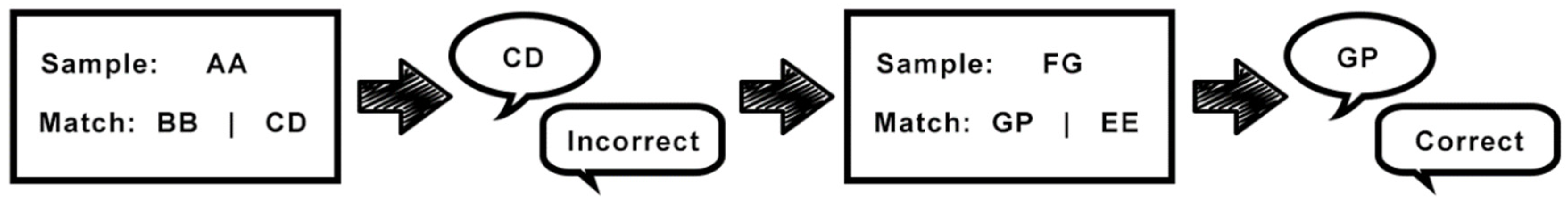

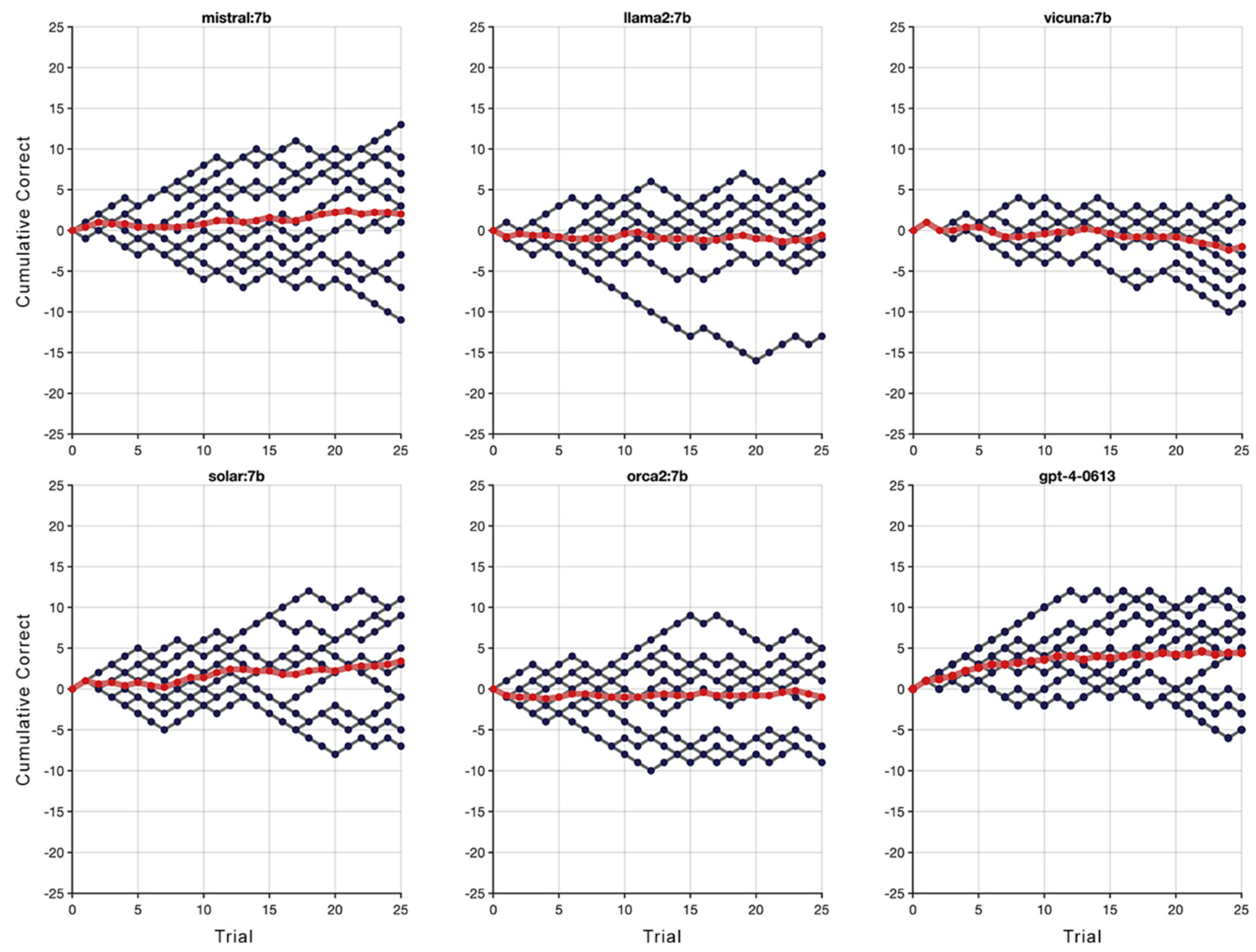

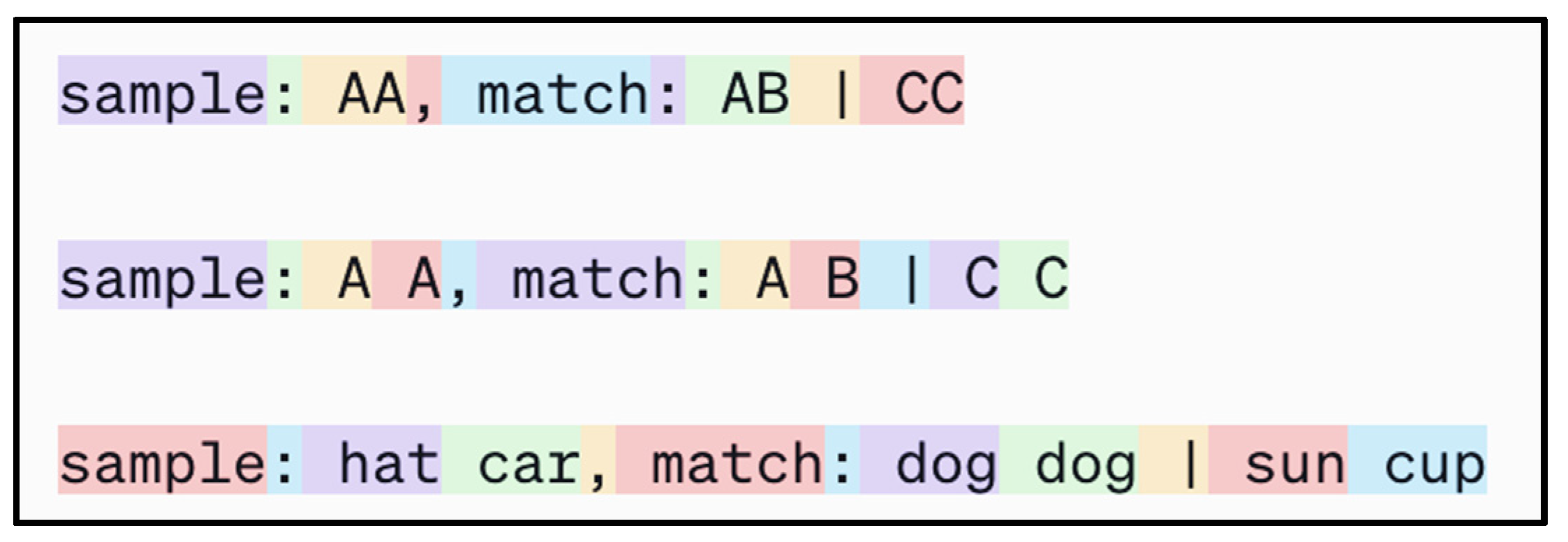

- Simultaneous Same-Different (S/D) Task: Subjects are trained to respond differently depending on whether two presented stimuli are the same or different. This task family includes the relational match-to-sample (RMTS) test.

- Analogical Reasoning Tests: These assess the ability to draw parallels between different sets of relations, beyond mere perceptual similarity.

- Transitive Inference Tasks: These tasks test the ability to infer relationships between elements based on their positions within a sequence.

- Hierarchical Reasoning: This evaluates the ability to understand and reason about hierarchical structures.

- The Physical Causality Task: This evaluates understanding of physical causation principles.

- The Social Learning and Imitation Task: This assesses the capacity for learning through observation of others' actions.

- The Theory of Mind Tasks: These are designed to test the understanding that others have beliefs, desires, and intentions different from one's own.
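As a rough illustration of how the first task family in this list is structured, the sketch below encodes a simultaneous S/D judgment and an RMTS choice. The function names and the letter stimuli are hypothetical and are not taken from the study's materials; the sketch only assumes that stimuli can be compared for identity.

```python
def same_different(pair):
    """Return "same" if the two stimuli in the pair are identical, else "different"."""
    first, second = pair
    return "same" if first == second else "different"


def rmts_choice(sample, options):
    """Return the index of the option pair whose relation matches the sample pair's relation."""
    target_relation = same_different(sample)
    for index, option in enumerate(options):
        if same_different(option) == target_relation:
            return index
    return None  # no option shares the sample's relation


# The sample AA instantiates "same"; BB shares that relation, CD does not.
choice = rmts_choice(("A", "A"), [("C", "D"), ("B", "B")])
```

The point of the sketch is that the correct option shares no individual stimulus with the sample, so the match has to be made on the relation between items rather than on perceptual overlap.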

Conflict of interest statement

References

- D. C. Penn, K. J. Holyoak and D. J. Povinelli, "Darwin’s mistake: Explaining the discontinuity between human and nonhuman minds," Behavioral and Brain Sciences, vol. 31, pp. 109-178, 2008.

- C. Darwin, "The descent of man, and selection in relation to sex.," John Murray, 1871.

- M. Bekoff, C. Allen and G. Burghardt, "The Cognitive Animal," MIT Press, 2002.

- M. Pepperberg, "Intelligence and rationality in parrots. Rational Animals?," Oxford University Press, pp. 469-488, 2005.

- J. D. Smith, W. E. Shields and D. A. Washburn, "The comparative psychology of uncertainty monitoring and metacognition," Behavioral and Brain Sciences, vol. 26, pp. 317-373, 2003.

- M. Tomasello, J. Call and B. Hare, "Chimpanzees understand psychological states – The question is which ones and to what extent.," Trends in Cognitive Sciences, vol. 7, no. 4, pp. 153-156, 2003.

- D. J. Smith, B. N. Jackson and B. A. Church, "Breaking the Perceptual-Conceptual Barrier: Relational Matching and Working Memory," Memory & Cognition, vol. 47, no. 3, pp. 544-560, 2019.

- E. A. Wasserman and M. Young, "Same-different discrimination: The keel and backbone of thought and reasoning," Journal of Experimental Psychology, vol. 36, pp. 3-22, 2010.

- J. Locke, An essay concerning human understanding, Philadelphia, PA: Troutman & Hayes, 1690.

- W. James, The principles of psychology, New York, NY: Dover, 1890.

- G. S. Halford, W. H. Wilson and S. Phillips, "Relational knowledge: The foundation of higher cognition," Trends in Cognitive Sciences, vol. 14, pp. 497-505, 2010.

- G. J. and I. Litvan, "Importance of deficits in executive functions," Lancet, vol. 354, pp. 1921-1923, 1999.

- OpenAI, "GPT-4 Technical Report," arXiv, 2023.

- D. Premack, "Animal cognition," Annual Review of Psychology, vol. 34, pp. 351-362, 1983.

- A. Shivaram, R. Shao, N. Simms, S. Hespos and D. Gentner, "When do Children Pass the Relational-Match-To-Sample Task?," Proceedings of the Annual Meeting of the Cognitive Science Society, 2023.

- D. E. Carter and T. J. Werner, "Complex learning and information processing by pigeons: A critical analysis," Journal of the Experimental Analysis of Behavior, no. 29, pp. 565-601, 1978.

- P. W. Holmes, "Transfer of matching performance in pigeons," Journal of the Experimental Analysis of Behavior, vol. 31, pp. 103-114, 1979.

- D. A. Washburn and D. M. Rumbaugh, "Rhesus monkey (Macaca mulatto) complex learning skills reassessed," International Journal of Primatology, vol. 12, pp. 377-388, 1991.

- D. M. R. and M. Columbo, "On the limits of the matching concept in monkeys (Cebus apella)," Journal of the Experimental Analysis of Behavior, no. 52, pp. 225-236, 1989.

- J. S. Katz, A. A. Wright and J. Bachevalier, "Mechanisms of same/different abstract-concept learning by rhesus monkeys (Macaca mulatta)," Journal of Experimental Psychology: Animal Behavior Processes, vol. 28, pp. 358-368, 2002.

- W. E. Shields, J. D. Smith and D. A. Washburn, "Uncertain responses by humans and rhesus monkeys (Macaca mulatta) in a psychophysical same-different task," Journal of Experimental Psychology, vol. 126, pp. 147-164, 1997.

- Gentner, Shao, Simms and Hespos, "Learning Same and Different Relations: Cross-Species Comparisons," Current Opinions in Behavioral Sciences, vol. 37, pp. 84-89, 2001.

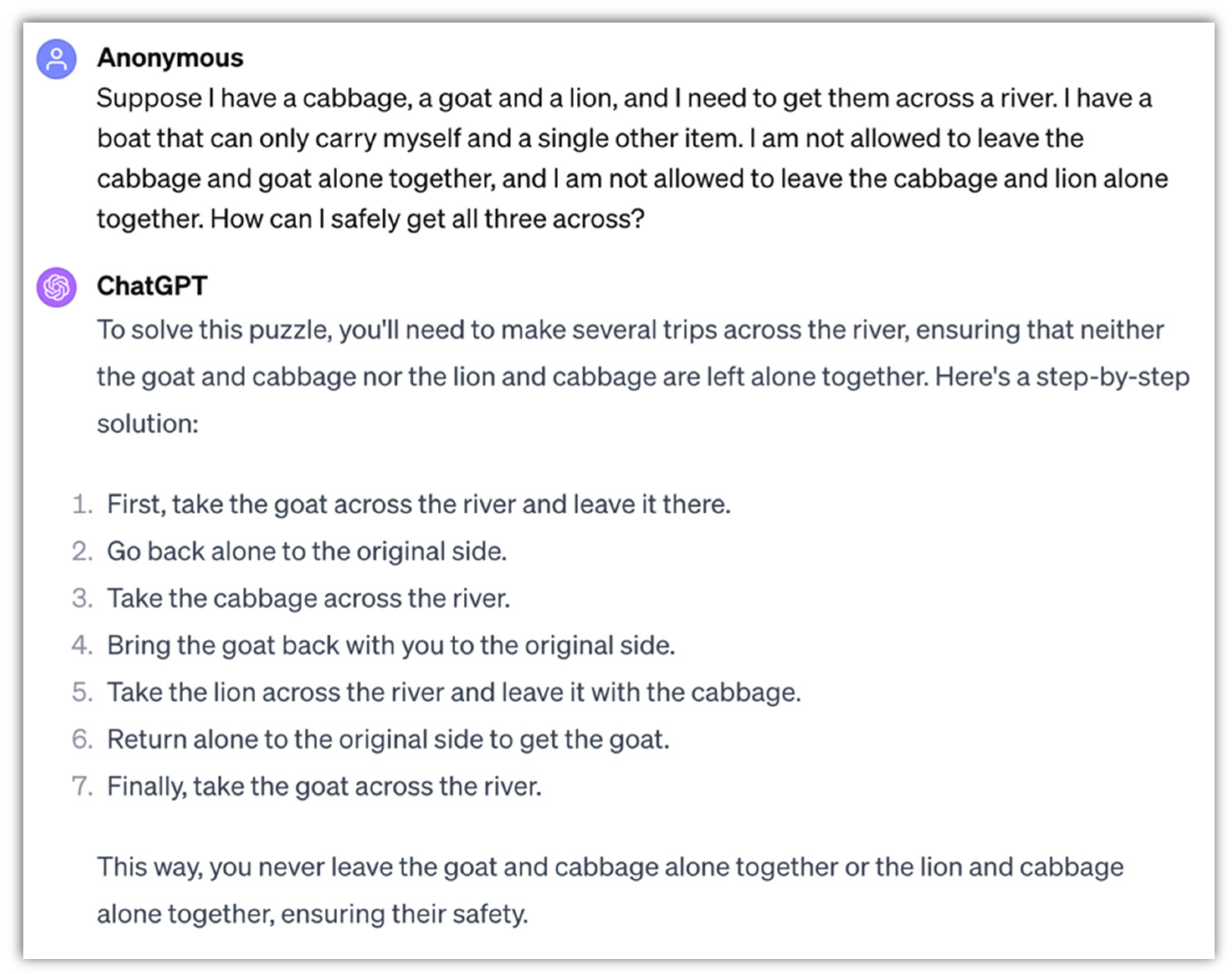

- M. Ascher, "A River-Crossing Problem in Cross-Cultural Perspective," Mathematics Magazine, vol. 63, no. 1, pp. 26-29, 1990.

- "River crossing puzzle," [Online]. Available: https://en.wikipedia.org/wiki/River_crossing_puzzle. [Accessed 18 December 2023].

- "Wolf, goat, and cabbage problem," [Online]. Available: https://en.wikipedia.org/wiki/Wolf,_goat_and_cabbage_problem. [Accessed 18 December 2023].

| 1 | |
| 2 | |
| 3 | Link to full GPT4 conversation: https://chat.openai.com/share/a99df312-31f4-4cd9-beec-2cd95be58179 Link to full GPT3.5 conversation: https://chat.openai.com/share/790b425f-368e-4acf-a206-717019c6736d |
| 4 | Link to GPT4 conversation: https://chat.openai.com/share/0b008c03-4d91-4a8c-8287-e1a84a265abb |
| 5 | A solution to the wolf, goat, and cabbage riddle would be: (1) take the goat over, (2) return empty-handed, (3) take the cabbage over, (4) return with the goat, (5) take the wolf over, (6) return with nothing, and finally (7) take the goat over. |
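The seven-step solution to the wolf, goat, and cabbage riddle given above can be checked mechanically. The following is a minimal sketch, assuming the usual constraints of the riddle (the wolf may not be left alone with the goat, nor the goat with the cabbage, unless the farmer is present). The state encoding and the names `is_safe` and `check_solution` are illustrative choices, not part of the original study.

```python
# Pairs that may not share a bank unless the farmer is also there (assumed riddle rules).
FORBIDDEN = (("wolf", "goat"), ("goat", "cabbage"))


def is_safe(state):
    """A state is safe if no forbidden pair shares a bank that the farmer is not on."""
    for a, b in FORBIDDEN:
        if state[a] == state[b] != state["farmer"]:
            return False
    return True


def check_solution(cargo_sequence):
    """Replay the crossings; succeed only if every state is safe and all end on the far bank."""
    # 0 = starting bank, 1 = far bank
    state = {"farmer": 0, "wolf": 0, "goat": 0, "cabbage": 0}
    for cargo in cargo_sequence:
        if cargo is not None:
            if state[cargo] != state["farmer"]:
                return False  # the cargo is not on the farmer's bank
            state[cargo] = 1 - state[cargo]
        state["farmer"] = 1 - state["farmer"]
        if not is_safe(state):
            return False
    return all(bank == 1 for bank in state.values())


# The seven crossings from the solution above; None means the farmer crosses alone.
seven_step_solution = ["goat", None, "cabbage", "goat", "wolf", None, "goat"]
result = check_solution(seven_step_solution)
```

Replaying a candidate answer this way separates two kinds of failure: a sequence that breaks a constraint midway, and one that is safe throughout but does not finish with everything on the far bank.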

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).