Submitted: 15 January 2024

Posted: 16 January 2024


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Language Bias

2.2. Multimodal Fusion

2.3. Collaborative Learning

3. Methods

3.1. Definition of Bias

3.2. Multimodal Collaborative Learning in VQA

- Parallel data methods: in parallel data, each observation in one modality must be directly paired with an observation in another modality. For example, a video-audio dataset requires the video and audio samples to come from the same speaker.

- Non-parallel data methods: in non-parallel data, methods do not require a direct correspondence between modalities; instead, they usually achieve co-learning through overlap at the category level. For example, in OK-VQA, multimodal data are combined with external knowledge from Wikipedia to improve generalization in question answering.

- Hybrid data methods: in hybrid data, different modalities are linked through a shared modality. For example, in multilingual image captioning, the image modality is always matched with a caption in one of the languages, and the intermediate (shared) modality establishes correspondences between the languages so that images can be associated with captions in each language.

3.3. CoD-VQA

- Bias prediction: for each instance in the training dataset, single-branch (unimodal) predictors are applied to the image and to the question separately, yielding a vision-only prediction and a language-only prediction.

- Modality selection: the binary cross-entropy between each unimodal prediction from the previous step and the ground-truth answer is computed, giving a bias loss for each modality. Based on the magnitude of these losses and the output of the bias detector, we then determine whether the image or the text is the “deprived” modality.

- Modality fusion: after identifying the “deprived” modality, we keep the “enriched” modality fixed and apply modality fusion to obtain a new joint representation in which the “deprived” modality participates more strongly.
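The bias-loss and modality-selection steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the helper names `bce` and `select_deprived` are hypothetical, the rule that the branch with the lower bias loss is the "enriched" modality is our assumption, and the boolean `bias_detected` merely stands in for the bias detector, whose internals are not described here. The fusion step is not modelled.

```python
import math
from typing import Optional, Sequence


def bce(pred: Sequence[float], target: Sequence[float], eps: float = 1e-7) -> float:
    """Mean binary cross-entropy between per-answer probabilities and (soft) targets."""
    total = 0.0
    for p, t in zip(pred, target):
        p = min(max(p, eps), 1.0 - eps)  # clamp so log() never sees 0
        total += -(t * math.log(p) + (1.0 - t) * math.log(1.0 - p))
    return total / len(pred)


def select_deprived(
    pred_v: Sequence[float],
    pred_q: Sequence[float],
    target: Sequence[float],
    bias_detected: bool = True,
) -> Optional[str]:
    """Pick the "deprived" modality from the two unimodal bias losses.

    Hypothetical rule: the branch with the LOWER loss can answer on its own,
    so it is treated as the "enriched" modality and the other as "deprived".
    `bias_detected` is a stand-in for the bias detector's output.
    """
    if not bias_detected:
        return None  # assumption: no modality is singled out without detected bias
    loss_v = bce(pred_v, target)  # bias loss of the vision-only branch
    loss_q = bce(pred_q, target)  # bias loss of the question-only branch
    return "vision" if loss_q < loss_v else "language"


# Toy example over three candidate answers; the first one is correct.
target = [1.0, 0.0, 0.0]
pred_q = [0.9, 0.05, 0.05]  # question-only branch is confident and right
pred_v = [0.4, 0.3, 0.3]    # vision-only branch is unsure
print(select_deprived(pred_v, pred_q, target))  # prints: vision
```

In the toy example the question-only branch answers correctly on its own (bias loss of about 0.07 versus about 0.54 for the vision branch), so the sketch flags vision as the deprived modality, which is the situation language-prior debiasing targets.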

3.4. Reducing Bias

Algorithm 1: CoD-VQA

3.4.1. Bias Prediction

3.4.2. Selecting the “Scarce” Modality

3.4.3. Modality Fusion

4. Experiments

4.1. Datasets and Evaluation

4.2. Results on the VQA-CP v2 and VQA v2 Datasets

4.2.1. Quantitative Results

- Compared with the backbone model, our method substantially improves on the UpDn baseline, raising overall accuracy on VQA-CP v2 by about 20 percentage points (from 39.96% to 60.14%), which shows the effectiveness of our approach in reducing language bias.

- Our method also outperforms other debiasing methods by a considerable margin. Without using additional annotations, CoD-VQA achieves state-of-the-art performance, with the best overall accuracy (“All”). By question type, the CF-VQA variant performs best on “Y/N” questions, whereas CoD performs better on question types that depend more on visual content (“Other”).

- Compared with methods that rely on data augmentation and additional annotations, our approach remains competitive. With the same UpDn baseline, it trails the latest feature-enhancement method, D-VQA, by only about 1.8 points in overall accuracy (60.14% vs. 61.91%) while using no extra data or annotations, and it achieves the highest accuracy of all listed methods in the “Other” category, supporting the efficacy of our debiasing approach.

- On the VQA v2 dataset, our method retains accuracy close to that of the UpDn baseline (62.86% vs. 63.48%), indicating robust generalization despite the regularization effects that debiasing can introduce on the in-distribution v2 data.
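The comparisons above are stated in percentage points, which are easy to confuse with relative gains. The short Python check below uses only the VQA-CP v2 “All” numbers from the results table; the variable names are illustrative.

```python
# Overall ("All") accuracy on the VQA-CP v2 test split, taken from the results table.
acc_updn = 39.96   # UpDn baseline
acc_cod = 60.14    # CoD (ours) on the UpDn backbone
acc_dvqa = 61.91   # D-VQA on the UpDn backbone

abs_gain = acc_cod - acc_updn              # improvement in percentage points
rel_gain = 100.0 * abs_gain / acc_updn     # improvement relative to UpDn, in percent
gap_to_dvqa = acc_dvqa - acc_cod           # remaining gap to D-VQA, in points

print(f"absolute gain over UpDn: {abs_gain:.2f} points")
print(f"relative gain over UpDn: {rel_gain:.1f}%")
print(f"gap to D-VQA: {gap_to_dvqa:.2f} points")
```

The absolute gain of 20.18 points corresponds to a relative gain of about 50.5% over UpDn, and the gap to D-VQA is 1.77 points.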

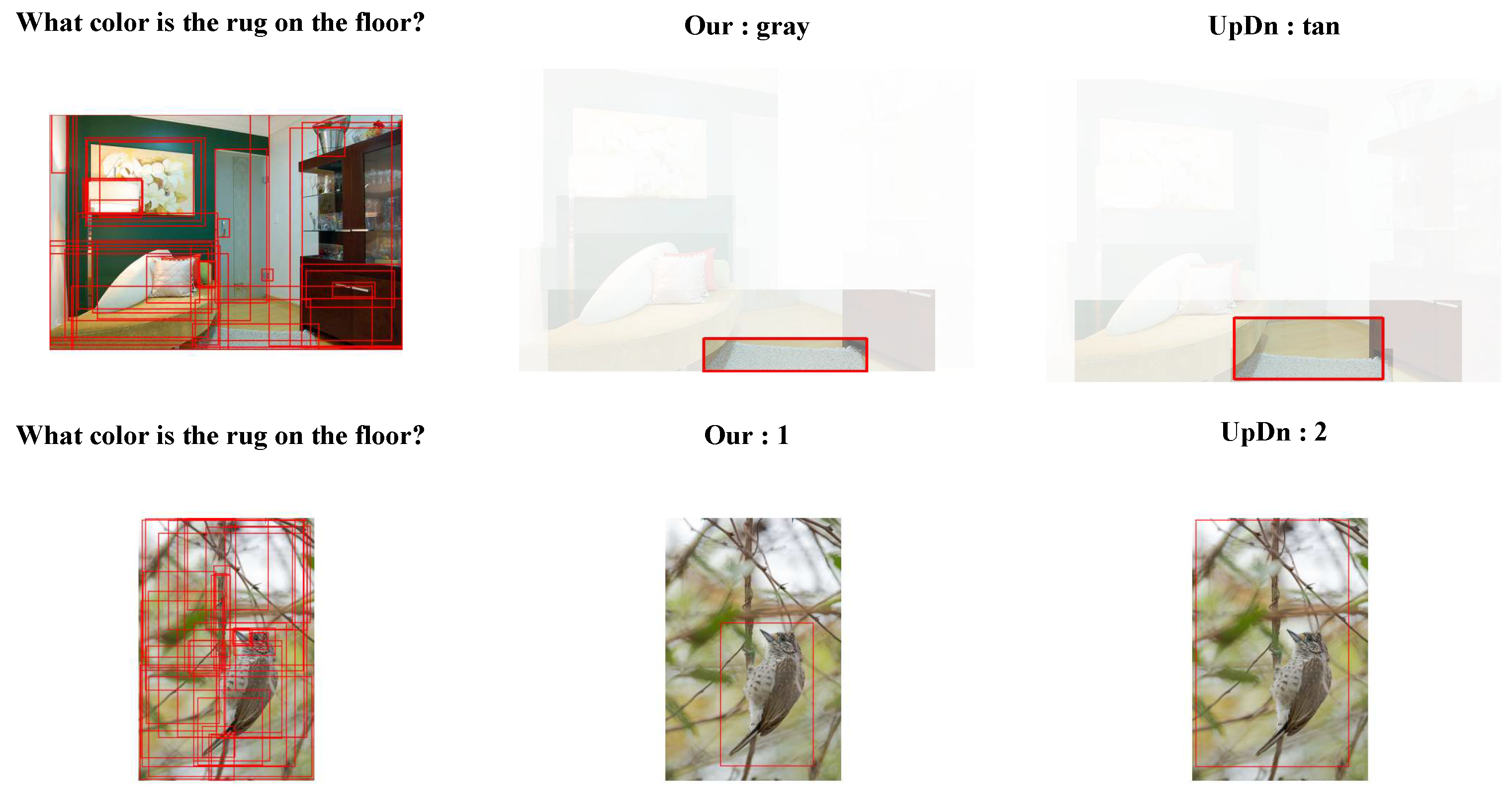

4.2.2. Qualitative Results

4.3. Ablation Studies

4.3.1. Modality Selection Evaluation

- Determining the “scarce” modality separately for each sample benefits model performance: per-sample selection reaches 60.14% on the VQA-CP v2 test set, compared with 54.72% and 56.36% when the “scarce” modality is fixed to language or vision, respectively.

- Language bias is a harder part of the overall bias problem in VQA than visual bias: when the “scarce” modality is fixed to the visual modality, the model performs slightly better (56.36%) than when it is fixed to the language modality (54.72%).
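The ablation margins can be read off the modality-selection table with a few subtractions. The sketch below assumes, based on the discussion above, that rows 1 to 3 of that table correspond to a fixed language modality, a fixed visual modality, and per-sample selection (“Both”), in that order; the dictionary keys are illustrative labels.

```python
# VQA-CP v2 test accuracy from the modality-selection ablation table.
# Assumption: rows 1-3 correspond to a fixed language, fixed vision,
# and per-sample ("Both") choice of the scarce modality, in that order.
ablation = {
    "fixed language": 54.72,
    "fixed vision": 56.36,
    "per-sample (both)": 60.14,
}

best = ablation["per-sample (both)"]
for setting, acc in ablation.items():
    if setting != "per-sample (both)":
        print(f"per-sample vs. {setting}: {best - acc:+.2f} points")

vision_minus_language = ablation["fixed vision"] - ablation["fixed language"]
print(f"fixed vision vs. fixed language: {vision_minus_language:+.2f} points")
```

Under this reading, per-sample selection gains 5.42 points over a fixed language modality and 3.78 points over a fixed visual modality, while fixing vision instead of language gains 1.64 points.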

4.3.2. Comparison of Other Baseline Models

4.3.3. VQA-VS

4.4. Analysis of Other Metrics

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Agrawal, A., Batra, D., and Parikh, D. Analyzing the behavior of visual question answering models. arXiv preprint arXiv:1606.07356 (2016).

- Arora, R., and Livescu, K. Multi-view CCA-based acoustic features for phonetic recognition across speakers and domains. In 2013 IEEE International Conference on Acoustics, Speech and Signal Processing (May 2013).

- Baltrusaitis, T., Ahuja, C., and Morency, L.-P. Multimodal machine learning: A survey and taxonomy. IEEE Transactions on Pattern Analysis and Machine Intelligence (Feb 2019), 423–443.

- Cadene, R., Dancette, C., Cord, M., Parikh, D., et al. RUBi: Reducing unimodal biases for visual question answering. Advances in Neural Information Processing Systems 32 (2019).

- Chen, L., Yan, X., Xiao, J., Zhang, H., Pu, S., and Zhuang, Y. Counterfactual samples synthesizing for robust visual question answering. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2020), pp. 10800–10809.

- Chen, T., Kornblith, S., Norouzi, M., and Hinton, G. A simple framework for contrastive learning of visual representations. arXiv preprint (Feb 2020).

- Clark, C., Yatskar, M., and Zettlemoyer, L. Don’t take the easy way out: Ensemble based methods for avoiding known dataset biases. arXiv preprint arXiv:1909.03683 (2019).

- Frome, A., Corrado, G., Shlens, J., Bengio, S., Dean, J., Ranzato, M., and Mikolov, T. DeViSE: A deep visual-semantic embedding model. Neural Information Processing Systems (Dec 2013).

- Guo, Y., Cheng, Z., Nie, L., Liu, Y., Wang, Y., and Kankanhalli, M. Quantifying and alleviating the language prior problem in visual question answering. In Proceedings of the 42nd International ACM SIGIR Conference on Research and Development in Information Retrieval (2019), pp. 75–84.

- Han, X., Wang, S., Su, C., Huang, Q., and Tian, Q. Greedy gradient ensemble for robust visual question answering. In Proceedings of the IEEE/CVF International Conference on Computer Vision (2021), pp. 1584–1593.

- Han, X., Wang, S., Su, C., Huang, Q., and Tian, Q. General greedy de-bias learning. IEEE Transactions on Pattern Analysis and Machine Intelligence (2023).

- Liu, J., Fan, C., Zhou, F., and Xu, H. Be flexible! Learn to debias by sampling and prompting for robust visual question answering. Information Processing & Management (May 2023), 103296.

- Luo, H., Lin, G., Yao, Y., Liu, F., Liu, Z., and Tang, Z. Depth and video segmentation based visual attention for embodied question answering. IEEE Transactions on Pattern Analysis and Machine Intelligence 45, 6 (Jun 2023), 6807–6819.

- Niu, Y., Tang, K., Zhang, H., Lu, Z., Hua, X.-S., and Wen, J.-R. Counterfactual VQA: A cause-effect look at language bias. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (2021), pp. 12700–12710.

- Oord, A., Li, Y., and Vinyals, O. Representation learning with contrastive predictive coding. arXiv preprint (Jul 2018).

- Ramakrishnan, S., Agrawal, A., and Lee, S. Overcoming language priors in visual question answering with adversarial regularization. Advances in Neural Information Processing Systems 31 (2018).

- Selvaraju, R. R., Lee, S., Shen, Y., Jin, H., Ghosh, S., Heck, L., Batra, D., and Parikh, D. Taking a hint: Leveraging explanations to make vision and language models more grounded. In Proceedings of the IEEE/CVF International Conference on Computer Vision (2019), pp. 2591–2600.

- Shrestha, R., Kafle, K., and Kanan, C. A negative case analysis of visual grounding methods for VQA. arXiv preprint arXiv:2004.05704 (2020).

- Socher, R., Ganjoo, M., Sridhar, H., Bastani, O., Manning, C., and Ng, A. Zero-shot learning through cross-modal transfer. International Conference on Learning Representations (Jan 2013).

- Wang, J., Pradhan, M. R., and Gunasekaran, N. Machine learning-based human-robot interaction in ITS. Information Processing & Management 59, 1 (Jan 2022), 102750.

- Wen, Z., Xu, G., Tan, M., Wu, Q., and Wu, Q. Debiased visual question answering from feature and sample perspectives. Advances in Neural Information Processing Systems 34 (2021), 3784–3796.

- Yuhas, B., Goldstein, M., and Sejnowski, T. Integration of acoustic and visual speech signals using neural networks. IEEE Communications Magazine (Nov 1989), 65–71.

- Zhu, X., Mao, Z., Liu, C., Zhang, P., Wang, B., and Zhang, Y. Overcoming language priors with self-supervised learning for visual question answering. arXiv preprint arXiv:2012.11528 (2020).

| Method | Base | VQA-CP v2 test All | VQA-CP v2 test Y/N | VQA-CP v2 test Num. | VQA-CP v2 test Other | VQA v2 val All | VQA v2 val Y/N | VQA v2 val Num. | VQA v2 val Other |
|---|---|---|---|---|---|---|---|---|---|
| GVQA | - | 31.30 | 57.99 | 13.68 | 22.14 | 48.24 | 72.03 | 31.17 | 34.65 |
| SAN | - | 24.96 | 38.35 | 11.14 | 21.74 | 52.41 | 70.06 | 39.28 | 47.84 |
| UpDn | - | 39.96 | 43.01 | 12.07 | 45.82 | 63.48 | 81.18 | 42.14 | 55.66 |
| S-MRL | - | 38.46 | 42.85 | 12.81 | 43.20 | 63.10 | - | - | - |
| HINT | UpDn | 46.73 | 67.27 | 10.61 | 45.88 | 63.38 | 81.18 | 42.99 | 55.56 |
| SCR | UpDn | 49.45 | 72.36 | 10.93 | 48.02 | 62.2 | 78.8 | 41.6 | 54.5 |
| AdvReg | UpDn | 41.17 | 65.49 | 15.48 | 35.48 | 62.75 | 79.84 | 42.35 | 55.16 |
| RUBi | UpDn | 44.23 | 67.05 | 17.48 | 39.61 | - | - | - | - |
| RUBi | S-MRL | 47.11 | 68.65 | 20.28 | 43.18 | 61.16 | - | - | - |
| LM | UpDn | 48.78 | 72.78 | 14.61 | 45.58 | 63.26 | 81.16 | 42.22 | 55.22 |
| LMH | UpDn | 52.01 | 72.58 | 31.12 | 46.97 | 56.35 | 65.06 | 37.63 | 54.69 |
| DLP | UpDn | 48.87 | 70.99 | 18.72 | 45.57 | 57.96 | 76.82 | 39.33 | 48.54 |
| DLR | UpDn | 48.87 | 70.99 | 18.72 | 45.57 | 57.96 | 76.82 | 39.33 | 48.54 |
| AttAlign | UpDn | 39.37 | 43.02 | 11.89 | 45.00 | 63.24 | 80.99 | 42.55 | 55.22 |
| CF-VQA(SUM) | UpDn | 53.55 | 91.15 | 13.03 | 44.97 | 63.54 | 82.51 | 43.96 | 54.30 |
| Removing Bias | LMH | 54.55 | 74.03 | 49.16 | 45.82 | - | - | - | - |
| CF-VQA(SUM) | S-MRL | 55.05 | 90.61 | 21.50 | 45.61 | 60.94 | 81.13 | 43.86 | 50.11 |
| GGE-DQ-iter | UpDn | 57.12 | 87.35 | 26.16 | 49.77 | 59.30 | 73.63 | 40.30 | 54.29 |
| GGE-DQ-tog | UpDn | 57.32 | 87.04 | 27.75 | 49.59 | 59.11 | 73.27 | 39.99 | 54.39 |
| AdaVQA | UpDn | 54.67 | 72.47 | 53.81 | 45.58 | - | - | - | - |
| CoD(Ours) | UpDn | 60.14 | 85.66 | 39.08 | 52.54 | 62.86 | 78.65 | 45.01 | 54.13 |
| Methods of data augmentation and additional annotation: | | | | | | | | | |
| AttReg | LMH | 59.92 | 87.28 | 52.39 | 47.65 | 62.74 | 79.71 | 41.68 | 55.42 |
| CSS | UpDn | 58.95 | 84.37 | 49.42 | 48.24 | 59.91 | 7.25 | 39.77 | 55.11 |
| CSS+CL | UpDn | 59.18 | 86.99 | 49.89 | 47.16 | 57.29 | 67.27 | 38.40 | 54.71 |
| Mutant | UpDn | 61.72 | 88.90 | 49.68 | 50.78 | 62.56 | 82.07 | 42.52 | 53.28 |
| D-VQA | UpDn | 61.91 | 88.93 | 52.32 | 50.39 | 64.96 | 82.18 | 44.05 | 57.54 |
| KDDAug | UpDn | 60.24 | 86.13 | 55.08 | 48.08 | 62.86 | 80.55 | 41.05 | 55.18 |
| OLP | UpDn | 57.59 | 86.53 | 29.87 | 50.03 | - | - | - | - |

| # | Language | Vision | Both | VQA-CP Test |
|---|---|---|---|---|
| 1 | ✔ | | | 54.72 |
| 2 | | ✔ | | 56.36 |
| 3 | | | ✔ | 60.14 |

| Model | Yes/No | Num. | Other | Overall |
|---|---|---|---|---|
| SAN | 39.44 | 12.91 | 46.65 | 39.11 |
| +CoD | 81.81 | 47.46 | 38.20 | 52.56 |
| UpDn | 43.01 | 12.07 | 45.82 | 39.96 |
| +CoD | 85.66 | 39.08 | 52.54 | 60.14 |
| RUBi | 67.05 | 17.48 | 39.61 | 44.23 |
| +CoD | 79.93 | 45.78 | 46.04 | 55.87 |
| LXMERT | 42.84 | 18.91 | 55.51 | 46.23 |
| +CoD | 82.51 | 57.84 | 58.64 | 65.47 |
| +D-VQA | 80.43 | 58.57 | 67.23 | 69.75 |

VQA-VS OOD test sets (language-based: QT, KW, KWP, QT+KW; visual-based: KO, KOP; multi-modality: QT+KO, KW+KO, QT+KW+KO)

| Model | Base | QT | KW | KWP | QT+KW | KO | KOP | QT+KO | KW+KO | QT+KW+KO | Mean |
|---|---|---|---|---|---|---|---|---|---|---|---|
| S-MRL | - | 27.33 | 39.80 | 53.03 | 51.96 | 27.74 | 35.55 | 42.17 | 50.79 | 55.47 | 42.65 |
| UpDn | - | 32.43 | 45.10 | 56.06 | 55.29 | 33.39 | 41.31 | 46.45 | 54.29 | 56.92 | 46.80 |
| +LMH | UpDn | 33.36 | 43.97 | 54.76 | 53.23 | 33.72 | 41.39 | 46.15 | 51.14 | 54.97 | 45.85 |
| +SSL | UpDn | 31.41 | 43.97 | 54.74 | 53.81 | 32.45 | 40.41 | 45.53 | 52.89 | 55.42 | 45.62 |
| BAN | - | 33.75 | 46.64 | 58.36 | 57.11 | 34.56 | 42.45 | 47.92 | 56.26 | 59.77 | 48.53 |
| LXMERT | - | 36.46 | 51.95 | 64.17 | 64.22 | 37.69 | 46.40 | 53.54 | 62.46 | 67.44 | 53.70 |
| CoD-VQA(Ours) | UpDn | 32.91 | 49.65 | 62.65 | 61.51 | 34.46 | 43.58 | 51.47 | 60.84 | 66.35 | 51.49 |

| Method | CGR | CGW | CGD |
|---|---|---|---|
| UpDn | 44.27 | 40.63 | 3.91 |
| HINT | 45.21 | 34.87 | 10.34 |
| RUBi | 39.60 | 33.33 | 6.27 |
| LMH | 46.44 | 35.84 | 10.60 |
| CSS | 46.70 | 37.89 | 8.87 |
| GGE-DQ-iter | 44.35 | 27.91 | 16.44 |
| GGE-DQ-tog | 42.74 | 27.47 | 15.27 |
| CoD (Ours) | 37.50 | 21.46 | 16.04 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).