Submitted: 23 December 2023

Posted: 27 December 2023


Abstract

Keywords:

1. Introduction

1.1. State-of-the-art

2. Materials and Methods

2.1. Objectives

2.1.1. Hypothesis being tested

- H1: AI-driven technologies are fundamentally reshaping educational practices and attitudes, especially in technologically advanced fields.

- H2: High levels of academic burnout are related to increased technology anxiety and the adoption of dysfunctional learning strategies.

- H3: Effective use of LLMs, combined with metacognitive strategies, significantly improves students’ learning and motivation.

2.2. Procedures and participants

2.3. Instruments

- Academic Burnout Model, 4 items (ABM-4): Adapted from an instrument originally designed to assess work-related burnout (Kristensen et al. 2005), this scale measures the extent to which students experience burnout as a result of prolonged engagement in intense academic activities. The aspects covered include exhaustion from study demands, the emotional impact of academic pressure, and the depletion resulting from academic work.

- AI Technology Anxiety Scale, 3 items (AITA-3): This scale measures the anxiety students may feel in relation to generative AI technologies, including fears of job displacement and of subject-matter obsolescence. It was adapted from Wilson et al. (2023, p. 186), who define technology anxiety as "the tension from the anticipation of a negative outcome related to the use of technology deriving from experiential, behavioral, and physiological elements".

- Intrinsic Motivation Scale, 3 items (IMOV-3): Designed to assess students' intrinsic motivation towards learning, this scale, adapted from Hanum; Hasmayni and Lubis (2023), evaluates the satisfaction and interest students find in the learning process itself. It is particularly relevant given that the literature suggests a significant relationship between the use of AI technologies and students' motivation to learn.

- Learning Strategies Scale with Large Language Models, 6 items (LS/LLMs-6): This scale comprises two three-item sub-scales involving LLMs: Dysfunctional Learning Strategies (DLS/LLMs-3) and Metacognitive Learning Strategies (MLS/LLMs-3). The DLS/LLMs-3 sub-scale assesses counterproductive strategies that students might adopt when using LLMs and that could impede effective learning; it combines items specific to LLMs with conventional items on dysfunctional strategies. In contrast, the MLS/LLMs-3 sub-scale assesses the self-regulatory practices that students employ while learning with LLMs in order to improve their learning outcomes, and all of its items refer directly to LLMs. Based on Oliveira and Caliatto (2018) and Pereira; Santos and Ferraz (2020), the two sub-scales together cover both the metacognitive techniques that can enhance learning with LLMs and the dysfunctional practices that may hinder it.

- LLMs Acceptance Model Scale, 5 items (TAME/LLMs-5): This scale evaluates students' readiness to integrate Large Language Models (LLMs) into their learning process. It was adapted from Sallam et al. (2023) as a shorter instrument for assessing acceptance of, and intention to use, AI technology.

2.3.1. Adaptation review and validity

2.4. Data Analysis

2.4.1. EFA decision processes

- Sample Adequacy: Before factor extraction, it is essential to evaluate whether the data set is suitable for factor analysis. To this end, Bartlett's test of sphericity and the Kaiser-Meyer-Olkin (KMO) measure were employed (Damasio 2012; Taherdoost et al. 2022). Bartlett's test evaluates the null hypothesis that the correlation matrix is an identity matrix, that is, that the variables are uncorrelated and therefore share no structure to detect. A significant result allows this null hypothesis to be rejected, indicating that the data are factorable (Taherdoost et al. 2022). The KMO measure, in turn, estimates the proportion of variance among the variables that may be common variance. The KMO index ranges from 0 to 1, and values above 0.5 are considered suitable for factor analysis (Damasio 2012; Taherdoost et al. 2022).

- Factor Retention: Determining how many factors to retain is a crucial aspect of EFA, as it defines the dimensionality of the constructs. In this study, three methods were used to identify the optimal number of factors: the Kaiser-Guttman criterion, which retains factors whose eigenvalues exceed 1 (Taherdoost et al. 2022); parallel analysis, which retains factors whose eigenvalues exceed those obtained from random data (Crawford et al. 2010); and the factor forest approach, which relies on a pre-trained machine learning model (Goretzko and Bühner 2020). Retaining the appropriate number of factors simplifies the data and reveals their underlying structure, helping to ensure that the dimensions of the measured constructs are captured accurately.

- Internal Reliability Assessment: A critical component of EFA is the evaluation of the scales' internal reliability, that is, how consistently each scale measures its construct. Consistent measurement is pivotal because it confirms that observed variations in the data reflect differences in the underlying construct rather than measurement error or inconsistencies (Dunn; Baguley and Brunsden 2013). For this purpose, we used two metrics: McDonald's omega (ω) and Cronbach's alpha (α). Omega also estimates internal consistency but, unlike alpha, does not assume that all items load equally on the construct (tau-equivalence), which makes it a more robust alternative to the traditionally used alpha (Dunn; Baguley and Brunsden 2013).

- Factor Extraction: The next fundamental step is selecting a factor extraction method, which determines how factors are derived from the data. In this research, Principal Axis Factoring (PAF) was employed. Unlike maximum likelihood extraction, which assumes multivariate normality, PAF can handle data that do not fully meet this assumption, making it a suitable choice in exploratory contexts, especially with smaller samples (Goretzko; Pham and Bühner 2021; Taherdoost et al. 2022). Because PAF uncovers latent constructs without imposing strict distributional assumptions, it suits the exploratory nature of the survey (Goretzko; Pham and Bühner 2021).

- Rotation Method: The final step in factor analysis is often the choice of a rotation method to obtain a theoretically coherent and interpretable factor solution. In this study, promax rotation, a widely used oblique rotation, was selected (Sass and Schmitt 2010). An oblique rotation such as promax is appropriate when factors are presumed to be correlated: unlike orthogonal rotations, which assume that factors are independent, oblique rotations allow for inter-factor correlations (Damasio 2012; Sass and Schmitt 2010).
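The extraction and rotation steps described above can be illustrated with a minimal NumPy sketch: iterated principal axis factoring followed by promax rotation. This is not the authors' pipeline. The function names are hypothetical, communalities are initialized with squared multiple correlations, varimax is run without Kaiser row normalization, and the promax exponent m = 4 is a common default assumed here. The correlation matrix is built from made-up loadings, not from the study's data.

```python
import numpy as np


def principal_axis_factoring(R, n_factors, max_iter=500, tol=1e-6):
    """Iterated principal axis factoring (PAF) of a correlation matrix R."""
    R = np.asarray(R, dtype=float)
    # Initial communalities: squared multiple correlations (SMC).
    h2 = 1.0 - 1.0 / np.diag(np.linalg.inv(R))
    for _ in range(max_iter):
        reduced = R.copy()
        np.fill_diagonal(reduced, h2)  # communalities replace the unit diagonal
        eigvals, eigvecs = np.linalg.eigh(reduced)
        top = np.argsort(eigvals)[::-1][:n_factors]  # largest eigenvalues first
        loadings = eigvecs[:, top] * np.sqrt(np.clip(eigvals[top], 0.0, None))
        new_h2 = np.sum(loadings**2, axis=1)  # updated communalities
        converged = np.max(np.abs(new_h2 - h2)) < tol
        h2 = new_h2
        if converged:
            break
    return loadings


def varimax(L, max_iter=100, tol=1e-8):
    """Orthogonal varimax rotation (without Kaiser row normalization)."""
    p, k = L.shape
    T = np.eye(k)
    d = 0.0
    for _ in range(max_iter):
        rotated = L @ T
        grad = L.T @ (rotated**3 - rotated @ np.diag(np.sum(rotated**2, axis=0)) / p)
        u, s, vt = np.linalg.svd(grad)
        T = u @ vt  # nearest orthogonal matrix to the gradient
        d_old, d = d, np.sum(s)
        if d_old != 0 and d / d_old < 1 + tol:
            break
    return L @ T


def promax(L, m=4):
    """Promax: varimax first, then an oblique least-squares fit to a powered target."""
    Lv = varimax(L)
    target = Lv * np.abs(Lv) ** (m - 1)  # sharpen loadings while keeping signs
    U, *_ = np.linalg.lstsq(Lv, target, rcond=None)
    # Rescale columns so that the rotated factors have unit variance.
    U = U @ np.diag(np.sqrt(np.diag(np.linalg.inv(U.T @ U))))
    pattern = Lv @ U
    phi = np.linalg.inv(U.T @ U)  # factor correlation matrix
    return pattern, phi


# Hypothetical two-factor structure (made-up loadings, not the study's data).
L_true = np.array([[0.8, 0.0], [0.7, 0.0], [0.75, 0.0],
                   [0.0, 0.8], [0.0, 0.7], [0.0, 0.6]])
R = L_true @ L_true.T
np.fill_diagonal(R, 1.0)

loadings = principal_axis_factoring(R, n_factors=2)
pattern, phi = promax(loadings)
print(np.round(pattern, 2))
print(np.round(phi, 2))
```

Because promax lets factors correlate, the pattern loadings alone no longer reproduce the common variance; the factor correlation matrix `phi` is needed as well, which is why the sketch returns both.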

2.5. Use of AI Tools

3. Results

3.1. LLMs Usage Preferences Among Students

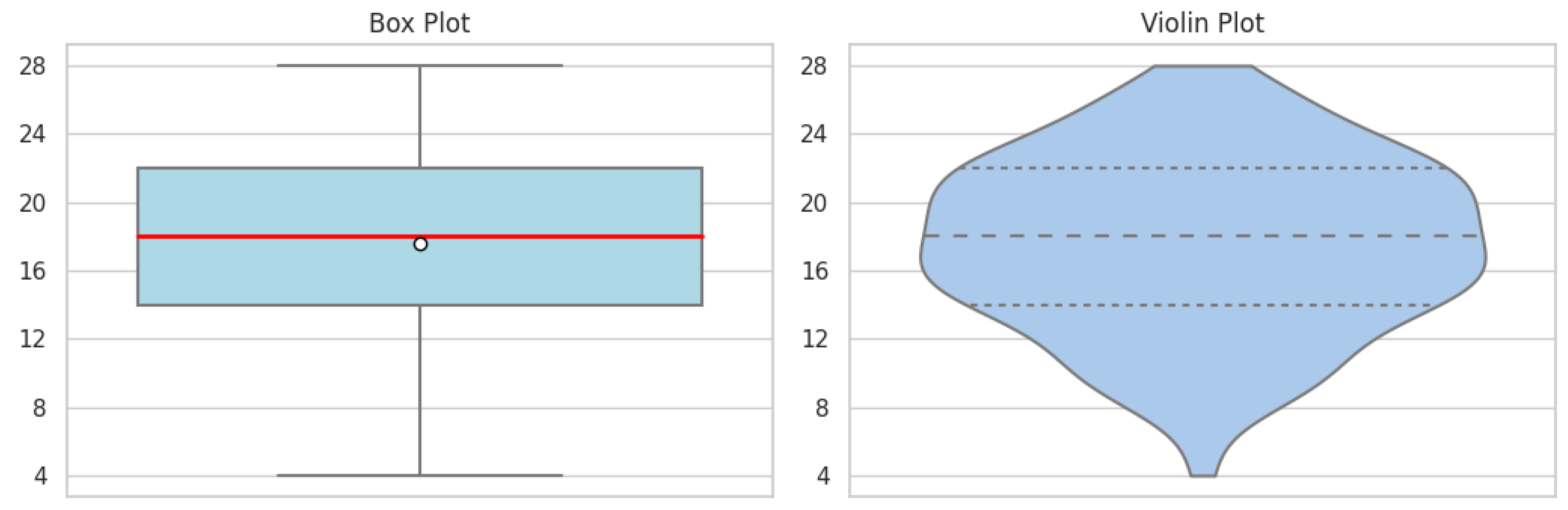

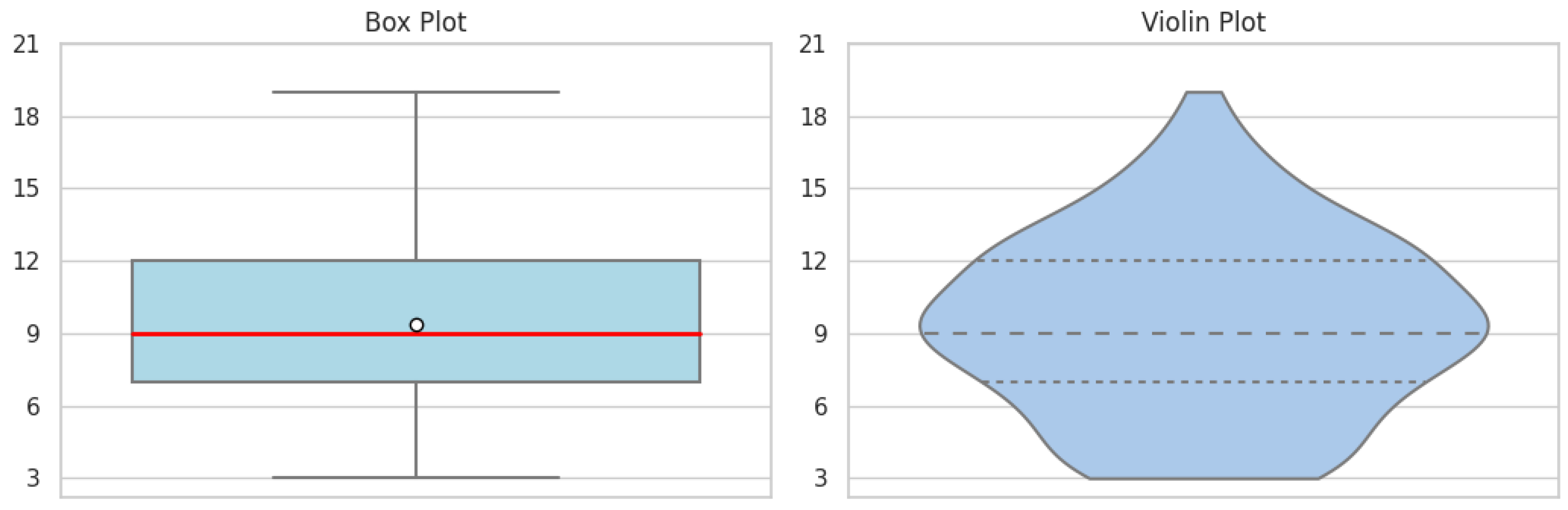

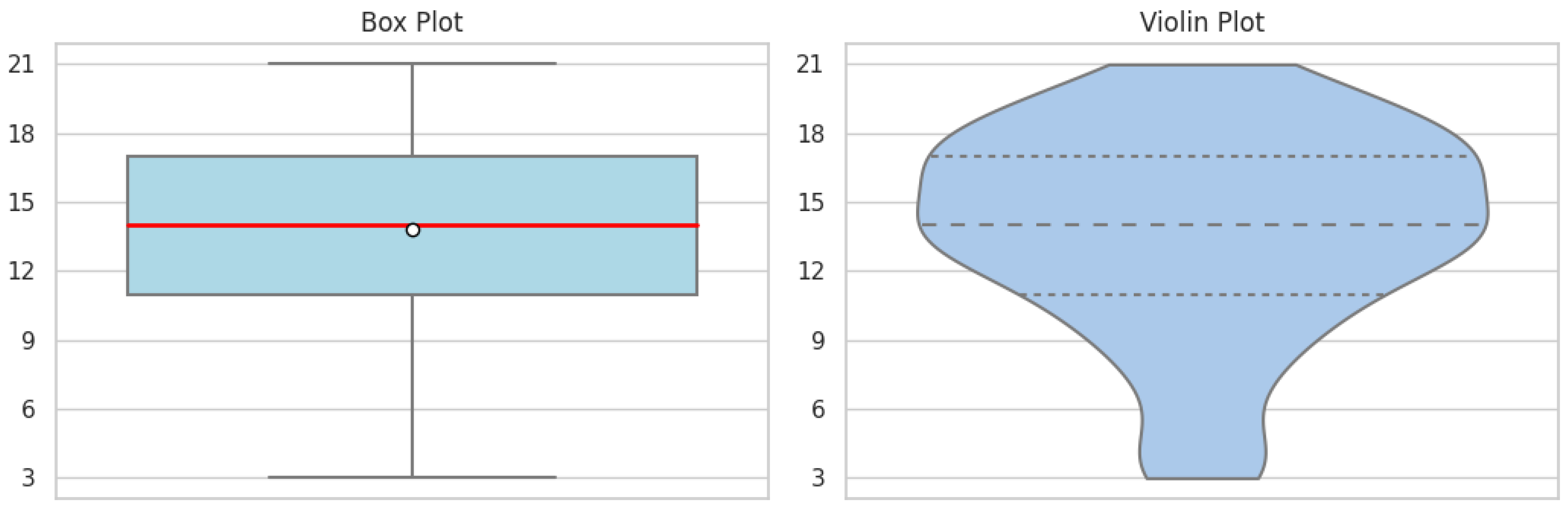

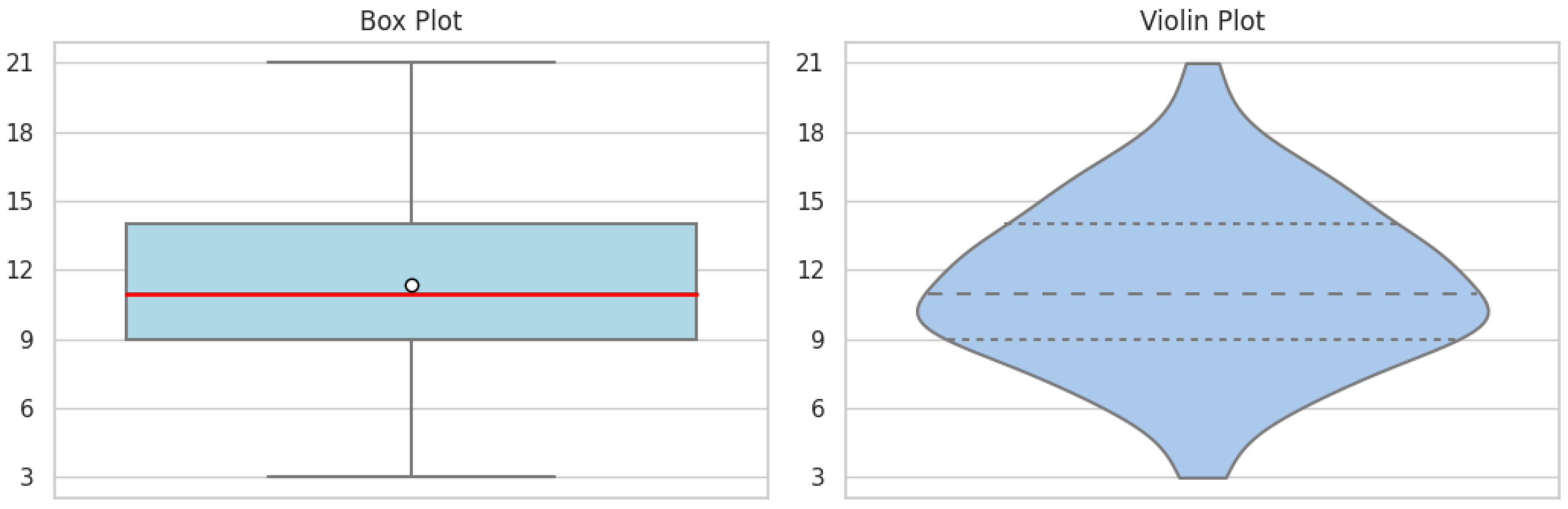

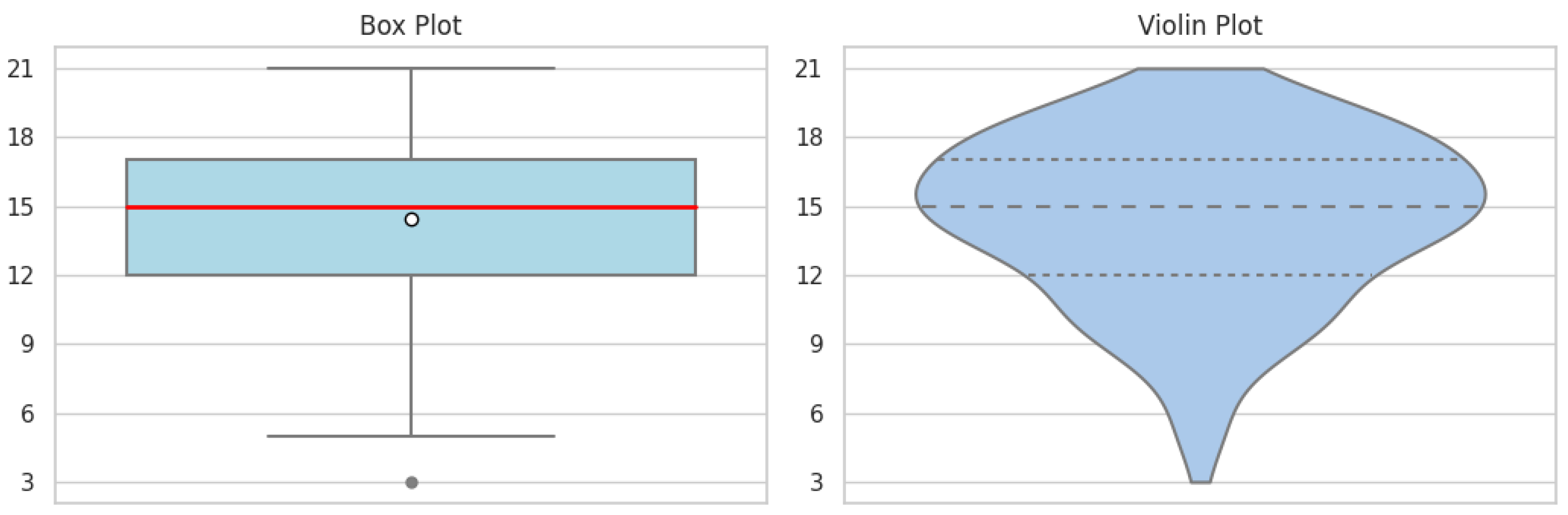

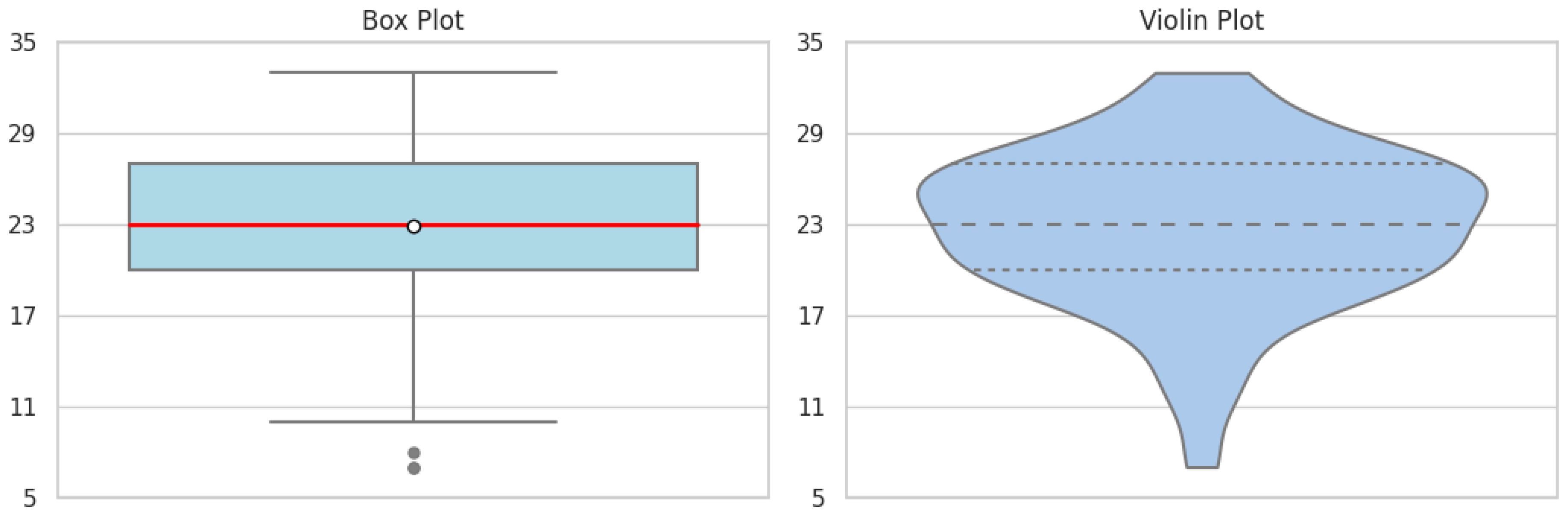

3.2. Validity Assessment Through Factor Analysis

3.3. Psychometric Scales’ Descriptive Statistics

3.4. Spearman’s Correlation for Categorical Variables

4. Discussion

4.1. An Overwhelming Acceptance of LLMs

4.2. The Role of AI in Computer and Data Science Education

4.3. Metacognition in Modern Learning Environments

4.4. LLMs and Mental Health Among Students

5. Conclusion

5.1. Limitations and Prospects for Future Research

Author Contributions

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| ABM-4 | Academic Burnout Model Scale - 4 items |
| AI | Artificial Intelligence |
| AITA-3 | Artificial Intelligence Technology Anxiety Scale - 3 items |
| CI/UFPB | Center for Informatics, Federal University of Paraíba |
| DLS/LLMs-3 | Dysfunctional Learning Strategies Sub-scale - 3 items |
| EFA | Exploratory Factor Analysis |
| IMOV-3 | Intrinsic Motivation Scale - 3 items |
| LLMs | Large Language Models |
| LS/LLMs-6 | Learning Strategies Scale with LLMs - 6 items |
| MLS/LLMs-3 | Metacognitive Learning Strategies Sub-scale - 3 items |
| TAME/LLMs-5 | Technology Acceptance Model for LLMs - 5 items |

Appendix A. Transcription of questionnaire items

Appendix A.1. ABM-4

- Original item: Nunca me sinto capaz de alcançar meus objetivos acadêmicos.
  Suggested translation: I never feel able to achieve my academic goals.

- Original item: Tenho dificuldade para relaxar depois das aulas.
  Suggested translation: I have trouble relaxing after classes.

- Original item: Fico esgotado quando tenho que ir à universidade.
  Suggested translation: I get exhausted when I have to go to university.

- Original item: As demandas do meu curso me deixam emocionalmente cansado(a).
  Suggested translation: The demands of my course leave me emotionally tired.

Appendix A.2. AITA-3

- Original item: Sinto como se não pudesse acompanhar as mudanças causadas pelos modelos de inteligência artificial.
  Suggested translation: I feel like I can't keep up with the changes caused by artificial intelligence models.

- Original item: Me preocupo que programadores sejam substituídos pelos modelos de inteligência artificial.
  Suggested translation: I worry that programmers will be replaced by artificial intelligence models.

- Original item: Tenho medo que modelos de inteligência artificial tornem conteúdos que aprendi na faculdade obsoletos.
  Suggested translation: I'm afraid artificial intelligence models will render content I learned in college obsolete.

Appendix A.3. IMOV-3

- Original item: Gosto de ir em todos as aulas do meu curso.
  Suggested translation: I like to go to every class in my course.

- Original item: Muitas vezes, fico tão empolgado que perco a noção do tempo quando estou envolvido em um projeto ou atividade acadêmica.
  Suggested translation: I'm often so excited that I lose track of time when I'm involved in a project or academic activity.

- Original item: Para mim, aprender sobre programação é um interesse pessoal.
  Suggested translation: For me, learning about programming is a personal interest.

Appendix A.4. LS/LLMs-6

- Original item: Utilizo LLMs (ChatGPT, Bard etc.) para tirar dúvidas e preencher lacunas no meu conhecimento sobre programação.
  Suggested translation: I use LLMs (ChatGPT, Bard, etc.) to clear up doubts and fill gaps in my knowledge of programming.

- Original item: Utilizo LLMs (ChatGPT, Bard etc.) para formular e resolver atividades de programação.
  Suggested translation: I use LLMs (ChatGPT, Bard, etc.) to formulate and solve programming activities.

- Original item: Corrijo meus códigos utilizando LLMs (ChatGPT, Bard etc.).
  Suggested translation: I correct my code using LLMs (ChatGPT, Bard, etc.).

- Original item: Deixo para estudar para as provas de última hora.
  Suggested translation: I leave studying for exams until the last minute.

- Original item: Tenho dificuldade para encontrar erros em respostas e códigos gerados por LLMs (ChatGPT, Bard etc.).
  Suggested translation: I have difficulty finding errors in responses and code generated by LLMs (ChatGPT, Bard, etc.).

- Original item: Sinto que estou apenas memorizando informações em vez de realmente entender os conteúdos.
  Suggested translation: I feel like I'm just memorizing information instead of really understanding the content.

Appendix A.5. TAME/LLMs-5

- Original item: LLMs (ChatGPT, Bard etc.) tornam a programação mais democrática e acessível para as pessoas.
  Suggested translation: LLMs (ChatGPT, Bard, etc.) make programming more democratic and accessible to people.

- Original item: Acredito que LLMs (ChatGPT, Bard etc.) podem ser melhor exploradas pelos professores nas aulas, atividades e/ou provas.
  Suggested translation: I believe that LLMs (ChatGPT, Bard, etc.) could be better exploited by teachers in classes, activities and/or tests.

- Original item: Me sinto confiante com os textos e/ou códigos gerados por LLMs (ChatGPT, Bard etc.).
  Suggested translation: I feel confident in the texts and/or code generated by LLMs (ChatGPT, Bard, etc.).

- Original item: Penso que LLMs (ChatGPT, Bard etc.) são muito eficientes em programação.
  Suggested translation: I think LLMs (ChatGPT, Bard, etc.) are very efficient at programming.

- Original item: Prefiro programar sem ajuda de LLMs (ChatGPT, Bard etc.).
  Suggested translation: I prefer programming without the help of LLMs (ChatGPT, Bard, etc.).

Appendix B. Libraries used for data analysis

- Pandas: As the backbone of our data manipulation efforts, Pandas provided an efficient platform for organizing, cleaning, and transforming the collected survey data into a structured format suitable for analysis.

- Pingouin: This library was instrumental in performing advanced statistical calculations. It allowed us to conduct Spearman’s correlation analysis, offering insights into the relationships between various psychometric scales.

- NumPy: Essential for handling complex linear algebra operations, NumPy supported our data processing needs, especially in managing multi-dimensional arrays and matrices.

- Matplotlib and Seaborn: These visualization tools were pivotal in creating intuitive and informative graphs. Matplotlib, with its flexible plotting capabilities, and Seaborn, which extends Matplotlib’s functionality with advanced visualizations, together provided a comprehensive view of our data’s distribution and trends.

- RPy2: Serving as a bridge between Python and R, RPy2 enabled us to leverage R’s robust statistical and graphical capabilities within our Python-centric analysis pipeline.

- FactorAnalyzer: This library facilitated the Exploratory Factor Analysis (EFA), allowing us to uncover the underlying structure of our psychometric scales and validate their dimensionalities.

- SciPy: We used its statistical functions, particularly Spearman's rank correlation, to explore the associations between the different variables.

- R libraries (mlr, psych, ineq, MVN, GPArotation): These R packages, integrated into our analysis via RPy2, offered specialized functions ranging from machine learning (mlr) and psychological statistics (psych) to multivariate normality testing (MVN) and factor rotation methods (GPArotation).

References

- Cooper, G. Examining Science Education in ChatGPT: An Exploratory Study of Generative Artificial Intelligence. Journal of Science Education and Technology 2023, 32, 444–452.

- Chiu, T. K. F.; Moorhouse, B. L.; Chai, C. S.; Ismailov, M. Teacher support and student motivation to learn with Artificial Intelligence (AI) based chatbot. Interactive Learning Environments 2023.

- Choi, J. H.; Hickman, K. E.; Monahan, A.; Schwarcz, D. B. ChatGPT Goes to Law School. Journal of Legal Education 2023, 71, 387.

- Crawford, A. V.; Green, S. B.; Levy, R.; Lo, W.; Scott, L.; Svetina, D.; Thompson, M. S. Evaluation of parallel analysis methods for determining the number of factors. Educational and Psychological Measurement 2010, 70, 885–901.

- Damasio, B. Uses of Exploratory Factorial Analysis in Psychology. Avaliação Psicológica 2012, 11, 213–228.

- Dunn, T. J.; Baguley, T.; Brunsden, V. From alpha to omega: A practical solution to the pervasive problem of internal consistency estimation. British Journal of Psychology 2013, 105, 399–412.

- Dwivedi, Y. K.; Kshetri, N.; Hughes, L.; Slade, E. L.; Jeyaraj, A.; Kar, A. K.; Baabdullah, A. M.; Koohang, A.; Raghavan, V.; Ahuja, M.; et al. Opinion Paper: “So what if ChatGPT wrote it?” Multidisciplinary perspectives on opportunities, challenges and implications of generative conversational AI for research, practice and policy. International Journal of Information Management 2023, 71.

- Essel, H.B.; Vlachopoulos, D.; Tachie-Menson, A.; Johnson, E.E.; Baah, P.K. The impact of a virtual teaching assistant (chatbot) on students’ learning in Ghanaian higher education. International Journal of Educational Technology in Higher Education 2022, 19, 1–19.

- Gao, S.; Gao, A.K. On the Origin of LLMs: An Evolutionary Tree and Graph for 15,821 Large Language Models. arXiv preprint 2023, 1–14.

- Gilbert, W.; Bureau, J. S.; Poellhuber, B.; Guay, F. Educational contexts that nurture students’ psychological needs predict low distress and healthy lifestyle through facilitated self-control. Current Psychology 2022, 42, 29661–29681.

- Goretzko, D.; Pham, T.T.H.; Bühner, M. Exploratory factor analysis: Current use, methodological developments and recommendations for good practice. Current Psychology 2021, 40, 3510–3521.

- Goretzko, D.; Bühner, M. One model to rule them all? Using machine learning algorithms to determine the number of factors in exploratory factor analysis. Psychological Methods 2020, 25, 776–786.

- Hadi, M.U.; Al-Tashi, G.; Qureshi, R.; Shah, A.; Muneer, A.; Shaikh, M.B.; Al-Garadi, M.A.; Wu, J.; Mirjalili, S. Large Language Models: A Comprehensive Survey of Applications, Challenges, Limitations, and Future Prospects. TechRxiv preprint 2023, 1–44.

- Hanum S., F.; Hasmayni, B.; Lubis, A. H. The Analysis of Chat GPT Usage Impact on Learning Motivation among Scout Students. International Journal of Research and Review 2023, 10, 632–638.

- Hung, J.; Chen, J. The Benefits, Risks and Regulation of Using ChatGPT in Chinese Academia: A Content Analysis. Social Sciences 2023, 12, 380.

- Kristensen, T. S.; Borritz, M.; Villadsen, E.; Christensen, B.C. The Copenhagen Burnout Inventory: A new tool for the assessment of burnout. Work and Stress: An International Journal of Work, Health and Organisations 2005, 19, 192–207.

- Lee, Y. F.; Hwang, G. J.; Chen, P. Y. Impacts of an AI-based chatbot on college students’ after-class review, academic performance, self-efficacy, learning attitude, and motivation. Educational Technology Research and Development 2023, 70, 1843–1865.

- Mofatteh, M. Risk factors associated with stress, anxiety, and depression among university undergraduate students. AIMS Public Health 2020, 8, 36–65.

- Neji, W.; Boughattas, N.; Ziadi, F. Exploring New AI-Based Technologies to Enhance Students’ Motivation. Informing Science Institute 2023, 20.

- Oliveira, A. F.; Caliatto, S.G. Exploratory Factor Analysis of a Scale of Learning Strategies. Educação: Teoria e Prática 2018, 18, 548–565.

- Okonkwo, C.W.; Ade-Ibijola, A. Chatbots applications in education: A systematic review. Computers and Education: Artificial Intelligence 2021, 2, 380.

- Pahune, S.; Chandrasekharan, M. Several Categories of Large Language Models (LLMs): A Short Survey. International Journal of Research in Applied Science and Engineering Technology 2023, 11.

- Patil, V.H.; Singh, S.N.; Mishra, S.; Donavan, D.T. Efficient theory development and factor retention criteria: Abandon the ’eigenvalue greater than one’ criterion. Journal of Business Research 2008, 61, 162–170.

- Pereira, C.P.da S.; Santos, A.A.A.dos; Ferraz, A.S. Learning Strategies Assessment Scale for Vocational Education: Adaptation and psychometric studies. Revista Portuguesa de Educação 2020, 33, 75–93.

- Ramos, A.S.M. Generative Artificial Intelligence based on large language models - tools for use in academic research. SciELO preprints 2023.

- Rahman, M.; Terano, H. J. R.; Rahman, N.; Salamzadeh, A.; Rahaman, S. ChatGPT and Academic Research: A Review and Recommendations Based on Practical Examples. Journal of Education, Management and Development Studies 2023, 3, 1–12.

- Sallam, M.; Salim N., A.; Barakat, M.; Al-Mahzoum, K.; Al-Tammemi A., B.; Malaeb, D.; Hallit, R.; Hallit, S. Assessing Health Students’ Attitudes and Usage of ChatGPT in Jordan: Validation Study. Journal of Medical Internet Research 2023, 9.

- Sass, D. A.; Schmitt, T. A. A comparative investigation of rotation criteria within exploratory factor analysis. Multivariate Behavioral Research 2010, 45, 73–103.

- Shanahan, M. Talking About Large Language Models. arXiv preprint 2022, 5, 1–13.

- Taherdoost, H.; Sahibuddin, S.; Jalaliyoon, N. Exploratory Factor Analysis: concepts and theory. In Advances in Applied and Pure Mathematics; Balicki, J., Ed.; WSEAS, 2022; Volume 27, pp. 375–382. Available online: https://hal.science/hal-02557344/document.

- Theobald, M. Self-regulated learning training programs enhance university students’ academic performance, self-regulated learning strategies, and motivation: A meta-analysis. Contemporary Educational Psychology 2021, 66.

- Tu, X.; Zou, J.; Su, W.J.; Zhang, L. What Should Data Science Education Do with Large Language Models? arXiv preprint 2023, 1–18.

- Urban, M.; Děchtěrenko, F.; Lukavsky, J.; Hrabalová, V.; Švácha, F.; Brom, C.; Urban, K. ChatGPT Improves Creative Problem-Solving Performance in University Students: An Experimental Study. PsyArXiv preprint 2023.

- Yin, J.; Goh, T.; Yang, B.; Xiaobin, Y. Conversation Technology With Micro-Learning: The Impact of Chatbot-Based Learning on Students’ Learning Motivation and Performance. Journal of Educational Computing Research 2023, 59.

- Zhai, X. ChatGPT User Experience: Implications for Education. SSRN Electronic Journal 2023.

- Wilson, M. L.; Huggins-Manley, A. C.; Ritzhaupt, A. D.; Ruggles, K. Development of the Abbreviated Technology Anxiety Scale (ATAS). Behavior Research Methods 2023, 55, 185–199.

| Scales | KMO | Bartlett's test of sphericity (p-value) |
|---|---|---|
| ABM-4 | 0.742 | < 0.001 |
| AITA-3 | 0.562 | < 0.001 |
| IMOV-3 | 0.581 | < 0.001 |
| LS/LLMs-6 | 0.682 | < 0.001 |
| TAME/LLMs-5 | 0.689 | < 0.001 |
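The two sample-adequacy checks reported above can be sketched with NumPy and SciPy. The FactorAnalyzer library listed in Appendix B provides `calculate_bartlett_sphericity` and `calculate_kmo` for this purpose; the functions below are instead a hypothetical re-implementation of the textbook formulas (Bartlett: chi-square = -(n - 1 - (2p + 5)/6) ln|R| with p(p - 1)/2 degrees of freedom; KMO: squared correlations relative to squared correlations plus squared partial correlations). The simulated one-factor data set is illustrative only.

```python
import numpy as np
from scipy import stats


def bartlett_sphericity(data):
    """Bartlett's test; H0: the population correlation matrix is an identity matrix."""
    n, p = data.shape
    R = np.corrcoef(data, rowvar=False)
    statistic = -(n - 1 - (2 * p + 5) / 6) * np.log(np.linalg.det(R))
    dof = p * (p - 1) / 2
    return statistic, stats.chi2.sf(statistic, dof)


def kmo(data):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    R = np.corrcoef(data, rowvar=False)
    inv_R = np.linalg.inv(R)
    # Partial correlations (controlling for all other items) from the inverse matrix.
    partial = -inv_R / np.sqrt(np.outer(np.diag(inv_R), np.diag(inv_R)))
    off_diag = ~np.eye(R.shape[0], dtype=bool)
    r2 = np.sum(R[off_diag] ** 2)
    a2 = np.sum(partial[off_diag] ** 2)
    return r2 / (r2 + a2)


# Hypothetical data: 300 respondents, 4 items driven by one common factor.
rng = np.random.default_rng(0)
factor = rng.standard_normal((300, 1))
data = 0.7 * factor + 0.7 * rng.standard_normal((300, 4))

statistic, p_value = bartlett_sphericity(data)
print(f"Bartlett chi2 = {statistic:.1f}, p = {p_value:.3g}")
print(f"KMO = {kmo(data):.3f}")
```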

| Scales | Kaiser criterion | Parallel analysis | Factor Forest |
|---|---|---|---|
| ABM-4 | 1 | 1 | 1 |
| AITA-3 | 1 | 1 | 1 |
| IMOV-3 | 1 | 1 | 1 |
| LS/LLMs-6 | 2 | 2 | 2 |
| TAME/LLMs-5 | 1 | 2 | 1 |
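The first two retention rules in the table above can be sketched as follows. The parallel-analysis variant shown here (principal-component eigenvalues compared with the 95th percentile of eigenvalues from normally distributed random data of the same size) is an assumption: Crawford et al. (2010) compare several variants, and the exact settings used in this study are not stated. The factor forest is not reproduced because it depends on a pre-trained model (Goretzko and Bühner 2020). Function names and the simulated data are hypothetical.

```python
import numpy as np


def kaiser_criterion(data):
    """Kaiser-Guttman rule: count correlation-matrix eigenvalues greater than 1."""
    R = np.corrcoef(data, rowvar=False)
    return int(np.sum(np.linalg.eigvalsh(R) > 1.0))


def parallel_analysis(data, n_iter=200, percentile=95, seed=0):
    """Parallel analysis: keep components whose eigenvalues beat random-data eigenvalues."""
    rng = np.random.default_rng(seed)
    n, p = data.shape
    observed = np.sort(np.linalg.eigvalsh(np.corrcoef(data, rowvar=False)))[::-1]
    random_eigs = np.empty((n_iter, p))
    for i in range(n_iter):
        noise = rng.standard_normal((n, p))  # uncorrelated data of the same size
        random_eigs[i] = np.sort(np.linalg.eigvalsh(np.corrcoef(noise, rowvar=False)))[::-1]
    threshold = np.percentile(random_eigs, percentile, axis=0)
    exceeds = observed > threshold
    # Retain factors up to the first observed eigenvalue that falls below the threshold.
    return p if exceeds.all() else int(np.argmin(exceeds))


# Hypothetical data: 500 respondents, 6 items, two uncorrelated factors.
rng = np.random.default_rng(1)
factors = rng.standard_normal((500, 2))
pattern = np.array([[0.8, 0.0], [0.8, 0.0], [0.8, 0.0],
                    [0.0, 0.8], [0.0, 0.8], [0.0, 0.8]])
data = factors @ pattern.T + 0.6 * rng.standard_normal((500, 6))

print("Kaiser criterion:", kaiser_criterion(data))
print("Parallel analysis:", parallel_analysis(data))
```

The two rules can disagree, as they do for TAME/LLMs-5 in the table; using several criteria side by side makes such disagreements visible.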

| Scales | Cronbach's alpha (α) | McDonald's omega (ω) | Cumulative variance explained |
|---|---|---|---|
| ABM-4 | 0.702 | 0.702 | 0.528 |
| AITA-3 | 0.619 | 0.664 | 0.579 |
| IMOV-3 | 0.578 | 0.625 | 0.545 |
| LS/LLMs-6 | 0.640 | 0.739 | 0.618 |
| TAME/LLMs-5 | 0.656 | 0.663 | 0.426 |
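The two reliability coefficients can be written in a few lines. The sketch below, with hypothetical function names, uses the standard formula for alpha and the one-factor, standardized-loading form of omega total. The software routine behind the table's values is not stated, so these functions should not be expected to reproduce those numbers exactly; the simulated item scores are illustrative only.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach's alpha for an (n_respondents, k_items) score matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    sum_item_var = items.var(axis=0, ddof=1).sum()
    total_var = items.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1 - sum_item_var / total_var)


def mcdonald_omega(loadings):
    """Omega total from standardized loadings of a one-factor model."""
    loadings = np.asarray(loadings, dtype=float)
    common = loadings.sum() ** 2      # variance due to the common factor
    unique = np.sum(1 - loadings**2)  # residual (unique) variances
    return common / (common + unique)


# Hypothetical item scores: 400 respondents, 4 items loading on one factor.
rng = np.random.default_rng(2)
factor = rng.standard_normal((400, 1))
items = 0.7 * factor + 0.7 * rng.standard_normal((400, 4))
print(f"alpha = {cronbach_alpha(items):.3f}")
print(f"omega (equal loadings)   = {mcdonald_omega([0.7, 0.7, 0.7, 0.7]):.3f}")
print(f"omega (unequal loadings) = {mcdonald_omega([0.9, 0.8, 0.5, 0.3]):.3f}")
```

With equal standardized loadings (tau-equivalence), omega and the population value of alpha coincide; when loadings differ, alpha is a lower bound and falls below omega, which is the situation addressed by Dunn; Baguley and Brunsden (2013).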

| Scales | Items | Factor 1 | Factor 2 |
|---|---|---|---|
| ABM-4 | Item 1 | 0.70 | - |
| | Item 2 | 0.74 | - |
| | Item 3 | 0.72 | - |
| | Item 4 | 0.74 | - |
| AITA-3 | Item 1 | 0.53 | - |
| | Item 2 | 0.85 | - |
| | Item 3 | 0.86 | - |
| LS/LLMs-6 | Item 1 | 0.83 | 0.00 |
| | Item 2 | 0.85 | 0.05 |
| | Item 3 | 0.86 | 0.04 |
| | Item 4 | 0.11 | 0.68 |
| | Item 5 | 0.02 | 0.71 |
| | Item 6 | 0.09 | 0.76 |
| IMOV-3 | Item 1 | 0.72 | - |
| | Item 2 | 0.82 | - |
| | Item 3 | 0.67 | - |
| TAME/LLMs-5 | Item 1 | 0.69 | - |
| | Item 2 | 0.69 | - |
| | Item 3 | 0.63 | - |
| | Item 4 | 0.74 | - |
| | Item 5 | 0.47 | - |
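As a consistency check, the "Cumulative variance explained" column of the table reporting alpha and omega can be approximately recovered from the loadings above as the sum of squared loadings divided by the number of items. The computation below uses the rounded two-decimal loadings, so it agrees with the reported values only to within about 0.003. That the authors used exactly this convention (for example through FactorAnalyzer's `get_factor_variance`) is an assumption; for an oblique promax solution, squared pattern loadings do not partition variance exactly, so the figure is a convention rather than an exact decomposition.

```python
import numpy as np

# Loadings transcribed from the table above ("-" entries omitted).
loadings = {
    "ABM-4": [[0.70], [0.74], [0.72], [0.74]],
    "AITA-3": [[0.53], [0.85], [0.86]],
    "IMOV-3": [[0.72], [0.82], [0.67]],
    "LS/LLMs-6": [[0.83, 0.00], [0.85, 0.05], [0.86, 0.04],
                  [0.11, 0.68], [0.02, 0.71], [0.09, 0.76]],
    "TAME/LLMs-5": [[0.69], [0.69], [0.63], [0.74], [0.47]],
}


def variance_explained(L):
    """Sum of squared loadings divided by the number of items."""
    L = np.asarray(L, dtype=float)
    return float(np.sum(L**2) / L.shape[0])


for scale, L in loadings.items():
    print(f"{scale}: {variance_explained(L):.3f}")
```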

| Scales | ABM-4 | AITA-3 | IMOV-3 | MLS/LLMs-3 | DLS/LLMs-3 | TAME/LLMs-5 |
|---|---|---|---|---|---|---|
| ABM-4 | 1 | 0.27 | -0.14 | 0.05 | 0.41 | 0.16 |
| AITA-3 | 0.27 | 1 | 0.00 | 0.09 | 0.34 | 0.16 |
| IMOV-3 | -0.14 | 0.00 | 1 | -0.03 | -0.31 | -0.04 |
| MLS/LLMs-3 | 0.05 | 0.09 | -0.03 | 1 | 0.11 | 0.60 |
| DLS/LLMs-3 | 0.41 | 0.34 | -0.31 | 0.11 | 1 | 0.13 |
| TAME/LLMs-5 | 0.16 | 0.16 | -0.04 | 0.60 | 0.13 | 1 |

| Scales (p-values) | ABM-4 | AITA-3 | IMOV-3 | MLS/LLMs-3 | DLS/LLMs-3 | TAME/LLMs-5 |
|---|---|---|---|---|---|---|
| ABM-4 | - | 0.00 | 0.06 | 0.46 | 0.00 | 0.04 |
| AITA-3 | 0.00 | - | 0.99 | 0.23 | 0.00 | 0.03 |
| IMOV-3 | 0.06 | 0.99 | - | 0.64 | 0.00 | 0.60 |
| MLS/LLMs-3 | 0.46 | 0.23 | 0.64 | - | 0.13 | 0.00 |
| DLS/LLMs-3 | 0.00 | 0.00 | 0.00 | 0.13 | - | 0.07 |
| TAME/LLMs-5 | 0.04 | 0.03 | 0.60 | 0.00 | 0.07 | - |
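Both correlation tables above (Spearman's rho and the matching p-values) can be produced with `scipy.stats.spearmanr`, which Appendix B lists among the tools used. In the sketch below, the helper name, the assumption of one score per respondent for each scale, and the simulated scores are all hypothetical; pandas' `DataFrame.corr(method="spearman")` would give the rho matrix alone.

```python
import numpy as np
from scipy import stats


def spearman_matrices(scores):
    """Pairwise Spearman rho and two-sided p-values for a dict of per-respondent scores."""
    names = list(scores)
    k = len(names)
    rho = np.eye(k)
    pval = np.full((k, k), np.nan)  # diagonal left undefined, shown as "-" in the table
    for i in range(k):
        for j in range(i + 1, k):
            r, p = stats.spearmanr(scores[names[i]], scores[names[j]])
            rho[i, j] = rho[j, i] = r
            pval[i, j] = pval[j, i] = p
    return names, rho, pval


# Hypothetical 1-5 scale scores for 200 respondents (not the study's data).
rng = np.random.default_rng(42)
burnout = rng.integers(1, 6, size=200)
dysfunctional = np.clip(burnout + rng.integers(-1, 2, size=200), 1, 5)
motivation = rng.integers(1, 6, size=200)

names, rho, pval = spearman_matrices(
    {"ABM-4": burnout, "DLS/LLMs-3": dysfunctional, "IMOV-3": motivation}
)
print(names)
print(np.round(rho, 2))
print(np.round(pval, 2))
```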

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).