2. Concept and setup

The pertinence of explainability varies from case to case, and explainability should by no means be considered a ubiquitous modeling requirement. There may well be cases where the concept is irrelevant and considering it would add little, if any, value. Health applications of AI, however, are undoubtedly an area where explainability is of great importance (Markus et al., 2020). Indeed, being able to explain a suggested course of action to a patient may be key to her decision and, eventually, to her treatment.

Admittedly, energy applications are not such a clear-cut case for explainability. We would argue, however, that there are cases where the concept does add value. This is in particular the case for demand response, the overarching application context of this work. Demand response, at least in the narrow sense of the term, means responding to energy price signals. The literature clearly identifies user risk as a main factor preventing wider adoption of such demand response schemes (Dutta, G., Krishnendranath, M., 2017; Borenstein, S., 2013). This in turn prevents users, energy retailers and grid operators from benefiting from the lower costs these schemes may bring, and renewable deployment planners from finding more opportunities for viable renewable energy. In this application context, therefore, we argue that explainability has a clear potential to mitigate this well-identified risk, which would be of important and unique value in increasing the chances of demand response adoption.

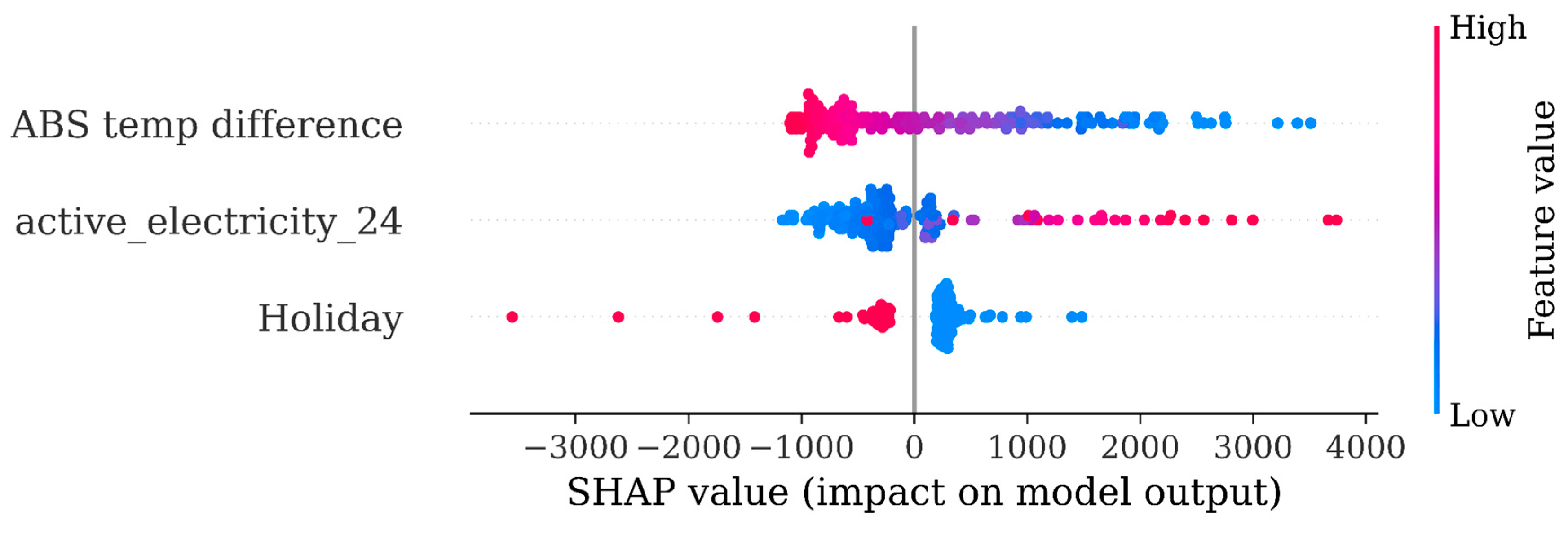

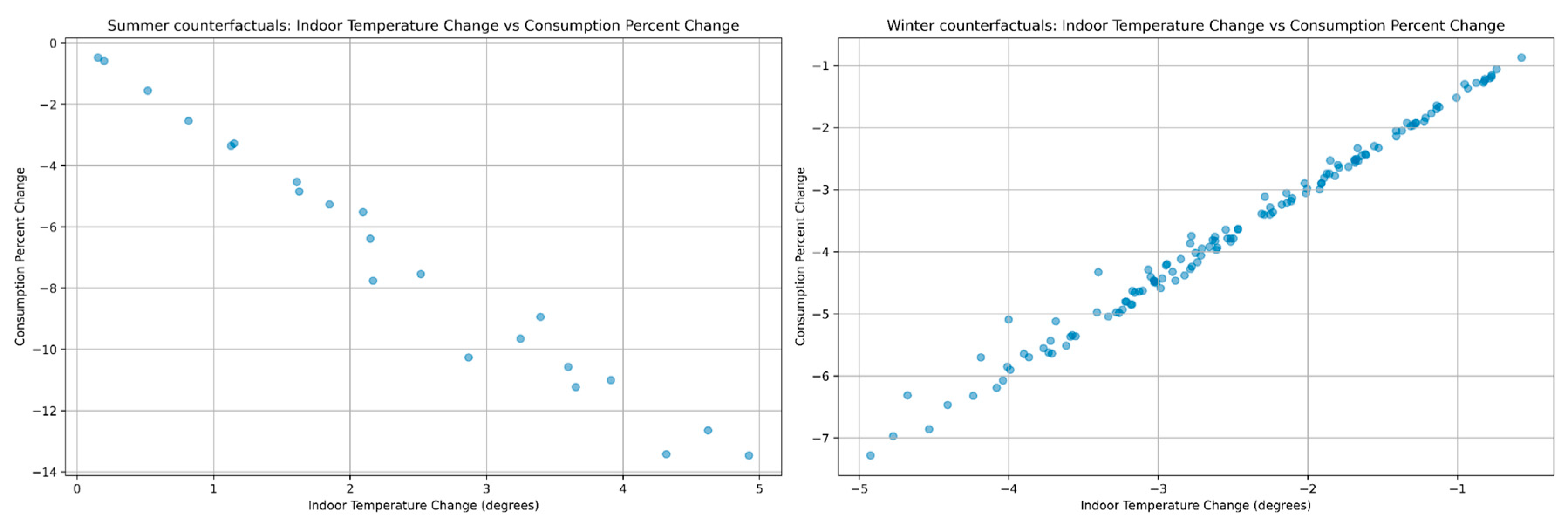

To gain more insight into what information users would consider important regarding the forecasts they receive, we interviewed four building energy experts/facility managers and discussed in depth which information and functionality would add value for them or their building users when the forecasting results are delivered. Two requirements emerged from these discussions: first, feature importance, i.e. information on how exactly each feature contributed to the forecast, perhaps with a seasonal variation; and second, counterfactuals, i.e. empowering users to consider what-if scenarios. The issue of actionability was also raised at this point, with counterfactuals built around actionable features highlighted as having higher potential. Indeed, users cannot affect the weather; a weather counterfactual can therefore only be of indirect use and cannot support any direct action. By contrast, indoor conditions (temperature, humidity, etc.) are actionable features, as the user can typically act upon them, e.g. via thermostat settings. Surprisingly, as shown in the literature review above, indoor conditions are relatively rarely included in the feature sets used for forecasting. Because of their inherent actionability, we decided to prioritize indoor conditions in the modeling.

As shown in section 1.3, the importance of explainability in forecasting has been appreciated only gradually and rather recently. The explainable approaches that have very recently appeared in the energy literature, discussed there, were variants of neural networks, which also happen to be the predominant approach in energy forecasting. Neural networks, however, perform inherently poorly in terms of explainability: their complex, black-box structure makes it very hard to gain insight into, and therefore confidence in, their forecasts.

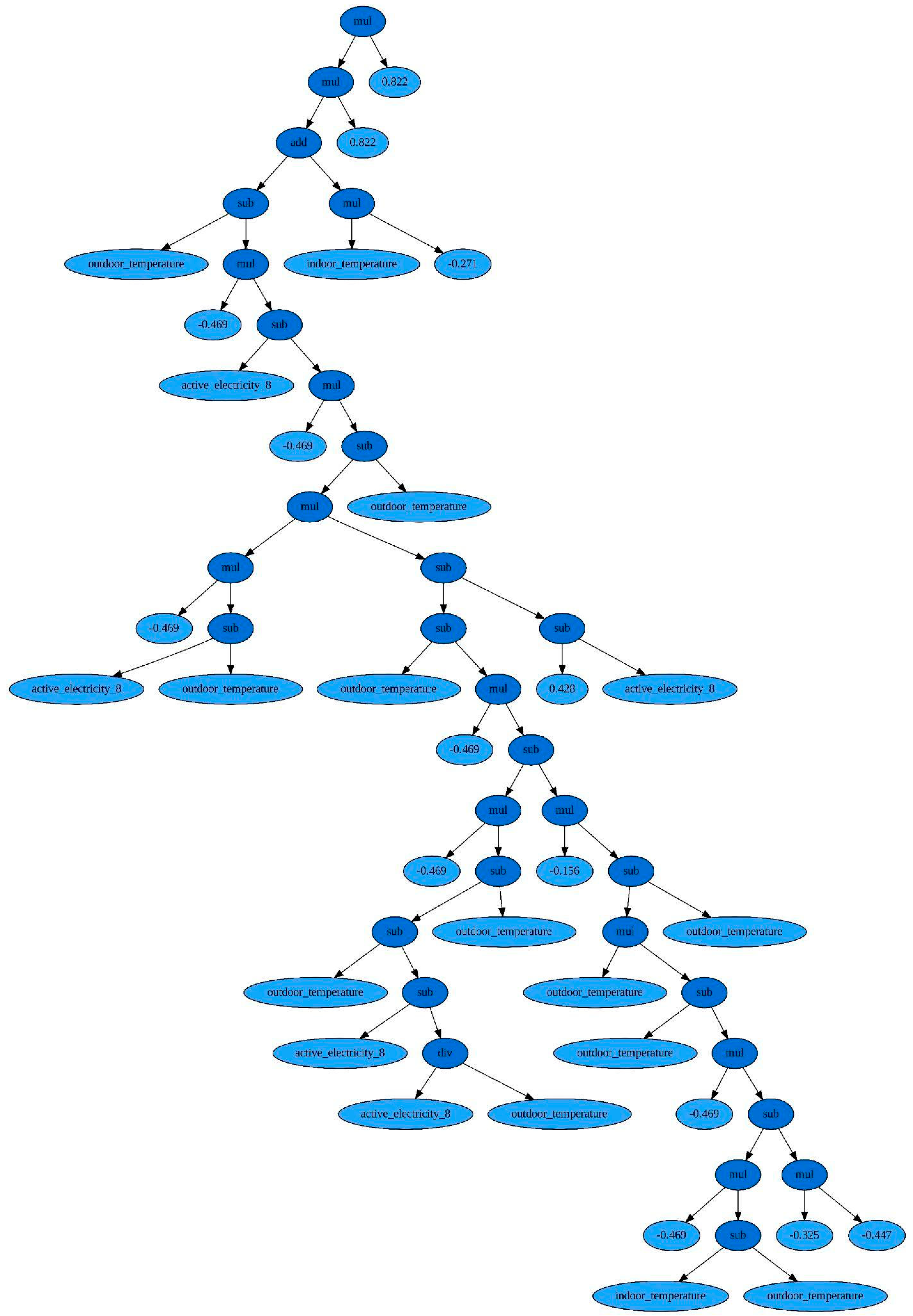

In this work we consider two distinct approaches to explainability. The first is genetic programming (GP) modeling. In artificial intelligence, GP is an evolutionary technique that, starting from a population of random programs, evolves programs fit for a particular task. Operations analogous to natural genetic processes are applied: selection of the fittest programs according to a predefined fitness measure, reproduction through recombination (called crossover), and mutation. Crossover swaps random parts of selected pairs of programs (parents) to produce new offspring that become part of the next generation, while mutation replaces a random part of a program with another, randomly generated part. GP is a modeling approach in its own right that yields symbolic expressions, which can potentially offer insight into the model's behavior. Moreover, GP is a classical case of so-called global explainability, since it provides insight into the model as a whole. To the best of our knowledge, GP has not yet been used in the building energy forecasting literature. Counterfactuals, by contrast, are referred to as instance-level or local explainability, since they relate not to the model itself but only to a particular instance.
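To make the evolutionary loop concrete, the following is a minimal sketch of tree-based GP for symbolic regression using only the Python standard library. It is an illustration under stated assumptions, not the configuration used in this work: the function and terminal sets, tournament selection, elitism, the depth limit and all parameter values are our own hypothetical choices, and the target function is a toy example.

```python
import math
import operator
import random

def protected_div(a, b):
    """Division that returns 1.0 when the denominator is ~0 (a common GP convention)."""
    return a / b if abs(b) > 1e-9 else 1.0

# Hypothetical function and terminal sets: four arithmetic operators, one input "x", two constants.
FUNCTIONS = [(operator.add, "+"), (operator.sub, "-"), (operator.mul, "*"), (protected_div, "/")]
TERMINALS = ["x", 1.0, 2.0]

def random_tree(max_depth, rng):
    """Grow a random program: a terminal, or a tuple (func, symbol, left, right)."""
    if max_depth == 0 or rng.random() < 0.3:
        return rng.choice(TERMINALS)
    func, symbol = rng.choice(FUNCTIONS)
    return (func, symbol, random_tree(max_depth - 1, rng), random_tree(max_depth - 1, rng))

def evaluate(tree, x):
    if isinstance(tree, tuple):
        func, _, left, right = tree
        return func(evaluate(left, x), evaluate(right, x))
    return x if tree == "x" else tree

def to_string(tree):
    """Render the program as the symbolic expression that makes GP inspectable."""
    if isinstance(tree, tuple):
        _, symbol, left, right = tree
        return f"({to_string(left)} {symbol} {to_string(right)})"
    return str(tree)

def tree_depth(tree):
    return 1 + max(tree_depth(tree[2]), tree_depth(tree[3])) if isinstance(tree, tuple) else 0

def fitness(tree, data):
    """Mean squared error over (x, y) pairs; lower is fitter, non-finite errors count as inf."""
    total = 0.0
    for x, y in data:
        d = evaluate(tree, x) - y
        total += d * d
    mse = total / len(data)
    return mse if math.isfinite(mse) else math.inf

def subtrees(tree, path=()):
    """Yield (path, subtree) for every node; a path is a sequence of child indices (2 or 3)."""
    yield path, tree
    if isinstance(tree, tuple):
        yield from subtrees(tree[2], path + (2,))
        yield from subtrees(tree[3], path + (3,))

def replace_at(tree, path, new):
    if not path:
        return new
    node = list(tree)
    node[path[0]] = replace_at(tree[path[0]], path[1:], new)
    return tuple(node)

def crossover(mother, father, rng):
    """Swap a random subtree of one parent for a random subtree of the other."""
    path, _ = rng.choice(list(subtrees(mother)))
    _, donor = rng.choice(list(subtrees(father)))
    return replace_at(mother, path, donor)

def mutate(tree, rng):
    """Replace a random subtree with a freshly generated random subtree."""
    path, _ = rng.choice(list(subtrees(tree)))
    return replace_at(tree, path, random_tree(2, rng))

def tournament(pop, scores, rng, k=3):
    """Assumed selection scheme: the fittest of k randomly drawn programs."""
    picks = rng.sample(range(len(pop)), k)
    return pop[min(picks, key=lambda i: scores[i])]

def evolve(data, pop_size=200, generations=20, max_depth=6, seed=0):
    rng = random.Random(seed)
    pop = [random_tree(4, rng) for _ in range(pop_size)]
    for _ in range(generations):
        scores = [fitness(t, data) for t in pop]
        next_pop = [pop[min(range(pop_size), key=lambda i: scores[i])]]  # elitism (assumption)
        while len(next_pop) < pop_size:
            child = crossover(tournament(pop, scores, rng), tournament(pop, scores, rng), rng)
            if rng.random() < 0.2:
                child = mutate(child, rng)
            if tree_depth(child) <= max_depth:  # reject overly deep offspring (bloat control)
                next_pop.append(child)
        pop = next_pop
    scores = [fitness(t, data) for t in pop]
    return pop[min(range(pop_size), key=lambda i: scores[i])]

# Toy target y = x^2 + x on x in [-1, 1] (hypothetical data, not building load).
DATA = [(x, x * x + x) for x in (i / 10 for i in range(-10, 11))]
best = evolve(DATA)
print(to_string(best), fitness(best, DATA))
```

The printed expression is what gives GP its global explainability: the whole model is a formula that can be read and checked, unlike the weights of a neural network.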

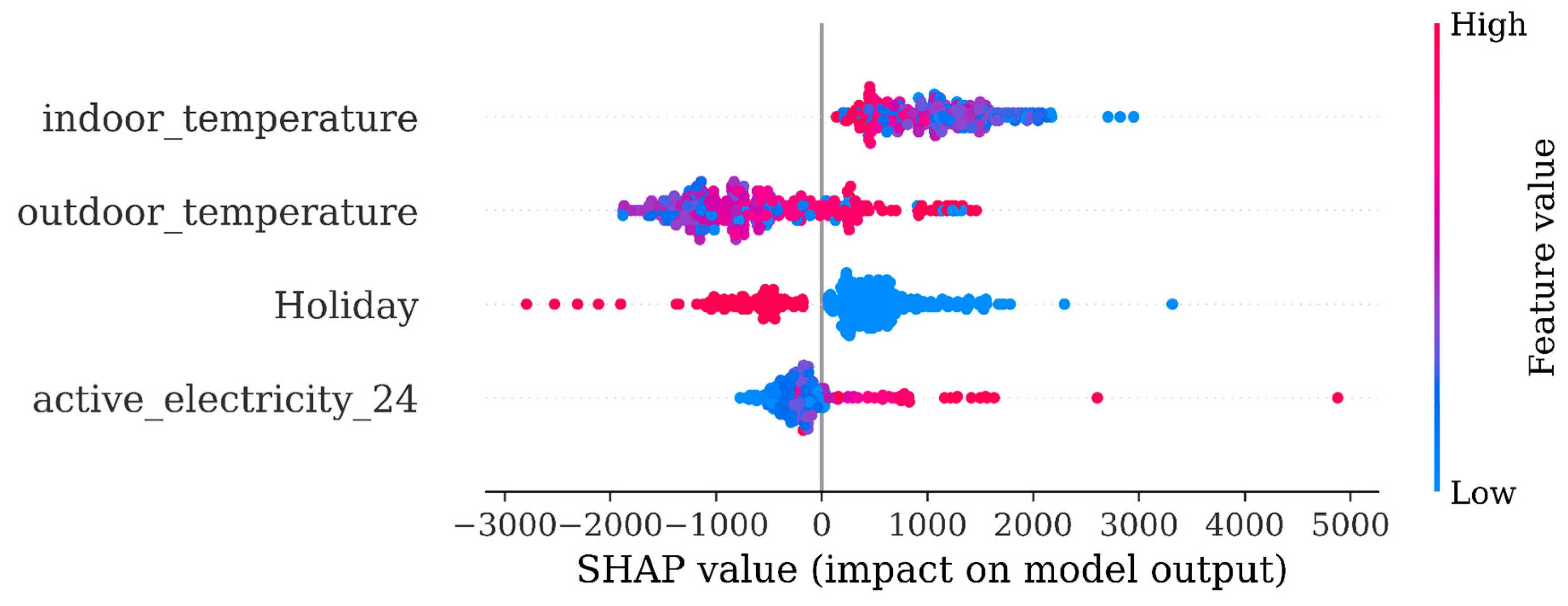

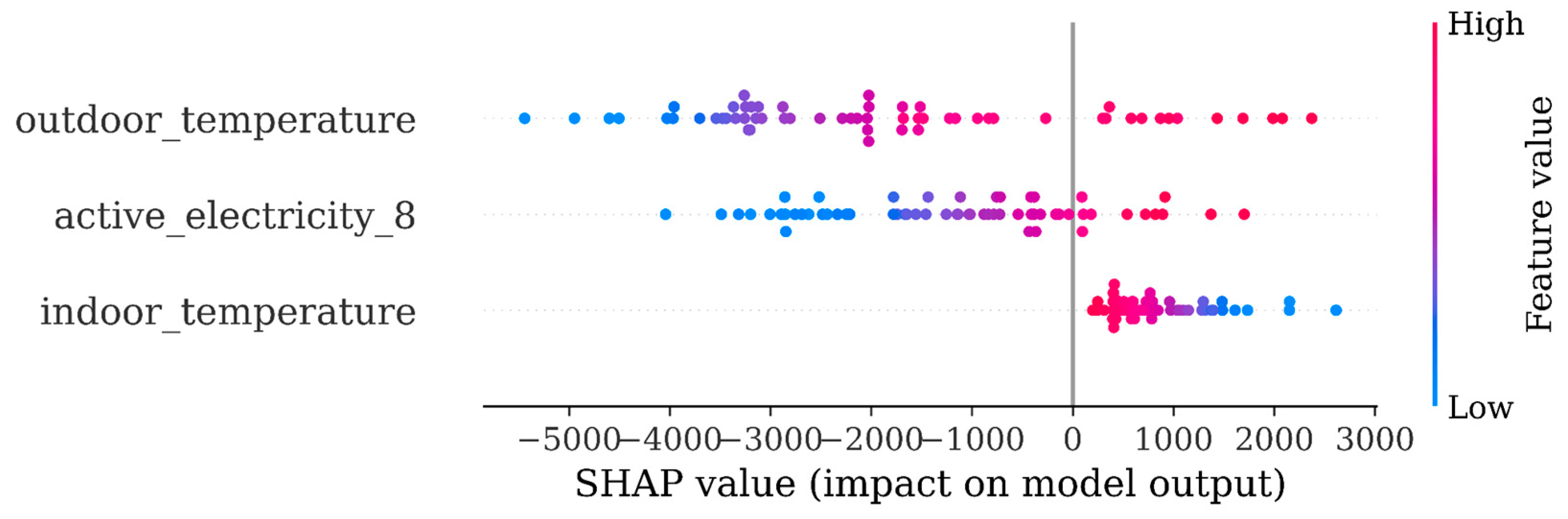

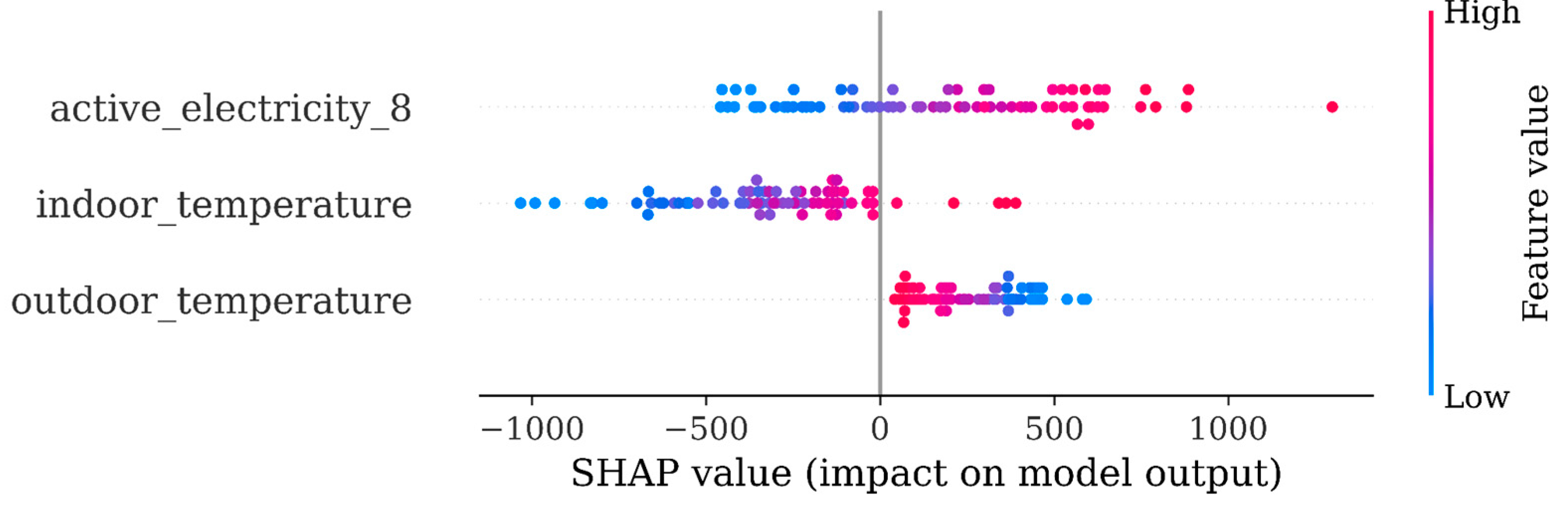

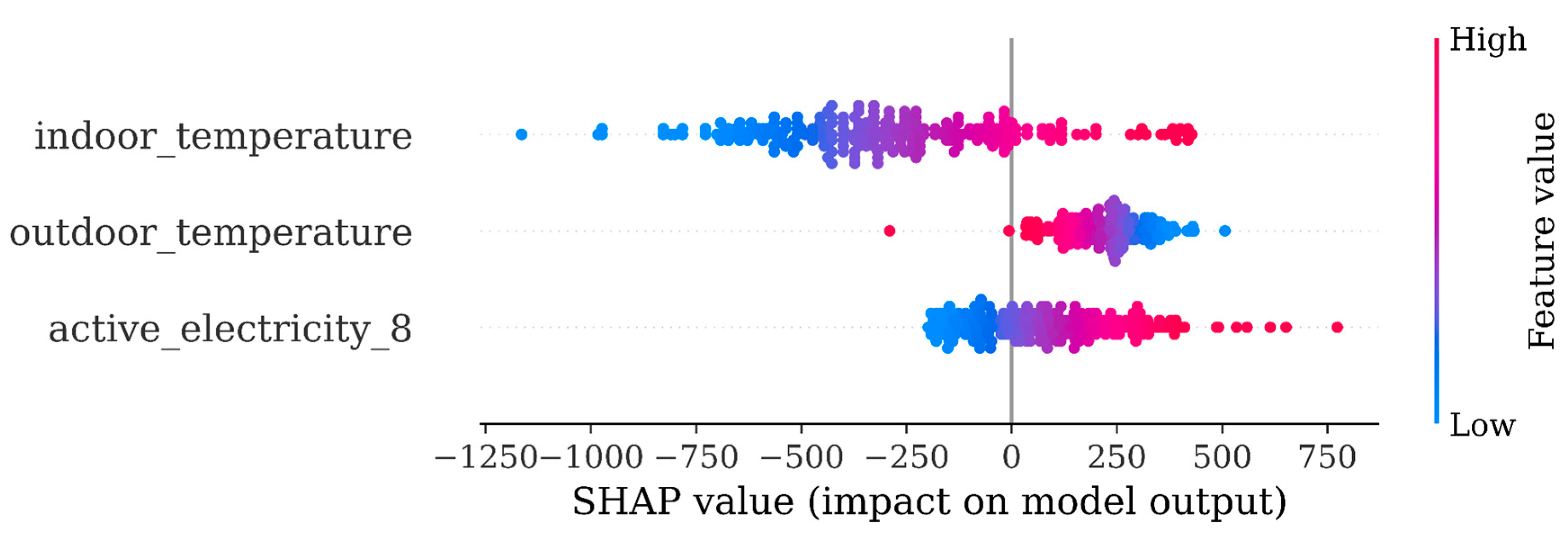

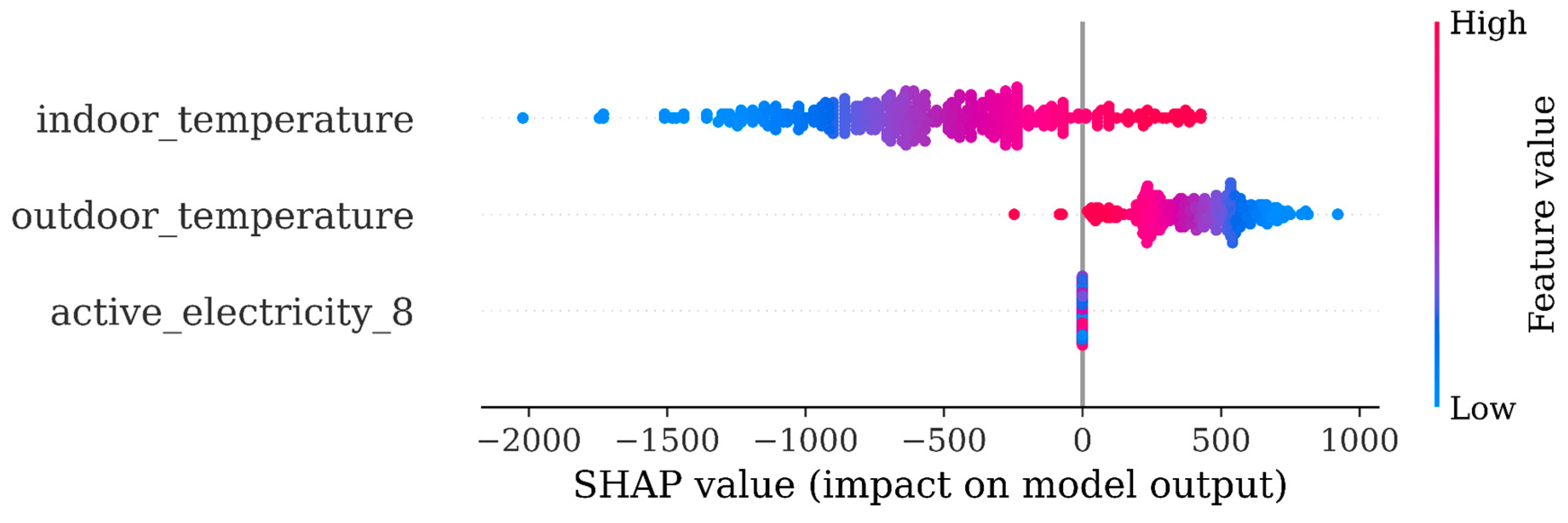

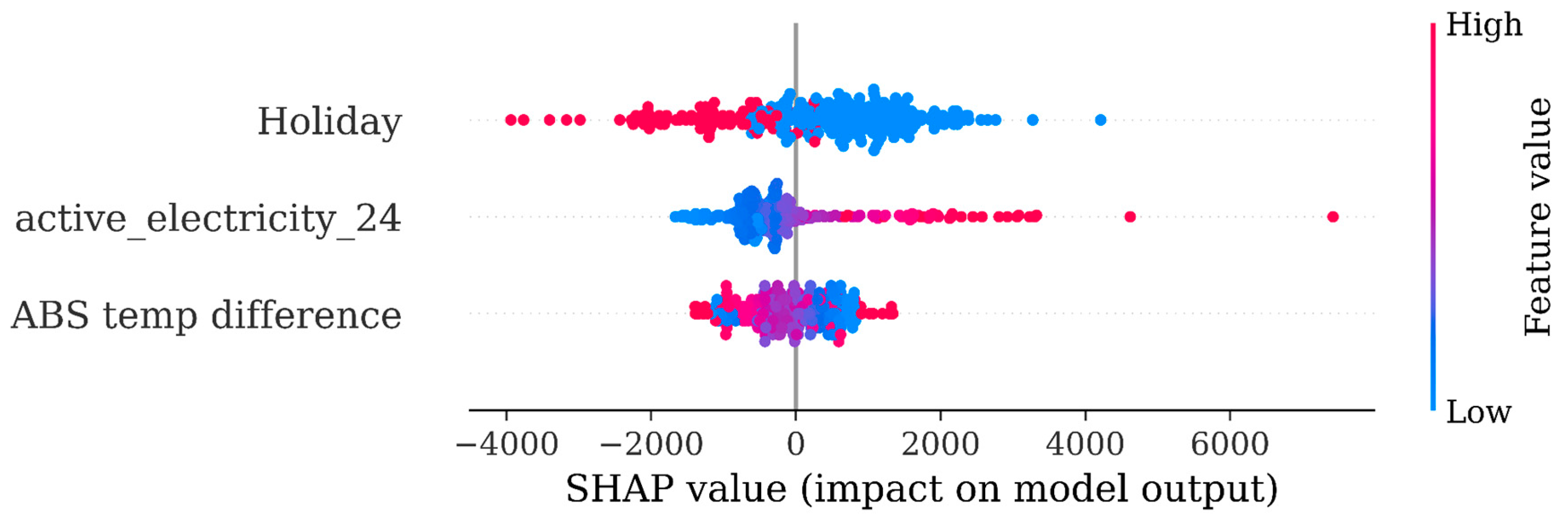

The second approach we use for explainability is SHAP (SHapley Additive exPlanations), first published by Lundberg and Lee (2017). In short, SHAP values quantify the contribution of each feature to a model's prediction. They are therefore an excellent way to track feature importance, which has already been highlighted above as a key requirement for explainability, especially in the demand response application context.
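For reference, the Shapley value of feature $i$ and the additive decomposition underlying SHAP can be written as below, where $F$ is the set of all features, $f_x(S)$ denotes the model output when only the features in $S$ are known (in practice estimated by averaging over background data), and $\phi_0 = f_x(\varnothing)$ is the base value. The notation is ours and is given only as an illustration of the standard definition.

$$
\phi_i = \sum_{S \subseteq F \setminus \{i\}} \frac{|S|!\,\bigl(|F|-|S|-1\bigr)!}{|F|!}\,\Bigl[f_x\bigl(S \cup \{i\}\bigr) - f_x(S)\Bigr],
\qquad
f(x) = \phi_0 + \sum_{i \in F} \phi_i
$$

The second identity states that the per-feature contributions add up exactly to the difference between the prediction and the base value, which is what makes SHAP values directly readable as a breakdown of a single forecast.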

Thus, whereas GP is a modeling approach in its own right, SHAP is not a model at all: it can be applied on top of any underlying model, and its purpose is to highlight feature importance. For this reason it is called model agnostic; it is equally applicable whether the model is a gradient boosting model, a neural network or anything else that takes features as input and produces forecasts as output.
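Model agnosticism can be seen in a brute-force sketch: the function below only ever calls the model as a black box on modified inputs. This is not the `shap` package, which relies on much faster approximations (e.g. `KernelExplainer`, `TreeExplainer`). As a simplifying assumption, features outside a coalition are set to a single hypothetical baseline instance rather than averaged over a background dataset. The toy forecaster and all numbers are hypothetical.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values for any black-box `model(list) -> float`.

    Features outside a coalition take their value from `baseline` (a simplifying
    assumption). Cost grows as 2^n model calls, which is why practical SHAP
    implementations approximate this sum.
    """
    n = len(x)

    def value(coalition):
        z = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return model(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        contribution = 0.0
        for size in range(n):
            # Shapley weight |S|! (|F| - |S| - 1)! / |F|!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                contribution += weight * (value(s | {i}) - value(s))
        phi.append(contribution)
    return phi

def toy_load_model(z):
    """Hypothetical forecaster: load grows with the heating gap and with the hour of day."""
    outdoor_temp, indoor_setpoint, hour = z
    return 5.0 + 0.8 * max(0.0, indoor_setpoint - outdoor_temp) + 0.1 * hour

x = [10.0, 22.0, 18.0]         # outdoor temperature (°C), indoor setpoint (°C), hour
baseline = [15.0, 20.0, 12.0]  # hypothetical reference instance
phi = shapley_values(toy_load_model, x, baseline)
print([round(p, 3) for p in phi])  # → [4.0, 1.6, 0.6]
```

Swapping `toy_load_model` for a gradient boosting model, a neural network or a GP expression requires no change to `shapley_values`, which is precisely the sense in which the method is model agnostic. The three values also sum to the difference between the forecast for `x` and the forecast for `baseline`.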

Interestingly, SHAP values have recently been employed in a counterfactual context (Albini et al., 2022), which makes them appropriate for our second explainability use case as well. The method proposed there generates counterfactual explanations that show how changing the value of a feature would affect the prediction of a machine learning model.
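The basic idea of a what-if query on an actionable feature can be illustrated with a deliberately simple search. This is not the SHAP-based method of the cited work: the sketch varies a single actionable feature over a grid and returns the value closest to the current one whose forecast meets a target. The toy forecaster, the feature names, the `ACTIONABLE` set, the target and the grid bounds are all hypothetical.

```python
# Hypothetical set of features the user can act upon (e.g. via the thermostat).
ACTIONABLE = {"indoor_setpoint"}

def toy_load_model(features):
    """Hypothetical forecaster (kWh), used only to illustrate the search."""
    gap = max(0.0, features["indoor_setpoint"] - features["outdoor_temp"])
    return 5.0 + 0.8 * gap + 0.1 * features["hour"]

def one_feature_counterfactual(model, x, feature, target, lower, upper, step=0.5):
    """Return (value, forecast) for the grid value of `feature` closest to its current
    value whose forecast is at or below `target`, or None if no grid value qualifies."""
    if feature not in ACTIONABLE:
        raise ValueError(f"{feature!r} is not actionable")
    n_steps = int(round((upper - lower) / step))
    grid = [lower + k * step for k in range(n_steps + 1)]
    # Try the smallest changes first, i.e. the least disruptive what-if scenario.
    grid.sort(key=lambda v: abs(v - x[feature]))
    for value in grid:
        forecast = model(dict(x, **{feature: value}))
        if forecast <= target:
            return value, forecast
    return None

x = {"outdoor_temp": 5.0, "indoor_setpoint": 22.0, "hour": 18}
result = one_feature_counterfactual(toy_load_model, x, "indoor_setpoint",
                                    target=18.5, lower=18.0, upper=24.0)
print(result[0], round(result[1], 2))  # → 19.5 18.4
```

Restricting the search to `ACTIONABLE` features reflects the interview finding that a counterfactual on, say, the weather cannot be turned into a direct user action, whereas one on the indoor setpoint can.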

Explainability may, however, come at the cost of accuracy. We therefore considered it important to benchmark the accuracy of GP against two mainstream modeling approaches in the forecasting literature. The first is structural time series (STS) models, a family of probabilistic time series models that includes and generalizes many standard time series modeling ideas, including autoregressive processes, moving averages, local linear trends, seasonality, and regression and variable selection on external covariates (other time series potentially related to the series of interest). The second is neural networks, in particular their long short-term memory (LSTM) variant, which is often used in the energy forecasting literature.
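To make the STS description more concrete, one common specification, combining a local linear trend $\mu_t$ with slope $\delta_t$, a seasonal component $\gamma_t$ of period $s$, and a regression on external covariates $\mathbf{x}_t$, can be written as below. The notation is ours and illustrates the model family only; it is not the exact configuration used in this work.

$$
\begin{aligned}
y_t &= \mu_t + \gamma_t + \boldsymbol{\beta}^{\top}\mathbf{x}_t + \varepsilon_t, & \varepsilon_t &\sim \mathcal{N}(0, \sigma_\varepsilon^2),\\
\mu_{t+1} &= \mu_t + \delta_t + \eta_t, & \eta_t &\sim \mathcal{N}(0, \sigma_\eta^2),\\
\delta_{t+1} &= \delta_t + \zeta_t, & \zeta_t &\sim \mathcal{N}(0, \sigma_\zeta^2),\\
\gamma_{t+1} &= -\sum_{j=0}^{s-2} \gamma_{t-j} + \omega_t, & \omega_t &\sim \mathcal{N}(0, \sigma_\omega^2).
\end{aligned}
$$

Each component enters additively, so individual components (trend, seasonality, covariate effects) can be inspected separately. The seasonal equation forces any $s$ consecutive seasonal effects to sum to zero up to noise.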

We have opted not to use hybrid models. Although they are often reported to offer increased accuracy, they would be a very poor choice in terms of explainability, since we would also need to understand the relative impact of each constituent model for the various space instances. This would be a daunting task even if SHAP were applied. Overall, without denying the accuracy benefits that hybrid approaches may bring, we consider them an inappropriate path when explainability considerations are deemed important, as they are in our use case.

Finally, since this investigation was prompted by the design needs of a demand response controller, two further issues were deemed important in addition to the GP- and SHAP-related explainability investigations. First, if a demand response controller is to support its users, running counterfactuals on actionable features appears to be the preferable and more valuable approach. Actionable features are those that the user of the controller can act upon; indoor temperature, for example, can be acted upon by changing the thermostat settings. Conversely, features such as weather, consumption history, day of the week and hour of the day, which dominate the forecasting literature, are inherently non-actionable. Second, how quickly a real-life controller can be deployed depends directly on the duration of the training period. Investigating a fast-training option for the forecasting algorithm would therefore be clearly beneficial, provided that performance is not unduly eroded.