Submitted: 22 July 2023

Posted: 26 July 2023


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Deep Learning-Based Segmentation Methods

2.2. Zero-Shot Learning in Segmentation

2.3. Combining Continuous Outputs

3. Methodology

3.1. DeepLabV3+ Architecture

3.2. Pyramid Vision Transformer Architecture

- we apply two different data augmentation strategies, defined in [19]: DA1, a base augmentation consisting of horizontal flip, vertical flip, and 90° rotation; DA2, a set of operations applied to the original images to derive new ones, including shadowing, color mapping, vertical or horizontal flipping, and others.

- we apply three different learning-rate strategies: a learning rate of 1e-4; a learning rate of 5e-4; and a learning rate of 5e-5 decaying to 5e-6 after 15 epochs.
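As a rough illustration of DA1 and of the three learning-rate strategies, the sketch below assumes that images are H×W×C NumPy arrays with H×W masks, that DA1 keeps the original pair and adds one flipped or rotated copy per operation, and that epochs are counted from 0. The function names and strategy labels (`da1_augment`, `learning_rate`, `"lr_decay"`, and so on) are hypothetical and do not come from the authors' code.

```python
import numpy as np


def da1_augment(image: np.ndarray, mask: np.ndarray) -> list:
    """Hypothetical DA1 sketch: original pair plus horizontal flip, vertical
    flip and 90-degree rotation (image is H x W x C, mask is H x W)."""
    pairs = [(image, mask)]
    # Horizontal flip: mirror along the width axis (axis 1).
    pairs.append((np.flip(image, axis=1), np.flip(mask, axis=1)))
    # Vertical flip: mirror along the height axis (axis 0).
    pairs.append((np.flip(image, axis=0), np.flip(mask, axis=0)))
    # 90-degree counter-clockwise rotation of the two spatial axes only,
    # so the channel axis of the image is left untouched.
    pairs.append((np.rot90(image, k=1, axes=(0, 1)), np.rot90(mask, k=1, axes=(0, 1))))
    return pairs


def learning_rate(strategy: str, epoch: int) -> float:
    """Learning rate for a 0-based epoch under the three (assumed) strategy labels."""
    if strategy == "lr_1e-4":
        return 1e-4
    if strategy == "lr_5e-4":
        return 5e-4
    if strategy == "lr_decay":
        # 5e-5 for epochs 0..14, then 5e-6 from epoch 15 onward.
        return 5e-5 if epoch < 15 else 5e-6
    raise ValueError(f"unknown strategy: {strategy}")
```

Applying the same geometric operation to the image and its mask keeps the pixel-level labels aligned, which is why each transform is applied to both arrays together.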

3.3. SAM Architecture

3.4. SEEM Architecture

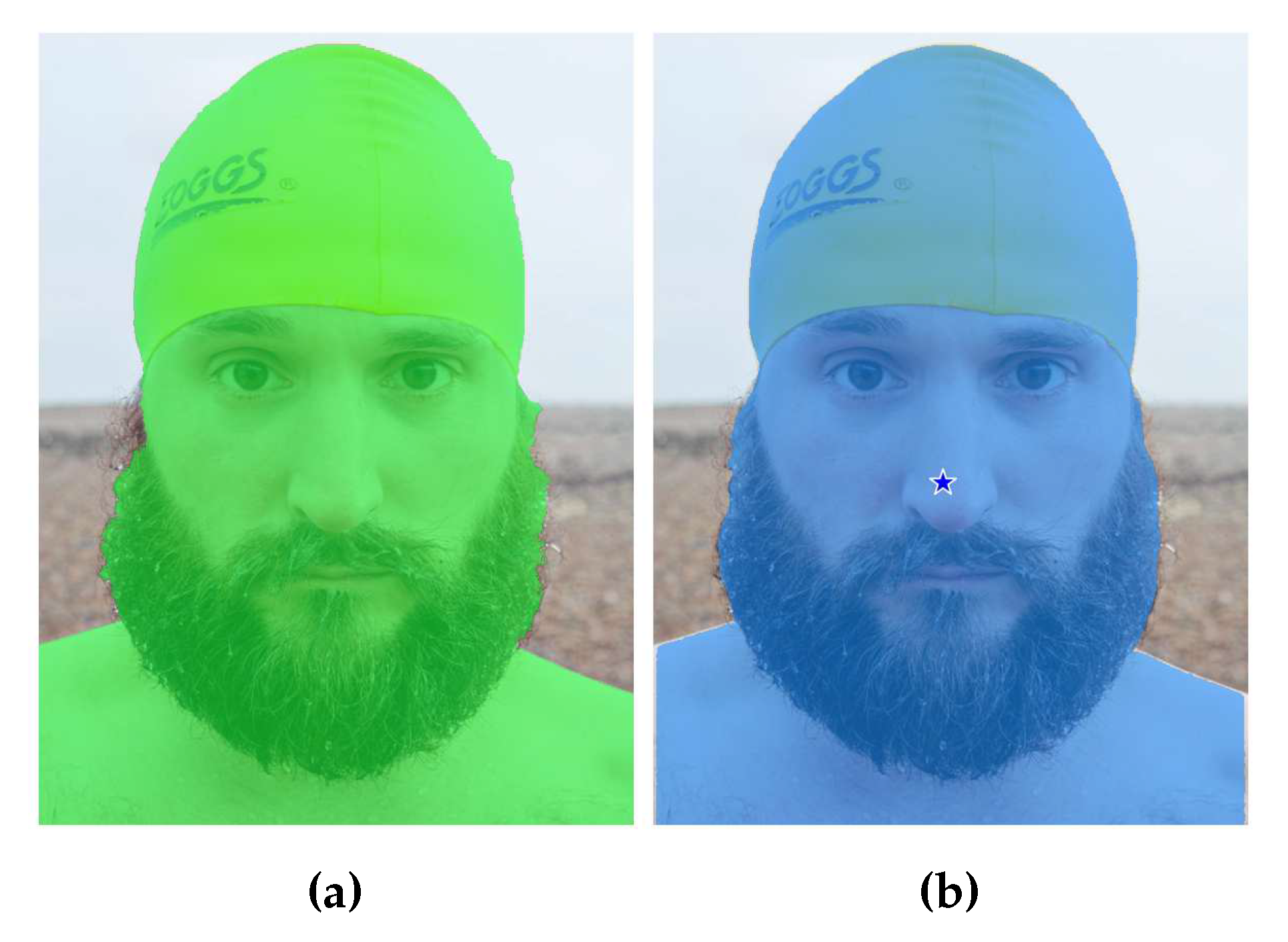

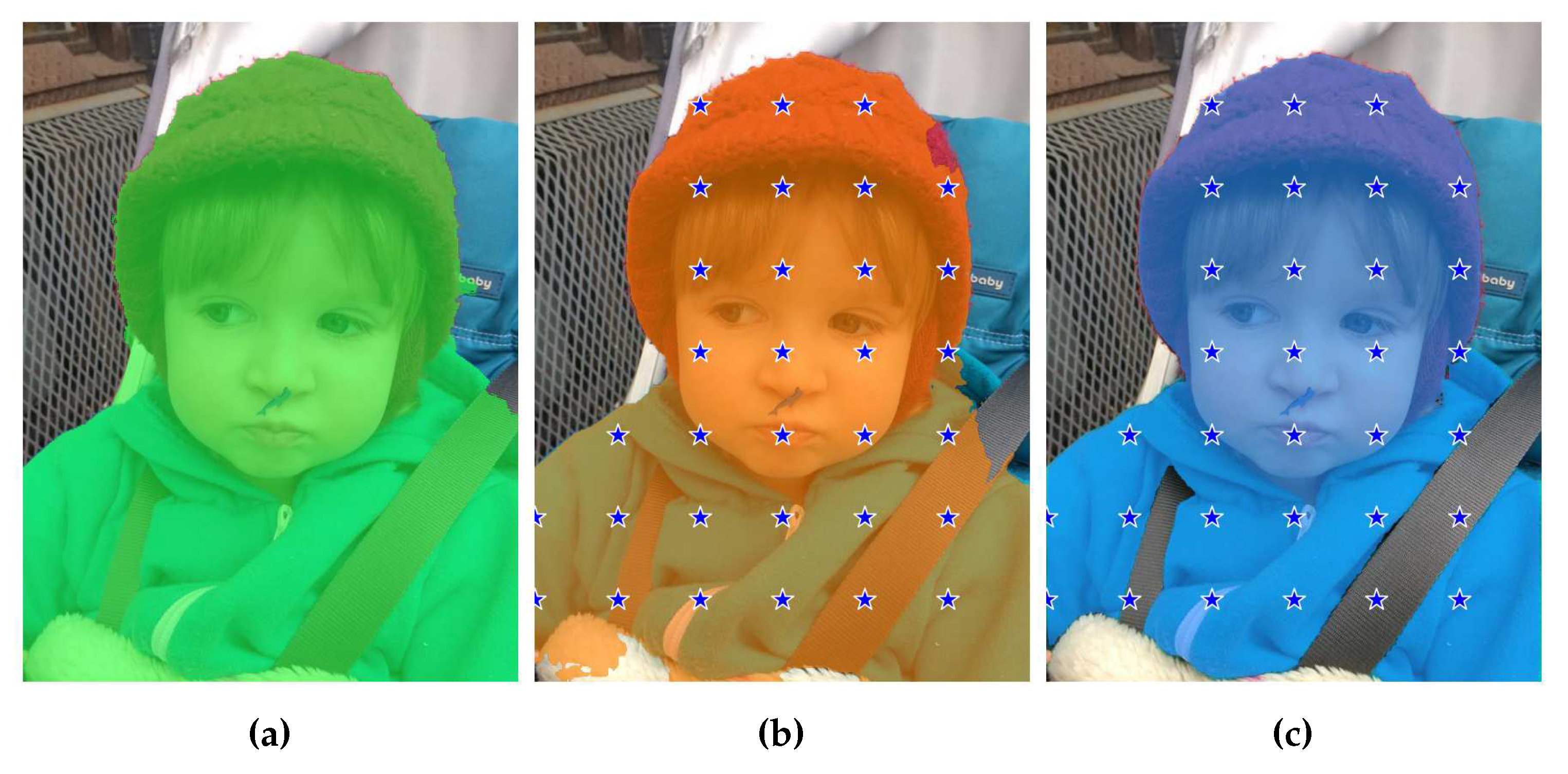

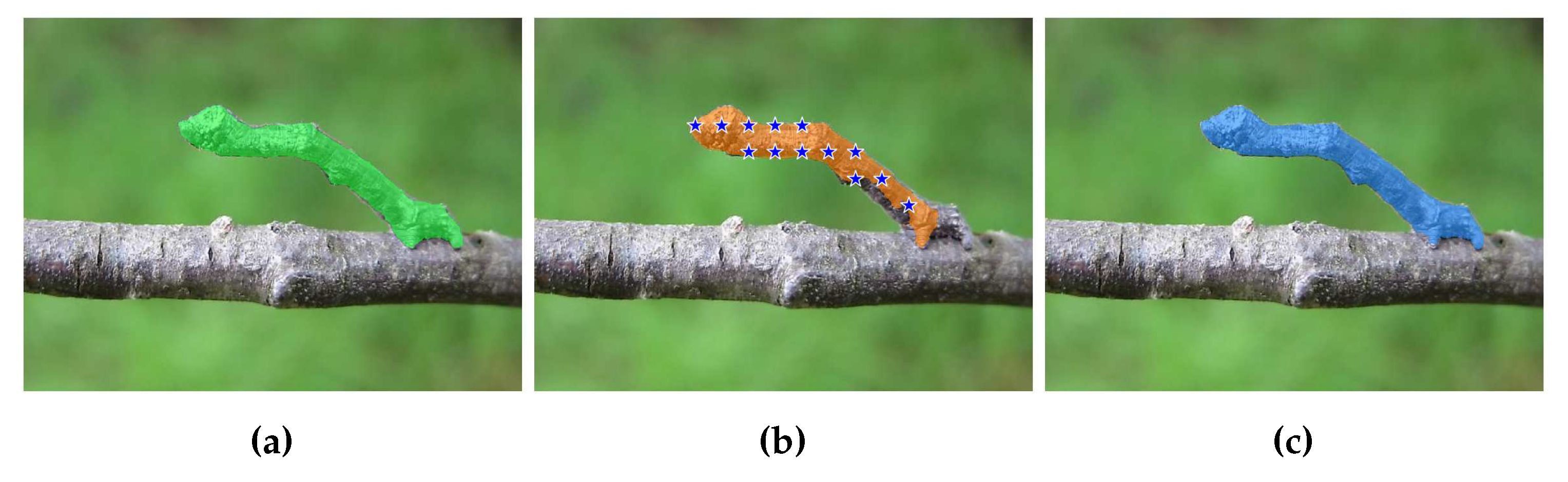

3.5. Checkpoints Engineering

- A

- selects the average coordinates of the blob as the checkpoint. While simple and straightforward, a drawback of this method is that checkpoints may occasionally fall outside the blob region.

- B

- determines the center of mass of the blob as the checkpoint. It is similar to Method A and is relatively simple, but we observed that the extracted checkpoints are less likely to lie outside the blob region.

- C

- randomly selects a point within the blob region as the checkpoint. The primary advantage of this method is its simplicity and efficiency. By randomly selecting a point within the blob, it allows for a diverse range of checkpoints to be generated.

- D

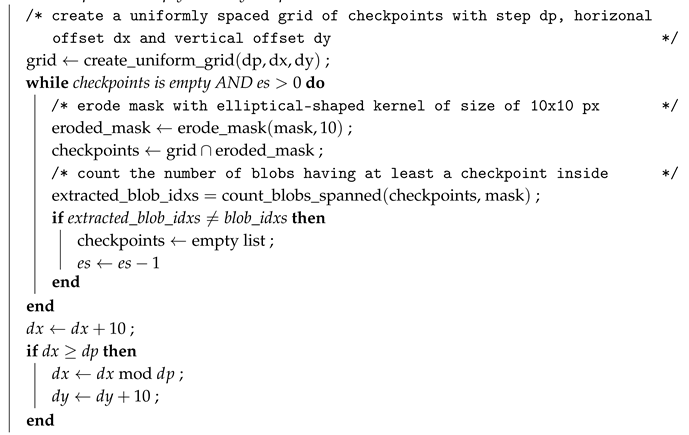

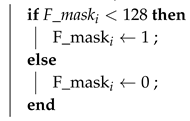

- enables the selection of multiple checkpoints within the blob region. Initially, a grid is created with uniform sampling steps of size b in both the x and y directions. Checkpoints are chosen from the grid if they fall within the blob region. We also applied a modified version of this method that considers eroded (smaller) masks. In Table 1, this modified version is referred to as "bm" (border mode). Erosion is a morphological image processing technique used to reduce the boundaries of objects in a segmentation mask. It works by applying a predefined kernel, in our case, an elliptical-shaped kernel with a size of 10x10 pixels, to the mask. The kernel slides through the image, and for each position, if all the pixels covered by the kernel are part of the object (i.e., white), the central pixel of the kernel is set to white in the output eroded mask. Otherwise, it is set to background (i.e., black). This process effectively erodes the boundaries of the objects in the mask, making them smaller and removing noise or irregularities around the edges. In certain cases, this method (both eroded or not) may fail to find any checkpoints inside certain blobs. To address this, we implemented a fallback strategy described in Algorithm 1, in which Method D is considered in the modified version. The objective of the fallback strategy is to shift the grid of checkpoints horizontally first and then vertically, continuing this process while no part of the grid overlaps with the segmentation mask.
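A minimal NumPy sketch of Methods C and D (without erosion) for a single binary blob mask is given below. It assumes that checkpoints are (x, y) pixel coordinates, that the grid of Method D starts at pixel (0, 0) unless an offset is given, and that `step` plays the role of the sampling step b; `random_checkpoint` and `grid_checkpoints` are hypothetical helper names, not the authors' code.

```python
import numpy as np


def random_checkpoint(blob: np.ndarray, rng: np.random.Generator) -> tuple:
    """Method C sketch: pick one foreground pixel of the blob uniformly at random.

    Returns the checkpoint as (x, y)."""
    ys, xs = np.nonzero(blob)
    if ys.size == 0:
        raise ValueError("empty blob")
    i = rng.integers(ys.size)
    return int(xs[i]), int(ys[i])


def grid_checkpoints(mask: np.ndarray, step: int, offset: tuple = (0, 0)) -> list:
    """Method D sketch: sample a regular grid with spacing `step` in x and y,
    starting at `offset` = (x0, y0), and keep the grid points inside the mask."""
    x0, y0 = offset
    # Grid coordinates: rows y0, y0+step, ...; columns x0, x0+step, ...
    ys, xs = np.mgrid[y0:mask.shape[0]:step, x0:mask.shape[1]:step]
    inside = mask[ys, xs].astype(bool)
    return [(int(x), int(y)) for x, y in zip(xs[inside], ys[inside])]
```

With a small step many grid points land inside the blob, while a large step may leave thin or small blobs without any checkpoint, which is the failure case the fallback strategy addresses.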

Algorithm 1: Method D with mask erosion and fallback strategy.

Input: mask // segmentation mask from which to sample the checkpoints

Input: dp // grid sampling step

Input: es // erosion size

Result: checkpoints // list to store the selected checkpoints

Initialize the offsets along the x and y directions

/* count the number of non-trivial blobs in mask */

while checkpoints is empty AND … do … end
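The following sketch shows one possible reading of Algorithm 1, not the authors' implementation. Assumptions: the elliptical kernel is approximated by a disc (OpenCV's `MORPH_ELLIPSE` kernel may differ slightly), pixels outside the image count as background during erosion, the offsets range over 0..dp-1 with horizontal shifts tried first and vertical shifts second, and the blob-counting step is omitted. `ellipse_kernel`, `erode`, `grid_checkpoints`, and `method_d_bm` are hypothetical names.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def ellipse_kernel(size: int) -> np.ndarray:
    """Disc-shaped boolean structuring element of size x size pixels."""
    c = (size - 1) / 2.0
    yy, xx = np.mgrid[0:size, 0:size]
    return ((yy - c) ** 2 + (xx - c) ** 2) <= (size / 2.0) ** 2


def erode(mask: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Binary erosion: a pixel stays foreground only if every kernel pixel
    around it is foreground; pixels outside the image count as background."""
    kh, kw = kernel.shape
    top, left = kh // 2, kw // 2  # anchor of the kernel
    padded = np.pad(mask.astype(bool), ((top, kh - 1 - top), (left, kw - 1 - left)))
    windows = sliding_window_view(padded, (kh, kw))  # shape (H, W, kh, kw)
    return np.all(windows[:, :, kernel], axis=-1)


def grid_checkpoints(mask: np.ndarray, step: int, dx: int, dy: int) -> list:
    """Grid points (x, y) with spacing `step`, shifted by (dx, dy), inside mask."""
    ys, xs = np.mgrid[dy:mask.shape[0]:step, dx:mask.shape[1]:step]
    inside = mask[ys, xs].astype(bool)
    return [(int(x), int(y)) for x, y in zip(xs[inside], ys[inside])]


def method_d_bm(mask: np.ndarray, dp: int, es: int = 10) -> list:
    """Method D on the eroded mask, with the shift-the-grid fallback."""
    eroded = erode(mask, ellipse_kernel(es))
    for dy in range(dp):        # then shift the grid vertically
        for dx in range(dp):    # shift the grid horizontally first
            checkpoints = grid_checkpoints(eroded, dp, dx, dy)
            if checkpoints:
                return checkpoints
    return []  # eroded mask is empty: no checkpoint can be found
```

Because the grid repeats every dp pixels, shifts of dp or more would reproduce grids already tried, so bounding the offsets at dp is a natural stopping point; the sketch returns an empty list only when erosion leaves no foreground pixel.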

4. Experimental Setup

Datasets

Performance Metrics

Baseline Extraction

Implementation Details

Refinement Step Description

- Combining Complementary Information: The SAM model and the DeepLabV3+ model have different strengths and weaknesses in capturing certain object details or handling specific image characteristics. By combining their logits through the weighted rule, we can leverage the complementary information captured by each model. This can yield a more complete representation of object boundaries and semantic regions and, in turn, a more accurate final segmentation.

- Noise Reduction and Consensus: The weighted-rule approach helps reduce the impact of noise or uncertainty in individual model predictions. When the logits are combined, noise or errors in one model’s prediction may be offset or diminished by the other model’s more accurate predictions. This consensus-based aggregation can effectively filter out noisy predictions.

- Addressing Model Biases: Different models can exhibit biases or tendencies in their predictions due to architectural differences, biases in the training data, or inherent limitations. The refinement step combines the predictions of multiple models, mitigating the impact of each individual model’s biases and enhancing the overall robustness of the segmentation.

- Enhanced Object Boundary Localization: Applying the weighted rule to the logits can help improve the localization and delineation of object boundaries. Because the logits of both models contribute to the final segmentation, the refinement step tends to emphasize areas of high consensus, resulting in sharper and more accurate object boundaries. This can be particularly beneficial when an individual model struggles with precise boundary detection.

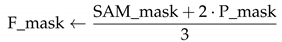

Algorithm 2: Combining continuous outputs of SAM and a segmentator model.

Input: SAM_mask // logits-based segmentation mask produced by SAM

Input: P_mask // logits-based segmentation mask produced by a segmentator model

Result: F_mask // binary segmentation mask produced by the fusion procedure

Load SAM_mask, convert it to single precision, and normalize it

Apply the absolute value

Convert to uint8 precision

Apply fusion

Binarize

end
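Algorithm 2's exact normalization, uint8 conversion, and fusion weights are not recoverable from the listing, so the sketch below swaps in a simple weighted rule for illustration: both logit maps are squashed to [0, 1] with a sigmoid, averaged with a weight `w_sam` on SAM and `1 - w_sam` on the segmentator, and thresholded. The weight, the threshold, and the function names are assumptions, not the values or code used in the paper.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    """Map logits to [0, 1]."""
    return 1.0 / (1.0 + np.exp(-x))


def weighted_rule_fusion(sam_logits, seg_logits, w_sam: float = 0.5,
                         threshold: float = 0.5) -> np.ndarray:
    """Fuse two per-pixel logit maps with a weighted rule and binarize.

    Returns a boolean mask that is True where the weighted average of the
    two sigmoid scores exceeds `threshold`."""
    sam = sigmoid(np.asarray(sam_logits, dtype=np.float32))
    seg = sigmoid(np.asarray(seg_logits, dtype=np.float32))
    fused = w_sam * sam + (1.0 - w_sam) * seg  # weighted rule
    return fused > threshold                    # binarize
```

Where one model is uncertain (score near 0.5), the other model's more confident score dominates the average, which is the consensus effect described in the refinement-step bullets.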

5. Results

6. Discussion

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Chen, L.C.; Zhu, Y.; Papandreou, G.; Schroff, F.; Adam, H. Encoder-Decoder with Atrous Separable Convolution for Semantic Image Segmentation. In Proceedings of the Computer Vision – ECCV 2018; Ferrari, V.; Hebert, M.; Sminchisescu, C.; Weiss, Y., Eds.; Springer International Publishing: Cham, 2018; pp. 833–851.

- Kirillov, A.; Mintun, E.; Ravi, N.; Mao, H.; Rolland, C.; Gustafson, L.; Xiao, T.; Whitehead, S.; Berg, A.C.; Lo, W.Y.; et al. Segment Anything. arXiv 2023, arXiv:2304.02643.

- Zou, X.; Yang, J.; Zhang, H.; Li, F.; Li, L.; Gao, J.; Lee, Y.J. Segment Everything Everywhere All at Once. arXiv 2023, arXiv:2304.06718.

- Wang, W.; Xie, E.; Li, X.; Fan, D.P.; Song, K.; Liang, D.; Lu, T.; Luo, P.; Shao, L. PVT v2: Improved baselines with Pyramid Vision Transformer. Computational Visual Media 2022, 8, 415–424.

- Le, T.N.; Nguyen, T.V.; Nie, Z.; Tran, M.T.; Sugimoto, A. Anabranch Network for Camouflaged Object Segmentation. Computer Vision and Image Understanding 2019, 184, 45–56.

- Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Proceedings of the Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015; Navab, N.; Hornegger, J.; Wells, W.M.; Frangi, A.F., Eds.; Springer International Publishing: Cham, 2015; pp. 234–241.

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation. IEEE Transactions on Pattern Analysis and Machine Intelligence 2017, 39, 2481–2495.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. In Proceedings of the International Conference on Learning Representations; 2021.

- Ke, L.; Ye, M.; Danelljan, M.; Liu, Y.; Tai, Y.W.; Tang, C.K.; Yu, F. Segment Anything in High Quality. arXiv 2023, arXiv:2306.01567.

- Wu, J.; Fu, R.; Fang, H.; Liu, Y.; Wang, Z.; Xu, Y.; Jin, Y.; Arbel, T. Medical SAM Adapter: Adapting Segment Anything Model for Medical Image Segmentation. arXiv 2023, arXiv:2304.12620.

- Cheng, D.; Qin, Z.; Jiang, Z.; Zhang, S.; Lao, Q.; Li, K. SAM on Medical Images: A Comprehensive Study on Three Prompt Modes. arXiv 2023, arXiv:2305.00035.

- Hu, C.; Xia, T.; Ju, S.; Li, X. When SAM Meets Medical Images: An Investigation of Segment Anything Model (SAM) on Multi-phase Liver Tumor Segmentation. arXiv 2023, arXiv:2304.08506.

- Zhang, Y.; Zhou, T.; Wang, S.; Liang, P.; Chen, D.Z. Input Augmentation with SAM: Boosting Medical Image Segmentation with Segmentation Foundation Model. arXiv 2023, arXiv:2304.11332.

- Shaharabany, T.; Dahan, A.; Giryes, R.; Wolf, L. AutoSAM: Adapting SAM to Medical Images by Overloading the Prompt Encoder. arXiv 2023, arXiv:2306.06370.

- Kuncheva, L.I. Diversity in multiple classifier systems. Information Fusion 2005, 6, 3–4, Diversity in Multiple Classifier Systems.

- Kittler, J. Combining classifiers: A theoretical framework. Pattern Analysis and Applications 1998, 1, 18–27.

- Nanni, L.; Brahnam, S.; Lumini, A. Ensemble of Deep Learning Approaches for ATC Classification. In Proceedings of the Smart Intelligent Computing and Applications; Satapathy, S.C.; Bhateja, V.; Mohanty, J.R.; Udgata, S.K., Eds.; Springer Singapore: Singapore, 2020; pp. 117–125.

- Melotti, G.; Premebida, C.; Goncalves, N.M.M.d.S.; Nunes, U.J.C.; Faria, D.R. Multimodal CNN Pedestrian Classification: A Study on Combining LIDAR and Camera Data. In Proceedings of the 2018 21st International Conference on Intelligent Transportation Systems (ITSC); 2018; pp. 3138–3143.

- Nanni, L.; Lumini, A.; Loreggia, A.; Formaggio, A.; Cuza, D. An Empirical Study on Ensemble of Segmentation Approaches. Signals 2022, 3, 341–358.

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common Objects in Context. In Proceedings of the 13th European Conference on Computer Vision (ECCV 2014); Springer, 2014; pp. 740–755.

- Kim, Y.W.; Byun, Y.C.; Krishna, A.V.N. Portrait Segmentation Using Ensemble of Heterogeneous Deep-Learning Models. Entropy 2021, 23.

- Liu, L.; Liu, M.; Meng, K.; Yang, L.; Zhao, M.; Mei, S. Camouflaged locust segmentation based on PraNet. Computers and Electronics in Agriculture 2022, 198, 107061.

- Nguyen, H.C.; Le, T.T.; Pham, H.H.; Nguyen, H.Q. VinDr-RibCXR: A benchmark dataset for automatic segmentation and labeling of individual ribs on chest X-rays. In Proceedings of the 2021 International Conference on Medical Imaging with Deep Learning (MIDL 2021); 2021.

- Rahman, M.A.; Wang, Y. Optimizing Intersection-Over-Union in Deep Neural Networks for Image Segmentation. In Proceedings of the International Symposium on Visual Computing (ISVC 2016); Springer, 2016; pp. 234–244.

- Sudre, C.H.; Li, W.; Vercauteren, T.; Ourselin, S.; Cardoso, J.M. Generalised Dice Overlap as a Deep Learning Loss Function for Highly Unbalanced Segmentations. In Proceedings of the Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support: Third International Workshop, DLMIA 2017, and 7th International Workshop, ML-CDS 2017, Held in Conjunction with MICCAI 2017; Springer, 2017; pp. 240–248.

- Perazzi, F.; Krähenbühl, P.; Pritch, Y.; Sorkine-Hornung, A. Saliency filters: Contrast based filtering for salient region detection. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition; 2012.

- Margolin, R.; Zelnik-Manor, L.; Tal, A. How to Evaluate Foreground Maps. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition; 2014; pp. 248–255.

- Fan, D.P.; Cheng, M.M.; Liu, Y.; Li, T.; Borji, A. Structure-measure: A New Way to Evaluate Foreground Maps. In Proceedings of the IEEE International Conference on Computer Vision; 2017.

- Everingham, M.; Van Gool, L.; Williams, C.K.; Winn, J.; Zisserman, A. The PASCAL Visual Object Classes (VOC) Challenge. International Journal of Computer Vision 2010, 88, 303–338.

- Liu, W.; Shen, X.; Pun, C.M.; Cun, X. Explicit Visual Prompting for Universal Foreground Segmentations. arXiv 2023, arXiv:2305.1847.

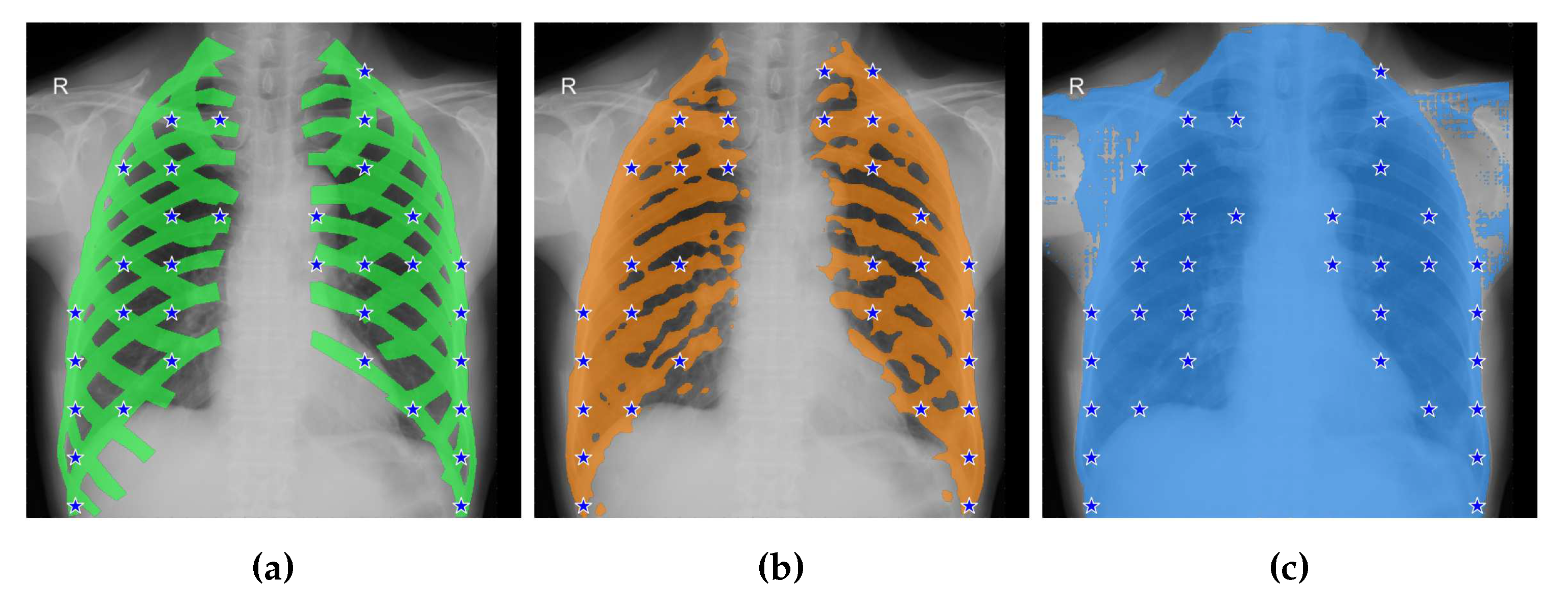

| Baseline | CAMO IoU | CAMO Dice | Portrait IoU | Portrait Dice | Locust-mini IoU | Locust-mini Dice | VinDr-RibCXR IoU | VinDr-RibCXR Dice |
|---|---|---|---|---|---|---|---|---|
| DLV3+ | 60.63 | 71.75 | 97.01 | 98.46 | 74.34 | 83.01 | 63.48 | 77.57 |
| PVTv2 | 71.75 | 81.07 | - | - | - | - | - | - |

| Model | Method | CAMO oracle IoU | CAMO oracle Dice | CAMO DLV3+ IoU | CAMO DLV3+ Dice | CAMO PVTv2 IoU | CAMO PVTv2 Dice | Portrait oracle IoU | Portrait oracle Dice | Portrait DLV3+ IoU | Portrait DLV3+ Dice | Locust-mini oracle IoU | Locust-mini oracle Dice | Locust-mini DLV3+ IoU | Locust-mini DLV3+ Dice | VinDr-RibCXR oracle IoU | VinDr-RibCXR oracle Dice | VinDr-RibCXR DLV3+ IoU | VinDr-RibCXR DLV3+ Dice |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| SAM - ViT-L | A | 48.88 | 57.63 | 48.57 | 58.34 | 49.19 | 58.81 | 79.72 | 84.96 | 78.23 | 83.68 | 40.58 | 50.68 | 37.05 | 46.99 | 27.46 | 42.54 | 27.23 | 42.49 |
| | B | 48.98 | 57.69 | 48.93 | 58.78 | 49.12 | 58.62 | 80.13 | 85.38 | 78.54 | 83.91 | 39.47 | 49.43 | 37.77 | 47.68 | 27.15 | 42.18 | 27.23 | 42.49 |
| | C | 47.94 | 56.06 | 47.94 | 57.64 | 45.73 | 55.35 | 79.71 | 84.10 | 79.65 | 83.28 | 34.74 | 44.61 | 33.74 | 43.39 | 25.36 | 40.26 | 27.30 | 42.58 |
| | D 10 | 46.51 | 58.96 | 45.65 | 57.67 | 44.79 | 56.77 | 22.39 | 31.29 | 23.14 | 31.98 | 36.86 | 49.41 | 32.54 | 44.54 | 26.53 | 41.70 | 26.54 | 41.71 |
| | D 30 | 66.01 | 76.32 | 60.19 | 70.37 | 61.37 | 71.40 | 93.08 | 96.28 | 92.76 | 96.09 | 61.27 | 72.06 | 53.87 | 64.90 | 26.80 | 42.08 | 26.99 | 42.33 |
| | D 50 | 67.26 | 76.54 | 60.23 | 69.89 | 62.36 | 71.70 | 95.84 | 97.85 | 95.65 | 97.74 | 63.88 | 74.13 | 57.70 | 67.54 | 28.06 | 43.52 | 27.75 | 43.23 |
| | D 100 | 62.56 | 71.13 | 53.62 | 62.27 | 57.81 | 66.45 | 96.55 | 98.23 | 96.46 | 98.18 | 52.88 | 62.26 | 50.01 | 58.46 | 27.44 | 42.82 | 27.76 | 43.14 |
| | D 10 bm | 52.05 | 64.15 | 47.62 | 59.38 | 47.30 | 59.02 | 23.63 | 32.74 | 24.35 | 33.40 | 43.35 | 55.84 | 36.11 | 48.20 | 27.17 | 42.54 | 26.54 | 41.70 |
| | D 30 bm | 68.25 | 77.88 | 60.76 | 70.66 | 62.27 | 72.08 | 93.20 | 96.35 | 92.92 | 96.19 | 65.09 | 75.67 | 55.68 | 66.67 | 28.36 | 43.94 | 26.98 | 42.32 |
| | D 50 bm | 67.48 | 76.52 | 60.89 | 70.47 | 62.29 | 71.59 | 95.88 | 97.87 | 95.69 | 97.76 | 64.26 | 74.34 | 58.24 | 68.07 | 28.03 | 43.43 | 27.84 | 43.36 |
| | D 100 bm | 61.13 | 69.74 | 53.44 | 61.95 | 57.28 | 66.06 | 96.57 | 98.23 | 96.48 | 98.18 | 53.16 | 62.48 | 50.23 | 58.59 | 26.70 | 41.95 | 27.76 | 43.14 |
| SAM - ViT-H | A | 51.96 | 59.89 | 49.79 | 58.78 | 50.76 | 59.23 | 76.60 | 81.69 | 75.36 | 80.27 | 40.16 | 50.34 | 36.42 | 46.32 | 26.40 | 41.41 | 25.99 | 40.94 |
| | B | 51.98 | 59.97 | 50.60 | 59.50 | 50.40 | 58.93 | 76.18 | 81.30 | 76.05 | 80.93 | 40.37 | 50.47 | 36.89 | 46.63 | 26.40 | 41.41 | 25.98 | 40.93 |
| | C | 50.20 | 58.30 | 49.60 | 58.35 | 50.31 | 59.09 | 70.77 | 76.56 | 70.51 | 75.45 | 36.33 | 45.68 | 35.77 | 45.42 | 25.43 | 40.27 | 25.51 | 40.39 |
| | D 10 | 68.67 | 78.72 | 60.49 | 71.54 | 63.00 | 73.76 | 91.35 | 95.25 | 91.21 | 95.16 | 47.17 | 59.87 | 39.59 | 51.94 | 27.87 | 43.32 | 28.03 | 43.50 |
| | D 30 | 77.39 | 85.28 | 65.90 | 75.58 | 68.69 | 77.74 | 95.01 | 97.41 | 94.79 | 97.28 | 68.47 | 78.38 | 63.42 | 72.69 | 30.71 | 46.70 | 30.67 | 46.70 |
| | D 50 | 76.21 | 83.92 | 63.69 | 72.82 | 68.24 | 76.87 | 95.82 | 97.84 | 95.65 | 97.75 | 70.21 | 80.00 | 66.21 | 75.67 | 32.18 | 47.97 | 31.85 | 47.78 |
| | D 100 | 67.53 | 75.35 | 55.89 | 64.06 | 60.73 | 68.71 | 95.90 | 97.87 | 95.82 | 97.82 | 53.50 | 62.72 | 50.52 | 59.00 | 26.45 | 41.54 | 26.10 | 41.11 |
| | D 10 bm | 71.73 | 81.40 | 61.41 | 72.28 | 63.79 | 74.33 | 91.45 | 95.31 | 91.29 | 95.21 | 52.76 | 65.02 | 43.85 | 55.76 | 29.76 | 45.64 | 28.14 | 43.65 |
| | D 30 bm | 77.27 | 85.45 | 65.58 | 75.31 | 68.31 | 77.43 | 95.05 | 97.43 | 94.83 | 97.30 | 69.73 | 79.55 | 63.69 | 73.02 | 32.97 | 48.97 | 30.66 | 46.69 |
| | D 50 bm | 74.27 | 82.33 | 63.05 | 72.31 | 67.68 | 76.42 | 95.83 | 97.85 | 95.69 | 97.77 | 68.65 | 78.51 | 65.95 | 75.47 | 29.44 | 44.71 | 31.72 | 47.61 |
| | D 100 bm | 65.74 | 73.94 | 55.50 | 63.51 | 60.52 | 68.54 | 95.89 | 97.86 | 95.81 | 97.81 | 53.62 | 63.02 | 50.02 | 58.60 | 26.23 | 41.25 | 26.10 | 41.11 |
| SEEM | A | 48.24 | 55.46 | 38.58 | 44.58 | 38.12 | 44.52 | 93.52 | 95.73 | 92.94 | 95.23 | 39.20 | 47.81 | 35.87 | 43.31 | 32.13 | 48.42 | 32.12 | 48.41 |
| | B | 48.24 | 55.46 | 38.37 | 44.38 | 37.82 | 44.21 | 93.52 | 95.73 | 92.94 | 95.23 | 39.20 | 47.81 | 35.87 | 43.31 | 32.13 | 48.42 | 32.12 | 48.41 |
| | C | 44.64 | 51.09 | 41.65 | 47.76 | 33.97 | 39.67 | 92.31 | 94.45 | 89.84 | 92.14 | 32.98 | 40.61 | 26.25 | 32.73 | 31.58 | 47.78 | 31.90 | 48.18 |
| | D 10 | 57.77 | 65.56 | 53.82 | 61.56 | 45.39 | 52.46 | 95.90 | 97.88 | 95.87 | 97.86 | 63.93 | 72.13 | 58.21 | 65.93 | 32.15 | 48.46 | 32.05 | 48.35 |
| | D 30 | 57.18 | 64.76 | 53.37 | 61.08 | 52.74 | 60.16 | 95.89 | 97.87 | 95.86 | 97.86 | 61.97 | 69.96 | 59.54 | 67.14 | 32.13 | 48.43 | 32.12 | 48.42 |
| | D 50 | 55.09 | 62.23 | 51.64 | 58.74 | 50.91 | 58.10 | 95.85 | 97.85 | 95.84 | 97.84 | 59.30 | 67.12 | 58.35 | 65.94 | 31.98 | 48.26 | 32.05 | 48.33 |
| | D 100 | 51.92 | 58.68 | 49.14 | 55.89 | 50.38 | 57.16 | 95.80 | 97.83 | 95.80 | 97.82 | 47.42 | 55.07 | 43.45 | 50.74 | 31.79 | 48.03 | 31.83 | 48.08 |
| | D 10 bm | 58.89 | 66.79 | 54.26 | 62.11 | 52.97 | 60.75 | 95.92 | 97.89 | 95.91 | 97.88 | 62.39 | 70.80 | 57.33 | 65.59 | 32.17 | 48.48 | 32.12 | 48.42 |
| | D 30 bm | 57.57 | 65.25 | 53.27 | 61.03 | 52.87 | 60.29 | 95.92 | 97.89 | 95.91 | 97.88 | 60.79 | 68.74 | 58.10 | 65.68 | 32.07 | 48.35 | 32.11 | 48.42 |
| | D 50 bm | 55.11 | 62.38 | 51.62 | 58.65 | 51.55 | 58.77 | 95.89 | 97.87 | 95.89 | 97.87 | 58.16 | 66.20 | 57.05 | 64.71 | 31.94 | 48.19 | 32.05 | 48.33 |
| | D 100 bm | 51.78 | 58.62 | 48.83 | 55.53 | 49.86 | 56.67 | 95.81 | 97.83 | 95.79 | 97.82 | 47.67 | 55.43 | 43.82 | 51.14 | 31.71 | 47.94 | 31.82 | 48.06 |
| FUSION | D 30 | - | - | 64.23 | 74.83 | 73.57 | 82.22 | - | - | 97.16 | 98.54 | - | - | 75.43 | 83.74 | - | - | 61.28 | 75.90 |
| | D 30 bm | - | - | 64.02 | 74.71 | 73.31 | 81.99 | - | - | 97.16 | 98.54 | - | - | 75.41 | 83.74 | - | - | 61.28 | 75.89 |
| | D 50 | - | - | 63.72 | 74.19 | 73.31 | 81.88 | - | - | 97.18 | 98.55 | - | - | 75.36 | 83.77 | - | - | 60.87 | 75.59 |
| | D 50 bm | - | - | 63.66 | 74.13 | 73.14 | 81.77 | - | - | 97.18 | 98.55 | - | - | 75.38 | 83.78 | - | - | 60.85 | 75.58 |

| Method | IoU ↑ | Dice ↑ | MAE ↓ | F-score ↑ | E-measure ↑ |
|---|---|---|---|---|---|
| DLV3+ | 60.63 | 71.75 | 8.39 | 75.57 | 83.04 |
| PVTv2 | 71.75 | 81.07 | 5.74 | 82.46 | 89.96 |
| EVPv2 (current SOTA) | - | - | 5.80 | 78.60 | 89.90 |
| SAM ViT-H D-50 DLV3+ | 63.69 | 72.82 | 12.51 | 73.86 | 79.71 |
| SAM ViT-H D-50 DLV3+ fusion | 65.00 | 75.42 | 7.51 | 79.17 | 85.41 |
| SAM ViT-H D-50 PVTv2 | 68.24 | 76.87 | 10.74 | 77.37 | 83.05 |
| SAM ViT-H D-50 PVTv2 fusion | 73.31 | 81.88 | 5.60 | 83.32 | 90.00 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).