Submitted: 01 June 2023

Posted: 02 June 2023

Abstract

Keywords:

1. Introduction

1.1. Paper Organization

- Section 2, Related Work, presents literature reviews with similar objectives to ours, but with different focus areas.

- Section 3, Research Questions, presents the research questions guiding this review.

- Section 4, Methodology, presents the reviewing process used to develop a potential mapping scheme for the pHRI field and human factors classification.

- Section 5, Results, presents the classification results, which provide answers to the research questions formulated and identify trends and gaps in the literature.

- Section 6, Discussion, proposes suggestions for filling the identified literature gaps.

- Section 7, Limitations of the Review, addresses the validity and limitations of this review.

- Section 8, Conclusions, provides a summary of the key findings and their implications for future research in pHRI.

2. Related Work

3. Research Questions

- RQ1: What are the existing applications or contexts in the literature, and how can they be categorized?

- RQ2: What are the most commonly studied human factors that can be used to develop quantitative measures of the human state?

- RQ3: What are the quantification approaches employed to evaluate human factors?

4. Methodology

- Justifying the need for conducting a literature review, as described in Section 1, Introduction.

- Defining the research questions, as explained in Section 3, Research Questions.

- Determining a search strategy, assessing its comprehensiveness, establishing selection criteria, and conducting a quality assessment. These steps are detailed in this section.

- Extracting data from the identified studies, organizing and categorizing the information obtained, and visualizing the results. These steps are detailed in Section 5, Results.

- Addressing the limitations of the review by conducting a validity assessment, as described in Section 7, Limitations of the Review.

4.1. Search Strategy

4.2. Study Selection and Quality Assessment

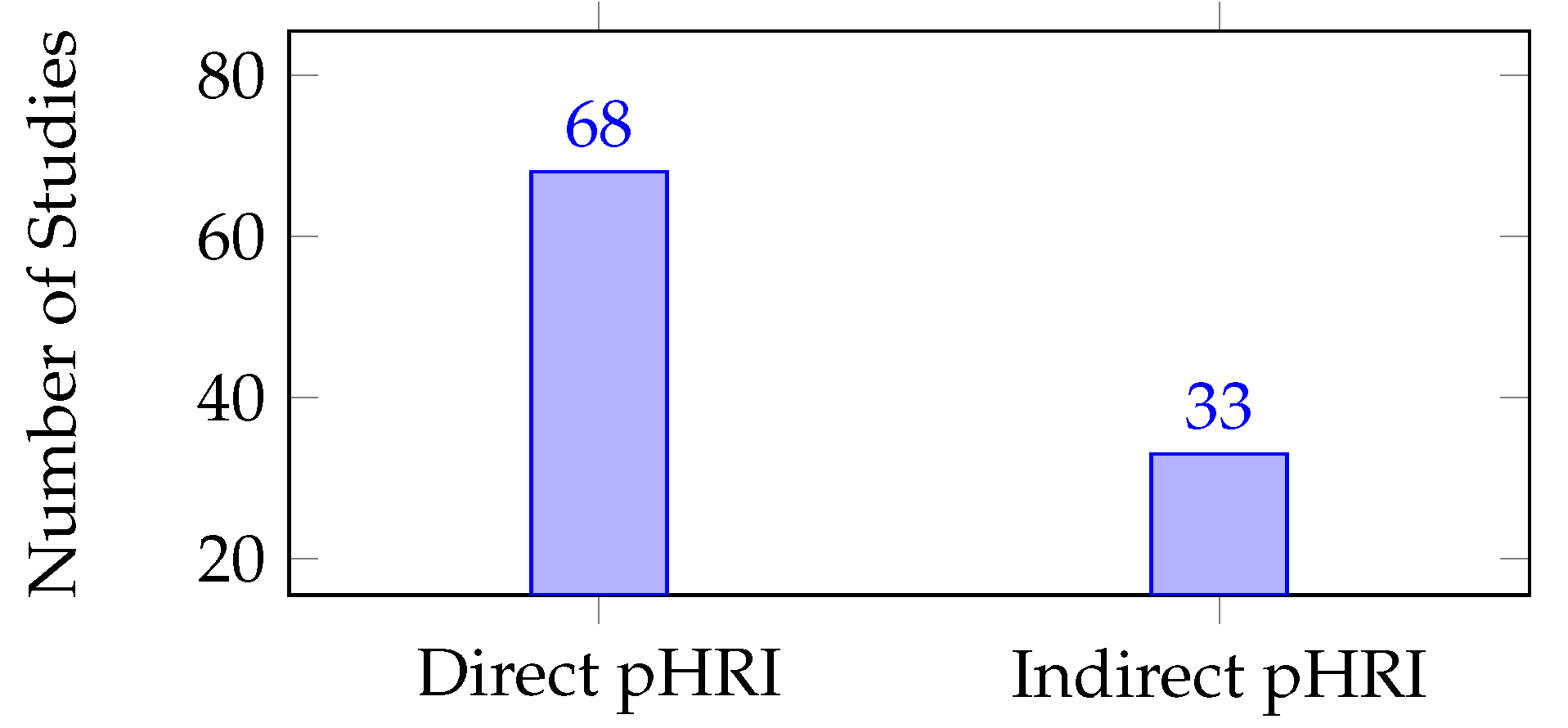

- Initial screening: Abstracts were checked against the inclusion criteria, and the full text was read whenever the abstract left eligibility in doubt. This step retained 128 papers.

- Full-text assessment: Quality assessment was conducted during this phase to ensure that each paper included information relevant to the research questions. Exclusion criteria were applied, resulting in 99 papers being included in the study.

- Physical coupling between the human and the robot takes place, through either direct or indirect pHRI.

- The robot has manipulation capabilities (i.e., one or more multi-degree-of-freedom arms).

- Human factors are evaluated during physical interaction.

- Human factor evaluations of individuals with mental disorders were excluded [40], as experimental verification is required to generalize the factors of these special populations to the neurotypical population.

- Unclear identification of physical coupling between agents within a study.

- Studies that are not in English [45].

- Inaccessible full text.
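The selection criteria listed above can be read as a two-stage filter. The following is a minimal sketch of that reading, not the tooling the authors used: the `Paper` record, its field names, and the helper functions are hypothetical and exist only to make the criteria explicit.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Paper:
    """Hypothetical screening record; all field names are illustrative."""
    title: str
    physical_coupling: bool            # direct or indirect pHRI takes place
    coupling_clearly_identified: bool  # coupling between agents is unambiguous
    robot_has_arm: bool                # multi-degree-of-freedom manipulator
    human_factors_evaluated: bool      # evaluated during physical interaction
    mental_disorder_population: bool   # participants with mental disorders
    in_english: bool
    full_text_accessible: bool


def meets_inclusion(p: Paper) -> bool:
    """All three inclusion criteria must hold."""
    return (p.physical_coupling
            and p.robot_has_arm
            and p.human_factors_evaluated)


def exclusion_reasons(p: Paper) -> List[str]:
    """Return every exclusion criterion the paper triggers (empty if none)."""
    reasons = []
    if p.mental_disorder_population:
        reasons.append("population with mental disorders")
    if not p.coupling_clearly_identified:
        reasons.append("unclear physical coupling")
    if not p.in_english:
        reasons.append("not in English")
    if not p.full_text_accessible:
        reasons.append("full text inaccessible")
    return reasons


def screen(papers: List[Paper]) -> List[Paper]:
    """Keep papers that meet all inclusion and no exclusion criteria."""
    return [p for p in papers
            if meets_inclusion(p) and not exclusion_reasons(p)]
```

Returning the list of triggered exclusion reasons, rather than a single boolean, keeps a record of why each paper was dropped, which is useful when reporting the screening flow.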

5. Results

5.1. Mapping Scheme for pHRI

5.1.1. Direct pHRI

5.1.2. Indirect pHRI

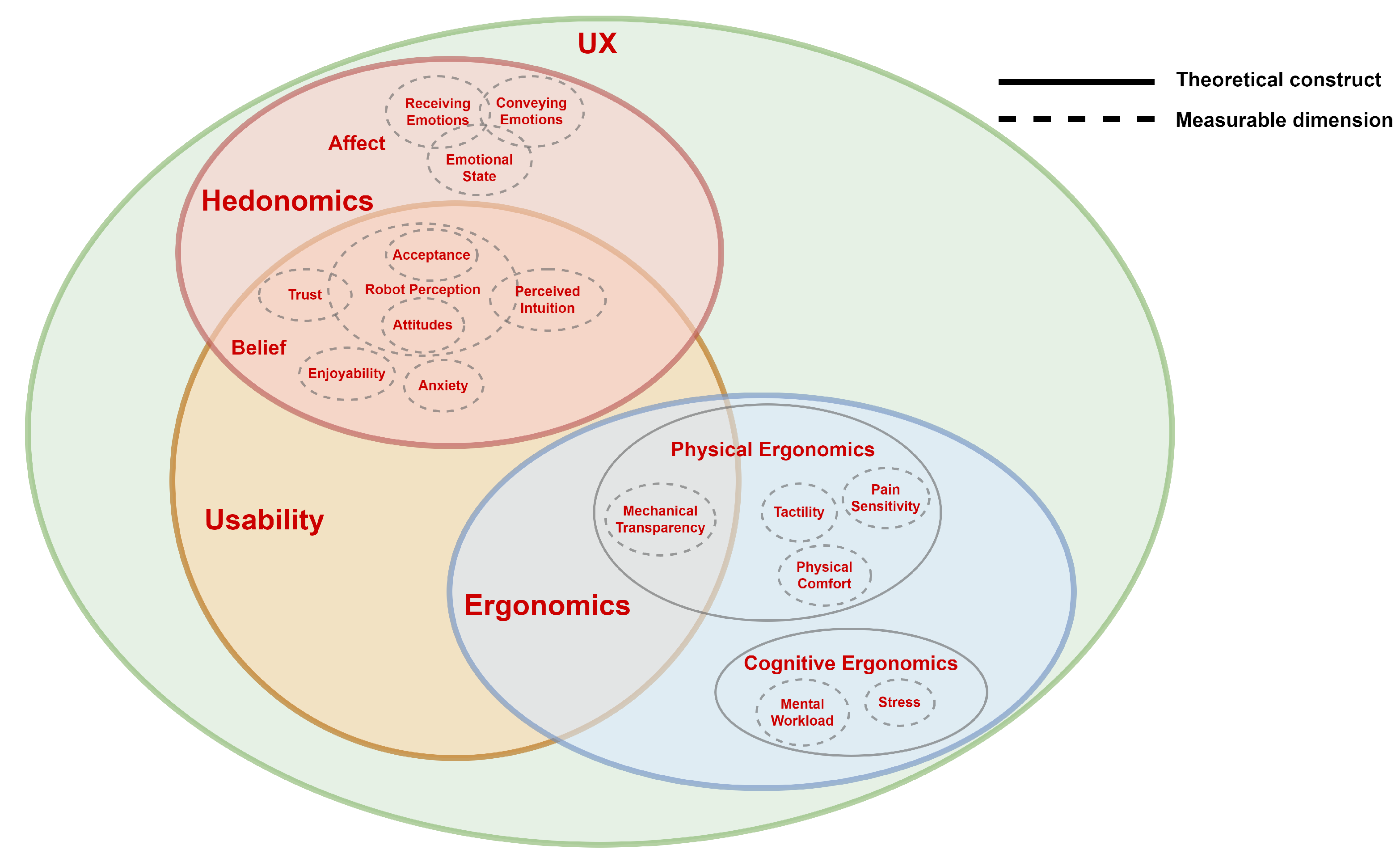

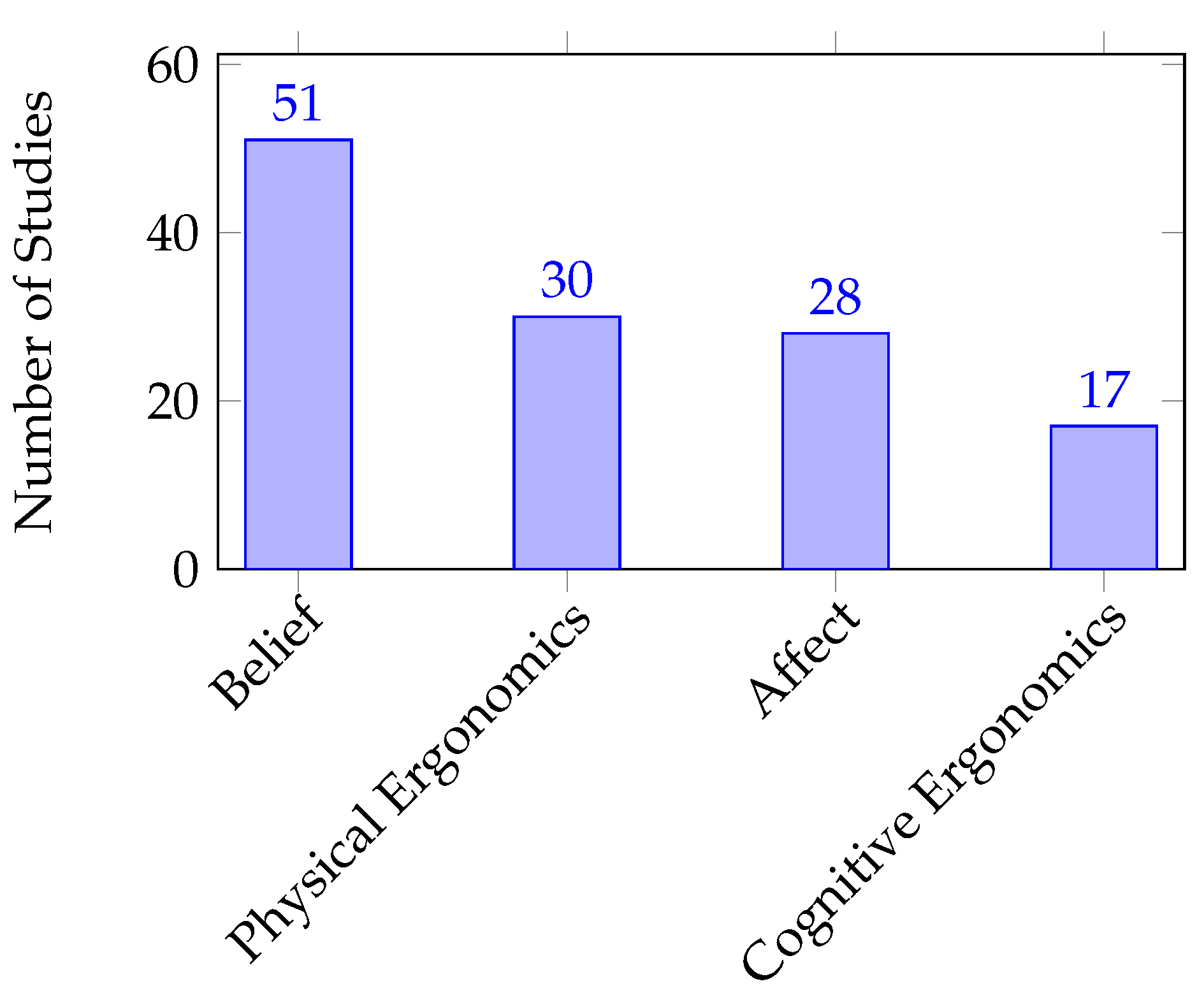

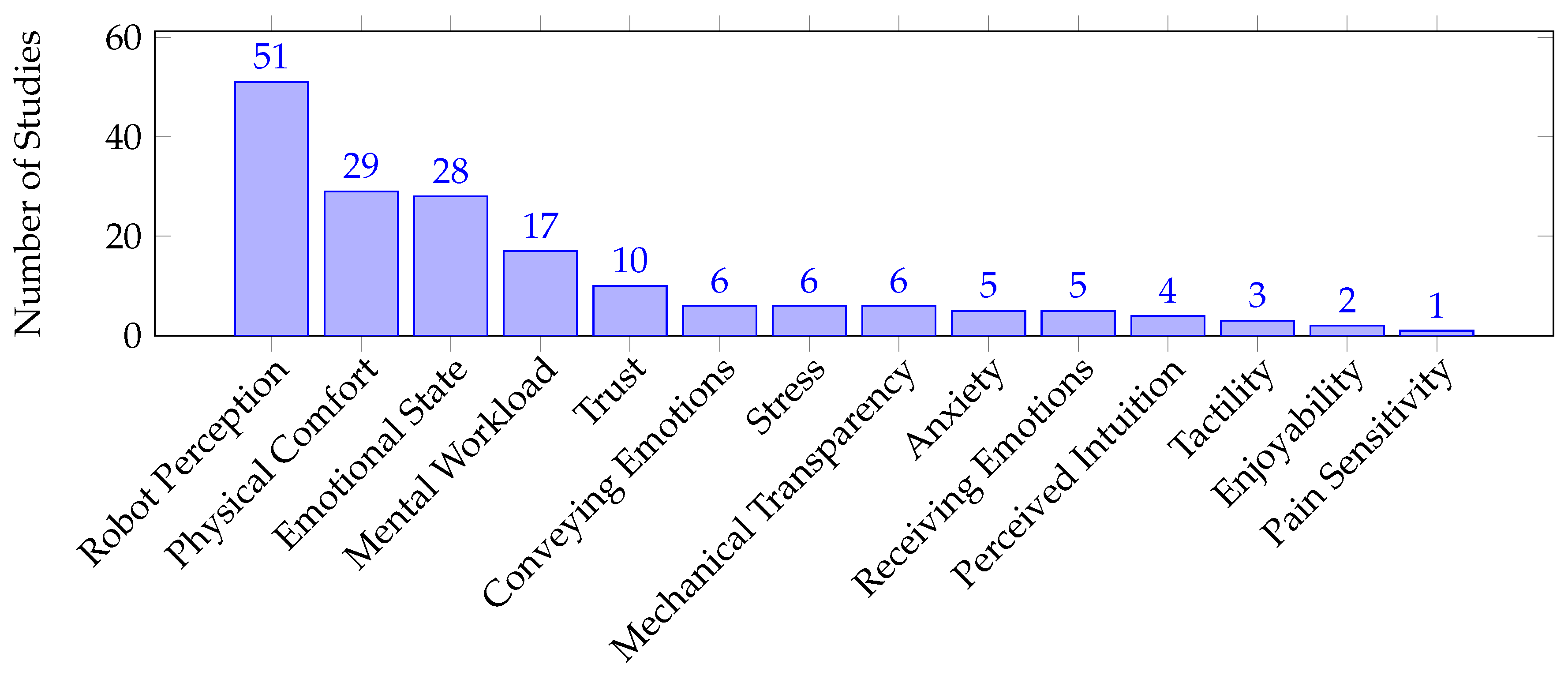

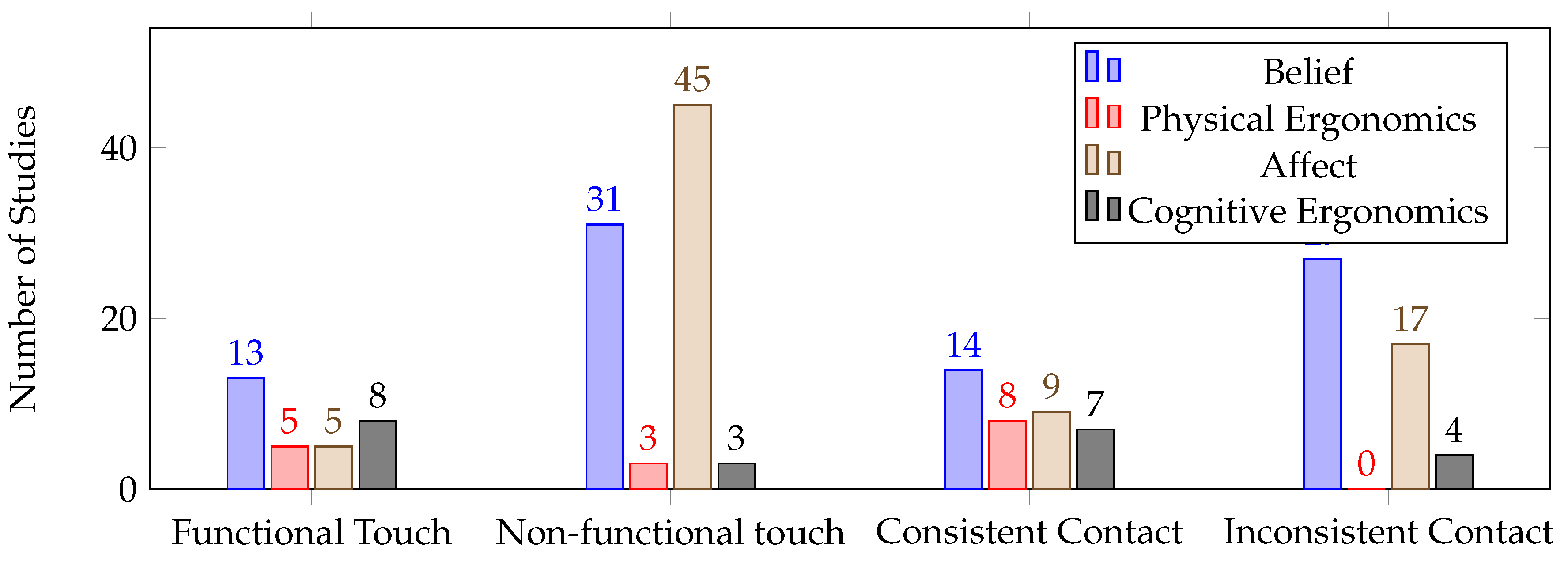

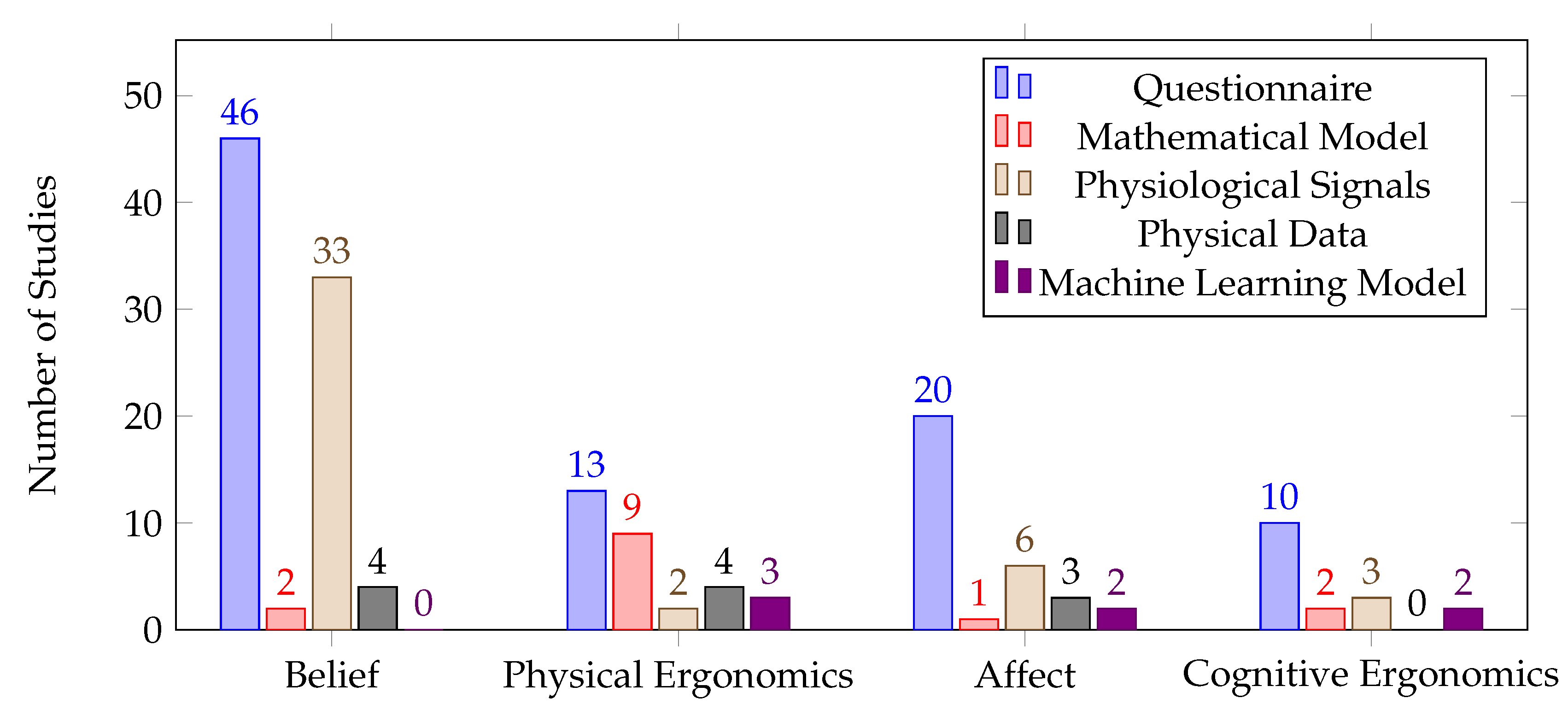

5.2. Human Factors Classification

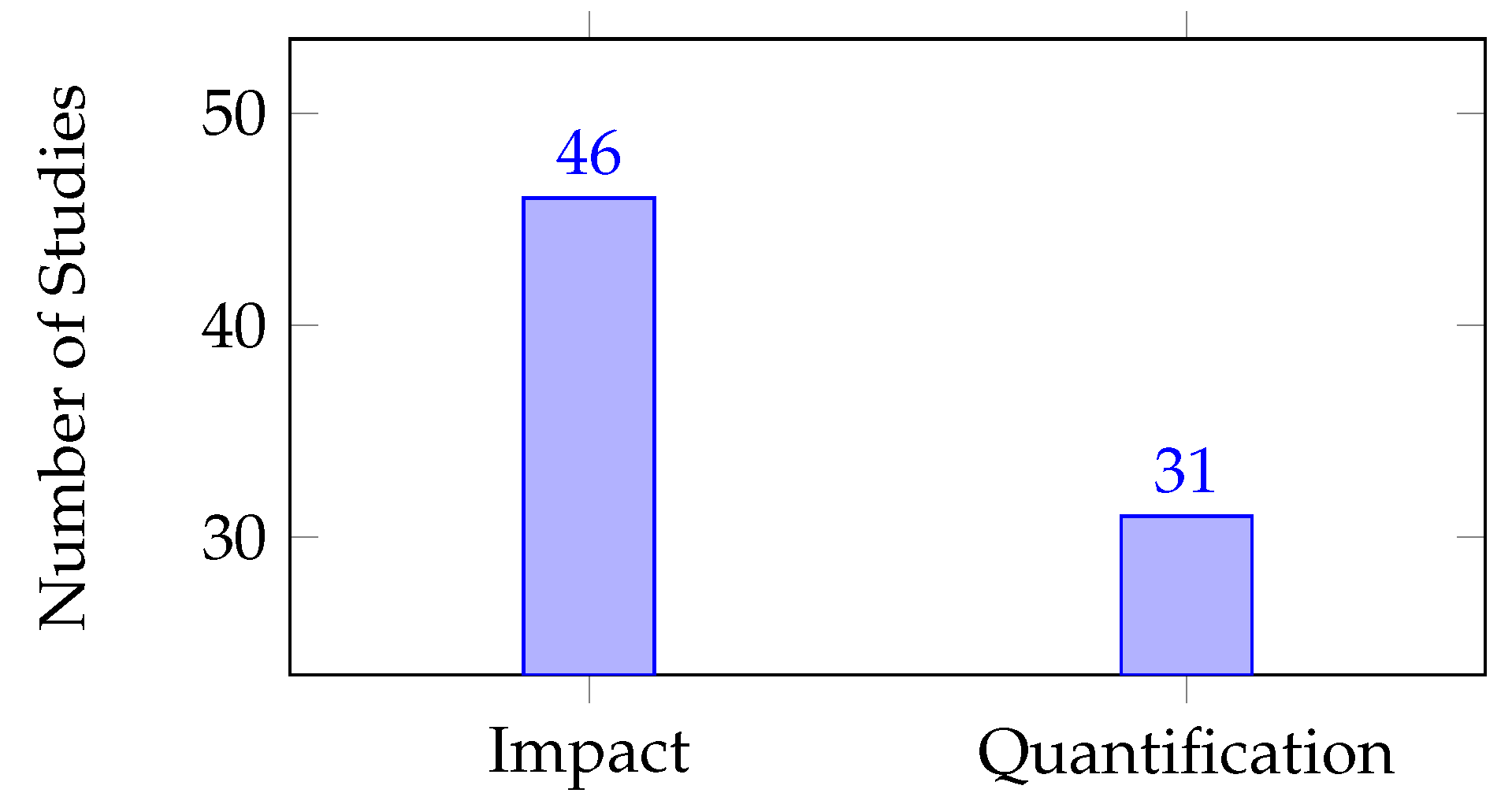

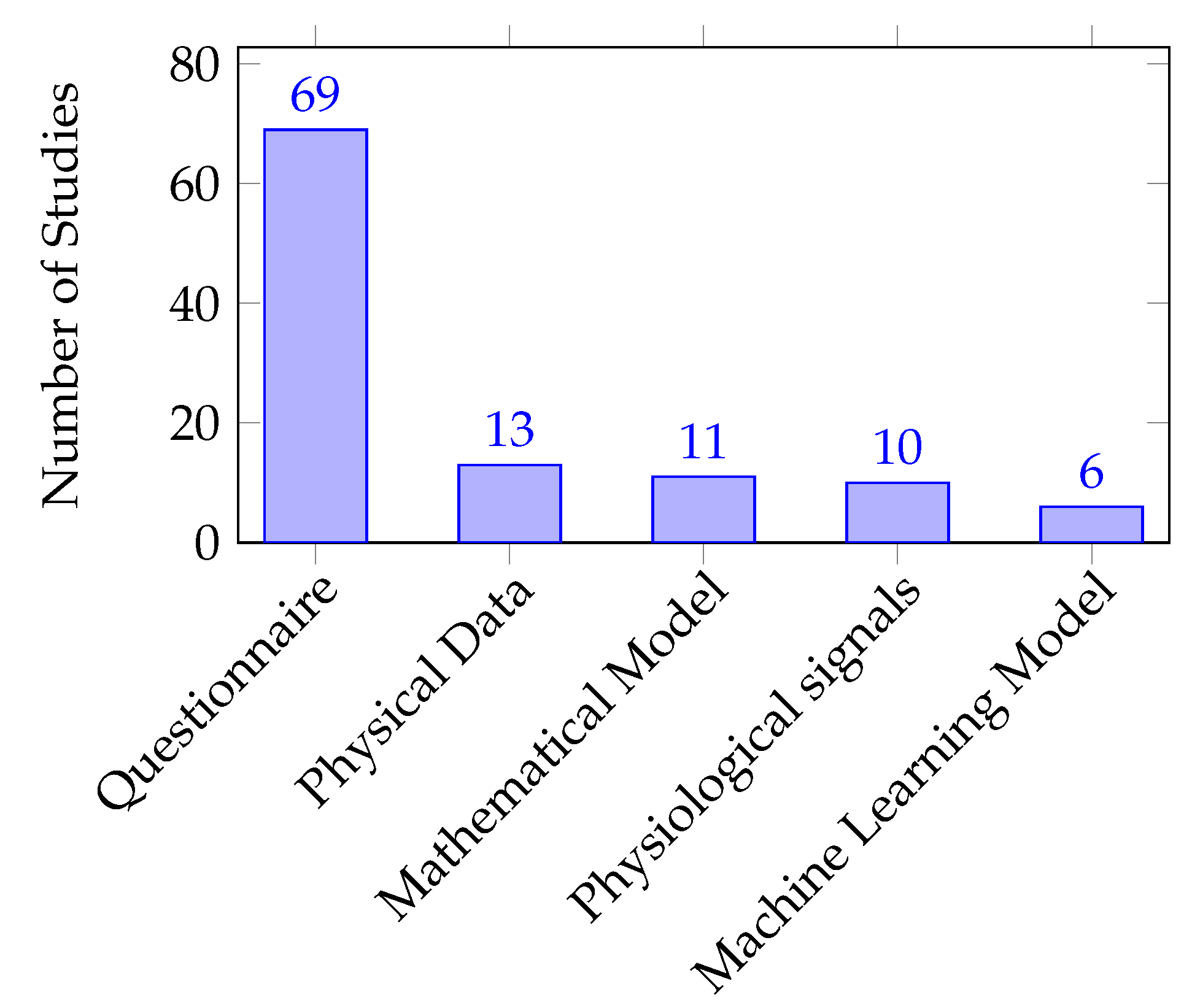

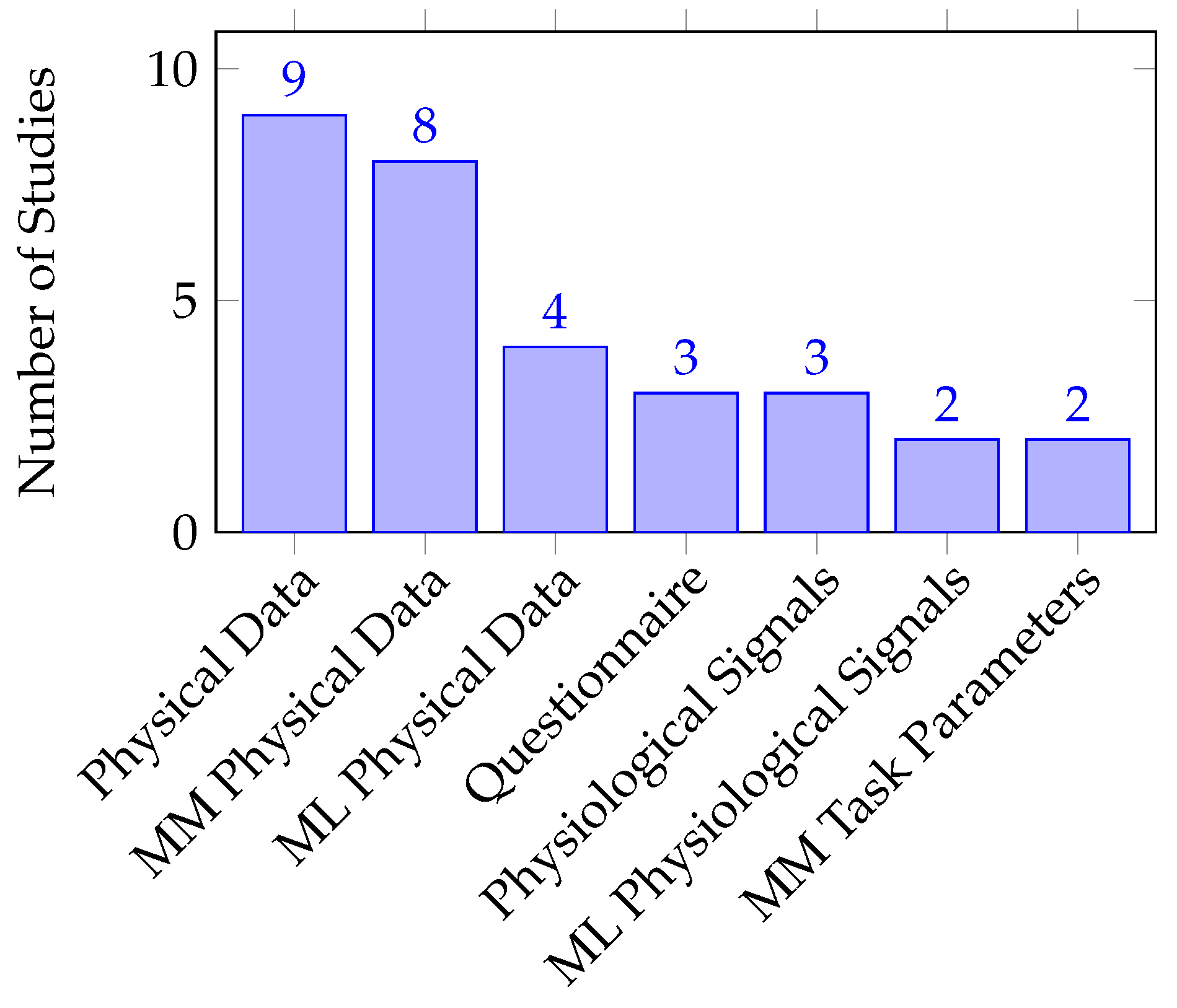

5.3. Quantification Approaches of Human Factors

6. Discussion

6.1. Mapping Scheme for pHRI

6.1.1. Direct pHRI

6.1.2. Indirect pHRI

6.2. Human Factor Classification

6.3. Human Factors Quantification

7. Limitations of the Review

8. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Ageing and health. Available online: https://www.who.int/news-room/fact-sheets/detail/ageing-and-health. (accessed on 20 October 2022).

- Ageing. Available online: https://www.un.org/en/global-issues/ageing. (accessed on 17 May 2023).

- Building The Caregiving Workforce Our Aging World Needs. Glob. Coalit. Aging 2021.

- Society 5.0. Available online: https://www8.cao.go.jp/cstp/english/society5_0/index.html. (accessed on 29 November 2022).

- Schweighart, R.; O’Sullivan, J.L.; Klemmt, M.; Teti, A.; Neuderth, S. Wishes and Needs of Nursing Home Residents. Healthcare 2022, 10, 854. [Google Scholar] [CrossRef] [PubMed]

- What is Human Factors and Ergonomics | HFES. Available online: https://www.hfes.org/About-HFES/What-is-Human-Factors-and-Ergonomics. (accessed on 30 November 2022).

- Rubagotti, M.; Tusseyeva, I.; Baltabayeva, S.; Summers, D.; Sandygulova, A. Perceived safety in physical human robot interaction—A survey. Rob. Auton. Syst. 2022, 104047. [Google Scholar] [CrossRef]

- Pervez, A.; Ryu, J. Safe physical human robot interaction-past, present and future. J. Mech. Sci. Technol. 2008, 22, 469–483. [Google Scholar] [CrossRef]

- Selvaggio, M.; Cognetti, M.; Nikolaidis, S.; Ivaldi, S.; Siciliano, B. Autonomy in physical human-robot interaction: A brief survey. IEEE Robot. Autom. Lett. 2021, 6, 7989–7996. [Google Scholar] [CrossRef]

- Losey, D.P.; McDonald, C.G.; Battaglia, E.; O’Malley, M.K. A Review of Intent Detection, Arbitration, and Communication Aspects of Shared Control for Physical Human–Robot Interaction. Appl. Mech. Rev. 2018, 70, 010804. [Google Scholar] [CrossRef]

- Coronado, E.; Kiyokawa, T.; Ricardez, G.A.G.; Ramirez-Alpizar, I.G.; Venture, G.; Yamanobe, N. Evaluating quality in human-robot interaction: A systematic search and classification of performance and human-centered factors, measures and metrics towards an industry 5.0. J. Manuf. Syst. 2022, 63, 392–410. [Google Scholar] [CrossRef]

- Hopko, S.; Wang, J.; Mehta, R. Human Factors Considerations and Metrics in Shared Space Human-Robot Collaboration: A Systematic Review. Front. Robot. AI. 2022, 9. [Google Scholar] [CrossRef]

- Ortenzi, V.; Cosgun, A.; Pardi, T.; Chan, W.P.; Croft, E.; Kulić, D. Object Handovers: A Review for Robotics. IEEE Trans. Robot. 2021, 37, 1855–1873. [Google Scholar] [CrossRef]

- Prasad, V.; Stock-Homburg, R.; Peters, J. Human-Robot Handshaking: A Review. Int. J. Soc. Robot. 2022, 14, 277–293. [Google Scholar] [CrossRef]

- Simone, V.D.; Pasquale, V.D.; Giubileo, V.; Miranda, S. Human-Robot Collaboration: an analysis of worker’s performance. Procedia Comput. Sci. 2022, 200, 1540–1549. [Google Scholar] [CrossRef]

- Lorenzini, M.; Lagomarsino, M.; Fortini, L.; Gholami, S.; Ajoudani, A. Ergonomic Human-Robot Collaboration in Industry: A Review. Front. Robot. AI. 2023, 9, 813907. [Google Scholar] [CrossRef] [PubMed]

- Hu, Y.; Benallegue, M.; Venture, G.; Yoshida, E. Interact With Me: An Exploratory Study on Interaction Factors for Active Physical Human-Robot Interaction. IEEE Robot. Autom. Lett. 2020, 5, 6764–6771. [Google Scholar] [CrossRef]

- Fujita, M.; Kato, R.; Tamio, A. Assessment of operators’ mental strain induced by hand-over motion of industrial robot manipulator. 19th International Symposium in Robot and Human Interactive Communication, 2010, pp. 361–366. [CrossRef]

- Gualtieri, L.; Fraboni, F.; De Marchi, M.; Rauch, E. Development and evaluation of design guidelines for cognitive ergonomics in human-robot collaborative assembly systems. Appl. Ergon. 2022, 104, 103807. [Google Scholar] [CrossRef] [PubMed]

- Dehais, F.; Sisbot, E.A.; Alami, R.; Causse, M. Physiological and subjective evaluation of a human–robot object hand-over task. Appl. Ergon. 2011, 42, 785–791. [Google Scholar] [CrossRef] [PubMed]

- He, K.; Simini, P.; Chan, W.P.; Kulić, D.; Croft, E.; Cosgun, A. On-The-Go Robot-to-Human Handovers with a Mobile Manipulator. 2022 31st IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), 2022, pp. 729–734. [CrossRef]

- Zolotas, M.; Luo, R.; Bazzi, S.; Saha, D.; Mabulu, K.; Kloeckl, K.; Padir, T. Productive Inconvenience: Facilitating Posture Variability by Stimulating Robot-to-Human Handovers. 2022 31st IEEE International Conference on Robot and Human Interactive Communication (RO-MAN); IEEE: Napoli, Italy, 2022; pp. 122–128. [Google Scholar] [CrossRef]

- Peternel, L.; Fang, C.; Tsagarakis, N.; Ajoudani, A. Online Human Muscle Force Estimation for Fatigue Management in Human-Robot Co-Manipulation. 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2018, pp. 1340–1346. [CrossRef]

- Mizanoor Rahman, S.M.; Ikeura, R. Cognition-based variable admittance control for active compliance in flexible manipulation of heavy objects with a power-assist robotic system. Robotics biomim. 2018, 5, 7. [Google Scholar] [CrossRef] [PubMed]

- Fitter, N.; Kuchenbecker, K. Synchronicity Trumps Mischief in Rhythmic Human-Robot Social-Physical Interaction. Springer Proceedings in Advanced Robotics 2020, 10, 269–284. [Google Scholar] [CrossRef]

- Langer, A.; Feingold-Polak, R.; Mueller, O.; Kellmeyer, P.; Levy-Tzedek, S. Trust in socially assistive robots: Considerations for use in rehabilitation. Neurosci. Biobehav. Rev. 2019, 104, 231–239. [Google Scholar] [CrossRef]

- Chen, T.L.; King, C.H.; Thomaz, A.L.; Kemp, C.C. Touched by a robot: An investigation of subjective responses to robot-initiated touch. 2011 6th ACM/IEEE International Conference on Human-Robot Interaction (HRI), 2011, pp. 457–464. [CrossRef]

- Sauer, J.; Sonderegger, A.; Schmutz, S. Usability, user experience and accessibility: towards an integrative model. Ergonomics 2020, 63, 1207–1220. [Google Scholar] [CrossRef]

- ISO 9241-11:2018(en), Ergonomics of human-system interaction — Part 11: Usability: Definitions and concepts. https://www.iso.org/obp/ui/#iso:std:iso:9241:-11:ed-2:v1:en.

- Petersen, K.; Vakkalanka, S.; Kuzniarz, L. Guidelines for conducting systematic mapping studies in software engineering: An update. Inf. Softw. Technol. 2015, 64, 1–18. [CrossRef]

- Kitchenham, B.; Brereton, P. A systematic review of systematic review process research in software engineering. Inf. Softw. Technol. 2013, 55, 2049–2075. [Google Scholar] [CrossRef]

- Wohlin, C. Guidelines for snowballing in systematic literature studies and a replication in software engineering. the 18th International Conference; ACM Press: London, England, United Kingdom, 2014; pp. 1–10. [Google Scholar] [CrossRef]

- Sigurjónsson, V.; Johansen, K.; Rösiö, C. Exploring the operator’s perspective within changeable and automated manufacturing – A literature review. Procedia CIRP 2022, 107, 369–374. [Google Scholar] [CrossRef]

- Simões, A.C.; Pinto, A.; Santos, J.; Pinheiro, S.; Romero, D. Designing human-robot collaboration (HRC) workspaces in industrial settings: A systematic literature review. J. Manuf. Syst. 2022, 62, 28–43. [Google Scholar] [CrossRef]

- Hamacher, A.; Bianchi-Berthouze, N.; Pipe, A.G.; Eder, K. Believing in BERT: Using expressive communication to enhance trust and counteract operational error in physical Human-robot interaction. 2016 25th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), 2016, pp. 493–500. [CrossRef]

- Hu, Y.; Abe, N.; Benallegue, M.; Yamanobe, N.; Venture, G.; Yoshida, E. Toward Active Physical Human–Robot Interaction: Quantifying the Human State During Interactions. IEEE Trans. Hum. Mach. Syst. 2022. [Google Scholar] [CrossRef]

- Naneva, S.; Sarda Gou, M.; Webb, T.L.; Prescott, T.J. A Systematic Review of Attitudes, Anxiety, Acceptance, and Trust Towards Social Robots. Int. J. Soc. Robot. 2020, 12, 1179–1201. [Google Scholar] [CrossRef]

- Fraboni, F.; Gualtieri, L.; Millo, F.; De Marchi, M.; Pietrantoni, L.; Rauch, E. Human-Robot Collaboration During Assembly Tasks: The Cognitive Effects of Collaborative Assembly Workstation Features. Proceedings of the 21st Congress of the International Ergonomics Association (IEA 2021); Springer International Publishing: Cham, Switzerland, 2022; pp. 242–249. [Google Scholar] [CrossRef]

- Naggita, K.; Athiley, E.; Desta, B.; Sebo, S. Parental Responses to Aggressive Child Behavior towards Robots, Smart Speakers, and Tablets. 2022 31st IEEE International Conference on Robot and Human Interactive Communication (RO-MAN); IEEE: Napoli, Italy, 2022; pp. 337–344. [Google Scholar] [CrossRef]

- Sandygulova, A.; Amirova, A.; Telisheva, Z.; Zhanatkyzy, A.; Rakhymbayeva, N. Individual Differences of Children with Autism in Robot-assisted Autism Therapy. 2022 17th ACM/IEEE International Conference on Human-Robot Interaction (HRI); IEEE: Sapporo, Japan, 2022; pp. 43–52. [Google Scholar] [CrossRef]

- Ficuciello, F.; Villani, L.; Siciliano, B. Variable Impedance Control of Redundant Manipulators for Intuitive Human-Robot Physical Interaction. IEEE Trans. Robot. 2015, 31, 850–863. [Google Scholar] [CrossRef]

- Ma, X.; Quek, F. Development of a child-oriented social robot for safe and interactive physical interaction. IEEE/RSJ 2010 International Conference on Intelligent Robots and Systems, IROS 2010 - Conference Proceedings, 2010, pp. 2163–2168. [CrossRef]

- Kunimura, H.; Ono, C.; Hirai, M.; Muramoto, M.; Matsuzaki, W.; Uchiyama, T.; Shiratori, K.; Hoshino, J. Baby robot "YOTARO"; Vol. 6243 LNCS, 2010. [CrossRef]

- Yamashita, Y.; Ishihara, H.; Ikeda, T.; Asada, M. Path Analysis for the Halo Effect of Touch Sensations of Robots on Their Personality Impressions. SOCIAL ROBOTICS, (ICSR 2016); Agah, A.; Cabibihan, J.; Howard, A.; Salichs, M.; He, H., Eds., 2016, Vol. 9979, pp. 502–512. [CrossRef]

- Ishii, S. Meal-assistance Robot “My Spoon”. JRSJ 2003, 21, 378–381. [Google Scholar] [CrossRef]

- Okuda, M.; Takahashi, Y.; Tsuichihara, S. Human Response to Humanoid Robot That Responds to Social Touch. Appl. Sci. 2022, 12. [Google Scholar] [CrossRef]

- Jørgensen, J.; Bojesen, K.; Jochum, E. Is a Soft Robot More “Natural”? Exploring the Perception of Soft Robotics in Human–Robot Interaction. Int. J. Soc. Robot. 2022, 14, 95–113. [Google Scholar] [CrossRef]

- Au, R.H.Y.; Ling, K.; Fraune, M.R.; Tsui, K.M. Robot Touch to Send Sympathy: Divergent Perspectives of Senders and Recipients. 2022 17th ACM/IEEE International Conference on Human-Robot Interaction (HRI); IEEE: Sapporo, Japan, 2022; pp. 372–382. [Google Scholar]

- Mazursky, A.; DeVoe, M.; Sebo, S. Physical Touch from a Robot Caregiver: Examining Factors that Shape Patient Experience. 2022 31st IEEE International Conference on Robot and Human Interactive Communication (RO-MAN); IEEE: Napoli, Italy, 2022; pp. 1578–1585. [Google Scholar] [CrossRef]

- Kunold, L. Seeing is not Feeling the Touch from a Robot. 2022 31st IEEE International Conference on Robot and Human Interactive Communication (RO-MAN); IEEE: Napoli, Italy, 2022; pp. 1562–1569. [Google Scholar] [CrossRef]

- Block, A.E.; Seifi, H.; Hilliges, O.; Gassert, R.; Kuchenbecker, K.J. In the Arms of a Robot: Designing Autonomous Hugging Robots with Intra-Hug Gestures. ACM Trans. Hum.-Robot Interact. 2022, 12, 47. [Google Scholar] [CrossRef]

- Bainbridge, W.; Nozawa, S.; Ueda, R.; Okada, K.; Inaba, M. A Methodological Outline and Utility Assessment of Sensor-based Biosignal Measurement in Human-Robot Interaction A System for Determining Correlations Between Robot Sensor Data and Subjective Human Data in HRI. Int. J. Soc. Robot. 2012, 4, 303–316. [Google Scholar] [CrossRef]

- Hayashi, A.; Rincon-Ardila, L.; Venture, G. Improving HRI with Force Sensing. Machines 2022, 10. [Google Scholar] [CrossRef]

- Giorgi, I.; Tirotto, F.; Hagen, O.; Aider, F.; Gianni, M.; Palomino, M.; Masala, G. Friendly But Faulty: A Pilot Study on the Perceived Trust of Older Adults in a Social Robot. IEEE Access 2022, 10, 92084–92096. [Google Scholar] [CrossRef]

- Burns, R.; Lee, H.; Seifi, H.; Faulkner, R.; Kuchenbecker, K. Endowing a NAO Robot With Practical Social-Touch Perception. Front. Robot. AI. -19. [CrossRef]

- Zheng, X.; Shiomi, M.; Minato, T.; Ishiguro, H. Modeling the Timing and Duration of Grip Behavior to Express Emotions for a Social Robot. IEEE Robot. Autom. Lett. 2021, 6, 159–166. [Google Scholar] [CrossRef]

- Shiomi, M.; Hagita, N. Audio-Visual Stimuli Change not Only Robot’s Hug Impressions but Also Its Stress-Buffering Effects. Int. J. Soc. Robot. 2021, 13, 469–476. [Google Scholar] [CrossRef]

- Law, T.; Malle, B.; Scheutz, M. A Touching Connection: How Observing Robotic Touch Can Affect Human Trust in a Robot. Int. J. Soc. Robot. 2021, 13, 2003–2019. [Google Scholar] [CrossRef]

- Gaitan-Padilla, M.; Maldonado-Mejia, J.; Fonseca, L.; Pinto-Bernal, M.; Casas, D.; Munera, M.; Cifuentes, C. Physical Human-Robot Interaction Through Hugs with CASTOR Robot. SOCIAL ROBOTICS, ICSR 2021; Li, H.; Ge, S.; Wu, Y.; Wykowska, A.; He, H.; Liu, X.; Li, D.; PerezOsorio, J., Eds., 2021, Vol. 13086, pp. 814–818. [CrossRef]

- Zheng, X.; Shiomi, M.; Minato, T.; Ishiguro, H. What Kinds of Robot’s Touch Will Match Expressed Emotions? IEEE Robot. Autom. Lett. 2020, 5, 127–134. [Google Scholar] [CrossRef]

- Zamani, N.; Moolchandani, P.; Fitter, N.; Culbertson, H. Effects of Motion Parameters on Acceptability of Human-Robot Patting Touch. 2020 IEEE Haptics Symposium (HAPTICS), 2020, pp. 664–670. [CrossRef]

- Shiomi, M.; Minato, T.; Ishiguro, H. Effects of Robot’s Awareness and its Subtle Reactions Toward People’s Perceived Feelings in Touch Interaction. J. Robot. Mechatron. 2020, 32, 43–50. [Google Scholar] [CrossRef]

- Fitter, N.; Kuchenbecker, K. How Does It Feel to Clap Hands with a Robot? Int. J. Soc. Robot. 2020, 12, 113–127. [Google Scholar] [CrossRef]

- Claure, H.; Khojasteh, N.; Tennent, H.; Jung, M. Using expectancy violations theory to understand robot touch interpretation. 2020, pp. 163–165. [CrossRef]

- Willemse, C.; van Erp, J. Social Touch in Human-Robot Interaction: Robot-Initiated Touches can Induce Positive Responses without Extensive Prior Bonding. Int. J. Soc. Robot. 2019, 11, 285–304. [Google Scholar] [CrossRef]

- Shiomi, M.; Shatani, K.; Minato, T.; Ishiguro, H. How Should a Robot React Before People’s Touch?: Modeling a Pre-Touch Reaction Distance for a Robot’s Face. IEEE Robot. Autom. Lett. 2018, 3, 3773–3780. [Google Scholar] [CrossRef]

- Orefice, P.; Ammi, M.; Hafez, M.; Tapus, A. Pressure Variation Study in Human-Human and Human-Robot Handshakes: Impact of the Mood. 2018 27th IEEE International Symposium on Robot and Human Interactive Communication (IEEE RO-MAN 2018); Cabibihan, J.; Mastrogiovanni, F.; Pandey, A.; Rossi, S.; Staffa, M., Eds., 2018, pp. 247–254.

- Midorikawa, R.; Niitsuma, M. Effects of Touch Experience on Active Human Touch in Human-Robot Interaction. IFAC-PapersOnLine 2018, 51(22), 154–159. [CrossRef]

- Hirano, T.; Shiomi, M.; Iio, T.; Kimoto, M.; Tanev, I.; Shimohara, K.; Hagita, N. How Do Communication Cues Change Impressions of Human-Robot Touch Interaction? Int. J. Soc. Robot. 2018, 10, 21–31. [Google Scholar] [CrossRef]

- Fitter, N.; Kuchenbecker, K. Teaching a robot bimanual hand-clapping games via wrist-worn IMUs. Front. Robot. AI. 2018, 5. [Google Scholar] [CrossRef]

- Block, A.; Kuchenbecker, K. Emotionally Supporting Humans Through Robot Hugs. ACM/IEEE International Conference on Human-Robot Interaction, 2018, pp. 293–294. [CrossRef]

- Avelino, J.; Correia, F.; Catarino, J.; Ribeiro, P.; Moreno, P.; Bernardino, A.; Paiva, A.; Kosecka, J. The Power of a Hand-shake in Human-Robot Interactions. 2018 IEEE/RSJ International Conference on Intelligent Robots and systems (IROS); Maciejewski, A.; Okamura, A.; Bicchi, A.; Stachniss, C.; Song, D.; Lee, D.; Chaumette, F.; Ding, H.; Li, J.; Wen, J.; Roberts, J.; Masamune, K.; Chong, N.; Amato, N.; Tsagwarakis, N.; Rocco, P.; Asfour, T.; Chung, W.; Yasuyoshi, Y.; Sun, Y.; Maciekeski, T.; Althoefer, K.; AndradeCetto, J.; Chung, W.; Demircan, E.; Dias, J.; Fraisse, P.; Gross, R.; Harada, H.; Hasegawa, Y.; Hayashibe, M.; Kiguchi, K.; Kim, K.; Kroeger, T.; Li, Y.; Ma, S.; Mochiyama, H.; Monje, C.; Rekleitis, I.; Roberts, R.; Stulp, F.; Tsai, C.; Zollo, L., Eds., 2018, pp. 1864–1869.

- Andreasson, R.; Alenljung, B.; Billing, E.; Lowe, R. Affective Touch in Human-Robot Interaction: Conveying Emotion to the Nao Robot. Int. J. Soc. Robot. 2018, 10, 473–491. [Google Scholar] [CrossRef]

- Shiomi, M.; Hagita, N. Do Audio-Visual Stimuli Change Hug Impressions? SOCIAL ROBOTICS, ICSR 2017; Kheddar, A.; Yoshida, E.; Ge, S.; Suzuki, K.; Cabibihan, J.; Eyssel, F.; He, H., Eds., 2017, Vol. 10652, pp. 345–354. [CrossRef]

- Alenljung, B.; Andreasson, R.; Billing, E.; Lindblom, J.; Lowe, R. User Experience of Conveying Emotions by Touch. 2017 26TH IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN); Howard, A.; Suzuki, K.; Zollo, L., Eds., 2017, pp. 1240–1247.

- Willemse, C.; Huisman, G.; Jung, M.; van Erp, J.; Heylen, D. Observing Touch from Video: The Influence of Social Cues on Pleasantness Perceptions. Haptics : Perception, Devices, Control, and Applications, Eurohaptics 2016, PT II; Bello, F.; Kajimoto, H.; Visell, Y., Eds., 2016, Vol. 9775, pp. 196–205. [CrossRef]

- Trovato, G.; Do, M.; Terlemez, O.; Mandery, C.; Ishii, H.; Bianchi-Berthouze, N.; Asfour, T.; Takanishi, A. Is hugging a robot weird? Investigating the influence of robot appearance on users’ perception of hugging. IEEE-RAS International Conference on Humanoid Robots, 2016, pp. 318–323. [CrossRef]

- Martinez-Hernandez, U.; Rubio-Solis, A.; Prescott, T. Bayesian perception of touch for control of robot emotion. 2016 International Joint Conference on Neural Networks (IJCNN), 2016, pp. 4927–4933.

- Tsalamlal, M.; Martin, J.; Ammi, M.; Tapus, A.; Amorim, M. Affective Handshake with a Humanoid Robot: How do Participants Perceive and Combine its Facial and Haptic Expressions? 2015 International Conference on Affective Computing and Intelligent Interaction (ACII), 2015, pp. 334–340.

- Jindai, M.; Ota, S.; Ikemoto, Y.; Sasaki, T. Handshake request motion model with an approaching human for a handshake robot system. Proceedings of the 2015 7th IEEE International Conference on Cybernetics and Intelligent Systems, CIS 2015 and Robotics, Automation and Mechatronics, RAM 2015, 2015, pp. 265–270. [Google Scholar] [CrossRef]

- Haring, K.; Watanabe, K.; Silvera-Tawil, D.; Velonaki, M.; Matsumoto, Y. Touching an Android robot: Would you do it and how? Proceedings - 2015 International Conference on Control, Automation and Robotics, ICCAR 2015, 2015, pp. 8–13. [Google Scholar] [CrossRef]

- Ammi, M.; Demulier, V.; Caillou, S.; Gaffary, Y.; Tsalamlal, Y.; Martin, J.; Tapus, A. Haptic Human-Robot Affective Interaction in a Handshaking Social Protocol. Proceedings of the 2015 ACM/IEEE International Conference on Human-Robot Interaction (HRI’15), 2015, pp. 263–270. [CrossRef]

- Chen, T.; King, C.H.; Thomaz, A.; Kemp, C. An Investigation of Responses to Robot-Initiated Touch in a Nursing Context. Int. J. Soc. Robot. 2014, 6, 141–161. [Google Scholar] [CrossRef]

- Nie, J.; Park, M.; Marin, A.; Sundar, S. Can You Hold My Hand? Physical Warmth in Human-Robot Interaction. Proceedings of the Seventh Annual ACM/IEEE International Conference on Human-Robot Interaction, 2012, pp. 201–202.

- Jindai, M.; Watanabe, T. Development of a handshake request motion model based on analysis of handshake motion between humans. IEEE/ASME International Conference on Advanced Intelligent Mechatronics, AIM, 2011, pp. 560–565. [CrossRef]

- Evers, V.; Winterboer, A.; Pavlin, G.; Groen, F. The Evaluation of Empathy, Autonomy and Touch to Inform the Design of an Environmental Monitoring Robot. SOCIAL ROBOTICS, ICSR 2010; Ge, S.; Li, H.; Cabibihan, J.; Tan, Y., Eds., 2010, Vol. 6414, pp. 285–294.

- Cramer, H.; Kemper, N.; Amin, A.; Wielinga, B.; Evers, V. ’Give me a hug’: the effects of touch and autonomy on people’s responses to embodied social agents. Comput. Animat. Virtual Worlds 2009, 20, 437–445. [Google Scholar] [CrossRef]

- Nomura, T.; Suzuki, T.; Kanda, T.; Kato, K. Measurement of Anxiety toward Robots. Proceedings - IEEE International Workshop on Robot and Human Interactive Communication, 2006, pp. 372–377. [CrossRef]

- Zheng, X.; Shiomi, M.; Minato, T.; Ishiguro, H. How Can Robots Make People Feel Intimacy Through Touch? J. Robot. Mechatron. 2020, 32, 51–58. [Google Scholar] [CrossRef]

- Wang, Q.; Liu, D.; Carmichael, M.G.; Aldini, S.; Lin, C.T. Computational Model of Robot Trust in Human Co-Worker for Physical Human-Robot Collaboration. IEEE Robot. Autom. Lett. 2022, 7, 3146–3153. [Google Scholar] [CrossRef]

- Eimontaite, I.; Gwilt, I.; Cameron, D.; Aitken, J.M.; Rolph, J.; Mokaram, S.; Law, J. Language-free graphical signage improves human performance and reduces anxiety when working collaboratively with robots. Int. J. Adv. Manuf. Technol. 2019, 100, 55–73. [Google Scholar] [CrossRef]

- Memar, A.H.; Esfahani, E.T. Objective Assessment of Human Workload in Physical Human-robot Cooperation Using Brain Monitoring. ACM Trans. Hum.-Robot Interact. 2020, 9, 1–21. [Google Scholar] [CrossRef]

- Yamada, Y.; Umetani, Y.; Hirasawa, Y. Proposal of a psychophysiological experiment system applying the reaction of human pupillary dilation to frightening robot motions. IEEE SMC’99 Conference Proceedings. 1999 IEEE International Conference on Systems, Man, and Cybernetics (Cat. No.99CH37028), 1999, Vol. 2, pp. 1052–1057. [CrossRef]

- Maurtua, I.; Ibarguren, A.; Kildal, J.; Susperregi, L.; Sierra, B. Human–robot collaboration in industrial applications: Safety, interaction and trust. Int. J. Adv. Robot. Syst. 2017, 14, 1729881417716010. [Google Scholar] [CrossRef]

- Sahin, M.; Savur, C. Evaluation of Human Perceived Safety during HRC Task using Multiple Data Collection Methods. 2022 17th Annual System of Systems Engineering Conference (SOSE), 2022, pp. 465–470. [CrossRef]

- Paez Granados, D.F.; Yamamoto, B.A.; Kamide, H.; Kinugawa, J.; Kosuge, K. Dance Teaching by a Robot: Combining Cognitive and Physical Human–Robot Interaction for Supporting the Skill Learning Process. IEEE Robot. Autom. Lett. 2017, 2, 1452–1459. [Google Scholar] [CrossRef]

- Materna, Z.; Kapinus, M.; Beran, V.; Smrž, P.; Zemčík, P. Interactive Spatial Augmented Reality in Collaborative Robot Programming: User Experience Evaluation. 2018 27th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), 2018-08, pp. 80–87. [CrossRef]

- Schmidtler, J.; Bengler, K.; Dimeas, F.; Campeau-Lecours, A. A questionnaire for the evaluation of physical assistive devices (quead): Testing usability and acceptance in physical human-robot interaction. 2017 IEEE International Conference on Systems, Man, and Cybernetics, SMC 2017, 2017, Vol. 2017–January, pp 876. [Google Scholar] [CrossRef]

- Reyes-Uquillas, D.; Hsiao, T. Safe and intuitive manual guidance of a robot manipulator using adaptive admittance control towards robot agility. Robot. Comput. Integr. Manuf. 2021, 70. [Google Scholar] [CrossRef]

- Shiomi, M.; Hirano, T.; Kimoto, M.; Iio, T.; Shimohara, K. Gaze-Height and Speech-Timing Effects on Feeling Robot-Initiated Touches. J. Robot. Mechatron. 2020, 32, 68–75. [Google Scholar] [CrossRef]

- Bock, N.; Hoffmann, L.; Pütten, R.V. Your Touch Leaves Me Cold, Robot. ACM/IEEE International Conference on Human-Robot Interaction, 2018, pp. 71–72. [CrossRef]

- Li, J.; Ju, W.; Reeves, B. Touching a Mechanical Body: Tactile Contact With Body Parts of a Humanoid Robot Is Physiologically Arousing. J. hum. robot interact. 2017, 6, 118–130. [Google Scholar] [CrossRef]

- Gopinathan, S.; Otting, S.; Steil, J. A User Study on Personalized Stiffness Control and Task Specificity in Physical Human-Robot Interaction. Front. Robot. AI. 2017, 4. [Google Scholar] [CrossRef]

- Der Spaa, L.; Gienger, M.; Bates, T.; Kober, J. Predicting and Optimizing Ergonomics in Physical Human-Robot Cooperation Tasks. Proceedings - IEEE International Conference on Robotics and Automation, 2020, pp. 1799–1805. [CrossRef]

- Carmichael, M.; Aldini, S.; Liu, D. Human user impressions of damping methods for singularity handling in human-robot collaboration. Australasian Conference on Robotics and Automation, ACRA, 2017, Vol. 2017-December, pp. 107–113.

- Novak, D.; Beyeler, B.; Omlin, X.; Riener, R. Workload Estimation in Physical Human-Robot Interaction Using Physiological Measurements. Interact. Comput. 2015, 27, 616–629. [Google Scholar] [CrossRef]

- Ferland, F.; Aumont, A.; Letourneau, D.; Michaud, F. Taking Your Robot For a Walk: Force-Guiding a Mobile Robot Using Compliant Arms. Proceedings of the 8TH ACM/IEEE International on Human-Robot Interaction (HRI 2013); Kuzuoka, H.; Evers, V.; Imai, M.; Forlizzi, J., Eds., 2013, pp. 309–316.

- Chen, T.; Kemp, C. Lead me by the hand: Evaluation of a direct physical interface for nursing assistant robots. 5th ACM/IEEE International Conference on Human-Robot Interaction, HRI 2010, 2010, pp. 367–374. [Google Scholar] [CrossRef]

- Yazdani, A.; Novin, R.; Merryweather, A.; Hermans, T. DULA and DEBA: Differentiable Ergonomic Risk Models for Postural Assessment and Optimization in Ergonomically Intelligent pHRI. 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2022, pp. 9124–9131. [CrossRef]

- Behrens, R.; Pliske, G.; Umbreit, M.; Piatek, S.; Walcher, F.; Elkmann, N. A Statistical Model to Determine Biomechanical Limits for Physically Safe Interactions With Collaborative Robots. Front. Robot. AI. 2022, 8. [Google Scholar] [CrossRef]

- Tan, J.T.C.; Duan, F.; Kato, R.; Arai, T. Safety Strategy for Human–Robot Collaboration: Design and Development in Cellular Manufacturing. Adv. Robot. 2010, 24, 839–860. [Google Scholar] [CrossRef]

- Michalos, G.; Kousi, N.; Karagiannis, P.; Gkournelos, C. Seamless human robot collaborative assembly - An automotive case study. Mechatronics 2018. [Google Scholar] [CrossRef]

- Eyam, A.; Mohammed, W.; Martinez Lastra, J. Emotion-driven analysis and control of human-robot interactions in collaborative applications. Sensors 2021, 21. [Google Scholar] [CrossRef]

- Amanhoud, W.; Hernandez Sanchez, J.; Bouri, M.; Billard, A. Contact-initiated shared control strategies for four-arm supernumerary manipulation with foot interfaces. Int. J. Rob. Res. 2021, 40, 986–1014. [Google Scholar] [CrossRef]

- Aleotti, J.; Micelli, V.; Caselli, S. Comfortable robot to human object hand-over. 2012 IEEE RO-MAN: The 21st IEEE International Symposium on Robot and Human Interactive Communication, 2012, pp. 771–776. [CrossRef]

- Pan, M.K.; Croft, E.A.; Niemeyer, G. Evaluating Social Perception of Human-to-Robot Handovers Using the Robot Social Attributes Scale (RoSAS). Proceedings of the 2018 ACM/IEEE International Conference on Human-Robot Interaction; ACM: Chicago IL USA, 2018; pp. 443–451. [Google Scholar] [CrossRef]

- Lambrecht, J.; Nimpsch, S. Human Prediction for the Natural Instruction of Handovers in Human Robot Collaboration. 2019 28th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), 2019, pp. 1–6. [CrossRef]

- Lagomarsino, M.; Lorenzini, M.; Balatti, P.; Momi, E.D.; Ajoudani, A. Pick the Right Co-Worker: Online Assessment of Cognitive Ergonomics in Human-Robot Collaborative Assembly. IEEE Trans. Cogn. Dev. Syst., pp. 1–1. [CrossRef]

- Hald, K.; Rehm, M.; Moeslund, T.B. Proposing Human-Robot Trust Assessment Through Tracking Physical Apprehension Signals in Close-Proximity Human-Robot Collaboration. 2019 28th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), 2019, pp. 1–6. [CrossRef]

- Strabala, K.W.; Lee, M.K.; Dragan, A.D.; Forlizzi, J.L.; Srinivasa, S.; Cakmak, M.; Micelli, V. Towards Seamless Human-Robot Handovers. J. hum. robot interact. -01. [CrossRef]

- Qin, M.; Brawer, J.; Scassellati, B. Task-Oriented Robot-to-Human Handovers in Collaborative Tool-Use Tasks. 2022 31st IEEE International Conference on Robot and Human Interactive Communication (RO-MAN); IEEE: Napoli, Italy, 2022; pp. 1327–1333. [Google Scholar] [CrossRef]

- Nowak, J.; Fraisse, P.; Cherubini, A.; Daures, J.P. Assistance to Older Adults with Comfortable Robot-to-Human Handovers. 2022 IEEE International Conference on Advanced Robotics and Its Social Impacts (ARSO), 2022-05, pp. 1–6. [CrossRef]

- Huber, M.; Radrich, H.; Wendt, C.; Rickert, M.; Knoll, A.; Brandt, T.; Glasauer, S. Evaluation of a novel biologically inspired Trajectory Generator in Human-Robot Interaction. RO-MAN 2009: The 18th IEEE International Symposium on Robot and Human Interactive Communication, 2009, pp. 712–+.

- Nikolaidis, S.; Hsu, D.; Srinivasa, S. Human-robot mutual adaptation in collaborative tasks: Models and experiments. Int. J. Rob. Res. 36, 618–634. [CrossRef]

- Muller, T.; Subrin, K.; Joncheray, D.; Billon, A.; Garnier, S. Transparency Analysis of a Passive Heavy Load Comanipulation Arm. IEEE Trans. Hum. Mach. Syst. [CrossRef]

- Wang, Y.; Lematta, G.J.; Hsiung, C.P.; Rahm, K.A.; Chiou, E.K.; Zhang, W. Quantitative Modeling and Analysis of Reliance in Physical Human–Machine Coordination. J. Mech. Robot. 2019, 11. [Google Scholar] [CrossRef]

- Lorenzini, M.; Kim, W.; De Momi, E.; Ajoudani, A. A synergistic approach to the Real-Time estimation of the feet ground reaction forces and centers of pressure in humans with application to Human-Robot collaboration. IEEE Robot. Autom. Lett. 2018, 3, 3654–3661. [Google Scholar] [CrossRef]

- Kim, W.; Lee, J.; Peternel, L.; Tsagarakis, N.; Ajoudani, A. Anticipatory Robot Assistance for the Prevention of Human Static Joint Overloading in Human-Robot Collaboration. IEEE Robot. Autom. Lett. 2018, 3, 68–75. [Google Scholar] [CrossRef]

- Peternel, L.; Fang, C.; Tsagarakis, N.; Ajoudani, A. A selective muscle fatigue management approach to ergonomic human-robot co-manipulation. Robot. Comput. Integr. Manuf. 2019, 58, 69–79. [Google Scholar] [CrossRef]

- Peternel, L.; Tsagarakis, N.; Caldwell, D.; Ajoudani, A. Robot adaptation to human physical fatigue in human–robot co-manipulation. Auton. Robot. 2018, 42, 1011–1021. [Google Scholar] [CrossRef]

- Kowalski, C.; Brinkmann, A.; Hellmers, S.; Fifelski-von Bohlen, C.; Hein, A. Comparison of a VR-based and a rule-based robot control method for assistance in a physical human-robot collaboration scenario. 2022 31st IEEE International Conference on Robot and Human Interactive Communication (RO-MAN); IEEE: Napoli, Italy, 2022; pp. 722–728. [Google Scholar] [CrossRef]

- Figueredo, L.; Aguiar, R.; Chen, L.; Chakrabarty, S.; Dogar, M.; Cohn, A. Human Comfortability: Integrating Ergonomics and Muscular-Informed Metrics for Manipulability Analysis during Human-Robot Collaboration. IEEE Robot. Autom. Lett. 2021, 6, 351–358. [Google Scholar] [CrossRef]

- Gao, Y.; Chang, H.; Demiris, Y. User Modelling Using Multimodal Information for Personalised Dressing Assistance. IEEE Access 2020, 8, 45700–45714. [Google Scholar] [CrossRef]

- Chen, L.; Figueredo, L.; Dogar, M. Planning for Muscular and Peripersonal-Space Comfort during Human-Robot Forceful Collaboration. IEEE-RAS International Conference on Humanoid Robots, 2019, pp. 409–416. [CrossRef]

- Madani, A.; Niaz, P.; Guler, B.; Aydin, Y.; Basdogan, C. Robot-Assisted Drilling on Curved Surfaces with Haptic Guidance under Adaptive Admittance Control. IEEE International Conference on Intelligent Robots and Systems, 2022, Vol. 2022-October, pp. 3723–3730. [CrossRef]

| Categories | Characteristics | Studies | ||

|---|---|---|---|---|

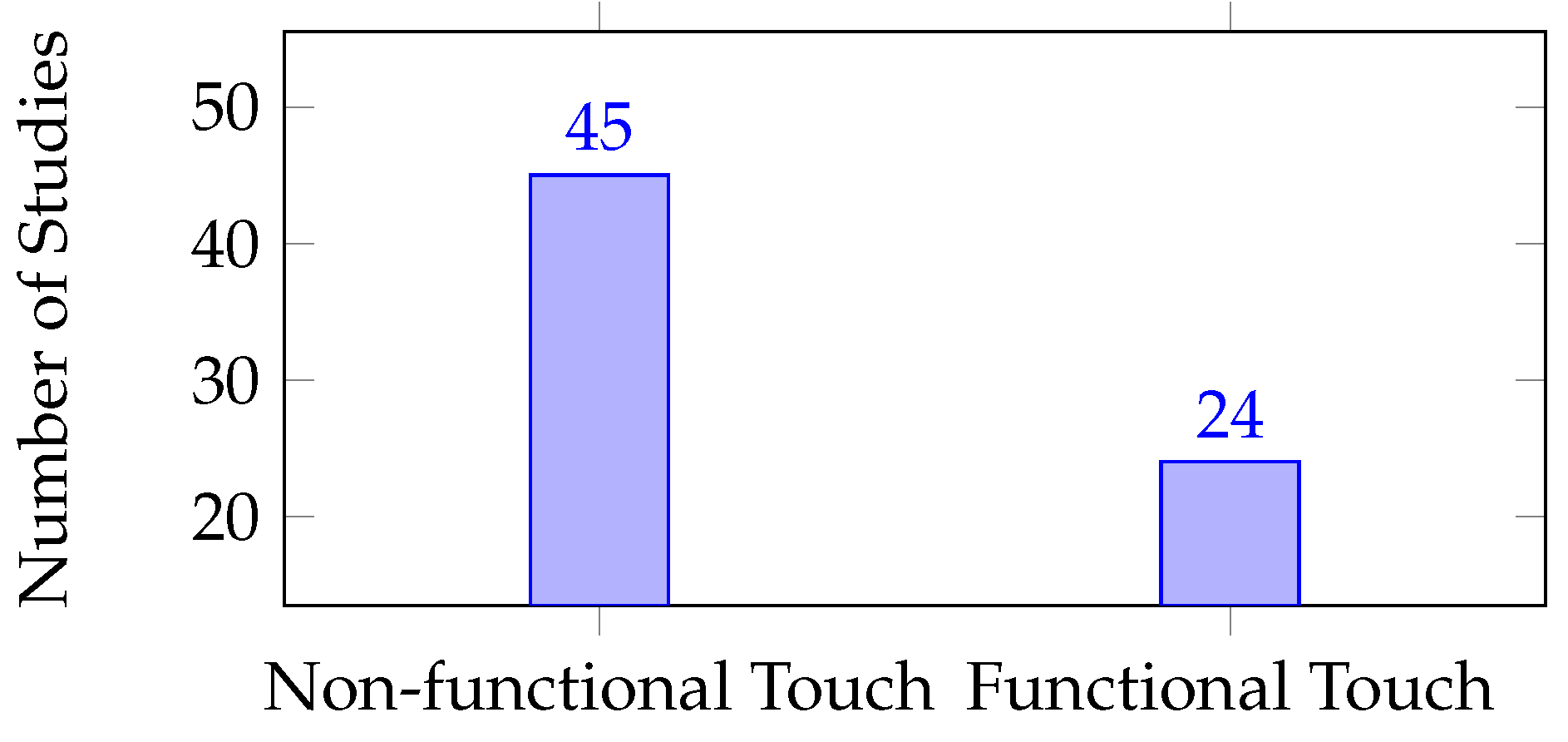

| Direct pHRI | Purpose | Non-Functional Touch | includes studies that involve physical touch between the robot and the human with the intention of communicating a psychological state, such as social touch [46], or for the purpose of exploration or curiosity, as in [47]. | [25,46,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74,75,76,77,78,79,80,81,82,83,84,85,86,87,88,89] |

| Functional Touch | Includes studies that involved physical contact between the robot and the human for a specific purpose, such as manipulation, assistance, or control, i.e. instrumental touch. | [17,36,49,83,90,91,92,93,94,95,96,97,98,99,100,101,102,103,104,105,106,107,108,109] | ||

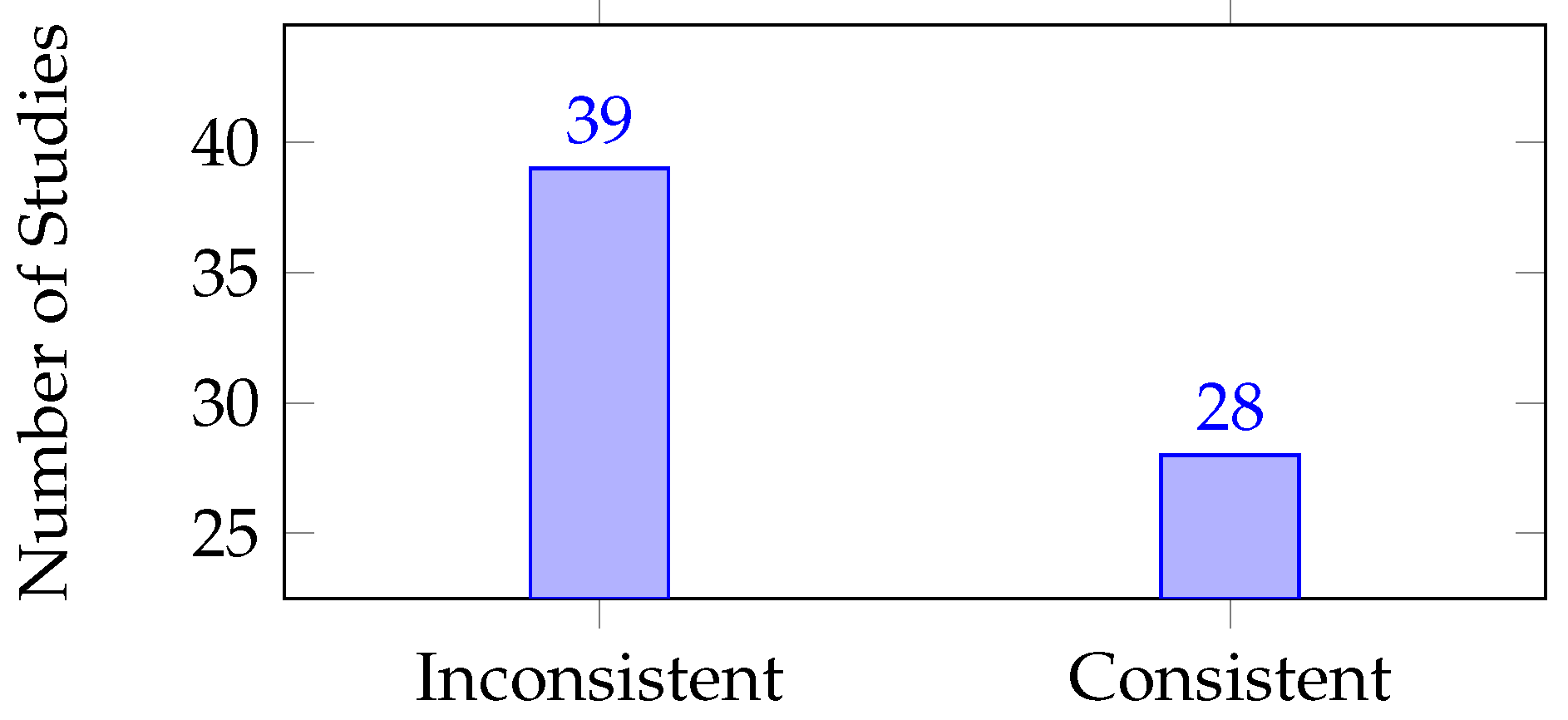

| Duration | Consistent Contact | Includes studies requiring continuous direct contact throughout the interaction. | [17,36,52,56,57,59,71,74,76,77,79,82,90,91,92,93,96,98,99,102,103,104,105,106,107,108,109,110] | |

| Inconsistent Contact | Includes studies where direct contact is not necessary throughout the entire interaction. | [25,46,47,48,49,50,51,53,54,58,60,61,62,63,64,65,66,67,68,69,70,72,73,75,78,80,81,83,84,85,86,87,88,89,94,95,97,100,101] | ||

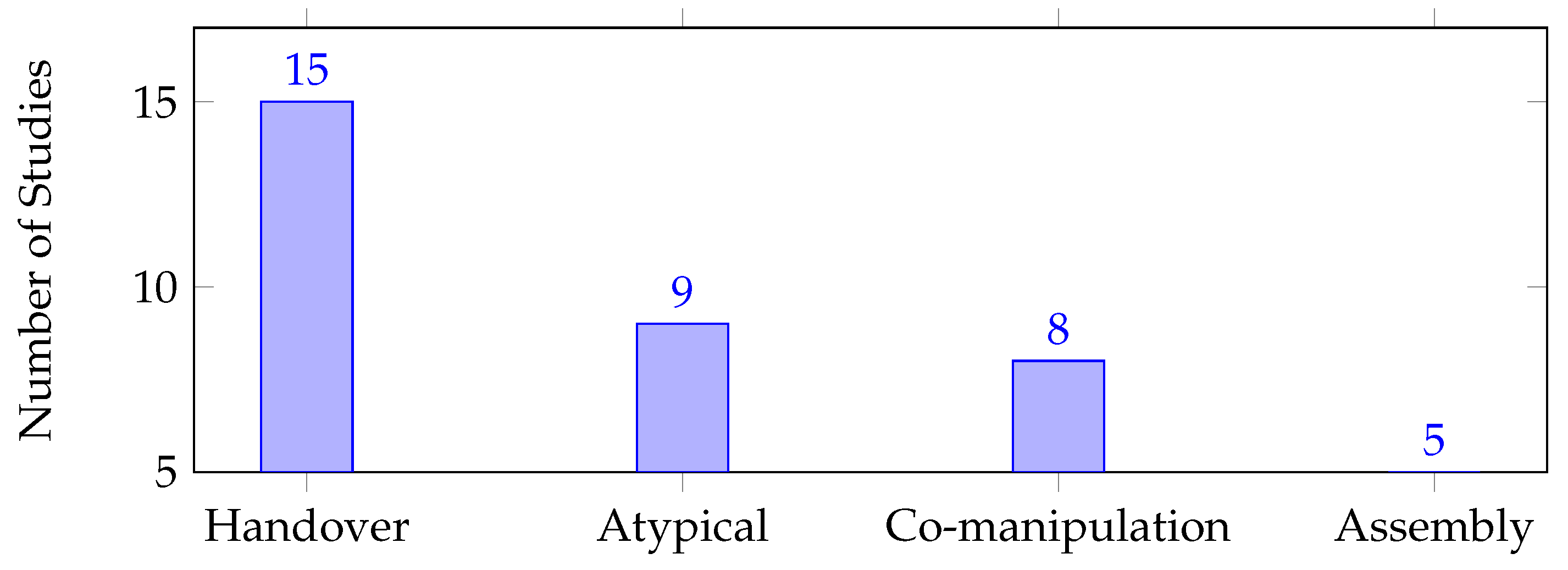

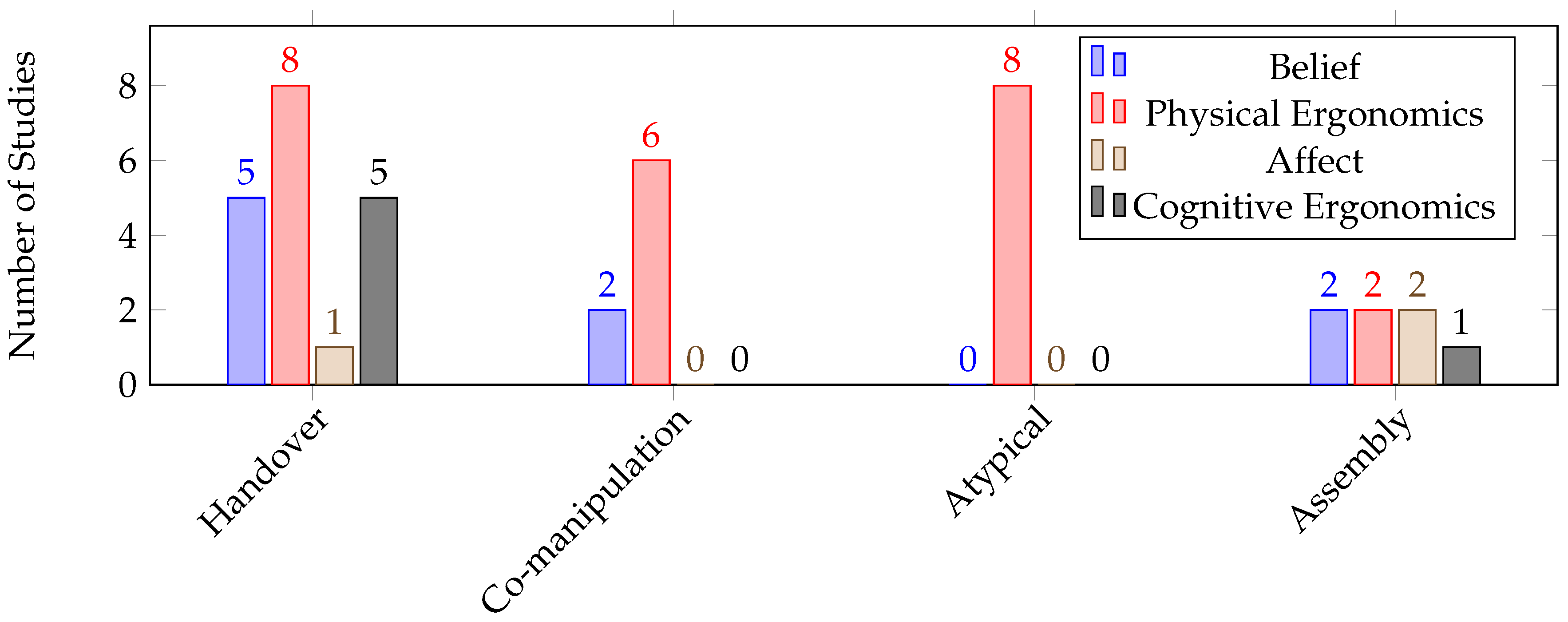

| Indirect pHRI | Assembly | Includes studies where one agent holds a part while the other agent assembles it with another part. | [109,111,112,113,114] | |

| Handover | Includes studies where one agent is handing over an object to the other agent. | [20,21,22,35,64,109,115,116,117,118,119,120,121,122,123] | ||

| Co-manipulation | Includes studies where both human and robot agents manipulate an object in the environment with the goal of changing its position or orientation. | [104,109,112,124,125,126,127,128] | ||

| Atypical | Includes studies that do not fall into any of the other categories of indirect pHRI, such as assistive holding/drilling, or dressing assistance. | [23,109,129,130,131,132,133,134,135] | ||

| | Functional Touch | Non-Functional Touch |
|---|---|---|
| Consistent Contact | [17,36,90,91,92,93,96,98,99,102,103,104,105,106,107,108,109] | [52,56,57,59,71,74,76,77,79,82] |
| Inconsistent Contact | [49,83,94,95,97,100,101] | [25,46,47,48,49,51,53,54,58,60,61,62,63,64,65,66,67,68,69,70,72,73,75,78,80,81,83,84,85,86,87,88,89] |
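The Purpose × Duration cross-tabulation above can be read as the intersection of the study lists given for each Purpose and Duration category in the classification table. The following is a minimal sketch under that assumption; the dictionary names and the `cross_tabulate` helper are hypothetical and only illustrate the set logic, and the study numbers are copied from the Direct pHRI rows of the classification table.

```python
from itertools import product

# Study IDs copied from the Direct pHRI "Purpose" rows (assumed to be complete).
purpose = {
    "Functional Touch": {17, 36, 49, 83, *range(90, 110)},
    "Non-Functional Touch": {25, *range(46, 90)},
}

# Study IDs copied from the Direct pHRI "Duration" rows.
duration = {
    "Consistent Contact": {
        17, 36, 52, 56, 57, 59, 71, 74, 76, 77, 79, 82, 90, 91, 92, 93, 96,
        98, 99, 102, 103, 104, 105, 106, 107, 108, 109, 110,
    },
    "Inconsistent Contact": {
        25, 46, 47, 48, 49, 50, 51, 53, 54, 58, 60, 61, 62, 63, 64, 65, 66,
        67, 68, 69, 70, 72, 73, 75, 78, 80, 81, 83, 84, 85, 86, 87, 88, 89,
        94, 95, 97, 100, 101,
    },
}


def cross_tabulate(rows, cols):
    """Hypothetical helper: intersect every row category with every column category."""
    return {(r, c): sorted(rows[r] & cols[c]) for r, c in product(rows, cols)}


table = cross_tabulate(duration, purpose)
for (dur, purp), studies in table.items():
    print(f"{dur} x {purp}: {studies}")
```

Comparing each computed cell with the printed cross-tabulation is one way to spot studies that appear in only one of the two classifications, or entries that may be worth double-checking.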

| Measurable Dimension | Description |
|---|---|
| Tactility | Indicates the perceived pleasantness when touching a robot. |
| Physical Comfort | Includes studies that have evaluated human posture, muscular effort, joint torque overloading, peri-personal space, comfortable handover, legibility, and physical safety. |
| Mechanical Transparency | “Quantifies the ability of a robot to follow the movements imposed by the operator without noting any resistant effort” [125]. It includes predictability of the robot’s motion in following the user’s physical instructions, naturalness and smoothness of the motion, sense of being in control, responsiveness to participants’ physical instructions, feeling of resistive force, and frustration. |
| Robot Perception | Indicates the user’s perception of the robot. It includes attitudes, impressions, opinions, preferences, favourability, likeability, willingness for another interaction, behaviour perception, politeness, anthropomorphism, animacy, vitality, perceived naturalness, agency, perceived intelligence, competence, perceived safety, emotional security, harmlessness, toughness, familiarity, friendship, companionship, friendliness, warmth, psychological comfort, helpfulness, reliable alliance, acceptance, ease of use, and perceived performance. |
| Perceived Intuition | Includes goal perception (whether the robot understands the goal of the task or not), robot intelligence, willingness to follow the robot’s suggestion, dependability, understanding of robot intention, and perceived robot helpfulness. |
| Conveying Emotions | Indicates humans’ perspective on how they should convey their emotions to robots through physical touch. |
| Receiving Emotions | Indicates humans’ perspective on how they expect to receive a robot’s emotions through physical touch. |
| Emotional State | Indicates recognition of a human’s emotional state during interaction without necessarily conveying their emotions using physical touch. |

| Human Factor Type | Measurable Dimension | Studies |
|---|---|---|
| Cognitive Ergonomics | Mental Workload | [17,22,25,35,36,70,90,92,97,106,108,114,117,118] |
| | Stress | [17,20,36,57,101,118] |
| Physical Ergonomics | Pain Sensitivity | [110] |
| | Tactility | [55,74,76] |
| | Physical Comfort | [20,21,22,23,90,104,109,112,115,120,121,122,127,128,129,130,131,132,133,134] |
| | Mechanical Transparency | [104,105,107,114,125,135] |
| Belief | Robot Perception | [17,25,35,36,46,49,50,51,52,53,54,55,57,58,59,62,65,66,68,69,71,72,74,77,80,81,83,84,85,86,87,88,91,94,95,96,98,100,103,116,123,135] |
| | Trust | [54,58,84,86,90,114,118,119,124,126] |
| | Perceived Intuition | [36,70,87,114] |
| | Enjoyability | [25,70] |
| | Anxiety | [17,36,59,88,91] |
| Affect | Emotional State | [36,50,51,53,61,63,64,65,67,70,71,77,83,91,93,102,111,113] |
| | Conveying Emotions | [46,48,53,73,75,78] |
| | Receiving Emotions | [56,60,79,82,89] |

| Human Factor Type | Measurable Dimension | Direct pHRI, Purpose: Functional Touch | Direct pHRI, Purpose: Non-Functional Touch | Direct pHRI, Duration: Consistent | Direct pHRI, Duration: Inconsistent | Indirect pHRI: Assembly | Indirect pHRI: Handover | Indirect pHRI: Co-manipulation | Indirect pHRI: Atypical |
|---|---|---|---|---|---|---|---|---|---|
| Cognitive Ergonomics | Mental Workload | [90]* [92]* [106]* [36]* [17] [97] | [25] [70] | [108] [36]* [17] [92]* [106]* [90]* | [97] [25] [70] | [114] | [35] [117] [22] [118]* | | |
| | Stress | [36]* [17] | [57] | [57] | [101] | | [20] [118]* | | |
| Physical Ergonomics | Pain Sensitivity | | | [110]* | | | | | |
| | Tactility | | [55] [76] [74] | [76] [74] | | | | | |
| | Physical Comfort | [90]* [109]* | | [90]* [109]* | | [112] [109]* | [115] [20] [120] [121] [21] [122]* [22] [109]* | [112] [104]* [127]* [128]* [109]* | [131] [129]* [23]* [132]* [130]* [133] [134]* [109]* |
| | Mechanical Transparency | [104] [105] [107] | | [104] [105] [107] | | [114] | | [125] | [125] [135] |
| Belief | Robot Perception | [36]* | [54] [52]* [46]* [53] [55] [57] [58] [62] [25] [65] [66] [68] [69] [72] [77] [80] [81]* [83] [84] [85] [86] [87] [88]* [51] [49] [50] [47] [59] [71] [74] | [90] [17] [93] [36]* [96] [52]* [56] [57] [99] [59] [71] [102] [76] [91] | [95] [49] [50] [51] [46]* [53]* [58] [62] [100] [25] [65] [66] [68] [69] [72] [80] [81]* [83] [84] [85] [86] [87] [88]* [47] [54] [70] | | [35] [116]* [123] | | [135] |
| | Trust | [90]* | [54] [58] [84] [86] | | [54] [58] [84] [86] | [114] | [119]* [118]* | [124] [126]* | |
| | Perceived Intuition | [36]* | [70] [87] | [36]* | [70] [87] | [114] | | | |
| | Enjoyability | | [25] [70] | | [25] [70] | | | | |
| | Anxiety | [36]* [17] | [88]* [59] | [91] [36]* [17] | [88]* [59] | | | | |
| Affect | Emotional State | [91] [36]* [83] [93] [102] | [50] [53]* [61] [63] [64] [65] [67]* [70] [83] [51] [77] | [36]* [91] [77] [17] | [50] [53]* [61] [63] [64] [65] [67]* [70] [83] [51] | [113]* [111] | [64] | | |
| | Conveying Emotions | | [48] [75] [46]* [53]* [73]* [78]* | | [48] [46]* [73]* [53]* [75] [78]* | | | | |
| | Receiving Emotions | | [60] [79] [82] [89] [56]* | [79] [82] | [60] [89] | | | | |

| HF | MD | Q | Machine Learning Model | PS | Mathematical Model | PD | |||

|---|---|---|---|---|---|---|---|---|---|

| PD | PS | PD | TP | PS | |||||

| Cognitive Ergonomics | Mental Workload | [114] [25] [70] [108] [97] [35] [117] [22] | [106]* [92]* | [36]* [17] | [118]* | [90]* | |||

| Stress | [57] [101] | [36]* [17] [20] | [118]* | ||||||

| Physical Ergonomics | Pain Sensitivity | [110]* | |||||||

| Tactility | [55] [76] [74] | ||||||||

| Physical Comfort | [115] [20] [120] [121] [21] | [23]* [129]* [109]* | [20] [131] | [132] [133] [104] [134] [127] [128][90]* [122] | [130]* | [22] [125]* | |||

| Mechanical Transparency | [114][104][105][107][135] | [112] | |||||||

| Belief | Robot Perception | [46]* [47] [53]* [55] [57] [58] [59] [62][100] [25] [65] [66] [68] [69] [71] [72] [74] [77] [80] [81] [83] [84] [85] [86] [87] [123] [88] [91] [36]* [17] [51] [96] [35][116]* [49] [50] [95] [94] [98]*[103] [99] [135] | [52]* [36]* | [36]* [54] [81] | |||||

| Trust | [54] [58] [114] [84] [86] [124] | [90]* | [90]* | [119]* [126]* | |||||

| Perceived Intuition | [114] [70] [87] [36]* | [36]* | [36]* | ||||||

| Enjoyability | [25] [70] | ||||||||

| Anxiety | [88] [91] [59] | [36]* [17] | |||||||

| Affect | Emotional State | [53]* [61] [63] [64] [65] [70] [83] [36]* [50] [111] [71] [51] [77] [74] | [91] | [113]* [102] [83] [36]* [93] [111] | [67]* | ||||

| Conveying Emotions | [75] [48] | [78]* | [46] [53]* [73]* | ||||||

| Receiving Emotions | [56]* | ||||||||

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).