Submitted: 14 April 2023

Posted: 17 April 2023

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Related Systems

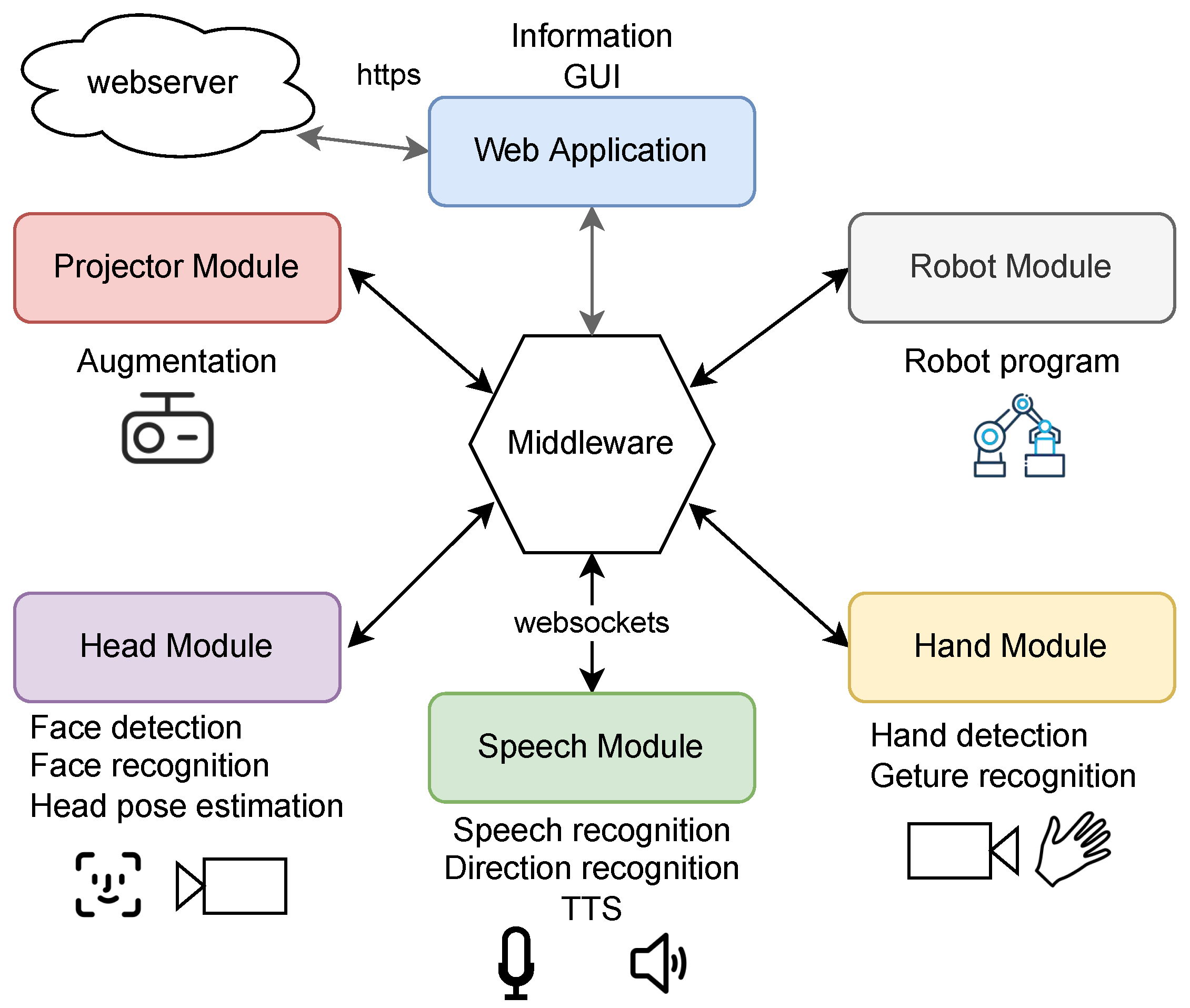

3. System Design

3.1. Use Case

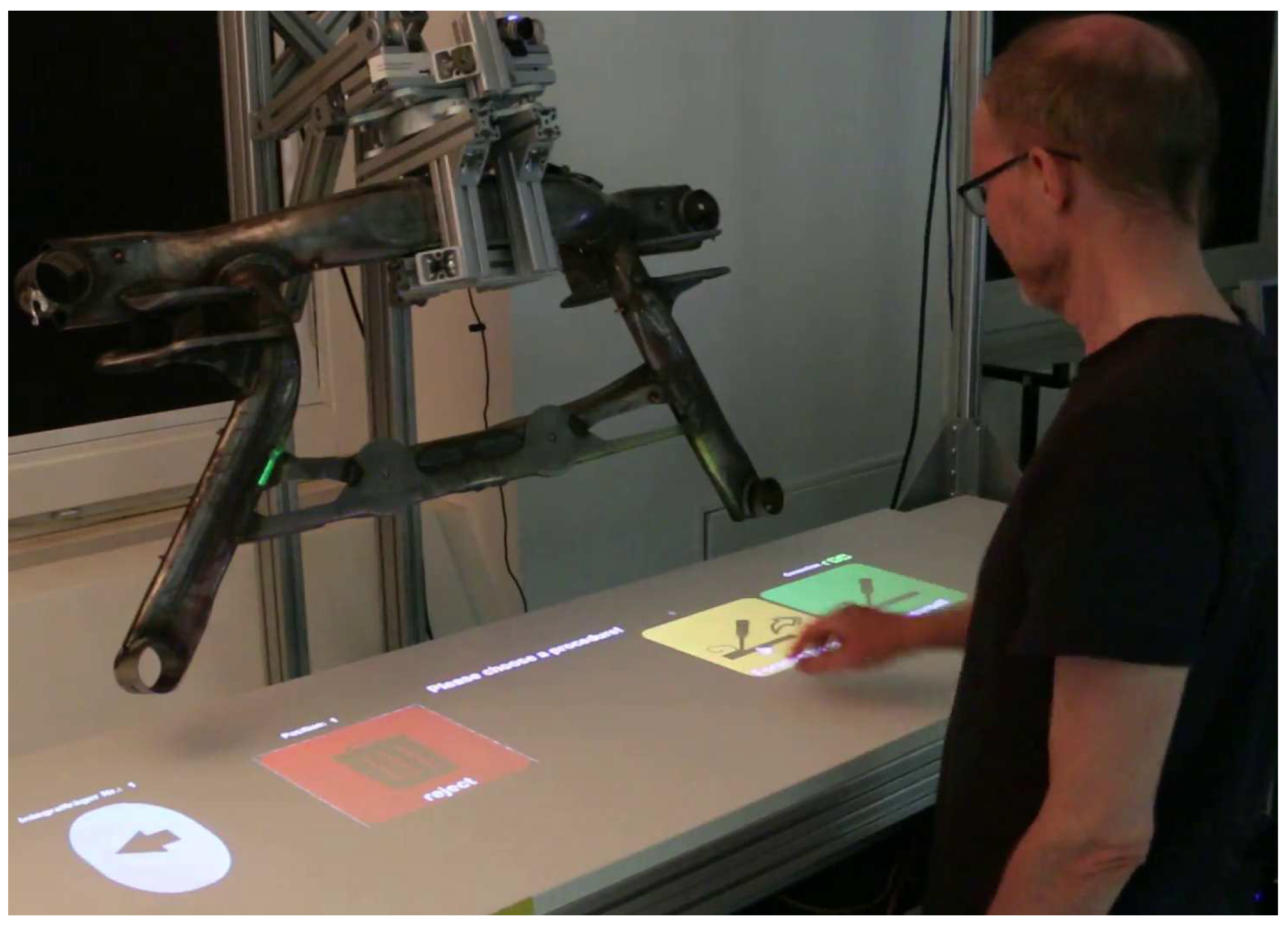

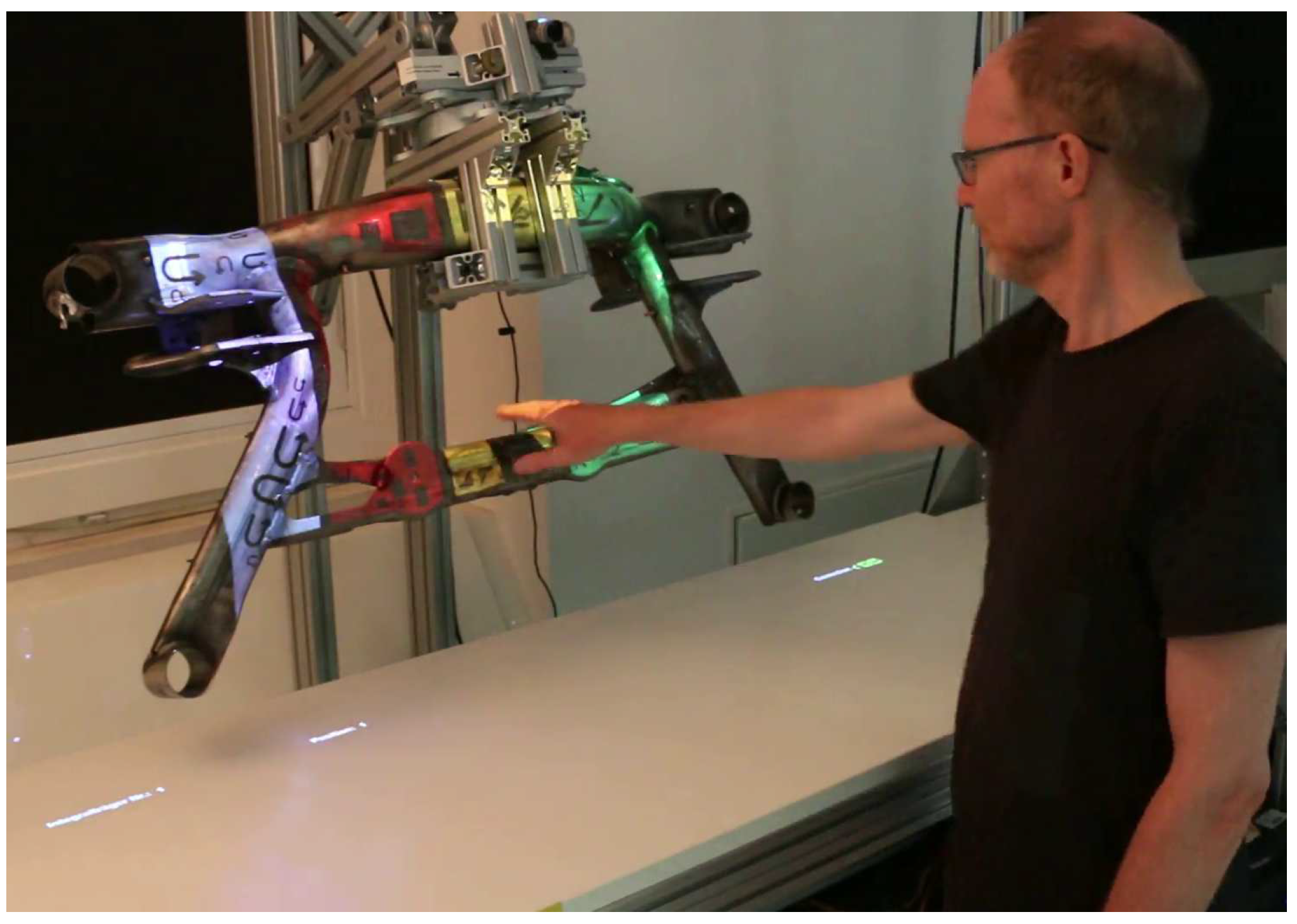

3.2. Workflow

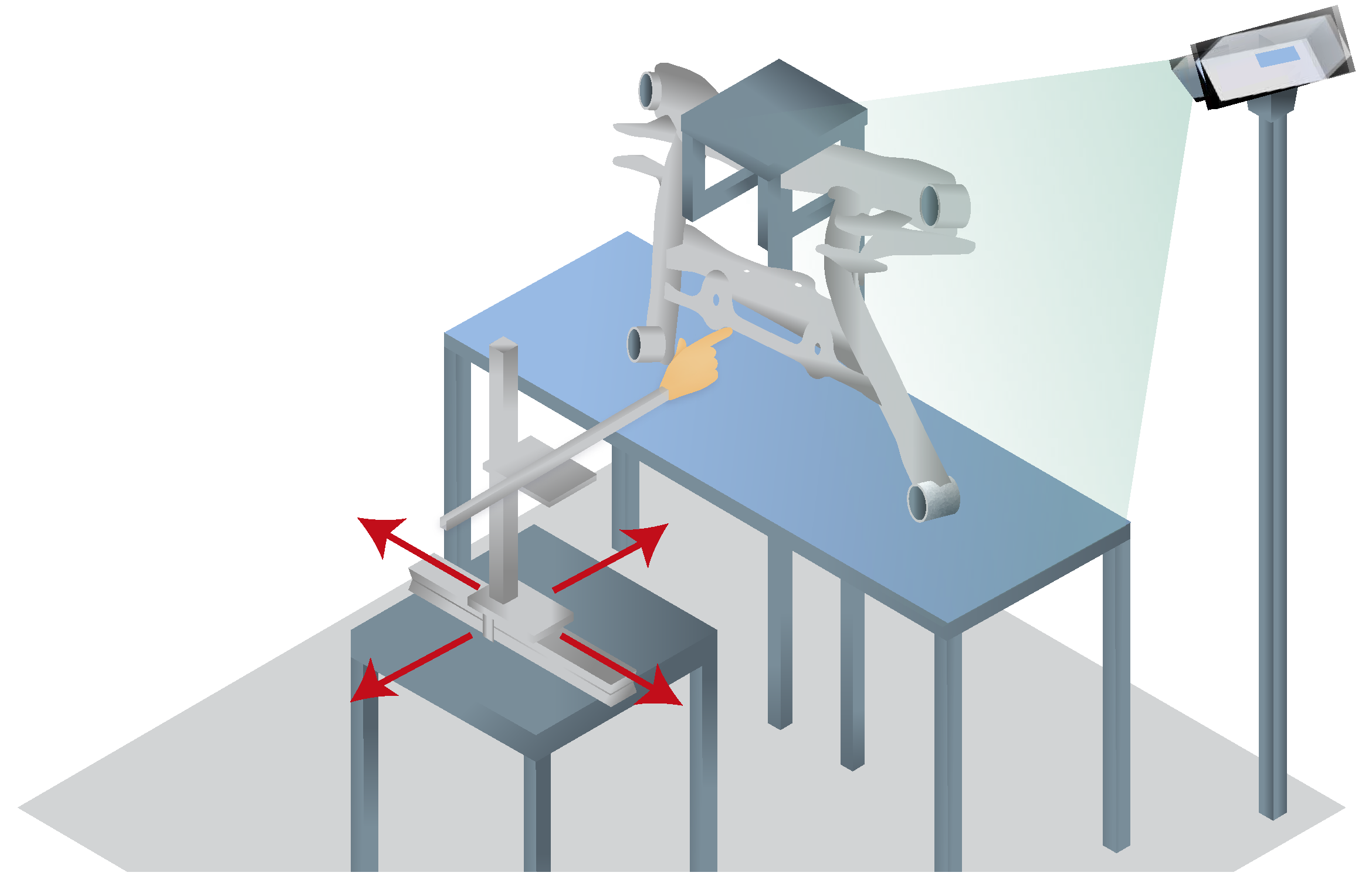

3.3. Setup

3.4. Middleware

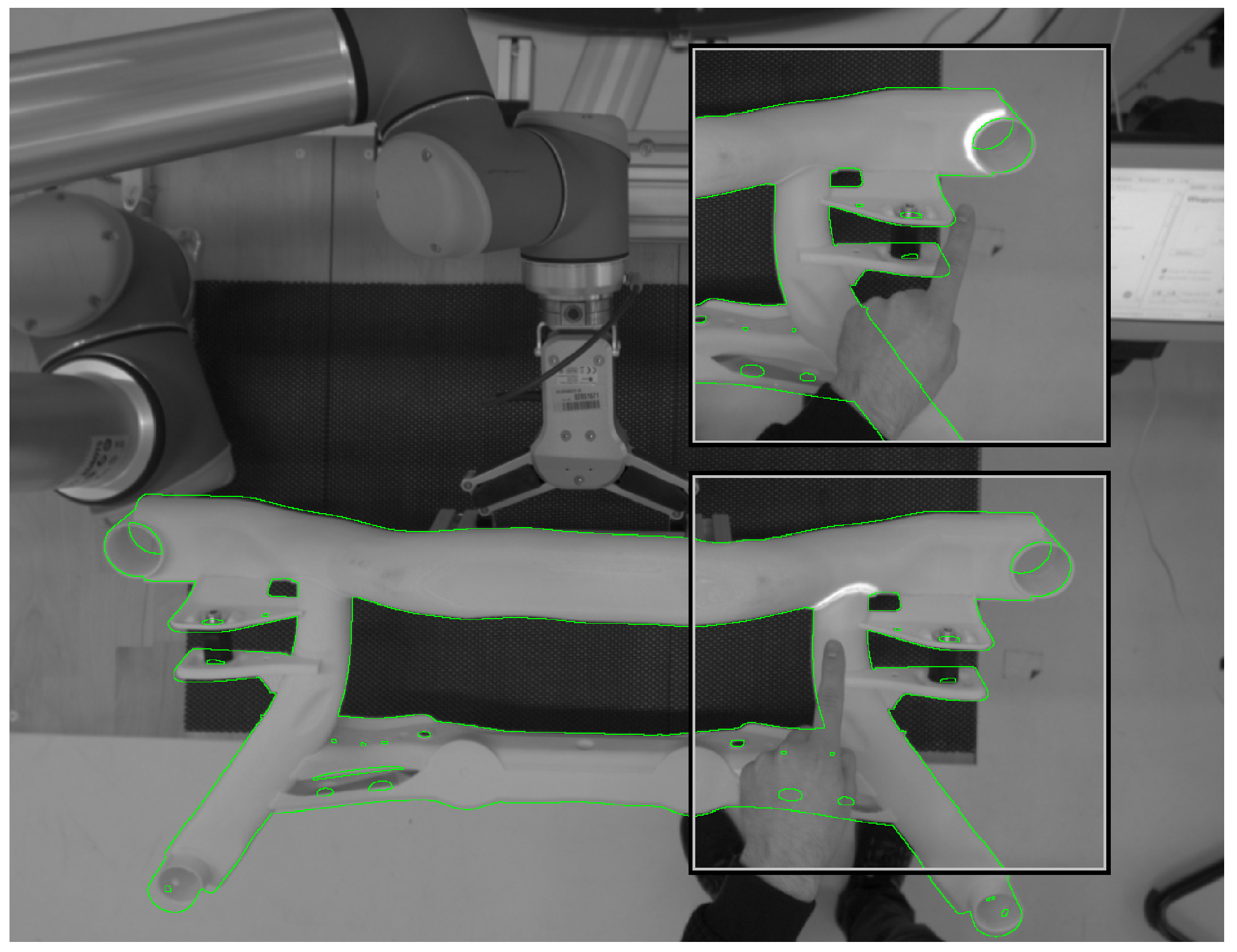

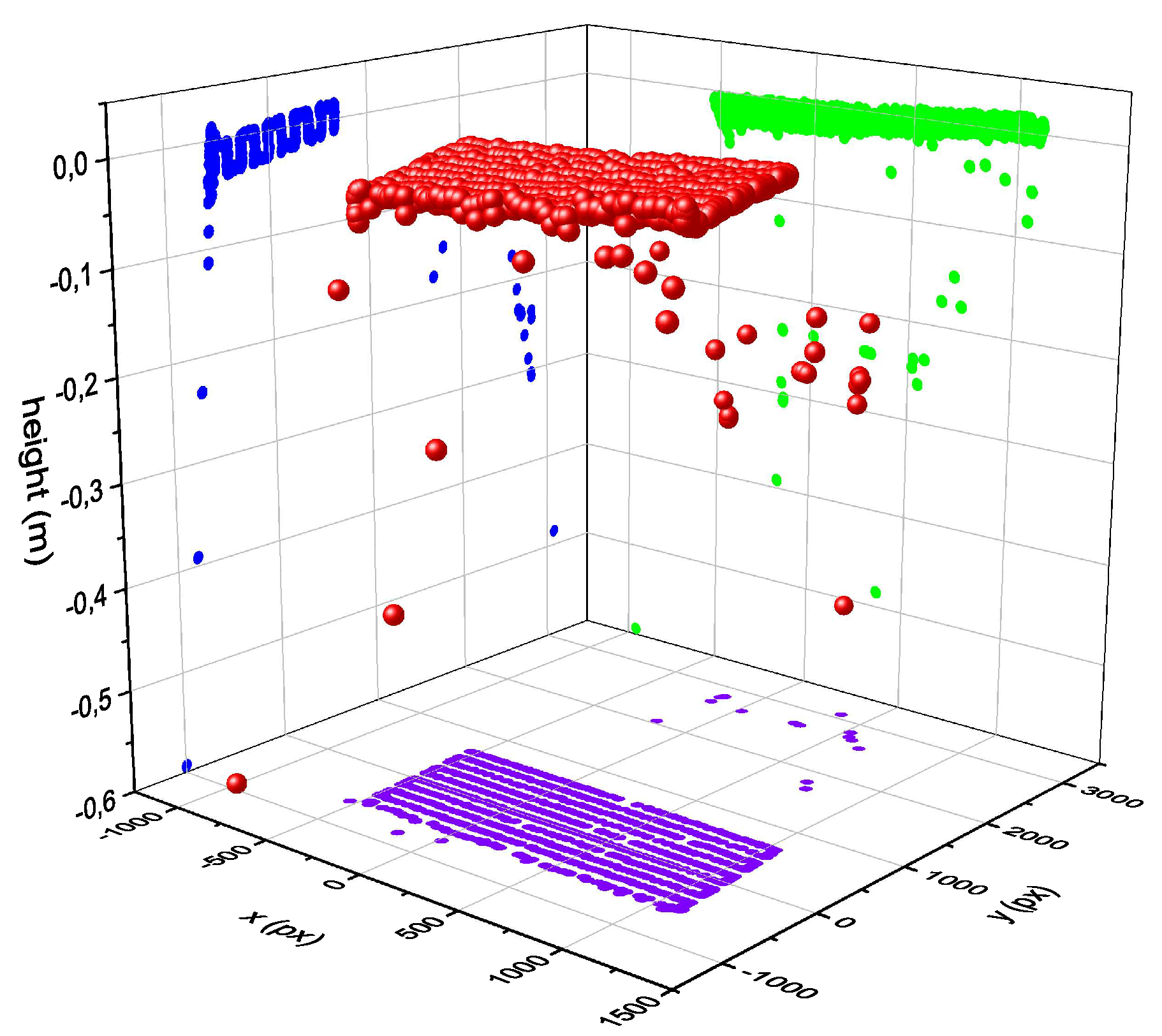

3.5. Hand Recognition Module

3.6. Head Orientation Module

3.7. Speech Modules

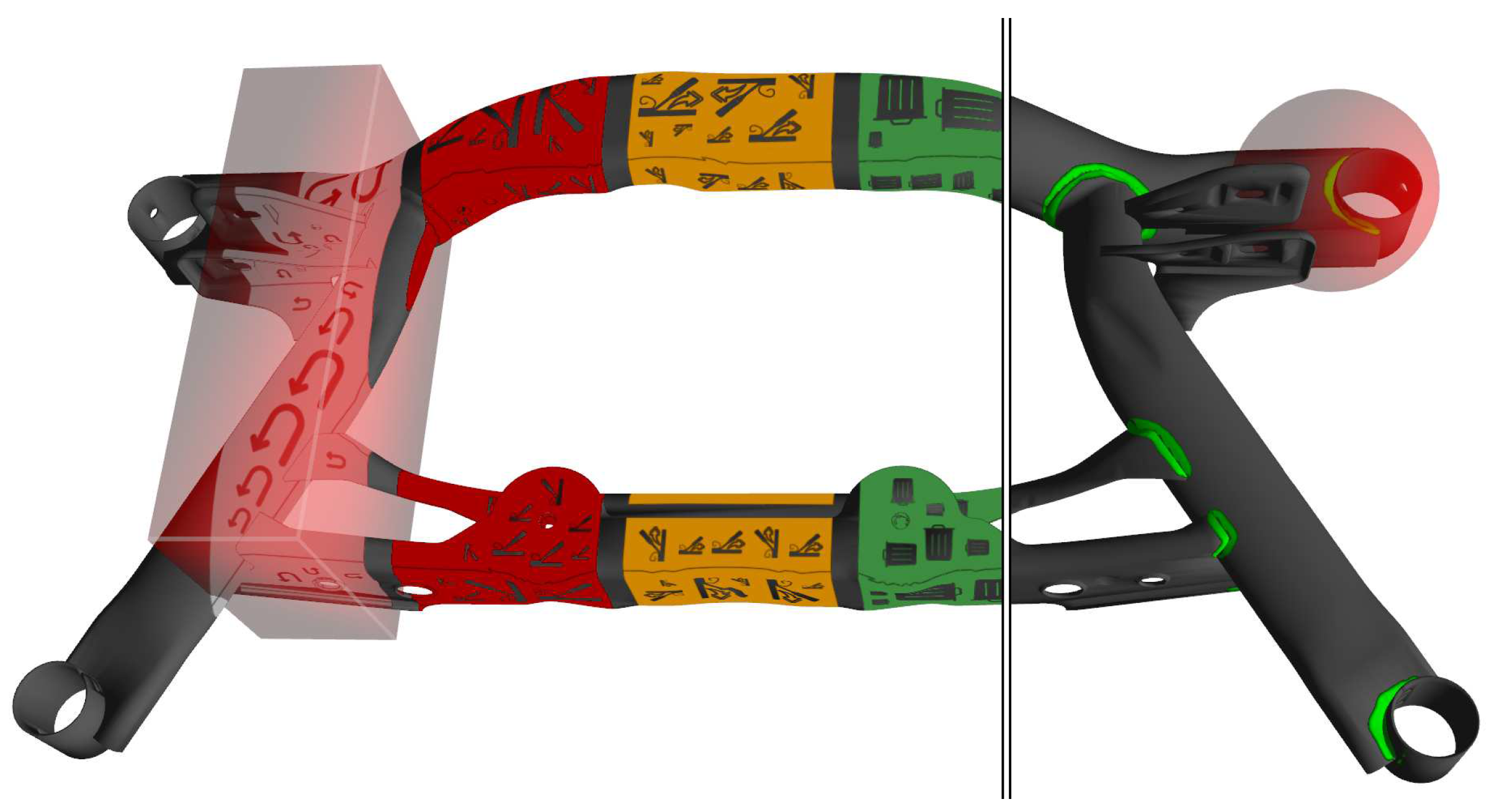

3.8. Projection and Registration Module

3.9. Robot Module

4. Technical Assessment of Recognition Performance

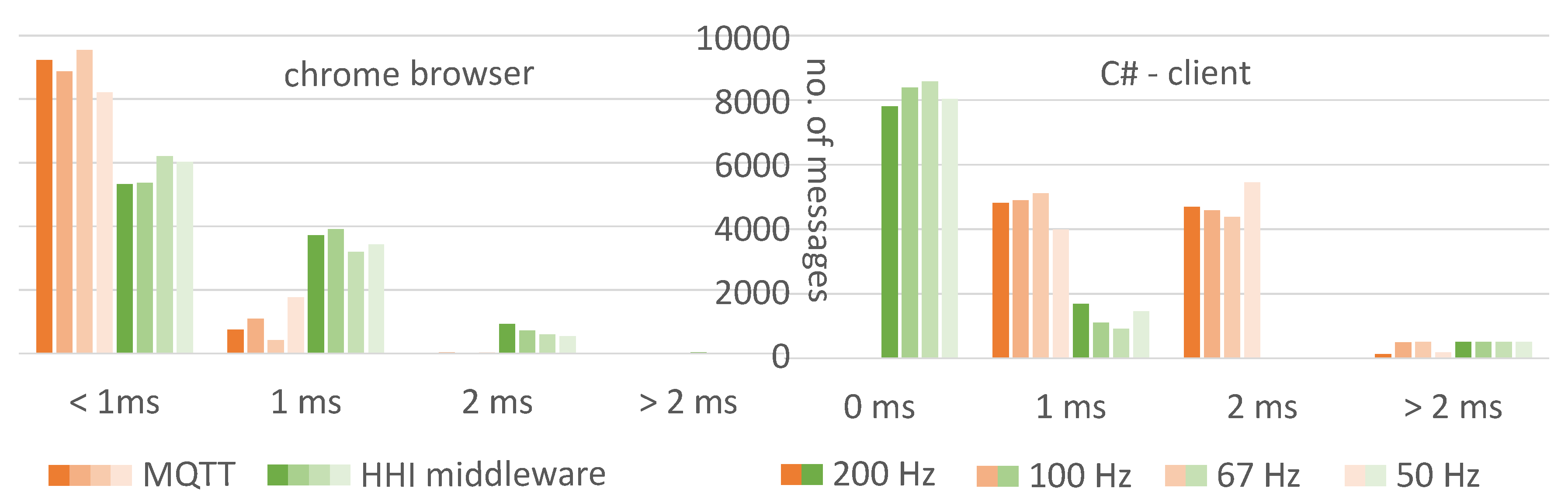

4.1. Middleware Performance

4.2. Speech Recognition

4.3. Hand Detection Accuracy

5. Uni- vs. Multimodal Interaction Evaluation

5.1. Study Design

5.2. Procedure

5.3. Participants

5.4. Hypotheses

6. Results

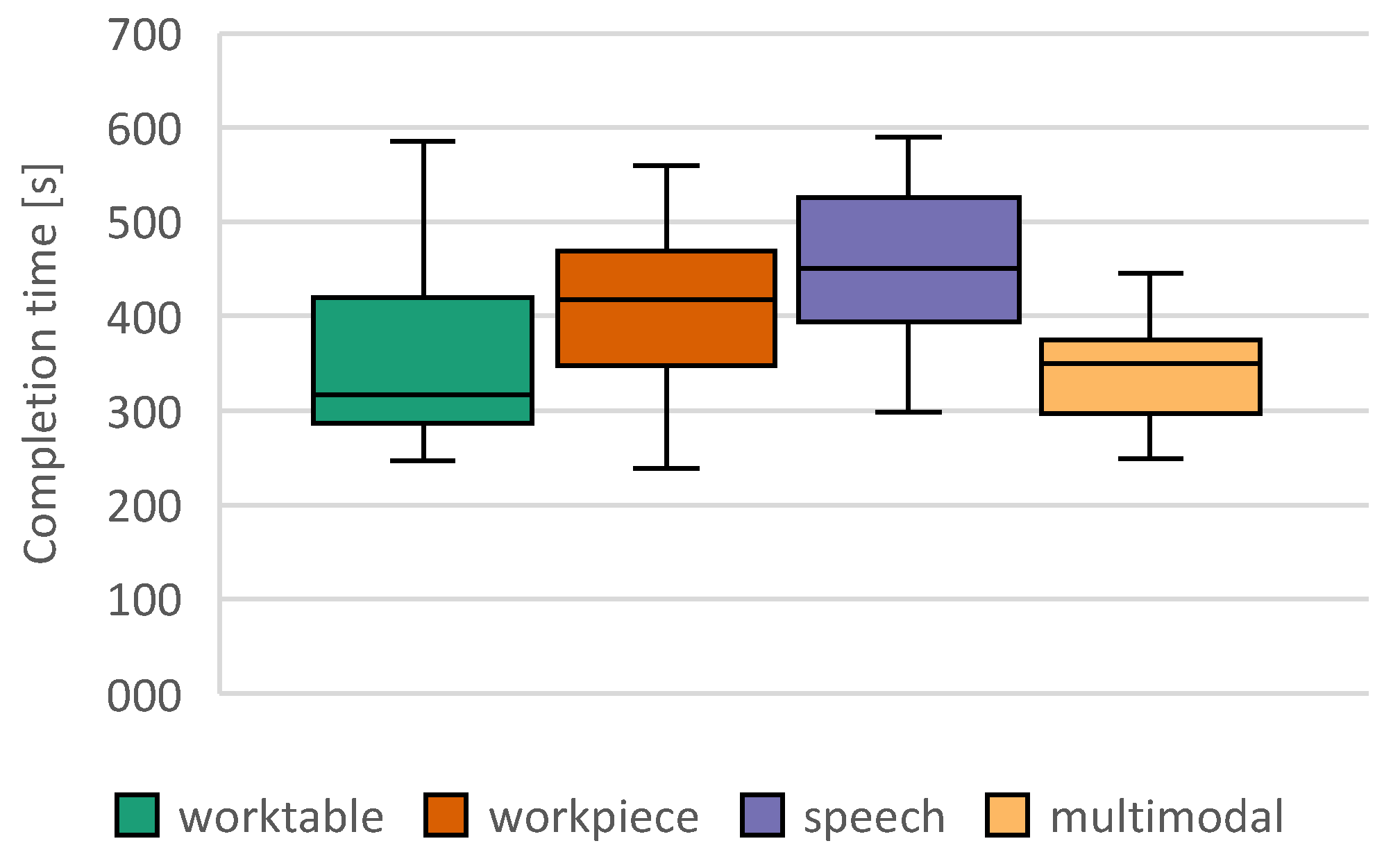

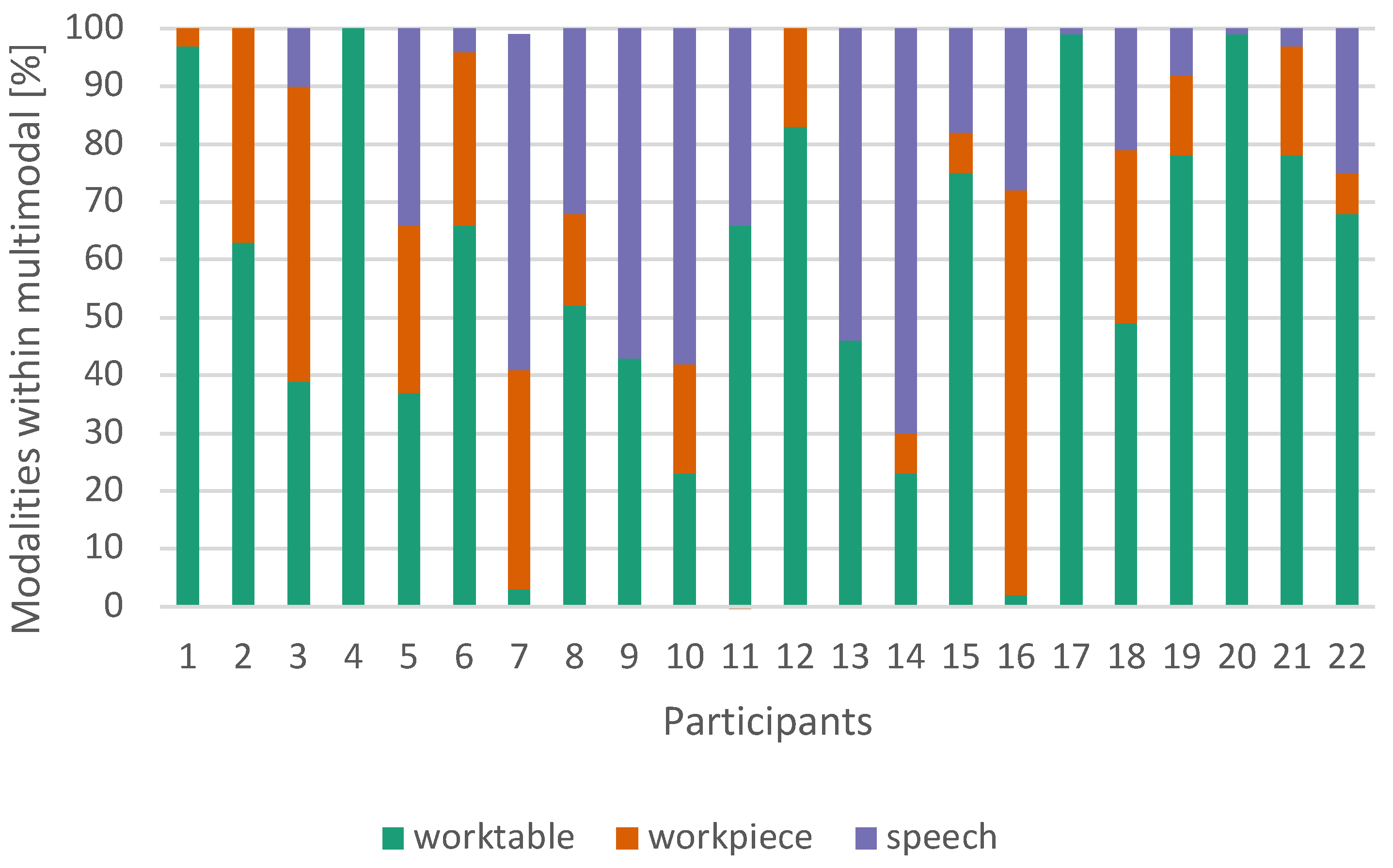

6.1. Objective Measurements

6.2. Subjective Measurements

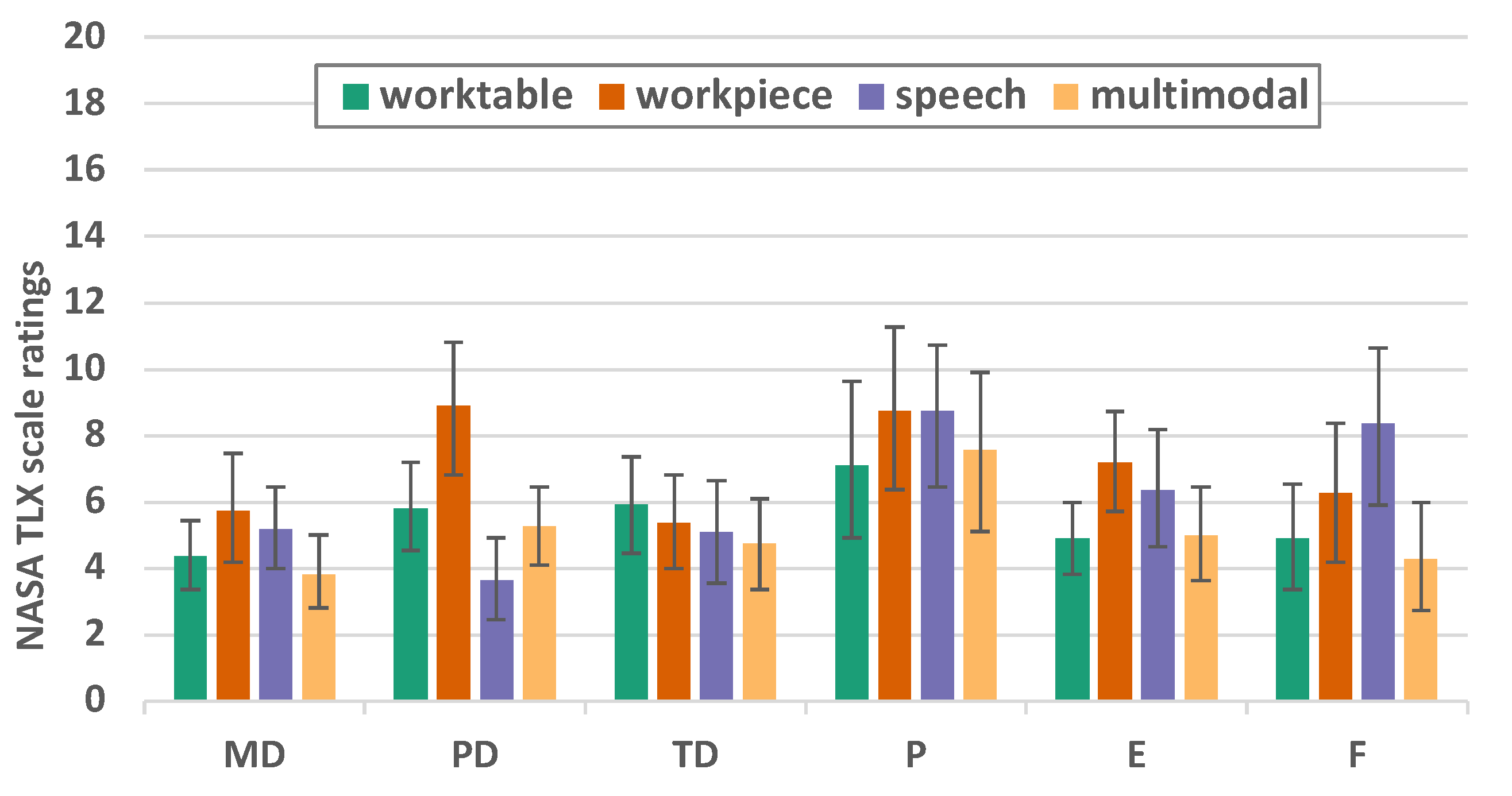

6.2.1. NASA TLX

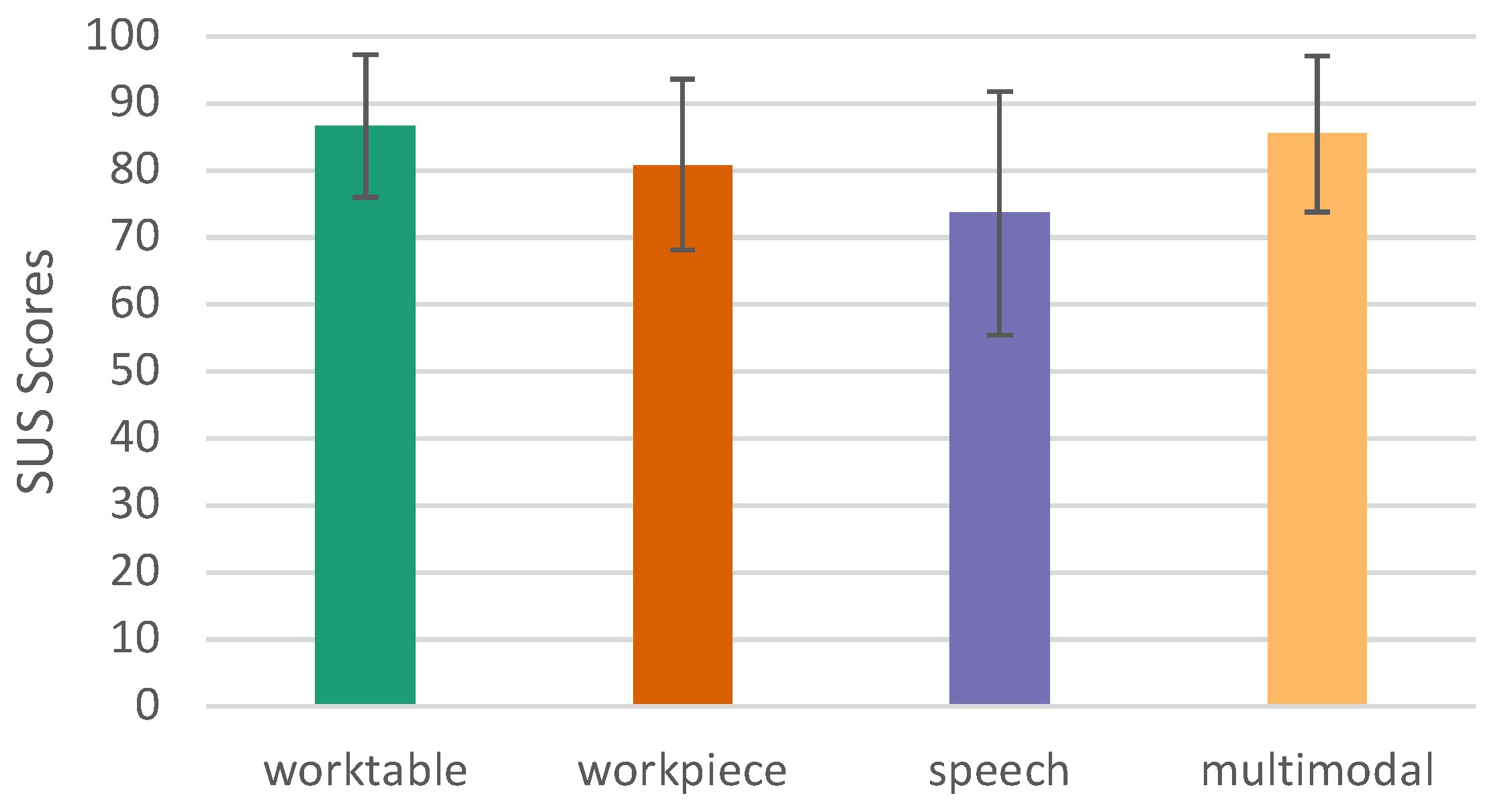

6.2.2. System Usability Scale (SUS)

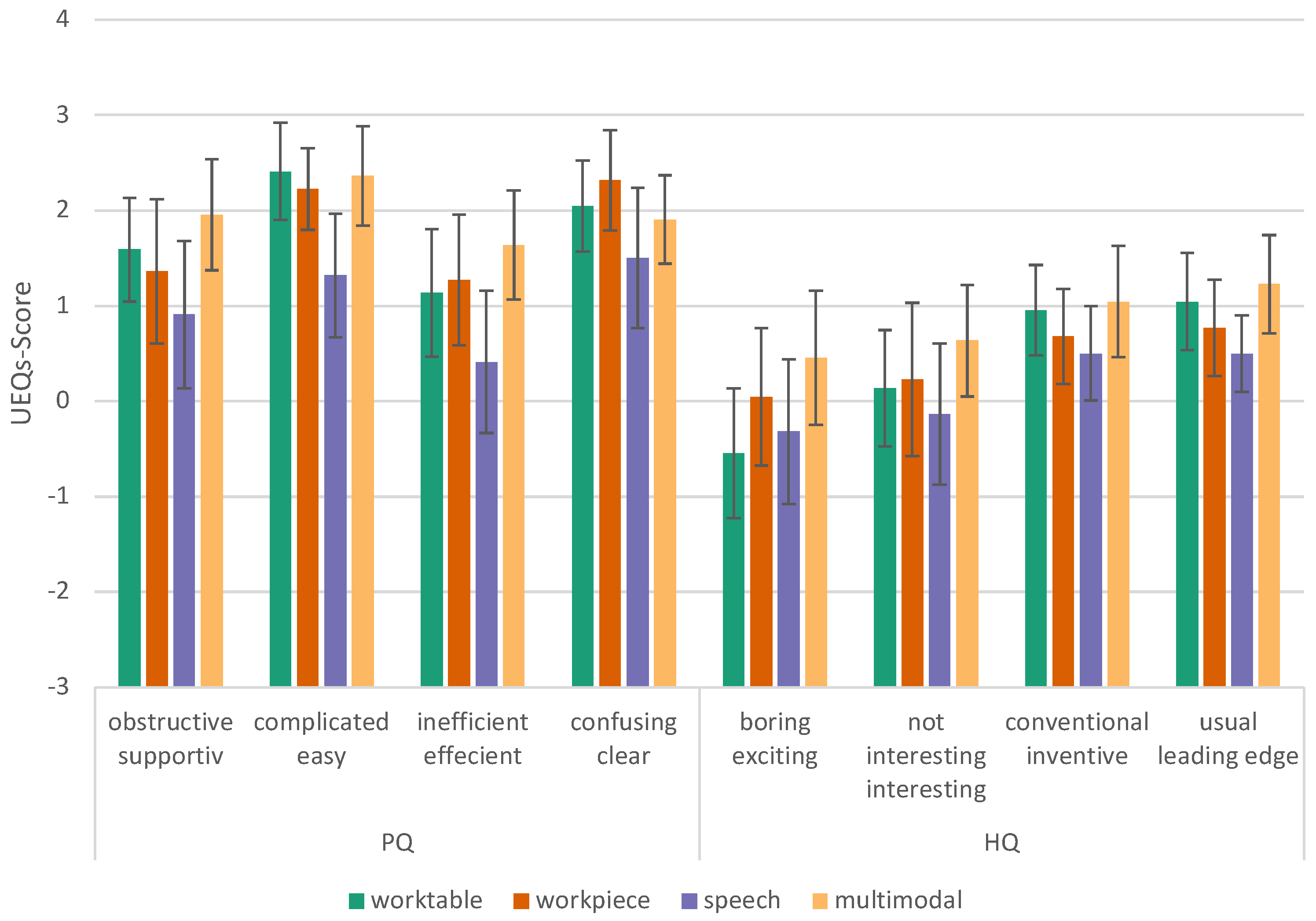

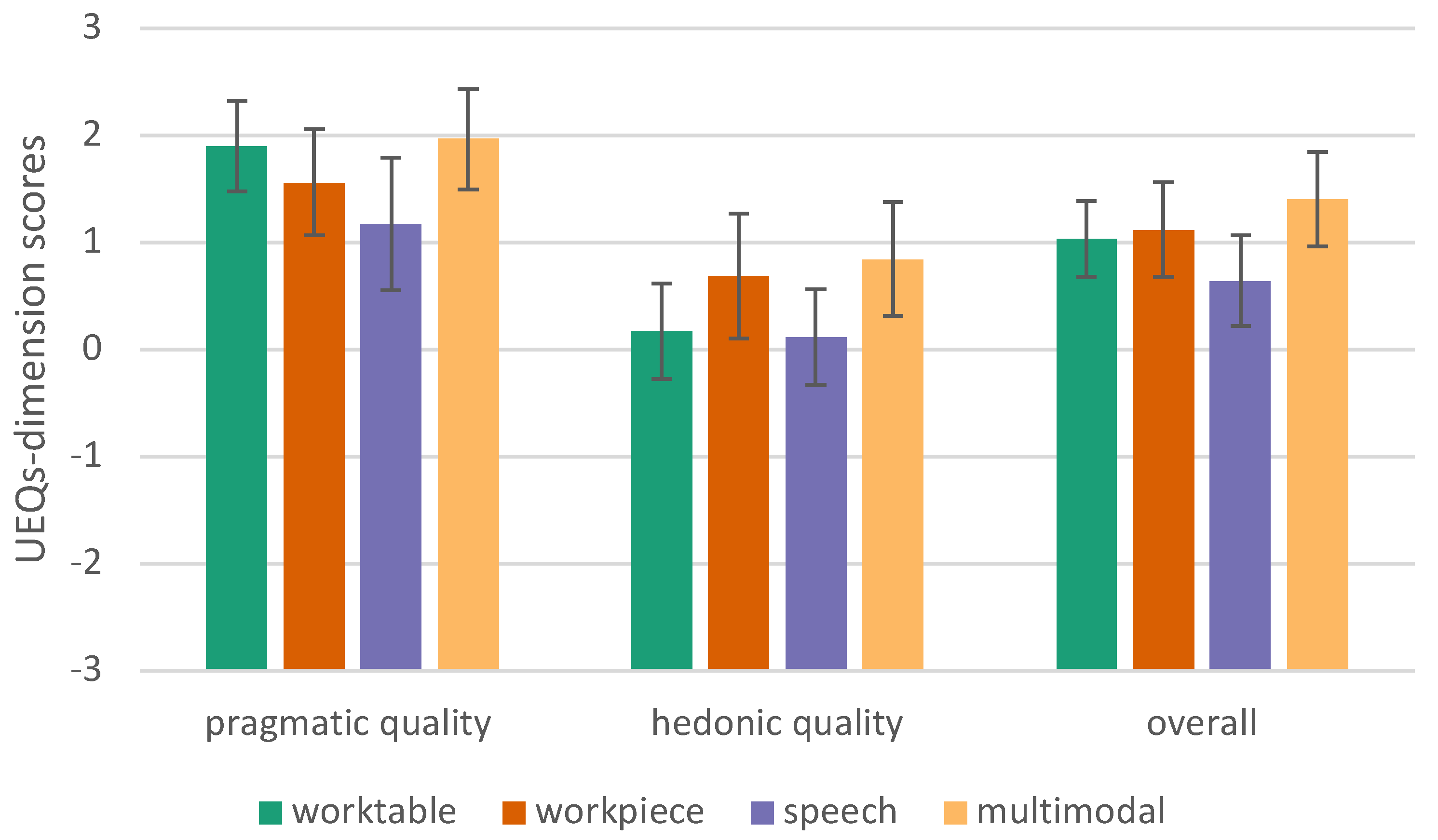

6.2.3. User Experience Questionnaire - Short (UEQ-S)

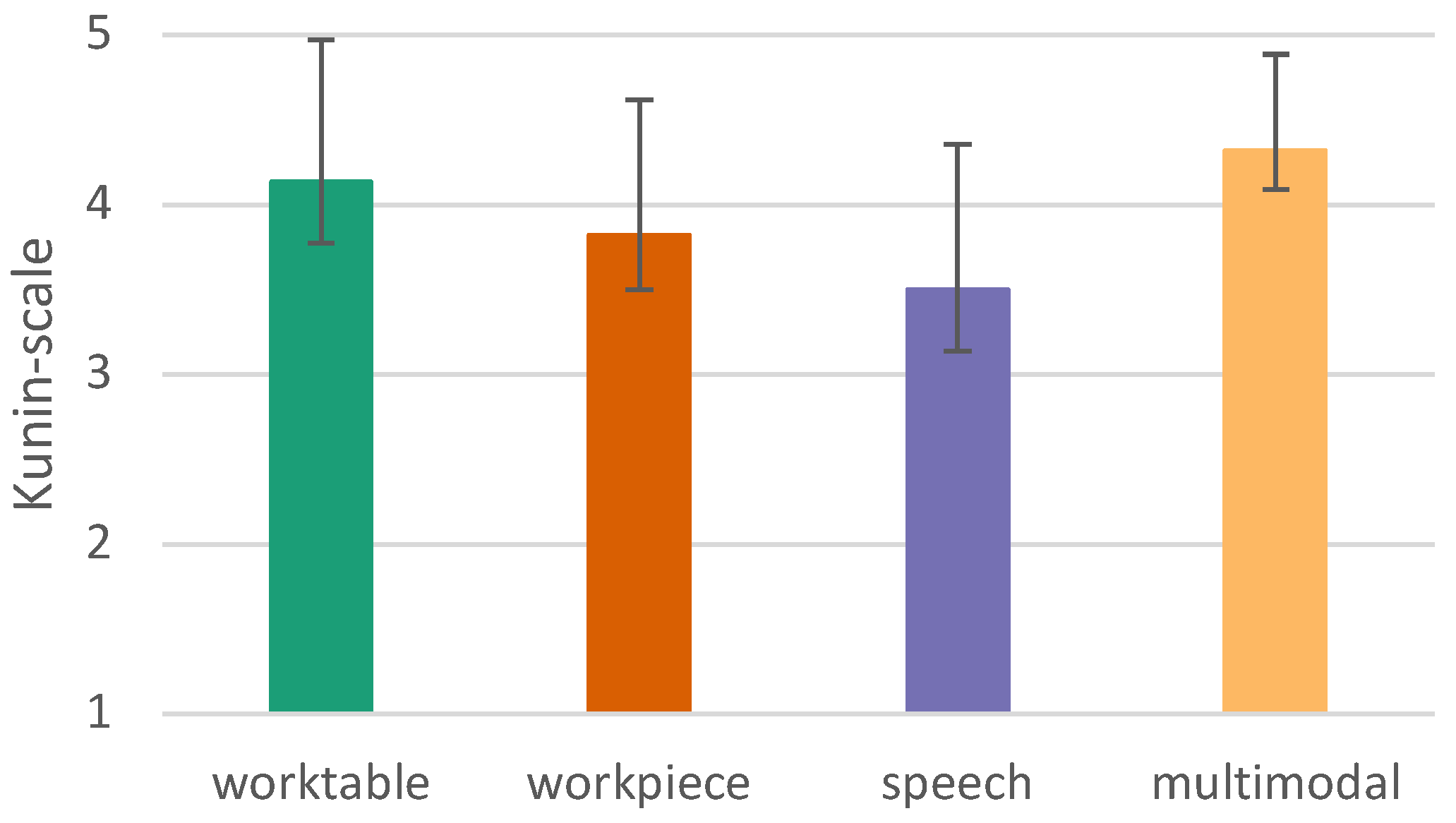

6.2.4. User Satisfaction

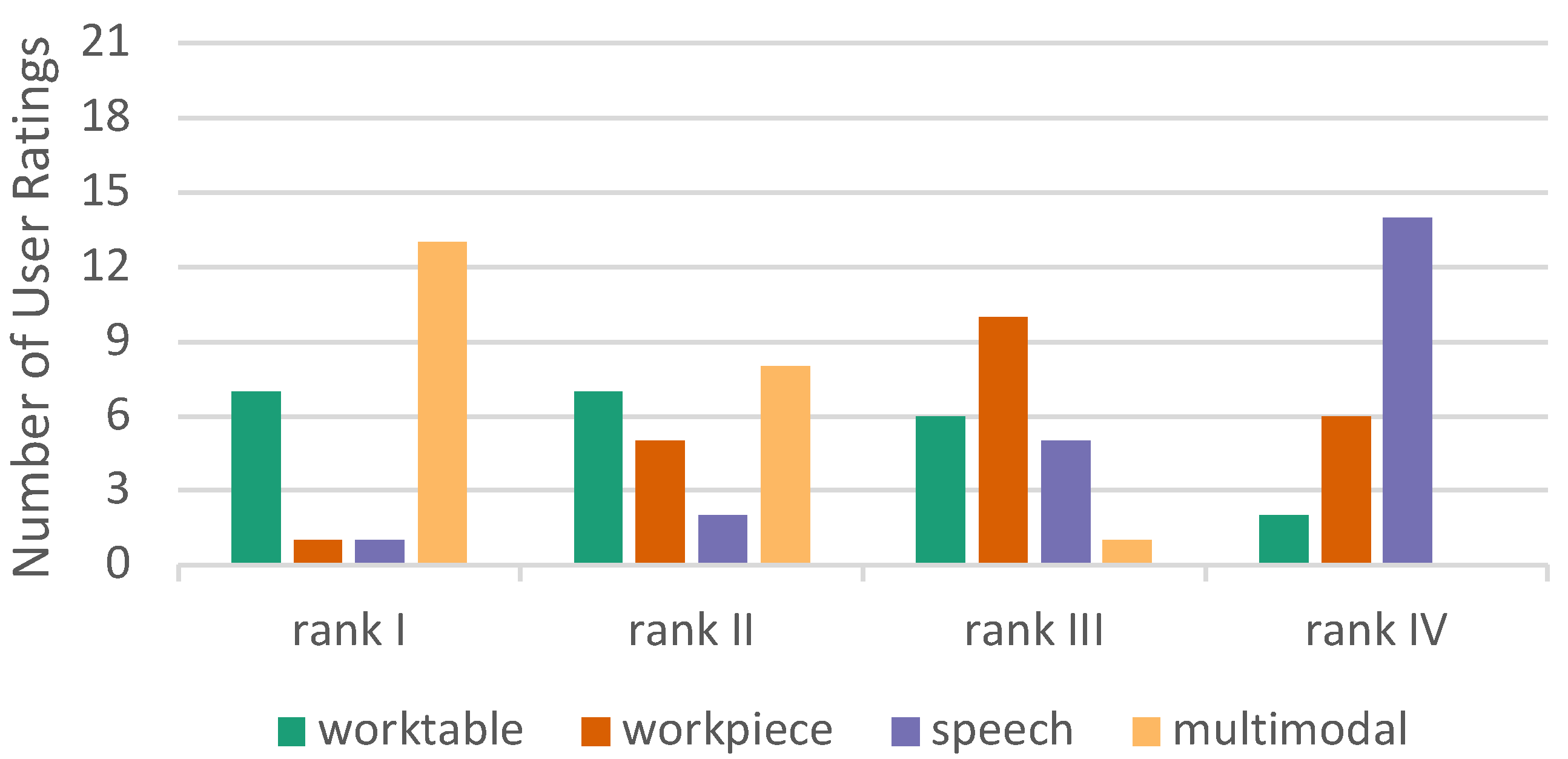

6.2.5. Overall Ranking

7. Discussion

7.1. Limitations

7.2. Future Work

8. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| CI | Confidence Interval |
| HCI | Human-Computer Interaction |
| HMI | Human-Machine Interaction |
| HQ | Hedonic Quality |
| HRC | Human-Robot Collaboration |
| HRI | Human-Robot Interaction |
| MDiff | Mean Difference |
| NASA TLX | NASA Task Load Index |
| PQ | Pragmatic Quality |
| SUS | System Usability Scale |
| UEQ-S | User Experience Questionnaire - Short |


| Measure | (I) group | (J) group | MDiff (I-J) | SE | p | 95%-CI Lower | 95%-CI Upper |
|---|---|---|---|---|---|---|---|
| PD | worktable | workpiece | -3.091 | .909 | .016 | -5.738 | -.444 |
| PD | speech | worktable | -2.182 | .683 | .026 | -4.170 | -.194 |
| PD | speech | workpiece | -5.273 | .917 | <.001 | -7.943 | -2.603 |
| PD | speech | multimodal | -1.636 | .468 | .013 | -2.999 | -.274 |
| PD | multimodal | worktable | -.545 | .478 | 1.000 | -1.937 | .846 |
| PD | multimodal | workpiece | -3.636 | .889 | .003 | -6.225 | -1.048 |
| E | worktable | workpiece | -2.273 | .724 | .030 | -4.382 | -.164 |
| E | worktable | speech | -1.455 | 1.029 | 1.000 | -4.452 | 1.543 |
| E | worktable | multimodal | -.091 | .664 | 1.000 | -2.025 | 1.843 |
| E | speech | workpiece | -.818 | .918 | 1.000 | -3.491 | 1.855 |
| E | multimodal | workpiece | -2.182 | .755 | .053 | -4.380 | .016 |
| E | multimodal | speech | -1.364 | .725 | .444 | -3.476 | .749 |
| F | worktable | workpiece | -1.364 | .794 | .603 | -3.675 | .948 |
| F | worktable | speech | -3.455 | 1.193 | .052 | -6.928 | .019 |
| F | workpiece | speech | -2.091 | 1.143 | .490 | -5.420 | 1.238 |
| F | multimodal | worktable | -.636 | .533 | 1.000 | -2.188 | .915 |
| F | multimodal | workpiece | -2.000 | .811 | .134 | -4.362 | .362 |
| F | multimodal | speech | -4.091 | 1.057 | .005 | -7.168 | -1.014 |

| (I) group | (J) group | MDiff (I-J) | SE | p | 95%-CI Lower | 95%-CI Upper |
|---|---|---|---|---|---|---|
| worktable | workpiece | 5.795 | 2.363 | .138 | -1.086 | 12.677 |
| worktable | speech | 12.955 | 3.898 | .019 | 1.603 | 24.306 |
| worktable | multimodal | 1.136 | 1.930 | 1.000 | -4.485 | 6.758 |
| workpiece | speech | 7.159 | 3.352 | .268 | -2.602 | 16.921 |
| workpiece | multimodal | -4.659 | 2.363 | .371 | -11.539 | 2.221 |
| speech | multimodal | -11.818 | 2.812 | .002 | -20.006 | -3.630 |
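Each row of the pairwise-comparison tables reports a mean difference MDiff (I-J), its standard error SE, a p value, and the lower and upper bounds of a 95% confidence interval. The short Python sketch below takes the rows of the worktable/workpiece/speech/multimodal table immediately above and checks three properties a reader can use to sanity-check such a table: each interval is centred on MDiff (up to three-decimal rounding), an interval excludes zero exactly when p < .05, and the half-width divided by SE yields nearly the same multiplier in every row. The last two properties are what one would expect if all intervals and p values were adjusted for multiple comparisons with one common critical value; the table does not name the correction, so that reading is an assumption. The helper names `check_row` and `check_table` are hypothetical and exist only for this illustration.

```python
"""Sanity checks for a pairwise-comparison table (MDiff, SE, p, 95% CI)."""

# Rows copied from the worktable/workpiece/speech/multimodal table:
# (I group, J group, MDiff(I-J), SE, p, CI lower, CI upper)
ROWS = [
    ("worktable", "workpiece", 5.795, 2.363, 0.138, -1.086, 12.677),
    ("worktable", "speech", 12.955, 3.898, 0.019, 1.603, 24.306),
    ("worktable", "multimodal", 1.136, 1.930, 1.000, -4.485, 6.758),
    ("workpiece", "speech", 7.159, 3.352, 0.268, -2.602, 16.921),
    ("workpiece", "multimodal", -4.659, 2.363, 0.371, -11.539, 2.221),
    ("speech", "multimodal", -11.818, 2.812, 0.002, -20.006, -3.630),
]

ALPHA = 0.05          # significance level used for the zero-exclusion check
ROUNDING_TOL = 0.001  # table values are reported to three decimals


def check_row(group_i, group_j, mdiff, se, p, lower, upper):
    """Check one comparison row (hypothetical helper).

    Returns (centred, zero_rule_ok, multiplier):
    - centred: the CI midpoint equals MDiff up to rounding
    - zero_rule_ok: the CI excludes 0 exactly when p < ALPHA
      (assumption: p and CI were adjusted in the same way)
    - multiplier: CI half-width divided by SE
    """
    midpoint = (lower + upper) / 2
    centred = abs(midpoint - mdiff) <= ROUNDING_TOL
    excludes_zero = lower > 0 or upper < 0
    zero_rule_ok = excludes_zero == (p < ALPHA)
    multiplier = (upper - lower) / 2 / se
    return centred, zero_rule_ok, multiplier


def check_table(rows):
    """Summarise the row checks for a whole table (hypothetical helper)."""
    results = [check_row(*row) for row in rows]
    multipliers = [m for _, _, m in results]
    return {
        "all_centred": all(c for c, _, _ in results),
        "zero_rule_holds": all(z for _, z, _ in results),
        "multiplier_range": (min(multipliers), max(multipliers)),
    }


if __name__ == "__main__":
    print(check_table(ROWS))
```

The same functions can be applied to the other two tables after dropping the leading measure column from each row; a narrow `multiplier_range` is a hint, not proof, that a single adjusted critical value was used throughout.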

| Measure | (I) group | (J) group | MDiff (I-J) | SE | p | 95%-CI Lower | 95%-CI Upper |
|---|---|---|---|---|---|---|---|
| PQ | multimodal | speech | .795 | .212 | .007 | .177 | 1.414 |
| HQ | multimodal | workpiece | .670 | .200 | .018 | .087 | 1.254 |
| HQ | multimodal | speech | .727 | .243 | .041 | .02 | 1.434 |
| OA | multimodal | speech | .761 | .170 | .001 | .268 | 1.255 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).