Submitted: 15 February 2023

Posted: 16 February 2023


Abstract

Keywords:

1. Introduction

2. Decompose Arbitrary Density by GMM

- We observe a dataset X, which follows an unknown distribution with density ;

- Assume there is a GMM that matches the distribution of X: ;

- The density of the GMM: ;

- As in the general definition of a GMM, the mixture weights are non-negative and sum to one.
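The general GMM density referred to in the list above is a weighted sum of normal densities. The following is a minimal sketch of that standard definition for the one-dimensional case, not the authors' code. The function names `normal_pdf` and `gmm_density` and the argument names are illustrative assumptions, since the paper's own symbols did not survive in this text.

```python
import math


def normal_pdf(x, mean, sigma):
    """Density of a univariate normal distribution N(mean, sigma^2) at x."""
    z = (x - mean) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def gmm_density(x, weights, means, sigmas):
    """Density of a 1-D Gaussian mixture at x (hypothetical helper).

    The weights must satisfy the usual GMM constraints:
    each weight is non-negative and the weights sum to one.
    """
    if any(w < 0 for w in weights):
        raise ValueError("mixture weights must be non-negative")
    if not math.isclose(sum(weights), 1.0, abs_tol=1e-9):
        raise ValueError("mixture weights must sum to one")
    # Weighted sum of the component densities.
    return sum(w * normal_pdf(x, m, s) for w, m, s in zip(weights, means, sigmas))


# Example: an equal-weight mixture of two unit-variance components.
value = gmm_density(0.0, weights=[0.5, 0.5], means=[-1.0, 1.0], sigmas=[1.0, 1.0])
```

Checking the weight constraints up front keeps the returned function a valid probability density, which is what allows it to be compared against a target density.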

2.1. General Concept of GMM Decomposition

- is the density of GMM;

- , , n is the number of normal distributions;

- is the interval for locating ;

- are the means of the Gaussian components;

- is——;

- t is a real-valued hyper-parameter, usually .
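The list above describes a mixture whose n component means are located inside an interval. The following is a rough sketch of one way such a mixture could be set up. It rests on two assumptions that are not confirmed by the text: the means are spaced evenly over the interval, and all components share a single standard deviation. Because the relation between the hyper-parameter t and the component width is not recoverable here, the sketch takes `sigma` as an explicit argument. All function and parameter names are hypothetical.

```python
import math


def evenly_spaced_means(lo, hi, n):
    """Place n component means evenly on [lo, hi] (an assumed layout)."""
    if n < 2:
        raise ValueError("need at least two components")
    step = (hi - lo) / (n - 1)
    return [lo + i * step for i in range(n)]


def fixed_grid_gmm_density(x, weights, lo, hi, sigma):
    """Mixture density with means fixed on a grid and one shared sigma.

    Only the weights vary; the means and sigma are held fixed.
    The shared sigma is an assumption of this sketch.
    """
    means = evenly_spaced_means(lo, hi, len(weights))
    norm = sigma * math.sqrt(2.0 * math.pi)
    return sum(
        w * math.exp(-0.5 * ((x - m) / sigma) ** 2) / norm
        for w, m in zip(weights, means)
    )


# Example: five equally weighted components on [0, 1].
means = evenly_spaced_means(0.0, 1.0, 5)
density_mid = fixed_grid_gmm_density(0.5, [0.2] * 5, 0.0, 1.0, sigma=0.25)
```

With the means and width held fixed, the weights are the only free parameters of such a mixture.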

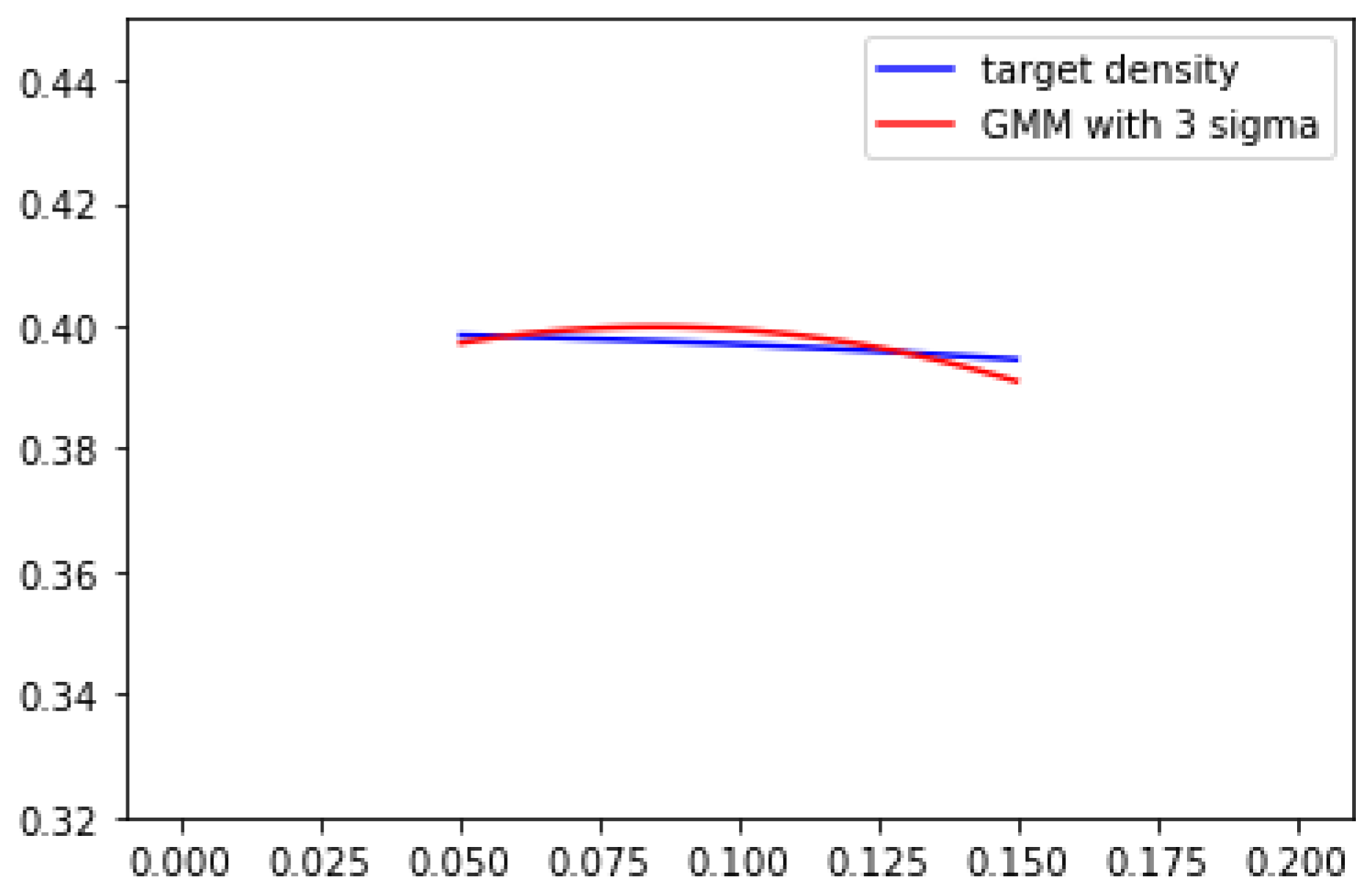

2.2. Approximate Arbitrary Density by GMM

2.3. Density Information Loss

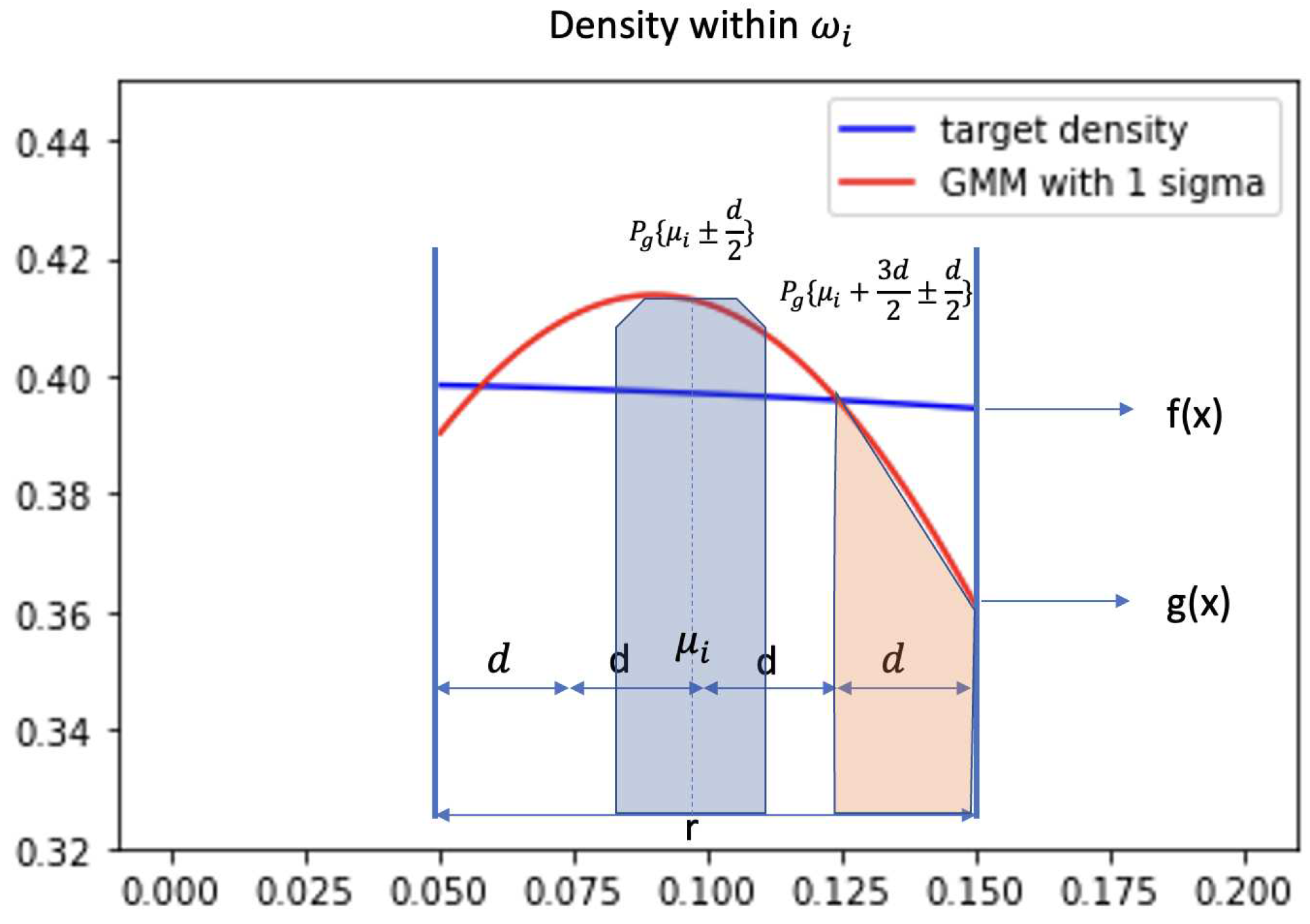

- Condition 1:

- Condition 2: Because is small, the target density is approximately monotone and linear within . Where

- Condition 3: The GMM density within crosses the target density twice. The two crossing points are named a, b and .

2.4. Learning Algorithm

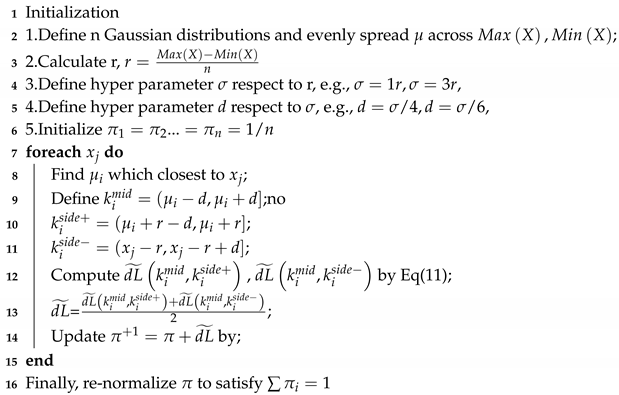

Algorithm 1:

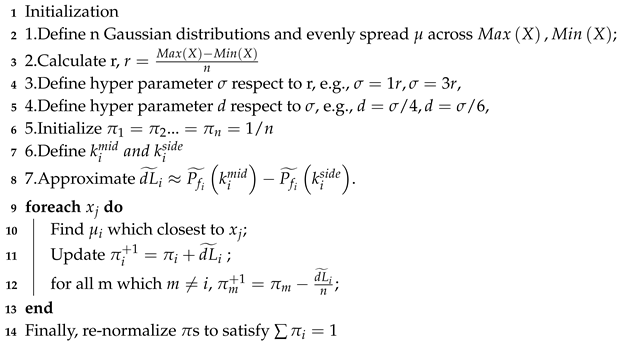

Algorithm 2:

3. Numerical Experiments

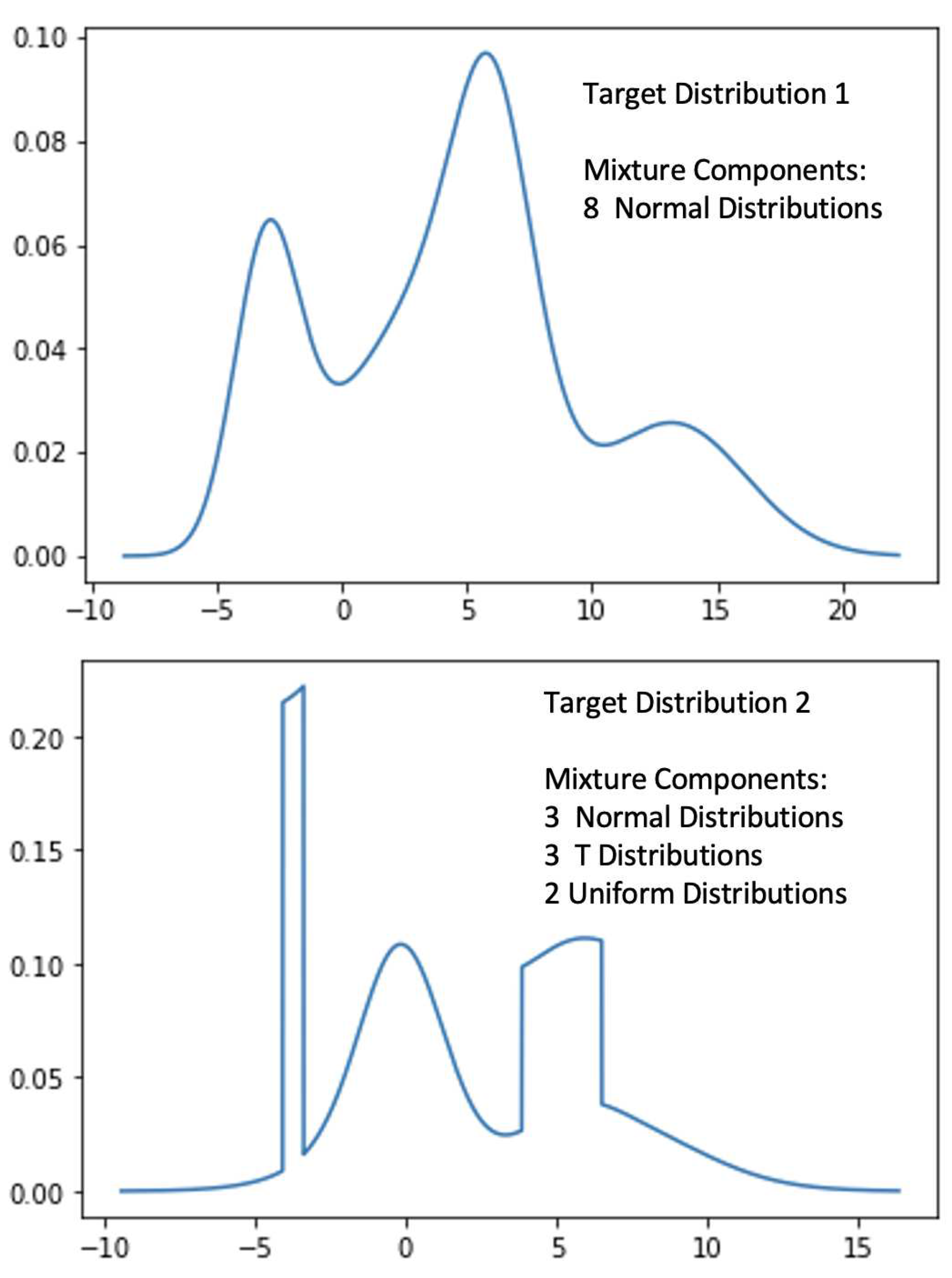

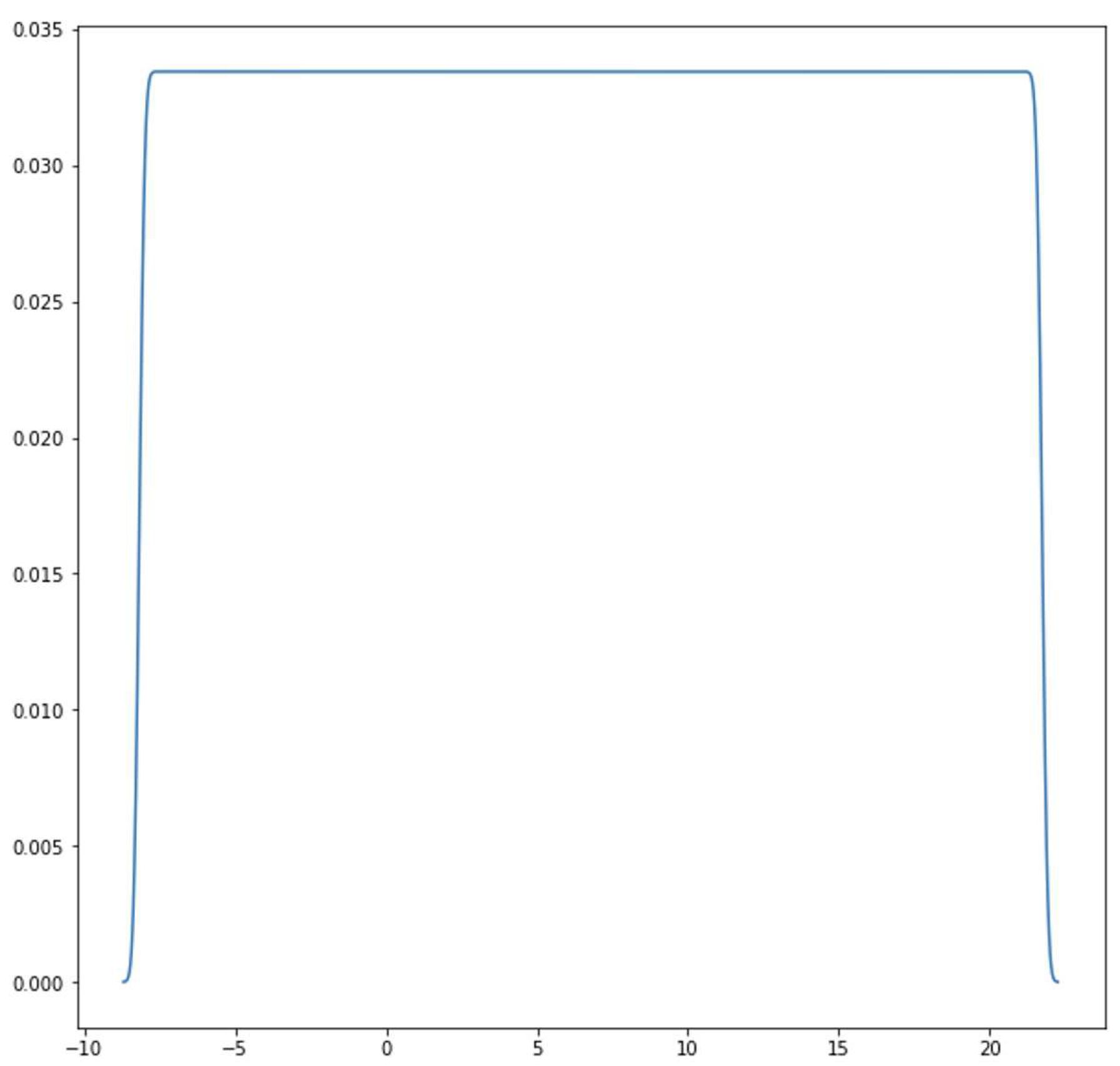

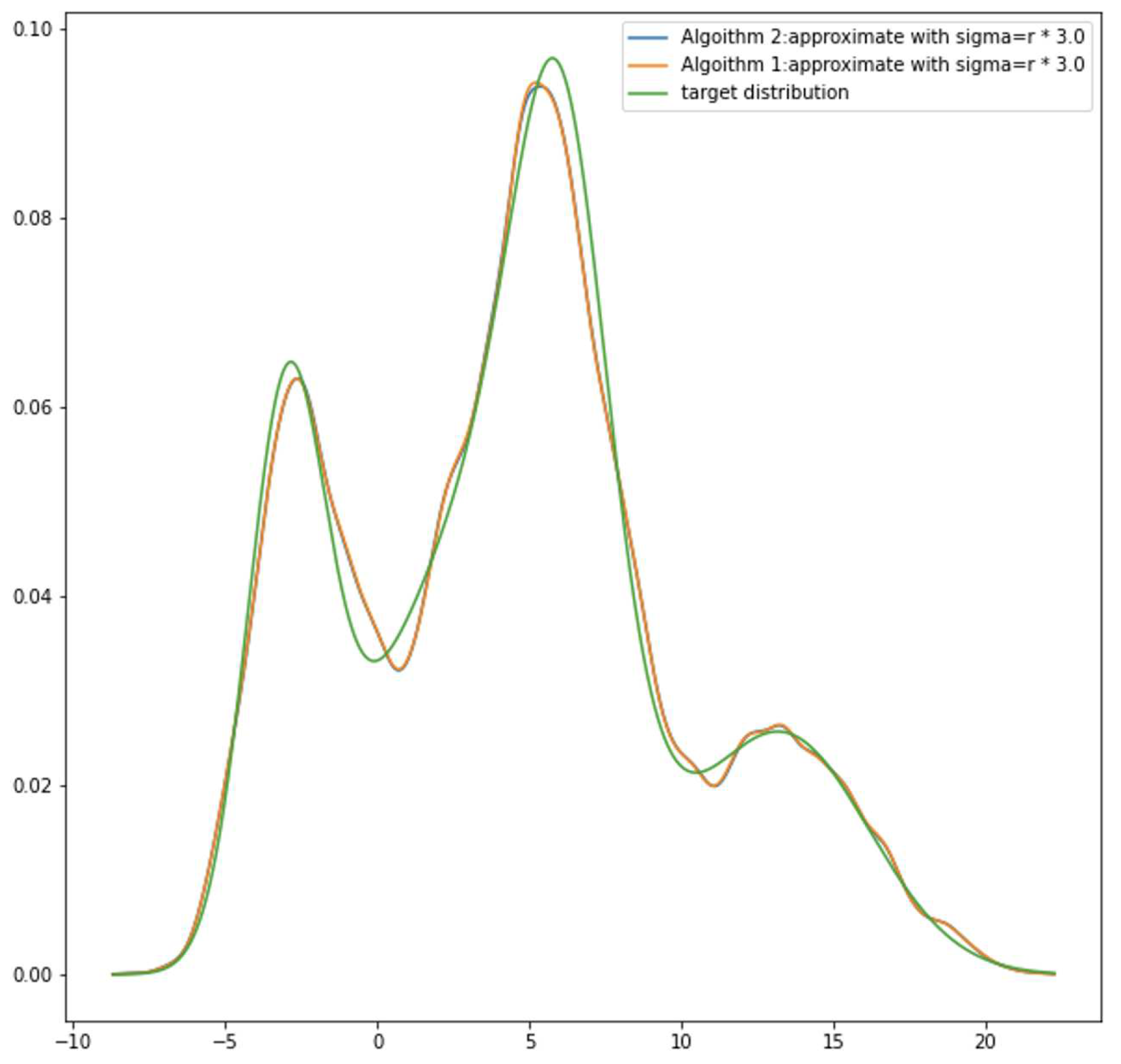

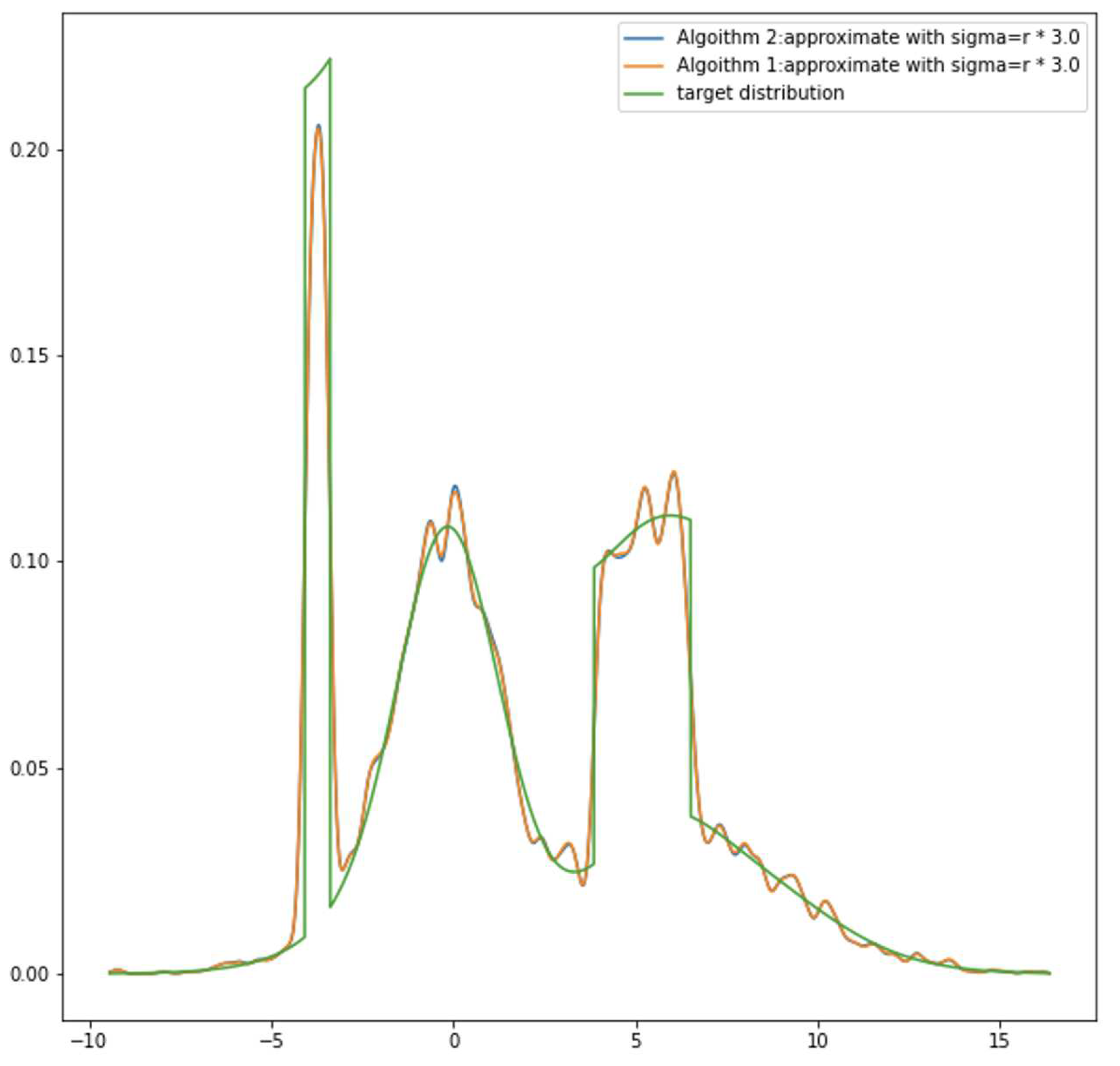

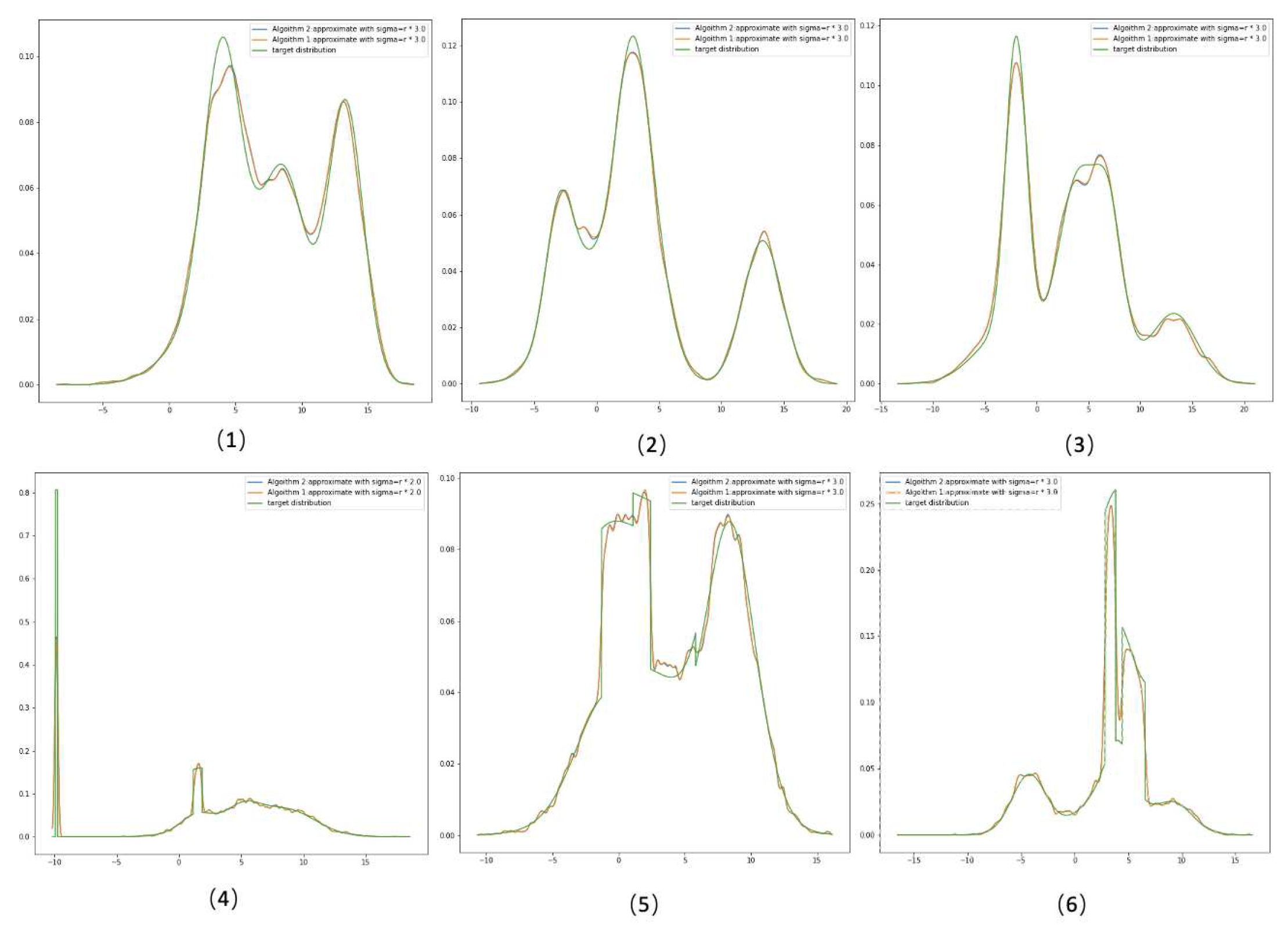

3.1. Random Density Approximation

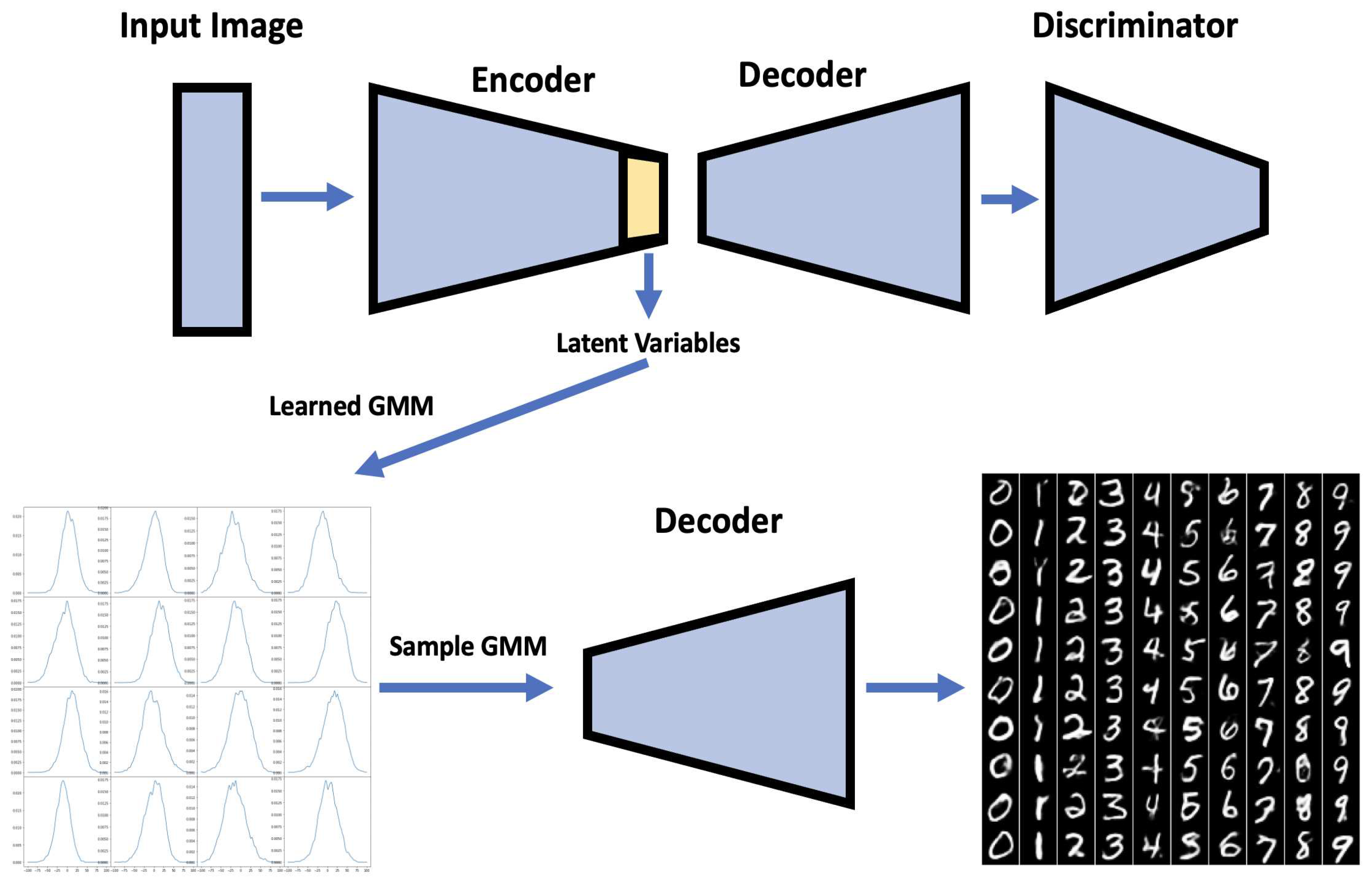

3.2. Neural Network Application

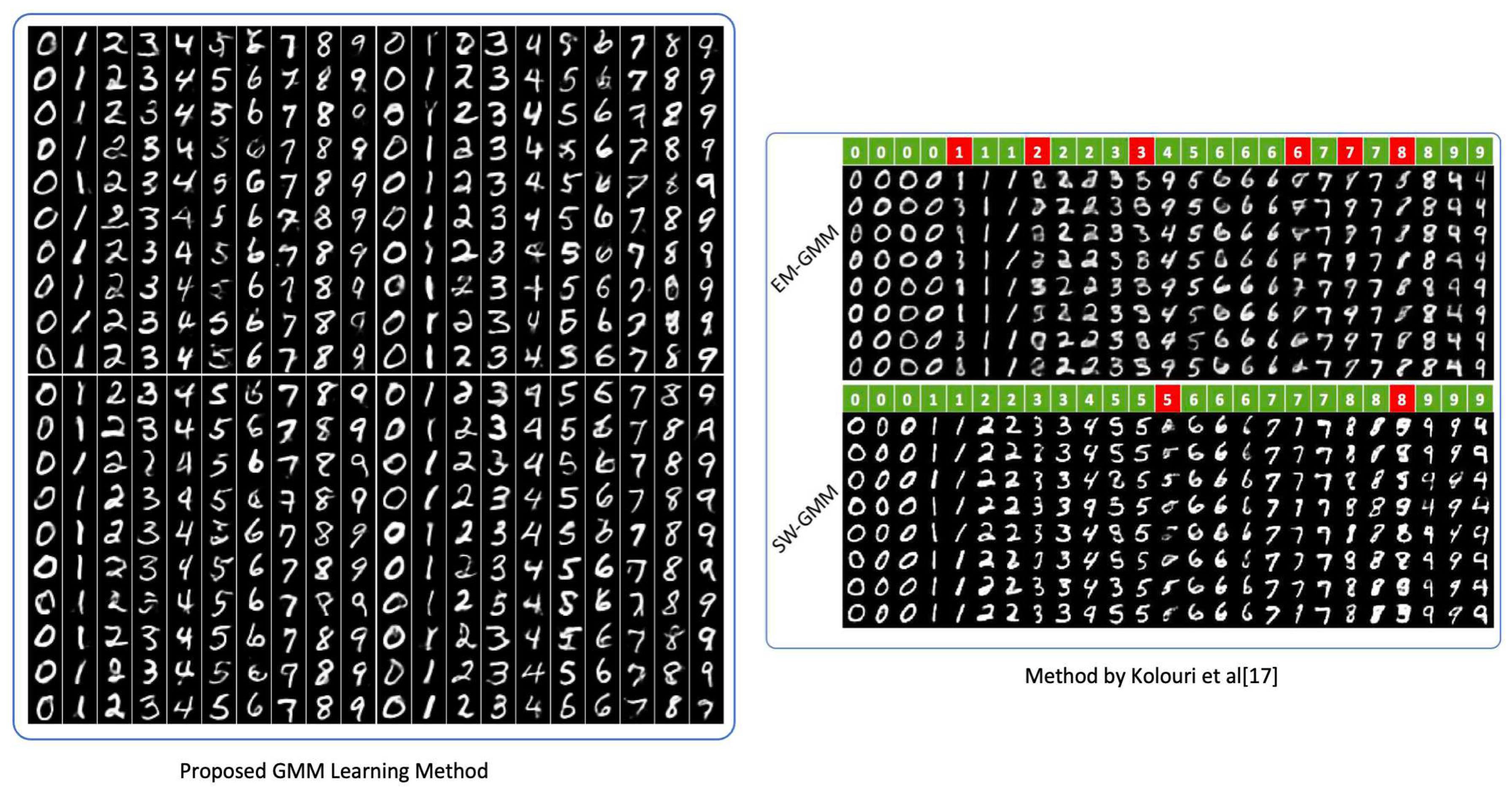

3.2.1. MNIST Handwritten Digit Generation

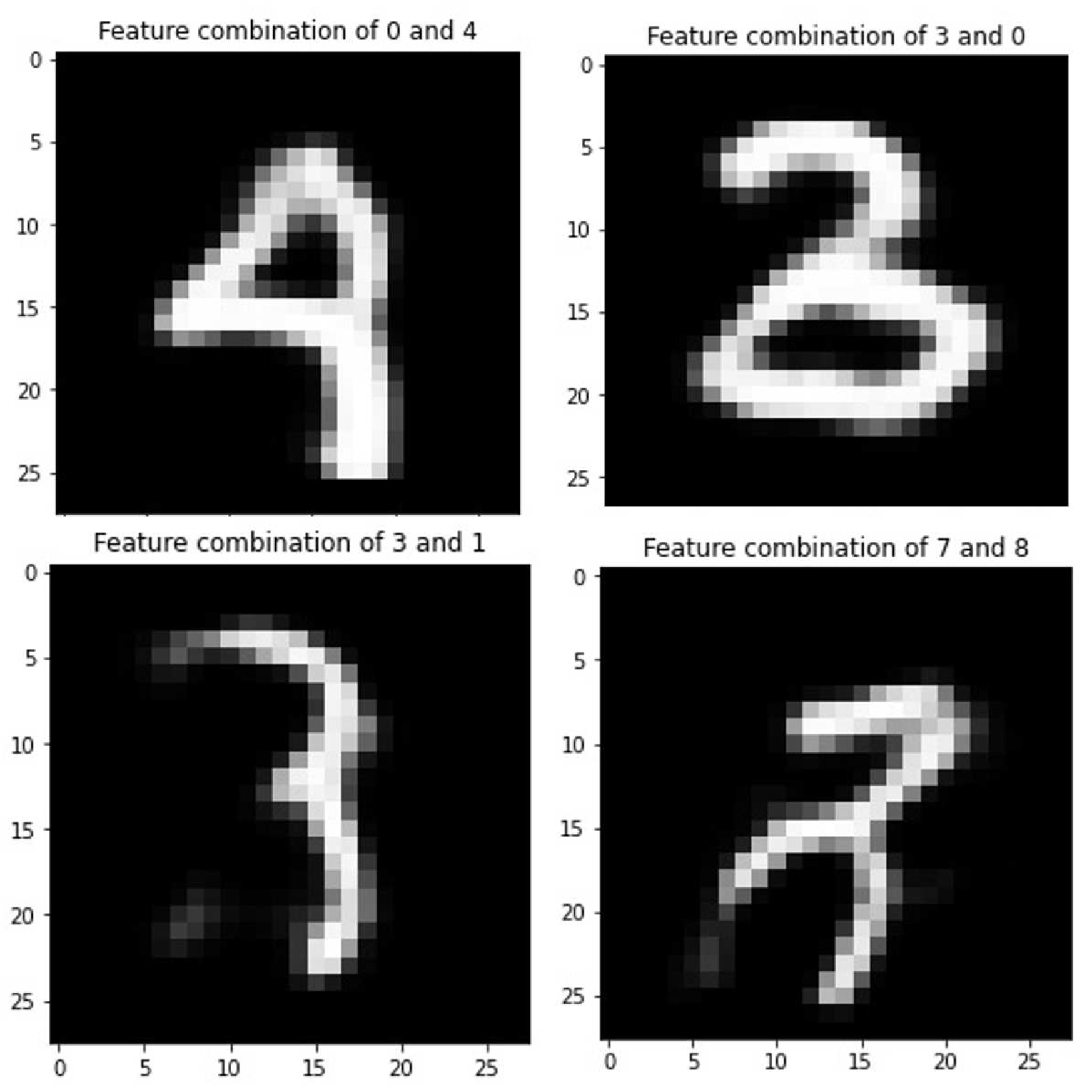

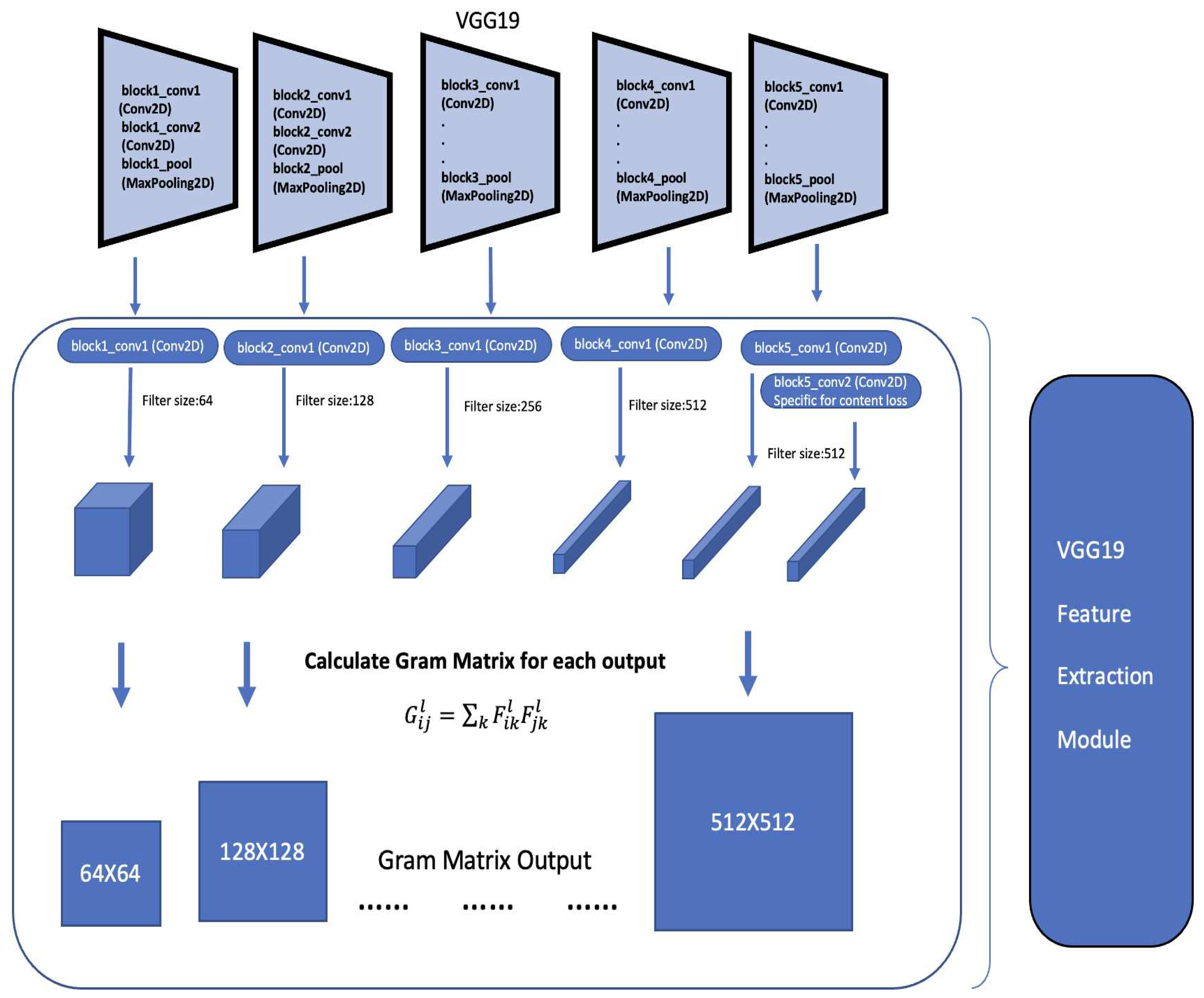

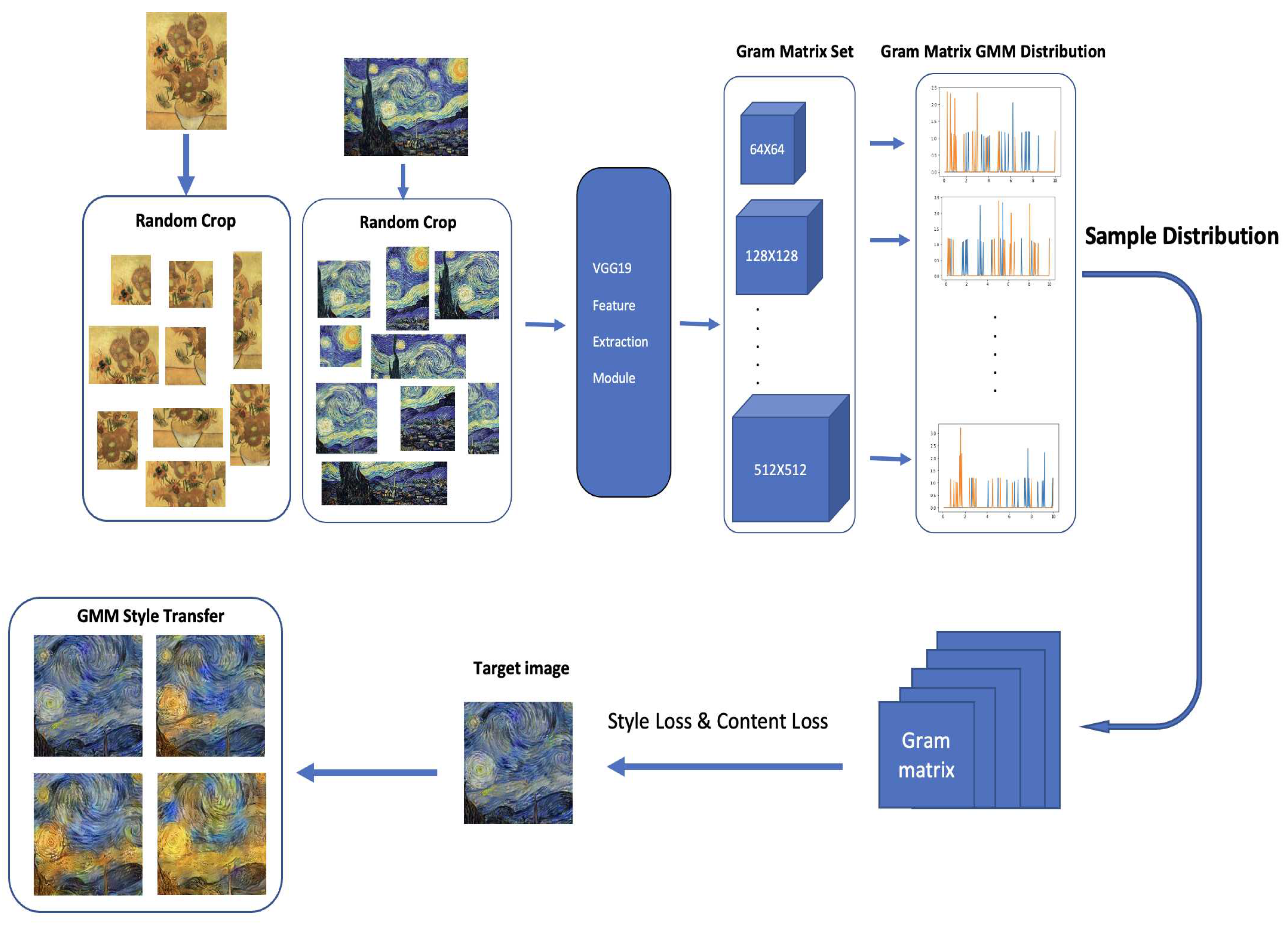

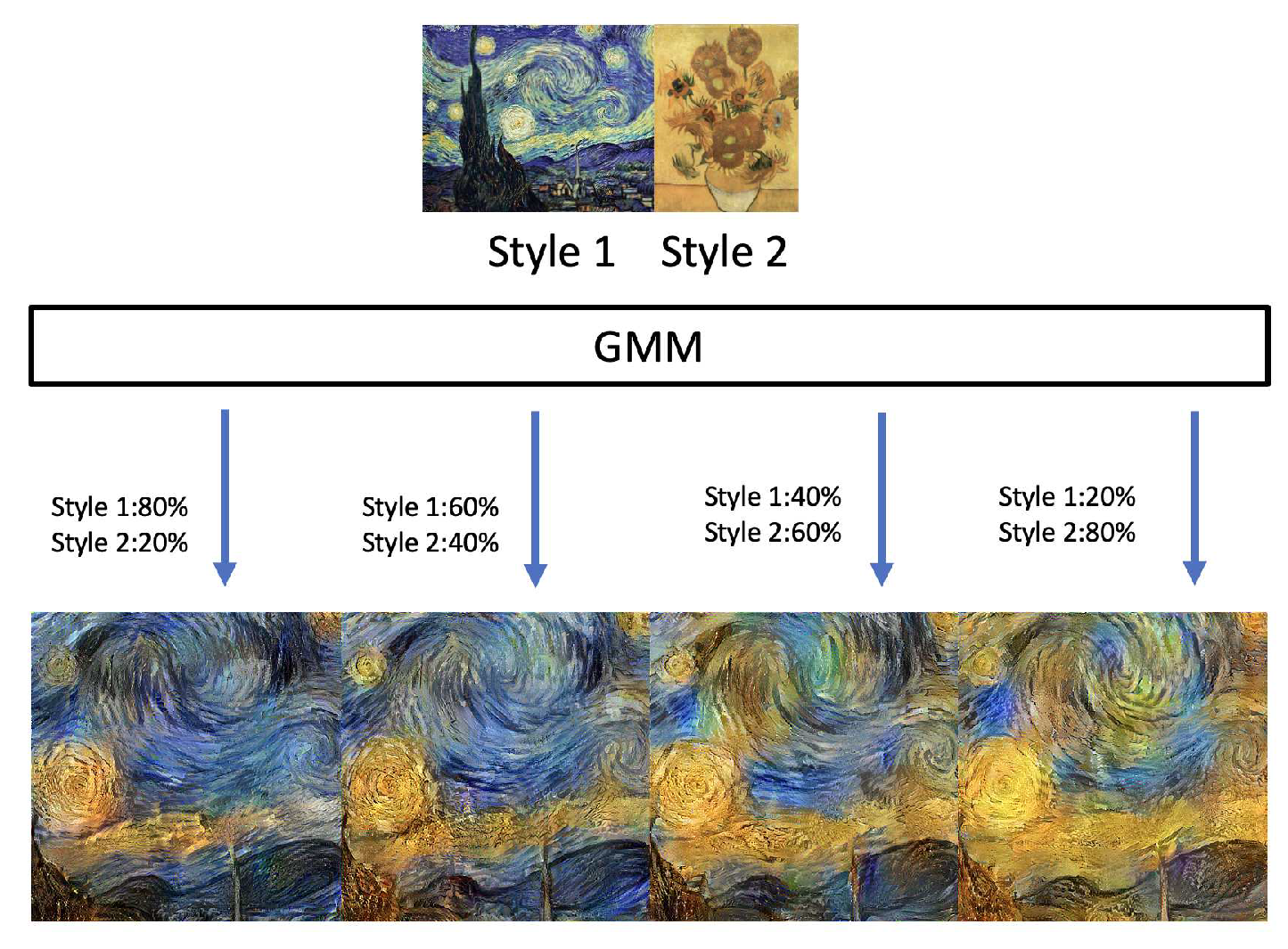

3.2.2. Style Transfer

4. Conclusions and Future Work


| Average Loss of 20 Experiments | | | |
| --- | --- | --- | --- |
| Algorithm 1 | 0.07099 | 0.05091 | 0.044296 |
| Algorithm 2 | 0.06866 | 0.05085 | 0.04447 |

| Average Loss of 20 Experiments | | | |
| --- | --- | --- | --- |
| Algorithm 1 | 0.08912 | 0.08738 | 0.09432 |
| Algorithm 2 | 0.08678 | 0.08749 | 0.09446 |

| Average Loss of 30 Experiments with Data Size 20000 | | | | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Number of Components | 10 | 30 | 50 | 100 | 200 | 500 | 1000 |

| Average Loss of 30 Experiments with Data Size 50000 | | | | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Number of Components | 10 | 30 | 50 | 100 | 200 | 500 | 1000 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).