5. Discussion

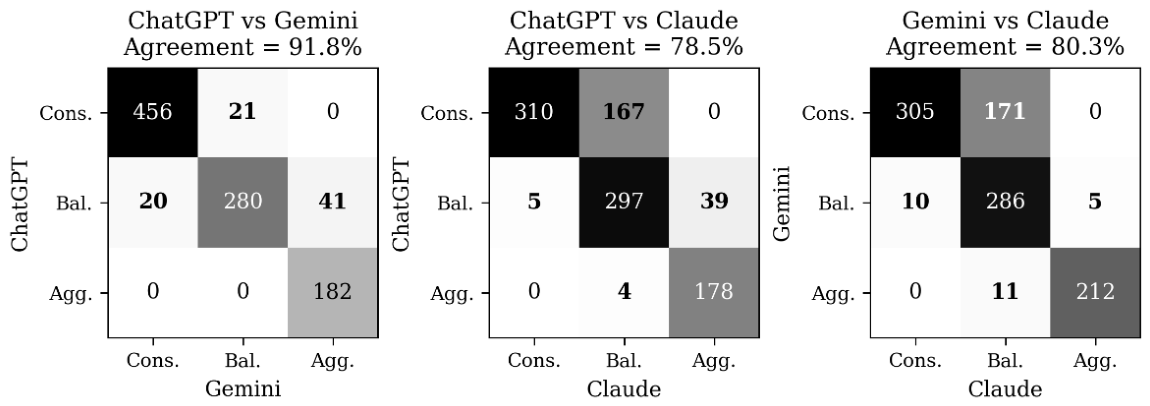

The empirical question posed at the outset of this study was whether contemporary generative AI (GAI) models, when prompted to perform the function of a goals-based investment advisor, generate recommendations that respond appropriately to financially relevant attributes whilst remaining invariant to demographic attributes that goals-based investing treats as conditionally immaterial. Four substantive conclusions emerge from our analysis. First, all three GAI models ground their recommendations overwhelmingly in the legitimate financial inputs (Risk Tolerance, Time Horizon, Goal Type and Annual Income), with McFadden pseudo-R² values of .874 (ChatGPT), .842 (Gemini) and .714 (Claude). The relatively lower fit for Claude indicates that a materially greater share of its variation is unaccounted for by the supplied client attributes. Second, within the demographic block, Age is significant in every GAI model and Marital Status is significant for ChatGPT only; both effects shift recommendations towards conservatism. Gender, Ethnicity and Employment Type are not detectably used by any of the three models, a pattern consistent with an absence of disparate-treatment bias on those protected characteristics. Third, the three GAI models are not interchangeable: the Friedman, pairwise Wilcoxon and pooled likelihood-ratio tests all reject equality, and the divergence is concentrated in how the models translate Risk Tolerance, Time Horizon and Goal Type into a recommendation. Claude is notably idiosyncratic, producing fewer Conservative and more Balanced recommendations than the other two GAI models, treating Education-funding goals as warranting more aggressive allocations rather than less, and exhibiting a steeper drop in risk at age 62 than the corresponding rise at age 28. 
Fourth, although the demographic interactions across GAI models are, with the exception of Age, statistically indistinguishable, the absolute magnitudes of demographic sensitivity vary substantially at economically realistic anchor scenarios, with consequential implications for downstream allocation decisions.
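
For readers less familiar with the fit statistic quoted above, McFadden's pseudo-R² is conventionally defined as shown below. This is the textbook definition, given on the assumption that the fitted models follow it. The symbols are illustrative rather than the paper's own notation: $\hat{L}_{\text{model}}$ is the maximized likelihood of the fitted model and $\hat{L}_{\text{null}}$ that of an intercept-only model.

```latex
R^{2}_{\text{McFadden}} \;=\; 1 - \frac{\ln \hat{L}_{\text{model}}}{\ln \hat{L}_{\text{null}}}
```

Under this definition, a value of .874 would mean that the fitted log-likelihood is 12.6% of the null log-likelihood in magnitude, since $1 - .874 = .126$.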

The dominance of financial inputs in driving recommendations is both encouraging and analytically informative. From the perspective of goals-based investing theory [16, 17], the attributes that should rationally govern portfolio recommendation are precisely those (risk tolerance, time horizon, goal type and income) on which the GAI models converge. The McFadden pseudo-R² values of .714 to .874 are exceptionally high for a behavioral prediction task and indicate that the models behave largely as transparent functions of their inputs rather than as opaque pattern-matchers drawing on latent training-corpus regularities. This aligns with, and extends, the aggregate-level finding of Oehler and Horn [9] that ChatGPT advice often tracks academic benchmarks more closely than that of established robo-advisors. Whereas Oehler and Horn establish alignment at the level of average recommendations, our attribute-level decomposition demonstrates that the alignment is not coincidental, but reflects appropriate marginal weighting of the relevant financial inputs. The result also strengthens the more cautious findings of Kim [12] and Ko and Lee [13], who establish that GAI-constructed portfolios exhibit defensible diversification properties, by extending the evidence into the conjoint-experimental domain where the implicit attribute weights, and not merely the output portfolios, are observable.

Equally consequential is the absence of detectable disparate treatment on Gender, Ethnicity and Employment Type. This finding stands in marked contrast to the documented pattern of bias in adjacent algorithmic-finance contexts. Bowen et al. [10] report that GAI models assign systematically higher rejection rates and interest rates to Black mortgage applicants than to financially identical White applicants, with the disparity persisting even when explicit racial labels are removed. Lippens [11], applying an audit methodology comparable to ours, finds that ChatGPT systematically downgrades job applicants whose names imply ethnic-minority status. Motoki et al. [8] document substantial political bias in the same family of models. The audit-design parallel makes the contrast particularly striking: under near-identical methodological conditions, the same family of models exhibits material bias in lending and labor-market tasks but not in goals-based investment recommendation. Several non-exclusive explanations warrant consideration. One possibility is that the investment-advisory training corpus is itself less racially or gender-coded than the lending and labor-market discourses that drive bias in the Bowen and Lippens studies. A second possibility is that the alignment process, reinforcement learning from human feedback in particular, has been deliberately tuned by model developers to neutralize demographic cues in financial-advice contexts, owing to the salient regulatory exposure that disparate-impact findings would attract in this domain [7]. A third, more sobering possibility is that the demographic invariance reflects the absence of bias in our particular elicitation rather than the absence of bias under all elicitations: where the client’s financial profile is fully specified, demographic cues may simply lack the residual informational role that they assume in lending decisions, in which credit-relevant variables are noisier and proxies more diagnostic. Distinguishing among these explanations is methodologically difficult and, in our view, an important agenda for future audit work.

Two demographic predictors exhibit detectable effects, namely Age (in all three models) and Marital Status (in ChatGPT). From a goals-based investing standpoint, age and marital status are not, strictly, recommendation-relevant once time horizon, risk tolerance and goal type have been specified; the time-horizon attribute should already capture the life-cycle considerations that age might otherwise proxy. The persistence of an age effect over and above the explicit time-horizon dummies therefore suggests that the GAI models are importing additional life-cycle assumptions, around retirement proximity, human-capital depletion or longevity risk, that lie outside the goals-based framework’s formal architecture. Such importation is economically defensible: an older client with a thirty-year horizon nonetheless faces a shorter expected remaining life than a younger client with the same horizon, and conservatism may rationally follow. Yet the same logic could rationalize the use of any attribute correlated with life expectancy or earnings stability, including, in principle, gender or ethnicity. The fact that the models impose conservatism on the basis of age but not on the basis of gender, despite well-documented gender-longevity correlations, suggests that the models are reasoning from a particular folk-theoretic conception of advisory practice rather than from a comprehensive actuarial framework. Claude’s asymmetric retirement-cliff response to age, a 0.085 reduction in P(Aggressive) when shifting age from 45 to 62, against negligible changes when shifting age from 45 to 28, is consistent with this interpretation: the model encodes the cultural prior that older clients should de-risk but does not symmetrically encode the prior that younger clients should take on more risk.
The Marital Status effect for ChatGPT can be interpreted similarly: shortfall aversion on behalf of dependents is a defensible application of goals-based principles, yet the absence of an explicit dependent-funding goal in the prompt makes it difficult to confirm that the model is reasoning from goal structure rather than from a stereotype about family-status conservatism.

Perhaps the most consequential finding for practical deployment is the cross-model heterogeneity. The pooled likelihood-ratio test of Model × X interactions rejects the null that the three GAI models use client attributes identically (χ²(36) = 295.98, p < .0001), and the divergence is concentrated in the very attributes that goals-based investing prescribes as recommendation-relevant. At the anchor scenario constructed in Section 4.3, the implied probability of an Aggressive recommendation spans a twenty-two-percentage-point range across the three models (0.03 for ChatGPT, 0.25 for Gemini, 0.12 for Claude). Claude’s reversal of the Education-funding coefficient, treating the goal as warranting more aggressive allocation rather than less, indicates that the models do not share a common semantic mapping of named life goals onto the risk spectrum. This raises a form of platform risk that is, to our knowledge, largely undocumented in the existing GAI-in-finance literature. Investors who delegate portfolio recommendation to a particular GAI model are, in effect, selecting a particular implicit advisory philosophy whose attribute-weighting profile may not be evident even after extended interaction. The risk is qualitatively distinct from the model-version risk noted by Schneider and Yilmaz [18], who report performance variation across model releases within a single provider; the heterogeneity we document is contemporaneous, persists at the frontier of each provider’s offering and arises in the attribute weights themselves rather than in downstream realized returns.
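
The two headline figures in this paragraph can be checked with a few lines of arithmetic. The sketch below is illustrative only and is not the estimation code used in the study. It recomputes the spread in P(Aggressive) across the three models and the upper-tail probability of the reported χ²(36) = 295.98 statistic. The function name `chi2_sf_even_df` is our own, and it relies on the closed-form chi-squared tail that holds only for even degrees of freedom.

```python
import math


def chi2_sf_even_df(x: float, df: int) -> float:
    """Upper-tail probability of a chi-squared variate (even df only).

    Uses the closed form Q(x; 2k) = exp(-x/2) * sum_{i=0}^{k-1} (x/2)^i / i!.
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form requires a positive, even df")
    if x <= 0:
        return 1.0
    half = x / 2.0
    k = df // 2
    # Work in log space so that large statistics do not underflow term by term.
    log_terms = [i * math.log(half) - math.lgamma(i + 1) - half for i in range(k)]
    m = max(log_terms)
    return math.exp(m) * sum(math.exp(t - m) for t in log_terms)


# Anchor-scenario probabilities of an Aggressive recommendation, as quoted in the text.
p_aggressive = {"ChatGPT": 0.03, "Gemini": 0.25, "Claude": 0.12}
spread = max(p_aggressive.values()) - min(p_aggressive.values())

# Pooled likelihood-ratio statistic and degrees of freedom, as quoted in the text.
p_value = chi2_sf_even_df(295.98, 36)

print(f"spread in P(Aggressive): {spread:.2f}")
print(f"p-value below .0001: {p_value < 1e-4}")
```

The spread evaluates to 0.22, matching the twenty-two-percentage-point range, and the tail probability falls far below the .0001 threshold reported for the test.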

These findings carry implications for several adjacent literatures and policy domains. For the literature on robo-advisors [2, 14, 15], our results indicate that the migration from deterministic recommendation engines to GAI-enabled conversational interfaces is unlikely, on the present evidence, to reintroduce the demographic biases documented in the human-advisor literature [4, 5, 6]. The contrast with Mullainathan et al. [6] is especially striking: where human advisors in their audit study systematically steered clients into higher-cost actively managed products with effects varying by client demographics, the GAI models we audit display no such demographic patterning, despite recommending portfolios constructed from the same broad asset classes. The migration of bias hypothesized in our introduction therefore does not appear to materialize along the conventional protected dimensions; if bias has migrated, it has done so along the previously underappreciated dimension of platform identity. For fintech regulation, this is a complex finding because conventional disparate-impact frameworks are poorly equipped to govern a setting in which the salient differential is not between demographically distinct clients within a single platform but between identically situated clients across platforms. For robo-advisor governance, our results suggest that audit-style methodologies of the kind developed by Lippens [11] and Motoki et al. [8] should be incorporated into routine compliance monitoring of GAI-enabled advisory services, not merely as a one-off vendor assessment but as an ongoing surveillance instrument that tracks attribute weightings across model versions over time. For the broader scholarly conversation on AI accountability in financial services, the result that frontier GAI models differ materially in their handling of theoretically recommendation-relevant inputs whilst converging on the conditional irrelevance of protected characteristics suggests that the dominant fairness narratives may be insufficient as a description of where the consequential algorithmic variation actually resides.

Several limitations of the present study warrant explicit acknowledgement. First, the audit captures a single snapshot of three model versions at a fixed point in time. Generative AI models are updated continuously and the alignment procedures that govern their behavior are subject to change at the discretion of their developers; the patterns we document may evolve, and replication across model versions and time periods is therefore essential before any conclusion can be regarded as a general property of GAI-enabled advice. Second, our prompts are presented in English and the client names that signal gender and ethnicity are drawn from a United States cultural register; the absence of detectable disparate treatment in our experiment cannot be generalized to non-Anglo settings without further audit. Third, the experimental design supplies explicit risk-tolerance, time-horizon and goal-type fields, which represent strong, theoretically privileged anchors. In reality, clients may communicate these attributes through less structured natural-language interactions in which demographic cues might play a larger role; an extension of our design to less heavily anchored prompts is an obvious next step. Fourth, the three-portfolio choice set is a coarse simplification of the continuous allocation space in which real portfolio recommendations are situated, and effects that are subthreshold under our discrete ordinal measure may be detectable under continuous-allocation metrics. Fifth, although names are a well-established device for signaling implicit gender and ethnicity in audit research [11, 38], their information content as cues to demographic identity is plausibly weaker than that of explicit labels, and a stronger experimental manipulation might reveal effects that ours does not. Sixth, our use of the default API temperature settings, while consistent with realistic use, introduces stochastic variation that the present study has not attempted to characterize systematically. Seventh, the demographic invariance we document is consistent both with the genuine absence of bias and with the presence of explicit safeguards in the alignment layer; the present audit cannot distinguish these mechanisms.

These limitations provide opportunities for future research. Longitudinal audit designs that track the attribute-weighting profiles of frontier GAI models across model versions and over time would establish whether the patterns we document are durable features of contemporary GAI advice or transient artefacts of particular alignment regimes. Adversarial audits, in which financially relevant attributes are deliberately omitted or rendered ambiguous, would probe whether demographic cues acquire a larger recommendation-influencing role when the legitimate signal is weakened. Multilingual and cross-jurisdictional extensions would establish whether the demographic invariance we observe generalizes beyond the English-language, United-States cultural setting in which our audit was conducted. Welfare-oriented extensions that map the cross-model heterogeneity we document into long-run client outcomes, using, for example, the diversified-portfolio benchmarks of Kim [12] and Ko and Lee [13], would translate the abstract platform-risk finding into the metric that ultimately matters for investors. The audit methodology developed here also extends naturally beyond goals-based portfolio recommendation to adjacent advisory domains, including tax-aware investing, debt management and intergenerational wealth transfer, where the theoretical separation between attribute-relevant and attribute-irrelevant client characteristics is similarly well defined. Finally, the comparison of frontier closed-source models with open-source alternatives, whose alignment procedures are at least partially inspectable, would shed light on the extent to which the patterns we document reflect inherent properties of the underlying language modelling versus deliberate design choices in the alignment layer.