Submitted: 01 May 2026

Posted: 05 May 2026


Abstract

Keywords:

1. Introduction

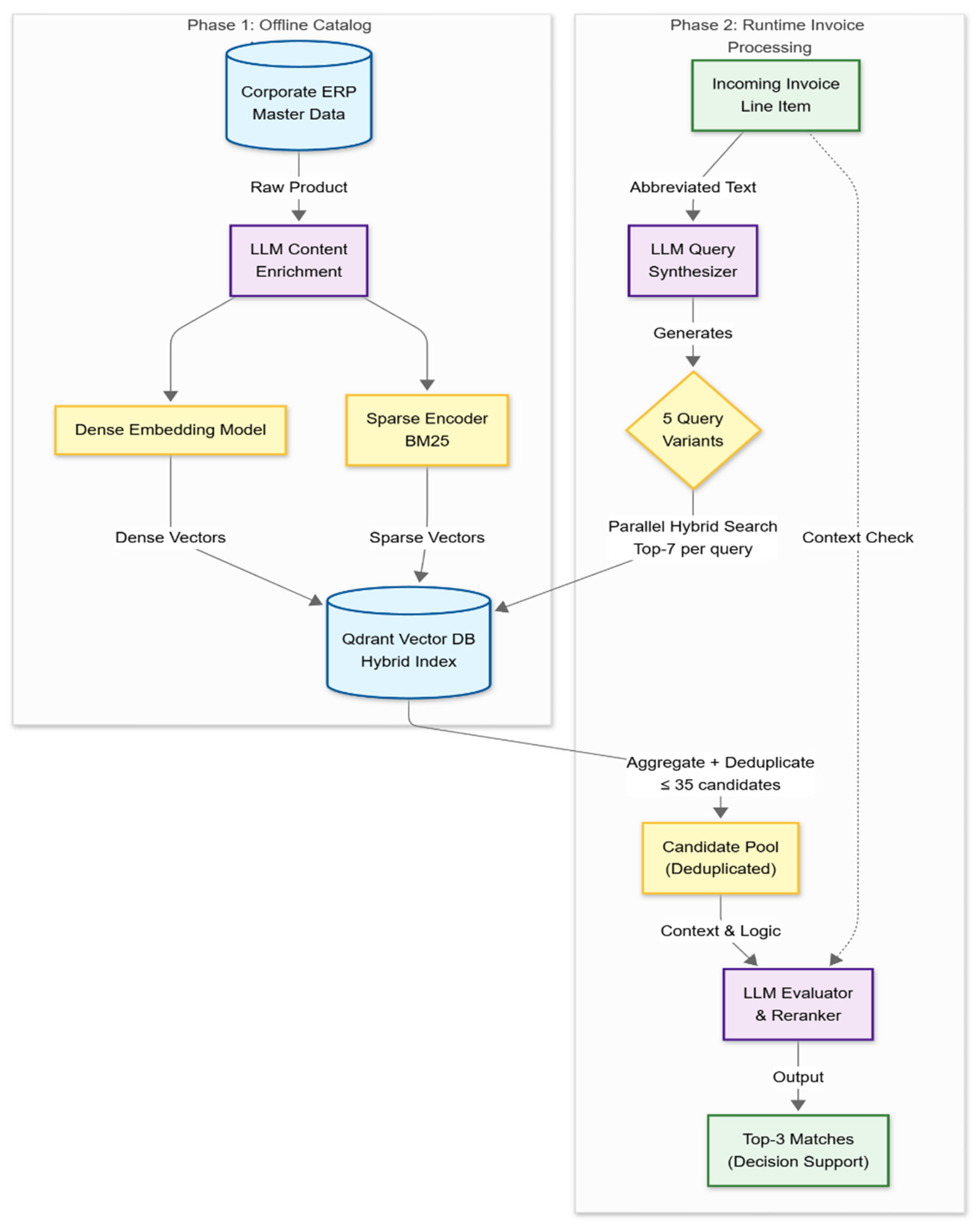

- Catalog Augmentation: We first proactively enrich the internal corporate catalog. An LLM generates additional keywords, synonyms, and likely invoice-style variants for each product, and these enriched entries are stored as embeddings in a vector database.

- Query Augmentation & Reranking: During live invoice processing, our system leverages an LLM to generate multiple augmented query variants from the raw, extracted invoice line. This “query expansion” retrieves a broad set of potential candidates, which are then evaluated by a specialized LLM-based reranker to produce the final Top-3 matches.

2. Related Work

2.1. E-Invoicing Adoption and RPA Governance

2.2. Automated Invoice Processing & Information Extraction (IE)

2.3. Entity Resolution (ER) & Product Matching for Accounts Payable (AP)

2.4. Applying Large Language Models (LLMs) for Contextual Matching and Judgment

- Proactive Catalog Enrichment: First, we use an LLM to “read” our internal product catalog and proactively generate realistic synonyms, common abbreviations, and alternative descriptions for each item. This is an automated form of master data enhancement. For example, “M6 Stainless Steel Hex Bolt, 10mm, 100-pack” might be enriched with terms like “SS M6 bolt,” “hex 10mm,” or “box of 100 bolts.” This enriched data is stored in a high-speed vector database, allowing our system to anticipate the messy, inconsistent language suppliers use before their invoices even arrive. This concept builds on RAG research into document-side augmentation (Raina and Gales, 2024) but applies it as a practical control for data quality in a procurement context.

- Interpreting Noisy Invoice Queries: When a noisy line item like “SS hexblt 10mm” is extracted from an invoice, it often fails to match the catalog directly. Our system uses an LLM to rewrite this ambiguous query into multiple, clearer variants (e.g., “stainless steel hex bolt 10mm,” “M10 hex bolt stainless”). This step mimics an AP clerk’s “best guess” at what the supplier meant to say, effectively translating vendor-specific shorthand into our internal terminology. This “query expansion” (Ma et al., 2023) is critical for handling OCR errors and vendor-specific phrasing, ensuring good candidates are found even when the initial data is poor.

- Applying Contextual Judgment (Reranking): The first two steps retrieve a list of potential matches from the catalog. This list is then passed to a final LLM-based reranker, which acts like a senior AP professional performing a final check. It compares the original invoice line with the top candidates and re-orders them using contextual cues such as product type, brand, product codes, sizes, and pack counts. This stage is crucial for resolving ambiguities that lexical methods miss, such as a “box” versus an “each” unit of measure or packaging equivalences (e.g., “10-pack” vs. “10 units”). This LLM-based reranking (Adeyemi et al., 2023) applies nuanced business logic, substantially improving the quality of the final Top-3 matches presented to the user.
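The three steps above can be wired together as in the following minimal Python sketch. It is not the authors' implementation: `match_invoice_line`, the three stage callables, and the default `k_per_query=7` are hypothetical, the latter chosen only so that five query variants yield at most 35 pooled candidates, the pool size named in the reranker prompt of Appendix A.3. Whether the original pipeline deduplicates the pool is not stated (that prompt allows duplicates); this sketch removes them.

```python
from typing import Callable, List, Sequence

# Each stage is injected as a plain function so the sketch does not depend on
# any particular LLM or vector-store client (all three are hypothetical).
Expander = Callable[[str], List[str]]                 # noisy line -> query variants
Retriever = Callable[[str, int], List[str]]           # query, k -> catalog lines
Reranker = Callable[[str, Sequence[str]], List[str]]  # line, pool -> ranked lines


def match_invoice_line(
    line: str,
    expand: Expander,
    retrieve: Retriever,
    rerank: Reranker,
    k_per_query: int = 7,
    top_n: int = 3,
) -> List[str]:
    """Query expansion, pooled retrieval, then LLM-style reranking."""
    pool: List[str] = []
    seen = set()
    for query in expand(line):
        for cand in retrieve(query, k_per_query):
            if cand not in seen:  # pool candidates across queries, dropping repeats
                seen.add(cand)
                pool.append(cand)
    # The reranker judges the ORIGINAL invoice line, not the rewritten queries.
    return rerank(line, pool)[:top_n]
```

In a deployment, `expand` and `rerank` would wrap LLM calls that return the schemas in Appendix A, and `retrieve` would query the vector index.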

3. Materials and Methods

3.1. Research Design

3.2. System Architecture and Implementation

3.2.1. Phase 1: Catalog Augmentation and Vector Indexing

- Dense Retrieval: Each enriched catalog entry is encoded with OpenAI’s text-embedding-3-large model, with the output dimensionality reduced from the default 3,072 to 1,024 for storage and latency efficiency. Retrieval is performed by cosine similarity in this 1,024-dimensional space. This captures semantic context (e.g., understanding that “portable PC” and “laptop” are related).

- Sparse Retrieval (BM25): In parallel, each catalog entry is indexed with a BM25 representation, produced by FastEmbed’s Qdrant/bm25 model, which preserves exact-keyword signal (e.g., SKU codes such as “X1-Carbon” or “M6×10”) that embeddings tend to smooth over.
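The two retrieval signals can be illustrated with a dependency-free Python sketch. Cosine similarity follows its standard definition, and BM25 (Robertson et al., 1995) is shown in its common form with a non-negative IDF. `truncate_and_normalize` mirrors how `text-embedding-3` vectors can be shortened (keep the leading components, then re-normalize), which we understand is what the OpenAI API does when a `dimensions` argument is passed. The paper does not state how dense and sparse rankings are combined in the Hybrid configuration, so Reciprocal Rank Fusion with the commonly used constant k = 60 is an assumption here, as are all function names and the BM25 parameters k1 = 1.2 and b = 0.75.

```python
import math
from collections import Counter
from typing import Dict, List, Sequence


def truncate_and_normalize(vec: Sequence[float], dim: int) -> List[float]:
    """Keep the first `dim` components and rescale to unit L2 norm."""
    head = [float(x) for x in vec[:dim]]
    norm = math.sqrt(sum(x * x for x in head))
    return [x / norm for x in head] if norm else head


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def bm25_scores(query: List[str], docs: List[List[str]],
                k1: float = 1.2, b: float = 0.75) -> List[float]:
    """Okapi BM25 score of a tokenized query against each tokenized document."""
    n = len(docs)
    avgdl = sum(len(d) for d in docs) / n
    df = Counter(t for d in docs for t in set(d))  # document frequency per term
    scores: List[float] = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for t in query:
            if tf[t] == 0:
                continue  # exact-token match required, e.g. SKU "x1-carbon"
            idf = math.log(1 + (n - df[t] + 0.5) / (df[t] + 0.5))  # non-negative IDF
            norm_tf = tf[t] * (k1 + 1) / (tf[t] + k1 * (1 - b + b * len(d) / avgdl))
            score += idf * norm_tf
        scores.append(score)
    return scores


def rrf(rankings: List[List[int]], k: int = 60) -> List[int]:
    """Reciprocal Rank Fusion of several ranked lists of document ids."""
    fused: Dict[int, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused, key=lambda d: -fused[d])
```

RRF works on ranks rather than raw scores, which sidesteps the fact that cosine similarities and BM25 scores live on different scales.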

3.2.2. Phase 2: Real-Time Query Augmentation and Reranking

3.3. Data Preparation and Experimental Setup

- The Corporate Catalog (Master Data): The “right-side” dataset stands in for the authorized product master file maintained in an ERP system.

- The Invoice Stream (Query Set): The “left-side” dataset was filtered to create a stream of incoming “invoice line items” that require reconciliation against the catalog.

3.4. Evaluation Metrics

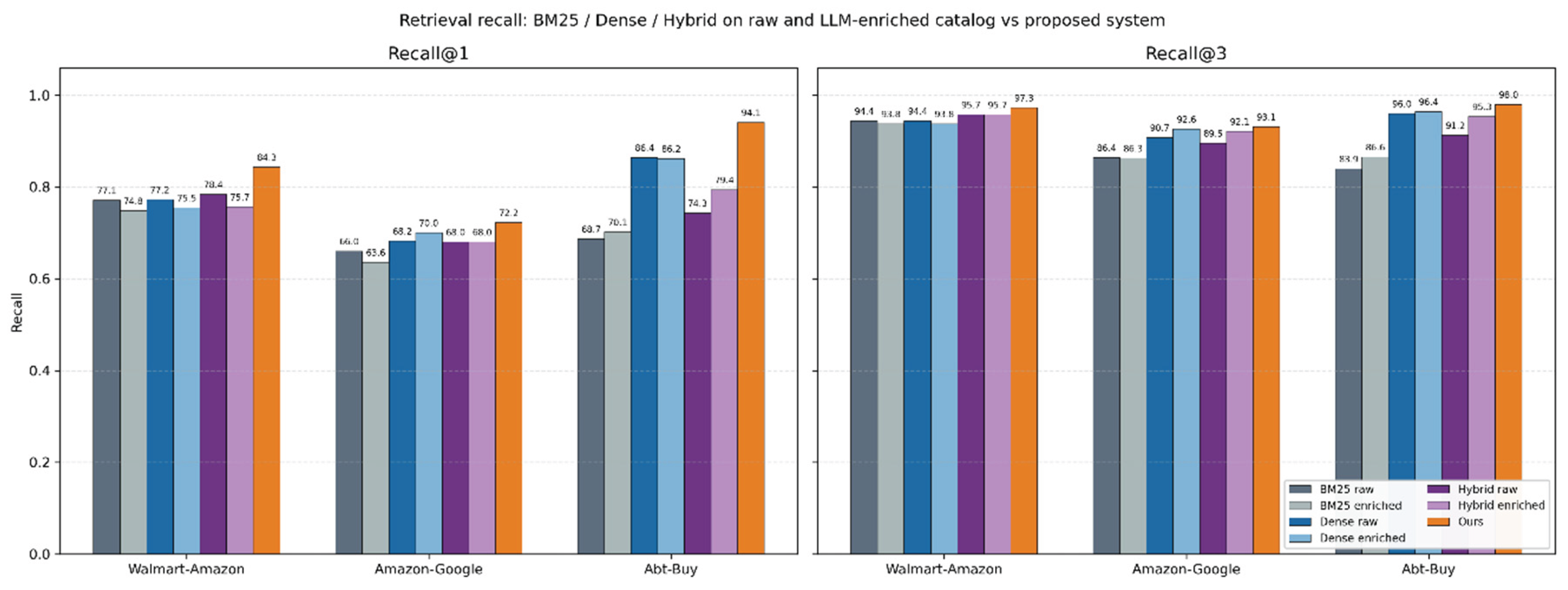

- Top-1 Recall (R@1): Serves as a proxy for “Touchless Automation.” It measures the percentage of invoice line items for which the system’s first-ranked candidate is the correct catalog entry, which would allow automatic posting without human review.

- Top-3 Recall (R@3): Serves as a proxy for “Decision Support Efficiency.” It measures how often the correct match appears among the top three suggestions. If the correct code is visible immediately, the AP clerk can validate it with a single click (taking seconds) rather than searching the catalog manually (taking minutes).
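Both metrics reduce to the same computation at different cut-offs. The Python sketch below assumes exactly one correct catalog entry per query, as the phrase “the correct match” implies; the function name and toy identifiers are illustrative.

```python
from typing import Hashable, Sequence


def recall_at_k(
    ranked: Sequence[Sequence[Hashable]], gold: Sequence[Hashable], k: int
) -> float:
    """Fraction of queries whose gold catalog id is among the first k predictions."""
    if len(ranked) != len(gold) or not gold:
        raise ValueError("need one ranked list per query and at least one query")
    hits = sum(1 for preds, g in zip(ranked, gold) if g in list(preds)[:k])
    return hits / len(gold)


ranked = [["a", "b", "c"], ["x", "y", "z"], ["q", "r", "s"], ["m", "n", "o"]]
gold = ["a", "z", "t", "n"]
print(recall_at_k(ranked, gold, 1))  # 0.25
print(recall_at_k(ranked, gold, 3))  # 0.75
```

With a single gold entry per query, R@1 coincides with top-1 accuracy.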

4. Results

4.1. Accuracy and Robustness

4.2. Analysis of Retrieval Baselines

4.3. Performance of the Proposed System

- Correction of Retrieval Errors: Even where the retrieval layer struggled (e.g., on Walmart-Amazon, where catalog enrichment lowered Hybrid R@1 from 78.38% to 75.68%), the Proposed System recovered substantial ground, achieving an R@1 of 84.30%.

- Top-1 Recall: The system demonstrates its capability for automation, particularly on Abt-Buy, where it achieved 94.07% R@1, 7.69 percentage points above the best baseline (Dense on the raw catalog, 86.38%).
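The margins quoted above can be recomputed from the results table. The Python sketch below transcribes the R@1 and R@3 values, takes the best of the six baseline runs (Dense, Sparse and Hybrid on the raw and enriched catalogs) for each dataset, and reports the gap in percentage points. The dictionary layout and function name are illustrative only, and the differences are raw gaps, not tests of statistical significance.

```python
from typing import Dict, List, Tuple

# Values in % from the results table. Baseline order per list:
# Dense raw, Dense enriched, Sparse raw, Sparse enriched, Hybrid raw, Hybrid enriched.
RESULTS: Dict[str, Dict[str, Tuple[List[float], float]]] = {
    "Amazon-Google": {
        "R@1": ([68.21, 70.01, 65.98, 63.58, 68.04, 68.04], 72.24),
        "R@3": ([90.75, 92.63, 86.38, 86.29, 89.55, 92.12], 93.14),
    },
    "Abt-Buy": {
        "R@1": ([86.38, 86.22, 68.68, 70.14, 74.32, 79.38], 94.07),
        "R@3": ([96.01, 96.38, 83.95, 86.58, 91.25, 95.33], 97.96),
    },
    "Walmart-Amazon": {
        "R@1": ([77.23, 75.47, 77.13, 74.84, 78.38, 75.68], 84.30),
        "R@3": ([94.39, 93.76, 94.39, 93.76, 95.74, 95.74], 97.30),
    },
}


def gain_over_best_baseline(dataset: str, metric: str) -> float:
    """Proposed-system score minus the best baseline score, in percentage points."""
    baselines, proposed = RESULTS[dataset][metric]
    return round(proposed - max(baselines), 2)


for name in RESULTS:
    for metric in ("R@1", "R@3"):
        print(name, metric, gain_over_best_baseline(name, metric))
```

For Abt-Buy R@1 this gives 7.69 points over Dense on the raw catalog; the smallest gap is on Amazon-Google R@3 (0.51 points).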

5. Discussion

5.1. Synthesis of Findings

5.2. Implications for Accounting Practice and Internal Controls

5.3. Limitations and Future Research

- Linguistic Scope and Mixed-Script Complexity: A primary limitation of this study is the linguistic homogeneity of the standard benchmarks (Abt-Buy, Amazon-Google, Walmart-Amazon), which are exclusively English. This contrasts with our target operational environment, which is characterized by high linguistic variability. In our real-world use case, data is not simply “translated”; it involves complex code-switching, in which invoices and catalog entries frequently mix Greek and English terms within the same line item (e.g., an English brand name paired with a Greek functional description, or mixed-script abbreviations). While the “Query Synthesizer” handled synonyms robustly on the benchmarks, we expect its primary value to lie in normalizing this hybrid Greek-English input, a capability that the current English-only datasets do not fully quantify.

- Enrichment Trade-offs: Our experiments revealed that the “Augment-Both-Sides” strategy requires careful tuning. On the Walmart-Amazon dataset, catalog enrichment lowered R@1 for the Dense, Sparse, and Hybrid retrievers alike, showing that LLM-based enrichment does not universally improve performance and can introduce noise in highly heterogeneous retail datasets. Future work should investigate governance mechanisms, such as confidence thresholds or “human-in-the-loop” review stages, to validate generated synonyms before they enter the vector index.

- Latency and Operational Cost: A formal evaluation of end-to-end latency and per-line operational cost was out of scope for this study and is left to future work; such measurements would be required before the system could be positioned as a cost-reduction intervention rather than as an accuracy and decision-support one.
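One possible governance mechanism of the kind suggested above is a rule-based screen applied before generated synonyms enter the index. The Python sketch below is hypothetical (the function name, regular expression, and review-queue split are illustrative assumptions, not part of the evaluated system). It mirrors two rules from the enrichment prompt in Appendix A.1, never invent attributes and keep each keyword to at most four words, by routing any keyword that introduces a number absent from the source entry, or that exceeds the length limit, to human review.

```python
import re
from typing import List, Tuple

# Integers or decimals; deliberately simple, so "1,000" and "1000" differ.
NUM = re.compile(r"\d+(?:[.,]\d+)?")


def screen_keywords(
    entry: str, keywords: List[str], max_words: int = 4
) -> Tuple[List[str], List[str]]:
    """Split generated keywords into (accepted, needs_review)."""
    entry_nums = set(NUM.findall(entry))
    accepted: List[str] = []
    review: List[str] = []
    seen = set()
    for kw in keywords:
        norm = " ".join(kw.lower().split())
        if not norm or norm in seen:
            continue  # drop blanks and case/whitespace duplicates
        seen.add(norm)
        invented = set(NUM.findall(norm)) - entry_nums
        if invented or len(norm.split()) > max_words:
            review.append(kw)  # hypothetical: queue for a human reviewer
        else:
            accepted.append(kw)
    return accepted, review
```

A keyword such as “M8 hex bolt” generated for an M6 bolt would be held back rather than silently indexed.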

5.4. Production Deployment Beyond the Benchmarks

5.5. Conclusions

Supplementary Materials

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

A.1. Catalog Enricher (Phase 1 — Offline)

Prompt:

You are a keyword generator that enriches clean catalog items for better fuzzy retrieval and matching against noisy invoice lines in a RAG system.

Task: For each catalog entry, return only concise keywords (no sentences, no labels, no duplicates or repeat of words). The goal is to expand the catalog entry with realistic variants and terms that could appear in invoices or receipts or other catalogs, improving recall during embedding or vector similarity search.

Guidelines:

- NEVER invent attributes not present in the input. Do not guess colors, sizes, capacities, or brands.
- Keep the brand EXACT if present; add common brand abbreviations only if widely used (e.g., “hewlett packard” → “hp”).
- Include synonyms for function/category if present (e.g., “headphones”, “headset”; “tv”, “television”).
- Add realistic invoice-style variations (e.g., abbreviations).
- Expand common abbreviations (e.g., “Tabl → tablets”, “Inj → injection”, “Amp → ampoule”), but keep both expanded and abbreviated forms when relevant.
- Keep all numeric attributes EXACT (capacity, size, version). Also add common unit variants (e.g., “gb” and “gbyte”). Expand or clarify where needed.
- No sentences, no marketing, no stopwords, no explanations. Keep each keyword short (≤4 words).
- Focus only on metadata that could plausibly exist for this product in catalog descriptions.

Return at least 5–10 concise, search-oriented keywords that maximize retrieval accuracy between catalog data and real catalog text.

Output schema (Pydantic):

```python
from pydantic import BaseModel


class EnrichmentMetadata(BaseModel):
    entry1_enriched_metadata_keywords: list[str]
    entry2_enriched_metadata_keywords: list[str]
    entry3_enriched_metadata_keywords: list[str]
    entry4_enriched_metadata_keywords: list[str]
    entry5_enriched_metadata_keywords: list[str]
```
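Because each enrichment call returns up to five keyword lists keyed by position, the outputs must be mapped back to their catalog entries before indexing. The Python sketch below shows one way to do this; the ` | ` separator, the deduplication rule, and the function name are assumptions, since the paper does not specify how keywords are concatenated with the original text. It expects the parsed output as a plain dictionary (for example, what `model_dump()` returns for a Pydantic v2 model) so that it runs with the standard library alone.

```python
from typing import Dict, List

MAX_ENTRIES = 5  # the EnrichmentMetadata schema has five entry slots


def build_enriched_texts(batch: List[str], output: Dict[str, List[str]]) -> List[str]:
    """Append each entry's new keywords to its original catalog text."""
    if len(batch) > MAX_ENTRIES:
        raise ValueError(f"at most {MAX_ENTRIES} entries per enrichment call")
    texts: List[str] = []
    for i, entry in enumerate(batch, start=1):
        keywords = output.get(f"entry{i}_enriched_metadata_keywords", [])
        seen = set(entry.lower().split())  # single words already in the entry
        extra: List[str] = []
        for kw in keywords:
            key = kw.strip().lower()
            if key and key not in seen:  # skip blanks, bare entry words, repeats
                seen.add(key)
                extra.append(kw.strip())
        texts.append(f"{entry} | {', '.join(extra)}" if extra else entry)
    return texts
```

The original text is kept verbatim at the front, so exact SKU tokens remain available to the BM25 index.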

A.2. Query Synthesizer (Phase 2 — Runtime)

Prompt:

You are a Query Synthesizer for Product Matching in a vector-search pipeline. From a single product description, produce FIVE diverse, rich, high-recall queries to retrieve the best-matching product from a vector store of catalog lines.

- Do NOT invent attributes. Use ONLY info present in the input.
- Include every product/model code, sizes/dimensions, versions, and pack/count EXACTLY as they appear (when present). If absent, omit.
- Exclude invoice meta: lots, discounts, prices, VAT, order numbers, dates, addresses, loyalty/offer text, etc.
- Always preserve original script, casing, and diacritics for brand/model tokens; if you add an expansion, KEEP the original too.
- Queries must be DENSE, INFORMATIVE (no minimal queries) and meaningfully different (avoid trivial rephrasings).

DIVERSITY POLICY (pick the most helpful variations based on the product). Across the five queries, ensure you cover several of the following:

- Category and form synonyms.
- Acronym expansions or contracted/long-form variants.
- Units/number/symbol/notation formatting variants found in input (e.g., “500 mg”/“500mg”, “2 x 500 g”/“2x500g”).
- Packaging synonyms (add 1–2 besides the original).

Output schema (Pydantic):

```python
from typing import List

from pydantic import BaseModel, Field


class SearchQueries(BaseModel):
    search_queries: List[str] = Field(
        ..., description="A list of 5 different search queries"
    )
```
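The prompt's hard constraints can also be checked after parsing. The hypothetical Python sketch below verifies that exactly five distinct queries were returned and that every code-like token of the input reappears verbatim in each query. A “code” is defined heuristically as a token containing both a digit and an uppercase letter (such as a model number), so lowercase unit strings like “500mg” are exempt from the check because the prompt itself permits reformatting them. Both the heuristic and the per-query requirement are assumptions, as the prompt does not say how its rules are enforced.

```python
import re
from typing import List


def code_tokens(text: str) -> List[str]:
    """Heuristic: tokens containing a digit and an uppercase letter."""
    tokens = re.findall(r"[\w-]+", text)
    return [
        t for t in tokens
        if any(c.isdigit() for c in t) and any(c.isupper() for c in t)
    ]


def check_queries(product_line: str, queries: List[str], expected: int = 5) -> List[str]:
    """Return a list of detected problems; an empty list means all checks passed."""
    problems: List[str] = []
    if len(queries) != expected:
        problems.append(f"expected {expected} queries, got {len(queries)}")
    normalized = [" ".join(q.lower().split()) for q in queries]
    if len(set(normalized)) != len(normalized):
        problems.append("duplicate queries")
    for code in code_tokens(product_line):
        for i, query in enumerate(queries, start=1):
            if code not in query:  # case-sensitive substring test
                problems.append(f"query {i} is missing code {code!r}")
    return problems
```

A non-empty result could trigger a retry of the Query Synthesizer call.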

A.3. Evaluator / Reranker (Phase 2 — Runtime, Final Stage)

Prompt:

You are a Product Match Evaluator. Your goal is to identify and rank the best product matches for a given product line.

INPUTS: (1) The original product line. (2) Up to 35 retrieved catalog lines (duplicates possible).

TASK: Evaluate all candidates and return the top three most relevant catalog lines.

EVALUATION PRIORITY (in order of importance):

1. Exact matches of product type or name.
2. Exact matches of brand name (brand text must match exactly if present).
3. Exact matches of product code(s) (e.g., SKU, EAN, or internal codes).
4. High textual similarity in product type or product name.
5. Exact matches or compatible values for size/dimensions or pack/count.
6. Other contextual or descriptive similarity.

INSTRUCTIONS:

- Always return the three best candidates, ranked from most to least relevant.
- If no exact matches exist, return the three closest ones based on partial or semantic similarity.
- If a candidate matches at least the product type or name, it is valid for ranking.
- Never fabricate or modify product text; use the catalog lines as provided.
- Focus on precision and meaningful relevance rather than sufficiency.

Output schema (Pydantic):

```python
from typing import List

from pydantic import BaseModel, Field


class Candidates(BaseModel):
    top_3_candidates: List[str] = Field(
        ...,
        description="The top 3 candidates to the OCR-extracted "
        "product line from the vector store catalog",
    )
```
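Since the evaluator must return catalog lines verbatim, a post-processing guard can reject anything not present in the retrieved pool. The Python sketch below is a hypothetical safeguard, not part of the described system: it keeps only exact matches from the pool in the order the LLM gave them, removes repeats, and, as an assumed fallback, tops the list up from retrieval order when fewer than three valid lines remain.

```python
from typing import List, Sequence


def guard_top3(llm_candidates: Sequence[str], pool: Sequence[str], n: int = 3) -> List[str]:
    """Keep verbatim pool lines from the LLM output, then pad from the pool."""
    allowed = set(pool)
    out: List[str] = []
    for cand in llm_candidates:
        if cand in allowed and cand not in out:
            out.append(cand)  # accept only unmodified catalog lines
    for cand in pool:  # hypothetical fallback: fill gaps in retrieval order
        if len(out) >= n:
            break
        if cand not in out:
            out.append(cand)
    return out[:n]
```

This enforces the prompt's "never fabricate or modify product text" rule in code rather than relying on the model alone.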

References

- Adeyemi, M.; Oladipo, A.; Pradeep, R.; Lin, J. Zero-Shot Cross-Lingual Reranking with Large Language Models for Low-Resource Languages. arXiv 2023, arXiv:2312.16159. Available online: https://arxiv.org/abs/2312.16159v1.

- Bardelli, C.; Rondinelli, A.; Vecchio, R.; Figini, S. Automatic Electronic Invoice Classification Using Machine Learning Models. Machine Learning and Knowledge Extraction 2020, 2(4), 617–629.

- Bode, C.; Burkhart, D.; Schültken, R.; Vollmer, M. Future of Procurement. In Global Logistics and Supply Chain Strategies for the 2020s: Vital Skills for the Next Generation; Merkert, R., Hoberg, K., Eds.; Springer International Publishing, 2023; pp. 261–276.

- Cohen, W. W.; Ravikumar, P.; Fienberg, S. E. A comparison of string distance metrics for name-matching tasks. IIWeb 2003, 3, 73–78. Available online: https://pubs.dbs.uni-leipzig.de/dc/files/Cohen2003Acomparisonofstringdistance.pdf.

- Cristani, M.; Bertolaso, A.; Scannapieco, S.; Tomazzoli, C. Future paradigms of automated processing of business documents. International Journal of Information Management 2018, 40, 67–75.

- Flechsig, C.; Anslinger, F.; Lasch, R. Robotic Process Automation in purchasing and supply management: A multiple case study on potentials, barriers, and implementation. Journal of Purchasing and Supply Management 2022, 28(1), 100718.

- Grabski, S. V.; Leech, S. A.; Schmidt, P. J. A review of ERP research: A future agenda for accounting information systems. Journal of Information Systems 2011, 25(1), 37–78. Available online: https://publications.aaahq.org/jis/article-abstract/25/1/37/1563.

- Ha, H. T.; Horák, A. Information extraction from scanned invoice images using text analysis and layout features. Signal Processing: Image Communication 2022, 102, 116601.

- Huang, F.; Vasarhelyi, M. A. Applying robotic process automation (RPA) in auditing: A framework. International Journal of Accounting Information Systems 2019, 35, 100433.

- Huang, Y.; Lv, T.; Cui, L.; Lu, Y.; Wei, F. LayoutLMv3: Pre-training for Document AI with Unified Text and Image Masking. arXiv 2022, arXiv:2204.08387.

- Jeong, Y.-B.; Seo, H.; Kim, Y.-H.; Kim, W.-Y. Retrieval-augmented visual parcel invoice understanding transformer for address correction. Engineering Applications of Artificial Intelligence 2025, 158, 111542.

- Kim, G.; Hong, T.; Yim, M.; Nam, J.; Park, J.; Yim, J.; Hwang, W.; Yun, S.; Han, D.; Park, S. OCR-free Document Understanding Transformer. arXiv 2022, arXiv:2111.15664.

- Kim, J.-I.; Shunk, D. L. Matching indirect procurement process with different B2B e-procurement systems. Computers in Industry 2004, 53(2), 153–164.

- Köpcke, H.; Thor, A.; Rahm, E. Evaluation of entity resolution approaches on real-world match problems. Proceedings of the VLDB Endowment 2010, 3(1–2), 484–493.

- Krieger, F.; Drews, P.; Funk, B. Automated invoice processing: Machine learning-based information extraction for long tail suppliers. Intelligent Systems with Applications 2023, 20, 200285.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.; Rocktäschel, T.; Riedel, S.; Kiela, D. Retrieval-augmented generation for knowledge-intensive NLP tasks. In Proceedings of the 34th International Conference on Neural Information Processing Systems (NIPS ’20); 2020; pp. 9459–9474.

- Li, Y.; Li, J.; Suhara, Y.; Doan, A.; Tan, W.-C. Deep Entity Matching with Pre-Trained Language Models. Proceedings of the VLDB Endowment 2020, 14(1), 50–60.

- Liu, H.; Li, C.; Wu, Q.; Lee, Y. J. Visual Instruction Tuning. arXiv 2023, arXiv:2304.08485.

- Luo, C.; Shen, Y.; Zhu, Z.; Zheng, Q.; Yu, Z.; Yao, C. LayoutLLM: Layout Instruction Tuning with Large Language Models for Document Understanding. arXiv 2024, arXiv:2404.05225.

- Luo, S.; Yu, J. SGFNet: A semantic graph-based multimodal network for financial invoice information extraction. Expert Systems with Applications 2024, 258, 125156.

- Ma, X.; Gong, Y.; He, P.; Zhao, H.; Duan, N. Query Rewriting in Retrieval-Augmented Large Language Models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing; Bouamor, H., Pino, J., Bali, K., Eds.; Association for Computational Linguistics, 2023; pp. 5303–5315.

- Maurya, C. K.; Gantayat, N.; Dechu, S.; Horvath, T. Online Similarity Learning with Feedback for Invoice Line Item Matching. arXiv 2020, arXiv:2001.00288.

- Mehrbod, A.; Zutshi, A.; Grilo, A.; Jardim-Goncalves, R. Application of a semantic product matching mechanism in open tendering e-marketplaces. Journal of Public Procurement 2018, 18(1), 14–30. Available online: https://www.emerald.com/insight/content/doi/10.1108/jopp-03-2018-002/full/html.

- Mistiawan, A.; Suhartono, D. Product Matching with Two-Branch Neural Network Embedding. Journal Européen des Systèmes Automatisés 2024, 57(4). Available online: https://search.ebscohost.com/login.aspx?direct=true&profile=ehost&scope=site&authtype=crawler&jrnl=12696935&AN=179548284&h=XUVxwKQFHBME89obu5I7K7Q70spwBavC2gv5K8RxxfrYCNUNy%2FEIpSOfPIXGO37dbB4f0a9%2BHaX94RHACIPDsg%3D%3D&crl=c.

- Mudgal, S.; Li, H.; Rekatsinas, T.; Doan, A.; Park, Y.; Krishnan, G.; Deep, R.; Arcaute, E.; Raghavendra, V. Deep Learning for Entity Matching: A Design Space Exploration. In Proceedings of the 2018 International Conference on Management of Data (SIGMOD ’18); 2018; pp. 19–34.

- Ng, K. K. H.; Chen, C.-H.; Lee, C. K. M.; Jiao, J. (Roger); Yang, Z.-X. A systematic literature review on intelligent automation: Aligning concepts from theory, practice, and future perspectives. Advanced Engineering Informatics 2021, 47, 101246.

- Nigam, P.; Song, Y.; Mohan, V.; Lakshman, V.; Ding, W.; Shingavi, A.; Teo, C. H.; Gu, H.; Yin, B. Semantic Product Search. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining; 2019; pp. 2876–2885.

- O’Leary, D. E. Enterprise resource planning systems: Systems, life cycle, electronic commerce, and risk; Cambridge University Press, 2000. Available online: https://books.google.com/books?hl=en&lr=&id=7fzMFG-tCmkC&oi=fnd&pg=PP11&dq=Enterprise+Resource+Planning+Systems+O%27Leary,+D.+E.+(2000)&ots=9a4Vlr0Y9P&sig=dRJS6XswJReudxUtffBskikKTNA.

- OpenAI; Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F. L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; Avila, R.; Babuschkin, I.; Balaji, S.; Balcom, V.; Baltescu, P.; Bao, H.; Bavarian, M.; Belgum, J.; Zoph, B. GPT-4 Technical Report. arXiv 2024, arXiv:2303.08774.

- Palm, R. B.; Winther, O.; Laws, F. CloudScan—A configuration-free invoice analysis system using recurrent neural networks. arXiv 2017, arXiv:1708.07403.

- Peeters, R.; Bizer, C. Supervised Contrastive Learning for Product Matching. In Companion Proceedings of the Web Conference 2022 (WWW ’22); 2022; pp. 248–251.

- Peeters, R.; Steiner, A.; Bizer, C. Entity Matching using Large Language Models. arXiv 2024, arXiv:2310.11244.

- Raina, V.; Gales, M. Question-Based Retrieval using Atomic Units for Enterprise RAG. arXiv 2024, arXiv:2405.12363. Available online: https://arxiv.org/abs/2405.12363v2.

- Robertson, S. E.; Walker, S.; Jones, S.; Hancock-Beaulieu, M. M.; Gatford, M. Okapi at TREC-3. NIST Special Publication SP 1995, 109, 109. Available online: https://books.google.com/books?hl=en&lr=&id=j-NeLkWNpMoC&oi=fnd&pg=PA109&dq=Okapi+at+TREC-3&ots=YkE6HhAsME&sig=kDgCD0Ysml73EXihKaq8_229ZBQ.

- Salton, G.; Wong, A.; Yang, C. S. A vector space model for automatic indexing. Communications of the ACM 1975, 18(11), 613–620.

- Schlegel, D.; Fundanovic, O.; Kraus, P. Rating Risks in Robotic Process Automation (RPA) Projects: An Expert Assessment Using an Impact-Uncontrollability Matrix. Procedia Computer Science 2024, 239, 185–192 (CENTERIS – International Conference on ENTERprise Information Systems / ProjMAN - International Conference on Project MANagement / HCist - International Conference on Health and Social Care Information Systems and Technologies 2023).

- Šimsa, Š.; Šulc, M.; Uřičář, M.; Patel, Y.; Hamdi, A.; Kocián, M.; Skalický, M.; Matas, J.; Doucet, A.; Coustaty, M.; Karatzas, D. DocILE Benchmark for Document Information Localization and Extraction. arXiv 2023, arXiv:2302.05658.

- Strohmer, M. F.; Easton, S.; Eisenhut, M.; Epstein, E.; Kromoser, R.; Peterson, E. R.; Rizzon, E. Digital in Procurement. In Disruptive Procurement: Winning in a Digital World; Strohmer, M. F., Easton, S., Eisenhut, M., Epstein, E., Kromoser, R., Peterson, E. R., Rizzon, E., Eds.; Springer International Publishing, 2020; pp. 49–76.

- Tang, G.; Xie, L.; Jin, L.; Wang, J.; Chen, J.; Xu, Z.; Wang, Q.; Wu, Y.; Li, H. MatchVIE: Exploiting Match Relevancy between Entities for Visual Information Extraction. arXiv 2021, arXiv:2106.12940.

- Tater, T.; Gantayat, N.; Dechu, S.; Jagirdar, H.; Rawat, H.; Guptha, M.; Gupta, S.; Strak, L.; Kiran, S.; Narayanan, S. AI Driven Accounts Payable Transformation. Proceedings of the AAAI Conference on Artificial Intelligence 2022, 36(11), 12405–12413.

- Tiwari, A. K.; Marak, Z. R.; Paul, J.; Deshpande, A. P. Determinants of electronic invoicing technology adoption: Toward managing business information system transformation. Journal of Innovation & Knowledge 2023, 8(3), 100366.

- Wagner, R. A.; Fischer, M. J. The String-to-String Correction Problem. J. ACM 1974, 21(1), 168–173.

- Wang, T.; Chen, X.; Lin, H.; Chen, X.; Han, X.; Wang, H.; Zeng, Z.; Sun, L. Match, Compare, or Select? An Investigation of Large Language Models for Entity Matching. arXiv 2024, arXiv:2405.16884.

- Xu, Y.; Li, M.; Cui, L.; Huang, S.; Wei, F.; Zhou, M. LayoutLM: Pre-training of Text and Layout for Document Image Understanding. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining; 2020; pp. 1192–1200.

- Zeakis, A.; Papadakis, G.; Skoutas, D.; Koubarakis, M. Pre-Trained Embeddings for Entity Resolution: An Experimental Analysis. Proceedings of the VLDB Endowment 2023, 16(9), 2225–2238.

[1] The three benchmarks are obtained from the official DeepMatcher dataset index at https://github.com/anhaidgroup/deepmatcher/blob/master/Datasets.md (Mudgal et al., 2018). Specifically, we use the Structured/Walmart-Amazon, Structured/Amazon-Google and Textual/Abt-Buy entries from that page.

| Dataset | Domain | Catalog Size (Rows) | Evaluated Queries | Catalog Construction Strategy (Preprocessing) |
|---|---|---|---|---|
| Amazon-Google | Software & Tech | 3,226 | 1,167 | Composite Field Construction: We concatenated the Title and Manufacturer fields. The logic explicitly checks if the manufacturer is already present in the title; if not, it is appended to ensure the embedding captures the brand identity, which is critical for matching software licenses. |
| Abt-Buy | Consumer Electronics | 1,092 | 1,028 | Single Field Indexing: This dataset contained high-quality, descriptive Names. We indexed the Name field directly as it contained sufficient signal (Brand + Model + Spec) to distinguish SKUs without additional concatenation. |
| Walmart-Amazon | General Retail | 22,074 | 962 | Conditional Attribute Injection: This was the most heterogeneous dataset. We implemented a conditional logic that analyzed the Title. If key attributes like Brand or Model Number were missing from the title string, they were injected from their respective columns. This mirrors the “data cleansing” phase often required in ERP migrations. |
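The three construction strategies in the table can be sketched as plain Python functions over a CSV row represented as a dictionary. The column names (`title`, `manufacturer`, `name`, `brand`, `modelno`) reflect our reading of the DeepMatcher files and should be checked against the actual headers; the case-insensitive substring test used to decide whether an attribute is already present in the title is likewise an assumption about the authors' logic.

```python
from typing import Dict


def _missing(value: str, title: str) -> bool:
    """True when a non-empty attribute does not already occur in the title."""
    value = value.strip()
    return bool(value) and value.lower() not in title.lower()


def amazon_google_text(row: Dict[str, str]) -> str:
    """Composite field: title, plus manufacturer if not already in the title."""
    title = row.get("title", "").strip()
    maker = row.get("manufacturer", "")
    return f"{title} {maker.strip()}" if _missing(maker, title) else title


def abt_buy_text(row: Dict[str, str]) -> str:
    """Single field: the descriptive name is indexed as-is."""
    return row.get("name", "").strip()


def walmart_amazon_text(row: Dict[str, str]) -> str:
    """Conditional injection: append brand and model number only when absent."""
    text = row.get("title", "").strip()
    for col in ("brand", "modelno"):
        value = row.get(col, "")
        if _missing(value, text):
            text = f"{text} {value.strip()}"
    return text
```

Missing values read with pandas arrive as `NaN` rather than empty strings and would need to be filled (e.g., with `""`) before calling these functions.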

| Dataset | Method | Metric | Raw Catalog | Enriched Catalog | Proposed System |
|---|---|---|---|---|---|
| Amazon-Google | Dense | R@1 | 68.21% | 70.01% | – |
| Amazon-Google | Sparse | R@1 | 65.98% | 63.58% | – |
| Amazon-Google | Hybrid | R@1 | 68.04% | 68.04% | 72.24% |
| Amazon-Google | Dense | R@3 | 90.75% | 92.63% | – |
| Amazon-Google | Sparse | R@3 | 86.38% | 86.29% | – |
| Amazon-Google | Hybrid | R@3 | 89.55% | 92.12% | 93.14% |
| Abt-Buy | Dense | R@1 | 86.38% | 86.22% | – |
| Abt-Buy | Sparse | R@1 | 68.68% | 70.14% | – |
| Abt-Buy | Hybrid | R@1 | 74.32% | 79.38% | 94.07% |
| Abt-Buy | Dense | R@3 | 96.01% | 96.38% | – |
| Abt-Buy | Sparse | R@3 | 83.95% | 86.58% | – |
| Abt-Buy | Hybrid | R@3 | 91.25% | 95.33% | 97.96% |
| Walmart-Amazon | Dense | R@1 | 77.23% | 75.47% | – |
| Walmart-Amazon | Sparse | R@1 | 77.13% | 74.84% | – |
| Walmart-Amazon | Hybrid | R@1 | 78.38% | 75.68% | 84.30% |
| Walmart-Amazon | Dense | R@3 | 94.39% | 93.76% | – |
| Walmart-Amazon | Sparse | R@3 | 94.39% | 93.76% | – |
| Walmart-Amazon | Hybrid | R@3 | 95.74% | 95.74% | 97.30% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.