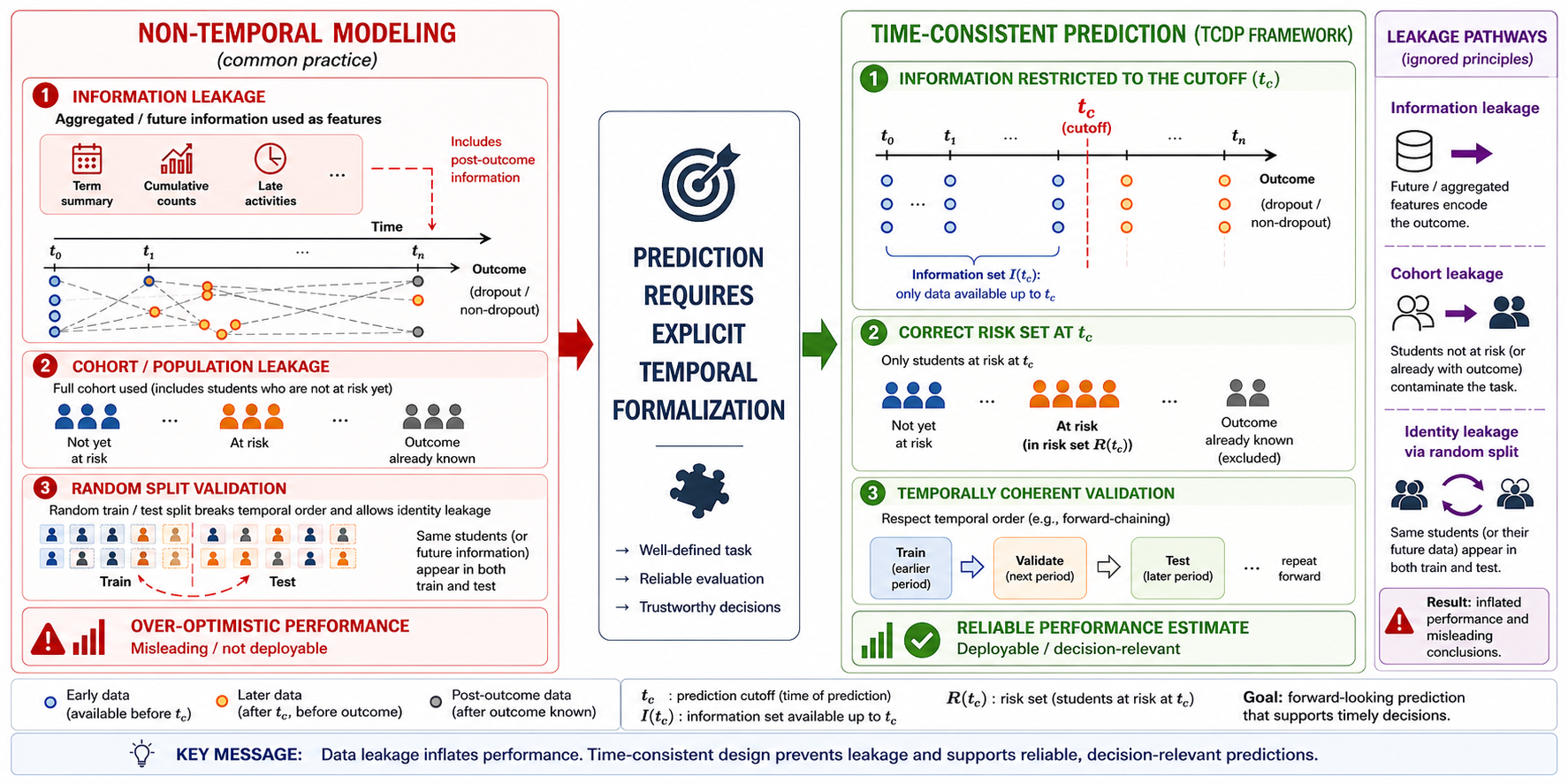

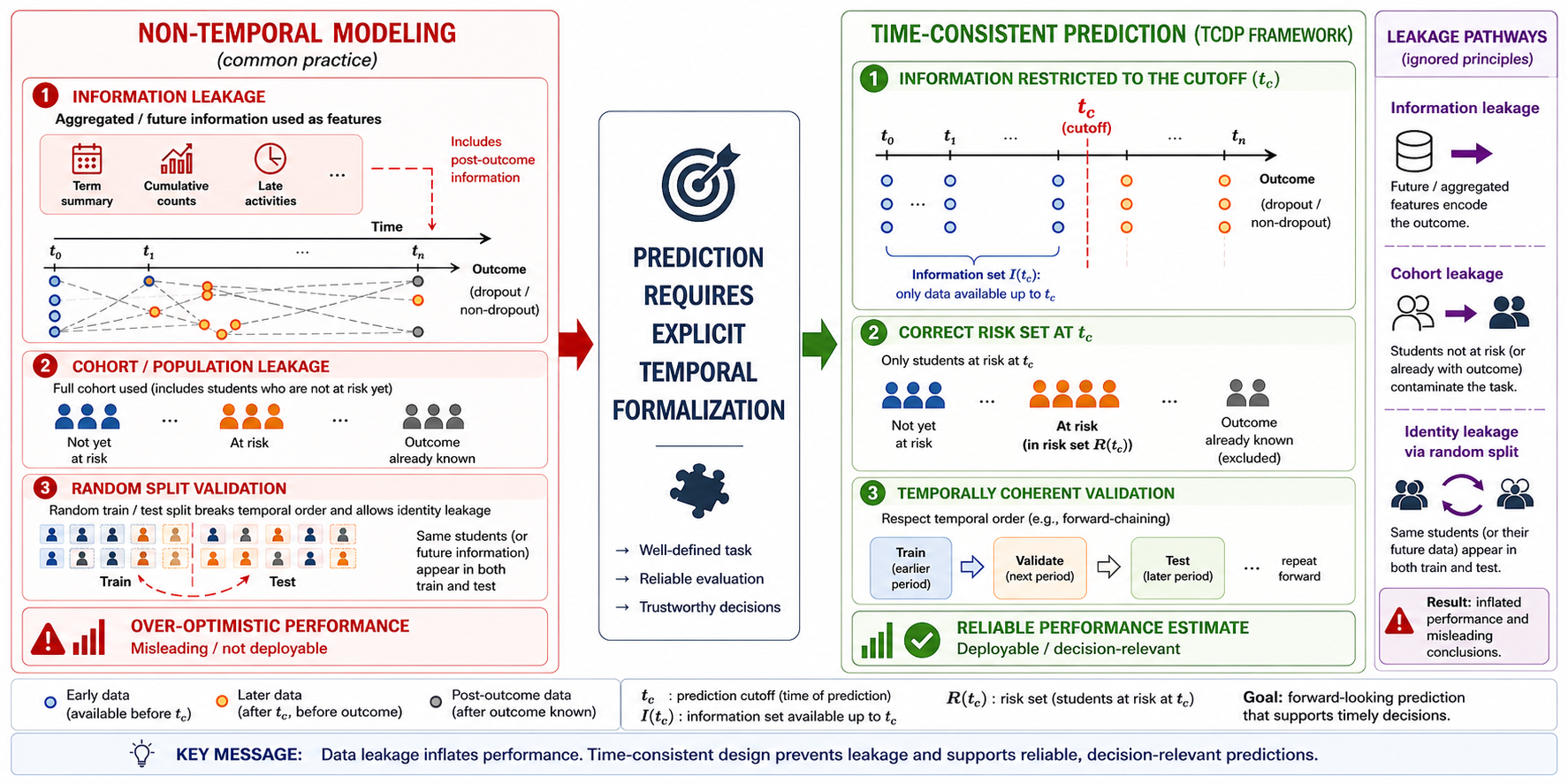

Data leakage represents a critical methodological challenge in machine learning–based predictive modeling, as it can inflate performance estimates and lead to misleading interpretations. In higher education contexts, where predictive models increasingly support institutional decision-making, the temporal and structural conditions under which predictions are generated and evaluated are often insufficiently specified. This study conceptualizes predictive modeling as a temporally formalized decision task and identifies four core design conditions: explicit specification of the prediction cutoff, temporal restriction of the information set, consistent definition of the at-risk population, and temporally coherent validation. The empirical analysis combines a structured review of recent dropout prediction studies with a controlled experimental demonstration based on longitudinal student data. The review shows that the joint formalization of these conditions remains uncommon, with many models relying on retrospective and temporally unspecified configurations. The experimental results demonstrate that improper validation in longitudinal data structures can produce systematic performance inflation, particularly through identity leakage, and that models with higher representational capacity exploit such leakage more effectively. These findings indicate that predictive performance cannot be interpreted independently of the temporal and structural definition of the prediction task. The proposed framework provides a methodological basis for evaluating predictive models in higher education and other domains where decisions depend on temporally grounded predictions.
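To make two of the design conditions concrete, the following is a minimal sketch, not the study's actual pipeline. It assumes a hypothetical longitudinal table with one row per student per week; the names `Record`, `restrict_to_cutoff`, `random_row_split`, `grouped_split`, and `shared_students`, as well as the synthetic data, are illustrative assumptions. It contrasts a row-level random split, which lets the same student appear on both sides (identity leakage), with a student-level split applied after restricting the information set to the prediction cutoff.

```python
import random
from typing import NamedTuple


class Record(NamedTuple):
    student_id: int
    week: int        # observation time in weeks since enrolment (hypothetical)
    credits: float   # hypothetical feature value


def restrict_to_cutoff(records, cutoff_week):
    """Keep only observations available at or before the prediction cutoff."""
    return [r for r in records if r.week <= cutoff_week]


def random_row_split(records, test_fraction, seed=0):
    """Naive row-level split: rows of one student can land on both sides."""
    rng = random.Random(seed)
    shuffled = list(records)
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def grouped_split(records, test_fraction, seed=0):
    """Student-level split: all rows of a student go to exactly one side."""
    rng = random.Random(seed)
    ids = sorted({r.student_id for r in records})
    rng.shuffle(ids)
    n_test = max(1, int(len(ids) * test_fraction))
    test_ids = set(ids[:n_test])
    train = [r for r in records if r.student_id not in test_ids]
    test = [r for r in records if r.student_id in test_ids]
    return train, test


def shared_students(train, test):
    """Students whose rows appear in both partitions (identity leakage)."""
    return {r.student_id for r in train} & {r.student_id for r in test}


# Synthetic data: 22 students observed weekly for 10 weeks.
records = [Record(s, w, float(s + w)) for s in range(22) for w in range(1, 11)]

# Temporal restriction of the information set: predict at week 6.
visible = restrict_to_cutoff(records, cutoff_week=6)

naive_train, naive_test = random_row_split(visible, test_fraction=0.25)
group_train, group_test = grouped_split(visible, test_fraction=0.25)

print("students shared by naive split:  ", len(shared_students(naive_train, naive_test)))
print("students shared by grouped split:", len(shared_students(group_train, group_test)))
```

In this sketch the grouped split shares no students between partitions by construction, whereas the row-level split does, which is the structural condition under which a flexible model could memorize student identity rather than learn dropout risk. A temporally coherent validation would additionally order the partitions in time, which this sketch does not attempt.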