Submitted: 02 May 2026

Posted: 05 May 2026

Abstract

Keywords:

1. Introduction

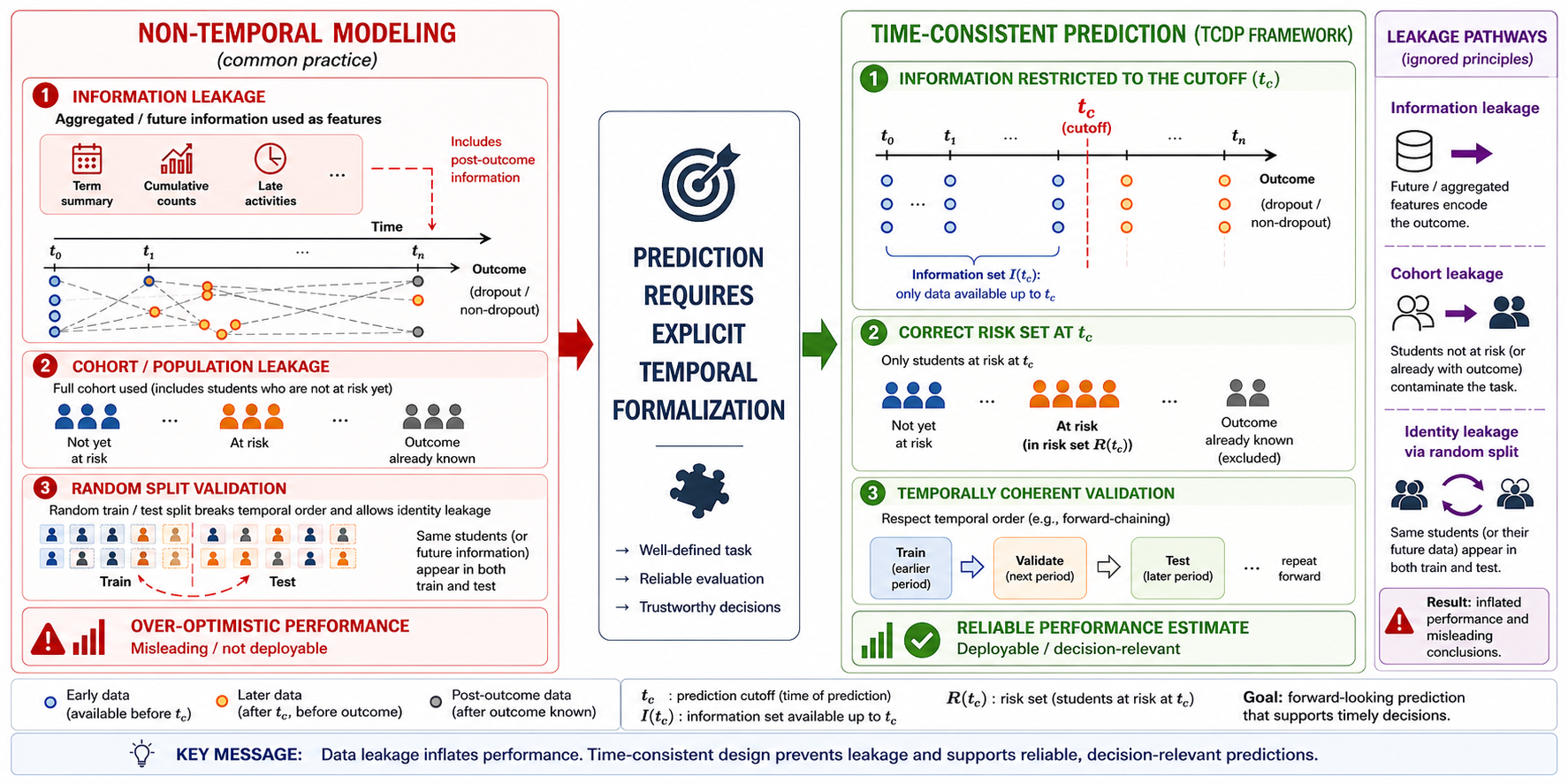

2. Conceptual and Methodological Framework

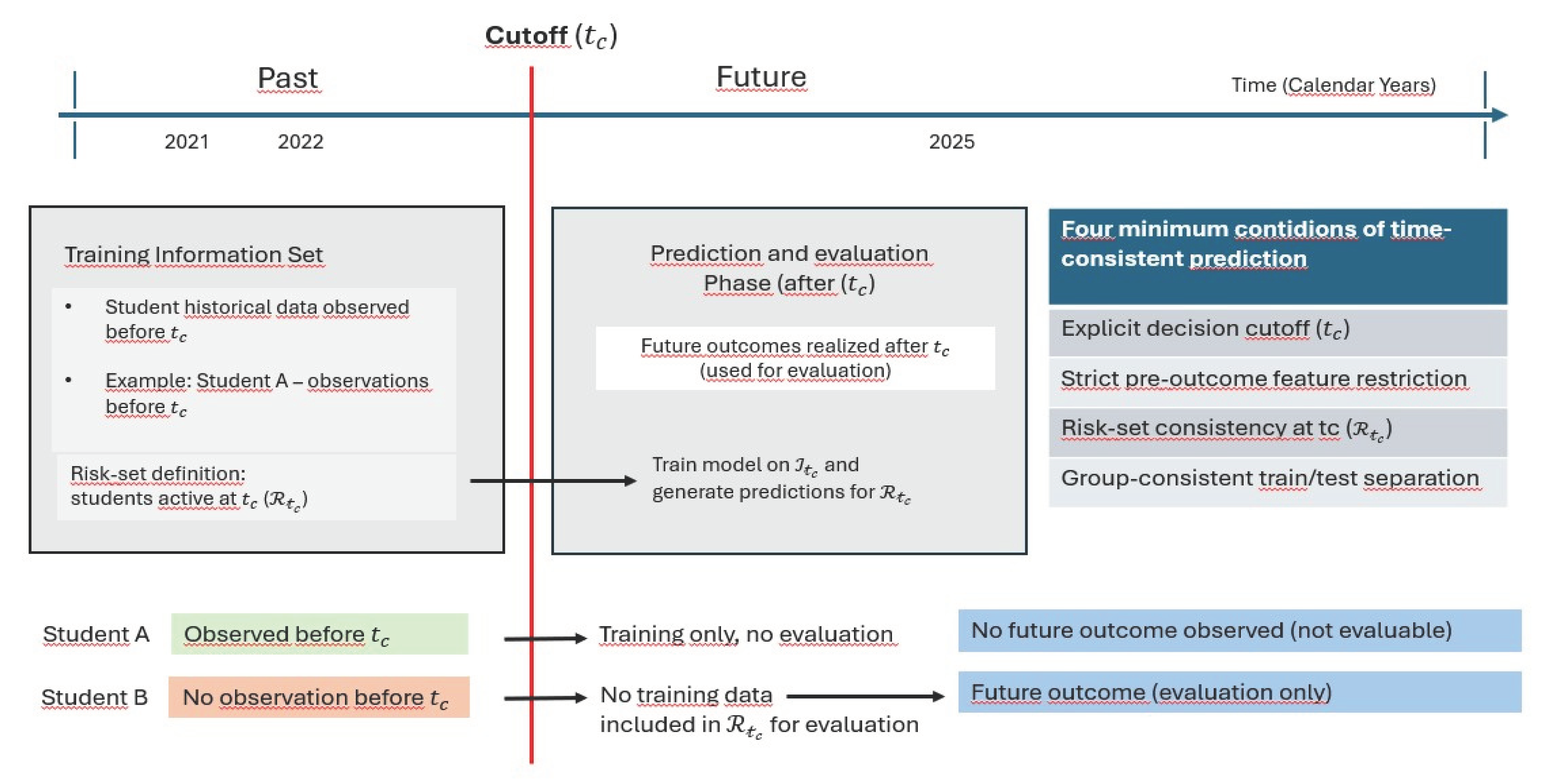

2.1. Prediction as a Time-Formalised Task

- (i) Prediction Cutoff ($t_c$)

- (ii) Information Set

- (iii) Specification of the Risk Set ($R(t_c)$)

- (iv) Temporal and Cohort-Level Consistency (Temporal Hierarchy)

2.2. Consequences of Violating the Core Conditions

2.2.1. Absence of an Explicit Cutoff

2.2.2. Inconsistency of the Information Set

2.2.3. Violation of Risk-Set Consistency

2.2.4. Violation of Temporal Hierarchy

2.3. Data Leakage as Structural Exposure

2.3.1. Temporal Leakage

2.3.2. Identity Leakage

2.3.3. The Impact of Data Leakage on the Interpretation of Predictive Performance

3. Methodological Patterns in Dropout Prediction: A Literature-Based Analysis

3.1. Absence of an Explicit Prediction Cutoff

3.2. Predictive Framing and Retrospective Implementation

3.3. Information Set Configuration and Structural Inconsistency

3.4. Risk-Set Definition and Population Consistency

3.5. Validation Strategies and Temporal Hierarchy

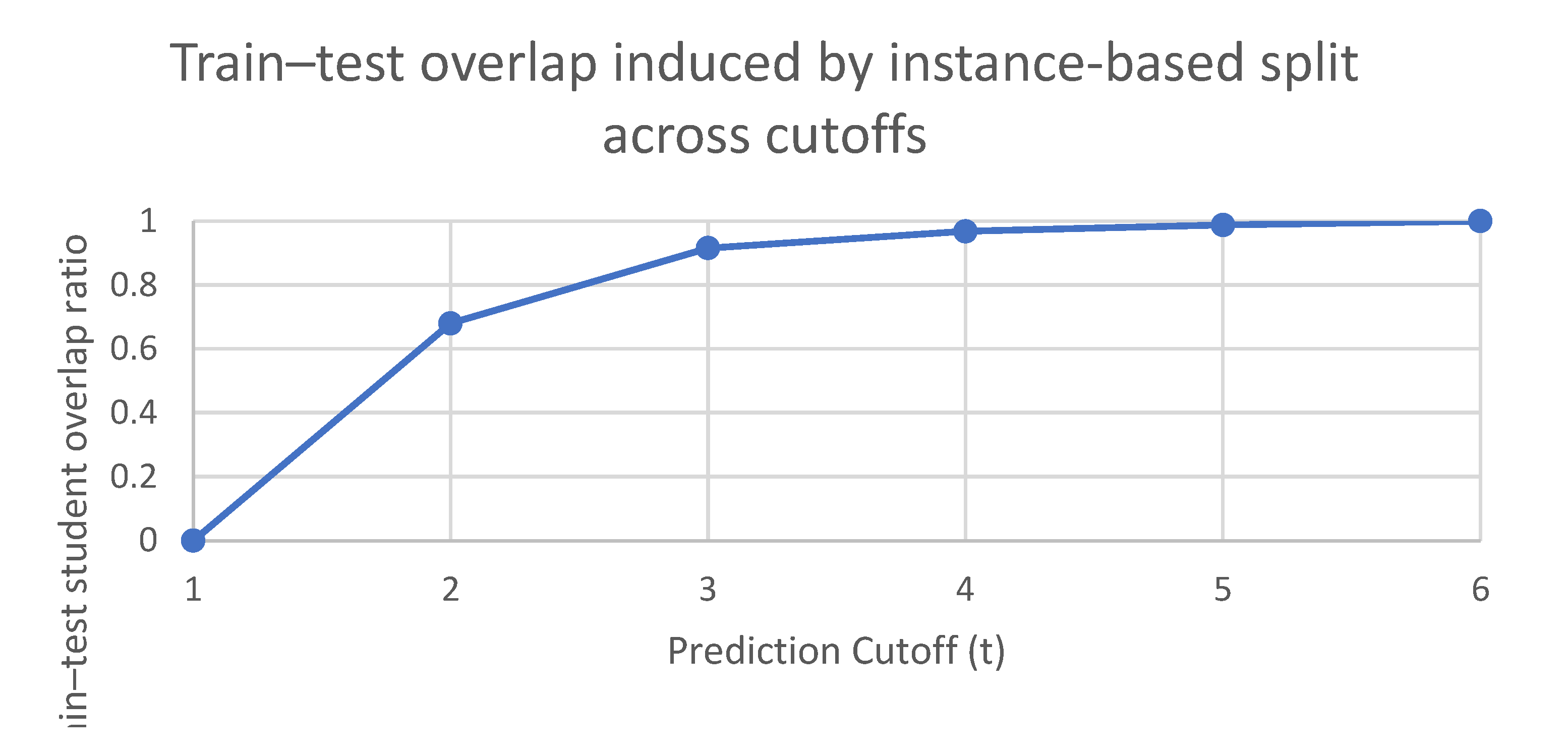

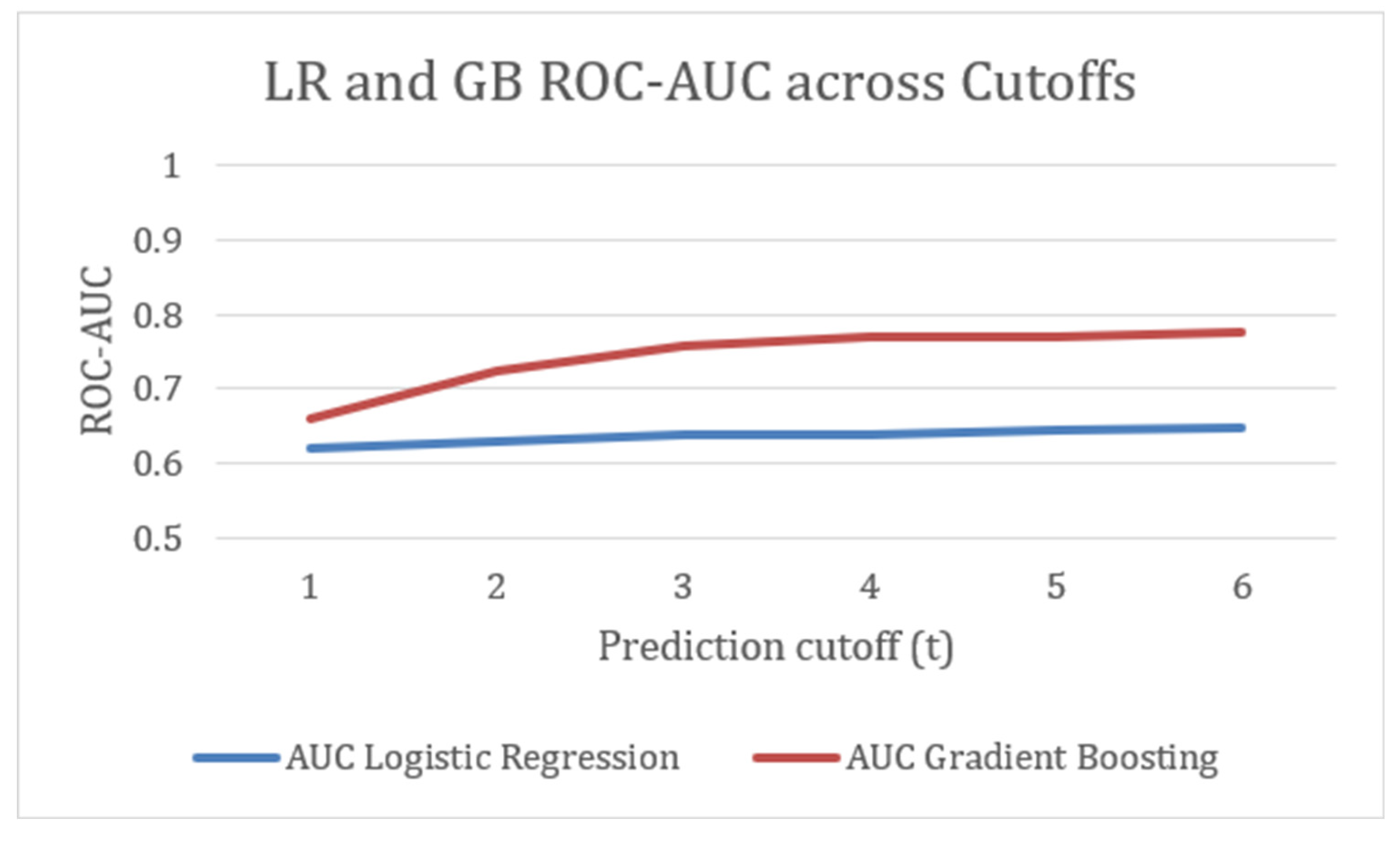

4. Empirical Demonstration: Identity Leakage in Longitudinal Data with Static Predictors

4.1. Experimental Design and Data Structure

4.2. Results and Analysis of Performance Inflation

5. Conclusion

Data Availability Statement

References

- Bognár, L.; Fauszt, T. Factors and conditions that affect the goodness of machine learning models for predicting the success of learning. Comput. Educ. Artif. Intell. 2022, 3, 100100.

- Cho, C.-H.; Yu, Y.-W.; Kim, H.-G. A study on dropout prediction for university students using machine learning. Appl. Sci. 2023, 13, 12004.

- Okoye, K.; Nganji, J.T.; Escamilla, J.; Hosseini, S. Machine learning model (RG-DMML) and ensemble algorithm for prediction of students’ retention and graduation in education. Comput. Educ. Artif. Intell. 2024, 6, 100205.

- Rabelo, A.; Rodrigues, M.W.; Nobre, C.; Isotani, S.; Zárate, L. Educational data mining and learning analytics: A review of educational management in e-learning. Inf. Discov. Deliv. 2024, 52(2), 149–163.

- Bouihi, B.; Bousselham, A.; Aoula, E.; Ennibras, F.; Deraoui, A. Prediction of higher education student dropout based on regularized regression models. Eng. Technol. Appl. Sci. Res. 2024, 14, 17811–17815.

- Hassan, M.A.; Muse, A.H.; Nadarajah, S. Predicting student dropout rates using supervised machine learning: Insights from the 2022 National Education Accessibility Survey in Somaliland. Appl. Sci. 2024, 14, 7593.

- Vaarma, M.; Li, H. Predicting student dropouts with machine learning: An empirical study in Finnish higher education. Technol. Soc. 2024, 76, 102474.

- Villar, A.; Robledo Velini de Andrade, C. Supervised machine learning algorithms for predicting student dropout and academic success: A comparative study. Discov. Artif. Intell. 2024, 4, 2.

- Ouyang, F.; Zheng, L.; Jiao, P. Artificial intelligence in online higher education: A systematic review of empirical research from 2011 to 2020. Educ. Inf. Technol. 2022, 27, 7893–7925.

- Kaufman, S.; Rosset, S.; Perlich, C.; Stitelman, O. Leakage in data mining: Formulation, detection, and avoidance. ACM Trans. Knowl. Discov. Data 2012, 6, 1–21.

- Kapoor, S.; Narayanan, A. Leakage and the reproducibility crisis in machine-learning-based science. Patterns 2023, 4, 100804.

- Wen, J.; Thibeau-Sutre, E.; Diaz-Melo, M.; Samper-González, J.; Routier, A.; Bottani, S.; Colliot, O. Convolutional neural networks for Alzheimer’s disease classification on MRI: The leakage conundrum and a framework for design and evaluation. Nat. Commun. 2020, 11, 2441.

- Apicella, A.; Isgrò, F.; Prevete, R. Don’t push the button! Exploring data leakage risks in machine learning and transfer learning. Artif. Intell. Rev. 2025, 58, 339.

- Cawley, G.C.; Talbot, N.L. On over-fitting in model selection and subsequent selection bias in performance evaluation. J. Mach. Learn. Res. 2010, 11, 2079–2107.

- IBM. What is data leakage? 2023. Available online: https://www.ibm.com/topics/data-leakage (accessed on XX Month 2026).

- Sayre, R.; Costello, R.P. Data leakage in health outcomes prediction: A systematic review. J. Biomed. Inform. 2022, 129, 104044.

- Hand, D.J. Classifier technology and the illusion of progress. Stat. Sci. 2006, 21, 2–14.

- Lipton, Z.C.; Steinhardt, J. Troubling trends in machine learning scholarship: Some ML papers suffer from flaws that could mislead the public and stymie future research. Queue 2019, 17(1), 45–77.

- Abdullah; Ali, R.H.; Koutaly, R.; Khan, T.A.; Ahmad, I. Enhancing student retention: Predictive machine learning models for identifying and preventing university dropout. In Proceedings of the 2025 International Conference on Innovation in Artificial Intelligence and Internet of Things (AIIT), Jeddah, Saudi Arabia, 2025; pp. 1–6.

- Barros, B.M.; do Nascimento, H.A.D.; Guedes, R.; Monsueto, S.E. Evaluating splitting approaches in the context of student dropout prediction. arXiv 2023, arXiv:2305.08600.

- Bond, M.; Khosravi, H.; De Laat, M.; Bergdahl, N.; Negrea, V.; Oxley, E.; Pham, P.; Chong, S.W.; Siemens, G. A meta systematic review of artificial intelligence in higher education: A call for increased ethics, collaboration, and rigour. Int. J. Educ. Technol. High. Educ. 2024, 21, 4.

- Dake, D.K.; Buabeng-Andoh, C. Using machine learning techniques to predict learner drop-out rate in higher educational institutions. Mob. Inf. Syst. 2022, 2022, 2670562.

- Gašević, D.; Dawson, S.; Siemens, G. Let’s not forget: Learning analytics are about learning. Comput. Educ. 2015, 82, 64–71.

- Brooks, C.; Thompson, C. Predictive modelling in teaching and learning. In Handbook of Learning Analytics; Lang, C., Siemens, G., Wise, A., Gašević, D., Eds.; Society for Learning Analytics Research, 2017; pp. 61–73.

- Roberts, D.R.; Bahn, V.; Ciuti, S.; Boyce, M.S.; Elith, J.; Guillera-Arroita, G.; Thuiller, W. Cross-validation strategies for data with temporal, spatial, or hierarchical structure. Ecography 2017, 40, 913–929.

- Arlot, S.; Celisse, A. A survey of cross-validation procedures for model selection. Stat. Surv. 2010, 4, 40–79.

- Bergmeir, C.; Hyndman, R.J.; Koo, B. A note on the validity of cross-validation for evaluating autoregressive time series forecasting. Comput. Stat. Data Anal. 2018, 120, 70–83.

- Bollen, K.A.; Brand, J.E. A general panel model with random and fixed effects: A structural equations approach. Soc. Forces 2010, 89, 1–34.

- Cerqua, A.; Letta, M.; Pinto, G. On the (mis)use of machine learning with panel data. Oxf. Bull. Econ. Stat. 2025, 1–13.

- Shmueli, G. To explain or to predict? Stat. Sci. 2010, 25(3), 289–310.

- Wooldridge, J.M. Econometric Analysis of Cross Section and Panel Data, 2nd ed.; MIT Press, 2010.

- Knight, S.; Buckingham Shum, S.; Littleton, K. Epistemology, assessment, and learning analytics. In Proceedings of the Fourth International Conference on Learning Analytics and Knowledge (LAK ’14), 2014; pp. 129–138.

- Hofman, J.M.; Sharma, A.; Watts, D.J. Prediction and explanation in social systems. Science 2017, 355, 486–488.

- Fu, C.; Fang, Q. Curriculum-aware cognitive diagnosis via graph neural networks. Information 2025, 16, 996.

- Shmueli, G.; Koppius, O.R. Predictive analytics in information systems research. MIS Q. 2011, 35, 553–572.

- Hastie, T.; Tibshirani, R.; Friedman, J. The Elements of Statistical Learning: Data Mining, Inference, and Prediction, 2nd ed.; Springer, 2009.

- Kleinbaum, D.G.; Klein, M. Survival Analysis: A Self-Learning Text, 3rd ed.; Springer, 2012.

| Component | Designation | Definition / Requirement |
|---|---|---|
| (i) | Prediction Cutoff ($t_c$) | The fixed time point at which prediction is issued. |
| (ii) | Information Set | […] |
| (iii) | Risk Set ($R(t_c)$) | […] for whom the outcome has not yet occurred |
| (iv) | Temporal Hierarchy | Preservation of temporal order during model training and evaluation: training data must precede test data chronologically; observations from the same individual must not overlap across sets; and information from later calendar periods must not be incorporated into earlier decision configurations. |
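
As an illustration of components (iii) and (iv), the following minimal Python sketch builds a risk set at a cutoff and checks two of the temporal-hierarchy requirements listed in the table above: chronological ordering of training and test data, and no shared individuals across sets. The record layouts `Student` and `Row`, the function names, and the use of integer periods are assumptions made for illustration and are not taken from the study. The sketch also assumes that a student whose outcome occurs exactly at the cutoff is no longer at risk. It does not check the third requirement (information from later calendar periods entering earlier decision configurations), which depends on how features are engineered.

```python
from typing import List, NamedTuple, Optional, Set


class Student(NamedTuple):
    student_id: str
    entry_period: int            # period in which the student enrolled (assumed field)
    event_period: Optional[int]  # period of the outcome, None if not observed


class Row(NamedTuple):
    student_id: str
    period: int                  # calendar period the observation belongs to


def risk_set(students: List[Student], t_c: int) -> Set[str]:
    """IDs of students enrolled by t_c whose outcome has not yet occurred at t_c."""
    return {
        s.student_id
        for s in students
        if s.entry_period <= t_c
        and (s.event_period is None or s.event_period > t_c)
    }


def hierarchy_violations(train: List[Row], test: List[Row]) -> List[str]:
    """Describe violations of chronological order and individual-level separation."""
    problems = []
    if train and test:
        if max(r.period for r in train) >= min(r.period for r in test):
            problems.append("training data does not strictly precede test data")
    shared = {r.student_id for r in train} & {r.student_id for r in test}
    if shared:
        problems.append(f"students present in both sets: {sorted(shared)}")
    return problems


students = [
    Student("a", entry_period=1, event_period=None),
    Student("b", entry_period=1, event_period=2),
    Student("c", entry_period=3, event_period=None),
]
at_risk = risk_set(students, t_c=2)
issues = hierarchy_violations(
    train=[Row("a", 1), Row("a", 2)],
    test=[Row("a", 2)],
)
```

In this toy example only student `a` is at risk at cutoff 2, since `b` has already experienced the outcome and `c` has not yet enrolled, and the train/test pair violates both checked requirements.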

| Study (Year) | Cutoff | Information Set | Risk Set | Temporal Hierarchy |
|---|---|---|---|---|
| Cho (2023) | ✖ | ✖ | ✖ | ✖ |
| Hassan (2024) | ✖ | ✖ | ✖ | ✖ |
| Villar (2024) | ✖ | ✖ | ✖ | ✖ |
| Okoye (2024) | ✖ | ✖ | ✖ | ✖ |
| Song (2023) | ✖ | ✖ | ✖ | ✖ |
| Arthana (2024) | ✖ | ✖ | ✖ | ✖ |
| Bouihi (2024) | ✖ | ✖ | ✖ | ◐ |
| Kabáthová (2021) | ◐ | ✔ | ◐ | ✖ |
| Goren (2024) | ✔ | ✔ | ◐ | ◐ |
| Vaarma (2024) | ✔ | ✔ | ✔ | ✔ |
| Barros (2023) | ✔ | ✔ | ✔ | ✔ |

| Cutoff | Student-level overlap ratio | AUC (Logistic Regression) | AUC (Gradient Boosting) |
|---|---|---|---|
| 1 | 0.00 | 0.62 | 0.66 |
| 2 | 0.68 | 0.63 | 0.72 |
| 3 | 0.92 | 0.64 | 0.76 |
| 4 | 0.97 | 0.64 | 0.77 |
| 5 | 0.99 | 0.65 | 0.77 |
| 6 | 1.00 | 0.65 | 0.78 |
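
The table above reports a student-level overlap ratio for each cutoff. Its exact definition is not reproduced here, so the sketch below assumes one plausible reading: the share of distinct students in the test set who also appear in the training set. The function names `student_overlap_ratio` and `split_by_student` and the `(student_id, period)` row layout are hypothetical and serve only to show how such a ratio could be computed, and how a student-level split drives it to zero.

```python
from typing import Iterable, List, Tuple

RowT = Tuple[str, int]  # (student_id, period); an assumed layout


def student_overlap_ratio(train_ids: Iterable[str], test_ids: Iterable[str]) -> float:
    """Share of distinct test-set students who also appear in the training set."""
    test_students = set(test_ids)
    if not test_students:
        raise ValueError("test set contains no students")
    return len(test_students & set(train_ids)) / len(test_students)


def split_by_student(rows: List[RowT], test_students: Iterable[str]):
    """Partition rows so that no student contributes to both sets."""
    held_out = set(test_students)
    train = [r for r in rows if r[0] not in held_out]
    test = [r for r in rows if r[0] in held_out]
    return train, test


rows = [("a", 1), ("a", 2), ("b", 1), ("b", 2), ("c", 2)]

# Row-wise split by period: students a and b appear on both sides.
train_rows = [r for r in rows if r[1] == 1]
test_rows = [r for r in rows if r[1] == 2]
rowwise_ratio = student_overlap_ratio(
    (sid for sid, _ in train_rows), (sid for sid, _ in test_rows)
)

# Student-level split: student c is held out entirely.
g_train, g_test = split_by_student(rows, test_students=["c"])
grouped_ratio = student_overlap_ratio(
    (sid for sid, _ in g_train), (sid for sid, _ in g_test)
)
```

Under this assumed definition, the row-wise split yields an overlap of two out of three test students, while the student-level split yields zero. A student-level split removes identity overlap but does not by itself enforce chronological ordering.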

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.