Submitted: 01 May 2026

Posted: 04 May 2026


Abstract

Keywords:

“All technology has the potential for both good and evil. But what matters is how we use it.”— Tim Berners-Lee, Computer Scientist

Etymology of Utopia and Dystopia

Framing AI Utopian and Dystopian Narratives in News Headlines

The Binary Fallacy: Beyond Pure Utopia and Dystopia in AI Development

“A dystopia is a utopia that’s gone wrong.” (Ursula K. Le Guin)

The Myth of a Pure Utopia and the Low Probability of a Complete Dystopia

A Pragmatic Perspective: Mixed Effects of AI

1. Anticipated Positive Impacts and Emerging Opportunities in an AI-Augmented Future

2. Negative Effects and Emerging Risks in an AI-Augmented Future

Literature Review

Education

Healthcare

Robotics, Automobile and Factories

Jobs and Careers

Society

Data

Data Collection and Extraction
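The reference list cites Google News RSS feeds together with the feedparser, Requests, and Beautiful Soup libraries, which suggests that headlines were gathered from RSS feeds. The sketch below is a hypothetical illustration under that assumption, not the authors' pipeline. It builds a Google News RSS search URL (the `/rss/search` path and the `hl`, `gl`, `ceid` parameters are assumptions based on the feed's commonly used URL pattern) and parses feed items with the standard-library `xml.etree.ElementTree` instead of feedparser, so it runs without third-party packages. The helper names and the " - <source>" title suffix handling are also assumptions.

```python
import urllib.request
import xml.etree.ElementTree as ET
from urllib.parse import urlencode


def google_news_rss_url(query, hl="en-US", gl="US", ceid="US:en"):
    """Build a Google News RSS search URL (assumed query format)."""
    params = urlencode({"q": query, "hl": hl, "gl": gl, "ceid": ceid})
    return "https://news.google.com/rss/search?" + params


def fetch_feed(url, timeout=10):
    """Download raw RSS XML as text (requires network access)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def parse_headlines(xml_text):
    """Extract title, link, publication date and source from each <item>."""
    root = ET.fromstring(xml_text)
    rows = []
    for item in root.iter("item"):
        title = (item.findtext("title") or "").strip()
        source = (item.findtext("source") or "").strip()
        # Assumption: titles may end with " - <source>"; strip that suffix.
        suffix = " - " + source
        if source and title.endswith(suffix):
            title = title[: -len(suffix)]
        rows.append({
            "title": title,
            "link": (item.findtext("link") or "").strip(),
            "published": (item.findtext("pubDate") or "").strip(),
            "source": source,
        })
    return rows


# Tiny offline sample in RSS 2.0 shape (invented for illustration only).
SAMPLE_RSS = """<rss version="2.0"><channel>
<item><title>AI tutors reach more students - Example News</title>
<link>https://example.com/a</link>
<pubDate>Mon, 10 Feb 2025 08:00:00 GMT</pubDate>
<source url="https://example.com">Example News</source></item>
</channel></rss>"""

if __name__ == "__main__":
    print(google_news_rss_url("artificial intelligence"))
    for row in parse_headlines(SAMPLE_RSS):
        print(row["published"], "|", row["source"], "|", row["title"])
```

In a real collection run, `fetch_feed(google_news_rss_url(...))` would replace the offline sample; keeping parsing separate from fetching makes the parser testable without network access.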

Feature Engineering

Methodology

Exploratory Data Analysis (EDA)

1. Linguistic and Geographical Diversity

2. AI News Headlines Textual Analysis

Natural Language Processing Analysis

Statistical Analysis

Results and Analysis

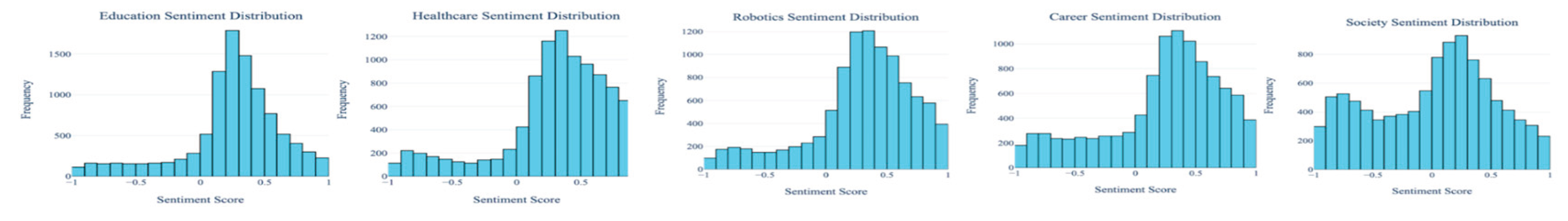

Sentiment Analysis

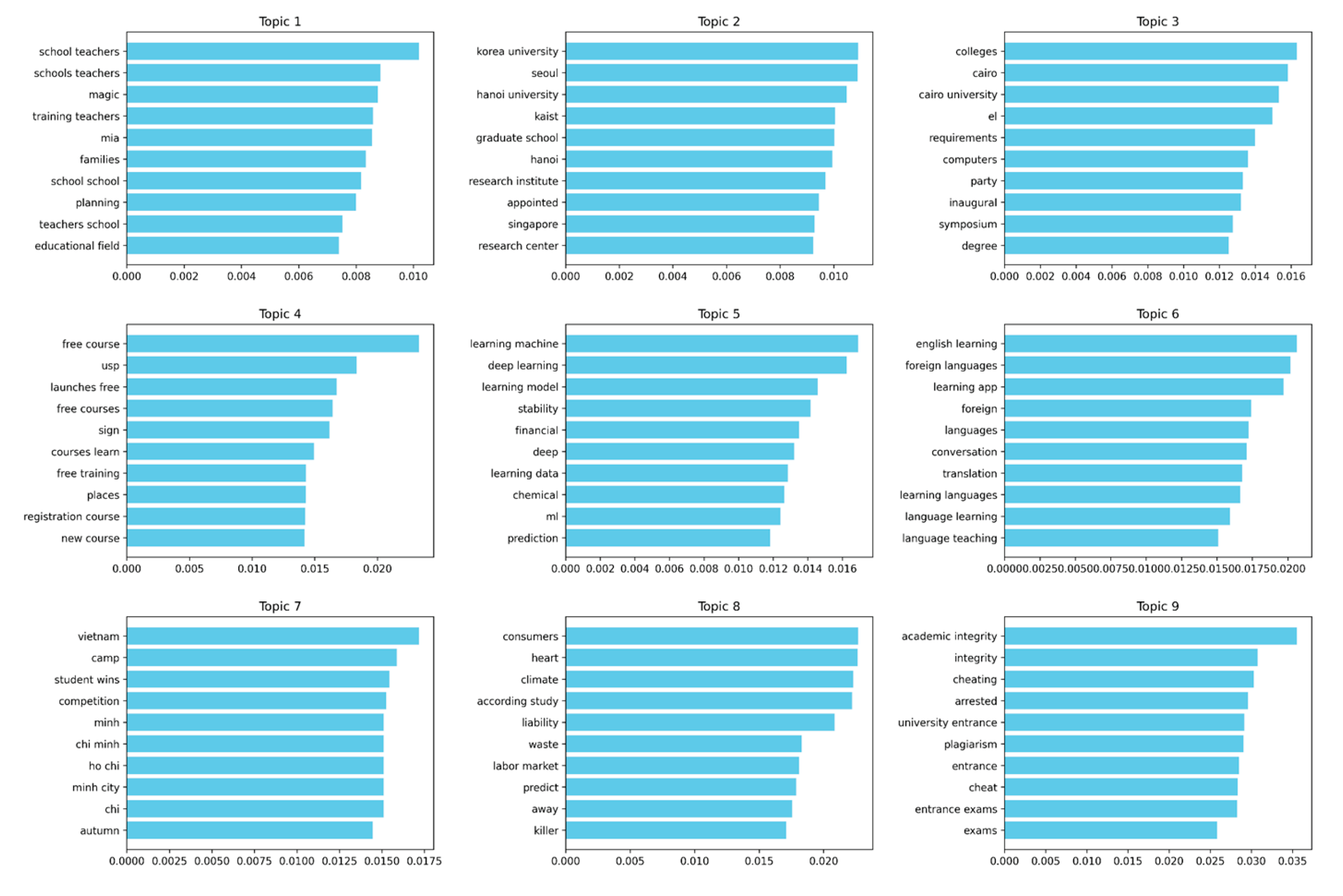

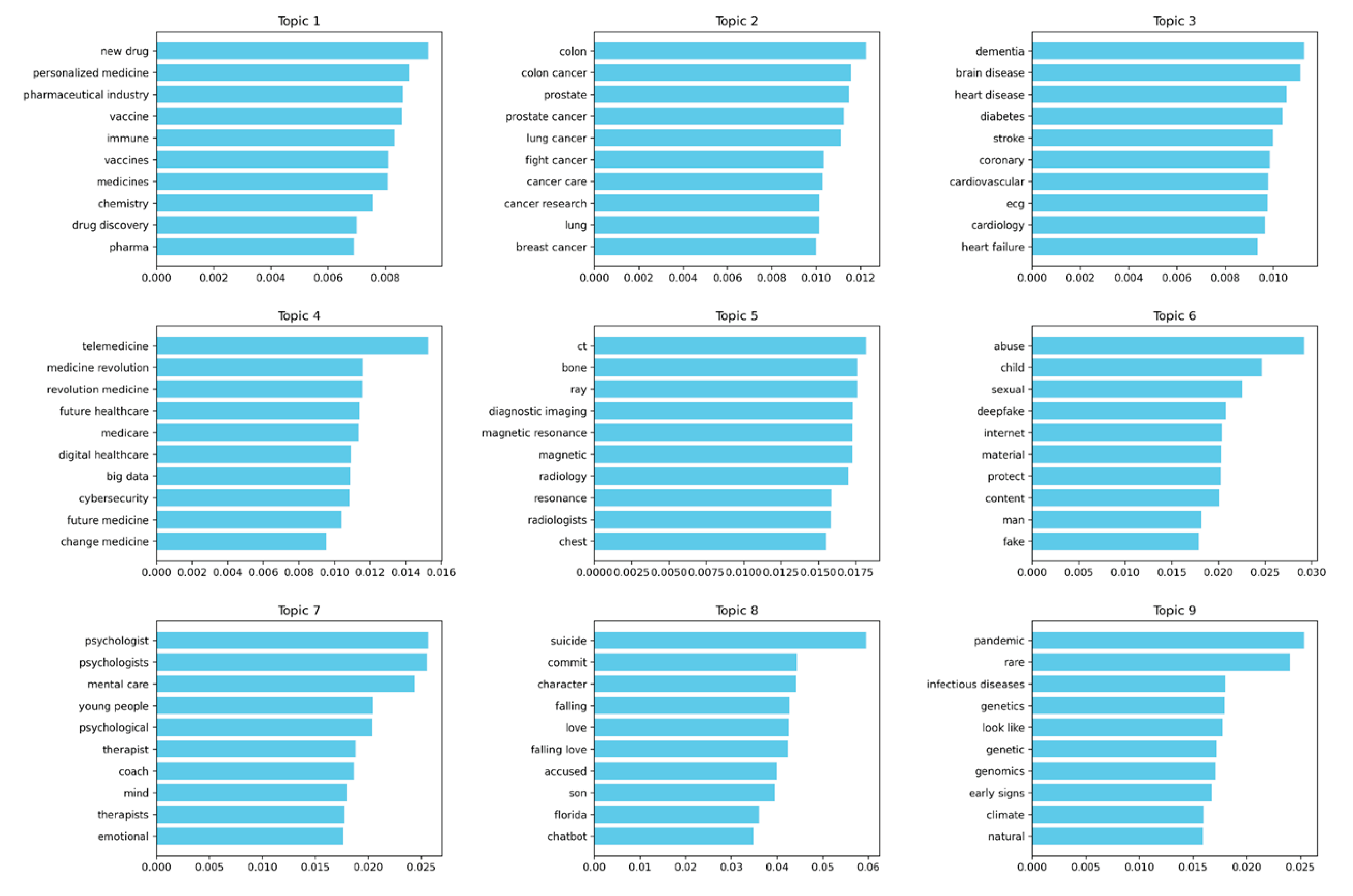

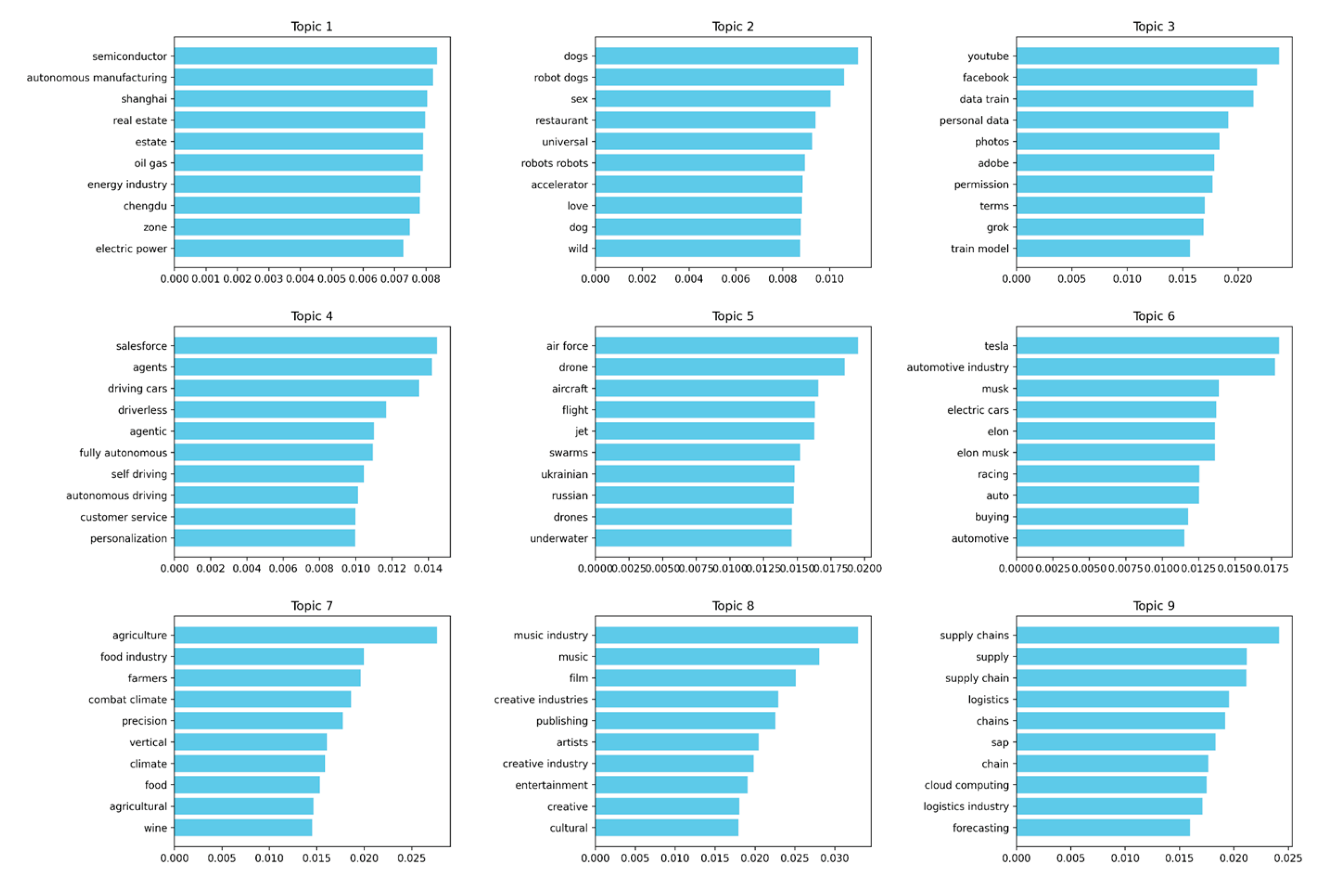

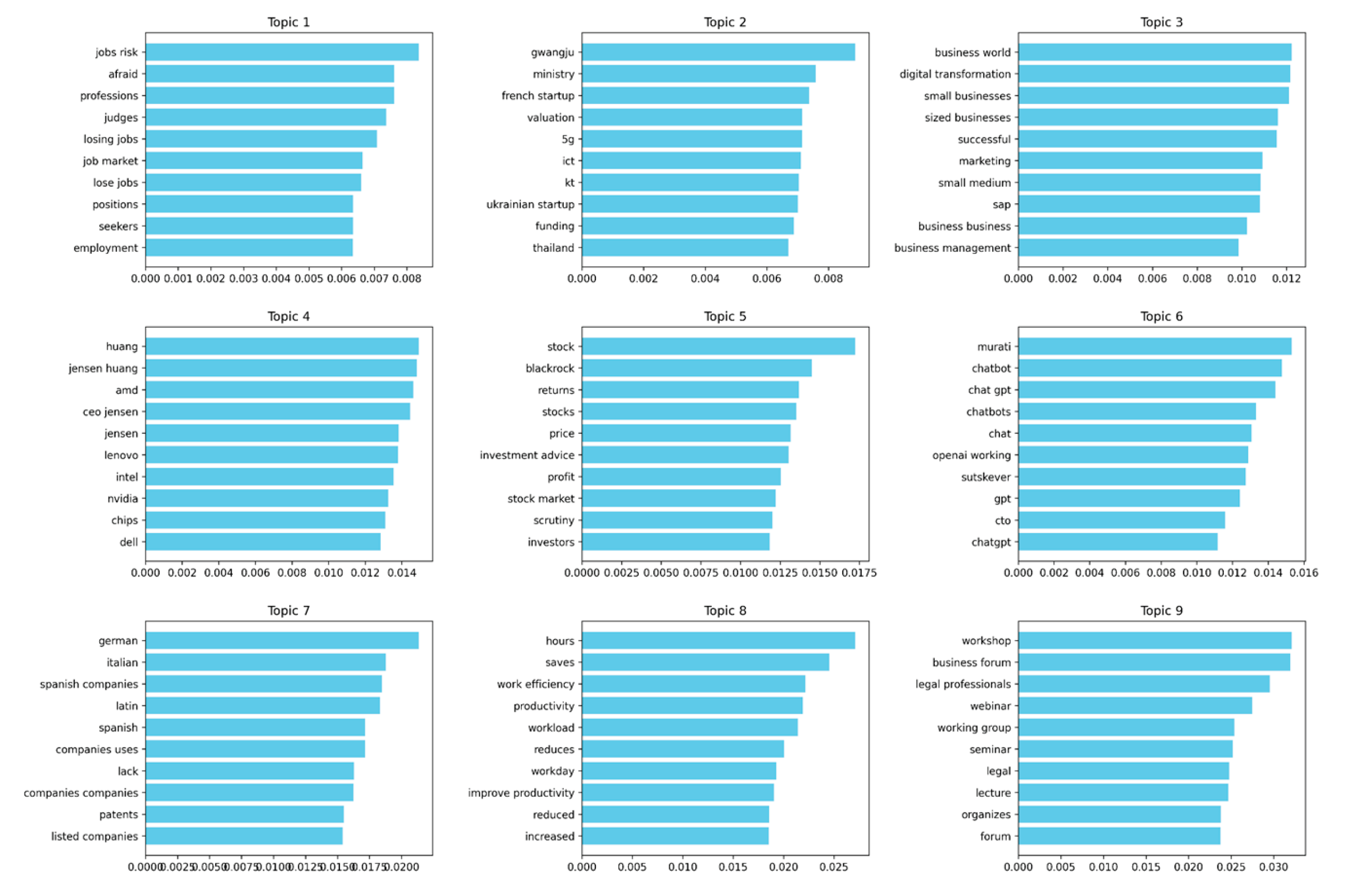

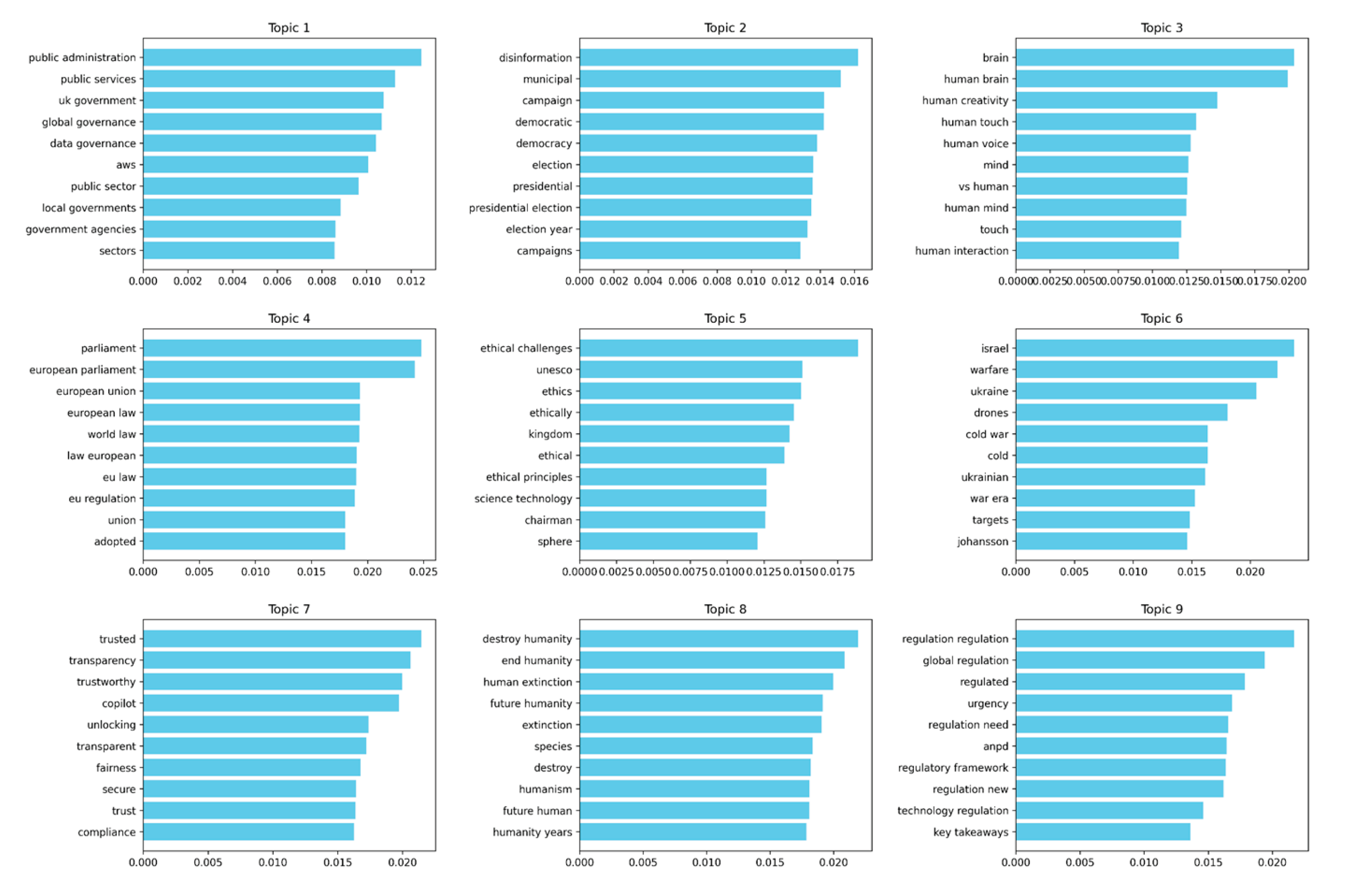

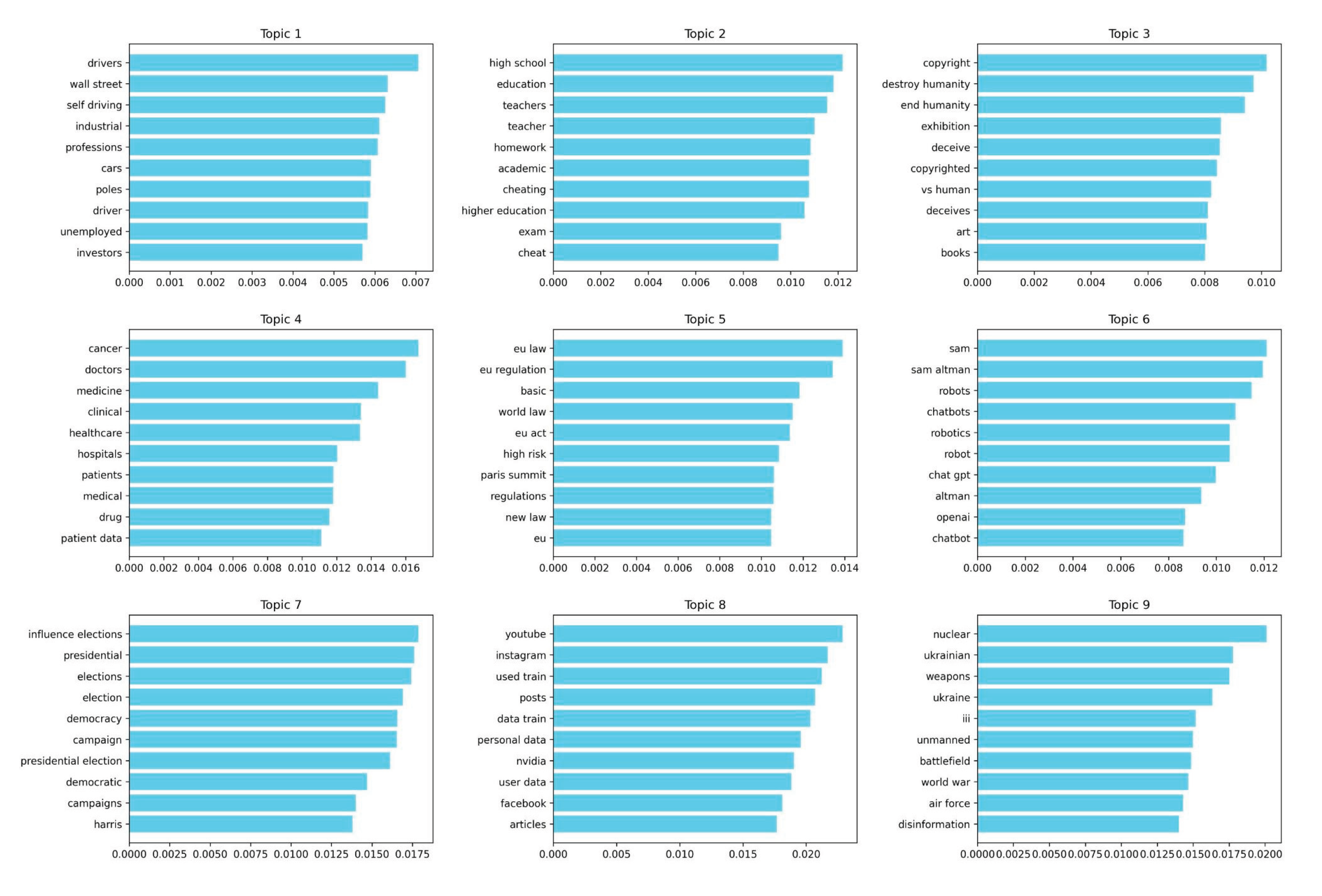

Topic Modelling

Cross-Domain Analysis of Sentiment in News Headlines

Normality Testing

Domain-Wise Sentiment Comparison
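The reference list includes Kruskal and Wallis (1952) alongside Mann and Whitney (1947), which is consistent with a common design in which an omnibus Kruskal-Wallis test across the five domains precedes pairwise Mann-Whitney U comparisons such as those in the table below. The following is a minimal, dependency-free sketch of the tie-corrected H statistic, assuming per-headline sentiment scores grouped by domain; it is not the authors' code. The p-value uses the closed-form chi-square tail, which holds only for even degrees of freedom and therefore covers the five-domain case (df = 4). In practice, `scipy.stats.kruskal` computes the same tie-corrected statistic.

```python
import math
from collections import Counter


def average_ranks(values):
    """1-based ranks of `values`; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def kruskal_wallis(*groups):
    """Return (H, p) for the Kruskal-Wallis test with tie correction."""
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * total - 3 * (n + 1)
    # Divide by the tie-correction factor 1 - sum(t^3 - t) / (n^3 - n).
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    h /= 1 - ties / (n ** 3 - n)
    df = len(groups) - 1
    if df % 2:
        raise ValueError("the closed-form tail used here requires an even df")
    # Chi-square survival function for even df:
    # exp(-x/2) * sum_{i < df/2} (x/2)^i / i!
    half = h / 2
    p = math.exp(-half) * sum(half ** i / math.factorial(i) for i in range(df // 2))
    return h, p


if __name__ == "__main__":
    # Toy scores for five hypothetical domains (not the study's data).
    h, p = kruskal_wallis([1, 2, 3], [4, 5, 6], [7, 8, 9], [10, 11, 12], [13, 14, 15])
    print(f"H = {h:.2f}, p = {p:.4f}")
```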

| Domain Pair | U-statistic | p-value | Effect Size (r) | Interpretation |
|---|---|---|---|---|
| Education vs. Healthcare | 41,978,129.00 | < 0.001 | 0.16 | Small effect |
| Education vs. Robotics | 44,726,836.50 | < 0.001 | 0.105 | Small effect |
| Education vs. Society | 63,714,804.00 | < 0.001 | -0.274 | Small effect |
| Healthcare vs. Robotics | 52,559,970.00 | < 0.001 | -0.051 | Small effect |
| Healthcare vs. Society | 68,426,711.00 | < 0.001 | -0.369 | Medium effect |
| Robotics vs. Society | 66,616,369.00 | < 0.001 | -0.332 | Medium effect |
| Career vs. Education | 52,451,658.50 | < 0.001 | -0.049 | Small effect |
| Career vs. Healthcare | 45,255,945.00 | < 0.001 | 0.095 | Small effect |
| Career vs. Robotics | 47,576,088.00 | < 0.001 | 0.048 | Small effect |
| Career vs. Society | 63,428,451.00 | < 0.001 | -0.269 | Small effect |

| Difference Category | Domain Pairs (Δ) |
|---|---|
| Large Differences (Δ > 0.20) | Society vs. Healthcare (0.310), Robotics (0.282), Education (0.233), Career (0.224) |
| Moderate Differences (0.05 < Δ < 0.20) | Healthcare vs. Career (0.086), Education (0.078); Robotics vs. Career (0.058) |
| Small Differences (Δ < 0.05) | Education vs. Career (0.008); Healthcare vs. Robotics (0.028); Robotics vs. Education (0.049) |
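The pairwise rows report a Mann-Whitney U statistic and an effect size r for each domain pair. A common definition is r = Z / sqrt(N), where Z is the normal-approximation test statistic and N = n1 + n2; U values in the tens of millions imply thousands of headlines per domain, a regime where that approximation is standard. The sketch below is a dependency-free illustration under those assumptions, not the authors' implementation: it uses the tie-corrected variance without a continuity correction, and its sign convention (r > 0 when the first group tends to score higher) may differ from the one behind the table. It also encodes the Δ thresholds from the summary rows, treating the unstated boundary values 0.05 and 0.20 as belonging to the lower category (an assumption).

```python
import math
from collections import Counter


def average_ranks(values):
    """1-based ranks of `values`; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def mann_whitney_r(x, y):
    """Two-sided Mann-Whitney U test via the normal approximation.

    Returns (U for x, Z, p, r) with r = Z / sqrt(n1 + n2).
    """
    n1, n2 = len(x), len(y)
    n = n1 + n2
    pooled = list(x) + list(y)
    ranks = average_ranks(pooled)
    # U for x: rank sum of x minus its minimum possible value.
    u = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    # Tie-corrected variance of U under the null hypothesis.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    var_u = n1 * n2 / 12 * ((n + 1) - ties / (n * (n - 1)))
    z = (u - n1 * n2 / 2) / math.sqrt(var_u)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return u, z, p, z / math.sqrt(n)


def delta_category(delta):
    """Bucket a difference using the thresholds in the summary rows."""
    d = abs(delta)
    if d > 0.20:
        return "Large"
    if d > 0.05:
        return "Moderate"
    return "Small"


if __name__ == "__main__":
    # Toy sentiment scores (not the study's data).
    u, z, p, r = mann_whitney_r([0.1, 0.2, 0.3], [0.4, 0.5, 0.6])
    print(f"U = {u}, Z = {z:.3f}, p = {p:.4f}, r = {r:.3f}")
    print(delta_category(0.310), delta_category(0.086), delta_category(0.049))
```

Applied to the per-domain score lists, this yields U, p, and r for each pair; a p-value that rounds to zero at the displayed precision is conventionally reported as p < 0.001.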

Future Research

Discussion

Conclusions

References

- Acemoglu, D.; Restrepo, P. Automation and new tasks: How technology displaces and reinstates labor. Journal of Economic Perspectives 2019, 33(2), 3–30. [Google Scholar] [CrossRef]

- Acharya, D. B.; Kuppan, K.; Divya, B. Agentic AI: Autonomous intelligence for complex goals—A comprehensive survey. IEEE Access 2025, 13, 18912–18936. [Google Scholar] [CrossRef]

- Aiken, M.; Balan, S. An analysis of Google Translate accuracy. Translation Journal 2011, 16(2). [Google Scholar]

- Ali, G. M. N.; Rahman, M. M.; Hossain, M. A.; Rahman, M. S.; Paul, K. C.; Thill, J. C.; Samuel, J. Public perceptions of COVID-19 vaccines: Policy implications from US spatiotemporal sentiment analytics. In Healthcare; MDPI, August 2021; Vol. 9, No. 9. [Google Scholar]

- Alowais, S. A.; Alghamdi, S. S.; Alsuhebany, N.; Alqahtani, T.; Alshaya, A. I.; Almohareb, S. N.; Aldairem, A.; Alrashed, M.; Bin Saleh, K.; Badreldin, H. A.; Al Yami, M. S.; Al Harbi, S.; Albekairy, A. M. Revolutionizing healthcare: The role of artificial intelligence in clinical practice. BMC Medical Education 2023, 23(689). [Google Scholar] [CrossRef]

- Al-Zahrani, A. M. Unveiling the Shadows: Beyond the Hype of AI in Education. Heliyon 2024, 10(9), e30696. [Google Scholar] [CrossRef]

- Ananyi, S. O.; Somieari-Pepple, E. Cost-benefit analysis of artificial intelligence integration in education management: Leadership perspectives. International Journal of Economics Environmental Development and Society 2023, 4(3), 353–370. [Google Scholar]

- Athaluri, S. A.; Manthena, S. V.; Kesapragada, V. S. R. K. M.; Yarlagadda, V.; Dave, T.; Duddumpudi, R. T. S. Exploring the Boundaries of Reality: Investigating the Phenomenon of Artificial Intelligence Hallucination in Scientific Writing Through ChatGPT References. Cureus 2023, 15(4). [Google Scholar] [CrossRef] [PubMed]

- Baccouri, N. Deep Translator: A flexible Python tool for translations [Python library]. 2020. Available online: https://deep-translator.readthedocs.io/en/latest/.

- Bairathi, A. A world with AI: Where will we be in 10, 20, and 50 years? NASSCOM Community. 11 February 2025. Available online: https://community.nasscom.in/communities/ai/world-ai-where-will-we-be-10-20-and-50-years.

- Bajwa, J.; Munir, U.; Nori, A.; Williams, B. Artificial intelligence in healthcare: Transforming the practice of medicine. Future Healthcare Journal 2021, 8(2), e188–e194. [Google Scholar] [CrossRef] [PubMed]

- Baker, R. S.; Hawn, A. Algorithmic Bias in Education. International Journal of Artificial Intelligence in Education 2021, 32, 1052–1092. [Google Scholar] [CrossRef]

- Baptista, E. What is DeepSeek and why is it disrupting the AI sector? Reuters. 28 January 2025. Available online: https://www.reuters.com/technology/artificial-intelligence/what-is-deepseek-why-is-it-disrupting-ai-sector-2025-01-27/ (accessed on 12 February 2025).

- Belanger, A. Netflix doc accused of using AI to manipulate true crime story. Ars Technica. 19 April 2024. Available online: https://arstechnica.com/tech-policy/2024/04/netflix-doc-accused-of-using-ai-to-manipulate-true-crime-story/.

- Bendiab, G.; Hameurlaine, A.; Germanos, G.; Kolokotronis, N.; Shiaeles, S. Autonomous vehicles security: Challenges and solutions using blockchain and artificial intelligence. IEEE Transactions on Intelligent Transportation Systems 2023, 24(4), 3614–3637. [Google Scholar] [CrossRef]

- Bharadiya, J. Artificial intelligence in transportation systems a critical review. American Journal of Computing and Engineering 2023, 6(1), 34–45. [Google Scholar] [CrossRef]

- Bhuyan, S. S.; Sateesh, V.; Mukul, N.; Galvankar, A.; Mahmood, A.; Nauman, M.; Samuel, J. Generative Artificial Intelligence Use in Healthcare: Opportunities for Clinical Excellence and Administrative Efficiency. Journal of Medical Systems 2025, 49(1), 10. [Google Scholar] [CrossRef]

- Bird, S.; Klein, E.; Loper, E. Natural Language Processing with Python; O’Reilly Media, 2009; Available online: https://www.nltk.org/book/.

- Bond, S. How AI deepfakes polluted elections in 2024; NPR, 2024; Available online: https://www.npr.org/2024/12/21/nx-s1-5220301/deepfakes-memes-artificial-intelligence-elections (accessed on 12 February 2025).

- Bostrom, N. Superintelligence: Paths, dangers, strategies; Oxford University Press, 2014. [Google Scholar]

- Bostrom, N. Deep utopia: Life and meaning in a solved world; Ideapress Publishing, 2024. [Google Scholar]

- Bratton, L. What Big Tech execs have said about DeepSeek as US contemplates ban; Yahoo Finance, 2025; Available online: https://finance.yahoo.com/news/what-big-tech-execs-have-said-about-deepseek-as-us-contemplates-ban-140030220.html (accessed on 12 February 2025).

- Chang, X. Gender Bias in Hiring: An Analysis of the Impact of Amazon's Recruiting Algorithm. Advances in Economics, Management and Political Sciences 2023, 23, 134–140. [Google Scholar] [CrossRef]

- Chidipothu, N.; Anderson, R.; Samuel, J.; Pelaez, A.; Esguerra, J.; Hoque, M. N. Improving large language model (LLM) performance with retrieval augmented generation (RAG): Development of a transparent generative AI university support system for educational purposes. Journal of Big Data and Artificial Intelligence 2025, 3(1). [Google Scholar] [CrossRef]

- Chui, M.; Manyika, J.; Miremadi, M. Where machines could replace humans—and where they can’t (yet). McKinsey Quarterly. 2016. Available online: https://www.mckinsey.com/featured-insights/employment-and-growth/where-machines-could-replace-humans-and-where-they-cant-yet.

- Chustecki, M. Benefits and risks of AI in health care: Narrative review. Interactive Journal of Medical Research 2024, 13, e53616. [Google Scholar] [CrossRef]

- Cools, H.; Van Gorp, B.; Opgenhaffen, M. Where exactly between utopia and dystopia? A framing analysis of AI and automation in US newspapers. Journalism 2022. [Google Scholar] [CrossRef]

- Cordero, D. The downsides of artificial intelligence in healthcare. The Korean Journal of Pain 2024, 37(1), 87–88. [Google Scholar] [CrossRef] [PubMed]

- Cross, S.; Bell, I.; Nicholas, J.; Valentine, L.; Mangelsdorf, S.; Baker, S.; Titov, N.; Alvarez-Jimenez, M. Use of AI in mental health care: Community and mental health professionals survey. JMIR Mental Health 2024, 11, e60589. [Google Scholar] [CrossRef] [PubMed]

- Cuthbertson, A. AI and the meaning of life: Philosopher Nick Bostrom says technology could bring utopia but will force us to rethink our purpose. The Independent. 20 April 2024. Available online: https://www.the-independent.com/tech/ai-deep-utopia-nick-bostrom-cockaigne-b2530807.html (accessed on 12 February 2025).

- DeepSeek-AI; Guo, D.; Yang, D.; Zhang, H.; Song, J.; Zhang, R.; Xu, R.; Zhu, Q.; Ma, S.; Wang, P.; et al. DeepSeek-R1: Incentivizing reasoning capability in LLMs via reinforcement learning. arXiv 2025. [Google Scholar] [CrossRef]

- Dehghan, A.; Cevik, M.; Bodur, M. Dynamic AGV Task Allocation in Intelligent Warehouses. arXiv 2023, arXiv:2312.16026. [Google Scholar]

- Didast, F.; Nassih, R. Y.; Ait Lbachir, I. Artificial Intelligence and Logistics: Recent Trends and Development. International Journal of Advanced Computer Science and Applications 2024, 12(1). [Google Scholar]

- Dmitracova, O. 41% of companies worldwide plan to reduce workforces by 2030 due to AI; CNN, 8 January 2025; Available online: https://www.cnn.com/2025/01/08/business/ai-job-losses-by-2030-intl/index.html (accessed on 12 February 2025).

- Dutta, S.; Ranjan, S.; Mishra, S.; Sharma, V.; Hewage, P.; Iwendi, C. Enhancing educational adaptability: A review and analysis of AI-driven adaptive learning platforms. In 2024 4th International Conference on Innovative Practices in Technology and Management (ICIPTM); IEEE, February 2024; pp. 1–5. [Google Scholar] [CrossRef]

- Élysée Palace. Statement on inclusive and sustainable artificial intelligence for people and the planet. 11 February 2025. Available online: https://www.elysee.fr/en/emmanuel-macron/2025/02/11/statement-on-inclusive-and-sustainable-artificial-intelligence-for-people-and-the-planet.

- Ettman, C. K.; Galea, S. The potential influence of AI on population mental health. JMIR Mental Health 2023, 10, e49936. [Google Scholar] [CrossRef]

- Faluyi, S. E. AI and job market: Analysing the potential impact of AI on employment, skills, and job displacement. African Journal of Marketing Management 2025, 17(1), 1–8. [Google Scholar] [CrossRef]

- Farhud, D. D.; Zokaei, S. Ethical issues of artificial intelligence in medicine and healthcare. Iranian Journal of Public Health 2021, 50(11), i–v. [Google Scholar] [CrossRef]

- Frank, M. R.; Autor, D.; Bessen, J. E.; Brynjolfsson, E.; Cebrian, M.; Deming, D. J.; Feldman, M.; Groh, M.; Lobo, J.; Moro, E.; Wang, D.; Youn, H.; Rahwan, I. Toward understanding the impact of artificial intelligence on labor. Proceedings of the National Academy of Sciences 2019, 116(14), 6531–6539. [Google Scholar] [CrossRef]

- Gammon, C.; Bornstein, M. John Henry effect. In The SAGE encyclopedia of educational research, measurement, and evaluation; Frey, B. B., Ed.; SAGE Publications, Inc., 2018; Vol. 4. [Google Scholar] [CrossRef]

- Garvey, M. D.; Samuel, J.; Pelaez, A. Would you please like my tweet?! An artificially intelligent, generative probabilistic, and econometric based system design for popularity-driven tweet content generation. Decision Support Systems 2021, 113497. [Google Scholar] [CrossRef]

- Girden, E. R. ANOVA: Repeated measures; Sage, 1992. [Google Scholar]

- Gmyrek, P.; Winkler, H.; Garganta, S. Buffer or bottleneck? Employment exposure to generative AI and the digital divide in Latin America. In ILO Working Paper 121; Geneva, ILO and The World Bank, 2024. [Google Scholar] [CrossRef]

- Google. Google News RSS feeds; Google, n.d.; Available online: https://news.google.com/rss (accessed on 13 February 2025).

- Grand View Research. AI companion market size, share & trends analysis report by type (Text-based, Voice-based, Multi-modal), by application (Mental Health Support, Education & Learning Aid), by industry vertical (Consumer, Businesses, Healthcare), and by region forecasts, 2025 - 2030 (Report No. GVR-4-68040-517-3). Grand View Research, 2024.

- Gridach, M.; Nanavati, J.; Abidine, K.; Mendes, L.; Mack, C. Agentic AI for scientific discovery: A survey of progress, challenges, and future directions. arXiv 2025, arXiv:2503.08979. [Google Scholar] [CrossRef]

- Grootendorst, M. BERTopic: Neural topic modeling with a class-based TF-IDF procedure. arXiv. 2022. Available online: https://arxiv.org/abs/2203.05794.

- Groves, M.; Mundt, K. Friend or foe? Google Translate in language for academic purposes. English for Specific Purposes 2015, 37, 112–121. [Google Scholar] [CrossRef]

- Gupta, M.; Kakar, I. S.; Peden, M.; Altieri, E.; Jagnoor, J. Media coverage and framing of road traffic safety in India. BMJ Global Health 2021, 6(3), e004499. [Google Scholar] [CrossRef] [PubMed]

- Hill, D. L. G. AI in imaging: The regulatory landscape. British Journal of Radiology 2024, 97(1155), 483–491. [Google Scholar] [CrossRef] [PubMed]

- Hoose, S.; Králiková, K. Artificial intelligence in mental health care: Management implications, ethical challenges, and policy considerations. Administrative Sciences 2024, 14(9), 227. [Google Scholar] [CrossRef]

- Hu, Q.; Rangwala, H. Towards Fair Educational Data Mining: A Case Study on Detecting At-Risk Students. In International Educational Data Mining Society; 2020. [Google Scholar]

- International Data Corporation (IDC). Artificial intelligence will contribute $19.9 trillion to the global economy through 2030 and drive 3.5% of global GDP in 2030; IDC, 17 September 2024; Available online: https://www.idc.com/getdoc.jsp?containerId=prUS52600524.

- Jackson, J.; Paste Staff. The 50 best dystopian movies of all time; Paste Magazine, 2023; Available online: https://www.pastemagazine.com/movies/dystopian-movies/best-dystopian-movies-of-all-time-1 (accessed on 12 February 2025).

- Jamieson, T.; Van Belle, D. A. How development affects news media coverage of earthquakes: Implications for disaster risk reduction in observing communities. Sustainability 2019, 11(7), 1970. [Google Scholar] [CrossRef]

- jmuwa. The best dystopian TV shows; IMDb, 2020; Available online: https://www.imdb.com/list/ls048004810/ (accessed on 12 February 2025).

- Johnston, C. How social media algorithms inherently create polarization. Psychology Today. 29 November 2020. Available online: https://www.psychologytoday.com/us/blog/cultural-psychiatry/202011/how-social-media-algorithms-inherently-create-polarization.

- Jones, K. S. A statistical interpretation of term specificity and its application in retrieval. Journal of Documentation 1972, 28(1), 11–21. [Google Scholar] [CrossRef]

- Jumaev, G. The impact of AI on job market: Adapting to the future of work; Zenodo, 8 January 2024. [Google Scholar] [CrossRef]

- Jumper, J.; Evans, R.; Pritzel, A.; et al. Highly accurate protein structure prediction with AlphaFold. Nature 2021, 596, 583–589. [Google Scholar] [CrossRef]

- Kashyap, R.; Samuel, Y.; Friedman, L. W.; Samuel, J. Artificial intelligence education & governance—human enhancive, culturally sensitive and personally adaptive HAI. Frontiers in Artificial Intelligence 2024, 7, 1443386. Available online: https://www.frontiersin.org/articles/10.3389/frai.2024.1443386.

- Khosla, V. AI: Dystopia or utopia? Khosla Ventures, 20 September 2024; Available online: https://www.khoslaventures.com/ai-dystopia-or-utopia/ (accessed on 12 February 2025).

- Klepper, D.; Swenson, A. AI-generated disinformation poses threat of misleading voters in 2024 election; PBS NewsHour, 14 May 2023; Available online: https://www.pbs.org/newshour/politics/ai-generated-disinformation-poses-threat-of-misleading-voters-in-2024-election (accessed on 12 February 2025).

- Kruskal, W. H.; Wallis, W. A. Use of ranks in one-criterion variance analysis. Journal of the American Statistical Association 1952, 47(260), 583–621. [Google Scholar] [CrossRef]

- Li, D.; He, W.; Guo, Y. Why AI still doesn’t have consciousness? CAAI Transactions on Intelligence Technology 2021, 6(2), 175–179. [Google Scholar] [CrossRef]

- Littman, M. L.; Ajunwa, I.; Berger, G.; Boutilier, C.; Currie, M.; Doshi-Velez, F.; Hadfield, G.; Horowitz, M. C.; Isbell, C.; Kitano, H.; Levy, K.; Lyons, T.; Mitchell, M.; Shah, J.; Sloman, S.; Vallor, S.; Walsh, T. Gathering strength, gathering storms: The One Hundred Year Study on Artificial Intelligence (AI100) 2021 study panel report. arXiv 2021. [Google Scholar] [CrossRef]

- Mann, H. B.; Whitney, D. R. On a test of whether one of two random variables is stochastically larger than the other. Annals of Mathematical Statistics 1947, 18(1), 50–60. [Google Scholar] [CrossRef]

- Marcinek, K.; Stanley, K. D.; Smith, G.; Cormarie, P.; Gunashekar, S. Risk-based AI regulation: A primer on the Artificial Intelligence Act of the European Union; RAND Corporation, 20 November 2024; Available online: https://www.rand.org/pubs/research_reports/RRA3243-3.html.

- McInnes, L.; Healy, J.; Astels, S. hdbscan: Hierarchical density based clustering. The Journal of Open Source Software 2017, 2(11), 205. [Google Scholar] [CrossRef]

- McInnes, L.; Healy, J.; Melville, J. UMAP: Uniform Manifold Approximation and Projection for dimension reduction. arXiv. 2018. Available online: https://arxiv.org/abs/1802.03426.

- McKee, K.; Pilgrim, M. Universal Feed Parser (feedparser) [Python library]. n.d. Available online: https://feedparser.readthedocs.io/en/latest/ (accessed on 13 February 2025).

- Mhlanga, D. Open AI in Education, the Responsible and Ethical Use of ChatGPT Towards Lifelong Learning. SSRN, 11 February 2023. Available online: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4354422.

- Mill, J. S. Public and parliamentary speeches – Part I – November 1850 – November 1868; University of Toronto Press: Toronto, 1988. [Google Scholar]

- Mishra, V. Unchecked AI threatens democracy, warns UN chief. United Nations News. 15 September 2024. Available online: https://news.un.org/en/story/2024/09/1154316.

- Mitchell, M. What does it mean to align AI with human values? Quanta Magazine. 13 December 2022. Available online: https://www.quantamagazine.org/what-does-it-mean-to-align-ai-with-human-values-20221213/.

- More, T. Utopia; Appleton-Century-Crofts: New York, 1949. [Google Scholar]

- Nelson, L. Practical AI limitations you need to know. AFA Education Blog. 22 December 2024. Available online: https://afaeducation.org/blog/practical-ai-limitations-you-need-to-know/.

- Nguyen, B. Donald Trump, Elon Musk, Taylor Swift, and more: The 10 most AI deepfaked people right now. Quartz. 10 October 2024. Available online: https://qz.com/donald-trump-elon-musk-taylor-swift-beyonce-ai-deepfake-1851666681.

- O'Gieblyn, M. Does AI have a subconscious? WIRED. 23 May 2023. Available online: https://www.wired.com/story/does-ai-have-a-subconscious/.

- Ouchchy, L.; Coin, A.; Dubljević, V. AI in the headlines: The portrayal of the ethical issues of artificial intelligence in the media. AI & Society 2020, 35, 927–936. [Google Scholar] [CrossRef]

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Duchesnay, E. Scikit-learn: Machine learning in Python. Journal of Machine Learning Research 2011, 12, 2825–2830. Available online: https://jmlr.org/papers/v12/pedregosa11a.html.

- Pierce, D. Two possible futures for AI. The Verge. 29 October 2024. Available online: https://www.theverge.com/2024/10/29/24282333/ai-vision-anthropic-openai-shakealert-vergecast.

- Piocciochi, M.; Alwabel, R. A. Leveraging Artificial Intelligence in Education: Current Applications and Future Prospects. 18 June 2020. Available online: https://www.researchgate.net/publication/384016811_Leveraging_Artificial_Intelligence_in_Education_Current_Applications_and_Future_Prospects.

- Price, W. N., II. Risks and remedies for artificial intelligence in health care. The Brookings Institution, 2019. Available online: https://www.brookings.edu/articles/risks-and-remedies-for-artificial-intelligence-in-health-care/.

- Rahman, M. M.; Ali, G. M. N.; Li, X. J.; Samuel, J.; Paul, K. C.; Chong, P. H.; Yakubov, M. Socioeconomic factors analysis for COVID-19 US reopening sentiment with Twitter and census data. Heliyon 2021, e06200. [Google Scholar] [CrossRef]

- Rahman-Jones, I. UK watchdog looking into Microsoft AI taking screenshots. BBC News. 21 May 2024. Available online: https://www.bbc.com/news/articles/cpwwqp6nx14o.

- Rainer, R. K., Jr.; Richey, R. G., Jr.; Chowdhury, S. How Robotics is Shaping Digital Logistics and Supply Chain Management: An Ongoing Call for Research. Journal of Business Logistics 2025, 46(1), e70005. [Google Scholar] [CrossRef]

- Rainie, L.; Anderson, J. Experts imagine the impact of artificial intelligence by 2040; Imagining the Digital Future Center, 29 February 2024. [Google Scholar]

- Ramesh, A.; Dhariwal, P.; Nichol, A.; Chu, C.; Chen, M. Hierarchical text-conditional image generation with CLIP latents. ArXiv 2022, abs/2204.06125. Available online: https://arxiv.org/abs/2204.06125.

- Randieri, C. Unveiling the role of AI algorithms: Unmasking societal inequities and cultural prejudices. Forbes Technology Council. 19 July 2023. Available online: https://www.forbes.com/councils/forbestechcouncil/2023/07/19/unveiling-the-role-of-ai-algorithms-unmasking-societal-inequities-and-cultural-prejudices/.

- Reitz, K. Requests: HTTP for humans [Python library]. n.d. Available online: https://docs.python-requests.org/en/latest/.

- Reynaud, F.; Untersinger, M. Paris 2024: Controversial AI-led video surveillance put to the test during Olympics; Le Monde, 24 July 2024; Available online: https://www.lemonde.fr/en/pixels/article/2024/07/24/paris-2024-controversial-ai-led-video-surveillance-put-to-the-test-during-olympics_6697267_13.html.

- Richardson, L. Beautiful Soup documentation [Python library]. Crummy. 2023. Available online: https://www.crummy.com/software/BeautifulSoup/.

- Robins-Early, N. Trump posts deepfakes of Swift, Harris, and Musk in effort to shore up support. The Guardian. 19 August 2024. Available online: https://www.theguardian.com/us-news/article/2024/aug/19/trump-ai-swift-harris-musk-deepfake-images.

- Robison, K. Anthropic’s CEO thinks AI will lead to a utopia—he just needs a few billion dollars first. The Verge. 16 October 2024. Available online: https://www.theverge.com/2024/10/16/24268209/anthropic-ai-dario-amodei-agi-funding-blog.

- Rodilosso, E. Filter bubbles and the unfeeling: How AI for social media can foster extremism and polarization. Philosophy & Technology 2024, 37(71). [Google Scholar] [CrossRef]

- Rolf, B.; Jackson, I.; Müller, M.; Lang, S.; Reggelin, T.; Ivanov, D. A review on reinforcement learning algorithms and applications in supply chain management. International Journal of Production Research 2022, 61(20), 7151–7179. [Google Scholar] [CrossRef]

- Rundle, J. New York State bans DeepSeek from government devices: State says app raises serious security and censorship concerns. The Wall Street Journal. 10 February 2025. Available online: https://www.wsj.com/articles/new-york-state-bans-deepseek-from-government-devices-de7a9df4.

- Sadaf, M.; Iqbal, Z.; Javed, A. R.; Saba, I.; Krichen, M.; Majeed, S.; Raza, A. Connected and automated vehicles: Infrastructure, applications, security, critical challenges, and future aspects. Technologies 2023, 11(5), 117. [Google Scholar] [CrossRef]

- SafeTREC. The role of media and road safety; California Active Transportation Safety Information Pages (CATSIP), n.d.; Available online: https://catsip.berkeley.edu/resources/role-media-and-road-safety.

- Samuel, J. A call for proactive policies for informatics and artificial intelligence technologies. Scholars Strategy Network, 2021. Available online: https://scholars.org/contribution/call-proactive-policies-informatics-and.

- Samuel, J. The Critical Need for Transparency and Regulation amidst the Rise of Powerful Artificial Intelligence Models. In Scholars Strategy Network (SSN); Key Findings, 2023; Available online: https://scholars.org/contribution/critical-need-transparency-and-regulation.

- Samuel, J.; Ali, G. G.; Rahman, M.; Esawi, E.; Samuel, Y. Covid-19 public sentiment insights and machine learning for tweets classification. Information 2020, 11(6), 314. [Google Scholar] [CrossRef]

- Samuel, J.; Kashyap, R.; Samuel, Y.; Pelaez, A. Adaptive cognitive fit: Artificial intelligence augmented management of information facets and representations. International Journal of Information Management 2022, 65, 102505. [Google Scholar] [CrossRef]

- Samuel, J.; Khanna, T.; Esguerra, J.; Sundar, S.; Pelaez, A.; Bhuyan, S. S. The rise of artificial intelligence phobia! Unveiling news-driven spread of AI fear sentiment using ML, NLP, and LLMs. IEEE Access 2025, 13, 125944–125969. [Google Scholar] [CrossRef]

- Samuel, J.; Rahman, M.; Ali, G. G. M. N.; Samuel, Y.; Pelaez, A.; Chong, P. H. J.; Yakubov, M. Feeling Positive About Reopening? New Normal Scenarios From COVID-19 US Reopen Sentiment Analytics. IEEE Access 2020, 8, 142173–142190. Available online: https://ieeexplore.ieee.org/document/9154672. [CrossRef]

- Samuel, J.; Tripathi, A.; Mema, E. A new era of artificial intelligence begins: where will it lead us? Editorial. Journal of Big Data and Artificial Intelligence 2024, 2(1). [Google Scholar]

- Samuel, Y.; Brennan-Tonetta, M.; Samuel, J.; Kashyap, R.; Kumar, V.; Madabhushi, S. K. K.; Chidipothu, N.; Anand, I.; Jain, P. Cultivation of Human Centered Artificial Intelligence: Culturally Adaptive Thinking in Education for AI (CATE-AI). Frontiers in Artificial Intelligence 2023, 6, 1198180. [Google Scholar] [CrossRef]

- Sapkota, R.; Roumeliotis, K. I.; Karkee, M. AI agents vs. Agentic AI: A conceptual taxonomy, applications and challenges. Information Fusion 2026, 126(Part B), 103599. [Google Scholar] [CrossRef]

- ScrapingBee. ScrapingBee API documentation. ScrapingBee. n.d. Available online: https://www.scrapingbee.com/documentation/.

- Service, R. F. Google’s DeepMind aces protein folding: Artificial intelligence firm takes crown in biannual contest; Science, 6 December 2018; Available online: https://www.science.org/content/article/google-s-deepmind-aces-protein-folding.

- Shapiro, S. S.; Wilk, M. B. An analysis of variance test for normality (complete samples). Biometrika 1965, 52(3–4), 591–611. [Google Scholar] [CrossRef]

- Shermer, M. When it comes to AI, think protopia, not dystopia or utopia; Skeptic, 26 July 2024; Available online: https://www.skeptic.com/reading_room/artificial-intelligence-think-protopia-not-dystopia-or-utopia/.

- Siafakas, N.; Vasarmidi, E. Risks of artificial intelligence (AI) in medicine. Pneumon 2024, 37(3), 40. [Google Scholar] [CrossRef]

- Silver, N. S. AI utopia and dystopia: What will the future have in store? Forbes. 20 June 2023. Available online: https://www.forbes.com/sites/nicolesilver/2023/06/20/ai-utopia-and-dystopia-what-will-the-future-have-in-store-artificial-intelligence-series-5-of-5/.

- Singal, P. Nick Bostrom discusses Superintelligence, AI, and Deep Utopia in Dinis Guarda YouTube podcast; IntelligentHQ, 2024; Available online: https://www.intelligenthq.com/nick-bostrom-discusses-superintelligence-ai-and-deep-utopia-in-dinis-guarda-youtube-podcast/.

- Smith, R. A. AI is starting to threaten white-collar jobs. Few industries are immune. The Wall Street Journal. 12 February 2024. Available online: https://www.wsj.com/lifestyle/careers/ai-is-starting-to-threaten-white-collar-jobs-few-industries-are-immune-9cdbcb90.

- Sodiya, E. O.; Umoga, U. J.; Amoo, O. O.; Atadoga, A. AI-driven warehouse automation: A comprehensive review of systems. GSC Advanced Research and Reviews 2024, 18(2), 272–282. [Google Scholar] [CrossRef]

- Solanki, A.; Jadiga, S. AI Applications for Improving Transportation and Logistics Operations. International Journal of Intelligent Systems and Applications in Engineering 2024, 12(2), 45–52. [Google Scholar]

- Swarns, C. When artificial intelligence gets it wrong. Innocence Project. 19 September 2023. Available online: https://innocenceproject.org/when-artificial-intelligence-gets-it-wrong/.

- TensorFlow. DeepDream. TensorFlow Tutorials. n.d. Available online: https://www.tensorflow.org/tutorials/generative/deepdream.

- The Guardian. Revealed: Bias found in AI system used to detect UK benefits fraud. 6 December 2024. Available online: https://www.theguardian.com/society/2024/dec/06/revealed-bias-found-in-ai-system-used-to-detect-uk-benefits.

- The Week UK. Future of generative AI: Utopia, dystopia or up to us? The Explainer, 31 July 2024; Available online: https://theweek.com/tech/future-of-generative-ai-utopia-dystopia-or-up-to-us.

- Tony Blair Institute for Global Change. The impact of AI on the labour market. 8 November 2024. Available online: https://institute.global/insights/economic-prosperity/the-impact-of-ai-on-the-labour-market.

- Tripathi, A.; Samuel, J.; Brennan-Tonetta, M.; Nguyen, H.; Mema, E. When machines create: Envisioning our future as shaped by the transformative power of generative AI. Journal of Big Data and Artificial Intelligence 2025, 3(1). [Google Scholar] [CrossRef]

- U.S. Equal Employment Opportunity Commission (EEOC). iTutorGroup to pay $365,000 to settle EEOC discriminatory hiring suit. EEOC Newsroom. 11 September 2023. Available online: https://www.eeoc.gov/newsroom/itutorgroup-pay-365000-settle-eeoc-discriminatory-hiring-suit.

- United Nations Regional Information Centre (UNRIC). Can artificial intelligence (AI) influence elections? 7 June 2024. Available online: https://unric.org/en/can-artificial-intelligence-ai-influence-elections/.

- UspeakGreek. Etymology and meaning of the word dystopian. 19 December 2023. Available online: https://uspeakgreek.com/art/literature/etymology-and-meaning-of-word-dystopian/.

- Utopia & Dystopia. List of famous utopian movies. n.d. Available online: https://www.utopiaanddystopia.com/utopian-fiction/utopian-movies-list/.

- Vinson, D. W.; Arcan, M.; Niland, D.; Delahunty, F. Towards sustainable workplace mental health: A novel approach to early intervention and support. ArXiv. 2024. Available online: https://arxiv.org/abs/2402.01592.

- Wankhade, M.; Rao, A. C. S.; Kulkarni, C. A survey on sentiment analysis methods, applications, and challenges. Artificial Intelligence Review 2022, 55(7), 5731–5780. [Google Scholar] [CrossRef]

- Webb, M. The impact of artificial intelligence on the labor market. SSRN Electronic Journal. 2019. [Google Scholar] [CrossRef]

- Winton, A. Get a horse! America’s skepticism toward the first automobiles; The Saturday Evening Post, 9 January 2017; Available online: https://www.saturdayeveningpost.com/2017/01/get-horse-americas-skepticism-toward-first-automobiles/.

- Wladawsky-Berger, I. The emerging, unpredictable age of AI; MIT Initiative on the Digital Economy, 22 February 2017; Available online: https://ide.mit.edu/insights/the-emerging-unpredictable-age-of-ai/.

- World Economic Forum (WEF). The Future of Jobs Report 2025. WEF, 2025. Available online: https://www.weforum.org/publications/the-future-of-jobs-report-2025/.

- Younge, H. L. Utopia: or, Apollo’s golden days; Dublin, Ireland; Ptd. by George Faulkner, 1747. [Google Scholar]

- Yudkowsky, E. Pausing AI developments isn’t enough. We need to shut it all down; Time, 29 March 2023; Available online: https://time.com/6266923/ai-eliezer-yudkowsky-open-letter-not-enough/.

- Zajko, M. Artificial intelligence, algorithms, and social inequality: Sociological contributions to contemporary debates. Sociology Compass 2022, 16(3). [Google Scholar] [CrossRef]

- Zaman, B. U. Transforming Education Through AI, Benefits, Risks, and Ethical Considerations. 2023. [Google Scholar] [CrossRef]

- Zhai, C.; Wibowo, S.; Li, L. D. The effects of over-reliance on AI dialogue systems on students' cognitive abilities: a systematic review. Smart Learning Environments 2024, 11(1), 28. [Google Scholar] [CrossRef]

- Zhang, W.; Deng, Y.; Liu, B.; Pan, S. J.; Bing, L. Sentiment analysis in the era of large language models: A reality check. arXiv 2023, arXiv:2305.15005. [Google Scholar] [CrossRef]

- Ziegler, B. It’s the year 2030: What will artificial intelligence look like? The Wall Street Journal. 21 September 2024. Available online: https://www.wsj.com/article/ai-future-2030.

| Domain | Keywords |
|---|---|
| Education | educate, learn, teach, study, academic, curriculum, pedagogy, student, school, classroom, course, professor, lecturer, university, college, campus, tutor |
| Healthcare | health, medical, doctor, nurse, hospital, clinic, pharmaceutical, drug, biotech, diagnosis, patient, treatment, vaccine, telemedicine, disease, cardio, immune, neuro, physician, medical technology, radiology, addiction, abuse, suicide, depression, psychology, surgery, therapy, mental, wellness, genomics, genetics, epidemic, pandemic, cancer, diabetes, biomedical, EHR, X-ray |
| Robotics | robot, autonomous, navigate, cyborg, industrial, agriculture, combat, weapon, force, sensor, driver, logistics, vehicle, electric, farm, automation, mobility, fleet, humanoid, automated, autopilot, aerial, unmanned, automotive, automobile, military, army, navy, naval, transportation, drone, warehouse, car, bus, train, truck, pilot, battery, plane, flight, aircraft, CAV, UAV, EV, ADAS, DARPA, SWARM, LiDAR, self-driving, pick-and-place, human-robot, supply chain, computer vision |
| Career | job, recruit, work, organization, career, professional, business, enterprise, company, employ, skill, corporate, layoff, manager, startup, entrepreneur, investment, investor, venture, replace, unemployed, hiring, hire, companies, firms, talent, CEO, CTO, CIO, CDO, HR |
| Society | ethical, regulation, social, trust, democratic, equal, legislation, culture, human, law, public, rights, society, privacy, societal, policy, governance, transparency, accountability, compliance, government, sustainability, health, election, war, relationship |
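The keyword table lists terms for each domain but not the rule used to match them against headlines. One plausible rule, assumed here purely for illustration, is case-insensitive prefix matching at a word boundary, so that a stem such as "learn" also catches "learning". The sketch uses a short hypothetical excerpt of each keyword list (not the full lists) and lets a headline belong to several domains, which matters because "health" appears under both Healthcare and Society. The names `DOMAIN_KEYWORDS` and `tag_domains` are hypothetical.

```python
import re

# Hypothetical excerpt of the keyword lists; the full lists are in the table.
DOMAIN_KEYWORDS = {
    "Education": ["educate", "learn", "teach", "student", "school", "university"],
    "Healthcare": ["health", "medical", "doctor", "hospital", "patient", "cancer"],
    "Robotics": ["robot", "autonomous", "drone", "self-driving", "supply chain"],
    "Career": ["job", "recruit", "career", "layoff", "hiring", "CEO"],
    "Society": ["ethical", "regulation", "privacy", "election", "health"],
}

# Assumed rule: a keyword matches when a word in the headline starts with it.
PATTERNS = {
    domain: [re.compile(r"\b" + re.escape(kw), re.IGNORECASE) for kw in kws]
    for domain, kws in DOMAIN_KEYWORDS.items()
}


def tag_domains(headline):
    """Return the set of domains whose keywords occur in `headline`."""
    return {
        domain
        for domain, patterns in PATTERNS.items()
        if any(p.search(headline) for p in patterns)
    }


if __name__ == "__main__":
    for h in [
        "AI tutors help students learn faster",
        "AI health apps raise privacy concerns",
        "Self-driving trucks reshape the supply chain",
    ]:
        print(h, "->", sorted(tag_domains(h)))
```

Prefix matching is a trade-off: "learn" picks up "learning", but "educate" misses "education", and a short stem such as "car" from the Robotics list would also match "career". Tokenizing and lemmatizing headlines first (for example with NLTK) is a common alternative.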

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).