Submitted: 01 May 2026

Posted: 04 May 2026


Abstract

Keywords:

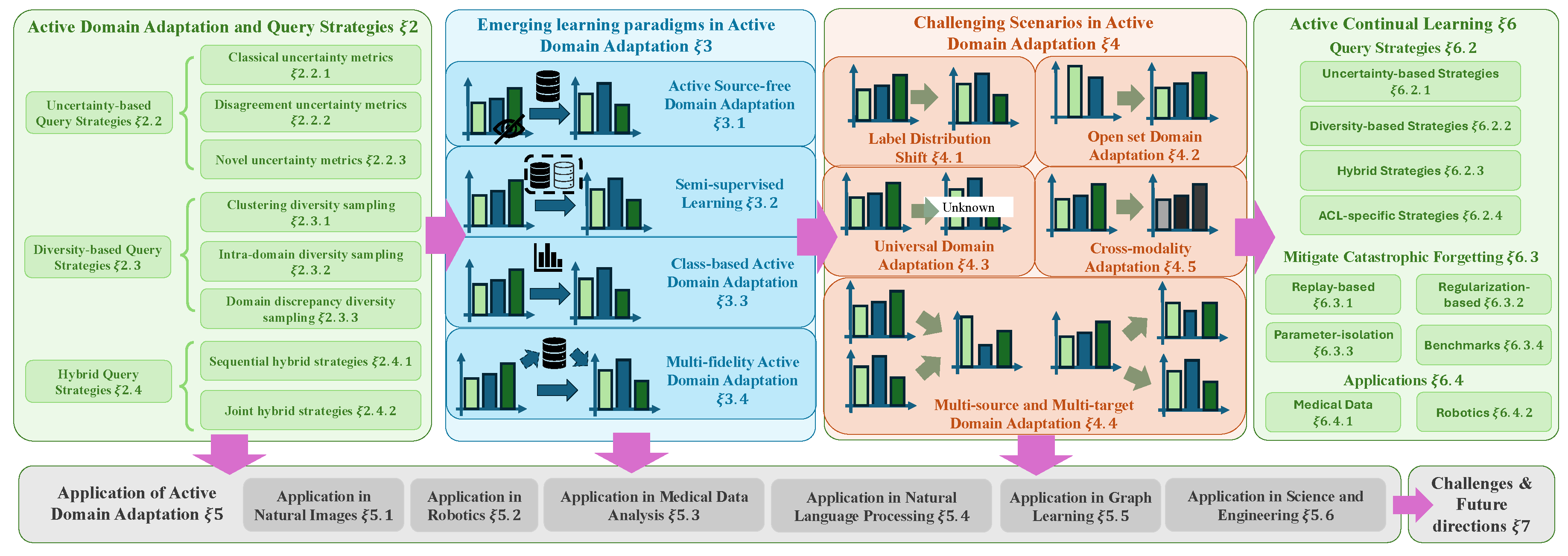

1. Introduction

1.1. Contributions of the Survey

1.2. Structure of the Survey

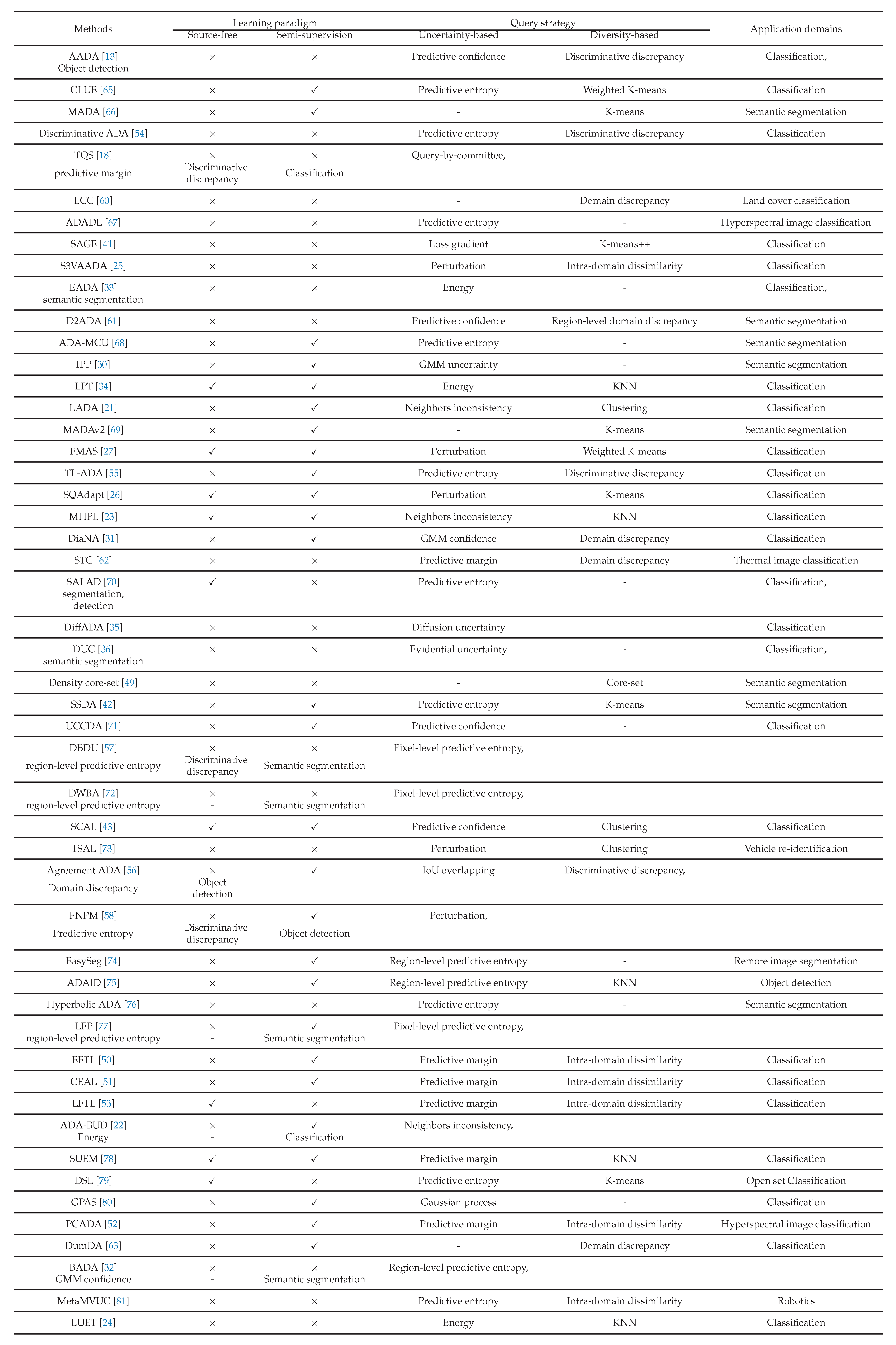

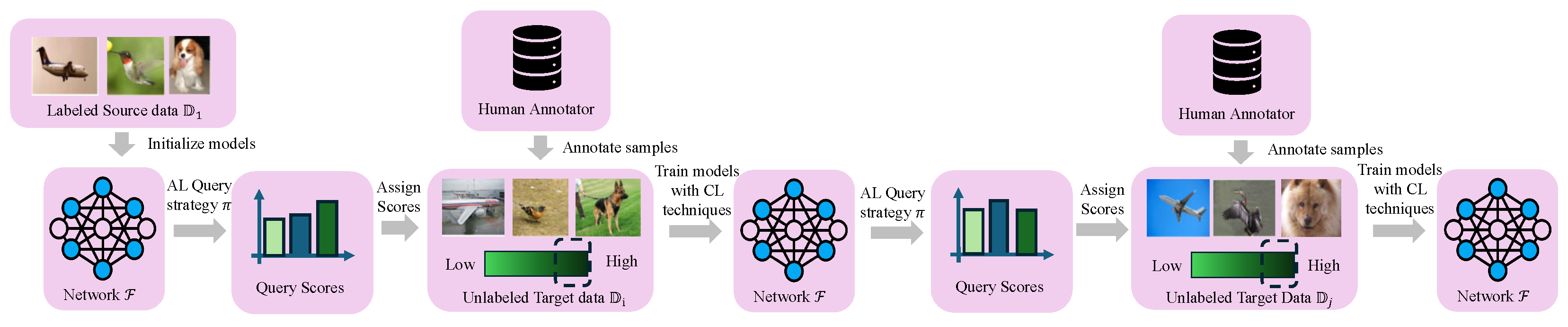

2. Active Domain Adaptation and Query Strategies

2.1. Problem Formulation

2.2. Uncertainty-Based Query Strategies

2.2.1. Classical Uncertainty Metrics

2.2.2. Disagreement-Based Uncertainty Metrics

2.2.3. Novel Uncertainty Metrics

2.3. Diversity-Based Query Strategies

2.3.1. Clustering-Based Diversity Sampling

2.3.2. Intra-Domain Diversity Sampling

2.3.3. Domain Discrepancy Diversity Sampling

2.4. Hybrid Query Strategies

2.4.1. Sequential Hybrid Strategies

2.4.2. Joint Hybrid Strategies

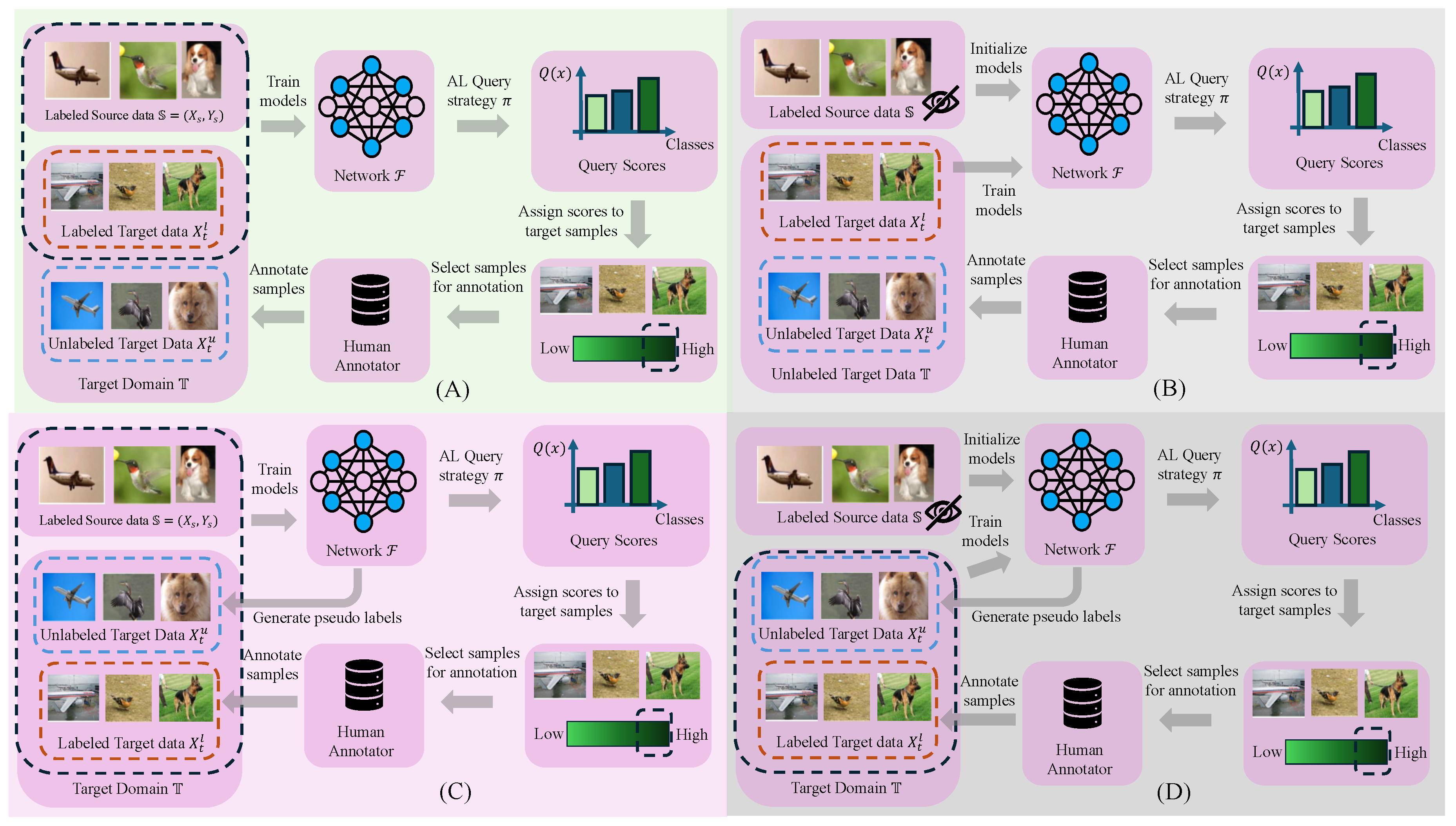

3. Emerging Learning Paradigms in Active Domain Adaptation

3.1. Active Source-Free Domain Adaptation

3.1.1. Source-Free Query Strategies

3.1.2. Source-Free Source Knowledge Utilization

3.2. Semi-Supervised Learning in Active Domain Adaptation

3.2.1. Pseudo Labeling Strategies

3.2.2. Selective Pseudo Labeling Strategies

3.3. Class-Balanced Active Domain Adaptation

3.4. Multi-Fidelity Active Domain Adaptation

4. Challenging Scenarios in Active Domain Adaptation

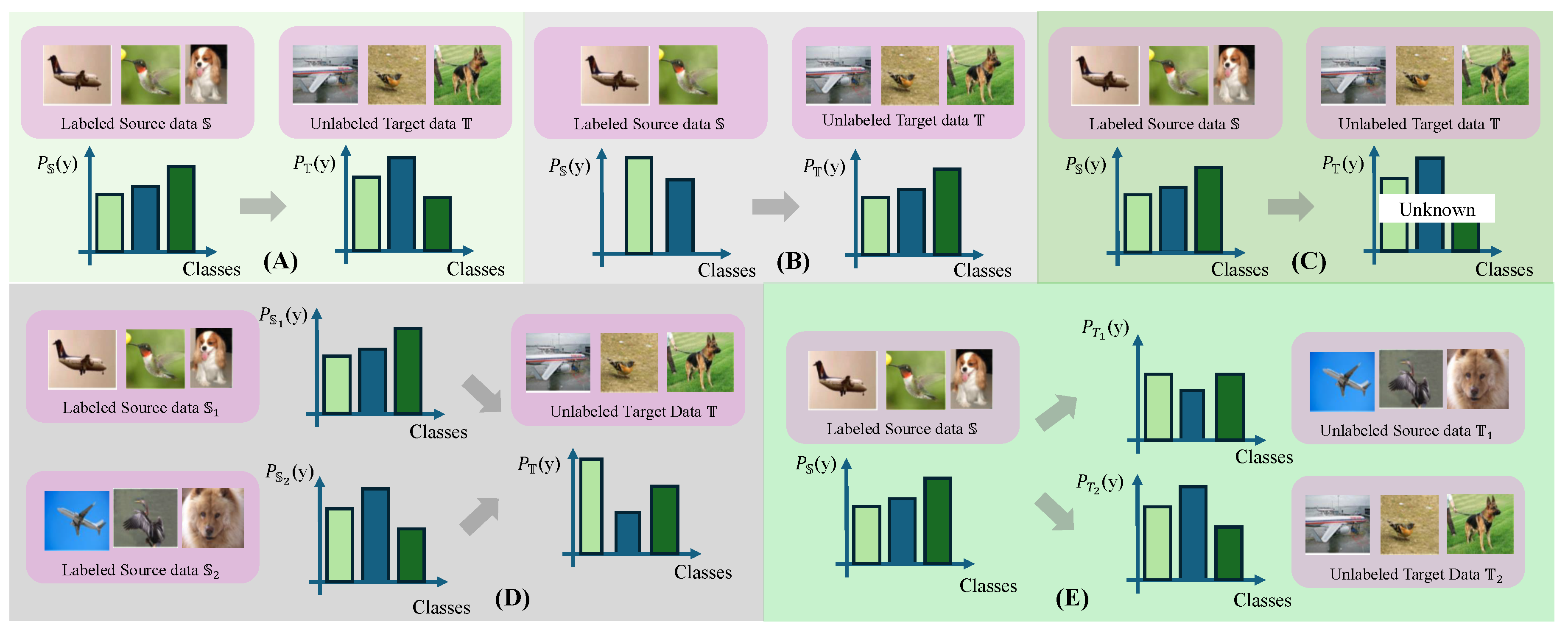

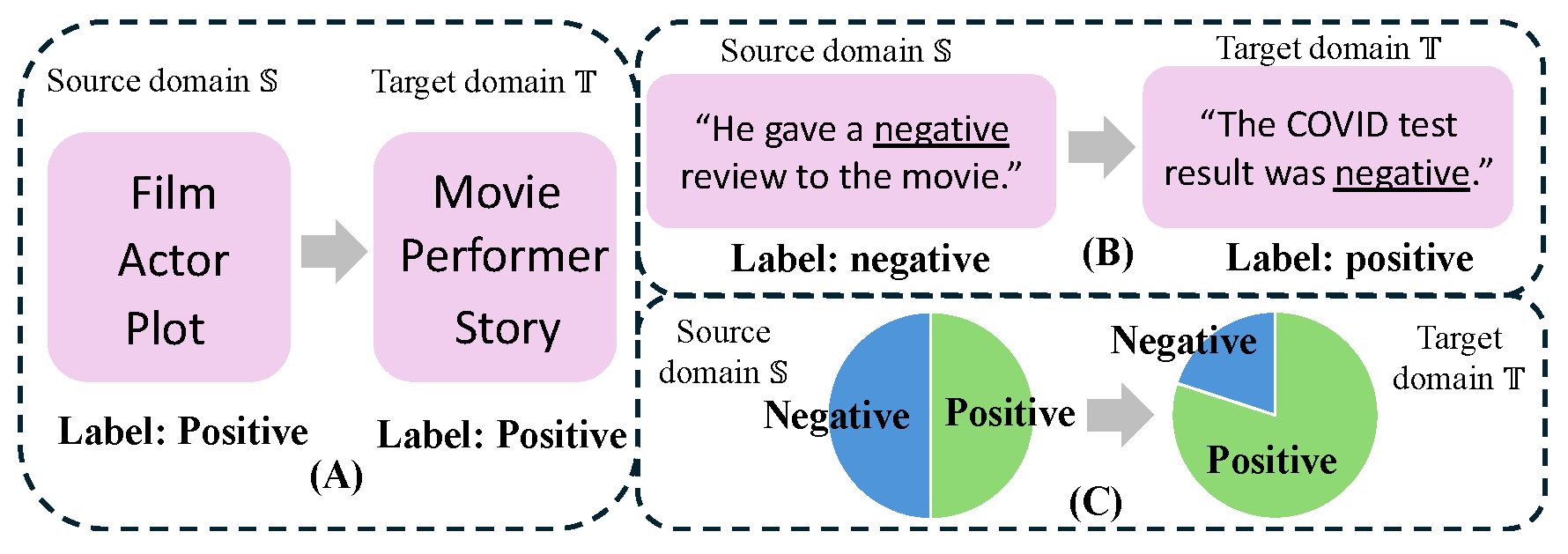

- Label distribution shift (Figure 3A): the class frequencies differ significantly between the source and target domains, leading to a change in the marginal label distribution $P(y)$, i.e., $P_S(y) \neq P_T(y)$.

- Open-set domain adaptation (Figure 3B): the target domain contains classes that are absent from the source domain. If the source and target label spaces are denoted as $\mathcal{Y}_S$ and $\mathcal{Y}_T$, respectively, the relationship becomes $\mathcal{Y}_S \subset \mathcal{Y}_T$, where the additional classes $\mathcal{Y}_T \setminus \mathcal{Y}_S$ correspond to unknown categories.

- Universal domain adaptation (Figure 3C): the overlap between source and target label spaces is unknown, requiring models to simultaneously handle shared and domain-private classes.

- Multi-source DA: models are trained on multiple source domains and adapted to a target domain, where large distribution discrepancies and heterogeneity exist both between the source and target domains and among the source domains themselves.

- Multi-target DA: models are adapted from a single source domain to multiple target domains, where large distribution discrepancies and heterogeneity exist both between the source and target domains and among the target domains themselves.

- Cross-modality adaptation: source and target samples originate from different data modalities, resulting in substantial domain shifts.
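The label-related scenarios in the list above can be made concrete with a small sketch. It assumes, purely for illustration, that labels are available for both domains (in active domain adaptation the target labels are mostly unknown, so these quantities would have to be estimated from queried samples). The function names, the toy class names, and the choice of total variation distance as the shift measure are assumptions of this example, not part of the survey.

```python
from collections import Counter


def label_distribution(labels):
    """Empirical class frequencies P(y) as a dict mapping class -> probability."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {c: n / total for c, n in counts.items()}


def total_variation(p, q):
    """Total variation distance between two discrete distributions.

    0 means identical distributions, 1 means disjoint support.
    """
    classes = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in classes)


def label_space_relation(source_labels, target_labels):
    """Describe how the target label space relates to the source label space."""
    ys, yt = set(source_labels), set(target_labels)
    if ys == yt:
        return "closed-set"        # identical label spaces
    if ys < yt:
        return "open-set"          # target adds classes unseen in the source
    return "partial-or-mixed"      # source-private classes exist (possibly target-private too)


# Hypothetical toy data: the target is dominated by "dog" and adds a new class "fox".
source = ["cat"] * 6 + ["dog"] * 4
target = ["cat"] * 2 + ["dog"] * 6 + ["fox"] * 2

shift = total_variation(label_distribution(source), label_distribution(target))
relation = label_space_relation(source, target)
print(f"TV distance between label marginals: {shift:.2f}")
print(f"label-space relation: {relation}")
```

In the universal setting the overlap between label spaces is unknown in advance, so a check like `label_space_relation` cannot be run directly; it only illustrates what the different regimes mean once labels are known.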

4.1. Label Distribution Shift

4.2. Open-Set and Universal Domain Adaptation

4.3. Multi-Source or Multi-Target Domain Adaptation

4.4. Cross-Modality Adaptation

5. Applications of Active Domain Adaptation

5.1. Active Domain Adaptation in Natural Images

5.1.1. Image Classification

5.1.2. Object Detection

5.1.3. Semantic Segmentation

5.1.4. Multi-Tasks

5.1.5. Remote Sensing

5.1.6. Vehicle Re-Identification

5.2. Active Domain Adaptation in Robotics

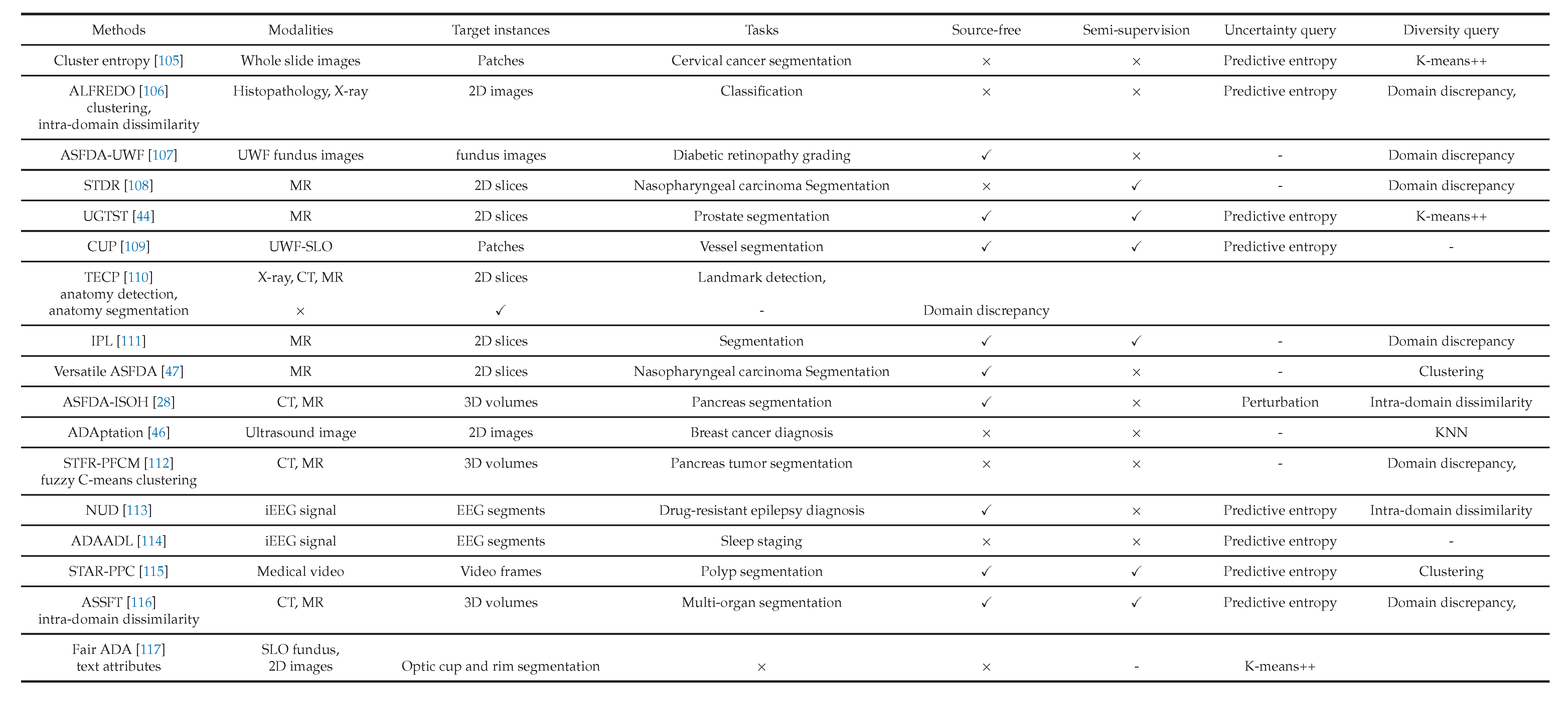

5.3. Active Domain Adaptation in Medical Data Analysis

5.3.1. Classification for Diagnosis

5.3.2. Medical Image and Video Segmentation

5.3.2.1. CT and MR Images

5.3.2.2. Pathologic Images

5.3.2.3. Medical Videos

5.3.3. Multi-Task Medical Data Analysis

5.3.4. Active Domain Adaptation of VFM/VLM in Medical Data

5.4. Active Domain Adaptation in Natural Language Processing

- Vocabulary shift: distinct lexical expressions are used to describe the same underlying concepts, and the distribution of tokens or textual features differs across domains, so models trained on source-domain vocabulary encounter unfamiliar or differently distributed words in the target domain (Figure 4A). This shift can be expressed as $P_S(x) \neq P_T(x)$ with $P_S(y \mid x) = P_T(y \mid x)$, where $x$ denotes textual features such as words, subwords, or embeddings.

- Context shift: contextual patterns that determine meaning vary across domains. Because NLP models rely heavily on contextual information to infer semantics, differences in contextual usage may cause incorrect predictions (Figure 4B). This shift is represented as $P_S(y \mid x) \neq P_T(y \mid x)$, where $y$ denotes labels and $x$ represents contextual features.

- Label shift: the distribution of labels changes between domains, causing models trained on the source distribution to produce biased predictions in the target domain (Figure 4C). This shift can be written as $P_S(y) \neq P_T(y)$.
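As a rough illustration of vocabulary shift, the sketch below compares the token distributions of two small corpora. It is a minimal example under stated assumptions: whitespace tokenization, the out-of-vocabulary rate, and the Jensen-Shannon divergence are choices made here for illustration, and the function names and toy sentences are hypothetical rather than taken from the survey.

```python
import math
from collections import Counter


def tokenize(text):
    """Naive lowercase whitespace tokenizer (an assumption of this sketch)."""
    return text.lower().split()


def token_distribution(texts):
    """Empirical unigram distribution P(x) over tokens in a corpus."""
    counts = Counter(tok for t in texts for tok in tokenize(t))
    total = sum(counts.values())
    return {tok: n / total for tok, n in counts.items()}


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits; 0 for identical distributions, at most 1."""
    keys = set(p) | set(q)
    m = {k: 0.5 * (p.get(k, 0.0) + q.get(k, 0.0)) for k in keys}

    def kl_to_mixture(a):
        # KL(a || m); every token with a[k] > 0 also has m[k] > 0.
        return sum(a[k] * math.log2(a[k] / m[k]) for k in a if a[k] > 0)

    return 0.5 * kl_to_mixture(p) + 0.5 * kl_to_mixture(q)


def oov_rate(source_texts, target_texts):
    """Fraction of target tokens that never occur in the source corpus.

    Assumes the target corpus contains at least one token.
    """
    vocab = {tok for t in source_texts for tok in tokenize(t)}
    target_tokens = [tok for t in target_texts for tok in tokenize(t)]
    return sum(tok not in vocab for tok in target_tokens) / len(target_tokens)


# Hypothetical toy corpora: movie reviews (source) vs. product reviews (target).
source_texts = ["the movie was great", "the plot was dull"]
target_texts = ["the battery was great", "the screen was dull"]

p_src = token_distribution(source_texts)
p_tgt = token_distribution(target_texts)
jsd = js_divergence(p_src, p_tgt)
oov = oov_rate(source_texts, target_texts)
print(f"JS divergence between token distributions: {jsd:.3f}")
print(f"target out-of-vocabulary rate: {oov:.2f}")
```

Statistics of this kind only capture shift in the input distribution; context shift and label shift involve the labels and would need labeled target samples, such as those obtained through active queries, to be estimated.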

5.5. Active Domain Adaptation in Graph Learning

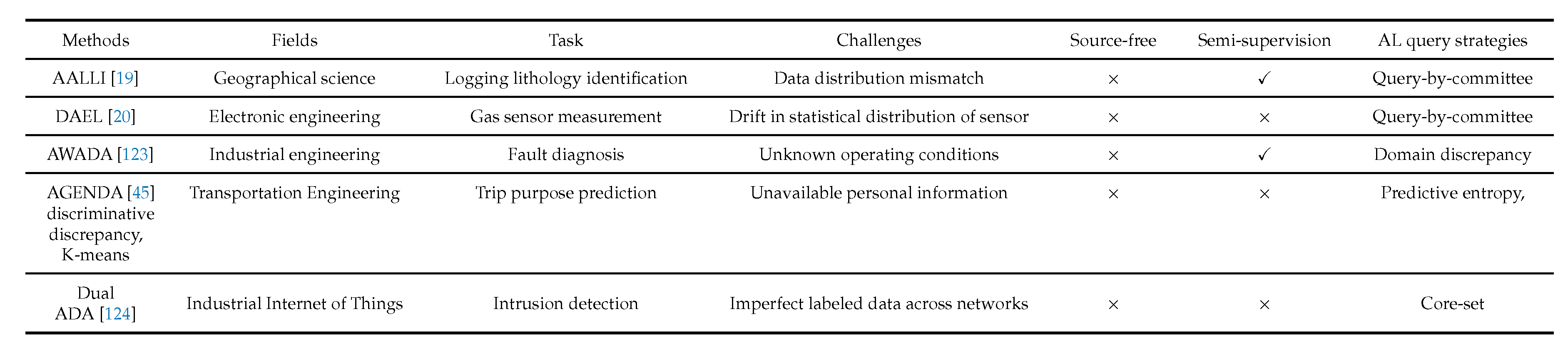

5.6. Active Domain Adaptation in Science and Engineering

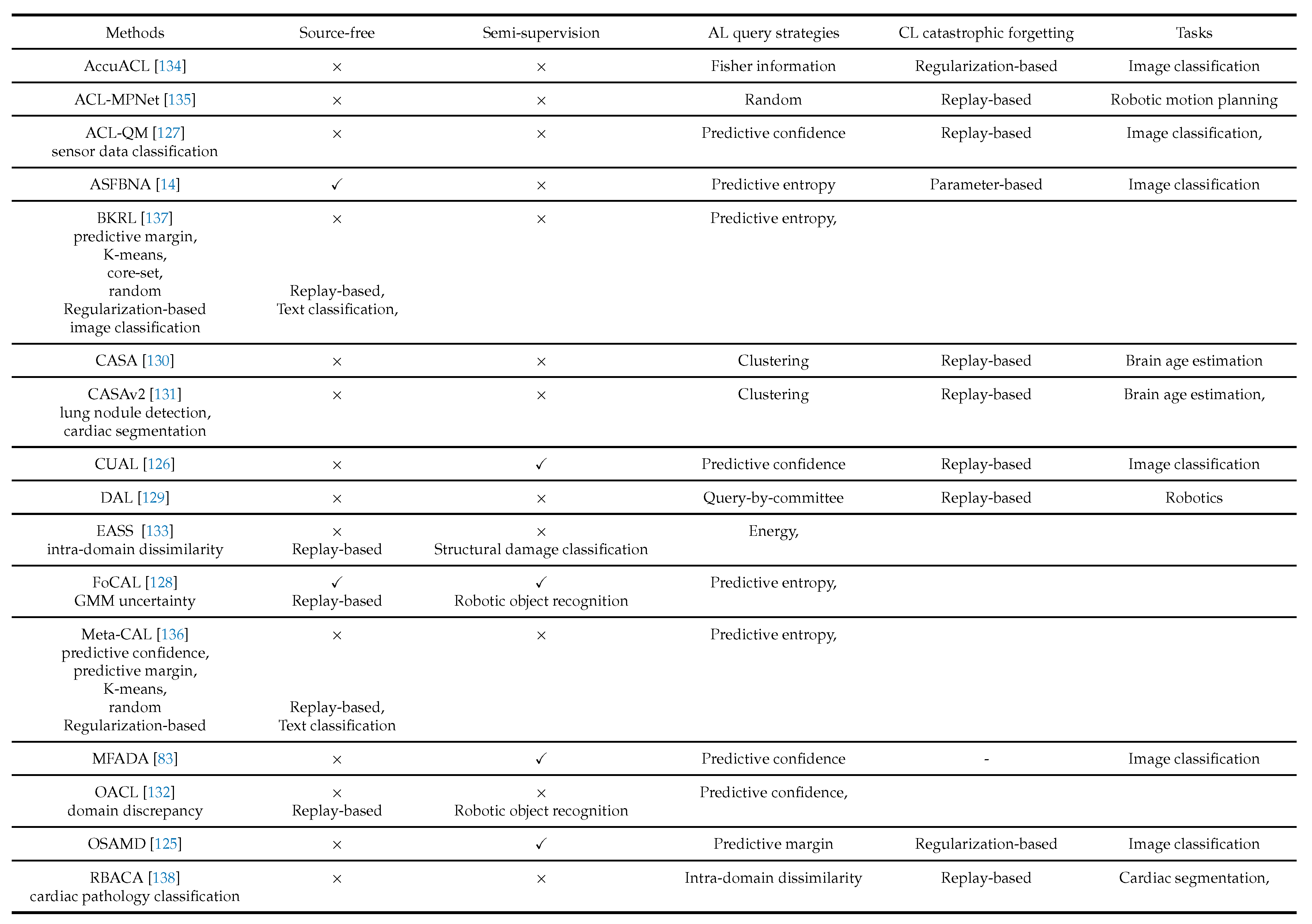

6. Active Continual Learning

6.1. Problem Formulation

6.2. Query Strategies in Active Continual Learning

6.2.1. Uncertainty-Based Strategies

6.2.2. Diversity-Based Strategies

6.2.3. Hybrid Strategies

6.2.4. ACL-Specific Query Strategies

6.2.5. Empirical Analyses of Query Strategies

6.3. Mitigating Catastrophic Forgetting in Active Continual Learning

6.3.1. Replay-Based Methods

6.3.2. Regularization-Based Methods

6.3.3. Parameter-Isolation Methods

6.4. Applications of Active Continual Learning

6.4.1. Medical Data Analysis

6.4.2. Robotics

7. Challenges and Future Directions

References

- Russakovsky, O.; Deng, J.; Su, H.; Krause, J.; Satheesh, S.; Ma, S.; Huang, Z.; Karpathy, A.; Khosla, A.; Bernstein, M.; et al. Imagenet large scale visual recognition challenge. Int. J. Comput. Vis. 2015, 115, 211–252. [Google Scholar] [CrossRef]

- Han, K.; Wang, Y.; Chen, H.; Chen, X.; Guo, J.; Liu, Z.; Tang, Y.; Xiao, A.; Xu, C.; Xu, Y.; et al. A survey on vision transformer. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 45, 87–110. [Google Scholar] [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30. [Google Scholar]

- Jawahar, G.; Sagot, B.; Seddah, D. What does BERT learn about the structure of language? In Proceedings of the Proceedings of the 57th annual meeting of the association for computational linguistics, 2019; pp. 3651–3657. [Google Scholar]

- Goh, G.B.; Hodas, N.O.; Vishnu, A. Deep learning for computational chemistry. J. Comput. Chem. 2017, 38, 1291–1307. [Google Scholar] [CrossRef]

- Khalil, R.A.; Saeed, N.; Masood, M.; Fard, Y.M.; Alouini, M.S.; Al-Naffouri, T.Y. Deep learning in the industrial internet of things: Potentials, challenges, and emerging applications. IEEE Internet Things J. 2021, 8, 11016–11040. [Google Scholar] [CrossRef]

- Luo, Y.; Zheng, L.; Guan, T.; Yu, J.; Yang, Y. Taking a closer look at domain shift: Category-level adversaries for semantics consistent domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019; pp. 2507–2516. [Google Scholar]

- Kouw, W.M.; Loog, M. A review of domain adaptation without target labels. IEEE Trans. Pattern Anal. Mach. Intell. 2019, 43, 766–785. [Google Scholar] [CrossRef] [PubMed]

- Zhao, H.; Des Combes, R.T.; Zhang, K.; Gordon, G. On learning invariant representations for domain adaptation. In Proceedings of the International conference on machine learning. PMLR, 2019; pp. 7523–7532. [Google Scholar]

- Huang, S.J.; Jin, R.; Zhou, Z.H. Active learning by querying informative and representative examples. Adv. Neural Inf. Process. Syst. 2010, 23. [Google Scholar] [CrossRef]

- Ren, P.; Xiao, Y.; Chang, X.; Huang, P.Y.; Li, Z.; Gupta, B.B.; Chen, X.; Wang, X. A survey of deep active learning. ACM Comput. Surv. (CSUR) 2021, 54, 1–40. [Google Scholar] [CrossRef]

- Saha, A.; Rai, P.; Daumé, H., III; Venkatasubramanian, S.; DuVall, S.L. Active supervised domain adaptation. In Proceedings of the Joint European Conference on Machine Learning and Knowledge Discovery in Databases, 2011; Springer; pp. 97–112. [Google Scholar]

- Su, J.C.; Tsai, Y.H.; Sohn, K.; Liu, B.; Maji, S.; Chandraker, M. Active adversarial domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF winter conference on applications of computer vision, 2020; pp. 739–748. [Google Scholar]

- Machireddy, A.; Krishnan, R.; Ahuja, N.; Tickoo, O. Continual active adaptation to evolving distributional shifts. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 3444–3450. [Google Scholar]

- Cai, Z.; Sener, O.; Koltun, V. Online continual learning with natural distribution shifts: An empirical study with visual data. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2021; pp. 8281–8290. [Google Scholar]

- Wang, D.; Shang, Y. A new active labeling method for deep learning. In Proceedings of the 2014 International joint conference on neural networks (IJCNN); IEEE, 2014; pp. 112–119. [Google Scholar]

- Li, M.; Sethi, I.K. Confidence-based active learning. IEEE Trans. Pattern Anal. Mach. Intell. 2006, 28, 1251–1261. [Google Scholar] [CrossRef]

- Fu, B.; Cao, Z.; Wang, J.; Long, M. Transferable query selection for active domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021; pp. 7272–7281. [Google Scholar]

- Chang, J.; Kang, Y.; Zheng, W.X.; Cao, Y.; Li, Z.; Lv, W.; Wang, X.M. Active domain adaptation with application to intelligent logging lithology identification. IEEE Trans. Cybern. 2021, 52, 8073–8087. [Google Scholar] [CrossRef]

- Yan, J.; Sun, R.; Liu, T.; Duan, S. Domain-adaptation-based active ensemble learning for improving chemical sensor array performance. Sens. Actuators A Phys. 2023, 357, 114411. [Google Scholar] [CrossRef]

- Sun, T.; Lu, C.; Ling, H. Local context-aware active domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 18634–18643. [Google Scholar]

- Tian, Q.; Li, Y.; Yu, J.; Shen, J.; Ou, W. Rethinking Active Domain Adaptation: Balancing Uncertainty and Diversity. Image Vis. Comput. 2025, 158, 105492. [Google Scholar] [CrossRef]

- Wang, F.; Han, Z.; Zhang, Z.; He, R.; Yin, Y. Mhpl: Minimum happy points learning for active source free domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023; pp. 20008–20018. [Google Scholar]

- Sun, Y.; Shi, G.; Dong, W.; Li, X.; Dong, L.; Xie, X. Local Uncertainty Energy Transfer for Active Domain Adaptation. IEEE Transactions on Image Processing, 2025. [Google Scholar]

- Rangwani, H.; Jain, A.; Aithal, S.K.; Babu, R.V. S3vaada: Submodular subset selection for virtual adversarial active domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 7516–7525. [Google Scholar]

- Li, S.; Zhang, R.; Gong, K.; Xie, M.; Ma, W.; Gao, G. Source-free active domain adaptation via augmentation-based sample query and progressive model adaptation. IEEE Transactions on Neural Networks and Learning Systems, 2023. [Google Scholar]

- Tian, Q.; Zhang, H. Feature mixing and self-training for source-free active domain adaptation. Comput. Electr. Eng. 2023, 111, 108966. [Google Scholar] [CrossRef]

- Yang, J.; Yu, X.; Qiu, P.; Marcus, D.; Sotiras, A. Active Source-Free Cross-Domain and Cross-Modality Adaptation for Volumetric Medical Image Segmentation by Image Sensitivity and Organ Heterogeneity Sampling. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, 2025; Springer; pp. 3–12. [Google Scholar]

- Nguyen, A.; Yosinski, J.; Clune, J. Deep neural networks are easily fooled: High confidence predictions for unrecognizable images. In Proceedings of the Proceedings of the IEEE conference on computer vision and pattern recognition, 2015; pp. 427–436. [Google Scholar]

- Zurbrügg, R.; Blum, H.; Cadena, C.; Siegwart, R.; Schmid, L. Embodied active domain adaptation for semantic segmentation via informative path planning. IEEE Robot. Autom. Lett. 2022, 7, 8691–8698. [Google Scholar] [CrossRef]

- Huang, D.; Li, J.; Chen, W.; Huang, J.; Chai, Z.; Li, G. Divide and adapt: Active domain adaptation via customized learning. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023; pp. 7651–7660. [Google Scholar]

- Xu, X.; Yen, G.G.; Zhao, C.; Sun, Q.; Ren, W.; Sheng, L.; Tang, Y. Boundary-Based Active Domain Adaptation for Semantic Segmentation Under Adverse Conditions. IEEE Transactions on Neural Networks and Learning Systems, 2025. [Google Scholar]

- Xie, B.; Yuan, L.; Li, S.; Liu, C.H.; Cheng, X.; Wang, G. Active learning for domain adaptation: An energy-based approach. Proc. Proc. AAAI Conf. Artif. Intell. 2022, Vol. 36, 8708–8716. [Google Scholar] [CrossRef]

- Li, X.; Du, Z.; Li, J.; Zhu, L.; Lu, K. Source-free active domain adaptation via energy-based locality preserving transfer. In Proceedings of the Proceedings of the 30th ACM international conference on multimedia, 2022; pp. 5802–5810. [Google Scholar]

- Du, Z.; Li, J. Diffusion-based probabilistic uncertainty estimation for active domain adaptation. Adv. Neural Inf. Process. Syst. 2023, 36, 17129–17155. [Google Scholar]

- Xie, M.; Li, S.; Zhang, R.; Liu, C.H. Dirichlet-based Uncertainty Calibration for Active Domain Adaptation. In Proceedings of the The Eleventh International Conference on Learning Representations, 2023. [Google Scholar]

- Bao, W.; Yu, Q.; Kong, Y. Evidential deep learning for open set action recognition. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2021; pp. 13349–13358. [Google Scholar]

- Zhang, W.; Lv, Z.; Zhou, H.; Liu, J.W.; Li, J.; Li, M.; Li, Y.; Zhang, D.; Zhuang, Y.; Tang, S. Revisiting the domain shift and sample uncertainty in multi-source active domain transfer. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 16751–16761. [Google Scholar]

- Tian, Q.; Yu, J.; Zhao, Y.; Li, W.; Lei, Z. Evidential Deep Learning for Open-Set Active Domain Adaptation. IEEE Transactions on Neural Networks and Learning Systems, 2025. [Google Scholar]

- Persello, C. Interactive domain adaptation for the classification of remote sensing images using active learning. IEEE Geosci. Remote Sens. Lett. 2012, 10, 736–740. [Google Scholar] [CrossRef]

- Bouvier, V.; Very, P.; Chastagnol, C.; Tami, M.; Hudelot, C. Stochastic Adversarial Gradient Embedding for Active Domain Adaptation. In Proceedings of the ECML, Bilbao, Spain, 2021. [Google Scholar]

- Wen, L.; Xu, Y.; Feng, Z.; Zhou, J.; Zhou, L.; Wang, Y. Semi-supervised domain adaptation for semantic segmentation via active learning with feature-and semantic-level alignments. IEEE Transactions on Intelligent Vehicles, 2024. [Google Scholar]

- Sun, Z.; Lin, L.; Yu, Y. You only label once: A self-adaptive clustering-based method for source-free active domain adaptation. IET Image Process. 2024, 18, 1268–1282. [Google Scholar]

- Luo, Z.; Luo, X.; Gao, Z.; Wang, G. An uncertainty-guided tiered self-training framework for active source-free domain adaptation in prostate segmentation. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, 2024; Springer; pp. 107–117. [Google Scholar]

- Liao, C.; Chen, C.; Zhang, W.; Guo, S.; Liu, C. AGENDA: Predicting Trip Purposes with A New Graph Embedding Network and Active Domain Adaptation. ACM Trans. Knowl. Discov. From Data 2024, 18, 1–25. [Google Scholar] [CrossRef]

- Duan, Y.; Huang, Y.; Yang, X.; Han, L.; Xie, X.; Zhu, Z.; He, P.; Chan, K.H.; Cui, L.; Im, S.K.; et al. ADAptation: Reconstruction-Based Unsupervised Active Learning for Breast Ultrasound Diagnosis. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, 2025; Springer; pp. 35–45. [Google Scholar]

- Wang, H.; Zhang, S.; Chen, J.; He, Y.; Xu, J.; Huang, H.; Xiao, J.; Li, L.; Liao, W.; Zhang, S.; et al. Versatile Source-Free Active Domain Adaptation for multi-center and multi-rater medical image segmentation. Inf. Fusion 2025, 103586. [Google Scholar] [CrossRef]

- Sener, O.; Savarese, S. Active Learning for Convolutional Neural Networks: A Core-Set Approach. In Proceedings of the International Conference on Learning Representations, 2018. [Google Scholar]

- Liu, S.; Jiang, Z.; Li, Y.; Peng, J.; Wang, Y.; Lin, W. Density matters: improved core-set for active domain adaptive segmentation. Proc. Proc. AAAI Conf. Artif. Intell. 2024, Vol. 38, 13999–14007. [Google Scholar] [CrossRef]

- He, J.; Liu, B.; Yin, G. Enhancing semi-supervised domain adaptation via effective target labeling. Proc. Proc. AAAI Conf. Artif. Intell. 2024, Vol. 38, 12385–12393. [Google Scholar] [CrossRef]

- Zhu, J.; Chen, X.; Hu, Q.; Xiao, Y.; Wang, B.; Sheng, B.; Chen, C.P. Clustering environment aware learning for active domain adaptation. IEEE Trans. Syst. Man. Cybern. Syst. 2024, 54, 3891–3904. [Google Scholar] [CrossRef]

- Luo, H.; Zhong, S.; Gong, C. Prototype-Guided Class-Balanced Active Domain Adaptation for Hyperspectral Image Classification. IEEE Transactions on Geoscience and Remote Sensing, 2025. [Google Scholar]

- Lyu, M.; Hao, T.; Xu, X.; Chen, H.; Lin, Z.; Han, J.; Ding, G. Learn from the learnt: Source-free active domain adaptation via contrastive sampling and visual persistence. In Proceedings of the European Conference on Computer Vision, 2024; Springer; pp. 228–246. [Google Scholar]

- Zhou, F.; Shui, C.; Yang, S.; Huang, B.; Wang, B.; Chaib-draa, B. Discriminative active learning for domain adaptation. Knowl.-Based Syst. 2021, 222, 106986. [Google Scholar] [CrossRef]

- Han, K.; Kim, Y.; Han, D.; Lee, H.; Hong, S. TL-ADA: Transferable loss-based active domain adaptation. Neural Netw. 2023, 161, 670–681. [Google Scholar] [CrossRef]

- Menke, M.; Wenzel, T.; Schwung, A. Bridging the gap: Active learning for efficient domain adaptation in object detection. Expert Syst. With Appl. 2024, 254, 124403. [Google Scholar] [CrossRef]

- Zhang, S.; Zhang, L.; Liu, Z. Active domain adaptation for semantic segmentation via dynamically balancing domainness and uncertainty. Image Vis. Comput. 2024, 148, 105132. [Google Scholar] [CrossRef]

- Nakamura, Y.; Ishii, Y.; Yamashita, T. Active domain adaptation with false negative prediction for object detection. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 28782–28792. [Google Scholar]

- mathelin, A.D.; Deheeger, F.; MOUGEOT, M.; Vayatis, N. Discrepancy-Based Active Learning for Domain Adaptation. In Proceedings of the International Conference on Learning Representations, 2022. [Google Scholar]

- Kalita, I.; Kumar, R.N.S.; Roy, M. Deep learning-based cross-sensor domain adaptation under active learning for land cover classification. IEEE Geosci. Remote Sens. Lett. 2021, 19, 1–5. [Google Scholar] [CrossRef]

- Wu, T.H.; Liou, Y.S.; Yuan, S.J.; Lee, H.Y.; Chen, T.I.; Huang, K.C.; Hsu, W.H. D 2 ada: Dynamic density-aware active domain adaptation for semantic segmentation. In Proceedings of the European Conference on Computer Vision, 2022; Springer; pp. 449–467. [Google Scholar]

- Ustun, B.; Kaya, A.K.; Ayerden, E.C.; Altinel, F. Spectral transfer guided active domain adaptation for thermal imagery. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023; pp. 449–458. [Google Scholar]

- Tian, Q.; Pan, J.; Yang, Y.; Ou, W. Dual-Focus Memory Contrastive Learning for Active Domain Adaptation. Neural Netw. 2025, 108224. [Google Scholar] [CrossRef]

- Xie, M.; Li, Y.; Wang, Y.; Luo, Z.; Gan, Z.; Sun, Z.; Chi, M.; Wang, C.; Wang, P. Learning distinctive margin toward active domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 7993–8002. [Google Scholar]

- Prabhu, V.; Chandrasekaran, A.; Saenko, K.; Hoffman, J. Active domain adaptation via clustering uncertainty-weighted embeddings. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2021; pp. 8505–8514. [Google Scholar]

- Ning, M.; Lu, D.; Wei, D.; Bian, C.; Yuan, C.; Yu, S.; Ma, K.; Zheng, Y. Multi-anchor active domain adaptation for semantic segmentation. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2021; pp. 9112–9122. [Google Scholar]

- Saboori, A.; Ghassemian, H. Adversarial discriminative active Deep Learning for domain adaptation in hyperspectral images classification. Int. J. Remote Sens. 2021, 42, 3981–4003. [Google Scholar] [CrossRef]

- Zhang, H.; Zhang, R. Active domain adaptation with multi-level contrastive units for semantic segmentation. In Proceedings of the Proceedings of the Asian Conference on Computer Vision, 2022; pp. 1640–1657. [Google Scholar]

- Ning, M.; Lu, D.; Xie, Y.; Chen, D.; Wei, D.; Zheng, Y.; Tian, Y.; Yan, S.; Yuan, L. MADAv2: Advanced multi-anchor based active domain adaptation segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 13553–13566. [Google Scholar] [CrossRef]

- Kothandaraman, D.; Shekhar, S.; Sancheti, A.; Ghuhan, M.; Shukla, T.; Manocha, D. Salad: Source-free active label-agnostic domain adaptation for classification, segmentation and detection. In Proceedings of the Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2023; pp. 382–391. [Google Scholar]

- Tian, Q.; Zhou, L.; Zhu, Y.; Kang, L. Active domain adaptation with mining diverse knowledge: An updated class consensus dictionary approach. Inf. Sci. 2024, 667, 120485. [Google Scholar] [CrossRef]

- Guan, L.; Yuan, X. Dynamic weighting and boundary-aware active domain adaptation for semantic segmentation in autonomous driving environment. IEEE Transactions on Intelligent Transportation Systems, 2024. [Google Scholar]

- Shang, L.; Zhao, D.; Nie, Y.; Zhao, K.; Xiao, L.; Dai, B. A Two-Stage Active Domain Adaptation Framework for Vehicle Re-Identification. In Proceedings of the Chinese Conference on Pattern Recognition and Computer Vision (PRCV), 2024; Springer; pp. 380–394. [Google Scholar]

- Yang, L.; Chen, H.; Yang, A.; Li, J. EasySeg: An error-aware domain adaptation framework for remote sensing imagery semantic segmentation via interactive learning and active learning. IEEE Trans. Geosci. Remote Sens. 2024, 62, 1–18. [Google Scholar] [CrossRef]

- Han, F.; Ye, P.; Duan, S.; Wang, L. Ada-iD: Active Domain Adaptation for Intrusion Detection. In Proceedings of the Proceedings of the 32nd ACM International Conference on Multimedia, 2024; pp. 7404–7413. [Google Scholar]

- Franco, L.; Mandica, P.; Kallidromitis, K.; Guillory, D.; Li, Y.T.; Darrell, T.; Galasso, F. Hyperbolic Active Learning for Semantic Segmentation under Domain Shift. In Proceedings of the Forty-first International Conference on Machine Learning, 2024. [Google Scholar]

- Peng, J.; Sun, M.; Lim, E.G.; Wang, Q.; Xiao, J. Prototype Guided Pseudo Labeling and Perturbation-based Active Learning for domain adaptive semantic segmentation. Pattern Recognit. 2024, 148, 110203. [Google Scholar] [CrossRef]

- Ouyang, J.; Zhang, Z.; Meng, Q.; Chi, J. Structure-Based Uncertainty Estimation for Source-Free Active Domain Adaptation. IET Comput. Vis. 2025, 19, e70020. [Google Scholar] [CrossRef]

- Wang, F.; Han, Z.; Sun, H.; Yin, Y. Active source-free open-set domain adaptation. Knowl.-Based Syst. 2025, 114342. [Google Scholar] [CrossRef]

- Safaei, B.; Vibashan, V.; Patel, V.M. Certainty and uncertainty guided active domain adaptation. In Proceedings of the 2025 IEEE International Conference on Image Processing (ICIP); IEEE, 2025; pp. 2342–2347. [Google Scholar]

- Gilles, M.; Furmans, K.; Rayyes, R. Metamvuc: Active learning for sample-efficient sim-to-real domain adaptation in robotic grasping. IEEE Robotics and Automation Letters, 2025. [Google Scholar]

- Wang, F.; Han, Z.; Yin, Y. BIAS: Bridging Inactive and Active Samples for active source free domain adaptation. Knowl.-Based Syst. 2024, 284, 111151. [Google Scholar]

- Sagawa, S.; Hino, H. Cost-effective framework for gradual domain adaptation with multifidelity. Neural Netw. 2023, 164, 731–741. [Google Scholar] [CrossRef]

- Hwang, S.; Lee, S.; Kim, S.; Ok, J.; Kwak, S. Combating label distribution shift for active domain adaptation. In Proceedings of the European Conference on Computer Vision, 2022; Springer; pp. 549–566. [Google Scholar]

- Xiao, W.; Gu, J.; Liu, H. Category-aware active domain adaptation. In Proceedings of the Forty-first International Conference on Machine Learning, 2024. [Google Scholar]

- You, K.; Long, M.; Cao, Z.; Wang, J.; Jordan, M.I. Universal domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2019; pp. 2720–2729. [Google Scholar]

- Ma, X.; Gao, J.; Xu, C. Active universal domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2021; pp. 8968–8977. [Google Scholar]

- Zhang, L.; Xu, L.; Motamed, S.; Chakraborty, S.; De la Torre, F. D3GU: Multi-target active domain adaptation via enhancing domain alignment. In Proceedings of the Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2024; pp. 2577–2586. [Google Scholar]

- Zhu, Y.; Ai, J.; Wu, L.; Guo, D.; Jia, W.; Hong, R. An active multi-target domain adaptation strategy: Progressive class prototype rectification. IEEE Transactions on Multimedia, 2024. [Google Scholar]

- Yao, X.; Peng, X.; Gao, J.; Yuan, Z.; Wu, X.; Xu, C. Active Cross-Modal Domain Adaptation. IEEE Transactions on Multimedia; 2025. [Google Scholar]

- Vázquez, D.; López, A.; Ponsa, D.; Marin, J. Cool world: domain adaptation of virtual and real worlds for human detection using active learning. In Proceedings of the Advances in Neural Information Processing Systems–Workshop on Domain Adaptation: Theory and Applications, 2011. [Google Scholar]

- Liu, G.; Yan, Y.; Subramanian, R.; Song, J.; Lu, G.; Sebe, N. Active domain adaptation with noisy labels for multimedia analysis. World Wide Web 2016, 19, 199–215. [Google Scholar] [CrossRef]

- Saenko, K.; Kulis, B.; Fritz, M.; Darrell, T. Adapting visual category models to new domains. In Proceedings of the European conference on computer vision, 2010; Springer; pp. 213–226. [Google Scholar]

- Gong, B.; Shi, Y.; Sha, F.; Grauman, K. Geodesic flow kernel for unsupervised domain adaptation. In Proceedings of the 2012 IEEE conference on computer vision and pattern recognition. IEEE, 2012; pp. 2066–2073. [Google Scholar]

- Venkateswara, H.; Eusebio, J.; Chakraborty, S.; Panchanathan, S. Deep hashing network for unsupervised domain adaptation. In Proceedings of the Proceedings of the IEEE conference on computer vision and pattern recognition, 2017; pp. 5018–5027. [Google Scholar]

- Peng, X.; Usman, B.; Kaushik, N.; Hoffman, J.; Wang, D.; Saenko, K. Visda: The visual domain adaptation challenge. arXiv 2017, arXiv:1710.06924. [Google Scholar] [CrossRef]

- Peng, X.; Bai, Q.; Xia, X.; Huang, Z.; Saenko, K.; Wang, B. Moment matching for multi-source domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2019; pp. 1406–1415. [Google Scholar]

- Caputo, B.; Müller, H.; Martinez-Gomez, J.; Villegas, M.; Acar, B.; Patricia, N.; Marvasti, N.; Üsküdarlı, S.; Paredes, R.; Cazorla, M.; et al. Imageclef 2014: Overview and analysis of the results. In Proceedings of the International conference of the cross-language evaluation forum for European languages, 2014; Springer; pp. 192–211. [Google Scholar]

- Ringwald, T.; Stiefelhagen, R. Adaptiope: A modern benchmark for unsupervised domain adaptation. In Proceedings of the Proceedings of the IEEE/CVF winter conference on applications of computer vision, 2021; pp. 101–110. [Google Scholar]

- Jiang, P.; Ergu, D.; Liu, F.; Cai, Y.; Ma, B. A Review of Yolo algorithm developments. Procedia Comput. Sci. 2022, 199, 1066–1073. [Google Scholar] [CrossRef]

- Xie, B.; Yuan, L.; Li, S.; Liu, C.H.; Cheng, X. Towards fewer annotations: Active learning via region impurity and prediction uncertainty for domain adaptive semantic segmentation. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022; pp. 8068–8078. [Google Scholar]

- Persello, C.; Bruzzone, L. A novel active learning strategy for domain adaptation in the classification of remote sensing images. In Proceedings of the 2011 IEEE International Geoscience and Remote Sensing Symposium. IEEE, 2011; pp. 3720–3723. [Google Scholar]

- Matasci, G.; Tuia, D.; Kanevski, M. SVM-based boosting of active learning strategies for efficient domain adaptation. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2012, 5, 1335–1343. [Google Scholar] [CrossRef]

- Deng, C.; Liu, X.; Li, C.; Tao, D. Active multi-kernel domain adaptation for hyperspectral image classification. Pattern Recognit. 2018, 77, 306–315. [Google Scholar] [CrossRef]

- Liu, X.; Araki, K.; Harada, S.; Yoshizawa, A.; Terada, K.; Kurata, M.; Nakajima, N.; Abe, H.; Ushiku, T.; Bise, R. Cluster entropy: Active domain adaptation in pathological image segmentation. In Proceedings of the 2023 IEEE 20th International Symposium on Biomedical Imaging (ISBI); IEEE, 2023; pp. 1–5. [Google Scholar]

- Mahapatra, D.; Tennakoon, R.; George, Y.; Roy, S.; Bozorgtabar, B.; Ge, Z.; Reyes, M. ALFREDO: Active Learning with FeatuRe disEntangelement and DOmain adaptation for medical image classification. Med. Image Anal. 2024, 97, 103261. [Google Scholar] [CrossRef] [PubMed]

- Ran, J.; Zhang, G.; Xia, F.; Zhang, X.; Xie, J.; Zhang, H. Source-free active domain adaptation for diabetic retinopathy grading based on ultra-wide-field fundus images. Comput. Biol. Med. 2024, 174, 108418. [Google Scholar] [CrossRef]

- Wang, H.; Chen, J.; Zhang, S.; He, Y.; Xu, J.; Wu, M.; He, J.; Liao, W.; Luo, X. Dual-reference source-free active domain adaptation for nasopharyngeal carcinoma tumor segmentation across multiple hospitals. IEEE Transactions on Medical Imaging, 2024. [Google Scholar]

- Wang, H.; Luo, X.; Chen, W.; Tang, Q.; Xin, M.; Wang, Q.; Zhu, L. Advancing uwf-slo vessel segmentation with source-free active domain adaptation and a novel multi-center dataset. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, 2024; Springer; pp. 75–85. [Google Scholar]

- Quan, Q.; Yao, Q.; Zhu, H.; Wang, Q.; Zhou, S.K. Which images to label for few-shot medical image analysis? Med. Image Anal. 2024, 96, 103200. [Google Scholar] [CrossRef]

- Chen, Y.; Luo, X.; Chen, R.; Li, Y.; Zhang, H.; Lyu, H.; Song, H.; Li, K. Source-Free Active Domain Adaptation via Influential-Points-Guided Progressive Teacher for Medical Image Segmentation. IEEE Transactions on Medical Imaging, 2025. [Google Scholar]

- Qin, C.; Wang, Y.; Zeng, F.; Zhang, J.; Cao, Y.; Yin, X.; Huang, S.; Chen, D.; Zhang, H.; Ju, Z. Active Domain Adaptation Based on Probabilistic Fuzzy c-means Clustering for Pancreatic Tumor Segmentation. IEEE Transactions on Fuzzy Systems, 2025. [Google Scholar]

- Wang, K.; Yang, M.; Liu, A.; Li, C.; Qian, R.; Chen, X. Active source-free domain adaptation for intracranial EEG classification via neighborhood uncertainty and diversity. Biomed. Signal Process. Control 2025, 104, 107464. [Google Scholar] [CrossRef]

- Ghasemigarjan, R.; Mikaeili, M.; Setarehdan, S.K.; Saboori, A. Enhancing EEG-based sleep staging efficiency with minimal channels through adversarial domain adaptation and active deep learning. J. Neural Eng. 2025, 22, 046043. [Google Scholar] [CrossRef]

- Li, J.; Wang, H.; Wang, W.; Qin, J.; Wang, Q.; Zhu, L. Source-Free Active Domain Adaptation for Efficient Medical Video Polyp Segmentation. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, 2025; Springer; pp. 499–509. [Google Scholar]

- Yang, J.; Marcus, D.S.; Sotiras, A. Adapting Medical Vision Foundation Models for Volumetric Medical Image Segmentation via Active Learning and Selective Semi-supervised Fine-tuning. arXiv 2025, arXiv:2509.10784. [Google Scholar] [CrossRef]

- Wang, H.; Chen, W.; Luo, X.; Xing, Z.; Liu, L.; Qin, J.; Wu, S.; Zhu, L. Toward Fair and Accurate Cross-Domain Medical Image Segmentation: A VLM-Driven Active Domain Adaptation Paradigm. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 24102–24112. [Google Scholar]

- Chan, Y.S.; Ng, H.T. Domain adaptation with active learning for word sense disambiguation. In Proceedings of the 45th Annual Meeting of the Association of Computational Linguistics, 2007; pp. 49–56. [Google Scholar]

- Attardi, G.; Simi, M.; Zanelli, A. Domain adaptation by active learning. In Proceedings of the International Workshop on Evaluation of Natural Language and Speech Tool for Italian, 2012; Springer; pp. 77–85. [Google Scholar]

- Zhao, S.; Ng, H.T. Domain adaptation with active learning for coreference resolution. In Proceedings of the 5th International Workshop on Health Text Mining and Information Analysis (Louhi), 2014; pp. 21–29. [Google Scholar]

- Wu, F.; Huang, Y.; Yan, J. Active sentiment domain adaptation. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics, 2017; Volume 1, pp. 1701–1711. [Google Scholar]

- Wang, P.; Cao, Y.; Russell, C.; Shen, Y.; Luo, J.; Zhang, M.; Heng, S.; Luo, X. DELTA: Dual Consistency Delving with Topological Uncertainty for Active Graph Domain Adaptation. Transactions on Machine Learning Research, 2025. [Google Scholar]

- Liu, C.; He, X. Adversarial Weighted Active Domain Adaptation for Safety Assessment in Open Environments. IEEE Transactions on Industrial Informatics, 2024. [Google Scholar]

- Ma, W.; Lan, X.; Liu, R.; Wang, J.; Zhou, Q. A Dual Active Domain Adaptation Approach with Loss Prediction for IIoT Intrusion Detection under Imperfect Samples. IEEE Internet of Things Journal, 2025. [Google Scholar]

- Zhou, S.; Zhao, H.; Zhang, S.; Wang, L.; Chang, H.; Wang, Z.; Zhu, W. Online continual adaptation with active self-training. In Proceedings of the International Conference on Artificial Intelligence and Statistics. PMLR, 2022; pp. 8852–8883. [Google Scholar]

- Rios, A.S.; Ndiour, I.J.; Sydir, J.; Datta, P.; Tickoo, O.; Ahuja, N. CUAL: Continual Uncertainty-aware Active Learner. In Proceedings of the NeurIPS 2024 Workshop on Scalable Continual Learning for Lifelong Foundation Models, 2024. [Google Scholar]

- Bauer, J.C.; Trattnig, S.; Geng, P.; Raffin, T.; Daub, R. A continual active learning approach to adapt neural networks to distribution shifts in quality monitoring applications. Int. J. Adv. Manuf. Technol. 2025, 1–17. [Google Scholar] [CrossRef]

- Ayub, A.; Fendley, C. Few-shot continual active learning by a robot. Adv. Neural Inf. Process. Syst. 2022, 35, 30612–30624. [Google Scholar]

- Johnson, C.; Maldonado-Contreras, J.; Young, A. Accelerating constrained continual learning with dynamic active learning: A study in adaptive speed estimation for lower-limb prostheses. In Proceedings of the 2024 International Symposium on Medical Robotics (ISMR); IEEE, 2024; pp. 1–8. [Google Scholar]

- Perkonigg, M.; Hofmanninger, J.; Langs, G. Continual active learning for efficient adaptation of machine learning models to changing image acquisition. In Proceedings of the International Conference on Information Processing in Medical Imaging, 2021; Springer; pp. 649–660. [Google Scholar]

- Perkonigg, M.; Hofmanninger, J.; Herold, C.; Prosch, H.; Langs, G. Continual Active Learning Using Pseudo-Domains for Limited Labelling Resources and Changing Acquisition Characteristics. Mach. Learn. Biomed. Imaging 2022, 1, 1–28. [Google Scholar] [CrossRef]

- Nie, X.; Deng, Z.; He, M.; Fan, M.; Tang, Z. Online active continual learning for robotic lifelong object recognition. IEEE Transactions on Neural Networks and Learning Systems, 2023. [Google Scholar]

- Zhang, X.; Loo, C.K.; Chuah, J.H. Active continual learning with Energy Alignment Sampling Strategy (EASS) for structural damage classification. Appl. Intell. 2025, 55, 886. [Google Scholar] [CrossRef]

- Park, J.; Park, D.; Lee, J.G. Active Learning for Continual Learning: Keeping the Past Alive in the Present. In Proceedings of the Thirteenth International Conference on Learning Representations, 2025. [Google Scholar]

- Qureshi, A.H.; Miao, Y.; Yip, M.C. Active continual learning for planning and navigation. In Proceedings of the ICML 2020 Workshop on Real World Experiment Design and Active Learning, 2020. [Google Scholar]

- Ho, S.; Liu, M.; Gao, S.; Gao, L. Learning to learn for few-shot continual active learning. Artif. Intell. Rev. 2024, 57, 280. [Google Scholar] [CrossRef]

- Vu, T.T.; Khadivi, S.; Ghorbanali, M.; Phung, D.; Haffari, G. Active continual learning: On balancing knowledge retention and learnability. In Proceedings of the Australasian Joint Conference on Artificial Intelligence, 2024; Springer; pp. 137–150. [Google Scholar]

- Daniel, R.; Verdelho, M.R.; Barata, C.; Santiago, C. Continual Deep Active Learning for Medical Imaging: Replay-Based Architecture for Context Adaptation. In Proceedings of the Iberian Conference on Pattern Recognition and Image Analysis, 2025; Springer; pp. 108–121. [Google Scholar]

- Rolnick, D.; Ahuja, A.; Schwarz, J.; Lillicrap, T.; Wayne, G. Experience replay for continual learning. Adv. Neural Inf. Process. Syst. 2019, 32. [Google Scholar]

- Lopez-Paz, D.; Ranzato, M. Gradient episodic memory for continual learning. Adv. Neural Inf. Process. Syst. 2017, 30. [Google Scholar]

- Kirkpatrick, J.; Pascanu, R.; Rabinowitz, N.; Veness, J.; Desjardins, G.; Rusu, A.A.; Milan, K.; Quan, J.; Ramalho, T.; Grabska-Barwinska, A.; et al. Overcoming catastrophic forgetting in neural networks. Proc. Natl. Acad. Sci. 2017, 114, 3521–3526. [Google Scholar] [CrossRef]

- Zenke, F.; Poole, B.; Ganguli, S. Continual learning through synaptic intelligence. In Proceedings of the International Conference on Machine Learning. PMLR, 2017; pp. 3987–3995. [Google Scholar]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).