Submitted:

01 May 2026

Posted:

05 May 2026

Abstract

Keywords:

1. Introduction

1.1. Motivation

1.2. Goals

- A data-driven method for modelling the dynamics of the observable call option surface. We use non-parametric machine learning methods to extract the model dynamics from the observable call options on Deribit. Rather than assuming a parametric form for the drift and diffusion of the underlying, the model learns the evolution of option prices directly from historical time-series data across strikes and maturities. By focusing on the evolution of the entire surface rather than on individual options, the model captures the co-movements and structural relationships inherent in a live options market, giving a more realistic depiction of the dynamics observed in high-frequency cryptocurrency data.

- Ensure the model does not admit arbitrage. Absence of arbitrage is a basic consistency requirement for financial models, so outputs that admit arbitrage should be eliminated entirely or, at the least, minimised. To ensure theoretical and practical validity, the model is explicitly designed to satisfy static no-arbitrage conditions and to minimise any violation of dynamic no-arbitrage conditions. Static arbitrage constraints impose shape restrictions on the option surface (monotonicity and convexity in strike, and calendar spread consistency), ensuring that prices at a single point in time form a feasible and economically consistent surface. This is the more fundamental requirement, in the sense that violations of static arbitrage constraints lead to immediate, model-free arbitrage opportunities. Dynamic arbitrage constraints, in contrast, govern the temporal evolution of option prices, ensuring that the model’s drift and diffusion dynamics are consistent with an equivalent martingale measure. Both sets of constraints are defined in Section 2.2 and Section 2.3 below. The static conditions are incorporated directly into the learning process through constrained optimisation and diffusion shrinkage methods derived from the Arbitrage-Free Neural SDE framework of Cohen et al. (2023a).

- Use of the model for risk management and hedging. As applications of the model fitted to the market in the present paper, we build on Cohen et al. (2022) and Cohen et al. (2023b) to hedge option portfolios and to provide value-at-risk (VaR) estimates via market simulation.

1.3. Significance and Contributions

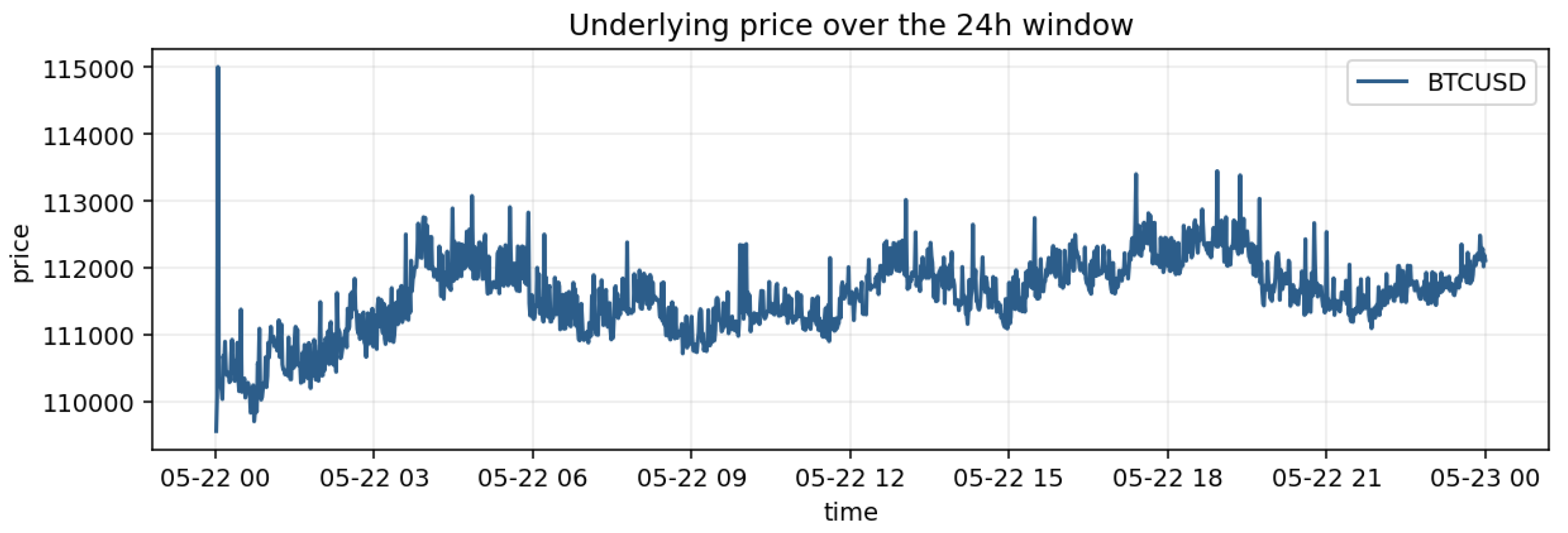

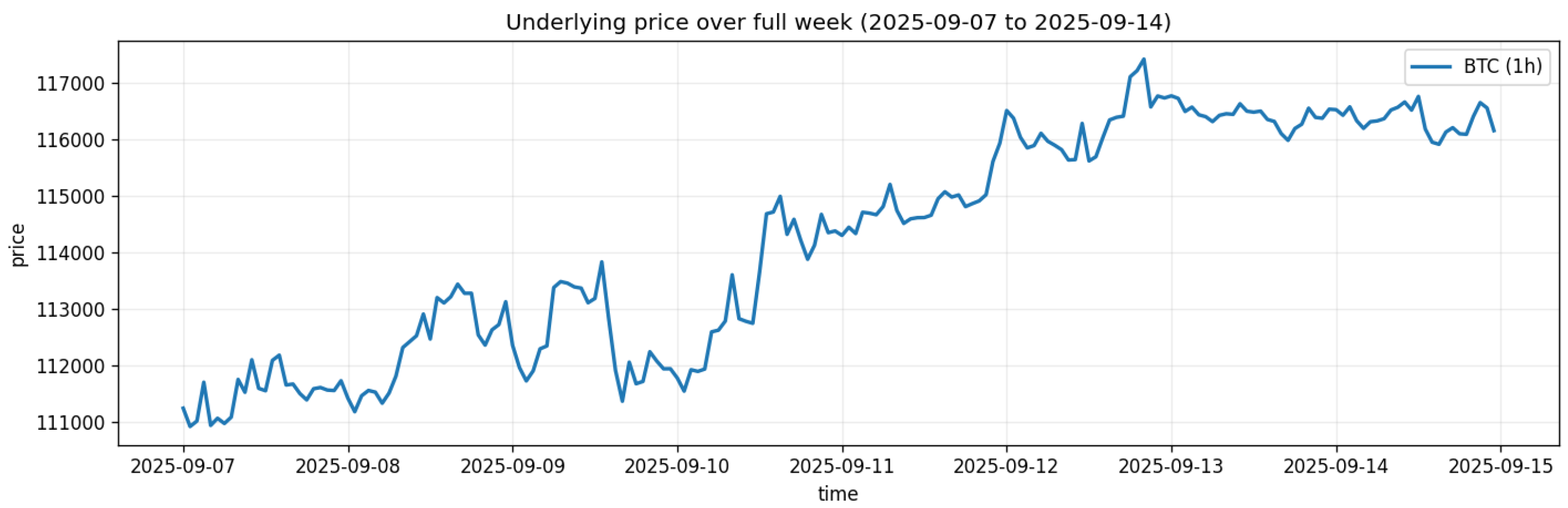

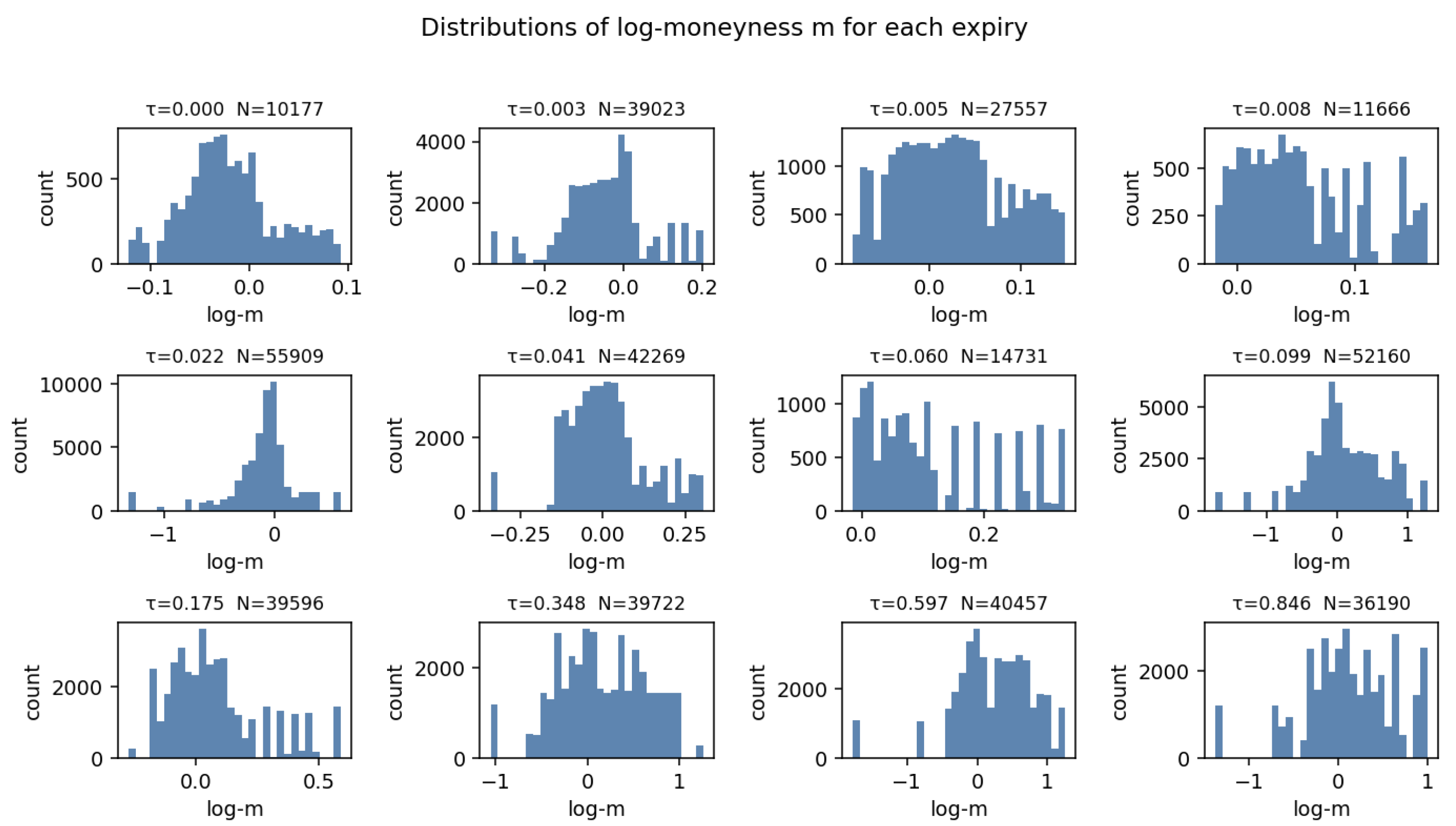

1.4. Overview of Dataset Used

2. Background

2.1. Market Models

2.2. Static Arbitrage

1. Monotonicity in maturity. Whenever two call prices share the same moneyness m but have different maturities, the call price at the longer maturity must be at least the call price at the shorter maturity.

2. Monotonicity in moneyness. At any fixed maturity, the call prices at any two ordered moneyness values must be monotonically ordered. These conditions are derived from the absence of ’calendar spread’ arbitrage formalised by Carr and Madan (2005) and further reviewed by Gatheral (2011).

3. Convexity in moneyness. Also at a fixed maturity, for any three consecutive moneyness points, convexity requires that the slope of the call price between the first two points does not exceed the slope between the last two (see e.g. Breeden and Litzenberger 1978 and Roper and Rutkowski 2009).

4. Nonnegativity. For every node of the grid, the call price must be nonnegative.
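The four conditions above can be checked mechanically on a discrete grid. The following is a minimal sketch and not the authors' implementation. It assumes normalised call prices `c[i][j]` on increasing maturities `tau[i]` and increasing moneyness values `m[j]`, with moneyness defined as strike over forward so that prices are non-increasing in moneyness. The function name `count_static_violations`, the tolerance `tol`, and the toy numbers are hypothetical.

```python
from typing import Dict, List


def count_static_violations(
    tau: List[float], m: List[float], c: List[List[float]], tol: float = 1e-12
) -> Dict[str, int]:
    """Count violations of the four static conditions on a (tau, m) grid.

    Assumes tau and m are strictly increasing and c[i][j] is the call
    price at maturity tau[i] and moneyness m[j] (moneyness = strike / forward).
    """
    n_t, n_m = len(tau), len(m)
    counts = {"maturity": 0, "moneyness": 0, "convexity": 0, "nonnegativity": 0}
    for i in range(n_t):
        for j in range(n_m):
            # Nonnegativity at every node.
            if c[i][j] < -tol:
                counts["nonnegativity"] += 1
            # Maturity: the longer-dated call is worth at least the shorter-dated one.
            if i + 1 < n_t and c[i + 1][j] < c[i][j] - tol:
                counts["maturity"] += 1
            # Moneyness: prices must not increase as moneyness increases.
            if j + 1 < n_m and c[i][j + 1] > c[i][j] + tol:
                counts["moneyness"] += 1
            # Convexity: the left slope must not exceed the right slope.
            if 0 < j < n_m - 1:
                left = (c[i][j] - c[i][j - 1]) / (m[j] - m[j - 1])
                right = (c[i][j + 1] - c[i][j]) / (m[j + 1] - m[j])
                if left > right + tol:
                    counts["convexity"] += 1
    return counts


# Toy grid: two maturities (in years) and three moneyness points.
tau = [1 / 365, 7 / 365]
m = [0.9, 1.0, 1.1]
clean = [[0.10, 0.02, 0.00], [0.11, 0.04, 0.01]]
broken = [[0.10, 0.02, 0.00], [0.11, 0.01, 0.03]]
print(count_static_violations(tau, m, clean))
print(count_static_violations(tau, m, broken))
```

On the toy data the first surface passes every check, while the second breaks both monotonicity conditions at the longer maturity. Counting violations per condition, rather than returning a single flag, makes it easier to see which constraint a preprocessing step has disturbed.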

2.3. Dynamic Arbitrage

2.4. Neural Networks in Options Pricing & Modelling

2.4.1. Dynamic Hedging via Deep Learning

2.4.2. Generative Models

2.4.3. Neural SDEs

2.4.4. Physics Informed Neural Networks (PINNs) for Options Pricing

2.5. Choice of Modelling Approach

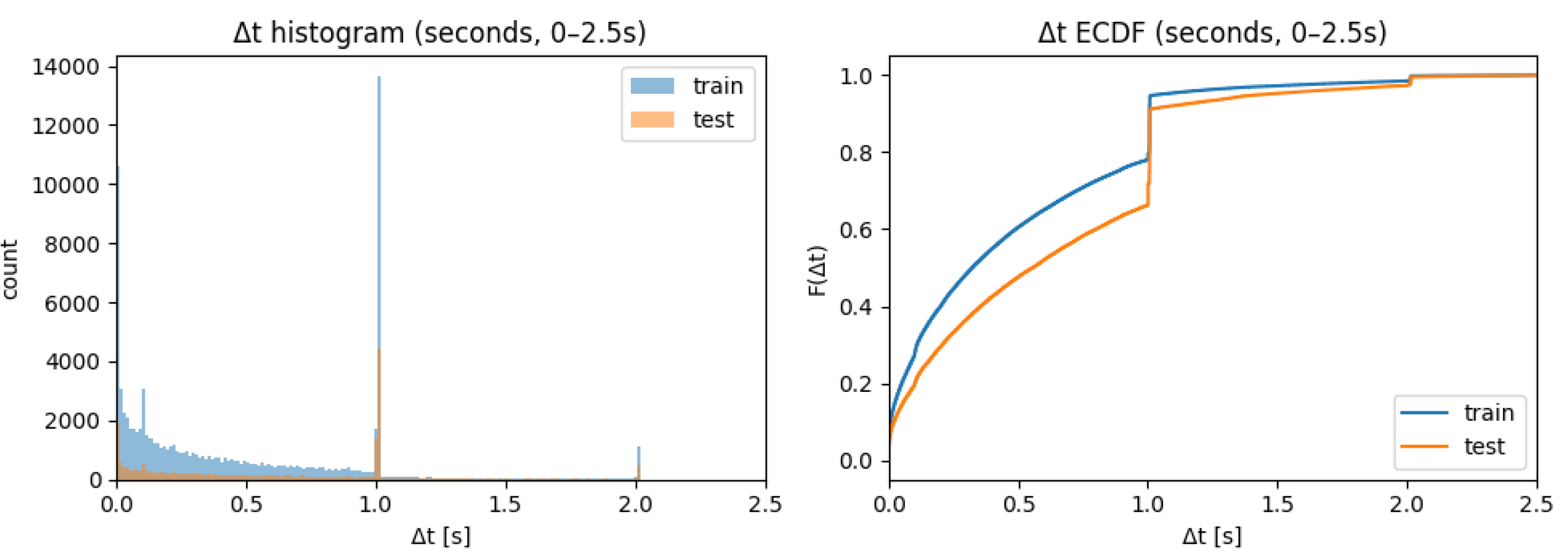

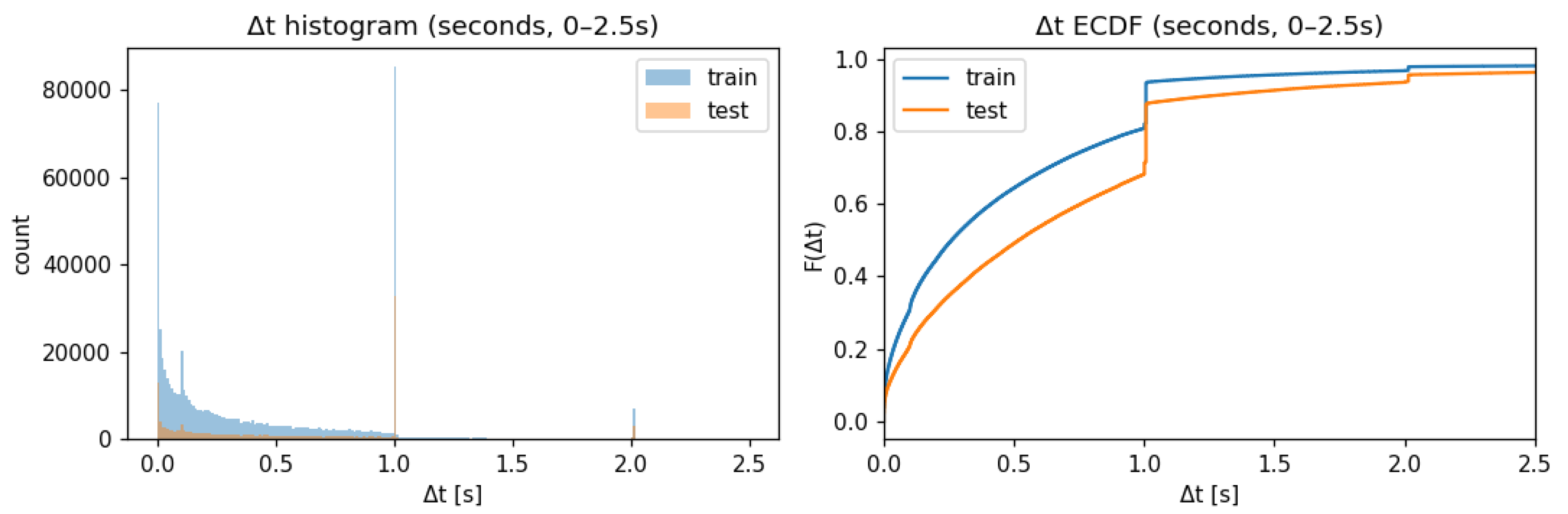

3. Data Preprocessing

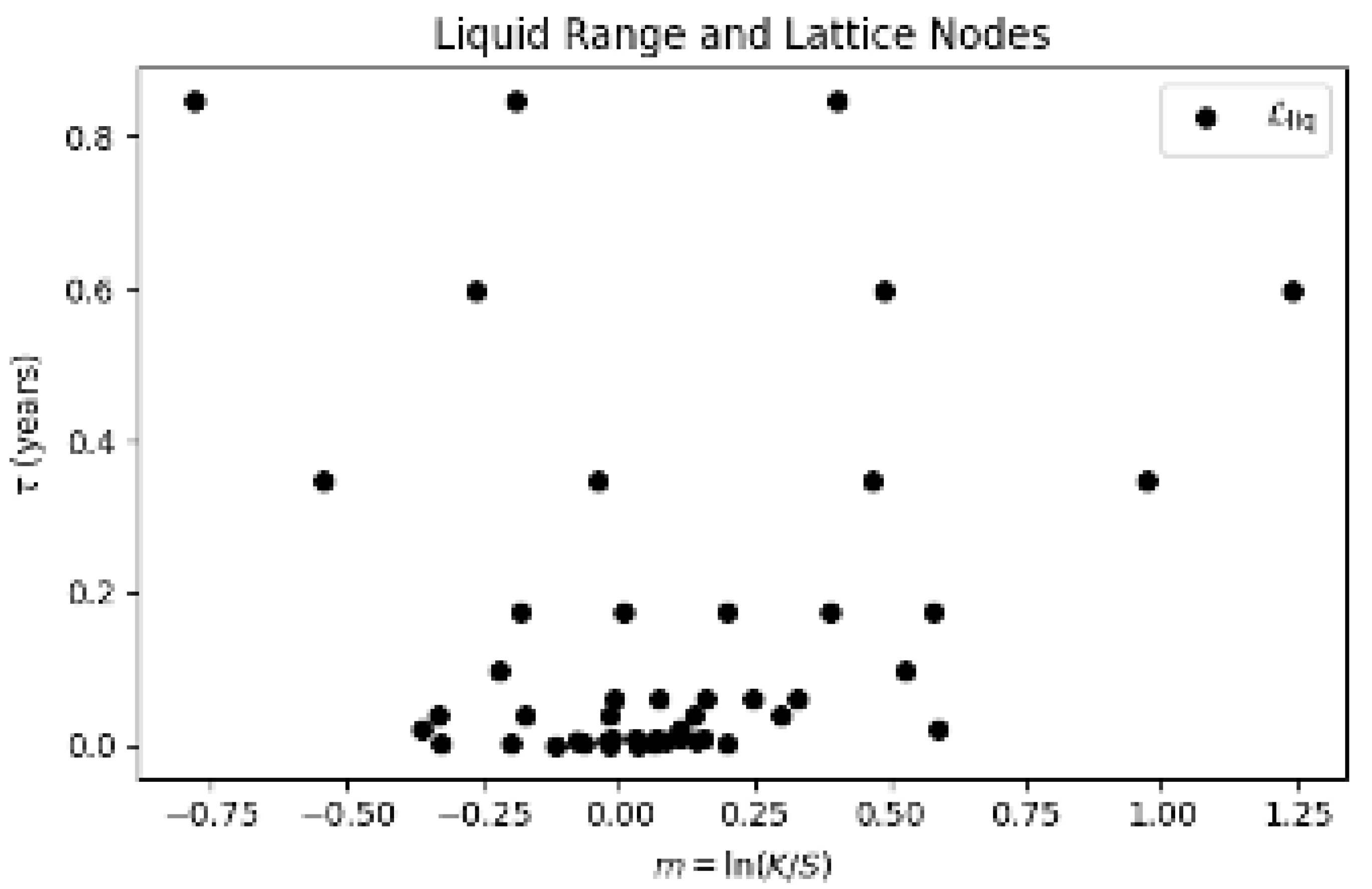

3.1. Static Grid for Options

3.1.1. Constructing a Static Grid at High-Frequency

Algorithm 1: Static Lattice Construction

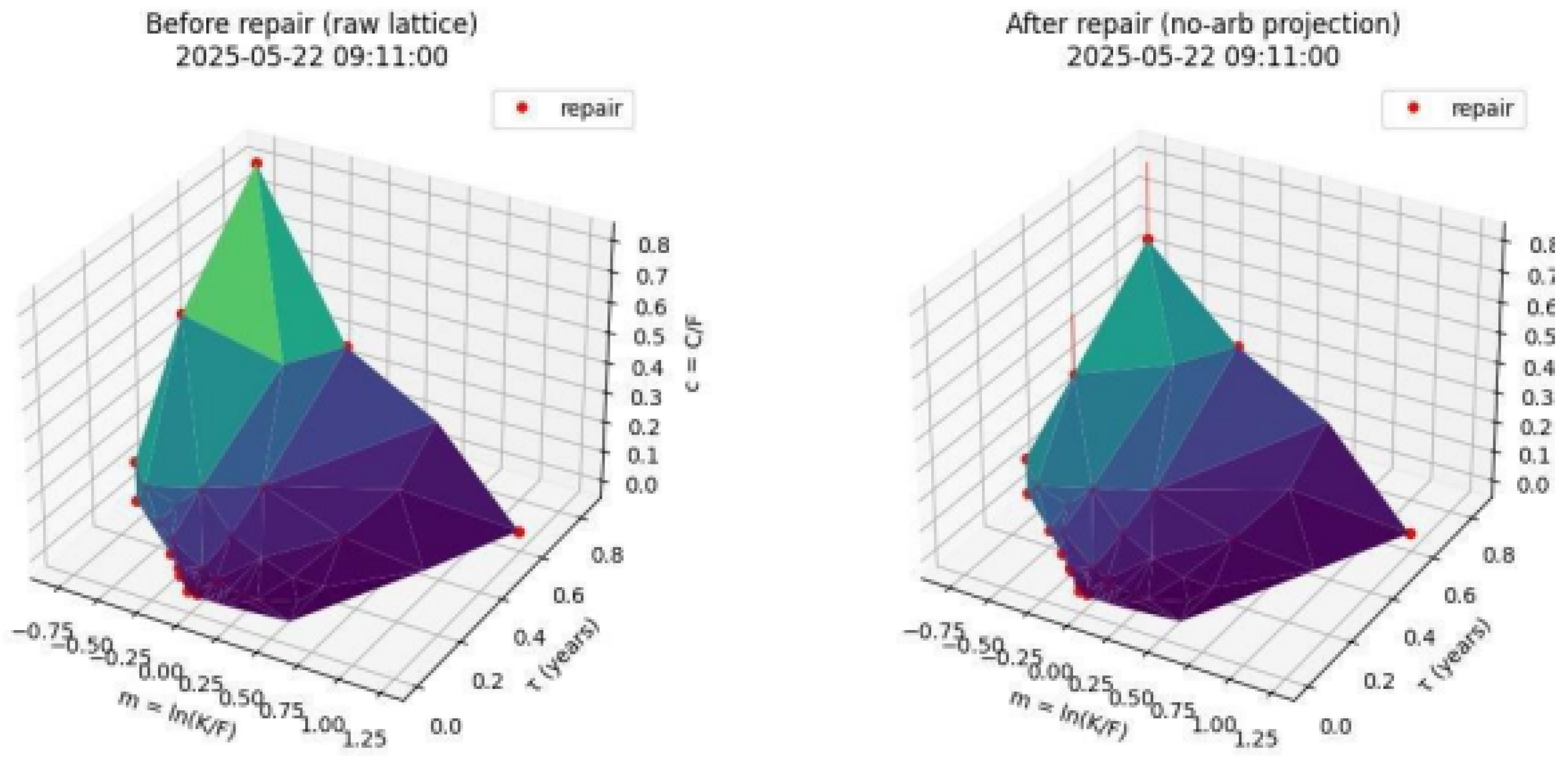

3.2. Arbitrage Repair of Historical Option Prices

1. Using the mid-price to construct our model. In our data, as in market microstructure generally, we do not see a single price for any asset but rather two quotes: the bid, which is the highest price a buyer is willing to pay, and the ask, which is the lowest price a seller is willing to accept. The information contained in the spread between them relates to the liquidity of the option and is captured in the lattice creation, via volume weighting, and in the arbitrage cleaning process. The existence of an arbitrage-free surface within the bid-ask spread is discussed in Appendix A.

2. Data artefacts of our lattice creation method. The lattice of pairs created using Algorithm 1 is not guaranteed to satisfy our static arbitrage constraints. Even if the mid-prices formed a surface free of static arbitrage, we have interpolated these prices to common nodes, which could break the convexity and/or monotonicity conditions.

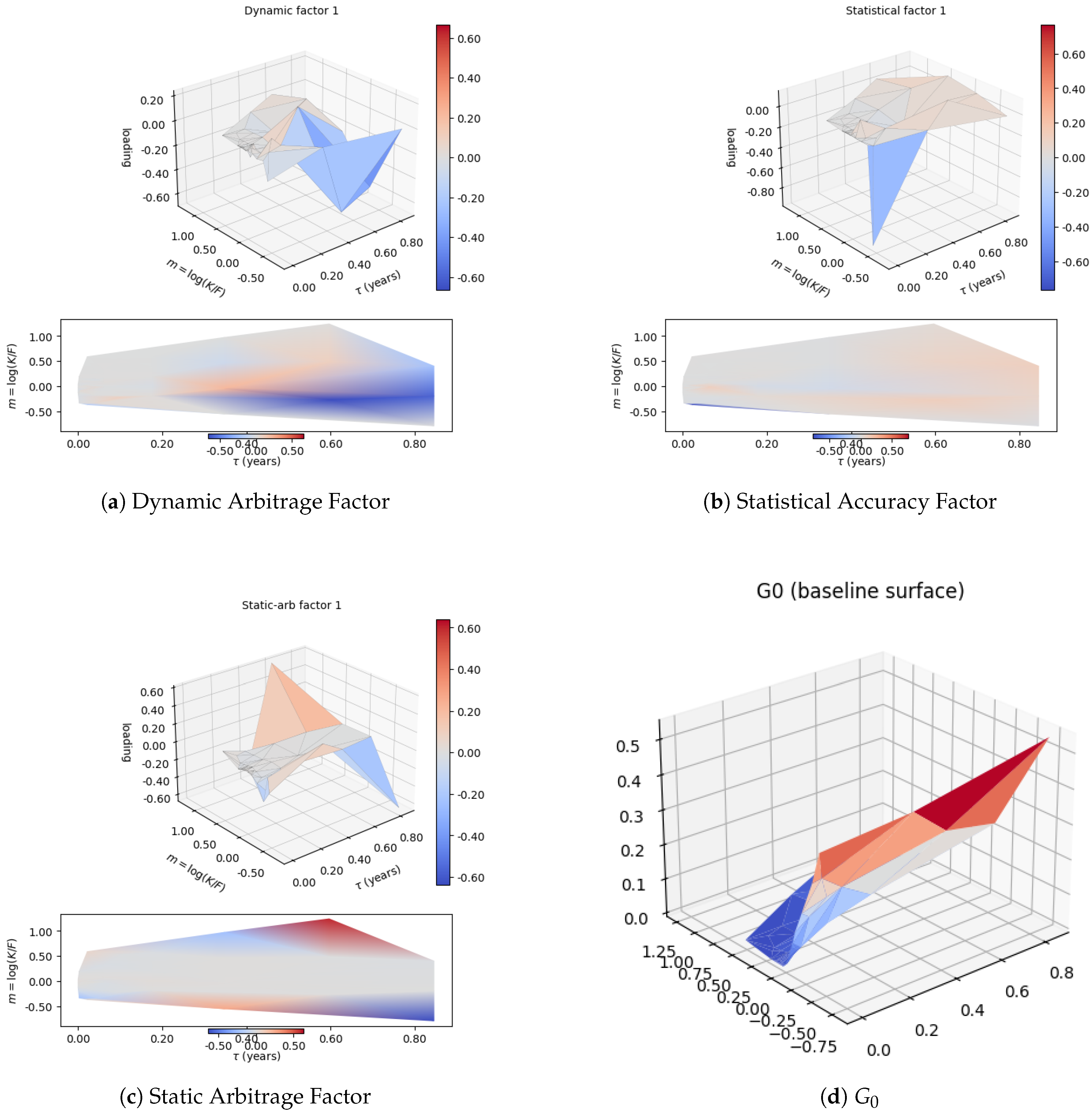

3.3. Linear Factor Representation

1. Minimal Dynamic Arbitrage. The first loading vector is chosen to align with the leading principal component of the empirical z-factor, i.e., the deterministic drift operator applied to the reconstructed surface (see Appendix B). This ensures the dominant direction of surface evolution is representable by the factor model, thereby minimising violation of the HJM-type drift restriction.

2. Statistical Accuracy. The second loading vector is the leading principal component of the residual price variation after projecting out the first loading vector. This captures the direction of maximum remaining variance, ensuring accurate reconstruction.

3. Minimal Static Arbitrage. The third loading vector is chosen from the residual subspace to minimise the static arbitrage violations (measured via the constraint matrix A) in the reconstructed surfaces. A hinge-penalty objective penalises violations of the static constraints during the optimisation of this direction.
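To make the hinge penalty in the third step concrete, here is a small sketch. It assumes that the static constraints are written as linear inequalities A c ≥ b on the vector c of reconstructed prices, and that a surface is reconstructed as a mean surface plus a linear combination of loading vectors. The sign convention, the helper names `reconstruct` and `hinge_penalty`, and the toy numbers are illustrative assumptions rather than details taken from the paper.

```python
from typing import List, Sequence


def reconstruct(
    mean: Sequence[float], loadings: Sequence[Sequence[float]], factors: Sequence[float]
) -> List[float]:
    """Rebuild a price vector as mean + sum_k factors[k] * loadings[k] (assumed form)."""
    return [
        mean[n] + sum(f * g[n] for f, g in zip(factors, loadings))
        for n in range(len(mean))
    ]


def hinge_penalty(
    A: Sequence[Sequence[float]], b: Sequence[float], c: Sequence[float]
) -> float:
    """Sum of max(0, b_k - (A c)_k): zero exactly when A c >= b holds row by row."""
    total = 0.0
    for row, b_k in zip(A, b):
        slack = sum(a * x for a, x in zip(row, c)) - b_k
        total += max(0.0, -slack)
    return total


# Toy constraints on two nodes: c0 >= 0, c1 >= 0 and c0 - c1 >= 0.
A = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
b = [0.0, 0.0, 0.0]
mean = [0.05, 0.02]
candidate = [-1.0, 1.0]  # hypothetical loading direction

for xi in (0.0, 0.02):
    surface = reconstruct(mean, [candidate], [xi])
    print(xi, hinge_penalty(A, b, surface))
```

In this toy case the penalty is zero while the factor stays at zero and becomes positive once the candidate direction pushes the second price above the first. A direction search of the kind described above would average such a penalty over all historical factor values and prefer the candidate with the smallest total.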

4. Neural SDE Modelling

4.1. Stochastic Dynamics of Market Factors

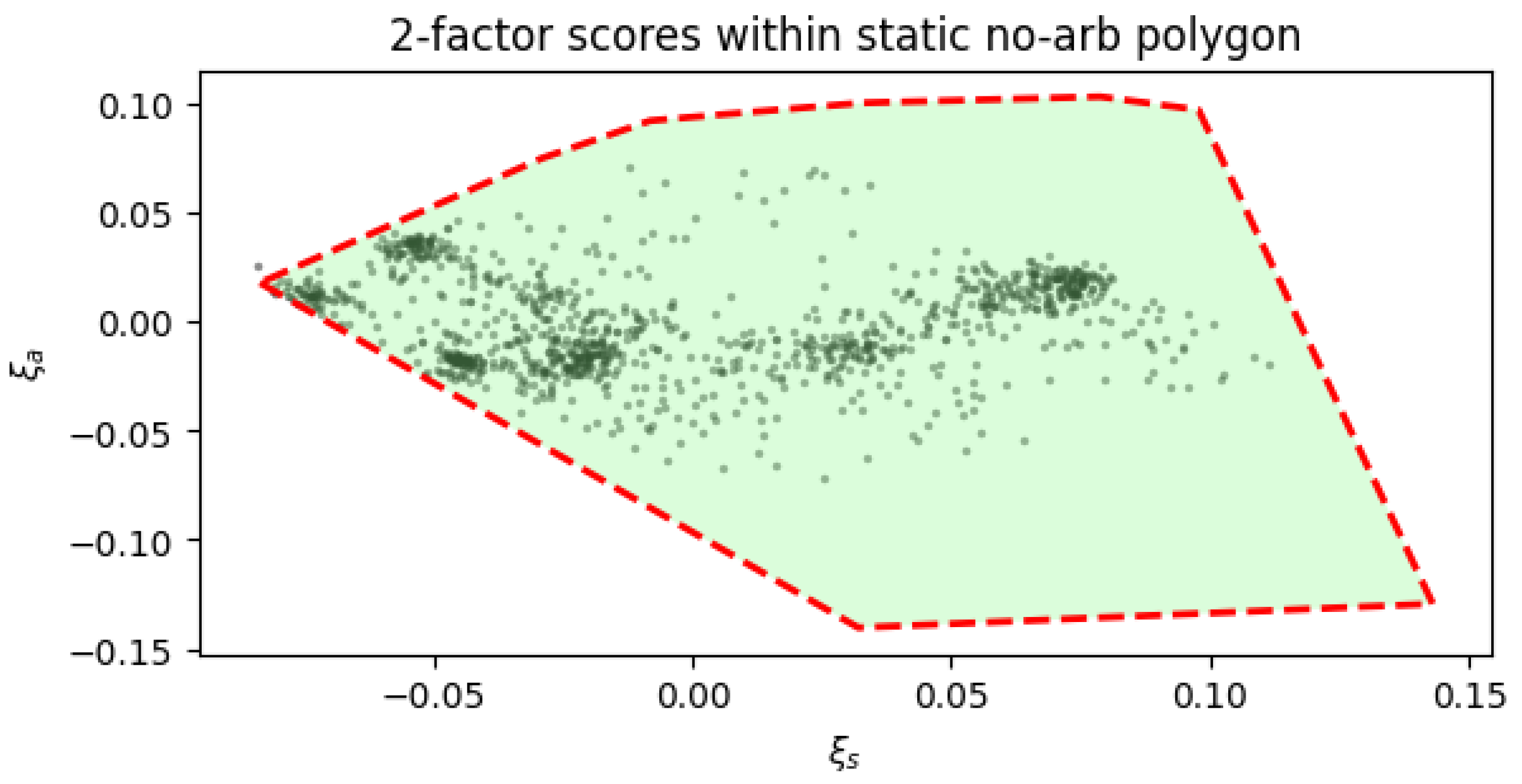

4.2. Arbitrage Free Factor Representation

4.3. Constrained Networks and No-Static-Arbitrage Guarantees

4.4. Training Results

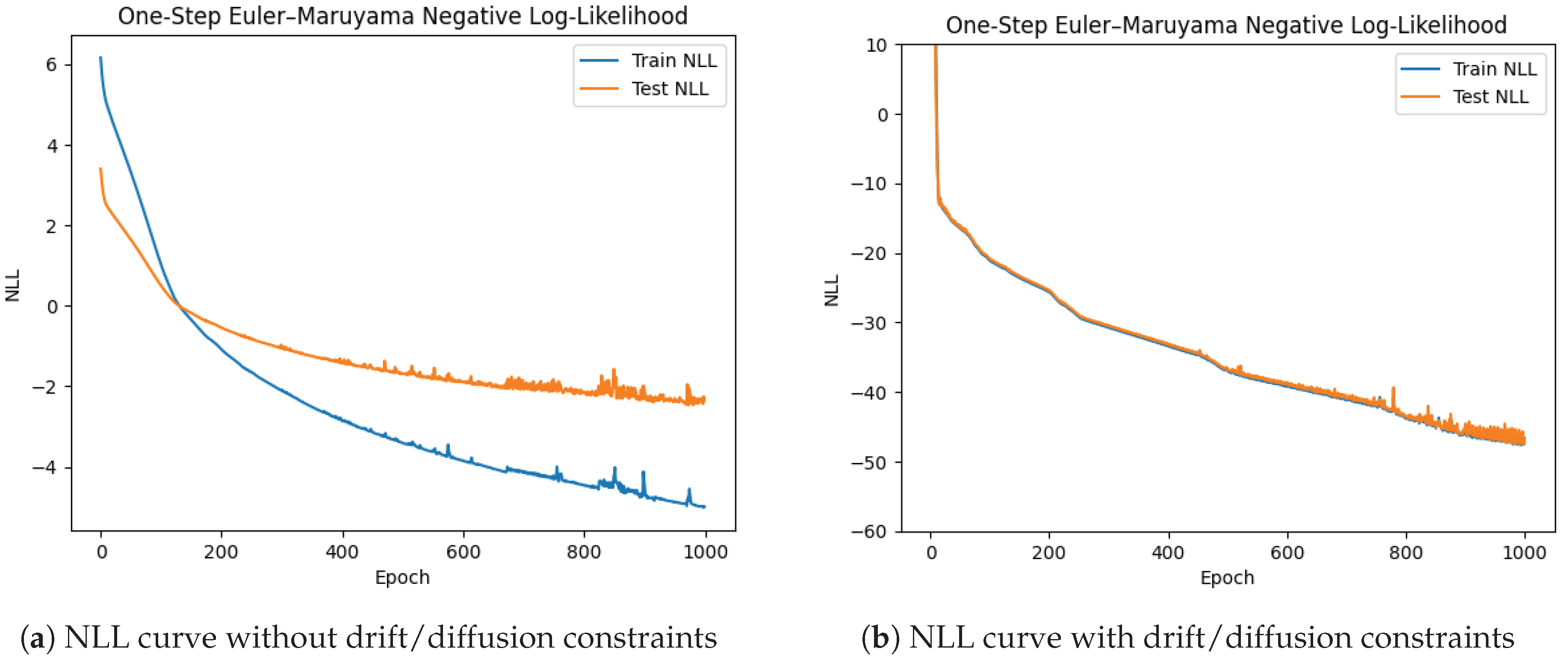

4.4.1. Loss Curve

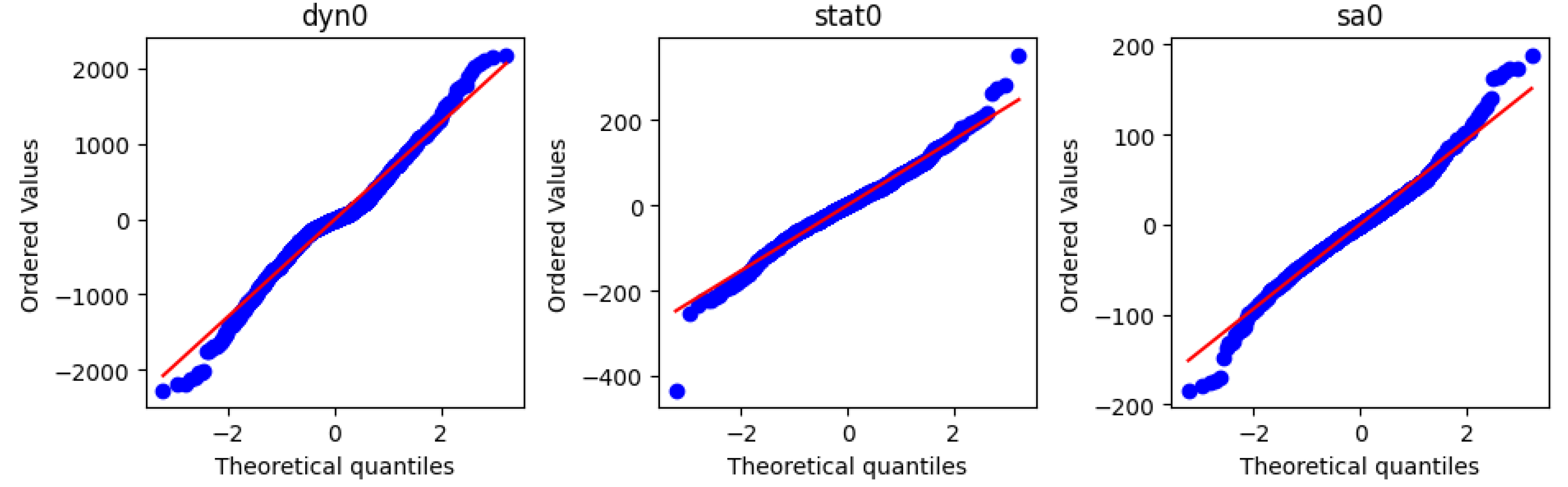

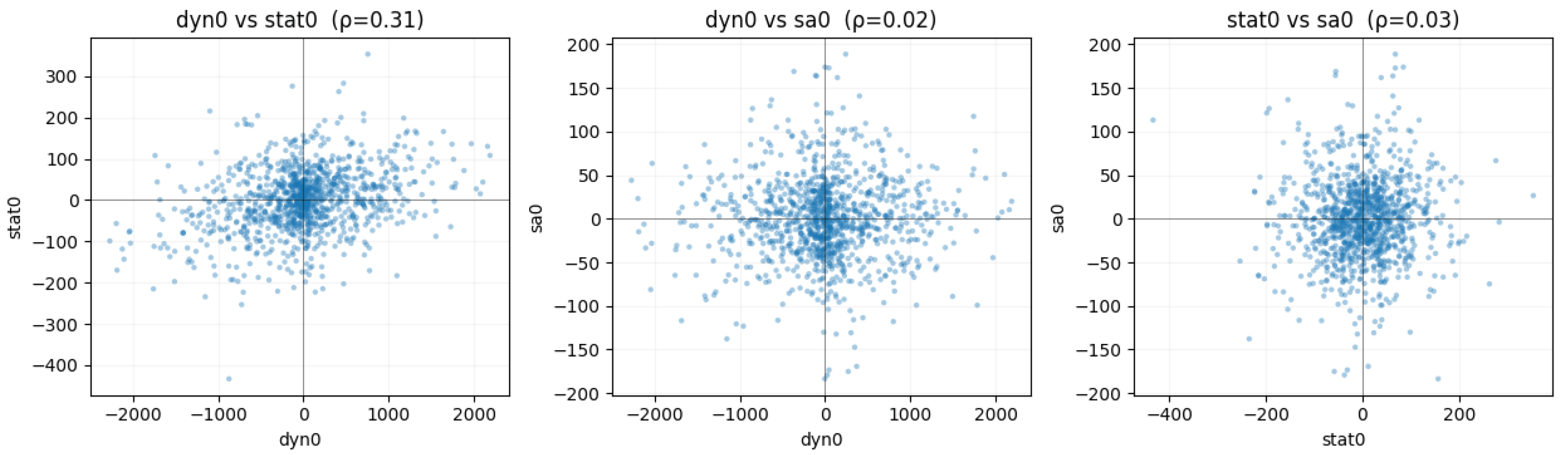

4.4.2. Residuals Plots

5. Applications

5.1. Market Simulation and VaR

5.1.1. Market Simulation Methodology

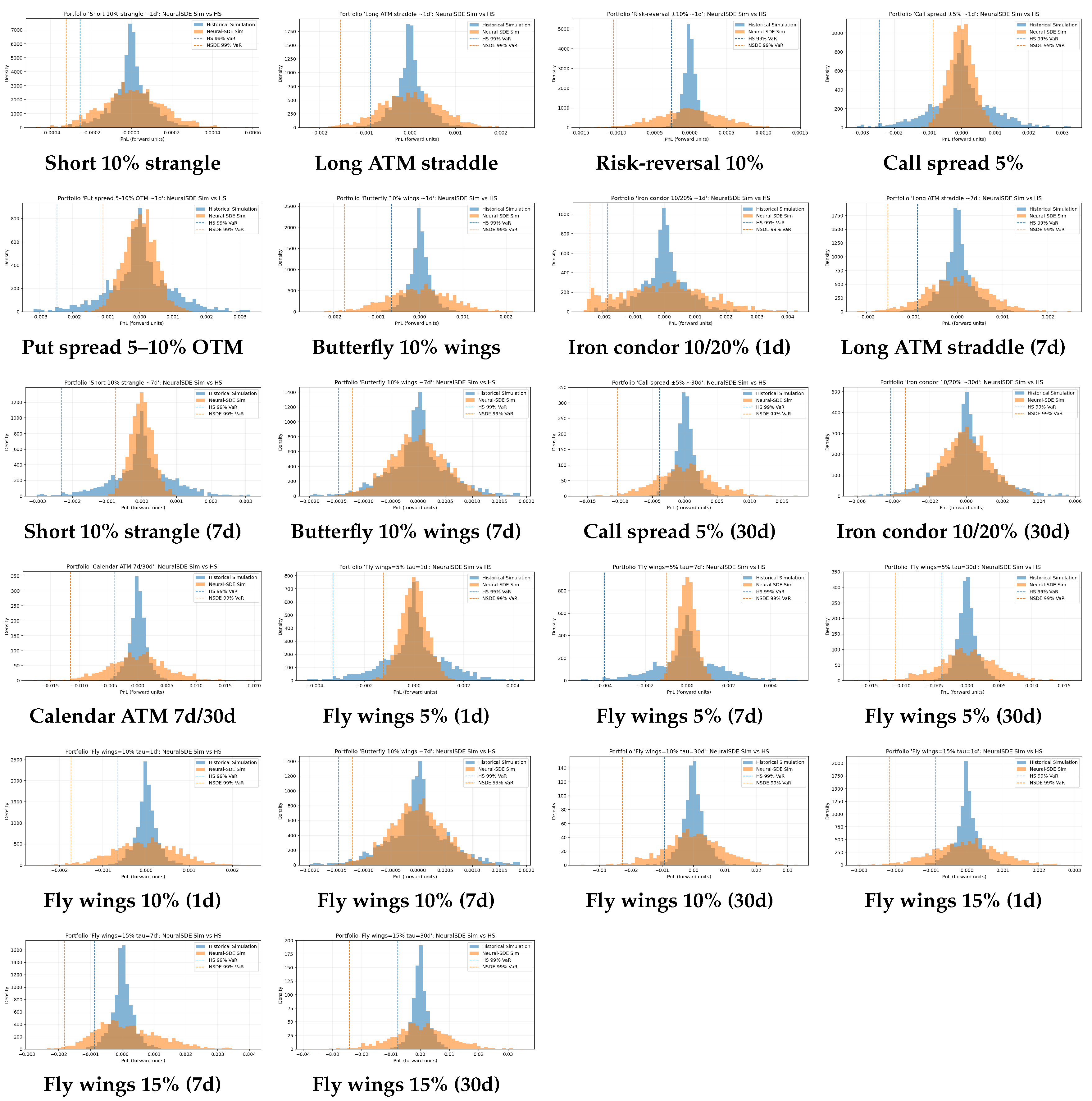

5.1.2. VaR of Option Portfolios

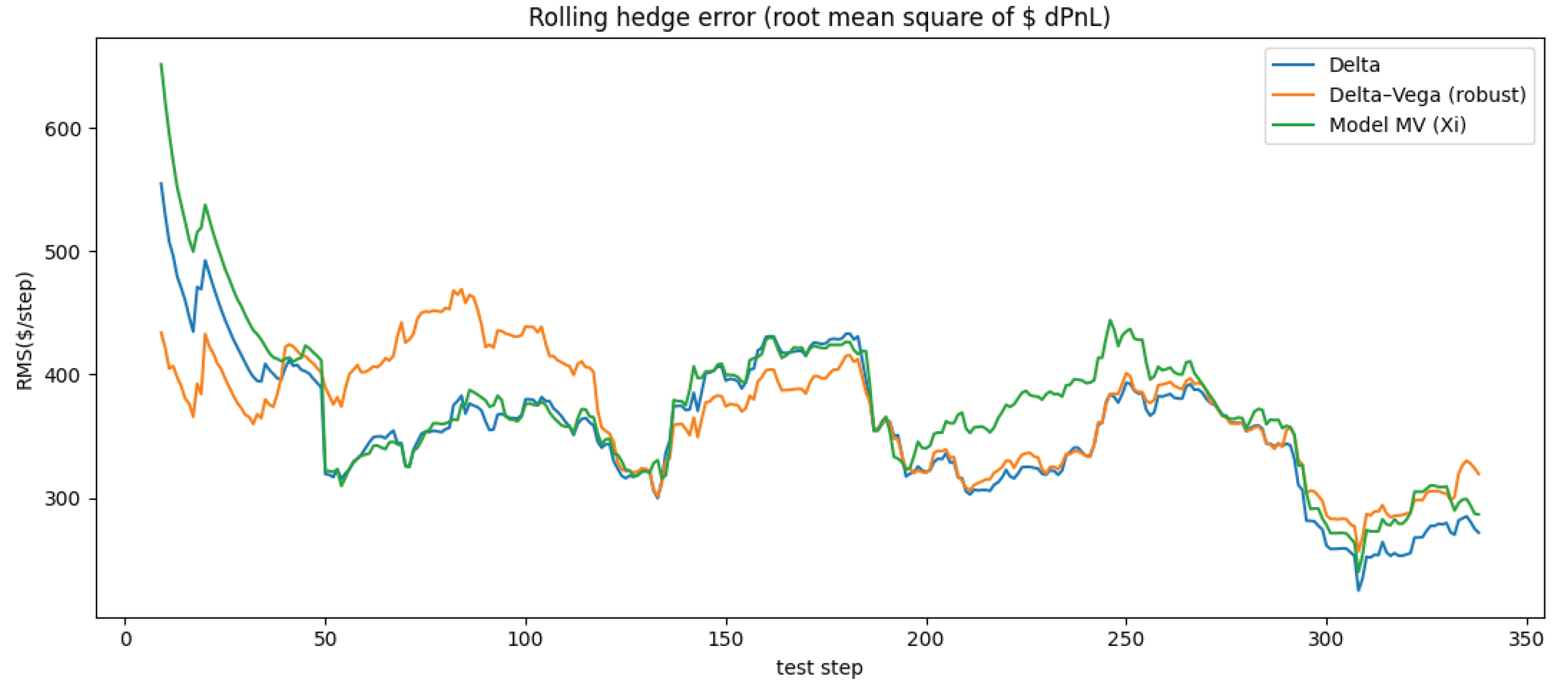

5.2. Hedging of Option Portfolios

5.2.1. Minimum Variance Hedging

5.2.2. Hedging an Example Portfolio (A 30-Day ATM Option)

6. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A. On the Existence of an Arbitrage Free Surface Between the Bid and Ask Spread

Appendix B. Drift Restrictions, Dynamic Arbitrage and the ’z’ Factor

Appendix B.1. Drift of the Option Surface Under the Physical Measure

Appendix B.2. The Infinitesimal Drift Operator and the z-Factor

Appendix B.3. Dynamic No-Arbitrage Under the Basis Expansion

Appendix B.4. Principal Component Analysis of the z-Factor

Appendix B.5. Minimising Dynamic Arbitrage

Appendix B.6. Empirical Computation of the ’z’ Factor

Appendix C. Training at Ultra High Frequency Intervals

Appendix D. Alternative Likelihoods for Training

Appendix E. Neural Network Architecture

References

- Ahmed, Mohamed Shaker, Ahmed A. El-Masry, Aktham I. Al-Maghyereh, and Satish Kumar. 2024. Cryptocurrency volatility: A review, synthesis, and research agenda. Research in International Business and Finance 71, 102472.

- Aït-Sahalia, Yacine. 2002. Maximum likelihood estimation of discretely sampled diffusions: A closed-form approximation approach. Econometrica 70, 1: 223–262.

- Aït-Sahalia, Yacine, and Jean Jacod. 2009. Estimating the degree of activity of jumps in high frequency data.

- Aït-Sahalia, Yacine, Per A. Mykland, and Lan Zhang. 2005. How often to sample a continuous-time process in the presence of market microstructure noise. The Review of Financial Studies 18, 2: 351–416.

- Bandi, Federico M., and Jeffrey R. Russell. 2006. Separating microstructure noise from volatility. Journal of Financial Economics 79, 3: 655–692.

- Black, Fischer, and Myron Scholes. 1973. The pricing of options and corporate liabilities. Journal of Political Economy 81, 3: 637–654.

- Breeden, Douglas T., and Robert H. Litzenberger. 1978. Prices of state-contingent claims implicit in option prices. Journal of Business, 621–651.

- Buehler, Hans, Lukas Gonon, Josef Teichmann, and Ben Wood. 2019. Deep hedging. Quantitative Finance 19, 8: 1271–1291.

- Caron, R. J., J. F. McDonald, and C. M. Ponic. 1989. A degenerate extreme point strategy for the classification of linear constraints as redundant or necessary. Journal of Optimization Theory and Applications 62, 2: 225–237.

- Carr, Peter, and Dilip B. Madan. 2005. A note on sufficient conditions for no arbitrage. Finance Research Letters 2, 3: 125–130.

- Cohen, Samuel N., Christoph Reisinger, and Sheng Wang. 2020. Detecting and repairing arbitrage in traded option prices. Applied Mathematical Finance 27, 5: 345–373.

- Cohen, Samuel N., Christoph Reisinger, and Sheng Wang. 2022. Hedging option books using neural-SDE market models.

- Cohen, Samuel N., Christoph Reisinger, and Sheng Wang. 2023a. Arbitrage-free neural-SDE market models. Applied Mathematical Finance 30, 1: 1–46.

- Cohen, Samuel N., Christoph Reisinger, and Sheng Wang. 2023b. Estimating risks of European option books using neural-SDE market models. Journal of Computational Finance 26, 3.

- Cont, Rama, and Milena Vuletić. 2023. Simulation of arbitrage-free implied volatility surfaces. Applied Mathematical Finance 30, 2: 94–121.

- Cuchiero, Christa, Wahid Khosrawi, and Josef Teichmann. 2020. A generative adversarial network approach to calibration of local stochastic volatility models. Risks 8, 4.

- Davis, Mark H. A., and David G. Hobson. 2007. The range of traded option prices. Mathematical Finance 17, 1: 1–14.

- DeLise, Timothy. 2021. Neural Options Pricing. Ph.D. thesis, Université de Montréal.

- Dempster, Arthur P., Nan M. Laird, and Donald B. Rubin. 1977. Maximum likelihood from incomplete data via the EM algorithm. Journal of the Royal Statistical Society: Series B (Methodological) 39, 1: 1–22.

- Derman, Emanuel, and Iraj Kani. 1994. Riding on a smile. Risk 7, 2: 32–39.

- Dhiman, Ashish, and Yibei Hu. 2023. Physics informed neural network for option pricing. arXiv:2312.06711.

- Dridi, Naura, Lucas Drumetz, and Ronan Fablet. 2021. Learning stochastic dynamical systems with neural networks mimicking the Euler-Maruyama scheme. In 2021 29th European Signal Processing Conference (EUSIPCO). IEEE: pp. 1990–1994.

- Dupire, Bruno. 1994. Pricing with a smile. Risk 7, 1: 18–20.

- Durbin, James, and Siem Jan Koopman. 2012. Time series analysis by state space methods. Oxford University Press.

- Friedman, Avner, and Mark A. Pinsky. 1973. Asymptotic stability and spiraling properties for solutions of stochastic equations. Transactions of the American Mathematical Society 186, 331–358.

- Fukasawa, Masaaki. 2011. Asymptotic analysis for stochastic volatility: Martingale expansion. Finance and Stochastics 15, 4: 635–654.

- Gatheral, Jim. 2011. The volatility surface: A practitioner’s guide. John Wiley & Sons.

- Gatheral, Jim, Thibault Jaisson, and Mathieu Rosenbaum. 2022. Volatility is rough. In Commodities. Chapman and Hall/CRC: pp. 659–690.

- Gierjatowicz, Patryk, Marc Sabate Vidales, David Siska, Lukasz Szpruch, and Zan Zuric. 2022. Robust pricing and hedging via neural stochastic differential equations. Journal of Computational Finance 26, 3: 1–32.

- Goodfellow, Ian, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. 2020. Generative adversarial networks. Communications of the ACM 63, 11: 139–144.

- Greff, Klaus, Sjoerd Van Steenkiste, and Jürgen Schmidhuber. 2017. Neural expectation maximization. In Advances in Neural Information Processing Systems 30.

- Hagan, Patrick S., Deep Kumar, Andrew S. Lesniewski, and Diana E. Woodward. 2002. Managing smile risk. In The Best of Wilmott 1. pp. 249–296.

- Heath, David, Robert Jarrow, and Andrew Morton. 1992. Bond pricing and the term structure of interest rates: A new methodology for contingent claims valuation. Econometrica: Journal of the Econometric Society, 77–105.

- Heston, Steven L. 1993. A closed-form solution for options with stochastic volatility with applications to bond and currency options. The Review of Financial Studies 6, 2: 327–343.

- Hutchinson, James M., Andrew W. Lo, and Tomaso Poggio. 1994. A nonparametric approach to pricing and hedging derivative securities via learning networks. The Journal of Finance 49, 3: 851–889.

- Jia, Junteng, and Austin R. Benson. 2019. Neural jump stochastic differential equations. In Advances in Neural Information Processing Systems 32.

- Kolm, Petter N., and Gordon Ritter. 2019. Dynamic replication and hedging: A reinforcement learning approach. The Journal of Financial Data Science 1, 1: 159–171.

- Lee, Suzanne S., and Per A. Mykland. 2008. Jumps in financial markets: A new nonparametric test and jump dynamics. The Review of Financial Studies 21, 6: 2535–2563.

- Fan, Lei, and Justin Sirignano. 2024. Machine learning methods for pricing financial derivatives. In Computational Finance.

- Lyons, T. J. 1995. Uncertain volatility and the risk-free synthesis of derivatives. Applied Mathematical Finance 2, 2: 117–133.

- Phytides, Alexio. 2023. No-arbitrage option pricing with neural SDEs. Master’s thesis, University of Cape Town.

- Roper, Michael, and Marek Rutkowski. 2009. On the relationship between the call price surface and the implied volatility surface close to expiry. International Journal of Theoretical and Applied Finance 12, 04: 427–441.

- Schönbucher, Philipp J. 1999. A market model for stochastic implied volatility. SSRN Electronic Journal.

- Sutton, Richard S., and Andrew G. Barto. 1998. Reinforcement learning: An introduction. Cambridge: MIT Press, Volume 1.

- Tanios, R. 2021. Physics informed neural networks in computational finance. Master’s thesis, ETH Zurich.

- Vuletić, Milena, and Rama Cont. 2024. VolGAN: A generative model for arbitrage-free implied volatility surfaces. Applied Mathematical Finance 31, 4: 203–238.

- Wiese, Magnus, Lianjun Bai, Ben Wood, and Hans Buehler. 2019. Deep hedging: Learning to simulate equity option markets. arXiv:1911.01700.

- Wissel, Johannes Stefan. 2008. Arbitrage-free market models for liquid options. Ph.D. thesis, ETH Zurich.

- Yu, Jialin. 2007. Closed-form likelihood approximation and estimation of jump-diffusions with an application to the realignment risk of the Chinese yuan. Journal of Econometrics 141, 2: 1245–1280.

| Short 10% strangle | Long ATM straddle | Risk-reversal 10% | Call spread 5% |
| Put spread 5–10% OTM | Butterfly 10% wings | Iron condor 10/20% (1d) | Long ATM straddle (7d) |
| Short 10% strangle (7d) | Butterfly 10% wings (7d) | Call spread 5% (30d) | Iron condor 10/20% (30d) |
| Calendar ATM 7d/30d | Fly wings 5% (1d) | Fly wings 5% (7d) | Fly wings 5% (30d) |
| Fly wings 10% (1d) | Fly wings 10% (7d) | Fly wings 10% (30d) | Fly wings 15% (1d) |
| Fly wings 15% (7d) | Fly wings 15% (30d) |

| Portfolio | VaR NSDE 99% | VaR HS 99% | VaR NSDE 95% | VaR HS 95% | Description |
|---|---|---|---|---|---|
| Short 10% strangle ∼1d | -0.018899 | -0.003865 | -0.013648 | -0.002575 | Short 10% OTM call and 10% OTM put, ∼1d. |
| Short 10% strangle ∼7d | -0.014940 | -0.003988 | -0.010646 | -0.002645 | Same short strangle with maturity ∼7d. |
| Long ATM straddle ∼1d | -0.014211 | -0.002680 | -0.013370 | -0.001680 | Long ATM call + long ATM put, ∼1d. |
| Risk-reversal ±10% ∼1d | -0.006106 | -0.003822 | -0.006106 | -0.002410 | Long 10% OTM call, short 10% OTM put (directional skew), ∼1d. |
| Put spread 5–10% OTM ∼1d | -0.004825 | -0.002017 | -0.003476 | -0.001376 | Long 10% OTM put, short 5% OTM put; same expiry (debit put spread). |
| Iron condor 10/20% ∼30d | -0.004094 | -0.003429 | -0.003197 | -0.002309 | Short 10%/20% OTM call & put wings (short condor), ∼30d. |
| Butterfly 10% wings ∼7d | -0.003645 | -0.002377 | -0.002677 | -0.001515 | Same as above (ATM long body, OTM short wings). |
| Fly wings 15% (1d) | -0.002920 | -0.004423 | -0.001855 | -0.002849 | Wider ±15% wing butterfly, 1d. |
| Fly wings 10% (30d) | -0.002821 | -0.004889 | -0.002049 | -0.003254 | ±10% wing butterfly, 30d. |
| Fly wings 15% (30d) | -0.002810 | -0.001905 | -0.001703 | -0.001362 | ±15% wing butterfly, 30d. |
| Iron condor 10/20% ∼1d | -0.002717 | -0.001909 | -0.001937 | -0.001197 | Short condor with inner 10% & outer 20% strikes, ∼1d. |
| Fly wings 10% (1d) | -0.002506 | -0.004034 | -0.001470 | -0.002632 | ±10% wing butterfly, 1d. |
| Butterfly 10% wings ∼1d | -0.002506 | -0.004034 | -0.001470 | -0.002632 | Equivalent short-dated butterfly. |
| Fly wings 15% (7d) | -0.002457 | -0.001571 | -0.002000 | -0.001165 | ±15% wing butterfly, 7d. |
| Calendar ATM 7d/30d | -0.001962 | -0.000974 | -0.001381 | -0.000652 | Long 30d ATM, short 7d ATM (calendar spread). |
| Fly wings 5% (1d) | -0.001693 | -0.003198 | -0.001181 | -0.002135 | Tight ±5% wing butterfly, 1d. |
| Call spread ±5% ∼1d | -0.001653 | -0.002181 | -0.001653 | -0.001498 | Long 5% ITM call, short 5% OTM call; ∼1d. |
| Fly wings 5% (7d) | -0.001541 | -0.003935 | -0.001112 | -0.002596 | ±5% wing butterfly, 7d. |
| Call spread ±5% ∼30d | -0.001495 | -0.001580 | -0.001054 | -0.001022 | 5% call spread, 30d. |
| Fly wings 5% (30d) | -0.001460 | -0.001538 | -0.000985 | -0.000962 | Tight ±5% wing butterfly, 30d. |
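As a reading aid for the table, the sketch below shows one common way a VaR figure of this kind can be obtained from a set of profit-and-loss (P&L) scenarios, whether those scenarios come from simulated paths or from historical returns. It is an illustration under stated assumptions, not the procedure used in the paper: it assumes VaR is reported as a signed lower quantile of P&L (which matches the negative entries above), that "HS" denotes historical simulation, and that the 99% and 95% columns correspond to tail probabilities of 0.01 and 0.05. The function name `empirical_var` and the toy scenarios are hypothetical.

```python
import math
from typing import Sequence


def empirical_var(pnl: Sequence[float], tail: float = 0.01) -> float:
    """Lower empirical quantile of P&L at tail probability `tail`.

    Returns the smallest scenario value x such that at least a fraction
    `tail` of the scenarios is less than or equal to x. Losses are negative,
    so the result is typically a negative number.
    """
    if not pnl:
        raise ValueError("pnl must contain at least one scenario")
    if not 0.0 < tail < 1.0:
        raise ValueError("tail must lie strictly between 0 and 1")
    ordered = sorted(pnl)
    # Rounding guards against floating-point noise such as 5.000000000000001.
    k = max(0, math.ceil(round(tail * len(ordered), 9)) - 1)
    return ordered[k]


# Toy scenarios: 100 equally spaced P&L outcomes between -0.050 and 0.049.
scenarios = [i / 1000 for i in range(-50, 50)]
print("VaR 99%:", empirical_var(scenarios, tail=0.01))
print("VaR 95%:", empirical_var(scenarios, tail=0.05))
```

Applying the same quantile function to model-simulated and to historical P&L scenarios gives figures that are comparable column by column, since any difference then comes from the scenarios rather than from the estimator.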

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).