Submitted: 29 April 2026

Posted: 30 April 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Semi-Supervised Object Detection

2.2. Traffic Sign Detection

3. Methods

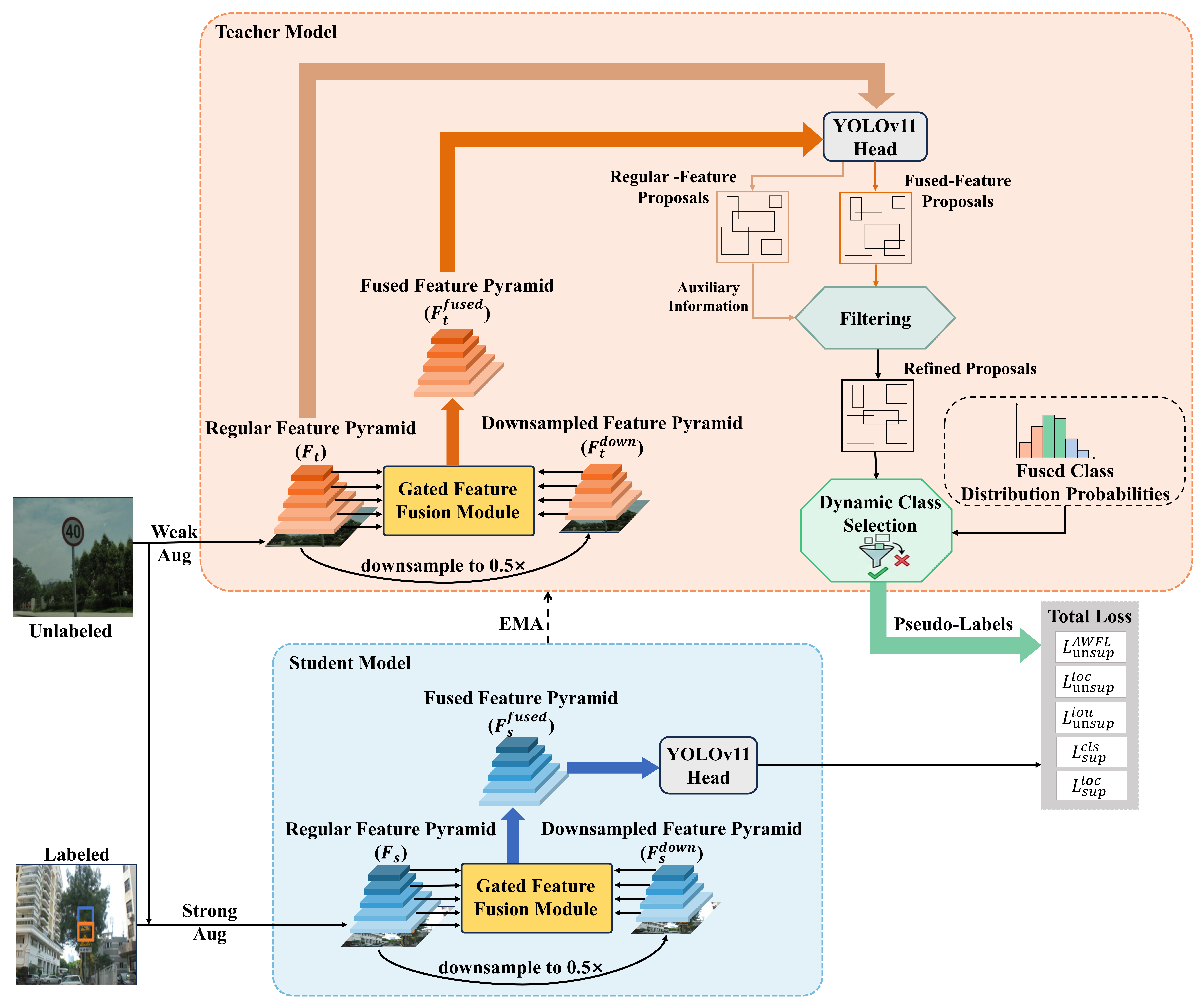

3.1. Overview of Our Method

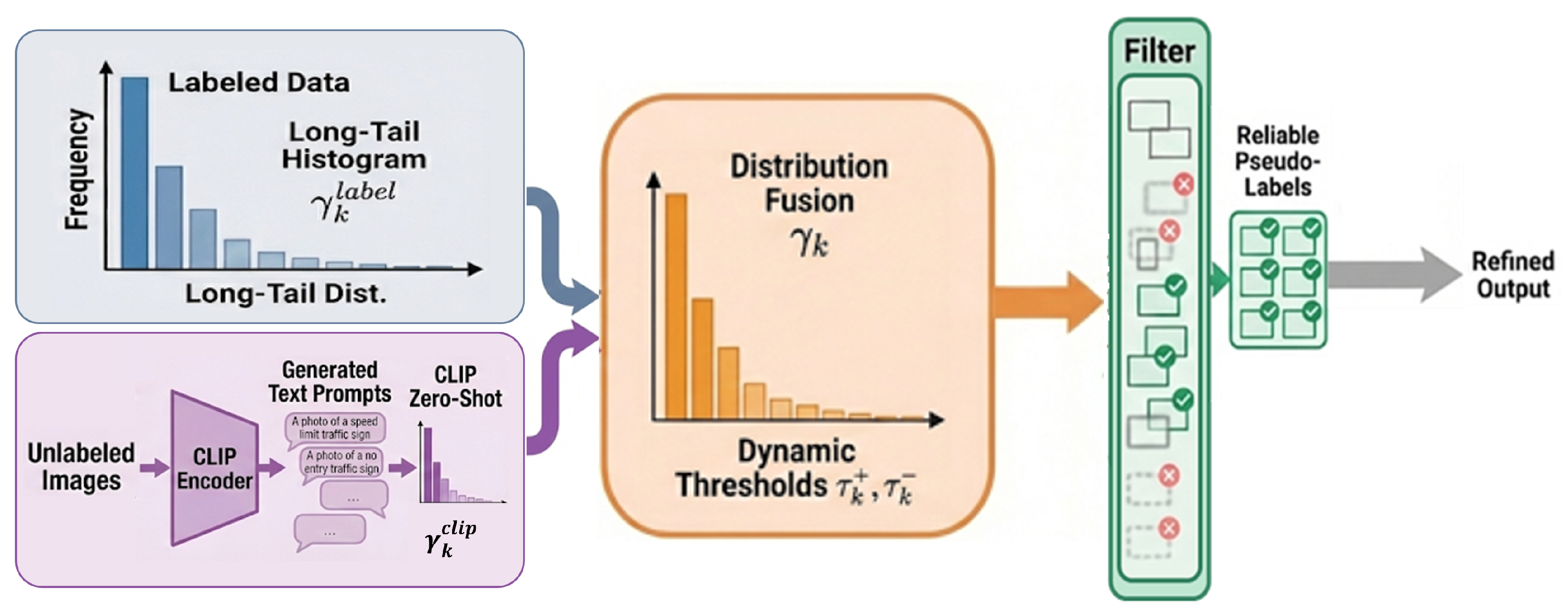

3.2. Class-Distribution-Based Dynamic Pseudo-Label Selection

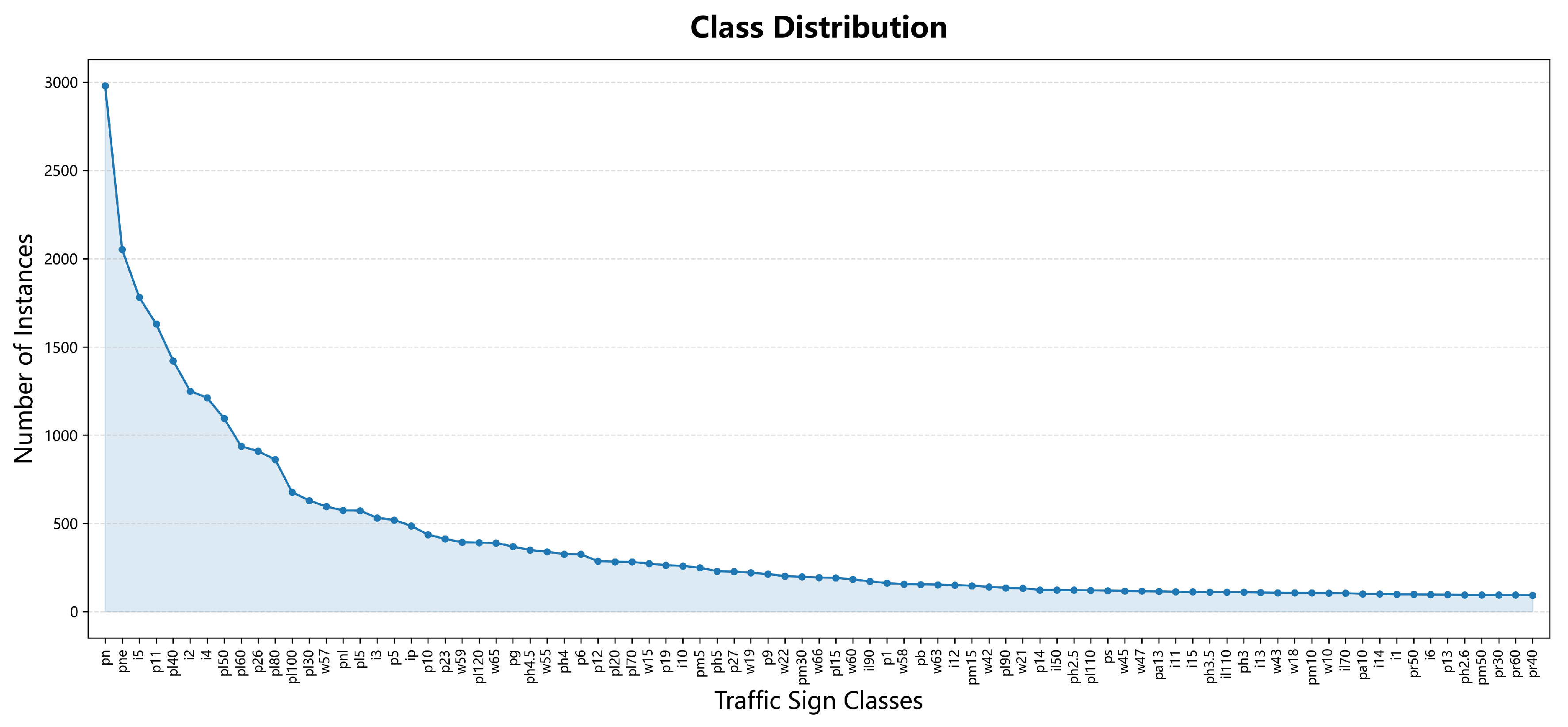

3.2.1. Theoretical Basis and Distribution Estimation

3.2.2. CLIP-Based Class Distribution Estimation

3.2.3. Class-Distribution-Based Threshold Setting

3.2.4. Dynamic Pseudo-Label Selection

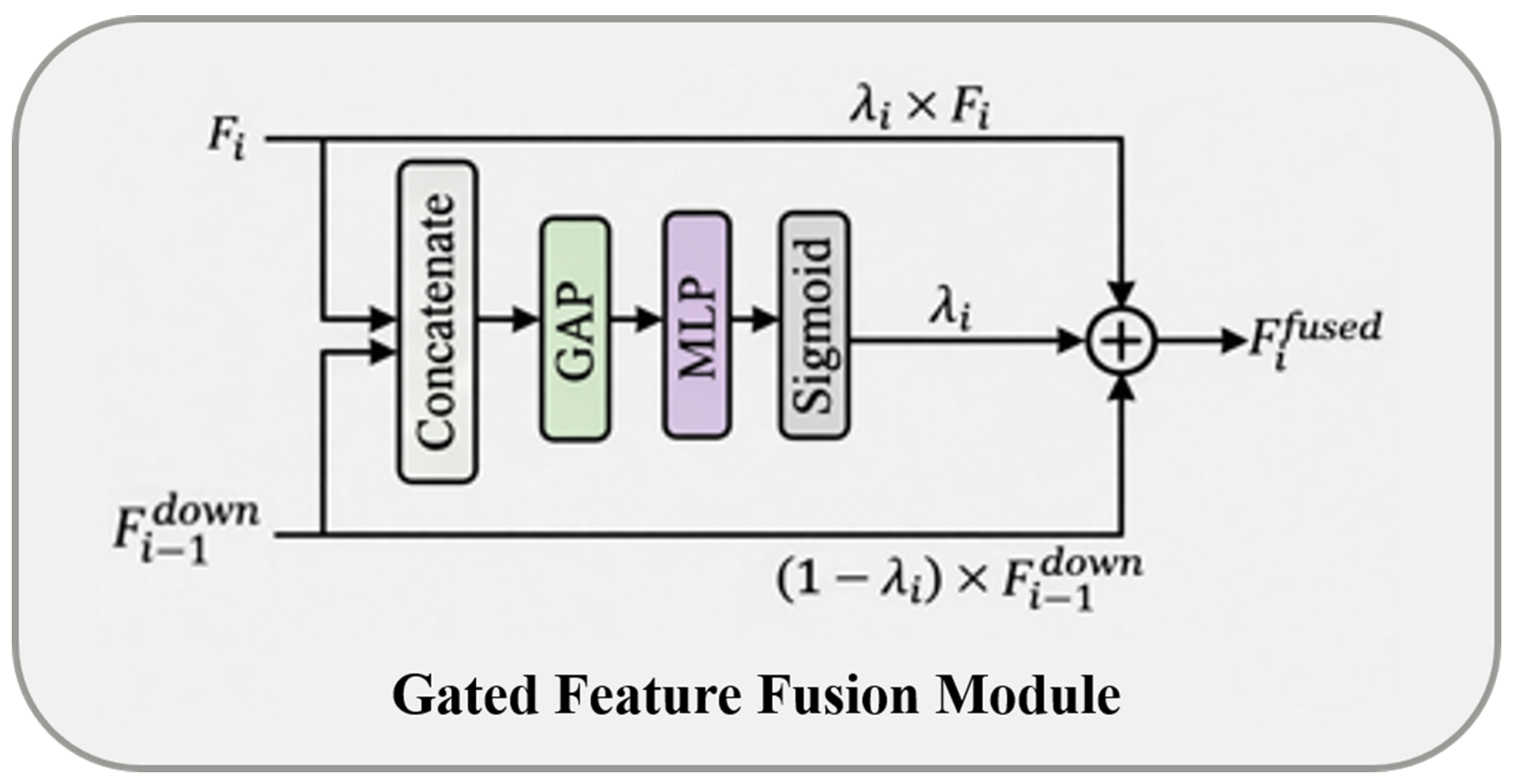

3.3. Gated-Feature-Fusion-Based Candidate Refinement Strategy

3.3.1. Feature Pyramid Construction for Feature Fusion

3.3.2. Candidate Boxes Selection Based on Confidence Gain

3.4. Overall Optimization Objective

4. Experiments

4.1. Evaluation Metrics

4.2. Ablation Study

4.3. Parameter Sensitivity Analysis

4.3.1. Positive- and Negative-Sample Reliability Ratios

4.3.2. Class Distribution Fusion Weight

4.4. Comparison with the State-of-the-Art Methods

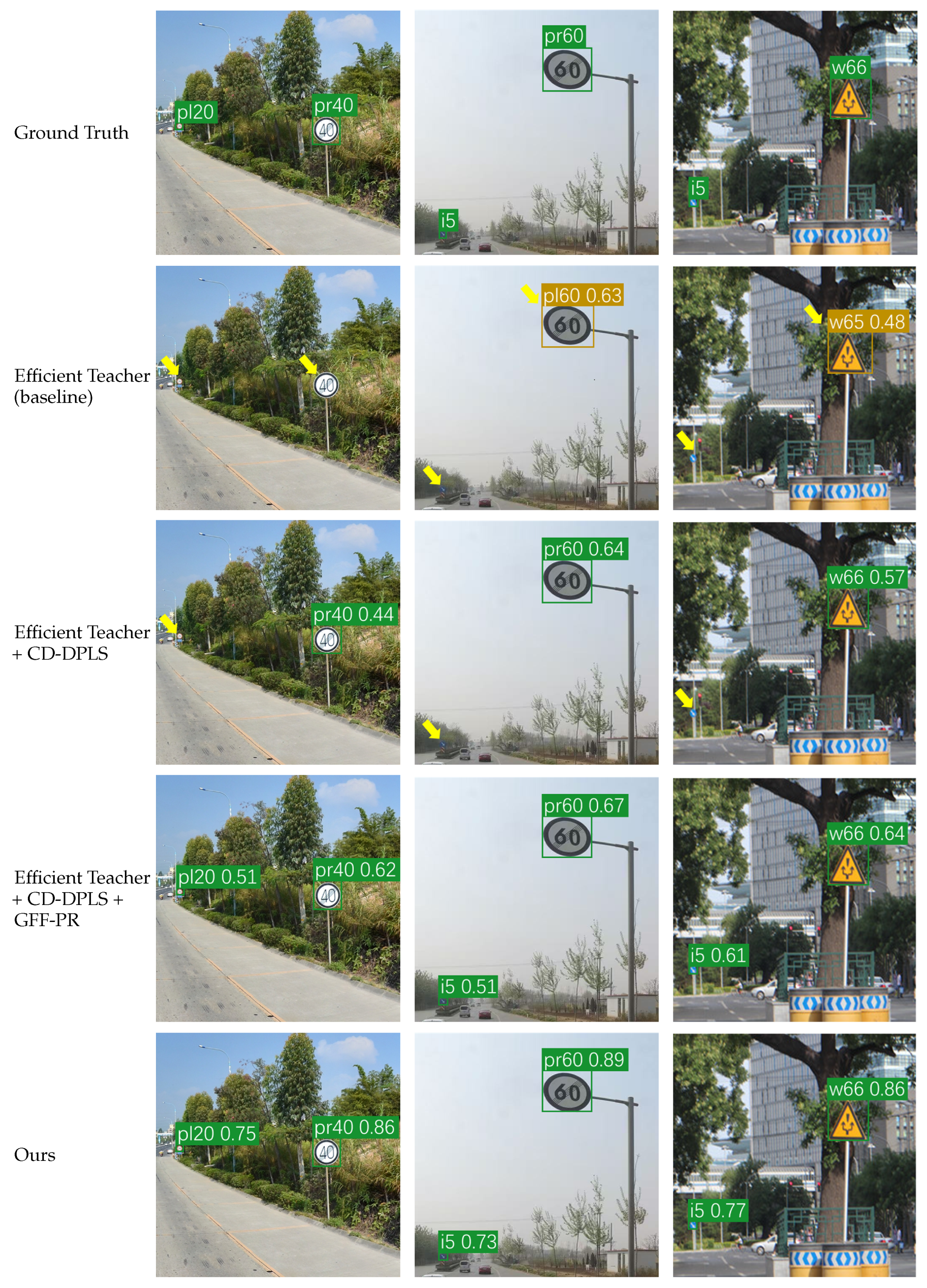

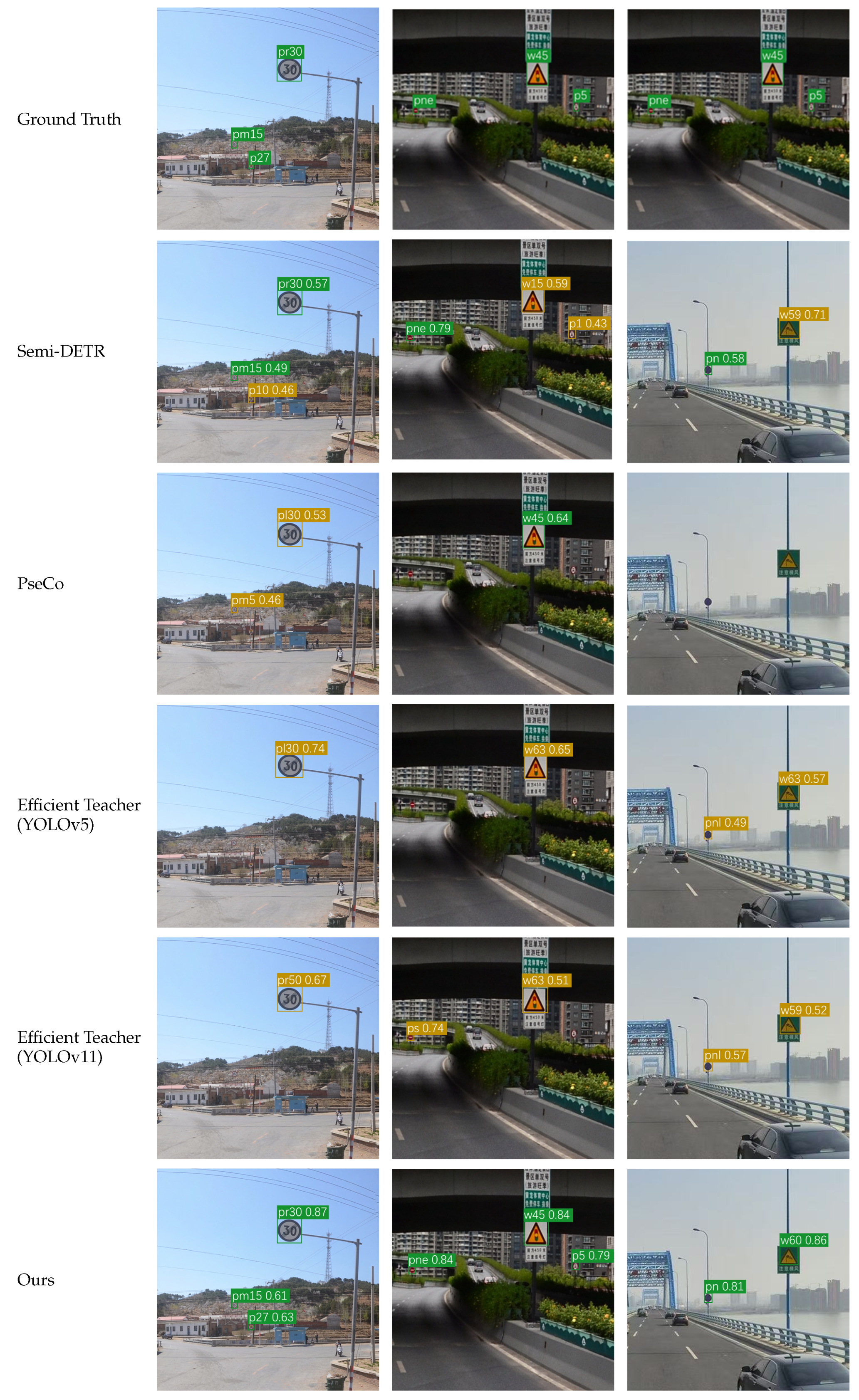

4.5. Visualization

5. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Møgelmose, A.; Trivedi, M.M.; Moeslund, T.B. Vision-based traffic sign detection and analysis for intelligent driver assistance systems: Perspectives and survey. IEEE Trans. Intell. Transp. Syst. 2012, 13, 1484–1497.

2. Sun, H.; Wang, R.; Li, Y.; et al. SET: Spectral enhancement for tiny object detection. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2025, 4713–4723.

3. Sun, H.; Li, Y.; Yang, L.; et al. Uncertainty-aware gradient stabilization for small object detection. Proc. IEEE/CVF Int. Conf. Comput. Vis. (ICCV) 2025, 8407–8417.

4. Wang, C.-Y.; Bochkovskiy, A.; Liao, H.-Y.M. YOLOv7: Trainable bag-of-freebies sets new state-of-the-art for real-time object detectors. arXiv 2022, arXiv:2207.02696.

5. Yang, C.; Zhuang, K.; Chen, M.; et al. Traffic sign interpretation via natural language description. IEEE Trans. Intell. Transp. Syst. 2024, 25, 18939–18953.

6. Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and efficient object detection. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2020, 10781–10790.

7. Yang, W.; Wang, C.; Zhang, T.; et al. SA3Det++: Side-aware quality estimation for semi-supervised 3D object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2025, 47, 10664–10679.

8. Shehzadi, T.; Hashmi, K.A.; Sarode, S.; et al. STEP-DETR: Advancing DETR-based semi-supervised object detection with super teacher and pseudo-label guided text queries. Proc. IEEE/CVF Int. Conf. Comput. Vis. (ICCV) 2025, 3069–3079.

9. Chen, C.; Han, J.; Debattista, K. Virtual category learning: A semi-supervised learning method for dense prediction with extremely limited labels. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 5595–5611.

10. Luo, Y.; Zhu, J.; Li, M.; et al. Smooth neighbors on teacher graphs for semi-supervised learning. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2018, 8896–8905.

11. Yang, X.; Li, P.; Zhou, Q.; et al. Dense information learning based semi-supervised object detection. IEEE Trans. Image Process. 2025, 34, 1022–1035.

12. Zeng, X.; Liu, X.; Xiang, X. Confidence-weighted teacher: Semi-supervised object detection based on confidence correction. Pattern Recognit. Comput. Vis., LNCS 2025, 15043, 1–15.

13. Zhang, B.; Wang, Z.; Du, B. Boosting semi-supervised object detection in remote sensing images with active teaching. IEEE Geosci. Remote Sens. Lett. 2024, 21, 1–5.

14. Huang, T.-K.; Yeh, M.-C. Improving semi-supervised object detection by ROI-enhanced contrastive learning. APSIPA ASC 2024, 1–6.

15. Zhang, R.; Xu, C.; Xu, F.; et al. S3OD: Size-unbiased semi-supervised object detection in aerial images. ISPRS J. Photogramm. Remote Sens. 2025, 221, 179–192.

16. Tran, P.V. SimLTD: Simple supervised and semi-supervised long-tailed object detection. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2025, 4672–4681.

17. Yang, X.; Song, Z.; King, I.; et al. A survey on deep semi-supervised learning. IEEE Trans. Knowl. Data Eng. 2023, 35, 8934–8954.

18. Wang, C.; Xu, C.; Li, X.; et al. Multi-clue consistency learning to bridge gaps between general and oriented objects in semi-supervised detection. Proc. AAAI 2025, 7582–7590.

19. Zhao, T.; Fang, Q.; Shi, S.; et al. Density-guided dense pseudo-label selection for semi-supervised oriented object detection. Proc. IEEE Int. Conf. Image Process. (ICIP) 2024, 1092–1098.

20. Shehzadi, T.; Hashmi, K.A.; Stricker, D.; et al. Sparse Semi-DETR: Sparse learnable queries for semi-supervised object detection. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2024, 5840–5850.

21. Cao, F.; Yan, K.; Chen, H.; et al. SSCD-YOLO: Semi-supervised cross-domain YOLOv8 for pedestrian detection in low-light conditions. IEEE Access 2025, 13, 61225–61236.

22. Chen, S.; Zhang, Z.; Zhang, L.; et al. A semi-supervised learning framework combining CNN and multiscale transformer for traffic sign detection and recognition. IEEE Internet Things J. 2024, 11, 19500–19519.

23. Yang, Y.; Luo, H.; Xu, H.; et al. Towards real-time traffic sign detection and classification. IEEE Trans. Intell. Transp. Syst. 2016, 17, 2022–2031.

24. Yuan, X.; Hao, X.; Chen, H.; et al. Robust traffic sign recognition based on color global and local oriented edge magnitude patterns. IEEE Trans. Intell. Transp. Syst. 2014, 15, 1466–1477.

25. Liu, C.; Chang, F.; Chen, Z.; et al. Fast traffic sign recognition via high-contrast region extraction and extended sparse representation. IEEE Trans. Intell. Transp. Syst. 2016, 17, 79–92.

26. Chen, S.; Zhang, Z.; Ma, H.; et al. A content-adaptive hierarchical deep learning model for detecting arbitrary oriented road surface elements using MLS point clouds. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1–16.

27. Wang, J.; Chen, Y.; Dong, Z.; et al. Improved YOLOv5 network for real-time multi-scale traffic sign detection. Neural Comput. Appl. 2023, 35, 7853–7865.

28. Manzari, O.N.; Boudesh, A.; Shokouhi, S.B. Pyramid transformer for traffic sign detection. Int. Conf. Comput. Knowl. Eng. 2022, 112–116.

29. Vaswani, A.; Shazeer, N.; Parmar, N.; et al. Attention is all you need. Adv. Neural Inf. Process. Syst. (NeurIPS) 2017, 5998–6008.

30. Wang, G.; Zhou, K.; Wang, L.; et al. Context-aware and attention-driven weighted fusion traffic sign detection network. IEEE Access 2023, 11, 42104–42112.

31. Zhang, J.; Xie, Z.; Sun, J.; et al. A cascaded R-CNN with multiscale attention and imbalanced samples for traffic sign detection. IEEE Access 2020, 8, 29742–29754.

32. Sohn, K.; Zhang, Z.; Li, C.-L.; et al. A simple semi-supervised learning framework for object detection. arXiv 2020, arXiv:2005.04757.

33. Liu, Y.-C.; Ma, C.-Y.; He, Z.; et al. Unbiased teacher for semi-supervised object detection. arXiv 2021, arXiv:2102.09480.

34. Tang, Y.; Chen, W.; Luo, Y.; et al. Humble teachers teach better students for semi-supervised object detection. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2021, 3132–3141.

35. Xu, M.; Zhang, Z.; Hu, H.; et al. End-to-end semi-supervised object detection with soft teacher. Proc. IEEE/CVF Int. Conf. Comput. Vis. (ICCV) 2021, 3060–3069.

36. Zhang, J.; Lin, X.; Zhang, W.; et al. Semi-DETR: Semi-supervised object detection with detection transformers. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2023, 23809–23818.

37. Wang, P.; Cai, Z.; Yang, H.; et al. Omni-DETR: Omni-supervised object detection with transformers. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2022, 9367–9376.

38. Li, G.; Li, X.; Wang, Y.; et al. PseCo: Pseudo labeling and consistency training for semi-supervised object detection. arXiv 2022, arXiv:2203.16348.

39. Xu, B.; Chen, M.; Guan, W.; et al. Efficient Teacher: Semi-supervised object detection for YOLOv5. arXiv 2023, arXiv:2302.07577.

40. Liu, Y.-C.; Ma, C.-Y.; Kira, Z. Unbiased Teacher v2: Semi-supervised object detection for anchor-free and anchor-based detectors. Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR) 2022, 9819–9828.

41. Luo, G.; Zhou, Y.; Jin, L.; et al. Towards end-to-end semi-supervised learning for one-stage object detection. arXiv 2023, arXiv:2306.00930.

42. Zhou, H.; Ge, Z.; Liu, S.; et al. Dense Teacher: Dense pseudo-labels for semi-supervised object detection. arXiv 2022, arXiv:2206.07246.

| DC-Fusion | SBR-Neck | SCA-Upsampling | mAP50 | mAP50:95 |
|---|---|---|---|---|
| | | | 23.2% | 12.8% |
| ✓ | | | 27.9% | 17.5% |
| | ✓ | | 27.5% | 17.9% |
| | | ✓ | 25.7% | 19.2% |
| ✓ | ✓ | | 28.8% | 19.8% |
| ✓ | ✓ | ✓ | 32.1% | 20.4% |

| Feature Level | mAP50 | | | | FPS |
|---|---|---|---|---|---|
| | 32.8% | 8.9% | 22.1% | 36.1% | 56 |
| F | 34.1% | 12.4% | 24.5% | 33.5% | 52 |
| | 36.3% | 15.4% | 25.8% | 36.5% | 46 |

| Threshold Strategy | mAP50 (All) | mAP50 (Head) | mAP50 (Tail) | Avg. Initial Candidates | Avg. Pseudo-labels |
|---|---|---|---|---|---|
| 0.9 (Fixed) | 30.5% | 58.2% | 15.4% | 138.6 | 20.5 |
| 0.5 (Fixed) | 33.8% | 52.1% | 20.6% | 162.4 | 45.8 |
| CDA (Dynamic) | 36.3% | 60.5% | 24.8% | 145.2 | 32.4 |
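
The threshold comparison above contrasts fixed confidence cut-offs with a class-dependent one. As a minimal sketch of how a class-dependent cut-off can behave, the Python snippet below maps an estimated class frequency to a per-class threshold. The linear rule, the function names `class_thresholds` and `select_pseudo_labels`, the bounds 0.5 and 0.9 (borrowed from the two fixed settings in the table), and the example class names and frequencies are all assumptions for illustration; this is not the CDA rule itself, which is not reproduced here.

```python
from typing import Dict, List, Tuple

Detection = Tuple[str, float]  # (class name, confidence score)


def class_thresholds(class_freq: Dict[str, float],
                     t_min: float = 0.5,
                     t_max: float = 0.9) -> Dict[str, float]:
    """Hypothetical rule: the most frequent class gets t_max,
    rarer classes get proportionally lower thresholds down toward t_min."""
    top = max(class_freq.values())
    return {c: t_min + (t_max - t_min) * (f / top)
            for c, f in class_freq.items()}


def select_pseudo_labels(dets: List[Detection],
                         thresholds: Dict[str, float]) -> List[Detection]:
    """Keep a detection only if its score reaches its own class threshold."""
    return [d for d in dets if d[1] >= thresholds[d[0]]]


# Made-up class frequencies and teacher detections, for illustration only.
freq = {"speed_limit": 0.60, "yield": 0.30, "rare_warning": 0.10}
th = class_thresholds(freq)
dets = [("speed_limit", 0.85), ("rare_warning", 0.62), ("yield", 0.65)]
kept = select_pseudo_labels(dets, th)
print(th)
print(kept)
```

Under this assumed rule a head class must clear a strict cut-off while a tail class is admitted at a lower score, which is one way a dynamic strategy could land between the two fixed settings in the number of pseudo-labels it keeps.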

| | 0.90 | 0.93 | 0.95 | 0.97 | 0.99 |
|---|---|---|---|---|---|
| 0.80 | 33.8% | 34.2% | 34.5% | 34.2% | 33.9% |
| 0.85 | 34.2% | 34.6% | 34.9% | 34.9% | 34.5% |
| 0.90 | 34.5% | 34.9% | 35.1% | 36.3% | 35.3% |
| 0.95 | 34.3% | 34.7% | 34.8% | 35.2% | 34.9% |
| 1.00 | 33.9% | 34.3% | 34.5% | 34.7% | 34.4% |

| Value | 0.0 | 0.2 | 0.4 | 0.6 | 0.8 | 1.0 |
|---|---|---|---|---|---|---|
| mAP50 | 33.9% | 35.6% | 36.1% | 36.3% | 35.8% | 35.1% |
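
The sweep above varies a fusion weight between 0.0 and 1.0. One common way to fuse two class-distribution estimates with a single weight is a convex combination, sketched below in Python. This is an assumption about the general form only: the function name `fuse_distributions`, the two example distributions, and which estimate each end of the weight range corresponds to are hypothetical and not taken from the paper.

```python
from typing import Dict


def fuse_distributions(p_a: Dict[str, float],
                       p_b: Dict[str, float],
                       weight: float) -> Dict[str, float]:
    """Convex combination weight * p_a + (1 - weight) * p_b, renormalised.

    Assumed form for illustration; weight = 1.0 returns p_a, weight = 0.0 returns p_b.
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must lie in [0, 1]")
    if p_a.keys() != p_b.keys():
        raise ValueError("both distributions must cover the same classes")
    fused = {c: weight * p_a[c] + (1.0 - weight) * p_b[c] for c in p_a}
    total = sum(fused.values())
    return {c: v / total for c, v in fused.items()}


# Two made-up estimates over a head class and a tail class.
estimate_a = {"head": 0.8, "tail": 0.2}
estimate_b = {"head": 0.6, "tail": 0.4}
fused = fuse_distributions(estimate_a, estimate_b, weight=0.6)
print(fused)
```

With a weight of 0.6 the fused head-class mass is 0.6 × 0.8 + 0.4 × 0.6 = 0.72, between the two inputs; the end points 0.0 and 1.0 each fall back to a single estimate, which matches the table's pattern of both extremes scoring below the interior values.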

| Category | Method | 1% | 2% | 5% | 10% | FPS |
|---|---|---|---|---|---|---|
| End-to-end | Omni-DETR [37] | 9.07% | 15.1% | 20.1% | 28.7% | 10.7 |
| End-to-end | Semi-DETR [36] | 9.72% | 16.2% | 21.5% | 29.4% | 9.1 |
| Two-stage | STAC [32] | 5.54% | 7.36% | 12.9% | 21.2% | 14.4 |
| Two-stage | Unbiased Teacher [33] | 6.81% | 12.5% | 16.7% | 24.5% | 16.8 |
| Two-stage | PseCo [38] | 8.04% | 14.8% | 19.1% | 27.8% | 15.7 |
| Two-stage | Humble Teacher [34] | 6.48% | 9.31% | 15.2% | 24.5% | 17.8 |
| One-stage | Efficient Teacher (YOLOv5) [39] | 7.33% | 12.5% | 15.7% | 23.2% | 50.5 |
| One-stage | Efficient Teacher (YOLOv11) | 8.58% | 14.7% | 19.4% | 27.8% | 49.3 |
| One-stage | Unbiased Teacher v2 [40] | 7.19% | 9.82% | 16.2% | 23.5% | 48.8 |
| One-stage | One Teacher [41] | 7.47% | 11.4% | 15.8% | 24.7% | 46.7 |
| One-stage | Dense Teacher [42] | 8.03% | 12.8% | 16.9% | 25.1% | 48.6 |
| | Ours | 11.5% | 18.9% | 26.6% | 36.3% | 45.8 |
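
To read the comparison above at a glance, the short Python sketch below computes, for each labeled-data ratio, the strongest baseline and the margin by which the "Ours" row exceeds it. All numbers are copied from the table; the variable names are illustrative only.

```python
ratios = ["1%", "2%", "5%", "10%"]

# Baseline scores (in %) copied from the comparison table, one value per ratio.
baselines = {
    "Omni-DETR": [9.07, 15.1, 20.1, 28.7],
    "Semi-DETR": [9.72, 16.2, 21.5, 29.4],
    "STAC": [5.54, 7.36, 12.9, 21.2],
    "Unbiased Teacher": [6.81, 12.5, 16.7, 24.5],
    "PseCo": [8.04, 14.8, 19.1, 27.8],
    "Humble Teacher": [6.48, 9.31, 15.2, 24.5],
    "Efficient Teacher (YOLOv5)": [7.33, 12.5, 15.7, 23.2],
    "Efficient Teacher (YOLOv11)": [8.58, 14.7, 19.4, 27.8],
    "Unbiased Teacher v2": [7.19, 9.82, 16.2, 23.5],
    "One Teacher": [7.47, 11.4, 15.8, 24.7],
    "Dense Teacher": [8.03, 12.8, 16.9, 25.1],
}
ours = [11.5, 18.9, 26.6, 36.3]

margins = {}
for i, ratio in enumerate(ratios):
    # Strongest baseline at this labeled-data ratio.
    best_name, best_score = max(
        ((name, scores[i]) for name, scores in baselines.items()),
        key=lambda pair: pair[1],
    )
    margins[ratio] = (best_name, round(ours[i] - best_score, 2))

for ratio, (name, gain) in margins.items():
    print(f"{ratio}: best baseline {name}, margin {gain} points")
```

Semi-DETR is the strongest baseline at every ratio, and the margin grows from 1.78 points at 1% labeled data to 6.9 points at 10%.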

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).