Submitted: 29 April 2026

Posted: 30 April 2026


Abstract

Keywords:

I. Introduction

II. Methods

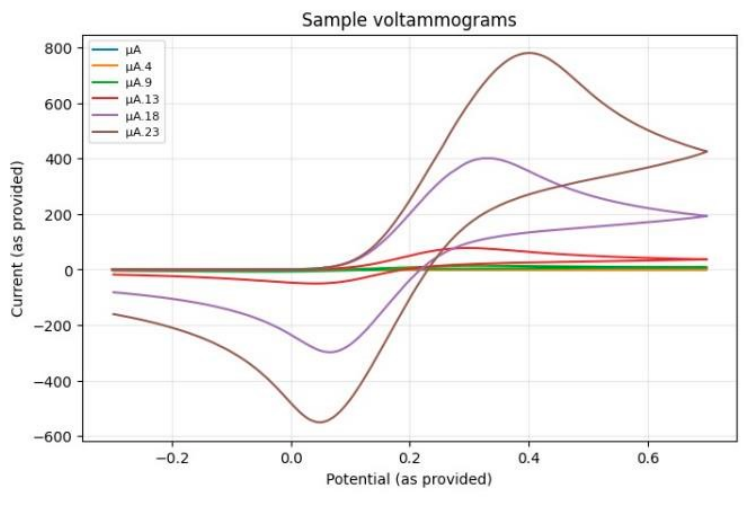

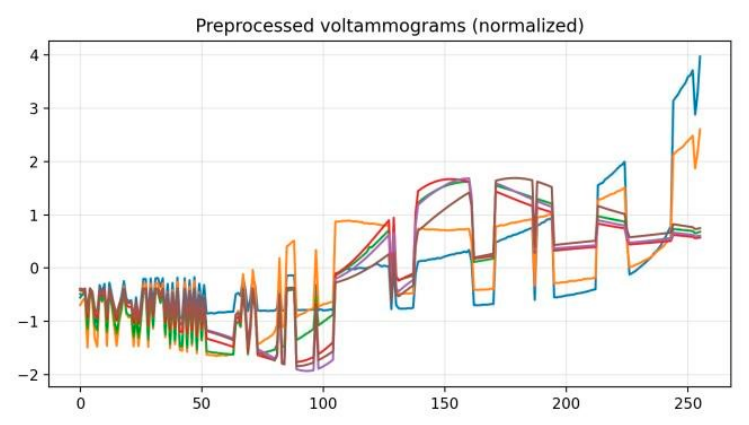

A. Dataset and Preprocessing

B. Synthetic Noise and Drift Artifacts

- Gaussian noise: Random additive noise is added to the signal (simulating electronic noise). We use a noise level of σ = 0.15 (15% of a signal’s standard deviation) during training.

- Baseline drift: A linear slope is added across the voltammogram, mimicking a drifting baseline current over time (e.g., due to electrode fouling or concentration changes).

- Gain change: The signal is scaled by a random factor between 0.7 and 1.6, simulating a change in sensitivity (gain) of the sensor.

- Peak shift: The voltammogram is circularly shifted by a few sample indices (up to ±15 points) to represent a shift in the reference electrode potential or timing.

- Spike artifacts: Random impulsive spikes are injected at a few points with magnitude 1.5–3.5× the typical signal, representing transient interference or motion-induced artifacts.
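The five artifacts listed above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the authors' augmentation code: the function names, the `slope` parameter of the drift, the choice of three spikes, the random spike sign, and the use of the mean absolute value as the "typical signal" are all assumptions, since the text does not specify them. The noise level, gain range, shift range, and spike magnitudes follow the values given in the list.

```python
import math
import random


def gaussian_noise(x, rng, sigma=0.15):
    """Add zero-mean Gaussian noise with std = sigma * std(x)."""
    mean = sum(x) / len(x)
    std = math.sqrt(sum((v - mean) ** 2 for v in x) / len(x))
    return [v + rng.gauss(0.0, sigma * std) for v in x]


def baseline_drift(x, slope):
    """Add a linear ramp that rises from 0 to `slope` across the sweep.

    ASSUMPTION: the source gives no drift magnitude, so the caller picks one.
    """
    n = len(x)
    return [v + slope * i / (n - 1) for i, v in enumerate(x)]


def gain_change(x, rng, low=0.7, high=1.6):
    """Scale the whole signal by one random factor in [low, high]."""
    gain = rng.uniform(low, high)
    return [gain * v for v in x]


def peak_shift(x, rng, max_shift=15):
    """Circularly shift the signal by up to +/- max_shift samples."""
    shift = rng.randint(-max_shift, max_shift)
    n = len(x)
    return [x[(i - shift) % n] for i in range(n)]


def spike_artifacts(x, rng, n_spikes=3):
    """Inject impulsive spikes of 1.5-3.5x the typical signal level.

    ASSUMPTION: "typical signal" is taken as the mean absolute value,
    and the spike count and random sign are illustrative choices.
    """
    typical = sum(abs(v) for v in x) / len(x)
    out = list(x)
    for idx in rng.sample(range(len(x)), n_spikes):
        out[idx] += rng.choice((-1.0, 1.0)) * rng.uniform(1.5, 3.5) * typical
    return out


# Hypothetical clean voltammogram: a single Gaussian peak over 256 samples.
rng = random.Random(0)
clean = [math.exp(-((i - 128) / 20.0) ** 2) for i in range(256)]

noisy = gaussian_noise(clean, rng)
noisy = baseline_drift(noisy, slope=0.2)
noisy = gain_change(noisy, rng)
noisy = peak_shift(noisy, rng)
noisy = spike_artifacts(noisy, rng)
```

Here all five artifacts are chained on one signal for brevity. Whether the original pipeline applies them jointly or one at a time is not stated, so the chaining order is also an assumption.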

C. TinyML Models

- 1)

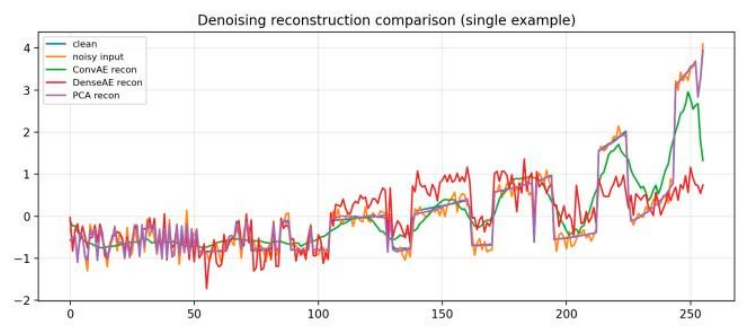

- Conv1D Denoising Autoencoder: a small onedimensional convolutional neural network that learns an encoded representation of the signal and reconstructs it. Our architecture uses two convolutional layers (with 8 and 16 filters of kernel size 7 and 5, respectively) each followed by downsampling (MaxPool factor 2) to encode the 256-length input into a compact latent feature map. A bottleneck convolution (with 8 filters of size 3) further compresses the representation. The decoder mirrors this with upsampling layers and convolutional filters to upsample back to the original length. The final layer is a 1-filter conv that outputs the reconstructed signal. This ConvAE leverages local receptive fields to naturally denoise high-frequency noise. The model we denote ConvAE tiny has 1,881 trainable parameters (7.5 KB in float32). We also test an even smaller variant ConvAE tinier (with only 4 and 8 filters in the conv layers, and 4 bottleneck filters) totaling 525 params.

- 2)

- Dense Denoising Autoencoder: a fully connected autoencoder that uses only dense layers. We flatten the 256-point input and pass it through a hidden layer of 64 neurons (ReLU activation), then a latent layer of 16 neurons. The decoder consists of another 64-neuron layer followed by an output layer of 256 (which is reshaped back to 1×256). This DenseAE tiny model has more parameters (35,216) because every input sample is connected, but it is straightforward to implement on most frameworks. We include it as a baseline for a small neural network without convolutional structure.

- 3)

- PCA Reconstruction: a principal component analysis approach that uses a linear subspace to approximate the signal. We compute the top-k principal components on the training set voltammograms (flattened to 256-d vectors). At test time, a signal is projected into this k dimensional subspace and then projected back (inverse transform) to produce a reconstruction. This acts as a linear “autoencoder” with k latent features. We evaluate PCA with k = 8 and k = 16 components, which account for the majority of variance in the training data. PCA has no learned nonlinear capacity but provides an interpretable and memory-efficient baseline.
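The parameter counts quoted for the two neural models can be checked with simple arithmetic. The sketch below counts weights and biases per layer. The encoder and bottleneck shapes and the dense layer sizes come from the descriptions above, but the decoder layout of the ConvAE (one convolution with kernel size 5 back to the wider filter count, then a final single-filter convolution with kernel size 7) is an assumption: the text only says the decoder "mirrors" the encoder. This layout is shown because it happens to reproduce the reported totals for both ConvAE variants. The function names are illustrative.

```python
def conv1d_params(in_ch, out_ch, kernel):
    """Weights (in_ch * out_ch * kernel) plus one bias per output channel."""
    return in_ch * out_ch * kernel + out_ch


def dense_params(n_in, n_out):
    """Weights (n_in * n_out) plus one bias per output unit."""
    return n_in * n_out + n_out


def convae_params(f1, f2, bottleneck):
    layers = [
        (1, f1, 7),           # encoder conv 1 (stated in the text)
        (f1, f2, 5),          # encoder conv 2 (stated in the text)
        (f2, bottleneck, 3),  # bottleneck conv (stated in the text)
        (bottleneck, f2, 5),  # ASSUMED decoder conv, mirroring encoder conv 2
        (f2, 1, 7),           # ASSUMED kernel size for the final 1-filter conv
    ]
    # Pooling and upsampling layers carry no trainable parameters.
    return sum(conv1d_params(*layer) for layer in layers)


def denseae_params(sizes=(256, 64, 16, 64, 256)):
    """Sum dense-layer parameters over consecutive layer widths."""
    return sum(dense_params(a, b) for a, b in zip(sizes, sizes[1:]))


convae_tiny = convae_params(8, 16, 8)      # 1881
convae_tinier = convae_params(4, 8, 4)     # 525
dense_tiny = denseae_params()              # 35216
float32_kb = convae_tiny * 4 / 1000        # 7.524, i.e. about 7.5 KB

print(convae_tiny, convae_tinier, dense_tiny, float32_kb)
```

The DenseAE total follows directly from the stated layer widths, so it needs no assumption. The ConvAE match only shows that the assumed decoder is consistent with the reported figures, not that it is the layout actually used.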

D. Evaluation Metrics

III. Results

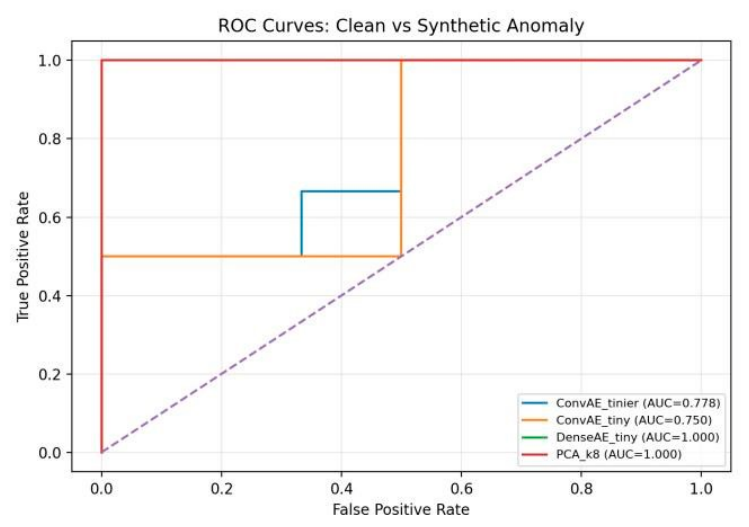

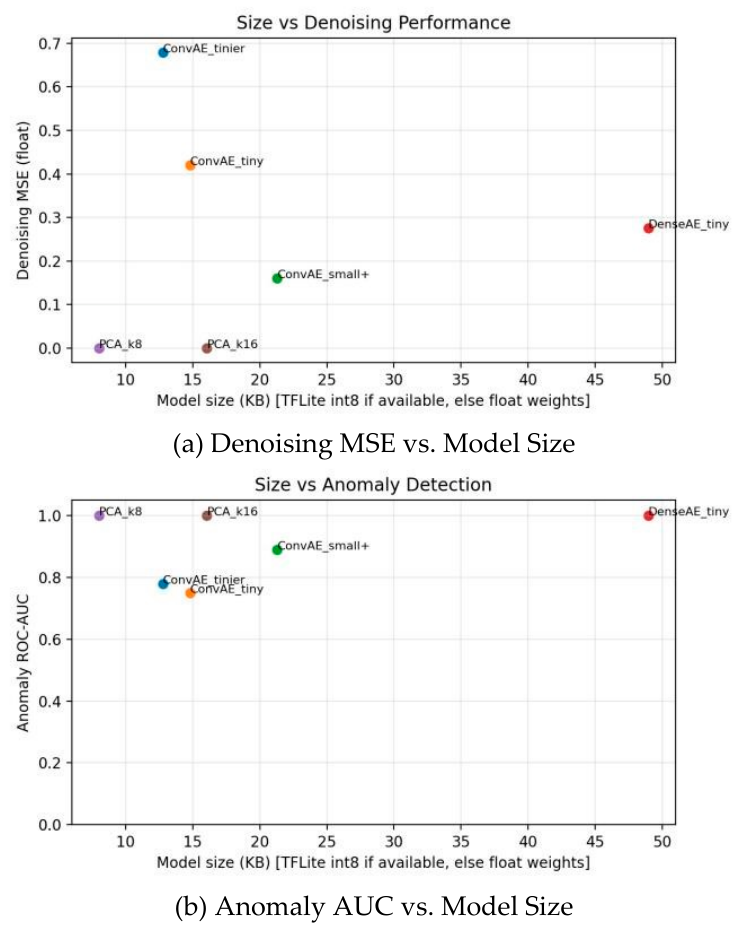

A. Denoising and Anomaly Detection Performance

| Model | Params | Size (KB) | MSE | ROC-AUC |
|---|---|---|---|---|
| ConvAE tinier | 525 | 12.8 | 0.678 | 0.778 |
| ConvAE tiny | 1881 | 14.8 | 0.420 | 0.750 |
| ConvAE small+ | 7089 | 21.3 | 0.161 | 0.889 |
| DenseAE tiny | 35216 | 48.9 | 0.276 | 1.000 |
| PCA (k=8) | 2056 | 8.0* | ≈0 | 1.000 |
| PCA (k=16) | 4112 | 16.1* | ≈0 | 1.000 |
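For readers unfamiliar with the two metric columns, the following sketch shows one common way to compute them. It assumes that MSE is the mean squared difference between a clean signal and its reconstruction, and that ROC-AUC is computed from a per-signal anomaly score (for example, reconstruction error) against binary labels. Neither detail is confirmed by the text here, and the function names and toy numbers are purely illustrative.

```python
def mse(clean, recon):
    """Mean squared error between a clean signal and its reconstruction."""
    return sum((c - r) ** 2 for c, r in zip(clean, recon)) / len(clean)


def roc_auc(scores, labels):
    """ROC-AUC as the probability that a randomly chosen anomalous sample
    (label 1) scores higher than a randomly chosen normal one (label 0).
    Ties count as half a win (Mann-Whitney U formulation)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy example: anomaly scores for two normal and two anomalous signals.
separable = roc_auc([0.1, 0.2, 0.9, 0.8], [0, 0, 1, 1])   # 1.0
overlapping = roc_auc([0.1, 0.9, 0.2, 0.8], [0, 0, 1, 1])  # 0.5
print(separable, overlapping)
```

Under this reading, a ROC-AUC of 1.000 means every anomalous signal scored higher than every normal one, while 0.5 corresponds to chance-level ranking.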

B. Model Size vs. Performance Trade-Offs

| Model | MACs | Flash (KiB) | RAM (KiB) | Latency (ms) |
|---|---|---|---|---|
| ConvAE-Tinier | 70,937 | 40.88 | 7.08 | 0.079 |
| ConvAE-Tiny | 235,825 | 49.71 | 10.28 | 0.145 |
| ConvAE-Small+ | 848,225 | 55.62 | 16.67 | 0.498 |
| DenseAE-Tiny | 35,216 | 46.04 | 5.04 | 0.021 |

C. Hardware Implementation

IV. Discussion

V. Conclusion

VI. Future Work


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).