Submitted: 28 April 2026

Posted: 30 April 2026


Abstract

Keywords:

1. Introduction

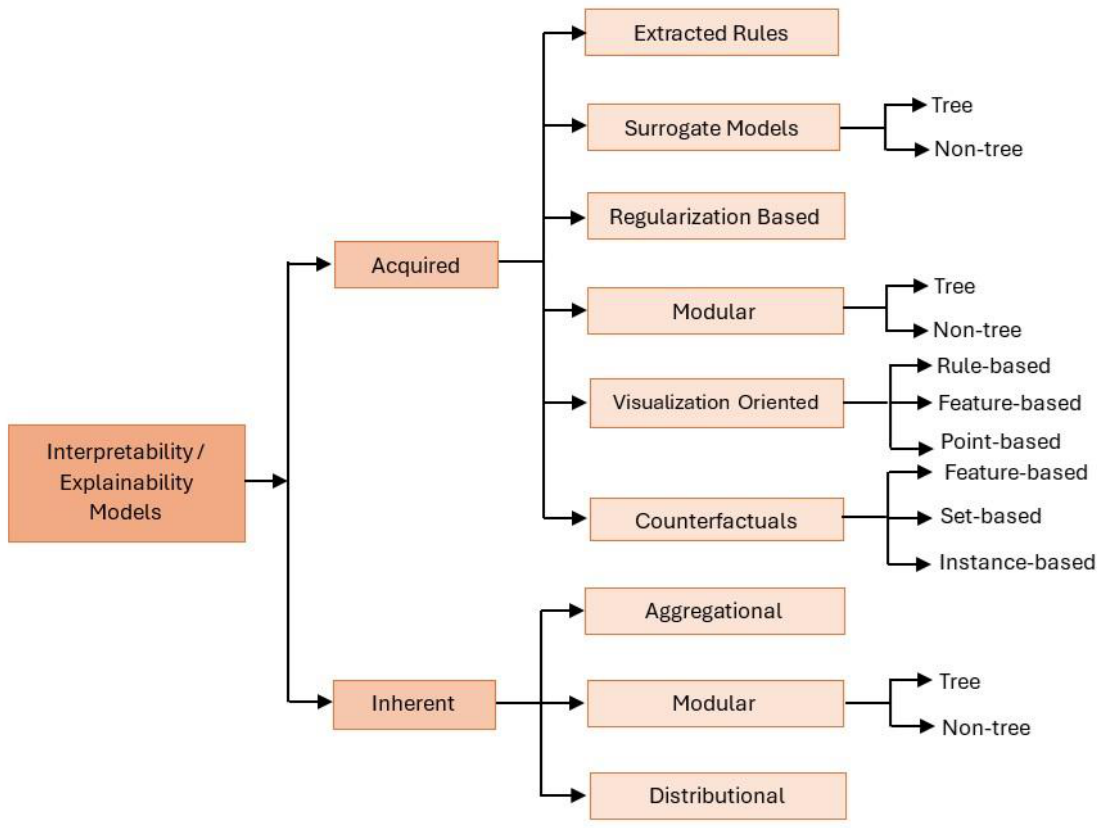

- A unified, cross-paradigm categorization of acquired and inherent interpretability or explainability methods. Unlike prior works that isolate techniques by specific ensemble types, this taxonomy goes beyond descriptive cataloging to map the underlying structural trade-offs applicable to all tree-based architectures.

- A survey of the specific interpretability and explainability approaches used in the above categories.

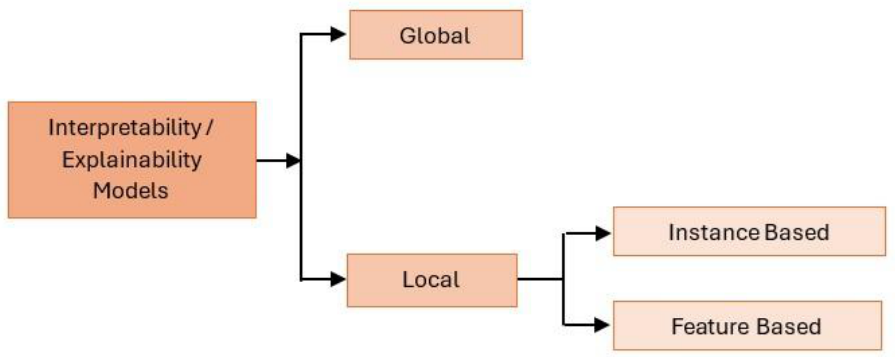

- A second taxonomy of interpretability and explainability methods based on their scope (global, local).

- A presentation of the interpretability and explainability methods and techniques used in various application domains, such as healthcare, finance, law, and privacy-preserving settings.

- A comparative analysis of the primary methodological models against four critical real-world constraints: scalability, computational cost, robustness/stability, and usability.

- Practical considerations for making near-optimal design decisions for interpretable tree-based ensembles (TBEs).

- An outline of four critical open research challenges arising from our analyses.

2. Background Knowledge

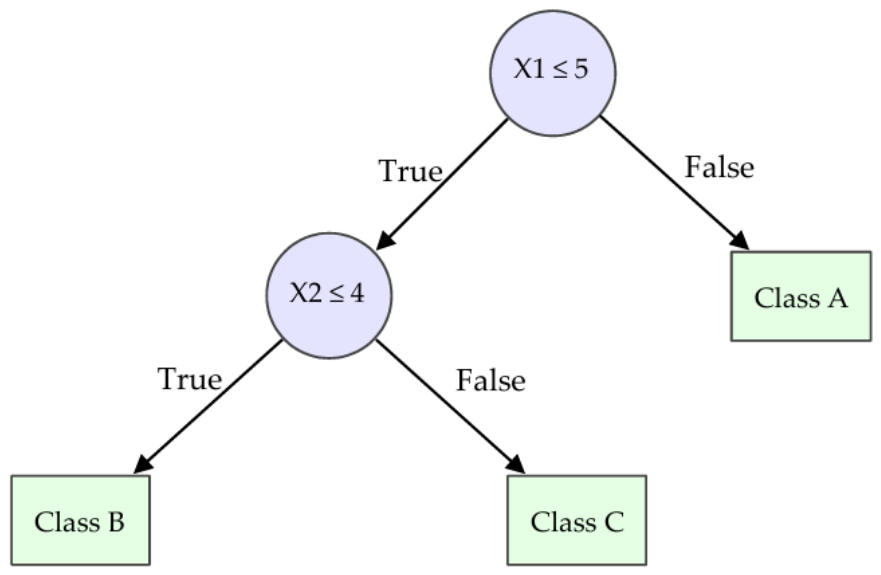

2.1. Tree Structure and Interpretability

2.2. From Single Trees to Ensembles of Trees

- Ψens(x) represents the final ensemble output for input x.

- k is the total number of independent decision trees in the ensemble.

- Ψj(x) denotes the final prediction of the jth decision tree for input x.

- The summation in formula (6) adds up the predictions of the individual trees for each class label, and the label with the largest sum is taken as the final output. Internally, each tree first predicts a probability for each class label; these probabilities are then averaged across all trees, and the class label with the largest average is assigned to Ψens(x).

- Formula (7) computes the arithmetic mean of the continuous outputs of the k individual trees.
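The two aggregation rules described above can be illustrated with a minimal sketch. It assumes that each tree is simply a callable returning either per-class probabilities or a continuous value; the function names `ensemble_classify` and `ensemble_regress` and the three toy stumps are hypothetical and serve only to mirror the roles of Ψens(x), Ψj(x), and k.

```python
from typing import Callable, Dict, List, Sequence

# Hypothetical stand-ins for trained trees: plain callables, not learned models.
ProbTree = Callable[[Sequence[float]], Dict[str, float]]
RegTree = Callable[[Sequence[float]], float]


def ensemble_classify(trees: List[ProbTree], x: Sequence[float]) -> str:
    """Average the per-class probabilities of the k trees and return the arg-max label."""
    k = len(trees)
    totals: Dict[str, float] = {}
    for tree in trees:
        for label, prob in tree(x).items():
            totals[label] = totals.get(label, 0.0) + prob
    averages = {label: total / k for label, total in totals.items()}
    return max(averages, key=lambda label: averages[label])


def ensemble_regress(trees: List[RegTree], x: Sequence[float]) -> float:
    """Arithmetic mean of the continuous outputs of the k trees."""
    return sum(tree(x) for tree in trees) / len(trees)


# Three toy depth-1 classifiers and regressors on a two-feature input (illustrative values).
clf_trees: List[ProbTree] = [
    lambda x: {"pos": 0.9, "neg": 0.1} if x[0] > 0.5 else {"pos": 0.2, "neg": 0.8},
    lambda x: {"pos": 0.6, "neg": 0.4} if x[1] > 1.0 else {"pos": 0.3, "neg": 0.7},
    lambda x: {"pos": 0.7, "neg": 0.3} if x[0] > 0.2 else {"pos": 0.1, "neg": 0.9},
]
reg_trees: List[RegTree] = [
    lambda x: 2.0 if x[0] > 0.5 else 1.0,
    lambda x: 4.0 if x[1] > 1.0 else 3.0,
    lambda x: 6.0 if x[0] > 0.2 else 5.0,
]

label = ensemble_classify(clf_trees, [0.6, 0.5])
value = ensemble_regress(reg_trees, [0.6, 0.5])
```

Because dividing by k does not change which class has the largest score, summing and averaging the per-class probabilities select the same label.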

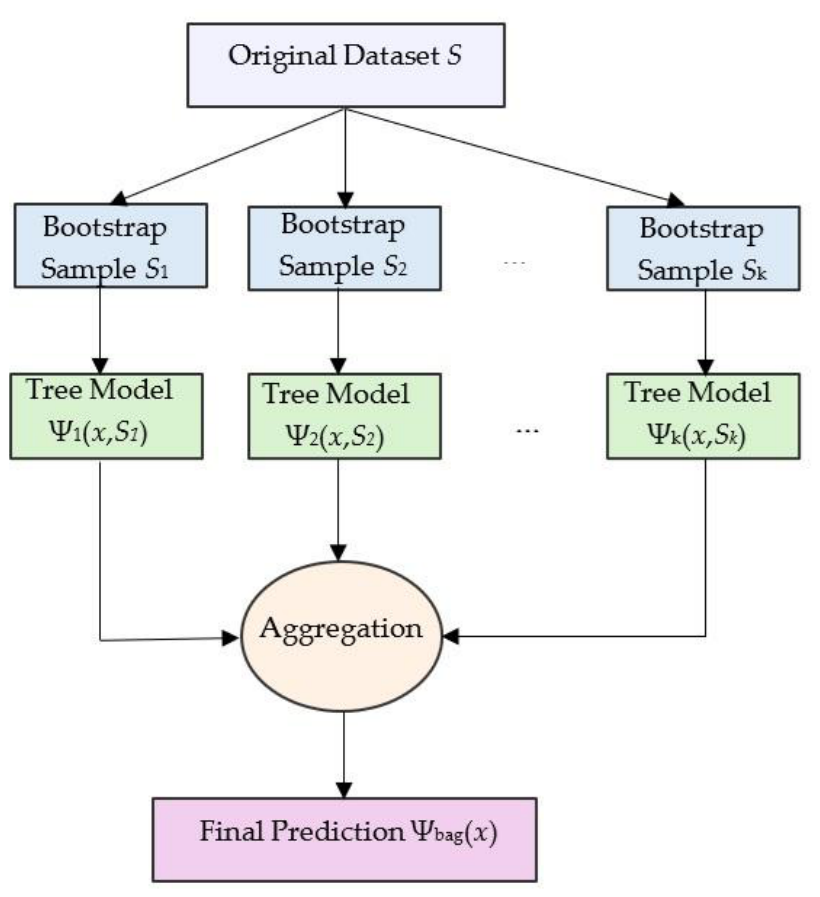

- Bagging (Bootstrap Aggregating): Exemplified by Random Forests [3], this method builds multiple trees in parallel on different subsets of data and averages their predictions. Mathematically, for an ensemble of k trees (consistent with our previously defined ensemble Π), the final aggregated prediction Ψbag(x) for an unseen feature vector x ∈ X is defined by formulas (6) and (7).

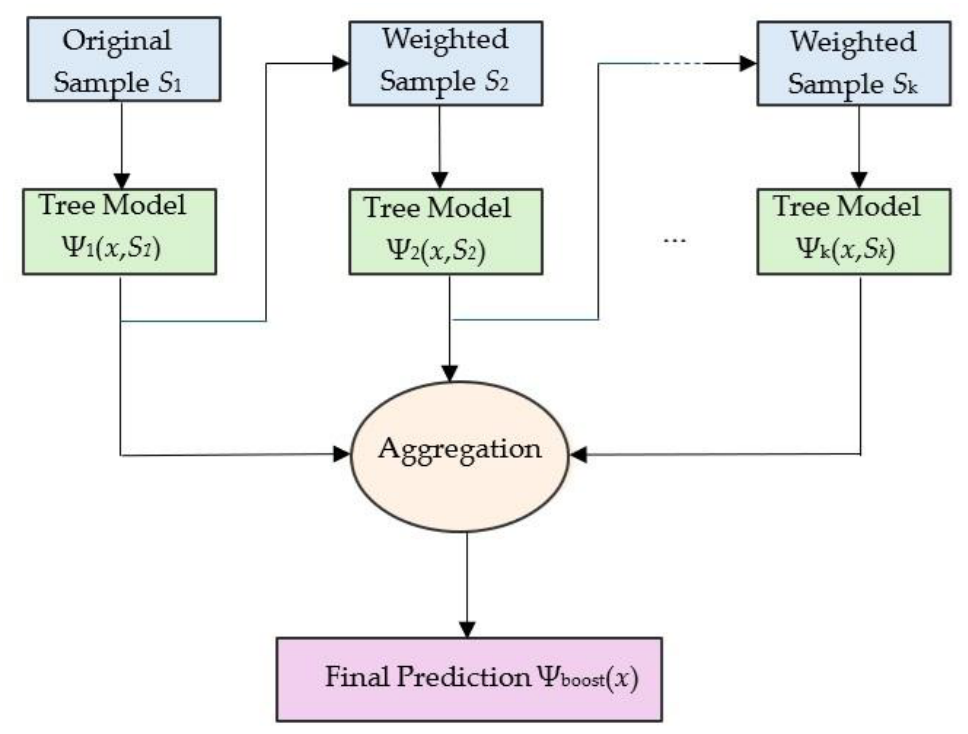

- Boosting: Exemplified by Gradient Boosting Machines (GBMs) and variants like XGBoost [4], this method builds trees sequentially. Each new tree corrects the errors of the previous ones. In formal terms, this is an additive expansion where each new tree fits the negative gradient of the loss function. Mathematically, for an ensemble of k trees (consistent with our previously defined ensemble Π), the final aggregated prediction Ψboost(x) for an unseen feature vector x ∈ X is defined by formulas (5) and (6).
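The additive expansion behind boosting can be sketched as follows, under simplifying assumptions: a one-dimensional input, squared-error loss (for which the negative gradient is simply the residual), depth-1 regression trees, and a fixed learning rate. The helper names (`fit_stump`, `fit_boosting`, `predict_boosting`) and the toy data are hypothetical; this is not the XGBoost algorithm of [4], which adds regularization and second-order information.

```python
from typing import List, Sequence, Tuple

Stump = Tuple[float, float, float]  # (threshold t, value if x <= t, value if x > t)


def fit_stump(xs: Sequence[float], residuals: Sequence[float]) -> Stump:
    """Least-squares fit of a depth-1 regression tree to the residuals."""
    best_sse, best_stump = float("inf"), (0.0, 0.0, 0.0)
    points = sorted(set(xs))
    for lo, hi in zip(points, points[1:]):
        t = (lo + hi) / 2.0  # candidate split halfway between neighbouring values
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lv, rv = sum(left) / len(left), sum(right) / len(right)
        sse = sum((r - lv) ** 2 for r in left) + sum((r - rv) ** 2 for r in right)
        if sse < best_sse:
            best_sse, best_stump = sse, (t, lv, rv)
    return best_stump


def predict_stump(stump: Stump, x: float) -> float:
    t, lv, rv = stump
    return lv if x <= t else rv


def fit_boosting(xs: Sequence[float], ys: Sequence[float], k: int, lr: float = 0.5):
    """Build k stumps sequentially; each one fits the current residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(xs)
    stumps: List[Stump] = []
    for _ in range(k):
        # Squared loss L = (y - F)^2 / 2, so the negative gradient is y - F.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + lr * predict_stump(stump, x) for p, x in zip(preds, xs)]
    return base, stumps


def predict_boosting(base: float, stumps: List[Stump], x: float, lr: float = 0.5) -> float:
    return base + lr * sum(predict_stump(s, x) for s in stumps)


# Toy data (illustrative values only).
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [1.0, 1.2, 0.9, 3.1, 2.9, 3.0]


def training_mse(k: int) -> float:
    base, stumps = fit_boosting(xs, ys, k)
    return sum((predict_boosting(base, stumps, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)
```

Comparing `training_mse(0)`, `training_mse(1)`, and `training_mse(20)` shows the training error shrinking as trees are added, which is the sequential error correction the text describes.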

2.3. Interpretability vs Explainability

- Interpretability: Refers to models that are naturally understandable by humans without the need for secondary tools. The model’s structure itself communicates the logic (e.g., a shallow decision tree or a small rule list).

- Explainability: Refers to techniques applied to opaque models (black boxes) to approximate or visualize their decision-making process. For example, extracting feature importance scores from a dense Random Forest is an act of explainability, not interpretability.

2.4. Interpretability Evaluation Metrics

- Fidelity: Measures how accurately the interpretable explanation mimics the predictions of the original complex ensemble. Formally, for a dataset D containing |D| samples, fidelity is often defined as the accuracy of the explanation model g with respect to the ensemble f:

- Complexity: Quantifies the cognitive load required for a human to comprehend the generated explanation. For decision trees and rule sets, complexity is typically measured by structural properties such as the total number of nodes, the maximum depth of the tree, or the number of logical conditions. Bassan and Bianchini [16] formalize this concept, arguing that for an explanation to be human-tractable, its structural size C(g) must be polynomially bounded:

- Stability: Refers to the robustness and consistency of the explanation when the input data is subjected to minor variations. Let ϵ denote a small perturbation added to the original input x. A highly unstable explanation is one where a tiny change in the input leads to a completely different explanation (g(x) ≠ g(x + ϵ)), even though the underlying complex model’s prediction remains practically unchanged (f(x) ≈ f(x + ϵ)). Such behavior in the explanation mechanism significantly reduces user trust, as highly similar input instances should ideally yield similar logical explanations [17].
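The fidelity and stability notions above can be made concrete with a short sketch. It assumes one common reading of "accuracy of g with respect to f": the fraction of samples in D on which g and f return the same label. For discrete labels, f(x) ≈ f(x + ϵ) is taken here to mean equality. The toy models `f` and `g` and the function names are hypothetical.

```python
from typing import Callable, Sequence

Model = Callable[[Sequence[float]], int]


def fidelity(f: Model, g: Model, data: Sequence[Sequence[float]]) -> float:
    """Fraction of samples in D on which the explanation model g agrees with f."""
    return sum(1 for x in data if f(x) == g(x)) / len(data)


def is_locally_stable(f: Model, g: Model, x: Sequence[float], eps: Sequence[float]) -> bool:
    """Return False in the unstable case: f is unchanged under x + eps but g flips."""
    x_shift = [xi + ei for xi, ei in zip(x, eps)]
    model_unchanged = f(x) == f(x_shift)
    explanation_changed = g(x) != g(x_shift)
    return not (model_unchanged and explanation_changed)


# Toy stand-ins: f plays the complex ensemble, g a one-split explanation model.
def f(x: Sequence[float]) -> int:
    return 1 if x[0] + x[1] > 1.0 else 0


def g(x: Sequence[float]) -> int:
    return 1 if x[0] > 0.5 else 0


data = [(0.1, 0.1), (0.9, 0.9), (0.6, 0.1), (0.2, 0.9), (0.8, 0.5)]
score = fidelity(f, g, data)  # g agrees with f on 3 of the 5 samples
unstable_case = is_locally_stable(f, g, [0.49, 0.1], [0.02, 0.0])
stable_case = is_locally_stable(f, g, [0.1, 0.1], [0.02, 0.0])
```

In the first stability query the perturbation pushes x across the single split of `g` while `f` stays at the same label, which is exactly the situation the text flags as damaging to user trust.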

2.5. Why Interpretability Matters

3. Related Work

3.1. General XAI Surveys and Overviews

- Breadth over Depth: By covering all ML models, they often reduce tree ensemble interpretability to standard Feature Importance, missing specialized techniques like MaxSAT-based induction.

- Lack of Structural Nuance: They typically classify ensembles as purely “post-hoc” problems, ignoring the emerging class of “inherently interpretable” ensemble architectures.

3.2. Surveys and Overviews Related to Tree Ensembles

- Outdated Taxonomies: Even general ensemble overviews like [10] fail to capture the new wave of “Interpretability-by-Design” architectures (e.g., FIGS) or the distinction between extraction and design.

- No domain consideration: Most tree-specific surveys treat interpretability as a purely technical problem, largely overlooking the impact of application domain on the models.

3.3. Technical Works Including Original Classification Taxonomies

3.4. Position of the Present Work

4. Methodology

4.1. Search Strategies and Sources

4.2. Inclusion and Exclusion Criteria

- Relevance: The paper proposed a new interpretability/explainability method specific to decision tree ensembles (Random Forests, GBMs, or hybrid architectures).

- Methodological clarity: The interpretability/explainability contribution was explicitly defined (e.g., rule extraction, structural simplification, or visual analytics).

4.3. Data Extraction and Synthesis

5. Categorization of Tree Ensemble Interpretability Models

5.1. Based on the Type of the Model

5.1.1. Acquired Interpretability/Explainability Models

- Extracted Rules: These methods algorithmically distill the dense, overlapping decision paths of a forest into a smaller, symbolic set of logical rules (e.g., IF-THEN statements) that explain the global boundary. The goal is to maximize logical coverage while minimizing the number of rules to prevent cognitive overload [15,22,24,25,26,27].

- Surrogate Models: This approach frames interpretability/explainability as a “teacher-student” distillation task, involving the training of a secondary, inherently interpretable model to mimic the input-output behavior of the complex ensemble. We distinguish two subcategories:
  - Tree Surrogates: Approximating the ensemble using a single, shallow decision tree that balances fidelity with depth [28].

- Regularization Based: Rather than extracting new structures, these techniques apply mathematical constraints or smoothing operations to the existing tree structures post-training. This simplifies the decision boundaries by reducing the influence of noisy, deep splits while retaining the overall ensemble architecture [17,31].

- Modular: These methods decompose the feature space post-training and apply distinct, localized explanations to specific sub-regions, creating a mosaic of simple explainers. We distinguish two types:
  - Tree Modular: Using local decision trees dedicated to specific, non-overlapping data regions [21].
  - Non-tree Modular: Using alternative local estimators to explain highly specific geometric sub-spaces.

- Visualization Oriented: When symbolic logic or rule sets become too massive to read, these methods translate the ensemble’s structural topology or prediction behavior into human-readable visual abstractions. We discern three types of visualization:
  - Rule-based: Visualizing the topology and overlap of extracted rules in matrix formats [20].
  - Feature-based: Visualizing how features contribute to predictions globally or locally via heatmaps or attribution scores [29,32,33].
  - Point-based: Explaining the model through the visualization of representative data prototypes [34].

- Counterfactuals: Methods that explain a specific prediction by identifying the minimum necessary changes to an input feature vector required to alter the model’s output, offering actionable recourse options. Again, we distinguish three types of counterfactual approaches:
  - Feature-based: Generating explainability by computationally modifying individual feature values via mathematical optimization to cross the decision boundary [37,39,40,42].
  - Set-based: Defining a continuous region or a definitive set of counterfactual conditions, offering robustness guarantees rather than a single point [35,38,41,43].
  - Instance-based: Providing explainability based on actual differences between real training instances that lead to different model predictions, grounding the recourse in historical data [36].
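As an illustration of the raw material that rule-extraction methods start from, the sketch below enumerates every root-to-leaf path of a small forest as an IF-THEN rule and removes exact duplicates. It covers only this candidate-generation step; the selection and pruning strategies of the cited methods are not reproduced. The `Node` class, the feature names, and the thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Node:
    """A binary tree node; a leaf carries only `label`."""
    feature: Optional[str] = None
    threshold: Optional[float] = None
    left: Optional["Node"] = None    # branch taken when feature <= threshold
    right: Optional["Node"] = None   # branch taken when feature > threshold
    label: Optional[str] = None


def extract_rules(node: Node, conditions: Tuple[str, ...] = ()) -> List[str]:
    """Turn every root-to-leaf path into one IF-THEN rule string."""
    if node.label is not None:
        body = " AND ".join(conditions) if conditions else "TRUE"
        return [f"IF {body} THEN {node.label}"]
    left_cond = f"{node.feature} <= {node.threshold}"
    right_cond = f"{node.feature} > {node.threshold}"
    return (extract_rules(node.left, conditions + (left_cond,))
            + extract_rules(node.right, conditions + (right_cond,)))


def forest_rules(forest: List[Node]) -> List[str]:
    """Collect the rules of all trees, dropping exact duplicates but keeping order."""
    all_rules = [rule for tree in forest for rule in extract_rules(tree)]
    return list(dict.fromkeys(all_rules))


# Two toy trees over hypothetical features "age" and "income".
tree_a = Node("age", 40.0,
              left=Node(label="low"),
              right=Node("income", 50.0, left=Node(label="high"), right=Node(label="low")))
tree_b = Node("age", 40.0, left=Node(label="low"), right=Node(label="high"))

rules = forest_rules([tree_a, tree_b])
```

The two trees share the path for `age <= 40.0`, so the forest yields four distinct rules rather than five; real ensembles produce far more overlap, which is why the methods above focus on reducing the rule set.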

5.1.2. Inherent Interpretability Models

- Modular: These architectures dynamically partition the dataset during the training phase itself, explicitly assigning specialized, simple models to distinct regions of the feature space.
  - Tree Modular: Using single decision trees as the local experts [46].

- Distributional: These frameworks focus on explaining models while strictly managing the underlying data distribution, often integrating interpretability with privacy-preserving or federated learning protocols where feature transparency must be balanced with data security [12].

5.2. Based on the Interpretability Scope

- Global (Model-Level): The goal is to understand the entire model’s logic at a macro level, providing a complete overview of how the ensemble makes decisions across the whole feature space. Inherent architectures like FIGS [13] naturally fall here, as their constrained structure allows the user to view the whole model at once. Similarly, global rule extraction methods [15,25] and global surrogate trees target this objective by summarizing the entire forest’s boundaries.

- Local (Instance-Level): The goal is to explain the model’s behavior for a specific, restricted region or an individual data point. This is critical in high-stakes domains (e.g., healthcare or mining safety) where operators need to know exactly why a specific decision or alarm was triggered. This objective is further divided into:
  - Instance Based: Methods that explain a specific prediction for a single data point. Examples include providing counterfactuals (e.g., finding the minimal changes to flip a specific prediction [49]) or utilizing local surrogate approximations.
  - Feature Based: Methods that identify which specific variables drove the prediction for a localized subset or outcome. This includes local feature attribution techniques and causal inference frameworks, such as the Causal Rule Ensemble (CRE) [26], which discover subgroups with heterogeneous treatment effects to distinguish true causal drivers from mere correlations in specific instances.
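The idea of a minimal change that flips a specific prediction can be sketched on a toy majority-vote ensemble of decision stumps. The sketch relies on the observation that a tree ensemble's output only changes when a feature crosses a split threshold, so it tries values just on either side of each threshold and keeps the flipping candidate with the smallest L1 distance. This exhaustive search is exponential in the number of features and is not the exact method of [49]; the stump encoding, the `margin` parameter, and all values are assumptions.

```python
from itertools import product
from typing import List, Optional, Sequence, Tuple

# (feature index, threshold, vote if x[f] <= threshold, vote if x[f] > threshold)
Stump = Tuple[int, float, int, int]


def predict(stumps: Sequence[Stump], x: Sequence[float]) -> int:
    """Majority vote over binary stumps."""
    votes = sum(hi if x[f] > t else lo for f, t, lo, hi in stumps)
    return 1 if 2 * votes > len(stumps) else 0


def counterfactual(stumps: Sequence[Stump], x: Sequence[float],
                   margin: float = 1e-3) -> Optional[List[float]]:
    """Closest (L1) candidate that flips the prediction, or None if none is found."""
    original = predict(stumps, x)
    options = []
    for j, xj in enumerate(x):
        values = {xj}  # keeping the feature unchanged is always an option
        for f, t, _, _ in stumps:
            if f == j:
                values.update((t - margin, t + margin))
        options.append(sorted(values))
    best, best_cost = None, float("inf")
    for cand in product(*options):
        if predict(stumps, cand) != original:
            cost = sum(abs(a - b) for a, b in zip(cand, x))
            if cost < best_cost:
                best, best_cost = list(cand), cost
    return best


# Toy ensemble of three stumps over two features (illustrative values).
stumps: List[Stump] = [(0, 0.5, 0, 1), (1, 2.0, 0, 1), (0, 0.8, 0, 1)]
x = [0.4, 1.0]
cf = counterfactual(stumps, x)
```

For this input the cheapest flip raises only the first feature to just above 0.8, which wins two of the three votes, while the second feature is left untouched.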

6. Analysis of Algorithmic Mechanisms

6.1. Acquired Interpretability/Explainability Models

6.1.1. Extracted Rules

6.1.2. Surrogate Models

6.1.3. Regularization Based

6.1.4. Modular

6.1.5. Visualization Oriented

6.1.6. Counterfactuals

6.2. Inherent Interpretability Models

6.2.1. Aggregational

6.2.2. Modular

6.2.3. Distributional

6.3. Comparative Analysis and Practical Trade-Offs

- Extracted Rules (Heuristic):
  - Trade-offs: These methods offer high scalability and low-to-moderate computational cost, as they rely on fast pattern mining [15,22]. However, they exhibit moderate stability, as heuristic extraction is prone to variance when training data is noisy.
  - Usability: High, provided the extracted rule lists are kept short to avoid human cognitive overload.

- Extracted Rules (Exact/MILP):
  - Trade-offs: By relying on mathematical optimization, these methods offer high robustness and absolute logical certainty [25,27]. The critical trade-off is their very low scalability and extremely high computational cost, as the underlying search spaces are often NP-hard for large ensembles [16,25].
  - Usability: Very high for compliance-heavy domains requiring absolute guarantees.

- Surrogate Models:
  - Trade-offs: Surrogates excel in scalability and have low computational costs due to their model-agnostic training [28,30]. However, they suffer in stability and robustness due to the “fidelity gap”, that is, the inherent approximation error between the surrogate and the true black-box boundary.
  - Usability: High, as they output familiar formats like single trees or linear equations.

- Counterfactuals:
  - Trade-offs: These approaches have lower scalability and high computational costs because they require iterative optimization or mixed-integer programming for specific queries [37,42]. However, set-based counterfactuals offer high robustness guarantees [38].
  - Usability: Very high, as they provide immediately actionable recourse and “what-if” scenarios for end-users.

- Inherent Architectures (Aggregational & Modular):
  - Trade-offs: Inherent models impose structural constraints during the training phase, leading to moderate computational costs during induction compared to standard bagging [13,46,47]. However, they offer high scalability at inference and high robustness, as their additive or routed structures naturally reduce variance [44].
  - Usability: High, providing immediate global or localized transparency without the need for secondary, error-prone explanation tools.

7. Domain-Specific Methodological Trends

7.1. Healthcare

7.2. Industrial and Physical Engineering

7.3. Finance, Law and Social Sciences

7.4. Privacy Preserving Applications

7.5. Summary

8. Practical Decision Framework

- High-Stakes, Regulated Domains (e.g., Finance, Law):
  - Requirements: Absolute logical certainty for formal auditing; global or regional scope.
  - Trade-off: These methods guarantee maximum fidelity and mathematical robustness, but their extreme computational complexity strictly limits their scalability to smaller datasets and shallower ensembles.

- Safety-Critical, Real-Time Systems (e.g., Engineering, Sensor Networks):
  - Requirements: Ultra-low inference latency; ability to handle concept drift; local scope.
  - Recommendation: Inherent modular architectures or fast, local instance-based surrogates (e.g., LIME) [57,58].
  - Trade-off: This approach maximizes operational scalability and real-time usability for immediate intervention, but inherently sacrifices global structural transparency.

- Exploratory and Scientific Domains (e.g., Healthcare, Epidemiology):
  - Requirements: High-dimensional dataset handling (e.g., genomics, biomarkers); identification of true physiological drivers.
  - Recommendation: Advanced post-hoc feature attribution (e.g., TreeSHAP) or Causal Rule Ensembles [26,53].
  - Trade-off: Offers excellent scalability for massive feature spaces. However, practitioners must accept a potential “fidelity gap” where post-hoc attributions might misrepresent highly correlated features, requiring causal architectures for true intervention analysis.

- Privacy-Preserving Environments (e.g., Decentralized Silos):
  - Requirements: Strict data confidentiality; distributed training across multiple institutions.
  - Recommendation: Distributional inherent models utilizing cryptographic protocols (e.g., Federated SHAP) [12,50].
  - Trade-off: Ensures strict data privacy and structural security, but incurs a massive computational overhead during explanation generation, rendering it unsuitable for low-latency applications.

9. Discussion and Open Challenges

- Standardized “Actionability” Metrics: The field currently optimizes for sparsity or surrogate fidelity, which often fail to correlate with human cognitive fit. A rule set might be mathematically minimal yet practically unactionable if it relies on immutable features. Future work must develop standardized, human-grounded evaluation protocols that penalize domain irrelevance and measure the real-world actionability of the generated explanations.

- Temporal Stability Under Concept Drift: Decision trees are notoriously sensitive to data perturbations. A major open gap is ensuring that local explanations and counterfactuals remain temporally consistent as the underlying data distribution experiences concept drift in dynamic environments.

- Scalable Privacy-Preserving Interpretability: While initial frameworks for explainable federated learning exist, balancing the massive computational overhead of cryptographic explanations (e.g., Secure Multiparty Computation) with real-time operational needs remains a highly restrictive bottleneck that future architectures must resolve.

- Exploration of “Blind Spots” in the Methodological Taxonomy: Our proposed classification framework (Figure 4) exposes distinct, under-researched niches within the XAI landscape. Notably, there is a prominent absence of literature concerning acquired modular non-tree approaches. While post-hoc modular trees (which partition the space for local decision trees) and inherent non-tree modules are well-documented, extracting regionalized algebraic models from an already-trained ensemble remains a significant gap. Future research should prioritize algorithms capable of this regional non-tree decomposition, bridging the gap between exact local fidelity and global structural understanding.

10. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Breiman, L.; Friedman, J.; Olshen, R.; Stone, C. Classification and Regression Trees; Wadsworth: Belmont, CA, USA, 1984.

2. Quinlan, J.R. C4.5: Programs for Machine Learning; Morgan Kaufmann: San Mateo, CA, USA, 1993.

3. Haddouchi, M.; Berrado, A. A survey and taxonomy of methods interpreting random forest models. arXiv 2024, arXiv:2407.12759.

4. Gonçalves, V.; de Carvalho, V. A review of interpretability methods for gradient boosting decision trees. J. Braz. Comput. Soc. 2025, 31, 1.

5. Rudin, C.; Kim, B. Interpretable Machine Learning: Fundamental Principles and Ten Grand Challenges; Springer: Cham, Switzerland, 2021.

6. Burkart, N.; Huber, M.F. A survey on the explainability of supervised machine learning. J. Artif. Intell. Res. 2021, 70, 245–317.

7. Linardatos, P.; Papastefanopoulos, V.; Kotsiantis, S. Explainable AI: A review of machine learning interpretability methods. Entropy 2021, 23, 1.

8. Minh, D.; Wang, H.X.; Li, Y.F.; Nguyen, T.N. Explainable artificial intelligence: A comprehensive review. Artif. Intell. Rev. 2022, 55, 3503–3568.

9. Nagahisarchoghaei, M.; Nur, N.; Cummins, L.; Nur, N.; Karimi, M.M.; Nandanwar, S.; Bhattacharyya, S.; Rahimi, S. An empirical survey on explainable AI technologies: Recent trends, use-cases, and categories from technical and application perspectives. Electronics 2023, 12, 1092.

10. Sepiolo, D.; Ligeza, A. Towards explainability of tree-based ensemble models: A critical overview. In New Advances in Dependability of Networks and Systems (Proceedings of the 17th International Conference on Dependability of Computer Systems DepCoS-RELCOMEX), Wrocław, Poland, 27 June–1 July 2022; Springer: Cham, Switzerland, 2022; Volume 484, pp. 287–296.

11. Kotsiantis, S.B. Decision trees: A recent overview. Artif. Intell. Rev. 2013, 26, 159–190.

12. Chen, X.; Zhou, S.; Yang, K.; Fao, H.; Wang, H.; Wang, Y. Fed-EINI: An efficient and interpretable inference framework for decision tree ensembles in vertical federated learning. In Proceedings of the IEEE International Conference on Big Data, Orlando, FL, USA, 15–18 December 2021; pp. 1242–1248.

13. Tan, Y.S.; Singh, C.; Nassar, K.; Agarwal, A.; Yu, B. Fast interpretable greedy-tree sums (FIGS). Proc. Natl. Acad. Sci. USA 2023, 120, e2218840120.

14. Zharmagambetov, A.; Hada, S.S.; Carreira-Perpinan, M.A.; Gabidolla, M. An experimental comparison of old and new decision tree algorithms. arXiv 2020, arXiv:1911.03054.

15. Hatwell, J.; Gaber, M.M.; Azad, R.M.A. CHIRPS: Explaining random forest classification. Mach. Learn. 2024, 113, 4683–4719.

16. Bassan, I.; Bianchini, M. What makes an ensemble (un)interpretable? A complexity perspective. arXiv 2025, arXiv:2506.08216.

17. Pfeifer, B.; Gevaert, A.; Loecher, M.; Holzinger, A. Tree smoothing: Post-hoc regularization of tree ensembles for interpretable machine learning. Inf. Sci. 2024, 658, 120015.

18. Wang, Z.; Gai, K. Decision tree-based federated learning: A survey. Blockchains 2024, 2, 40–60.

19. Aria, M.; Cuccurullo, C.; Gnasso, A. A comparison among interpretative proposals for random forests. Mach. Learn. Appl. 2021, 6, 100094.

20. Neto, M.P.; Paulovich, F.V. Explainable matrix - visualization for global and local interpretability of random forest classification ensembles. IEEE Trans. Vis. Comput. Graph. 2021, 27, 1427–1437.

21. Gulowaty, B.; Wozniak, M. Extracting interpretable decision tree ensemble from random forest. In Proceedings of the International Joint Conference on Neural Networks (IJCNN), Shenzhen, China (Virtual), 18–22 July 2021; pp. 1–8.

22. Lal, G.R.; Chen, X.; Mithal, V. TE2Rules: Explaining tree ensembles using rules. arXiv 2024, arXiv:2206.14359.

23. Costa, V.G.; Pedreira, C.E. Recent advances in decision trees: An updated survey. Artif. Intell. Rev. 2023, 56, 4765–4800.

24. Deng, H. Interpreting tree ensembles with inTrees. Int. J. Data Sci. Anal. 2018, 7, 277–287.

25. Bonasera, L.; Carrizosa, E. A unified approach to extract interpretable rules from tree ensembles via integer programming. arXiv 2025, arXiv:2407.00843.

26. Bargagli-Stoffi, F.J.; Cadei, R.; Mock, L.; Lee, K.; Dominici, F. Causal rule ensemble: Interpretable discovery and inference of heterogeneous treatment effects. arXiv 2024, arXiv:2009.09036.

27. Takemura, A.; Inoue, K. Generating explainable rule sets from tree-ensemble learning methods by answer set programming. In Proceedings of the 37th International Conference on Logic Programming (ICLP), Porto, Portugal (Virtual), 20–27 September 2021.

28. Khalifa, F.A.; Abdelkader, H.M.; Elsaid, A.H. An analysis of ensemble pruning methods under the explanation of random forest. Inf. Syst. 2024, 120, 102310.

29. Di Teodoro, G.; Monaci, M.; Palagi, L. Unboxing tree ensembles for interpretability: A hierarchical visualization tool. Eur. J. Comput. Optim. 2024, 12, 100063.

30. Yang, Z.; Sudjianto, A.; Li, X.; Zhang, A. Inherently interpretable tree ensemble learning. arXiv 2024, arXiv:2410.19098.

31. Agarwal, A.; Tan, Y.S.; Ronen, O.; Singh, C.; Yu, B. Hierarchical shrinkage: Improving the accuracy and interpretability of tree-based methods. In Proceedings of the 39th International Conference on Machine Learning (ICML), Baltimore, MD, USA, 17–23 July 2022; pp. 111–135.

32. Chen, C.-H.; Tanaka, K.; Kotera, M.; Funatsu, K. Comparison and improvement of the predictability and interpretability with ensemble learning models in QSPR. J. Cheminform. 2020, 12, 67.

33. Jetchev, D.; Vuille, M. XorSHAP: Privacy-preserving explainable AI for decision tree models. Cryptology ePrint Archive, Paper 2023/1859, 2023.

34. Tan, S.; Soloviev, M.; Hooker, G. Tree space prototypes: Another look at making tree ensembles interpretable. In Proceedings of the 2020 ACM-IMS Foundations of Data Science Conference, Virtual Event, USA, 19–20 October 2020.

35. Fernández, R.R.; de Diego, I.M.; Aceña, V.; Fernández-Isabel, A.; Moguerza, J.M. Random forest explainability using counterfactual sets. Inf. Fusion 2020, 63, 196–207.

36. Harvey, J.S.; Feng, G.; Zhao, T. Interpretable model-aware counterfactual explanations for random forest. arXiv 2025, arXiv:2510.27397.

37. Lucic, A.; Oosterhuis, H.; Haned, H.; de Rijke, M. FOCUS: Flexible optimizable counterfactual explanations for tree ensembles. In Proceedings of the Thirty-Sixth AAAI Conference on Artificial Intelligence (AAAI-22), Virtual Event, 22 February–1 March 2022.

38. VanNostrand, P.M.; Zhang, H.; Hofmann, D.M.; Rundensteiner, E.A. FACET: Robust counterfactual explanation analytics. Proc. ACM Manag. Data 2023, 1, 4.

39. Dutta, S.; Long, J.; Mishra, S.; Tilli, C.; Magazzeni, D. Robust counterfactual explanations for tree-based ensembles. In Proceedings of the 39th International Conference on Machine Learning (ICML/PMLR), Baltimore, MD, USA, 17–23 July 2022.

40. Monson, M.; Sabarmathi, G. From prediction to action: Counterfactual explanations and ensemble learning for explainable maternal health risk modelling. In Proceedings of the 2nd International Conference on New Frontiers in Communication, Automation, Management and Security (ICCAMS), Bangalore, India, 11–12 July 2025; IEEE; 2025, pp. 1–7.

41. Kanamori, K.; Takagi, T.; Kobayashi, K.; Ike, Y. Counterfactual explanation trees: Transparent and consistent actionable recourse with decision trees. In Proceedings of the 25th International Conference on Artificial Intelligence and Statistics (AISTATS), Virtual Event, 28–30 March 2022.

42. Parmentier, A.; Vidal, T. Optimal counterfactual explanations in tree ensembles. In Proceedings of the 38th International Conference on Machine Learning (ICML/PMLR), Virtual Event, 18–24 July 2021; Volume 139, pp. 8422–8431.

43. Guidotti, R.; Monreale, A.; Ruggieri, S.; Naretto, F.; Turini, F.; Pedreschi, D.; Giannotti, F. Stable and actionable explanations of black-box models through factual and counterfactual rules. Data Min. Knowl. Discov. 2024, 38, 2825–2862.

44. Konstantinov, A.V.; Utkin, L.V. Interpretable machine learning with an ensemble of gradient boosting machines. Knowl.-Based Syst. 2021, 222, 106993.

45. Ibarguren, I.; Perez, M.J.; Muguerza, J.; Arbelaitz, O.; Yera, A. PCTBagging: From inner ensembles to ensembles. A trade-off between discriminating capacity and interpretability. Inf. Sci. 2022, 592, 198–217.

46. Bruggenjurgen, S.; Schaaf, N.; Kerschke, P.; Huber, M.F. Mixture of decision trees for interpretable machine learning. arXiv 2022, arXiv:2211.14617.

47. Vogel, R.; Schlosser, T.; Manthey, R.; Ritter, M.; Vodel, M.; Eibl, M.; Schneider, K.A. A meta algorithm for interpretable ensemble learning: The league of experts. Mach. Learn. Knowl. Extr. 2024, 6, 863–885.

48. Arwade, G.; Olafsson, S. Learning ensembles of interpretable simple structures. Ann. Oper. Res. 2025.

49. Blanchart, P. An exact counterfactual-example-based approach to tree-ensemble models interpretability. arXiv 2021, arXiv:2105.14820.

50. Alshkeili, H.M.H.A.; Almheiri, S.J.; Khan, M.A. Privacy-preserving interpretability: An explainable federated learning model for predictive maintenance in sustainable manufacturing and Industry 4.0. AI 2025, 6, 117.

51. Haque, M.E.; Jahidul Islam, S.M.; Mia, S.; Sharmin, R.; Ashikuzzaman; Morshed, M.S.; Huque, M.T. StackLiverNet: A novel stacked ensemble model for accurate and interpretable liver disease detection. In Proceedings of the 16th International Conference on Computing Communication and Networking Technologies (ICCCNT), Indore, India, 6–11 July 2025.

52. Moreno-Sanchez, P.A. Development of an explainable prediction model of heart failure survival by using ensemble trees. In Proceedings of the 2020 IEEE International Conference on Big Data, Atlanta, GA, USA (Virtual), 10–13 December 2020; pp. 4902–4910.

53. Zheng, H.-L.; An, S.-Y.; Qiao, B.-J.; Guan, P.; Huang, D.-S.; Wu, W. A data-driven interpretable ensemble framework based on tree models for forecasting COVID-19. Environ. Sci. Pollut. Res. 2023, 30, 66085–66103.

54. Jia, J.-F.; Chen, X.-Z.; Bai, Y.-L.; Li, Y.-L.; Wang, Z.-Y. An interpretable ensemble learning method to predict the compressive strength of concrete. Structures 2022, 38, 644–655.

55. Song, Z.; Cao, S.; Yang, H. An interpretable framework for modeling global solar radiation using tree-based ensemble machine learning. Appl. Energy 2024, 365, 123238.

56. Li, L.; Qiao, J.; Yu, G.; Wang, L.; Li, H.-Y.; Liao, C.; Zhu, Z. Interpretable tree-based ensemble model for predicting beach water quality. Water Res. 2022, 211, 118078.

57. Qiu, Y.; Zhou, J. Short-term rockburst prediction in underground project: Insights from an explainable and interpretable ensemble learning model. Acta Geotech. 2023, 18, 6655–6685.

58. Saadallah, A. Online adaptive local interpretable tree ensembles for time series forecasting. In Proceedings of the IEEE International Conference on Data Mining Workshops (ICDMW), Abu Dhabi, UAE, 9–12 December 2024; pp. 229–237.

59. Vultureanu-Albisi, A.; Badica, C. Improving students’ performance by interpretable explanations using ensemble tree-based approaches. In Proceedings of the IEEE 15th International Symposium on Applied Computational Intelligence and Informatics (SACI), Timisoara, Romania, 19–21 May 2021; pp. 215–220.

60. Arévalo-Cordovilla, F.E.; Peña, M. Sci. Rep. 2025, 15, 223.

| Methodological Family | Computational Cost | Scalability | Robustness & Stability | Real-World Usability |
|---|---|---|---|---|
| Extracted Rules (Heuristic) | Low to Moderate | High | Low to Moderate (Prone to instability with noisy data) | High |
| Extracted Rules (Exact/MILP) | Very High (NP-hard search space) | Very Low | High (Mathematically guaranteed optimal boundaries) | High |
| Surrogate Models | Low | High | Moderate (Limited by the fidelity gap between the surrogate and the black box) | High |
| Counterfactuals | High (Requires iterative optimization or gradient descent per query) | Low | Moderate to High (Set-based methods offer strict robustness guarantees) | Very High |
| Inherent (Aggregational) | Moderate (Higher than standard bagging due to structural constraints) | Moderate | High (Additive structures inherently reduce variance and noise) | High |
| Inherent (Modular) | Moderate | High | High (Local experts remain stable within their defined feature regions) | High |

| Sector | Dominant Model | Operational Rationale | Key Studies |
|---|---|---|---|
| Healthcare | Feature Attribution & Interaction (Post-hoc) | Need for biological plausibility and validation against medical literature. | [51,52,53] |
| Engineering | Feature Dependence & Adaptive Modular Ensembles | Validation against physical laws and adaptation to sensor concept drift. | [54,55,56,57,58] |
| Finance/Social | Local Surrogates & Fairness Constraints | Legal compliance; requirement for auditable instance-level reasons and bias detection. | [59,60] |
| Privacy Preserving | Cryptographic Extraction & Federated Architectures | Generating explanations while strictly preventing raw data reconstruction across silos. | [12,33,50] |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).