Submitted: 28 April 2026

Posted: 29 April 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Health Burden and Monitoring Urgency

2.2. Low-Cost Sensor Networks

2.3. Deep Learning for Air Quality Prediction

2.4. Image-Based AQI Estimation: Prior Art and Comparative Analysis

2.5. Research Gap

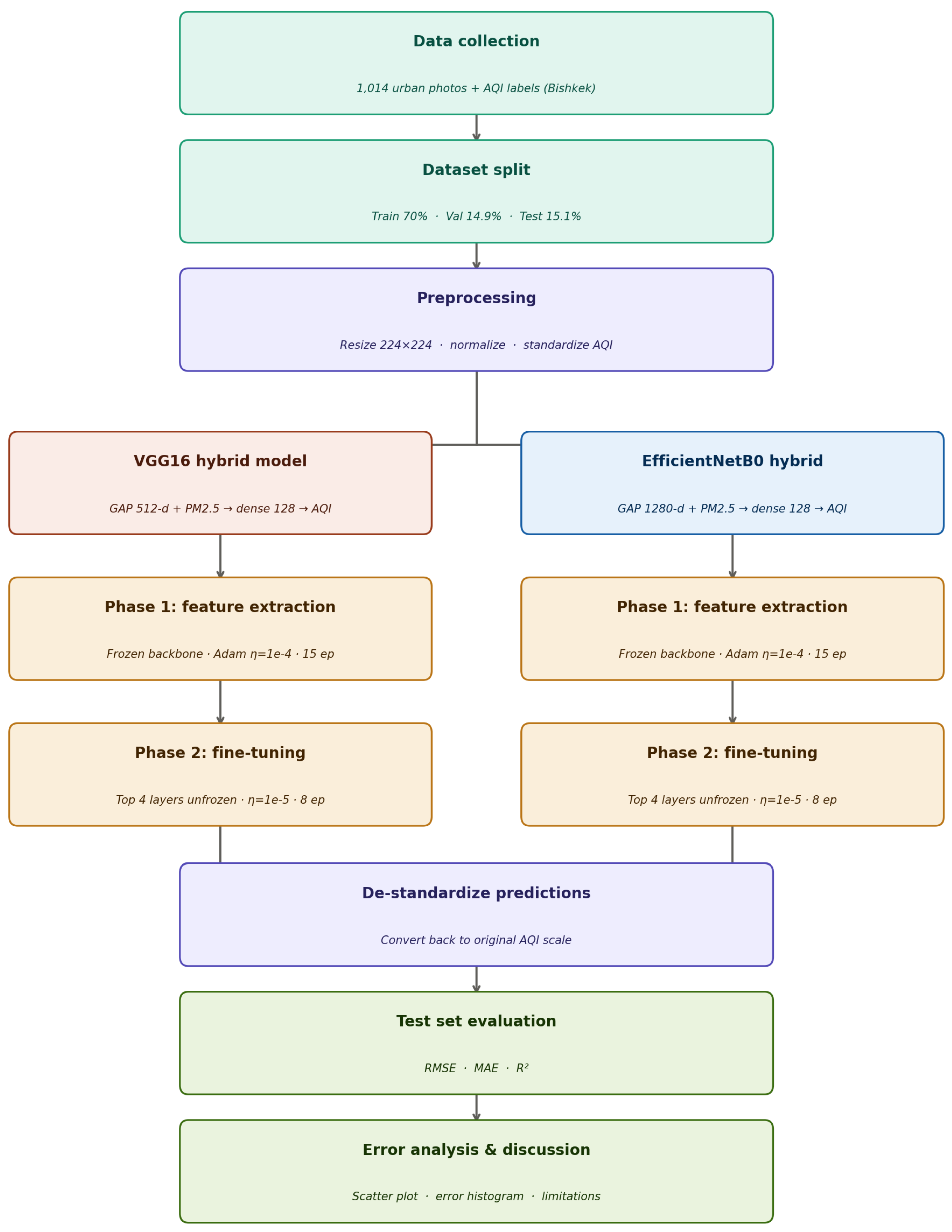

3. Methodology

3.1. Preprocessing

3.2. Model Architectures

3.2.1. VGG16 Hybrid Model

3.2.2. EfficientNetB0 Hybrid Model

3.3. Training Protocol

3.4. Evaluation Metrics

3.5. Use of Artificial Intelligence Tools

4. Results

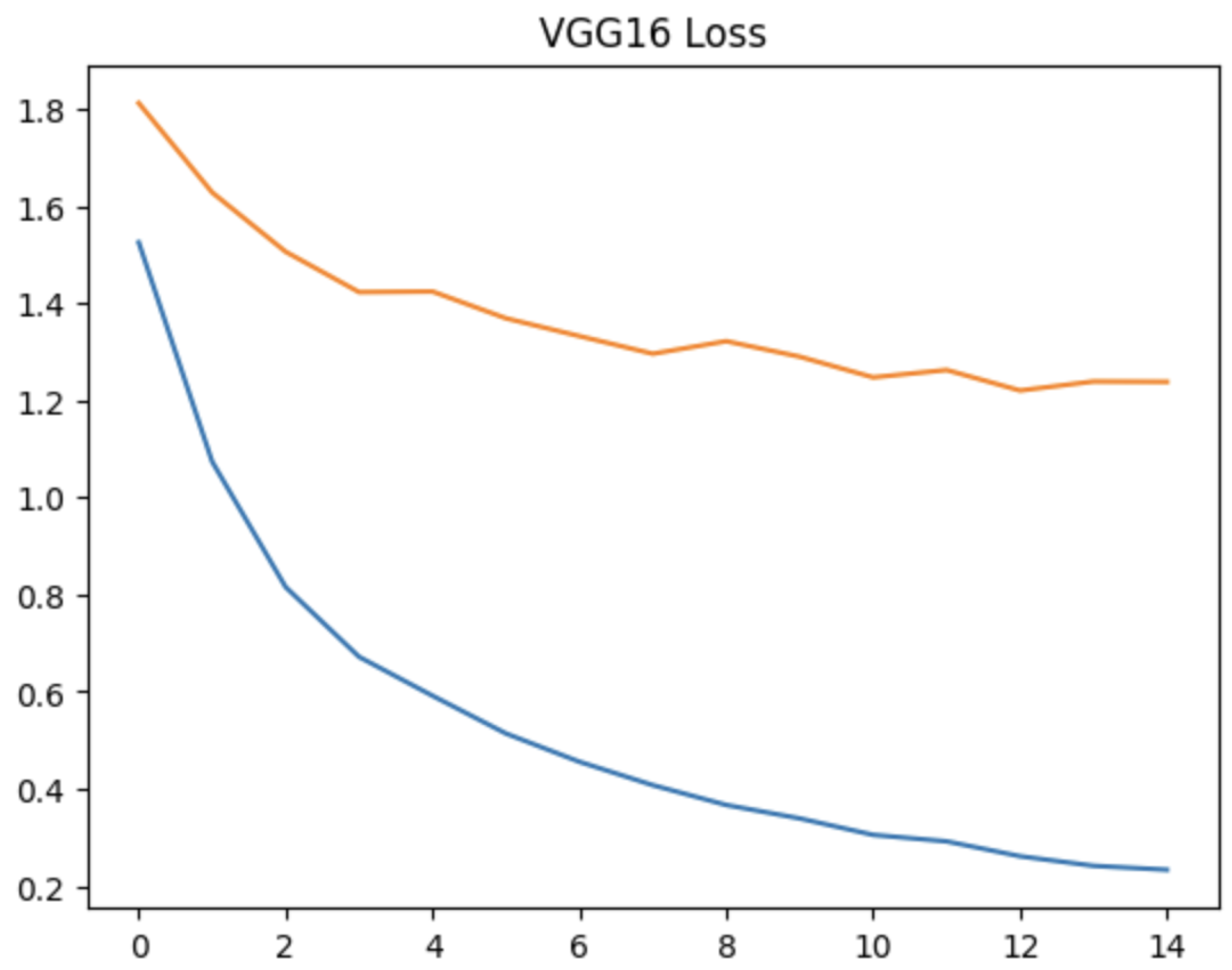

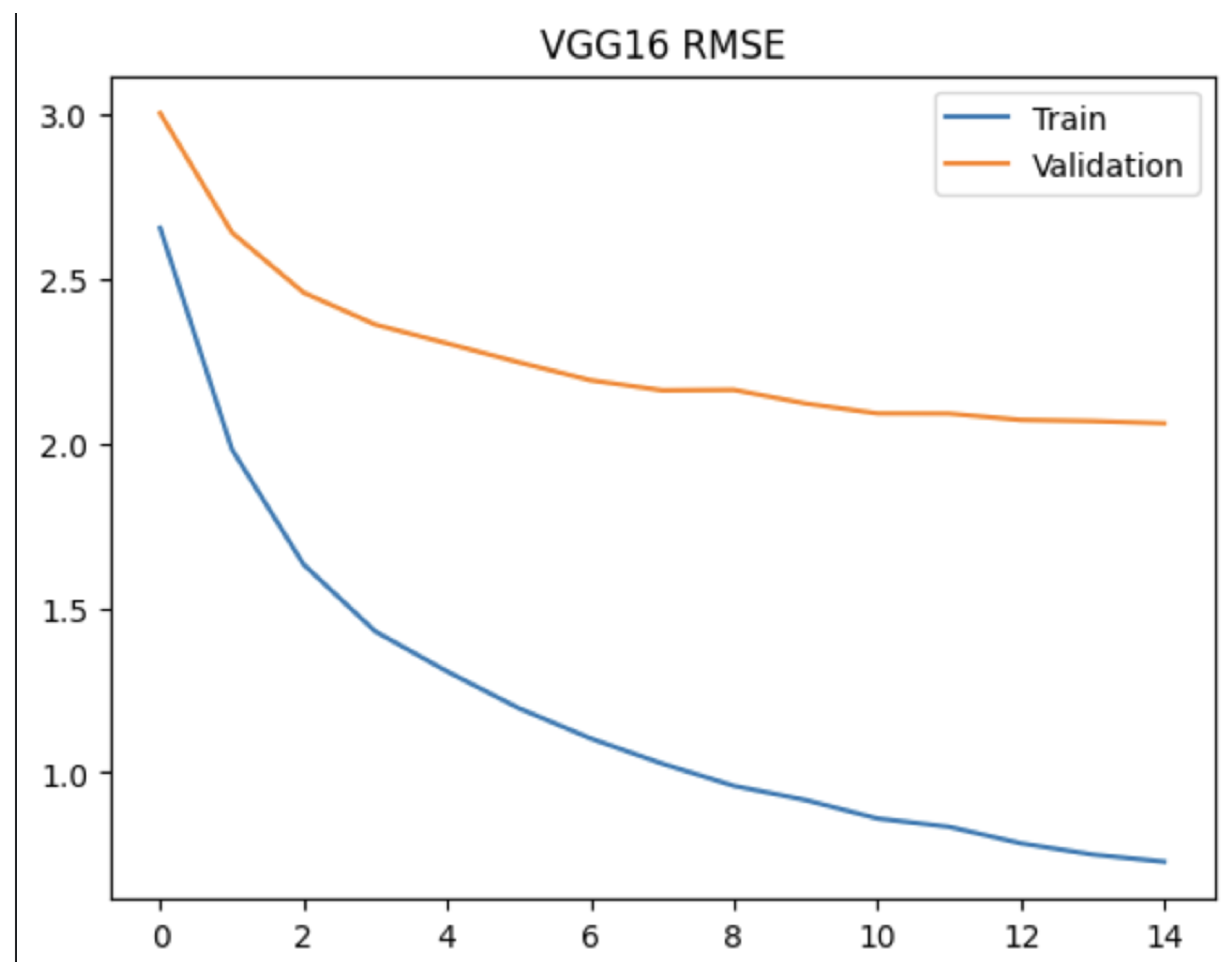

4.1. Training Dynamics

4.2. Test Set Evaluation

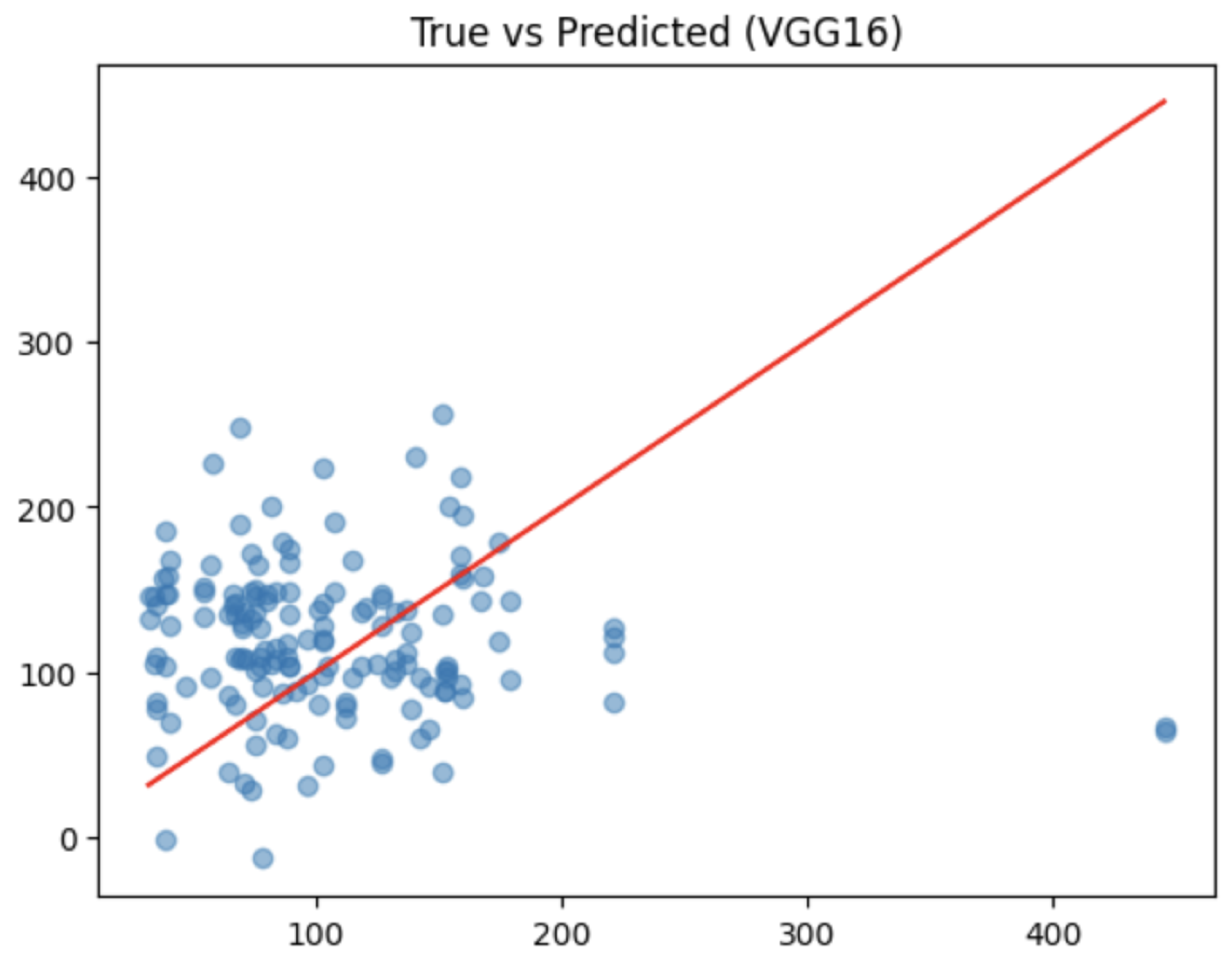

4.3. True vs. Predicted AQI Scatter Plot

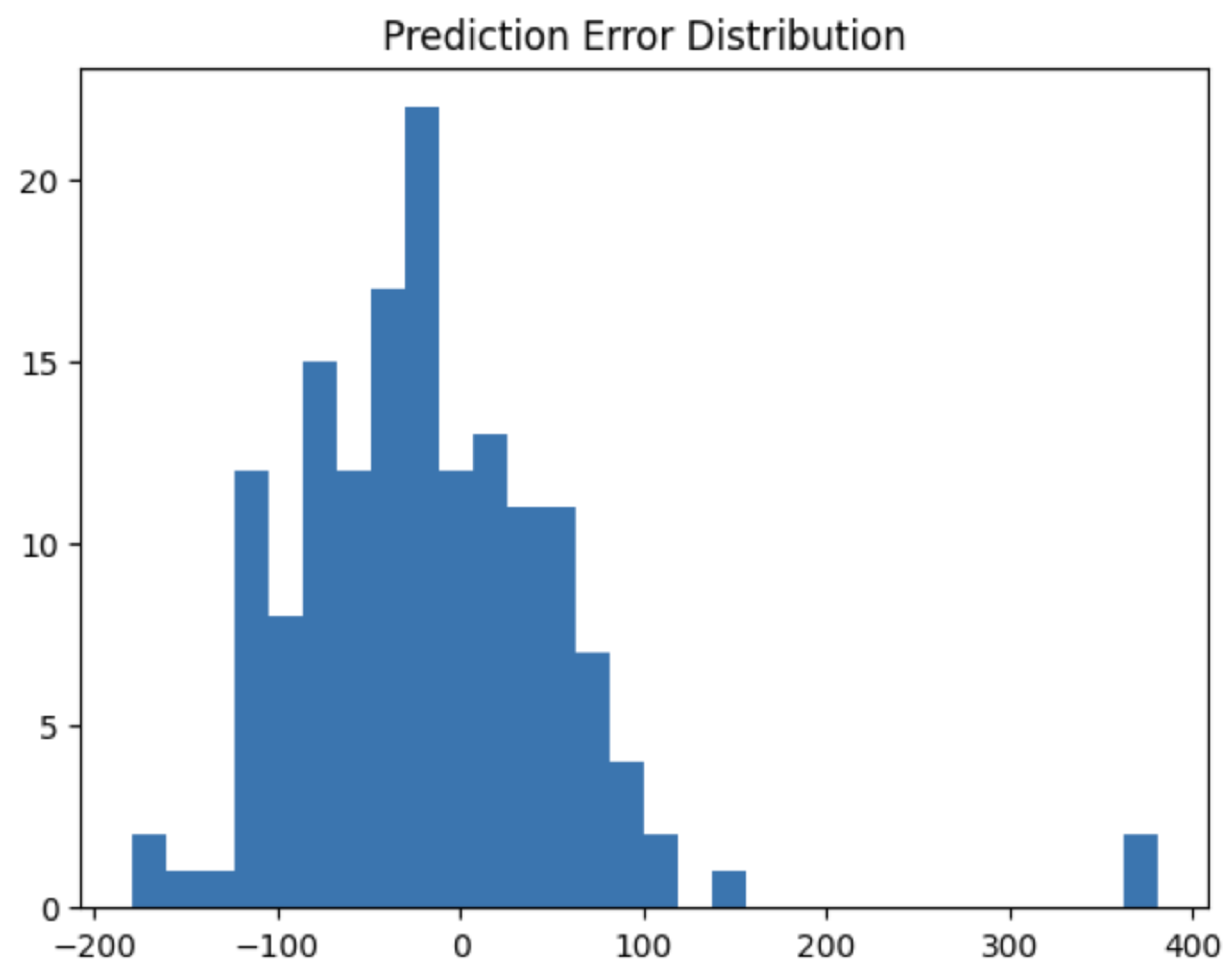

4.4. Prediction Error Distribution

5. Discussion

5.1. Interpreting the Negative R² Values

5.2. Dataset Scale as the Primary Bottleneck

5.3. Contextualization of Indicators in Comparison with Previous Work

5.4. Label Noise from Sensor-Image Geographic Mismatch

5.5. Visual Confounders: Illumination and Scene Diversity

5.6. Right-Skewed Error Distribution: Implications

5.7. EfficientNetB0 vs. VGG16: Architectural Interpretation

5.8. Recommendations for Future Work

6. Conclusion

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. World Health Organization. WHO global air quality guidelines: Particulate matter (PM2.5 and PM10), ozone, nitrogen dioxide, sulfur dioxide and carbon monoxide. Technical report; World Health Organization: Geneva, Switzerland, 2021.

2. Landrigan, P.J.; Fuller, R.; Acosta, N.J.R. The Lancet Commission on pollution and health. Lancet 2017, 391, 462–512.

3. United Nations Children’s Fund. Ambient PM2.5 air pollution in Bishkek: Key messages. Technical report; UNICEF: Bishkek, Kyrgyzstan, 2023.

4. World Bank. The global health cost of air pollution. Technical report; World Bank Group, 2023.

5. Tan, M.; Le, Q.V. EfficientNet: Rethinking model scaling for convolutional neural networks. In Proceedings of the 36th International Conference on Machine Learning. PMLR, 2019; pp. 6105–6114.

6. GBD 2021 Risk Factors Collaborators. Global burden and strength of evidence for 88 risk factors in 204 countries and 811 subnational locations for the global burden of disease study 2021. Lancet 2024, 403, 2162–2203.

7. Maji, K.J.; Arora, M.; Dikshit, A.K. Burden of disease attributed to ambient PM2.5 and PM10 exposure in 130 cities in China. Environ. Int. 2021, 123, 105–115.

8. Kumar, P.; Omidvarborna, H.; Bhattacharya, S. The rise of low-cost sensing for managing air pollution in cities. Nat. Rev. Earth Environ. 2021, 2, 196–212.

9. Liu, B.; Xu, M.; Ji, X. Low-cost outdoor air quality monitoring and source identification by dual-channel convolutional neural network on a Raspberry Pi. Sensors 2023, 23, 1320.

10. Li, H.; Ge, Y.; Liu, M. Estimating ground-level PM2.5 with extra-trees across China. Int. J. Environ. Res. Public Health 2022, 19, 4084.

11. Zhao, J.; Zhao, X.; Xu, H. Air quality prediction using multimodal data and transfer learning. IEEE Access 2022, 10, 12345–12358.

12. Gulia, S.; Nagendra, S.M.S.; Khare, M.; Khanna, I. Urban air quality management: A review. Atmos. Pollut. Res. 2022, 13, 101286.

13. Burmachach, N.; Isaev, R.; Gimaletdinova, G. Predicting passenger car prices with machine learning models. Preprints 2024.

14. Esenalieva, G.; Khan, M.T.; Ermakov, A.; Tursunbekova, E.T. Real-time sign language recognition. Alatoo Acad. Stud. 2024, 2024, 165–174.

15. Kow, P.Y.; Hsia, I.W.; Chang, L.C.; Chang, F.J. Real-time image-based air quality estimation by deep learning neural networks. J. Environ. Manag. 2022, 307, 114555.

16. Zhang, Q.; Fu, F.; Tian, R. A deep learning and image-based model for air quality estimation. Sci. Total Environ. 2020, 724, 138178.

17. Xue, Y.; Wang, Y.; Zhang, D. Image-based air quality analysis using deep convolutional neural network. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1–14.

18. Dong, L.; Li, L.; Jiang, M. Estimating PM2.5 concentration from a single image using convolutional neural networks. Remote Sens. 2021, 13, 2148.

19. Wang, X.; Wang, M.; Liu, X.; Mao, Y.; Chen, Y.; Dai, S. Surveillance-image-based outdoor air quality monitoring. Environ. Sci. Ecotechnology 2024, 18, 100319.

20. Mondal, J.J.; Islam, M.; Islam, R.; Rhidi, N.; Newaz, S.; Manab, M.A.; Islam, A.B.M.A.A.; Noor, J. Uncovering local aggregated air quality index with smartphone captured images leveraging efficient deep convolutional neural network. Sci. Rep. 2024, 14, 1320.

21. Utomo, S.; Rouniyar, A.; Jiang, G.H.; Chang, C.H.; Tang, K.C.; Hsu, H.C.; Hsiung, P.A. Air quality prediction from images in Indonesia: enhancing model explainability through visual explanation with AQI-Net and Grad-CAM. Environ. Data Sci. 2024, 3, e25.

22. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. In Proceedings of the International Conference on Learning Representations, 2015.

23. Hardini, A.; Kusuma, P.; Dewi, R. An ensemble deep learning approach for air quality estimation in Delhi, India. Earth Sci. Inform. 2024, 17, 891–906.

24. Aslam, N.; Khan, I.U.; Mirza, S. Low-cost video-based air quality estimation system using structured deep learning with selective state space modeling. Environ. Int. 2025, 198, 109012.

| Study | Architecture | Images | Target | Best metric |
|---|---|---|---|---|
| [15] | CNN–LSTM (VGG/ResNet) | 3,549 | AQI | R² = 0.94 |
| [16] | ResNet CNN | 8,000+ | AQI | R² = 0.71 |
| [11] | Multimodal CNN | 6,000+ | AQI | R² = 0.65 |
| [17] | Deep CNN + attention | 15,000+ | AQI | R² = 0.78 |
| [18] | EfficientNet + metadata | 12,000+ | PM2.5 | MAE = 18 µg/m³ |
| [19] | CNN–LSTM (VGG16) | 7,213 | AQI | |
| [20] | DCNN (smartphone) | ∼1,000 | PM2.5 | — |
| [21] | AQI-Net (CNN+Grad-CAM) | 11,000+ | AQI | Acc = 99.8% |
| Present study | VGG16 / EffNetB0 | 1,014 | AQI | RMSE = 66.49 |

| Split | Samples | Proportion | Usage |
|---|---|---|---|
| Training | 710 | 70.0% | Model optimisation |
| Validation | 151 | 14.9% | Early stopping, hyperparameter tuning |
| Test | 153 | 15.1% | Final held-out evaluation |
| Total | 1,014 | 100% | — |
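
The split sizes in the table can be reproduced with a seeded shuffle of sample indices. The following is a minimal sketch rather than the authors' procedure: the helper `split_indices`, the seed value, and the use of plain (non-stratified) random shuffling are assumptions made for illustration; only the counts 710 / 151 / 153 come from the table.

```python
import random


def split_indices(n_total, n_train, n_val, seed=42):
    """Shuffle indices 0..n_total-1 and cut them into train/val/test lists.

    The seed and the non-stratified shuffle are illustrative assumptions.
    """
    if n_train + n_val > n_total:
        raise ValueError("train + val sizes exceed the dataset size")
    indices = list(range(n_total))
    random.Random(seed).shuffle(indices)  # local RNG, global state untouched
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]      # whatever remains is the test set
    return train, val, test


# Counts taken from the table: 1,014 images, 710 training, 151 validation.
train_idx, val_idx, test_idx = split_indices(1014, 710, 151)

# Proportions in percent, rounded to one decimal as in the table.
proportions = [round(100 * len(part) / 1014, 1)
               for part in (train_idx, val_idx, test_idx)]
```

Passing explicit counts instead of fractions avoids rounding ambiguity: a nominal 70/15/15 split of 1,014 images does not by itself determine whether the validation set has 151 or 152 samples.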

| Epoch | VGG16 Val Loss | VGG16 Val MAE | EfficientNetB0 Val Loss | EfficientNetB0 Val MAE |
|---|---|---|---|---|
| 1 | 1.8117 | 2.2562 | 0.6921 | 1.1066 |
| 3 | 1.5057 | 1.9558 | 0.7002 | 1.1084 |
| 5 | 1.4238 | 1.8677 | 0.6903 | 1.0896 |
| 10 | 1.2896 | 1.7282 | — | — |
| 13 | 1.2200 | 1.6582 | — | — |
| 15 | 1.2378 | 1.6823 | — | — |
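
When a validation set drives checkpoint selection, the choice reduces to picking the logged epoch with the lowest validation loss. The sketch below applies that rule to the epochs listed in the table only; the full per-epoch log is not reproduced here, so the true minimum may fall on an unlisted epoch. The function names `best_logged_epoch` and `relative_drop_pct` are hypothetical.

```python
# Validation loss at the epochs listed in the table (epoch -> val loss).
vgg16_val_loss = {1: 1.8117, 3: 1.5057, 5: 1.4238,
                  10: 1.2896, 13: 1.2200, 15: 1.2378}
effnet_val_loss = {1: 0.6921, 3: 0.7002, 5: 0.6903}


def best_logged_epoch(val_loss_by_epoch):
    """Return (epoch, loss) with the lowest validation loss among logged epochs."""
    epoch = min(val_loss_by_epoch, key=val_loss_by_epoch.get)
    return epoch, val_loss_by_epoch[epoch]


def relative_drop_pct(val_loss_by_epoch):
    """Percentage decrease from the first logged epoch to the best logged epoch."""
    first = val_loss_by_epoch[min(val_loss_by_epoch)]
    _, best = best_logged_epoch(val_loss_by_epoch)
    return 100.0 * (first - best) / first


vgg16_best = best_logged_epoch(vgg16_val_loss)
effnet_best = best_logged_epoch(effnet_val_loss)
vgg16_drop = relative_drop_pct(vgg16_val_loss)
effnet_drop = relative_drop_pct(effnet_val_loss)
```

On the listed values, VGG16 reaches its lowest validation loss at epoch 13 and rises again at epoch 15, with a decrease of roughly one third from epoch 1. EfficientNetB0 starts from a much lower loss and changes by well under 1% across its three listed epochs.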

| Model | RMSE | MAE | R² |
|---|---|---|---|
| VGG16 + PM2.5 hybrid | 78.71 | 58.78 | −0.794 |
| EfficientNetB0 + PM2.5 hybrid | 66.49 | 49.00 | −0.280 |
| Improvement (EffNet vs VGG) | −15.5% | −16.6% | +0.51 abs. |
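
The figures in the table can be cross-checked from the standard definitions of RMSE, MAE, and R². The sketch below is illustrative only: the helper names and the three-point toy data are assumptions, not the authors' evaluation code. It shows that R² falls below zero whenever a model's squared error exceeds that of a constant mean predictor, how the improvement row follows from the two model rows, and what test-set AQI standard deviation each row implies through R² = 1 - MSE / Var(y), assuming population variance.

```python
import math


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def relative_change_pct(baseline, new):
    return 100.0 * (new - baseline) / baseline


def implied_target_std(model_rmse, model_r2):
    """Invert R^2 = 1 - MSE / Var(y) to recover the std of the targets."""
    return math.sqrt(model_rmse ** 2 / (1.0 - model_r2))


# Toy data (hypothetical): a constant prediction away from the mean gives R^2 < 0.
toy_true = [50.0, 100.0, 150.0]
r2_biased = r2(toy_true, [150.0, 150.0, 150.0])   # worse than the mean predictor
r2_mean = r2(toy_true, [100.0, 100.0, 100.0])     # exactly the mean predictor

# Values reported in the table.
vgg = {"rmse": 78.71, "mae": 58.78, "r2": -0.794}
eff = {"rmse": 66.49, "mae": 49.00, "r2": -0.280}

rmse_change = relative_change_pct(vgg["rmse"], eff["rmse"])
mae_change = relative_change_pct(vgg["mae"], eff["mae"])
r2_gain = eff["r2"] - vgg["r2"]

std_from_vgg = implied_target_std(vgg["rmse"], vgg["r2"])
std_from_eff = implied_target_std(eff["rmse"], eff["r2"])
```

Both rows imply a target standard deviation of about 58.8 AQI units, which indicates the two models were scored on the same test targets. A predictor that always output the test-set mean would therefore reach an RMSE near 58.8, lower than either model's RMSE; this is what the negative R² values express.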

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).