2. Research Progress in Quantum State Purification and Quantum Error Correction

The core objective of quantum error correction is to protect quantum information from physical noise through encoding redundancy. Recent experimental milestones include real-time fault-tolerant error correction with the color code on trapped-ion platforms [13], fault-tolerant universal gate sets on logical qubits encoded in the seven-qubit code [14], and quantum error correction beyond the break-even point with discrete-variable bosonic encodings [15]; together, these results establish the practical viability of fault-tolerant quantum computation. The surface code, currently the most extensively studied topological quantum error-correcting code, is defined on a two-dimensional lattice and requires only nearest-neighbor coupling between qubits, which gives it a natural implementation advantage on hardware platforms such as superconducting quantum processors. Experiments on superconducting platforms have confirmed that, with distance-5 encoding, the logical error rate can be suppressed below that of a single physical qubit [16]. This work marks the crossing of the "break-even point" in quantum error correction and provides a direct experimental benchmark for extending the code distance. The practical feasibility of error-corrected quantum memories has been further validated by real-time quantum error correction beyond the break-even point on superconducting platforms, where model-free reinforcement learning was used to optimize the feedback strategy and achieved a coherence gain of more than a factor of two over the best physical qubit [17]. On neutral atom platforms, reconfigurable atomic arrays give quantum processors flexible long-range connectivity; the resulting logical quantum processor has demonstrated operations on surface-code and color-code logical qubits, including fault-tolerant logical GHZ state preparation, with up to 280 physical qubits [18]. This architecture validates the feasibility of parallel control at the logical level, while also revealing the strong influence of hardware topology on error correction performance. Interconnection network theory has systematically studied the graph-theoretic properties of such connectivity structures, including network diagnosability under comparison diagnosis models [19], edge connectivity of expanded hypercube-like architectures [20], Hamiltonian path properties in interconnection digraphs [21], and fault-tolerant path embedding in k-ary n-cube networks with faulty nodes [22]. These results provide mathematical tools that inform the topological design and fault-tolerance analysis of quantum processor architectures.
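The benefit of encoding redundancy, and the sense in which an encoded error rate can fall below the physical one, can be illustrated with the simplest classical analogue: an n-bit repetition code decoded by majority vote. The following is a minimal sketch that assumes independent bit flips with probability p on each bit; it is not a surface-code simulation, and the function name `repetition_logical_error` is hypothetical.

```python
from math import comb


def repetition_logical_error(p: float, n: int) -> float:
    """Probability that majority-vote decoding of an n-bit repetition code fails.

    Assumes independent bit flips with probability p on each of the n bits
    (n odd). Decoding fails when more than n // 2 bits are flipped.
    """
    if n % 2 == 0:
        raise ValueError("n must be odd so that majority vote is unambiguous")
    t = n // 2  # number of flips the code can correct
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(t + 1, n + 1))


if __name__ == "__main__":
    for p in (0.01, 0.1, 0.3):
        rates = [repetition_logical_error(p, n) for n in (1, 3, 5, 7)]
        print(f"p={p}: " + ", ".join(f"{r:.2e}" for r in rates))
```

For any p below 1/2, adding bits lowers the failure probability; at p = 0.01, for instance, the three-bit code fails with probability of about 2.98e-4, well below the unencoded 1e-2. The surface code shows the same qualitative behaviour below its threshold, but with a far more involved noise model and decoder.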

Encoding efficiency is the key bottleneck constraining resource overhead in large-scale fault-tolerant quantum computing. Quantum low-density parity-check (LDPC) codes, with their high encoding rate and favorable distance scaling, are considered strong candidates for overcoming the efficiency limitations of surface codes; a comprehensive review has systematically analyzed their construction, decoding, and fault-tolerance properties [23]. Research has shown that non-local syndrome extraction circuits, realized through atom rearrangement in reconfigurable atomic arrays, can run high-efficiency quantum LDPC codes with constant resource overhead, with fault-tolerant performance surpassing that of surface codes at scales of only a few hundred physical qubits [24]. The accuracy of the decoding algorithm also has a decisive impact on error correction effectiveness. AlphaQubit, a neural network decoder based on a recurrent transformer architecture, outperformed traditional matching decoders on real experimental data from the Google Sycamore processor and can adaptively learn complex noise characteristics such as crosstalk and leakage [25]. The experimental implementation of dynamic surface codes further exploits the temporal degree of freedom of error-correcting codes: by alternating the roles of data qubits and measurement qubits in each error correction round, this scheme achieves error correction with built-in leakage removal on a hexagonal lattice [26]. Magic state distillation is a key resource preparation step for universal fault-tolerant quantum computing. On superconducting processors, researchers used error correction encoding to prepare logical magic states with fidelity beyond the break-even point, demonstrating that adaptive circuits can improve magic state yield without increasing the number of physical qubits [27]. Fault-tolerant post-selection provides a systematic optimization framework for reducing magic state preparation overhead, significantly lowering the resources required for strong logical error suppression [28].
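The resource argument for high-rate LDPC codes can be made concrete by counting data qubits. The sketch below assumes that the rotated surface code stores one logical qubit in d^2 data qubits, i.e. [[d^2, 1, d]], and models the LDPC alternative as copies of a block code with hypothetical parameters [[n, k, d]]; it ignores ancilla qubits for syndrome extraction, and the specific numbers are illustrative rather than taken from [23] or [24].

```python
import math


def surface_code_data_qubits(num_logical: int, distance: int) -> int:
    """Data qubits for num_logical rotated-surface-code patches, one logical qubit each."""
    return num_logical * distance**2


def block_code_data_qubits(num_logical: int, n: int, k: int) -> int:
    """Data qubits when logical qubits are packed into copies of an [[n, k, d]] block code."""
    blocks = math.ceil(num_logical / k)  # a partially filled final block still costs n qubits
    return blocks * n


if __name__ == "__main__":
    K, d = 100, 12
    surface = surface_code_data_qubits(K, d)
    # Hypothetical rate-1/10 LDPC block with the same distance: [[200, 20, 12]].
    ldpc = block_code_data_qubits(K, n=200, k=20)
    print(f"surface code: {surface} data qubits; LDPC blocks: {ldpc}; ratio {surface / ldpc:.1f}")
```

With these assumed parameters the surface code needs 14,400 data qubits versus 1,000 for the block code, a ratio of 14.4. The gap widens with distance because the surface code's rate 1/d^2 vanishes, while a constant-rate code family keeps k/n fixed.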

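The overhead that [27] and [28] aim to reduce can be estimated from the classic 15-to-1 distillation protocol, whose output error to leading order is ε_out ≈ 35 ε_in^3. The sketch below, which ignores Clifford-gate errors and the protocol's failure probability, counts how many recursive rounds, and therefore how many raw magic states, are needed to reach a target error; the helper names are hypothetical.

```python
def distill_15_to_1(eps_in: float) -> float:
    """Leading-order output error of one 15-to-1 distillation round (Clifford errors ignored)."""
    return 35.0 * eps_in**3


def distillation_rounds(eps_in: float, target: float) -> tuple[int, int, float]:
    """Return (rounds, raw states consumed per output state, final error) for recursive 15-to-1."""
    eps, rounds = eps_in, 0
    while eps > target:
        new_eps = distill_15_to_1(eps)
        if new_eps >= eps:
            # 35 * eps^3 < eps only when eps < 1/sqrt(35), roughly 0.169
            raise ValueError("input error is above the 15-to-1 distillation threshold")
        eps, rounds = new_eps, rounds + 1
    return rounds, 15**rounds, eps


if __name__ == "__main__":
    rounds, raw, eps = distillation_rounds(1e-3, 1e-10)
    print(rounds, raw, eps)
```

Starting from ε_in = 10^-3, one round gives about 3.5e-8, so a 10^-10 target requires two rounds and 225 raw states per output state. The multiplicative growth of raw states with each round is what motivates lower-overhead schemes.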
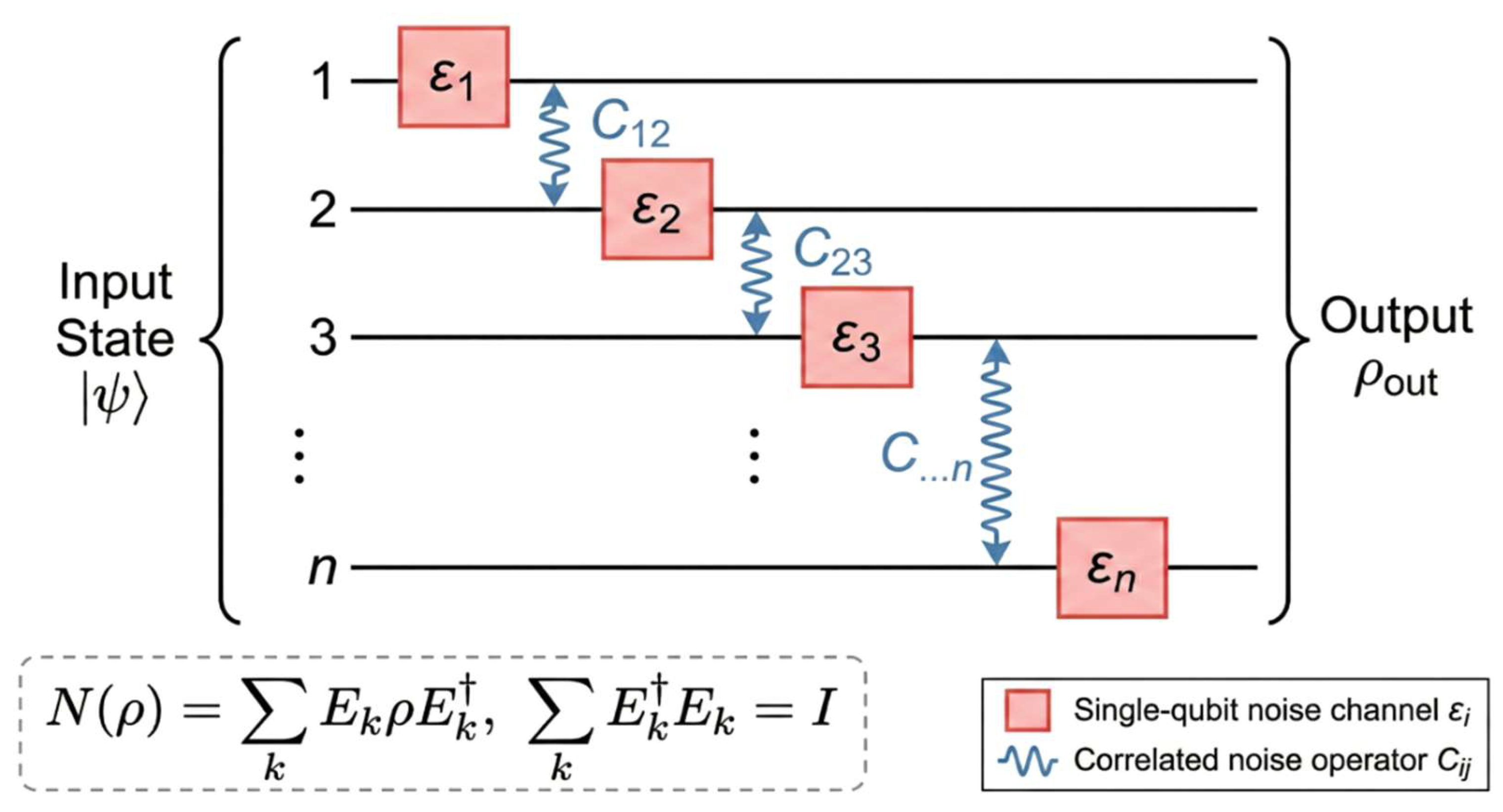

Alongside the maturation of quantum error correction, error mitigation for near-term noisy quantum devices constitutes a complementary technical approach. Probabilistic error cancellation obtains unbiased expectation value estimates by learning and inverting noise channels; experiments based on sparse Pauli-Lindblad noise models have demonstrated effective mitigation of correlated noise on a 20-qubit superconducting processor [29]. Large-scale error mitigation experiments by the IBM team on 127-qubit processors demonstrated the practical value of quantum computing before the advent of fault tolerance [30]. That experiment combined zero-noise extrapolation with probabilistic error cancellation and obtained observable estimates that outperformed classical approximations on a 60-layer Ising model simulation circuit. However, the scalability of error mitigation faces fundamental theoretical constraints: even when the circuit depth only slightly exceeds constant order, the number of samples required in the worst case grows super-polynomially [31]. This result exposes an intrinsic bottleneck of mitigation strategies in large-scale applications and, from a different angle, illustrates the necessity of integrating purification operations with error correction mechanisms. Scalability experiments of zero-noise extrapolation on larger circuits complement this picture: at 26 qubits and a circuit depth of 120 layers, the accuracy of the mitigated observables notably exceeded expectations [32]. The challenge of coping with non-stationary dynamics also extends beyond quantum computing; in financial time series analysis, for example, spatio-temporal graph attention mechanisms have been developed to capture regime-switching behavior in non-stationary environments [33]. The need for adaptive strategies that respond to time-varying conditions is equally critical in quantum error correction, where processor noise characteristics are known to drift over time.
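Zero-noise extrapolation, used in [30] and [32], measures an observable at several deliberately amplified noise levels λ ≥ 1 and extrapolates the fitted curve back to λ = 0. A minimal sketch of polynomial (Richardson-style) extrapolation with NumPy follows; it assumes the noisy expectation values have already been measured, and the quadratic test data are synthetic, not experimental. The function name `zero_noise_extrapolate` is hypothetical.

```python
import numpy as np


def zero_noise_extrapolate(scales, values, degree=None):
    """Fit E(lambda) with a polynomial and evaluate it at lambda = 0.

    With degree = len(scales) - 1 (the default) the fit interpolates every point,
    which is Richardson extrapolation.
    """
    scales = np.asarray(scales, dtype=float)
    values = np.asarray(values, dtype=float)
    if degree is None:
        degree = len(scales) - 1
    coeffs = np.polyfit(scales, values, degree)
    return float(np.polyval(coeffs, 0.0))


if __name__ == "__main__":
    lambdas = [1.0, 2.0, 3.0]
    # Hypothetical noisy expectation values following E(lambda) = 0.8 - 0.1*lambda + 0.01*lambda^2.
    noisy = [0.8 - 0.1 * lam + 0.01 * lam**2 for lam in lambdas]
    print(zero_noise_extrapolate(lambdas, noisy))
```

With as many fit points as polynomial coefficients, the estimate is exact when the true E(λ) is a polynomial of that degree. For other noise responses it is biased, and the statistical error grows with the fit degree, which is one facet of the sampling cost of mitigation.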

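The worst-case bound of [31] is a general result, but the flavor of the cost is easy to see in probabilistic error cancellation. Assume each of N gates is followed by single-qubit depolarizing noise D(ρ) = (1 - p)ρ + p I/2, which shrinks Bloch-vector components by f = 1 - p. Its inverse can be written as a quasi-probability mixture of Pauli conjugations with one-norm γ = (3/f - 1)/2, and the number of samples needed for a fixed precision grows by a factor of γ^(2N). This sketch only illustrates exponential growth in N under that assumed noise model; it is not the bound of [31], and the function names are hypothetical.

```python
def pec_gamma_depolarizing(p: float) -> float:
    """One-norm of the quasi-probability decomposition of the inverse depolarizing channel.

    Assumes D(rho) = (1 - p) rho + p I/2, which shrinks Bloch components by f = 1 - p.
    The inverse is a*rho + b*(X rho X + Y rho Y + Z rho Z) with a + 3b = 1 (trace
    preservation) and a - b = 1/f (Pauli components rescaled by 1/f).
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must lie in [0, 1)")
    f = 1.0 - p
    a = (3.0 / f + 1.0) / 4.0
    b = (1.0 - 1.0 / f) / 4.0  # negative for p > 0: the inverse is not a physical channel
    return abs(a) + 3.0 * abs(b)


def pec_sampling_overhead(p: float, num_gates: int) -> float:
    """Multiplicative increase in samples needed for fixed precision, gamma^(2N)."""
    return pec_gamma_depolarizing(p) ** (2 * num_gates)


if __name__ == "__main__":
    for n_gates in (10, 100, 1000):
        print(n_gates, f"{pec_sampling_overhead(0.01, n_gates):.3e}")
```

At p = 0.01, γ ≈ 1.015 per gate, yet 1000 noisy gates already multiply the required sample count by a factor on the order of 10^13.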
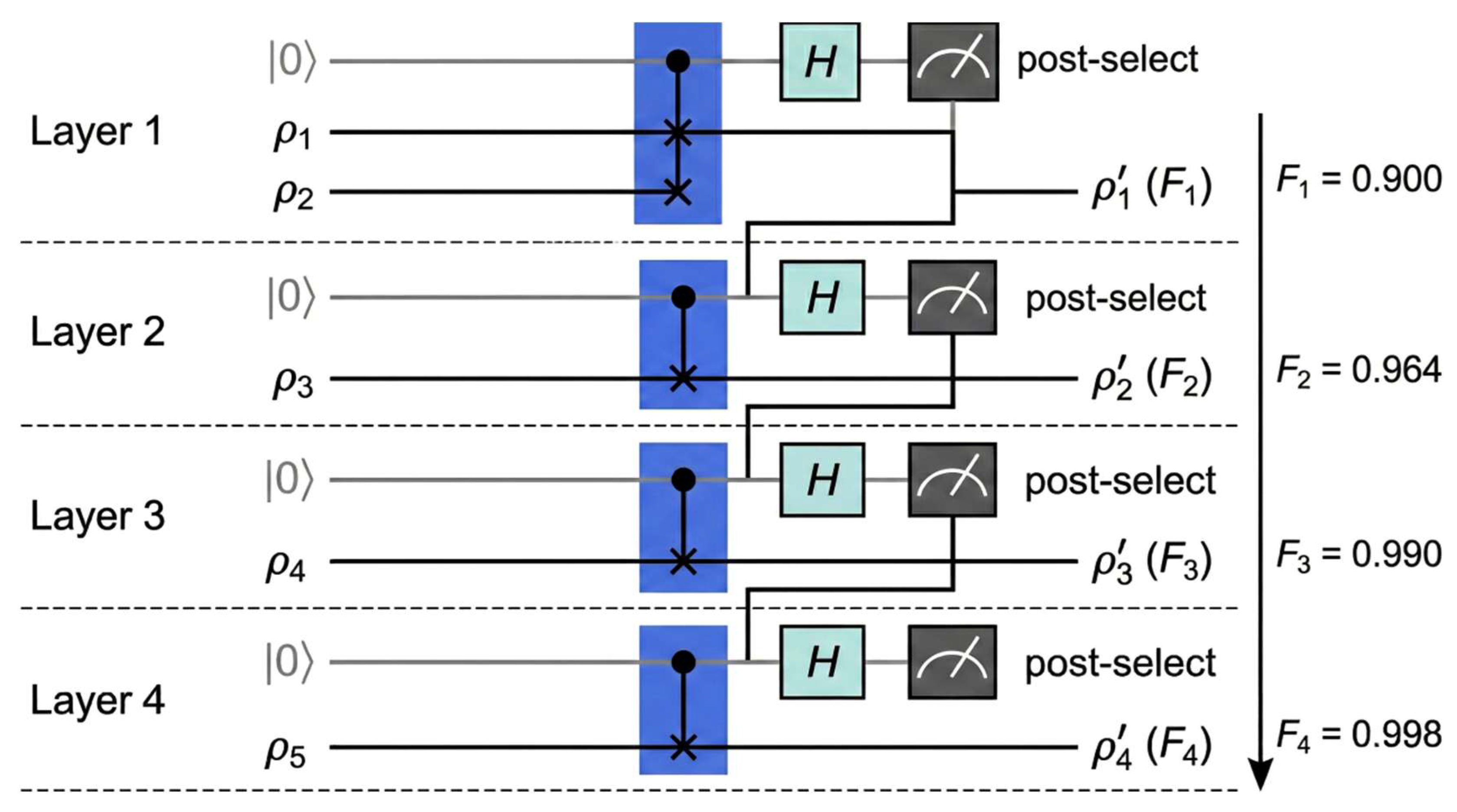

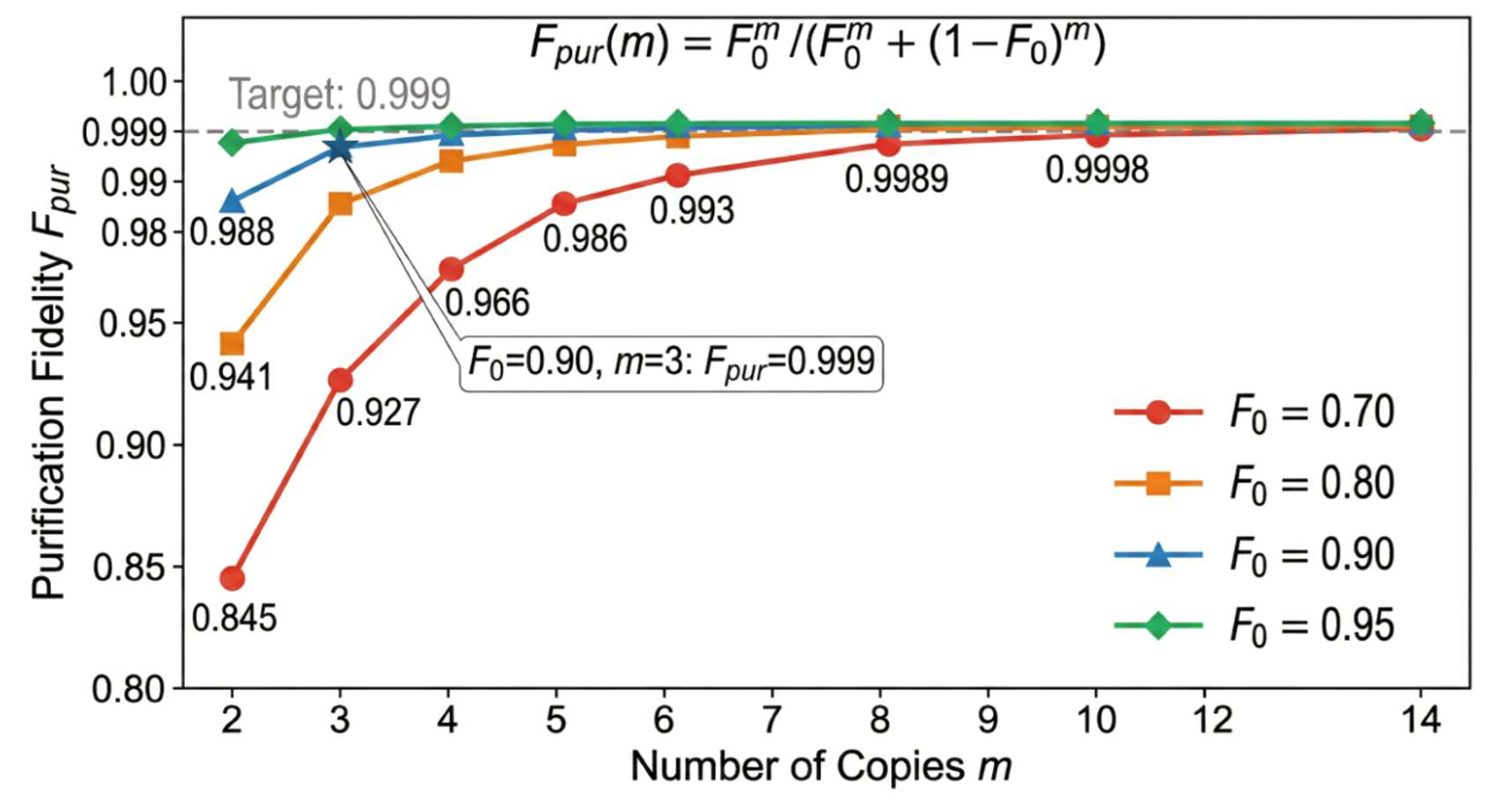

Quantum state purification provides a noise suppression method whose theoretical basis does not depend on a specific noise model. At the algorithmic level, a streaming purification protocol based on SWAP tests extends purification to quantum states of arbitrary dimension and proves that recursive SWAP-test schemes are asymptotically optimal in sample complexity [34]. Optimality analysis of purification protocols shows that, under depolarizing noise, there is a precise trade-off between purification fidelity and success probability, which guides the choice of purification strategy in resource-constrained scenarios [35]. The virtual channel purification protocol extends purification from the level of quantum states to that of quantum channels; by jointly designing flag fault-tolerance techniques and virtual state purification, it provides rigorous error suppression guarantees without knowledge of the target quantum state or the noise model [36]. The joint optimization of time and space overhead in fault-tolerant quantum computing also merits attention; a scheme combining non-zero-rate quantum LDPC codes with concatenated Steane codes has been proven to achieve fault-tolerant computation with constant space overhead and poly-logarithmic time overhead [37]. At the intersection of error correction and mitigation, experiments applying zero-noise extrapolation to error correction circuits reveal that logical errors depend polynomially on noise intensity, which makes extrapolation methods naturally compatible with the error correction framework [38]. Comprehensive experimental validation of fault-tolerant architectures has reached a milestone on neutral atom platforms: this work integrated surface code error correction, logical entanglement via transversal gates, and transversal teleportation based on the [[15,1,3]] code in a system of up to 448 atoms, achieving for the first time all core elements of universal fault-tolerant quantum computation within a single experimental system [39]. The theoretical breakthrough of constant-overhead magic state distillation shows that carefully constructed error-correcting codes can drive the distillation overhead exponent γ to zero, removing a long-standing resource bottleneck in universal quantum computing [40]. Zero-level distillation prepares high-fidelity magic states at the physical level, reducing the number of physical qubits required for distillation by one to two orders of magnitude [41]. New quantum error mitigation strategies based on structural encoding and classical error-correcting codes further confirm the central role of coding redundancy in noise suppression [42].
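The primitive behind SWAP-test purification can be checked numerically for a single qubit. Conditioning on the SWAP test's symmetric outcome projects two copies of ρ onto the symmetric subspace with P_sym = (I + SWAP)/2; keeping one copy maps a state with Bloch-vector length r to one with length 4r/(3 + r^2), with success probability (3 + r^2)/4. This shows the fidelity-versus-success-probability trade-off in its simplest form. It is the textbook two-copy step, not the streaming protocol of [34] or the optimal protocols of [35], and the helper names are hypothetical.

```python
import numpy as np

# Single-qubit Pauli matrices X, Y, Z.
PAULIS = (
    np.array([[0, 1], [1, 0]], dtype=complex),
    np.array([[0, -1j], [1j, 0]], dtype=complex),
    np.array([[1, 0], [0, -1]], dtype=complex),
)


def bloch_state(r_vec):
    """Density matrix (I + r . sigma) / 2 for a Bloch vector r_vec with |r_vec| <= 1."""
    return 0.5 * (np.eye(2, dtype=complex) + sum(r * s for r, s in zip(r_vec, PAULIS)))


def bloch_length(rho):
    """Length of the Bloch vector, |r| = sqrt(sum_k Tr(rho sigma_k)^2)."""
    return float(np.sqrt(sum(np.real(np.trace(rho @ s)) ** 2 for s in PAULIS)))


def swap_test_purify(rho):
    """Project rho (x) rho onto the symmetric subspace; return (first-copy state, success prob)."""
    swap = np.zeros((4, 4), dtype=complex)
    for i in range(2):
        for j in range(2):
            swap[2 * j + i, 2 * i + j] = 1.0  # |i j> -> |j i>
    proj = 0.5 * (np.eye(4) + swap)
    post = proj @ np.kron(rho, rho) @ proj
    p_success = float(np.real(np.trace(post)))
    # Partial trace over the second copy: sum_b post[(a, b), (c, b)].
    reduced = np.einsum("ijkj->ik", post.reshape(2, 2, 2, 2))
    return reduced / p_success, p_success


if __name__ == "__main__":
    eps = 0.2                               # depolarizing strength (assumed)
    rho = bloch_state([0.0, 0.0, 1 - eps])  # noisy version of |0>
    out, p_succ = swap_test_purify(rho)
    print(f"r: {bloch_length(rho):.4f} -> {bloch_length(out):.4f}, success prob {p_succ:.4f}")
```

For ε = 0.2 (r = 0.8), the output has r ≈ 0.879 and the step succeeds with probability 0.91, so the fidelity with |0⟩, (1 + r)/2, rises from 0.90 to about 0.94.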

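The distillation overhead exponent γ can be made concrete. Under the convention of Bravyi and Haah, a distillation protocol built on an [[n, k, d]] code consumes O(log^γ(1/ε)) noisy states per output state of error ε, with γ = log(n/k)/log d. The sketch below evaluates this for the 15-to-1 protocol ([[15, 1, 3]]) and shows that γ = 0, the constant-overhead regime of [40], makes the leading-order cost independent of ε. Constant factors are dropped, and the function names are hypothetical.

```python
import math


def overhead_exponent(n: int, k: int, d: int) -> float:
    """gamma = log(n/k) / log(d) for a distillation code with parameters [[n, k, d]]."""
    if d < 2:
        raise ValueError("distance must be at least 2")
    return math.log(n / k) / math.log(d)


def raw_states_per_output(eps_target: float, gamma: float) -> float:
    """Leading-order scaling log(1/eps)^gamma, constant factors dropped."""
    return math.log(1.0 / eps_target) ** gamma


if __name__ == "__main__":
    g = overhead_exponent(15, 1, 3)  # about 2.46 for 15-to-1
    for eps in (1e-6, 1e-10, 1e-15):
        print(
            f"eps={eps:.0e}: 15-to-1 ~ {raw_states_per_output(eps, g):.1f}, "
            f"gamma=0 ~ {raw_states_per_output(eps, 0.0):.1f}"
        )
```

Because the scaling is polylogarithmic, even γ ≈ 2.46 grows slowly with the target accuracy; driving γ to zero removes this growth entirely at leading order.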
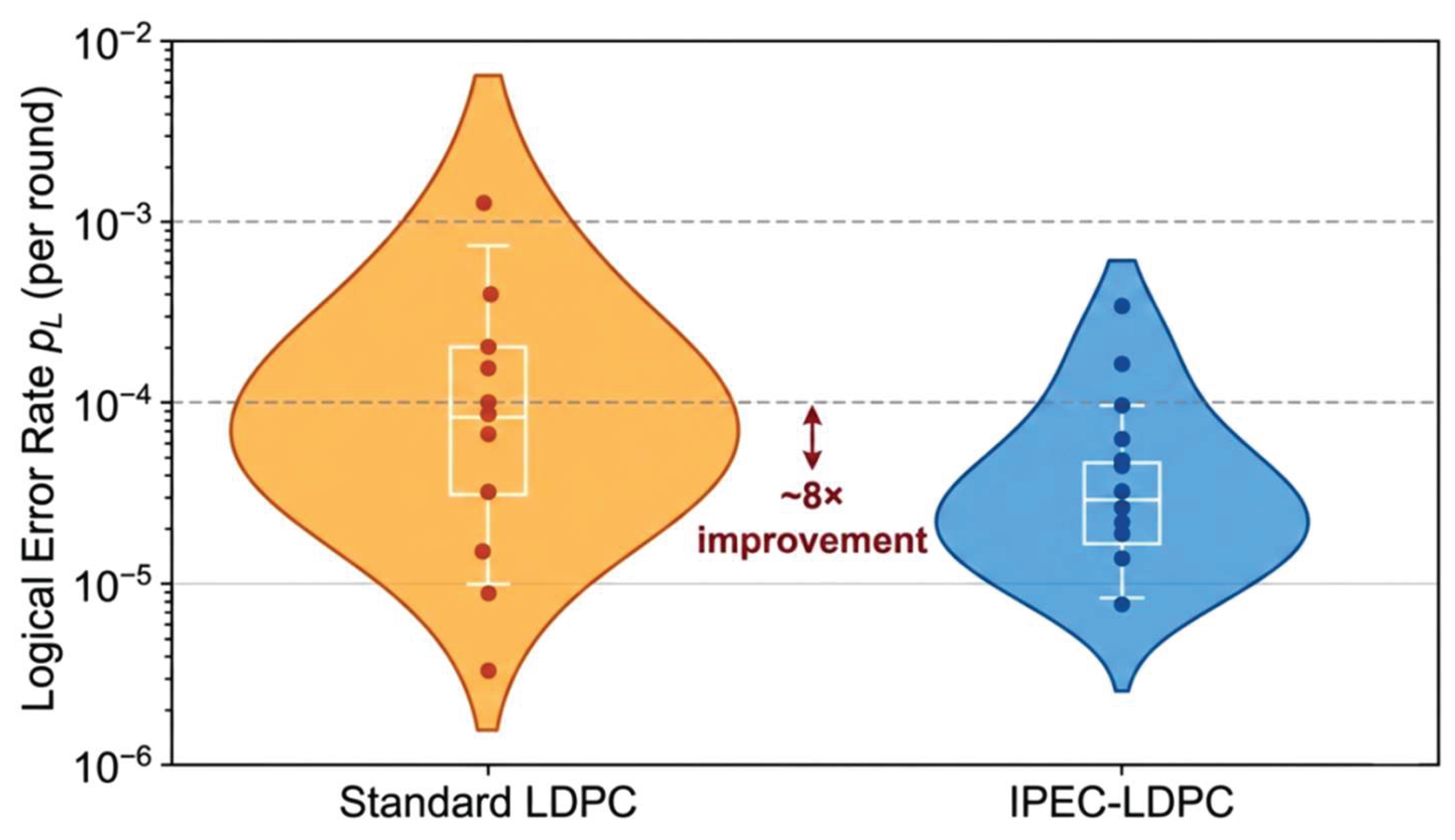

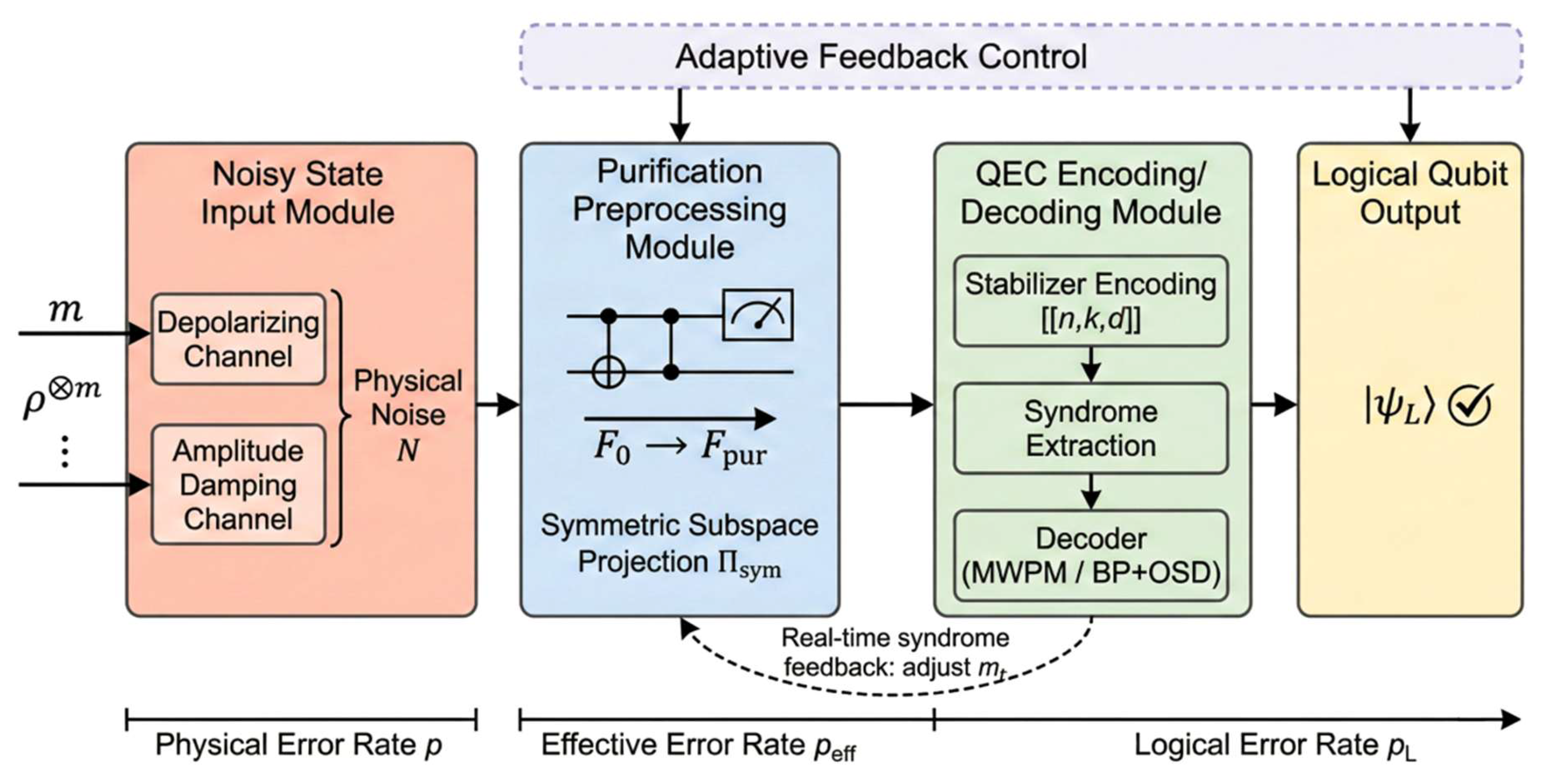

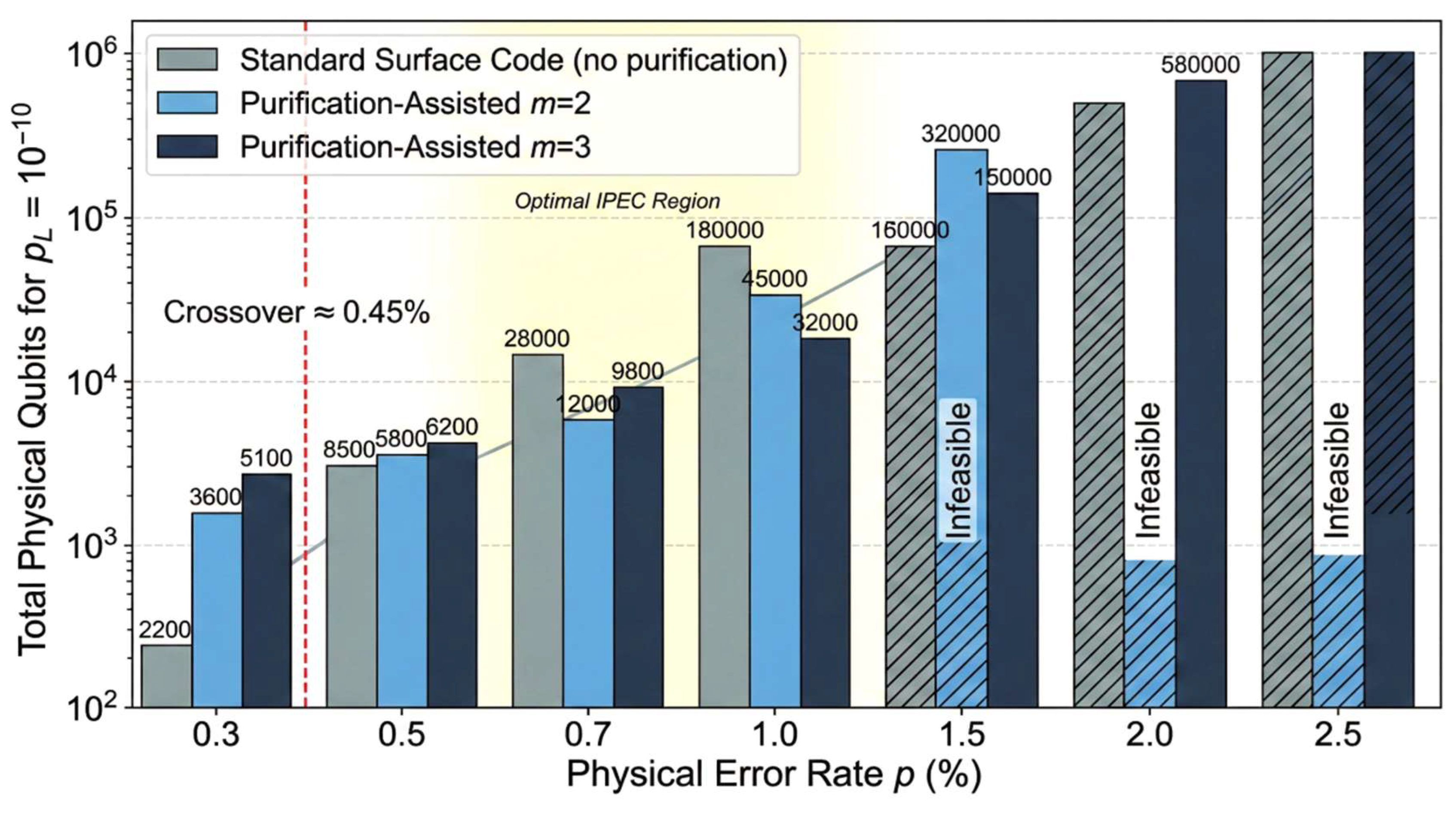

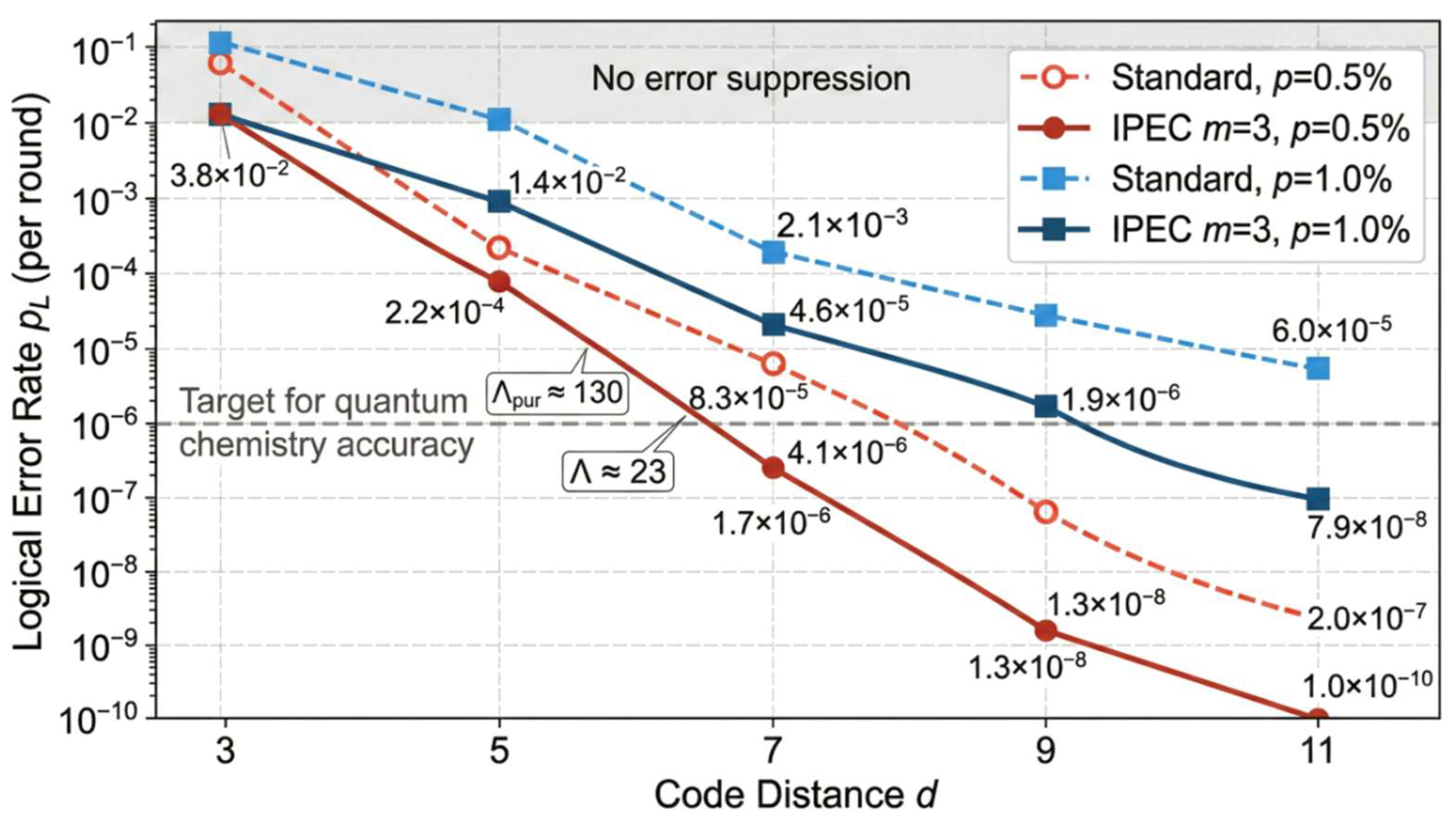

To clearly position the contributions of this work relative to existing approaches, Table 1 provides a comparative summary of representative purification-related methods and the framework proposed in this paper.

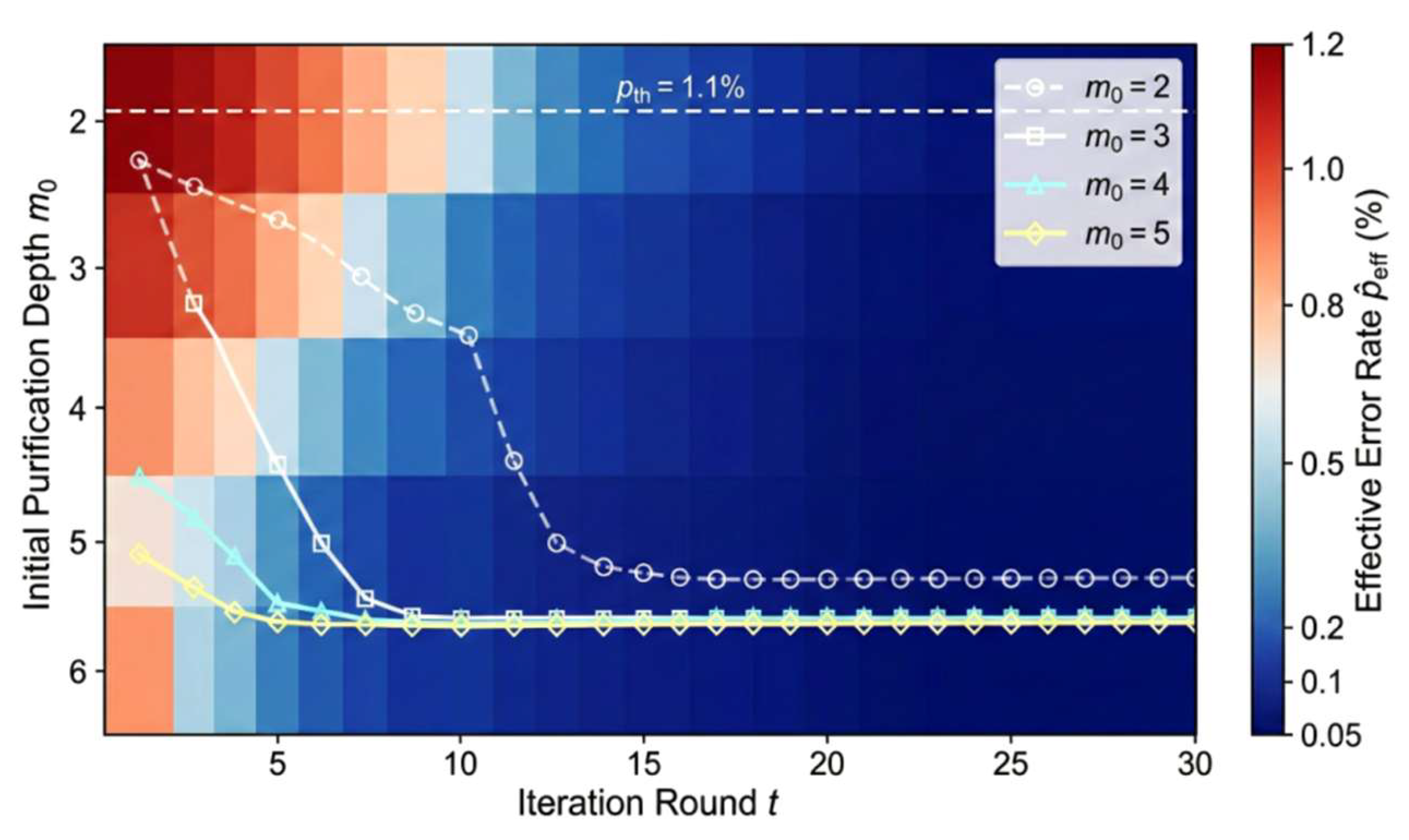

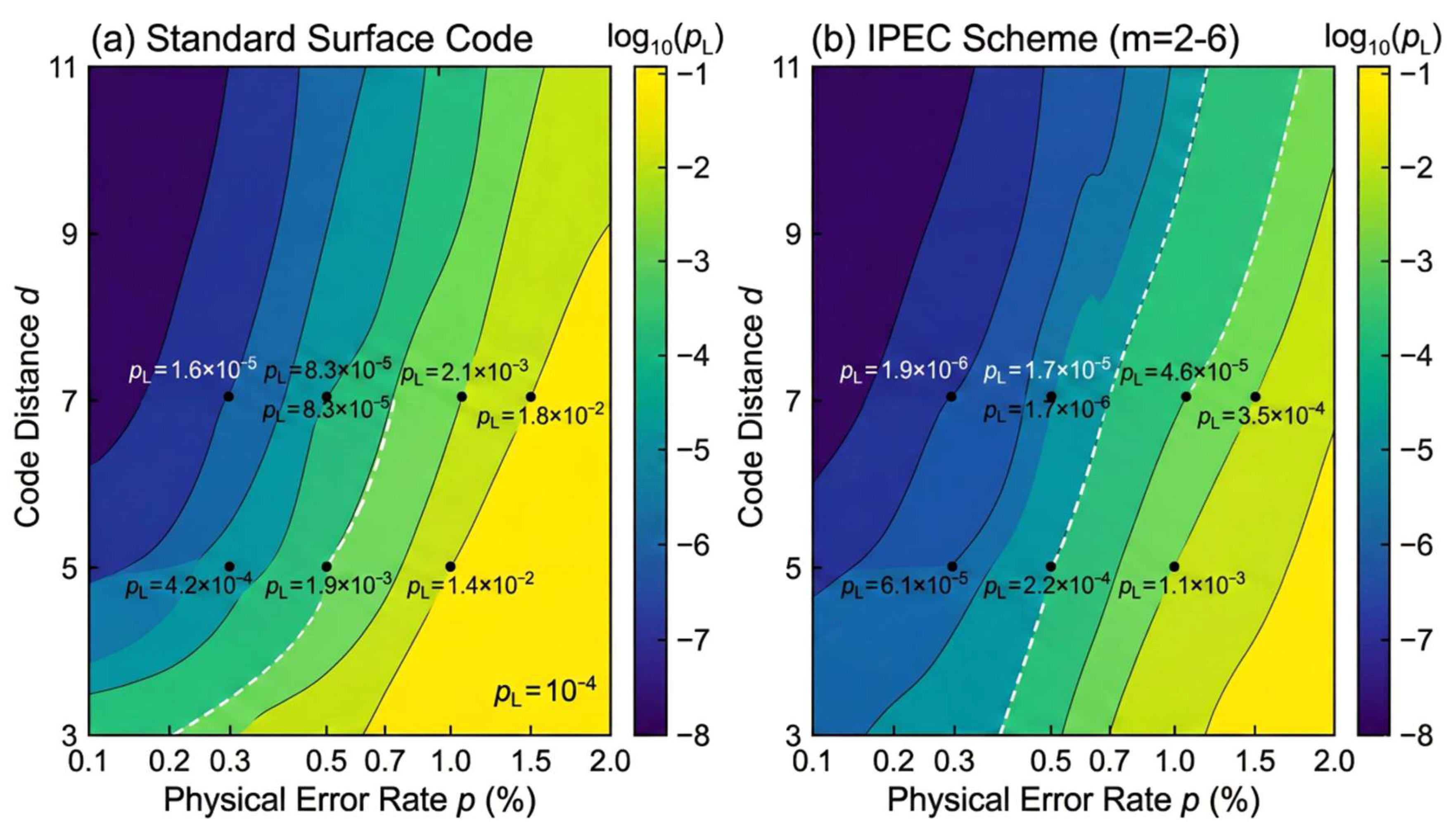

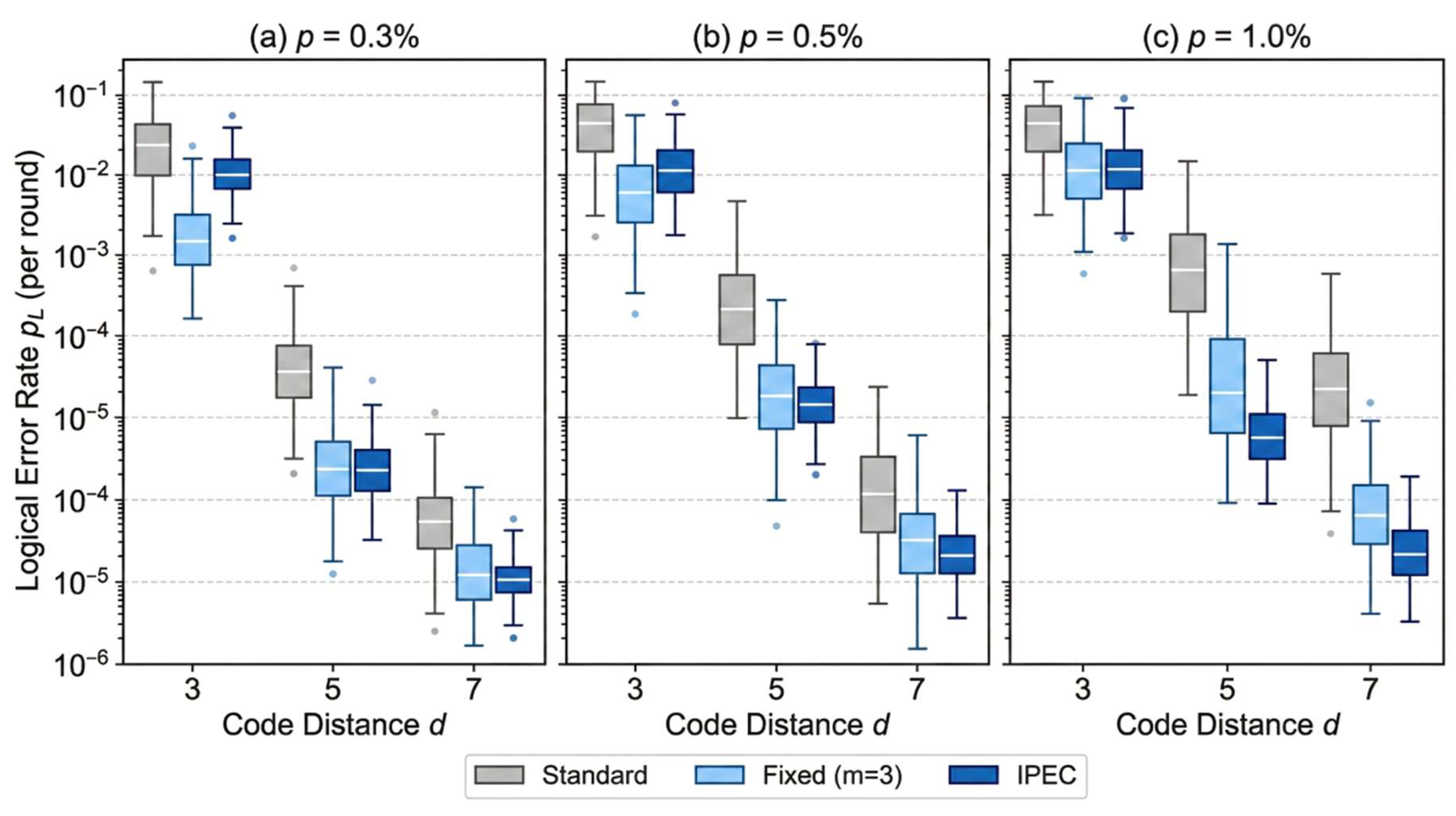

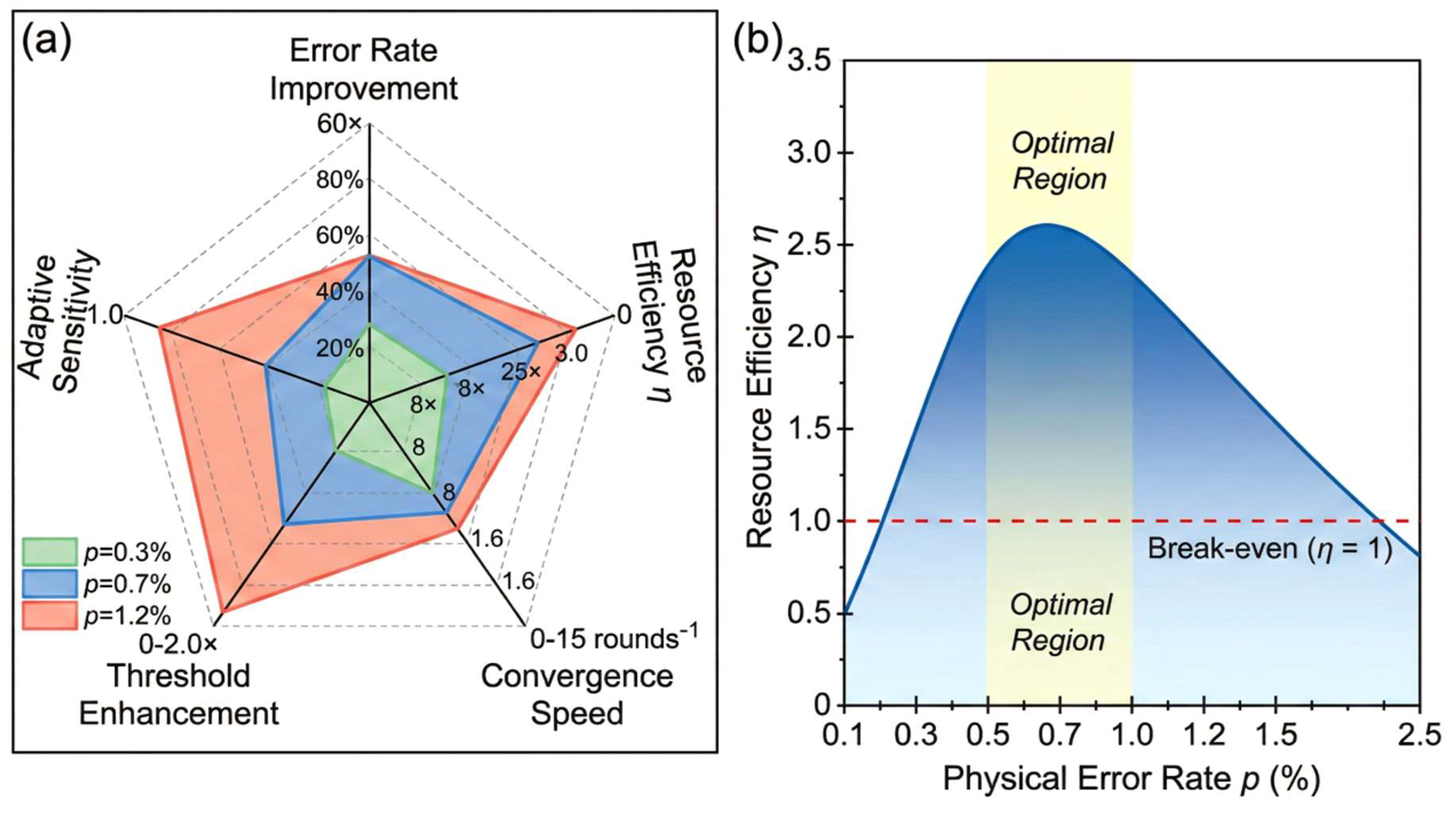

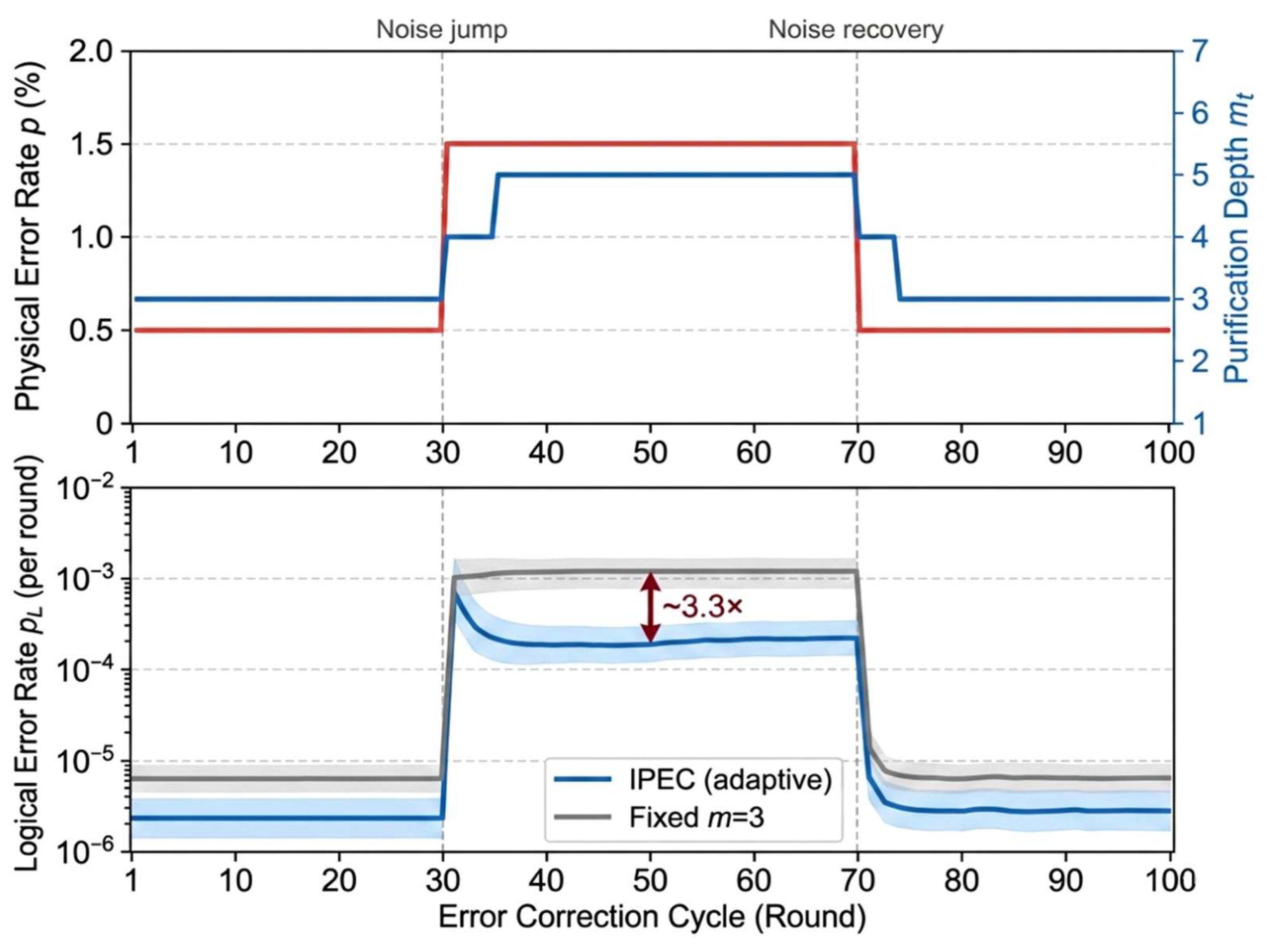

As shown in Table 1, three key distinctions differentiate the proposed framework from existing approaches. First, this work establishes a unified framework that systematically embeds purification into the error correction pipeline as an entropy suppression preprocessing layer, rather than treating purification and error correction as isolated, single-point techniques. Second, the IPEC algorithm is not a simple superposition of purification and error correction; its core innovation is an adaptive feedback mechanism that dynamically adjusts purification depth based on real-time syndrome information, enabling the system to respond to runtime noise fluctuations, a capability absent from all existing purification schemes. Third, this work quantitatively characterizes the threshold enhancement (from approximately 1.1% to approximately 2.0% with three copies), whereas prior works either offer only qualitative theoretical analysis or rely solely on experimental observations without closed-form threshold expressions.
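The practical meaning of raising the threshold from about 1.1% to about 2.0% can be gauged with the widely used heuristic p_L ≈ A (p/p_th)^((d+1)/2) for a distance-d code operated below threshold. The prefactor A = 0.1, the physical error rate p = 0.5%, and the target of 10^-12 are assumptions for illustration only; this heuristic is not the closed-form threshold expression derived in this paper, and it ignores the cost of preparing the extra copies. The function names are hypothetical.

```python
def logical_error_rate(p: float, p_th: float, d: int, prefactor: float = 0.1) -> float:
    """Heuristic p_L ~ A (p / p_th)^((d + 1) / 2) for a distance-d code below threshold."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)


def min_odd_distance(p: float, p_th: float, target: float,
                     prefactor: float = 0.1, d_max: int = 201) -> int:
    """Smallest odd distance d >= 3 whose heuristic logical error rate meets the target."""
    if p >= p_th:
        raise ValueError("heuristic only applies below threshold (p < p_th)")
    for d in range(3, d_max + 1, 2):
        if logical_error_rate(p, p_th, d, prefactor) <= target:
            return d
    raise ValueError("target not reached within d_max")


if __name__ == "__main__":
    p, target = 0.005, 1e-12  # assumed physical error rate and target logical error rate
    for p_th in (0.011, 0.020):  # threshold values quoted in the text
        d = min_odd_distance(p, p_th, target)
        print(f"p_th={p_th:.3f}: d={d}, about {d * d} data qubits per logical qubit")
```

Under these assumptions, the required distance drops from 65 to 37, roughly a threefold reduction in data qubits per logical qubit (4225 to 1369), before accounting for the copies consumed by purification.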

Taken together, these advances show that experimental quantum error correction has crossed fault-tolerance thresholds on multiple hardware platforms, that error mitigation has achieved notable noise suppression on near-term devices, and that quantum state purification theory has made systematic progress in algorithmic efficiency and optimality. However, existing work still leaves four notable gaps. First, purification operations have not yet been incorporated as integral components of the encoding and decoding processes of error-correcting codes. Second, there is no dynamic coordination mechanism, driven by real-time feedback, between purification rounds and error correction strategies. Third, no information-theoretic account has been established of how purification reduces the von Neumann entropy of noisy quantum states, nor of the quantitative relationship between the entropy reduction rate and error correction performance. Fourth, the quantitative contribution of purification to fault-tolerance thresholds and logical error rates has not yet been characterized analytically. These unresolved problems constitute the starting point of the research in this paper.
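To make the entropy quantity in the third gap concrete, the sketch below tracks the von Neumann entropy of a depolarized qubit under repeated two-copy symmetric-subspace purification. It uses the single-qubit map r → 4r/(3 + r^2) for the Bloch-vector length, the standard result for projecting two identical copies onto the symmetric subspace and keeping one. It ignores the success probability of each step and the 2^k copies that k recursive rounds consume, and it does not address the link to error correction performance, which remains the open question. The function names are hypothetical.

```python
import math


def binary_entropy(x: float) -> float:
    """Shannon entropy in bits of the distribution (x, 1 - x)."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1 - x) * math.log2(1 - x)


def qubit_entropy(r: float) -> float:
    """Von Neumann entropy (bits) of a qubit with Bloch length r; eigenvalues are (1 +/- r)/2."""
    return binary_entropy((1 + r) / 2)


def purify_bloch(r: float) -> float:
    """Bloch length after one successful two-copy symmetric-subspace projection."""
    return 4 * r / (3 + r**2)


def entropy_trajectory(eps: float, rounds: int) -> list[float]:
    """Entropy of (1 - eps)|psi><psi| + eps I/2 after 0, 1, ..., rounds purification steps."""
    r = 1 - eps
    traj = [qubit_entropy(r)]
    for _ in range(rounds):
        r = purify_bloch(r)
        traj.append(qubit_entropy(r))
    return traj


if __name__ == "__main__":
    for k, s in enumerate(entropy_trajectory(0.2, 4)):
        print(f"round {k}: S = {s:.4f} bits")
```

Since 4r/(3 + r^2) > r for every 0 < r < 1, each step strictly increases the Bloch length and therefore strictly lowers the entropy, while the maximally mixed (r = 0) and pure (r = 1) states are fixed points.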