6.1. Stratification Granularity and Detection Power

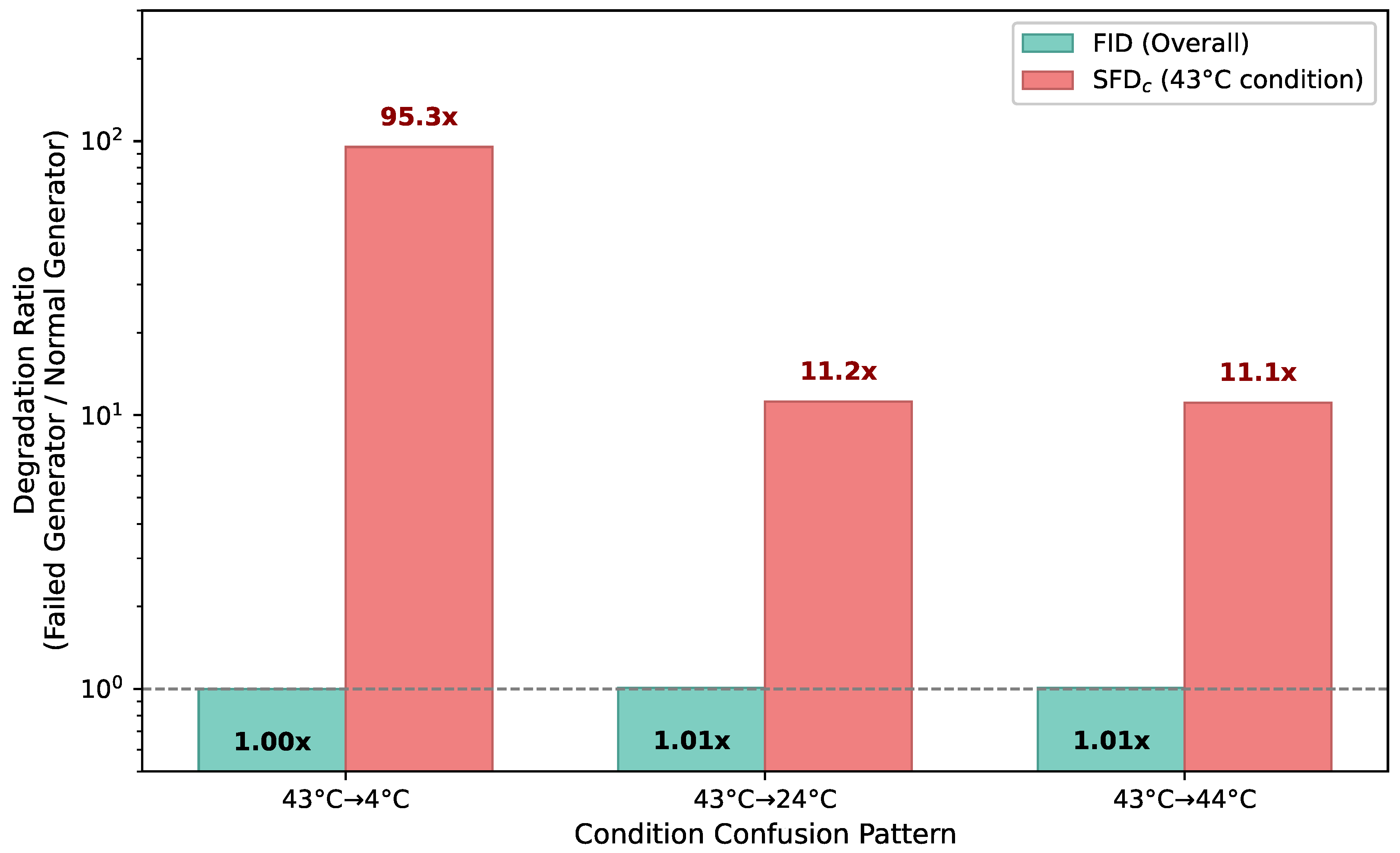

The most important insight to emerge from the eight experiments is a consistent pattern: FID’s dilution problem stems from the absence of stratification, and detection power improves as stratification granularity increases.

Table 15 summarizes the detection performance of each SFD variant in the CVAE Model D experiment.

Whereas FID judges the model as essentially “no problem” (1.01×), stratification by condition reveals a 1.97× quality gap, and adding the temporal axis raises it further to 3.18×. This progressive increase shows that the SFD framework offers a range of diagnostics governed by a single control parameter: the choice of stratification granularity.
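To make this granularity parameter concrete, the sketch below assigns every feature vector a stratum key at three levels: no stratification (the pooled FID setting), condition only, and condition × temporal segment. It is a minimal illustration under stated assumptions: each series is a 1-D NumPy array split into K contiguous segments with `np.array_split`, and the per-segment features (mean, standard deviation, least-squares slope), the function names `segment_features` and `stratify`, and the synthetic series are all hypothetical placeholders rather than the paper’s feature extractor.

```python
import numpy as np


def segment_features(series, n_segments):
    """Split a 1-D series into contiguous segments and compute per-segment features.

    Hypothetical features: mean, standard deviation and least-squares slope.
    """
    rows = []
    for seg in np.array_split(np.asarray(series, dtype=float), n_segments):
        t = np.arange(len(seg))
        slope = np.polyfit(t, seg, 1)[0] if len(seg) > 1 else 0.0
        rows.append([seg.mean(), seg.std(), slope])
    return np.array(rows)


def stratify(dataset, granularity, n_segments=4):
    """Return (features, keys) at one of three stratification granularities.

    dataset: list of (condition, series) pairs.
    granularity: "none" (pooled, as in FID), "condition", or "condition_time".
    """
    features, keys = [], []
    for condition, series in dataset:
        if granularity == "condition_time":
            # One feature vector and one (condition, segment index) key per segment.
            for k, row in enumerate(segment_features(series, n_segments)):
                features.append(row)
                keys.append((condition, k))
        else:
            # One feature vector for the whole series.
            features.append(segment_features(series, 1)[0])
            keys.append("all" if granularity == "none" else condition)
    return np.array(features), keys


# Hypothetical degradation-like series at three temperatures, 10 series each.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 200)
dataset = [(temp, 1.0 - 0.2 * t - 0.1 * (temp / 35) * t**2 + 0.001 * rng.standard_normal(200))
           for temp in (15, 25, 35) for _ in range(10)]

for granularity in ("none", "condition", "condition_time"):
    _, keys = stratify(dataset, granularity)
    print(granularity, len(set(keys)))  # 1, 3 and 12 strata respectively
```

Only the key changes between levels, which is what lets one evaluation pipeline move from FID-style pooling to finer stratification.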

There is, however, a counterbalancing consideration. Finer stratification reduces the number of samples per stratum, which in turn degrades statistical stability. As the K-sensitivity analysis showed, feature estimation became unreliable at large K. In practice, stratification granularity should follow physically meaningful divisions (e.g., condition parameters, or early-phase versus late-phase degradation) while ensuring that each stratum contains enough samples (as a rough guideline, at least five).
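The sample-size guideline can be enforced directly when computing per-stratum distances. The sketch below fits a Gaussian to each stratum’s feature vectors, computes the Fréchet distance between the real and generated fits, and returns `None` for any stratum with fewer than `min_samples` (default 5, following the rough guideline above) on either side. The ridge `eps` added to each covariance, the function names, and the synthetic data are assumptions for illustration, and how per-stratum values are aggregated into a single SFD score is deliberately not shown.

```python
import numpy as np
from scipy import linalg


def frechet_distance(x, y, eps=1e-6):
    """Fréchet distance between Gaussian fits to two sample matrices (rows = samples)."""
    d = x.shape[1]
    # A small ridge keeps sparse-stratum covariances positive definite (assumed value).
    cov_x = np.atleast_2d(np.cov(x, rowvar=False)) + eps * np.eye(d)
    cov_y = np.atleast_2d(np.cov(y, rowvar=False)) + eps * np.eye(d)
    covmean = np.real(linalg.sqrtm(cov_x @ cov_y))  # drop round-off imaginary parts
    diff = x.mean(axis=0) - y.mean(axis=0)
    return float(diff @ diff + np.trace(cov_x + cov_y - 2.0 * covmean))


def stratified_fd(real_x, real_keys, gen_x, gen_keys, min_samples=5):
    """Per-stratum FD; strata with too few samples on either side map to None."""
    real_keys, gen_keys = np.asarray(real_keys), np.asarray(gen_keys)
    results = {}
    for key in np.unique(real_keys):
        r, g = real_x[real_keys == key], gen_x[gen_keys == key]
        if min(len(r), len(g)) < min_samples:
            results[key.item()] = None  # too sparse for a stable covariance estimate
        else:
            results[key.item()] = frechet_distance(r, g)
    return results


# Hypothetical 3-D features: conditions 15 and 25 are well populated, 35 is sparse.
rng = np.random.default_rng(0)
keys = np.repeat([15, 25, 35], [40, 40, 3])
real_x = rng.standard_normal((len(keys), 3)) + keys[:, None] / 10
gen_x = rng.standard_normal((len(keys), 3)) + keys[:, None] / 10
gen_x[keys == 25] += 2.0  # generation failure localized to one condition
fd_by_stratum = stratified_fd(real_x, keys, gen_x, keys)
print(fd_by_stratum)  # the 25 entry is far larger than the 15 entry; 35 maps to None
```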

6.3. Mathematical Structure of Dilution: Variance Decomposition and Entropy

Why does dilution occur in FID? A deeper understanding can be obtained by connecting SFD to well-established results in multivariate statistics and information theory. The analysis below does not introduce new mathematics; rather, it draws on the classical covariance decomposition and the entropy of Gaussian mixtures to provide an interpretive framework that clarifies why SFD avoids dilution and when SFD is most beneficial.

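Let the conditional distribution under condition $c$ be $p(x \mid c)$, with mean $\mu_c$ and covariance $\Sigma_c$. The covariance matrix $\Sigma$ of the mixture distribution $p(x) = \sum_c p(c)\, p(x \mid c)$ decomposes into within-group and between-group components:

$$
\Sigma = \underbrace{\sum_c p(c)\, \Sigma_c}_{\Sigma_W} + \underbrace{\sum_c p(c)\, (\mu_c - \bar{\mu})(\mu_c - \bar{\mu})^\top}_{\Sigma_B},
$$

where $\bar{\mu} = \sum_c p(c)\, \mu_c$ is the overall mean.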

This decomposition has the same structure as the total-variance decomposition in analysis of variance (ANOVA): total variance = between-group variance + within-group variance. Because FID is computed using the mixture covariance $\Sigma$, it incorporates the between-group component $\Sigma_B$. When the conditional means differ substantially across conditions, as they do for degradation patterns at 4 °C versus 43 °C, $\Sigma_B$ becomes large and inflates $\Sigma$ well beyond any individual $\Sigma_c$.
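The decomposition can be checked numerically. The sketch below uses hypothetical 2-D feature vectors for three conditions with widely separated means, forms $\Sigma_W$ and $\Sigma_B$ from per-condition sample moments, and compares their sum with the pooled covariance. With population-style (ddof = 0) covariance estimates and $p(c)$ taken as the sample proportions, the identity holds exactly up to floating-point error; the group means, spreads, and sizes are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
# Hypothetical 2-D features for three conditions: (mean, std, sample count).
specs = [((0.0, 0.0), 1.0, 50), ((8.0, 1.0), 0.5, 30), ((16.0, -2.0), 2.0, 20)]
groups = [rng.normal(loc=m, scale=s, size=(n, 2)) for m, s, n in specs]
x = np.vstack(groups)

weights = np.array([len(g) for g in groups]) / len(x)                  # p(c)
means = np.array([g.mean(axis=0) for g in groups])                      # mu_c
covs = np.array([np.cov(g, rowvar=False, bias=True) for g in groups])   # Sigma_c, ddof = 0
mu_bar = weights @ means                                                # overall mean

sigma_w = np.einsum("c,cij->ij", weights, covs)                         # Sigma_W
diff = means - mu_bar
sigma_b = np.einsum("c,ci,cj->ij", weights, diff, diff)                 # Sigma_B
sigma = np.cov(x, rowvar=False, bias=True)                              # pooled Sigma

print(np.allclose(sigma, sigma_w + sigma_b))  # True
ratio = np.trace(sigma_b) / np.trace(sigma_w)
print(f"tr(Sigma_B) / tr(Sigma_W) = {ratio:.1f}")  # between-group spread dominates
```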

This inflation is the mathematical substance of dilution. The Fréchet distance in FID is computed from $\Sigma$, so changes in a particular condition’s covariance $\Sigma_c$ can be dwarfed by the sheer magnitude of $\Sigma_B$. Within-SFD, by contrast, computes the Fréchet distance directly from each condition’s $\Sigma_c$, entirely bypassing $\Sigma_B$ and thereby detecting per-condition quality changes without dilution.
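A small closed-form example shows the effect, assuming one-dimensional Gaussian features and equal condition weights (both hypothetical). Real and generated data share the same three condition means, and only condition 3’s generated variance is inflated fourfold. The Fréchet distance between $\mathcal{N}(\mu_1, \Sigma_1)$ and $\mathcal{N}(\mu_2, \Sigma_2)$ is $\lVert \mu_1 - \mu_2 \rVert^2 + \operatorname{tr}\big(\Sigma_1 + \Sigma_2 - 2(\Sigma_1 \Sigma_2)^{1/2}\big)$; the pooled distance uses the mixture moments from the decomposition above, while the per-condition distance uses condition 3 alone.

```python
import numpy as np
from scipy import linalg


def frechet_distance(mu1, cov1, mu2, cov2):
    """Fréchet distance between N(mu1, cov1) and N(mu2, cov2)."""
    covmean = np.real(linalg.sqrtm(cov1 @ cov2))  # drop tiny imaginary round-off
    diff = mu1 - mu2
    return float(diff @ diff + np.trace(cov1 + cov2 - 2.0 * covmean))


def mixture_moments(weights, means, covs):
    """Mean and covariance of a mixture, via Sigma = Sigma_W + Sigma_B."""
    mu_bar = weights @ means
    diff = means - mu_bar
    sigma_w = np.einsum("c,cij->ij", weights, covs)
    sigma_b = np.einsum("c,ci,cj->ij", weights, diff, diff)
    return mu_bar, sigma_w + sigma_b


weights = np.full(3, 1.0 / 3.0)
real_covs = np.array([[[1.0]], [[1.0]], [[1.0]]])
gen_covs = np.array([[[1.0]], [[1.0]], [[4.0]]])  # hypothetical failure: condition 3 variance x4

results = {}
for spread in (0.0, 10.0):
    means = np.array([[-spread], [0.0], [spread]])  # identical means for real and generated
    fd_pooled = frechet_distance(*mixture_moments(weights, means, real_covs),
                                 *mixture_moments(weights, means, gen_covs))
    fd_cond3 = frechet_distance(means[2], real_covs[2], means[2], gen_covs[2])
    results[spread] = (fd_pooled, fd_cond3)
    print(f"spread={spread:>4}: pooled FD={fd_pooled:.4f}, condition-3 FD={fd_cond3:.4f}")
# spread= 0.0: pooled FD=0.1716, condition-3 FD=1.0000
# spread=10.0: pooled FD=0.0037, condition-3 FD=1.0000
```

Even without any spread, pooling dilutes the failure because condition 3 carries only one third of the weight. Once the condition means are far apart, $\Sigma_B$ dominates both pooled covariances and the pooled FD drops by more than an order of magnitude, while the per-condition FD is unchanged.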

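This variance decomposition also connects to entropy. The entropy of a $d$-dimensional Gaussian is determined by the determinant of its covariance matrix:

$$
H = \tfrac{1}{2} \log\!\left( (2\pi e)^d \det \Sigma \right).
$$

Because conditioning cannot increase entropy, the entropy of the mixture distribution is always at least as large as the conditional entropy:

$$
H(X) \ge H(X \mid C) = \sum_c p(c)\, H(X \mid C = c).
$$

In the Gaussian picture this is visible in the covariances themselves: $\Sigma = \Sigma_W + \Sigma_B \succeq \Sigma_W$, and by the concavity of $\log\det$, $\log\det\Sigma \ge \log\det\Sigma_W \ge \sum_c p(c) \log\det\Sigma_c$. The gap

$$
I(X; C) = H(X) - H(X \mid C) \ge 0
$$

is the mutual information, which quantifies how strongly the condition parameter $C$ influences the distribution of the time series $X$. The larger $I(X; C)$ is, that is, the more the distribution varies across conditions, the more severe the dilution in FID becomes.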
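Because the mixture $p(x)$ is not Gaussian, $H(X)$, and hence $I(X; C)$, has no determinant formula. One minimal way to see how $I(X; C)$ grows with the separation between conditions is a Monte Carlo estimate based on $I(X; C) = \mathbb{E}\left[\log p(x \mid c) - \log p(x)\right]$. The sketch assumes a hypothetical 1-D mixture with Gaussian conditionals and equal weights; the means, standard deviations, and sample size are illustrative, and the estimate is bounded above by $H(C) = \log 3$.

```python
import numpy as np
from scipy.special import logsumexp
from scipy.stats import norm


def mutual_information_mc(weights, means, stds, n=20_000, seed=0):
    """Monte Carlo estimate (in nats) of I(X; C) for a 1-D mixture of Gaussians.

    Uses I(X; C) = E[log p(x | c) - log p(x)] with p(x) = sum_c p(c) p(x | c).
    """
    weights, means, stds = (np.asarray(a, dtype=float) for a in (weights, means, stds))
    rng = np.random.default_rng(seed)
    c = rng.choice(len(weights), size=n, p=weights)         # draw conditions C ~ p(c)
    x = rng.normal(means[c], stds[c])                       # draw X | C = c
    log_cond = norm.logpdf(x[:, None], means, stds)         # log p(x | c') for every c'
    log_px = logsumexp(log_cond + np.log(weights), axis=1)  # log p(x)
    return float(np.mean(log_cond[np.arange(n), c] - log_px))


mi = {}
for spread in (0.0, 1.0, 10.0):
    mi[spread] = mutual_information_mc([1 / 3] * 3, [-spread, 0.0, spread], [1.0] * 3)
    print(f"spread={spread:>4}: I(X;C) ~ {mi[spread]:.3f} nats (H(C) = log 3 = {np.log(3):.3f})")
```

When the conditions coincide the estimate is zero, and when they are well separated it approaches $\log 3$: the regime in which, by the argument above, pooled FID is most diluted.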

From this analysis, the structural meaning of SFD comes into sharp focus. FID measures a “whole-distribution distance” corresponding to $H(X)$; the more the conditions contribute (large $I(X; C)$), the coarser this evaluation becomes. Within-SFD measures a “per-condition distribution distance” corresponding to $H(X \mid C)$ and is unaffected by the magnitude of $I(X; C)$. Between-SFD evaluates the consistency of inter-condition distributional relationships and provides complementary information related to $I(X; C)$.

We note that this correspondence is a structural analogy rather than a strict equality. The Fréchet distance is the squared Wasserstein-2 distance between Gaussian fits and has different geometric properties from the KL divergence, whose definition involves entropy terms directly. Nevertheless, the analogy yields an important practical implication: the larger $I(X; C)$ is for a given dataset, the more severely FID is affected by dilution and the greater the benefit of introducing SFD. Battery degradation data, in which degradation patterns differ qualitatively across temperature conditions, represent a domain with high $I(X; C)$ and thus one where SFD offers the greatest advantage.

6.4. Practical Guidelines

We summarize practical guidelines for incorporating SFD into generative model development.

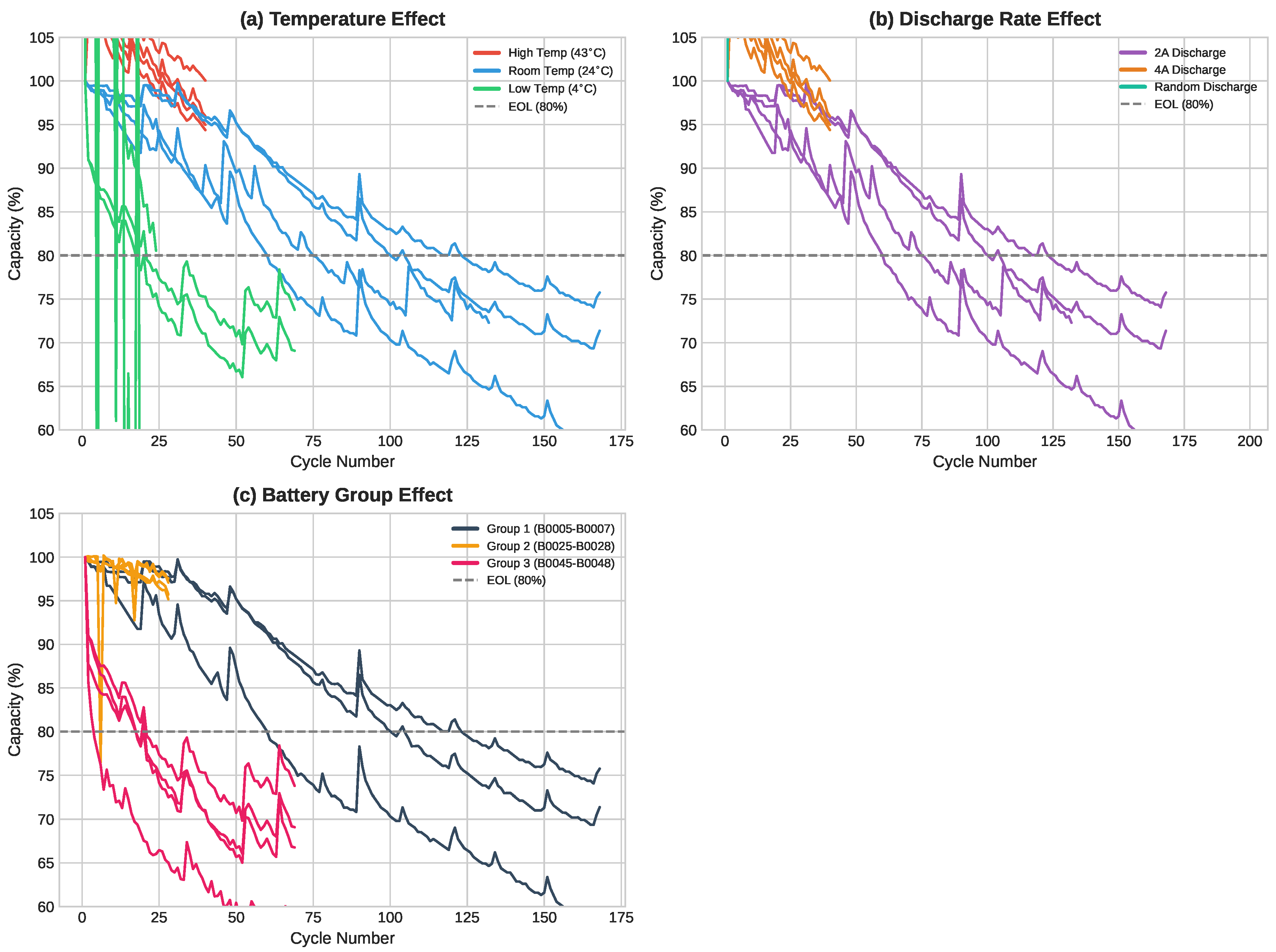

The need for condition-specific quality assurance is not hypothetical. In the battery digital twin paradigm, synthetic degradation data are increasingly used to augment training datasets, to simulate untested operating scenarios, and to support lifecycle management decisions [16, 17]. When a digital twin generates synthetic 43 °C degradation curves to fill a gap in the experimental database, the downstream safety assessment is only as reliable as the quality of those curves. Conventional evaluation via FID would not flag a quality failure localized to 43 °C; SFD provides the means to do so.

When the goal is to identify which operating conditions exhibit poor generation quality, condition-stratified SFD is the appropriate choice. When the quality of a specific temporal region, such as the late-life acceleration phase, is of particular concern, as in safety evaluation, temporally stratified SFD (alone or combined with condition stratification) should be employed. When the question is which conditioning variables to include in the model, the Confounding Index (CI) provides a direct answer.

Regarding the weighting between the Within and Between components: to maximize per-condition detection sensitivity, using the Within component alone (CFID-equivalent) is optimal. To simultaneously verify physical consistency across conditions, an intermediate weighting that also includes the Between component offers a practical compromise. Regarding K: the number of temporal segments should be chosen in light of the time series length and physically meaningful boundaries (e.g., the transition from linear fade to accelerated degradation), rather than purely on the basis of detection sensitivity.
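One simple way to place a physically motivated segment boundary, assuming the transition from linear fade to accelerated degradation shows up as a change in slope, is to pick the split that minimizes the squared error of a two-segment linear fit. The function name, the `min_len` guard, and the synthetic capacity curve are hypothetical, and this is one heuristic among many knee-detection methods, not the procedure used in the experiments.

```python
import numpy as np


def two_segment_breakpoint(t, y, min_len=5):
    """Split index minimizing the squared error of a two-segment linear fit."""
    best_idx, best_sse = None, np.inf
    for b in range(min_len, len(t) - min_len + 1):
        sse = 0.0
        for ts, ys in ((t[:b], y[:b]), (t[b:], y[b:])):
            coef = np.polyfit(ts, ys, 1)  # straight-line fit on each side of the split
            sse += float(np.sum((ys - np.polyval(coef, ts)) ** 2))
        if sse < best_sse:
            best_idx, best_sse = b, sse
    return best_idx


# Hypothetical capacity curve: linear fade, then accelerated fade from cycle 60 on.
cycles = np.arange(100, dtype=float)
capacity = 1.0 - 0.001 * cycles - 0.01 * np.clip(cycles - 60.0, 0.0, None)
knee = two_segment_breakpoint(cycles, capacity)
segments = np.split(np.arange(len(cycles)), [knee])  # K = 2, split at the knee
print(knee, [len(s) for s in segments])
```

On this noise-free curve the split lands at cycle 60 or 61 (both give exact fits), yielding two segments aligned with the onset of accelerated fade. Real curves are noisy, so the `min_len` guard and a visual check of the fit matter more in practice.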

6.5. Limitations and Future Directions

The current formulation of SFD has several limitations.

The most significant is the assumption of discrete strata. In this study, the temperature conditions of the battery data were naturally discrete (15 °C, 25 °C, 35 °C), so discretization posed no issue. For data in which temperature varies continuously, say in 0.1 °C increments, appropriate binning is required, and the choice of bin width can influence evaluation outcomes. Extending SFD to continuous stratification via kernel density estimation is an important direction for broadening its applicability.
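Until a continuous extension is available, fixed-width binning is the straightforward interim approach. The sketch below, using hypothetical temperatures logged in 0.1 °C steps, shows how the bin width trades the number of strata against the number of samples per stratum, which interacts directly with the minimum-sample guideline in Section 6.1. The function name `bin_conditions` and the chosen widths are illustrative assumptions.

```python
import numpy as np


def bin_conditions(values, width, origin=0.0):
    """Map continuous condition values to integer bin labels of a fixed width."""
    return np.floor((np.asarray(values, dtype=float) - origin) / width).astype(int)


# Hypothetical temperatures logged in 0.1 °C steps: 10.0, 10.1, ..., 39.9 °C.
temps = np.arange(100, 400) / 10.0
for width in (2.5, 5.0, 10.0):
    labels, counts = np.unique(bin_conditions(temps, width, origin=10.0), return_counts=True)
    print(f"width={width:>4} °C: {len(labels)} strata, min {counts.min()} samples per stratum")
# width= 2.5 °C: 12 strata, min 25 samples per stratum
# width= 5.0 °C: 6 strata, min 50 samples per stratum
# width=10.0 °C: 3 strata, min 100 samples per stratum
```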

Our experimental validation relies exclusively on CVAE as the generative model. While CVAE was chosen for its controllability—the ability to systematically exclude conditions from training—the question of whether SFD’s advantages hold for other generative architectures (GANs, diffusion models, flow matching) remains empirically open. We emphasize, however, that SFD is a property of the evaluation metric, not of the generative model. The dilution problem analyzed in Section II arises from FID’s aggregation structure and is independent of how the data were generated. Thus, we expect SFD’s advantage to persist across generative architectures, though experimental confirmation with other model families is a natural direction for future work.

A related concern is statistical rigor. Results are reported as 3-seed averages, but confidence intervals and significance tests are not provided. For ratios such as FID = 1.01× versus condition-stratified SFD = 1.97×, the practical significance is evident from the magnitude of the gap, but formal statistical testing (for example, bootstrap confidence intervals on the FD ratio) would strengthen the claims and is planned for an extended version of this work.

Strata with very few samples pose a further challenge. The NASA dataset contains only 2 batteries at 22 °C, making Gaussian covariance estimation unreliable in that stratum. While covariance regularization mitigates numerical instability, the resulting FD values carry inherent uncertainty that is not reflected in the reported numbers. Future work should incorporate uncertainty quantification, for instance through bootstrap resampling of the per-stratum FD.
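A percentile bootstrap is one straightforward way to attach uncertainty to a per-stratum FD. In the sketch below, real and generated samples within one stratum are resampled independently with replacement, the FD is recomputed on each resample, and the 2.5th and 97.5th percentiles form the interval. The `eps` ridge stands in for covariance regularization; its value, the resample count, and the synthetic strata are assumptions. The same loop extends to the FD ratio discussed above by recomputing both distances on each resample and storing their quotient.

```python
import numpy as np
from scipy import linalg


def frechet_distance(x, y, eps=1e-6):
    """FD between Gaussian fits; eps * I is a simple covariance ridge (assumed)."""
    d = x.shape[1]
    cov_x = np.atleast_2d(np.cov(x, rowvar=False)) + eps * np.eye(d)
    cov_y = np.atleast_2d(np.cov(y, rowvar=False)) + eps * np.eye(d)
    covmean = np.real(linalg.sqrtm(cov_x @ cov_y))
    diff = x.mean(axis=0) - y.mean(axis=0)
    return float(diff @ diff + np.trace(cov_x + cov_y - 2.0 * covmean))


def bootstrap_fd_ci(real, gen, n_boot=500, alpha=0.05, seed=0):
    """Point FD plus a percentile bootstrap interval for one stratum."""
    rng = np.random.default_rng(seed)
    boot = np.empty(n_boot)
    for i in range(n_boot):
        # Resample real and generated samples independently, with replacement.
        r = real[rng.integers(0, len(real), size=len(real))]
        g = gen[rng.integers(0, len(gen), size=len(gen))]
        boot[i] = frechet_distance(r, g)
    lo, hi = np.quantile(boot, [alpha / 2, 1 - alpha / 2])
    return frechet_distance(real, gen), float(lo), float(hi)


# Hypothetical sparse versus well-populated strata with the same true mean shift.
rng = np.random.default_rng(42)
intervals = {}
for n in (8, 200):
    real = rng.standard_normal((n, 3))
    gen = rng.standard_normal((n, 3)) + 0.5
    intervals[n] = bootstrap_fd_ci(real, gen)
    fd, lo, hi = intervals[n]
    print(f"n={n:>3}: FD={fd:.2f}, 95% interval=[{lo:.2f}, {hi:.2f}]")
```

The plug-in FD is biased upward at small sample sizes, so the bootstrap distribution can sit above the point estimate; the main thing to read off is how much wider the interval is for the sparse stratum.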

The choice of feature extractor also substantially affects SFD values. As Experiment 6 demonstrated, the FID ratio increased from 1.01× with hand-crafted features (17-dim.) to 12.18× with InceptionTime-style features (32-dim.), a change of over an order of magnitude depending on the feature space. While the structural advantage of SFD (per-condition FD > FID) holds regardless of the feature extractor, the absolute detection sensitivity is strongly feature-dependent. Two important avenues for improving SFD’s generality are leveraging large-scale pre-trained time series encoders such as TS2Vec [28] and developing domain-agnostic feature extractors.

Although our validation is limited to battery degradation data, the problem that SFD addresses—condition-dependent quality degradation being diluted by FID in conditional time series generation—is not specific to batteries. SFD is applicable wherever condition parameters and distributional imbalance coexist. We outline several concrete scenarios below.

In industrial fault diagnosis, normal operating data are abundant, but sensor time series for specific fault modes (bearing damage, shaft misalignment, etc.) are scarce [33]. Generative models are increasingly used to synthesize fault data and rebalance training sets, but verifying whether the synthetic fault data faithfully reproduce actual fault patterns requires per-mode evaluation. Computing condition-stratified SFD by fault mode would enable diagnoses such as “bearing damage generation is adequate, but shaft misalignment generation is insufficient.” Moreover, because vibration patterns change qualitatively between the early and late stages of fault progression, temporal stratification can provide temporally localized quality assessment.

In the synthesis of medical time series (ECG, EEG, etc.), data for rare diseases inevitably form the minority class [10, 34]. If synthetic data quality is inadequate for a specific disease subtype, a classifier trained on such data risks failing to detect that disease. Stratifying by disease type (condition stratification) and by temporal phase (e.g., pre-seizure versus post-seizure segments, for temporal stratification) provides a means of verifying generation quality at a clinically meaningful granularity.

In materials science, time series data such as stress–strain curves and thermal analysis curves vary with chemical composition and processing conditions (sintering temperature, pressure, etc.) [35]. Data for extreme compositions or high-temperature processes are costly to acquire and tend to be underrepresented, giving rise to the same dilution structure observed in battery degradation.

Empirical validation in these domains is left for future work. However, the formulation of SFD is data-agnostic: given an appropriate feature extractor, it can be applied directly.