Submitted: 27 April 2026

Posted: 28 April 2026


Abstract

Keywords:

1. Introduction

- 1.

- 2.

- 3.

- Finally, to demonstrate that the system works, we transfer the policy zero-shot to a real scenario, integrated in a real ROS 2 [13] aerial framework, and obtain consistent behavior.

2. Related Work

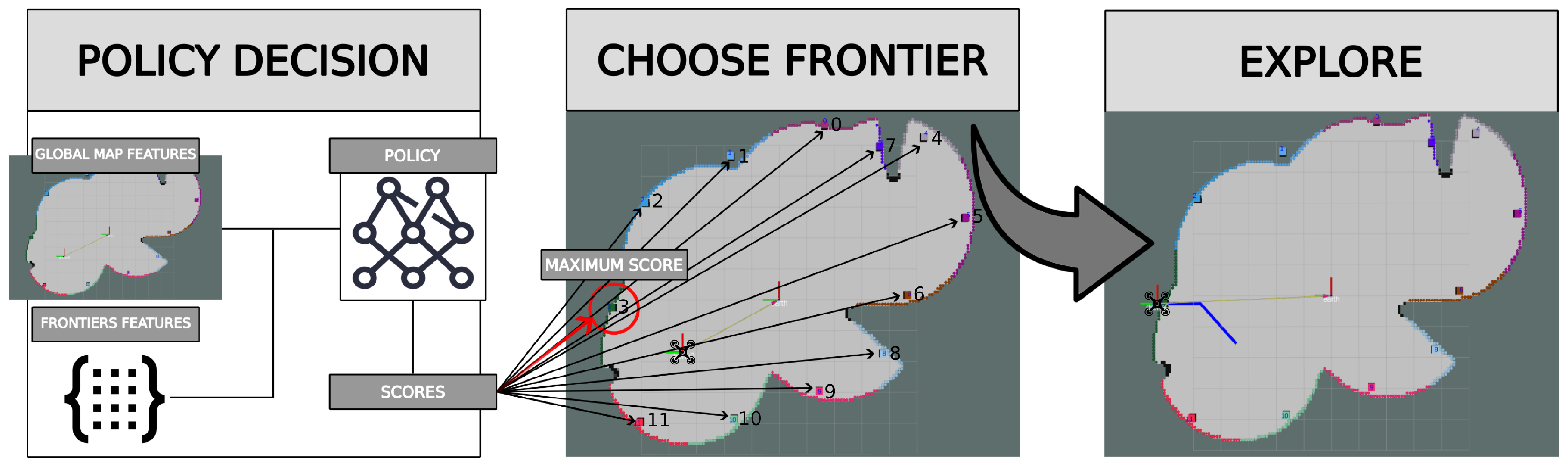

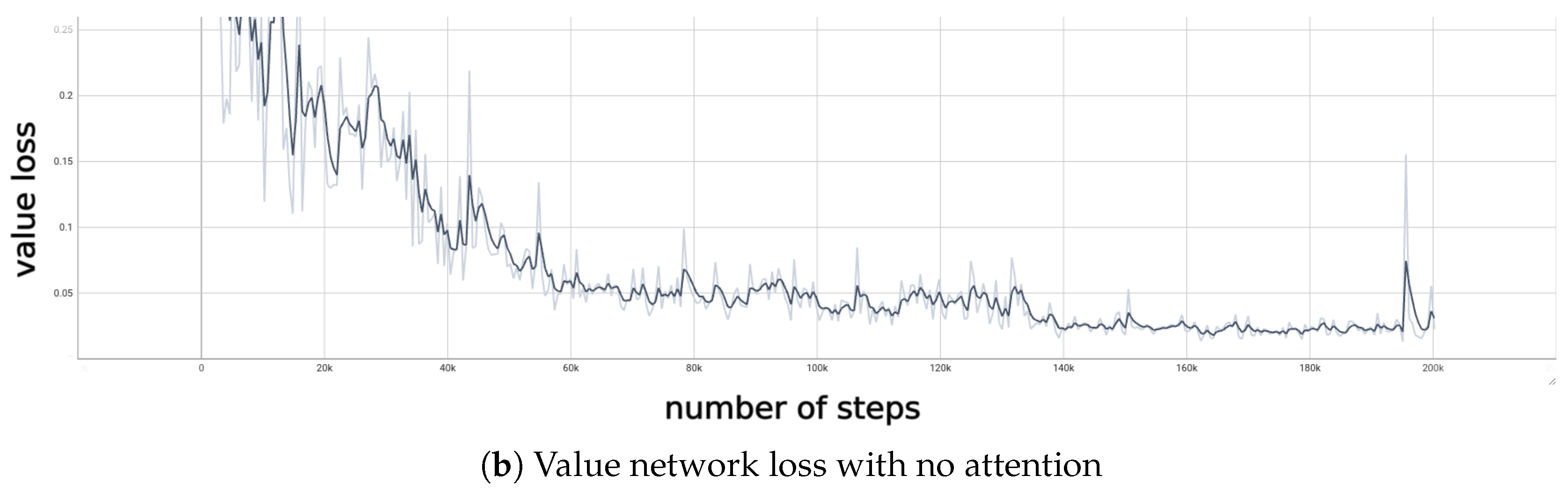

3. Exploration Robotics Framework

4. Reinforcement Learning Based Exploration Approach

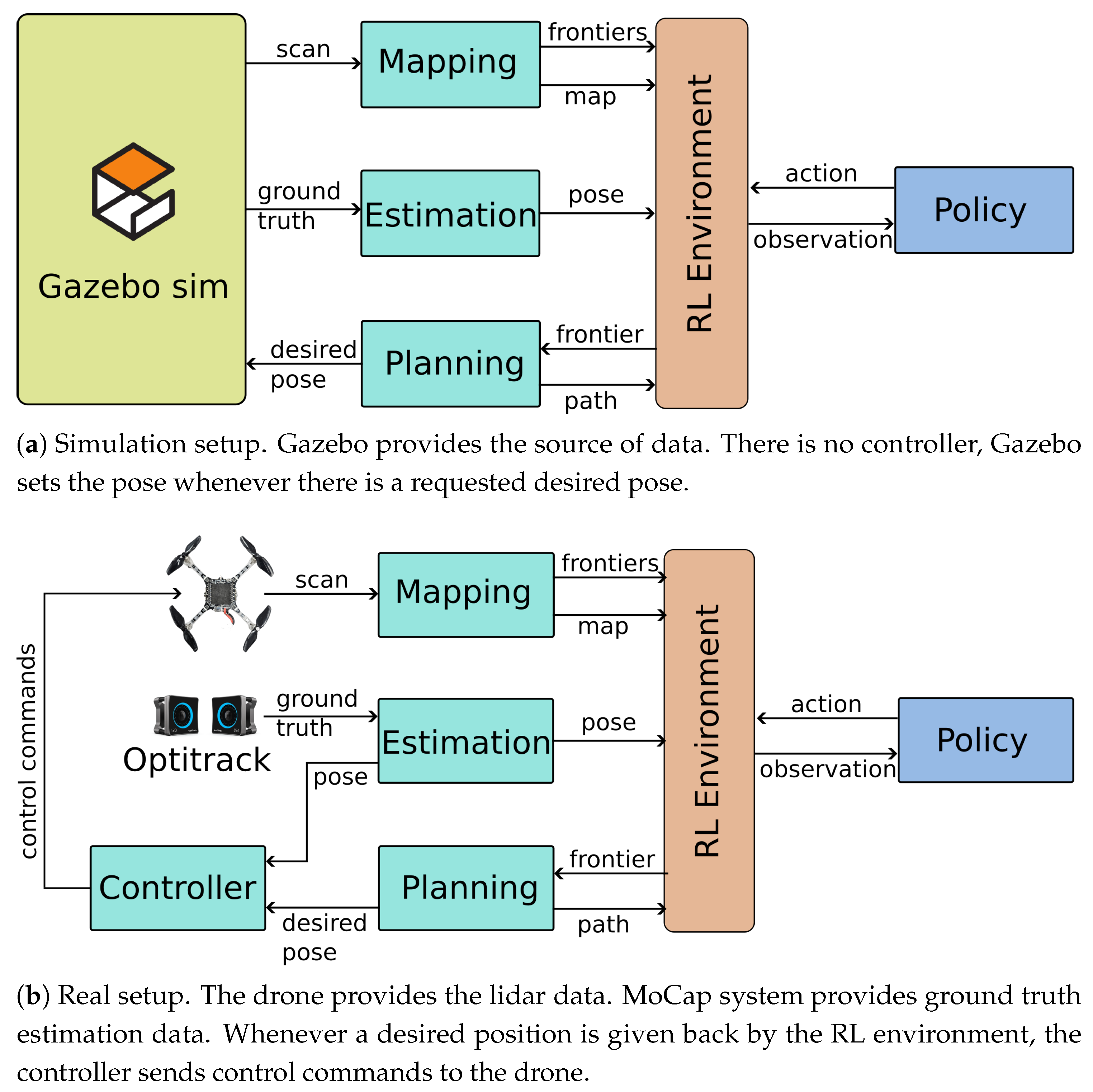

4.1. Observation and Action Spaces

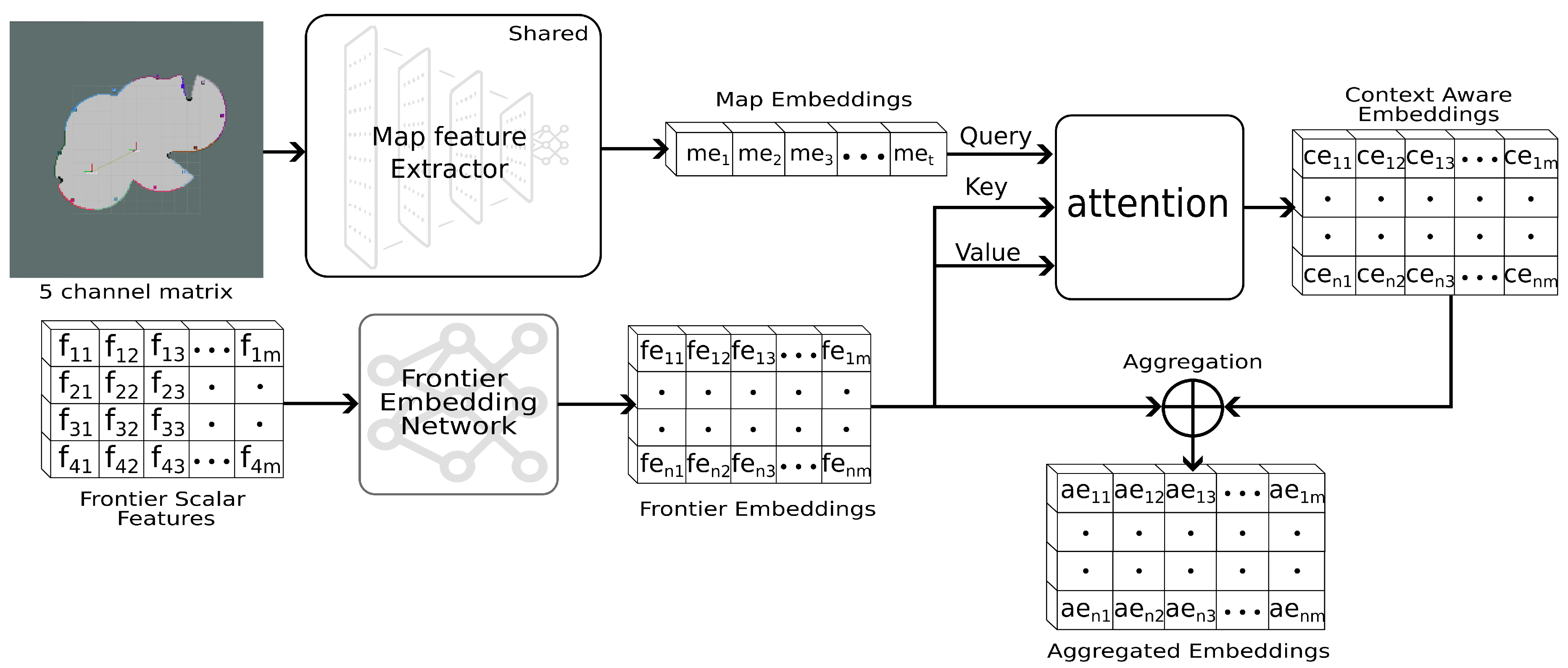

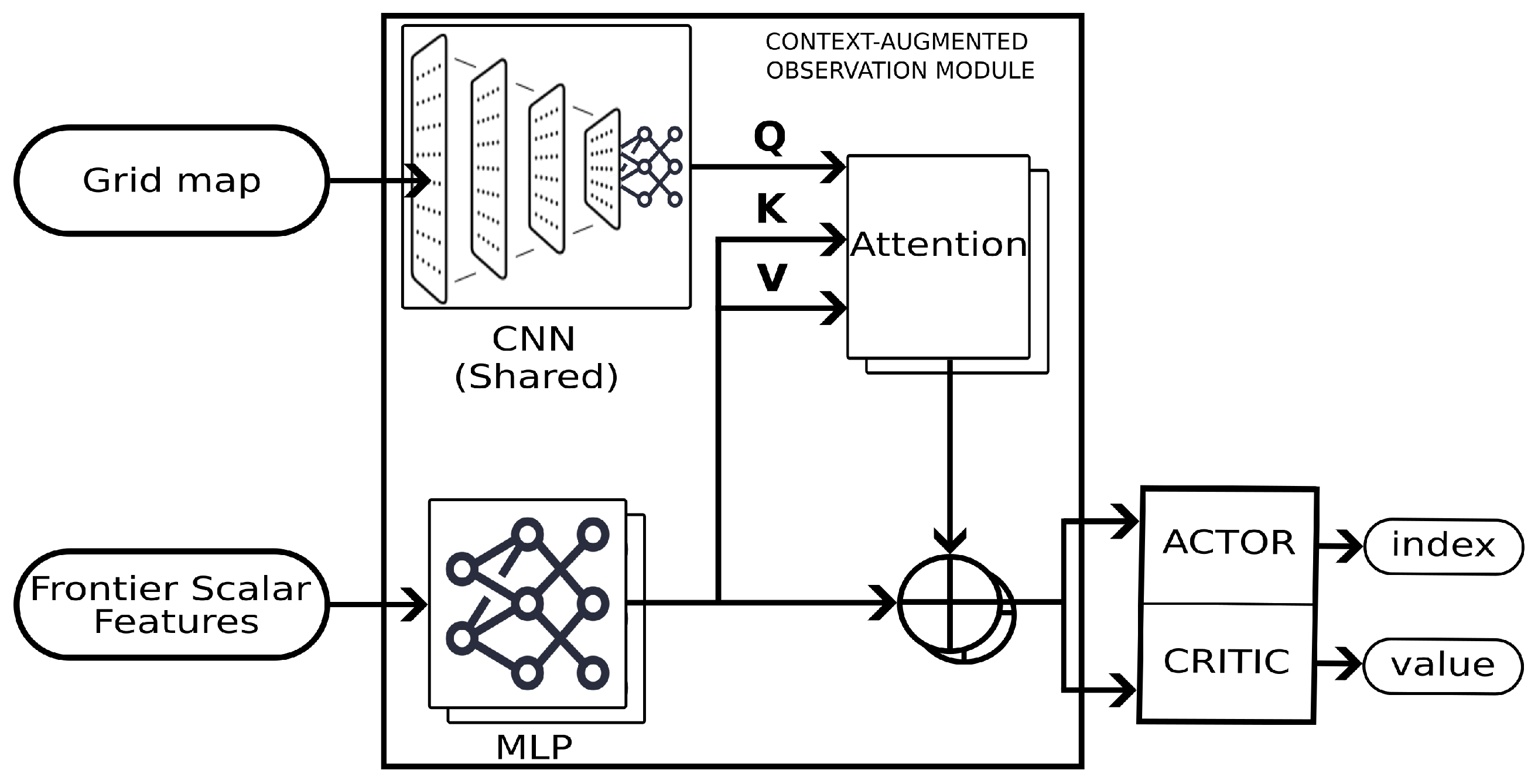

4.2. Policy Architecture

4.3. Context-Augmented Observation Module

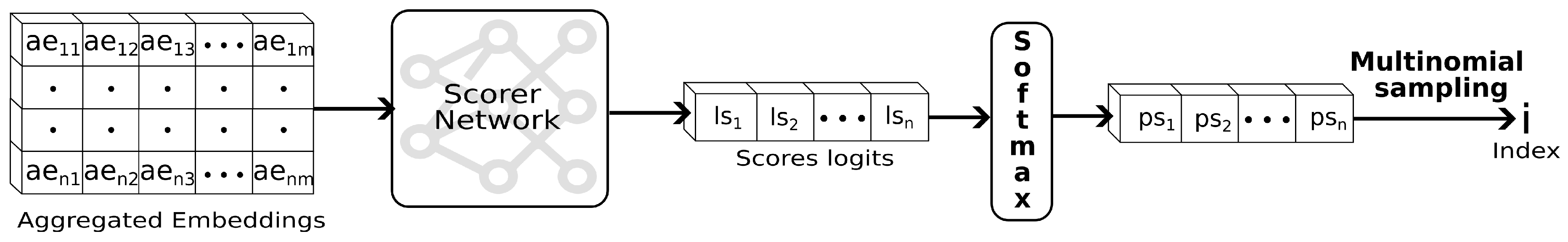

4.3.1. Actor

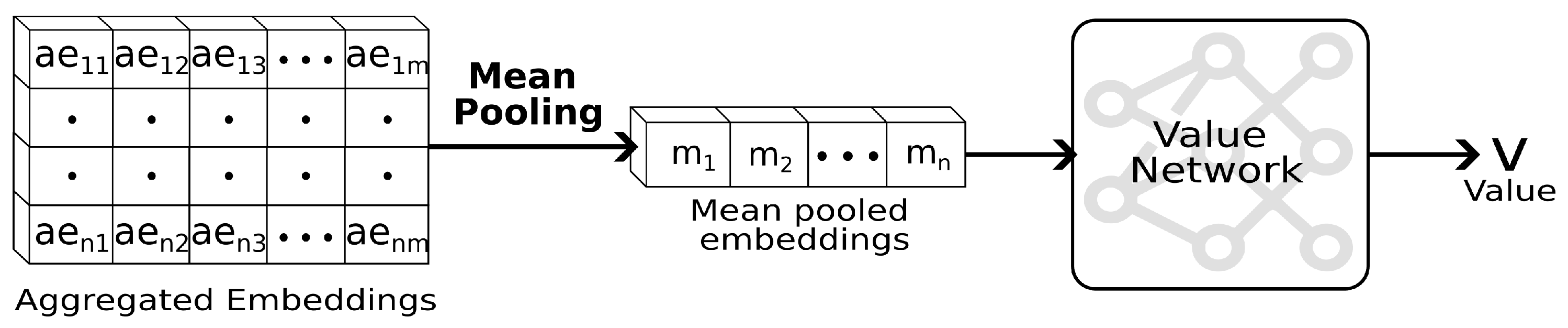

4.3.2. Critic

5. Experimental Results

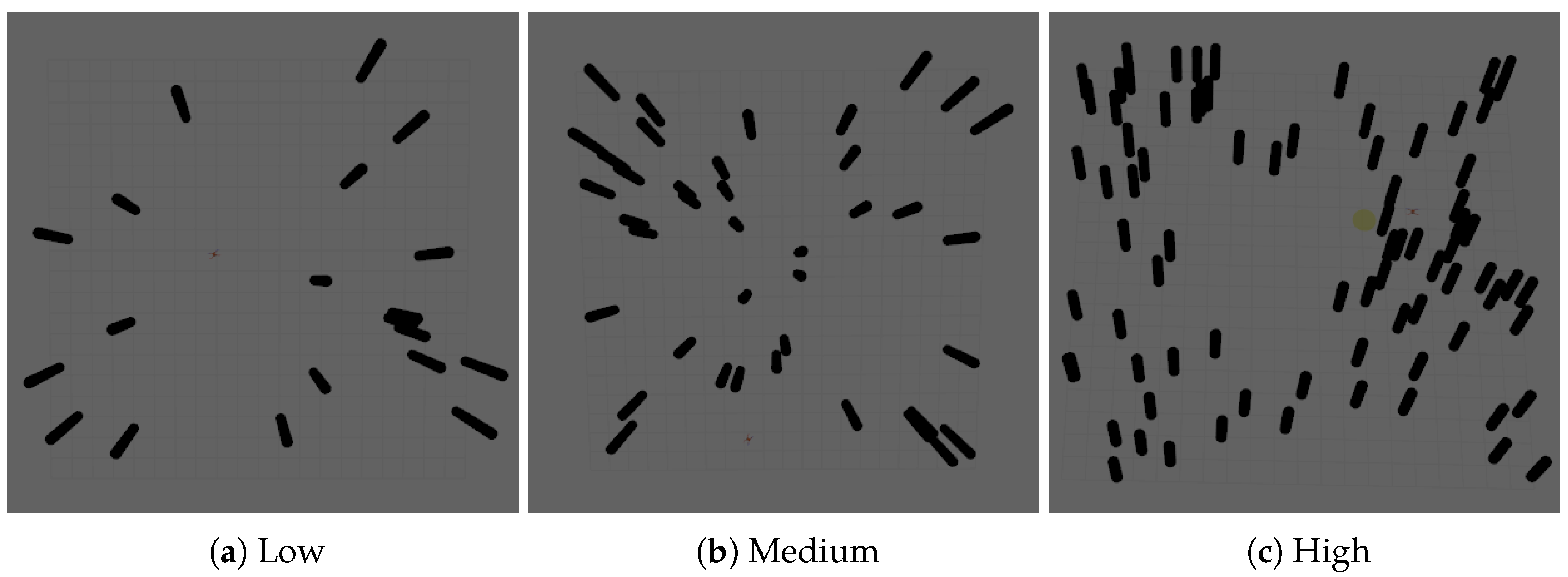

5.1. Training Setup

5.2. Training Parameters

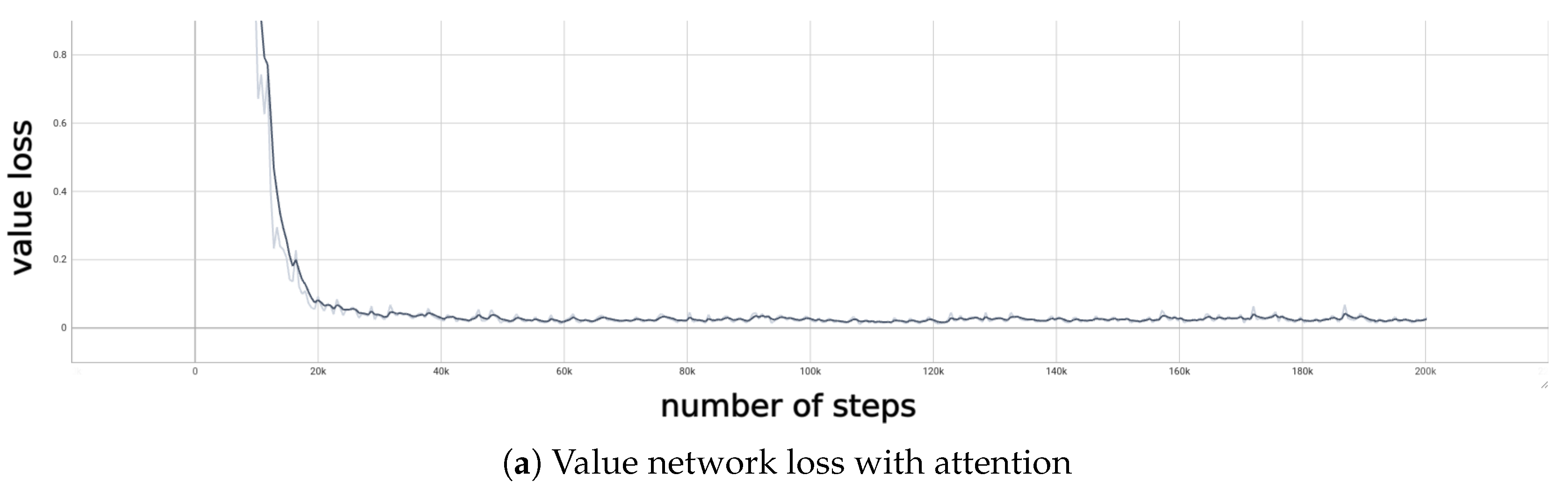

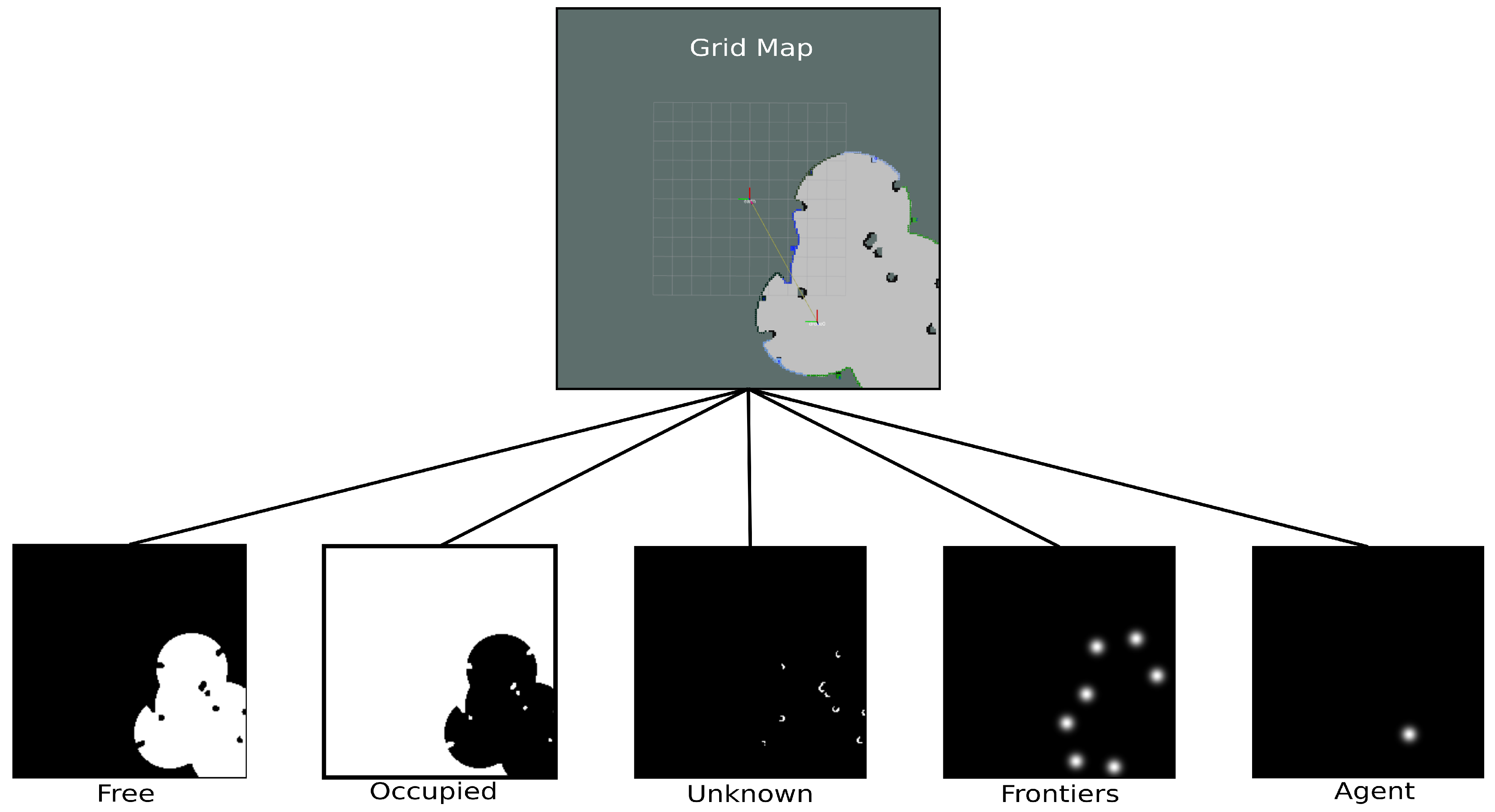

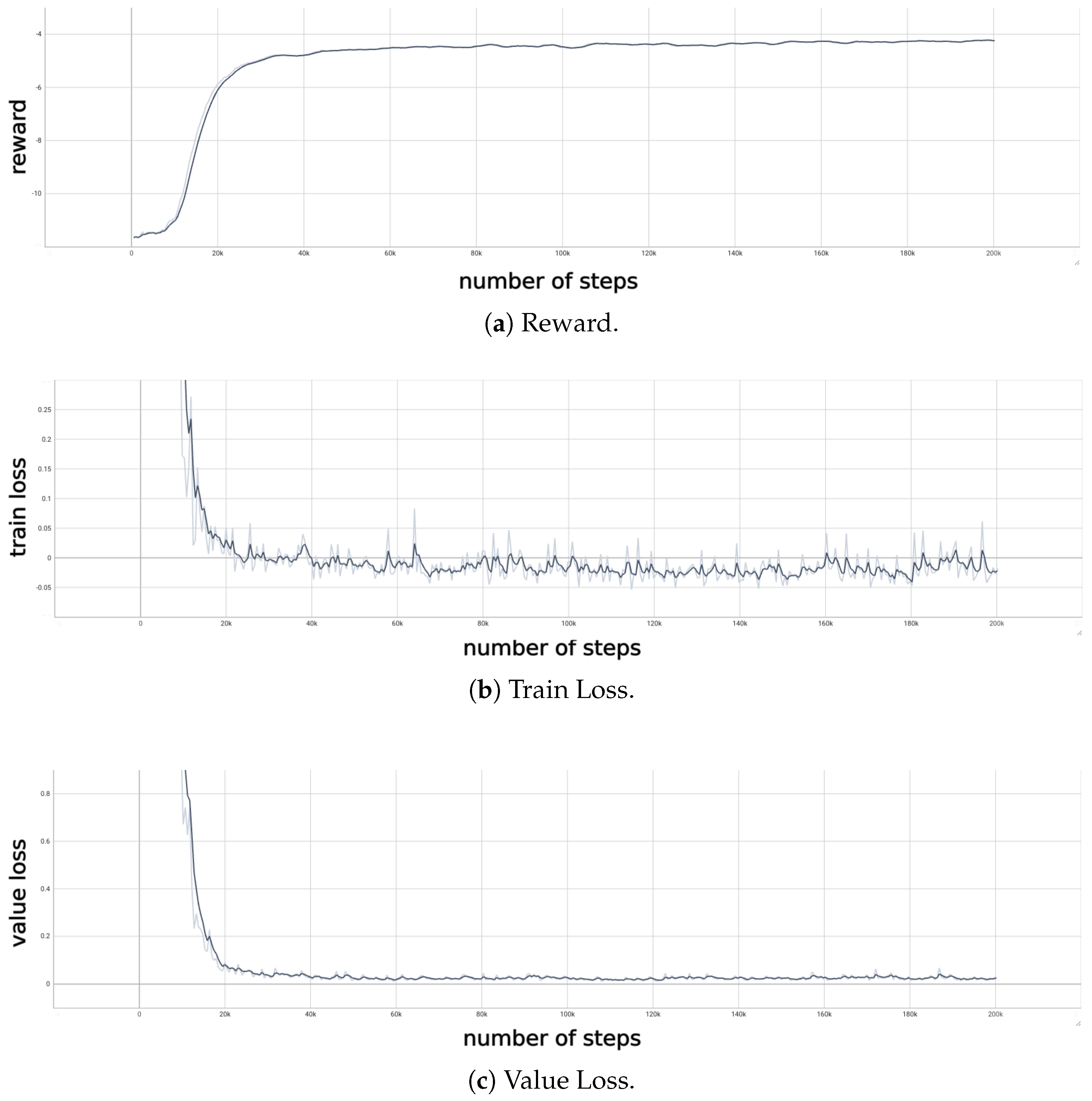

5.3. Final Training Metrics

5.4. Policy Evaluation

- Random Sample: The next frontier is drawn uniformly at random from the frontier set $\mathcal{F}$: $f^{*} \sim \mathcal{U}(\mathcal{F})$, where $f^{*}$ is the selected frontier.

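- Nearest Frontier: A greedy strategy [1] that selects the frontier closest to the robot in Euclidean distance: $f^{*} = \arg\min_{f \in \mathcal{F}} \lVert p_f - p_r \rVert_2$, where $p_f$ and $p_r$ are the positions of frontier $f$ and of the robot, and $\lVert \cdot \rVert_2$ denotes the (Euclidean) norm.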

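- Information Gain: For each candidate frontier $f$, the information gain is estimated as the number of unknown cells that would become visible from that frontier [15]: $IG(f) = \left| \{ c \in \mathcal{C}_u : \lVert p_c - p_f \rVert_2 \le r_{\max} \wedge \mathrm{vis}(c, p_f) \} \right|$, where $\mathcal{C}_u$ is the set of grid cells whose occupancy state is unknown, $p_c$ is the position of a grid cell, $p_f$ is the position of the frontier, $r_{\max}$ is the maximum sensor range, and $\mathrm{vis}(c, p_f)$ is a predicate that evaluates to true if and only if cell $c$ lies within the sensor’s field of view from $p_f$ with an unoccluded line of sight.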

- Hybrid Approach: A multi-objective strategy that combines distance cost and information gain through a weighted score [12]: $S(f) = \lambda \, \frac{IG(f)}{IG_{\max}} - (1 - \lambda) \, \frac{d(f)}{d_{\max}}$, where $\lambda$ is a tunable weight that trades off exploration reward against travel cost, $IG_{\max}$ is the maximum information gain over all current frontiers (used for normalization), $d(f)$ is the Euclidean distance from the robot to the frontier, and $d_{\max}$ is the maximum such distance (used for normalization). Three weight configurations are evaluated, reported in the results table as Hybrid 75-25, Hybrid 50-50, and Hybrid 25-75.

- TARE Local: The local planner from the TARE framework [14] scores candidate sensor viewpoints by how much new surface area they would reveal, selects a compact subset via a greedy-randomized procedure, and returns the first waypoint of the resulting route as the next frontier. Candidate viewpoints are sampled within the local planning window, and each one is scored by the number of unseen surface elements, taken from the high-resolution local map centered on the robot, that are observable from it. The Greedy Randomized Adaptive Search Procedure (GRASP) then selects a compact subset of high-scoring viewpoints, a short visitation tour that respects robot motion constraints is computed through the selected viewpoints, and the first stop on that tour is returned as the next frontier.
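The classical selection rules described above (random, nearest frontier, information gain, and hybrid) can be sketched as follows. This is a minimal illustration and not the authors' implementation: the cell encoding, the omnidirectional sensor, the Bresenham line-of-sight check, and all function names are assumptions made for the example, and the hybrid score uses one plausible sign convention (normalized gain minus normalized distance).

```python
import math
import random

# Assumed cell encoding (not taken from the paper).
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def line_of_sight(grid, a, b):
    """True if no occupied cell lies strictly between cells a and b (Bresenham walk)."""
    (r0, c0), (r1, c1) = a, b
    dr, dc = abs(r1 - r0), abs(c1 - c0)
    sr = 1 if r1 >= r0 else -1
    sc = 1 if c1 >= c0 else -1
    err = dr - dc
    r, c = r0, c0
    while (r, c) != (r1, c1):
        if (r, c) != (r0, c0) and grid[r][c] == OCCUPIED:
            return False
        e2 = 2 * err
        if e2 > -dc:
            err -= dc
            r += sr
        if e2 < dr:
            err += dr
            c += sc
    return True


def information_gain(grid, frontier, r_max):
    """Count unknown cells within r_max (in cells) of the frontier with a clear line of sight."""
    fr, fc = frontier
    gain = 0
    for r, row in enumerate(grid):
        for c, cell in enumerate(row):
            in_range = math.hypot(r - fr, c - fc) <= r_max
            if cell == UNKNOWN and in_range and line_of_sight(grid, frontier, (r, c)):
                gain += 1
    return gain


def random_frontier(frontiers, rng=random):
    """Uniform random choice over the frontier set."""
    return rng.choice(frontiers)


def nearest_frontier(robot, frontiers):
    """Greedy choice: smallest Euclidean distance to the robot."""
    return min(frontiers, key=lambda f: math.dist(robot, f))


def hybrid_frontier(grid, robot, frontiers, lam, r_max):
    """Weighted score: lam * normalized gain - (1 - lam) * normalized distance."""
    gains = [information_gain(grid, f, r_max) for f in frontiers]
    dists = [math.dist(robot, f) for f in frontiers]
    g_max = max(gains) or 1      # avoid division by zero when no gain is available
    d_max = max(dists) or 1.0    # avoid division by zero when the robot sits on a frontier
    scores = [lam * g / g_max - (1 - lam) * d / d_max for g, d in zip(gains, dists)]
    return frontiers[scores.index(max(scores))]
```

With `lam = 1.0` the hybrid rule reduces to pure information gain, and with `lam = 0.0` it reduces to the nearest-frontier rule, which is why intermediate weights interpolate between the two behaviors.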

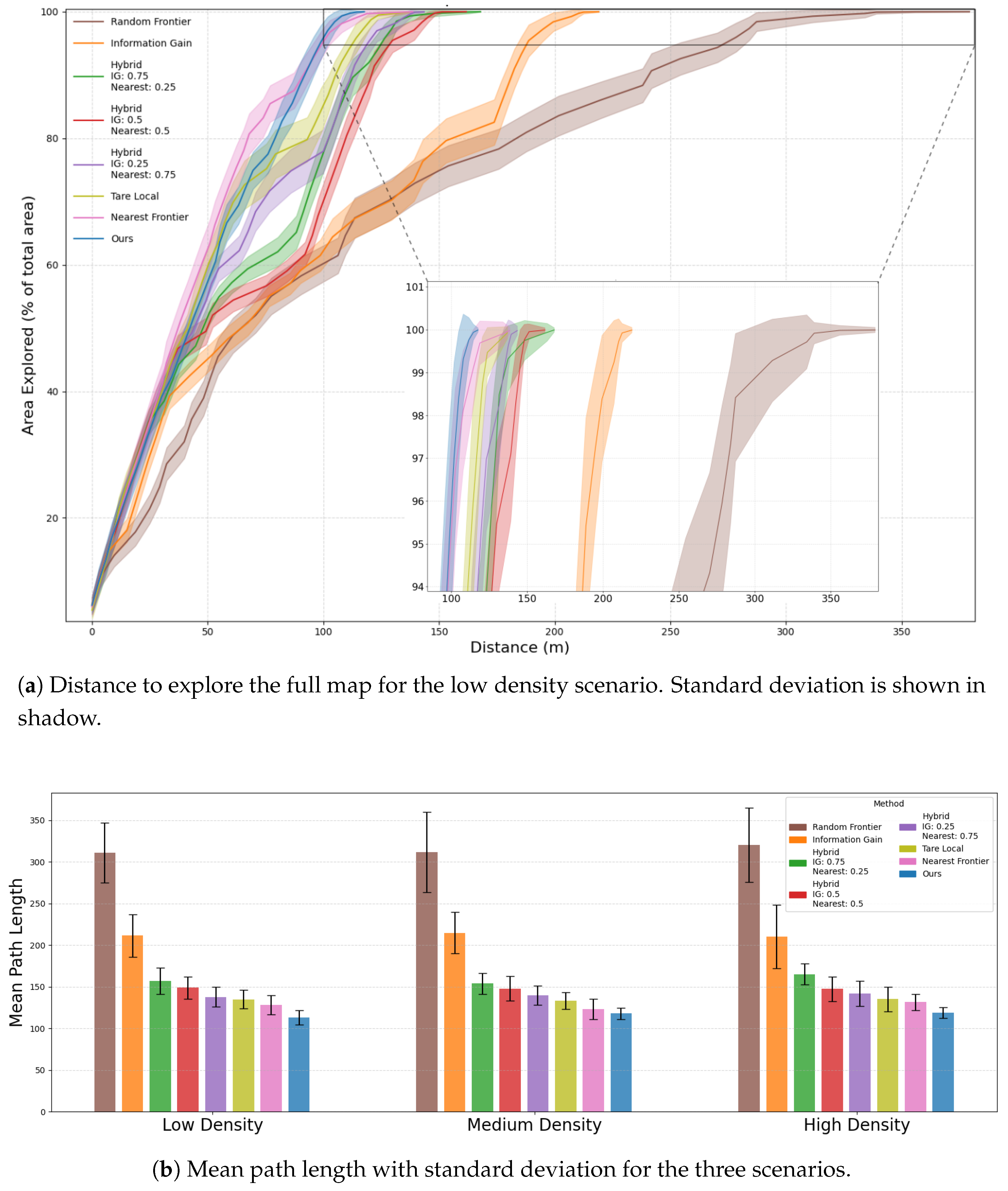

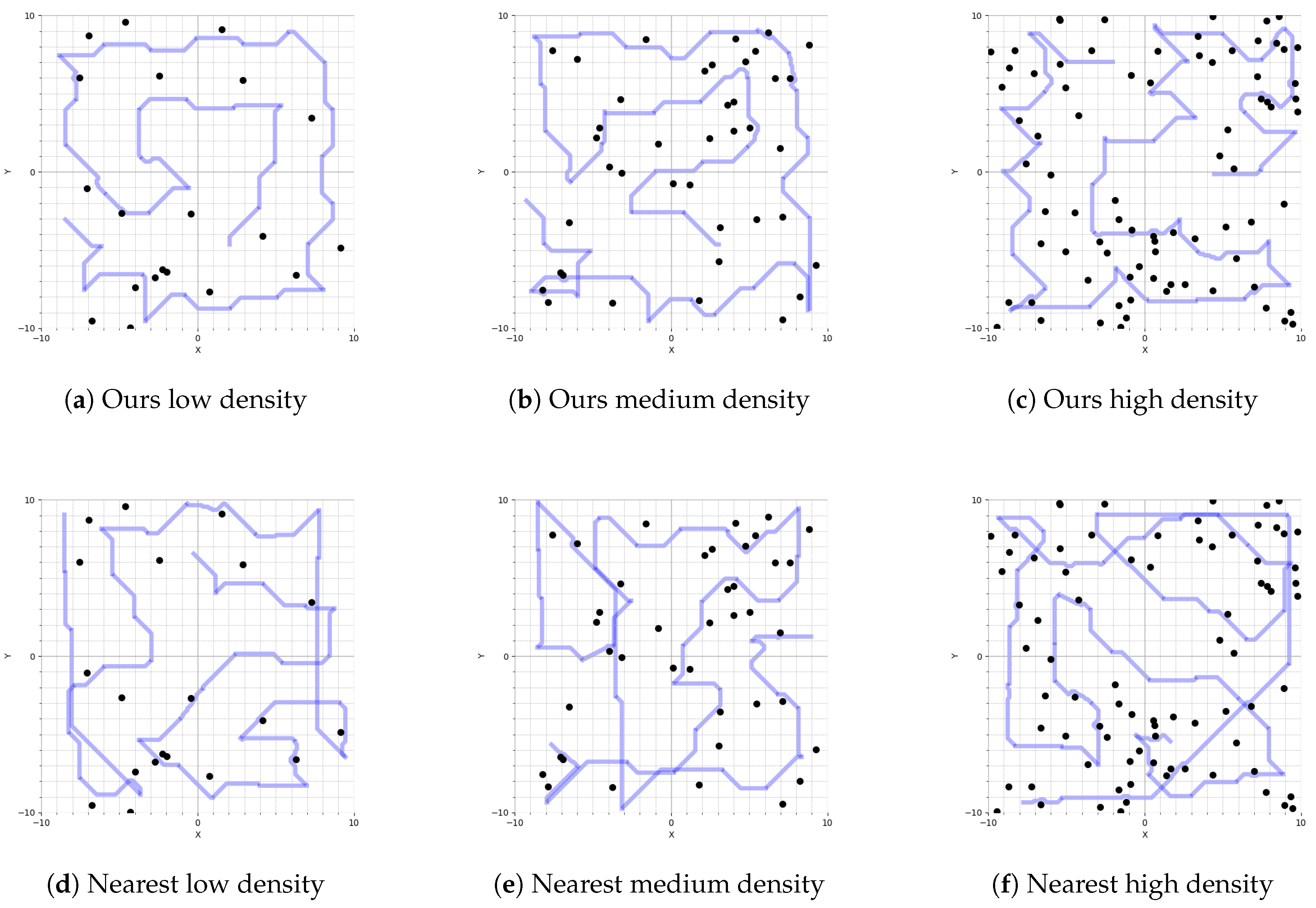

5.5. Simulation Results and Analysis

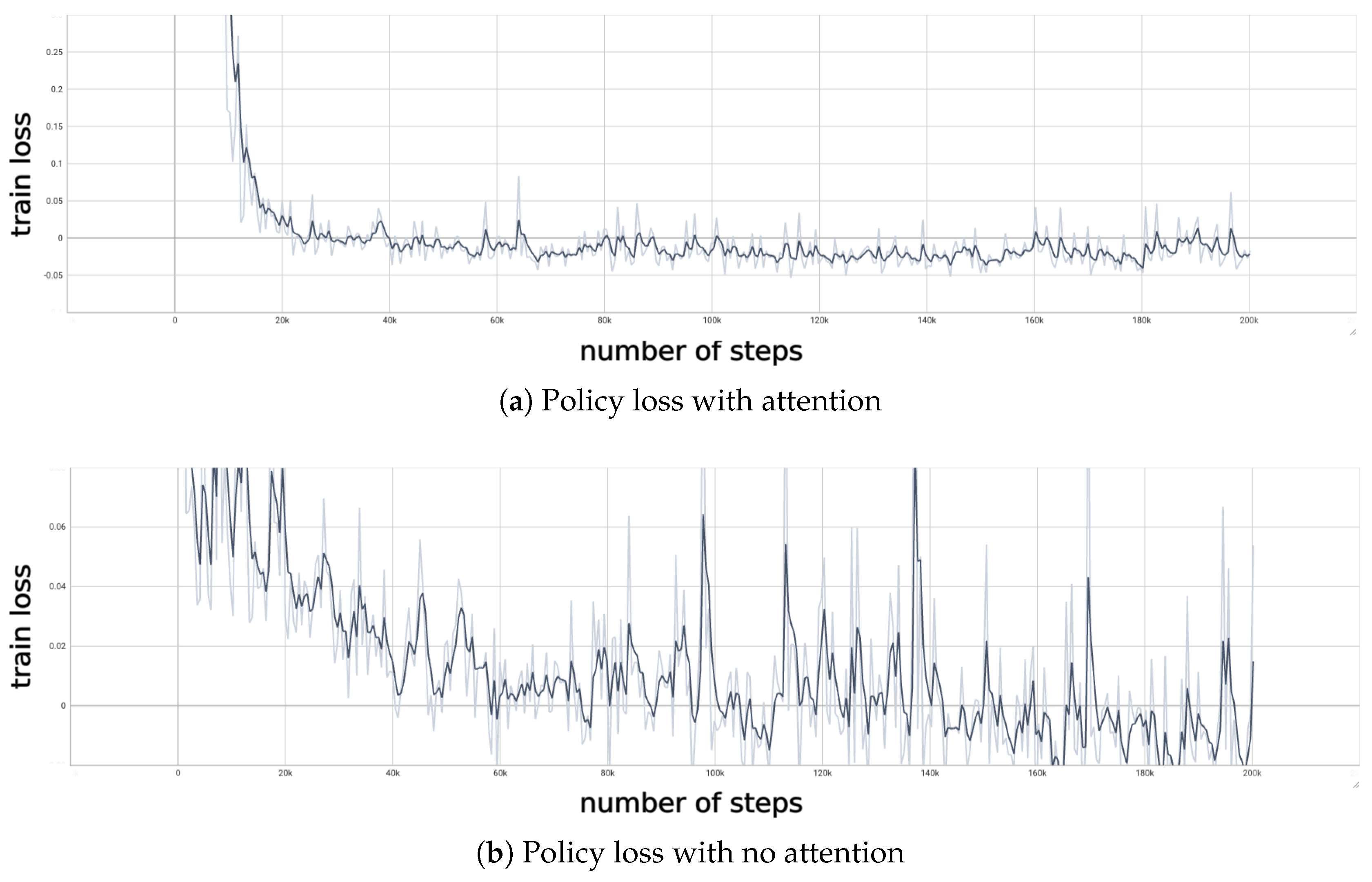

5.6. Ablation Study

| Metric | No Attention | Attention |
|---|---|---|
| Reward Peak (ep_rew_mean) | | |
| Reward Std (last 25% of training) | | |
| Std Norm Last 25% of Train Loss (normalized) | | |
| Std Norm Last 25% of Value Loss (normalized) | | |
| Residual Std of Normalized Train Loss (w=10) | | |
| Mean Abs Diff of Normalized Train Loss | | |

- Higher peak reward: The Attention model reaches a higher peak episode return than the model without attention, indicating a stronger final policy.

- More stable rewards: Attention reduces the standard deviation of episode returns over the final quarter of training, showing smoother and more reliable performance improvements.

- Smoother loss convergence: After normalization, the last-quarter standard deviation of both the train loss and the value loss drops with Attention, indicating much steadier optimization in the later stages.

- Lower high-frequency noise: Both the residual standard deviation of the normalized train loss around a rolling-mean baseline (window = 10) and its mean absolute step-to-step change fall with Attention, further pointing to cleaner gradient updates.
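The stability metrics named in the ablation can be computed from a logged training curve along the following lines. This is a sketch under stated assumptions: min-max normalization to the unit interval, population standard deviation, and a trailing rolling mean are choices made here for illustration, since the source does not specify them, and the function names are hypothetical.

```python
import statistics


def min_max_normalize(xs):
    """Rescale a curve to [0, 1] (assumed normalization)."""
    lo, hi = min(xs), max(xs)
    span = hi - lo
    return [(x - lo) / span if span else 0.0 for x in xs]


def last_quarter_std(xs):
    """Population standard deviation over the final 25% of the samples."""
    tail = xs[-max(1, len(xs) // 4):]
    return statistics.pstdev(tail)


def rolling_mean(xs, window=10):
    """Trailing rolling mean; early entries average over the samples seen so far."""
    out = []
    for i in range(len(xs)):
        chunk = xs[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def residual_std(xs, window=10):
    """Standard deviation of the curve around its rolling-mean baseline."""
    baseline = rolling_mean(xs, window)
    return statistics.pstdev([x - b for x, b in zip(xs, baseline)])


def mean_abs_diff(xs):
    """Mean absolute step-to-step change of the curve."""
    return sum(abs(b - a) for a, b in zip(xs, xs[1:])) / (len(xs) - 1)
```

Applied to the normalized train loss of each model, `residual_std` and `mean_abs_diff` both shrink when the curve has less high-frequency jitter, which is the property the last bullet refers to.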

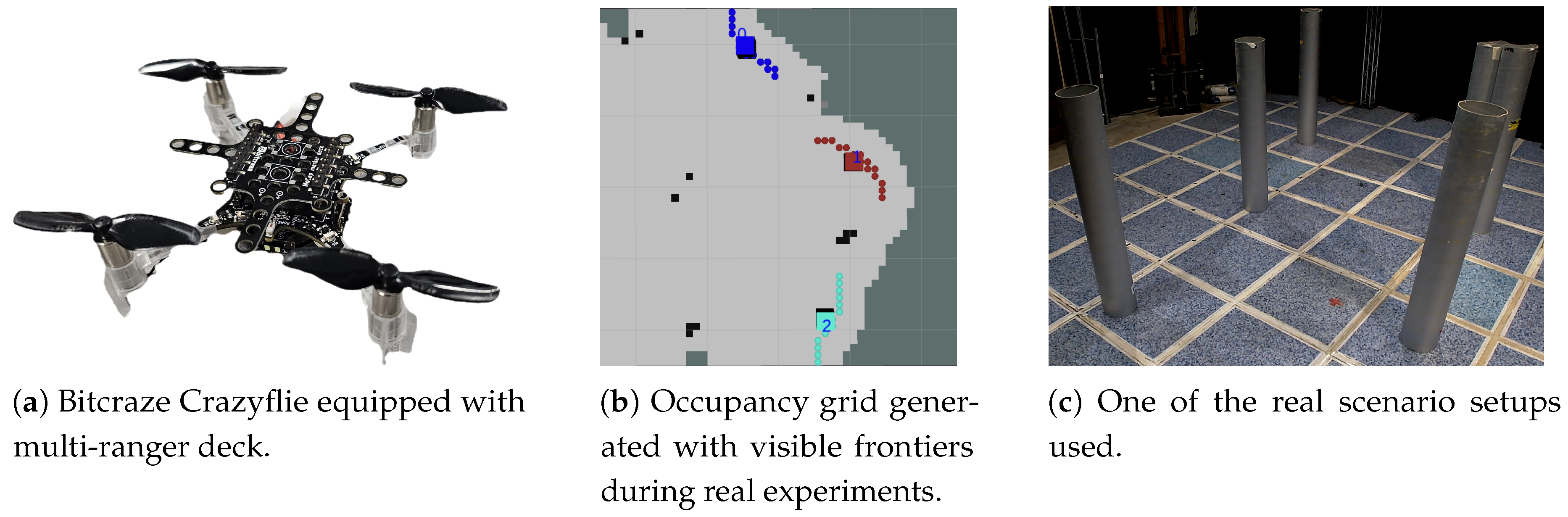

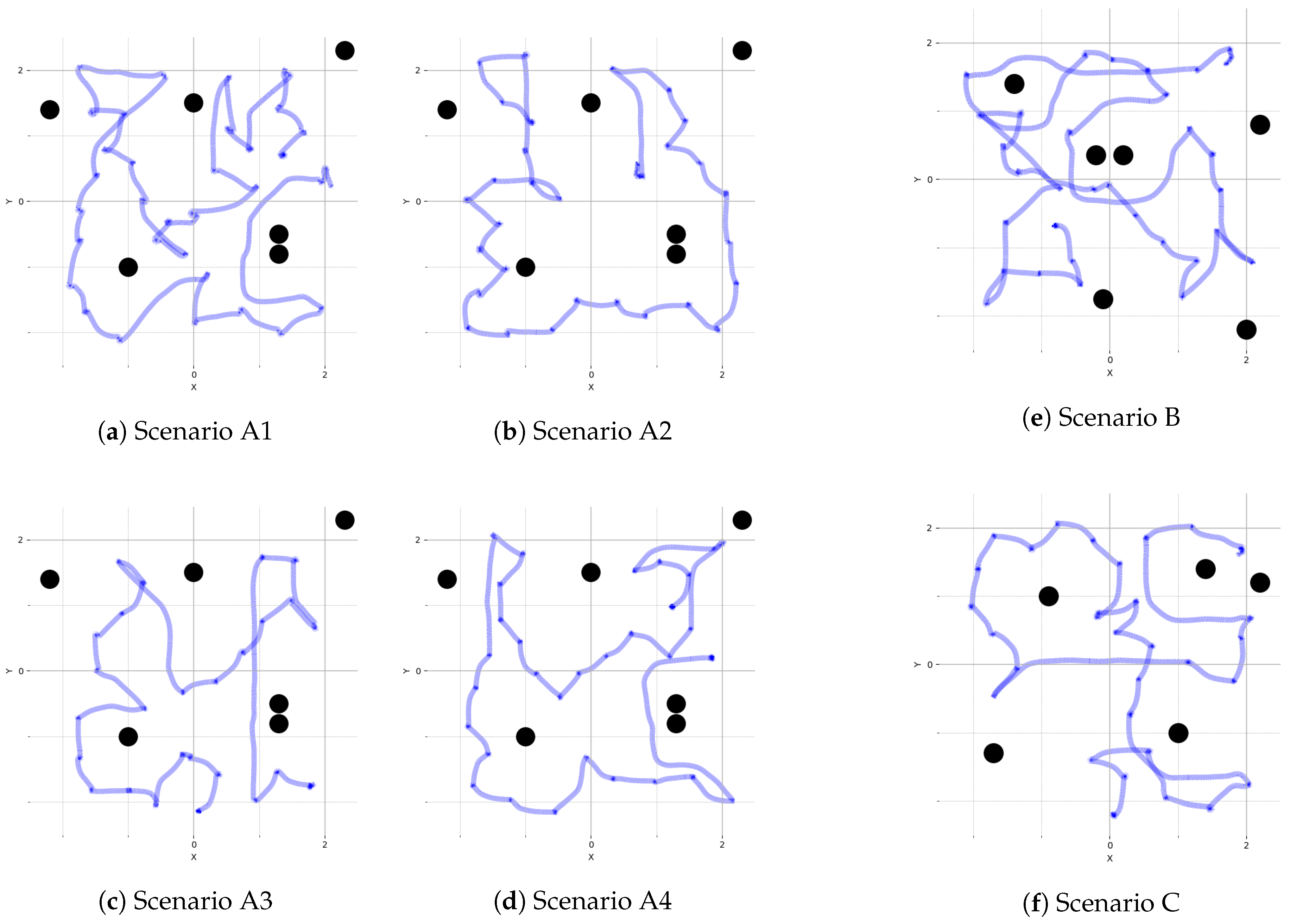

5.7. Real Flight Experiments

1. Our policy achieves zero-shot sim-to-real transfer by using the same laser-generated occupancy grid map, together with the frontier scalar features.

2. Although the policy was trained with a 200×200 map generated from a 400 m² simulated scenario, we are able to upscale the 50×50 map generated from a 25 m² real scenario, which gives the policy the flexibility to use state matrices of different sizes.

3. Our policy behaves consistently in minimizing the distance traveled per percentage of area explored, the objective for which it was trained.
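The map upscaling mentioned above can be illustrated with a nearest-neighbor resize, which repeats each cell and therefore keeps occupancy values discrete. The source does not state which interpolation was used, so this is only one plausible choice; the function name and the square-grid, integer-factor restrictions are assumptions of the sketch.

```python
def upscale_nearest(grid, target_size):
    """Nearest-neighbor upscaling of a square grid to target_size x target_size.

    Each source cell is repeated k x k times, where k = target_size // len(grid).
    """
    src_size = len(grid)
    if any(len(row) != src_size for row in grid):
        raise ValueError("grid must be square")
    if target_size % src_size != 0:
        raise ValueError("target_size must be an integer multiple of the grid size")
    k = target_size // src_size
    return [
        [grid[r // k][c // k] for c in range(target_size)]
        for r in range(target_size)
    ]


# Example: a 50x50 real-world map resized to the 200x200 input used in training.
real_map = [[0] * 50 for _ in range(50)]
policy_input = upscale_nearest(real_map, 200)
```

Because every source cell maps to a 4×4 block, frontier coordinates detected on the 50×50 map would need the same factor applied before being passed to the policy.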

6. Conclusion

Author Contributions

Funding

Data Availability Statement

DURC Statement

Conflicts of Interest

Abbreviations

References

1. Yamauchi, B. A frontier-based approach for autonomous exploration. In Proceedings of the IEEE International Symposium on Computational Intelligence in Robotics and Automation (CIRA), 1997; pp. 146–151.

2. Amigoni, F. Experimental evaluation of some exploration strategies for mobile robots. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2008; pp. 2818–2823.

3. Senarathne, P.N.; Wang, D. Incremental algorithms for safe and reachable frontier detection for robot exploration. Robot. Auton. Syst. 2015, 72, 189–206.

4. Lu, L.; Redondo, C.; Campoy, P. Optimal frontier-based autonomous exploration in unconstructed environment using RGB-D sensor. Sensors 2020, 20, 6507.

5. Leong, K. Reinforcement Learning with Frontier-Based Exploration via Autonomous Environment. arXiv 2023, arXiv:2307.07296. Available online: https://arxiv.org/abs/2307.07296.

6. Garaffa, L.C.; Basso, M.; Konzen, A.A.; Freitas, E.P. Reinforcement learning for mobile robotics exploration: A survey. IEEE Trans. Neural Netw. Learn. Syst. 2023, 34, 3796–3810.

7. Singh, B.; Kumar, R.; Singh, V.P. Reinforcement learning in robotic applications: a comprehensive survey. Springer Artif. Intell. Rev. 2022, 55, 945–990.

8. Arias-Perez, P.; Gautam, A.; Fernandez-Cortizas, M.; Perez-Saura, D.; Saripalli, S.; Campoy, P. Exploring unstructured environments using minimal sensing on cooperative nano-drones. IEEE Robot. Autom. Lett. 2024, 9, 11202–11209.

9. Fernandez-Cortizas, M.; Molina, M.; Arias-Perez, P.; Perez-Segui, R.; Perez-Saura, D.; Campoy, P. Aerostack2: A software framework for developing multi-robot aerial systems. arXiv 2024, arXiv:2303.18237.

10. Zhao, H.; Gao, J.; Lan, T.; Sun, C.; Sapp, B.; Varadarajan, B.; Shen, Y.; Shen, Y.; Chai, Y.; Schmid, C.; Li, C.; Anguelov, D. TNT: Target-driven trajectory prediction. arXiv 2020, arXiv:2008.08294.

11. Vaswani, A.; et al. Attention is all you need. In Adv. Neural Inf. Process. Syst. (NeurIPS); 2017; pp. 5998–6008.

12. Basilico, N.; Amigoni, F. Exploration strategies based on multi-criteria decision making for searching environments in rescue operations. Auton. Robots 2011, 31, 401–417.

13. Macenski, S.; Foote, T.; Gerkey, B.; Lalancette, C.; Woodall, W. Robot Operating System 2: Design, architecture, and uses in the wild. Sci. Robot. 2022, 7, eabm6074.

14. Cao, C.; Zhu, H.; Choset, H.; Zhang, J. TARE: A hierarchical framework for efficiently exploring complex 3D environments. In Robotics: Science and Systems (RSS); 2021.

15. Burgard, W.; Moors, M.; Stachniss, C.; Schneider, F. Coordinated multi-robot exploration. IEEE Trans. Robot. 2005, 21, 376–386.

16. González-Baños, H.H.; Latombe, J.-C. Navigation strategies for exploring indoor environments. Int. J. Robot. Res. 2002, 21, 829–848.

17. Umari, H.; Mukhopadhyay, S. Autonomous robotic exploration based on multiple rapidly-exploring randomized trees. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2017; pp. 1396–1402.

18. Deng, D.; Duan, R.; Liu, J.; Sheng, K.; Shimada, K. Robotic exploration of unknown 2D environment using a frontier-based automatic-differentiable information gain measure. In Proceedings of the IEEE/ASME International Conference on Advanced Intelligent Mechatronics (AIM), 2020.

19. Sun, Z.; Wu, B.; Xu, C.-Z.; Sarma, S.E.; Yang, J.; Kong, H. Frontier detection and reachability analysis for efficient 2D graph-SLAM based active exploration. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2020.

20. Zhang, T.; Yu, J.; Li, J.; Pang, M. A heuristic autonomous exploration method based on environmental information gain during quadrotor flight. Int. J. Adv. Robot. Syst. 2024, 21.

21. Fan, J.; Zhang, X.; Zou, Y. Hierarchical path planner for unknown space exploration using reinforcement learning-based intelligent frontier selection. Expert Syst. With Appl. 2023, 230, 120630.

22. Yu, B.; Kasaei, H.; Cao, M. Frontier semantic exploration for visual target navigation. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2023; pp. 4099–4105.

23. Iyer, G.; Jain, V.; Pratapa, A.; Setlur, A. Learning frontier selection for navigation in unseen structured environment. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2020; pp. 2130–2137.

24. Niroui, F.; Zhang, K.; Kashino, Z.; Nejat, G. Deep reinforcement learning robot for search and rescue applications: Exploration in unknown cluttered environments. IEEE Robot. Autom. Lett. 2019, 4, 610–617.

25. Cao, Y.; Hou, T.; Wang, Y.; Yi, X.; Sartoretti, G. Ariadne: A reinforcement learning approach using attention-based deep networks for exploration. arXiv 2023, arXiv:2301.11575.

26. Liu, Z.; Deshpande, M.; Qi, X.; Zhao, D.; Madhivanan, R.; Sen, A. Learning to Explore (L2E): Deep reinforcement learning-based autonomous exploration for household robot. Robotics: Science and Systems (RSS) Workshop, 2023.

27. Wang, R.; Zhang, J.; Lyu, M.; Yan, C.; Chen, Y. An improved frontier-based robot exploration strategy combined with deep reinforcement learning. Robot. Auton. Syst. 2024, 181, 104783.

28. Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal policy optimization algorithms. arXiv 2017, arXiv:1707.06347.

29. Raffin, A.; Hill, A.; Gleave, A.; Kanervisto, A.; Ernestus, M.; Dormann, N. Stable-Baselines3: Reliable Reinforcement Learning Implementations. J. Mach. Learn. Res. 2021, 22, 1–8.

30. Queeney, J.; Paschalidis, Y.; Cassandras, C.G. Generalized proximal policy optimization with sample reuse. Adv. Neural Inf. Process. Syst. (NeurIPS) 2021, 34, 11909–11919.

31. Schulman, J.; et al. Proximal policy optimization algorithms. arXiv 2017, arXiv:1707.06347.

| Component | Layer | Input Shape | Output Shape | Parameters |
|---|---|---|---|---|
| Map Feature Extractor (CNN)† | Conv2d (stride 4) + ReLU | | | 10,272 |
| | Conv2d (stride 2) + ReLU | | | 32,832 |
| | Conv2d (stride 2) + ReLU | | | 73,856 |
| | Flatten | | | 0 |
| | Linear + ReLU | | 256 | 3,965,184 |
| Map Encoder† | Linear + ReLU | 256 | 64 | 16,448 |
| Frontier Embedding Network (shared encoder) | Flatten (Identity) | 5 | 5 | 0 |
| | Linear + ReLU | 5 | 64 | 384 |
| Actor Cross-Attention | MultiheadAttention (4 heads) | Q, K/V | | 16,640 |
| | Residual addition | | | 0 |
| Scoring Network | Linear + ReLU | 64 | 64 | 4,160 |
| | Linear (score) | 64 | 1 | 65 |
| | Softmax + Multinomial sampling | m | index j | 0 |
| Critic Cross-Attention | MultiheadAttention (4 heads) | Q, K/V | | 16,640 |
| | Residual addition | | | 0 |
| Critic Head | Mean pooling | | 64 | 0 |
| | Linear + ReLU | 64 | 64 | 4,160 |
| | Linear (value) | 64 | 1 | 65 |
| Total trainable parameters | | | | ∼4.14M |
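The parameter counts in the table can be cross-checked with the standard formulas for convolutional, linear, and multi-head attention layers. The kernel sizes (8, 4, 3), channel widths (5 input channels, then 32, 64, 128), zero padding, and the 200×200 input used below do not appear in the table as extracted; they are values inferred here because they reproduce the listed counts exactly, so they should be read as an assumption and not as the authors' stated configuration.

```python
def conv2d_params(c_in, c_out, k):
    """Weights plus biases of a 2D convolution with a k x k kernel."""
    return c_in * c_out * k * k + c_out


def linear_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


def mha_params(d):
    """Multi-head attention with embedding size d: Q/K/V input projection plus output projection."""
    return 3 * (d * d + d) + (d * d + d)


def conv_out(size, k, stride):
    """Spatial output size of an unpadded convolution."""
    return (size - k) // stride + 1


# Assumed CNN configuration (inferred from the parameter counts, not stated in the source).
side = 200
side = conv_out(side, 8, 4)   # 49
side = conv_out(side, 4, 2)   # 23
side = conv_out(side, 3, 2)   # 11
flat = 128 * side * side      # flattened feature size fed to the first linear layer

counts = [
    conv2d_params(5, 32, 8),    # Conv2d, stride 4
    conv2d_params(32, 64, 4),   # Conv2d, stride 2
    conv2d_params(64, 128, 3),  # Conv2d, stride 2
    linear_params(flat, 256),   # CNN output layer
    linear_params(256, 64),     # map encoder
    linear_params(5, 64),       # frontier embedding
    mha_params(64),             # actor cross-attention
    linear_params(64, 64),      # scoring network, hidden
    linear_params(64, 1),       # scoring network, score
    mha_params(64),             # critic cross-attention
    linear_params(64, 64),      # critic head, hidden
    linear_params(64, 1),       # critic head, value
]
total = sum(counts)
```

Under these assumptions the layers sum to 4,140,706 parameters, in line with the ∼4.14M total reported in the table, and almost 96% of them sit in the single linear layer that follows the flattened CNN features.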

| Parameter | Value |
|---|---|
| Learning rate | 0.0003 |
| Rollout steps (n_steps) | 512 |
| Batch size | 64 |
| Number of epochs | 5 |
| Discount factor ($\gamma$) | 0.99 |
| GAE lambda | 0.95 |
| Clip range | 0.2 |
| Clip range VF | None |
| Normalize advantage | True |
| Entropy coefficient | 0.0 |
| Value function coefficient | 0.5 |
| Max gradient norm | 0.5 |
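The parameter names in this table match the keyword arguments of the PPO class in Stable-Baselines3 [29]. Assuming that library was used for training, the configuration could be expressed as below. The `build_model` helper, the `"MultiInputPolicy"` policy string, and the single-environment default are illustrative assumptions; the paper's custom attention policy is not reproduced here.

```python
# Hyperparameters copied from the table; keys follow Stable-Baselines3 PPO keyword names.
PPO_KWARGS = {
    "learning_rate": 3e-4,
    "n_steps": 512,
    "batch_size": 64,
    "n_epochs": 5,
    "gamma": 0.99,
    "gae_lambda": 0.95,
    "clip_range": 0.2,
    "clip_range_vf": None,
    "normalize_advantage": True,
    "ent_coef": 0.0,
    "vf_coef": 0.5,
    "max_grad_norm": 0.5,
}


def gradient_updates_per_rollout(n_steps, batch_size, n_epochs, n_envs=1):
    """Number of minibatch gradient steps taken after each rollout is collected."""
    minibatches = (n_steps * n_envs) // batch_size
    return minibatches * n_epochs


def build_model(env, policy="MultiInputPolicy"):
    """Hypothetical helper: create a PPO model with the table's settings.

    The import is done lazily so the module can be loaded without the library installed.
    """
    from stable_baselines3 import PPO
    return PPO(policy, env, **PPO_KWARGS)
```

With a single environment, 512 rollout steps split into minibatches of 64 and reused for 5 epochs give 40 gradient updates per rollout.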

| Method | 20 Obstacles: Mean Path Length | 20 Obstacles: Std. Dev. | 40 Obstacles: Mean Path Length | 40 Obstacles: Std. Dev. | 80 Obstacles: Mean Path Length | 80 Obstacles: Std. Dev. |
|---|---|---|---|---|---|---|
| Random | 311.32 | ± 35.92 | 311.83 | ± 48.10 | 320.51 | ± 44.33 |
| Information Gain | 211.58 | ± 25.47 | 214.98 | ± 24.68 | 210.27 | ± 38.34 |
| Nearest Frontier | 128.33 | ± 11.32 | 123.14 | ± 12.41 | 131.63 | ± 9.97 |
| Hybrid 75-25 | 157.19 | ± 15.92 | 154.02 | ± 12.76 | 165.12 | ± 12.62 |
| Hybrid 50-50 | 148.86 | ± 13.07 | 147.83 | ± 14.80 | 147.45 | ± 14.83 |
| Hybrid 25-75 | 137.85 | ± 11.90 | 139.77 | ± 11.48 | 142.05 | ± 14.82 |
| TARE Local | 134.99 | ± 11.13 | 133.30 | ± 9.84 | 135.29 | ± 14.66 |
| Ours | 113.42 | ± 8.74 | 117.98 | ± 6.98 | 119.02 | ± 6.44 |
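To make the comparison in this table easier to read, the relative reduction in mean path length can be derived directly from the listed values. The snippet below is only arithmetic on the table; the dictionary layout and function name are choices made for the example.

```python
# Mean path lengths from the table, ordered as (20, 40, 80) obstacles.
MEAN_PATH = {
    "Nearest Frontier": (128.33, 123.14, 131.63),
    "TARE Local": (134.99, 133.30, 135.29),
    "Ours": (113.42, 117.98, 119.02),
}


def relative_reduction(baseline, ours):
    """Percentage by which `ours` shortens the path compared with `baseline`."""
    return 100.0 * (baseline - ours) / baseline


reductions = {
    name: [relative_reduction(b, o) for b, o in zip(values, MEAN_PATH["Ours"])]
    for name, values in MEAN_PATH.items()
    if name != "Ours"
}

for name, values in reductions.items():
    print(name, [f"{v:.1f}%" for v in values])
```

Against Nearest Frontier, the strongest baseline in all three settings, the reduction is about 11.6%, 4.2%, and 9.6% for 20, 40, and 80 obstacles respectively.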

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).