Submitted: 07 April 2026

Posted: 27 April 2026

Abstract

Keywords:

1. Introduction

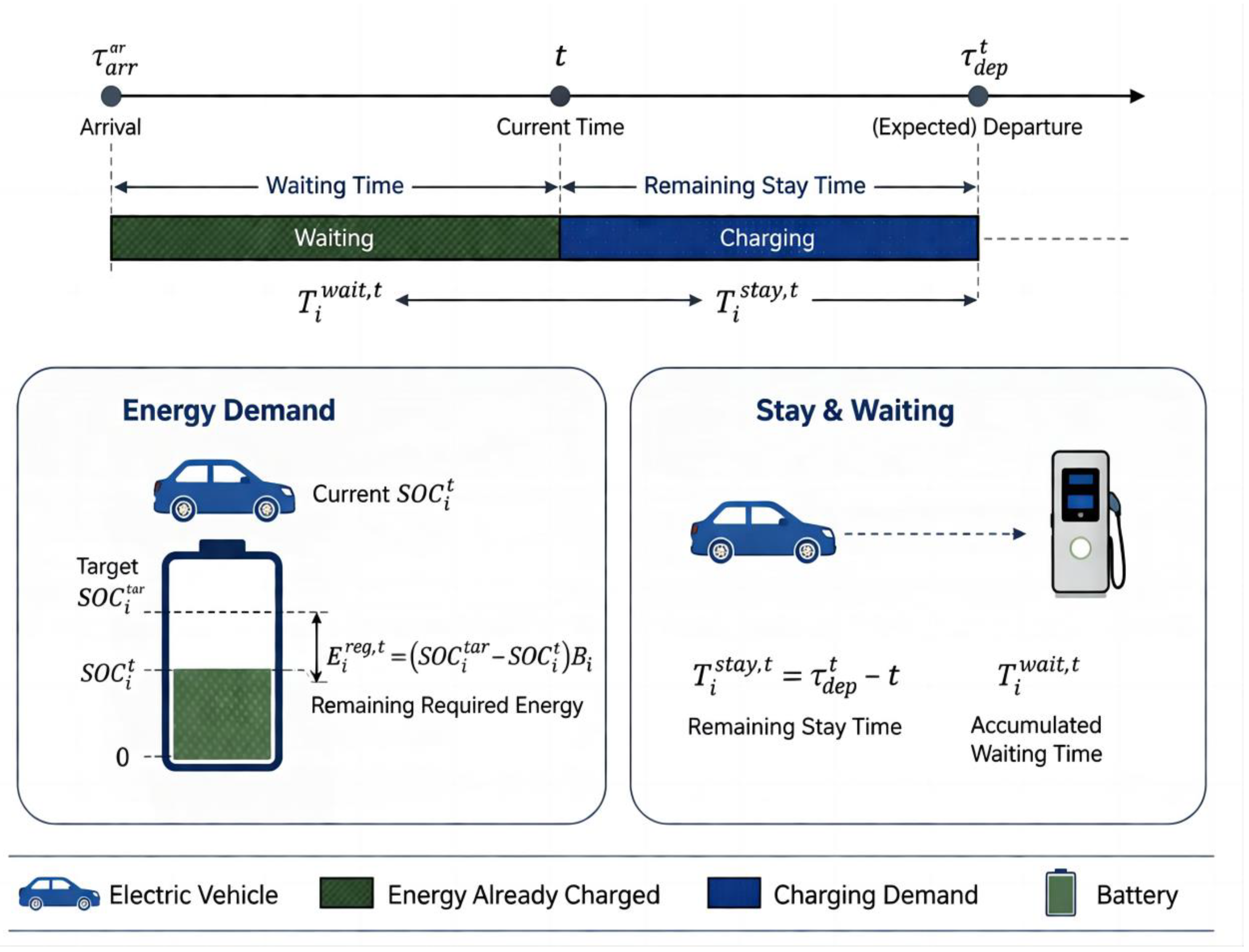

2. System Modeling

2.7. Inter-Stage Coupling Mechanism

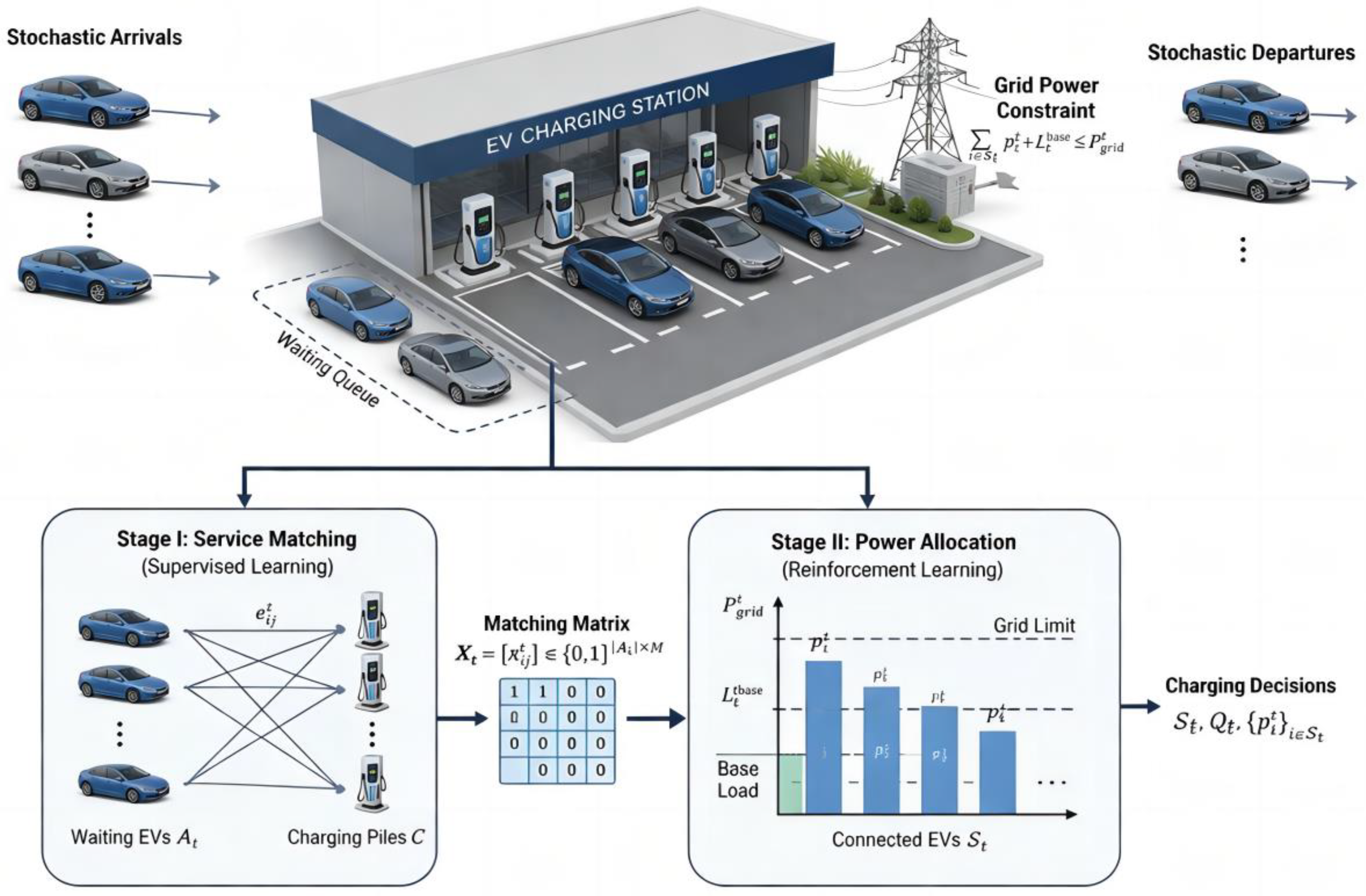

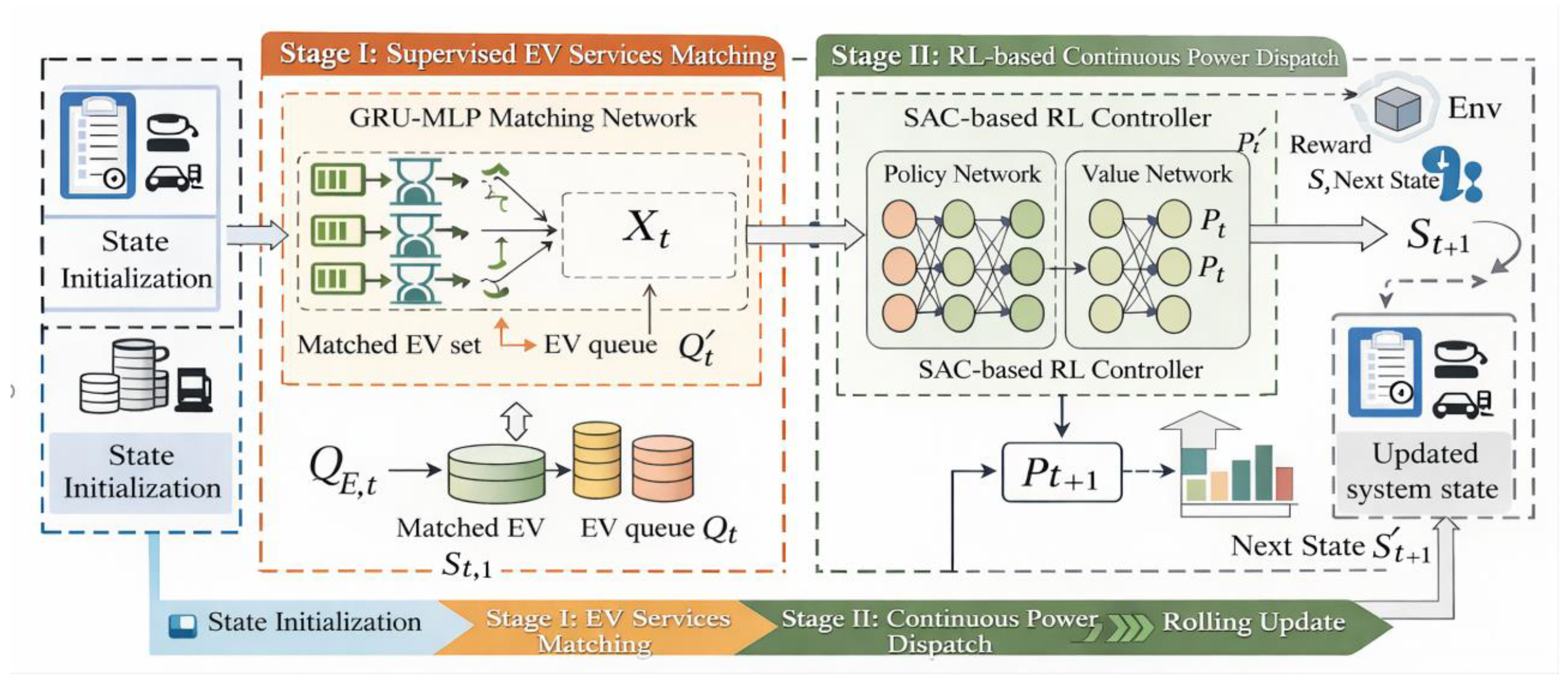

3. Two-Stage Coordinated Optimization Method of SMPD

3.1. Overall SMPD Framework

3.2.2. Matching Score Model

3.2.3. Expert Label Generation and Supervised Training

- (1) Policy objective
- (2) Value update mechanism
- (3) Action execution and constraint handling

3.4. Two-Stage Coupled Decision-Making and Rolling Update Mechanism

| Algorithm 1: The proposed SMPD framework |
| --- |
| Input: Initial system state; decision horizon; trained GRU-MLP matching network; trained SAC-based power dispatch policy. |
| Output: Service matching results; power dispatch results; system state trajectory. |
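
The inputs and outputs listed in Algorithm 1 suggest a rolling loop in which each decision step first matches services and then dispatches power before the state is updated. The following is a minimal sketch of that control flow only, not the authors' implementation: `run_two_stage`, `toy_match`, `toy_dispatch`, and `toy_step` are hypothetical names, the toy functions are simple stand-ins for the trained GRU-MLP matching network and the SAC-based dispatch policy, and the state is assumed to be a plain list of remaining energy demands.

```python
from typing import Callable, Dict, List, Tuple

State = List[float]  # assumed toy state: remaining demand per vehicle


def run_two_stage(
    initial_state: State,
    horizon: int,
    match_fn: Callable[[State], List[int]],
    dispatch_fn: Callable[[State, List[int]], Dict[int, float]],
    step_fn: Callable[[State, List[int], Dict[int, float]], State],
) -> Tuple[List[List[int]], List[Dict[int, float]], List[State]]:
    """Roll a two-stage decision (matching, then dispatch) over the horizon."""
    state = initial_state
    matchings, dispatches, trajectory = [], [], [initial_state]
    for _ in range(horizon):
        matching = match_fn(state)             # stage 1: service matching
        powers = dispatch_fn(state, matching)  # stage 2: power dispatch
        state = step_fn(state, matching, powers)  # rolling state update
        matchings.append(matching)
        dispatches.append(powers)
        trajectory.append(state)
    return matchings, dispatches, trajectory


def toy_match(state: State) -> List[int]:
    # Stand-in for the matching network: serve the two largest open demands.
    order = sorted(range(len(state)), key=lambda i: state[i], reverse=True)
    return [i for i in order[:2] if state[i] > 0]


def toy_dispatch(state: State, matching: List[int]) -> Dict[int, float]:
    # Stand-in for the dispatch policy: at most 5 units per matched vehicle.
    return {i: min(5.0, state[i]) for i in matching}


def toy_step(state: State, matching: List[int], powers: Dict[int, float]) -> State:
    # Subtract the delivered energy from each vehicle's remaining demand.
    return [d - powers.get(i, 0.0) for i, d in enumerate(state)]


matchings, dispatches, trajectory = run_two_stage(
    [8.0, 3.0, 6.0], 3, toy_match, toy_dispatch, toy_step
)
```

Passing the two stages in as callables keeps the loop independent of how either model is trained, which is one plausible way to read the coupling between the two stages; the actual state representation and constraint handling would follow the system model in Section 2.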

4. Experimental Design and Results Analysis

4.1. Experimental Scenario and Parameter Settings

4.2. Benchmark Algorithms and Evaluation Metrics

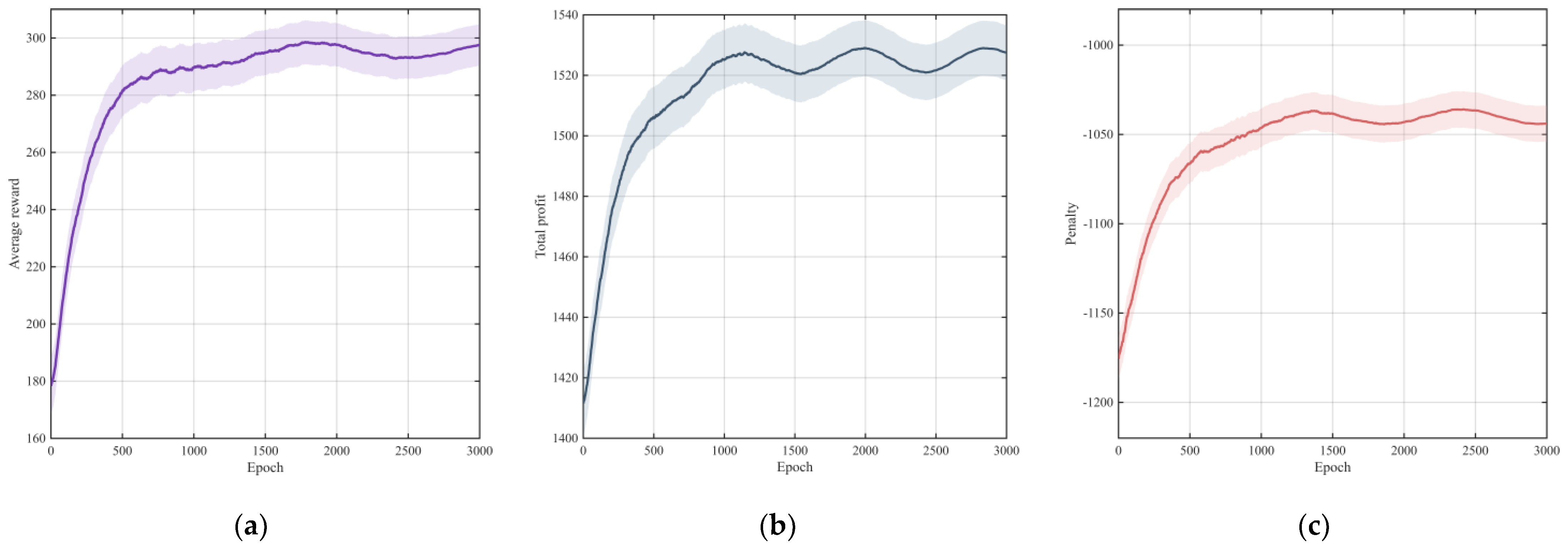

4.3. Learning Performance Analysis

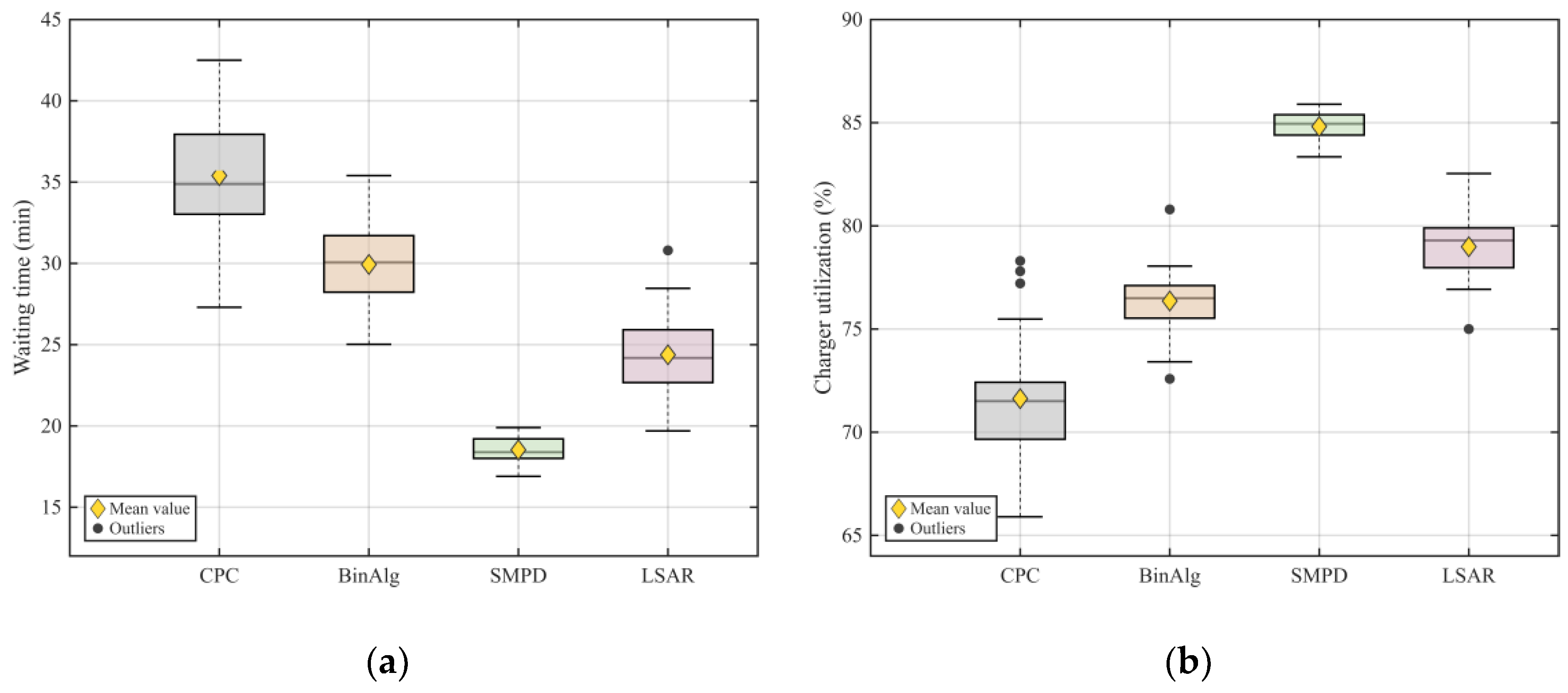

4.4. Overall Performance Comparison

| Method | Waiting time (min) | Charger utilization (%) | Average profit | Penalty |
| --- | --- | --- | --- | --- |
| CPC | 34.8 ± 2.7 | 71.5 ± 2.1 | 648.4 ± 18.6 | -1186.2 ± 24.3 |
| BinAlg | 29.6 ± 2.1 | 75.9 ± 1.8 | 662.1 ± 16.9 | -1129.4 ± 21.7 |
| LSAR | 24.7 ± 1.8 | 79.3 ± 1.5 | 675.8 ± 14.2 | -1094.7 ± 18.5 |
| SMPD | 18.4 ± 1.2 | 84.8 ± 1.1 | 819.6 ± 11.7 | -1016.3 ± 14.1 |

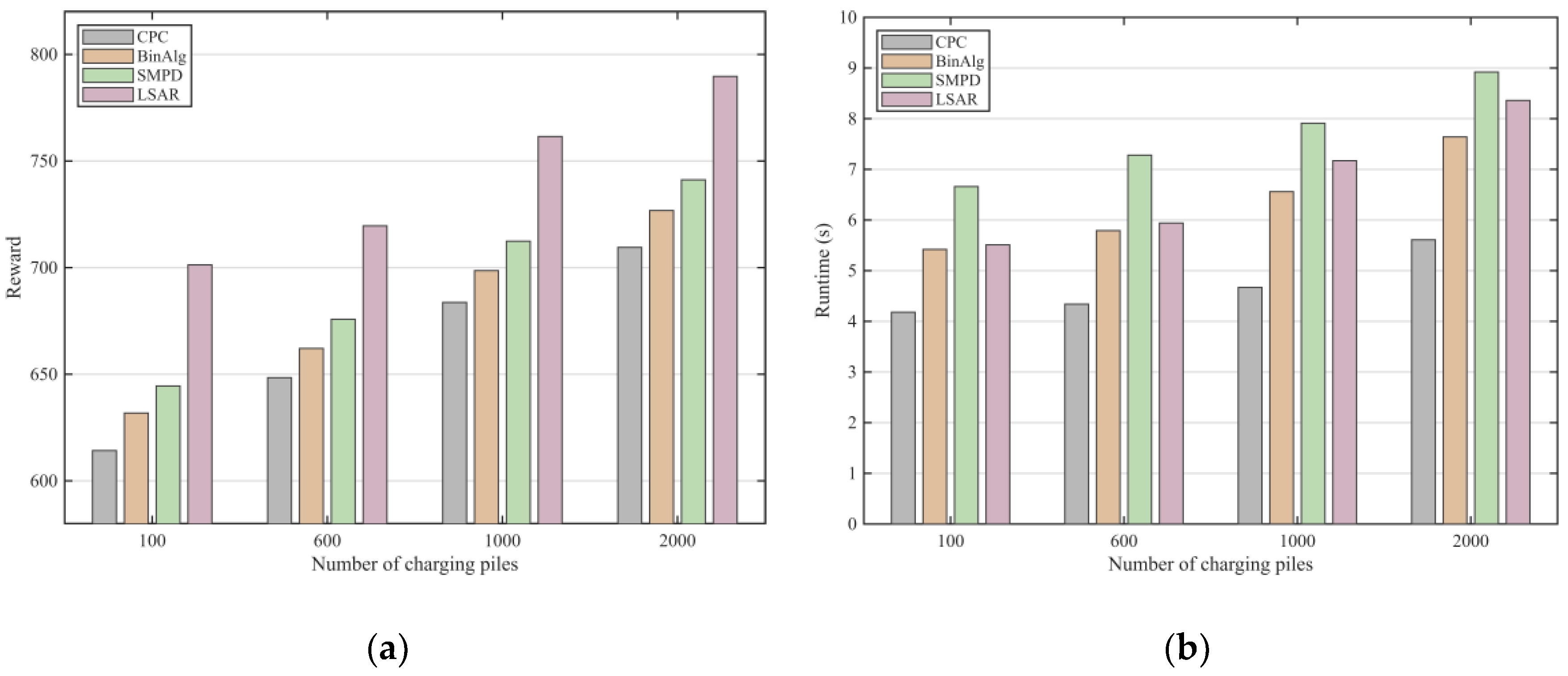
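To make the comparison in the table above easier to read, the relative gaps between SMPD and LSAR (the strongest baseline row in that table) can be worked out directly from the reported means. The snippet below is only an arithmetic sketch: it uses the mean values as printed, ignores the ± spreads, and the names `means` and `relative_change` are illustrative rather than taken from the paper.

```python
# Mean values copied from the overall performance table (± spreads omitted).
means = {
    "LSAR": {"wait": 24.7, "util": 79.3, "profit": 675.8, "penalty": -1094.7},
    "SMPD": {"wait": 18.4, "util": 84.8, "profit": 819.6, "penalty": -1016.3},
}


def relative_change(new: float, old: float) -> float:
    """Percentage change of `new` relative to the magnitude of `old`."""
    return (new - old) / abs(old) * 100.0


smpd, lsar = means["SMPD"], means["LSAR"]

wait_change = relative_change(smpd["wait"], lsar["wait"])            # negative = shorter wait
profit_change = relative_change(smpd["profit"], lsar["profit"])      # positive = higher profit
penalty_change = relative_change(smpd["penalty"], lsar["penalty"])   # positive = smaller penalty
util_gain_points = smpd["util"] - lsar["util"]                       # percentage points

print(f"waiting time: {wait_change:.1f}%")
print(f"profit: {profit_change:.1f}%")
print(f"penalty: {penalty_change:.1f}%")
print(f"utilization: {util_gain_points:.1f} points")
```

Because the penalty is reported as a negative number, a positive relative change here means the penalty moved toward zero.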

4.5. Reward Comparison Under Different Infrastructure Scales

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Method | Reward (=100) | Runtime (s) (=100) | Reward (=600) | Runtime (s) (=600) | Reward (=1000) | Runtime (s) (=1000) | Reward (=2000) | Runtime (s) (=2000) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| CPC | 614.2 | 4.18 | 648.4 | 4.34 | 683.7 | 4.67 | 709.5 | 5.61 |
| BinAlg | 631.8 | 5.42 | 662.1 | 5.79 | 698.6 | 6.56 | 726.8 | 7.64 |
| SMPD | 644.5 | 6.66 | 675.8 | 7.28 | 712.4 | 7.91 | 741.2 | 8.92 |
| LSAR | 701.3 | 5.51 | 719.6 | 5.94 | 761.5 | 7.17 | 789.7 | 8.36 |

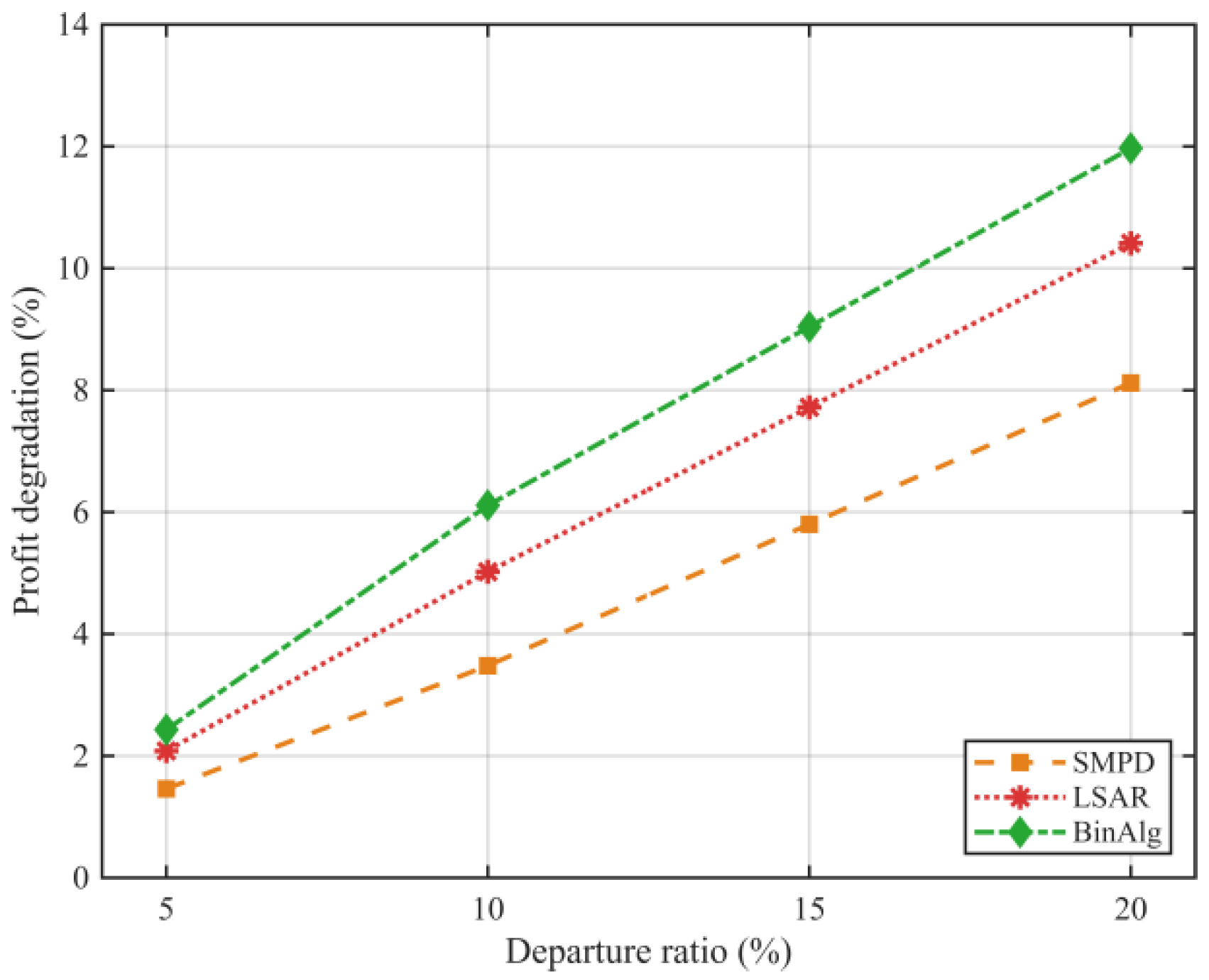

| Departure ratio | BinAlg | LSAR | SMPD |
| --- | --- | --- | --- |
| 5% | -2.43% | -2.08% | -1.46% |
| 10% | -6.11% | -5.02% | -3.48% |
| 15% | -9.04% | -7.72% | -5.80% |
| 20% | -11.97% | -10.41% | -8.12% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).