Submitted: 24 April 2026

Posted: 24 April 2026


Abstract

Keywords:

1. Introduction

- We conduct the first comprehensive empirical study of open-source LLMs synthesizing verifiable Dafny code from real-world requirements, evaluating seven LLMs across three prompting strategies to establish baseline performance in formal code generation.

- We introduce the NL2VC-60 dataset, a novel benchmark of 60 hand-authored programs that bridges the gap between simple textbook tasks and the nuanced demands of competitive programming tasks.

- We provide the first categorization of Dafny-specific compilation and verification errors in the literature, creating a diagnostic dataset of model failure modes to guide future improvements in the synthesis of formal verification.

- We establish a rigorous evaluation pipeline for functional correctness by being the first to integrate extensive uDebug community test suites, ensuring synthesized programs are both formally verified and correct across thousands of real-world edge cases.

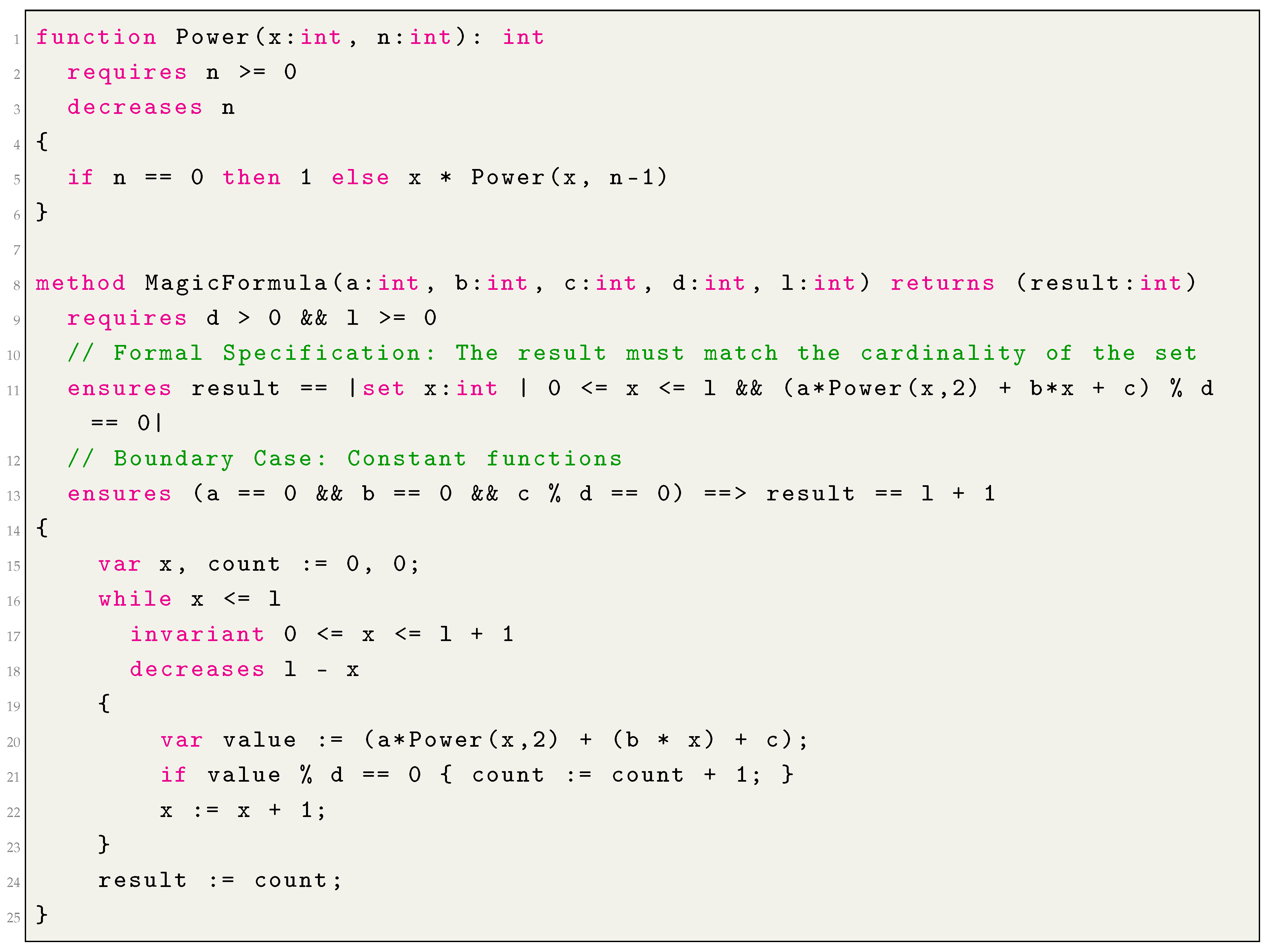

2. Motivational Example

Listing 1. Dafny implementation (Magic Formula problem)

3. Background

3.1. Dafny: A Verification-Aware Programming Language

3.2. Large Language Models and Open-Weight Models

3.3. Prompt Engineering and Self-Healing

3.4. uDebug: Beyond Vacuous Verification

- Formal Layer: The Dafny verifier proves that the code is logically consistent with its formal specifications.

- Functional Layer: uDebug ensures the code is semantically correct by testing the generated code against extreme edge cases and boundary conditions contributed by the competitive programming community.
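The two layers can be combined into a single acceptance check. The following is a minimal Python sketch under stated assumptions, not the pipeline used in the study: `LayeredResult`, `check_candidate`, and the two toy programs are hypothetical names, the formal-layer outcome is passed in as a boolean instead of being obtained by running Dafny, and the three test cases are the sample input/output pairs of the relational-operator problem shown in the problem description table.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class LayeredResult:
    verified: bool      # formal layer: did the verifier accept the program?
    tests_passed: int   # functional layer: number of matching test outputs
    tests_total: int

    @property
    def accepted(self) -> bool:
        # A candidate counts only if it is verified AND passes every test.
        return self.verified and self.tests_passed == self.tests_total


def check_candidate(
    verified: bool,
    run_program: Callable[[str], str],
    cases: List[Tuple[str, str]],
) -> LayeredResult:
    """Apply the functional layer only to candidates that passed the formal layer."""
    if not verified:
        return LayeredResult(False, 0, len(cases))
    passed = sum(
        1 for given, expected in cases if run_program(given).strip() == expected.strip()
    )
    return LayeredResult(True, passed, len(cases))


# Sample cases for the relational-operator task (input "a b" -> operator).
CASES = [("10 20", "<"), ("20 10", ">"), ("10 10", "=")]


def vacuous_program(line: str) -> str:
    # Stands in for a program verified against a too-weak specification.
    return "="


def correct_program(line: str) -> str:
    a, b = map(int, line.split())
    return "<" if a < b else ">" if a > b else "="


weak = check_candidate(True, vacuous_program, CASES)
good = check_candidate(True, correct_program, CASES)
print(weak.accepted, weak.tests_passed)  # False 1
print(good.accepted, good.tests_passed)  # True 3
```

The vacuous program illustrates why the functional layer matters: a candidate can be accepted by a verifier against a weak specification and still fail most test cases.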

4. Summary of the Literature

4.1. Program Synthesis and Verification with Dafny

4.2. LLMs for Formal Methods and Software Engineering

4.3. Benchmarking Dafny Generation

5. Approach

5.1. Research Questions

- RQ1 (Contextless Prompting): How effective are LLMs at synthesizing fully verified Dafny methods when provided only with a natural language description, without any formal structural hints?

- RQ2 (Signature Prompting): How does providing a formal method signature and accompanying functional tests affect the initial synthesis success rate compared to contextless prompting?

- RQ3 (Self-Healing Capabilities): To what extent can an iterative feedback loop recover failed synthesis attempts under varying initial conditions?

- RQ3a (Self-Healing with Contextless Prompting): Can LLMs repair verification failures when the initial attempt was generated from the natural language description alone?

- RQ3b (Self-Healing with Method Signature): Does a pre-defined method signature give the self-healing process a better starting point, leading to higher repair rates than contextless healing?

- RQ4 (Error Analysis): To what extent can error descriptions help overcome errors when using the signature prompt and the self-healing method?
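The iterative feedback loop behind RQ3 can be sketched as follows. This is an illustrative Python outline, not the study's implementation: `self_heal`, `stub_generate`, and `stub_verify` are hypothetical names, the model and the verifier are injected as plain callables, the repair prompt wording is invented, and the round budget of 3 is an assumption rather than a value reported here.

```python
from typing import Callable, Optional, Tuple


def self_heal(
    generate: Callable[[str], str],
    verify: Callable[[str], Tuple[bool, str]],
    base_prompt: str,
    max_rounds: int = 3,  # assumed budget, not a value taken from the study
) -> Tuple[Optional[str], int]:
    """Return (verified_code or None, rounds_used)."""
    prompt = base_prompt
    for round_no in range(1, max_rounds + 1):
        code = generate(prompt)
        ok, feedback = verify(code)
        if ok:
            return code, round_no
        # Feed the failed attempt and the verifier output back to the model.
        prompt = (
            f"{base_prompt}\n\nPrevious attempt:\n{code}\n\n"
            f"Verifier output:\n{feedback}\n\nRepair the program."
        )
    return None, max_rounds


# Stub model: succeeds only once it has seen verifier feedback.
def stub_generate(prompt: str) -> str:
    return "fixed" if "Verifier output" in prompt else "broken"


# Stub verifier: accepts exactly the string "fixed"; the message is a placeholder.
def stub_verify(code: str) -> Tuple[bool, str]:
    ok = code == "fixed"
    return ok, "" if ok else "postcondition might not hold"


print(self_heal(stub_generate, stub_verify, "Write a Dafny method."))  # ('fixed', 2)
```

In this framing, RQ3a and RQ3b differ only in `base_prompt`: the natural language description alone, or the description together with the method signature.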

5.2. Problem Curation and Abstraction

5.2.1. Test Dataset

5.2.2. Problems Generalization

5.2.3. Empirical Problem Selection

5.3. Human Written Dataset: NL2VC-60

5.4. Functional Validation via uDebug

5.5. LLM Selection and Evaluation Setup

5.6. Prompt Design

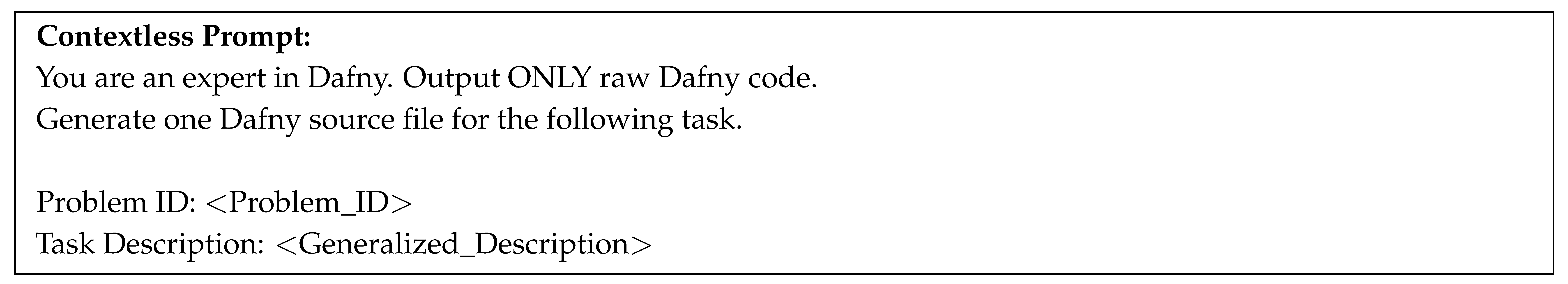

5.6.1. RQ1 [Contextless Prompting]

5.6.2. RQ2 [Method Signature Prompting]

5.6.3. RQ3 [Self-Healing Prompting]

5.7. Evaluation Metrics

5.7.1. Quantitative Metrics: verify@k and functional@k

5.7.2. Qualitative Assessment: Specification Strength and Error Analysis

5.8. Temperature Tuning

5.9. Error Analysis

5.9.1. Syntax Errors

5.9.2. Semantic / Type Errors

5.9.3. Verification Errors

5.10. Experimental Setup

6. Results

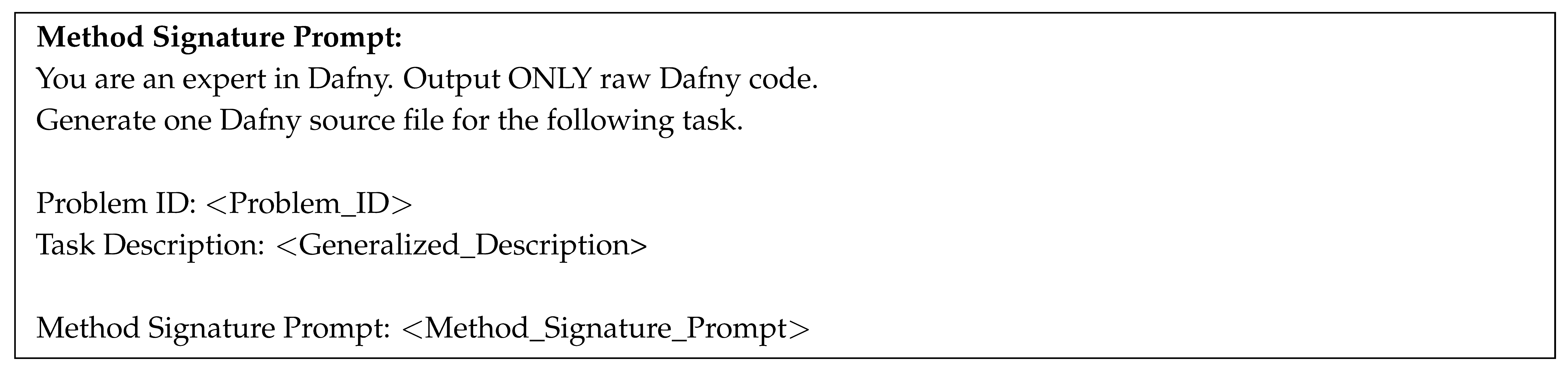

6.1. RQ1: Performance of Contextless Prompting

- Unlike the other general-purpose models, Gemma 4-31B showed a surprising aptitude for generating verifiable programs even without external context. The model achieved a peak verify@5 success rate of 54.55% at a temperature of 0.2. This performance suggests that its pretraining may have involved a higher density of formal or algorithmic logic, allowing it to guess correct loop invariants and post-conditions that other models completely missed.

- Codestral was the only other model to consistently yield results, reaching a peak verify@5 of 27.27% at T = 0.8. Its success at higher temperatures suggests that, while the model possesses a basic syntactic intuition for Dafny, it often requires more stochastic exploration to arrive at the precise formal annotations needed to satisfy the Z3 SMT solver.

- The 0% success rate of the remaining five models highlights a fundamental challenge in the field: even highly capable models struggle to infer complex formal specifications from scratch. These findings reinforce the need for more structured prompting techniques or retrieval augmentation to bridge the gap between informal requirements and mathematical proof.
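The percentages above are ratios of solved problems to the 11 problems evaluated per setting (for example, 6/11 = 54.55% and 3/11 = 27.27%). The sketch below shows one way such a figure could be computed, assuming verify@k counts a problem as solved when at least one of its first k samples passes the verifier; that definition, the function name `verify_at_k`, and the per-problem outcomes are illustrative assumptions, since only aggregated counts are reported in the results table.

```python
from typing import List, Tuple


def verify_at_k(outcomes: List[List[bool]], k: int) -> Tuple[int, int, float]:
    """outcomes[i][j] is True when sample j for problem i passed the verifier."""
    solved = sum(1 for attempts in outcomes if any(attempts[:k]))
    total = len(outcomes)
    return solved, total, round(100.0 * solved / total, 2)


# Illustrative outcomes for 11 problems with 5 samples each (not the study's raw data):
# 6 problems have a verified second sample, 5 problems have none.
outcomes = [[False, True, False, False, False]] * 6 + [[False] * 5] * 5

print(verify_at_k(outcomes, 5))  # (6, 11, 54.55)
print(verify_at_k(outcomes, 1))  # (0, 11, 0.0)
```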

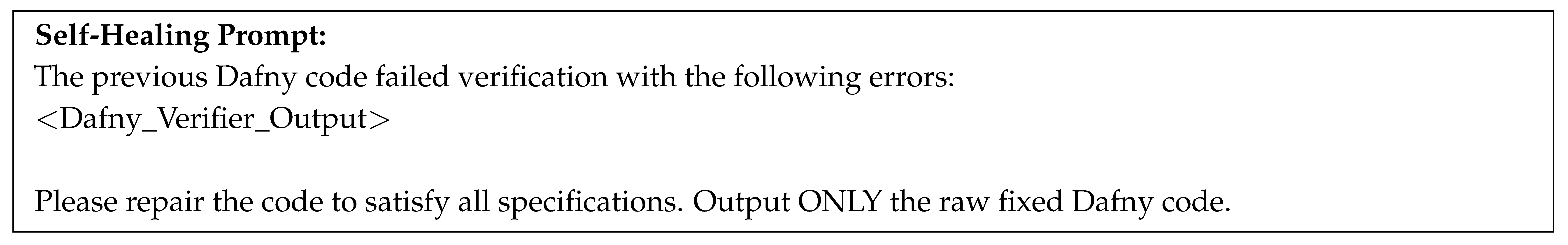

6.2. RQ2: Performance of Signature Prompting

- The most striking improvement was observed in GPT-OSS-120B. While this model recorded a 0% success rate in RQ1, the introduction of method signatures allowed it to achieve a peak verify@5 rate of 63.64% at T = 0.8. This suggests that the model possesses latent knowledge of formal verification and Dafny logic but struggles to structure the initial method skeleton from raw requirements on its own.

- Surprisingly, Qwen 3.5-9B emerged as the top performer in this category, reaching a peak verify@5 success rate of 72.73% at T = 0.8. This indicates that signature prompting provides enough constraint for smaller models to concentrate on the verification logic itself, allowing them to outperform much larger general-purpose models.

- Unlike the zero-shot results, peak performance under signature prompting was consistently achieved at higher temperatures. This suggests that once the structural constraints are fixed via the signature, the models benefit from increased stochastic exploration to identify the precise mathematical formulations, such as the specific loop invariants or termination measures required to satisfy the SMT solver.

- All seven models demonstrated signs of life in this setting, with even the weakest models surpassing a 30% success rate at their peak. This confirms that the primary bottleneck in verifiable code generation is not necessarily the logic itself, but the difficulty of mapping informal natural language to the rigid formal signatures required by the Dafny compiler.
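The peak figures quoted above can be read directly off the signature-prompting results table. As a small worked example, the sketch below selects the best temperature for two models from their verify@5 success counts (out of 11 problems); the counts are copied from that table, while the helper name `peak_setting` and the tie-breaking rule are illustrative choices.

```python
from typing import Dict, Tuple

TOTAL_PROBLEMS = 11

# verify@5 success counts per temperature, copied from the signature-prompting table.
VERIFY_AT_5: Dict[str, Dict[float, int]] = {
    "GPT-OSS-120B": {0.0: 6, 0.2: 6, 0.4: 6, 0.6: 6, 0.8: 7},
    "Qwen 3.5-9B": {0.0: 2, 0.2: 5, 0.4: 4, 0.6: 3, 0.8: 8},
}


def peak_setting(counts: Dict[float, int], total: int = TOTAL_PROBLEMS) -> Tuple[float, float]:
    """Return (temperature, success rate in %) for the best-performing temperature."""
    best_t = max(counts, key=lambda t: counts[t])  # first maximum wins on ties
    return best_t, round(100.0 * counts[best_t] / total, 2)


for model, counts in VERIFY_AT_5.items():
    print(model, peak_setting(counts))
# GPT-OSS-120B (0.8, 63.64)
# Qwen 3.5-9B (0.8, 72.73)
```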

6.3. RQ3: Performance of Self-Healing Mechanisms

- RQ3a: Contextless Healing: When attempting to heal from the zero-shot failures of RQ1, most models remained stagnant. GPT-OSS-120B and several others continued to post 0% success rates. This finding suggests that, without an initial structural foundation, compiler error messages are too abstract for the model to navigate toward a valid solution. However, Gemma 4-31B proved to be a notable exception, demonstrating a remarkable self-correction capability. By leveraging compiler feedback, it achieved a peak verify@5 rate of 90.91% at two temperature settings, indicating that it can effectively use error logs to infer missing loop invariants and post-conditions.

- RQ3b: Signature-Guided Healing: Self-healing proved most potent when initiated from the signature-guided prompts of RQ2. In this setting, the structural skeleton provided enough stability for the compiler feedback to be actionable. GPT-OSS-120B demonstrated the most significant turnaround, rising to a peak success rate of 81.82%. This suggests that when the method signature is fixed, larger models are efficient at using error feedback to refine mathematical proofs and satisfy the SMT solver.

- Unlike previous rounds, several models (such as Qwen 3 Coder 30B) reached a plateau where performance remained consistent across temperatures in the signature-guided setting. Conversely, Gemma 4-31B maintained high performance (over 80%) in both RQ3a and RQ3b, establishing itself as the most robust model for autonomous Dafny development, regardless of the initial prompt’s context level.

6.4. RQ4: Qualitative Error Analysis and Failure Taxonomy

6.4.1. Syntactic Fragility and Contextual Dependence

6.4.2. Semantic Drift and Invariant Generation

6.4.3. Functional Robustness and Vacuity

| Model | Prompt Strategy | Total Runs | Syntax Errors | Semantic/Type Errors | Verification Errors | Verified |

|---|---|---|---|---|---|---|

| GPT-OSS-120B | Contextless | 564 | 435 | 45 | 0 | 0 |

| | Signature Prompt | 816 | 397 | 53 | 111 | 150 |

| | Self-Healing | 1,134 | 620 | 471 | 5 | 20 |

| GPT-OSS-20B | Contextless | 672 | 597 | 75 | 0 | 0 |

| | Signature Prompt | 816 | 397 | 53 | 111 | 150 |

| | Self-Healing | 1,564 | 793 | 149 | 166 | 395 |

| Codestral-22B | Contextless | 1,285 | 732 | 518 | 0 | 33 |

| | Signature Prompt | 792 | 407 | 217 | 0 | 138 |

| | Self-Healing | 1,666 | 1,116 | 188 | 58 | 217 |

| Qwen 3.6-35B | Contextless | 470 | 9 | 23 | 0 | 438 |

| | Signature Prompt | 495 | 0 | 29 | 0 | 466 |

| | Self-Healing | 827 | 39 | 127 | 10 | 651 |

| Qwen 3-Coder-30B | Contextless | 1,510 | 579 | 657 | 15 | 46 |

| | Signature Prompt | 205 | 53 | 16 | 47 | 29 |

| | Self-Healing | 1,893 | 589 | 569 | 351 | 101 |

| Qwen 3.5-9B | Contextless | 910 | 430 | 350 | 23 | 56 |

| | Signature Prompt | 861 | 557 | 77 | 0 | 224 |

| | Self-Healing | 280 | 182 | 14 | 27 | 52 |

| Gemma 4-31B | Contextless | 1,016 | 489 | 209 | 25 | 251 |

| | Signature Prompt | 562 | 145 | 5 | 41 | 369 |

| | Self-Healing | 1,008 | 368 | 217 | 110 | 296 |

7. Findings and Discussion

7.1. Summary of Findings

- RQ1 [Contextless Prompting]: Our experiments show that while most LLMs fail to generate verifiable Dafny code from raw requirements, Gemma 4-31B and Codestral-22B demonstrate a surprising aptitude for the task. Specifically, Gemma 4-31B achieved a peak verify@5 success rate of 54.55% at T = 0.2. However, the 0% success rate of the remaining five models suggests that without structural guidance or external context, most systems struggle to navigate the strict formal constraints of the Dafny language.

- RQ2 [Signature Prompting]: Providing the method signature as additional context drastically improved performance across the board, reversing the widespread failures observed in RQ1. Most notably, GPT-OSS-120B rose from a 0% success rate to 63.64%, while the smaller Qwen 3.5-9B achieved the highest overall verify@5 score of 72.73% at T = 0.8. These results indicate that the primary bottleneck in verifiable synthesis is the structural mapping of requirements to formal signatures, rather than the generation of the underlying verification logic.

- RQ3 [Self-Healing]: Iterative self-healing significantly amplifies success rates, provided a structural foundation (method signature) is present. Gemma 4-31B emerged as the most resilient model, achieving a 90.91% success rate in contextless healing. Meanwhile, GPT-OSS-120B achieved its performance ceiling (81.82%) only when signature-guided, suggesting that large-scale general-purpose models require structural constraints to effectively interpret and act upon formal compiler feedback.

- RQ4 [Error Distributions]: Our analysis of compilation failures shows that Syntax Errors are the primary barrier in contextless settings, often exceeding 80% of total failures for models like GPT-OSS-20B. While Signature Prompting significantly reduces syntax issues, it shifts the bottleneck to Verification Errors, particularly for the largest models. Notably, Self-Healing effectively converts semantic and syntax errors into verified solutions for most models, though Codestral-22B and Qwen 3-Coder-30B show a tendency to regress into higher syntax error counts during iterative repair, suggesting a struggle to maintain syntactic integrity under compiler-driven feedback.
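As a worked example of the error shares discussed under RQ4, the sketch below computes the proportion of each error category among failed runs. The counts are taken from the GPT-OSS-20B contextless row of the error table; the helper name `failure_shares` and the choice to normalise by the sum of the three error columns (rather than by total runs) are assumptions made for illustration.

```python
from typing import Dict


def failure_shares(syntax: int, semantic: int, verification: int) -> Dict[str, float]:
    """Share of each error category among failed runs, in percent."""
    failures = syntax + semantic + verification
    if failures == 0:
        return {"syntax": 0.0, "semantic": 0.0, "verification": 0.0}
    return {
        "syntax": round(100.0 * syntax / failures, 2),
        "semantic": round(100.0 * semantic / failures, 2),
        "verification": round(100.0 * verification / failures, 2),
    }


# GPT-OSS-20B, contextless row: 597 syntax, 75 semantic/type, 0 verification errors.
print(failure_shares(597, 75, 0))
# {'syntax': 88.84, 'semantic': 11.16, 'verification': 0.0}
```

For this row, syntax errors account for roughly 89% of failures, consistent with the "exceeding 80%" observation above.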

7.2. Discussion

8. Threats to Validity

9. Conclusion

References

- Mesbah, A.; Van Deursen, A.; Roest, D. Invariant-based automatic testing of modern web applications. IEEE Trans. Softw. Eng. 2011, 38, 35–53.

- Rushby, J. Model checking and other ways of automating formal methods. Position paper for panel on model checking for concurrent programs. Software Quality Week, San Francisco, 1995.

- ter Beek, M.; Broy, M.; Dongol, B. The role of formal methods in computer science education. ACM Inroads 2024, 15, 58–66.

- Dipu, N.F.; Hossain, M.M.; Azar, K.Z.; Farahmandi, F.; Tehranipoor, M. Formalfuzzer: Formal verification assisted fuzz testing for SoC vulnerability detection. In Proceedings of the 2024 29th Asia and South Pacific Design Automation Conference (ASP-DAC); IEEE, 2024; pp. 355–361.

- Paul, S.; Cruz, E.; Dutta, A.; Bhaumik, A.; Blasch, E.; Agha, G.; Patterson, S.; Kopsaftopoulos, F.; Varela, C. Formal verification of safety-critical aerospace systems. IEEE Aerosp. Electron. Syst. Mag. 2023, 38, 72–88.

- Barrett, C.; De Moura, L.; Stump, A. SMT-COMP: Satisfiability modulo theories competition. In Proceedings of the International Conference on Computer Aided Verification, 2005; Springer; pp. 20–23.

- Klein, G.; Derrin, P.; Elphinstone, K. Experience report: seL4: formally verifying a high-performance microkernel. In Proceedings of the 14th ACM SIGPLAN International Conference on Functional Programming, 2009; pp. 91–96.

- Murray, T.; Matichuk, D.; Brassil, M.; Gammie, P.; Bourke, T.; Seefried, S.; Lewis, C.; Gao, X.; Klein, G. seL4: from general purpose to a proof of information flow enforcement. In Proceedings of the 2013 IEEE Symposium on Security and Privacy; IEEE, 2013; pp. 415–429.

- Leroy, X. The CompCert C verified compiler: Documentation and user’s manual. PhD thesis, Inria, 2025.

- Leroy, X. Formal verification of a realistic compiler. Commun. ACM 2009, 52, 107–115.

- Anysphere, Inc. Cursor: The AI Code Editor. 2024. (accessed on 2026-04-21).

- Amazon Web Services, Inc. Amazon Q Developer: AI coding companion. 2024. (accessed on 2026-04-21).

- Ray, P.P. A review on vibe coding: Fundamentals, state-of-the-art, challenges and future directions. Authorea Preprints, 2025.

- Ji, Z.; Lee, N.; Frieske, R.; Yu, T.; Su, D.; Xu, Y.; Ishii, E.; Bang, Y.J.; Madotto, A.; Fung, P. Survey of hallucination in natural language generation. ACM Comput. Surv. 2023, 55, 1–38.

- Leino, K.R.M. Dafny: An automatic program verifier for functional correctness. In Proceedings of the International Conference on Logic for Programming Artificial Intelligence and Reasoning, 2010; Springer; pp. 348–370.

- Hoare, C.A.R. An axiomatic basis for computer programming. Commun. ACM 1969, 12, 576–580.

- Noble, J.; Streader, D.; Gariano, I.O.; Samarakoon, M. More programming than programming: Teaching formal methods in a software engineering programme. In Proceedings of the NASA Formal Methods Symposium, 2022; Springer; pp. 431–450.

- GitHub Community. Dafny Repositories Search Results. 2026. (accessed on 2026-04-23).

- UVa Online Judge. UVa Online Judge. Available online: https://onlinejudge.org/ (accessed on 2026-04-20).

- uDebug Team. uDebug: Online Debugging Tool for Competitive Programming. 2026. Available online: https://www.udebug.com/ (accessed on 2026-04-19).

- UVa Online Judge. Problem 11934: Magic Formula. 2010. Available online: https://onlinejudge.org/external/119/11934.pdf (accessed on 2026-04-21).

- UVa Online Judge. 2026. Available online: https://onlinejudge.org/ (accessed on 2026-04-19).

- Leino, K.R.M. Developing verified programs with Dafny. In Proceedings of the 2012 ACM Conference on High Integrity Language Technology, 2012; pp. 9–10.

- Dafny Team. Dafny Reference Manual; Dafny Software Foundation, 2024; (accessed on 2026-04-20).

- Microsoft Research. Dafny: A Language and Program Verifier for Functional Correctness. Official Project Page. 2024.

- Fedchin, A.; Dean, T.; Foster, J.S.; et al. A Toolkit for Automated Testing of Dafny. In Amazon Science; 2023.

- Le Goues, C.; Leino, K.R.M.; Moskal, M. The Boogie verification debugger (tool paper). In Proceedings of the International Conference on Software Engineering and Formal Methods, 2011; Springer; pp. 407–414.

- De Moura, L.; Bjørner, N. Z3: An efficient SMT solver. In Proceedings of the International Conference on Tools and Algorithms for the Construction and Analysis of Systems, 2008; Springer; pp. 337–340.

- Cook, B. Formal reasoning about the security of Amazon Web Services. In Proceedings of the International Conference on Computer Aided Verification, 2018; Springer; pp. 38–47.

- Wang, Y.; Le, H.; Gotmare, A.; Bui, N.; Li, J.; Hoi, S. CodeT5+: Open code large language models for code understanding and generation. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, 2023; pp. 1069–1088.

- Copet, J.; Carbonneaux, Q.; Cohen, G.; Gehring, J.; Kahn, J.; Kossen, J.; Kreuk, F.; McMilin, E.; Meyer, M.; Wei, Y.; et al. CWM: An open-weights LLM for research on code generation with world models. arXiv 2025, arXiv:2510.02387.

- Grattafiori, A.; Dubey, A.; Jauhri, A.; Pandey, A.; Kadian, A.; Al-Dahle, A.; Letman, A.; Mathur, A.; Schelten, A.; Vaughan, A.; et al. The Llama 3 herd of models. arXiv 2024, arXiv:2407.21783.

- Yang, A.; Li, A.; Yang, B.; Zhang, B.; Hui, B.; Zheng, B.; Yu, B.; Gao, C.; Huang, C.; Lv, C.; et al. Qwen3 technical report. arXiv 2025, arXiv:2505.09388.

- Kamath, A.; Ferret, J.; Pathak, S.; Vieillard, N.; Merhej, R.; Perrin, S.; Matejovicova, T.; Ramé, A.; Rivière, M.; Rouillard, L.; et al. Gemma 3 technical report. arXiv 2025, arXiv:2503.19786.

- Manik, M.M.H.; Wang, G. Gemma 4, Phi-4, and Qwen3: Accuracy-Efficiency Tradeoffs in Dense and MoE Reasoning Language Models. arXiv 2026, arXiv:2604.07035.

- Cao, R.; Chen, M.; Chen, J.; Cui, Z.; Feng, Y.; Hui, B.; Jing, Y.; Li, K.; Li, M.; Lin, J.; et al. Qwen3-coder-next technical report. arXiv 2026, arXiv:2603.00729.

- White, J.; Fu, Q.; Hays, S.; Sandborn, M.; Olea, C.; Gilbert, H.; Elnashar, A.; Spencer-Smith, J.; Schmidt, D.C. A prompt pattern catalog to enhance prompt engineering with ChatGPT. arXiv 2023, arXiv:2302.11382.

- Giray, L. Prompt engineering with ChatGPT: a guide for academic writers. Ann. Biomed. Eng. 2023, 51, 2629–2633.

- Reynolds, L.; McDonell, K. Prompt programming for large language models: Beyond the few-shot paradigm. In Proceedings of the Extended Abstracts of the 2021 CHI Conference on Human Factors in Computing Systems, 2021; pp. 1–7.

- Tihanyi, N.; Charalambous, Y.; Jain, R.; Ferrag, M.A.; Cordeiro, L.C. A new era in software security: Towards self-healing software via large language models and formal verification. In Proceedings of the 2025 IEEE/ACM International Conference on Automation of Software Test (AST); IEEE, 2025; pp. 136–147.

- Gulwani, S.; Polozov, O.; Singh, R. Program synthesis. Found. Trends Program. Lang. 2017, 4, 1–119.

- Ringer, T.; Palmskog, K.; Sergey, I.; Milos, G.; Tatlock, Z. QED at large: A survey of engineering of formally verified software. Found. Trends Program. Lang. 2019, 5, 102–281.

- Jones, C.B.; Misra, J. Theories of programming: the life and works of Tony Hoare; ACM, 2021.

- Yang, Z.; Wang, W.; Casas, J.; Cocchini, P.; Yang, J. Towards a correct-by-construction FHE model. Cryptol. ePrint Arch. 2023.

- Cassez, F.; Fuller, J.; Ghale, M.K.; Pearce, D.J.; Quiles, H.M. Formal and executable semantics of the Ethereum Virtual Machine in Dafny. In Proceedings of the International Symposium on Formal Methods, 2023; Springer; pp. 571–583.

- Li, L.; Zhu, M.; Cleaveland, R.; Nicolellis, A.; Lee, Y.; Chang, L.; Wu, X. Qafny: A quantum-program verifier. arXiv 2022, arXiv:2211.06411.

- Garavel, H.; Ter Beek, M.H.; Van De Pol, J. The 2020 expert survey on formal methods. In Proceedings of the International Conference on Formal Methods for Industrial Critical Systems, 2020; Springer; pp. 3–69.

- Irfan, A.; Porncharoenwase, S.; Rakamarić, Z.; Rungta, N.; Torlak, E. Testing Dafny (experience paper). In Proceedings of the 31st ACM SIGSOFT International Symposium on Software Testing and Analysis, 2022; pp. 556–567.

- Chakarov, A.; Fedchin, A.; Rakamarić, Z.; Rungta, N. Better counterexamples for Dafny. In Proceedings of the International Conference on Tools and Algorithms for the Construction and Analysis of Systems, 2022; Springer; pp. 404–411.

- First, E.; Rabe, M.N.; Ringer, T.; Brun, Y. Baldur: Whole-proof generation and repair with large language models. In Proceedings of the 31st ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering, 2023; pp. 1229–1241.

- Jiang, A.Q.; Li, W.; Tworkowski, S.; Czechowski, K.; Odrzygóźdź, T.; Miłoś, P.; Wu, Y.; Jamnik, M. Thor: Wielding hammers to integrate language models and automated theorem provers. Adv. Neural Inf. Process. Syst. 2022, 35, 8360–8373.

- Czajka, Ł.; Ekici, B.; Kaliszyk, C. Concrete semantics with Coq and CoqHammer. In Proceedings of the International Conference on Intelligent Computer Mathematics, 2018; Springer; pp. 53–59.

- Wu, Y.; Jiang, A.Q.; Li, W.; Rabe, M.; Staats, C.; Jamnik, M.; Szegedy, C. Autoformalization with large language models. Adv. Neural Inf. Process. Syst. 2022, 35, 32353–32368.

- Madaan, A.; Zhou, S.; Alon, U.; Yang, Y.; Neubig, G. Language models of code are few-shot commonsense learners. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, 2022; pp. 1384–1403.

- Frieder, S.; Pinchetti, L.; Chevalier, C.; Griffiths, R.R.; Salvatori, T.; Lukasiewicz, T.; Petersen, P.; Berner, J. Mathematical capabilities of ChatGPT. Adv. Neural Inf. Process. Syst. 2023, 36, 27699–27744.

- Narkawicz, A.; Munoz, C.A.; Dutle, A.M. The MINERVA software development process. In Proceedings of the NASA Formal Methods Symposium (NFM) 2017, 2017; number NF1676L-26800.

- Lewis, G.A.; Comella-Dorda, S.; Gluch, D.P.; Hudak, J.; Weinstock, C. Model-based verification: Analysis guidelines. Technical report. 2001.

- Nashid, N.; Sintaha, M.; Mesbah, A. Retrieval-based prompt selection for code-related few-shot learning. In Proceedings of the 2023 IEEE/ACM 45th International Conference on Software Engineering (ICSE); IEEE, 2023; pp. 2450–2462.

- Tufano, R.; Pascarella, L.; Bavota, G. Automating code-related tasks through transformers: The impact of pre-training. In Proceedings of the 2023 IEEE/ACM 45th International Conference on Software Engineering (ICSE); IEEE, 2023; pp. 2425–2437.

- Sellergren, A.; Kazemzadeh, S.; Jaroensri, T.; Kiraly, A.; Traverse, M.; Kohlberger, T.; Xu, S.; Jamil, F.; Hughes, C.; Lau, C.; et al. MedGemma technical report. arXiv 2025, arXiv:2507.05201.

- Sun, C.; Sheng, Y.; Padon, O.; Barrett, C. Clover: Closed-loop verifiable code generation. In Proceedings of the International Symposium on AI Verification, 2024; Springer; pp. 134–155.

- Austin, J.; Odena, A.; Nye, M.; Bosma, M.; Michalewski, H.; Dohan, D.; Jiang, E.; Cai, C.; Terry, M.; Le, Q.; et al. Program synthesis with large language models. arXiv 2021, arXiv:2108.07732.

- Banerjee, D.; Bouissou, O.; Zetzsche, S. DafnyPro: LLM-Assisted Automated Verification for Dafny Programs. arXiv 2026, arXiv:2601.05385.

- Loughridge, C.; Sun, Q.; Ahrenbach, S.; Cassano, F.; Sun, C.; Sheng, Y.; Mudide, A.; Misu, M.R.H.; Amin, N.; Tegmark, M. DafnyBench: A benchmark for formal software verification. arXiv 2024, arXiv:2406.08467.

- Wang, C.; Scazzariello, M.; Kostić, D.; Chiesa, M. Toward Automated, Contamination-free Dafny Benchmark Generation.

- Baksys, M.; Zetzsche, S.; Bouissou, O.; Delmas, R.; Kong, S.; Holden, S.B. ATLAS: Automated Toolkit for Large-Scale Verified Code Synthesis. arXiv 2025, arXiv:2512.10173.

- Ma, L.; Liu, S.; Li, Y.; Xie, X.; Bu, L. SpecGen: Automated generation of formal program specifications via large language models. In Proceedings of the 2025 IEEE/ACM 47th International Conference on Software Engineering (ICSE); IEEE, 2025; pp. 16–28.

- The Dafny Project. Dafny Reference Manual; Amazon Web Services, 2024; (accessed on 2026-04-22).

- Misu, M.R.H.; Lopes, C.V.; Ma, I.; Noble, J. Towards AI-assisted synthesis of verified Dafny methods. Proc. ACM Softw. Eng. 2024, 1, 812–835.

- OpenAI. Introducing GPT-OSS: Open-Weight Reasoning Models. 2025. (accessed on 2026-04-22).

- Google DeepMind. Gemma 4: Next-Generation Open Multimodal Models, 2026. (accessed on 2026-04-22).

- Alibaba Qwen Team. Qwen3.5 and Qwen3.6-MoE: Advancing Open-Weight Foundation Models, 2026. (accessed on 2026-04-22).

- Alibaba Qwen Team. Qwen3-Coder-30B: Specialized Models for Agentic Code Intelligence, 2025. (accessed on 2026-04-22).

- Mistral AI. Codestral-22B-v0.1: An Open-Weight Model for Professional Coders, 2024. (accessed on 2026-04-22).

- Kulal, S.; Pasupat, P.; Chandra, K.; Lee, M.; Padon, O.; Aiken, A.; Liang, P.S. SPoC: Search-based pseudocode to code. Adv. Neural Inf. Process. Syst. 2019, 32.

- Chen, M.; Tworek, J.; Jun, H.; Yuan, Q.; Pinto, H.P.D.O.; Kaplan, J.; Edwards, H.; Burda, Y.; Joseph, N.; Brockman, G.; et al. Evaluating large language models trained on code. arXiv 2021, arXiv:2107.03374.

- Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; et al. GPT-4 technical report. arXiv 2023, arXiv:2303.08774.

- Troshin, S.; Mohammed, W.; Meng, Y.; Monz, C.; Fokkens, A.; Niculae, V. Control the Temperature: Selective Sampling for Diverse and High-Quality LLM Outputs. arXiv 2025, arXiv:2510.01218.

- Ryan, A.; Khalil, I.; Jahid, A.A.; Erfan, M.; Park, S.; Rahman, A.A.U.; Rahman, M.R. Mind the Gap: Evaluating LLMs for High-Level Malicious Package Detection vs. Fine-Grained Indicator Identification. arXiv 2026, arXiv:2602.16304.

- The Dafny Project. Dafny NuGet Package: The Dafny Compiler and Verifier. NuGet Package Manager, 2024. Version 4.x.x. (accessed on 2026-04-22).

- Siegmund, J.; Siegmund, N.; Apel, S. Views on internal and external validity in empirical software engineering. In Proceedings of the 2015 IEEE/ACM 37th IEEE International Conference on Software Engineering; IEEE, 2015; Vol. 1, pp. 9–19.

- Feldt, R.; Magazinius, A. Validity threats in empirical software engineering research-an initial survey. In Proceedings of SEKE, 2010; pp. 374–379.

| Work / Dataset | Input Type | Problem Type | Dafny | Size | Avg Code | Limitations |

|---|---|---|---|---|---|---|

| Clover [61] | Short NL / Ann. | Textbook | Yes | 63–66 | ∼19 LoC | Simple problems, small scale |

| MBPP-Dafny [62] | NL (short) | Basic Python | Yes | 164 | ∼19 LoC | Entry-level tasks only |

| HumanEval-Dafny [63] | NL (short) | Algorithmic | Yes | 132 | ∼50 LoC | Still benchmark-style |

| DafnyBench [64] | Mixed | Real + Textbook | Yes | 782 | ∼53 LoC | Limited human-written verified programs |

| TacoDafny [65] | NL (Gen.) | Synthetic | Yes | Auto. | Varies | Synthetic, not real-world |

| ATLAS [66] | Alg. + Ref. | Algorithmic | Yes | Large | Varies | No direct NL to Dafny |

| SpecGen [67] | NL | LeetCode | No | N/A | N/A | Uses OpenJML, not Dafny |

| Component | Original UVa Problem Description (Competitive Flavor) | Generic Description (Requirement Focused) |

|---|---|---|

| Description | Some operators checks about the relationship between two values and these operators are called relational operators. Given two numerical values your job is just to find out the relationship between them that is (i) First one is greater than the second (ii) First one is less than the second or (iii) First and second one is equal. | Some operators checks about the relationship between two values and these operators are called relational operators. Given two numerical values your job is just to find out the relationship between them that is (i) First one is greater than the second (ii) First one is less than the second or (iii) First and second one is equal. |

| Input | First line of the input file is an integer t which denotes how many sets of inputs are there. Each of the next t lines contain two integers a and b. | The input contain two integers a and b. |

| Output | For each line of input produce one line of output. This line contains any one of the relational operators ’>’, ’<’ or ’=’, which indicates the relation that is appropriate for the given two numbers. | The output contains any one of the relational operators ’>’, ’<’ or ’=’, which indicates the relation that is appropriate for the given two numbers. |

| Sample Input | 3 10 20 20 10 10 10 | 10 20 20 10 10 10 |

| Sample Output | < > = | < > = |

| Model | Params | Context | Type | Category |

|---|---|---|---|---|

| GPT-OSS-120B | 120B | 131k | OS | General |

| Qwen 3.6-35B-A3B | 35B | 256k | OS | Agentic |

| Gemma 4-31B | 31B | 256k | OS | Multimodal |

| Qwen 3 Coder 30B | 30B | 160k | OS | Coder |

| Codestral-22B-v0.1 | 22B | 32k | OS | Coder |

| GPT-OSS-20B | 20B | 128k | OS | General |

| Qwen 3.5-9B | 9B | 262k | OS | General |

OS = Open-Source Weights

| Model | Temp (T) | verify@1 Succ. | verify@1 Total | verify@1 % | verify@3 Succ. | verify@3 Total | verify@3 % | verify@5 Succ. | verify@5 Total | verify@5 % |
|---|---|---|---|---|---|---|---|---|---|---|
| GPT-OSS-120B | 0.0 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.2 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.4 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.6 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.8 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| Qwen 3.5-9B | 0.0 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.2 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.4 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.6 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.8 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| Qwen 3 Coder 30B | 0.0 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.2 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.4 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.6 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.8 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| GPT-OSS-20B | 0.0 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.2 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.4 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.6 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.8 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| Codestral-22B | 0.0 | 1 | 11 | 9.09% | 1 | 11 | 9.09% | 1 | 11 | 9.09% |
| | 0.2 | 1 | 11 | 9.09% | 1 | 11 | 9.09% | 2 | 11 | 18.18% |
| | 0.4 | 1 | 11 | 9.09% | 2 | 11 | 18.18% | 2 | 11 | 18.18% |
| | 0.6 | 1 | 11 | 9.09% | 2 | 11 | 18.18% | 1 | 11 | 9.09% |
| | 0.8 | 1 | 11 | 9.09% | 1 | 11 | 9.09% | 3 | 11 | 27.27% |
| Qwen 3.6-35B | 0.0 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 0 | 11 | 0.00% |
| | 0.2 | 0 | 11 | 0.00% | 1 | 11 | 9.09% | 2 | 11 | 18.18% |
| | 0.4 | 0 | 11 | 0.00% | 1 | 11 | 9.09% | 2 | 11 | 18.18% |
| | 0.6 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 1 | 11 | 9.09% |
| | 0.8 | 0 | 11 | 0.00% | 0 | 11 | 0.00% | 1 | 11 | 9.09% |
| Gemma 4-31B | 0.0 | 3 | 11 | 27.27% | 3 | 11 | 27.27% | 3 | 11 | 27.27% |
| | 0.2 | 0 | 11 | 0.00% | 4 | 11 | 36.36% | 6 | 11 | 54.55% |
| | 0.4 | 0 | 11 | 0.00% | 5 | 11 | 45.45% | 3 | 11 | 27.27% |
| | 0.6 | 2 | 11 | 18.18% | 5 | 11 | 45.45% | 4 | 11 | 36.36% |
| | 0.8 | 2 | 11 | 18.18% | 3 | 11 | 27.27% | 4 | 11 | 36.36% |

| Model | Temp (T) | verify@1 Succ. | verify@1 Total | verify@1 % | verify@3 Succ. | verify@3 Total | verify@3 % | verify@5 Succ. | verify@5 Total | verify@5 % |
|---|---|---|---|---|---|---|---|---|---|---|
| GPT-OSS-120B | 0.0 | 6 | 11 | 54.55% | 6 | 11 | 54.55% | 6 | 11 | 54.55% |
| | 0.2 | 5 | 11 | 45.45% | 6 | 11 | 54.55% | 6 | 11 | 54.55% |
| | 0.4 | 6 | 11 | 54.55% | 6 | 11 | 54.55% | 6 | 11 | 54.55% |
| | 0.6 | 6 | 11 | 54.55% | 5 | 11 | 45.45% | 6 | 11 | 54.55% |
| | 0.8 | 6 | 11 | 54.55% | 7 | 11 | 63.64% | 7 | 11 | 63.64% |
| Qwen 3.5-9B | 0.0 | 3 | 11 | 27.27% | 2 | 11 | 18.18% | 2 | 11 | 18.18% |
| | 0.2 | 4 | 11 | 36.36% | 3 | 11 | 27.27% | 5 | 11 | 45.45% |
| | 0.4 | 2 | 11 | 18.18% | 5 | 11 | 45.45% | 4 | 11 | 36.36% |
| | 0.6 | 2 | 11 | 18.18% | 4 | 11 | 36.36% | 3 | 11 | 27.27% |
| | 0.8 | 4 | 11 | 36.36% | 5 | 11 | 45.45% | 8 | 11 | 72.73% |
| Qwen 3 Coder 30B | 0.0 | 3 | 11 | 27.27% | 4 | 11 | 36.36% | 3 | 11 | 27.27% |
| | 0.2 | 3 | 11 | 27.27% | 4 | 11 | 36.36% | 3 | 11 | 27.27% |
| | 0.4 | 4 | 11 | 36.36% | 5 | 11 | 45.45% | 5 | 11 | 45.45% |
| | 0.6 | 4 | 11 | 36.36% | 4 | 11 | 36.36% | 5 | 11 | 45.45% |
| | 0.8 | 4 | 11 | 36.36% | 4 | 11 | 36.36% | 4 | 11 | 36.36% |
| GPT-OSS-20B | 0.0 | 3 | 11 | 27.27% | 3 | 11 | 27.27% | 3 | 11 | 27.27% |
| | 0.2 | 4 | 11 | 36.36% | 5 | 11 | 45.45% | 5 | 11 | 45.45% |
| | 0.4 | 2 | 11 | 18.18% | 4 | 11 | 36.36% | 5 | 11 | 45.45% |
| | 0.6 | 5 | 11 | 45.45% | 6 | 11 | 54.55% | 4 | 11 | 36.36% |
| | 0.8 | 2 | 11 | 18.18% | 6 | 11 | 54.55% | 5 | 11 | 45.45% |
| Codestral-22B | 0.0 | 3 | 11 | 27.27% | 3 | 11 | 27.27% | 3 | 11 | 27.27% |
| | 0.2 | 4 | 11 | 36.36% | 4 | 11 | 36.36% | 5 | 11 | 45.45% |
| | 0.4 | 3 | 11 | 27.27% | 2 | 11 | 18.18% | 5 | 11 | 45.45% |
| | 0.6 | 2 | 11 | 18.18% | 3 | 11 | 27.27% | 7 | 11 | 63.64% |
| | 0.8 | 3 | 11 | 27.27% | 2 | 11 | 18.18% | 6 | 11 | 54.55% |
| Qwen 3.6-35B | 0.0 | 2 | 11 | 18.18% | 3 | 11 | 27.27% | 7 | 11 | 63.64% |
| | 0.2 | 2 | 11 | 18.18% | 3 | 11 | 27.27% | 3 | 11 | 27.27% |
| | 0.4 | 2 | 11 | 18.18% | 3 | 11 | 27.27% | 4 | 11 | 36.36% |
| | 0.6 | 1 | 11 | 9.09% | 3 | 11 | 27.27% | 4 | 11 | 36.36% |
| | 0.8 | 0 | 11 | 0.00% | 3 | 11 | 27.27% | 3 | 11 | 27.27% |
| Gemma 4-31B | 0.0 | 3 | 11 | 27.27% | 3 | 11 | 27.27% | 5 | 11 | 45.45% |
| | 0.2 | 3 | 11 | 27.27% | 3 | 11 | 27.27% | 6 | 11 | 54.55% |
| | 0.4 | 3 | 11 | 27.27% | 3 | 11 | 27.27% | 4 | 11 | 36.36% |
| | 0.6 | 3 | 11 | 27.27% | 3 | 11 | 27.27% | 4 | 11 | 36.36% |
| | 0.8 | 5 | 11 | 45.45% | 7 | 11 | 63.64% | 7 | 11 | 63.64% |

| Model | Temp (T) | Contextless Healing (RQ3a) Succ. | Contextless Healing (RQ3a) Total | Contextless Healing (RQ3a) % | Signature-Guided Healing (RQ3b) Succ. | Signature-Guided Healing (RQ3b) Total | Signature-Guided Healing (RQ3b) % |
|---|---|---|---|---|---|---|---|
| GPT-OSS-120B | 0.0 | 0 | 11 | 0.00% | 7 | 11 | 63.64% |
| | 0.2 | 0 | 11 | 0.00% | 9 | 11 | 81.82% |
| | 0.4 | 0 | 11 | 0.00% | 7 | 11 | 63.64% |
| | 0.6 | 0 | 11 | 0.00% | 8 | 11 | 72.73% |
| | 0.8 | 0 | 11 | 0.00% | 7 | 11 | 63.64% |
| Qwen 3.5-9B | 0.0 | 3 | 11 | 27.27% | 2 | 11 | 18.18% |
| | 0.2 | 0 | 11 | 0.00% | 3 | 11 | 27.27% |
| | 0.4 | 2 | 11 | 18.18% | 5 | 11 | 45.45% |
| | 0.6 | 0 | 11 | 0.00% | 4 | 11 | 36.36% |
| | 0.8 | 0 | 11 | 0.00% | 3 | 11 | 27.27% |
| Qwen 3 Coder 30B | 0.0 | 2 | 11 | 18.18% | 6 | 11 | 54.55% |
| | 0.2 | 2 | 11 | 18.18% | 6 | 11 | 54.55% |
| | 0.4 | 3 | 11 | 27.27% | 6 | 11 | 54.55% |
| | 0.6 | 1 | 11 | 9.09% | 6 | 11 | 54.55% |
| | 0.8 | 6 | 11 | 54.55% | 6 | 11 | 54.55% |
| GPT-OSS-20B | 0.0 | 0 | 11 | 0.00% | 4 | 11 | 36.36% |
| | 0.2 | 1 | 11 | 9.09% | 4 | 11 | 36.36% |
| | 0.4 | 0 | 11 | 0.00% | 7 | 11 | 63.64% |
| | 0.6 | 0 | 11 | 0.00% | 5 | 11 | 45.45% |
| | 0.8 | 1 | 11 | 9.09% | 4 | 11 | 36.36% |
| Codestral-22B | 0.0 | 0 | 11 | 0.00% | 2 | 11 | 18.18% |
| | 0.2 | 0 | 11 | 0.00% | 3 | 11 | 27.27% |
| | 0.4 | 0 | 11 | 0.00% | 5 | 11 | 45.45% |
| | 0.6 | 1 | 11 | 9.09% | 6 | 11 | 54.55% |
| | 0.8 | 0 | 11 | 0.00% | 3 | 11 | 27.27% |
| Qwen 3.6-35B | 0.0 | 0 | 11 | 0.00% | 4 | 11 | 36.36% |
| | 0.2 | 0 | 11 | 0.00% | 4 | 11 | 36.36% |
| | 0.4 | 1 | 11 | 9.09% | 4 | 11 | 36.36% |
| | 0.6 | 0 | 11 | 0.00% | 4 | 11 | 36.36% |
| | 0.8 | 2 | 11 | 18.18% | 6 | 11 | 54.55% |
| Gemma 4-31B | 0.0 | 8 | 11 | 72.73% | 7 | 11 | 63.64% |
| | 0.2 | 10 | 11 | 90.91% | 7 | 11 | 63.64% |
| | 0.4 | 9 | 11 | 81.82% | 8 | 11 | 72.73% |
| | 0.6 | 10 | 11 | 90.91% | 9 | 11 | 81.82% |
| | 0.8 | 8 | 11 | 72.73% | 9 | 11 | 81.82% |
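
The percentage columns in the result tables above follow directly from the success counts: every configuration is scored on 11 problems, so each additional success adds roughly 9.09 percentage points. As a minimal sketch of that arithmetic (the helper name `success_rate` is illustrative and not taken from the authors' pipeline), the reported values can be reproduced as follows:

```python
def success_rate(successes: int, total: int) -> str:
    """Format successes/total as a percentage with two decimals, as in the tables."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= successes <= total:
        raise ValueError("successes must lie between 0 and total")
    return f"{100 * successes / total:.2f}%"


# Assumption: 11 problems per configuration, matching the "Total" columns.
TOTAL_PROBLEMS = 11

if __name__ == "__main__":
    for succ in (0, 1, 3, 6, 10):
        print(f"{succ}/{TOTAL_PROBLEMS} -> {success_rate(succ, TOTAL_PROBLEMS)}")
```

Because the denominator is only 11, a difference of one or two successes between neighbouring cells shifts the rate by 9 to 18 points, which is worth keeping in mind when comparing temperatures or models.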

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).