Submitted: 23 April 2026

Posted: 24 April 2026

Abstract

Keywords:

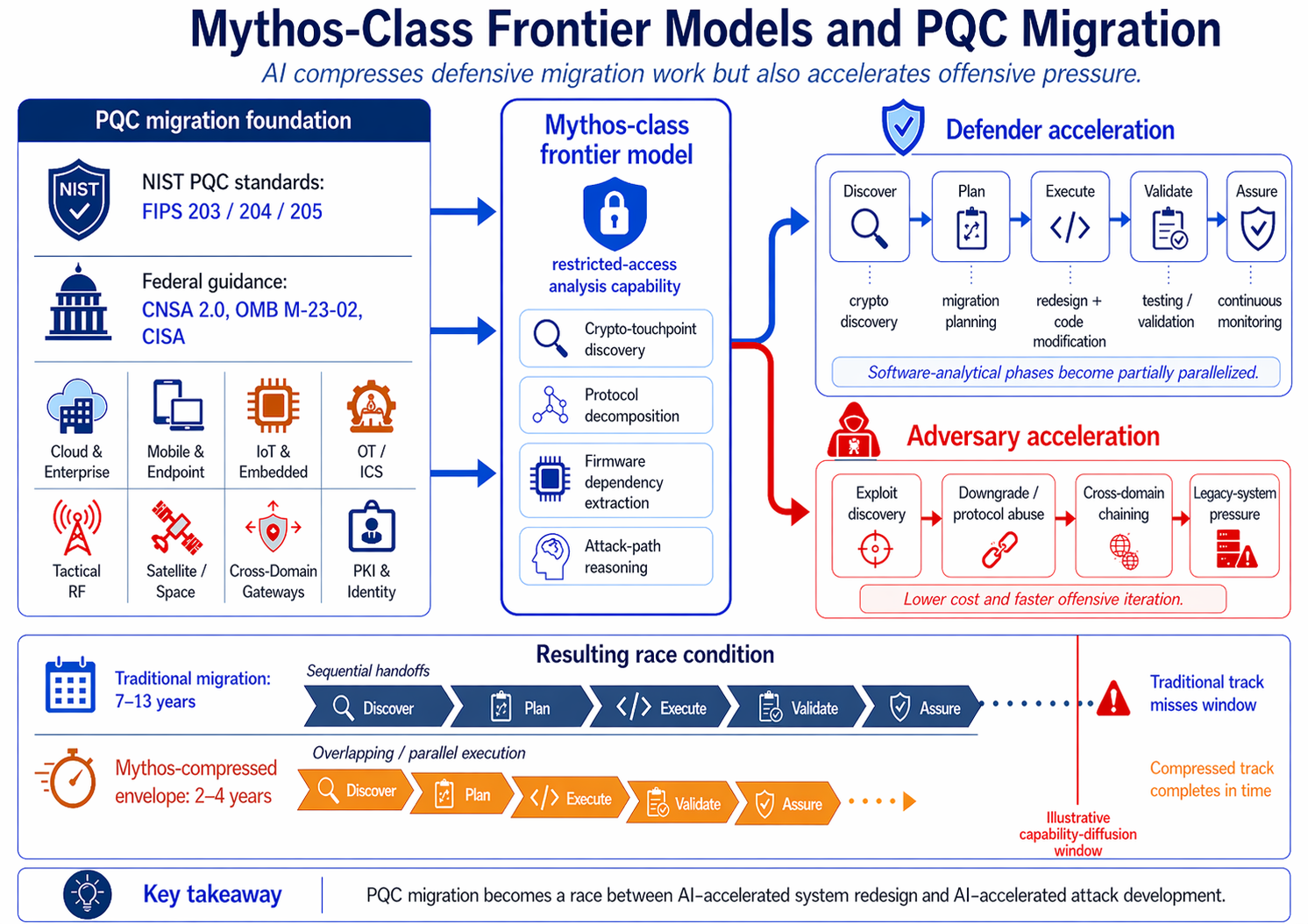

1. Introduction

- A systems-engineering architecture modeling Mythos as a system actor.

- A lifecycle-aligned PQC migration model incorporating AI-accelerated analysis.

- A revised cost and timeline model.

- Governance and risk recommendations for frontier-model access.

1.1. Method

1.2. Epistemic Status

2. System Definition

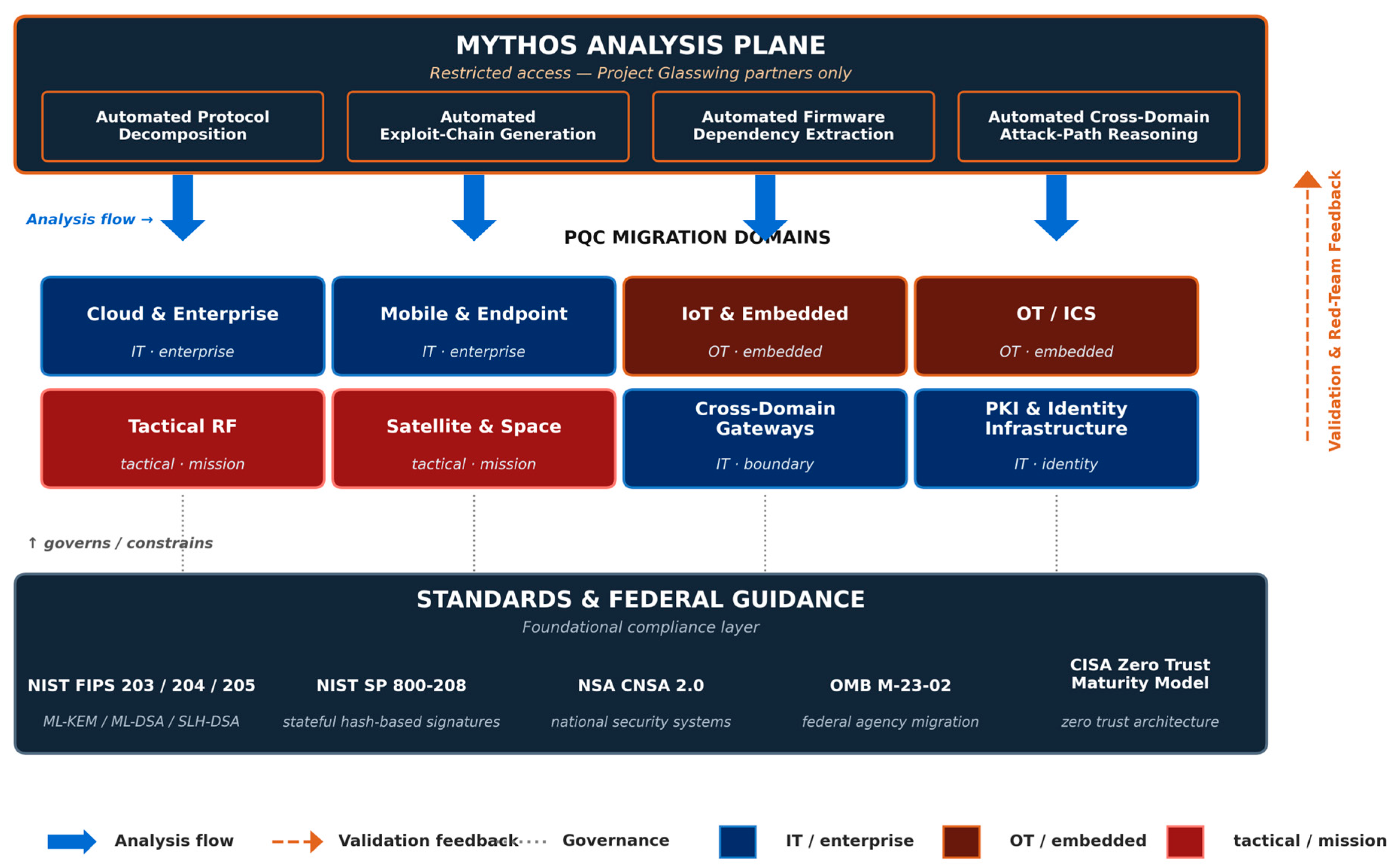

2.1. PQC Migration as a System-of-Systems Transformation

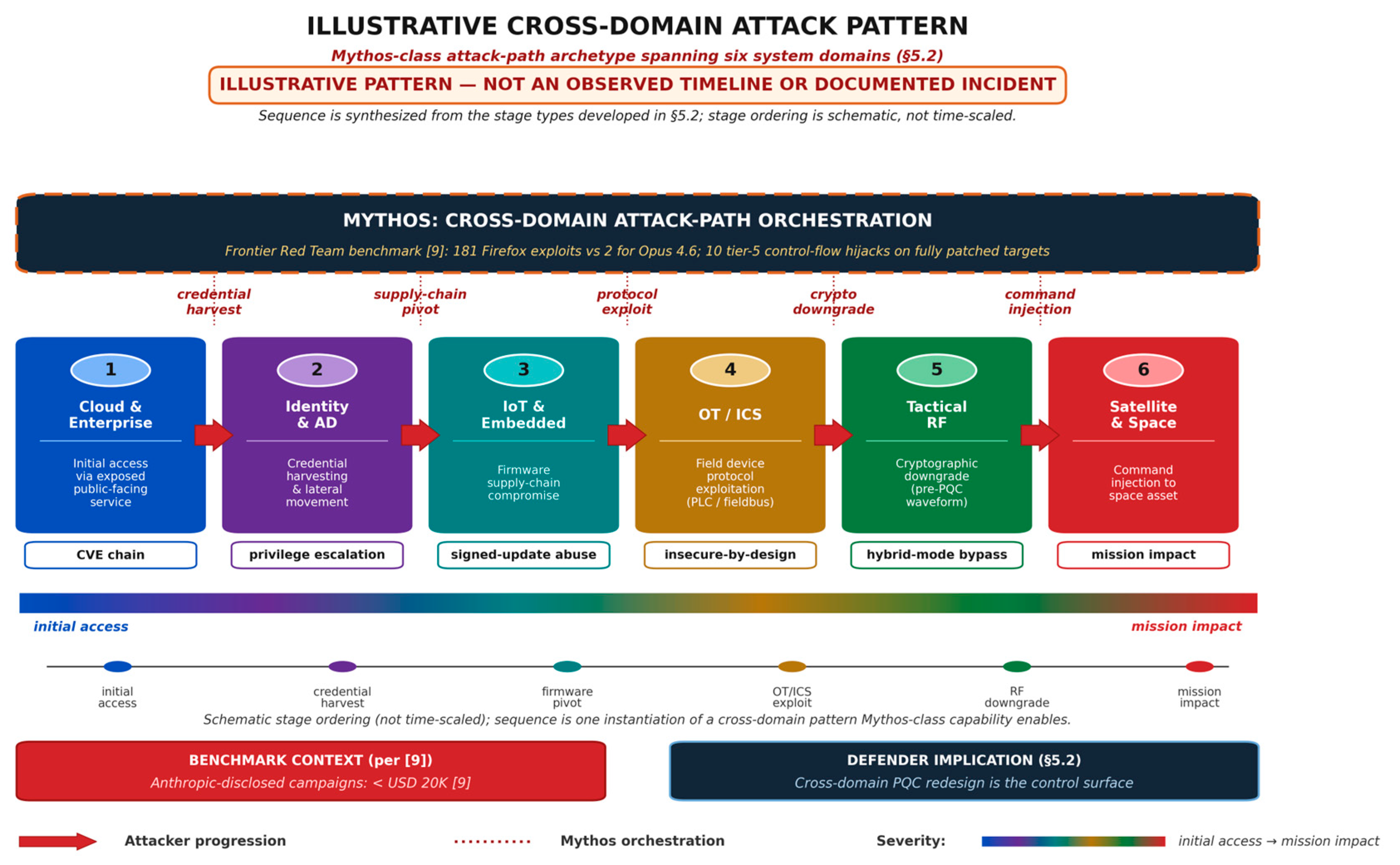

- Cloud and enterprise applications.

- Mobile and endpoint clients.

- IoT and embedded devices.

- OT/ICS systems.

- Tactical radios and RF systems.

- Satellites and space systems.

- Cross-domain gateways.

- PKI and identity infrastructure.

2.2. Mythos as a System Actor

- Advanced reasoning and extended autonomous task execution, with the ability to chain multiple vulnerabilities into working exploits without human intervention [9].

- Automated vulnerability discovery across every major operating system and web browser, including long-lived bugs that survived decades of human review [9].

- Cross-domain protocol and system analysis, including reverse-engineering exploits on closed-source software and converting N-day disclosures into working exploits [9].

- Pre-launch briefings to U.S. federal officials, as reported by Anthropic and relayed by Platformer [14], including conversations with the Cybersecurity and Infrastructure Security Agency (CISA) and the Center for AI Standards and Innovation (CAISI). These are briefings reported by Anthropic, not confirmed ongoing-access arrangements; as of late April 2026, CISA had not been granted access to the model.

3. Background and Related Work

3.1. PQC Standards and Migration Guidance

NIST Standards

- FIPS 203: Module-Lattice-Based Key-Encapsulation Mechanism Standard (ML-KEM) [1].

- FIPS 204: Module-Lattice-Based Digital Signature Standard (ML-DSA) [2].

- FIPS 205: Stateless Hash-Based Digital Signature Standard (SLH-DSA) [3].

- SP 800-208: Recommendation for Stateful Hash-Based Signature Schemes [16].
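The four publications above can be captured as a small lookup table, which is often the first artifact a migration inventory needs. The following is a minimal sketch, not a normative mapping: the `PqcStandard` type, its field names, and the `standards_for` helper are hypothetical names introduced here for illustration, and the `kind` and `stateful` values are read directly from the publication titles listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PqcStandard:
    """One NIST publication from the list above (field names are illustrative)."""
    document: str   # publication identifier as cited in the text
    algorithm: str  # algorithm family named in the publication title
    kind: str       # "kem" or "signature"
    stateful: bool  # True if the signer must track one-time key state


# Entries mirror the four bullets above; nothing here is normative.
STANDARDS = (
    PqcStandard("FIPS 203", "ML-KEM", "kem", stateful=False),
    PqcStandard("FIPS 204", "ML-DSA", "signature", stateful=False),
    PqcStandard("FIPS 205", "SLH-DSA", "signature", stateful=False),
    PqcStandard("SP 800-208", "stateful hash-based signatures", "signature", stateful=True),
)


def standards_for(kind: str, allow_stateful: bool = True) -> list[PqcStandard]:
    """Return the listed standards of a given kind, optionally excluding stateful schemes."""
    return [
        s for s in STANDARDS
        if s.kind == kind and (allow_stateful or not s.stateful)
    ]


if __name__ == "__main__":
    # Example: signature standards usable where signer state cannot be managed.
    for s in standards_for("signature", allow_stateful=False):
        print(f"{s.document}: {s.algorithm}")
```

Separating the `stateful` flag from `kind` reflects one design consideration: stateful hash-based schemes impose key-state management on the signer, so an inventory may need to filter them out for components that cannot guarantee it.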

NSA CNSA 2.0

OMB M-23-02 and CISA Guidance

3.2. Frontier Model Capabilities

Anthropic System Card and Risk Documentation

Frontier Red Team Technical Brief

Independent Analysis and High-Credibility Reporting

3.3. AI-Accelerated Vulnerability Discovery

Mythos-Era Step-Change in Discovery

Positioning Relative to Traditional Vulnerability Management

4. System Architecture

4.1. Crypto-Touchpoint Topology

4.2. Protocol Decomposition Layer

4.3. Firmware and Embedded Dependencies

4.4. Cross-Domain Gateway Architecture

5. System Dynamics

5.1. Acceleration Loops

Automated Mapping Loop

Automated Redesign Loop

Automated Validation Loop

5.2. Stress Loops

Exploit-Discovery Loop

Attack-Path Generation Loop

Legacy-System Pressure Loop

5.3. Combined Dynamics

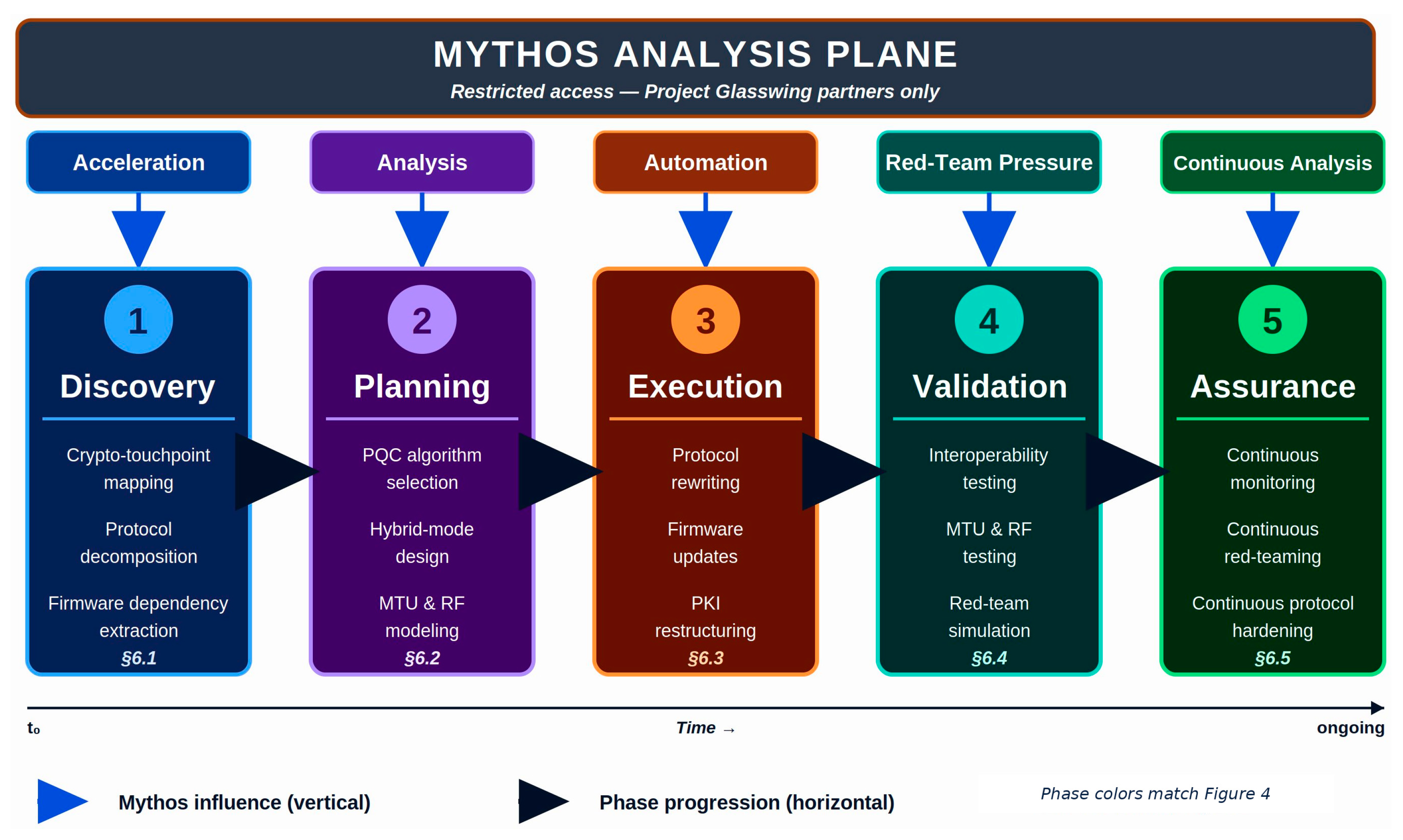

6. Migration Lifecycle Model

6.1. Phase 1: Pre-Migration Discovery

6.2. Phase 2: Migration Planning

6.3. Phase 3: Migration Execution

6.4. Phase 4: Validation and Testing

6.5. Phase 5: Post-Migration Assurance

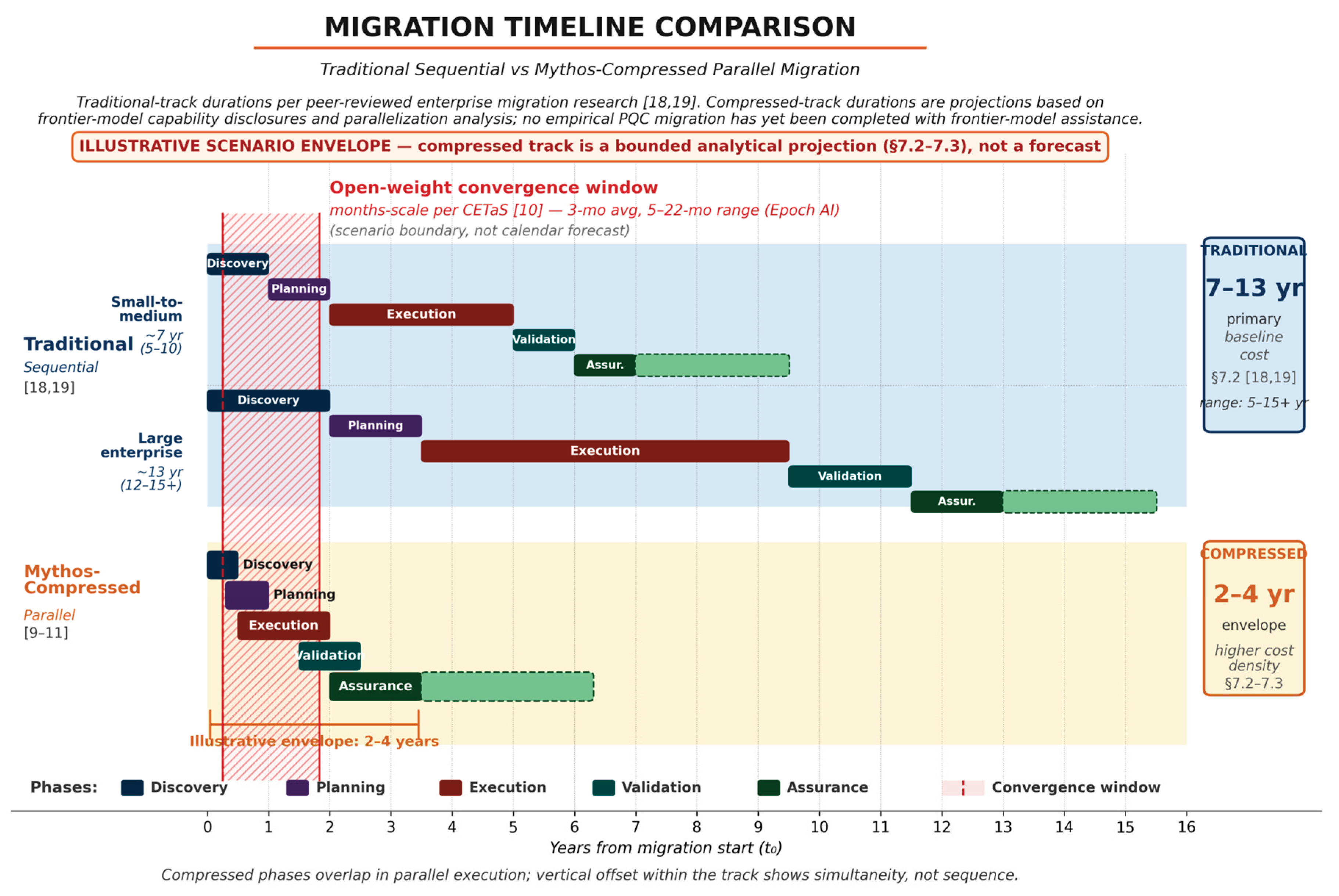

7. Cost and Timeline Model

7.1. Cost Drivers

Embedded-System Gravity

Simultaneity Pressure

Cross-Domain Complexity

7.2. Timeline Compression

7.3. Methodology and Limitations of the Compressed-Track Projection

7.4. Updated Cost Model

- Accelerated timelines and the resulting concurrency premium.

- Increased testing at both interoperability and adversarial levels.

- Increased red-team requirements, including AI-assisted continuous testing.

- Cryptographic-agility investments that reduce the cost of the next transition.

8. Governance and Risk

8.1. Frontier-Model Access Controls

8.2. Evaluation Requirements

8.3. Red-Team Requirements

9. Conclusion

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. National Institute of Standards and Technology. FIPS 203: Module-Lattice-Based Key-Encapsulation Mechanism Standard (ML-KEM); NIST: Gaithersburg, MD, USA, 2024.

2. National Institute of Standards and Technology. FIPS 204: Module-Lattice-Based Digital Signature Standard (ML-DSA); NIST: Gaithersburg, MD, USA, 2024.

3. National Institute of Standards and Technology. FIPS 205: Stateless Hash-Based Digital Signature Standard (SLH-DSA); NIST: Gaithersburg, MD, USA, 2024.

4. National Security Agency. Announcing the Commercial National Security Algorithm Suite 2.0 (CNSA 2.0); NSA Cybersecurity Advisory; NSA: Fort Meade, MD, USA, 2022.

5. Office of Management and Budget. OMB M-23-02: Migrating to Post-Quantum Cryptography; Executive Office of the President: Washington, DC, USA, November 2022.

6. Anthropic. System Card: Claude Mythos Preview. 7 April 2026. Available online: https://www.anthropic.com/claude-mythos-preview-system-card (accessed on 22 April 2026).

7. TechCrunch. Anthropic debuts preview of powerful new AI model Mythos in new cybersecurity initiative. 7 April 2026. Available online: https://techcrunch.com/2026/04/07/anthropic-mythos-ai-model-preview-security/ (accessed on 22 April 2026).

8. Anthropic. Alignment Risk Update: Claude Mythos Preview. 7 April 2026. Available online: https://www.anthropic.com/claude-mythos-preview-risk-report (accessed on 22 April 2026).

9. Carlini, N.; Cheng, N.; Lucas, K.; Moore, M.; Nasr, M.; Prabhushankar, V.; Xiao, W.; et al. (Anthropic Frontier Red Team). Assessing Claude Mythos Preview’s Cybersecurity Capabilities. 7 April 2026. Available online: https://red.anthropic.com/2026/mythos-preview (accessed on 22 April 2026).

10. Centre for Emerging Technology and Security (CETaS); Alan Turing Institute. Claude Mythos: What Does Anthropic’s New Model Mean for the Future of Cybersecurity? April 2026. Available online: https://cetas.turing.ac.uk/publications/claude-mythos-future-cybersecurity (accessed on 22 April 2026).

11. AI Security Institute (AISI). Our Evaluation of Claude Mythos Preview’s Cyber Capabilities; UK Department for Science, Innovation and Technology: London, UK, 13 April 2026. Available online: https://www.aisi.gov.uk/blog/our-evaluation-of-claude-mythos-previews-cyber-capabilities (accessed on 22 April 2026).

12. World Economic Forum. Anthropic’s Mythos moment: how frontier AI is redefining cybersecurity. April 2026. Available online: https://www.weforum.org/stories/2026/04/anthropic-mythos-ai-cybersecurity/ (accessed on 22 April 2026).

13. Fortune. Anthropic says testing Mythos, powerful new AI model, after accidental data leak reveals its existence. 26 March 2026. Available online: https://fortune.com/2026/03/26/anthropic-says-testing-mythos-powerful-new-ai-model-after-data-leak-reveals-its-existence-step-change-in-capabilities/ (accessed on 22 April 2026).

14. Newton, C. Why Anthropic’s new model has cybersecurity experts rattled. Platformer. April 2026. Available online: https://www.platformer.news/anthropic-mythos-cybersecurity-risk-experts/ (accessed on 22 April 2026).

15. Anthropic. Project Glasswing. 7 April 2026. Available online: https://www.anthropic.com/project/glasswing (accessed on 22 April 2026).

16. National Institute of Standards and Technology. NIST SP 800-208: Recommendation for Stateful Hash-Based Signature Schemes; NIST: Gaithersburg, MD, USA, 2020.

17. Cybersecurity and Infrastructure Security Agency. Zero Trust Maturity Model, Version 2.0; CISA: Arlington, VA, USA, April 2023.

18. Campbell, R. Synchronizing Concurrent Security Modernization Programs: Zero Trust, Post-Quantum Cryptography, and AI Assurance. Systems 2026, 14, 233.

19. Campbell, R. Enterprise Migration to Post-Quantum Cryptography: Timeline Analysis and Strategic Frameworks. Computers 2026, 15, 9.

20. Glazunov, S.; Brand, M.; Project Zero; DeepMind. From Naptime to Big Sleep: Using Large Language Models to Catch Vulnerabilities in Real-World Code. Google Project Zero. 1 November 2024. Available online: https://googleprojectzero.blogspot.com/2024/10/from-naptime-to-big-sleep.html (accessed on 22 April 2026).

21. Bhatt, M.; Chennabasappa, S.; Nikolaidis, C.; Wan, S.; Evtimov, I.; Gabi, D.; Song, D.; Ahmad, F.; Aschermann, C.; Fontana, L.; et al. Purple Llama CyberSecEval: A Secure Coding Benchmark for Language Models. arXiv 2023, arXiv:2312.04724.

22. Defense Advanced Research Projects Agency (DARPA). AI Cyber Challenge (AIxCC) Final Competition Results; DARPA: Arlington, VA, USA, 8 August 2025. Available online: https://www.darpa.mil/news/2025/aixcc-results (accessed on 22 April 2026).

23. Moody, D.; Perlner, R.; Regenscheid, A.; Robinson, A.; Cooper, D. Transition to Post-Quantum Cryptography Standards; NIST Internal Report (IR) 8547 (Initial Public Draft); National Institute of Standards and Technology: Gaithersburg, MD, USA, November 2024.

24. National Cybersecurity Center of Excellence (NCCoE). Migration to Post-Quantum Cryptography Project; NIST: Gaithersburg, MD, USA. Available online: https://www.nccoe.nist.gov/applied-cryptography/migration-to-pqc (accessed on 22 April 2026).

25. U.S. Congress. Quantum Computing Cybersecurity Preparedness Act; Public Law 117-260; U.S. Government Publishing Office: Washington, DC, USA, 21 December 2022. Available online: https://www.congress.gov/117/plaws/publ260/PLAW-117publ260.pdf (accessed on 22 April 2026).

26. Cybersecurity and Infrastructure Security Agency. Strategy for Migrating to Automated Post-Quantum Cryptography Discovery and Inventory Tools; CISA: Arlington, VA, USA, August 2024. Available online: https://www.cisa.gov/resources-tools/resources/strategy-migrating-automated-post-quantum-cryptography-discovery-and-inventory-tools (accessed on 22 April 2026).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).