5. Experimental Platform and Design

5.1. Experimental Objectives and Research Questions

This section systematically evaluates whether ICG-Restore can stably generate restoration task packages that are executable, structurally coherent, and beneficial under complex constraints, dynamic environments, and heterogeneous inputs. Five research questions are considered:

RQ1: Compared with classical restoration optimization, network-science heuristics, learning-based sequential restoration, and existing LLM planning approaches, can ICG-Restore consistently improve constraint satisfaction and overall restoration quality?

RQ2: Does graph enhancement improve target selection and stage organization so that the generated plans align more closely with structurally critical objects in the scenario graph?

RQ3: Compared with “regenerate after validation failure”, can rule-consistent minimal-edit repair convert infeasible candidates into executable plans with smaller disturbance and lower cost?

RQ4: Under dynamic conditions such as secondary failure, congestion escalation, delayed observation, and regional isolation drift, does the proposed framework remain stable and robust?

RQ5: Can the proposed method strike a reasonable balance among plan quality, repair cost, and planning latency, rather than improving quality only by spending more inference budget?

To avoid mistaking incidental instance-level fluctuations for real methodological differences, the empirical design follows a four-part evidence chain: overall performance, module contribution, cross-backbone robustness, and mechanistic case analysis.

5.2. Benchmark Topologies and Task Construction

To test the method under different scales and structural complexities, we construct three benchmark emergency communication topologies: EC-S, EC-M, and EC-L, as summarized in Table 1. All three topologies share the same multi-layer heterogeneous semantic design and include coordination centers, backbone gateways, core service nodes, relay nodes, frontier access nodes, and channel-resource nodes. They differ in node scale, redundancy, cross-layer coupling, and channel scarcity.

EC-S contains 24 nodes and is mainly used to verify basic feasibility. EC-M contains 40 nodes, with more dependency chains and more candidate restoration paths. EC-L contains 60 nodes and represents the most challenging setting, where cross-layer coupling, local redundancy, and resource conflict become simultaneously prominent.

Across these topologies, we define four classes of restoration tasks to cover the most typical high-level planning needs in post-disaster emergency communication recovery: backbone service path restoration, which tests the ability to identify and organize critical connectivity paths; backup-path activation and continuity assurance, which evaluates how effectively backup resources are used after primary-path failure; core service restoration, which examines whether the planner can organize stages around key service chains and priority services; and regional access-cluster coverage restoration, which assesses the coordination of relay deployment, channel management, and local coverage reinforcement.

For every combination of topology × task × environmental mode, 20 test instances are constructed, and each method is run with 5 random seeds. Thus, for each topology, one method is evaluated on 4 tasks × 5 modes × 20 instances = 400 test instances per seed; across all three topologies and five seeds, each method completes 3 × 400 × 5 = 6000 planning–execution evaluations in total.

5.3. Environmental Evolution Modes and the Safety-Aware Agent Executor

To evaluate robustness under dynamic conditions, each test instance is assigned one of five environmental evolution modes, shown in Table 2. M1: stable recovery after initial damage, with no additional failures; M2: secondary failures in local components during Stages 2–3; M3: congestion escalation caused by increasing service demand and intensified channel conflict; M4: delayed observation, where structured observations lag by 1–2 stages and are corrupted by noise; and M5: regional isolation drift, where local reachability fluctuates and coordination delay increases.

These modes are not intended to replicate low-level protocol behavior. Instead, they abstract the most common forms of uncertainty faced by high-level restoration planners.

All methods are evaluated through the same safety-aware agent executor. The executor operates only on abstract restoration primitives and never accesses device-level commands or real operational procedures. Its maintained state variables include node availability, residual link capacity, service integrity, channel availability, regional coverage, target confidence, and average coordination delay. Different primitives update these variables according to predefined templates: wide-area assessment increases target confidence; backup-path activation improves critical-path reachability; portable relay deployment increases local coverage; service migration improves core service continuity; and channel reallocation mitigates conflict and congestion. This shared execution environment provides a common basis for fair comparison.
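The template-driven state update described above can be sketched as follows. This is a minimal illustration, not the executor used in the experiments: the field names mirror the state variables listed in the text, but the additive deltas, the [0, 1] normalization, and the mapping of backup-path activation onto residual link capacity are all assumptions made for the example.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ExecutorState:
    """State variables named in the text, assumed normalized to [0, 1]."""
    node_availability: float
    residual_link_capacity: float
    service_integrity: float
    channel_availability: float
    regional_coverage: float
    target_confidence: float
    avg_coordination_delay: float


# Hypothetical update templates: primitive -> {state variable: additive delta}.
TEMPLATES = {
    "wide_area_assessment": {"target_confidence": 0.20},
    "backup_path_activation": {"residual_link_capacity": 0.15},
    "portable_relay_deployment": {"regional_coverage": 0.15},
    "service_migration": {"service_integrity": 0.20},
    "channel_reallocation": {"channel_availability": 0.10},
}


def clamp(x: float) -> float:
    """Keep a normalized variable inside [0, 1]."""
    return max(0.0, min(1.0, x))


def apply_primitive(state: ExecutorState, primitive: str) -> ExecutorState:
    """Return a new state with the primitive's template applied."""
    deltas = TEMPLATES[primitive]
    updated = {name: clamp(getattr(state, name) + d) for name, d in deltas.items()}
    return replace(state, **updated)


def execute(state: ExecutorState, plan: list[list[str]]) -> ExecutorState:
    """Apply every primitive of every stage, in stage order."""
    for stage in plan:
        for primitive in stage:
            state = apply_primitive(state, primitive)
    return state


initial = ExecutorState(0.6, 0.4, 0.5, 0.5, 0.3, 0.4, 0.5)
final = execute(initial, [["wide_area_assessment", "backup_path_activation"],
                          ["service_migration"]])
```

Because every method's output is executed through the same update function, differences in the final state reflect the plan rather than the execution model.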

5.4. Baselines

To evaluate ICG-Restore comprehensively, we compare it against representative methods from four directions: classical restoration optimization, network-science heuristics, learning-based sequential restoration, and LLM planning.

For classical optimization, we use the Interdependent Network Design Problem (INDP) [11] as a representative method. INDP solves structured restoration under budget and operational constraints and serves as a strong reference for hard-constrained recovery planning.

For heuristic restoration, we use Cent-Restore [43], which schedules restoration based on the importance ranking of service-network components. It serves as a representative network-science baseline and tests whether structural centrality alone is sufficient for high-level recovery planning.

For learning-based restoration, we use DRL-Restore, a DDQN-based sequential restoration method [10]. This baseline models post-disaster restoration as a sequential decision process and allows us to compare learned recovery sequences with our constrained graph-enhanced planning framework.

For LLM baselines, we include three representative variants. Direct-LLM [44] directly feeds natural-language requirements and observations into an LLM and asks it to output a stage plan. PS-LLM (Plan-and-Solve) [18] first generates an explicit subtask plan and then solves it. GraphRAG-LLM [34] enhances generation with graph-structured retrieval, allowing us to isolate the contribution of graph retrieval alone.

Together, these baselines cover four major paradigms—structured optimization, heuristic restoration, learning-based restoration, and open-ended LLM planning—and provide a broad foundation for answering the five research questions.

5.5. Implementation Details and Fairness Settings

Unless otherwise stated in the cross-backbone experiments, all main experiments use gpt-oss:20b as the default backbone. All LLM-based methods—Direct-LLM, PS-LLM, GraphRAG-LLM, and ICG-Restore—share the same output JSON schema, the same set of abstract restoration primitives, the same budget/time-window encoding, the same candidate-plan parser, and the same safety-aware agent executor. The only differences are whether task-intent compilation, joint graph retrieval, and rule-consistent minimal-edit repair are used.

To keep comparisons with traditional methods as fair as possible, INDP, Cent-Restore, and DRL-Restore are given the same structured state, constraints, and resource information as provided by the experimental platform. Their outputs are uniformly mapped to the same stage-task-package format before being sent to the common agent executor. In other words, we do not compare “who writes better natural language”; we compare which method can recover more critical objects with more appropriate stage logic and achieve a higher integrated restoration benefit under a shared execution standard.

Table 3. Experimental environment and major parameters.

| Environment | Specification | Parameter | Value |
| --- | --- | --- | --- |
| Operating System | Microsoft Windows 11 | seed | 5 |
| CPU | AMD Ryzen 9 9950X3D | llm | gpt-oss:20b |
| GPU | NVIDIA GeForce RTX 5090 D | llm_temperature | 0.2 |
| Language | Python 3.11.15 | max_tokens | 3072 |
| CUDA | 13.1 | text_top_k | 8 |

5.6. Evaluation Metrics

We evaluate the proposed method from four aspects: executability, alignment with critical targets, integrated restoration effectiveness, and computational efficiency.

First, we use the Constraint Satisfaction Rate (CSR) to measure the proportion of final plans that satisfy all constraints.

where 1[·] is the indicator function. A plan is deemed feasible only if it simultaneously satisfies rule consistency, causal consistency, budget feasibility, and time-window feasibility. Higher CSR indicates a stronger ability to output executable restoration task packages.
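Read operationally, CSR is the mean of a per-plan feasibility indicator over the evaluated plans, where a plan counts as feasible only if all four checks pass. The sketch below assumes that reading; the class and field names are illustrative and are not taken from the experimental platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationResult:
    """Outcome of the four feasibility checks named in the text (field names assumed)."""
    rule_consistent: bool
    causally_consistent: bool
    within_budget: bool
    within_time_window: bool

    @property
    def feasible(self) -> bool:
        # A plan is feasible only if every check passes simultaneously.
        return all((self.rule_consistent, self.causally_consistent,
                    self.within_budget, self.within_time_window))


def constraint_satisfaction_rate(results: list[ValidationResult]) -> float:
    """Fraction of final plans whose indicator 1[feasible] equals 1."""
    if not results:
        raise ValueError("CSR is undefined for an empty set of plans")
    return sum(r.feasible for r in results) / len(results)


# Toy data: three feasible plans and one that overruns its budget.
toy_results = [
    ValidationResult(True, True, True, True),
    ValidationResult(True, True, True, True),
    ValidationResult(True, True, False, True),
    ValidationResult(True, True, True, True),
]
toy_csr = constraint_satisfaction_rate(toy_results)
```

A single failed check is enough to zero a plan's indicator, which is why CSR can stay high even when the remaining quality metrics differ widely across methods.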

Second, we use the Weighted Critical Target Coverage (WCTC@k) to evaluate how well the selected targets align with the graph-enhanced structural reference set.

where $P_i$ is the set of targets selected by the plan, $T_i(k)$ is the top-k structural reference set derived from the structural score, and $S_i(v)$ is the structural criticality score of object $v$. Higher WCTC@k indicates better coverage of truly important restoration objects.
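One plausible reading of WCTC@k, given the symbols defined above, is the share of the reference set's total criticality that the plan's selected targets cover, averaged over instances. The normalization by the summed scores of the top-k set is an assumption of this sketch, as are the function names and the toy scores.

```python
def wctc_at_k(selected: set[str], scores: dict[str, float], k: int) -> float:
    """Score-weighted coverage of the top-k reference set by the selected targets.

    Assumed form: sum of S(v) over v in P ∩ T(k), divided by sum of S(v) over T(k).
    """
    top_k = sorted(scores, key=scores.get, reverse=True)[:k]  # T(k)
    total = sum(scores[v] for v in top_k)
    if total == 0:
        return 0.0
    covered = sum(scores[v] for v in top_k if v in selected)
    return covered / total


def mean_wctc_at_k(instances: list[tuple[set[str], dict[str, float]]], k: int) -> float:
    """Average the per-instance value over all test instances."""
    return sum(wctc_at_k(sel, sc, k) for sel, sc in instances) / len(instances)


# Toy instance with hypothetical object names and criticality scores.
toy_scores = {"gw1": 0.5, "core1": 0.3, "relay1": 0.2, "acc1": 0.1}
toy_value = wctc_at_k({"gw1", "acc1"}, toy_scores, k=2)
```

In the toy instance the plan covers `gw1` but misses `core1`, so it receives credit for 0.5 of the 0.8 total criticality in the top-2 set; selecting `acc1` earns nothing because it lies outside the reference set.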

Third, we adopt the Composite Recovery Score (CRS) to measure the overall quality of plan execution.

where $R_{\mathrm{path}}$, $R_{\mathrm{serv}}$, and $R_{\mathrm{cov}}$ denote critical-path recovery, core-service restoration, and regional-coverage improvement, respectively; $C$ is resource cost; Risk is operational risk; and Rob is cross-mode robustness. All subterms are normalized. A higher CRS indicates a better balance among restoration benefit, cost control, and robustness.
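A form consistent with these definitions is a weighted combination in which the three recovery terms and robustness enter positively while cost and risk enter negatively. The linear structure and the weights $w_1, \dots, w_6$ below are assumptions made for illustration; the text fixes only the subterms and the direction in which each should move the score.

```latex
\mathrm{CRS}
  = w_1 R_{\mathrm{path}} + w_2 R_{\mathrm{serv}} + w_3 R_{\mathrm{cov}}
  - w_4 C - w_5\,\mathrm{Risk} + w_6\,\mathrm{Rob},
\qquad w_j \ge 0 .
```

Under this reading, two plans with equal recovery benefit are separated by their cost, risk, and robustness terms, which matches the stated interpretation of CRS as a balance rather than a pure benefit measure.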

Finally, for the ablation and cross-backbone experiments, we also report average planning latency and total token consumption. Since traditional optimizers and LLMs differ substantially in computational paradigm, these efficiency metrics are mainly compared within the LLM family.

5.7. Main Results and Analysis

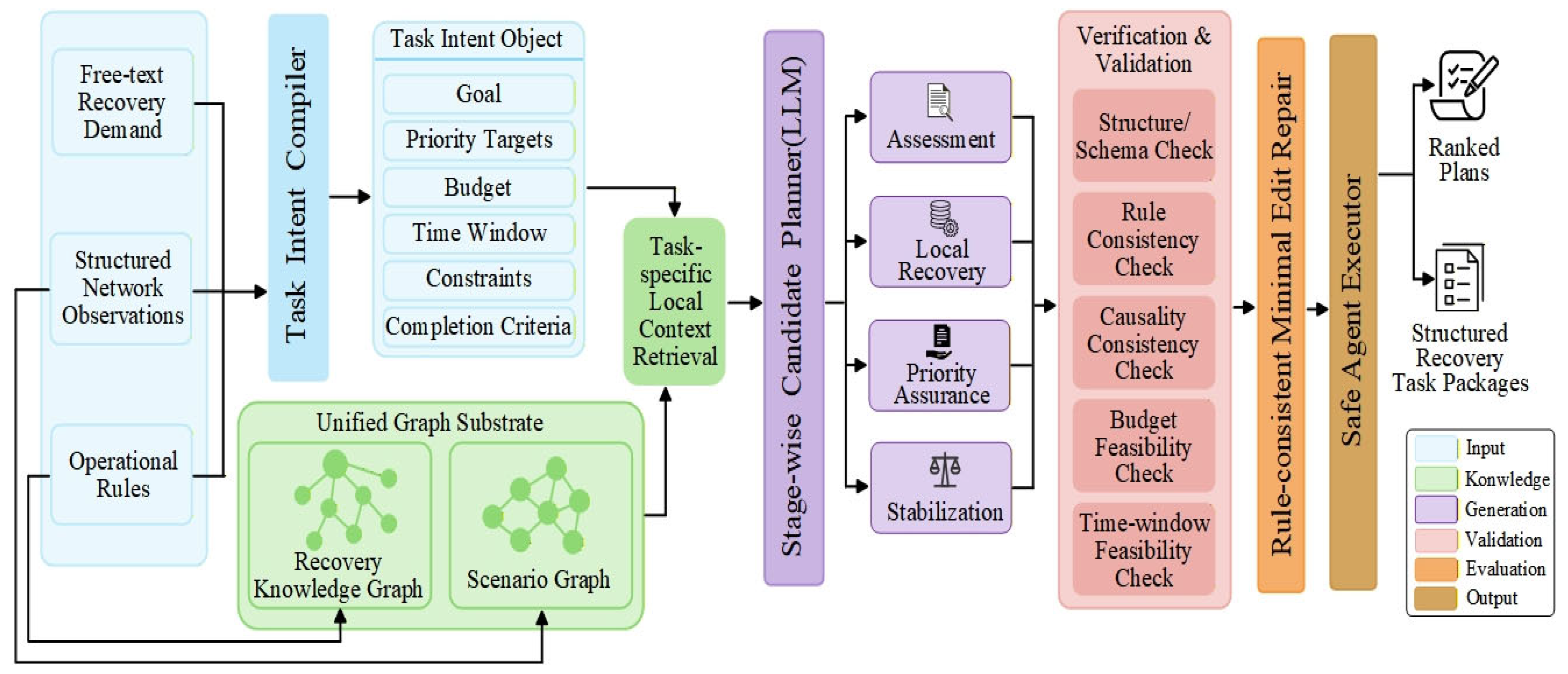

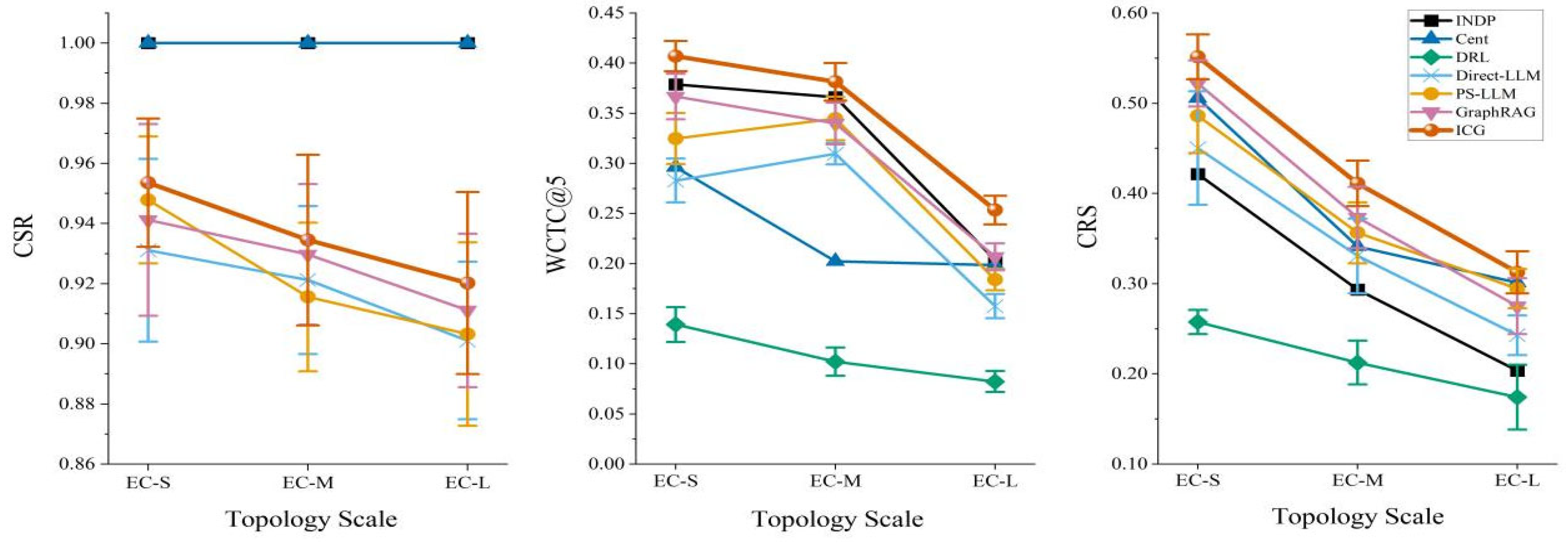

Table 4 reports the quantitative results of all methods on the three topology scales, while Figure 4 provides a visual summary. Overall, ICG-Restore achieves the highest WCTC@5 and CRS across all three topology scales and the highest CSR among the LLM-based methods. This indicates that its advantage is not confined to a single metric, but extends simultaneously to critical-target selection, stage organization, and integrated restoration utility.

Averaged over the three topologies, ICG-Restore obtains 0.9361 CSR, 0.3472 WCTC@5, and 0.4251 CRS. Relative to Direct-LLM, these values improve by 1.99%, 38.87%, and 24.56%, respectively. Relative to PS-LLM, the improvements are 1.51%, 22.05%, and 12.17%. Relative to GraphRAG-LLM, the gains are 0.95%, 14.03%, and 8.94%. These results indicate that direct generation, plan-then-solve prompting, or graph retrieval alone is not sufficient for stable high-constraint restoration planning. What matters is the closed-loop synergy among task-intent constraints, stage-wise generation, rule-consistent repair, and execution evaluation.

Figure 4 further suggests that the performance gap among LLM-based methods is relatively modest on CSR, but much larger on WCTC@5 and CRS. This implies that once all methods are forced to output structured plans under a common schema, the main bottleneck is no longer whether the model can produce a syntactically well-formed plan; instead, the bottleneck is whether it can organize recovery around the right critical objects, in the right stage order, under the right resource allocation. This is exactly where ICG-Restore offers the clearest advantage.

The benefit of ICG-Restore is more pronounced on larger topologies. On the most difficult setting, EC-L, ICG-Restore reaches 0.2534 in WCTC@5, exceeding 0.2068 for GraphRAG-LLM and 0.1840 for PS-LLM. Its CRS on EC-L is 0.3126, exceeding 0.2752 for GraphRAG-LLM and 0.2428 for Direct-LLM. Relative to GraphRAG-LLM, ICG-Restore improves WCTC@5 and CRS on EC-L by 22.53% and 13.59%, respectively. This suggests that as topology size grows, cross-layer dependencies deepen, and resource competition intensifies, task-intent constraints and minimal-edit repair become increasingly valuable. In other words, the more complex the topology, the closer pure retrieval-enhanced generation gets to its ceiling, whereas the full loop of graph enhancement and rule-consistent repair continues to pay dividends.
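The relative improvements quoted for EC-L follow from the usual ratio (new − baseline) / baseline. The short check below recomputes them from the values given in the text; the function name is illustrative.

```python
def relative_gain(new: float, baseline: float) -> float:
    """Percentage change of `new` relative to `baseline`."""
    return 100.0 * (new - baseline) / baseline


# EC-L values reported in the text: ICG-Restore vs. GraphRAG-LLM.
wctc_gain = relative_gain(0.2534, 0.2068)
crs_gain = relative_gain(0.3126, 0.2752)

print(f"WCTC@5 gain: {wctc_gain:.2f}%")  # 22.53%
print(f"CRS gain:    {crs_gain:.2f}%")   # 13.59%
```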

One additional point deserves emphasis: feasibility and restoration utility do not always rise together. INDP, Cent-Restore, and DRL-Restore all achieve CSR = 1.000 on all three topologies, yet none surpasses ICG-Restore in CRS. Thus, being feasible by construction does not mean that a method restores the most valuable targets, nor that it yields the highest utility under the common executor. INDP achieves relatively strong WCTC@5 on EC-S and EC-M but lower CRS, indicating that it is good at recovering structurally critical objects under hard constraints but less effective at balancing service continuity and coverage restoration. Cent-Restore attains a relatively high CRS on EC-S (0.5057), showing that simple centrality heuristics can work surprisingly well on small topologies, but its WCTC@5 drops sharply on EC-M and EC-L, which suggests that local centrality alone cannot capture cross-layer dependencies, business priorities, or inter-stage causal structure. DRL-Restore remains feasible throughout, but its WCTC@5 and CRS are consistently weak, implying that reward-driven recovery sequences tend to become conservative strategies that are executable in form yet insufficiently focused on critical targets.

Overall, ICG-Restore narrows the executability gap between open-ended LLM planning and classical closed-form restoration methods, while outperforming them in critical-target coverage and integrated recovery quality. GraphRAG-LLM already demonstrates that graph enhancement is useful, but ICG-Restore further shows that graph retrieval alone is not enough. The decisive factor is the closed loop of task-intent constraints → graph-enhanced stage-wise generation → rule-consistent repair → unified execution evaluation.

5.8. Ablation Study

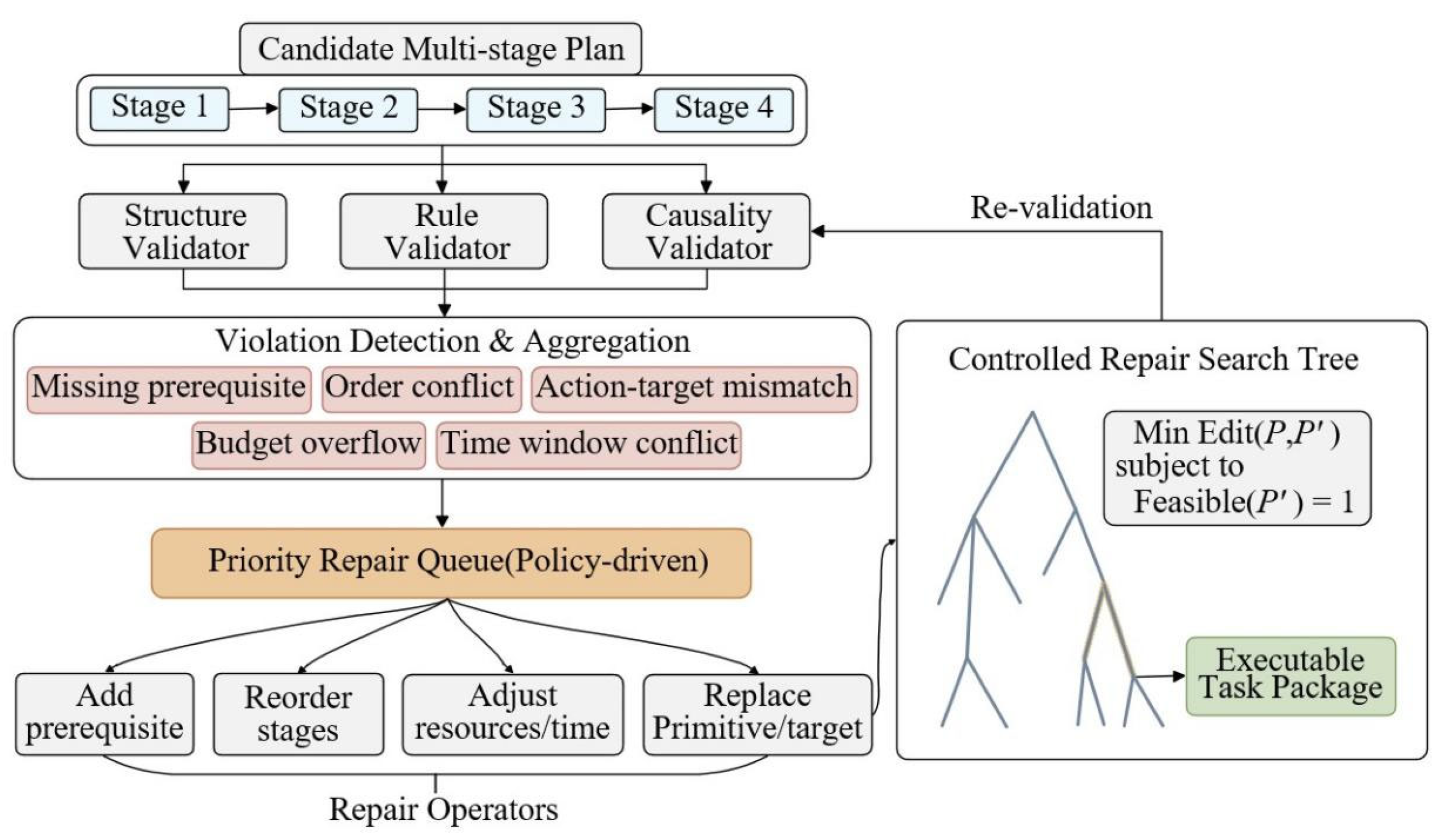

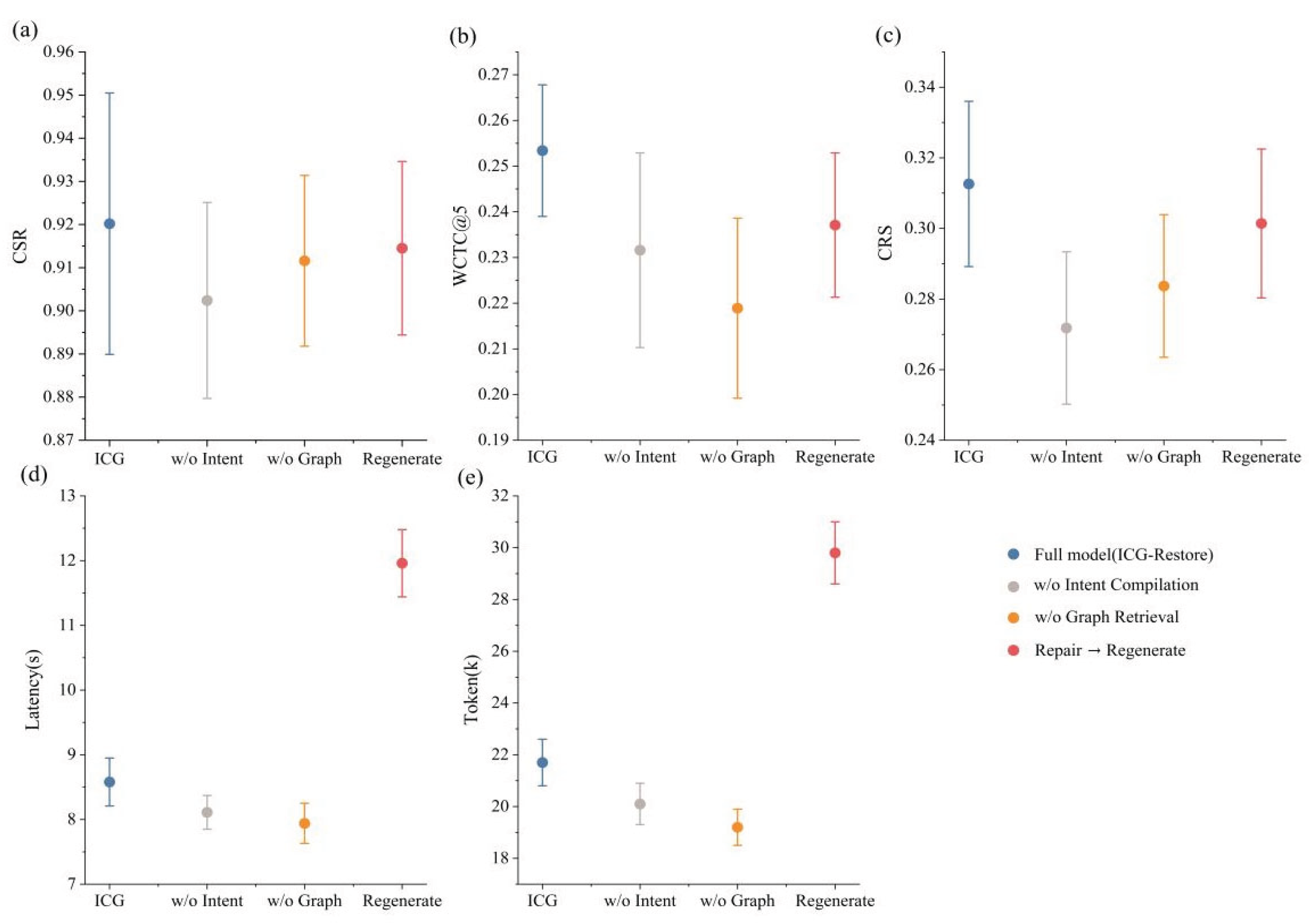

To examine the contribution of each core module, we perform ablations on the most challenging topology, EC-L. Three variants are considered: w/o Intent Compilation, w/o Graph Retrieval, and Repair → Regenerate, in which minimal-edit repair is replaced by “regenerate after validation failure”. Exact results are given in Table 5, and relative shifts are visualized in Figure 5.

Removing task-intent compilation reduces CSR, WCTC@5, and CRS to 0.9024, 0.2316, and 0.2718, respectively. Relative to the full model, the declines are 1.93%, 8.60%, and 13.05%. This confirms that the task-intent object is not a superficial summary but a critical intermediate layer that stabilizes constraints and stage objectives. Without it, the model is more likely to drift in target scope, miss priority objects, or weaken completion criteria, leading to simultaneous deterioration in structural alignment and execution quality.

Removing graph retrieval causes only a modest drop in CSR, from 0.9202 to 0.9116, but WCTC@5 drops sharply from 0.2534 to 0.2189, and CRS falls to 0.2837. Relative to the full model, the declines in WCTC@5 and CRS reach 13.61% and 9.25%, respectively. This indicates that graph enhancement mainly improves planning quality, especially the selection of critical objects and the structural rationality of stage organization, rather than merely increasing formal feasibility.

Replacing minimal-edit repair with regeneration after validation failure causes only moderate decreases in CSR, WCTC@5, and CRS, but planning latency rises from 8.58 s to 11.96 s, and token consumption increases from 21.7k to 29.8k, corresponding to increases of 39.39% and 37.33%, respectively. This is an important finding: in high-constraint restoration planning, regeneration is not the safer option it might appear to be. It often incurs a large computational penalty without delivering commensurate quality gains, and it is more likely to destroy the original phase backbone of the plan. By contrast, rule-consistent minimal-edit repair preserves useful structure while achieving a better balance between quality and cost.
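The ablation percentages in this subsection can be reproduced from the EC-L values quoted in the text. The sketch below tabulates only those quoted numbers; the dictionary layout and names are illustrative, and metrics that the text does not report for a variant are left out rather than filled in.

```python
FULL = {"CSR": 0.9202, "WCTC@5": 0.2534, "CRS": 0.3126}

# Ablated values as quoted in the text for EC-L.
VARIANTS = {
    "w/o Intent Compilation": {"CSR": 0.9024, "WCTC@5": 0.2316, "CRS": 0.2718},
    "w/o Graph Retrieval": {"CSR": 0.9116, "WCTC@5": 0.2189, "CRS": 0.2837},
}


def decline_pct(full: float, ablated: float) -> float:
    """Percentage drop of an ablated metric relative to the full model."""
    return 100.0 * (full - ablated) / full


def increase_pct(base: float, new: float) -> float:
    """Percentage increase of a cost metric relative to the full model."""
    return 100.0 * (new - base) / base


declines = {
    name: {metric: decline_pct(FULL[metric], value) for metric, value in metrics.items()}
    for name, metrics in VARIANTS.items()
}

# Repair -> Regenerate: cost overhead relative to minimal-edit repair.
latency_overhead = increase_pct(8.58, 11.96)  # seconds
token_overhead = increase_pct(21.7, 29.8)     # thousands of tokens

for name, metrics in declines.items():
    print(name, {m: f"{v:.2f}%" for m, v in metrics.items()})
print(f"latency +{latency_overhead:.2f}%, tokens +{token_overhead:.2f}%")
```

Set side by side, the two removals show different signatures: dropping intent compilation hurts CRS most, whereas dropping graph retrieval hurts WCTC@5 most, and replacing repair with regeneration shows up mainly as added cost.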

The ablation results therefore answer RQ2, RQ3, and RQ5 directly. Task-intent compilation stabilizes constraint boundaries and stage goals; graph retrieval improves critical-target coverage and structural plan quality; and minimal-edit repair is the key mechanism that turns semantically plausible yet structurally infeasible candidates into high-quality executable task packages. These modules are not interchangeable. Their gains are complementary.

5.9. Cross-Backbone Robustness

To examine whether the framework’s benefit depends on a particular LLM backbone, we further test four backbone models: gpt-oss:20b, qwen3:14b, gemma3:27b, and mistral-small3.1:24b. The results are reported in Table 6.

ICG-Restore improves executability over Direct-LLM on all four backbones. The CSR gains are 1.99% on gpt-oss:20b, 2.74% on qwen3:14b, 0.78% on gemma3:27b, and 1.27% on mistral-small3.1:24b. This shows that the improvement does not depend on a particular model’s output style; it arises from the framework design itself.

At the same time, the backbones exhibit stable but distinct quality–efficiency trade-offs. Under ICG-Restore, gpt-oss:20b achieves the highest WCTC@5 (0.3472), suggesting the strongest performance in critical-target selection and stage organization. mistral-small3.1:24b achieves the highest CRS (0.4285), indicating slightly better integrated recovery utility. qwen3:14b yields the lowest latency and token consumption (6.41 s and 19.7k), though with lower WCTC@5 and CRS. gemma3:27b incurs the highest cost. Thus, different backbones mainly shift the position of the quality–efficiency frontier, rather than changing whether the framework works at all.

In short, the advantage of ICG-Restore is framework-level, not a by-product of one particularly strong LLM backbone.

5.10. Case Study: From an Infeasible Candidate to an Executable Restoration Task Package

To further illustrate the source of the performance gains, we analyze one representative instance from the main test set. The case is drawn from the EC-M topology, under the core service restoration task and the M2 (secondary failure) mode. Unlike the aggregate results in Section 5.7, Section 5.8, and Section 5.9, this subsection focuses on how ICG-Restore transforms heterogeneous inputs into an executable restoration task package in a concrete planning episode.

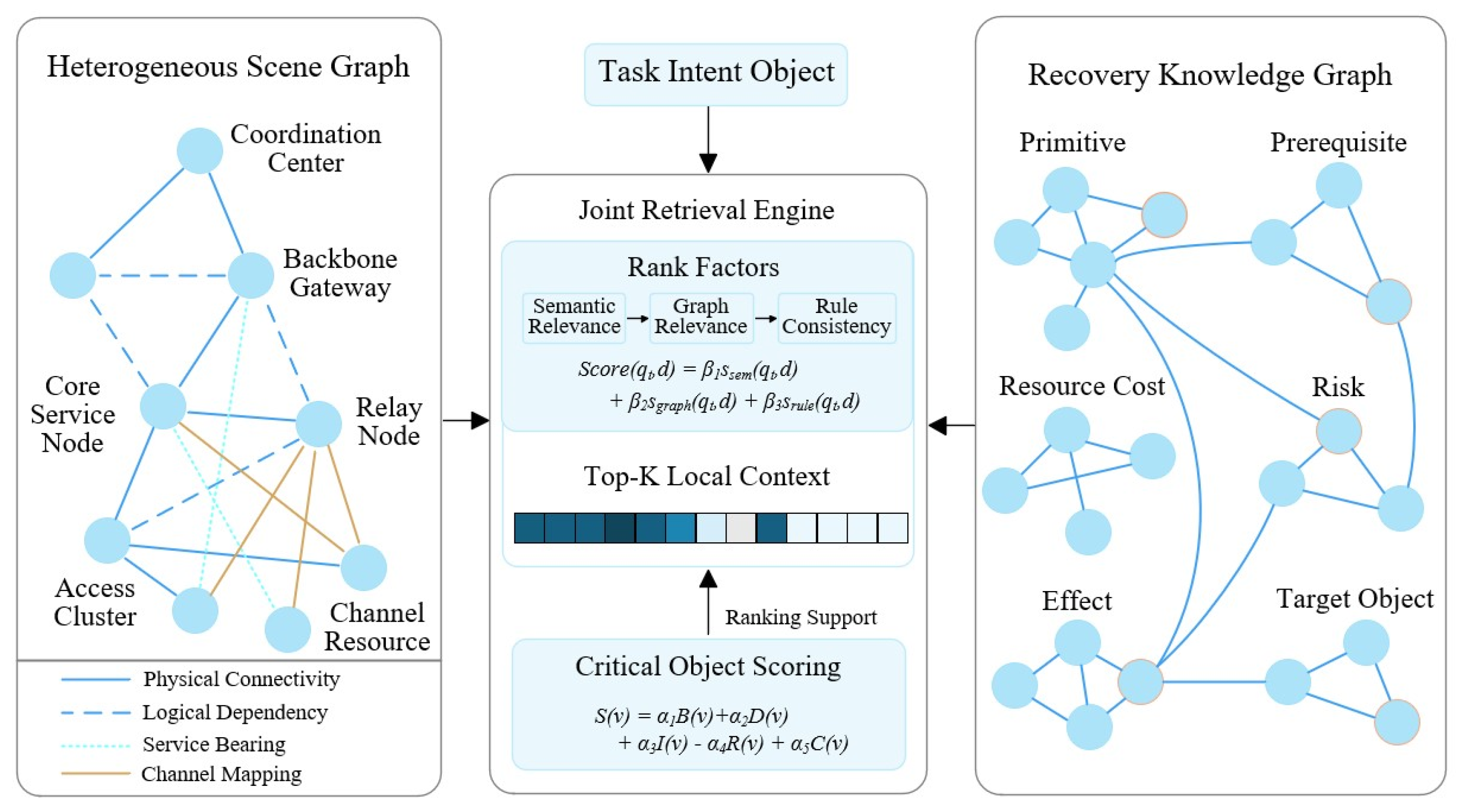

The input consists of a free-text restoration request, structured network observations, and operational rule boundaries. The task requires the planner to prioritize emergency command and medical-data return services under limited budget and a strict time window, while avoiding high-risk restoration primitives and retaining fallback capability. The damaged scenario shows a disrupted critical service chain between a core service node and a backbone gateway, degraded local access-cluster coverage, and additional constraints imposed by channel competition and coordination delay. In such a setting, direct LLM generation tends to produce plans with incomplete constraints and unstable phase organization. By contrast, ICG-Restore first compiles the heterogeneous input into a unified task-intent object, explicitly specifying the restoration goal, priority objects, budget, time window, forbidden primitives, and completion criteria.
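The task-intent object described above can be pictured as a small typed record. The sketch below is an illustration only: the six fields follow the list in the text, but the types, the units, and every example value (including the forbidden primitive's name) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskIntent:
    """Unified task-intent object; fields follow the text, types are assumed."""
    restoration_goal: str
    priority_objects: tuple[str, ...]
    budget: float                      # abstract resource units (assumed)
    time_window: int                   # maximum number of stages (assumed unit)
    forbidden_primitives: frozenset[str]
    completion_criteria: tuple[str, ...]

    def permits(self, primitive: str) -> bool:
        """A primitive may be planned only if it is not explicitly forbidden."""
        return primitive not in self.forbidden_primitives


# Hypothetical values loosely modeled on the case description.
intent = TaskIntent(
    restoration_goal="core service restoration",
    priority_objects=("emergency_command", "medical_data_return"),
    budget=100.0,
    time_window=4,
    forbidden_primitives=frozenset({"high_risk_primitive_x"}),
    completion_criteria=("priority services reachable", "fallback path retained"),
)
```

Making the constraints explicit fields, rather than leaving them in free text, is what allows later validation and repair steps to test a candidate plan against them mechanically.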

The framework then performs joint retrieval of task-relevant local structural context. The heterogeneous scenario graph identifies the damaged subgraph spanning the backbone gateway, the core service chain, and the damaged access cluster, while the restoration knowledge graph provides relevant high-level primitives and dependency patterns, including backup-path activation, service migration, portable relay deployment, channel reallocation, and stabilization. By combining semantic relevance, graph-structural relevance, and rule consistency, the retrieval module supplies a compact structural context for stage-wise planning.
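One simple way to combine the three retrieval signals is to treat rule consistency as a hard filter and rank the remaining candidates by a blend of semantic and structural relevance. The sketch below assumes exactly that; the blending weight `alpha`, the score ranges, and the candidate names are hypothetical, and the default `top_k=8` is borrowed from the `text_top_k` setting in Table 3 only as a convenient value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A retrievable graph element with assumed relevance scores in [0, 1]."""
    name: str
    semantic: float     # semantic relevance to the task intent
    structural: float   # graph-structural relevance in the scenario graph
    rule_ok: bool       # whether the element is consistent with the rule boundaries


def retrieve(candidates: list[Candidate], top_k: int = 8, alpha: float = 0.5) -> list[Candidate]:
    """Drop rule-inconsistent candidates, then keep the top_k by blended score."""
    admissible = [c for c in candidates if c.rule_ok]
    ranked = sorted(
        admissible,
        key=lambda c: alpha * c.semantic + (1.0 - alpha) * c.structural,
        reverse=True,
    )
    return ranked[:top_k]


pool = [
    Candidate("backup_path_activation", semantic=0.9, structural=0.2, rule_ok=True),
    Candidate("service_migration", semantic=0.6, structural=0.8, rule_ok=True),
    Candidate("high_risk_primitive_x", semantic=1.0, structural=1.0, rule_ok=False),
]
context = retrieve(pool, top_k=2)
```

Filtering before ranking means a rule-violating element never reaches the generator, however relevant it looks on the other two signals.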

Conditioned on the task intent and retrieved context, the LLM generates an initial candidate plan. Although semantically plausible, the candidate remains infeasible in two respects. First, it schedules service migration and coverage reinforcement before restoring the connectivity required to support them, leading to missing preconditions and causal-order violations. Second, it places relay deployment and high-cost channel adjustment in the same stage, causing budget and time-window conflicts. Rather than discarding the candidate, ICG-Restore applies minimal-edit repair, which preserves the original planning backbone while correcting local infeasibilities.

Table 7. Violation diagnosis and minimal-edit repair trajectory in the representative case.

| Original Candidate Stage | Main Problem | Repair Operation | Repaired Stage Package |
| --- | --- | --- | --- |
| Service migration to backup service node | Missing prerequisite of critical-link reachability; causal-order violation | Postpone the operation and insert a preceding “critical-link recovery” stage | Stage 2: Core service migration and priority-service assurance |
| Portable relay deployment to damaged access cluster | Misaligned with service priority; overly coupled with channel constraints | Bind it with channel reallocation and postpone it | Stage 3: Local coverage reinforcement and channel coordination |
| Backup-path activation | Scheduled too late to support later recovery | Move it forward and merge it with situation assessment | Stage 1: Situation assessment and critical-link recovery |
| Stabilization stage | Missing fallback and secondary-failure monitoring | Add explicit fallback and revalidation logic | Stage 4: Stabilization and fallback monitoring |

After repair, the plan becomes a coherent recovery sequence: situation assessment and critical-link recovery → core service migration and priority-service assurance → local coverage reinforcement and channel coordination → stabilization and fallback monitoring. Importantly, this result is not a full rewrite, but a low-disturbance correction that preserves the original service-priority backbone while restoring structural executability, rule compliance, and causal consistency.
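The causal-order part of such a repair can be illustrated with a toy search for the fewest primitive moves that remove all precedence violations. This is not the repair procedure of ICG-Restore: breadth-first search over single-primitive moves is substituted here purely for illustration, budget and time-window checks are ignored, and the candidate plan and prerequisite table are hypothetical.

```python
from collections import deque

Plan = tuple[tuple[str, ...], ...]  # stages, each a tuple of primitive names


def violations(plan: Plan, prereqs: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Pairs (p, q) where prerequisite q is not in a strictly earlier stage than p."""
    pos = {p: i for i, stage in enumerate(plan) for p in stage}
    return [(p, q) for p, qs in prereqs.items() for q in sorted(qs)
            if p in pos and (q not in pos or pos[q] >= pos[p])]


def single_moves(plan: Plan):
    """Yield every plan reachable by moving one primitive to another stage."""
    for i, stage in enumerate(plan):
        for p in stage:
            for j in range(len(plan)):
                if j != i:
                    moved = [tuple(x for x in s if x != p) for s in plan]
                    moved[j] = moved[j] + (p,)
                    yield tuple(moved)


def minimal_edit_repair(plan: Plan, prereqs: dict[str, set[str]], max_edits: int = 3):
    """Return (repaired_plan, edit_count) with the fewest moves, or None if not found."""
    frontier = deque([(plan, 0)])
    seen = {plan}
    while frontier:
        current, edits = frontier.popleft()
        if not violations(current, prereqs):
            return current, edits
        if edits < max_edits:
            for nxt in single_moves(current):
                if nxt not in seen:
                    seen.add(nxt)
                    frontier.append((nxt, edits + 1))
    return None


# Hypothetical infeasible candidate: connectivity is restored too late.
candidate: Plan = (
    ("situation_assessment", "service_migration"),
    ("portable_relay_deployment",),
    ("backup_path_activation", "channel_reallocation"),
    ("stabilization",),
)
prerequisites = {
    "service_migration": {"backup_path_activation"},
    "portable_relay_deployment": {"backup_path_activation"},
}
repaired, n_edits = minimal_edit_repair(candidate, prerequisites)
```

In this toy instance no single move resolves both violations, so the search settles on two moves and leaves every other primitive in its original stage, which is the low-disturbance behavior the case study attributes to minimal-edit repair.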

The repaired task package passes structural, rule-based, and causal validation and then enters the common agent-based execution evaluation. Compared with the unrepaired candidate, it achieves better service continuity and regional coverage while reducing coordination delay and operational risk. This suggests that minimal-edit repair improves not only formal feasibility, but also execution quality.

This case also explains the ablation result of Repair → Regenerate in Table 5. Full regeneration increases token cost and planning latency, yet does not yield proportional quality gains. The key advantage of ICG-Restore therefore lies not in repeatedly rewriting candidate plans, but in preserving useful structure and converting it, with minimal disturbance, into an executable restoration task package.

Overall, this case confirms that the effectiveness of ICG-Restore arises from the closed-loop interaction of task-intent compilation, graph-enhanced retrieval, stage-wise generation, minimal-edit repair, and agent-based execution assessment. This integrated process enables the framework to move beyond plans that are merely plausible in language and toward task packages that are executable, evaluable, and directly usable by downstream systems.