Submitted: 22 April 2026

Posted: 23 April 2026

Abstract

Keywords:

1. Introduction

- 1.

- Semantic-Guided Enhancement Framework: We propose a novel LLIE method that integrates CLIP-based semantic priors from natural language prompts, enabling interpretable, region-aware enhancement without pixel-level supervision. This allows users to specify which regions or semantic content should be prioritized during enhancement.

- 2.

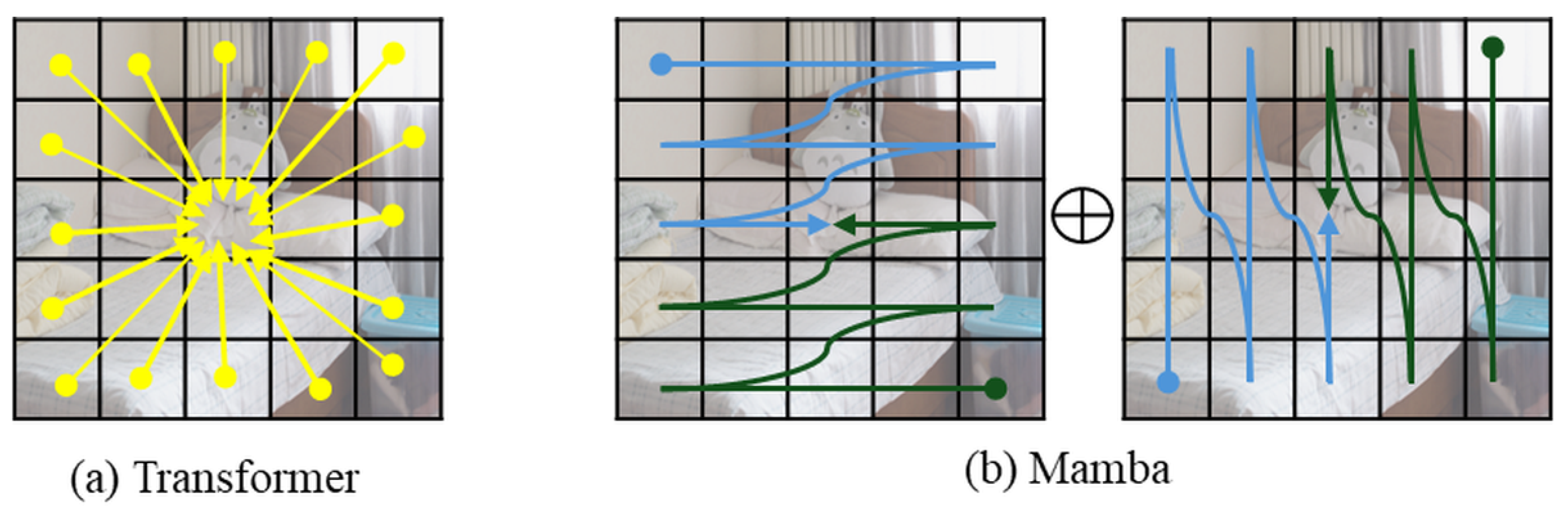

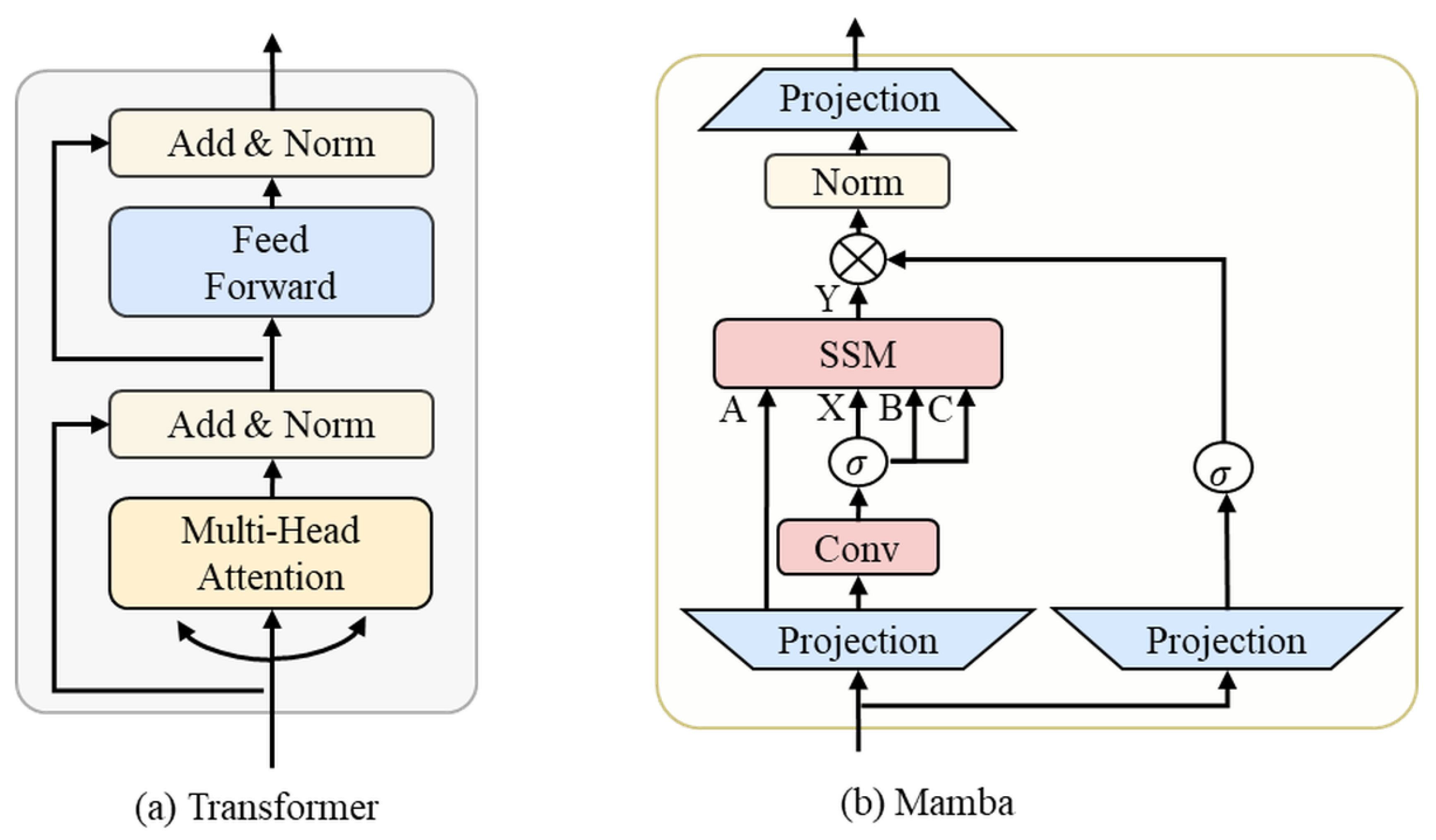

- Hybrid Transformer-Mamba Architecture: We design a novel U-Net backbone that synergistically combines Transformer blocks for global context modeling and Mamba layers for efficient sequential processing. This hybrid architecture achieves superior performance with significantly reduced computational overhead compared to pure Transformer-based models.

- 3.

- Perturbation-Aware Retinex Decomposition: We introduce a physically grounded decomposition framework that explicitly models noise and illumination estimation errors, improving the realism and robustness of the enhancement process under challenging real-world conditions.

- 4.

- Comprehensive Experimental Validation: Extensive experiments on multiple benchmarks (LOL v1, LOL-v2-real, LOL-v2-synthetic, SID, SMID, and SDSD-out) demonstrate that SeMaNet achieves state-of-the-art performance in both quantitative metrics (PSNR, SSIM) and qualitative visual quality, particularly excelling in preserving semantic coherence and fine details under challenging lighting conditions.
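
The idea behind the perturbation-aware decomposition in the third contribution can be illustrated with a small numerical sketch. The exact formulation used by SeMaNet is not stated in the list above, so the model here is an assumption: the commonly used perturbed Retinex form I = (R + ΔR) ⊙ (L + ΔL), where ΔR stands in for noise on the reflectance and ΔL for the illumination estimation error. All function and variable names are hypothetical.

```python
import numpy as np

def perturbed_retinex(R, L, dR, dL):
    """Compose an image under the assumed model I = (R + dR) * (L + dL)."""
    return (R + dR) * (L + dL)

def naive_enhance(I, L_est, eps=1e-6):
    """Classic Retinex recovery: divide the image by the estimated illumination."""
    return I / (L_est + eps)

rng = np.random.default_rng(0)
R = rng.uniform(0.2, 0.9, size=(4, 4))    # clean reflectance (toy data)
L = np.full((4, 4), 0.1)                  # dim, uniform illumination
dR = rng.normal(0.0, 0.02, size=(4, 4))   # assumed noise on the reflectance
dL = rng.normal(0.0, 0.01, size=(4, 4))   # assumed illumination estimation error

I = perturbed_retinex(R, L, dR, dL)

# Expanding the product gives four terms: I = R*L + R*dL + dR*L + dR*dL.
expanded = R * L + R * dL + dR * L + dR * dL

# Dividing by the nominal illumination does not return R. The residual is
# dR + (R + dR) * dL / L, so the illumination error is amplified by 1 / L.
residual = naive_enhance(I, L) - R
predicted_residual = dR + (R + dR) * dL / L
```

In this toy setting the residual left by plain division grows as the illumination L shrinks, which is one way to read the motivation for modeling the perturbation terms explicitly rather than ignoring them.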

2. Related Work

2.1. Traditional and CNN-Based Low-Light Enhancement

2.2. Transformer-Based Architectures and Attention Mechanisms

2.3. Efficient State Space Models and Mamba Architecture

2.4. Vision-Language Models

3. Methodology

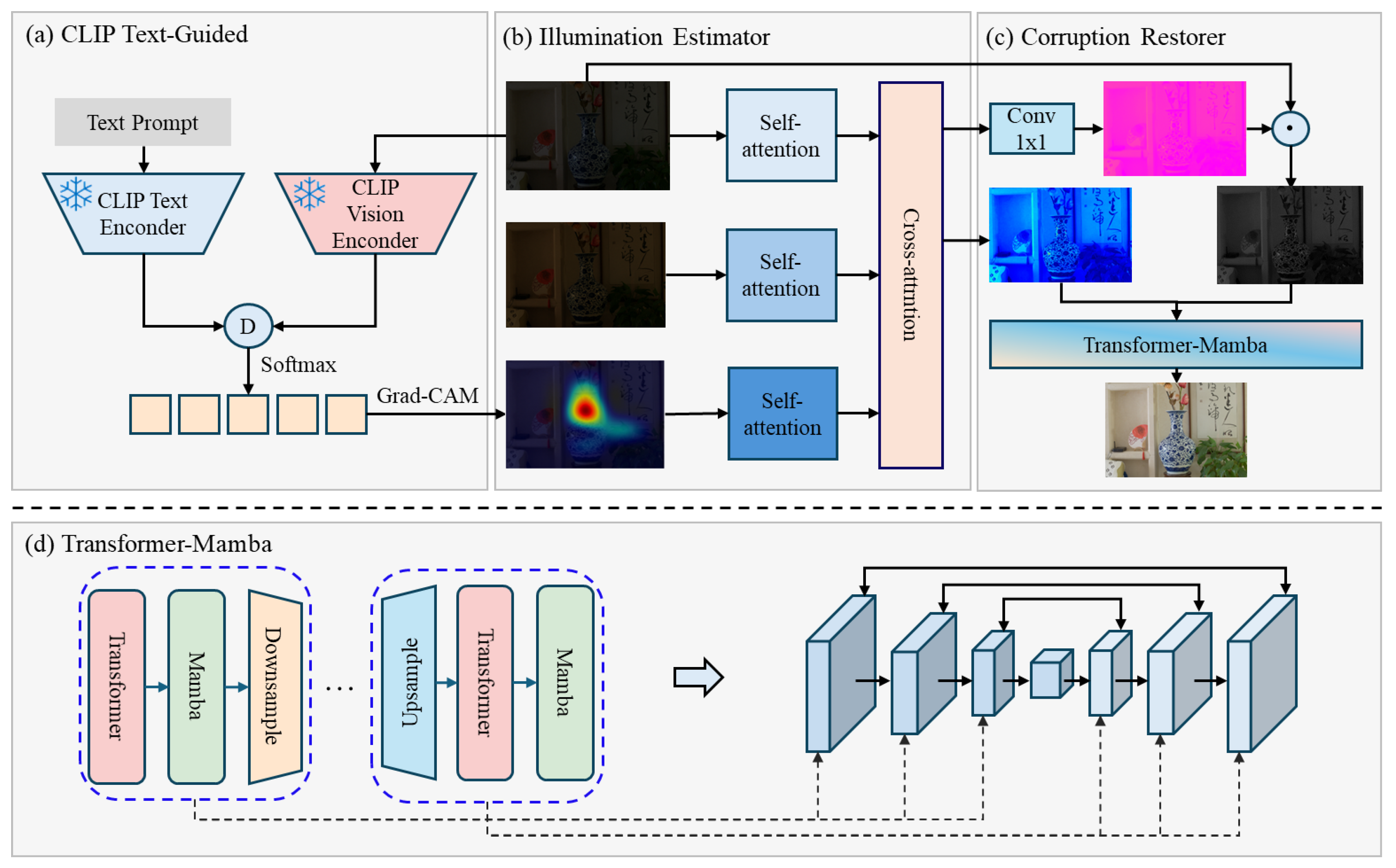

3.1. Overall Architecture of SeMaNet

3.2. Text-Guided Semantic Prior Extraction Module

3.3. Transformer-Mamba Attention Model Architecture

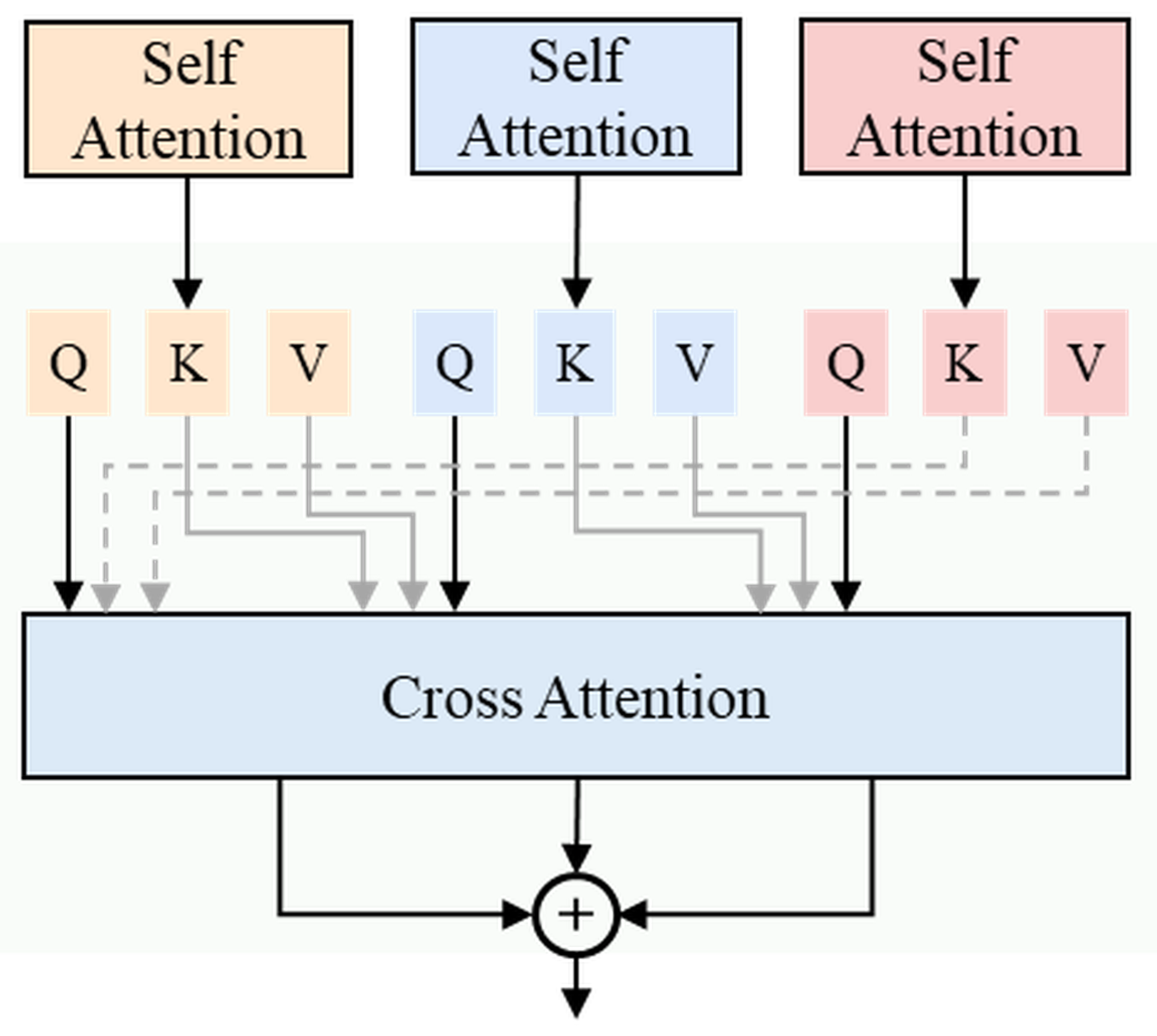

3.4. Retinex Decomposition and Semantic-Guided Fusion Module

3.5. Loss Function

4. Experiments

4.1. Experimental Setup

4.2. Experimental Results

4.2.1. Quantitative Comparison

| Methods | LOL-v1 PSNR (dB) | LOL-v1 SSIM | LOL-v2-real PSNR (dB) | LOL-v2-real SSIM | LOL-v2-syn PSNR (dB) | LOL-v2-syn SSIM |
|---|---|---|---|---|---|---|
| SID [38] | 14.35 | 0.436 | 13.24 | 0.442 | 15.04 | 0.610 |
| DeepUPE [39] | 14.38 | 0.446 | 13.27 | 0.452 | 15.08 | 0.623 |
| DeepLPF [40] | 15.28 | 0.473 | 14.10 | 0.480 | 16.02 | 0.587 |
| UFormer [41] | 16.36 | 0.771 | 18.82 | 0.771 | 19.66 | 0.871 |
| RetinexNet [13] | 16.77 | 0.560 | 15.47 | 0.567 | 17.13 | 0.798 |
| EnGAN [17] | 17.48 | 0.650 | 18.23 | 0.617 | 16.57 | 0.734 |
| RUAS [42] | 18.23 | 0.720 | 18.37 | 0.723 | 16.55 | 0.652 |
| FIDE [18] | 18.27 | 0.665 | 16.85 | 0.678 | 15.20 | 0.612 |
| KinD [14] | 20.86 | 0.790 | 14.74 | 0.641 | 13.29 | 0.578 |
| Restormer [21] | 22.43 | 0.823 | 19.94 | 0.827 | 21.41 | 0.830 |
| MIRNet [43] | 24.14 | 0.830 | 20.02 | 0.820 | 21.94 | 0.876 |
| SNR-Net [44] | 24.61 | 0.842 | 21.48 | 0.849 | 24.14 | 0.928 |
| RetinexFormer [22] | 25.16 | 0.845 | 22.80 | 0.840 | 25.67 | 0.930 |
| SeMaNet | 25.46 | 0.850 | 22.89 | 0.852 | 25.71 | 0.938 |

| Methods | SID PSNR (dB) | SID SSIM | SMID PSNR (dB) | SMID SSIM | SDSD-out PSNR (dB) | SDSD-out SSIM |
|---|---|---|---|---|---|---|
| SID [38] | 16.97 | 0.591 | 24.78 | 0.718 | 24.90 | 0.693 |
| DeepUPE [39] | 17.01 | 0.604 | 23.91 | 0.690 | 21.94 | 0.698 |
| DeepLPF [40] | 18.07 | 0.600 | 24.36 | 0.688 | 22.76 | 0.658 |
| UFormer [41] | 18.54 | 0.577 | 27.20 | 0.792 | 23.85 | 0.748 |
| RetinexNet [13] | 16.48 | 0.578 | 22.83 | 0.684 | 20.96 | 0.629 |
| EnGAN [17] | 17.23 | 0.543 | 22.62 | 0.674 | 20.10 | 0.616 |
| RUAS [42] | 18.44 | 0.581 | 25.88 | 0.744 | 23.84 | 0.743 |
| FIDE [18] | 18.34 | 0.578 | 24.42 | 0.692 | 22.20 | 0.629 |
| KinD [14] | 18.02 | 0.583 | 22.18 | 0.634 | 21.97 | 0.654 |
| Restormer [21] | 22.27 | 0.649 | 26.97 | 0.758 | 24.79 | 0.802 |
| MIRNet [43] | 20.84 | 0.605 | 25.66 | 0.762 | 27.13 | 0.837 |
| SNR-Net [44] | 22.87 | 0.625 | 28.49 | 0.805 | 28.66 | 0.866 |
| RetinexFormer [22] | 24.44 | 0.680 | 29.15 | 0.815 | 29.84 | 0.877 |
| SeMaNet | 24.55 | 0.687 | 29.35 | 0.826 | 29.88 | 0.883 |
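
For readers who want to relate the PSNR columns to pixel error, the following is a minimal sketch of the standard PSNR definition, 10 · log10(MAX² / MSE). It is not the authors' evaluation code: the value range, color space, and any per-image averaging used for the tables are not stated here, so `max_val=1.0` and the helper `mse_ratio_from_psnr_gap` are assumptions made for illustration.

```python
import numpy as np

def psnr(reference, estimate, max_val=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_val**2 / MSE)."""
    ref = np.asarray(reference, dtype=np.float64)
    est = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((ref - est) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_val ** 2 / mse))

def mse_ratio_from_psnr_gap(gap_db):
    """MSE ratio implied by a PSNR gap, if each PSNR came from a single MSE."""
    return 10.0 ** (-gap_db / 10.0)

# Toy check: a constant error of 0.1 on a [0, 1] image gives MSE = 0.01.
reference = np.zeros((8, 8))
estimate = np.full((8, 8), 0.1)
toy_psnr = psnr(reference, estimate)  # approximately 20 dB

# LOL-v1 values from the first table: SeMaNet 25.46 dB, RetinexFormer 25.16 dB.
gap = 25.46 - 25.16
ratio = mse_ratio_from_psnr_gap(gap)  # roughly 0.93
```

Read this way, a 0.30 dB gap corresponds to roughly 7% lower mean squared error, with the caveat that benchmark PSNR is usually averaged per image, so the conversion is only indicative.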

4.2.2. Qualitative Comparison

4.2.3. Ablation Study

| Components | LOL-v2-real PSNR (dB) | LOL-v2-real SSIM | LOL-v2-syn PSNR (dB) | LOL-v2-syn SSIM |
|---|---|---|---|---|
| Baseline | 21.42 | 0.802 | 24.03 | 0.901 |
| + H-TM | 22.15 | 0.826 | 24.88 | 0.920 |
| + H-TM + EAL | 22.56 | 0.841 | 25.32 | 0.931 |
| + H-TM + EAL + CLIP-SP (SeMaNet) | 22.89 | 0.852 | 25.71 | 0.938 |
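
The per-component contribution implied by the ablation table can be made explicit with a little arithmetic. The sketch below copies the rows verbatim, including the component labels as they appear in the table, and computes the change each added component brings over the previous row. The helper name `stepwise_gains` is hypothetical.

```python
# Ablation rows copied from the table:
# (label, PSNR LOL-v2-real, SSIM LOL-v2-real, PSNR LOL-v2-syn, SSIM LOL-v2-syn)
rows = [
    ("Baseline", 21.42, 0.802, 24.03, 0.901),
    ("+ H-TM", 22.15, 0.826, 24.88, 0.920),
    ("+ H-TM + EAL", 22.56, 0.841, 25.32, 0.931),
    ("+ H-TM + EAL + CLIP-SP (SeMaNet)", 22.89, 0.852, 25.71, 0.938),
]

def stepwise_gains(rows):
    """Return, for each added component, the metric change over the previous row."""
    gains = []
    for prev, curr in zip(rows, rows[1:]):
        deltas = tuple(round(c - p, 3) for p, c in zip(prev[1:], curr[1:]))
        gains.append((curr[0], deltas))
    return gains

gains = stepwise_gains(rows)

# Total improvement of the full model over the baseline.
total = tuple(round(last - first, 3) for first, last in zip(rows[0][1:], rows[-1][1:]))

for label, deltas in gains:
    print(label, deltas)
print("total", total)
```

On these numbers the first step (+ H-TM) accounts for the largest share of the gain (+0.73 dB and +0.85 dB PSNR), and each later component adds a smaller increment, for a total of +1.47 dB on LOL-v2-real and +1.68 dB on LOL-v2-syn. Since the table reports single values without variance, the smaller steps should be read with some caution.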

5. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| LLIE | Low-Light Image Enhancement |
| CLIP | Contrastive Language–Image Pre-training |
| Grad-CAM | Gradient-weighted Class Activation Mapping |
| SSM | State Space Model |
| FFN | Feed-Forward Neural Network |
| MSE | Mean Squared Error |
| PSNR | Peak Signal-to-Noise Ratio |
| SSIM | Structural Similarity Index Measure |

References

1. Cordts, M.; Omran, M.; Ramos, S.; Rehfeld, T.; Enzweiler, M.; Benenson, R.; Franke, U.; Roth, S.; Schiele, B. The Cityscapes Dataset for Semantic Urban Scene Understanding. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 3213–3223.
2. Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.
3. Guo, C.; Li, C.; Guo, J.; Loy, C.C.; Hou, J.; Kwong, S.; Cong, R. Zero-Reference Deep Curve Estimation for Low-Light Image Enhancement. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 1777–1786.
4. Zhao, L.; Wang, K.; Zhang, J.; Wang, A.; Bai, H. Learning Deep Texture-Structure Decomposition for Low-Light Image Restoration and Enhancement. Neurocomputing 2023, 524, 126–141.
5. Wang, W.; Wu, X.; Yuan, X.; Gao, Z. An Experiment-Based Review of Low-Light Image Enhancement Methods. IEEE Access 2020, 8, 87884–87917.
6. Pizer, S.M.; Amburn, E.P.; Austin, J.D.; Cromartie, R.; Geselowitz, A.; Greer, T.; ter Haar Romeny, B.; Zimmerman, J.B.; Zuiderveld, K. Adaptive Histogram Equalization and Its Variations. Comput. Vis. Graph. Image Process. 1987, 39, 355–368.
7. Rahman, S.; Rahman, M.M.; Abdullah-Al-Wadud, M.; Al-Quaderi, G.D.; Shoyaib, M. An Adaptive Gamma Correction for Image Enhancement. EURASIP J. Image Video Process. 2016, 2016, 35.
8. Land, E.H.; McCann, J.J. Lightness and Retinex Theory. J. Opt. Soc. Am. 1971, 61, 1–11.
9. Land, E.H. The Retinex Theory of Color Vision. Sci. Am. 1977, 237, 108–128.
10. Jobson, D.J.; Rahman, Z.-u.; Woodell, G.A. A Multiscale Retinex for Bridging the Gap between Color Images and the Human Observation of Scenes. IEEE Trans. Image Process. 1997, 6, 965–976.
11. Jobson, D.J.; Rahman, Z.; Woodell, G.A. Properties and Performance of a Center/Surround Retinex. IEEE Trans. Image Process. 1997, 6, 451–462.
12. Cai, J.; Gu, S.; Zhang, L. Learning a Deep Single Image Contrast Enhancer from Multi-Exposure Images. IEEE Trans. Image Process. 2018, 27, 2049–2062.
13. Wei, C.; Wang, W.; Yang, W.; Liu, J. Deep Retinex Decomposition for Low-Light Enhancement. arXiv 2018, arXiv:1808.04560.
14. Zhang, Y.; Zhang, J.; Guo, X. Kindling the Darkness: A Practical Low-Light Image Enhancer. In Proceedings of the 27th ACM International Conference on Multimedia (ACM MM), Nice, France, 21–25 October 2019; pp. 1635–1643.
15. Liu, J.; Xu, D.; Yang, W.; Fan, M.; Huang, H. Benchmarking Low-Light Image Enhancement and Beyond. Int. J. Comput. Vis. 2021, 129, 1153–1184.
16. Li, C.; Guo, C.; Loy, C.C. Learning to Enhance Low-Light Image via Zero-Reference Deep Curve Estimation. arXiv 2021, arXiv:2103.00860.
17. Jiang, Y.; Gong, X.; Liu, D.; Cheng, Y.; Fang, C.; Shen, X.; Yang, J.; Zhou, P.; Wang, Z.; Lin, L. EnlightenGAN: Deep Light Enhancement without Paired Supervision. IEEE Trans. Image Process. 2021, 30, 2340–2349.
18. He, Z.; Ran, W.; Liu, S.; Li, K.; Lu, J.; Xie, C.; Liu, Y.; Lu, H. Low-Light Image Enhancement with Multi-Scale Attention and Frequency-Domain Optimization. IEEE Trans. Circuits Syst. Video Technol. 2024, 34, 2861–2873.
19. Chen, L.; Chu, X.; Zhang, X.; Sun, J. Simple Baselines for Image Restoration. In Computer Vision – ECCV 2022; Tel Aviv, Israel, 23–27 October 2022; pp. 17–33.
20. Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16×16 Words: Transformers for Image Recognition at Scale. arXiv 2020, arXiv:2010.11929.
21. Zamir, S.W.; Arora, A.; Khan, S.; Hayat, M.; Khan, F.S.; Yang, M.-H. Restormer: Efficient Transformer for High-Resolution Image Restoration. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 5718–5729.
22. Cai, Y.; Bian, H.; Lin, J.; Wang, H.; Timofte, R.; Zhang, Y. Retinexformer: One-Stage Retinex-Based Transformer for Low-Light Image Enhancement. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023; pp. 12470–12479.
23. Wang, T.; Zhang, K.; Shen, T.; Luo, W.; Stenger, B.; Lu, T. Ultra-High-Definition Low-Light Image Enhancement: A Benchmark and Transformer-Based Method. Proc. AAAI Conf. Artif. Intell. 2023, 37, 2974–2982.
24. Gu, A.; Dao, T. Mamba: Linear-Time Sequence Modeling with Selective State Spaces. arXiv 2023, arXiv:2312.00752.
25. Zhu, L.; Liao, B.; Zhang, Q.; Wang, X.; Liu, W.; Wang, X. Vision Mamba: Efficient Visual Representation Learning with Bidirectional State Space Model. arXiv 2024, arXiv:2401.09417.
26. Archit, A.; Pape, C. ViM-UNet: Vision Mamba for Biomedical Segmentation. arXiv 2024, arXiv:2404.07705.
27. Li, K.; Li, X.; Wang, Y.; He, Y.; Wang, Y.; Wang, L.; Qiao, Y. VideoMamba: State Space Model for Efficient Video Understanding. In Computer Vision – ECCV 2024; Milan, Italy, 29 September–4 October 2024; pp. 237–255.
28. Guo, H.; Li, J.; Dai, T.; Ouyang, Z.; Ren, X.; Xia, S.-T. MambaIR: A Simple Baseline for Image Restoration with State-Space Model. In Computer Vision – ECCV 2024; Milan, Italy, 29 September–4 October 2024; pp. 222–241.
29. Bai, J.; Yin, Y.; He, Q.; Li, Y.; Zhang, X. Retinexmamba: Retinex-Based Mamba for Low-Light Image Enhancement. In Neural Information Processing; 31st International Conference, ICONIP 2024, Auckland, New Zealand, 2–6 December 2024, Proceedings, Part VIII; Springer: Singapore, 2025; pp. 427–442.
30. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models from Natural Language Supervision. arXiv 2021, arXiv:2103.00020.
31. Patashnik, O.; Wu, Z.; Shechtman, E.; Cohen-Or, D.; Lischinski, D. StyleCLIP: Text-Driven Manipulation of StyleGAN Imagery. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 11–17 October 2021; pp. 2065–2074.
32. Xu, D.; Zhu, Y.; Choy, C.B.; Fei-Fei, L. Scene Graph Generation by Iterative Message Passing. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 5410–5419.
33. Ramesh, A.; Dhariwal, P.; Nichol, A.; et al. Hierarchical Text-Conditional Image Generation with CLIP Latents. arXiv 2022, arXiv:2204.06125.
34. Zhang, K.; Zuo, W.; Chen, Y.; Meng, D.; Zhang, L. Beyond a Gaussian Denoiser: Residual Learning of Deep CNN for Image Denoising. IEEE Trans. Image Process. 2017, 26, 3142–3155.
35. Zhu, J.-Y.; Park, T.; Isola, P.; Efros, A.A. Unpaired Image-to-Image Translation with Cycle-Consistent Adversarial Networks. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2242–2251.
36. Anaya, J.; Barbu, A. RENOIR: A Dataset for Real Low-Light Image Noise Reduction. J. Vis. Commun. Image Represent. 2018, 51, 144–154.
37. Zheng, S.; Ma, Y.; Pan, J.; Lu, C.; Gupta, G. Low-Light Image and Video Enhancement: A Comprehensive Survey and Beyond. arXiv 2022, arXiv:2212.10772.
38. Chen, C.; Chen, Q.; Xu, J.; Koltun, V. Learning to See in the Dark. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 3291–3300.
39. Wang, R.; Zhang, Q.; Fu, C.-W.; Shen, X.; Zheng, W.-S.; Jia, J. Underexposed Photo Enhancement Using Deep Illumination Estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–20 June 2019; pp. 6849–6857.
40. Moran, S.; Marza, P.; McDonagh, S.; Parisot, S.; Slabaugh, G. DeepLPF: Deep Local Parametric Filters for Image Enhancement. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 12823–12832.
41. Wang, Z.; Cun, X.; Bao, J.; Zhou, W.; Liu, J.; Li, H. Uformer: A General U-Shaped Transformer for Image Restoration. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 17662–17672.
42. Liu, R.; Ma, L.; Zhang, J.; Fan, X.; Luo, Z. Retinex-Inspired Unrolling with Cooperative Prior Architecture Search for Low-Light Image Enhancement. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Virtual Event, 19–25 June 2021; pp. 10561–10570.
43. Zamir, S.W.; Arora, A.; Khan, S.; Hayat, M.; Khan, F.S.; Yang, M.-H.; Shao, L. Learning Enriched Features for Real Image Restoration and Enhancement. In Computer Vision – ECCV 2020; Glasgow, UK, 23–28 August 2020; pp. 492–511.
44. Xu, X.; Wang, R.; Fu, C.-W.; Jia, J. SNR-Aware Low-Light Image Enhancement. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 17693–17703.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).