Submitted: 20 April 2026

Posted: 22 April 2026


Abstract

Keywords:

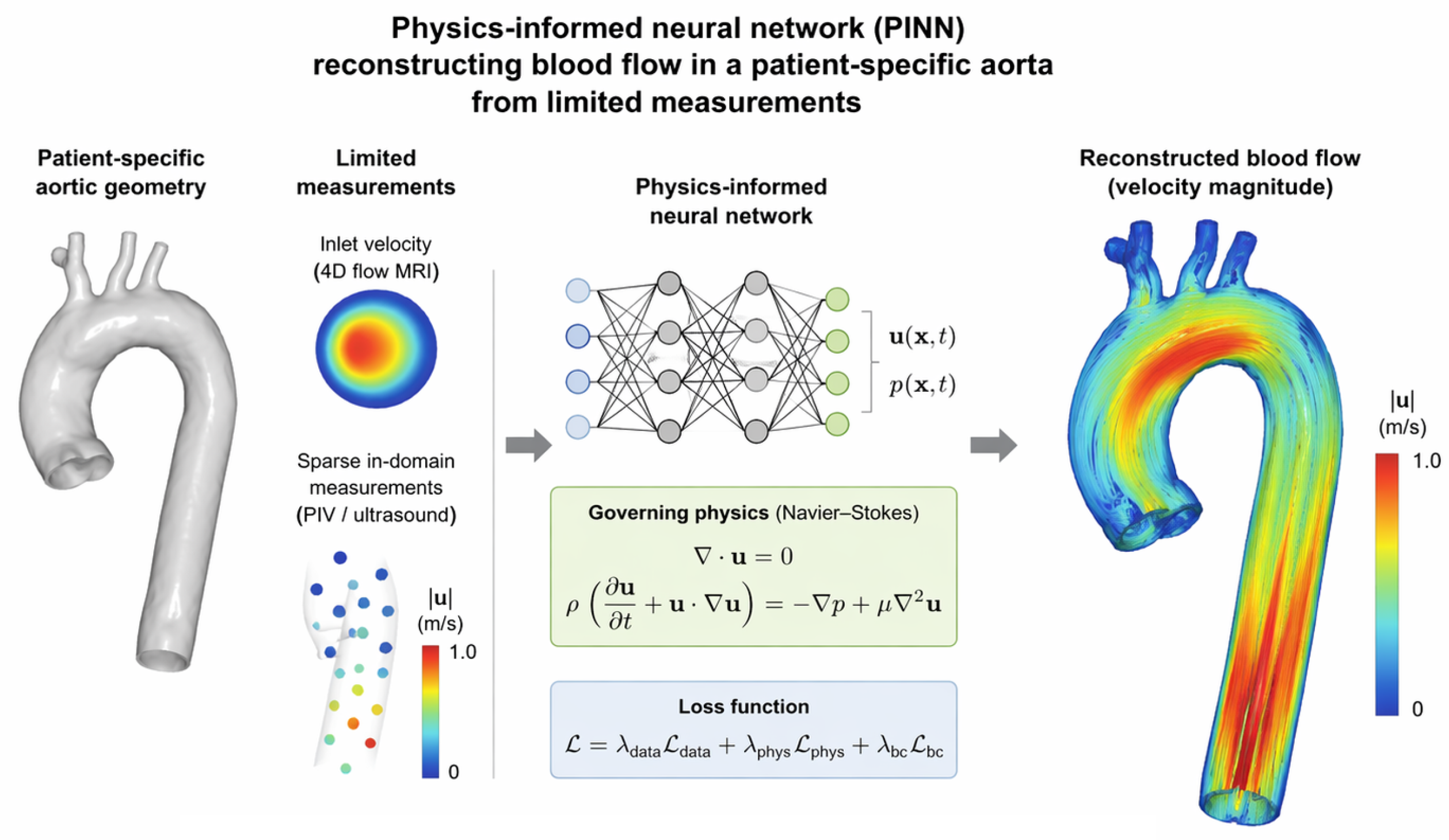

1. Introduction

Novelty and Contribution

- 1.

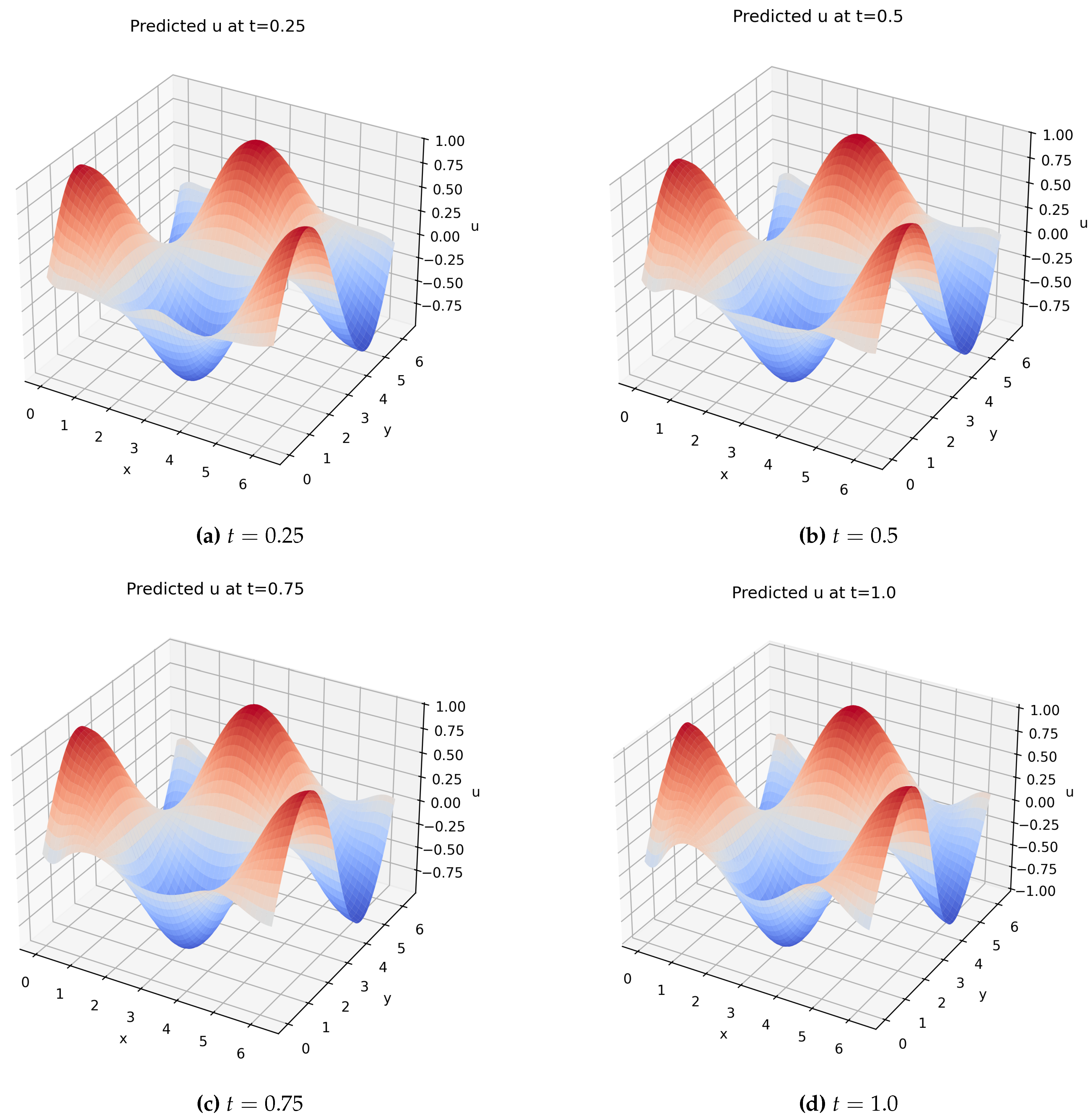

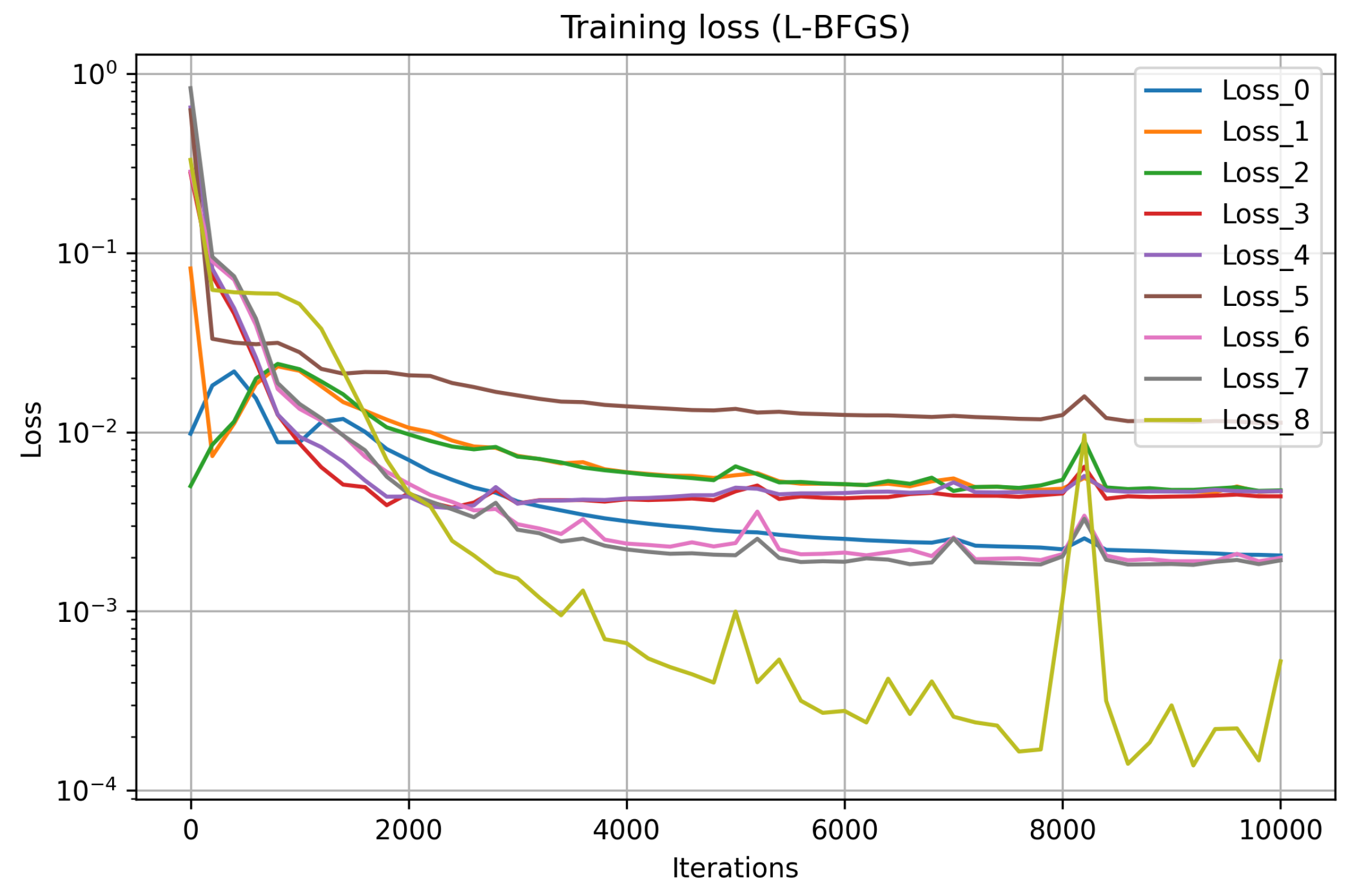

- A complete description of the network architecture (4 hidden layers, 128 neurons each, tanh activation), training setup (20000 collocation points, Adam for 10000 iterations), and post-processing (100×100 grid, four time slices).

- 2.

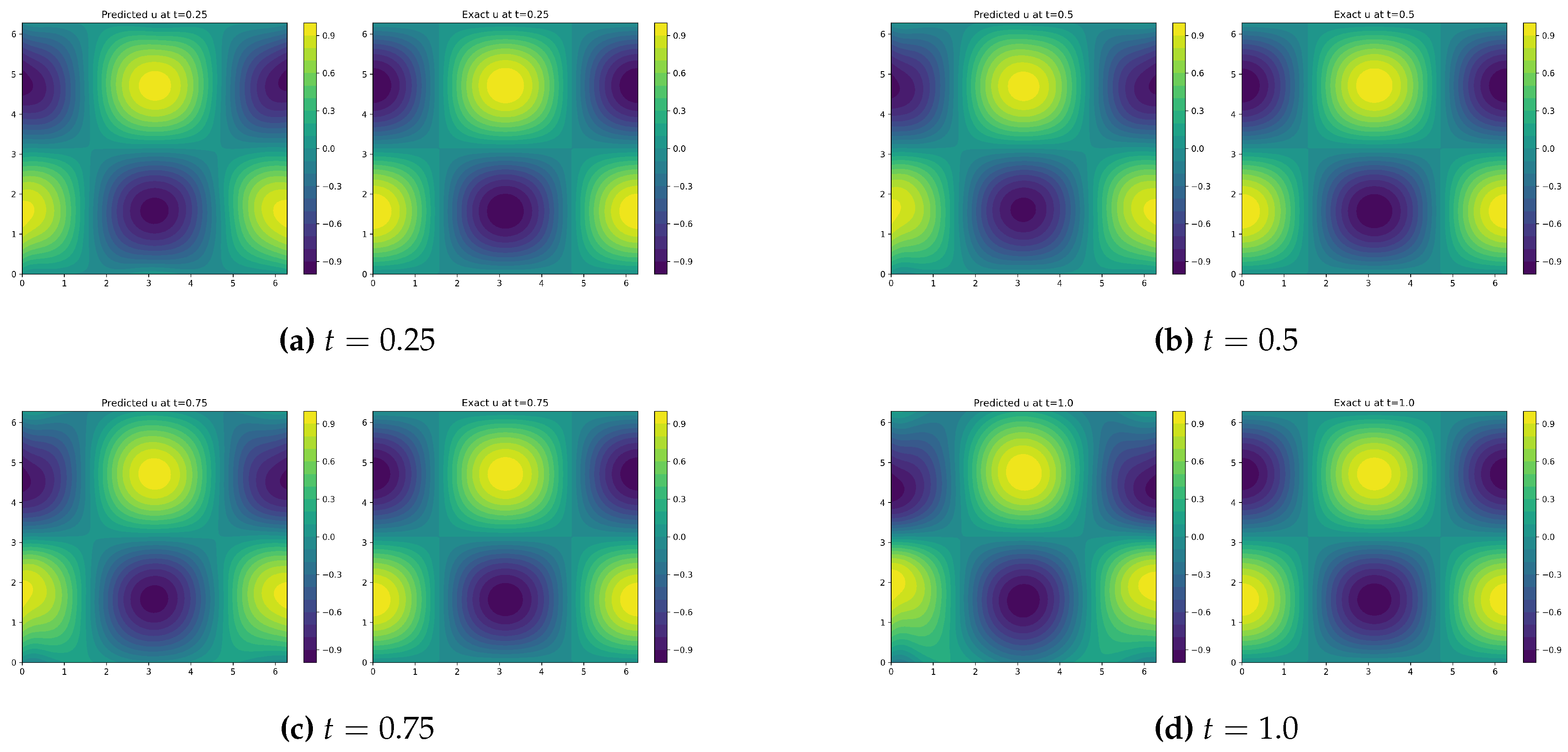

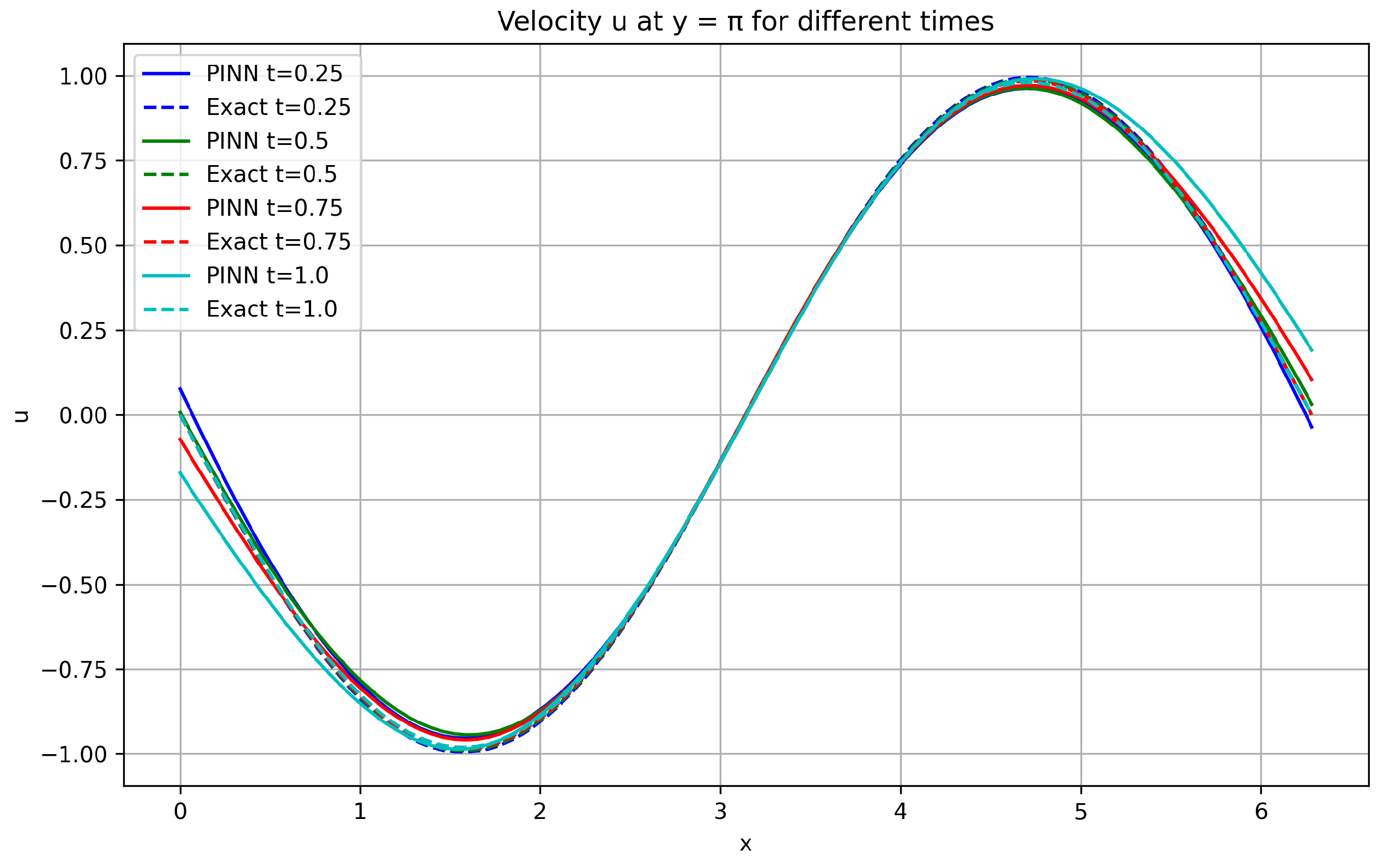

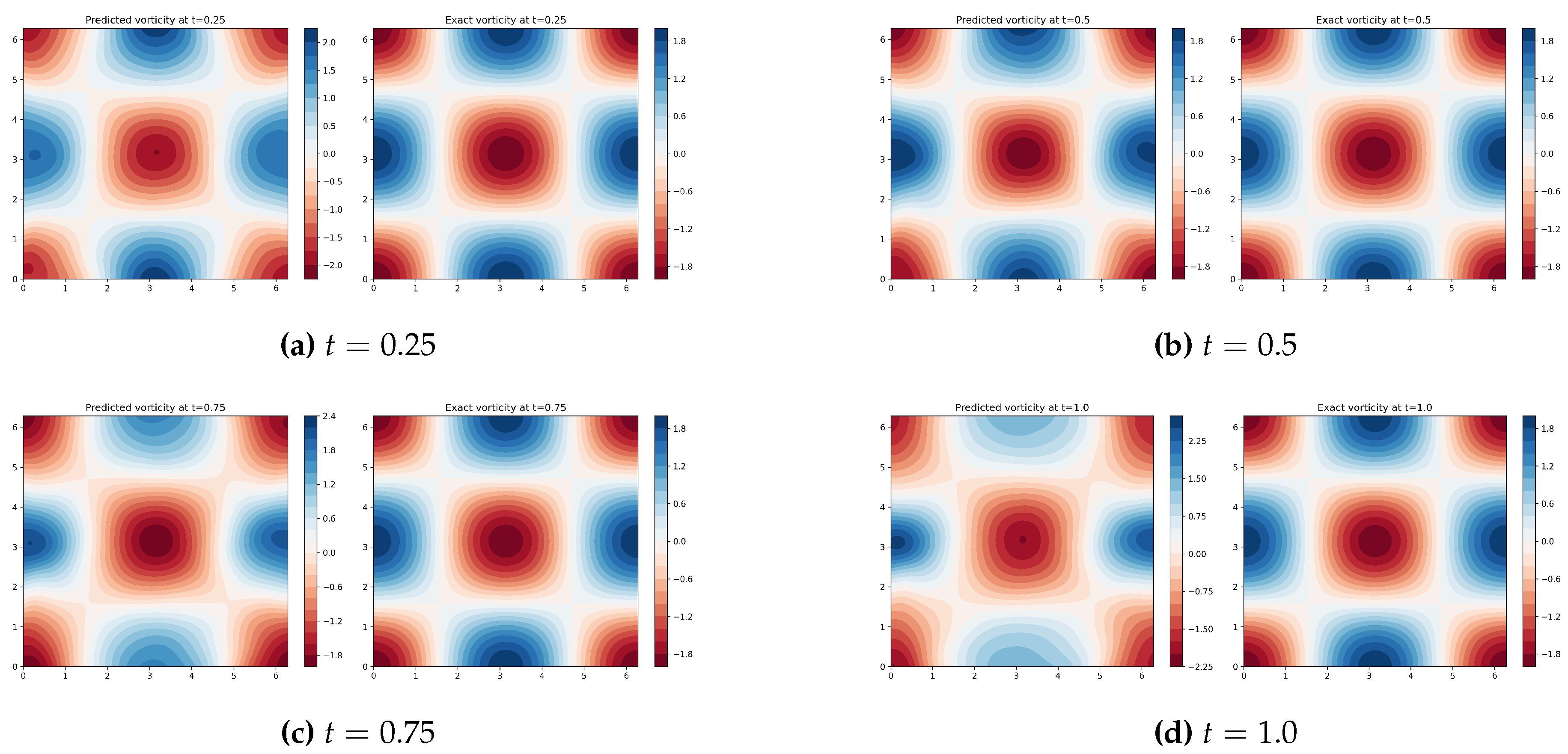

- Quantitative errors for u, v, and p at , showing that velocity errors increase from to while pressure errors remain high () but decrease over time.

- 3.

- Qualitative visualisations including contour plots, line cuts along , vorticity fields, and three-dimensional surface plots all generated automatically from the trained model.

- 4.

- A discussion of error accumulation over time and the particular difficulty of pressure prediction, which we attribute to the lack of a pressure-Poisson constraint in the standard PINN loss.

- 5.

- An openly shared code (available upon request) that can serve as a template for researchers wishing to apply PINNs to more complex unsteady flows.
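For context on the pressure-Poisson constraint mentioned in the contributions above, the following is the standard textbook derivation for two-dimensional incompressible flow. It is not a formulation taken from this paper. It assumes that p is already divided by the constant density, that ν denotes the kinematic viscosity, and that subscripts denote partial derivatives.

```latex
% Incompressible Navier-Stokes equations (p divided by constant density)
\begin{align}
  \nabla \cdot \mathbf{u} &= 0, \\
  \partial_t \mathbf{u} + (\mathbf{u} \cdot \nabla)\mathbf{u}
    &= -\nabla p + \nu \nabla^2 \mathbf{u}.
\end{align}

% Taking the divergence of the momentum equation and using
% \nabla \cdot \mathbf{u} = 0 removes the time-derivative and viscous terms:
\begin{align}
  \nabla^2 p
    &= -\nabla \cdot \big[ (\mathbf{u} \cdot \nabla)\mathbf{u} \big] \\
    &= -\left( u_x^2 + 2\,u_y v_x + v_y^2 \right) \\
    &= 2 \left( u_x v_y - u_y v_x \right),
\end{align}
% where the last step uses u_x = -v_y from the continuity equation.
```

A loss built only from the continuity and momentum residuals enforces this relation indirectly, since it is a consequence of those equations rather than a separate penalty term.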

2. Methodology

2.1. Physics-Informed Neural Network Approximation
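As a minimal sketch of the architecture stated in the contributions (4 hidden layers, 128 neurons each, tanh activation), the forward pass can be written in plain NumPy. The input ordering (x, y, t), the output ordering (u, v, p), the linear output layer, and the Glorot-uniform initialisation are assumptions made for illustration; the names `init_mlp` and `forward` are hypothetical and not taken from the authors' code.

```python
import numpy as np

# Assumed layout: inputs (x, y, t) -> 4 hidden layers of 128 tanh units -> outputs (u, v, p)
LAYERS = [3, 128, 128, 128, 128, 3]


def init_mlp(layer_sizes, seed=0):
    """Create (W, b) pairs with Glorot-uniform weights and zero biases (an assumed choice)."""
    rng = np.random.default_rng(seed)
    params = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        limit = np.sqrt(6.0 / (n_in + n_out))
        W = rng.uniform(-limit, limit, size=(n_in, n_out))
        b = np.zeros(n_out)
        params.append((W, b))
    return params


def forward(params, xyt):
    """Map a batch of (x, y, t) rows to (u, v, p) rows."""
    h = xyt
    for W, b in params[:-1]:
        h = np.tanh(h @ W + b)      # hidden layers use tanh
    W, b = params[-1]
    return h @ W + b                # linear output layer (assumed)


params = init_mlp(LAYERS)
n_params = sum(W.size + b.size for W, b in params)

# Evaluate on a small batch of arbitrary space-time points
batch = np.random.default_rng(1).uniform(0.0, 1.0, size=(5, 3))
out = forward(params, batch)
print(out.shape, n_params)
```

With these layer sizes the network has 50 435 trainable parameters. In a PINN the derivatives of the outputs with respect to the inputs are obtained by automatic differentiation, which this NumPy sketch does not provide.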

2.2. Loss Function

2.3. Training Strategy and Sampling of Collocation Points

- 20 000 interior collocation points in the space-time domain,

- 2 000 boundary points on the spatial boundaries,

- 2 000 initial points on the plane t = 0.
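The three point sets listed above could be drawn as in the following sketch. Uniform random sampling, the unit-square spatial domain, and the final time of 1.0 are assumptions for illustration, as is the function name `sample_points`; the paper's actual domain bounds and sampling scheme are not reproduced in this section.

```python
import numpy as np


def sample_points(n_interior=20_000, n_boundary=2_000, n_initial=2_000,
                  x_range=(0.0, 1.0), y_range=(0.0, 1.0), t_final=1.0, seed=0):
    """Draw (x, y, t) rows for the interior, spatial boundary, and initial plane.

    The domain bounds and the uniform distribution are assumed values.
    """
    rng = np.random.default_rng(seed)
    lo = np.array([x_range[0], y_range[0], 0.0])
    hi = np.array([x_range[1], y_range[1], t_final])

    # Interior: uniform in space and time
    interior = rng.uniform(lo, hi, size=(n_interior, 3))

    # Boundary: start from uniform points, then snap x or y to one edge
    boundary = rng.uniform(lo, hi, size=(n_boundary, 3))
    axis = rng.integers(0, 2, size=n_boundary)    # 0 -> x edge, 1 -> y edge
    side = rng.integers(0, 2, size=n_boundary)    # 0 -> lower, 1 -> upper
    rows = np.arange(n_boundary)
    boundary[rows, axis] = np.where(side == 0, lo[axis], hi[axis])

    # Initial condition: uniform in space, fixed at t = 0
    initial = rng.uniform(lo, hi, size=(n_initial, 3))
    initial[:, 2] = 0.0

    return interior, boundary, initial


interior, boundary, initial = sample_points()
print(interior.shape, boundary.shape, initial.shape)
```

Fixing the seed keeps the point sets reproducible between runs, which makes the reported errors easier to compare.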

2.4. Computational Environment and Implementation Details

2.5. Post-Processing and Error Evaluation

3. Results

3.1. Qualitative Comparison of Velocity Fields

3.2. Vorticity Field Analysis

3.3. Three-Dimensional Visualization of Velocity Decay

3.4. Quantitative Error Analysis and Convergence Behavior

4. Conclusions

Author Contributions

Nomenclature

| Symbol | Description | Unit |
|---|---|---|
| u, v | Velocity components in x and y directions | |
| p | Pressure (divided by constant density) | |
| x, y | Spatial coordinates | |
| t | Time | |
| | Kinematic viscosity | |
| | Reynolds number | |
| T | Final simulation time | |
| | Spatial domain | |
| | Velocity vector | |
| | Vorticity | |
| | PDE residuals (continuity, x-momentum, y-momentum) | |
| | Number of interior collocation points | |
| | Number of boundary points | |
| | Number of initial condition points | |
| | Loss component for PDE residuals | |
| | Loss component for boundary conditions | |
| | Loss component for initial conditions | |
| | Total loss function | |
| | Neural network predictions for u, v, p | |
| | Input vector to the neural network | |
| | Relative error for a given variable | |


| Time t | Relative error in u | Relative error in v | Relative error in p |
|---|---|---|---|
| 0.25 | 5.30e-2 | 5.79e-2 | 2.84e0 |
| 0.50 | 6.14e-2 | 6.74e-2 | 2.59e0 |
| 0.75 | 9.90e-2 | 1.05e-1 | 2.27e0 |
| 1.00 | 1.67e-1 | 1.79e-1 | 2.06e0 |
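To help read the values in the table above, the sketch below computes a relative error of the kind such tables usually report. It assumes the metric is the relative L2 norm over the evaluation grid; the exact definition used in the paper is not reproduced in this section, and the function name `relative_l2_error` is hypothetical.

```python
import numpy as np


def relative_l2_error(pred, ref):
    """Return ||pred - ref||_2 / ||ref||_2 over all grid points (assumed metric)."""
    pred = np.asarray(pred, dtype=float).ravel()
    ref = np.asarray(ref, dtype=float).ravel()
    return np.linalg.norm(pred - ref) / np.linalg.norm(ref)


ref = np.array([3.0, 4.0])                            # reference values, norm 5
close_pred = np.array([3.0, 1.0])                     # error vector (0, -3), norm 3

err_close = relative_l2_error(close_pred, ref)        # 3 / 5
err_zero = relative_l2_error(np.zeros_like(ref), ref) # predicting zero everywhere
err_flipped = relative_l2_error(-ref, ref)            # correct magnitude, wrong sign

print(err_close, err_zero, err_flipped)
```

Under this definition a prediction of zero everywhere scores exactly 1, so values above 1, such as those in the pressure column, mean that the error norm exceeds the norm of the reference field itself.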

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).