Submitted: 20 April 2026

Posted: 22 April 2026


Abstract

Keywords:

1. Introduction

- We propose CiteGuard, a multi-agent framework that unifies chain-of-evidence reasoning, graph-enhanced detection, and gated behavior tree policies for reliable and efficient miscitation detection in scientific literature.

- We introduce a knowledge distillation approach that transfers LLM reasoning capabilities into graph neural networks, significantly reducing inference costs while preserving detection accuracy.

- We demonstrate that externalizing agent policies as gated behavior trees ensures verifiable, safe, and efficient execution, reducing LLM invocations by 30% while achieving state-of-the-art F1 on three benchmark datasets (ACL-ARC, PubMed-Cite, and arXiv-Cite).
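The last two contributions describe a distilled GNN as the cheap default path and a gated behavior tree that decides when an LLM call is actually needed. The minimal sketch below shows that interplay under stated assumptions. It is not CiteGuard's actual policy: the confidence-band gate with threshold `tau`, the blackboard keys (`gnn_prob`, `verdict`, `llm_calls`), and the helper `build_gated_tree` are all hypothetical. The tree uses standard behavior-tree semantics. A Selector first tries a Sequence ("GNN is confident" followed by "accept the GNN verdict"), and it falls back to the LLM leaf only when that branch fails.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Protocol


class Status(Enum):
    SUCCESS = "success"
    FAILURE = "failure"


Blackboard = dict[str, Any]  # shared state passed to every node


class Node(Protocol):
    def tick(self, bb: Blackboard) -> Status: ...


@dataclass
class Leaf:
    """Condition or action node: SUCCESS iff fn returns True."""
    fn: Callable[[Blackboard], bool]

    def tick(self, bb: Blackboard) -> Status:
        return Status.SUCCESS if self.fn(bb) else Status.FAILURE


@dataclass
class Sequence:
    """Ticks children in order and fails on the first child that fails."""
    children: list[Node]

    def tick(self, bb: Blackboard) -> Status:
        for child in self.children:
            if child.tick(bb) is Status.FAILURE:
                return Status.FAILURE
        return Status.SUCCESS


@dataclass
class Selector:
    """Ticks children in order and succeeds on the first child that succeeds."""
    children: list[Node]

    def tick(self, bb: Blackboard) -> Status:
        for child in self.children:
            if child.tick(bb) is Status.SUCCESS:
                return Status.SUCCESS
        return Status.FAILURE


def build_gated_tree(llm_score: Callable[[str], float], tau: float) -> Node:
    """Hypothetical gate: trust the distilled GNN when its miscitation
    probability is within tau of 0 or 1, otherwise escalate to the LLM."""

    def gnn_confident(bb: Blackboard) -> bool:
        p = bb["gnn_prob"]
        return p <= tau or p >= 1.0 - tau

    def accept_gnn(bb: Blackboard) -> bool:
        bb["verdict"] = bb["gnn_prob"] >= 0.5
        return True

    def ask_llm(bb: Blackboard) -> bool:
        bb["llm_calls"] += 1  # the expensive call we want to avoid
        bb["verdict"] = llm_score(bb["citation"]) >= 0.5
        return True

    return Selector([Sequence([Leaf(gnn_confident), Leaf(accept_gnn)]), Leaf(ask_llm)])


# Usage with made-up GNN probabilities and a stub LLM scorer (both hypothetical).
tree = build_gated_tree(llm_score=lambda text: 0.9, tau=0.2)
llm_calls = 0
verdicts = []
for i, p in enumerate([0.05, 0.95, 0.50, 0.10, 0.60, 0.99]):
    bb: Blackboard = {"citation": f"citation-{i}", "gnn_prob": p, "llm_calls": 0}
    tree.tick(bb)
    llm_calls += bb["llm_calls"]
    verdicts.append(bb["verdict"])
print(llm_calls, verdicts)  # 2 [False, True, True, False, True, True]
```

Widening `tau` sends more citations to the LLM, and narrowing it relies more on the GNN. This is the trade-off that a gating-threshold study would sweep.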

2. Related Work

2.1. Citation Verification and Miscitation Detection

2.2. Safe and Verifiable LLM Agent Policies

3. Method

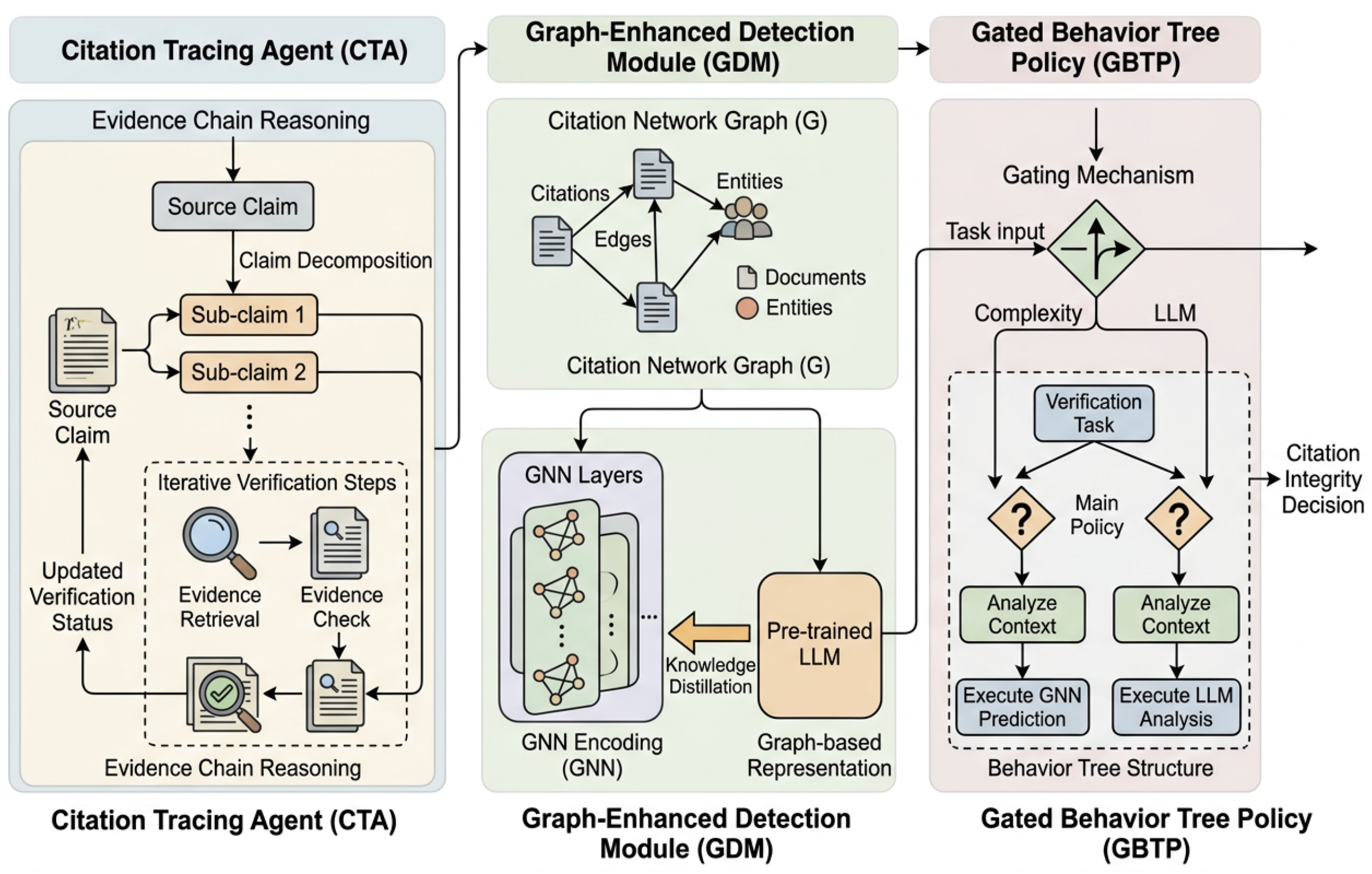

3.1. Citation Tracing Agent

3.1.1. Claim Decomposition

3.1.2. Evidence Chain Construction

3.2. Graph-Enhanced Detection Module

3.2.1. Citation Graph Construction

3.2.2. Graph Neural Network Encoder

3.2.3. Knowledge Distillation from LLM to GNN

3.3. Gated Behavior Tree Policy

3.3.1. Behavior Tree Construction

3.3.2. Gated Execution Mechanism

3.3.3. Verification Execution

3.4. Training Objective

4. Experiments

4.1. Experimental Setup

4.2. Main Results

4.3. Effectiveness of CiteGuard Components

4.4. Human Evaluation

| Method | Correct | Partially Correct | Avg Score |
|---|---|---|---|
| GPT-4 Zero-shot | 62.5% | 18.0% | 1.43 |
| BibAgent | 71.0% | 14.5% | 1.57 |
| LAGMiD | 76.5% | 12.0% | 1.65 |
| CiteGuard (Ours) | 82.0% | 10.5% | 1.75 |
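The Avg Score column is consistent with a 0–2 rubric in which Correct earns 2 points, Partially Correct earns 1, and anything else earns 0. Under that rubric every row reproduces the reported average within rounding, for example 2 × 0.820 + 0.105 = 1.745 ≈ 1.75 for CiteGuard. The rubric is inferred from the numbers and is not stated in this excerpt. The check below makes the inference explicit and also derives the implied share of fully incorrect judgments.

```python
# Human-evaluation rows as reported: (correct %, partially correct %, avg score).
rows = {
    "GPT-4 Zero-shot": (62.5, 18.0, 1.43),
    "BibAgent": (71.0, 14.5, 1.57),
    "LAGMiD": (76.5, 12.0, 1.65),
    "CiteGuard (Ours)": (82.0, 10.5, 1.75),
}


def implied_avg_score(correct_pct: float, partial_pct: float) -> float:
    """Mean score under an ASSUMED rubric: Correct = 2, Partially Correct = 1, else 0."""
    return (2 * correct_pct + 1 * partial_pct) / 100


checks = {}
for name, (correct, partial, reported) in rows.items():
    implied = implied_avg_score(correct, partial)
    incorrect = 100 - correct - partial  # share judged fully incorrect
    checks[name] = (implied, reported, incorrect)
    print(f"{name:<18} implied={implied:.3f} reported={reported:.2f} incorrect={incorrect:.1f}%")
```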

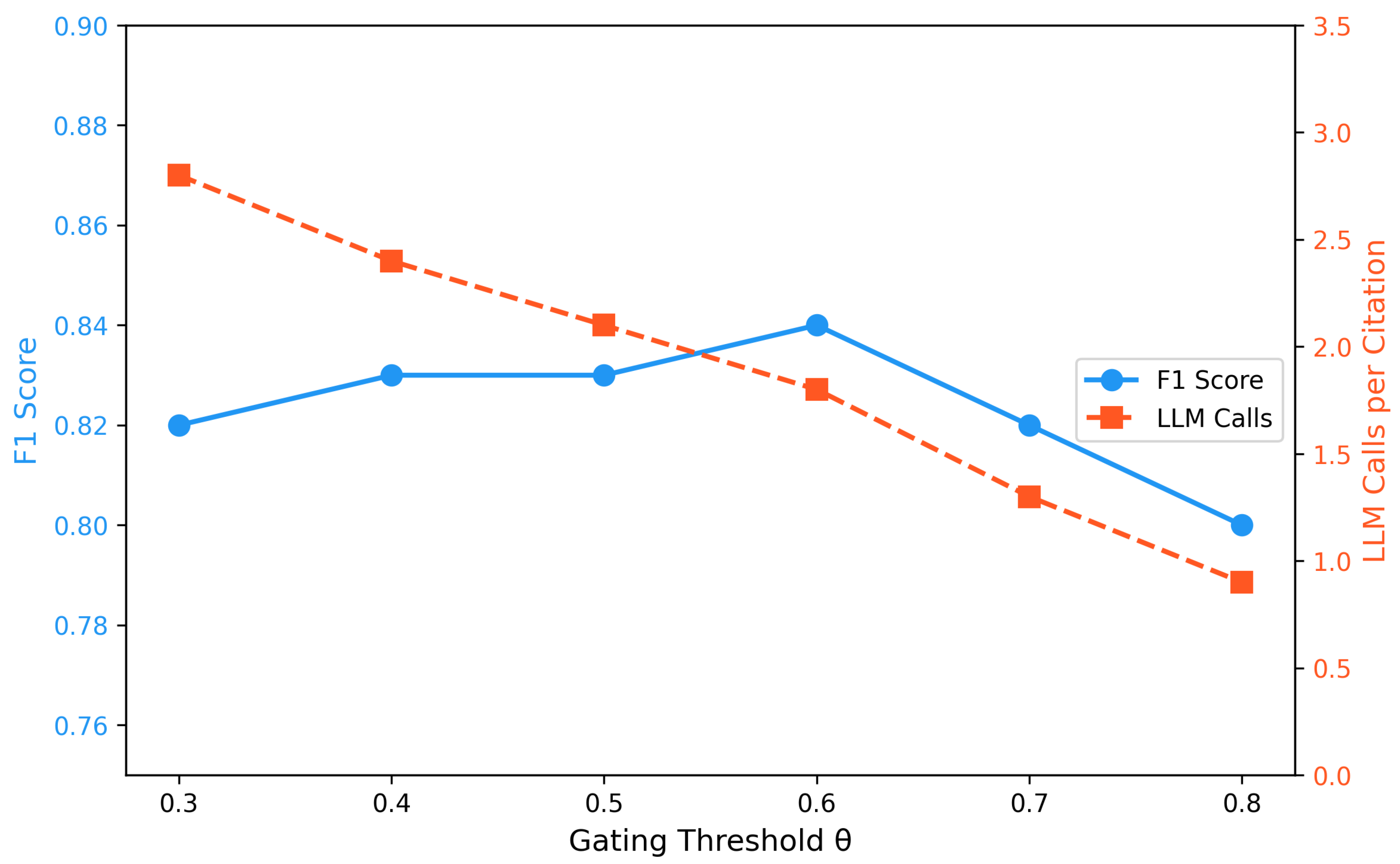

4.5. Impact of Gating Threshold

4.6. Cross-Domain Generalization

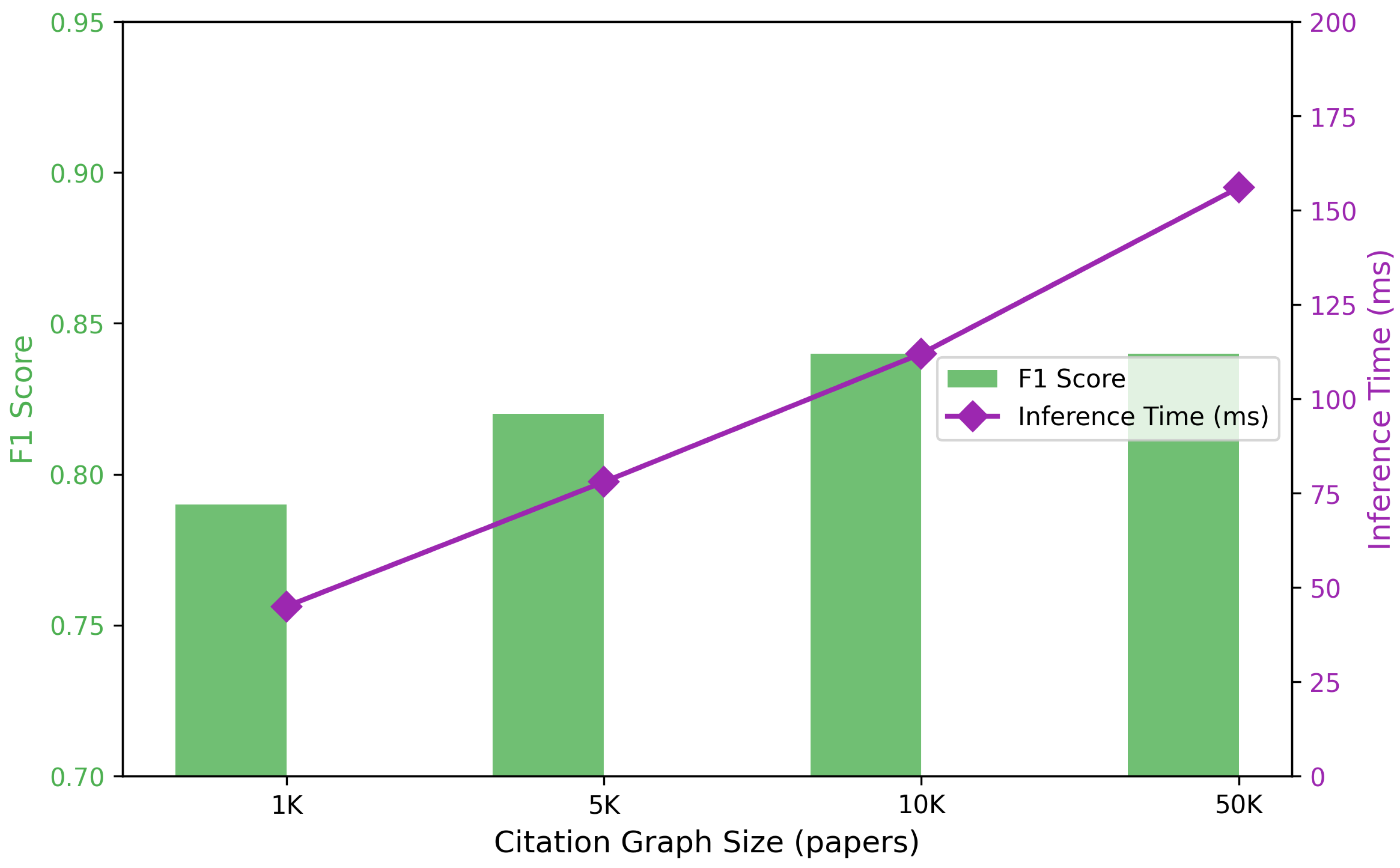

4.7. Scalability Analysis

5. Conclusions

References

- Ding, Y.; et al. Assessing citation integrity in biomedical literature. Bioinformatics 2024, 40, btae420.

- Liu, X.; et al. Detecting Reference Errors in Scientific Literature with Large Language Models. Journal of Biomedical Informatics 2025.

- Liu, J.; et al. Deep Graph Learning for Anomalous Citation Detection. IEEE Transactions on Neural Networks and Learning Systems 2022.

- Liu, X.; Bai, C. Anomalous citations detection in academic networks. In Proceedings of the AAAI Conference on Artificial Intelligence, 2020.

- Zhang, Z.; Lin, F.; Liu, H.; Morales, J.; Zhang, H.; Yamada, K.D.; Kolachalama, V.B.; Saligrama, V. GPS: A Probabilistic Distributional Similarity with Gumbel Priors for Set-to-Set Matching. In Proceedings of the Thirteenth International Conference on Learning Representations (ICLR 2025), Singapore, 24–28 April 2025; OpenReview.net, 2025.

- Zhang, Z.; Shao, Y.; Zhang, Y.; Lin, F.; Zhang, H.K.; Rundensteiner, E.A. Deep Loss Convexification for Learning Iterative Models. IEEE Trans. Pattern Anal. Mach. Intell. 2025, 47, 1501–1513.

- Huang, M.; et al. An automated framework for assessing how well LLMs cite. Nature Communications 2025.

- Yang, N.; Lin, H.; Liu, Y.; Tian, B.; Liu, G.; Zhang, H. Token-Importance Guided Direct Preference Optimization. arXiv 2025, arXiv:2505.19653.

- Wang, L.; et al. A survey on large language model based autonomous agents. Frontiers of Computer Science 2024.

- Chen, Z.; Kang, M.; Li, B. ShieldAgent: Shielding Agents via Verifiable Safety Policy Reasoning. In Proceedings of the International Conference on Machine Learning (ICML), 2025.

- Yang, N.; Fan, M.; Wang, W.; Zhang, H. Decision-Making Large Language Model for Wireless Communication: A Comprehensive Survey on Key Techniques. IEEE Communications Surveys & Tutorials 2025.

- Yang, N.; Zhong, H.; Zhang, H.; Berry, R. Vision-LLMs for Spatiotemporal Traffic Forecasting. arXiv 2025, arXiv:2510.11282.

- Dai, Z.; Wang, L.; Lin, F.; Wang, Y.; Li, Z.; Yamada, K.D.; Zhang, Z.; Lu, W. A Language Anchor-Guided Method for Robust Noisy Domain Generalization. arXiv 2025, arXiv:2503.17211.

- Li, P.; Lin, F.; Xing, S.; Sun, J.; Zhang, D.; Yang, S.; Ni, C.; Tu, Z. Let the Abyss Stare Back: Adaptive Falsification for Autonomous Scientific Discovery. arXiv 2026, arXiv:2603.29045.

- Xu, X.; Wang, Y.; Xu, D.; Peng, Y.; Zhang, C.; Jia, J.; Chen, B. VSEGAN: Visual speech enhancement generative adversarial network. In Proceedings of the 2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); IEEE, 2022; pp. 7308–7311.

- Xu, X.; Tu, W.; Yang, Y. CASE-Net: Integrating local and non-local attention operations for speech enhancement. Speech Communication 2023, 148, 31–39.

- Xu, X.; Tu, W.; Yang, Y. Selector-Enhancer: Learning dynamic selection of local and non-local attention operation for speech enhancement. In Proceedings of the AAAI Conference on Artificial Intelligence, 2023; Volume 37, pp. 13853–13860.

- Li, P.; Lin, F.; Xing, S.; Zheng, X.; Hong, X.; Yang, S.; Sun, J.; Tu, Z.; Ni, C. BibAgent: An agentic framework for traceable miscitation detection in scientific literature. arXiv 2026, arXiv:2601.16993.

- Wu, H.; Xiang, H.; Gao, J.; Zhao, X.; Wu, D.; Li, J. Detecting Miscitation on the Scholarly Web through LLM-Augmented Text-Rich Graph Learning. arXiv 2026, arXiv:2603.12290.

- Li, Y.; et al. SemanticCite: Citation Verification with AI-Powered Full-Text Analysis and Evidence-Based Reasoning. arXiv 2025, arXiv:2511.16198.

- Xiao, B.; Bennie, M.; Bardhan, J.; Wang, D.Z. Towards Human Cognition: Visual Context Guides Syntactic Priming in Fusion-Encoded Models. arXiv 2025, arXiv:2502.17669.

- Zhang, Y.; et al. GuardAgent: Safeguard LLM Agents via Knowledge-Enabled Constraint Checks. In Proceedings of the International Conference on Machine Learning (ICML), 2025.

- Liu, Y.; et al. VeriGuard: Enhancing LLM Agent Safety via Verified Code Generation. arXiv 2025, arXiv:2510.05156.

- Li, P.; Sun, J.; Lin, F.; Xing, S.; Fu, T.; Feng, S.; Ni, C.; Tu, Z. Traversal-as-policy: Log-distilled gated behavior trees as externalized, verifiable policies for safe, robust, and efficient agents. arXiv 2026, arXiv:2603.05517.

- Xiao, B.; Yin, Z.; Shan, Z. Simulating public administration crisis: A novel generative agent-based simulation system to lower technology barriers in social science research. arXiv 2023, arXiv:2311.06957.

- Xiao, B.; Shen, Q.; Wang, D.Z. From Text to Multi-Modal: Advancing Low-Resource-Language Translation through Synthetic Data Generation and Cross-Modal Alignments. In Proceedings of the Eighth Workshop on Technologies for Machine Translation of Low-Resource Languages (LoResMT 2025), 2025; pp. 24–35.

- Wu, Y.; Yu, Y.; Yang, Z.; Zeng, Z.; Chen, G.; Xu, J. Brain-SAM: Modality-Agnostic Model for Brain Lesion Segmentation. In Proceedings of the 2025 IEEE International Conference on Bioinformatics and Biomedicine (BIBM); IEEE, 2025; pp. 3000–3005.

| Method | ACL-ARC | PubMed-Cite | arXiv-Cite | Avg F1 | Cost |
|---|---|---|---|---|---|
| Semantic Similarity | 0.58 | 0.61 | 0.55 | 0.58 | 0 |
| Graph Anomaly Detection | 0.64 | 0.67 | 0.62 | 0.64 | 0 |
| GPT-4 Zero-shot | 0.73 | 0.75 | 0.71 | 0.73 | 1.0 |
| BibAgent | 0.76 | 0.78 | 0.74 | 0.76 | 3.2 |
| LAGMiD | 0.81 | 0.82 | 0.79 | 0.81 | 2.5 |
| CiteGuard (Ours) | 0.84 | 0.85 | 0.82 | 0.84 | 1.8 |
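The Avg F1 column matches the unweighted mean of the three per-dataset F1 scores, rounded to two decimals. For CiteGuard, (0.84 + 0.85 + 0.82) / 3 ≈ 0.837. The short check below assumes equal weighting of the three benchmarks rather than weighting by test-set size. It also computes CiteGuard's per-dataset margin over the strongest baseline in the table, LAGMiD.

```python
from statistics import mean

# Per-dataset F1 (ACL-ARC, PubMed-Cite, arXiv-Cite) and the reported Avg F1.
results = {
    "Semantic Similarity": ((0.58, 0.61, 0.55), 0.58),
    "Graph Anomaly Detection": ((0.64, 0.67, 0.62), 0.64),
    "GPT-4 Zero-shot": ((0.73, 0.75, 0.71), 0.73),
    "BibAgent": ((0.76, 0.78, 0.74), 0.76),
    "LAGMiD": ((0.81, 0.82, 0.79), 0.81),
    "CiteGuard (Ours)": ((0.84, 0.85, 0.82), 0.84),
}

# Unweighted mean over the three benchmarks (ASSUMED: no weighting by test-set size).
averages = {method: mean(f1s) for method, (f1s, _) in results.items()}

for method, (f1s, reported) in results.items():
    print(f"{method:<24} mean={averages[method]:.4f} reported={reported:.2f}")

# Per-dataset F1 margin of CiteGuard over LAGMiD, the strongest baseline here.
margins = [
    round(ours - base, 2)
    for ours, base in zip(results["CiteGuard (Ours)"][0], results["LAGMiD"][0])
]
print(margins)  # [0.03, 0.03, 0.03]
```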

| Model Variant | Precision | Recall | F1 |
|---|---|---|---|
| Full CiteGuard | 0.85 | 0.83 | 0.84 |
| w/o Chain-of-Evidence | 0.80 | 0.79 | 0.79 |
| w/o Graph-Enhanced Module | 0.81 | 0.80 | 0.80 |
| w/o Behavior Tree Policy | 0.82 | 0.81 | 0.81 |
| w/o Knowledge Distillation | 0.83 | 0.81 | 0.82 |
| w/o Gating Mechanism | 0.84 | 0.82 | 0.83 |
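Each F1 value in the ablation table is consistent with the harmonic mean of its precision and recall. The drop relative to the full model ranks the components by contribution. Removing chain-of-evidence reasoning costs the most (0.05 F1), and removing the gating mechanism costs the least (0.01). The sketch below recomputes both quantities from the reported precision and recall.

```python
# Ablation rows as reported: (precision, recall, F1).
ablation = {
    "Full CiteGuard": (0.85, 0.83, 0.84),
    "w/o Chain-of-Evidence": (0.80, 0.79, 0.79),
    "w/o Graph-Enhanced Module": (0.81, 0.80, 0.80),
    "w/o Behavior Tree Policy": (0.82, 0.81, 0.81),
    "w/o Knowledge Distillation": (0.83, 0.81, 0.82),
    "w/o Gating Mechanism": (0.84, 0.82, 0.83),
}


def f1_score(precision: float, recall: float) -> float:
    """F1 is the harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


full_f1 = ablation["Full CiteGuard"][2]
recomputed = {name: f1_score(p, r) for name, (p, r, _) in ablation.items()}
# F1 lost when each component is removed, relative to the full model.
drops = {name: round(full_f1 - f1, 2) for name, (_, _, f1) in ablation.items()}

for name in ablation:
    print(f"{name:<28} F1={recomputed[name]:.4f} "
          f"reported={ablation[name][2]:.2f} drop={drops[name]:.2f}")
```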

| Train → Test | ACL-ARC | PubMed-Cite | arXiv-Cite |
|---|---|---|---|
| ACL-ARC | 0.84 | 0.71 | 0.68 |
| PubMed-Cite | 0.69 | 0.85 | 0.70 |
| arXiv-Cite | 0.67 | 0.72 | 0.82 |
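Reading the matrix row by row, where each row is a training set, gives a simple generalization gap: the in-domain F1 on the diagonal minus the mean F1 on the two unseen domains. This gap is an illustrative summary and is not a metric defined in the paper. With the reported numbers, every training set averages 0.695 out of domain. The gap therefore ranges from 0.125 (trained on arXiv-Cite) to 0.155 (trained on PubMed-Cite).

```python
datasets = ["ACL-ARC", "PubMed-Cite", "arXiv-Cite"]
# f1[i][j]: F1 when training on datasets[i] and testing on datasets[j].
f1 = [
    [0.84, 0.71, 0.68],
    [0.69, 0.85, 0.70],
    [0.67, 0.72, 0.82],
]

gaps = {}
for i, train in enumerate(datasets):
    in_domain = f1[i][i]
    out_domain = [f1[i][j] for j in range(len(datasets)) if j != i]
    out_avg = sum(out_domain) / len(out_domain)
    # Illustrative gap: in-domain F1 minus mean F1 on the two unseen domains.
    gaps[train] = in_domain - out_avg
    print(f"train={train:<12} in={in_domain:.2f} out_avg={out_avg:.3f} gap={gaps[train]:.3f}")
```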

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).