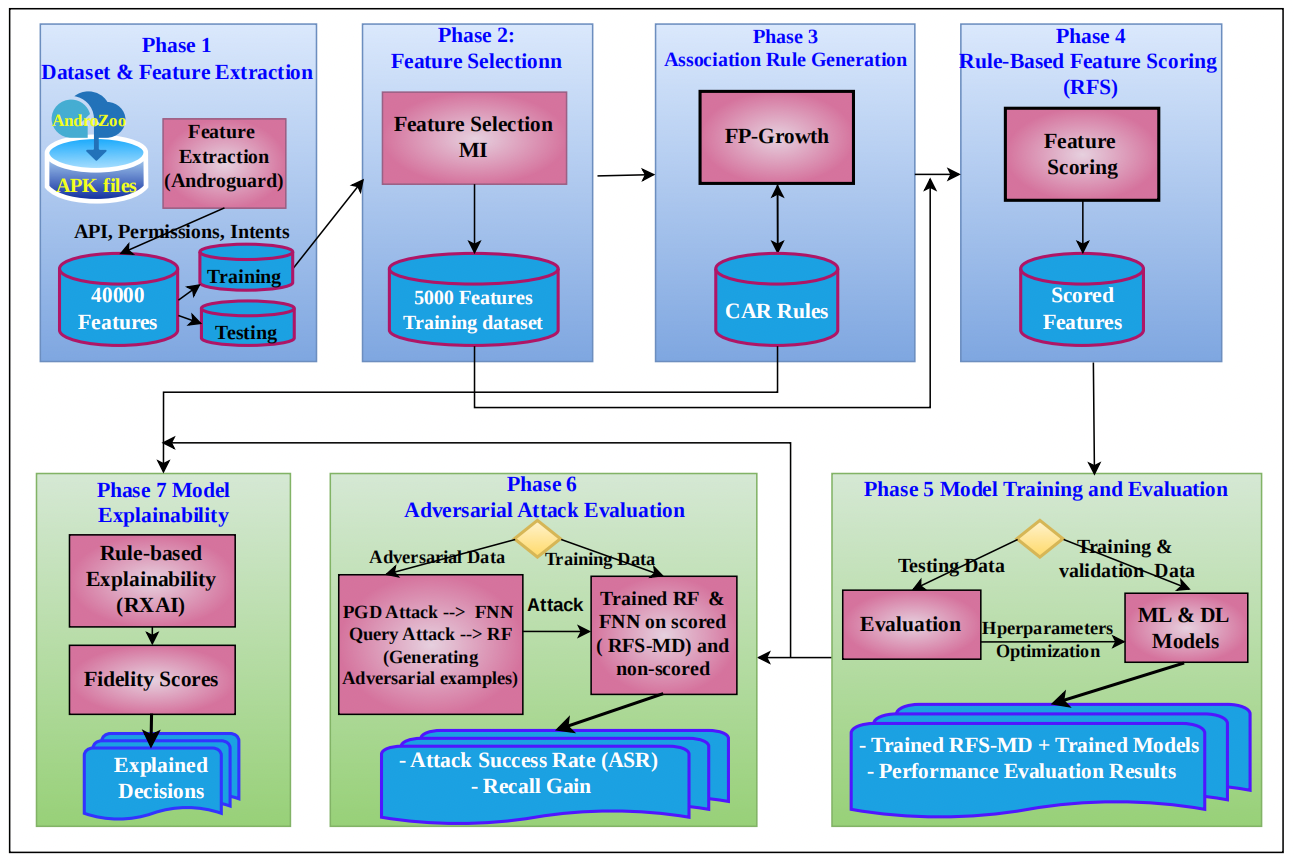

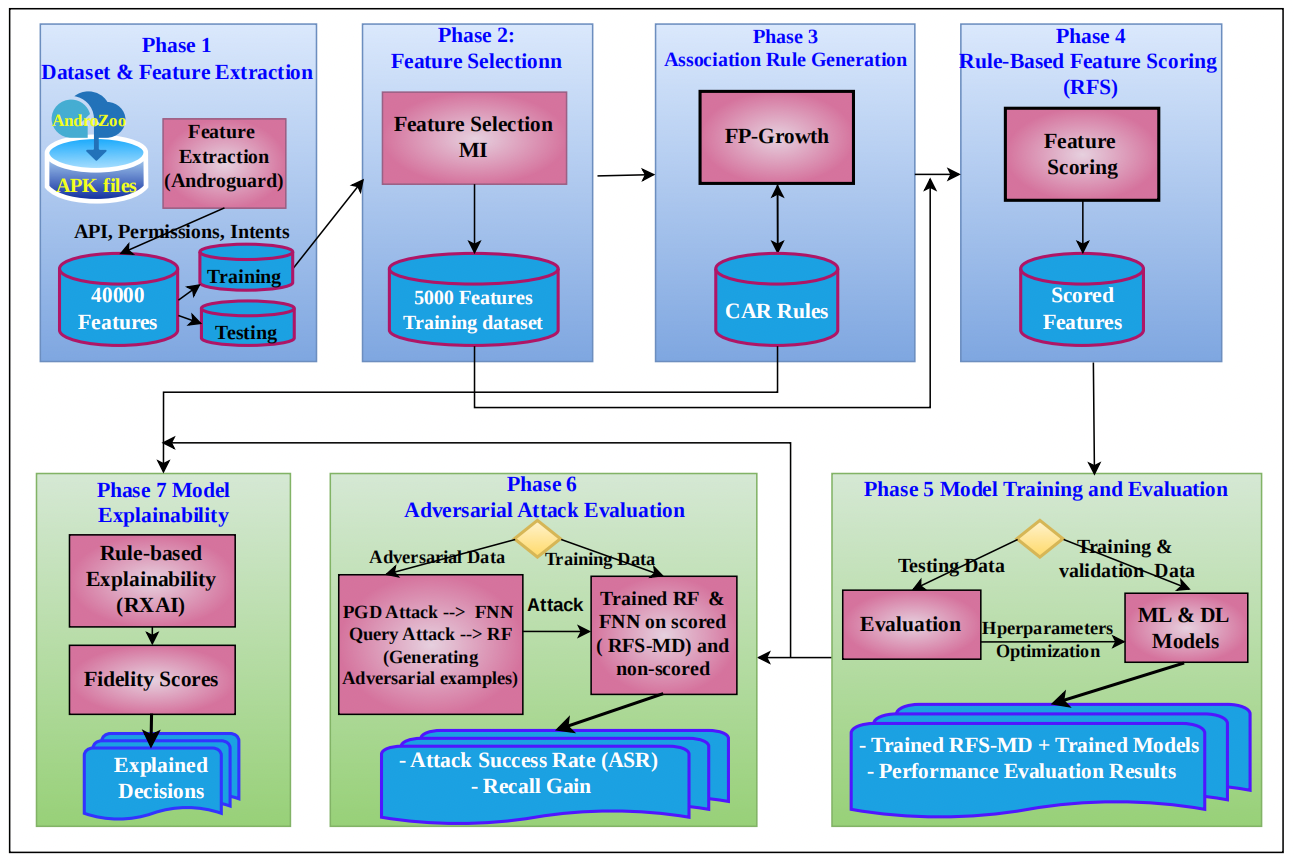

Rapidly evolving Android malware that employs obfuscation and adversarial techniques has become a challenge for malware detection systems. This study proposes an explainable adversarial defense framework, RFS-MD (Rule-based Feature Scoring for Malware Detection), that integrates feature importance scores derived from classification association rules, along with the rules themselves, into malware detection models to enhance detection performance, robustness, and explainability. Experiments on a balanced static-feature dataset across several machine learning (ML) and deep learning (DL) classifiers show that scored features consistently improved accuracy and recall compared to non-scored features, under both default and tuned parameters, for all classifiers. Furthermore, RFS-MD enhanced the models' robustness against adversarial attacks, reducing attack success rates (ASR) and maintaining a positive recall gain compared to baseline models. In addition, a rule-based explainability approach (RXAI) is introduced to generate transparent and human-readable explanations of model decisions; fidelity analysis confirms that RXAI captures interacting malicious feature patterns that align with classifier results. Overall, the results indicate that rule-based feature scoring, together with the rules themselves, is an effective approach to Android malware detection that simultaneously improves accuracy, robustness, and explainability, contributing to trustworthy AI-driven cybersecurity solutions.