Submitted: 19 April 2026

Posted: 21 April 2026


Abstract

Keywords:

1. Introduction

1. A novel rule-based feature scoring framework for malware detection (RFS-MD). RFS-MD uses association rules mined with the FP-growth algorithm to identify and quantify meaningful feature interactions in Android applications. The extracted rules assign importance scores to features, and the scored features serve as inputs to ML and DL classifiers. This embeds interpretability into the learning process, so that explanations of the model's decisions reflect the actual influence of the features on the classifier's predictions.

2. Improved detection performance with emphasis on recall. RFS-MD consistently improves malware detection performance across ML and DL models, with stable recall gains. Recall is a critical metric in security applications because it measures the defense system's ability to correctly identify malware samples; every missed malware sample is a significant security risk.

3. A unified framework for performance, robustness, and explainability. By applying rule-based feature scoring with the extracted rules, RFS-MD addresses these three critical issues in Android malware detection within a single framework.

4. Enhanced robustness against adversarial attacks. The proposed framework maintains malware detection performance under white-box and black-box attacks. Compared with baseline models, it improves resistance and lowers attack success rates by using rule-based feature scoring to emphasize important features.

5. A reliable, high-fidelity rule-based explainability mechanism (RXAI) integrated within RFS-MD. RXAI provides human-readable explanations of model decisions that capture relationships between interacting features and align with the underlying classifier's outputs. Unlike traditional XAI methods, which attribute importance to individual features, RXAI explains decisions through feature combinations, and fidelity evaluation confirms that these explanations accurately reflect the underlying model's decisions, particularly for malware samples.

6. Compatibility with both ML and DL classifiers. RFS-MD with RXAI is model-agnostic and combines intrinsic and post-hoc explainability: the scored feature representations are supplied as structured inputs to several classifiers, while the extracted rules still support post-hoc explanation. This demonstrates flexibility and applicability across models without changing the classifiers' architectures.

2. Literature Review

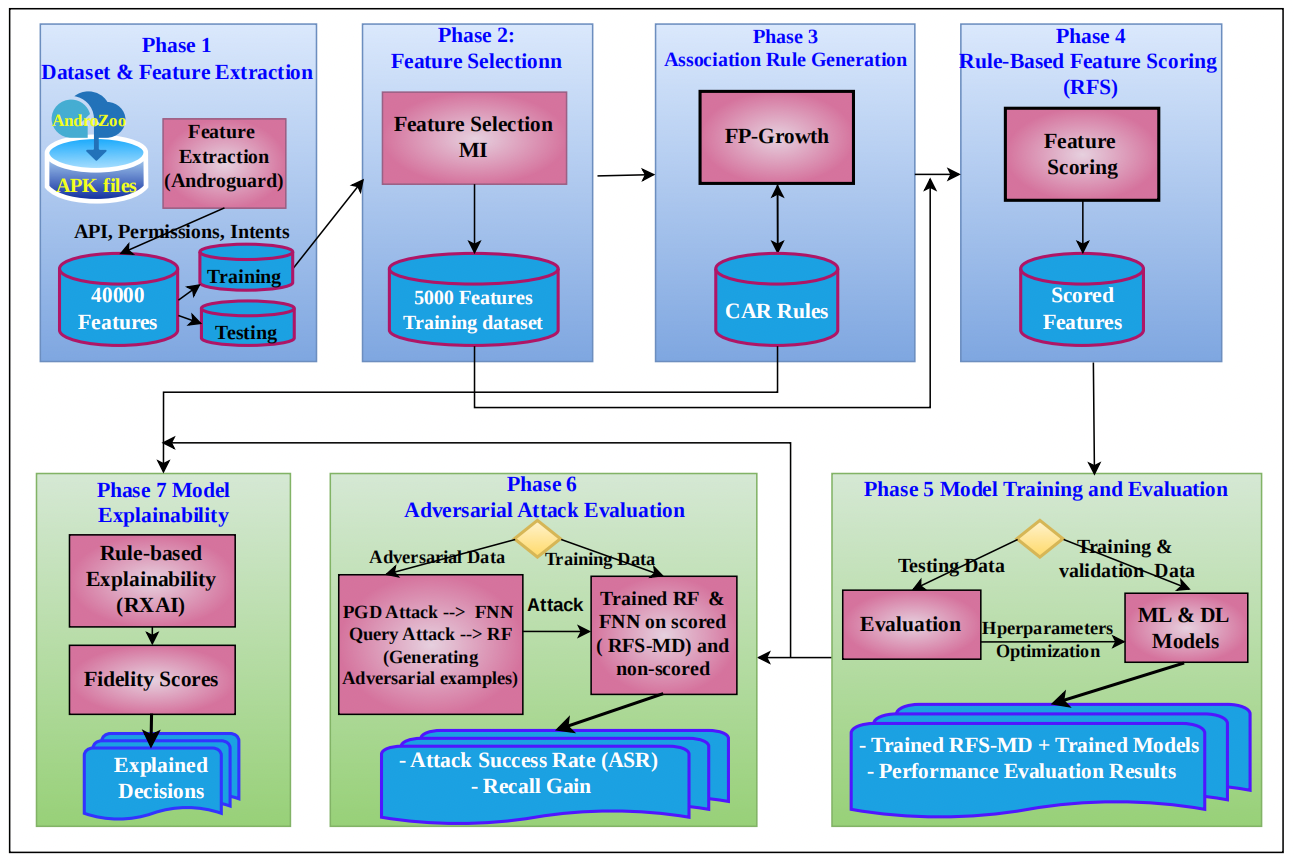

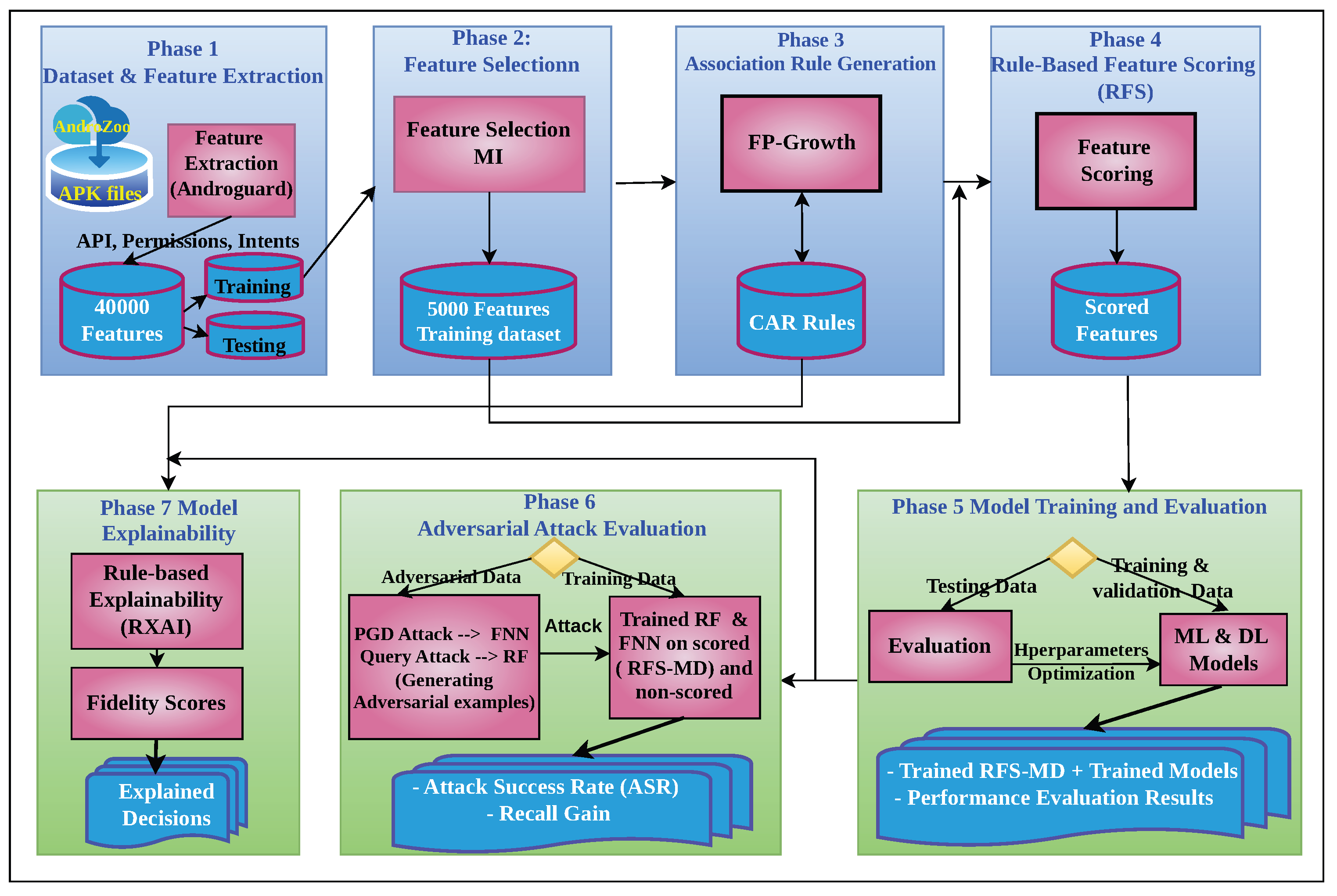

3. Methodology

Algorithm 1: Proposed Explainable Malware Detection Framework

3.1. Dataset preparation

Algorithm 2: Dataset Preparation and Feature Extraction

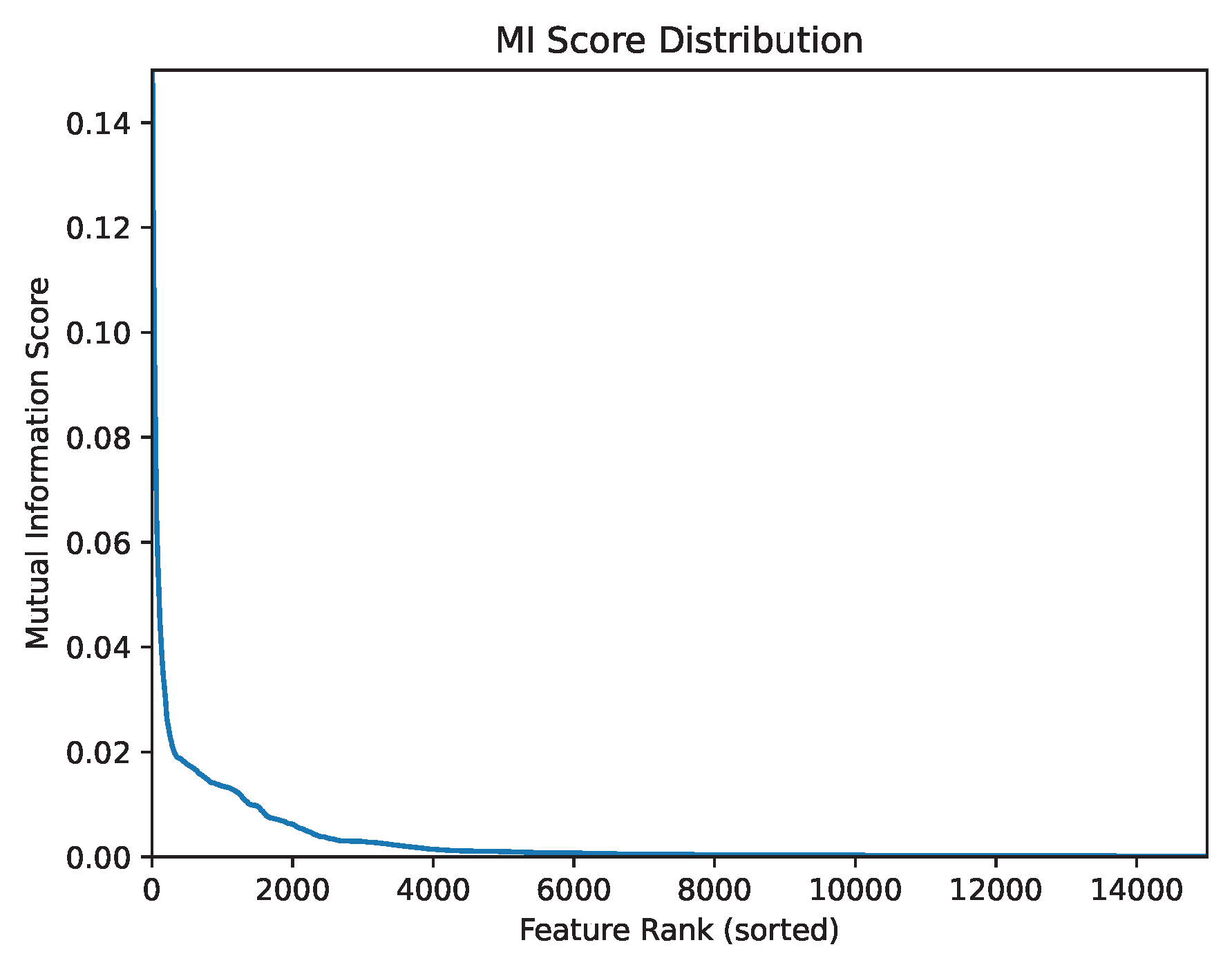
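The steps of Algorithm 2 are not reproduced in this section. As a minimal sketch, assuming each app has already been decompiled into sets of permissions, API calls, and intent actions, the features can be encoded as a binary presence matrix. The prefixes (`permission_`, `api_call_`, `intent_`) mirror the feature names in the rule tables; the helper names and toy apps are hypothetical and not the authors' pipeline.

```python
from typing import Dict, List, Set

# Hypothetical prefixes, chosen to mirror the feature names in the rule tables.
PREFIXES = {"permissions": "permission_", "api_calls": "api_call_", "intents": "intent_"}

App = Dict[str, Set[str]]  # e.g. {"permissions": {...}, "api_calls": {...}}


def app_to_tokens(app: App) -> Set[str]:
    """Flatten one app's extracted permissions/API calls/intents into prefixed names."""
    tokens: Set[str] = set()
    for kind, prefix in PREFIXES.items():
        tokens.update(prefix + item for item in app.get(kind, set()))
    return tokens


def build_vocabulary(apps: List[App]) -> List[str]:
    """Every feature seen in the corpus, sorted to give a fixed column order."""
    vocab: Set[str] = set()
    for app in apps:
        vocab |= app_to_tokens(app)
    return sorted(vocab)


def vectorize(app: App, vocab: List[str]) -> List[int]:
    """Binary presence vector: 1 if the app uses the feature, else 0."""
    tokens = app_to_tokens(app)
    return [1 if feature in tokens else 0 for feature in vocab]


# Toy input: two apps after (hypothetical) static extraction.
apps = [
    {"permissions": {"INTERNET", "READ_PHONE_STATE"}, "api_calls": {"getDeviceId"}},
    {"permissions": {"INTERNET"}, "intents": {"WIFI_STATE_CHANGED"}},
]
vocab = build_vocabulary(apps)
matrix = [vectorize(app, vocab) for app in apps]
print(vocab)
print(matrix)  # [[1, 0, 1, 1], [0, 1, 1, 0]]
```

A fixed, sorted vocabulary keeps column positions identical across training and test apps, which any later column-wise step (feature selection, rule mining) needs.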

3.2. Feature Selection and Dimensionality Reduction

Algorithm 3: Feature Selection using Mutual Information
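Algorithm 3 ranks features by mutual information (MI) with the class label. For binary features, MI can be computed directly from empirical frequencies, I(X;Y) = Σ p(x,y) log[p(x,y) / (p(x)p(y))]. The sketch below is a dependency-free illustration of that ranking on toy data; the top-k cutoff is an assumption, since the selection threshold is not stated in this section. In practice, scikit-learn's `mutual_info_classif` with `discrete_features=True` is a common library route.

```python
import math
from collections import Counter
from typing import List, Sequence


def mutual_information(feature: Sequence[int], labels: Sequence[int]) -> float:
    """Empirical I(X;Y) in nats between a discrete feature and the class label."""
    n = len(labels)
    joint = Counter(zip(feature, labels))
    px, py = Counter(feature), Counter(labels)
    mi = 0.0
    for (x, y), count in joint.items():
        pxy = count / n
        # Only observed (x, y) pairs contribute; 0 * log 0 is taken as 0.
        mi += pxy * math.log(pxy / ((px[x] / n) * (py[y] / n)))
    return mi


def select_top_k(X: List[List[int]], y: List[int], names: List[str], k: int) -> List[str]:
    """Rank the columns of X by MI with y and keep the names of the k best."""
    scored = []
    for j, name in enumerate(names):
        column = [row[j] for row in X]
        scored.append((mutual_information(column, y), name))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [name for _, name in scored[:k]]


# Toy data: 1 = malware, 0 = benign; columns are f_a, f_b, f_c.
y = [1, 1, 0, 0]
names = ["f_a", "f_b", "f_c"]
X = [
    [1, 1, 1],
    [1, 0, 1],
    [0, 1, 1],
    [0, 0, 0],
]
print(select_top_k(X, y, names, k=2))  # ['f_a', 'f_c']
```

Here `f_a` perfectly separates the classes (MI = ln 2 ≈ 0.693 nats), `f_c` is partially informative (≈ 0.216), and `f_b` is independent of the label (MI = 0).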

3.3. Rules Generation

Algorithm 4: Association Rule Generation using FP-Growth
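Algorithm 4 mines association rules with FP-growth [38], and the rule tables show rules whose consequent is the class label. A minimal sketch, assuming each transaction is an app's set of present features plus one class item (`malware` or `benign`): the miner below follows FP-growth's divide-and-conquer pattern growth but recurses on explicit projected databases instead of building an FP-tree, so it returns the same frequent itemsets without FP-growth's memory compression. The thresholds and transactions are toy values. Libraries such as mlxtend (`fpgrowth`, `association_rules`) provide a full implementation.

```python
from collections import Counter
from itertools import chain
from typing import FrozenSet, Iterator, List, Tuple

CLASS_ITEMS = frozenset({"malware", "benign"})
Rule = Tuple[FrozenSet[str], str, float]  # (antecedent, consequent, confidence)


def pattern_growth(
    transactions: List[FrozenSet[str]], min_count: int, suffix: FrozenSet[str] = frozenset()
) -> Iterator[Tuple[FrozenSet[str], int]]:
    """Yield every itemset contained in at least `min_count` transactions.

    Divide-and-conquer in the spirit of FP-growth, but recursing on explicit
    projected (conditional) databases instead of a compressed FP-tree.
    """
    counts = Counter(chain.from_iterable(transactions))
    frequent = sorted(item for item, c in counts.items() if c >= min_count)
    for idx, item in enumerate(frequent):
        itemset = suffix | {item}
        yield itemset, counts[item]
        # Conditional database for `itemset`: keep only later frequent items,
        # so each itemset is generated exactly once.
        later = frozenset(frequent[idx + 1:])
        projected = [t & later for t in transactions if item in t]
        yield from pattern_growth(projected, min_count, itemset)


def class_rules(transactions: List[FrozenSet[str]], min_count: int, min_confidence: float) -> List[Rule]:
    """Rules of the form {features} -> class label, sorted by confidence."""
    support = dict(pattern_growth(transactions, min_count))
    rules: List[Rule] = []
    for itemset, count in support.items():
        labels = itemset & CLASS_ITEMS
        if len(labels) != 1 or len(itemset) < 2:
            continue
        antecedent = itemset - labels
        # Downward closure guarantees the antecedent is frequent as well.
        confidence = count / support[antecedent]
        if confidence >= min_confidence:
            rules.append((antecedent, next(iter(labels)), confidence))
    rules.sort(key=lambda r: (-r[2], sorted(r[0])))
    return rules


transactions = [
    frozenset({"api_call_getDeviceId", "permission_INTERNET", "malware"}),
    frozenset({"api_call_getDeviceId", "permission_INTERNET", "malware"}),
    frozenset({"api_call_getDeviceId", "malware"}),
    frozenset({"permission_INTERNET", "benign"}),
    frozenset({"api_call_getCookie", "permission_INTERNET", "benign"}),
]
for antecedent, consequent, conf in class_rules(transactions, min_count=2, min_confidence=0.6):
    print(sorted(antecedent), "->", consequent, round(conf, 3))
```

With `min_count=2` and `min_confidence=0.6`, only {api_call_getDeviceId} → malware and {api_call_getDeviceId, permission_INTERNET} → malware survive (confidence 1.0); {permission_INTERNET} → malware and {permission_INTERNET} → benign each have confidence 0.5 and are filtered out.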

3.4. Rule-Based Feature Scoring

Algorithm 5: Rule-Based Feature Scoring (RFS)
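The exact scoring formula of Algorithm 5 is not reproduced in this section. The sketch below illustrates one plausible scheme under stated assumptions: a rule contributes to a sample only when its whole antecedent is present, each firing rule spreads its confidence evenly across its antecedent features, and the consequent is ignored, so scores measure importance rather than direction. The function name and weighting are hypothetical; the two rules are taken from the malware rule table (sample 998).

```python
from typing import Dict, FrozenSet, List, Sequence, Tuple

Rule = Tuple[FrozenSet[str], str, float]  # (antecedent, consequent, confidence)


def score_sample(active: Sequence[str], vocab: List[str], rules: List[Rule]) -> List[float]:
    """Rule-weighted feature vector for one app (hypothetical scoring scheme).

    A rule contributes only when *all* of its antecedent features are present,
    so the score reflects feature interactions rather than single features.
    Each firing rule spreads its confidence evenly over its antecedent features;
    present features start at weight 1.0 and absent features stay at 0.0.
    """
    present = set(active)
    bonus: Dict[str, float] = {feature: 0.0 for feature in present}
    for antecedent, _consequent, confidence in rules:
        if antecedent <= present:
            share = confidence / len(antecedent)
            for feature in antecedent:
                bonus[feature] += share
    return [1.0 + bonus[f] if f in present else 0.0 for f in vocab]


rules = [
    (frozenset({"api_call_setIcon", "api_call_getDeviceId"}), "malware", 0.898),
    (frozenset({"api_call_getSimSerialNumber"}), "malware", 0.924),
]
vocab = ["api_call_getDeviceId", "api_call_getSimSerialNumber", "api_call_setIcon", "permission_INTERNET"]
print(score_sample(["api_call_getDeviceId", "api_call_setIcon", "permission_INTERNET"], vocab, rules))
print(score_sample(["api_call_getDeviceId", "api_call_getSimSerialNumber"], vocab, rules))
```

For the first app, the {setIcon, getDeviceId} rule fires and adds 0.449 to each of its two features, while permission_INTERNET keeps weight 1.0. In the second app the pair is incomplete, so only the single-feature getSimSerialNumber rule fires (weight 1.924).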

3.5. Experimental Setup

3.5.1. Classification Models

3.5.2. Evaluation Metrics

3.5.3. Default Hyperparameters

3.5.4. Hyperparameter Optimization

Algorithm 6: Model Training and Hyperparameter Optimization

3.6. Adversarial Attack Configuration

Algorithm 7: Adversarial Attack Evaluation Using a Feature-Based Attack
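Algorithm 7's body is not reproduced in this section. One common way to evaluate a feature-based attack on binary Android features is a feature-addition evasion attack, scored by the attack success rate (ASR): the fraction of correctly detected malware samples that are classified as benign after perturbation. The sketch below assumes a linear detector with known weights (a white-box setting), a greedy attacker, and a fixed budget; the weights, bias, and samples are toy values, not the paper's attack configuration.

```python
from typing import Dict, List, Set

Weights = Dict[str, float]


def linear_score(features: Set[str], weights: Weights, bias: float) -> float:
    """Decision value of a toy linear detector: > 0 means 'malware'."""
    return bias + sum(weights.get(f, 0.0) for f in features)


def addition_attack(features: Set[str], weights: Weights, bias: float, budget: int) -> Set[str]:
    """Greedy white-box evasion that only *adds* features (hypothetical attacker).

    Removing permissions or API calls may break the app, so the attacker
    inserts up to `budget` absent features with the most benign (negative) weights.
    """
    adversarial = set(features)
    candidates = sorted((w, f) for f, w in weights.items() if w < 0 and f not in adversarial)
    for _weight, feature in candidates[:budget]:
        if linear_score(adversarial, weights, bias) <= 0:
            break  # already evades; stop perturbing
        adversarial.add(feature)
    return adversarial


def attack_success_rate(malware: List[Set[str]], weights: Weights, bias: float, budget: int) -> float:
    """Fraction of initially detected malware samples that evade after the attack."""
    detected = [s for s in malware if linear_score(s, weights, bias) > 0]
    if not detected:
        return 0.0
    evaded = sum(
        1 for s in detected if linear_score(addition_attack(s, weights, bias, budget), weights, bias) <= 0
    )
    return evaded / len(detected)


weights = {
    "api_call_getDeviceId": 2.0,
    "api_call_getSimSerialNumber": 1.5,
    "api_call_getCookie": -1.0,
    "api_call_setWebViewClient": -0.8,
    "api_call_guessFileName": -0.5,
}
malware = [
    {"api_call_getDeviceId"},
    {"api_call_getDeviceId", "api_call_getSimSerialNumber"},
    {"api_call_setWebViewClient"},  # missed even before the attack
]
for budget in (0, 2, 3):
    print(budget, attack_success_rate(malware, weights, bias=-0.5, budget=budget))
```

With budget 0 nothing changes (ASR = 0.0); with budget 2 the first sample crosses the decision boundary but the second does not, giving ASR = 0.5. The third sample is excluded from the denominator because the detector misses it before any perturbation.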

3.7. Explainability Analysis

3.7.1. Rule-Based Explanations

3.7.2. Fidelity Evaluation of Rule-Based Explanations

Algorithm 8: Rule-Based Explainability (RXAI) and Fidelity Evaluation
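Algorithm 8's body is not reproduced in this section. A minimal sketch of its two parts, under assumptions: an explanation is the top-k rules (k = 5, matching the rule tables) that fire on a sample and whose consequent matches the predicted class, and fidelity is measured by deletion, i.e., the drop in predicted-class probability after removing the explained features. The deletion metric and `toy_proba` are hypothetical stand-ins for the paper's fidelity definition and trained classifier.

```python
from typing import Callable, FrozenSet, List, Set, Tuple

Rule = Tuple[FrozenSet[str], str, float]  # (antecedent, consequent, confidence)


def explain(active: Set[str], predicted: str, rules: List[Rule], k: int = 5) -> List[Rule]:
    """Top-k rules that fire on the sample and agree with the predicted class."""
    fired = [r for r in rules if r[1] == predicted and r[0] <= active]
    return sorted(fired, key=lambda r: r[2], reverse=True)[:k]


def deletion_fidelity(
    active: Set[str], explanation: List[Rule], predict_proba: Callable[[Set[str]], float]
) -> float:
    """Drop in the predicted-class probability after deleting the explained features."""
    explained: Set[str] = set().union(*(antecedent for antecedent, _, _ in explanation))
    return predict_proba(active) - predict_proba(active - explained)


# Toy stand-in for a trained classifier's malware probability.
SUSPICIOUS = {"api_call_setIcon", "api_call_getDeviceId", "api_call_getSimSerialNumber"}


def toy_proba(features: Set[str]) -> float:
    return 0.2 + 0.25 * len(features & SUSPICIOUS)


rules = [
    (frozenset({"api_call_setIcon", "api_call_getDeviceId"}), "malware", 0.898),
    (frozenset({"api_call_getSimSerialNumber"}), "malware", 0.924),
    (frozenset({"api_call_getCookie"}), "benign", 0.85),
]
sample = {"api_call_setIcon", "api_call_getDeviceId", "api_call_getCookie", "permission_INTERNET"}
explanation = explain(sample, "malware", rules)
print([sorted(a) for a, _, _ in explanation])
print(round(deletion_fidelity(sample, explanation, toy_proba), 3))  # 0.5
```

A larger drop means the explained features carry more of the model's decision; an explanation built from features the model ignores would yield a drop near zero.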

4. Experimental Results

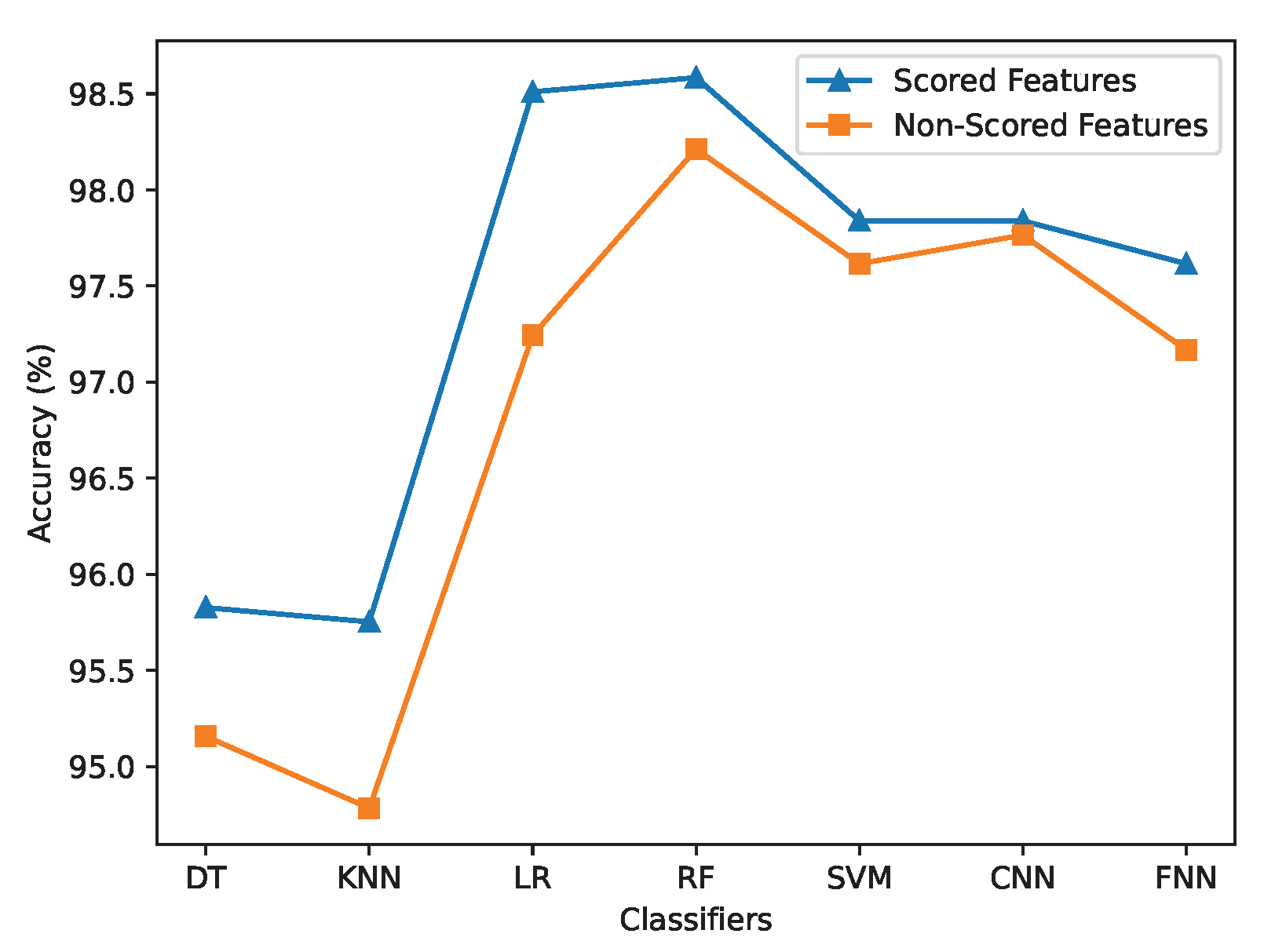

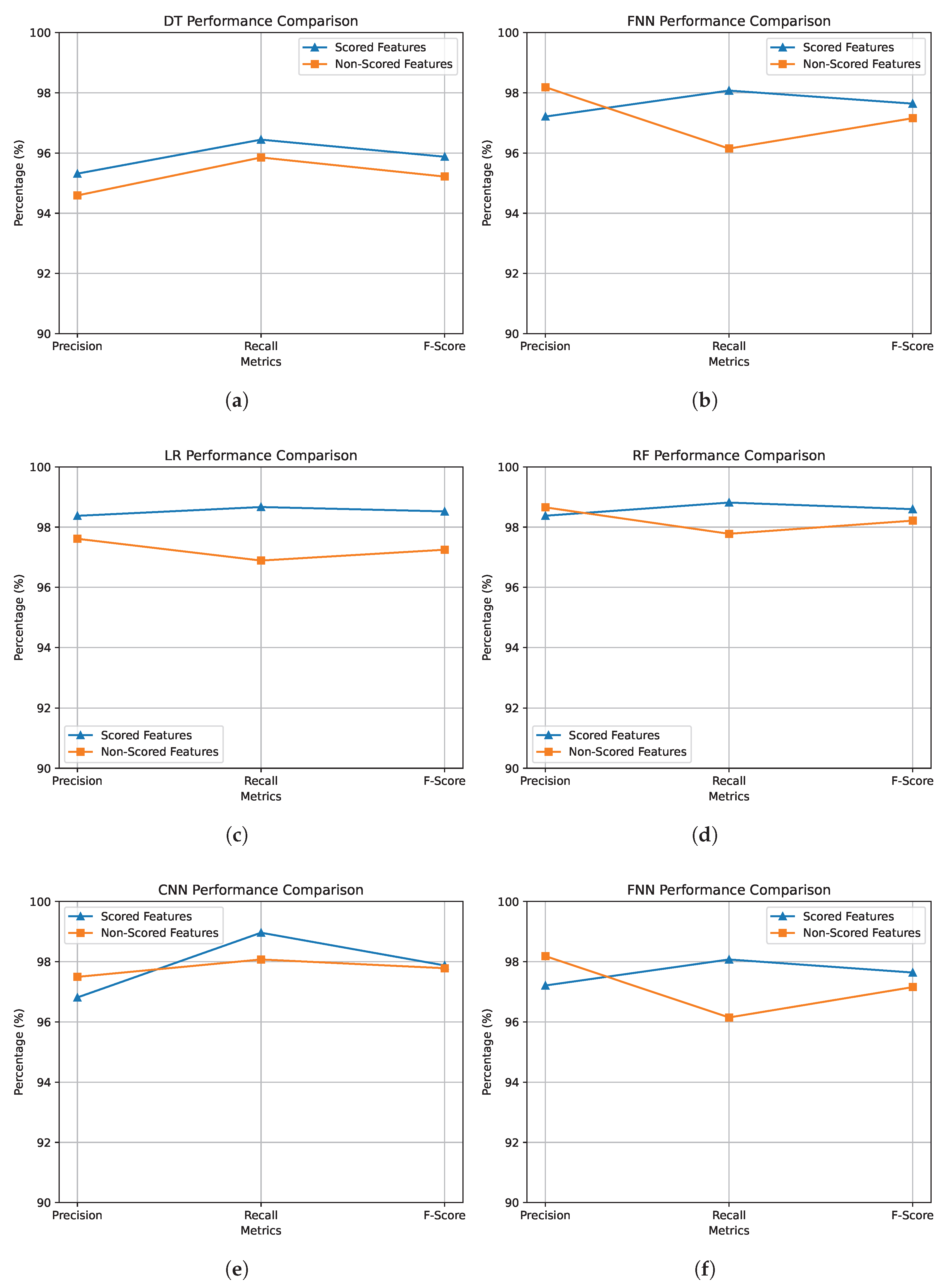

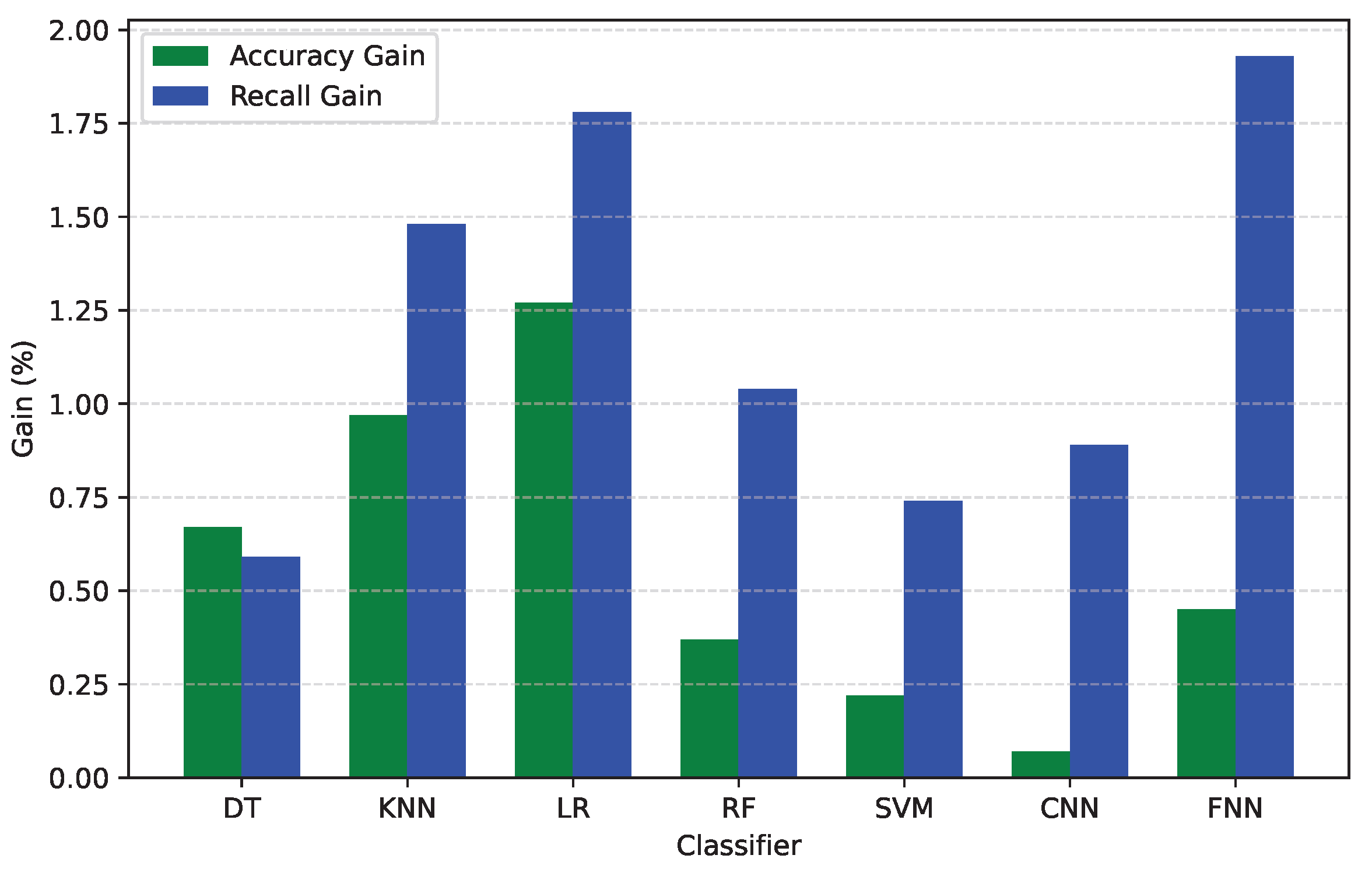

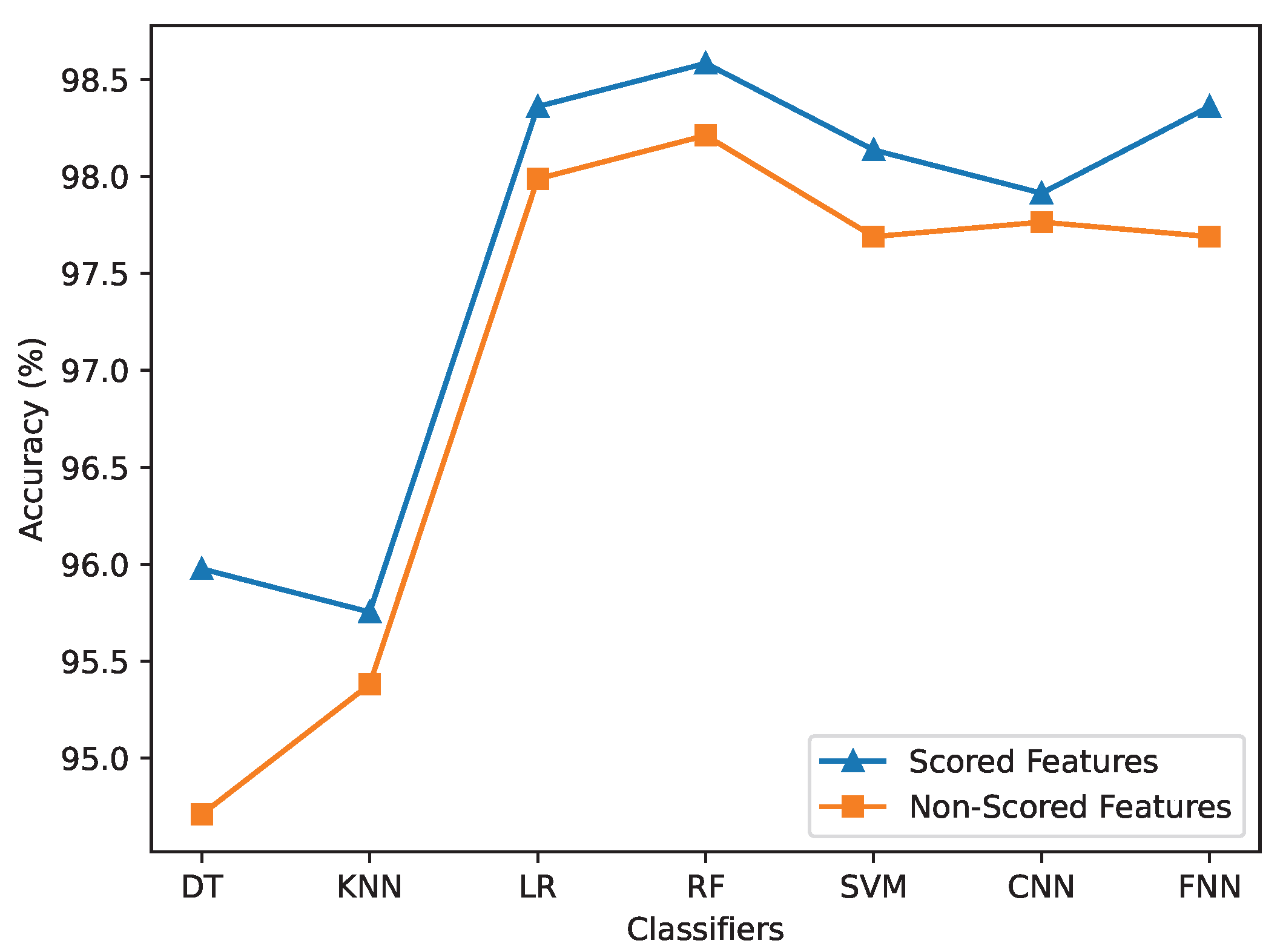

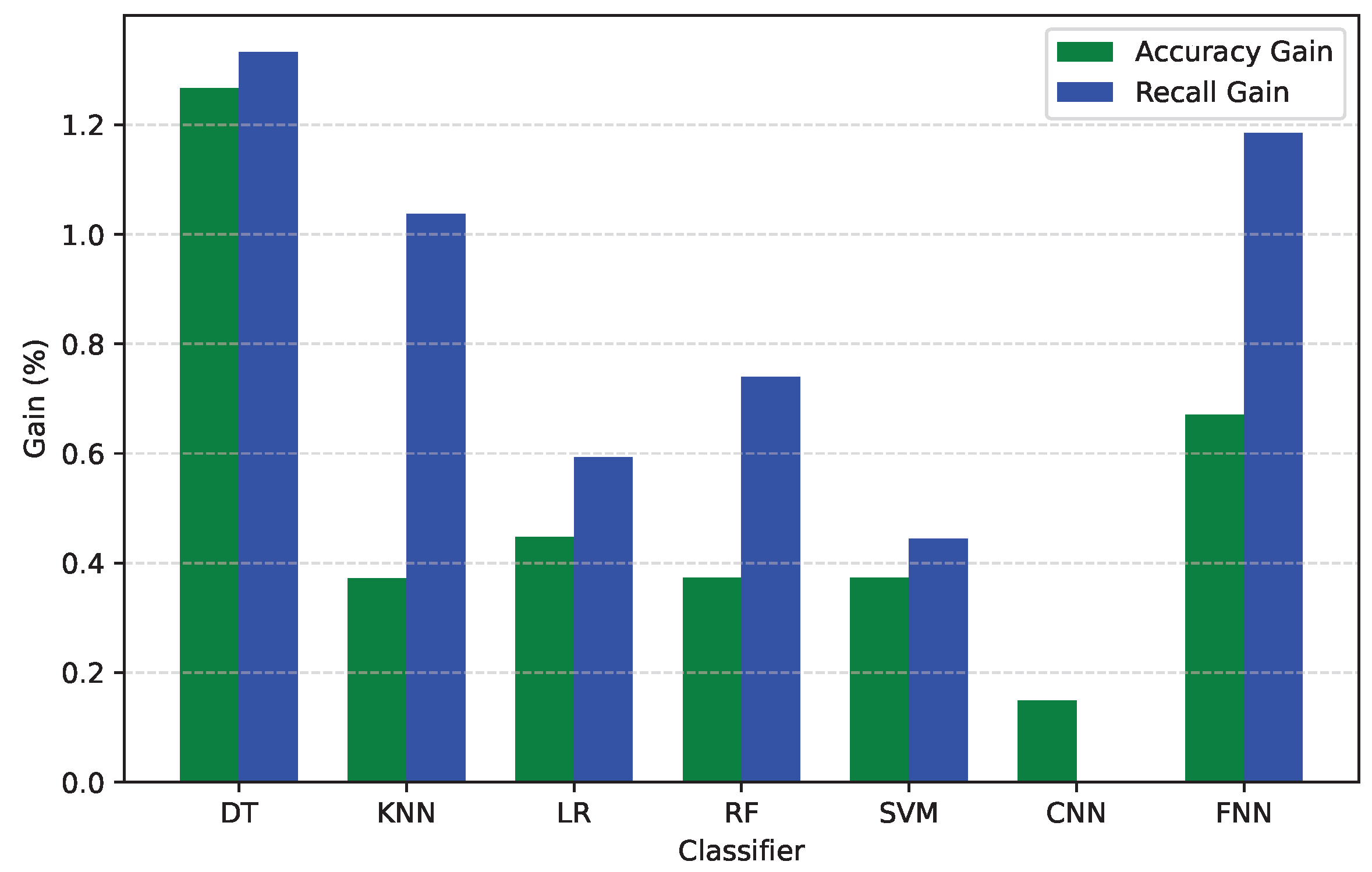

4.1. Impact of Rule-Based Feature Scoring on Malware Detection

4.1.1. Performance with Default Parameters

| Classifier | Feature Set | Acc (%) | Prec (%) | Rec (%) | F-Score (%) |
|---|---|---|---|---|---|
| DT | Scored Features | 95.827 | 95.315 | 96.444 | 95.876 |
|  | Non-Scored Features | 95.157 | 94.591 | 95.852 | 95.217 |
| KNN | Scored Features | 95.753 | 98.892 | 92.593 | 95.639 |
|  | Non-Scored Features | 94.784 | 98.400 | 91.111 | 94.615 |
| LR | Scored Features | 98.510 | 98.375 | 98.667 | 98.521 |
|  | Non-Scored Features | 97.243 | 97.612 | 96.889 | 97.249 |
| RF | Scored Features | 98.584 | 98.378 | 98.815 | 98.596 |
|  | Non-Scored Features | 98.212 | 98.655 | 97.778 | 98.214 |
| SVM | Scored Features | 97.839 | 98.353 | 97.333 | 97.841 |
|  | Non-Scored Features | 97.616 | 98.638 | 96.593 | 97.605 |
| CNN | Scored Features | 97.839 | 96.812 | 98.963 | 97.876 |
|  | Non-Scored Features | 97.765 | 97.496 | 98.074 | 97.784 |
| FNN | Scored Features | 97.616 | 97.210 | 98.074 | 97.640 |
|  | Non-Scored Features | 97.168 | 98.185 | 96.148 | 97.156 |
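The F-score column is the harmonic mean of precision and recall, F = 2PR/(P + R), with malware as the positive class. The sketch below computes the four metrics from confusion-matrix counts using the standard definitions and checks the harmonic-mean relation against two rows of the table (DT and KNN, scored features). The confusion matrix at the end is hypothetical and serves only as a usage example.

```python
from typing import Dict


def f_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Accuracy, precision, recall and F-score with malware as the positive class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)  # share of malware samples that are detected
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "precision": precision,
        "recall": recall,
        "f_score": f_score(precision, recall),
    }


# Consistency check against the table (percentages, scored features).
print(round(f_score(95.315, 96.444), 3))  # DT: 95.876
print(round(f_score(98.892, 92.593), 3))  # KNN: 95.639

# Hypothetical confusion matrix, for illustration only.
print(metrics(tp=90, fp=10, tn=85, fn=15))
```

Because F is a harmonic mean, it stays close to the smaller of P and R; this is why KNN's F-score (95.639) sits well below its precision (98.892).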

4.1.2. Performance After Hyperparameter Optimization

4.2. Adversarial Robustness Evaluation

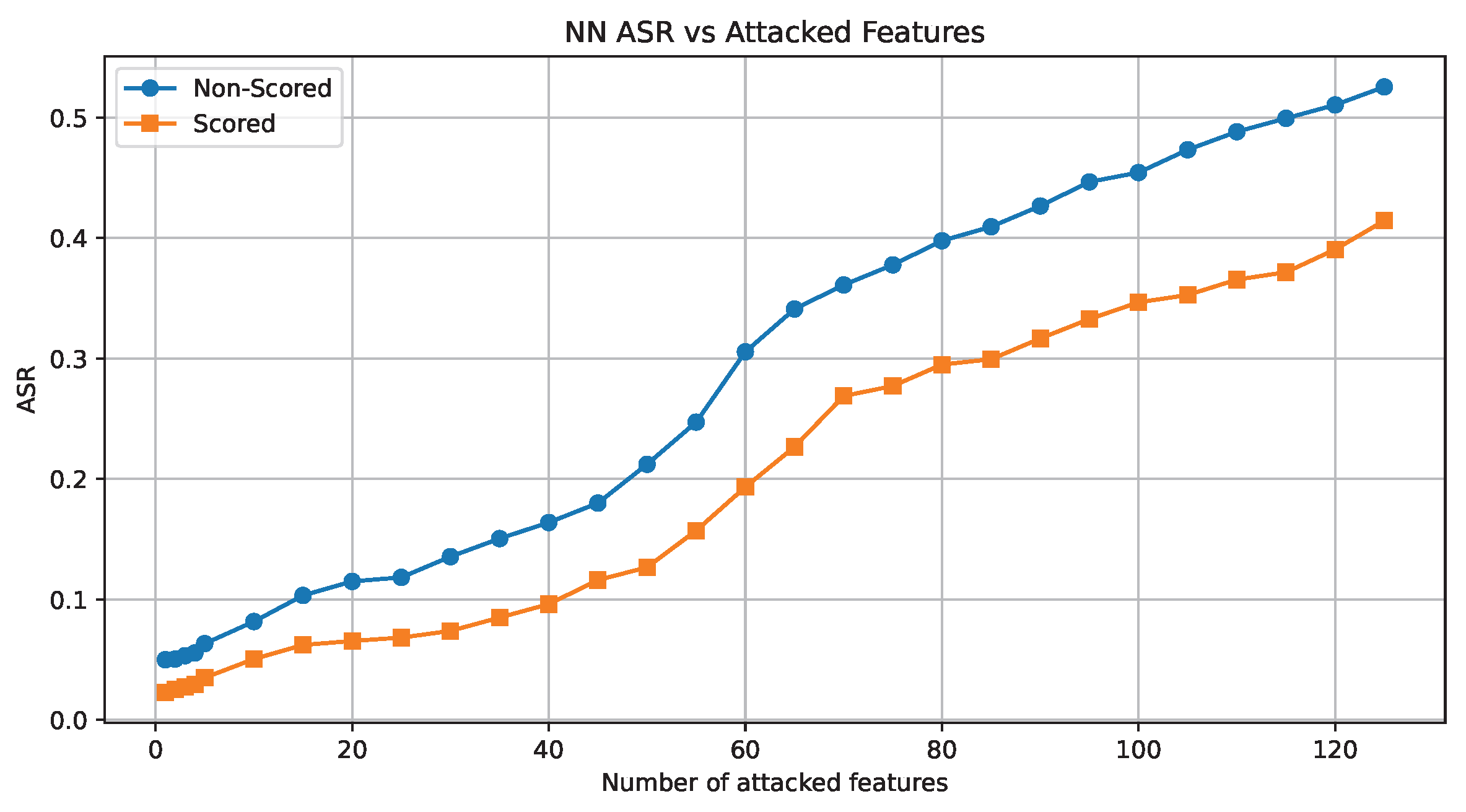

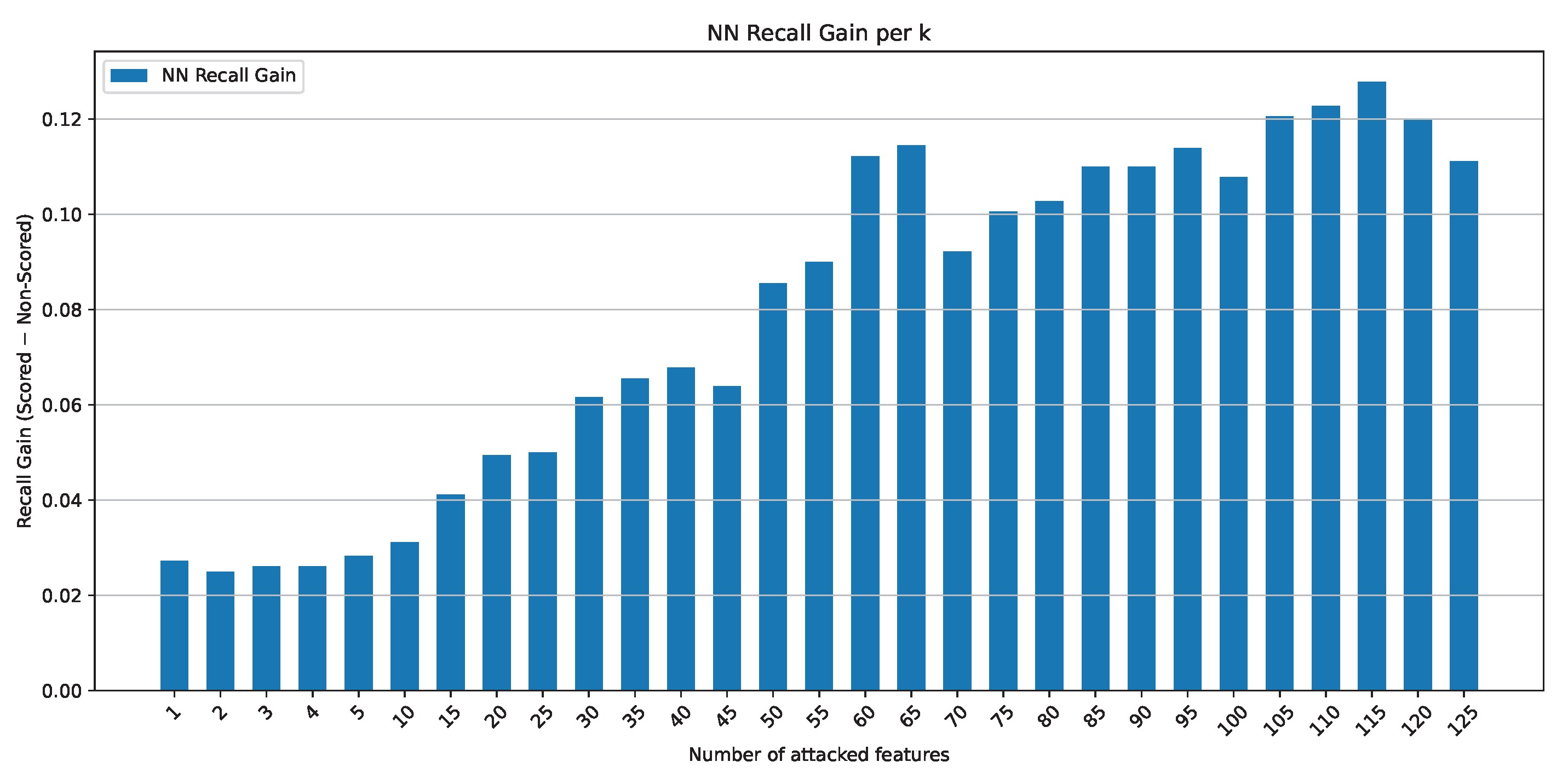

4.2.1. Adversarial Attack Evaluation on the Neural Network Model

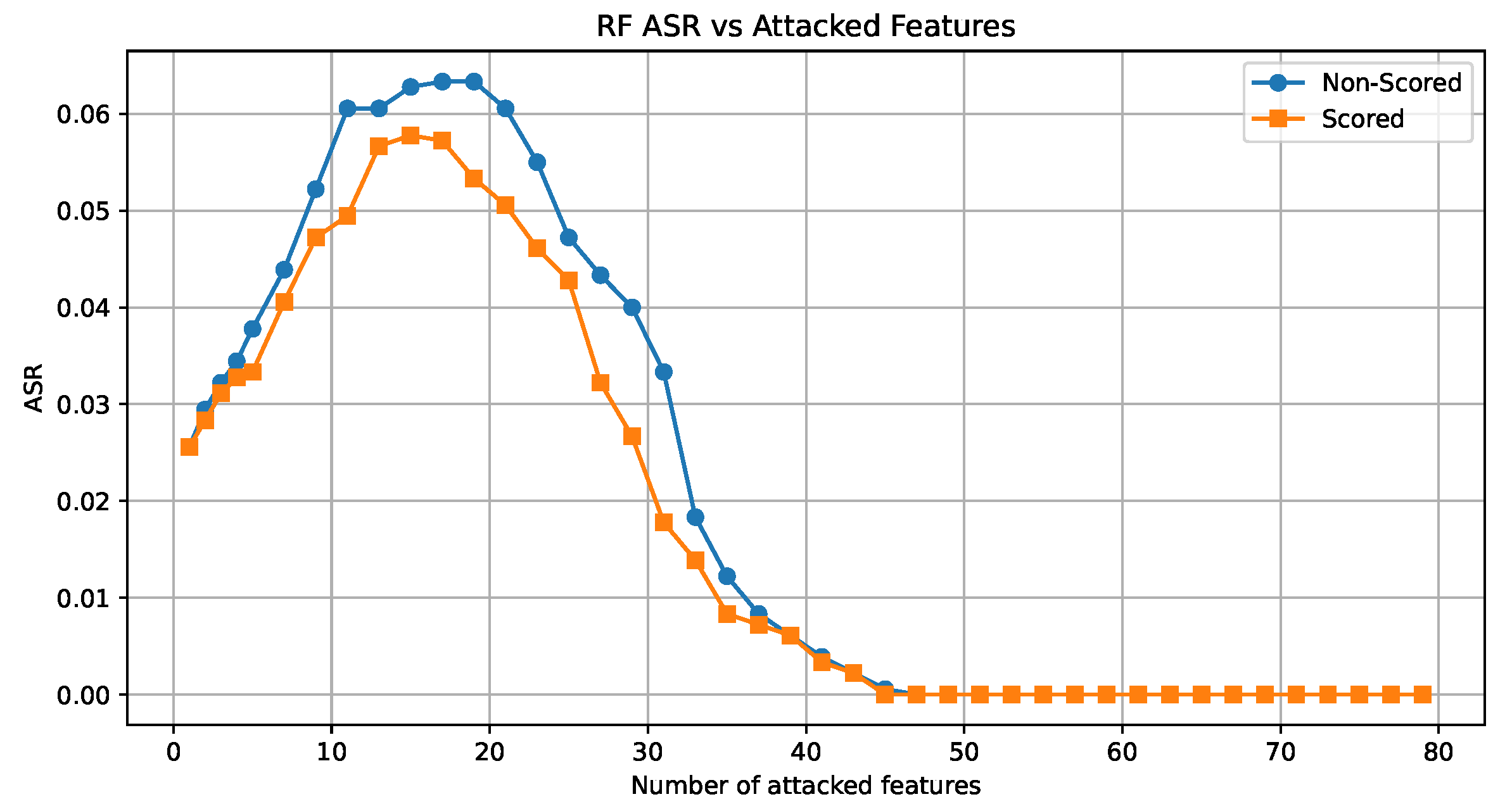

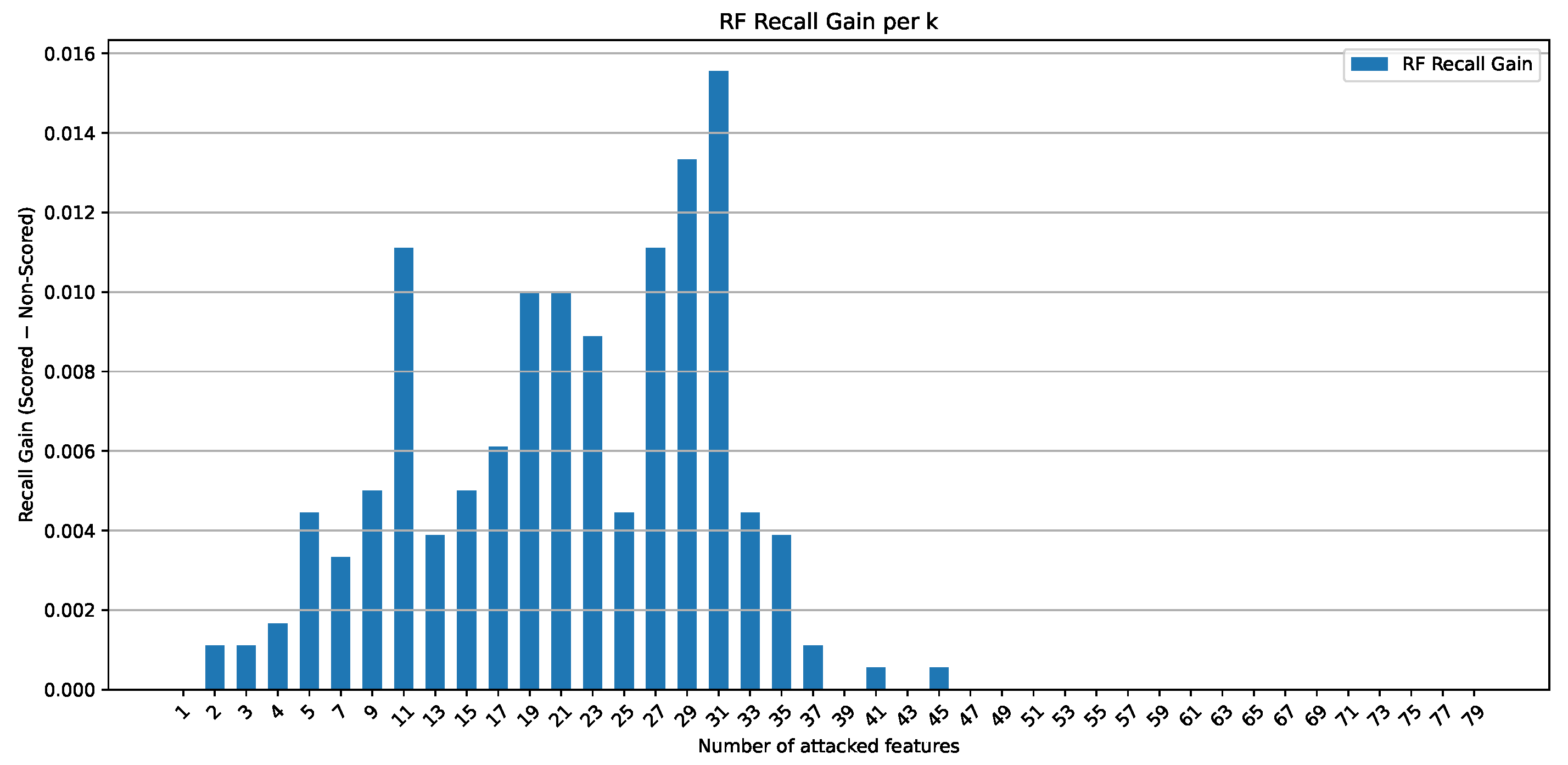

4.2.2. Adversarial Attack Evaluation on the Random Forest Model

4.3. Model Explainability

4.3.1. Rule-Based Explanations of Model Predictions

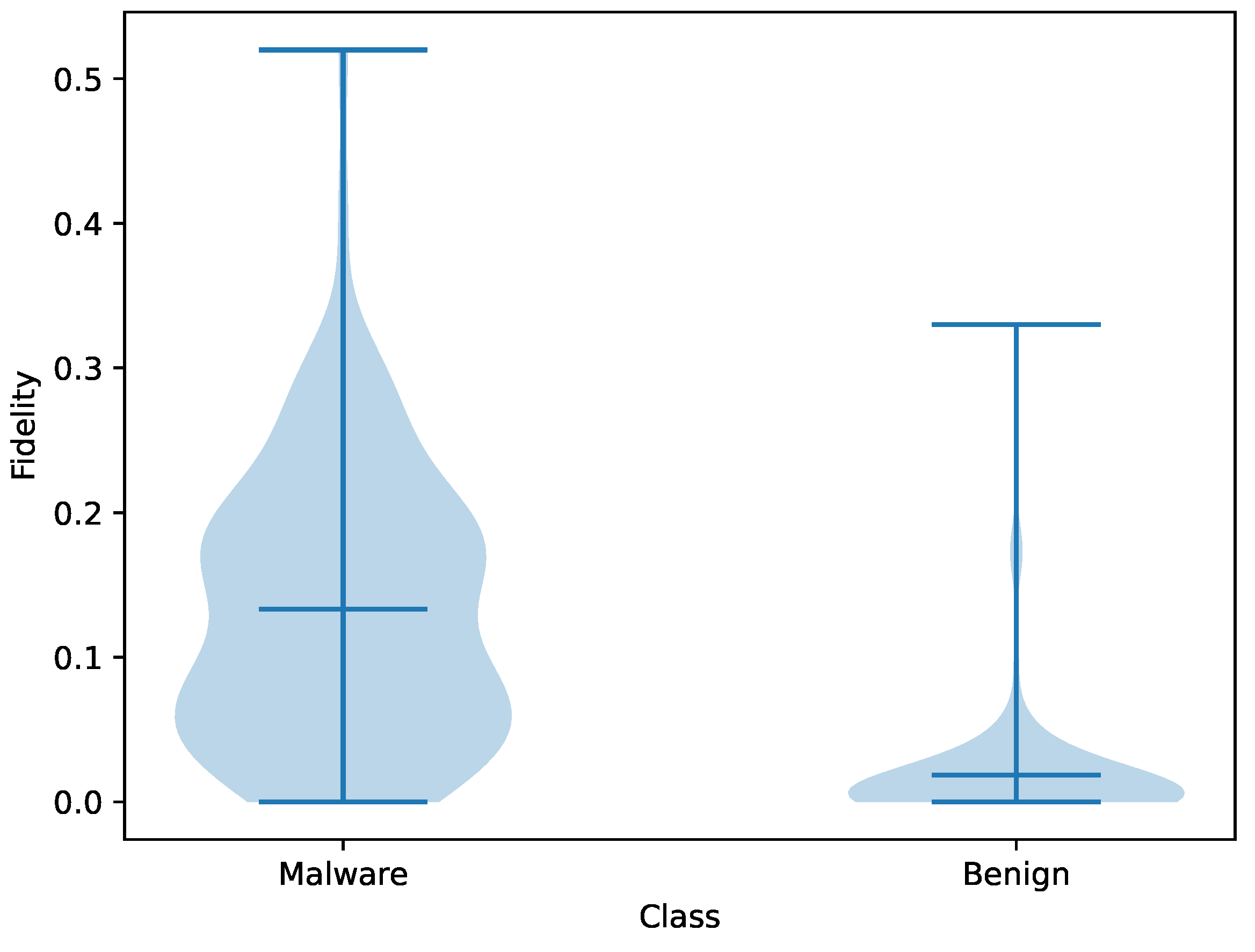

4.3.2. Fidelity Evaluation of Rule-Based Explanations

5. Discussion

5.1. Malware Detection Performance

5.2. Model Robustness: Adversarial Attacks

5.3. Explainability

6. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Jafari, M.; Shameli-Sendi, A. Evaluating the robustness of adversarial defenses in malware detection systems. Computers and Electrical Engineering 2026, 130, 110845.

- Imran, M.; Appice, A.; Malerba, D. Evaluating Realistic Adversarial Attacks against Machine Learning Models for Windows PE Malware Detection. Future Internet 2024, 16.

- Kozak, M.; Demetrio, L.; Trizna, D.; Roli, F. Updating Windows malware detectors: Balancing robustness and regression against adversarial EXEmples. Computers & Security 2025, 155, 104466.

- He, W.; Baig, M.J.A.; Iqbal, M.T. An Internet of Things—Supervisory Control and Data Acquisition (IoT-SCADA) Architecture for Photovoltaic System Monitoring, Control, and Inspection in Real Time. Electronics 2025, 14.

- Poornima, S.; Mahalakshmi, R. Automated malware detection using machine learning and deep learning approaches for android applications. Measurement: Sensors 2024, 32, 100955.

- Rey, V.; Sánchez, P.; Celdrán, A.; Gérôme, B. Federated learning for malware detection in IoT devices. Computer Networks 2022, 204, 108693.

- Siddiqui, S.; Khan, T. An Overview of Techniques for Obfuscated Android Malware Detection. SN Computer Science 2024, 5, 328.

- Qiang, W.; Yang, L.; Jin, H. Efficient and Robust Malware Detection Based on Control Flow Traces Using Deep Neural Networks. Computers & Security 2022, 122, 102871.

- Babu, G.; Uma, J. Adversarial Attacks and Defenses against Deep Learning in Cybersecurity; 2022; pp. 281–296.

- Martins, N.; Cruz, J.; Cruz, T.; Henriques Abreu, P. Adversarial Machine Learning Applied to Intrusion and Malware Scenarios: A Systematic Review. IEEE Access 2020, PP, 1–1.

- Lundberg, S.; Lee, S.I. A Unified Approach to Interpreting Model Predictions. arXiv 2017, arXiv:1705.07874.

- Ribeiro, M.T.; Singh, S.; Guestrin, C. "Why Should I Trust You?": Explaining the Predictions of Any Classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, New York, NY, USA, 2016; KDD ’16, pp. 1135–1144.

- Nadeem, A.; Vos, D.; Cao, C.; Pajola, L.; Dieck, S.; Baumgartner, R.; Verwer, S. SoK: Explainable Machine Learning for Computer Security Applications. 2022.

- Zhang, Z.; Al Hamadi, H.; Damiani, E.; Yeun, C.; Taher, D.F. Explainable Artificial Intelligence Applications in Cyber Security: State-of-the-Art in Research. IEEE Access 2022, PP, 1–1.

- Ali, S.; Abuhmed, T.; El-Sappagh, S.; Muhammad, K.; Alonso-Moral, J.M.; Confalonieri, R.; Guidotti, R.; Del Ser, J.; Díaz-Rodríguez, N.; Herrera, F. Explainable Artificial Intelligence (XAI): What we know and what is left to attain Trustworthy Artificial Intelligence. Information Fusion 2023, 99, 101805.

- Kouliaridis, V.; Kambourakis, G. A Comprehensive Survey on Machine Learning Techniques for Android Malware Detection. Information 2021, 12, 185.

- Senanayake, J.; Kalutarage, H.; Al-Kadri, M.O. Android Mobile Malware Detection Using Machine Learning: A Systematic Review. Electronics 2021, 10, 1606.

- Kauser Sk, H.; Anu V, M. Hybrid deep learning model for accurate and efficient android malware detection using DBN-GRU. 2025.

- Muzaffar, A.; Hassen, H.; Lones, M.; Zantout, H. An in-depth review of machine learning based Android malware detection. Computers & Security 2022, 121, 102833.

- Tawfik, M.; Tarazi, H.; Dalalah, A.; et al. Few-Shot Android Malware Classification with Quantum-Enhanced Prototypical Learning and Drift Detection. Scientific Reports 2026, 16, 10744.

- Akhtar, M.S.; Feng, T. Detection of Malware by Deep Learning as CNN-LSTM Machine Learning Techniques in Real Time. Symmetry 2022, 14.

- Redhu, A.; Choudhary, P.; Srinivasan, K.; Das, T.K. Deep learning-powered malware detection in cyberspace: a contemporary review. Frontiers in Physics 2024, 12–2024.

- Bostani, H.; Moonsamy, V. EvadeDroid: A practical evasion attack on machine learning for black-box Android malware detection. Computers & Security 2024, 139, 103676.

- Kulkarni, M.; Stamp, M. XAI and Android Malware Models. arXiv 2024, arXiv:2411.16817.

- Nazim, S.; Alam, M.; Rizvi, S.; Mustapha, J.; Hussain, S.; Suud, M. Advancing Malware Imagery Classification with Explainable Deep Learning: A State-of-the-Art Approach Using SHAP, LIME and Grad-CAM. PLOS ONE 2025, 20, e0318542.

- Salih, A.M.; Raisi-Estabragh, Z.; Galazzo, I.B.; Radeva, P.; Petersen, S.E.; Lekadir, K.; Menegaz, G. A Perspective on Explainable Artificial Intelligence Methods: SHAP and LIME. Advanced Intelligent Systems 2025, 7, 2400304.

- Manthena, H.; Shajarian, S.; Kimmell, J.C.; Abdelsalam, M.; Khorsandroo, S.; Gupta, M. Explainable Artificial Intelligence (XAI) for Malware Analysis: A Survey of Techniques, Applications, and Open Challenges. IEEE Access 2025, 13, 61611–61640.

- Saqib, M.; Mahdavifar, S.; Fung, B.C.M.; Charland, P. A Comprehensive Analysis of Explainable AI for Malware Hunting. ACM Comput. Surv. 2024, 56.

- D’Angelo, G.; Ficco, M.; Palmieri, F. Association rule-based malware classification using common subsequences of API calls. Applied Soft Computing 2021, 105, 107234.

- Anthony, P.; Galadima, K.R.; Adams, Z.; Onoja, M.; Arp, D.; Homola, M.; Balogh, Š. Rule Extraction and Interaction-Aware Explainability for AI-Driven Malware Detection. In Rules and Reasoning; Hogan, A., Satoh, K., Dağ, H., Turhan, A.Y., Roman, D., Soylu, A., Eds.; Cham, 2026; pp. 137–155.

- Alohali, M.A.; Alahmari, S.; Aljebreen, M.; Asiri, M.M.; Miled, A.B.; Albouq, S.S.; Alrusaini, O.; Alqazzaz, A. Two Stage Malware Detection Model in Internet of Vehicles (IoV) Using Deep Learning-Based Explainable Artificial Intelligence with Optimization Algorithms. Scientific Reports 2025, 15, 20615.

- Anthony, P.; Giannini, F.; Diligenti, M.; Homola, M.; Gori, M.; Balogh, S.; Mojzis, J. Explainable Malware Detection with Tailored Logic Explained Networks. arXiv 2024, arXiv:2405.03009.

- Al-Dujaili, A.; Huang, A.; Hemberg, E.; O’Reilly, U.M. Adversarial Deep Learning for Robust Detection of Binary Encoded Malware. 2018.

- Slack, D.; Hilgard, S.; Jia, E.; Singh, S.; Lakkaraju, H. Fooling LIME and SHAP: Adversarial Attacks on Post hoc Explanation Methods. In Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society, New York, NY, USA, 2020; AIES ’20, pp. 180–186.

- Allix, K.; Bissyandé, T.F.; Klein, J.; Le Traon, Y. AndroZoo: collecting millions of Android apps for the research community. In Proceedings of the 13th International Conference on Mining Software Repositories, New York, NY, USA, 2016; MSR ’16, pp. 468–471.

- Alecci, M.; Jiménez, P.J.R.; Allix, K.; Bissyandé, T.F.; Klein, J. AndroZoo: A Retrospective with a Glimpse into the Future. In Proceedings of the 21st International Conference on Mining Software Repositories, New York, NY, USA, 2024; MSR ’24, pp. 389–393.

- Curebal, F.; Dağ, H. Enhancing Malware Classification: A Comparative Study of Feature Selection Models with Parameter Optimization. In Proceedings of the 2024 Systems and Information Engineering Design Symposium (SIEDS), 2024; pp. 511–516.

- Han, J.; Pei, J.; Yin, Y.; Mao, R. Mining Frequent Patterns without Candidate Generation: A Frequent-Pattern Tree Approach. Data Mining and Knowledge Discovery 2004, 8, 53–87.

- Lawal, M.; Ogedengbe, M.; Ng. FP-Growth Algorithm: Mining Association Rules without Candidate Sets Generation. Kasu Journal of Computer Science 2024, 1, 392–411.

- Wicaksono, D.; Jambak, M.; Saputra, D. The Comparison of Apriori Algorithm with Preprocessing and FP-Growth Algorithm for Finding Frequent Data Pattern in Association Rule. 2020.

- Hahsler, M.; Buchta, C.; Hornik, K. Selective Association Rule Generation. Computational Statistics 2008, 23, 303–315.

- D’Angelo, G.; Ficco, M.; Palmieri, F. Association rule-based malware classification using common subsequences of API calls. Applied Soft Computing 2021, 105, 107234.

- Rafiq, H.; Aslam, N.; Ahmed, U.; Lin, J. Mitigating Malicious Adversaries Evasion Attacks in Industrial Internet of Things. IEEE Transactions on Industrial Informatics 2022, PP, 1–9.

- Yu, Z.; Li, S.; Bai, Y.; Han, W.; Wu, X.; Tian, Z. REMSF: A Robust Ensemble Model of Malware Detection Based on Semantic Feature Fusion. IEEE Internet of Things Journal 2023, PP, 1–1.

- Sokolova, M.; Lapalme, G. A systematic analysis of performance measures for classification tasks. Information Processing & Management 2009, 45, 427–437.

- Kim, G.; Yoon, D.; Kwak, N.; Lee, B. Representation-Centric Approach for Android Malware Classification: Interpretability-Driven Feature Engineering on Function Call Graphs. Applied Sciences 2026, 16.

- Jain, S.; Cretu, A.M.; de Montjoye, Y.A. Adversarial Detection Avoidance Attacks: Evaluating the robustness of perceptual hashing-based client-side scanning. arXiv 2022, arXiv:cs.

- Papernot, N.; McDaniel, P.; Goodfellow, I.; Jha, S.; Celik, Z.B.; Swami, A. Practical Black-Box Attacks against Machine Learning. arXiv 2017, arXiv:1602.02697.

- Zou, D.; Zhu, Y.; Xu, S.; Li, Z.; Jin, H.; Ye, H. Interpreting Deep Learning-based Vulnerability Detector Predictions Based on Heuristic Searching. ACM Trans. Softw. Eng. Methodol. 2021, 30.

- Tanveer, M.; Munir, K.; Alyamani, H.J.; Hassan, S.; Sheraz, M.; Chuah, T. Graph-augmented multi-modal learning framework for robust android malware detection. Scientific Reports 2025, 15.

- Muzaffar, A.; Hassen, H.R.; Zantout, H.; Lones, M.A. Reassessing Feature-Based Android Malware Detection in a Contemporary Context. PLOS ONE 2026, 21, e0341013.

- Rashid, M.; Qureshi, S.; Abid, A.; Alqahtany, S.; A., A.; Mscs, M.; Al Reshan, M.; Shaikh, A. Hybrid Android Malware Detection and Classification Using Deep Neural Networks. International Journal of Computational Intelligence Systems 2025, 18.

- Wei, X.; Cheng, Z.; Li, N.; Lv, Q.; Yu, Z.; Sun, D. DWFS-Obfuscation: Dynamic Weighted Feature Selection for Robust Malware Familial Classification under Obfuscation. 2025.

- Pathak, A.; Barman, U.; Kumar, T.S. Machine learning approach to detect android malware using feature-selection based on feature importance score. Journal of Engineering Research 2025, 13, 712–720.

- Arrowsmith, J.; Susnjak, T.; Jang-Jaccard, J. Multimodal Deep Learning for Android Malware Classification. Machine Learning and Knowledge Extraction 2025, 7.

- Palma, C.; Ferreira, A.; Figueiredo, M. Explainable Machine Learning for Malware Detection on Android Applications. Information 2024, 15.

- Mitchell, J.; McLaughlin, N.; del Rincon, J.M. Generating sparse explanations for malicious Android opcode sequences using hierarchical LIME. Computers & Security 2024, 137, 103637.

- Soi, D.; Sanna, A.; Maiorca, D.; Giacinto, G. Enhancing android malware detection explainability through function call graph APIs. Journal of Information Security and Applications 2024, 80, 103691.

- Syed, T.A.; Nauman, M.; Khan, S.; Jan, S.; Zuhairi, M.F. ViTDroid: Vision Transformers for Efficient, Explainable Attention to Malicious Behavior in Android Binaries. Sensors 2024, 24.

- Alani, M.M.; Mashatan, A.; Miri, A. XMal: A lightweight memory-based explainable obfuscated-malware detector. Computers & Security 2023, 133, 103409.

- Shokouhinejad, H.; Razavi-Far, R.; Higgins, G.; Ghorbani, A.A. Enhancing GNN Explanations for Malware Detection with Dual Subgraph Matching. Machine Learning and Knowledge Extraction 2026, 8.

- Li, F.; Wang, S.; Liew, A.W.C.; Ding, W.; Liu, G. Large-Scale Malicious Software Classification With Fuzzified Features and Boosted Fuzzy Random Forest. IEEE Transactions on Fuzzy Systems 2020.

| Classifier | Default Hyperparameters |
|---|---|
| DT | criterion = gini, max_depth = None, min_samples_split = 2, min_samples_leaf = 1 |
| KNN | n_neighbors = 5, weights = uniform, metric = minkowski, p = 2 |
| LR | penalty = l2, C = 1.0, solver = lbfgs, max_iter = 1000, class_weight = None |
| RF | n_estimators = 100, criterion = gini, max_depth = None, max_features = sqrt, min_samples_split = 2, min_samples_leaf = 1 |
| SVM | kernel = rbf, C = 1.0, gamma = scale, probability = True |
| CNN | Conv1D(filters = 32, kernel_size = 3), Conv1D(filters = 64, kernel_size = 3), Dropout = 0.3, Dense = 64, optimizer = Adam (lr = 0.001), batch_size = 64, epochs = 10 |
| FNN | Layers = 512–256–128, activation = ReLU, Dropout = 0.1, L2 = 0.001, optimizer = Adam (lr = 0.001), batch_size = 64, epochs = 100, EarlyStopping (patience = 10) |

| Classifier | Hyperparameter Search Space |
|---|---|
| DT | max_depth: [None, 10, 20, 30, 40, 50], min_samples_split: [2, 5, 10, 20], min_samples_leaf: [1, 2, 5, 10] |
| KNN | n_neighbors: [3, 5, 7, 9], weights: [uniform, distance], metric: [euclidean, manhattan] |
| LR | penalty: [l1, l2], C: [0.01, 0.1, 1, 10, 100], solver: [lbfgs, liblinear], class_weight: [None, balanced] |
| RF | n_estimators: [100, 200], max_depth: [None, 20, 40], min_samples_split: [2, 5], min_samples_leaf: [1, 2], max_features: [sqrt] |
| SVM | C: [0.1, 1, 10], kernel: [linear, rbf], gamma: [scale, 0.01, 0.001] |
| CNN | filters: [32, 64], kernel_size: [3, 5], dropout: [0.3, 0.5], dense_units: [64, 128], learning_rate: [0.001, 0.0005], batch_size: [32, 64] |
| FNN | layers_config: [[512,256,128], [512,256], [256,128]], dropout: [0.1, 0.2], l2_reg: [0.001, 0.0005], learning_rate: [0.001, 0.0005], batch_size: [64, 32] |
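The search space lists discrete candidate values, so an exhaustive grid search evaluates the Cartesian product of each row (Algorithm 6 is not reproduced here, so the use of exhaustive grid search is an assumption). The sketch below transcribes the table and counts the combinations per classifier. The parameter names match scikit-learn's estimators; assuming scikit-learn was used, its `LogisticRegression` documentation states that the `lbfgs` solver supports only the `l2` penalty (or none), so the 10 `l1` + `lbfgs` combinations in the LR grid cannot be fitted.

```python
from itertools import product
from math import prod
from typing import Any, Dict, Iterator, List

Space = Dict[str, List[Any]]

# Search spaces transcribed from the table above.
SEARCH_SPACES: Dict[str, Space] = {
    "DT": {"max_depth": [None, 10, 20, 30, 40, 50], "min_samples_split": [2, 5, 10, 20],
           "min_samples_leaf": [1, 2, 5, 10]},
    "KNN": {"n_neighbors": [3, 5, 7, 9], "weights": ["uniform", "distance"],
            "metric": ["euclidean", "manhattan"]},
    "LR": {"penalty": ["l1", "l2"], "C": [0.01, 0.1, 1, 10, 100], "solver": ["lbfgs", "liblinear"],
           "class_weight": [None, "balanced"]},
    "RF": {"n_estimators": [100, 200], "max_depth": [None, 20, 40], "min_samples_split": [2, 5],
           "min_samples_leaf": [1, 2], "max_features": ["sqrt"]},
    "SVM": {"C": [0.1, 1, 10], "kernel": ["linear", "rbf"], "gamma": ["scale", 0.01, 0.001]},
    "CNN": {"filters": [32, 64], "kernel_size": [3, 5], "dropout": [0.3, 0.5], "dense_units": [64, 128],
            "learning_rate": [0.001, 0.0005], "batch_size": [32, 64]},
    "FNN": {"layers_config": [[512, 256, 128], [512, 256], [256, 128]], "dropout": [0.1, 0.2],
            "l2_reg": [0.001, 0.0005], "learning_rate": [0.001, 0.0005], "batch_size": [64, 32]},
}


def grid(space: Space) -> Iterator[Dict[str, Any]]:
    """Enumerate every combination, as an exhaustive grid search would."""
    keys = list(space)
    for values in product(*(space[k] for k in keys)):
        yield dict(zip(keys, values))


sizes = {name: prod(len(v) for v in space.values()) for name, space in SEARCH_SPACES.items()}
print(sizes)  # {'DT': 96, 'KNN': 16, 'LR': 40, 'RF': 24, 'SVM': 18, 'CNN': 64, 'FNN': 48}

# scikit-learn's lbfgs solver supports only the l2 (or no) penalty,
# so the l1 + lbfgs combinations are dropped before fitting.
valid_lr = [p for p in grid(SEARCH_SPACES["LR"]) if not (p["penalty"] == "l1" and p["solver"] == "lbfgs")]
print(len(valid_lr))  # 30
```

Multiplying each count by the number of cross-validation folds gives the number of model fits per classifier, which is useful when comparing the tuning cost of the DL models against the ML models.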

| Classifier | Tuned Hyperparameters |
|---|---|
| Decision Tree | max_depth=None (unlimited depth), min_samples_split=5, min_samples_leaf=1 |
| KNN | metric=Manhattan, n_neighbors=3, weights=distance |
| Logistic Regression | C=0.1, class_weight=balanced, penalty=, solver=lbfgs |
| Random Forest | max_depth=None, max_features=sqrt, min_samples_leaf=1, min_samples_split=2, n_estimators=100 |
| SVM | C=10, kernel=RBF, gamma=scale |
| CNN | filters=64, kernel_size=3, dropout=0.3, dense_units=128, learning_rate=0.0005, batch_size=32 |
| Fully Connected NN | batch_size=64, dropout=0.1, regularization=0.0005, layers=[512,256], learning_rate=0.0005 |

| Classifier | Feature Set | Acc (%) | Prec (%) | Rec (%) | F-Score (%) |
|---|---|---|---|---|---|
| DT | Scored Features | 95.976 | 95.729 | 96.296 | 96.012 |
|  | Non-Scored Features | 94.709 | 94.543 | 94.963 | 94.752 |
| KNN | Scored Features | 95.753 | 98.131 | 93.333 | 95.672 |
|  | Non-Scored Features | 95.380 | 98.116 | 92.593 | 95.274 |
| LR | Scored Features | 98.361 | 98.514 | 98.222 | 98.368 |
|  | Non-Scored Features | 97.988 | 98.214 | 97.778 | 97.996 |
| RF | Scored Features | 98.584 | 98.378 | 98.815 | 98.596 |
|  | Non-Scored Features | 98.212 | 98.655 | 97.778 | 98.214 |
| SVM | Scored Features | 98.137 | 98.508 | 97.778 | 98.141 |
|  | Non-Scored Features | 97.690 | 98.204 | 97.185 | 97.692 |
| CNN | Scored Features | 97.914 | 98.356 | 97.482 | 97.917 |
|  | Non-Scored Features | 97.765 | 98.063 | 97.482 | 97.771 |
| FNN | Scored Features | 98.361 | 98.514 | 98.222 | 98.368 |
|  | Non-Scored Features | 97.690 | 98.348 | 97.037 | 97.688 |
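Both result tables compare scored and non-scored features for the same classifiers. The short script below transcribes them and computes the per-metric differences (scored minus non-scored, in percentage points), which makes the recall-focused claim straightforward to check. It uses only the published numbers; the helper names are illustrative.

```python
from typing import Dict, Tuple

Row = Tuple[float, float, float, float]  # (accuracy, precision, recall, F-score) in %
METRICS = ("accuracy", "precision", "recall", "f_score")

# (scored features, non-scored features), transcribed from the two result tables.
DEFAULT: Dict[str, Tuple[Row, Row]] = {
    "DT": ((95.827, 95.315, 96.444, 95.876), (95.157, 94.591, 95.852, 95.217)),
    "KNN": ((95.753, 98.892, 92.593, 95.639), (94.784, 98.400, 91.111, 94.615)),
    "LR": ((98.510, 98.375, 98.667, 98.521), (97.243, 97.612, 96.889, 97.249)),
    "RF": ((98.584, 98.378, 98.815, 98.596), (98.212, 98.655, 97.778, 98.214)),
    "SVM": ((97.839, 98.353, 97.333, 97.841), (97.616, 98.638, 96.593, 97.605)),
    "CNN": ((97.839, 96.812, 98.963, 97.876), (97.765, 97.496, 98.074, 97.784)),
    "FNN": ((97.616, 97.210, 98.074, 97.640), (97.168, 98.185, 96.148, 97.156)),
}
TUNED: Dict[str, Tuple[Row, Row]] = {
    "DT": ((95.976, 95.729, 96.296, 96.012), (94.709, 94.543, 94.963, 94.752)),
    "KNN": ((95.753, 98.131, 93.333, 95.672), (95.380, 98.116, 92.593, 95.274)),
    "LR": ((98.361, 98.514, 98.222, 98.368), (97.988, 98.214, 97.778, 97.996)),
    "RF": ((98.584, 98.378, 98.815, 98.596), (98.212, 98.655, 97.778, 98.214)),
    "SVM": ((98.137, 98.508, 97.778, 98.141), (97.690, 98.204, 97.185, 97.692)),
    "CNN": ((97.914, 98.356, 97.482, 97.917), (97.765, 98.063, 97.482, 97.771)),
    "FNN": ((98.361, 98.514, 98.222, 98.368), (97.690, 98.348, 97.037, 97.688)),
}


def gains(table: Dict[str, Tuple[Row, Row]]) -> Dict[str, Dict[str, float]]:
    """Scored minus non-scored, per classifier and metric, in percentage points."""
    return {
        clf: {m: round(s - n, 3) for m, s, n in zip(METRICS, scored, plain)}
        for clf, (scored, plain) in table.items()
    }


for name, table in (("default", DEFAULT), ("tuned", TUNED)):
    g = gains(table)
    print(name, "recall gains:", {clf: v["recall"] for clf, v in g.items()})
    print(name, "precision drops:", sorted(clf for clf, v in g.items() if v["precision"] < 0))
```

On these numbers, recall never decreases (it is unchanged only for the tuned CNN) and accuracy always improves, whereas precision drops for RF, SVM, CNN, and FNN with default parameters and for RF after tuning.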

| Sample Index | Rule Antecedents | Rule Consequent | Confidence |
|---|---|---|---|
| 998 (prediction: 0.95) | api_call_getLine1Number, api_call_obtainMessage | malware | 0.947 |
|  | api_call_getSimSerialNumber | malware | 0.924 |
|  | api_call_getDeviceSoftwareVersion, api_call_getSystemService, android.permission.INTERNET | malware | 0.919 |
|  | api_call_setIcon, api_call_getDeviceId | malware | 0.898 |
|  | api_call_setRepeatCount | malware | 0.898 |
| 1815 (prediction: 0.97) | api_call_getExtraInfo, api_call_setBackgroundResource, api_call_execSQL | malware | 1 |
|  | intent_WIFI_STATE_CHANGED, permission_ACCESS_WIFI_STATE, api_call_onDestroy | malware | 0.958 |
|  | permission_INSTALL_SHORTCUT, permission.INTERNET, permission_READ_PHONE_STATE | malware | 0.976 |
|  | api_call_getParams, api_call_getDeviceId | malware | 0.94 |
|  | api_call_addDataScheme | malware | 0.93 |
| 275 (prediction: 0.98) | api_call_setAnimationStyle, api_call_rotateY, api_call_getLocationOnScreen, api_call_decodeStream | malware | 1 |
|  | api_call_rotateZ, api_call_onStart, api_call_setHeight, permission.READ_PHONE_STATE | malware | 1 |
|  | api_call_setLightTouchEnabled, api_call_onTrackballEvent | malware | 0.996 |
|  | api_call_getSubscriberId | malware | 0.958 |
|  | api_call_writeByte, api_call_setWebViewClient | malware | 0.94 |

| Sample Index | Rule Antecedents | Rule Consequent | Confidence |
|---|---|---|---|
| 8595 (prediction: 0.98) | api_call_addRequestHeader, api_call_setAppCachePath | benign | 0.976 |
|  | api_call_getExternalStoragePublicDirectory, api_call_getDataString, api_call_isFinishing | benign | 0.92 |
|  | api_call_guessFileName | benign | 0.9 |
|  | api_call_getCookie | benign | 0.85 |
|  | api_call_setSystemUiVisibility | benign | 0.87 |
| 7773 (prediction: 0.59) | api_call_getPreferences, api_call_canGoBack, api_call_requestFocus | benign | 0.94 |
|  | api_call_onReachedMaxAppCacheSize, api_call_getDefaultSize, api_call_onTouch | benign | 0.85 |
|  | api_call_setDisplayHomeAsUpEnabled, api_call_getChildAt, api_call_putBoolean | benign | 0.84 |
|  | api_call_setSupportedAdSizes, api_call_getLocationOnScreen | benign | 0.79 |
|  | api_call_setCustomClose, api_call_setOnTouchListener | benign | 0.78 |

| Samples | Mean | Median | Standard Deviation | Maximum |
|---|---|---|---|---|
| Malware | 0.133 | 0.12 | 0.096 | 0.52 |
| Benign | 0.0185 | 0.01 | 0.045 | 0.33 |

| Study | Dataset | Feature Type | Feature Processing | Model | Best Acc (%) | Recall (%) | Key Contribution |
|---|---|---|---|---|---|---|---|
| [46] | MalNet-Tiny | Graph-based (FCG) | Interpretability-driven FS (IG, GradCAM, SHAP) | JK-GraphSAGE (GNN) | 94.47 | 94 | Representation-centric approach improving GNN performance via explainable feature engineering |
| [51] | 124K Android apps | Static (API calls, opcodes) + Dynamic (network traffic) | Feature-based ML (MI, PCC, T-Test, SFS, PCA, Chi-square) | Ensemble ML | 97.3 | – | Demonstrated effectiveness of feature-based ML with ensemble models along with API calls and opcodes |
| [53] | AndroZoo | Static (API, Permissions, Opcodes) | Dynamic Weighted Feature Selection (DWFS) using RF importance | GNN | 95.56 | 95.68 | Robust detection under obfuscation using weighted feature importance |
| [50] | Drebin | Static + Dynamic + Graph | Graph augmentation + multimodal fusion | Multimodal DL | 99 | 99 | Graph-based multimodal learning for robust malware detection |
| [55] | Multiple datasets | Hybrid (Static + Dynamic) | Multimodal deep fusion | CNN/GNN Ensemble, DenseNet-GIN | 90.6 | 91.1 | Multimodal feature integration to improve classification |
| [52] | Drebin, AndroZoo, VirusShare | Static (API, Permissions, Intents) | Information Gain + hybrid preprocessing | DNN, ML models | 93 | 93 | Hybrid framework improving feature quality across malware families |
| [54] | Defense Droid | Static (Permissions) | Feature importance (GB) | RF, KNN, NB, DT | 93.96 | 91.54 | Reduced dimensionality while maintaining performance |
| [56] | Drebin, CICAndMal2017 | API, Permissions, Opcodes | RRFS | SVM, RF, ML models | 98.5 | 91.39 | Dimensionality reduction with high accuracy |
| RFS-MD (Proposed) | AndroZoo | API, Permissions, Intents | MI + Rule-based Scoring | RF, FNN, ML/DL | 98.584 | 98.815 | Rule-based feature scoring improving performance, robustness, and explainability |

| Study | XAI Method | Explanation Type | Provides Rules | Feature Importance | Model Explanation | Robustness Awareness | Evaluation Metrics |
|---|---|---|---|---|---|---|---|
| [57] | LIME (hierarchical) | Local (post-hoc, model-agnostic) | × | √ | √ | × | SHAP values |
| [58] | SHAP + feature analysis | Local + Global (post-hoc) | × | √ | √ | × | Accuracy, SHAP consistency |
| [46] | GradCAM + SHAP | Local (post-hoc) | × | √ | √ | × | Accuracy, explanation quality |
| [59] | Attention mechanism | Local + Global (intrinsic) | × | √ | √ | × | Accuracy, attention visualization |
| [60] | Feature importance + memory-based XAI | Local + Global | × | √ | √ | × | Accuracy, robustness analysis |
| [61] | GNN matching subgraph | Local (post-hoc) | × | √ | √ | × | Fidelity, subgraph similarity |
| [62] | Fuzzy rules / Decision tree | Local (intrinsic) | √ | √ | × | × | Accuracy |
| RFS-MD (Proposed) | Rule-based (RXAI) | Local + Global (intrinsic + post-hoc) | √ | √ | √ | √ | Fidelity, Accuracy, Recall, ASR |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).